Solving Data Sparsity in Molecular Optimization: Techniques, Applications, and Future of AI-Driven Drug Discovery

Abstract

This article addresses the critical challenge of data sparsity in molecular optimization datasets, a major bottleneck in AI-driven drug discovery. It explores the fundamental causes and consequences of sparse data in cheminformatics, presents cutting-edge methodological solutions including generative models, data augmentation, and transfer learning, and provides practical troubleshooting guidance for implementation. A comparative analysis of validation frameworks and performance metrics is provided to equip researchers and drug development professionals with the knowledge to build more robust, data-efficient models, ultimately accelerating the development of novel therapeutics.

Why Sparse Data is the Silent Killer of AI-Driven Drug Discovery

Troubleshooting Guides and FAQs for Molecular Optimization Experiments

This technical support center addresses common experimental challenges in molecular optimization research that contribute to data sparsity in molecular optimization datasets.

FAQ Section

Q1: Why do high-throughput screening (HTS) campaigns yield such a low hit rate, contributing to data sparsity?

A1: The chemical space of synthetically feasible, drug-like molecules is estimated to be between 10^23 and 10^60 compounds. In contrast, the largest public HTS datasets (e.g., PubChem BioAssay) contain on the order of 10^8 data points. This discrepancy creates a sparsity problem where the experimentally explored space is an infinitesimal fraction of the potential space. The hit rate for a typical HTS is often <0.1%.

Q2: What are the main sources of experimental noise that corrupt small, sparse datasets?

A2: Key sources include:

- Biochemical Assay Variability: Edge effects in microplates, reagent instability, temperature fluctuations.

- Instrument Artifacts: Liquid handler inaccuracies, reader drift.

- Compound Integrity: Degradation, evaporation, precipitation (especially problematic for DMSO stocks stored for long periods, which absorb water and undergo repeated freeze-thaw cycles).

- Biological Noise: Cell passage number variability, phenotypic drift.

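Q3: How can I validate a predictive model trained on a sparse, biased dataset?

A3: Standard random-split validation is overly optimistic: close analogs of the test compounds usually remain in the training set, so the score reflects interpolation rather than generalization. Use: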

- Temporal Split: Train on older data, validate on newer.

- Scaffold Split: Ensure training and test sets contain distinct molecular cores to assess generalizability.

- Property-Matched Cluster Split: Cluster by descriptors and assign whole clusters to either the training or the test set.
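The temporal split above can be sketched in plain Python. The record layout (compound ID, assay date, pIC50), the toy records, and the cutoff date are hypothetical; in practice the date would come from the assay or compound registration record.

```python
from datetime import date

def temporal_split(records, cutoff):
    """Split (compound_id, assay_date, value) records at a cutoff date.

    Compounds measured before the cutoff form the training set; those measured
    on or after it form the test set, mimicking prospective use of the model.
    """
    train = [r for r in records if r[1] < cutoff]
    test = [r for r in records if r[1] >= cutoff]
    return train, test

# Hypothetical toy records: (compound_id, assay_date, pIC50)
records = [
    ("CPD-001", date(2019, 3, 1), 6.2),
    ("CPD-002", date(2020, 7, 15), 7.1),
    ("CPD-003", date(2021, 1, 10), 5.8),
    ("CPD-004", date(2022, 5, 30), 6.9),
]
train, test = temporal_split(records, cutoff=date(2021, 1, 1))
print([r[0] for r in train])  # ['CPD-001', 'CPD-002']
print([r[0] for r in test])   # ['CPD-003', 'CPD-004']
```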

Troubleshooting Guide: Common Experimental Pitfalls

Issue: Inconsistent SAR (Structure-Activity Relationship) from follow-up synthesis.

| Possible Cause | Diagnostic Check | Solution |
|---|---|---|
| Assay interference | Test the compound at multiple concentrations; check for fluorescence or quenching, and for aggregation (activity lost when a non-ionic detergent such as Triton X-100 is added). | Use an orthogonal assay (e.g., SPR, cellular) for validation. |
| Compound purity/identity | Re-analyze by LC-MS/HPLC. | Repurify or resynthesize with stringent QC. |
| Microplate positional effect | Re-test the original hit in center vs. edge wells. | Use only interior wells for critical assays; fill edge wells with buffer controls. |

Issue: Poor transferability of a virtual screening model to a new target class.

| Possible Cause | Diagnostic Check | Solution |
|---|---|---|
| Descriptor/feature mismatch | Compare the principal components of the training set with those of the new chemical space. | Fine-tune the model (transfer learning) on a small, new target-specific dataset. |
| Dataset bias | Compare property distributions (MW, logP) of training actives vs. the new library. | Apply generative models to design compounds within the model's applicability domain. |

Experimental Protocol: Generating a Robust QSAR Dataset from Sparse Primary HTS

Title: Protocol for Hit Triaging and Confirmatory Dose-Response.

Objective: To transform sparse, single-concentration HTS data into a reliable quantitative dataset for model training.

Materials:

- Primary hit list (≤ 0.5% of screened library).

- Source compounds (powders or DMSO stocks).

- Assay reagents and instrumentation (validated).

- 384-well microplates.

Methodology:

- Re-supply: Physically re-acquire hit compounds as powders from vendors or internal archives. Do not rely on original screening stock.

- Reformat: Prepare fresh 10 mM DMSO master stocks. Confirm identity via LC-MS.

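- 11-Point Dose-Response: Using an Echo acoustic liquid handler or a precision pipette, perform 1:3 serial dilutions in DMSO across 11 points. Eleven 3-fold steps span a 3^10 = 59,049-fold range, e.g., a top source concentration of 10 mM down to ~0.17 µM, or a final assay range of 10 µM down to ~0.17 nM. Include a vehicle (DMSO) control and a reference control (known inhibitor/activator) on every plate.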

- Duplicate Plates: Run the entire dose-response curve in two independent experiments, on different days, with freshly prepared intermediate stocks.

- Data Processing: Fit each curve to a four-parameter logistic (4PL) model and calculate the IC50/EC50. Compounds must meet all of the following criteria: R² > 0.9, Hill slope between -2.5 and -0.5, efficacy >50% of the reference control, and <3-fold difference in IC50 between replicates.
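The acceptance criteria can be checked programmatically once a curve fitter (for example scipy.optimize.curve_fit, not shown) has returned 4PL parameters. This sketch assumes the log(inhibitor)-vs-response form of the 4PL, in which an inhibition curve has a negative Hill slope, matching the -2.5 to -0.5 window above; the fitted parameters, noise, efficacy, and replicate IC50 values are hypothetical.

```python
import math

def dilution_series(top, fold=3.0, points=11):
    """Concentrations of a serial dilution, highest first."""
    return [top / fold ** i for i in range(points)]

def four_pl(conc, bottom, top, ic50, hill):
    """4PL in log(inhibitor)-vs-response form; hill < 0 gives a falling curve."""
    return bottom + (top - bottom) / (1.0 + 10 ** ((math.log10(ic50) - math.log10(conc)) * hill))

def r_squared(observed, predicted):
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

def passes_qc(r2, hill, efficacy_pct_of_ref, ic50_rep1, ic50_rep2):
    """Acceptance criteria taken from the protocol text."""
    fold_diff = max(ic50_rep1, ic50_rep2) / min(ic50_rep1, ic50_rep2)
    return (r2 > 0.9 and -2.5 <= hill <= -0.5
            and efficacy_pct_of_ref > 50.0 and fold_diff < 3.0)

conc = dilution_series(10e-6)  # final assay concentrations in M, 10 µM top
params = dict(bottom=0.0, top=100.0, ic50=1e-7, hill=-1.0)  # hypothetical fit result
fitted = [four_pl(c, **params) for c in conc]
observed = [f + (2.0 if i % 2 == 0 else -2.0) for i, f in enumerate(fitted)]  # toy noise
r2 = r_squared(observed, fitted)
print(f"lowest conc: {conc[-1] * 1e9:.2f} nM, R^2 = {r2:.3f}")
print(passes_qc(r2, params["hill"], 95.0, 1.0e-7, 1.8e-7))  # True
```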

Expected Output: A high-confidence dataset of ~100-500 compounds with reliable pIC50 values, suitable for QSAR modeling, derived from an initial sparse screen of 100,000+ compounds.

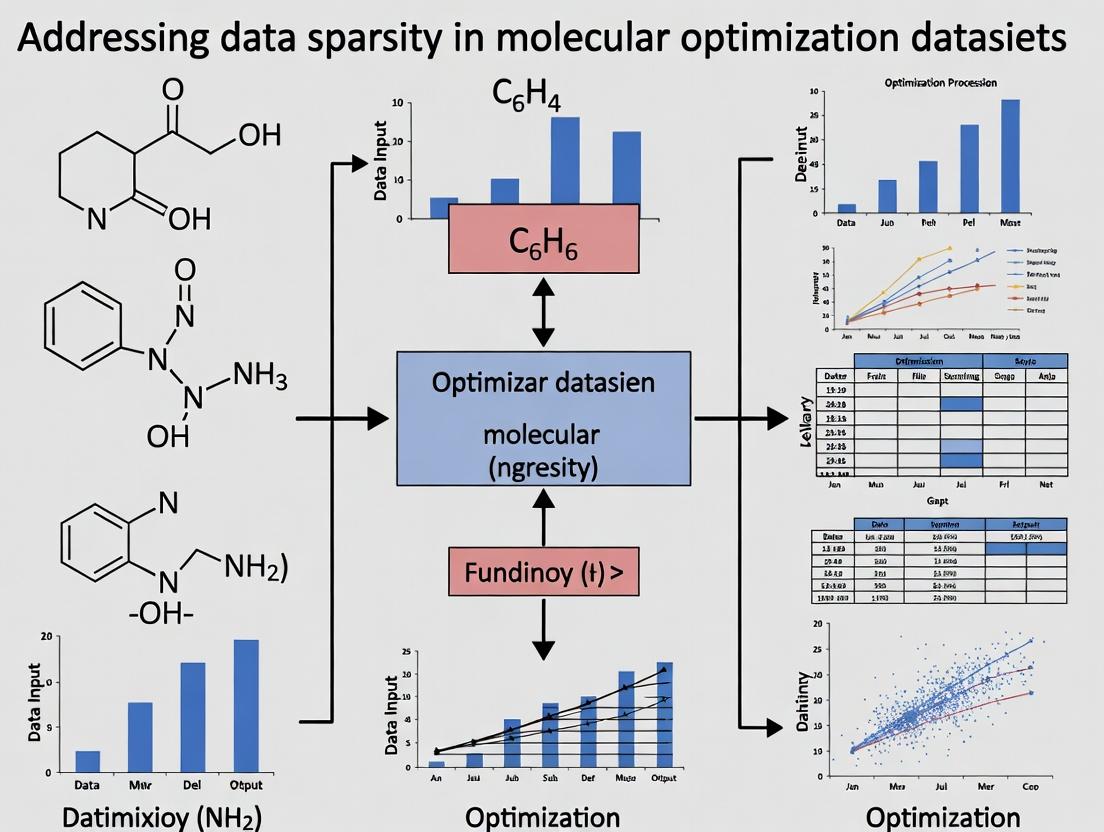

Diagram: The Molecular Optimization Data Sparsity Challenge

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Reagent | Function / Rationale |
|---|---|
| DMSO (anhydrous, high-purity grade) | Universal solvent for compound libraries. Low water content and high purity are critical to prevent compound degradation and assay interference. |
| Echo Acoustic Liquid Handler | Enables non-contact, nanoliter-scale transfer of compounds in DMSO. Essential for creating accurate dose-response curves from limited stocks with minimal dilution error. |
| qPCR-grade 384-well Plates | Optically clear, low-binding plates minimize compound adsorption and reduce edge effects, improving data consistency from sparse samples. |
| Triton X-100 or CHAPS | Used in counter-screening assays to diagnose and eliminate false positives from compound aggregation, a major artifact in sparse datasets. |
| Reference Control (Staurosporine, Oligomycin, etc.) | A well-characterized tool compound for each target class. Included on every plate to normalize data and control for inter-experimental variability. |
| LC-MS with CAD/ELSD | Charged aerosol (CAD) and evaporative light scattering (ELSD) detectors provide quantitative analysis of compound purity in the absence of a UV chromophore, confirming sample integrity. |

Technical Support Center

This support center addresses common bottlenecks in molecular optimization experiments that lead to data sparsity. The FAQs and guides below focus on generating denser, more informative datasets.

Frequently Asked Questions (FAQs)

Q1: My high-throughput screening (HTS) for compound activity yields a high rate of false negatives, wasting resources and creating sparse, unreliable data. What are the primary troubleshooting steps?

A: False negatives in HTS often stem from suboptimal assay conditions. Follow this protocol:

- Positive Control Re-optimization: Titrate your known active compound (positive control) across a wider concentration range within the assay plate to verify the dynamic range is still valid.

- Cell Viability Check: If using cell-based assays, confirm >95% viability at the time of compound addition using a trypan blue exclusion or ATP-based assay. Run a cytotoxicity counter-screen.

- Reagent Stability Audit: Check the storage and thawing history of critical reagents (e.g., enzymes, co-factors, antibodies). Test a fresh aliquot side by side with the aliquot currently in use.

- Automated Liquid Handler Calibration: Use a dye-based volume verification test to ensure pins or tips are dispensing accurately and consistently across all wells.

Q2: During hit-to-lead optimization, my compound solubility in physiological buffers is poor, preventing reliable IC50 determination and creating gaps in my SAR dataset. How can I address this?

A: Poor solubility is a major bottleneck. Implement this tiered solubility assessment protocol:

- Rapid Kinetic Solubility: Prepare a 10 mM DMSO stock. Add 5 µL of this stock to 995 µL of PBS (pH 7.4) with vigorous vortexing (nominal concentration 50 µM, 0.5% DMSO). Incubate for 1 hour at room temperature. Filter through a 0.45 µm low-binding hydrophilic filter. Analyze the filtrate by UV-vis against a standard curve.

- Equilibrium Solubility (Gold Standard): Add excess solid compound to the buffer. Agitate for 24-48 hours at the desired temperature (e.g., 37°C). Filter and quantify concentration via HPLC-UV/ELSD.

- Formulation Mitigation: If solubility is below required levels, consider assay-ready formulations: addition of low percentages of co-solvents (e.g., <0.5% DMSO, <1% ethanol), or use of solubilizing agents like cyclodextrins (e.g., 0.1% HP-β-CD).
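Reading the kinetic-solubility filtrate against the UV standard curve is a linear calibration. A minimal sketch, assuming a linear detector response over the calibration range; the standard-curve values and the filtrate absorbance are hypothetical. Capping the result at the nominal 50 µM (5 µL of 10 mM in 1 mL) is a design choice: a reading at or above the nominal concentration only shows that the compound is soluble at least up to that level.

```python
def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx

def kinetic_solubility_uM(filtrate_abs, slope, intercept, nominal_uM):
    """Filtrate concentration from the standard curve, capped at the nominal dose."""
    measured = (filtrate_abs - intercept) / slope
    return min(measured, nominal_uM)

# Nominal concentration: 5 µL of 10 mM (10,000 µM) stock in 1000 µL total volume
nominal_uM = 10_000 * 5 / (5 + 995)  # 50.0 µM

# Hypothetical UV standard curve: concentration (µM) vs. absorbance
standards_uM = [0.0, 10.0, 25.0, 50.0, 100.0]
absorbance = [0.0, 0.10, 0.25, 0.50, 1.00]
slope, intercept = fit_line(standards_uM, absorbance)

sol = kinetic_solubility_uM(0.32, slope, intercept, nominal_uM)
print(f"kinetic solubility ~ {sol:.1f} µM (nominal {nominal_uM:.0f} µM)")
```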

Q3: My protein target degrades during prolonged biochemical assays, leading to high signal variability and inconsistent dose-response data that I cannot use for modeling. How do I stabilize the protein?

A: Protein instability requires a stabilization screen.

- Prepare a matrix of stabilization conditions in a 96-well plate. Variables should include:

  - Buffer Additives: Glycerol (5-20%), sucrose (0.2-0.5 M), non-ionic detergents (e.g., 0.01% Tween-20).

  - Reducing Agents: TCEP (0.1-1 mM) or DTT (0.5-2 mM) for cysteine-rich proteins.

  - Protease Inhibitors: Include a broad-spectrum cocktail (e.g., 1X EDTA-free).

  - Carrier Proteins: BSA or casein (0.1-1 mg/mL).

- Incubate your purified protein in each condition at the assay temperature (e.g., 25°C or 37°C).

- At time points (0, 1, 2, 4, 8, 24 hours), remove aliquots and measure remaining activity via a rapid activity endpoint assay.

- Select the condition that maintains >90% activity over your intended assay duration.
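Selecting the winning condition from the time-course data is a simple filter. This sketch assumes raw activity readings (for example, initial rates) per condition, normalizes each to its t = 0 value, and keeps conditions that stay above 90% at every time point within the assay duration; the condition names and readings are hypothetical.

```python
def percent_remaining(activities):
    """Activity at each time point as a percentage of the t = 0 value."""
    return [100.0 * a / activities[0] for a in activities]

def stable_conditions(screen, timepoints_h, assay_duration_h, threshold_pct=90.0):
    """Return conditions whose activity stays above the threshold for the whole assay."""
    keep = []
    for condition, activities in screen.items():
        pct = percent_remaining(activities)
        within_assay = [p for t, p in zip(timepoints_h, pct) if t <= assay_duration_h]
        if min(within_assay) > threshold_pct:
            keep.append(condition)
    return keep

timepoints_h = [0, 1, 2, 4, 8, 24]
# Hypothetical raw activity readings per condition
screen = {
    "buffer only": [1000, 900, 820, 700, 500, 200],
    "10% glycerol": [1000, 990, 975, 960, 930, 800],
    "10% glycerol + 0.5 mM TCEP": [1000, 995, 990, 985, 970, 910],
}
print(stable_conditions(screen, timepoints_h, assay_duration_h=8))
# ['10% glycerol', '10% glycerol + 0.5 mM TCEP']
```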

Q4: I am encountering significant batch-to-batch variability in my cell-based assays, making it impossible to aggregate data across different experimental runs for model training. What is the solution?

A: Implement a rigorous cell line and passage management protocol.

- Master Cell Bank (MCB): Create a large, validated MCB at the lowest possible passage number. Aliquot and store in liquid nitrogen.

- Working Cell Bank (WCB): Generate a WCB from one vial of the MCB. Use the WCB for all experiments.

- Strict Passage Window: Define a maximum passage number differential (e.g., 5 passages) for all experiments. Never exceed it.

- Pre-Assay Phenotyping: Before each critical experiment, validate key markers (e.g., surface receptor expression via flow cytometry, key pathway activity via a control agonist) to ensure phenotypic consistency.

Key Experimental Protocols

Protocol 1: Miniaturization of a Biochemical Assay for 1536-well Format to Increase Data Point Throughput

Objective: To reduce reagent cost per data point by 80% and enable larger compound library screening, thereby directly mitigating dataset sparsity.

Methodology:

- Assay Re-optimization: Scale down the reaction volume from 25 µL (384-well) to 5 µL (1536-well). Systematically re-optimize enzyme concentration, substrate concentration, and incubation time using a fractional factorial design.

- Liquid Handling: Use a non-contact acoustic liquid handler (e.g., Echo) for precise, low-volume compound transfer. Use a capillary-based dispenser for enzyme/substrate addition.

- Detection: Use a homogeneous time-resolved fluorescence (HTRF) or AlphaLISA readout compatible with ultra-low volumes. Confirm Z'-factor >0.7 in the 1536-well format.

- Validation: Screen a pilot set of 1,280 compounds in both 384-well and 1536-well formats. Calculate Pearson correlation coefficient (r) of resulting activities. Proceed only if r > 0.85.
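The two go/no-go checks in this protocol (Z'-factor > 0.7 and r > 0.85) are easy to compute from plate data. A minimal sketch using the standard definition Z' = 1 - 3(SD_pos + SD_neg) / |mean_pos - mean_neg| and the Pearson correlation coefficient; all control and pilot values are hypothetical.

```python
import math
import statistics

def z_prime(pos, neg):
    """Z'-factor = 1 - 3 * (SD_pos + SD_neg) / |mean_pos - mean_neg|."""
    spread = 3.0 * (statistics.stdev(pos) + statistics.stdev(neg))
    return 1.0 - spread / abs(statistics.mean(pos) - statistics.mean(neg))

def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = statistics.mean(xs), statistics.mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical control wells from one 1536-well plate (% activity)
pos_ctrl = [100, 102, 98, 101, 99]
neg_ctrl = [10, 12, 8, 11, 9]
zp = z_prime(pos_ctrl, neg_ctrl)

# Hypothetical pilot activities for the same compounds in both formats
act_384 = [10, 20, 30, 40, 50]
act_1536 = [12, 19, 33, 38, 52]
r = pearson_r(act_384, act_1536)

print(f"Z' = {zp:.2f} (target > 0.7), r = {r:.3f} (target > 0.85)")
print("proceed" if zp > 0.7 and r > 0.85 else "re-optimize")  # proceed
```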

Protocol 2: Automated LogD Measurement using Liquid Chromatography to Enrich ADMET Property Data

Objective: To systematically generate high-quality lipophilicity (LogD at pH 7.4) data for every synthesized compound, enriching sparse ADMET datasets.

Methodology:

- Sample Preparation: Prepare a 10 mM compound stock in DMSO. Dilute 1:100 into a 1:1 (v/v) mixture of 1-octanol and phosphate buffer (pH 7.4), with the two phases mutually pre-saturated. Vortex vigorously for 10 minutes.

- Phase Separation: Centrifuge at 3,000 x g for 5 minutes to achieve complete phase separation.

- Automated Quantification: Use an HPLC system with autosampler to inject aliquots from both the octanol and buffer phases.

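- Analysis: Quantify peak areas. Calculate LogD = log10(AreaOctanol / AreaBuffer); this assumes equal injection volumes and the same detector response in both phases, so correct for any difference in injection volume or dilution. Use a calibration set of compounds with known LogD values to validate system performance monthly.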
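The simple area ratio holds only when both phases are injected at the same volume and dilution. A sketch that makes those corrections explicit, assuming concentration is proportional to peak area × dilution factor / injection volume with the same response factor in both phases; the parameter names and values are illustrative.

```python
import math

def log_d(area_octanol, area_buffer, inj_octanol_uL=1.0, inj_buffer_uL=1.0,
          dil_octanol=1.0, dil_buffer=1.0):
    """LogD from HPLC peak areas of the octanol and buffer phases.

    Assumes the detector response per unit concentration is the same in both
    phases, so concentration is proportional to area * dilution / injection volume.
    """
    c_oct = area_octanol * dil_octanol / inj_octanol_uL
    c_buf = area_buffer * dil_buffer / inj_buffer_uL
    return math.log10(c_oct / c_buf)

# Equal injection volumes: the simple area ratio applies
print(log_d(50_000, 500))  # 2.0
# Octanol phase injected at 1 µL, buffer phase at 10 µL (hypothetical values)
print(log_d(50_000, 5_000, inj_octanol_uL=1.0, inj_buffer_uL=10.0))  # 2.0
```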

Data Presentation

Table 1: Comparative Analysis of Assay Formats for Data Density and Cost

| Format | Reaction Volume (µL) | Reagent Cost per Data Point ($) | Max Compounds per Plate | Typical Z'-factor | Key Bottleneck |
|---|---|---|---|---|---|
| 96-well | 100 | 2.50 | 80 - 96 | 0.6 - 0.8 | High reagent consumption |
| 384-well | 25 | 0.75 | 320 - 384 | 0.5 - 0.7 | Evaporation edge effects |
| 1536-well | 5 | 0.15 | 1,280 - 1,536 | 0.4 - 0.6 | Liquid handling precision |

Table 2: Common Sources of Data Sparsity in Molecular Optimization

| Bottleneck Category | Example Failure Mode | Impact on Dataset | Mitigation Strategy |
|---|---|---|---|
| Compound Integrity | Degradation in DMSO stock | Erroneous low activity data | QC stocks via LC-MS; use sealed storage plates |
| Assay Robustness | High intra-plate variability (%CV >20%) | Unreliable activity rankings | Implement robust controls; use statistical outlier detection |
| Biological Relevance | Target-based activity but no cell permeability | False positives in screening | Integrate early membrane permeability assay (e.g., PAMPA) |
| Resource Limitation | Can only test 1,000 compounds due to cost | Extremely sparse exploration of chemical space | Use virtual screening to prioritize compounds |

Visualizations

Diagram Title: HTS Bottleneck Identification and Mitigation Pathway

Diagram Title: Experimental Cascade for Dataset Enrichment

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function | Relevance to Mitigating Sparsity |
|---|---|---|
| Acoustic Liquid Handler (e.g., Echo) | Transfers nanoliter volumes of compound stocks with high precision and without tip waste. | Enables miniaturization to 1536-well format, drastically reducing cost per data point and allowing more compounds to be tested. |
| Cryopreserved, Assay-Ready Cells | Pre-plated, frozen cells in microplates that are thawed and ready for use. | Minimizes cell culture variability and passage drift, ensuring a consistent biological context across experimental runs and improving data aggregability. |
| qNMR Reference Standards | Quantitative NMR standards for precise concentration determination of compound stocks. | Ensures the accuracy of the starting concentration in every assay, removing a major source of error that creates noise and gaps in dose-response data. |
| PAMPA Lipid Kits | Standardized phospholipid solutions (or pre-coated filter plates) for the Parallel Artificial Membrane Permeability Assay. | Allows early, reliable generation of permeability data, filtering out compounds that would later fail due to poor absorption and focusing resources on viable leads. |
| Stable Isotope-Labeled Protein | Protein expressed with ¹⁵N/¹³C labels for structural and biophysical studies (NMR, MS). | Enables protein-observed NMR binding experiments and serves as an internal standard for quantitative MS, improving the accuracy of binding measurements critical for SAR. |
| LCMS-UV-ELSD Tri-Detector System | Combines mass spec, UV, and evaporative light scattering detection in one HPLC run. | Provides orthogonal confirmation of compound purity and identity post-synthesis and can quantify solubility/dissolution in buffer matrices, ensuring data integrity. |

Technical Support Center: Troubleshooting Molecular Optimization

FAQs & Troubleshooting Guides

Q1: My generative model for molecular design produces invalid SMILES strings or molecules with incorrect valency at a high rate (>15%). What should I check first?

A1: This is a classic symptom of a model overfitting to sparse regions of chemical space. Follow this protocol:

- Diagnose Data Coverage: Calculate the Tanimoto similarity (using ECFP4 fingerprints) between 1000 randomly generated molecules from your model and their nearest neighbors in the training set. Create a histogram.

- Threshold: If >40% of generated molecules have a similarity >0.85 to a training set molecule, your model is likely memorizing and not generalizing.

- Immediate Action: Implement a Fréchet ChemNet Distance (FCD) validation step; a high FCD indicates poor distribution matching. Integrate a rule-based valency checker (e.g., RDKit's SanitizeMol) into your generation pipeline to filter invalid structures before validation.
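The data-coverage diagnosis and the 40% memorization threshold can be sketched without any chemistry library once fingerprints are available. This sketch assumes each fingerprint is a set of on-bit indices (such as you could extract from an RDKit Morgan radius-2, ECFP4-like, bit vector); the toy fingerprints are hypothetical, and plotting the histogram of nn_sims is left out.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)

def memorization_check(generated, training, sim_cutoff=0.85, max_fraction=0.40):
    """Nearest-neighbour similarity of each generated molecule to the training set."""
    nn_sims = [max(tanimoto(g, t) for t in training) for g in generated]
    frac_close = sum(s > sim_cutoff for s in nn_sims) / len(nn_sims)
    return nn_sims, frac_close, frac_close > max_fraction

# Hypothetical toy fingerprints (real ECFP4 vectors typically use 1024-2048 bits)
training = [set(range(1, 11)), {20, 21, 22, 23}]
generated = [set(range(1, 11)), set(range(1, 10)) | {11}, {30, 31, 32}]
nn_sims, frac_close, memorizing = memorization_check(generated, training)
print([round(s, 3) for s in nn_sims], frac_close, memorizing)
```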

Q2: My model's performance (e.g., predicted binding affinity) drops severely (>30% decrease in R²) when tested on a new scaffold series not present in the training data.

A2: This indicates catastrophic failure in generalization due to data sparsity in scaffold diversity.

- Root Cause Analysis: Perform a Bemis-Murcko scaffold analysis on your training vs. validation sets.

- Protocol: (a) Use RDKit to extract Bemis-Murcko scaffolds for all molecules. (b) Calculate the Jaccard similarity between the training and validation scaffold sets (Jaccard distance = 1 - similarity). (c) If scaffold overlap is <10% (Jaccard distance >0.9), your dataset is scaffold-sparse.

- Solution: Implement scaffold-based splitting for training/validation/testing to reveal this issue early. Remediate by incorporating transfer learning from a larger, more diverse chemical database or using data augmentation techniques like side-chain enumeration.
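Step (b) of the protocol can be computed directly from the two scaffold sets. A minimal sketch, assuming the scaffolds have already been extracted as SMILES strings (for example with RDKit's MurckoScaffold module); the scaffold lists are hypothetical.

```python
def scaffold_overlap(train_scaffolds, valid_scaffolds):
    """Jaccard similarity and distance between two collections of scaffold SMILES."""
    a, b = set(train_scaffolds), set(valid_scaffolds)
    union = a | b
    similarity = len(a & b) / len(union) if union else 0.0
    return similarity, 1.0 - similarity

# Hypothetical scaffold SMILES for the training and validation sets
train_scaffolds = ["c1ccccc1", "c1ccncc1", "C1CCNCC1"]
valid_scaffolds = ["c1ccncc1", "c1ccc2ccccc2c1", "C1CCOCC1"]
sim, dist = scaffold_overlap(train_scaffolds, valid_scaffolds)
print(f"overlap = {sim:.2f}, Jaccard distance = {dist:.2f}")
print("scaffold-sparse" if sim < 0.10 else "overlap above 10% threshold")
```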

Q3: During active learning cycles, my model's proposed molecules quickly converge to a narrow local optimum of chemical space, failing to explore novel regions.

A3: This is an exploration failure often stemming from an acquisition function over-exploiting sparse but high-scoring areas.

- Troubleshooting Step: Monitor the diversity of the proposed batch in each cycle using Average Pairwise Tanimoto Distance.

- Experimental Adjustment: Hybridize your acquisition function. Combine an exploitation term (e.g., expected improvement) with an explicit exploration term (e.g., predictive entropy or distance to the training set), after scaling both terms to comparable ranges. A weight parameter (β) controls the balance. Start with β=0.5 and adjust.
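A hybrid acquisition function can be sketched in a few lines. The exact combination is not specified above; this sketch assumes a convex blend (1 - β)·EI + β·novelty after min-max scaling each term across the candidate batch, uses the standard expected-improvement formula for maximizing a property under a Gaussian predictive distribution, and defines novelty as one minus the maximum Tanimoto similarity to the training set. The candidate values are hypothetical.

```python
import math

def expected_improvement(mu, sigma, best, xi=0.0):
    """EI for maximizing a property under a Gaussian prediction N(mu, sigma^2)."""
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (mu - best - xi) * cdf + sigma * pdf

def min_max(values):
    """Rescale values to [0, 1] so the two terms are comparable."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0] * len(values)
    return [(v - lo) / (hi - lo) for v in values]

def hybrid_scores(mus, sigmas, novelty, best, beta=0.5):
    """(1 - beta) * scaled EI + beta * scaled novelty."""
    ei = [expected_improvement(m, s, best) for m, s in zip(mus, sigmas)]
    return [(1.0 - beta) * e + beta * n for e, n in zip(min_max(ei), min_max(novelty))]

# Hypothetical candidates: predicted pIC50, predictive SD, and novelty
mus = [7.5, 7.0, 6.0]
sigmas = [0.1, 0.8, 0.3]
novelty = [0.1, 0.7, 0.9]
best_so_far = 7.2

for beta in (0.0, 0.5):
    scores = hybrid_scores(mus, sigmas, novelty, best_so_far, beta)
    print(beta, [round(s, 2) for s in scores])
```

With β = 0 the close analog with the highest predicted potency wins; at β = 0.5 the uncertain, more novel candidate ranks first.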

Q4: How can I quantify whether my molecular dataset is "too sparse" for a given model architecture (e.g., a large Graph Neural Network)?

A4: Use the following diagnostic table to correlate sparsity metrics with model behavior risks.

Table 1: Diagnostic Metrics for Data Sparsity in Molecular Datasets

| Metric | Calculation Method | Threshold Indicating High Risk | Associated Risk |
|---|---|---|---|
| Scaffold Diversity Index | Number of unique Bemis-Murcko scaffolds / total molecules | < 0.2 | Poor generalization to novel chemotypes. |
| Property Space Coverage | Principal Component Analysis (PCA) on molecular descriptors; calculate the convex hull volume of the training set. | > 20% of validation points lie more than 2 SD outside the training hull | Extrapolation errors and failed validation. |
| Nearest Neighbor Similarity | Mean Tanimoto similarity (ECFP4) of each validation molecule to its nearest training set neighbor. | Mean > 0.7 | Validation mostly tests close analogs, so the model can score well largely via memorization and performance estimates are inflated. |
| Activity Cliff Density | Proportion of molecule pairs with high similarity (Tanimoto > 0.85) but a large activity difference (> 100-fold in potency, i.e., ΔpIC50 > 2). | > 0.05 | Models will struggle to learn smooth structure-activity relationships. |
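Two of the metrics in Table 1 need only scaffolds, fingerprints, and activities. This sketch assumes fingerprints are sets of on-bit indices and reads "proportion of molecule pairs" as a fraction of all pairs (normalizing by the number of similar pairs instead is an equally plausible reading); the toy inputs are hypothetical.

```python
from itertools import combinations

def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)

def scaffold_diversity_index(scaffolds):
    """Unique Bemis-Murcko scaffolds divided by total molecules."""
    return len(set(scaffolds)) / len(scaffolds)

def activity_cliff_density(fps, pic50s, sim_cutoff=0.85, delta_cutoff=2.0):
    """Fraction of all pairs that are similar yet differ > 100-fold (dpIC50 > 2) in potency."""
    pairs = list(combinations(range(len(fps)), 2))
    cliffs = sum(
        1 for i, j in pairs
        if tanimoto(fps[i], fps[j]) > sim_cutoff and abs(pic50s[i] - pic50s[j]) > delta_cutoff
    )
    return cliffs / len(pairs)

scaffolds = ["c1ccccc1"] * 3 + ["c1ccncc1"] * 2
print(scaffold_diversity_index(scaffolds))  # 0.4

fps = [set(range(1, 11)), set(range(1, 12)), {20, 21, 22}]
pic50s = [5.0, 7.5, 6.0]
print(activity_cliff_density(fps, pic50s))
```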

Experimental Protocols

Protocol P1: Scaffold-Based Dataset Splitting for Sparsity Assessment

Objective: To create train/validation/test splits that accurately assess a model's ability to generalize to novel chemotypes.

Materials: RDKit, Pandas, NumPy.

Steps:

- For each molecule in the full dataset, generate its Bemis-Murcko scaffold using rdkit.Chem.Scaffolds.MurckoScaffold.GetScaffoldForMol(mol).

- Group all molecules by their unique scaffold.

- Sort scaffold groups by size (number of molecules).

- Implement iterative assignment: Starting with the largest scaffold group, assign all its molecules to the training set. Proceed to the next largest, assigning to the validation set, then test set, then back to training, in a round-robin fashion.

- This ensures scaffold groups are not split across sets, providing a rigorous test of generalization.
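The grouping and round-robin assignment can be sketched in plain Python. This sketch assumes the scaffold of each molecule has already been computed as a SMILES string (for example by passing the output of GetScaffoldForMol to Chem.MolToSmiles); the molecule IDs and scaffolds are hypothetical, and ties in group size are broken alphabetically so the split is reproducible. Note that round-robin assignment produces sets of roughly equal size; for an 80/10/10 split, a common alternative (used by DeepChem's ScaffoldSplitter) fills the training set with the largest groups first, then validation, then test.

```python
from collections import defaultdict

def round_robin_scaffold_split(mol_ids, scaffolds):
    """Assign whole scaffold groups to train/valid/test, largest group first."""
    groups = defaultdict(list)
    for mol_id, scaffold in zip(mol_ids, scaffolds):
        groups[scaffold].append(mol_id)
    # Largest groups first; ties broken by scaffold string for reproducibility
    ordered = sorted(groups.items(), key=lambda item: (-len(item[1]), item[0]))
    names = ["train", "valid", "test"]
    splits = {name: [] for name in names}
    for i, (_, members) in enumerate(ordered):
        splits[names[i % 3]].extend(members)
    return splits

mol_ids = ["m1", "m2", "m3", "m4", "m5", "m6", "m7"]
scaffolds = ["c1ccccc1"] * 3 + ["c1ccncc1"] * 2 + ["C1CCNCC1", "c1ccsc1"]
print(round_robin_scaffold_split(mol_ids, scaffolds))
# {'train': ['m1', 'm2', 'm3', 'm7'], 'valid': ['m4', 'm5'], 'test': ['m6']}
```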

Protocol P2: Calculating Fréchet ChemNet Distance (FCD) for Generative Model Validation

Objective: To quantify the statistical similarity between generated and real molecular distributions, beyond simple validity checks.

Materials: Pre-trained ChemNet model, TensorFlow/PyTorch, RDKit.

Steps:

- Generate Molecules: Sample a large set (e.g., 10,000) of molecules from your generative model. Filter for valid, unique molecules.

- Prepare Reference Set: Use an equivalent-sized random sample from your training data or a standard benchmark set (e.g., ChEMBL).

- Compute Activations: For both the generated and reference sets, compute the activations from the last hidden layer of ChemNet (a 512-dimensional vector per molecule).

- Calculate Statistics: Compute the mean (μ) and covariance (Σ) matrices for the two sets of activations.

- Compute FCD: FCD = ||μ₁ - μ₂||² + Tr(Σ₁ + Σ₂ - 2(Σ₁Σ₂)^(1/2)). A lower FCD indicates better distributional match.
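The statistics and FCD formula in the last two steps can be sketched with NumPy. Computing ChemNet activations is not shown; random arrays stand in for them. Many Fréchet-distance implementations use scipy.linalg.sqrtm for the matrix square root; this sketch instead relies on the fact that Tr((Σ₁Σ₂)^(1/2)) equals the sum of the square roots of the eigenvalues of Σ₁^(1/2) Σ₂ Σ₁^(1/2), which needs only NumPy.

```python
import numpy as np

def frechet_distance(act_a, act_b):
    """Fréchet distance between two (n_molecules, n_features) activation arrays."""
    mu_a, mu_b = act_a.mean(axis=0), act_b.mean(axis=0)
    sigma_a = np.cov(act_a, rowvar=False)
    sigma_b = np.cov(act_b, rowvar=False)
    # Symmetric square root of sigma_a via its eigendecomposition
    w, v = np.linalg.eigh(sigma_a)
    sqrt_a = (v * np.sqrt(np.clip(w, 0.0, None))) @ v.T
    # sqrt_a @ sigma_b @ sqrt_a shares its non-zero eigenvalues with sigma_a @ sigma_b,
    # so Tr((sigma_a sigma_b)^(1/2)) is the sum of the square roots of those eigenvalues
    m = sqrt_a @ sigma_b @ sqrt_a
    eigvals = np.clip(np.linalg.eigvalsh((m + m.T) / 2.0), 0.0, None)
    trace_sqrt = np.sqrt(eigvals).sum()
    diff = mu_a - mu_b
    return float(diff @ diff + np.trace(sigma_a) + np.trace(sigma_b) - 2.0 * trace_sqrt)

# Stand-in activations; in practice these come from ChemNet's last hidden layer (512-d)
rng = np.random.default_rng(0)
reference = rng.normal(size=(2000, 16))
generated = reference + 0.5  # same covariance, mean shifted by 0.5 in every dimension
print(frechet_distance(reference, reference))  # ~0
print(frechet_distance(reference, generated))  # ~16 * 0.25 = 4
```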

Visualizations

The Domino Effect of Sparsity in Molecular AI

Sparsity-Aware Model Development Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Addressing Data Sparsity

| Tool / Reagent | Provider / Library | Primary Function in Sparsity Context |
|---|---|---|
| RDKit | Open-Source Cheminformatics | Core library for scaffold analysis, fingerprint generation, molecule sanitization, and descriptor calculation. |
| DeepChem | Open-Source ML for Chemistry | Provides scaffold splitter functions, standard molecular datasets, and pre-built model architectures for fair benchmarking. |
| GuacaMol | BenevolentAI | Benchmark suite for generative models, including metrics for novelty, diversity, and distribution learning (FCD). |
| MOSES | Insilico Medicine | Benchmarking platform with standardized training data, metrics, and baselines to evaluate generalization. |
| ChemBERTa | Open-Source (Chithrananda et al., DeepChem community) | Pre-trained transformer model for molecular representation; enables transfer learning from large corpora to sparse target datasets. |
| Directed Message Passing Neural Network (D-MPNN) | MIT (Chemprop, open-source) | A robust GNN architecture often used as a strong baseline for property prediction, with scripts for scaffold splitting. |
| REINVENT | AstraZeneca (Open-Source) | Advanced generative framework for de novo design, suitable for implementing exploration-focused active learning cycles. |

| REINVENT | AstraZeneca (Open-Source) | Advanced generative framework for de novo design, suitable for implementing exploration-focused active learning cycles. |

Technical Support Center

Troubleshooting Guide: Common Experimental Issues in Molecular Property Prediction

Issue 1: Poor Model Performance Due to Sparse/Imbalanced Data

- Problem: Predictive models for properties like toxicity or binding affinity show high variance and poor generalization to new chemical space.

- Diagnosis: Check the distribution of your training data. Are certain property value ranges or molecular scaffolds underrepresented?

- Solution: Augment the training data or borrow information from larger datasets, for example:

  - SMILES Enumeration: Generate valid alternative (non-canonical) SMILES strings for the same molecule.

  - Atom/Bond Masking: Randomly mask atoms or bonds during training to force the model to learn robust representations.

  - Adversarial Generation: Use a generative model to create plausible synthetic molecules in the underrepresented regions of property space.

  - Transfer Learning: Pre-train your model on a large, diverse molecular dataset (e.g., ChEMBL, PubChem) before fine-tuning on your sparse, target-specific dataset.

Issue 2: Inconsistent Solubility Measurements Affecting Model Training

- Problem: Experimental solubility data from different sources (e.g., kinetic vs. thermodynamic solubility) are inconsistent, leading to noisy labels.

- Diagnosis: Compare the experimental protocol details for each data point. Inconsistent pH, temperature, or buffer composition are common culprits.

- Solution:

  - Curate Rigorously: Standardize data by filtering to a specific assay type (e.g., thermodynamic solubility at pH 7.4, 25°C).

  - Use Uncertainty Quantification: Train models that output a prediction interval alongside the point estimate, weighting data points by their reported experimental uncertainty.

  - Hierarchical Modeling: Build a model that first predicts the "assay condition" effect, then the intrinsic molecular property.
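One simple way to weight data points by their reported experimental uncertainty is inverse-variance weighting of the training loss, so noisy measurements pull less on the fit. A minimal sketch, assuming each label comes with a reported standard deviation; producing prediction intervals (for example with a Gaussian negative log-likelihood head) is not shown, and the values are hypothetical.

```python
def inverse_variance_weighted_mse(y_true, y_pred, sigma):
    """Mean squared error where each point is weighted by 1 / sigma^2."""
    weights = [1.0 / s ** 2 for s in sigma]
    weighted_sq = sum(w * (t - p) ** 2 for w, t, p in zip(weights, y_true, y_pred))
    return weighted_sq / sum(weights)

# Hypothetical log-solubility labels with reported experimental SDs
y_true = [-3.0, -4.5]
y_pred = [-2.5, -4.5]
sigma = [0.5, 0.1]  # the first measurement is much noisier
print(inverse_variance_weighted_mse(y_true, y_pred, sigma))
```

The 0.5 log-unit error on the noisy first point contributes far less than it would to an unweighted MSE (0.125).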

Issue 3: Disconnect Between In Vitro Binding Affinity and In Vivo Efficacy Predictions

- Problem: A model accurately predicts strong binding (low Ki/Kd) but compounds fail in animal models due to poor ADMET properties.

- Diagnosis: The optimization objective was too narrow. Binding affinity is only one component of the complex in vivo journey.

- Solution: Implement multi-objective optimization with Pareto ranking. Develop separate QSAR models for each critical ADMET property (e.g., CYP inhibition, hERG liability, metabolic stability) and optimize compounds across all fronts simultaneously.

Frequently Asked Questions (FAQs)

Q1: In my molecular optimization pipeline, how do I prioritize which property (e.g., solubility vs. binding affinity) to optimize first when data is limited for both?

A: Adopt a scaffold-centric, tiered approach. First, use available data (even if sparse) to identify molecular scaffolds with a minimal acceptable level for all key properties. Then, focus your data generation efforts (e.g., synthesis, testing) on optimizing the most critical deficiency within those promising scaffolds. This is more efficient than broadly optimizing a single property across all chemical space.

Q2: What are the most reliable experimental protocols to generate high-quality data for filling gaps in solubility and toxicity datasets?

A: Adopt standardized, high-throughput protocols:

- Solubility (Thermodynamic): Use the shake-flask method coupled with UV-plate reading or LC-MS quantification. A standardized protocol involves equilibrating the compound in phosphate buffer (pH 7.4) for 24 hours at 25°C, followed by filtration and concentration analysis.

- Early Toxicity (hERG liability): Use fluorescence-based membrane potential assays on engineered cell lines (e.g., HEK293-hERG) as a higher-throughput, cost-effective surrogate for patch-clamp electrophysiology in early screening.

Q3: Can I use predictive models trained on public data for my proprietary scaffold, and how accurate will they be?

A: You can use them as a starting point via transfer learning, but expect decreased accuracy (domain shift). The model's uncertainty estimates will be higher for scaffolds dissimilar to its training set. The recommended strategy is to fine-tune the public model on your proprietary data, even if it's a small set (e.g., 50-100 compounds). This typically yields better performance than training from scratch on your sparse data.

Q4: How do I visualize and analyze the trade-offs between optimizing multiple conflicting properties like potency and metabolic stability?

A: Use a Pareto front analysis. Plot your candidate molecules in a multi-dimensional property space (e.g., Binding Affinity vs. CLhep). The Pareto front consists of molecules where no single property can be improved without worsening another. Optimization should aim to push the front toward the ideal region of the plot.
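A Pareto front can be extracted with a pairwise dominance check. This sketch assumes every objective is expressed so that larger is better (here pIC50 and the negated hepatic clearance); the candidate names and values are hypothetical. The O(n²) loop is adequate for hundreds of candidates, and full Pareto ranking repeats the extraction on the remaining set.

```python
def dominates(a, b):
    """True if a is at least as good as b on every objective and strictly better on one.

    All objectives are expressed so that larger is better.
    """
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(candidates):
    """Names of candidates not dominated by any other candidate."""
    return [
        name for name, objs in candidates.items()
        if not any(dominates(other, objs)
                   for other_name, other in candidates.items() if other_name != name)
    ]

# Hypothetical candidates: (pIC50, -CLhep) so that both objectives are maximized
candidates = {
    "A": (8.0, -30.0),
    "B": (7.0, -10.0),
    "C": (6.5, -25.0),  # worse than B on both objectives
    "D": (8.2, -40.0),
}
print(pareto_front(candidates))  # ['A', 'B', 'D']
```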

Table 1: Comparison of Data Augmentation Techniques for Sparse Molecular Datasets

| Technique | Mechanism | Best For | Typical Increase in Effective Dataset Size | Key Limitation |
|---|---|---|---|---|
| SMILES Enumeration | Generating non-canonical (randomized) SMILES strings for the same molecule. | Simple QSAR models using string-based representations. | 2x - 10x | Does not create new chemical information. |
| Atom/Bond Masking | Randomly removing node/edge features during training. | Graph Neural Networks (GNNs). | N/A (regularization) | Can generate unrealistic "broken" molecules if over-applied. |
| Generative Model | Using VAEs/GANs to create novel molecules with desired properties. | Exploring entirely new regions of chemical space. | Can be large and targeted. | Risk of generating synthetically inaccessible structures. |
| Transfer Learning | Pre-training on a large general corpus, then fine-tuning on specific data. | All deep learning models when target data < 10,000 points. | Leverages millions of pre-training points. | Requires careful tuning to avoid catastrophic forgetting. |

Table 2: Standardized Experimental Protocols for Key Property Assays

| Property | Recommended Assay | Key Protocol Steps | Output Metric | Approx. HTS Capacity (compounds/week) |
|---|---|---|---|---|
| Aqueous Solubility | Thermodynamic Shake-Flask (UV) | 1. 24h equilibrium in pH 7.4 buffer. 2. Filtration (0.45 µm). 3. Quantification via UV calibration curve. | Solubility (µg/mL) | 500-1000 |
| Cytochrome P450 Inhibition | Fluorescent Probe Substrate | 1. Incubate human liver microsomes with compound & probe. 2. Measure fluorescence of metabolite. 3. Calculate IC50. | IC50 (µM) | 10,000+ |
| hERG Channel Liability | Fluorescence Membrane Potential | 1. Load engineered cells with voltage-sensitive dye. 2. Add compound. 3. Measure fluorescence shift. | % Inhibition at 10 µM | 5,000+ |
| Metabolic Stability | Microsomal Half-Life | 1. Incubate compound with liver microsomes & NADPH. 2. Sample at T = 0, 5, 15, 30, 45 min. 3. Analyze by LC-MS/MS for parent loss. | In vitro t1/2 (min), CLint (µL/min/mg) | 200-500 |
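The metabolic stability outputs follow from a first-order fit of parent loss: k is the negative slope of ln(% remaining) versus time, t1/2 = ln 2 / k, and CLint = k × incubation volume / microsomal protein. This sketch assumes 0.5 mg/mL microsomal protein (an illustrative value, not taken from the table) and uses synthetic depletion data at the sampling times listed above.

```python
import math

def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx

def microsomal_stability(times_min, pct_remaining, protein_mg_per_mL=0.5):
    """In vitro t1/2 (min) and CLint (µL/min/mg protein) from parent-depletion data."""
    slope, _ = fit_line(times_min, [math.log(p) for p in pct_remaining])
    k = -slope  # first-order depletion rate constant (1/min)
    t_half = math.log(2) / k
    clint = k * 1000.0 / protein_mg_per_mL  # 1000 µL per mL of incubation
    return t_half, clint

times = [0, 5, 15, 30, 45]
# Synthetic LC-MS/MS data following first-order loss with t1/2 = 30 min
pct = [100 * math.exp(-math.log(2) / 30 * t) for t in times]
t_half, clint = microsomal_stability(times, pct)
print(f"t1/2 = {t_half:.1f} min, CLint = {clint:.1f} µL/min/mg")  # t1/2 = 30.0 min, CLint = 46.2 µL/min/mg
```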

Visualizations

Diagram 1: Molecular Optimization Workflow Addressing Data Sparsity

Diagram 2: Key ADMET Property Interdependencies

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Molecular Property Research | Key Consideration for Data Sparsity |
|---|---|---|
| High-Throughput LC-MS/MS Systems | Quantification of compound concentration in solubility, metabolic stability, and permeability assays. | Enables rapid generation of high-quality, consistent data to fill dataset gaps. |
| Fluorescent Dye-Based Assay Kits (e.g., hERG, CYP450) | Higher-throughput surrogate for gold-standard assays to screen for early toxicity and DDI liability. | Allows profiling of thousands of compounds, expanding data coverage in under-explored chemical series. |
| Ready-to-Use Liver Microsomes & Hepatocytes | Standardized metabolic stability and metabolite identification studies. | Ensures experimental consistency across different labs/batches, reducing data noise. |
| Parallel Artificial Membrane Permeability Assay (PAMPA) Plates | Predict passive transcellular permeability in a high-throughput, low-cost format. | Enables permeability measurements on large physical compound sets, generating training data for in silico permeability models. |
| Graph Neural Network (GNN) Software (e.g., DGL, PyTorch Geometric) | Building deep learning models that directly learn from molecular graph structure. | Essential for applying transfer learning and data augmentation techniques to sparse datasets. |
| Active Learning Platform Software | Intelligently selects the next most informative compounds to synthesize and test. | Maximizes the value of each new data point, strategically reducing sparsity in key areas of chemical space. |

Technical Support Center

Troubleshooting Guides

Issue 1: High Sparsity in Public Bioactivity Matrices

- Symptoms: Machine learning models fail to train or show poor predictive performance. The compound-target activity matrix has over 90% missing values.

- Root Cause: Public repositories aggregate data from diverse sources with varying experimental protocols, targets, and measured endpoints, leading to inconsistent coverage.

- Resolution Steps:

- Filter by Confidence: Use only data points with high confidence. In ChEMBL, keep activities that carry a pChEMBL value and come from assays with a high target confidence score (for example, 9 for a directly assigned single protein). In PubChem BioAssay, use the activity outcome and activity score.

- Define a Unified Endpoint: Standardize activity measurements within a narrow experimental range (e.g., convert all of them to Ki or IC50 values in nM).

- Apply a Coverage Threshold: Retain only targets and compounds with data points above a minimum count (e.g., >50 distinct measurements). This creates a smaller but denser matrix for initial modeling.
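The iterative coverage filter can be sketched in plain Python as below. The sketch assumes the bioactivity data are already in long format as (compound_id, target_id, value) tuples. The function names and default thresholds are illustrative and do not come from any library. Removing sparse compounds can push a target below its threshold, and the reverse can also happen, so the filter repeats until the matrix stops changing.

```python
from collections import Counter

def coverage_filter(records, min_per_target=51, min_per_compound=51, max_rounds=20):
    """Iteratively drop targets and compounds with too few measurements.

    records: list of (compound_id, target_id, value) tuples (long format).
    Defaults of 51 encode "more than 50 measurements" (illustrative values).
    """
    kept = list(records)
    for _ in range(max_rounds):
        per_target = Counter(t for _, t, _ in kept)
        per_compound = Counter(c for c, _, _ in kept)
        filtered = [r for r in kept
                    if per_target[r[1]] >= min_per_target
                    and per_compound[r[0]] >= min_per_compound]
        if len(filtered) == len(kept):  # nothing removed: filter has converged
            break
        kept = filtered
    return kept

def density(records):
    """Fraction of filled cells in the compound x target matrix."""
    compounds = {c for c, _, _ in records}
    targets = {t for _, t, _ in records}
    n_cells = len(compounds) * len(targets)
    return len({(c, t) for c, t, _ in records}) / n_cells if n_cells else 0.0
```

Reporting `density` before and after filtering shows how much denser the retained matrix is.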

Issue 2: Inconsistent Data Merging from Multiple Sources

- Symptoms: Duplicate compound entries, conflicting activity values for the same compound-target pair, or loss of structural information.

- Root Cause: Differences in compound identifiers (name, SMILES, InChIKey), units, and assay descriptions.

- Resolution Steps:

- Standardize Identifiers: Use canonical SMILES or full InChIKey as the primary compound key. Use tools like RDKit for standardization.

- Resolve Conflicts: Implement a consensus rule (e.g., use the mean or median of reported values, or the value from the most trusted source).

- Preserve Metadata: Maintain a provenance log linking each data point to its original source and assay description.
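A minimal sketch of the consensus and provenance steps follows. It assumes identifiers have already been standardized, for example to canonical SMILES or InChIKey with RDKit. The dictionary field names are hypothetical. The median serves as the consensus rule. A spread column is kept so that strongly conflicting pairs can be reviewed instead of being silently averaged.

```python
import statistics
from collections import defaultdict

def merge_measurements(rows):
    """Collapse duplicate (compound, target) measurements into one consensus value.

    rows: iterable of dicts with hypothetical keys 'key' (standardized compound
    identifier), 'target', 'value' (e.g., pIC50) and 'source' (database/assay).
    """
    groups = defaultdict(list)
    for row in rows:
        groups[(row["key"], row["target"])].append(row)
    merged = []
    for (key, target), members in groups.items():
        values = [m["value"] for m in members]
        merged.append({
            "key": key,
            "target": target,
            "value": statistics.median(values),        # consensus rule
            "n_measurements": len(values),
            "spread": max(values) - min(values),        # flags conflicting reports
            "sources": sorted({m["source"] for m in members}),  # provenance log
        })
    return merged
```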

Issue 3: Proprietary Data Cannot Be Integrated with Public Data for Publication

- Symptoms: Need to benchmark internal models without disclosing confidential structures or activities.

- Root Cause: Legal and intellectual property restrictions prevent sharing of proprietary chemical structures and exact values.

- Resolution Steps:

- Use Descriptive Features: Train models on non-structural features (e.g., physicochemical properties, predicted descriptors) that can be shared.

- Report Aggregated Statistics: Publish only aggregate metrics (e.g., model performance distributions, sparsity statistics of the proprietary set compared to public sets) as shown in Table 1.

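- Apply Differential Privacy: Add calibrated noise to whatever is released, for example Laplace noise on aggregate statistics or DP-SGD during model training. Aggregate utility is retained while individual proprietary records stay confidential.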
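The sketch below makes the differential-privacy step concrete. It releases one aggregate statistic, a mean activity value, using the Laplace mechanism. It is an illustration under stated assumptions, not a complete privacy pipeline:

- values are clipped to a known range;
- the record count n is treated as public;
- epsilon is the privacy budget for this single release.

DP-SGD for model training is not shown.

```python
import numpy as np

def dp_mean(values, lower, upper, epsilon, rng=None):
    """Release the mean of `values` with epsilon-differential privacy (Laplace mechanism).

    Assumes n = len(values) is public. After clipping to [lower, upper],
    replacing one record changes the mean by at most (upper - lower) / n,
    which is the sensitivity used to scale the noise.
    """
    rng = np.random.default_rng() if rng is None else rng
    x = np.clip(np.asarray(values, dtype=float), lower, upper)
    sensitivity = (upper - lower) / len(x)
    noise = rng.laplace(loc=0.0, scale=sensitivity / epsilon)
    return float(x.mean() + noise)
```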

FAQs

Q1: What is the typical range of data matrix sparsity in public vs. proprietary datasets? A1: Sparsity is highly dependent on the specific data slice. A broad comparison is summarized below.

Table 1: Typical Sparsity in Molecular Datasets

| Dataset Type | Example Source | Typical Compound-Target Matrix Density | Key Sparsity Driver |

|---|---|---|---|

| Broad Public Repository | PubChem BioAssay | < 0.1% | Massive diversity of compounds and targets tested in single-point screens. |

| Curated Public Repository | ChEMBL (selective slices) | 1-5% | Focus on established target families; standardized data curation. |

| Proprietary HTS Database | Pharma Company Archive | 5-15% | Focused chemical libraries tested against internal target panels with lower target diversity. |

| Proprietary Lead Optimization | Pharma Project Data | 20-50% | Intensive testing of analog series against a primary target and key off-targets. |

Q2: What are the best practices for creating a benchmark dataset from ChEMBL to study sparsity? A2: Follow this experimental protocol for reproducible dataset creation.

Experimental Protocol 1: Constructing a Sparse Benchmark from ChEMBL

- Objective: Extract a standardized, realistically sparse bioactivity matrix for method development.

- Query: Use the ChEMBL web interface or API to retrieve all Ki and IC50 data for human targets belonging to the "Kinase" protein family.

- Standardization:

- Convert all values to nM, then to the -log10 molar scale (pKi/pIC50 = 9 - log10(value in nM)).

- For duplicate measurements, calculate the median pChEMBL value.

- Filter for compounds with a molecular weight between 200 and 600 Da.

- Matrix Formation: Create a compound vs. target matrix, where each cell contains the median pChEMBL value.

- Sparsity Calculation: Compute matrix density as (Number of Measured Data Points) / (Total Number of Cells).

- Output: A CSV file of the matrix and a report of key statistics (number of compounds, targets, density).
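The standardization, matrix-formation, and density steps can be sketched as follows. The sketch assumes the ChEMBL query and the molecular-weight filter have already produced (compound_id, target_id, value_nM) tuples. The function names are illustrative. Writing the matrix to CSV is left out to keep the example short.

```python
import math
import statistics
from collections import defaultdict

def nm_to_p(value_nm):
    """pKi / pIC50 = -log10(value in mol/L) = 9 - log10(value in nM)."""
    return 9.0 - math.log10(value_nm)

def build_matrix(records):
    """records: (compound_id, target_id, value_nM) tuples, already filtered.

    Duplicate (compound, target) pairs are collapsed to the median p-value.
    Returns the sparse matrix as a dict keyed by (compound, target), plus stats.
    """
    cells = defaultdict(list)
    for compound, target, value_nm in records:
        cells[(compound, target)].append(nm_to_p(value_nm))
    matrix = {pair: statistics.median(vals) for pair, vals in cells.items()}
    compounds = {c for c, _ in matrix}
    targets = {t for _, t in matrix}
    n_cells = len(compounds) * len(targets)
    stats = {
        "n_compounds": len(compounds),
        "n_targets": len(targets),
        "density": len(matrix) / n_cells if n_cells else 0.0,  # measured / total cells
    }
    return matrix, stats
```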

Q3: How can I simulate a proprietary data environment using only public data? A3: Use this protocol to create a realistic sparse "hold-out" test set.

Experimental Protocol 2: Simulating Proprietary-Style Blind Sets

- Start with a Dense Core: From your curated ChEMBL benchmark (from Protocol 1), filter to a denser sub-matrix (e.g., density >10%).

- Define a "Project Series": Cluster compounds using molecular fingerprints (ECFP4) and select the largest cluster as an "analog series".

- Create a "Confidential" Hold-Out: For a single high-value target T1 in the matrix, randomly select 30% of the activity values for the analog series. Treat these as "proprietary" and remove them from the public training matrix.

- Challenge: Train a model (e.g., a graph neural network or Random Forest on fingerprints) on the remaining "public" data. The goal is to predict the held-out values for the analog series on target T1, simulating the extrapolation challenge in lead optimization.
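The clustering and hold-out steps might look like the sketch below. It substitutes Taylor-Butina clustering on fingerprints stored as Python sets of on-bit indices. In practice these would come from RDKit Morgan fingerprints with radius 2, the ECFP4 analogue. The distance cutoff of 0.4 and the helper names are assumptions for illustration.

```python
import random

def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0

def butina_clusters(fps, cutoff=0.4):
    """Taylor-Butina clustering; cutoff is a Tanimoto distance (1 - similarity)."""
    n = len(fps)
    neighbors = [{j for j in range(n) if j != i and 1 - tanimoto(fps[i], fps[j]) <= cutoff}
                 for i in range(n)]
    unassigned = set(range(n))
    clusters = []
    # Candidate centroids are visited in order of decreasing neighbor count.
    for i in sorted(range(n), key=lambda k: len(neighbors[k]), reverse=True):
        if i not in unassigned:
            continue
        members = {i} | (neighbors[i] & unassigned)
        unassigned -= members
        clusters.append(sorted(members))
    return clusters

def make_holdout(series, measured_on_t1, fraction=0.3, seed=0):
    """Pick `fraction` of the series' measured T1 values as the 'confidential' blind set."""
    candidates = sorted(set(series) & set(measured_on_t1))
    k = round(fraction * len(candidates))
    return set(random.Random(seed).sample(candidates, k))
```

The analog series is then `max(clusters, key=len)`. Its held-out indices are removed from the "public" training matrix for target T1 only.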

Q4: What are essential reagent solutions for experiments in data sparsity research? A4: The following toolkit is required for computational studies in this domain.

Table 2: Research Reagent Solutions (Computational Toolkit)

| Item | Function | Example/Note |

|---|---|---|

| Chemical Standardization Tool | Converts diverse structural representations into a canonical form. | RDKit (Chem.MolFromSmiles, Chem.MolToSmiles, Chem.CanonSmiles). |

| Descriptor/Fingerprint Calculator | Generates numerical features from molecular structures for model input. | RDKit (ECFP4, Physicochemical Descriptors), Mordred. |

| Cheminformatics Database | Manages and queries large-scale chemical and bioactivity data. | PostgreSQL with RDKit cartridge, ChEMBL SQLite. |

| Sparse Matrix Library | Efficiently handles and computes operations on sparse matrices. | SciPy (scipy.sparse). |

| Imputation & Matrix Completion Library | Provides algorithms to fill missing values. | Scikit-learn (IterativeImputer), fancyimpute. |

| Deep Learning Framework (GNNs) | Builds models that learn directly from graph-structured molecular data. | PyTorch Geometric, DGL-LifeSci. |

Visualizations

Data Sparsity Analysis Workflow

Simulating a Proprietary Data Blind Test

From Theory to Bench: Modern Techniques to Combat Molecular Data Scarcity

Technical Support Center: Troubleshooting & FAQs

Thesis Context: This support center is framed within the ongoing research thesis "Addressing Data Sparsity in Molecular Optimization Datasets for Generative AI Models." The following guides address common experimental pitfalls when using generative models to overcome limited and sparse chemical data.

Frequently Asked Questions (FAQs)

Q1: My VAE for molecular generation only produces invalid SMILES strings or repeats the same structures. What could be wrong? A: This is a classic symptom of posterior collapse (the decoder ignores the latent code, which is the VAE counterpart of mode collapse) or of insufficient training. Sparse datasets often make both worse.

- Primary Checks:

- Data Preprocessing: Ensure your SMILES canonicalization and tokenization are consistent. A small, sparse dataset is highly sensitive to preprocessing noise.

- Latent Space Regularization: The Kullback–Leibler (KL) divergence weight in your loss function might be too high. A high weight pushes each approximate posterior onto the prior, so the latent codes carry little information and the decoder learns to ignore them. Try annealing the KL weight from 0 to its target value over the first several epochs.

- Decoder Capacity: A decoder that is too powerful can ignore the latent vector. Reduce network depth or use dropout.

- Protocol - KL Annealing:

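    β_final = 0.01      # your target KL weight
    warmup_epochs = 10  # example value: epochs over which β ramps linearly from 0 to β_final
    for epoch in range(total_epochs):
        β_current = min(β_final * (epoch / warmup_epochs), β_final)
        # reconstruction_loss and kl_loss are computed for each batch by your VAE
        loss = reconstruction_loss + β_current * kl_loss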
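For reference, the annealed weight multiplies a closed-form KL term, assuming a diagonal Gaussian encoder and a standard normal prior. That term can be written in NumPy as below. The sketch is framework-neutral; in a PyTorch model the same expression would be computed on tensors. The schedule defaults mirror the example values above.

```python
import numpy as np

def gaussian_kl(mu, logvar):
    """KL(N(mu, exp(logvar)) || N(0, I)), summed over latent dims, averaged over the batch."""
    kl = -0.5 * np.sum(1 + logvar - mu ** 2 - np.exp(logvar), axis=1)
    return float(kl.mean())

def kl_weight(epoch, beta_final=0.01, warmup_epochs=10):
    """Linear KL annealing from 0 to beta_final over warmup_epochs."""
    return min(beta_final * epoch / warmup_epochs, beta_final)

def vae_loss(reconstruction_loss, mu, logvar, epoch, **schedule):
    """Reconstruction term plus the annealed KL term."""
    return reconstruction_loss + kl_weight(epoch, **schedule) * gaussian_kl(mu, logvar)
```

Logging `gaussian_kl` separately during training is a quick collapse check: a KL that stays near zero means the latent code is being ignored.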

Q2: During GAN training for molecular generation, the generator loss drops to zero while the discriminator loss remains high, and no diverse molecules are produced. How can I fix this? A: This indicates a training imbalance where the generator exploits a weakness in the discriminator.

- Troubleshooting Steps:

- Update Ratio: Use an n_critic schedule in which the discriminator is updated 3-5 times for every generator update.

- Gradient Penalty: Replace Wasserstein GAN's weight clipping with a gradient penalty (WGAN-GP) to enforce Lipschitz continuity. This stabilizes training significantly.

- Label Smoothing: Apply one-sided label smoothing (e.g., use 0.9 for real data labels) to prevent the discriminator from becoming overconfident.

- Protocol - Gradient Penalty Loss (WGAN-GP):

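    # Given real_data, fake_data (same shape), critic D, lambda_gp (e.g., 10) and loss_D
    import torch

    fake_data = fake_data.detach()
    # One interpolation coefficient per sample, broadcast over all remaining dimensions
    alpha = torch.rand(real_data.size(0), *([1] * (real_data.dim() - 1)), device=real_data.device)
    interpolates = (alpha * real_data + (1 - alpha) * fake_data).requires_grad_(True)
    d_interpolates = D(interpolates)
    gradients = torch.autograd.grad(outputs=d_interpolates, inputs=interpolates,
                                    grad_outputs=torch.ones_like(d_interpolates),
                                    create_graph=True)[0]
    gradients = gradients.reshape(gradients.size(0), -1)  # one flat gradient vector per sample
    gradient_penalty = ((gradients.norm(2, dim=1) - 1) ** 2).mean()
    loss_D = loss_D + lambda_gp * gradient_penalty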

Q3: My diffusion model for 3D molecular generation produces molecules with incorrect bond lengths or steric clashes. What parameters should I adjust? A: This points to issues in the noise schedule or the denoising network's handling of geometric constraints.

- Key Adjustments:

- Noise Schedule: For 3D coordinates, use a cosine-based noise schedule rather than a linear one. It adds noise more gradually, which can help preserve geometric integrity during the reverse process.

- Loss Weighting: Incorporate auxiliary loss terms that penalize unrealistic bond lengths and angles directly during training, alongside the standard denoising score matching loss.

- Equivariance: Ensure your denoising network is E(3)-equivariant. Rotating, translating, or reflecting the input structure should transform its coordinate predictions consistently, while atom-type predictions stay unchanged. E(n) Equivariant Graph Neural Networks (EGNN) are a common choice for this.

- Protocol - Cosine Noise Schedule:

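    import math
    import torch

    def cosine_beta_schedule(timesteps, s=0.008):
        # alpha_bar(t) = cos^2(((t / T + s) / (1 + s)) * pi / 2), normalized so that alpha_bar(0) = 1
        steps = timesteps + 1
        x = torch.linspace(0, timesteps, steps)
        alphas_cumprod = torch.cos(((x / timesteps) + s) / (1 + s) * math.pi * 0.5) ** 2
        alphas_cumprod = alphas_cumprod / alphas_cumprod[0]
        # beta_t = 1 - alpha_bar(t) / alpha_bar(t - 1), clipped to avoid values near 1 at t = T
        betas = 1 - (alphas_cumprod[1:] / alphas_cumprod[:-1])
        return torch.clip(betas, 0, 0.999)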
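The auxiliary bond-length term mentioned under Loss Weighting could take the form below: a flat-bottom quadratic penalty on bonded distances. This is a hypothetical NumPy sketch. A training loop would compute the same quantity on tensors of denoised coordinates. The reference lengths and the 0.05 Å tolerance are assumptions.

```python
import numpy as np

def bond_length_penalty(coords, bonds, ref_lengths, tolerance=0.05):
    """Flat-bottom quadratic penalty on bond lengths (hypothetical auxiliary loss).

    coords: (n_atoms, 3) coordinates in Angstrom; bonds: (n_bonds, 2) atom indices;
    ref_lengths: (n_bonds,) reference lengths, e.g., from a table keyed by element
    pair and bond order. Deviations within +/- tolerance cost nothing.
    """
    coords = np.asarray(coords, dtype=float)
    bonds = np.asarray(bonds, dtype=int)
    d = np.linalg.norm(coords[bonds[:, 0]] - coords[bonds[:, 1]], axis=1)
    excess = np.maximum(np.abs(d - np.asarray(ref_lengths, dtype=float)) - tolerance, 0.0)
    return float(np.mean(excess ** 2))
```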

Q4: How can I quantitatively evaluate if my generated molecules are truly diverse and novel, not just memorized from a sparse training set? A: Relying on a single metric is insufficient. Use the following comparative table to design your evaluation suite.

Table 1: Quantitative Metrics for Evaluating Generative Molecular Models

| Metric | What it Measures | Target Value (Guide) | Tool/Library |

|---|---|---|---|

| Validity | % of chemically valid SMILES/structures | >95% (VAE), >99% (Diffusion) | RDKit |

| Uniqueness | % of unique molecules from a large sample (e.g., 10k) | >80% | Internal Calculation |

| Novelty | % of generated molecules not in training set | High, but context-dependent. >50% is a common benchmark. | Internal Calculation |

| Fréchet ChemNet Distance (FCD) | Distribution similarity between generated and training molecules in a learned chemical space. | Lower is better. Compare to a test set FCD for reference. | GuacaMol/chemnet_metrics |

| SA Score | Synthetic accessibility (1=easy, 10=hard) | <4.5 for drug-like molecules | RDKit (Contrib sascorer module) |

| QED | Quantitative Estimate of Drug-likeness | >0.6 for lead-like compounds | RDKit |

| NP Score | Natural-product-likeness | Varies by target; >0 for NP-inspired design | RDKit (Contrib NP_Score module) |
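Once a canonicalizer is available, validity, uniqueness, and novelty reduce to simple set computations. The sketch below roughly follows the convention used, for example, by MOSES:

- validity is computed over all generated strings;
- uniqueness is computed over valid molecules;
- novelty is computed over unique valid molecules.

The `canonicalize` callable is an assumption. It is typically a thin wrapper around RDKit that returns None for strings that fail to parse.

```python
def generation_metrics(generated, training_set, canonicalize):
    """Validity, uniqueness and novelty of a generated sample.

    canonicalize(smiles) -> canonical SMILES, or None if the string is invalid.
    training_set is assumed to contain canonical SMILES already.
    """
    canonical = [canonicalize(s) for s in generated]
    valid = [c for c in canonical if c is not None]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }
```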

Experimental Protocol: Benchmarking Models on Sparse Data

Objective: To compare the robustness of VAE, GAN, and Diffusion models when trained on progressively sparser subsets of the ZINC250k dataset.

Methodology:

- Dataset Creation: Start with the full ZINC250k dataset. Create stratified subsets representing 100%, 50%, 25%, and 10% of the data, ensuring chemical diversity is preserved in each subset.

- Model Training: Train a standard ChemVAE, an ORGAN (GAN), and a DiffLinker-type diffusion model on each subset. Use identical molecular representations within each model family: SMILES for VAE/GAN, and 3D graphs for diffusion. The 3D graphs require generating conformers first, because ZINC250k provides SMILES only. Use comparable parameter counts where possible.

- Evaluation: For each trained model, generate 10,000 molecules. Evaluate them using the metrics in Table 1. Pay special attention to Novelty and FCD as indicators of performance under data sparsity.

- Analysis: Plot metric performance (y-axis) against training set size (x-axis) for each model architecture to identify which degrades more gracefully.
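One way to build the nested, diversity-preserving subsets is stratified sampling over precomputed cluster labels, sketched below. The cluster labels (for example, scaffold or fingerprint clusters) are assumed to exist already. Each cluster is shuffled once and every subset takes a prefix. This makes the 10% subset a strict subset of the 25% subset, and so on, so differences between runs reflect data size rather than a different random draw. The 100% condition is simply the full dataset.

```python
import random
from collections import defaultdict

def nested_stratified_subsets(cluster_labels, fractions=(0.5, 0.25, 0.10), seed=0):
    """Nested index subsets that keep each cluster's share of the data.

    cluster_labels: one label per molecule. At least one member per cluster is
    kept, which slightly inflates the smallest subsets when clusters are tiny.
    """
    rng = random.Random(seed)
    by_cluster = defaultdict(list)
    for idx, label in enumerate(cluster_labels):
        by_cluster[label].append(idx)
    for members in by_cluster.values():
        rng.shuffle(members)  # shuffle once, then take prefixes -> nested subsets
    subsets = {}
    for frac in fractions:
        chosen = []
        for members in by_cluster.values():
            chosen.extend(members[:max(1, round(frac * len(members)))])
        subsets[frac] = sorted(chosen)
    return subsets
```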

Visualizations

Title: Experimental Workflow for Benchmarking Models on Sparse Data

Title: VAE Architecture for Molecular Generation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Libraries for Generative Molecular Design

| Item/Software | Primary Function | Application in De Novo Design |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit. | Core molecule handling: SMILES I/O, validity checks, descriptor calculation (QED, SA, etc.), fingerprint generation. |

| PyTorch / TensorFlow | Deep learning frameworks. | Building, training, and deploying VAE, GAN, and Diffusion model architectures. |

| GuacaMol / MOSES | Benchmarking suites for molecular generation. | Provides standardized datasets, metrics, and baselines for fair model comparison. |

| Environments (Conda, Docker) | Dependency and environment management. | Ensures reproducibility of complex computational experiments across different systems. |

| Molecular Dynamics (MD) Software (e.g., GROMACS, OpenMM) | Simulates physical movements of atoms and molecules. | Used for post-generation refinement and validation of 3D molecular structures (especially from diffusion models). |

| High-Performance Computing (HPC) Cluster or Cloud GPU (e.g., AWS, GCP) | Provides significant parallel computing power. | Typically required to train diffusion models and large GANs in a feasible timeframe. |

| Weights & Biases (W&B) / TensorBoard | Experiment tracking and visualization. | Logs training loss curves, hyperparameters, and generated molecule samples for analysis and debugging. |

Technical Support Center

Troubleshooting Guides & FAQs

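Q1: During SMILES enumeration for my QSAR model, I am experiencing a drastic increase in dataset size, leading to memory errors. How can I manage this? A: This is a common issue. Cap the number of randomized SMILES per molecule and remove duplicate variants as plain strings before scaling. Do not canonicalize the variants themselves: canonicalization maps every variant of a molecule back to the same string and undoes the augmentation. Use RDKit's canonical SMILES only as a parent key for grouping. Generating variants on the fly for each epoch, instead of storing the full enumerated set, also keeps memory bounded. For extreme cases, employ a two-stage approach: first enumerate a subset, train a preliminary model, and use it to filter low-probability SMILES before full enumeration.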
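A memory-bounded version of the enumeration step can be written as a generator, as sketched below. The `randomize` callable is an assumption. With RDKit, it could parse the SMILES and call Chem.MolToSmiles(mol, canonical=False, doRandom=True). Only one parent's variants are held in memory at a time, and duplicates are removed as raw strings rather than by canonicalization.

```python
def enumerate_variants(smiles_list, randomize, n_variants=10, max_attempts=50):
    """Yield (parent_smiles, variant) pairs lazily, at most n_variants per parent.

    The parent itself counts as the first variant. Variants are deduplicated as
    strings and deliberately NOT canonicalized (that would collapse them all).
    """
    for parent in smiles_list:
        seen = {parent}
        yield parent, parent
        for _ in range(max_attempts):
            if len(seen) >= n_variants:
                break
            variant = randomize(parent)
            if variant not in seen:
                seen.add(variant)
                yield parent, variant
```

Because the output is a stream, it can feed a training data loader directly without ever materializing the full augmented dataset.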

Q2: When applying atomic perturbation (e.g., atom substitution), my generated molecules are often chemically invalid or unstable. What are the best practices?

A: Always combine stochastic perturbation with valency and chemical rule checks. Use a fragment library derived from known drug-like molecules (e.g., BRICS fragments in RDKit) for substitutions instead of single atoms. Post-generation, filter molecules using a combined rule set (e.g., RDKit's SanitizeMol check, removal of molecules with unspecified stereo centers, and basic synthetic accessibility score thresholds).

Q3: 3D conformer generation for large datasets is computationally prohibitive. What are efficient alternatives? A: For initial screening phases, use fast distance-geometry methods (e.g., RDKit's ETKDGv3) with few conformers per molecule and only a small number of force-field minimization iterations. Reserve higher-quality conformer ensembles (e.g., Open Babel force-field optimization or CREST) for your final, top-ranked candidates. Consider using a representative conformer for highly similar molecules within a cluster.

Q4: I've augmented my dataset, but my molecular property prediction model's performance on the original test set has degraded. Why? A: This indicates potential distribution shift or introduction of noise. Verify the chemical space of your augmented data. Use a dimensionality reduction technique (like t-SNE) to visualize original vs. augmented molecules. Ensure your augmentation strategy preserves the core activity-determining scaffolds. Implement a weighted loss function that gives slightly higher importance to original, experimentally-validated data points.
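The weighted loss suggested above can be as simple as a per-sample weight on the squared error. In this NumPy sketch, the weight of 1.5 for original data points is an illustrative choice. The weights are normalized so that the loss scale does not depend on how much augmented data was added.

```python
import numpy as np

def weighted_mse(pred, target, is_original, original_weight=1.5):
    """MSE in which original, experimentally validated points count more than augmented ones.

    is_original: boolean mask, True for original data points.
    """
    pred, target = np.asarray(pred, float), np.asarray(target, float)
    w = np.where(np.asarray(is_original, bool), original_weight, 1.0)
    return float(np.sum(w * (pred - target) ** 2) / np.sum(w))
```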

Q5: How do I choose the optimal augmentation strategy for my specific molecular optimization task? A: The choice is context-dependent. Use the following diagnostic table:

| Primary Challenge | Recommended Augmentation Strategy | Key Parameter to Tune |

|---|---|---|

| Very small dataset (< 100 compounds) | 3D Conformer Generation + SMILES Enumeration | Number of conformers per molecule; Enumeration depth |

| Limited scaffold diversity | Atomic & Bond Perturbation (using BRICS) | Maximum fragment size; Permissible bond types |

| Need for robust stereo-chemical modeling | 3D Conformer Generation | RMSD threshold for diversity; Force field used |

| Training a generative model (VAE, etc.) | SMILES Enumeration | Canonicalization (Yes/No); Use of randomized SMILES |

Experimental Protocols

Protocol 1: Standardized SMILES Enumeration & Canonicalization Workflow

- Input: A list of canonical SMILES strings.

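- Enumeration: For each SMILES, parse it with rdkit.Chem.MolFromSmiles(). Then call rdkit.Chem.MolToSmiles(mol, canonical=False, doRandom=True) in a loop to generate randomized SMILES. Set the number of variants per molecule (e.g., 10-50).

- Canonicalization: Compute rdkit.Chem.MolToSmiles(mol, canonical=True) once per parent and use it as a grouping key. It also confirms that each variant parses back to the same molecule. Keep the randomized strings themselves as the augmented data, because canonicalizing them would collapse every variant back to the input SMILES.

- Deduplication: Merge all lists and remove duplicate SMILES strings using a set operation.

- Validation: Sanitize all resulting molecules (Chem.SanitizeMol()). Discard any that fail.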

Protocol 2: Atomic Perturbation via BRICS Fragment Decomposition & Recombination

- Fragment Library Creation: Decompose your entire dataset (or a large drug database like ChEMBL) using RDKit's BRICS.BRICSDecompose() function.

- Filtering: Filter fragments by frequency and size (e.g., keep fragments appearing >5 times, with 3-10 heavy atoms).

- Perturbation: For a target molecule, identify all cleavable BRICS bonds (e.g., with BRICS.FindBRICSBonds()). Randomly select one bond to break, splitting the molecule into two fragments.

- Recombination: Replace one of the generated fragments with a compatible fragment from the library, meaning one whose BRICS attachment-point label matches. Then reassemble the molecule with BRICS.BRICSBuild().

- Sanitization & Filtering: Sanitize the new molecule. Apply drug-likeness filters (e.g., Lipinski's Rule of Five, PAINS filter via RDKit's FilterCatalog).
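The frequency and size filter in the Filtering step can be sketched independently of RDKit, as below. The sketch makes two assumptions:

- Each molecule's BRICS decomposition is already available as a collection of fragment SMILES.
- `heavy_atoms` is a hypothetical helper that returns the heavy-atom count without the dummy attachment atoms.

A fragment is counted once per molecule, so the count reflects how many molecules contain it.

```python
from collections import Counter

def build_fragment_library(decompositions, heavy_atoms, min_count=6, size_range=(3, 10)):
    """Keep BRICS fragments that recur and have a reasonable size.

    decompositions: one iterable of fragment SMILES per molecule.
    heavy_atoms: hypothetical callable, fragment SMILES -> heavy-atom count.
    min_count=6 encodes "appearing more than 5 times".
    """
    counts = Counter(frag for frags in decompositions for frag in set(frags))
    lo, hi = size_range
    return {frag: n for frag, n in counts.items()
            if n >= min_count and lo <= heavy_atoms(frag) <= hi}
```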

Protocol 3: High-Throughput 3D Conformer Generation with ETKDGv3

- Input Preparation: Start with a sanitized RDKit molecule object. Add hydrogens (Chem.AddHs(mol)).

- Parameter Setting: Create the parameters with params = AllChem.ETKDGv3(). Set params.pruneRmsThresh = 0.5 (for diversity) and params.useRandomCoords = True, and request numConfs = 50.

- Generation: Call AllChem.EmbedMultipleConfs(mol, numConfs=numConfs, params=params).

- Minimization (Optional but Recommended): Perform a quick MMFF94 force field minimization with a low maximum iteration count (e.g., AllChem.MMFFOptimizeMoleculeConfs(mol, maxIters=200)) to resolve clashes.

- Selection: Select the minimum energy conformer, or a diverse subset based on RMSD clustering.
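For the Selection step, one simple option is a greedy energy-ordered filter, sketched below in NumPy. It assumes that per-conformer energies (for example from the MMFF minimization) and a symmetric matrix of pairwise RMSD values have already been computed. The 0.5 Å threshold mirrors the pruning threshold used above, and n_select is an illustrative parameter.

```python
import numpy as np

def select_conformers(energies, rmsd, n_select=5, rmsd_thresh=0.5):
    """Walk conformers from lowest to highest energy and keep one only if it lies
    more than rmsd_thresh (Angstrom) from every conformer already kept.

    energies: (n,) energies; rmsd: (n, n) symmetric pairwise RMSD matrix.
    """
    rmsd = np.asarray(rmsd, dtype=float)
    kept = []
    for i in np.argsort(energies):
        if all(rmsd[i, j] > rmsd_thresh for j in kept):
            kept.append(int(i))
        if len(kept) == n_select:
            break
    return kept
```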

Visualization

Data Augmentation Workflow for Molecular Datasets

SMILES Enumeration & Canonicalization Process

The Scientist's Toolkit: Research Reagent Solutions

| Item / Software | Primary Function in Augmentation | Key Consideration |

|---|---|---|

| RDKit | Core cheminformatics toolkit for SMILES I/O, canonicalization, fragmentation, conformer generation, and molecular property calculation. | Open-source. Use the latest stable release for bug fixes and new algorithms (e.g., ETKDGv3). |

| Open Babel | Tool for converting file formats, energy minimization, and conformer generation. Useful as a cross-check for RDKit results. | Command-line interface is powerful for batch processing in pipelines. |

| CREST | Advanced, automated conformer-rotamer ensemble sampling driven by semiempirical GFN methods (GFN2-xTB by default; the GFN-FF force field is a faster option). | Computationally expensive. Use for final validation or high-accuracy conformational analysis on small sets. |

| BRICS Fragments | A systematic methodology to define and break molecules into meaningful, recombinable fragments. | Building a relevant, project-specific fragment library from known actives yields more realistic perturbations. |

| MMFF94/MMFF94s | Force fields used for quick geometry optimization and energy scoring of generated 3D conformers. | Not suitable for all chemistries (e.g., organometallics). Always visually inspect critical molecules. |

| PCA & t-SNE | Dimensionality reduction techniques to visualize the chemical space of original vs. augmented datasets. | Essential for diagnosing distribution shift and ensuring augmentation expands space meaningfully. |

Technical Support Center: Troubleshooting for Molecular Optimization Research

FAQs & Troubleshooting Guides

Q1: My fine-tuned molecular property predictor is performing poorly on a small target dataset despite using a pre-trained model. What could be wrong? A: This is a classic symptom of catastrophic forgetting or excessive domain shift. Follow this protocol:

- Diagnose: Use the pre-trained model to embed a sample of the pre-training corpus (e.g., ZINC20) and your small target dataset. Compare the two sets of representations with t-SNE. Clear separation between them indicates domain shift.

- Mitigate: Implement progressive unfreezing or differential learning rates. Use a lower learning rate for earlier layers of the network to preserve general chemical knowledge, and a higher rate for the final task-specific layers.

- Regulate: Apply strong regularization (e.g., dropout >0.5, weight decay) and consider adversarial domain adaptation techniques to align feature distributions.
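The t-SNE check above is qualitative. A quantitative complement is the squared maximum mean discrepancy (MMD) between the two embedding sets; MMD is a different technique from t-SNE, not part of the original protocol. Larger values mean the target data sit further from the pre-training distribution. The NumPy sketch below uses a biased estimator and the median heuristic for the RBF bandwidth, both of which are assumptions.

```python
import numpy as np

def rbf_mmd2(x, y, gamma=None):
    """Biased MMD^2 estimate between embedding sets x (n, d) and y (m, d),
    using the RBF kernel k(a, b) = exp(-gamma * ||a - b||^2).
    If gamma is None, the median heuristic on pooled squared distances is used."""
    x, y = np.asarray(x, float), np.asarray(y, float)
    z = np.vstack([x, y])
    sq = np.sum(z ** 2, axis=1)
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2 * z @ z.T, 0.0)
    if gamma is None:
        med = np.median(d2[np.triu_indices_from(d2, k=1)])
        gamma = 1.0 / med if med > 0 else 1.0
    k = np.exp(-gamma * d2)
    n = len(x)
    return float(k[:n, :n].mean() + k[n:, n:].mean() - 2 * k[:n, n:].mean())
```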

Q2: How do I choose between a Transformer-based (e.g., ChemBERTa) and a Graph Neural Network-based (e.g., Pretrained GNN) pre-trained model for my molecular optimization task? A: The choice depends on your data representation and task.

- Use Transformer-based models if your data is primarily in SMILES or SELFIES string format, and your task involves sequence-based generation or property prediction from 1D representations.

- Use GNN-based models if you are working with molecular graphs directly, and your task critically depends on explicit spatial/structural relationships (e.g., bond angles, 3D conformation) for predicting properties like binding affinity.

Table 1: Comparison of Pre-trained Model Architectures for Molecular Tasks

| Model Type | Example | Best For Data Format | Key Strength | Typical Target Task |

|---|---|---|---|---|

| Transformer | ChemBERTa, MolT5 | SMILES, SELFIES (Sequences) | Capturing long-range dependencies in linear notation | Text-based generation, reaction prediction |

| Graph Neural Network | Pretrained GNN, GraphMVP | Molecular Graphs (2D/3D) | Explicit modeling of topology and geometry | Structure-based property prediction, conformer generation |

| Hybrid | MoleculeGPT | Graphs + Sequences | Flexibility in input modality | Multi-modal molecular design |

Q3: During transfer learning, my model's generated molecules are valid but chemically unreasonable. How can I improve novelty while maintaining realism? A: This indicates the model is overfitting to the patterns in the small target dataset. Implement a reinforcement learning (RL) fine-tuning loop with a combined reward:

- Reward Function: R = α * R_property + β * R_similarity + γ * R_validity + δ * R_novelty.

- Protocol: Start from the fine-tuned model. Use policy gradient methods (e.g., PPO) to update the model to maximize the reward. The pre-trained model's output distribution can serve as a prior to penalize divergence from realistic chemical space.
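The reward and the prior-based penalty can be sketched as two small functions. The following are illustrative choices, not a specification from the original text:

- the component names;
- the assumption that each component is scaled to [0, 1];
- the KL-style penalty kl_coef * (log π(x) - log π_prior(x)), the per-sample form commonly added to rewards in PPO fine-tuning.

```python
def combined_reward(components, weights):
    """R = alpha*R_property + beta*R_similarity + gamma*R_validity + delta*R_novelty.

    components and weights are dicts keyed by the same (illustrative) names;
    each component is assumed to be pre-scaled to [0, 1].
    """
    return sum(weights[name] * components[name] for name in weights)

def prior_regularized_reward(reward, logp_agent, logp_prior, kl_coef=0.1):
    """Subtract kl_coef * (log pi(x) - log pi_prior(x)), discouraging the fine-tuned
    policy from drifting far from the pre-trained model's distribution."""
    return reward - kl_coef * (logp_agent - logp_prior)
```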

Experimental Protocol: Fine-tuning a Pre-trained GNN for a Sparse Toxicity Prediction Dataset

Objective: Adapt a GNN pre-trained on 10M unlabeled molecules (from PubChem) to predict hepatotoxicity using a proprietary dataset of only 500 labeled compounds.

Materials & Workflow:

Fine-tuning a Pre-trained GNN for Sparse Toxicity Data

Protocol Steps:

- Data Preparation: Featurize your 500 molecules into graph representations (nodes: atoms, edges: bonds) matching the pre-trained model's input schema. Apply a scaffold split (80/10/10) so that the test set contains novel molecular backbones.

- Model Initialization: Load the pre-trained GNN weights. Replace the final prediction head with a randomly initialized layer suited for your binary classification task.

- Staged Fine-tuning:

- Phase 1: Freeze all layers except the final prediction head. Train for 20 epochs with a relatively high learning rate (e.g., 1e-3), since only the randomly initialized head is being fitted.

- Phase 2: Unfreeze the last 2-3 graph convolutional layers of the GNN. Train for 50+ epochs with a lower learning rate (e.g., 1e-5 to 1e-4) to avoid overwriting pre-trained features, using early stopping on the validation loss.

- Regularization: Use high dropout (0.6) on the penultimate layer and weight decay (1e-4) to prevent overfitting.

- Evaluation: Report AUC-ROC and precision-recall metrics (e.g., PR-AUC) on the scaffold-separated test set. Use t-SNE plots to visualize latent space alignment.
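The scaffold split in the Data Preparation step can be sketched as below. The sketch assumes a scaffold key has already been computed for each molecule, for example a Bemis-Murcko scaffold SMILES from RDKit's MurckoScaffold module. Whole scaffold groups are assigned, largest first, so no scaffold is shared between splits. With only 500 compounds, the resulting validation and test sizes can deviate noticeably from 10%.

```python
from collections import defaultdict

def scaffold_split(scaffolds, frac_train=0.8, frac_valid=0.1):
    """Assign whole scaffold groups, largest first, to train until it is full,
    then to validation, then to test. Returns three lists of molecule indices."""
    groups = defaultdict(list)
    for idx, scaf in enumerate(scaffolds):
        groups[scaf].append(idx)
    # Largest groups first; ties broken by first occurrence for determinism.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n = len(scaffolds)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test
```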

Q4: What are the key computational resources and research reagents for setting up a transfer learning pipeline in molecular AI? A: The following toolkit is essential:

Table 2: Research Reagent Solutions for Molecular Transfer Learning

| Item / Reagent | Function / Purpose | Example / Specification |

|---|---|---|

| Pre-trained Model Weights | Provides foundational knowledge of chemical space; starting point for transfer learning. | ChemBERTa-2 (77M params), pre-trained GNN weights from Hu et al. (pretrain-gnns, benchmarked on MoleculeNet), GROVER-base. |

| Curated Target Dataset | Small, high-quality labeled data for the specific downstream task (e.g., solubility, binding affinity). | Proprietary assay data, cleaned subsets of ChEMBL (e.g., a solubility set of <500 compounds). |

| Chemical Validation Suite | Ensures generated molecules are chemically valid and realistic. | RDKit (for SMILES validity, synthetic accessibility score), FCD (Fréchet ChemNet Distance) for distributional similarity. |

| Differentiable Molecular Representation | Enables gradient-based optimization. | SELFIES (100% validity), DeepSMILES, or differentiable graph representations via DGL/PyG. |

| High-Performance Computing (HPC) Node | Handles the computational load of model fine-tuning and generation. | GPU with >16GB VRAM (e.g., NVIDIA A100, V100), CUDA/cuDNN support. |

| Hyperparameter Optimization Framework | Systematically finds optimal fine-tuning settings for small data. | Ray Tune, Weights & Biases Sweeps, or Optuna. |

From Large Corpus to Specific Task via Transfer Learning

Technical Support Center: Troubleshooting & FAQs

This support center provides guidance for common issues encountered when implementing active learning (AL) and Bayesian optimization (BO) loops for molecular optimization.

Frequently Asked Questions (FAQs)

Q1: My acquisition function (e.g., Expected Improvement, Upper Confidence Bound) fails to select diverse candidates and gets stuck in a local region of chemical space. How can I encourage exploration?

A: This is a common issue of over-exploitation. Implement a hybrid acquisition strategy. Add an explicit diversity-promoting term, such as a kernel-based repulsion from already-selected points. Alternatively, use a batch selection method like q-EI or Thompson Sampling with a penalization for similarity within the batch. Periodically inject random or space-filling samples (e.g., 5-10% of each batch) to refresh the model's exploration.
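Below is a minimal sketch of the diversity-penalized batch selection described above. Candidates are picked greedily by acquisition score minus a penalty proportional to their maximum Tanimoto similarity to molecules already in the batch. Fingerprints are assumed to be Python sets of on-bit indices, and the penalty weight is a tunable, illustrative parameter.

```python
def tanimoto(a, b):
    """Tanimoto similarity of fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0

def select_diverse_batch(scores, fps, batch_size, penalty=1.0):
    """Greedily add the candidate with the highest acquisition score minus
    penalty * (max Tanimoto similarity to molecules already in the batch)."""
    batch = []
    remaining = list(range(len(scores)))
    while remaining and len(batch) < batch_size:
        adjusted = [scores[i] - penalty * max((tanimoto(fps[i], fps[j]) for j in batch),
                                              default=0.0)
                    for i in remaining]
        best = remaining[adjusted.index(max(adjusted))]
        batch.append(best)
        remaining.remove(best)
    return batch
```

With penalty=0 this reduces to plain top-K selection; increasing it trades acquisition value for structural diversity within the batch.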

Q2: The Gaussian Process (GP) model surrogate becomes computationally intractable as my dataset grows beyond a few thousand molecules. What are my options? A: For scalability, consider these alternatives:

- Sparse Gaussian Processes: Use inducing point methods (SVGP) to approximate the full GP.

- Bayesian Neural Networks (BNNs): They scale better with data and can capture complex, non-stationary patterns.

- Random forest surrogates: As used in SMAC3, with uncertainty estimated from the spread of predictions across the individual trees.

- Deep Kernel Learning: Combine neural network feature extractors with a Gaussian process layer.

Q3: How do I handle mixed, non-numerical molecular representations (like SMILES strings and numerical descriptors) within the BO framework? A: You must use a kernel function capable of handling your representation.

- For SMILES/Strings: You have three options. Use a true string kernel (e.g., a subsequence kernel over SMILES characters). Convert the strings to Morgan fingerprints and use a Tanimoto kernel. Or use a pre-trained molecular deep learning model (e.g., ChemBERTa) to generate continuous latent vectors, then apply a standard kernel (e.g., RBF) on those vectors.

- For Graphs: Use a graph kernel or, more commonly, a graph neural network (GNN) as a feature extractor.

- For Mixed Inputs: Construct a composite kernel that is the sum or product of kernels defined on different feature subsets.
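The NumPy sketch below illustrates the fingerprint and composite-kernel options. It computes a Tanimoto kernel on binary fingerprint matrices and multiplies it by an RBF kernel on descriptor vectors. The function names and the lengthscale default are assumptions. In a GP library, the same expressions would be wrapped as a custom kernel.

```python
import numpy as np

def tanimoto_kernel(X, Y):
    """Tanimoto (Jaccard) kernel between binary fingerprint matrices X (n, d) and Y (m, d)."""
    X, Y = np.asarray(X, float), np.asarray(Y, float)
    inter = X @ Y.T
    union = X.sum(1)[:, None] + Y.sum(1)[None, :] - inter
    return np.divide(inter, union, out=np.ones_like(inter), where=union > 0)

def rbf_kernel(A, B, lengthscale=1.0):
    """RBF kernel on (scaled) numerical descriptors."""
    A, B = np.asarray(A, float), np.asarray(B, float)
    d2 = np.sum(A ** 2, 1)[:, None] + np.sum(B ** 2, 1)[None, :] - 2 * A @ B.T
    return np.exp(-0.5 * np.maximum(d2, 0.0) / lengthscale ** 2)

def product_kernel(fp_a, desc_a, fp_b, desc_b, lengthscale=1.0):
    """Composite kernel on mixed inputs: Tanimoto(fingerprints) * RBF(descriptors).
    A product of positive semidefinite kernels is itself positive semidefinite."""
    return tanimoto_kernel(fp_a, fp_b) * rbf_kernel(desc_a, desc_b, lengthscale)
```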

Q4: The performance of my AL/BO loop is highly sensitive to the initial "seed" set of molecules. How can I make it more robust? A: The quality of the initial dataset is critical in data-sparse regimes.

- Initialization Protocol: Do not use random selection. Employ space-filling designs (e.g., Latin Hypercube Sampling) on your molecular descriptor space or cluster-based sampling from a large, unlabeled pool to maximize initial diversity.

- Robustness Check: Always run multiple optimization loops with different, carefully chosen initial sets to assess variability. Report the median and interquartile range of your final outcomes.
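The cluster-based or space-filling initialization can be approximated with MaxMin (farthest-point) picking, a different technique sketched below. Unlike Latin Hypercube Sampling, it selects actual pool molecules, so no generated points need to be mapped back to molecules. Descriptors are assumed to be scaled to comparable ranges. Running it with different start indices gives the different initial sets needed for the robustness check.

```python
import numpy as np

def maxmin_seed(X, n_select, start=0):
    """MaxMin (farthest-point) selection of a diverse seed set.

    X: (n, d) scaled descriptor matrix of the unlabeled pool. Each step adds the
    point whose distance to its nearest already-selected point is largest.
    """
    X = np.asarray(X, float)
    n_select = min(n_select, len(X))
    selected = [start]
    min_dist = np.linalg.norm(X - X[start], axis=1)
    min_dist[start] = -np.inf  # never re-pick a selected point
    while len(selected) < n_select:
        nxt = int(np.argmax(min_dist))
        selected.append(nxt)
        min_dist = np.minimum(min_dist, np.linalg.norm(X - X[nxt], axis=1))
        min_dist[nxt] = -np.inf
    return selected
```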

Q5: How do I define a meaningful and computable "acquisition function" for multi-objective optimization (e.g., maximizing potency while minimizing toxicity)? A: For multi-objective Bayesian optimization (MOBO), common strategies include:

- Scalarization: Combine objectives into a single function (e.g., weighted sum, penalty method) and run standard BO.

- ParEGO: A popular method that uses random scalarizations in each iteration.

- Expected Hypervolume Improvement (EHVI): Directly targets improving the Pareto front. It is effective but computationally more intensive.
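ParEGO's core ingredient is the augmented Chebyshev scalarization. It turns several objectives into one score that a standard single-objective BO loop can model. The sketch below assumes all objectives are to be minimized, so potency-type objectives must be negated first. It draws weights from a Dirichlet distribution, a common variant of ParEGO's original grid of weight vectors. The augmentation constant rho = 0.05 is a commonly used small value.

```python
import numpy as np

def parego_scalarize(Y, weights, rho=0.05):
    """Augmented Chebyshev scalarization (minimization).

    Y: (n, k) objective values with all objectives oriented for minimization;
    weights: (k,) non-negative, summing to 1. Objectives are rescaled to [0, 1].
    """
    Y = np.asarray(Y, float)
    lo, hi = Y.min(axis=0), Y.max(axis=0)
    Yn = (Y - lo) / np.where(hi > lo, hi - lo, 1.0)
    weighted = Yn * np.asarray(weights, float)
    return weighted.max(axis=1) + rho * weighted.sum(axis=1)

def random_simplex_weights(k, rng):
    """Draw a weight vector uniformly from the (k-1)-simplex (fresh draw each BO iteration)."""
    return rng.dirichlet(np.ones(k))
```

In each iteration, a new weight vector is drawn, the observed objectives are scalarized, and a single-objective surrogate is fitted to the scalarized values.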

Experimental Protocol: A Standard AL/BO Loop for Molecular Property Optimization

1. Objective: To efficiently discover molecules with optimized target properties (e.g., high binding affinity, ADMET) within a limited experimental budget.

2. Prerequisites:

- A molecular representation (e.g., ECFP fingerprints, graph features, latent vectors).

- An initial dataset (D_initial) of molecules with measured property values. Size: Typically 50-500 data points.

- A large, unlabeled candidate pool (Pool) of molecules to propose for evaluation (e.g., from a virtual library).

- A surrogate model (e.g., Gaussian Process) capable of predicting property mean and uncertainty.

- An acquisition function (α(x)) to score candidates.

3. Step-by-Step Protocol:

   1. Initial Model Training: Train the surrogate model (e.g., GP) on D_initial.

   2. Candidate Scoring: Use the trained model to predict the mean μ(x) and uncertainty σ(x) for all molecules x in the candidate Pool.

   3. Acquisition Calculation: Compute the acquisition function α(x) = f(μ(x), σ(x)) for all x in Pool.

   4. Batch Selection: Select the top K molecules (e.g., K = 5) from Pool that maximize α(x). For batch selection, use a method that penalizes similarity within the batch.

   5. Experimental Evaluation: Send the selected K molecules for *in silico*, *in vitro*, or *in vivo* evaluation (the "oracle") to obtain their true property values (y_new).

   6. Dataset Update: Append the new pairs to the training dataset, D = D ∪ {(x_new, y_new)}, and remove x_new from Pool.

   7. Iteration: Retrain the surrogate model on the updated D. Repeat steps 2-6 until the experimental budget is exhausted or a performance target is met.

   8. Final Analysis: Report the best molecule(s) found and plot the optimization history (best found value vs. iteration).
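The protocol above can be condensed into the NumPy sketch below: an exact GP surrogate with an RBF kernel, UCB acquisition, and top-K batch selection over a fixed pool. Everything here is an assumption for illustration:

- molecules are rows of a numeric feature matrix;
- the oracle is a Python callable standing in for the experiment;
- kernel hyperparameters are fixed rather than fitted;
- the batch step takes the plain top K, though a similarity penalty could be added there.

```python
import numpy as np

def rbf(A, B, lengthscale=1.0):
    """RBF kernel matrix between the rows of A and B."""
    d2 = np.sum(A ** 2, 1)[:, None] + np.sum(B ** 2, 1)[None, :] - 2 * A @ B.T
    return np.exp(-0.5 * np.maximum(d2, 0.0) / lengthscale ** 2)

def gp_posterior(X_train, y_train, X_query, lengthscale=1.0, noise=1e-3):
    """Exact GP regression on standardized targets (unit signal variance)."""
    y_mean, y_std = y_train.mean(), y_train.std() or 1.0
    z = (y_train - y_mean) / y_std
    K = rbf(X_train, X_train, lengthscale) + noise * np.eye(len(X_train))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, z))
    K_s = rbf(X_train, X_query, lengthscale)
    v = np.linalg.solve(L, K_s)
    var = np.maximum(1.0 - np.sum(v ** 2, axis=0), 1e-12)
    return (K_s.T @ alpha) * y_std + y_mean, np.sqrt(var) * y_std

def run_al_loop(X_pool, oracle, init_idx, n_iter=5, batch_size=5, beta=2.0):
    """Steps 1-7: fit the surrogate, score the pool with UCB = mu + beta * sigma,
    send the top-K to the oracle, add them to D, drop them from the pool, repeat."""
    labeled = [int(i) for i in init_idx]
    y = [oracle(X_pool[i]) for i in labeled]
    history = [max(y)]  # best value so far, for the optimization-history plot
    for _ in range(n_iter):
        pool = np.setdiff1d(np.arange(len(X_pool)), labeled)
        if pool.size == 0:
            break
        mu, sigma = gp_posterior(X_pool[labeled], np.asarray(y), X_pool[pool])
        picks = pool[np.argsort(-(mu + beta * sigma))[:batch_size]]
        for i in picks:  # "experimental evaluation" via the oracle
            labeled.append(int(i))
            y.append(oracle(X_pool[i]))
        history.append(max(y))
    return labeled, y, history
```

In a real campaign, `X_pool` would hold fingerprints or latent vectors, and the oracle call would be replaced by queuing compounds for the assay.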

Table 1: Common Acquisition Functions for Molecular BO

| Acquisition Function | Formula (Maximization) | Key Property | Best Use Case |
|---|---|---|---|
| Expected Improvement (EI) | EI(x) = E[max(f(x) - f(x*), 0)] | Balances exploration/exploitation | General-purpose, single-objective optimization. |
| Upper Confidence Bound (UCB) | UCB(x) = μ(x) + β * σ(x) | Explicit β controls exploration | Easy to tune exploration; theoretical guarantees. |
| Probability of Improvement (PI) | PI(x) = P(f(x) ≥ f(x*) + ξ) | Tends to be more exploitative | When refinement near a known good point is desired. |
| q-EI (Batch EI) | Multi-point generalization of EI | Selects diverse, high-value batches | When parallel experimental evaluation is available. |
| Expected Hypervolume Improvement (EHVI) | Improvement in Pareto hypervolume | Directly optimizes Pareto front | Multi-objective optimization without scalarization. |

Here x* is the best molecule observed so far (so f(x*) is the incumbent value), and ξ ≥ 0 is an optional improvement margin.
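For a Gaussian posterior N(μ(x), σ(x)²), EI and PI have closed forms in terms of the standard normal pdf φ and cdf Φ. The sketch below is a minimal, dependency-free illustration using Python's `statistics.NormalDist`; the function names are hypothetical, `best` stands for f(x*), and the UCB parameterization follows the table (β multiplies σ directly).

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal: .pdf is phi, .cdf is Phi

def expected_improvement(mu, sigma, best, xi=0.0):
    """EI under N(mu, sigma^2): (mu - best - xi) * Phi(z) + sigma * phi(z)."""
    improvement = mu - best - xi
    if sigma <= 0.0:
        return max(improvement, 0.0)  # no uncertainty: improvement is deterministic
    z = improvement / sigma
    return improvement * _STD_NORMAL.cdf(z) + sigma * _STD_NORMAL.pdf(z)

def probability_of_improvement(mu, sigma, best, xi=0.0):
    """PI = P(f(x) >= best + xi) under N(mu, sigma^2)."""
    if sigma <= 0.0:
        return 1.0 if mu >= best + xi else 0.0
    return _STD_NORMAL.cdf((mu - best - xi) / sigma)

def upper_confidence_bound(mu, sigma, beta=2.0):
    """UCB = mu + beta * sigma."""
    return mu + beta * sigma
```

At a candidate whose predicted mean equals the incumbent (μ = f(x*), σ = 1), PI is exactly 0.5 while EI is φ(0) ≈ 0.399, which illustrates why EI rewards uncertainty more than PI does.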

Table 2: Key Research Reagent Solutions for AL/BO in Molecular Optimization

| Item / Reagent | Function / Explanation |
|---|---|
| Bayesian Optimization Library (e.g., BoTorch, GPyOpt) | Provides core Bayesian optimization algorithms, surrogate models (GPs), and acquisition functions. |
| Molecular Featurization Tool (e.g., RDKit, DeepChem) | Converts SMILES or molecular structures into numerical features (fingerprints, descriptors, graph tensors). |
| Gaussian Process Library (e.g., GPyTorch, scikit-learn) | Implements scalable and flexible GP models for building the surrogate. |
| Chemical Space Visualization (e.g., t-SNE, UMAP) | Projects high-dimensional molecular representations to 2D for monitoring diversity and coverage. |
| High-Throughput Virtual Screen (HTVS) | Acts as a computational "oracle" to score large libraries on primary targets (e.g., docking score). |
| ADMET Prediction Suite | Serves as in silico oracles for secondary objectives (toxicity, solubility, metabolism) within multi-objective BO (MOBO) loops. |

Workflow & Pathway Visualizations

Figure: Active Learning & Bayesian Optimization Closed Loop

Figure: Molecular Representations & Kernels for Bayesian Optimization

Troubleshooting Guides & FAQs

Q1: During multi-task training, one property prediction task is performing well but the others are failing to converge. What could be the cause and how can I fix it?

A: This is a classic symptom of negative transfer or task imbalance. The primary cause is often a significant difference in loss scale or gradient magnitude between tasks, causing the optimizer to prioritize one task. Solutions include:

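- Gradient Manipulation: Use GradNorm, which tunes task loss weights so that per-task gradient magnitudes stay balanced, or PCGrad, which projects each task's gradient away from conflicting task gradients during backpropagation.

- Loss Weighting: Use uncertainty weighting (Kendall et al., 2018) to automatically tune task weights based on homoscedastic uncertainty. The per-task log-variance terms are learnable parameters that the optimizer updates jointly with the network weights.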

- Architecture Check: Ensure your shared encoder has sufficient capacity. A bottleneck layer that is too small can cause tasks to compete destructively.
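The projection step at the heart of PCGrad can be sketched in NumPy. This is a minimal illustration, assuming each task's gradient with respect to the shared encoder parameters has already been flattened into a vector (in a real framework these come from separate backward passes per task loss); the function name is hypothetical, and libraries such as LibMTL provide maintained implementations.

```python
import numpy as np

def pcgrad(task_grads, seed=0):
    """Combine per-task gradients with PCGrad-style conflict projection."""
    rng = np.random.default_rng(seed)
    grads = [np.asarray(g, dtype=float) for g in task_grads]
    projected = []
    for i, g in enumerate(grads):
        g_pc = g.copy()
        for j in rng.permutation(len(grads)):  # visit the other tasks in random order
            if j == i:
                continue
            dot = g_pc @ grads[j]
            if dot < 0.0:  # conflict: remove the component along task j's gradient
                g_pc -= dot / (grads[j] @ grads[j]) * grads[j]
        projected.append(g_pc)
    return np.sum(projected, axis=0)  # update direction for the shared parameters
```

For two conflicting gradients g1 = [1, 0] and g2 = [-1, 1], each is projected onto the normal plane of the other before summing, giving [0.5, 1.5] instead of the plain sum [0, 1]; non-conflicting gradients pass through unchanged.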

Q2: When performing few-shot fine-tuning on a new molecular property, the model overfits to the small support set within a few epochs. How can I improve generalization?

A: Overfitting in few-shot regimes is expected but manageable. Your protocol should include:

- Meta-Learning Fine-Tuning: Use a MAML (Model-Agnostic Meta-Learning) style approach where you fine-tune with a small inner-loop learning rate (e.g., 0.001) for only 1-5 gradient steps per episode. Avoid full epoch-based training.

- Strong Regularization: Apply high dropout rates (0.5-0.7) and weight decay (1e-4) specifically during the few-shot adaptation phase.

- Data Augmentation: Use rule-based augmentation on the support set to artificially expand its size: SMILES enumeration for string-based models, or atom/bond masking for molecular graph models.
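Atom masking and bond dropping on a graph can be sketched in plain Python. This is a minimal illustration, assuming a molecule is stored as a list of atom types (atomic numbers) plus an undirected bond list; `MASK_TOKEN`, the function name, and the masking rates are hypothetical choices. Each augmented view keeps the original property label, which assumes the property is insensitive to these small perturbations. For SMILES-based models, RDKit's `MolToSmiles` with `doRandom=True` is a common way to enumerate randomized SMILES.

```python
import random

MASK_TOKEN = 0  # hypothetical "masked atom" index; real featurizers define their own

def augment_graph(atom_types, bonds, atom_mask_rate=0.15, bond_drop_rate=0.1, rng=None):
    """Return one augmented view of a molecular graph (atom masking + bond dropping)."""
    rng = rng or random.Random()
    masked = [MASK_TOKEN if rng.random() < atom_mask_rate else a for a in atom_types]
    kept = [b for b in bonds if rng.random() >= bond_drop_rate]
    return masked, kept

# Ethanol heavy atoms C-C-O as atomic numbers plus an undirected bond list.
atoms, bonds = [6, 6, 8], [(0, 1), (1, 2)]
rng = random.Random(42)
views = [augment_graph(atoms, bonds, rng=rng) for _ in range(4)]  # 4 extra training views
```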

Q3: How do I decide which tasks to group together in a multi-task framework versus keeping separate? Are there metrics to predict synergy?

A: Task grouping should be hypothesis-driven but can be validated quantitatively. Pre-experiment, calculate the pairwise correlation of gradients or representations from single-task models on a shared validation set. A high positive correlation (>0.6) often predicts beneficial multi-task learning. Post-experiment, use the Multi-Task Learning Gain (MTLG) metric:

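MTLG = (1/N) * Σ (Performance_Multi_i - Performance_Single_i) / Performance_Single_i

Here N is the number of tasks and Performance is a higher-is-better metric (e.g., R²). For error metrics such as RMSE or MAE, reverse the numerator to (Error_Single_i - Error_Multi_i) so that improvements remain positive. A positive average MTLG indicates successful knowledge sharing.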
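The pre-experiment check can be sketched in plain Python. This is a minimal illustration, assuming each task is summarized by a vector computed on the same validation molecules (for example, flattened gradients or per-molecule outputs from the single-task models); the task names and vector values are hypothetical, and the 0.6 threshold is the heuristic from the text rather than a universal cutoff.

```python
import math
from itertools import combinations

def pearson(u, v):
    """Pearson correlation between two equal-length vectors."""
    n = len(u)
    mu_u, mu_v = sum(u) / n, sum(v) / n
    du = [a - mu_u for a in u]
    dv = [b - mu_v for b in v]
    denom = math.sqrt(sum(a * a for a in du) * sum(b * b for b in dv))
    return sum(a * b for a, b in zip(du, dv)) / denom if denom else 0.0

def candidate_groups(task_vectors, threshold=0.6):
    """Task pairs whose summary vectors correlate above the threshold."""
    pairs = []
    for a, b in combinations(sorted(task_vectors), 2):
        r = pearson(task_vectors[a], task_vectors[b])
        if r > threshold:
            pairs.append((a, b, r))
    return pairs

# Hypothetical per-task summary vectors on a shared validation set.
task_vectors = {
    "logP": [0.9, 0.1, -0.4, 0.3],
    "solubility": [-0.8, -0.2, 0.5, -0.3],
    "permeability": [0.8, 0.2, -0.3, 0.2],
}
pairs = candidate_groups(task_vectors)
```

In this toy example only logP and permeability clear the threshold; the negatively correlated solubility task would be a candidate for a separate model or a gradient-manipulation method.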

Q4: My framework works in simulation but fails to transfer to a real, sparse molecular optimization dataset. What are the key validation steps?

A: The gap often lies in distributional shift. Implement this validation protocol:

- Create a realistic sparse split: From your dataset, hold out an entire scaffold or structural cluster to simulate a "new" chemical series with zero training examples.

- Benchmark: Compare your multi-task/few-shot model against a strong single-task baseline and a simple k-NN predictor on this held-out cluster.

- Calibration Check: Use Expected Calibration Error (ECE) to ensure prediction uncertainties are meaningful for the sparse domain; miscalibration is common in transfer scenarios.
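ECE is usually defined for classification; for regression outputs with Gaussian uncertainties, a common analogue compares the expected coverage of central prediction intervals with the observed fraction of held-out molecules that fall inside them. A minimal sketch, assuming the model returns a mean and standard deviation per molecule; the function name and the 10-90% grid of levels are illustrative choices. A well-calibrated model scores near 0, while an overconfident model shows observed coverage below the expected level.

```python
from statistics import NormalDist

def regression_calibration_error(y_true, mu, sigma, levels=None):
    """Mean |observed - expected| coverage of central Gaussian prediction intervals."""
    levels = levels or [i / 10 for i in range(1, 10)]  # expected coverage 10%, ..., 90%
    errors = []
    for p in levels:
        z = NormalDist().inv_cdf(0.5 + p / 2)  # half-width of the central p-interval, in sigmas
        inside = sum(abs(y - m) <= z * s for y, m, s in zip(y_true, mu, sigma))
        errors.append(abs(inside / len(y_true) - p))
    return sum(errors) / len(errors)
```

Computed separately on the held-out scaffold cluster and on an in-distribution split, the two scores show directly whether uncertainty quality degrades under the distributional shift.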

Q5: What are the common pitfalls in evaluating few-shot learning performance for molecular property prediction, and what is the correct evaluation protocol?

A: The major pitfall is data leakage across meta-training, meta-validation, and meta-testing splits. The correct protocol is:

- Strict Scaffold Split: Ensure that the molecular scaffolds (computed with the Bemis-Murcko method) in the support and query sets of meta-test episodes are never seen during any phase of meta-training. This simulates true novelty.

- Episode-Based Evaluation: Report the mean and 95% confidence interval over multiple (≥ 100) randomly sampled few-shot episodes from the held-out meta-test set.

- Baselines: Always compare against a pre-trained single-task model fine-tuned on the same support set, not just against its zero-shot performance.
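Both the leakage-free split and the episode-level confidence interval can be sketched in plain Python. This is a minimal illustration, assuming scaffold keys have already been computed for each molecule (for example, with RDKit's MurckoScaffold module); the function names are hypothetical, and the 1.96 multiplier is a normal approximation that is reasonable for the ≥100 episodes recommended above.

```python
import math
import random
import statistics
from collections import defaultdict

def scaffold_split(scaffolds, test_frac=0.2, seed=0):
    """Assign whole scaffold groups to train or test so no scaffold appears in both."""
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    keys = sorted(groups)
    random.Random(seed).shuffle(keys)
    n_test = round(test_frac * len(scaffolds))
    train, test = [], []
    for key in keys:
        # Fill the test side first, always moving complete scaffold groups.
        (test if len(test) < n_test else train).extend(groups[key])
    return sorted(train), sorted(test)

def episode_mean_ci(scores, z=1.96):
    """Mean and 95% CI half-width (normal approximation) over few-shot episodes."""
    mean = statistics.mean(scores)
    half_width = z * statistics.stdev(scores) / math.sqrt(len(scores))
    return mean, half_width
```

Report results as mean ± half-width, e.g. an R² of 0.41 ± 0.03 over 200 episodes; a leakage check then reduces to asserting that the scaffold sets of the two splits are disjoint.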

Protocol 1: Benchmarking Multi-Task Learning Gain (MTLG)

- Dataset Partition: For T related tasks, create a shared training set (80%) and separate validation/test sets per task (10%/10%).

- Single-Task Baseline: Train T independent models (e.g., GCNs) to convergence. Record the test RMSE/MAE for each task as Performance_Single_i.

- Multi-Task Model: Train one model with a shared encoder and T task-specific heads, using uncertainty weighting. Record the test metrics as Performance_Multi_i.

- Calculation: Compute MTLG for each task and the average across tasks. Because RMSE and MAE are lower-is-better, use Performance_Single_i - Performance_Multi_i in the numerator so that improvements are positive.
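The calculation step can be sketched in plain Python. This is a minimal illustration, assuming per-task test metrics have already been collected; the function name and the RMSE values in the usage line are hypothetical. The `lower_is_better` flag flips the numerator so that an error reduction counts as a positive gain.

```python
def mtlg(single, multi, lower_is_better=True):
    """Per-task relative gains and their average (positive = multi-task helps)."""
    gains = []
    for s, m in zip(single, multi):
        # For error metrics (RMSE/MAE) a drop in error counts as a gain.
        gain = (s - m) / s if lower_is_better else (m - s) / s
        gains.append(gain)
    return gains, sum(gains) / len(gains)

# Hypothetical test RMSEs for three tasks (single-task vs. multi-task).
gains, avg = mtlg(single=[0.90, 1.20, 0.75], multi=[0.80, 1.10, 0.78])
```

Inspecting the per-task gains alongside the average matters: in this toy case the third task gets slightly worse (negative gain) even though the average is positive, a sign of mild negative transfer.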

Protocol 2: Few-Shot Meta-Training & Evaluation (ProtoNet-Based)

- Meta-Training Phase:

  - Sample an episode: select N tasks (molecular properties) from a meta-training pool.

  - For each task, sample a support set (e.g., 5 molecules) and a query set (e.g., 10 molecules).

  - Embed all molecules via the shared encoder.

  - Compute a task prototype as the mean embedding of its support set.

  - For each query molecule, predict the property via its distance (e.g., Euclidean) to all task prototypes.

  - Update the model via the query-set loss. Repeat for 20,000+ episodes.

- Meta-Testing Phase:

  - Fix the model weights. Sample novel tasks from a held-out, scaffold-split meta-test set.

  - For each novel task, provide only the support set (K = 5, 10, or 20 shots).

  - The model generates a prototype and predicts on the novel task's query set.

  - Aggregate metrics (RMSE, R²) over ≥100 episodes.
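The core Prototypical Network computation (Snell et al., 2017) averages support embeddings into prototypes and scores each query with a softmax over negative squared Euclidean distances. The NumPy sketch below shows that computation for prototypes indexed by label (e.g., active/inactive); random vectors stand in for the shared encoder's embeddings, the function names are hypothetical, and how distances are mapped to a continuous property value in the regression setting above is not specified here.

```python
import numpy as np

def compute_prototypes(support_emb, support_labels):
    """Prototype = mean embedding of the support examples sharing a label."""
    labels = np.unique(support_labels)
    protos = np.stack([support_emb[support_labels == c].mean(axis=0) for c in labels])
    return labels, protos

def query_probabilities(query_emb, protos):
    """Softmax over negative squared Euclidean distances to each prototype."""
    d2 = ((query_emb[:, None, :] - protos[None, :, :]) ** 2).sum(axis=-1)
    logits = -d2 - (-d2).max(axis=1, keepdims=True)  # subtract row max for numerical stability
    p = np.exp(logits)
    return p / p.sum(axis=1, keepdims=True)

# Random vectors stand in for encoder embeddings of a 5-shot, 2-label support set.
rng = np.random.default_rng(0)
support = np.vstack([rng.normal(0.0, 0.3, (5, 8)), rng.normal(3.0, 0.3, (5, 8))])
labels = np.array([0] * 5 + [1] * 5)
classes, protos = compute_prototypes(support, labels)
probs = query_probabilities(np.array([np.zeros(8), np.full(8, 3.0)]), protos)
```

During meta-training, the cross-entropy of these query probabilities against the true query labels is the loss that is backpropagated into the encoder; at meta-test time the same two functions run with frozen encoder weights.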

Key Research Reagent Solutions

| Item | Function in Framework | Example / Specification |
|---|---|---|
| Pre-Trained Molecular Encoder | Provides a rich, generalized feature representation to mitigate data sparsity. | ChemBERTa or GROVER. Use embeddings from the penultimate layer as input features. |
| Task-Specific Head | Small NN that maps shared embeddings to a property value. Prevents catastrophic forgetting. | A 2-layer MLP with ReLU and Dropout (p=0.1). Output dimension = 1 (regression). |
| Meta-Learning Optimizer | Facilitates few-shot adaptation by simulating episode-based learning during training. | Use the learn2learn or higher PyTorch libraries to implement MAML or Reptile. |
| Gradient Manipulation Library | Balances multi-task learning by modifying the backward pass. | LibMTL, or a custom implementation of PCGrad (projecting conflicting gradients). |
| Calibration Tool | Ensures predictive uncertainties are reliable for decision-making in sparse data regimes. | The netcal Python library, for post-hoc Platt scaling or temperature scaling. |

Table 1: Performance Comparison on Sparse Molecular Datasets (QM9 Derived)

| Model Type | Avg. RMSE (Core Tasks) | Avg. RMSE (Sparse Tasks)* | Avg. MTLG | Few-Shot R² (5-shot) |
|---|---|---|---|---|
| Single-Task GCN | 0.89 ± 0.11 | 1.52 ± 0.34 | 0.00 (baseline) | 0.15 ± 0.12 |
| Multi-Task (Hard Sharing) | 0.75 ± 0.08 | 1.41 ± 0.29 | +0.12 | 0.18 ± 0.10 |
| Multi-Task (GradNorm) | 0.71 ± 0.07 | 1.28 ± 0.27 | +0.19 | 0.22 ± 0.11 |
| Meta-Learning (ProtoNet) | 0.82 ± 0.09 | 1.05 ± 0.23 | N/A | 0.41 ± 0.15 |

*Sparse Tasks: Properties with <100 available training samples in the dataset.

Table 2: Effect of Support Set Size on Few-Shot Performance

| K-Shots | RMSE (Mean ± CI) | R² (Mean ± CI) | Required Adaptation Steps |
|---|---|---|---|
| 5 | 1.05 ± 0.23 | 0.41 ± 0.15 | 3-5 |
| 10 | 0.92 ± 0.19 | 0.55 ± 0.13 | 5-10 |
| 20 | 0.81 ± 0.16 | 0.65 ± 0.10 | 10-15 |
| 50 | 0.75 ± 0.14 | 0.70 ± 0.09 | 15-20 |

Visualizations

Multi-Task Learning Model Architecture

Few-Shot Learning with Prototypical Networks

Integrating Synthetic Data and Physics-Based Simulations (e.g., Molecular Dynamics) to Fill Gaps

Technical Support Center

This support center addresses common challenges in integrating synthetic data and physics-based simulations like Molecular Dynamics (MD) to address data sparsity in molecular optimization datasets.

Troubleshooting Guides

TG-1: MD Simulation Fails to Converge or Crashes

- Step 1: Check system preparation. Ensure your initial molecular structure is properly solvated and neutralized, with consistent force field parameters throughout. In GROMACS, build the topology with gmx pdb2gmx, then add solvent and ions with gmx solvate and gmx genion; in AMBER, use tleap.

- Step 2: Verify energy minimization. Run a steepest descent minimization until the maximum force is below 1000 kJ/mol/nm. Failure here indicates bad contacts.

- Step 3: Examine equilibration steps. Monitor temperature and pressure during NVT and NPT equilibration. Large fluctuations may require smaller time steps (e.g., reduce from 2 fs to 1 fs) or longer coupling constants.

- Step 4: Check log files for specific warnings (e.g., "LINCS warning"). These often require increasing the lincs_iter value or constraining all bonds with LINCS.
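The minimization and equilibration settings from Steps 2-4 map onto GROMACS .mdp options. The Python helper below simply renders two illustrative option sets as `key = value` lines; the option names are standard .mdp keys, but every value (step counts, coupling groups, temperatures) is an assumption to adapt to your system and force field, and the `tc-grps` entry assumes a protein system.

```python
def to_mdp(params):
    """Render GROMACS .mdp options as 'key = value' lines."""
    return "".join(f"{key:<22} = {value}\n" for key, value in params.items())

# Steepest-descent minimization: stop once the maximum force drops below 1000 kJ/mol/nm.
em_params = {
    "integrator": "steep",
    "emtol": 1000.0,
    "emstep": 0.01,
    "nsteps": 50000,
}

# NVT equilibration with a reduced 1 fs time step and extra LINCS iterations.
nvt_params = {
    "integrator": "md",
    "dt": 0.001,                       # ps; reduced from 0.002 (2 fs) per Step 3
    "nsteps": 100000,                  # 100 ps at dt = 0.001 ps
    "tcoupl": "V-rescale",
    "tc-grps": "Protein Non-Protein",  # placeholder groups; depends on your index groups
    "tau_t": "0.1 0.1",
    "ref_t": "300 300",
    "constraints": "h-bonds",          # or "all-bonds", as suggested in Step 4
    "constraint_algorithm": "lincs",
    "lincs_iter": 2,                   # GROMACS default is 1
    "lincs_order": 4,
}
```

Writing `to_mdp(em_params)` to a file such as em.mdp gives an input for gmx grompp; keeping the options in Python dictionaries makes it easy to sweep time steps or LINCS settings when diagnosing a crashing system.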

TG-2: Synthetic Data Shows Low Fidelity to Physical Reality

- Step 1: Validate against ab initio calculations. For a subset of generated conformers, run DFT-level geometry optimizations and compare the relaxed structures and energies with your generative model's output. Filter out conformers whose root-mean-square deviation (RMSD) from the DFT-relaxed geometry is 2.0 Å or more.

- Step 2: Calibrate with short MD. Use each synthetic conformation as a starting point for a short (10-100 ps) MD simulation. If the structure rapidly diverges (high energy), it indicates non-physical starting geometry.

- Step 3: Augment training with physical descriptors. Incorporate simple physicochemical and synthesizability descriptors (e.g., rotatable bond count, SA Score) as regularization terms in your generative model's loss function.
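The RMSD filter in Step 1 requires superimposing each generated conformer onto its reference before measuring deviation. The NumPy sketch below uses the Kabsch algorithm, assuming both conformers list the same atoms in the same order (no symmetry or atom-mapping handling) with coordinates in ångströms; the function names are illustrative, and RDKit's rdMolAlign module offers alignment utilities that also account for molecular symmetry.

```python
import numpy as np

def kabsch_rmsd(P, Q):
    """RMSD between two conformers (N x 3, same atom order) after optimal superposition."""
    P = np.asarray(P, dtype=float)
    Q = np.asarray(Q, dtype=float)
    P = P - P.mean(axis=0)  # remove translation
    Q = Q - Q.mean(axis=0)
    U, _, Vt = np.linalg.svd(P.T @ Q)  # SVD of the 3 x 3 covariance matrix
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0  # exclude reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T  # optimal rotation mapping P onto Q
    diff = P @ R.T - Q
    return float(np.sqrt((diff ** 2).sum(axis=1).mean()))

def passes_rmsd_filter(generated, reference, threshold=2.0):
    """Keep a generated conformer only if it lies within `threshold` angstroms of its reference."""
    return kabsch_rmsd(generated, reference) < threshold
```

A conformer that is merely rotated and translated relative to its DFT-relaxed geometry scores an RMSD of essentially zero, so the filter rejects only genuine geometric distortions.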

TG-3: Poor Generalization of Hybrid (Simulation + Synthetic) Model

- Step 1: Conduct a bias audit. Compare the distribution of key molecular descriptors (e.g., molecular weight, logP) in your hybrid dataset versus a known reference (e.g., ChEMBL). Apply statistical tests (Kolmogorov-Smirnov).

- Step 2: Implement active learning. Use the model's uncertainty estimates to selectively run new, expensive MD simulations on molecules where the model is least confident, filling the most informative gaps.

- Step 3: Review data splitting. Ensure your train/test split is scaffold-based (using Bemis-Murcko scaffolds) to avoid artificial inflation of performance metrics.
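For the bias audit in Step 1, the two-sample Kolmogorov-Smirnov statistic is the largest vertical gap between the empirical CDFs of a descriptor in the two datasets. `scipy.stats.ks_2samp` returns both the statistic and a p-value; the dependency-free sketch below computes the statistic only, and the molecular-weight values are hypothetical.

```python
from bisect import bisect_right

def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov D: max gap between the two empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    d = 0.0
    for x in a + b:  # the supremum is reached at one of the observed values
        f_a = bisect_right(a, x) / len(a)
        f_b = bisect_right(b, x) / len(b)
        d = max(d, abs(f_a - f_b))
    return d

# Hypothetical molecular weights (Da): hybrid dataset vs. a reference sample.
hybrid_mw = [312.4, 355.1, 298.7, 410.2, 388.9, 275.3]
reference_mw = [342.0, 401.5, 367.8, 455.2, 389.1, 420.6]
d_mw = ks_statistic(hybrid_mw, reference_mw)
```

D ranges from 0 (identical empirical distributions) to 1 (no overlap); running it per descriptor (molecular weight, logP, and so on) shows which properties of the hybrid dataset drift furthest from the reference.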

Frequently Asked Questions (FAQs)

FAQ-1: How much synthetic data is needed relative to real simulation data to see a benefit? Recent benchmarks indicate that a ratio between 10:1 and 100:1 (synthetic:simulation) can be effective, but quality is paramount. A smaller set of high-fidelity synthetic data, validated by short MD, is superior to a large set of poor data.

Table 1: Impact of Synthetic-to-Simulation Data Ratio on Model Performance

| Synthetic:Simulation Ratio | R² on Test Set (Binding Affinity) | Mean RMSD of Predicted Conformer (Å) | Key Requirement |
|---|---|---|---|
| 1:1 (Baseline) | 0.65 | 2.1 | N/A |
| 10:1 | 0.72 | 1.8 | MD-validated synthetic data |
| 100:1 | 0.75 | 1.7 | Curated diversity |
| 1000:1 (Uncurated) | 0.58 | 2.5 | None (low fidelity) |