REINVENT 4 AI Molecule Design: A Step-by-Step Guide for Drug Discovery Researchers


Abstract

This article provides a comprehensive guide to REINVENT 4, a state-of-the-art open-source platform for AI-driven *de novo* molecular design. Tailored for computational chemists and drug discovery professionals, we cover its foundational principles, detailed workflow implementation, strategies for troubleshooting and optimization, and methods for validating and benchmarking results against other tools. The guide aims to empower researchers to effectively leverage this generative chemistry framework to accelerate hit identification and lead optimization in their discovery pipelines.

What is REINVENT 4? A Primer on Its Architecture and Core Concepts for Generative Chemistry

1. Application Notes: Evolution and Core Advancements

REINVENT 4 represents a significant architectural and functional overhaul from its predecessors, transitioning from a Reinforcement Learning (RL)-based framework to a more flexible, scoring-focused paradigm. The table below summarizes the key evolutionary changes.

Table 1: Evolutionary Comparison of REINVENT Versions

| Feature/Aspect | REINVENT 2.x/3.x | REINVENT 4 | Impact of Change |

|---|---|---|---|

| Core Paradigm | Reinforcement Learning (RL) with a prior likelihood agent. | Scoring-centric, agent-agnostic "run mode" architecture. | Decouples molecule generation from specific learning algorithms, enabling plug-and-play of various models. |

| Model Dependencies | Tightly coupled to a specific Prior model. | Supports any generative model (e.g., Hugging Face Transformers) as an "Agent." | Increases flexibility; users can leverage state-of-the-art public models or fine-tuned custom models. |

| Scoring Framework | Intrinsic (e.g., SAS, LogP) and extrinsic (proxy) scores combined into a single composite score. | Modular "Scoring Function" components (e.g., Predictive, PhysChem, Custom) with a configuration file. | Enhances transparency, modularity, and ease of configuring complex, multi-parameter optimization. |

| Library Enumeration | Limited or built-in capabilities. | Integrated and explicit "Library Enumeration" step (e.g., for R-groups, scaffolds). | Directly supports lead optimization and analog generation workflows common in medicinal chemistry. |

| Configuration | Less structured, often requiring code modification. | YAML-based configuration files for all run modes and components. | Standardizes and simplifies experiment setup, reproducibility, and sharing. |

| Primary Output | SMILES sequences with scores. | Structured data (JSON, SDF) with comprehensive metadata, including origin of score components. | Facilitates downstream analysis and interpretation of why a molecule scored highly. |

The key advancements in REINVENT 4 include its agent-agnostic design, which treats the generative model as a component; its modular scoring stack, allowing complex multi-parameter optimization; and its explicit library enumeration step, bridging de novo design with lead optimization.

2. Protocol: Basic De Novo Molecule Generation for a Target Activity

This protocol outlines a standard workflow for generating novel molecules predicted to be active against a specific target using a publicly available pre-trained generative model.

Objective: To generate and score 10,000 novel molecules with high predicted pChEMBL activity for target PKx and favorable drug-like properties.

Research Reagent Solutions (The Scientist's Toolkit):

Table 2: Essential Components for REINVENT 4 Experiment

| Component | Function / Example | Source / Note |

|---|---|---|

| Generative Agent Model | The AI model that proposes new molecular structures. | e.g., ChemBERTa from Hugging Face, or a fine-tuned REINVENT prior model. |

| Predictive Model (QSPR) | Provides the primary activity score (e.g., pKi, pIC50). | A trained Random Forest or Neural Network model on relevant bioactivity data. |

| PhysChem Scoring Components | Calculate properties like LogP, Molecular Weight, TPSA. | Built-in components like rocs and alerts (structural alerts). |

| Configuration YAML File | The master file defining the entire experiment pipeline. | Created by the user; defines agent, scoring, sampling, and logging parameters. |

| Conda Environment | A reproducible software environment with all dependencies. | Created from the reinvent.yml file provided in the REINVENT 4 repository. |

Experimental Workflow:

Environment Setup:

Prepare Configuration File (`de_novo_config.yaml`):

Execute the Run:

Output Analysis: The primary output is a `results.sdf` file. Each molecule includes properties (e.g., `pkx_activity_score`, `drug_likeness_score`, `total_score`). Load this file in a cheminformatics toolkit (e.g., RDKit) for analysis, filtering, and visualization of the top-scoring compounds.

3. Protocol: Lead Optimization via Library Enumeration

This protocol uses the library enumeration mode to generate analog libraries around an identified hit compound.

Objective: To enumerate and score an R-group library from a core scaffold to optimize potency and reduce lipophilicity.

Experimental Workflow:

Prepare Input Files:

- `scaffold.smi`: The core molecule with attachment points (e.g., `[*]c1ccc([*])cn1`).

- `rgroups.smi`: A list of R-groups to attach, one SMILES per line.

- `enumeration_config.yaml`:

      enumeration:
        scaffoldfile: "./scaffold.smi"
        rgroupfile: "./rgroups.smi"
        chemistry: default

      agent: null

      scoring:
        - name: pkx_potency
          component:
            type: predictive
            modelpath: "./models/pkxnnmodel.h5"
          weight: 2.0
          transform:
            type: reversesigmoid
            high: 9.0
            low: 7.0
        - name: reduce_logp
          component:
            type: rocs
            parameters: ["LogP"]
          weight: -1.0  # Negative weight to penalize high LogP

Execute Enumeration Run:

Output Analysis: The output SDF will contain all enumerated molecules. Sort by `total_score` to find analogs with the best projected balance of higher potency (`pkx_potency`) and lower LogP (`reduce_logp`).

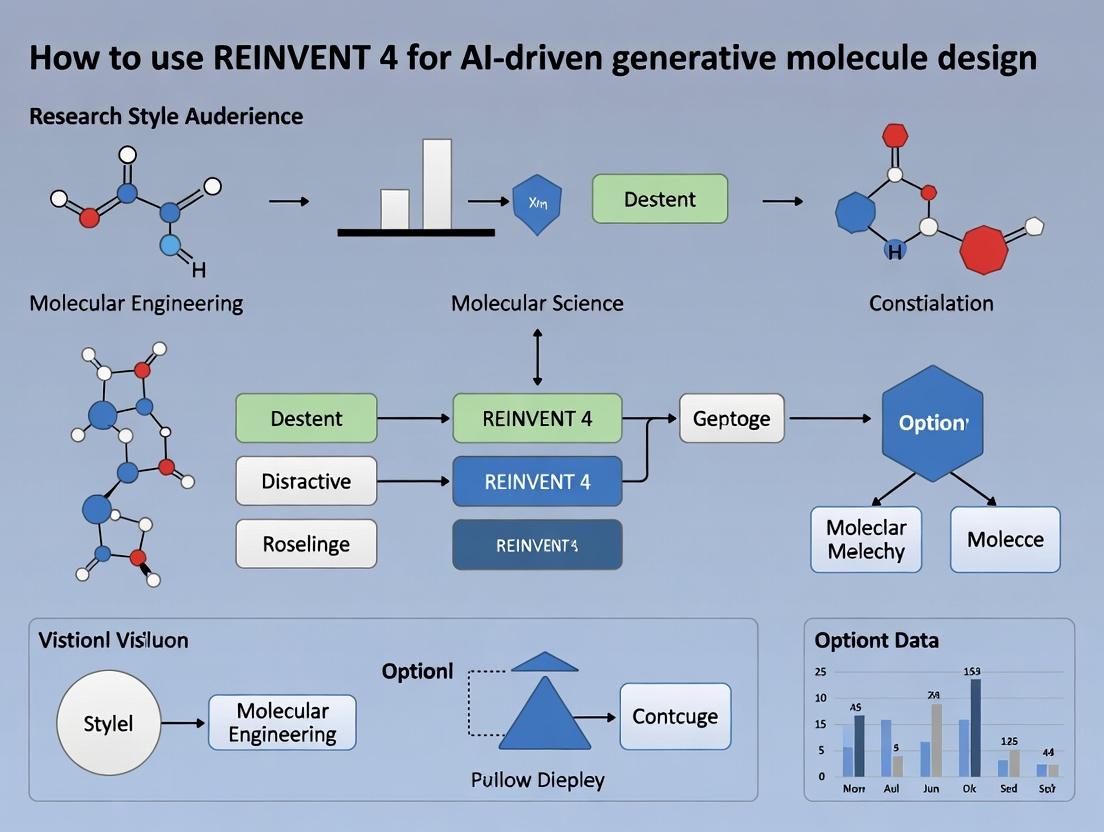

4. Visualization of REINVENT 4 Architecture and Workflow

Title: REINVENT 4 Modular Architecture and Data Flow

Title: Scoring Component Logic Flow

Application Notes

Within the REINVENT 4 framework for AI-driven molecular design, the core components form a closed-loop system that iteratively generates and optimizes compounds toward desired property profiles. The Agent is a generative neural network (typically an RNN or Transformer) that proposes new molecular structures as SMILES strings. It is initialized from a Prior, a pre-trained model on a broad chemical space (e.g., ChEMBL), which encapsulates general chemical knowledge and syntax. The Scoring Function is a multi-component function that quantitatively evaluates generated molecules against target criteria (e.g., bioactivity prediction, physicochemical properties, synthetic accessibility). The Replay Buffer stores high-scoring molecules from previous iterations, enabling the agent to learn from its past successes and maintain diversity, mitigating mode collapse.

The optimization process involves fine-tuning the Agent using policy-based reinforcement learning, where the Scoring Function provides the reward signal. The Prior acts as a regularizer, preventing the Agent from drifting into chemically unrealistic regions.

Protocols

Protocol 1: Initialization of the Prior Model

Objective: To load and configure a pre-trained Prior model for use within REINVENT 4.

- Source: Download a publicly available pre-trained model (e.g., the official REINVENT prior trained on ChEMBL) or prepare a custom prior trained on a relevant dataset.

- Framework Setup: Ensure Python environment with REINVENT 4 installed. Import necessary libraries: `torch`, `reinvent`.

- Loading: Instantiate the Prior class using the provided configuration file (`prior.json`). Load the model weights (`prior.prior`) using `torch.load`.

- Validation: Run a batch of random sampling from the Prior to verify it produces valid SMILES strings. Calculate basic chemical metrics (e.g., validity, uniqueness) on 1000 samples.

- Expected Outcome: Validity > 97%.
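The validation step above can be sketched in a few lines. This is a minimal illustration, not REINVENT code: `validity_and_uniqueness` and `toy_is_valid` are hypothetical names, and the toy validator only checks balanced parentheses. In practice the predicate would typically wrap a real parser (for example, checking whether RDKit can parse the string). Uniqueness is computed here on raw strings among the valid samples, which is an assumption; canonicalizing SMILES first would be stricter.

```python
from typing import Callable, Iterable


def validity_and_uniqueness(
    smiles: Iterable[str], is_valid: Callable[[str], bool]
) -> tuple[float, float]:
    """Return (validity, uniqueness) as fractions in [0, 1]."""
    samples = list(smiles)
    if not samples:
        return 0.0, 0.0
    valid = [s for s in samples if is_valid(s)]
    validity = len(valid) / len(samples)
    # Uniqueness is measured among valid samples only.
    uniqueness = len(set(valid)) / len(valid) if valid else 0.0
    return validity, uniqueness


def toy_is_valid(s: str) -> bool:
    """Stand-in validator: non-empty with balanced parentheses (NOT real chemistry)."""
    depth = 0
    for ch in s:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    return bool(s) and depth == 0


# Hypothetical sampled batch; "CC(C" is deliberately malformed.
batch = ["CCO", "CCO", "c1ccccc1", "CC(C", "CC(=O)O"]
validity, uniqueness = validity_and_uniqueness(batch, toy_is_valid)
print(f"validity={validity:.2f}, uniqueness={uniqueness:.2f}")
```

For a real check against the > 97% target, pass the 1000 sampled strings and a chemistry-aware predicate instead of the toy one.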

Protocol 2: Configuration of a Multi-Parameter Scoring Function

Objective: To define a composite scoring function for multi-objective optimization.

- Define Components: Identify and script individual scoring components. Common components include:

- Predictive Model (pIC50): Use a pre-trained on-target QSAR model. Input: SMILES; Output: Predicted activity score (0-1).

- Physicochemical Filter: Implement rule-based filters for properties like Molecular Weight (MW), LogP, Number of H-bond donors/acceptors.

- Chemical Intelligence (NIHS): Score based on the presence of undesirable structural alerts.

- Diversity: Compute Tanimoto similarity against molecules in the Replay Buffer.

- Assign Weights: Determine the relative importance of each component. Weights sum to 1.0.

- Integration: Combine the components as `FinalScore = Σ (Component_Score_i * Weight_i)` within the REINVENT `ScoringFunction` class. Configure a threshold for the total score to determine "high-scoring" molecules for the Replay Buffer.

- Validation: Test the scoring function on a set of 10 known active and 10 known inactive molecules to confirm it discriminates appropriately.
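The weighted-sum aggregation described in this protocol can be illustrated as follows. This is a sketch under stated assumptions: the function name `final_score`, the component names, and the 0.7 threshold are illustrative and are not taken from the REINVENT codebase.

```python
def final_score(component_scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted sum of component scores; weights are expected to sum to 1.0."""
    if set(component_scores) != set(weights):
        raise ValueError("scores and weights must name the same components")
    total_weight = sum(weights.values())
    if abs(total_weight - 1.0) > 1e-9:
        raise ValueError(f"weights sum to {total_weight}, expected 1.0")
    return sum(component_scores[name] * weights[name] for name in weights)


# Hypothetical component names and per-component scores in [0, 1].
weights = {"activity": 0.6, "physchem": 0.25, "alerts": 0.15}
scores = {"activity": 0.9, "physchem": 0.8, "alerts": 1.0}

total = final_score(scores, weights)
is_high_scoring = total >= 0.7  # illustrative Replay Buffer threshold
print(f"total={total:.2f}, high_scoring={is_high_scoring}")
```

Checking that the weights sum to 1.0 keeps the final score on the same 0-1 scale as the individual components, which makes a fixed threshold meaningful.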

Protocol 3: Running an Optimization Campaign with Replay Buffer

Objective: To execute a full iterative optimization cycle.

- Parameter Initialization: Set learning parameters: learning rate (e.g., 0.0001), batch size (e.g., 128), number of epochs per iteration (e.g., 1), sigma (for scaling rewards, e.g., 128).

- Sampling Phase: The Agent samples a batch of SMILES (e.g., 1024).

- Scoring Phase: The Scoring Function evaluates each molecule in the batch.

- Agent Update: The Agent's likelihoods for generating the high-scoring molecules are increased by minimizing the augmented likelihood loss: `Loss = Σ (log Prior(SMILES_i) + σ * Score_i - log Agent(SMILES_i))²`, where σ is the sigma value set during Parameter Initialization.

- Replay Buffer Update: Molecules with a total score above a defined threshold (e.g., 0.7) are stored in the Replay Buffer (capacity: e.g., 1000). If full, replace lowest-scoring entries.

- Iteration: Repeat steps 2-5 for a predefined number of steps (e.g., 500-2000).

- Monitoring: Track the average score, top score, and structural diversity (internal pairwise Tanimoto similarity) per iteration.
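The Replay Buffer update rule from this protocol (store molecules above a threshold, evict the lowest-scoring entry when full) can be sketched with a min-heap. The class below is a hypothetical illustration and does not reproduce REINVENT's own implementation; the class and method names are assumptions.

```python
import heapq


class ReplayBuffer:
    """Keeps the highest-scoring unique SMILES up to a fixed capacity."""

    def __init__(self, capacity: int = 1000, threshold: float = 0.7):
        self.capacity = capacity
        self.threshold = threshold
        self._heap: list[tuple[float, str]] = []  # min-heap: lowest score at index 0
        self._seen: set[str] = set()

    def add(self, smiles: str, score: float) -> bool:
        """Store the molecule if it scores above the threshold; return True if stored."""
        if score <= self.threshold or smiles in self._seen:
            return False
        if len(self._heap) < self.capacity:
            heapq.heappush(self._heap, (score, smiles))
            self._seen.add(smiles)
            return True
        if score <= self._heap[0][0]:
            return False  # not better than the current worst entry
        _, evicted = heapq.heapreplace(self._heap, (score, smiles))
        self._seen.discard(evicted)
        self._seen.add(smiles)
        return True

    def top(self, n: int) -> list[tuple[float, str]]:
        """Return the n best (score, SMILES) pairs, best first."""
        return heapq.nlargest(n, self._heap)


# Tiny capacity so the eviction path is exercised.
buffer = ReplayBuffer(capacity=2, threshold=0.7)
for smi, s in [("CCO", 0.75), ("CCN", 0.9), ("CCC", 0.5), ("CCCl", 0.8)]:
    buffer.add(smi, s)
best = buffer.top(2)
print(best)
```

A min-heap keeps the worst stored entry at the front, so both the "is this better than the worst?" check and the replacement are cheap even for buffers of several thousand molecules.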

Table 1: Typical Performance Metrics for REINVENT 4 Components in a Benchmark Optimization

| Component | Metric | Value Range / Typical Result | Notes |

|---|---|---|---|

| Prior (Initialization) | SMILES Validity | > 97% | On random sampling. |

| Prior (Initialization) | Novelty (vs. Training Set) | > 99% | |

| Scoring Function | Component Count | 3-6 | More than 6 can lead to noisy gradients. |

| Scoring Function | Weight per Component | 0.1 - 0.8 | Dominant objective usually 0.5-0.8. |

| Agent Optimization | Learning Rate | 1e-5 to 1e-4 | Critical for stable learning. |

| Agent Optimization | Sigma (σ) | 32 - 256 | Controls reward scaling. Higher σ gives the score more weight relative to the Prior likelihood. |

| Replay Buffer | Capacity | 500 - 5000 molecules | Prevents overfitting to recent successes. |

| Replay Buffer | Update Threshold (Score) | 0.5 - 0.8 | Depends on scoring function rigor. |

| Campaign Output | Top Score Achieved | 0.8 - 1.0 | Problem-dependent. |

| Campaign Output | % Novel Actives Generated | 60% - 100% | vs. known databases. |

Diagrams

Title: REINVENT 4 Core Architecture & Optimization Loop

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for REINVENT 4 Experiments

| Item | Function / Description | Example / Source |

|---|---|---|

| Pre-trained Prior Model | Provides foundational knowledge of chemical space and valid SMILES syntax. Serves as the starting point for the Agent. | Official REINVENT Prior (trained on ChEMBL), GuacaMol benchmark models. |

| Target-Specific Predictive Model | Key component of the Scoring Function. Predicts bioactivity (pIC50, Ki) or ADMET properties for generated molecules. | In-house QSAR model, publicly available models from ChEMBL or MoleculeNet. |

| Chemical Filtering Library | Enables rule-based scoring components to enforce physicochemical properties and remove undesirable sub-structures. | RDKit (for MW, LogP, etc.), NIHS/PAINS filter sets, REOS rules. |

| Diversity Metrics Package | Calculates molecular similarity to manage exploration/exploitation trade-off via the Replay Buffer and diversity scoring. | RDKit Fingerprints & Tanimoto, FCD (Fréchet ChemNet Distance) calculator. |

| Replay Buffer Implementation | Software module to store, retrieve, and manage high-scoring molecules across optimization iterations. | REINVENT's Experience class, custom FIFO buffer with score-based sorting. |

| Visualization & Analysis Suite | Tools to monitor campaign progress and analyze output chemistry. | Matplotlib/Seaborn (for metrics), t-SNE/UMAP plots (for chemical space), CheS-Mapper. |

Understanding the Reinforcement Learning (RL) Framework for Molecule Generation

Within the thesis "How to use REINVENT 4 for AI-driven generative molecule design research," a foundational pillar is the application of Reinforcement Learning (RL). RL reframes molecule generation as a sequential decision-making problem, where an agent (a generative model) interacts with an environment (chemical space and scoring functions) to learn a policy for generating molecules with optimized properties.

The standard RL framework in this context consists of:

- Agent: Typically a Recurrent Neural Network (RNN) or Transformer-based model that generates molecular string representations (e.g., SMILES) token-by-token.

- Environment: Defines the state (the current partial molecule) and provides a reward based on the completed molecule's properties.

- Reward Function: A critical component that calculates a numerical score quantifying the desirability of a generated molecule, often combining multiple objectives (e.g., drug-likeness, synthetic accessibility, target affinity).

- Policy: The agent's strategy for choosing the next token, which is iteratively updated to maximize the expected cumulative reward.

Key RL Paradigms in Molecule Generation

Table 1: Comparison of RL Paradigms for Molecule Generation

| Paradigm | Agent Update Method | Key Advantage | Common Challenge | Typical Use in REINVENT 4 Context |

|---|---|---|---|---|

| Policy Gradient (e.g., REINFORCE) | Directly optimizes policy parameters using estimated reward gradients. | Stable, on-policy learning. | High variance in gradient estimates. | Core algorithm for optimizing the Agent network against a customized Scoring Function. |

| Actor-Critic | Uses a Critic network to estimate value function, reducing variance in Actor (policy) updates. | Lower variance, more sample-efficient. | More complex to implement and tune. | Used in advanced configurations for faster convergence. |

| Proximal Policy Optimization (PPO) | Constrains policy updates to prevent destructive large steps. | More robust and reliable training. | Requires careful clipping parameter tuning. | Alternative for stabilizing fine-tuning of generative models. |

Application Notes: Integrating RL with REINVENT 4

REINVENT 4 operationalizes this RL framework through a modular architecture. The Prior network is initialized, often with a model pre-trained on a large corpus of known molecules, and stays fixed as a reference. The Agent network is a copy of the Prior that is actively updated. A user-defined Scoring Function (the Environment's reward function) evaluates generated molecules.

Core Workflow:

- The Agent generates a batch of molecules (sequences).

- Each molecule is scored by the composite Scoring Function.

- The scores are converted into a loss function that encourages high-rewarding actions.

- The Agent's policy is updated via gradient descent on the loss.

- The updated Agent may be used for the next iteration, or a modified transfer learning strategy is applied.
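One formulation of the score-to-loss step that is widely used in the REINVENT family is the augmented-likelihood loss: the Prior's log-likelihood of a sequence is shifted by the sigma-scaled score, and the Agent is trained to match that target. The sketch below shows only the arithmetic in plain Python, under the assumption that per-sequence log-likelihoods are already available; the function name and the input values are hypothetical, and a real run would compute this on tensors so gradients can flow to the Agent.

```python
from typing import Sequence


def augmented_likelihood_loss(
    prior_logp: Sequence[float],
    agent_logp: Sequence[float],
    scores: Sequence[float],
    sigma: float = 128.0,
) -> float:
    """Mean squared gap between augmented and Agent log-likelihoods.

    augmented_logp = prior_logp + sigma * score
    loss           = mean((augmented_logp - agent_logp) ** 2)
    """
    if not (len(prior_logp) == len(agent_logp) == len(scores)):
        raise ValueError("all inputs must have the same length")
    if not scores:
        raise ValueError("at least one sequence is required")
    losses = [
        (p + sigma * s - a) ** 2
        for p, a, s in zip(prior_logp, agent_logp, scores)
    ]
    return sum(losses) / len(losses)


# Hypothetical log-likelihoods: at step 0 the Agent is a copy of the Prior.
prior_logp = [-20.0, -25.0]
agent_logp = [-20.0, -25.0]
scores = [0.0, 0.5]  # scores in [0, 1] from the Scoring Function

loss = augmented_likelihood_loss(prior_logp, agent_logp, scores, sigma=128.0)
print(loss)
```

A molecule with score 0 contributes no loss while the Agent still agrees with the Prior, whereas a scored molecule pulls the Agent's likelihood upward; minimizing this loss by gradient descent is what shifts the Agent toward high-scoring chemistry.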

Quantitative Performance Metrics

Table 2: Typical RL-Based Molecule Generation Benchmarks (Illustrative Values)

| Metric | Description | Target Range (Ideal) | Example Baseline (Random Generation) | Example RL-Optimized Run |

|---|---|---|---|---|

| Internal Diversity | Average Tanimoto dissimilarity between generated molecules. | High (>0.8) | ~0.85 | ~0.70-0.80 |

| Novelty | Fraction of molecules not present in training set. | High (>0.9) | ~1.0 | ~0.95-1.0 |

| Success Rate | % of molecules passing all score filters. | Problem-dependent | <5% | 20-60% |

| Drug-likeness (QED) Score | Quantitative Estimate of Drug-likeness. | 0.6 - 1.0 | ~0.5 | ~0.7 - 0.9 |

| Synthetic Accessibility (SA) Score | Ease of synthesis (lower is easier). | < 4.5 | ~5.0 | ~3.0 - 4.0 |
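The Internal Diversity metric in the table (average pairwise Tanimoto dissimilarity) can be computed as sketched below. Fingerprints are represented here as sets of on-bit indices, which is a simplifying assumption; in practice they would come from a cheminformatics toolkit. The function names and the example bit sets are hypothetical.

```python
from itertools import combinations
from typing import Sequence


def tanimoto(a: frozenset[int], b: frozenset[int]) -> float:
    """Tanimoto similarity between two sets of on-bit indices."""
    union = len(a | b)
    # Two empty fingerprints are treated as identical (a convention, not a rule).
    return len(a & b) / union if union else 1.0


def internal_diversity(fingerprints: Sequence[frozenset[int]]) -> float:
    """Average pairwise Tanimoto dissimilarity (1 - similarity)."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return sum(1.0 - tanimoto(a, b) for a, b in pairs) / len(pairs)


# Hypothetical fingerprints for three generated molecules.
fps = [
    frozenset({1, 2, 3, 4}),
    frozenset({3, 4, 5, 6}),
    frozenset({7, 8}),
]
diversity = internal_diversity(fps)
print(f"internal diversity = {diversity:.3f}")
```

The pairwise loop is quadratic in the number of molecules, so for large batches it is common to estimate the metric on a random subsample.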

Experimental Protocols

Protocol: Standard RL Run in REINVENT 4 for Optimizing a Single Property

Objective: To fine-tune a generative model to produce molecules with high predicted activity against a target protein.

Materials: See "The Scientist's Toolkit" below. Software: REINVENT 4.0+ installed in a Conda environment.

Method:

- Configuration Preparation:

- Prepare a valid JSON configuration file.

- In the `"parameters"` section, set `"agent"` and `"prior"` to the same initial model file (e.g., a pre-trained USPTO model).

- Define the `"scoring_function"`. For a single property:

Run Initialization:

- Execute: `reinvent run -c config.json -o run_results/`.

- The system loads the Prior, copies it to create the Agent, and initializes the optimizer (e.g., Adam).

Sampling and Optimization Loop (per epoch):

- The Agent samples a set number of SMILES strings (`"batch_size"`).

- Invalid SMILES are penalized with a score of 0.

- Valid SMILES are passed to the scoring function, returning a score between 0-1.

- The loss compares the Agent's log-likelihood for each sequence with the augmented likelihood, i.e., the Prior's log-likelihood shifted by the sigma-scaled score: `Loss = (log Prior(SMILES) + sigma * Score - log Agent(SMILES))²`.

- The loss is backpropagated to update the Agent's weights.

- Save the state of the Agent periodically.

Analysis:

- Monitor the `"results.csv"` file for average scores and diversity metrics.

- Visualize the top-scoring SMILES structures from the final epoch.

Protocol: Multi-Objective Optimization with Composite Score and Diversity Filter

Objective: To generate novel, synthetically accessible molecules with high activity and acceptable solubility.

Method:

- Configure Composite Scoring Function:

Apply Diversity Filter (DF):

- In the `"diversity_filter"` section of the config, enable the filter (e.g., `"NoFilterWithPenalty"` or `"IdenticalMurckoScaffold"`).

- The DF tracks unique scaffolds (Murcko or otherwise) and applies a penalty to molecules with scaffolds that have already been discovered, promoting exploration.

- Set parameters like `"bucket_size"` and `"penalty_multiplier"`.

Run and Validate:

- Execute the run. The RL agent now receives a reward shaped by multiple objectives and a diversity penalty.

- Post-process generated molecules through more rigorous property prediction or clustering analyses.
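The bucket-and-penalty idea behind the Diversity Filter can be sketched as below. This is a simplified illustration and not REINVENT's implementation: the class name is hypothetical, the exact penalty behavior of the built-in filters may differ, and scaffolds are passed in as precomputed strings (in practice they would be derived from each molecule by a cheminformatics toolkit).

```python
from collections import defaultdict


class ScaffoldDiversityFilter:
    """Penalizes repeats and molecules whose scaffold bucket is already full."""

    def __init__(self, bucket_size: int = 25, penalty_multiplier: float = 0.5):
        self.bucket_size = bucket_size
        self.penalty_multiplier = penalty_multiplier
        self._buckets: dict[str, set[str]] = defaultdict(set)

    def adjust(self, smiles: str, scaffold: str, score: float) -> float:
        """Return the score after applying the diversity penalty, if any."""
        bucket = self._buckets[scaffold]
        if smiles in bucket:
            return score * self.penalty_multiplier  # exact repeat
        if len(bucket) >= self.bucket_size:
            return score * self.penalty_multiplier  # scaffold already well explored
        bucket.add(smiles)
        return score


# Three hypothetical analogs sharing one scaffold, with a bucket of size 2.
df = ScaffoldDiversityFilter(bucket_size=2, penalty_multiplier=0.5)
molecules = ["Cc1ccccc1", "CCc1ccccc1", "CCCc1ccccc1"]
adjusted = [df.adjust(smi, "c1ccccc1", 0.8) for smi in molecules]
print(adjusted)
```

The first two analogs fill the bucket and keep their score; the third is penalized, which lowers the reward for revisiting the same scaffold and nudges the Agent toward new ones.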

Visualizations

RL Framework for Molecule Generation

REINVENT 4 RL Optimization Loop

The Scientist's Toolkit

Table 3: Essential Research Reagents & Solutions for RL-Driven Molecule Generation

| Item | Function in the Experiment | Example/Specification |

|---|---|---|

| Pre-trained Prior Model | Provides a foundational understanding of chemical space and valid SMILES syntax. Serves as the starting policy for RL. | Model pre-trained on ChEMBL, PubChem, or USPTO datasets (e.g., random.prior or ChEMBL.prior in REINVENT). |

| Target-Specific Predictive Model | Core of the scoring function. Predicts the property (e.g., pIC50, solubility) for a given molecule structure. | A scikit-learn/Random Forest or a simple neural network model saved as a .pkl file. Must accept SMILES or fingerprints as input. |

| Computational Environment | Isolated software environment with all necessary dependencies. | Conda environment with REINVENT 4, RDKit, TensorFlow/PyTorch, and standard data science libraries. |

| Validation Dataset | A set of known actives/inactives used to validate the generative output and scoring function performance. | CSV file containing SMILES and measured activity for the target of interest. |

| Diversity Filter Parameters | Algorithmic "reagent" that directs exploration in chemical space by managing scaffold memory. | Configuration defining scaffold type (Murcko, Bemis), bucket sizes, and penalty multipliers. |

| RL Hyperparameter Set | Tunes the learning dynamics of the policy update. | Defined values for sigma (exploitation vs. exploration), learning_rate, batch_size, and number of steps. |

| Chemical Intelligence Software (RDKit) | Performs essential cheminformatics tasks: SMILES validation, descriptor calculation, scaffold decomposition, and visualization. | RDKit library installed in the Python environment. |

This document serves as a foundational technical guide for the thesis "How to use REINVENT 4 for AI-driven generative molecule design research." Successfully deploying and utilizing the REINVENT 4 platform requires a correctly configured computational environment. This section details the essential software prerequisites, environment management strategies, and hardware considerations to ensure reproducible and efficient generative molecular design experiments.

Essential Python Libraries and Dependencies

REINVENT 4 is built upon a specific stack of Python libraries for deep learning, cheminformatics, and workflow management. The following table summarizes the core libraries and their roles in the generative pipeline.

Table 1: Core Python Libraries for REINVENT 4

| Library | Version Range (Current) | Primary Function in REINVENT 4 |

|---|---|---|

| PyTorch | 2.0+ | Provides the core deep learning framework for running and training the Reinforcement Learning (RL) agent and prior network. |

| RDKit | 2022.09+ | Handles molecule manipulation, fingerprint generation, SMILES parsing, and calculation of chemical properties/descriptors. |

| REINVENT-Core | 4.0 | The central library containing the reinforcement learning logic, scoring functions, and the main application programming interface (API). |

| REINVENT-Community | 4.0 | Provides standardized scoring components (e.g., QSAR models, similarity), parsers, and user-friendly utilities. |

| PyTorch Lightning | 2.0+ | Simplifies the training loop and experiment organization for the generative model. |

| Pandas | 1.5+ | Manages tabular data for input libraries, generated compounds, and results analysis. |

| NumPy | 1.23+ | Supports numerical operations for array manipulations within scoring functions. |

| Jupyter | 1.0+ | Facilitates interactive prototyping and analysis of generative runs in notebook environments. |

Conda Environment Configuration Protocol

Using Conda is the recommended method to manage dependencies and avoid conflicts. Below is a step-by-step protocol for setting up the environment.

Protocol 3.1: Creating a Conda Environment for REINVENT 4

- Install Miniconda: Download and install the latest Miniconda distribution from https://docs.conda.io/en/latest/miniconda.html.

- Create Environment: Open a terminal (Anaconda Prompt on Windows) and execute:

- Install PyTorch: Install the appropriate version of PyTorch with CUDA support for GPU or CPU-only. Check https://pytorch.org/get-started/locally/ for the latest command.

- For NVIDIA GPU (CUDA 11.8):

- For CPU only:

- Install RDKit: Install via conda-forge.

- Install REINVENT 4 Libraries: Install the core and community packages via pip.

- Verify Installation: Start a Python interpreter and test imports:
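A quick way to run the import check is sketched below. It only tests whether each top-level module can be located, without importing it. The module names in the list are assumptions based on the libraries named in this section; adjust them to match what was actually installed in your environment.

```python
import importlib.util


def check_imports(modules: list[str]) -> dict[str, bool]:
    """Map each top-level module name to True if it can be located."""
    return {name: importlib.util.find_spec(name) is not None for name in modules}


# Module names are assumptions; edit the list to match your installation.
status = check_imports(["torch", "rdkit", "reinvent", "pandas", "numpy"])
for name, found in status.items():
    print(f"{name:10s} {'OK' if found else 'MISSING'}")
```

Any module reported as MISSING points to an installation step that needs to be repeated inside the active Conda environment.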

Hardware Considerations and Benchmarking

The choice between CPU and GPU significantly impacts the speed of compound generation and model training.

Table 2: Hardware Configuration Comparison

| Component | Minimum Viable | Recommended for Research | High-Throughput |

|---|---|---|---|

| CPU | 4-core modern CPU (Intel i7 / AMD Ryzen 5) | 8-core CPU (Intel i9 / AMD Ryzen 7) | 16+ core CPU (Xeon / Threadripper) |

| RAM | 16 GB | 32 GB | 64+ GB |

| GPU | Integrated / None (CPU-only) | NVIDIA RTX 4070 Ti (12GB VRAM) | NVIDIA RTX 4090 (24GB) or A100 (40/80GB) |

| Storage | 100 GB HDD/SSD | 500 GB NVMe SSD | 1 TB+ NVMe SSD |

| Throughput (Est.) | ~100-1k molecules/sec (CPU) | ~10k-50k molecules/sec | ~100k+ molecules/sec |

Protocol 4.1: Benchmarking Hardware for a Generative Run

- Objective: Quantify the molecules generated per second (MGPS) for a standard REINVENT 4 run on your hardware.

- Setup: Activate the `reinvent4` Conda environment and prepare a standard configuration JSON file (e.g., `benchmark.json`).

- Execution: Run REINVENT for a fixed number of steps (e.g., 1000) using the command line interface.

- Data Collection: In the generated log file, locate the line reporting "MGPS" (Molecules Generated Per Second).

- Analysis: Record the MGPS value. Repeat the run 3 times and calculate the average to account for system variability.
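The averaging in the Analysis step, plus a generic way to time a generation call yourself, can be sketched as follows. The helper names are hypothetical, the three throughput values are placeholders rather than measured data, and the stand-in generator would be replaced by a call into your own sampling code.

```python
import statistics
import time
from typing import Callable, Sequence


def measure_throughput(generate: Callable[[int], Sequence[str]], n_molecules: int) -> float:
    """Time one call to `generate` and return molecules produced per second."""
    start = time.perf_counter()
    produced = generate(n_molecules)
    elapsed = time.perf_counter() - start
    return len(produced) / max(elapsed, 1e-9)  # guard against a zero interval


def summarize(throughputs: Sequence[float]) -> tuple[float, float]:
    """Return (mean, sample standard deviation) over repeated runs."""
    mean = statistics.mean(throughputs)
    spread = statistics.stdev(throughputs) if len(throughputs) > 1 else 0.0
    return mean, spread


# Placeholder throughput values from three repeated runs (not measured data).
runs = [1180.0, 1225.0, 1195.0]
mean_mgps, spread = summarize(runs)
print(f"mean={mean_mgps:.1f} molecules/s, stdev={spread:.1f}")

# Timing a stand-in generator; replace the lambda with real sampling.
dummy_rate = measure_throughput(lambda n: ["C"] * n, 10_000)
```

Reporting the spread alongside the mean makes it easier to tell a real hardware difference from run-to-run noise.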

System Architecture and Workflow

The following diagram illustrates the logical flow and component interaction within a standard REINVENT 4 run.

Title: REINVENT 4 Generative Design Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Beyond software, successful experimentation requires curated data and computational "reagents."

Table 3: Essential Research Materials & Resources

| Item | Function/Source | Description |

|---|---|---|

| Initial Compound Library | ZINC, ChEMBL, in-house databases | A set of starting molecules (in SMILES format) for seeding the generative model or for similarity scoring. |

| Prior Network Weights | Provided with REINVENT or pre-trained. | A pre-trained neural network that provides the initial generative policy for molecule creation. |

| Validation Dataset | PubChem, ChEMBL. | A held-out set of bioactive molecules for benchmarking the model's ability to generate valid, novel scaffolds. |

| Scoring Function Components | REINVENT-Community, custom code. | Modular functions (e.g., QSAR, similarity, synthesizability) that define the objective for optimization. |

| Configuration JSON Template | REINVENT documentation. | The master file that defines all run parameters: paths, scoring, learning rates, and stopping criteria. |

| Benchmarked Hardware Profile | Self-generated (Protocol 4.1). | A performance baseline (MGPS) for planning experiment durations and resource allocation. |

This document provides application notes and protocols for utilizing the REINVENT 4 repository, framed within a thesis on AI-driven generative molecule design for research professionals.

The official REINVENT 4 repository (GitHub: molecularinformatics/reinvent-community) is the central hub for resources. The table below summarizes its key quantitative aspects.

Table 1: REINVENT 4 Repository Core Components & Metrics

| Component | Description | Key Metrics / Notes |

|---|---|---|

| Releases | Versioned stable builds. | Latest version: 4.1 (as of late 2025). |

| Stars | GitHub repository popularity. | ~500 stars (indicative of community adoption). |

| Forks | Repository copies for development. | ~150 forks (indicative of derivative work). |

| Issues | Bug reports and feature requests. | ~50 open issues; demonstrates active maintenance. |

| Wiki | Primary official documentation. | Contains setup, theory, and tutorial guides. |

| Notebooks/ | Jupyter notebook tutorials. | Contains 5+ core tutorial notebooks. |

| Examples/ | Configuration and script examples. | Includes demo configs for standard workflows. |

Key Documentation & Tutorial Pathways

Protocol 1: Initial Setup and Validation

Objective: To establish a functional local REINVENT 4 environment and validate its core components.

Materials & Reagents:

- Hardware: Computer with CUDA-capable GPU (recommended) or CPU.

- Software: Conda package manager (Miniconda or Anaconda), Git.

- Repository: REINVENT 4 GitHub repository.

Methodology:

- Clone Repository: Execute `git clone https://github.com/molecularinformatics/reinvent-community.git`.

- Create Conda Environment: Navigate to the cloned directory and run `conda env create -f reinvent_env.yaml`. This creates an environment named `reinvent`.

- Activate Environment: Run `conda activate reinvent`.

- Install Package: Execute `pip install -e .` to install REINVENT in development mode.

- Validation Test: Run the provided unit tests via `pytest tests/ -v` to verify installation integrity. A successful run confirms core functionality.

Diagram: REINVENT 4 Setup and Validation Workflow

Protocol 2: Running a Standard De Novo Design Experiment

Objective: To execute a basic generative run for a single-activity target using provided example configurations.

Materials & Reagents:

- REINVENT 4 Environment: As established in Protocol 1.

- Configuration File: `examples/runconfigs/simple_start.json`.

- Input Files: PRIOR model (`models/random.prior`), scoring function component (`examples/scoring_functions/simple.json`).

Methodology:

- Configure Run: Examine the `simple_start.json` file. Key parameters include: `"num_steps": 100`, `"batch_size": 128`, `"sigma": 120`. The `"scoring_function"` section points to the component JSON.

- Adapt Scoring Function: Open the scoring function JSON. It defines a simple `"matching_substructure"` penalty. Modify the SMARTS pattern to a relevant scaffold for your project.

- Launch Experiment: In the terminal, with the `reinvent` environment active, run: `python /reinvent.py -c examples/runconfigs/simple_start.json -o results/simple_run/`. The `-o` flag specifies the output directory.

- Monitor Output: The run logs progress to the console. The output directory will contain `progress.log`, `scaffold_memory.csv`, and `results.csv` with generated structures and scores.

The Scientist's Toolkit: Core Research Reagents for REINVENT 4

Table 2: Essential Components for a Generative Experiment

| Item | Function | Example / Note |

|---|---|---|

| Prior Model | Provides the base language model for molecule generation. Encodes chemical grammar. | random.prior (untrained), or a transfer-learned model. |

| Agent | The model being optimized during Reinforcement Learning (RL). Starts as a copy of the Prior. | Defined in run configuration. |

| Scoring Function | The multi-component function that calculates the desirability (score) of a generated molecule. | Sum of weighted components (e.g., QED, SAScore, docking). |

| Configuration JSON | The main experiment file defining model paths, parameters, and workflow steps. | simple_start.json, transfer_learning.json. |

| Sampled SMILES | The molecular structures (as text strings) generated by the Agent in each step. | Primary output for analysis. |

Protocol 3: Utilizing the Wiki and Issue Tracker for Troubleshooting

Objective: To effectively diagnose and solve common runtime errors by leveraging community knowledge.

Methodology:

- Error Identification: When an error occurs, note the exact traceback message (e.g., `CUDA out of memory`, `Invalid SMILES`).

- Wiki Search: First, consult the repository's Wiki. Search for keywords like "Installation", "Troubleshooting", or "FAQ".

- Issue Tracker Search: Navigate to the GitHub "Issues" tab. Use the search bar with error keywords. Filter by "closed" issues to see resolved cases.

- Solution Application: Follow the steps outlined in a matching issue (e.g., reduce `batch_size` for memory errors, check input SMILES format).

- Engagement: If no solution exists, create a new issue. Provide the full error log, your configuration, and system details.

Diagram: Community-Powered Problem Resolution Pathway

Protocol 4: Building a Custom Scoring Function Component

Objective: To design and implement a user-defined scoring component, such as a predicted IC50 value from a QSAR model.

Materials & Reagents:

- Template: `examples/scoring_functions/simple.json`.

- Python Script: Your predictive model encapsulated in a class.

- REINVENT 4 Environment: For testing.

Methodology:

- Define Component JSON: Create a new JSON file (e.g., my_qsar.json). Use the standard structure: {"name": "my_ic50", "weight": 1, "specific_parameters": {"model_path": "my_model.pkl", "threshold": 6.0}}.

- Develop Python Class: Create a file my_qsar_component.py. The class must inherit from ScoringFunctionComponent and implement the calculate_score() method. It should load your model and predict scores for a list of SMILES.

- Integrate: Ensure your component is added to the scoring function registry within REINVENT's codebase, or place the script in a location where it can be imported dynamically (advanced).

- Test: Reference my_qsar.json in your main run configuration. Run a short validation to ensure scores are computed without error.
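The class described in the methodology could look roughly like the sketch below. It is illustrative only: the ScoringFunctionComponent base class is a local stand-in defined here (not REINVENT's actual class), toy_predictor is a hypothetical placeholder for a real QSAR model, and the sigmoid transform around the threshold is an assumption about how a predicted pIC50 might be mapped to a 0-1 score.

```python
import math
from abc import ABC, abstractmethod
from typing import Callable, List, Sequence


class ScoringFunctionComponent(ABC):
    """Local stand-in for the base class named in the protocol (assumption)."""

    def __init__(self, name: str, weight: float, specific_parameters: dict):
        self.name = name
        self.weight = weight
        self.specific_parameters = specific_parameters

    @abstractmethod
    def calculate_score(self, smiles: Sequence[str]) -> List[float]:
        """Return one score per input SMILES."""


class MyIC50Component(ScoringFunctionComponent):
    """Maps a predicted pIC50 to a 0-1 score with a sigmoid centred on the threshold."""

    def __init__(self, name, weight, specific_parameters,
                 predictor: Callable[[str], float]):
        super().__init__(name, weight, specific_parameters)
        self.predictor = predictor
        self.threshold = float(specific_parameters.get("threshold", 6.0))

    def calculate_score(self, smiles: Sequence[str]) -> List[float]:
        scores = []
        for smi in smiles:
            try:
                pic50 = self.predictor(smi)
            except Exception:
                # A molecule the model cannot handle gets the lowest score.
                scores.append(0.0)
                continue
            scores.append(1.0 / (1.0 + math.exp(-(pic50 - self.threshold))))
        return scores


def toy_predictor(smiles: str) -> float:
    """Hypothetical placeholder for a trained model; returns a fake pIC50."""
    if not smiles:
        raise ValueError("empty SMILES")
    return 4.0 + 0.25 * len(smiles)


component = MyIC50Component(
    "my_ic50", 1.0, {"model_path": "my_model.pkl", "threshold": 6.0}, toy_predictor
)
scores = component.calculate_score(["CCO", "c1ccccc1O", ""])
print(scores)
```

In a real component, the predictor would be loaded once in the constructor from the model_path parameter, so the model is not re-read for every batch.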

Table 3: Structure of a Custom Scoring Component

| Layer | Content | Purpose |

|---|---|---|

| Configuration (JSON) | Name, weight, parameters (paths, thresholds). | Declares how the component integrates into the scoring function. |

| Logic (Python Class) | __init__(): Loads models. calculate_score(): Computes score per molecule. | Contains the executable logic for score calculation. |

| Registry | Entry point or import mechanism. | Makes the component visible to the REINVENT core. |

Your First REINVENT 4 Run: A Practical Tutorial from Configuration to Novel Compound Generation

This protocol details the initial setup for REINVENT 4, a de novo molecular design platform for AI-driven generative chemistry. A stable environment is critical for reproducible research in computational drug discovery.

System Requirements & Prerequisites

The following table summarizes the minimum and recommended system configurations.

Table 1: System Requirements for REINVENT 4

| Component | Minimum Requirement | Recommended Specification |

|---|---|---|

| Operating System | Linux (Ubuntu 20.04/22.04) or Windows 10/11 (WSL2) | Linux (Ubuntu 22.04 LTS) |

| CPU | 64-bit, 4 cores | 64-bit, 8+ cores |

| RAM | 16 GB | 32 GB or more |

| GPU | Not required for basic runs | NVIDIA GPU (e.g., RTX 3080/4090, A100) with 8+ GB VRAM |

| Storage | 10 GB free space | 50 GB free SSD space |

| Python Version | 3.8 | 3.9 or 3.10 |

Environment Setup Using Conda

Conda is the recommended method as it manages non-Python dependencies.

Protocol 3.1: Creating a Dedicated Conda Environment

- Install Miniconda/Anaconda: If not installed, download and install Miniconda from https://docs.conda.io/en/latest/miniconda.html.

- Open a terminal (or Anaconda Prompt on Windows).

- Create a new environment with Python 3.9: conda create -n reinvent4 python=3.9

- Activate the environment: conda activate reinvent4

Protocol 3.2: Installing REINVENT 4 Core Package

With the reinvent4 environment active, install the package via pip.

Note: The core REINVENT 4 package is reported to be available on PyPI. Pinning a specific version keeps the environment reproducible.

Environment Setup Using Pip & Virtualenv

For users preferring lightweight virtual environments.

Protocol 4.1: Creating a Virtual Environment

- Ensure venv is installed (standard with Python 3.3+).

- Create a virtual environment: python -m venv reinvent4_venv

- Activate it:

- Linux/Mac: source reinvent4_venv/bin/activate

- Windows: .\reinvent4_venv\Scripts\activate

Protocol 4.2: Installing REINVENT and Dependencies

Upgrade pip and setuptools:

Install REINVENT 4:

Critical Dependency Installation

Certain functionalities require additional system libraries.

Protocol 5.1: Installing RDKit Dependencies (Linux)

RDKit is a core cheminformatics dependency. Install system libraries before the Python package.

Subsequently, install within your environment:

Verification and Testing

Confirm a successful installation.

Protocol 6.1: Basic Functionality Test

- In your activated environment, start a Python interpreter.

Run the following import statements:

A successful import without errors indicates a correct core setup.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Software & Tools for REINVENT 4 Research

| Item | Function/Benefit | Recommended Source/Version |

|---|---|---|

| REINVENT 4 Core | Primary Python library for generative model orchestration, scoring, and reinforcement learning. | PyPI: reInvent-ai==4.0 |

| PyTorch | Deep learning framework backend for running generative models (e.g., RNNs, Transformers). | Conda/Pip: Match CUDA version to GPU. |

| RDKit | Cheminformatics toolkit for molecular manipulation, descriptor calculation, and SMILES handling. | Conda: rdkit or PyPI: rdkit-pypi |

| Jupyter Lab | Interactive development environment for prototyping workflows and analyzing results. | Pip: jupyterlab |

| Pandas & NumPy | Data manipulation and numerical computation for processing large datasets of molecules and scores. | Bundled with installation. |

| Matplotlib/Seaborn | Visualization of chemical space, score distributions, and training metrics. | Pip: matplotlib, seaborn |

| Standardizer (e.g., chembl_structure_pipeline) | Tool for standardizing molecular structures to ensure consistent input and output representations. | Pip: chembl-structure-pipeline |

Visual Workflow: REINVENT 4 Setup and Validation Pathway

Title: REINVENT 4 Installation and Validation Workflow

Title: Software Toolkit Interdependencies for REINVENT 4 Research

In the broader thesis on using REINVENT 4 for AI-driven generative molecule design, preparing the input files constitutes the critical foundation for a successful experiment. This step defines the chemical space, the objectives for the AI to optimize, and the runtime parameters. This protocol details the creation of three essential files: the input SMILES file, the scoring function configuration, and the main run configuration JSON.

Key Input Files & Their Functions

Table 1: Core Input Files for REINVENT 4

| File Name | Format | Primary Function | Required/Optional |

|---|---|---|---|

| input.smi | Text (.smi) | Provides starting molecules for the generation. | Required |

| scoring_function.json | JSON | Defines the components and weights of the objective function for the AI. | Required |

| config.json | JSON | Sets all parameters for the reinforcement learning run (e.g., agent, prior, innovation). | Required |

Detailed Protocols

Protocol 3.1: Preparing the Input SMILES File

Objective: To create a file containing valid SMILES strings that serve as starting points for the generative model.

- Source Molecules: Collect a set of molecules relevant to your target. This can be:

- Known actives from literature or internal databases.

- A diverse set from a public library (e.g., ZINC) to encourage exploration.

- A single scaffold of interest.

- Formatting:

- Use a plain text editor or spreadsheet software.

- Place one canonical SMILES string per line. No headers or other columns are required.

- Example input.smi content:

- Validation: Use RDKit (via Python or KNIME) to ensure all SMILES are valid and canonicalized. Remove any that fail parsing.
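The formatting and validation steps above can be sketched as a small cleaning routine. This is a minimal sketch rather than an official REINVENT utility: clean_smiles and rdkit_canonicalize are hypothetical helper names, and the RDKit import is kept inside the helper so the cleaning logic itself runs without RDKit installed.

```python
from typing import Callable, Iterable, List, Optional


def clean_smiles(
    lines: Iterable[str],
    canonicalize: Optional[Callable[[str], Optional[str]]] = None,
) -> List[str]:
    """Keep the first token of each non-empty line, drop failures and duplicates."""
    seen = set()
    cleaned = []
    for raw in lines:
        parts = raw.split()
        if not parts:
            continue  # skip blank lines
        smi = parts[0]  # ignore any trailing name/ID column
        if canonicalize is not None:
            smi = canonicalize(smi)
            if smi is None:
                continue  # parsing failed, drop the entry
        if smi not in seen:
            seen.add(smi)
            cleaned.append(smi)
    return cleaned


def rdkit_canonicalize(smi: str) -> Optional[str]:
    """Return the RDKit canonical SMILES, or None if the string does not parse."""
    from rdkit import Chem  # imported lazily; requires RDKit

    mol = Chem.MolFromSmiles(smi)
    return Chem.MolToSmiles(mol) if mol is not None else None


# Without a canonicalizer the function only normalises layout and removes duplicates.
print(clean_smiles(["CCO\n", "", "CCO", "c1ccccc1 benzene"]))
```

Passing canonicalize=rdkit_canonicalize additionally removes unparsable strings and merges different spellings of the same molecule.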

Protocol 3.2: Configuring the Scoring Function (scoring_function.json)

Objective: To architect the multi-parameter objective that the AI will learn to optimize.

- Structure: The file contains a JSON list of "component" dictionaries, each with a name, weight, and specific parameters.

- Component Selection: Choose from built-in components (see Table 2) and/or custom Python scripts.

- Parameter Definition: For each component, define its specific parameters (e.g., SMARTS pattern for substructure filters, target value for QED).

- Weight Assignment: Assign positive (desired) or negative (penalized) weights to balance the contribution of each component. Normalization is often applied internally.

- Example Component for scoring_function.json:
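As an illustration of the structure described above, the following sketch builds a two-component list in Python and serializes it to JSON. The key layout (name, weight, specific_parameters) follows the custom component example earlier in this guide. The nitro-group SMARTS is only an illustrative alert, and the exact schema expected by a given REINVENT 4 release should be checked against its own templates.

```python
import json

# Two illustrative components; names follow Table 2, values are examples only.
components = [
    {"name": "qed", "weight": 1.0, "specific_parameters": {}},
    {
        "name": "custom_alerts",
        "weight": -1.0,
        # Illustrative structural alert: nitro group written as SMARTS.
        "specific_parameters": {"smiles": ["[N+](=O)[O-]"]},
    },
]

text = json.dumps(components, indent=2)
print(text)

# To write the file used in the run configuration:
# with open("scoring_function.json", "w") as handle:
#     handle.write(text)
```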

Table 2: Common Scoring Function Components (REINVENT 4)

| Component Name | Key Function | Typical Weight Range | Key Parameters |

|---|---|---|---|

| qed | Quantitative Estimate of Drug-likeness | 0.5 - 1.5 | {} |

| matching_substructure | Penalizes/Encourages specific substructures | -2.0 - 2.0 | "smiles": ["[SMARTS]"] |

| custom_alerts | Penalizes unwanted structural alerts | -1.5 - 0.0 | "smiles": ["[SMARTS]"] |

| predictive_property | Links to external ML model (e.g., pIC50) | Variable | Model path, transform |

| selectivity | Optimizes for selectivity between two models | Variable | Model paths, transform |

| tanimoto_similarity | Encourages similarity to a reference | 0.0 - 1.5 | "smiles": ["CCO"] |

| rocs | Shape/feature overlay (requires ROCS) | Variable | Ref. molecule, input params |

Protocol 3.3: Configuring the Main Run (config.json)

Objective: To set the hyperparameters and paths for the reinforcement learning cycle.

- Use the Template: Start from the official REINVENT 4 config_template.json.

- Critical Path Settings:

- "input": "/path/to/input.smi"

- "output_dir": "/path/to/results/"

- "scoring_function": "/path/to/scoring_function.json"

- "diversity_filter": Configure to maintain molecular diversity.

- Key Hyperparameter Groups:

- "reinforcement_learning": Set "sigma" (weight of the score relative to the prior likelihood), learning rate, batch size.

- "stage": Define number of steps ("n_steps"), e.g., 1000-5000.

- "agent" & "prior": Specify the paths to the agent and prior network files (.ckpt or .json).

- Validation: Ensure all file paths are absolute or correctly relative. Validate JSON syntax using an online validator or Python's json.load().
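The validation step can be automated with a short script. The sketch below assumes the top-level keys "input" and "scoring_function" named in the path settings above. validate_config is a hypothetical helper, not part of REINVENT, and relative paths are resolved against the directory of the configuration file (an assumption about how the run will be launched).

```python
import json
from pathlib import Path

# Keys that should point to existing files (taken from the protocol above).
PATH_KEYS = ("input", "scoring_function")


def validate_config(config_path):
    """Return a list of human-readable problems; an empty list means no issue found."""
    path = Path(config_path)
    try:
        with path.open() as handle:
            config = json.load(handle)
    except (OSError, json.JSONDecodeError) as exc:
        return [f"cannot read {path}: {exc}"]
    if not isinstance(config, dict):
        return ["top-level JSON value is not an object"]

    problems = []
    for key in PATH_KEYS:
        value = config.get(key)
        if value is None:
            problems.append(f"missing key: {key}")
            continue
        candidate = Path(value)
        if not candidate.is_absolute():
            candidate = path.parent / candidate
        if not candidate.exists():
            problems.append(f"{key} points to a missing file: {candidate}")
    return problems


# Safe to run even if config.json does not exist: the problem is reported, not raised.
print(validate_config("config.json"))
```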

Workflow Diagram

Diagram Title: REINVENT 4 Input File Preparation Workflow

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Tools

| Item | Category | Function in Input Preparation | Example/Note |

|---|---|---|---|

| RDKit | Cheminformatics Library | Validates and canonicalizes SMILES; generates descriptors for custom scoring. | Use Chem.CanonSmiles() in Python. |

| KNIME / PaDEL | GUI Cheminformatics | Alternative for researchers to prepare and filter SMILES files without coding. | PaDEL-Descriptor node. |

| ChEMBL / PubChem | Public Database | Source for bioactive SMILES strings to use in input.smi. | Download SDF, extract SMILES. |

| SMILES/SMARTS | Chemical Notation | Standard language for representing molecules (SMILES) and substructure patterns (SMARTS). | [#6]1:[#6]:[#6]:[#6]:[#6]:[#6]:1 matches a benzene ring. |

| JSON Validator | Code Utility | Ensures config.json and scoring_function.json are syntactically correct. | Online JSONLint or Python's json module. |

| Custom Prediction Model (e.g., Random Forest) | Machine Learning Model | Used as a component in the scoring function to predict bioactivity or ADMET properties. | Must be saved in a REINVENT-compatible format (.pkl). |

| ROCS (Optional) | Shape Comparison Software | Provides 3D shape-based scoring component if licensed and installed. | Integrated via the rocs component. |

This application note details the critical configuration phase within REINVENT 4.0 for generative molecular design. Proper parameterization of the sampling, learning, and diversity components dictates the success of the AI-driven exploration of chemical space, balancing the discovery of novel, valid structures with the optimization towards desired properties.

Core Parameter Tables

Table 1: Primary Configuration Parameters for a Standard REINVENT 4.0 Run

| Parameter Group | Key Parameter | Typical Value/Range | Function & Impact |

|---|---|---|---|

| Sampling | number_of_steps | 500 - 2000 | Total number of sampling/learning iterations in the run. Scales computational cost. |

| Sampling | batch_size | 64 - 256 | Number of SMILES sampled in parallel. Affects memory usage and speed. |

| Sampling | sampling_model | randomize / multinomial | Strategy for selecting next token. Randomize encourages exploration. |

| Sampling | temperature | 0.7 - 1.2 | Controls randomness in sampling. Higher = more diverse/risky output. |

| Learning | learning_rate | 0.0001 - 0.001 | Step size for optimizer. Too high causes instability; too low slows learning. |

| Learning | sigma | 128 | Scaling factor applied to the score in the augmented likelihood. Higher values weight the score more heavily relative to the prior. |

| Learning | learning_rate_decay | Enabled/Disabled | Reduces learning rate over time to converge more stably. |

| Learning | kl_threshold | 0.0 - 0.5 | Constrains policy update to prevent catastrophic forgetting of prior. |

| Diversity Filter | filter_threshold | 0.5 - 0.8 | Minimum Tanimoto similarity to keep a scaffold in the memory. |

| Diversity Filter | memory_size | 100 - 500 | Max number of unique scaffolds to store. Limits long-term memory. |

| Diversity Filter | minsimilarity | 0.4 - 0.7 | Threshold for declaring a scaffold as "novel" compared to memory. |

Table 2: Scoring Function Component Parameters (Example: Dual Objectives)

| Component Name | Weight | Parameters | Purpose |

|---|---|---|---|

| qed | 1.0 | N/A | Maximizes Quantitative Estimate of Drug-likeness. |

| custom_alerts | -1.0 | smarts: [[#7]!@[#6]1:[#6]:[#6]:[#6]:[#6]:[#6]:1] | Penalizes molecules with unwanted structural motifs (e.g., aniline). |

| predictive_model | 2.0 | model_path: drd2_model.pkl | Maximizes predicted activity from a pre-trained DRD2 model. |

| tpsa | 0.5 | min: 40, max: 120 | Rewards molecules with Topological Polar Surface Area in a desired range. |

Experimental Protocols

Protocol 3.1: Configuring and Launching a REINVENT 4.0 Run

Objective: To set up and initiate a generative run targeting dopamine receptor D2 (DRD2) activity with high synthetic accessibility.

Materials: REINVENT 4.0 installation, Prior model (Prior.pkl), DRD2 predictive model (DRD2.pkl), configuration JSON template.

Procedure:

- Parameter File Creation: Copy the default config.json template. Define the run_type as reinforcement_learning.

- Sampling Settings: Set "number_of_steps": 1000, "batch_size": 128, "sampling_model": "randomize", "temperature": 1.0.

- Diversity Filter: Configure "diversity_filter": {"name": "IdenticalMurckoScaffold", "memory_size": 200, "minsimilarity": 0.5}.

- Scoring Function: Define a composite score as the weighted sum of:

- PredictiveProperty (weight=2.0, model_path=DRD2.pkl, transform=sigmoid).

- SAScore (weight=1.0, transform=reverse_sigmoid, high=4.0).

- CustomAlerts (weight=-1.0, smarts patterns for pan-assay interference).

- Learning Parameters: Set "sigma": 128, "learning_rate": 0.0005, "kl_threshold": 0.3.

- Output: Specify "save_every_n_epochs": 50 and an output directory.

- Validation: Validate JSON syntax using a JSON linter.

- Execution: Run the command: reinvent run CONFIG.json.

Protocol 3.2: Parameter Sweep for Optimizing Diversity

Objective: Systematically evaluate the impact of the Diversity Filter's minsimilarity and memory_size on scaffold novelty.

Materials: Configured REINVENT run (from Protocol 3.1), computing cluster/scheduler.

Procedure:

- Design of Experiments: Create a matrix of parameters: minsimilarity [0.3, 0.5, 0.7] x memory_size [100, 300, 500]. This yields 9 unique configurations.

- Batch Configuration: Generate 9 configuration files, varying only the target parameters.

- Execution: Launch all 9 runs in parallel with identical random seeds for comparability.

- Analysis: After 200 epochs, analyze the output for each run:

- Calculate the total number of unique Murcko scaffolds generated.

- Plot scaffolds per epoch to assess the rate of novel discovery.

- Compare the average score of the top 100 molecules from each run.

- Selection: Choose the parameter set that best balances high scores with a steady influx of novel scaffolds.
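The design-of-experiments and batch-configuration steps can be scripted. The sketch below assumes the diversity filter sits under a top-level "diversity_filter" key, as in Protocol 3.1. build_sweep, write_sweep, and the file-naming scheme are hypothetical choices made for illustration.

```python
import copy
import itertools
import json
from pathlib import Path


def build_sweep(base_config, minsimilarities, memory_sizes):
    """Return {filename: config} for every combination of the two parameters."""
    configs = {}
    for sim, mem in itertools.product(minsimilarities, memory_sizes):
        cfg = copy.deepcopy(base_config)  # never mutate the base configuration
        cfg["diversity_filter"]["minsimilarity"] = sim
        cfg["diversity_filter"]["memory_size"] = mem
        configs[f"sweep_minsim{sim}_mem{mem}.json"] = cfg
    return configs


def write_sweep(configs, out_dir):
    """Write each configuration to its own JSON file inside out_dir."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    for filename, cfg in configs.items():
        (out / filename).write_text(json.dumps(cfg, indent=2))


base_config = {
    "diversity_filter": {
        "name": "IdenticalMurckoScaffold",
        "memory_size": 200,
        "minsimilarity": 0.5,
    }
}
sweep = build_sweep(base_config, [0.3, 0.5, 0.7], [100, 300, 500])
print(len(sweep), "configurations")
# write_sweep(sweep, "sweep_configs")  # uncomment to create the files
```

Deep-copying the base configuration ensures the nine files differ only in the two swept parameters, which keeps the runs comparable.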

Visualizations

Title: REINVENT 4.0 Core Loop & Parameter Injection

Title: REINVENT Learning & Sampling Architecture

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Digital & Computational Tools for REINVENT 4.0 Configuration

| Item | Function & Relevance in Configuration |

|---|---|

| REINVENT 4.0 | Core open-source platform for molecular generation. Provides the reinvent CLI and API for run execution. |

| Prior Model (Prior.pkl) | A pre-trained RNN on a large chemical database (e.g., ChEMBL). Serves as the baseline probability generator and policy regularizer. |

| Predictive Model(s) (*.pkl) | Pre-trained machine learning models (e.g., scikit-learn, XGBoost) for on-the-fly property prediction (activity, ADMET). Integrated via the scoring function. |

| Configuration JSON File | The central file defining all parameters for sampling, learning, scoring, and logging. Must be syntactically correct. |

| SMARTS Patterns | String representations of molecular substructures for use in CustomAlerts to penalize or reward specific motifs. |

| RDKit | Open-source cheminformatics toolkit. Used internally by REINVENT for SMILES handling, scaffold generation, and descriptor calculation. |

| Job Scheduler (e.g., SLURM) | For deploying parameter sweeps or long runs on high-performance computing clusters. Essential for large-scale optimization. |

| Jupyter Notebook / Python Scripts | For post-analysis of run results, visualizing score progression, and analyzing generated molecule libraries. |

Within the thesis "How to use REINVENT 4 for AI-driven generative molecule design research," Step 4 represents the critical transition from configuration to active computation. This phase executes the generative model to explore chemical space, producing novel molecular structures predicted to meet specified biological and physicochemical criteria. Effective command-line execution and diligent log monitoring are essential for ensuring the run's integrity, capturing results, and enabling real-time troubleshooting.

Command-Line Execution: Protocols & Application Notes

Launching a REINVENT 4 run involves invoking the main script with a configuration JSON file. The process is managed via a terminal session, which can be local or on a high-performance computing (HPC) cluster.

Core Execution Protocol

Key Parameters and Variables

Table 1: Essential Command-Line Execution Parameters

| Parameter/Variable | Description | Typical Value/Example |

|---|---|---|

| Configuration File | Path to the JSON file defining the run (model, scoring, sampling). | reinvent_config.json |

| --run-id | Optional flag to assign a unique identifier to the run. | --run-id=EXP_001 |

| --log-dir | Optional flag to specify a custom directory for log files. | --log-dir=./logs |

| nohup | Command to run process in background, immune to hangup signals. | nohup python reinvent.py ... & |

| Output Redirection | > redirects stdout, 2>&1 redirects stderr to the same file. | > output.log 2>&1 |

| Conda Environment | The Python environment with REINVENT 4 and dependencies installed. | conda activate reinvent_env |

Monitoring Logs: Protocols & Application Notes

REINVENT 4 outputs detailed logs to the console (stdout/stderr), which should be captured to files for monitoring progress, performance, and errors.

Log File Structure and Monitoring Protocol

Protocol: Real-Time Log Monitoring

- Navigate to the log directory: cd [PATH_TO_RUN_DIRECTORY]/log

- Use tail -f on the main log file to follow it in real time.

- Monitor for key phases: Look for log entries signaling:

- Configuration validation.

- Model initialization (e.g., "Loading prior and agent").

- Start of each epoch/step (e.g., "Starting epoch 1").

- Scoring function outputs (e.g., "Running scoring...").

- Agent model updates (e.g., "Updating Agent").

- Generation of structures (SMILES) and their scores.

- Check for errors: Monitor for keywords like ERROR, CRITICAL, Traceback.

- Periodically check summary statistics: Logs report means and standard deviations for scores, including the total composite score.
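The error check above can be scripted for unattended runs. In this minimal sketch, find_problem_lines is a hypothetical helper and the sample lines are invented for illustration. They do not reproduce REINVENT's actual log format.

```python
import re

# Keywords from the monitoring protocol, matched as whole words.
ERROR_PATTERN = re.compile(r"\b(ERROR|CRITICAL|Traceback)\b")


def find_problem_lines(lines):
    """Return (line_number, text) for every line containing an error keyword."""
    return [
        (number, line.rstrip("\n"))
        for number, line in enumerate(lines, start=1)
        if ERROR_PATTERN.search(line)
    ]


# Invented sample lines, used only to demonstrate the function.
sample_log = [
    "INFO Starting epoch 1\n",
    "ERROR CUDA out of memory\n",
    "INFO Updating Agent\n",
    "Traceback (most recent call last):\n",
]
for number, text in find_problem_lines(sample_log):
    print(number, text)

# For a real file: with open("output.log") as handle: find_problem_lines(handle)
```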

Application Note: For long-running jobs, use terminal multiplexers like screen or tmux to persist the monitoring session.

Interpreting Key Log Outputs

Table 2: Critical Log Entries and Their Interpretation

| Log Entry / Metric | Significance | Target/Healthy Indicator |

|---|---|---|

| Starting epoch X | Main iterative loop of generation/learning. | Steady progression through epochs. |

| Sampled molecules: Y | Number of molecules generated per step. | Matches "batch_size" in config. |

| Total score stats | Mean/STD of the composite score for the batch. | Mean score should evolve with learning. |

| Valid SMILES: Z% | Percentage of chemically valid molecules generated. | Should be >95%, ideally >99%. |

| Agent update | Indicates the generative model is being optimized. | Should occur each epoch. |

| Saving model | Checkpoint of the agent model is saved. | Occurs at "save_every_n_epochs" interval. |

| Scoring function duration | Time taken to evaluate molecules. | Varies by complexity; watch for drastic increases. |

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for REINVENT 4 Execution

| Item | Function/Description |

|---|---|

| REINVENT 4 Core Repository | The main codebase containing reinvent.py, modules for models, scoring, and chemistry. |

| Anaconda/Miniconda | Package and environment manager to create an isolated Python environment with specific dependencies. |

| CUDA-enabled GPU Driver | Software that allows the PyTorch library to leverage NVIDIA GPUs for accelerated model training. |

| Configuration JSON File | The "experimental blueprint" defining all run parameters (paths, model architecture, scoring components). |

| Prior Model (.json or .pkl) | The pre-trained generative model that provides the foundation for molecule generation and likelihood calculation. |

| Scoring Component Libraries | External software or libraries (e.g., for docking, RDKit for physicochemical properties) called by the scoring function. |

| Terminal Emulator (e.g., iTerm2, Terminal) | Interface for executing command-line instructions and monitoring processes. |

| Log File Parser (Custom Script) | Optional tool to automatically parse log files, extract performance metrics, and generate progress plots. |

Visualizing the Execution and Monitoring Workflow

Diagram 1: REINVENT 4 launch, run cycle, and monitoring workflow.

This protocol details the systematic analysis of outputs generated by REINVENT 4, a platform for de novo molecular design. Within the broader thesis on AI-driven generative chemistry, this step is critical for validating model performance, assessing the chemical novelty and attractiveness of generated compounds, and guiding iterative model refinement. Proper interpretation of logs, molecular data, and progress plots enables researchers to translate computational outputs into viable candidates for experimental validation.

Key Output Components and Their Analysis

The primary outputs from a REINVENT 4 run consist of: 1) Generated molecular structures (SMILES), 2) Log files detailing the reinforcement learning process, and 3) Progress plots visualizing training dynamics.

Analysis of Generated Molecules

The generated molecules (typically in *.smi files) must be evaluated against multiple criteria. Key metrics should be calculated and compared.

Table 1: Quantitative Metrics for Generated Molecule Analysis

| Metric | Calculation/Tool | Ideal Range | Interpretation |

|---|---|---|---|

| Internal Diversity | Average pairwise Tanimoto similarity (ECFP4) | 0.3 - 0.7 | Lower values may indicate excessive randomness; higher values suggest lack of exploration. |

| QED | Quantitative Estimate of Drug-likeness | 0.6 - 1.0 | Measures drug-likeness based on physicochemical properties. |

| SA Score | Synthetic Accessibility Score (RDKit) | 1 (Easy) - 10 (Hard) | Target < 4.5 for synthetically tractable leads. |

| NP-likeness | Score from an NP-likeness scorer (e.g., the NP-Scorer listed in Table 3) | -5 (Synthetic) to +5 (Natural) | Positive scores indicate natural product-like structures. |

| Rule-of-5 Violations | Lipinski's Rule of Five | ≤ 1 | Flags for potential poor oral bioavailability. |

| Unique Molecules | Percentage of unique isomeric SMILES | ~100% | Indicates the model's ability to generate non-redundant structures. |

| Scoring Function Profile | Mean/Median of agent scores | Context-dependent | Tracks optimization against the desired objective. |

Protocol 1: Profiling a Set of Generated Molecules

- Input Preparation: Load the SMILES from the final epoch/generation (scored_<epoch>.smi).

- Descriptor Calculation:

a. Use RDKit to compute basic properties (MW, LogP, HBD, HBA, TPSA).

b. Calculate QED with RDKit's QED module and SA Score with the sascorer module.

c. Generate ECFP4 fingerprints for diversity analysis.

- Analysis:

a. Plot distributions of key properties (e.g., MW, LogP) against a reference set (e.g., ChEMBL).

b. Calculate the average internal diversity: avg = sum(Tanimoto_sim(i,j)) / N_pairs for a random sample of 1000 molecules.

c. Assess novelty: Remove duplicates in-house and calculate the percentage not found in the training set.
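The averaging formula in step b can be sketched in plain Python. Fingerprints are represented here as sets of on-bit indices, which is an assumption made to keep the example dependency-free. With RDKit, such sets could be obtained from a Morgan (ECFP4-like) bit vector. The function names are hypothetical.

```python
import itertools
import random


def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def average_pairwise_similarity(fingerprints, sample_size=1000, seed=0):
    """Mean Tanimoto similarity over all pairs of a (sub)sample of fingerprints."""
    fps = list(fingerprints)
    if len(fps) > sample_size:
        # Fixed seed so repeated analyses use the same subsample.
        fps = random.Random(seed).sample(fps, sample_size)
    if len(fps) < 2:
        raise ValueError("need at least two fingerprints")
    total = 0.0
    n_pairs = 0
    for a, b in itertools.combinations(fps, 2):
        total += tanimoto(a, b)
        n_pairs += 1
    return total / n_pairs


toy_fps = [frozenset({1, 2, 3}), frozenset({2, 3, 4}), frozenset({1, 2, 3})]
print(average_pairwise_similarity(toy_fps))
```

For the toy input, the three pairwise similarities are 0.5, 1.0, and 0.5, so the mean is 2/3.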

Interpreting Log Files and Progress Plots

Log files (progress.log) and real-time plots provide a temporal view of the reinforcement learning (RL) process.

Table 2: Critical Columns in REINVENT 4 Logs and Progress Plots

| Plot/Log Metric | Description | What to Look For |

|---|---|---|

| Agent Score | The score output by the scoring function for the agent's molecules. | Steady increase or convergence at a high value. High variance may indicate instability. |

| Prior Likelihood | Log-likelihood of molecules under the prior model. | Should remain relatively stable. A sharp drop may indicate agent divergence from chemical space. |

| Augmented Likelihood | Optimization target (prior likelihood + sigma * agent score). | The optimization driver. Should trend similarly to the agent score. |

| Score Components | Breakout of individual scoring function elements. | Identifies which objectives are being optimized/sacrificed. |

| Unique & Valid % | Percentage of valid and unique SMILES generated. | Should remain near 100% (valid) and ideally >80% (unique). |
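For reference, the relationship between the logged quantities can be written out as follows. This uses the formulation published for the original REINVENT method. Whether a given REINVENT 4 learning strategy uses exactly this form is an assumption to verify against its documentation. Here $x$ is a sampled molecule, $S(x) \in [0, 1]$ is the composite score, $\sigma$ is the sigma parameter, and $\theta$ denotes the agent's weights.

```latex
\log P_{\text{aug}}(x) = \log P_{\text{prior}}(x) + \sigma \, S(x)
\qquad
L(\theta) = \left[ \log P_{\text{aug}}(x) - \log P_{\text{agent}}(x; \theta) \right]^{2}
```

Under this form, a larger $\sigma$ lets the score dominate the target, while a smaller $\sigma$ keeps the agent closer to the prior.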

Protocol 2: Diagnostic Workflow from Logs and Plots

- Open the progress.log file (tab-separated) in a data analysis tool (e.g., Pandas, Excel).

- Generate Trend Plots:

a. For epochs 0 to N, plot Agent Score, Prior Likelihood, and Unique % on separate y-axes.

b. Visually identify phases: early exploration, optimization plateau, potential collapse.

- Diagnose Common Issues:

- Mode Collapse (low diversity): Indicated by a sharp rise in Agent Score coupled with a crash in Unique % and stable/high Prior Likelihood. Intervention: Decrease the sigma parameter so that the prior likelihood carries more weight relative to the score.

- Divergence (poor chemistry): Indicated by a sharp drop in Prior Likelihood and Valid %. Intervention: Check scoring function for overly harsh penalties or errors.

- Lack of Learning: Agent Score fluctuates around baseline. Intervention: Check that the scoring function returns informative, non-saturated scores and consider adjusting the learning rate.
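The three diagnostic patterns can be turned into a rough automated check. The sketch below compares the mean of the last few epochs with the mean of the first few. The function name, the window size, and every numeric threshold are hypothetical values chosen for illustration and would need tuning for real runs.

```python
def diagnose(agent_score, prior_likelihood, unique_pct, valid_pct, window=10):
    """Flag the patterns described above from per-epoch metric lists."""

    def change(series):
        # Difference between the mean of the last and first `window` epochs.
        head = series[:window]
        tail = series[-window:]
        return sum(tail) / len(tail) - sum(head) / len(head)

    findings = []
    # Illustrative thresholds only (assumptions, not REINVENT defaults).
    if change(agent_score) > 0.2 and change(unique_pct) < -30:
        findings.append("mode collapse")
    if change(prior_likelihood) < -10 and change(valid_pct) < -10:
        findings.append("divergence")
    if abs(change(agent_score)) < 0.02:
        findings.append("lack of learning")
    return findings


# Synthetic example: score rises while uniqueness crashes.
collapsed = diagnose(
    agent_score=[0.2] * 10 + [0.8] * 10,
    prior_likelihood=[-30.0] * 20,
    unique_pct=[95.0] * 10 + [30.0] * 10,
    valid_pct=[99.0] * 20,
)
print(collapsed)
```

Such a check is a screening aid. The trend plots remain the primary evidence for any intervention.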

The Scientist's Toolkit

Table 3: Essential Research Reagents & Software for Output Analysis

| Item | Function in Analysis | Example/Tool |

|---|---|---|

| RDKit | Core cheminformatics toolkit for descriptor calculation, fingerprinting, and molecule manipulation. | rdkit.Chem.Descriptors, rdkit.Chem.QED |

| Matplotlib/Seaborn | Library for creating static, animated, and interactive visualizations of property distributions and trends. | seaborn.histplot, matplotlib.pyplot.plot |

| Pandas | Data manipulation and analysis library for handling log files and molecular data tables. | pandas.read_csv, DataFrame.groupby |

| Jupyter Notebook | Interactive development environment for prototyping analysis scripts and visualizing results. | - |

| SA Score Calculator | Evaluates the synthetic accessibility of a molecule. | RDKit integration or standalone sascorer.py |

| NP-Scorer | Tool to calculate natural product-likeness score. | https://github.com/mpimp-comas/np-likeness |

| Reference Dataset | A set of known drug-like molecules (e.g., from ChEMBL) for comparative analysis. | ChEMBL SQLite database |

Visualizing the Output Analysis Workflow

Title: Workflow for Interpreting REINVENT 4 Outputs

Effective interpretation of REINVENT 4 outputs is an iterative, multi-faceted process that bridges AI generation and practical drug discovery. By rigorously profiling generated molecules, diagnosing learning dynamics from logs and plots, and synthesizing these analyses, researchers can confidently select promising chemical series for further in silico screening or in vitro testing, thereby closing the loop in AI-driven molecular design.

Solving Common REINVENT 4 Challenges and Tuning for Optimal Molecular Properties

Troubleshooting Installation and Dependency Conflicts

1. Introduction

Within the broader thesis on leveraging REINVENT 4 for AI-driven generative molecule design, a critical preliminary step is establishing a stable, reproducible software environment. This document details common installation and dependency conflicts, provides structured data on resolutions, and outlines protocols for environment management, ensuring researchers can proceed with robust computational experiments.

2. Common Conflict Analysis & Resolution Matrix

The following table summarizes frequent issues based on current community reports and dependency analysis.

Table 1: Common Installation Conflicts and Resolutions for REINVENT 4

| Conflict Symptom | Root Cause | Quantitative Data (Typical Versions) | Recommended Solution |

|---|---|---|---|

| ImportError: libcudart.so.11.0 | CUDA/cuDNN version mismatch with PyTorch. | REINVENT 4 requires CUDA 11.x. PyTorch 1.11.0+cu113 is typical. | Install correct PyTorch: pip install torch==1.11.0+cu113 -f https://download.pytorch.org/whl/torch_stable.html |

| pkg_resources.DistributionNotFound: rdkit | RDKit not installed via conda; pip install fails. | RDKit 2022.09.5 or 2023.03.5 is required. | Install via conda: conda install -c conda-forge rdkit==2022.09.5 |

| Conflict: reinvent-chemistry vs. reinvent-scoring | Incompatible version ranges for shared dependencies (e.g., NumPy). | reinvent-chemistry==0.0.50 may need numpy<1.24. | Create a fresh conda env with Python 3.9, install NumPy 1.23.3 first, then REINVENT. |

| ValueError: invalid __spec__ | Path mismatch or incompatible Python version. | REINVENT 4 is validated for Python 3.7-3.9. | Use Python 3.9.19. Ensure sys.path does not contain stale package directories. |

| RuntimeError: Expected all tensors on same device | Model weights loaded to CPU but data on GPU (or vice versa). | Common with custom model loading scripts. | Explicitly set device: agent.load_state_dict(torch.load(path, map_location=torch.device('cuda'))) |

3. Experimental Protocols for Environment Setup

Protocol 3.1: Creation of a Conflict-Free Conda Environment

- Objective: To establish an isolated Python environment with compatible dependencies for REINVENT 4.

- Materials: Computer with Miniconda/Anaconda installed, internet connection.

- Procedure:

- Open a terminal (Linux/Mac) or Anaconda Prompt (Windows).

- Create a new environment with Python 3.9: conda create -n reinvent4_env python=3.9.19 -y

- Activate the environment: conda activate reinvent4_env

- Install critical numerical libraries with pinned versions: pip install numpy==1.23.3

- Install RDKit via conda-forge: conda install -c conda-forge rdkit==2022.09.5

- Install the correct PyTorch build for your CUDA version (e.g., for CUDA 11.3): pip install torch==1.11.0+cu113 torchvision==0.12.0+cu113 torchaudio==0.11.0 --extra-index-url https://download.pytorch.org/whl/cu113

- Finally, install REINVENT 4 core packages: pip install reinvent-chemistry==0.0.50 reinvent-scoring==0.0.50 reinvent-models==0.0.41

- Validation: Run python -c "import rdkit; import torch; import reinvent_chemistry as rc; print('All imports successful')"
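Beyond checking that imports succeed, it can help to confirm that the installed versions match the pins used in the procedure. The sketch below uses the standard-library importlib.metadata. compare_pins is a hypothetical helper, and it assumes each package registers distribution metadata under the name shown (which may not hold for every conda-installed package).

```python
from importlib import metadata

# Pins copied from the procedure above.
PINS = {"numpy": "1.23.3", "rdkit": "2022.09.5", "torch": "1.11.0+cu113"}


def compare_pins(pins, get_version=metadata.version):
    """Return {distribution: (wanted, found, matches)}; found is None if absent."""
    report = {}
    for dist, wanted in pins.items():
        try:
            found = get_version(dist)
        except metadata.PackageNotFoundError:
            found = None
        report[dist] = (wanted, found, found == wanted)
    return report


for dist, (wanted, found, ok) in compare_pins(PINS).items():
    status = "OK" if ok else "MISMATCH"
    print(f"{dist}: wanted {wanted}, found {found} [{status}]")
```

The get_version argument exists only so the lookup can be replaced in tests. In normal use the default is sufficient.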

Protocol 3.2: Dependency Conflict Resolution via Dependency Tree Analysis

- Objective: To diagnose and resolve deep dependency version clashes.

- Materials: Activated reinvent4_env, pipdeptree tool.

- Procedure:

- Install the tree visualization tool:

pip install pipdeptree

- Generate a full dependency tree:

pipdeptree > dependencies.txt

- Examine dependencies.txt for lines containing Requires, !!, or Conflict. These indicate version mismatches.

- For each conflict (e.g., PackageA requires numpy>=1.24, but you have numpy==1.23.3), determine the upstream package causing the requirement.

- Attempt to upgrade/downgrade the upstream package to a version compatible with the common dependency version. If impossible, consider using the --use-deprecated=legacy-resolver flag with pip install as a last resort.

- Validation: Re-run pipdeptree to confirm conflicts are resolved.
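When several upstream packages disagree about one shared dependency, it helps to group the conflict messages by that dependency. The sketch below does this for lines shaped like "PackageA 1.0 has requirement numpy>=1.24, but you have numpy 1.23.3." This is the shape typically printed by `pip check`, a pip subcommand not used in the protocol above. The regular expression is an assumption about that format, and the function name `group_conflicts` is illustrative.

```python
import re

# Assumed line format: "<pkg> <ver> has requirement <spec>, but you have <dep> <ver>."
CONFLICT = re.compile(
    r"^(?P<pkg>\S+) (?P<pkg_ver>\S+) has requirement (?P<req>.+?), "
    r"but you have (?P<dep>\S+) (?P<dep_ver>\S+?)\.?$"
)


def group_conflicts(lines):
    """Group conflict lines by the dependency that is in dispute.

    Returns {dependency: [(upstream_package, requirement, installed_version)]}.
    Lines that do not match the assumed format are ignored.
    """
    grouped = {}
    for line in lines:
        match = CONFLICT.match(line.strip())
        if match is None:
            continue
        entry = (match["pkg"], match["req"], match["dep_ver"])
        grouped.setdefault(match["dep"], []).append(entry)
    return grouped


if __name__ == "__main__":
    sample = [
        "packagea 1.0 has requirement numpy>=1.24, but you have numpy 1.23.3.",
        "packageb 2.1 has requirement numpy<2,>=1.25, but you have numpy 1.23.3.",
    ]
    for dep, entries in group_conflicts(sample).items():
        print(dep, entries)
```

A dependency that collects several entries is usually the one whose pin needs revisiting first. The input can be any iterable of text lines, for example the captured output of a conflict check saved to a file.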

4. Visualization of Troubleshooting Workflow

Diagram Title: REINVENT 4 Installation Troubleshooting Decision Tree

5. The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Software "Reagents" for REINVENT 4 Environment Management

| Item | Function & Purpose | Typical Specification / Version |

|---|---|---|

| Conda/Mamba | Creates isolated software environments to prevent cross-project dependency conflicts. | Miniconda 23.10.0 or Mamba 1.5.1. |

| PyTorch (CUDA) | Deep learning framework optimized for GPU acceleration; core to REINVENT's neural networks. | PyTorch 1.11.0 built for CUDA 11.3 (cu113). |

| RDKit | Open-source cheminformatics toolkit essential for molecular representation and operations. | RDKit 2022.09.5 (installed via conda-forge). |

| NumPy | Foundational package for numerical computations in Python; version pinning is critical. | NumPy 1.23.3 (compatible with core stack). |

| pipdeptree | Diagnostic tool to visualize the installed dependency tree and identify version conflicts. | pipdeptree 2.13.0. |

| Docker | Containerization platform for creating reproducible, system-agnostic execution environments. | Docker Engine 24.0+ (alternative to conda). |

| NVIDIA Container Toolkit | Enables Docker containers to access host GPU resources for CUDA acceleration. | Version 1.14.1+ (if using Docker). |

Debugging Configuration File Errors and Input File Path Issues

Application Notes

Within AI-driven generative molecule design using REINVENT 4, configuration files (JSON) dictate all parameters for the generative model, reinforcement learning (RL) strategy, and scoring components. Input file paths specify the location of starting molecules, prior models, and validation sets. Errors in these areas are primary failure points, halting pipelines and consuming significant researcher time. Systematic debugging is essential for maintaining research velocity.

Table 1: Common Configuration Errors & Quantitative Impact on Runtime

| Error Category | Specific Error Example | Average Debug Time (Researcher Hours) | Pipeline Failure Rate | Required Fix |

|---|---|---|---|---|

| JSON Syntax | Missing comma, trailing comma, incorrect bracket | 0.5 - 1.5 | 100% | Validate JSON with linter. |

| Parameter Value | "sigma": 800 (vs. typical 120) | 2.0 - 5.0 | 100% | Cross-check with protocol defaults. |

| Path Specification | Relative path ("./data/smiles.csv") when absolute required | 1.0 - 3.0 | 100% | Use absolute paths or verify working directory. |

| File Format | SMILES file with incorrect delimiter or header | 3.0 - 6.0 | ~85% | Validate input file structure with parser script. |

| Missing Key | Omission of "reinforcement_learning" section | 0.5 - 1.0 | 100% | Compare with template configuration. |

Table 2: Input File Path Issues and Their Detection

| Issue Type | Detection Method | Resolution Protocol Success Rate | Automated Check Available |

|---|---|---|---|

| Path Does Not Exist | File I/O exception at initialization | 100% | Yes (pre-launch script) |

| Insufficient Permissions | Permission denied error | 100% | Yes (pre-launch script) |

| Incorrect File Format | Parser error during read | 95% | Yes (format validator) |

| Path with Spaces (Unix/Linux) | String parsing error | 100% | Yes (path sanitizer) |

| Symbolic Link Broken | File not found error | 100% | Yes (link resolver) |
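The automated checks named in the table above can be approximated with a few standard-library calls. The following is a minimal sketch of such a pre-launch check. The function name `diagnose_path` and the wording of the returned messages are illustrative and are not part of REINVENT 4.

```python
import os


def diagnose_path(path):
    """Return a list of human-readable issues found for one input path."""
    issues = []
    if os.path.islink(path) and not os.path.exists(path):
        # The link itself exists but its target does not.
        issues.append("broken symbolic link")
    elif not os.path.exists(path):
        issues.append("path does not exist")
    elif not os.access(path, os.R_OK):
        issues.append("insufficient read permissions")
    if " " in path:
        issues.append("path contains spaces (quote it in shell commands)")
    if not os.path.isabs(path):
        issues.append(f"relative path; resolves to {os.path.abspath(path)}")
    return issues


if __name__ == "__main__":
    for candidate in ["./data/smiles.csv", "/tmp/my inputs/prior.ckpt"]:
        print(candidate, "->", diagnose_path(candidate) or "OK")
```

Reporting the resolved absolute path for relative inputs makes a wrong working directory visible immediately, which is the usual cause of the "relative path" failures listed in Table 1.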

Experimental Protocols

Protocol 1: Pre-Execution Configuration Validation

Objective: To catch JSON and path errors before initiating a costly REINVENT 4 run.

- JSON Schema Validation:

- Obtain the latest REINVENT 4 JSON schema from the official repository.

- Use a validator (e.g., the jsonschema Python package). Execute: python -m jsonschema -i config.json schema.json.

- If errors are output, correct the config.json file iteratively.

- Path Existence and Permissions Check:

- Write a Python script to parse the config.json file.

- Extract all string values that end with key file extensions (e.g., .csv, .json, .ckpt, .smi).

- For each extracted path, use os.path.exists() and os.access(path, os.R_OK) to verify existence and read permissions.

- Log all missing or unreadable files.

- Input File Sanity Check:

- For SMILES files, use RDKit (from rdkit import Chem) to attempt to read the first 10-100 lines. Calculate the percentage of successfully parsed molecules. Acceptable thresholds are >95% for most runs.
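The three checks in Protocol 1 can be sketched in one short script. The version below reports JSON syntax errors with their line and column, walks the parsed configuration to collect path-like strings, and computes a SMILES parse rate. All function names are illustrative, the configuration keys in the example are hypothetical, and the SMILES parser is passed in as an argument so that the block itself does not depend on RDKit.

```python
import json

# File extensions named in the protocol above.
PATH_SUFFIXES = (".csv", ".json", ".ckpt", ".smi")


def load_config(text):
    """Parse JSON text; on failure raise ValueError with line and column."""
    try:
        return json.loads(text)
    except json.JSONDecodeError as err:
        raise ValueError(
            f"JSON syntax error at line {err.lineno}, column {err.colno}: {err.msg}"
        ) from err


def collect_paths(node, suffixes=PATH_SUFFIXES):
    """Recursively gather string values that end with a known file extension."""
    if isinstance(node, dict):
        children = node.values()
    elif isinstance(node, list):
        children = node
    elif isinstance(node, str) and node.lower().endswith(suffixes):
        return [node]
    else:
        return []
    found = []
    for child in children:
        found.extend(collect_paths(child, suffixes))
    return found


def parse_rate(smiles_list, parser):
    """Fraction of SMILES for which `parser` returns something other than None."""
    if not smiles_list:
        return 0.0
    parsed = sum(1 for smiles in smiles_list if parser(smiles) is not None)
    return parsed / len(smiles_list)


if __name__ == "__main__":
    # Hypothetical configuration fragment, for illustration only.
    config = load_config('{"prior": "/models/prior.ckpt", "inputs": ["seeds.smi"]}')
    print(collect_paths(config))
```

With RDKit installed, `parse_rate(lines, Chem.MolFromSmiles)` gives the success fraction to compare against the 95% threshold, since `Chem.MolFromSmiles` returns `None` for strings it cannot parse.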

Protocol 2: Debugging a Failed Run Due to Input Error

Objective: To diagnose and resolve errors from a REINVENT 4 run that has terminated unexpectedly.

- Locate and Inspect Log Files:

- Navigate to the run's output directory. The main log is typically reinvent.log.

- Open the log file and search for critical keywords: "ERROR", "Traceback", "FileNotFound", "Permission denied".

- Isolate the Error Context:

- Identify the module and function where the error occurred (e.g., "reinvent.chemistry.file_reader").

- Note the exact error message and the file path involved.

- Reproduce the Error in a Minimal Test:

- Create a small Python script that isolates the operation from the error context (e.g., attempting to read the specified SMILES file with the same library function).

- This confirms the root cause independent of the full REINVENT pipeline.

- Implement and Test the Fix:

- Apply the correction (e.g., fix file path, reformat input data).

- Re-run the minimal test to confirm successful operation.

- Optionally, run REINVENT 4 on a single iteration or short run to validate the full pipeline.
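The log inspection step of Protocol 2 can be automated with a small scanner. The sketch below searches for the keywords listed above and returns each hit with a few lines of surrounding context. The function name `scan_log` and the default context width are illustrative choices. The matching is case-sensitive, which is an assumption about how the log is written.

```python
# Keywords taken from the protocol above.
KEYWORDS = ("ERROR", "Traceback", "FileNotFound", "Permission denied")


def scan_log(lines, keywords=KEYWORDS, context=2):
    """Find log lines containing any keyword.

    Returns a list of (line_number, keyword, context_lines) tuples, where
    line_number is 1-based and context_lines includes up to `context`
    lines before and after the hit.
    """
    lines = [line.rstrip("\n") for line in lines]
    hits = []
    for index, line in enumerate(lines):
        for keyword in keywords:
            if keyword in line:
                start = max(0, index - context)
                end = min(len(lines), index + context + 1)
                hits.append((index + 1, keyword, lines[start:end]))
                break  # report each line once, under the first keyword found
    return hits


if __name__ == "__main__":
    demo = [
        "INFO loading prior",
        "ERROR could not open input file",
        "Traceback (most recent call last):",
    ]
    for number, keyword, snippet in scan_log(demo, context=1):
        print(number, keyword, snippet)
```

For a real run, an open file handle can be passed directly, for example `scan_log(open("reinvent.log"))`, since the function accepts any iterable of lines.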

Diagrams

Title: REINVENT 4 Error Debugging Workflow

Title: Configuration and Inputs in REINVENT 4 System

The Scientist's Toolkit: Research Reagent Solutions

| Item | Category | Function in Debugging |

|---|---|---|

| JSON Linter (e.g., jsonlint) | Software Tool | Validates syntax of configuration files, catching missing commas, brackets. |

| JSON Schema Validator (jsonschema Python pkg) | Software Tool | Ensures configuration structure and parameter values adhere to REINVENT 4's required format. |

| Path Sanitizer Script | Custom Script | Converts relative paths to absolute, checks existence/permissions, and handles OS-specific formatting (e.g., spaces). |

| SMILES Validator (RDKit) | Chemistry Library | Parses input molecular files to verify format correctness and chemical validity before run initiation. |

| Structured Log Parser (e.g., grep/awk scripts) | Software Tool | Quickly filters large log files (reinvent.log) to find critical ERROR or Traceback messages. |

| Minimal Reproducible Test Environment | Methodology | Isolates the error condition in a small script, allowing rapid iteration on fixes without full pipeline costs. |

| Template Configuration Repository | Research Data | Provides a set of known-working config files for different experiment types (e.g., de novo design, scaffold hopping). |

This application note details the practical implementation and optimization of multi-objective scoring functions within the REINVENT 4 platform for de novo molecular design. We provide protocols for integrating and balancing predictive models for biological activity, ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties, and synthesizability into a unified scoring strategy to guide generative AI toward producing viable drug candidates.

REINVENT 4 is an open-source platform for AI-driven generative molecular design. Its core principle involves using a scoring function to bias the generation of a Recurrent Neural Network (RNN) toward molecules with desired properties. A key research challenge is constructing a single scoring function that effectively balances often competing objectives, such as high target activity, favorable ADMET profiles, and ease of synthesis. This document provides a framework for building, testing, and deploying such composite scoring functions.

Key Components of a Multi-Objective Scoring Function

The composite score (S_total) is typically a weighted sum or a more complex transformation of individual component scores.
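To make the aggregation concrete, the sketch below computes S_total in two ways: as a normalized weighted sum, and as a weighted geometric mean, shown here as one example of a "more complex transformation". Both functions are generic illustrations written for this guide and are not taken from the REINVENT 4 code base. Component scores are assumed to be already normalized to the 0-1 range.

```python
import math


def weighted_sum(scores, weights):
    """Weighted arithmetic mean: sum(w_i * s_i) / sum(w_i)."""
    total_weight = sum(weights)
    return sum(w * s for w, s in zip(weights, scores)) / total_weight


def weighted_geometric_mean(scores, weights):
    """Weighted geometric mean: exp(sum(w_i * ln s_i) / sum(w_i)).

    Any component score of zero drives the total to zero, so a single
    failed objective cannot be compensated by the others.
    """
    if any(s <= 0 for s in scores):
        return 0.0
    total_weight = sum(weights)
    log_sum = sum(w * math.log(s) for w, s in zip(weights, scores))
    return math.exp(log_sum / total_weight)


if __name__ == "__main__":
    # Hypothetical component scores: activity, hepatotoxicity, synthesizability.
    scores = [0.8, 0.4, 0.6]
    weights = [0.5, 0.3, 0.2]
    print(weighted_sum(scores, weights))
    print(weighted_geometric_mean(scores, weights))
```

The practical difference is in how failures are treated: the arithmetic form lets a strong activity score mask a poor toxicity score, while the geometric form penalizes any weak component more heavily.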

Table 1: Common Scoring Components and Implementation Models

| Objective | Typical Metrics/Models | Output Range | Common Weight Range | Notes |

|---|---|---|---|---|

| Primary Activity | pIC50, pKi, ΔG (kcal/mol) from QSAR, Docking, or AlphaFold 3 | 0-1 (normalized) | 0.3 - 0.5 | High weight, but requires careful validation. |

| Selectivity | Ratio or difference in activity against off-targets. | 0-1 | 0.1 - 0.2 | Critical for reducing toxicity. |

| Lipinski's Rule of 5 | Binary (Pass/Fail) or continuous score. | 0 or 1 | 0.05 - 0.1 | Often used as a filter or penalty term. |

| Predicted Solubility (LogS) | Regression model (e.g., from AqSolDB). | Continuous | 0.05 - 0.15 | Aim for > -4 log mol/L. |

| Predicted Hepatotoxicity | Classification model (e.g., from DeepTox). | 0 (toxic) - 1 (safe) | 0.1 - 0.2 | High-impact penalty for failure. |

| Predicted CYP Inhibition | Probability of 2C9, 2D6, 3A4 inhibition. | 0-1 per isoform | 0.05 - 0.1 each | Often summed or max penalty applied. |

| Synthetic Accessibility (SA) | SAscore (1-easy to 10-hard), RAscore. | 1-10 (inverted & normalized) | 0.1 - 0.25 | Encourages practical chemistry. |

| Retrosynthetic Complexity | SCScore or AiZynthFinder feasibility. | 1-5 (inverted & normalized) | 0.05 - 0.15 | Estimates synthetic steps/effort. |

Protocol: Building a Balanced Scoring Function in REINVENT 4

Protocol 3.1: Component Model Preparation & Integration

Objective: To configure individual scoring components as "filters" or "scorers" within REINVENT's configuration JSON.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Model Containerization: Package each predictive model (e.g., a trained scikit-learn QSAR model for activity, or a command-line call to a solubility predictor) into a Docker/Singularity container. The container must accept a SMILES string as input and return a numeric score.

- Define in Configuration: In the REINVENT config.json, define each component under the "scoring" section.

- Transformation: Apply appropriate transforms (e.g., sigmoid, reverse sigmoid, step function) to each raw model output to normalize scores to a comparable 0-1 scale, where 1 is ideal.
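The transforms named in the last step can be illustrated with plain Python. The functions below are a generic sketch of a sigmoid, a reverse sigmoid, and a step function that map raw model outputs onto a 0-1 scale. The parameter names (`low`, `high`, `k`) and the exact functional form are assumptions chosen for illustration and may differ from the transforms shipped with REINVENT 4.

```python
import math


def sigmoid(x, low, high, k=1.0):
    """Rise smoothly from about 0 near `low` to about 1 near `high`.

    Use when larger raw values are better (e.g. predicted pIC50).
    """
    midpoint = (low + high) / 2.0
    slope = 10.0 * k / (high - low)
    # Clamp the exponent so extreme inputs cannot overflow math.exp.
    z = max(-60.0, min(60.0, slope * (x - midpoint)))
    return 1.0 / (1.0 + math.exp(-z))


def reverse_sigmoid(x, low, high, k=1.0):
    """Mirror image of `sigmoid`; use when smaller raw values are better
    (e.g. SAscore, where 1 is easy and 10 is hard)."""
    return 1.0 - sigmoid(x, low, high, k)


def step(x, low, high):
    """Return 1.0 inside the closed interval [low, high], otherwise 0.0."""
    return 1.0 if low <= x <= high else 0.0


if __name__ == "__main__":
    # Hypothetical raw values and ranges, for illustration only.
    print(sigmoid(7.2, low=5.0, high=8.0))          # predicted pIC50
    print(reverse_sigmoid(3.1, low=1.0, high=10.0)) # SAscore
    print(step(350.0, low=200.0, high=500.0))       # molecular weight window
```

Choosing `low` and `high` from the range of values seen in a reference set keeps each component sensitive across the region where candidate molecules actually fall.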

Protocol 3.2: Pareto Front Optimization for Weight Tuning

Objective: To empirically determine the optimal set of weights for scoring components that maximizes the Pareto front of candidate molecules.

Materials: REINVENT 4, a validation set of 100-200 diverse molecules with known experimental data for key objectives.

Procedure:

- Design of Experiment: Define a grid or use a random sampler to explore weight combinations for 3-4 primary objectives (e.g., Activity, SAscore, LogP). Constrain total weight sum to 1.0.

- Parallel Runs: Execute multiple short REINVENT sampling runs (e.g., 1000 steps) for each weight set.

- Evaluation: For the top 50 molecules from each run, calculate the predicted values for all key objectives.

- Analysis: Plot the results in 2D/3D objective space (e.g., Predicted Activity vs. SAscore). The weight set that generates a population of molecules spanning the largest non-dominated frontier (Pareto front) is preferred.
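Two pieces of this protocol lend themselves to a short sketch: enumerating weight combinations that sum to 1.0, and extracting the non-dominated set from a list of objective vectors. The code below is a minimal illustration with hypothetical function names. It assumes every objective has already been oriented so that higher is better (for example, an inverted and normalized SAscore).

```python
from itertools import product


def weight_grid(n_objectives, step=0.25):
    """Enumerate all weight tuples on a regular grid that sum to 1.0."""
    levels = round(1.0 / step)
    grid = []
    # Work in integer units of `step` so the sum constraint is exact.
    for combo in product(range(levels + 1), repeat=n_objectives):
        if sum(combo) == levels:
            grid.append(tuple(units / levels for units in combo))
    return grid


def dominates(a, b):
    """True if `a` is at least as good as `b` on every objective and
    strictly better on at least one (all objectives maximized)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(points):
    """Return the points that no other point dominates, in input order."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


if __name__ == "__main__":
    print(len(weight_grid(3, step=0.25)), "weight sets to screen")
    # Hypothetical (activity, synthesizability) scores for four molecules.
    molecules = [(0.9, 0.2), (0.5, 0.5), (0.4, 0.4), (0.2, 0.9)]
    print(pareto_front(molecules))
```

The grid includes weight sets in which an objective receives zero weight. These can be filtered out if every objective must contribute, at the cost of a smaller screen. The pairwise comparison in `pareto_front` is quadratic in the number of points, which is acceptable for the top 50 molecules per run considered here.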

Table 2: Example Pareto Weight Screening Results