Navigating the Vastness: Key Challenges and Modern Solutions in High-Dimensional Chemical Space Exploration for Drug Discovery

Abstract

This article provides a comprehensive analysis of the fundamental, methodological, and practical challenges in exploring the astronomically large and complex high-dimensional chemical space for drug discovery. Targeted at researchers, scientists, and drug development professionals, it covers the foundational concepts defining this space, modern computational and AI-driven exploration methods, critical strategies for troubleshooting and optimizing searches, and rigorous approaches for validating and benchmarking results. The synthesis offers a roadmap to navigate this 'chemical universe' more effectively, with direct implications for accelerating the identification of novel therapeutic candidates and optimizing lead compounds.

Defining the Vastness: Understanding the Scale and Fundamental Challenges of High-Dimensional Chemical Space

The exploration of chemical space, the total ensemble of all possible molecules, represents one of the most formidable challenges in modern science. The concept of a "Chemical Universe" quantifies this vastness, with estimates ranging from 10⁶⁰ to 10²⁰⁰ organic molecules depending on the size limits and chemical rules assumed (see Table 1). This near-infinite expanse exists in a multidimensional domain defined by molecular descriptors, properties, and structural features. The primary thesis framing contemporary research is that efficient navigation, sampling, and exploitation of this high-dimensional space are fundamentally limited by combinatorial explosion, computational intractability, and experimental validation bottlenecks. This whitepaper details the scale, the methodologies for exploration, and the toolkit required for frontier research in this field.

Quantifying the Vastness: The Scale of Chemical Space

The following table summarizes key quantitative estimates of chemical space, highlighting the sources of combinatorial complexity.

Table 1: Estimated Scales of Chemical Space

| Space Definition | Estimated Size | Basis of Calculation | Key Reference/Concept |
|---|---|---|---|
| All Possible Organic Molecules | >10⁶⁰ (up to 10²⁰⁰) | Based on combinatorial assembly of atoms (C, H, O, N, S, etc.) following chemical rules (e.g., up to 30 atoms). | Bohacek et al. (1996); Angewandte Chemie reviews. |
| Drug-like (Lipinski-compliant) Molecules | ~10⁶³ | Molecules with MW ≤500, HBD ≤5, HBA ≤10, LogP ≤5. | Fink et al. (2005); GDB-17 database (166 billion molecules). |
| Known (Synthesized or Purchasable) Molecules | ~10⁸ (in known databases) | Compounds reported in literature or commercially available (e.g., CAS Registry: >200 million). | PubChem, ChEMBL, ZINC databases. |
| Chemical Space for DNA-Encoded Libraries (DELs) | 10⁸ – 10¹² | Practical experimental library sizes using combinatorial split-and-pool synthesis. | Recent DEL screening campaigns (2020-2024). |
| Virtual Screening Libraries | 10⁹ – 10¹⁵ | Commercially available and enumerable virtual compounds for docking. | Enamine REAL Space (38+ billion), WuXi GalaXi. |
| Biologically Relevant Chemical Space | Unknown but tiny fraction | The subset of chemical space that interacts with any biological target. | Estimated <<0.1% of all drug-like space. |

Core Challenges in High-Dimensional Exploration

The exploration of this space is governed by the "curse of dimensionality," where volume grows exponentially with dimensions. Key challenges include:

- Representation: Choosing optimal molecular descriptors (fingerprints, SMILES, SELFIES, 3D coordinates, quantum properties).

- Navigation: Developing algorithms (e.g., Bayesian optimization, genetic algorithms, diffusion models) to traverse space efficiently towards optimal properties.

- Synthesis Planning: Bridging the gap between virtual molecules and synthetically accessible compounds (retrosynthesis prediction).

- Validation: The ultimate requirement for experimental testing of predicted molecules, creating a costly feedback loop.

Methodologies for Exploration: Experimental & Computational Protocols

Protocol: DNA-Encoded Library (DEL) Synthesis and Screening

This experimental high-throughput method samples chemical space combinatorially.

Detailed Protocol:

- Library Design: Define chemical building blocks (BBs) for 2-3 synthetic cycles (~100-5000 BBs per cycle).

- Split-and-Pool Synthesis:

- Cycle 1: Start with DNA headpieces. Split them into separate reaction vessels. In each vessel, couple a unique BB and ligate a corresponding DNA tag encoding its identity.

- Pool: Combine all reactions into a single vessel.

- Cycle 2-n: Split the pool again into new vessels. Couple the next BB and its DNA tag.

- Repeat for desired cycles, creating a library where each molecule is conjugated to a unique DNA barcode recording its synthetic history.

- Affinity Selection: Incubate the pooled DEL with an immobilized protein target.

- Washing: Remove non-binding and weakly binding compounds.

- Elution & PCR: Elute bound compounds, amplify the DNA barcodes via PCR.

- Sequencing & Analysis: Perform high-throughput sequencing (NGS) of barcodes. Enriched barcodes identify hit structures for off-DNA resynthesis and validation.
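The combinatorial bookkeeping behind split-and-pool can be sketched in a few lines of Python. This is a toy model, not a synthesis protocol. The building-block names, the 4-nt tags, and the helper names (`CYCLES`, `library_size`, `enumerate_library`, `decode`) are hypothetical. Real DELs use far more building blocks and longer, error-tolerant tag designs.

```python
from itertools import product
from math import prod

# Hypothetical building blocks (BBs) per cycle, each mapped to a short DNA tag.
# Real DELs use ~100-5000 BBs per cycle and longer, error-tolerant tags.
CYCLES = [
    {"A1": "ACGT", "A2": "TGCA"},                # cycle 1
    {"B1": "GGAA", "B2": "CCTT", "B3": "ATAT"},  # cycle 2
    {"C1": "CAGT", "C2": "GTCA"},                # cycle 3
]
TAG_LEN = 4


def library_size(cycles):
    """Split-and-pool yields every combination: product of BB counts per cycle."""
    return prod(len(c) for c in cycles)


def enumerate_library(cycles):
    """Yield (BB tuple, barcode). The barcode concatenates one tag per cycle,
    recording the synthetic history in the order the cycles were run."""
    for combo in product(*(c.items() for c in cycles)):
        yield tuple(bb for bb, _ in combo), "".join(tag for _, tag in combo)


def decode(barcode, cycles, tag_len=TAG_LEN):
    """Map a sequenced barcode back to its building blocks (fixed-length tags)."""
    lookups = [{tag: bb for bb, tag in c.items()} for c in cycles]
    return tuple(
        lookups[i][barcode[i * tag_len:(i + 1) * tag_len]] for i in range(len(cycles))
    )
```

With three cycles of 1,000 building blocks each, `library_size` returns 10⁹ members. That falls inside the 10⁸–10¹² DEL range listed in Table 1. Enriched barcodes from sequencing would be passed through `decode` to recover hit structures.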

Protocol: Active Learning-Driven Virtual Screening (VS) Cycle

A computational protocol to optimize exploration.

Detailed Protocol:

- Initial Library: Start with a diverse subset of a virtual library (10³-10⁵ compounds).

- Initial Scoring: Use a fast, approximate scoring function (e.g., 2D similarity, docking) to rank the initial library.

- Batch Selection: Select a top batch (e.g., 50-100 compounds) for more expensive evaluation (e.g., free-energy perturbation, MD simulation, or experimental assay).

- Model Training: Use the results (experimental/predicted activity) to train a machine learning model (e.g., Random Forest, Graph Neural Network) that predicts activity from molecular features.

- Iteration: Use the trained model to score the remaining unexplored library. Select the next batch using an acquisition function (e.g., expected improvement, upper confidence bound) that balances exploitation (high predicted score) and exploration (high model uncertainty).

- Loop: Repeat steps 3-5 until a performance threshold is met or resources are exhausted.
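The loop above can be sketched with NumPy alone. Several stand-ins are used, and all of them are hypothetical illustrations:

- Synthetic descriptor vectors take the place of the library.
- A noisy function takes the place of the expensive evaluation.
- A bootstrap ensemble of ridge regressors replaces the Random Forest or GNN. Its prediction spread serves as the uncertainty term in an upper-confidence-bound (UCB) acquisition.

The initial batch is drawn at random rather than ranked by a fast scoring function.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical stand-ins: a pool of random "descriptor" vectors and a hidden
# objective that plays the role of the expensive evaluation (FEP, MD, assay).
N_POOL, N_FEAT = 5000, 20
X_POOL = rng.normal(size=(N_POOL, N_FEAT))
TRUE_W = rng.normal(size=N_FEAT)


def expensive_oracle(X):
    """Placeholder for the costly step: a noisy, mildly nonlinear score."""
    return X @ TRUE_W + 0.5 * np.sin(3 * X[:, 0]) + rng.normal(scale=0.1, size=len(X))


def fit_ensemble(X, y, n_models=10, alpha=1.0):
    """Bootstrap ensemble of ridge regressors (intercept column appended)."""
    A = np.hstack([X, np.ones((len(X), 1))])
    weights = []
    for _ in range(n_models):
        idx = rng.integers(0, len(X), size=len(X))  # bootstrap resample
        Ab, yb = A[idx], y[idx]
        w = np.linalg.solve(Ab.T @ Ab + alpha * np.eye(A.shape[1]), Ab.T @ yb)
        weights.append(w)
    return np.array(weights)  # shape (n_models, n_features + 1)


def predict(weights, X):
    """Ensemble mean (exploitation) and spread (uncertainty) per compound."""
    A = np.hstack([X, np.ones((len(X), 1))])
    preds = A @ weights.T  # shape (n_compounds, n_models)
    return preds.mean(axis=1), preds.std(axis=1)


def active_learning(n_init=100, batch=50, n_rounds=5, kappa=2.0):
    """Select-evaluate-retrain loop; returns evaluated indices and their scores."""
    labeled = rng.choice(N_POOL, size=n_init, replace=False)  # random initial subset
    y = expensive_oracle(X_POOL[labeled])
    for _ in range(n_rounds):
        weights = fit_ensemble(X_POOL[labeled], y)
        unlabeled = np.setdiff1d(np.arange(N_POOL), labeled)
        mean, std = predict(weights, X_POOL[unlabeled])
        ucb = mean + kappa * std  # upper confidence bound acquisition
        pick = unlabeled[np.argsort(-ucb)[:batch]]
        y = np.concatenate([y, expensive_oracle(X_POOL[pick])])
        labeled = np.concatenate([labeled, pick])
    return labeled, y
```

The parameter `kappa` sets the exploitation/exploration balance described in the Iteration step. With `kappa = 0`, compounds are ranked purely by predicted score. Larger values favor compounds on which the ensemble members disagree.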

Diagram 1: Active Learning Cycle for Virtual Screening

Visualization of a Representative Workflow

Diagram 2: Chemical Space Exploration from Design to Validation

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Materials for Chemical Space Exploration

| Item / Solution | Category | Primary Function in Exploration |
|---|---|---|
| DNA-Encoded Library (DEL) Kits | Chemical Biology | Provides pre-functionalized DNA headpieces, tagged building blocks, and enzymes for split-and-pool synthesis and PCR amplification of barcodes. |
| Diverse Building Block Sets | Synthetic Chemistry | Curated collections of commercially available, synthetically tractable molecules (amines, carboxylic acids, boronic acids, etc.) for combinatorial library construction. |
| Virtual Compound Libraries | Cheminformatics | Large, searchable databases of enumerated, often synthetically accessible molecules (e.g., Enamine REAL, Mcule, Molport) for virtual screening. |
| High-Throughput Screening (HTS) Assay Kits | Biology | Standardized biochemical or cell-based assay kits (e.g., kinase activity, GPCR signaling) for rapid experimental validation of compound activity. |
| Cloud Computing Credits | Computation | Access to scalable high-performance computing (HPC) or GPU clusters for running large-scale virtual screens, molecular dynamics, or ML model training. |
| Automated Synthesis Platforms | Robotics | Solid-phase synthesizers or flow chemistry reactors that automate the synthesis of predicted compounds. |
| Cheminformatics Software Suites | Software | Platforms like RDKit, Schrödinger Suite, OpenEye toolkits for molecular fingerprinting, descriptor calculation, and similarity searching. |
| Next-Generation Sequencer | Genomics | Essential for decoding DNA barcodes in DEL selections to identify enriched compounds. |
| Analytical HPLC-MS Systems | Analytical Chemistry | For purification and critical quality control (purity, identity) of synthesized candidate molecules post-virtual screen or DEL hit confirmation. |

Within the broader challenge of exploring high-dimensional chemical space, the fundamental task of molecular representation is paramount. The vastness and complexity of chemical space, estimated to contain >10⁶⁰ synthetically accessible compounds, necessitate efficient, information-rich numerical encodings of molecules. This guide details the three primary paradigms that serve as the foundational dimensions for computational chemistry, virtual screening, and quantitative structure-activity relationship (QSAR) modeling: Molecular Descriptors, Molecular Fingerprints, and Property Vectors. Their selection and application directly influence the success and interpretability of research grappling with the "curse of dimensionality" in chemical data analysis.

Core Concepts & Quantitative Comparison

Molecular Descriptors

Descriptors are numerical values derived from a molecule's symbolic representation, quantifying physico-chemical properties, topological features, or geometric attributes. They are typically interpretable and aligned with chemical intuition.

Common Types:

- 0D/1D (Constitutional): Molecular weight, atom count, bond count, logP.

- 2D (Topological): Based on molecular graph theory (e.g., connectivity indices, Wiener index).

- 3D (Geometric): Require a 3D conformation (e.g., moment of inertia, 3D polar surface area, radial distribution functions).
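The Wiener index listed above is a concrete 2D (topological) descriptor. It is the sum of shortest-path bond counts over all pairs of heavy atoms. The sketch below takes a hand-written, hydrogen-suppressed adjacency list as input. In practice, a cheminformatics toolkit would derive the graph from a SMILES string. The function name is illustrative.

```python
from collections import deque


def wiener_index(adjacency):
    """Wiener index: sum of topological (bond-count) distances over all atom pairs.

    `adjacency` maps each heavy-atom index to the indices it is bonded to
    (hydrogen-suppressed molecular graph). Only connected pairs are counted.
    """
    total = 0
    for source in adjacency:
        dist = {source: 0}
        queue = deque([source])
        while queue:  # breadth-first search gives shortest paths in unweighted graphs
            atom = queue.popleft()
            for nbr in adjacency[atom]:
                if nbr not in dist:
                    dist[nbr] = dist[atom] + 1
                    queue.append(nbr)
        total += sum(dist.values())
    return total // 2  # each unordered pair was counted from both ends
```

Branching lowers the index. The carbon skeleton of n-butane gives 10, while isobutane gives 9. This is the kind of shape information that topological descriptors encode without any 3D coordinates.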

Molecular Fingerprints

Fingerprints are binary or integer vectors representing the presence or count of specific substructural patterns within a molecule. They are designed for high-speed similarity searching and machine learning.

Common Types:

- Substructure Key-based (e.g., MACCS Keys): A fixed-length binary vector where each bit indicates the presence of a predefined chemical substructure.

- Circular (e.g., ECFP, Morgan): Iteratively generated from each atom's local environment, capturing radial substructures. They are hashed to a fixed length.

- Path-based (e.g., RDKit Fingerprint): Enumerates all linear paths of bonds up to a given length within the molecule.
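Whatever scheme sets the bits, similarity searching on binary fingerprints usually reduces to bit counting. The sketch below stores a fingerprint as a Python integer used as a bitset and computes the Tanimoto coefficient. The on-bit indices are hypothetical; a toolkit such as RDKit would supply them from a structure. Returning 1.0 for two empty fingerprints is a convention chosen here, not a standard.

```python
def fp_from_bits(on_bits, n_bits=1024):
    """Pack on-bit indices into a Python int used as a fixed-length bit vector."""
    fp = 0
    for b in on_bits:
        if not 0 <= b < n_bits:
            raise ValueError(f"bit {b} outside 0..{n_bits - 1}")
        fp |= 1 << b
    return fp


def tanimoto(fp_a, fp_b):
    """|A AND B| / |A OR B|; two empty fingerprints return 1.0 by convention."""
    union = bin(fp_a | fp_b).count("1")
    if union == 0:
        return 1.0
    return bin(fp_a & fp_b).count("1") / union


def rank_by_similarity(query, database):
    """Return (similarity, name) pairs sorted from most to least similar."""
    return sorted(((tanimoto(query, fp), name) for name, fp in database.items()), reverse=True)
```

For example, fingerprints with on-bits {1, 5, 9, 200} and {1, 5, 300} share 2 of 5 distinct bits, giving a Tanimoto similarity of 0.4.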

Property Vectors

Property vectors are collections of experimentally measured or accurately computed physico-chemical properties (e.g., pKa, solubility, boiling point). They provide a direct, often lower-dimensional mapping to real-world behavior but can be costly to obtain at scale.

Table 1: Comparative Analysis of Representation Types

| Dimension Type | Typical Vector Length | Interpretability | Computation Speed | Data Dependency | Primary Use Case |
|---|---|---|---|---|---|
| 2D Descriptors | 200 - 5000+ | High | Fast | Low (2D structure only) | QSAR, Interpretable ML |
| 3D Descriptors | 500 - 3000+ | Medium | Slow (requires conformers) | Medium | 3D-QSAR, Pharmacophore modeling |
| MACCS Keys | 166 bits | Medium | Very Fast | Low | Rapid similarity screening |
| ECFP4 | 1024 - 2048 bits | Low (hashed) | Fast | Low | Activity prediction, similarity search |
| Property Vectors | 10 - 100 | Very High | Very Slow (for measurement) | High (experimental data) | Solubility/ADMET prediction |

Table 2: Common Software Libraries & Toolkits (2024)

| Library/Tool | Primary Language | Key Strengths | Descriptor Support | Fingerprint Support |
|---|---|---|---|---|
| RDKit | Python, C++ | Comprehensive, Open-source | Extensive (~200 standard descriptors plus 3D descriptor families) | ECFP/Morgan, Atom Pairs, MACCS, RDKit FP |
| PaDEL-Descriptor | Java, CLI | Standalone, 1875+ descriptors | Very Extensive | 12 fingerprint types |
| Open Babel | C++, CLI | Format conversion, Cheminformatics | Good | Basic fingerprints |
| CDK | Java | Open-source, Toolkit for Java | Extensive | Extended, Hybridization fingerprints |
| Mordred | Python | Massive descriptor set (1800+) | Very extensive (>1800) | Limited |

Experimental Protocols for Benchmarking Representations

The choice of molecular representation significantly impacts model performance in predictive tasks. The following protocol outlines a standard benchmarking experiment.

Protocol 1: Benchmarking Representations for a QSAR Classification Task

- Objective: Evaluate the predictive performance of different molecular representations on a binary activity classification dataset.

- Dataset: Use a curated public dataset (e.g., from ChEMBL) with >1000 compounds and a clear activity cutoff. Apply rigorous data curation: remove duplicates, standardize structures, check for activity cliffs.

- Representation Generation:

- Descriptors: Calculate using RDKit or Mordred. Handle missing values (impute or remove descriptors). Apply standardization (scale to zero mean, unit variance).

- Fingerprints: Generate Morgan fingerprints (radius=2, 1024 bits; the RDKit counterpart of ECFP4) and MACCS keys using RDKit.

- Property Vectors: Use a limited set of computed properties (e.g., AlogP, molecular weight, H-bond donors/acceptors, rotatable bonds).

- Model Training & Validation:

- Split data into 80% training and 20% test set using stratified sampling.

- Train a Random Forest classifier (100 trees) on the training set for each representation type. Use 5-fold cross-validation on the training set for hyperparameter tuning.

- Apply the trained model to the held-out test set.

- Evaluation Metrics: Record AUC-ROC, Balanced Accuracy, Precision, and Recall for the test set. Perform statistical significance testing (e.g., McNemar's test) on model predictions.
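McNemar's test compares two classifiers scored on the same test set. It uses only the cases where exactly one of the two models is correct. Below is a standard-library sketch using the chi-square approximation with the Edwards continuity correction. When the number of discordant cases is small (a common rule of thumb is under 25), an exact binomial test is often preferred instead. The function name is illustrative.

```python
import math


def mcnemar(y_true, pred_a, pred_b, continuity=True):
    """McNemar's test for two classifiers evaluated on the same test set.

    b = cases model A gets right and model B gets wrong; c = the reverse.
    Returns (chi-square statistic, two-sided p-value) using the chi-square
    approximation with 1 degree of freedom.
    """
    if not len(y_true) == len(pred_a) == len(pred_b):
        raise ValueError("inputs must have equal length")
    b = sum(1 for t, a, p in zip(y_true, pred_a, pred_b) if a == t and p != t)
    c = sum(1 for t, a, p in zip(y_true, pred_a, pred_b) if a != t and p == t)
    if b + c == 0:
        return 0.0, 1.0  # the models never disagree on correctness
    diff = max(abs(b - c) - 1, 0) if continuity else abs(b - c)  # Edwards correction
    chi2 = diff ** 2 / (b + c)
    p_value = math.erfc(math.sqrt(chi2 / 2))  # P(chi2_1 > x) = erfc(sqrt(x / 2))
    return chi2, p_value
```

As a usage example, suppose model A is right on all 100 test compounds and model B is wrong on 20 of them. Then b = 20 and c = 0, giving a statistic of 19²/20 = 18.05 and p < 0.001.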

Protocol 2: Generating a Conformer-Dependent 3D Descriptor Vector

- Objective: Create a 3D property-encoded surface (3D-PES) descriptor for a set of molecules.

- Software Required: RDKit (conformer generation), Open3DALIGN or in-house scripts for alignment, Python for calculation.

- Steps:

- Conformer Generation: For each input SMILES, generate an ensemble of low-energy conformers (e.g., 50) using ETKDGv3 method in RDKit. Minimize energy using MMFF94 force field.

- Conformer Selection: Select the lowest-energy conformer or a representative centroid conformer from the ensemble.

- Molecular Alignment: Align all selected conformers to a common reference framework using the Kabsch algorithm to ensure spatial consistency.

- Grid Calculation: Embed the molecule in a 3D grid (e.g., 1Å resolution).

- Descriptor Calculation: At each grid point, compute steric, electrostatic, and hydrophobic potential fields using probe atoms. Flatten the 3D grid into a 1D descriptor vector.
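The alignment step can be sketched with NumPy using the standard Kabsch procedure: remove the centroids, take the SVD of the covariance matrix, and correct for reflection. This is an illustrative sketch, not Open3DALIGN's method. It assumes a known one-to-one atom correspondence between the two conformers, for example a shared scaffold. Aligning unrelated molecules would first require a matching step, which is not shown; neither are the grid and field calculations.

```python
import numpy as np


def kabsch_align(mobile, reference):
    """Superimpose `mobile` onto `reference` (both N x 3, same atom order).

    Returns the aligned coordinates and the RMSD after optimal rotation
    and translation.
    """
    mobile = np.asarray(mobile, dtype=float)
    reference = np.asarray(reference, dtype=float)
    mu_m, mu_r = mobile.mean(axis=0), reference.mean(axis=0)
    P, Q = mobile - mu_m, reference - mu_r      # centre both point sets
    H = P.T @ Q                                 # 3x3 covariance matrix
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))      # -1 would indicate a reflection
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T     # optimal proper rotation
    aligned = P @ R.T + mu_r
    rmsd = float(np.sqrt(((aligned - reference) ** 2).sum(axis=1).mean()))
    return aligned, rmsd
```

Applying a known rotation and translation to a conformer and then calling `kabsch_align` should recover the original coordinates with an RMSD near zero. This is a convenient sanity check before aligning a whole set.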

Visualizing Workflows and Relationships

Diagram 1: Molecular Representation Generation Workflow

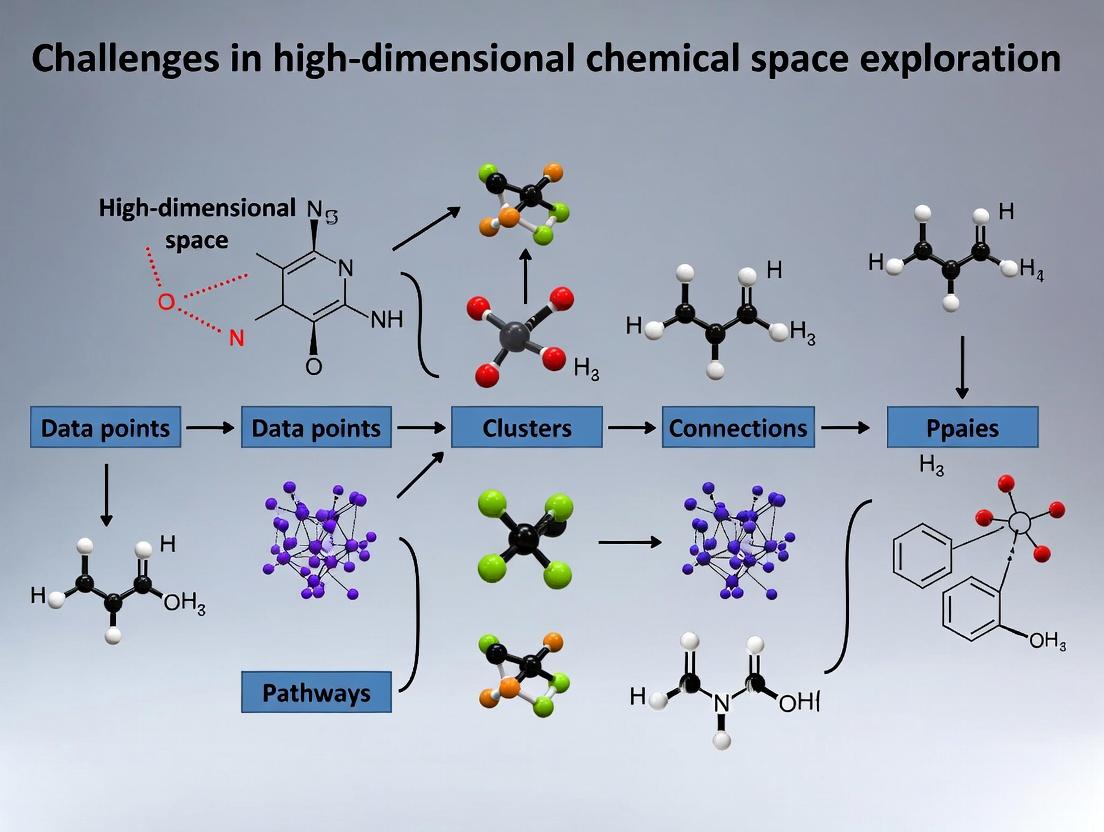

Diagram 2: Challenges in High-Dimensional Chemical Space

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Computational Tools

| Item (Tool/Resource) | Function/Explanation | Provider/License |
|---|---|---|
| RDKit | Open-source cheminformatics toolkit for descriptor calculation, fingerprint generation, and molecule manipulation. | Open-Source (BSD) |
| KNIME Analytics Platform | Visual workflow environment with integrated cheminformatics nodes (RDKit, CDK) for building analysis pipelines. | Free & Commercial |
| Python (scikit-learn) | Core library for implementing machine learning models and validation frameworks on chemical vector data. | Open-Source (BSD) |
| DeepChem | Python library specifically designed for deep learning on chemical data, supporting multiple representations. | Open-Source (MIT) |
| DataWarrior | Standalone program for interactive analysis, visualization, and descriptor calculation for chemical datasets. | Open-Source (GPL) |
| Jupyter Notebook | Interactive computational environment essential for exploratory data analysis and prototyping models. | Open-Source (BSD) |
| ChEMBL Database | Manually curated database of bioactive molecules with properties, providing high-quality training/test data. | EMBL-EBI (Open) |
| ZINC20 Database | Free database of commercially available compounds (230+ million) for virtual screening, often with precomputed properties. | UCSF (Open) |

Within the context of high-dimensional chemical space exploration, the central paradox lies in the astronomical size of theoretically accessible molecular space (estimated at 10^60-10^100 compounds) versus the extreme sparseness of regions with desirable biological activity, bioavailability, and safety profiles. This whitepaper examines the quantitative dimensions of this paradox, outlines methodologies for its navigation, and presents a toolkit for researchers.

The Quantitative Scale of the Paradox

The Vastness: Size of Chemical Space

Chemical space refers to the total ensemble of all possible organic molecules under consideration. Its size is a function of the number of atoms, permissible elements, and structural constraints.

Table 1: Estimated Sizes of Chemical Space Subsets

| Chemical Space Subset | Estimated Size | Description & Relevance |
|---|---|---|
| Drug-like (Rule of 5 compliant) | ~10^60 molecules | Molecules with MW ≤ 500, LogP ≤ 5, etc. |
| Synthetically Accessible (e.g., from commercial building blocks) | ~10^9 - 10^14 molecules | Focus of most virtual libraries and DELs. |
| PubChem Compounds (Actual) | ~114 million (as of 2024) | Experimentally realized molecules. |
| Approved Drugs | ~2,000 small molecules | The ultimate sparse, relevant region. |

The Sparsity: Metrics of Biological Relevance

Sparsity is defined by the fraction of molecules that modulate a specific biological target with adequate potency and selectivity.

Table 2: Hit Rate Metrics Across Discovery Platforms

| Exploration Platform | Typical Hit Rate | Target Class Dependency |
|---|---|---|
| High-Throughput Screening (HTS) | 0.001% - 0.3% | Enzyme > GPCR > PPIs |
| DNA-Encoded Libraries (DEL) | 0.001% - 0.1% (in library) | Highly dependent on library design. |
| Virtual Screening (VS) | 0.01% - 5% (of screened) | Varies widely with method & target. |
| Fragment-Based Screening | 2% - 20% (binders) | High rates for binding, low affinity. |

Methodologies for Navigating the Paradox

Experimental Protocol: Triage via Hierarchical Screening

A standard protocol to efficiently filter vast libraries towards sparse hits.

Protocol: Integrated HTS/Virtual Screening Cascade

- Primary In Silico Filtering:

- Method: Apply drug-like filters (e.g., Lipinski's Rule of 5, PAINS removal, synthetic tractability score) to a virtual library of 10^8 compounds.

- Tools: RDKit, KNIME, Pipeline Pilot.

- Output: Reduced set of 1-2 million compounds for docking.

- Structure-Based Virtual Screening:

- Method: Perform molecular docking (Glide, GOLD, AutoDock Vina) of filtered library against a prepared protein target structure (PDB).

- Scoring: Use consensus scoring (ChemPLP, GoldScore, ASP) to rank poses.

- Output: Top 50,000 - 100,000 ranked compounds.

- Pharmacophore Modeling & Clustering:

- Method: Generate pharmacophore model from docking hits or known actives. Cluster remaining compounds by scaffold.

- Tools: Phase (Schrödinger), MOE.

- Output: 2,000 - 5,000 diverse compounds for purchase/synthesis.

- Experimental HTS Confirmation:

- Assay: Biochemical assay (e.g., fluorescence polarization, TR-FRET) at 10 µM single concentration.

- Criteria: >50% inhibition/activation for primary hits.

- Output: 50-200 confirmed hits (roughly a 1-10% hit rate on the 2,000-5,000 purchased or synthesized compounds).
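The property-based part of the first in silico filter can be sketched in plain Python. The `Props` records and field names below are hypothetical; in practice the properties would be computed by a toolkit such as RDKit. PAINS and synthetic-tractability filters are not shown. Lipinski's original formulation flags likely poor absorption only when more than one criterion is violated, so this sketch tolerates one violation by default.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Props:
    """Hypothetical precomputed properties for one library member."""
    name: str
    mw: float    # molecular weight, Da
    logp: float  # calculated logP
    hbd: int     # hydrogen-bond donors
    hba: int     # hydrogen-bond acceptors


RO5_LIMITS = {"mw": 500.0, "logp": 5.0, "hbd": 5, "hba": 10}


def ro5_violations(p):
    """Count how many Rule-of-5 limits a compound exceeds."""
    return sum(getattr(p, key) > limit for key, limit in RO5_LIMITS.items())


def ro5_filter(records, max_violations=1):
    """Keep compounds with at most `max_violations` Rule-of-5 violations."""
    return [r for r in records if ro5_violations(r) <= max_violations]
```

Setting `max_violations=0` gives the stricter reading used by many library vendors. At the 10^8-compound scale of this cascade, the same logic would run as a vectorized or streaming job rather than over Python lists.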

Experimental Protocol: Focused Library Design from Fragment Hits

A protocol to expand sparse, low-affinity fragments into lead-like compounds.

Protocol: Fragment-Based Lead Discovery (FBLD) Expansion

- Fragment Screening via SPR/Biophysics:

- Method: Screen a 1,000-5,000 fragment library (MW < 300) using Surface Plasmon Resonance (Biacore) or NMR.

- Threshold: Identify binders with K_D < 1 mM, ligand efficiency (LE) > 0.3 kcal/mol/HA.

- Co-structure Determination:

- Method: Soak fragment into protein crystals or use Cryo-EM for complexes. Solve structure to 2.0-2.5 Å resolution.

- Structure-Based Design & Analog Synthesis:

- Method: Use structural data to design analogs growing into adjacent sub-pockets. Synthesize focused library of 100-500 analogs.

- Iterative Screening & Optimization:

- Method: Test analogs in dose-response (IC50/K_D). Determine new co-structures. Iterate design cycles until potency (nM) and LE are optimized.
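The ligand efficiency (LE) threshold used in this protocol is simple arithmetic on the binding free energy. ΔG = RT ln K_D, with K_D in mol/L and a 1 M standard state, and LE = -ΔG / N_heavy in kcal/mol per heavy atom. A minimal sketch, assuming 298.15 K by default; the function names are illustrative.

```python
import math

R_KCAL = 1.987204e-3  # gas constant, kcal/(mol*K)


def binding_free_energy(kd_molar, temp_k=298.15):
    """Delta G = RT ln(K_D) in kcal/mol (negative for K_D < 1 M)."""
    return R_KCAL * temp_k * math.log(kd_molar)


def ligand_efficiency(kd_molar, heavy_atoms, temp_k=298.15):
    """LE = -Delta G / N_heavy, in kcal/mol per heavy atom."""
    if kd_molar <= 0 or heavy_atoms <= 0:
        raise ValueError("K_D and heavy-atom count must be positive")
    return -binding_free_energy(kd_molar, temp_k) / heavy_atoms
```

A 1 mM fragment with 12 heavy atoms gives LE ≈ 0.34, which clears the 0.3 threshold. A 1 nM lead keeps LE at or above 0.3 only up to about 40 heavy atoms. This is why potency gains during growing must outpace the added size.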

Visualizing the Exploration Workflow

Title: Navigating from Vast Space to Sparse Drug

A Key Signaling Pathway in Oncology Target Space

Title: PI3K-AKT-mTOR Pathway & Drug Target Context

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Tools for Chemical Space Exploration

| Item | Function & Role in Paradox Navigation | Example Product/Category |
|---|---|---|
| Fragment Libraries | Low molecular weight (MW < 300) compounds for efficient sampling of chemical space; high hit rate for binding. | Maybridge RO3 Fragment Library, Enamine Fragments. |
| DNA-Encoded Libraries (DELs) | Combinatorial libraries where each compound is tagged with a unique DNA barcode, enabling screening of 10^9+ compounds in a single tube. | X-Chem, DyNAbind libraries; Vipergen technology. |
| Kinase Inhibitor Chemotypes | Focused sets of scaffolds known to bind kinase ATP pockets, navigating to sparse, selective regions. | Selleckchem Kinase Inhibitor Set, published "hinge-binder" scaffolds. |
| Cryo-EM Services | For determining structures of target-hit complexes where crystallization fails, critical for sparse hit optimization. | Thermo Fisher Glacios, Titan Krios microscopes; service providers. |
| AlphaFold2 Protein DB | High-accuracy predicted protein structures for targets without experimental structures, expanding virtual screening scope. | AlphaFold Protein Structure Database (EMBL-EBI). |
| Activity Cliff Matrices | Paired compound data showing large potency changes from small structural changes, mapping relevance boundaries. | ChEMBL activity data; curated via KNIME/RDKit. |
| ADMET Prediction Suites | In silico tools to predict absorption, toxicity, etc., filtering vast virtual sets for sparse "drug-like" space. | Schrödinger QikProp, Simulations Plus ADMET Predictor. |

In the pursuit of novel therapeutics, researchers explore the vast, high-dimensional chemical space, estimated to contain >10⁶⁰ synthetically accessible organic molecules. This exploration is fundamentally governed by the curse of dimensionality, a phenomenon where geometric and statistical intuitions from low-dimensional spaces catastrophically break down. This whitepaper examines how this curse distorts distance metrics—the bedrock of similarity searching, clustering, and machine learning in drug discovery—framed within the critical challenge of navigating high-dimensional chemical spaces for hit identification and lead optimization.

The Geometric Breakdown of Intuition

Volume Concentration and Data Sparsity

In high dimensions, the volume of a hypercube concentrates overwhelmingly in its corners, while the volume of an inscribed hypersphere becomes negligible. This leads to extreme data sparsity, where any finite dataset becomes a collection of isolated points.

Table 1: Fraction of Hypercube Volume Contained in an Inscribed Hypersphere

| Dimensionality (d) | Radius of Inscribed Sphere (unit cube) | Fraction of Cube's Volume in Sphere |
|---|---|---|
| 2 | 0.5 | ~0.785 |
| 5 | 0.5 | ~0.164 |
| 10 | 0.5 | ~0.0025 |
| 20 | 0.5 | ~2.5e-8 |
| 100 | 0.5 | ~1.9e-70 |

Data derived from analytic formula: V_sphere / V_cube = (π^(d/2) / (2^d Γ(1 + d/2)))
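The formula can be evaluated directly. Computing it in log space with `math.lgamma` avoids overflow of π^(d/2) and Γ(1 + d/2) at large d. A minimal sketch; the function name is illustrative.

```python
import math


def sphere_to_cube_volume_ratio(d):
    """Fraction of the unit hypercube [0,1]^d occupied by its inscribed d-ball (r = 1/2).

    ratio = pi^(d/2) / (2^d * Gamma(1 + d/2)), evaluated in log space for stability.
    """
    log_ratio = (d / 2) * math.log(math.pi) - d * math.log(2) - math.lgamma(1 + d / 2)
    return math.exp(log_ratio)


for d in (2, 5, 10, 20, 100):
    print(d, f"{sphere_to_cube_volume_ratio(d):.3g}")
```

Formatted to three significant figures, the loop prints 0.785, 0.164, 0.00249, 2.46e-08 and 1.87e-70. These match the table above.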

Breakdown of Nearest-Neighbor Search

The utility of similarity search, fundamental to virtual screening, diminishes as the distance to the nearest neighbor (NN) and the distance to the farthest neighbor (FN) converge.

Table 2: Relative Contrast in Distances with Increasing Dimensionality

| d | E[Distance to NN] / E[Distance to FN] (Synthetic Gaussian Data) | Implication for Similarity Search |
|---|---|---|
| 2 | ~0.32 | Clear discrimination between near and far |
| 10 | ~0.70 | Reduced discriminative power |
| 50 | ~0.95 | NN and FN are nearly indistinguishable |
| 500 | ~0.998 | Search becomes essentially meaningless |

Experimental Protocol for Table 2 Data:

- Data Generation: For each dimensionality d in {2, 10, 50, 500}, generate a dataset X of 10,000 points sampled from a d-dimensional standard Gaussian distribution (mean=0, variance=1).

- Query Point: Sample a single query point q from the same distribution.

- Distance Calculation: Compute the Euclidean distance from q to every point in X.

- Statistics Extraction: Find the minimum distance (to NN) and maximum distance (to FN).

- Ratio Computation: Repeat steps 2-4 for 100 random query points, average the minimum and maximum distances over the queries, and take the ratio E[min dist] / E[max dist].

- Tools: Implementation can be performed in Python using `numpy` for array operations and `scipy.spatial.distance.cdist`.
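A NumPy-only sketch of this protocol follows. It uses `numpy.linalg.norm` instead of `scipy.spatial.distance.cdist` to limit dependencies. The absolute ratios depend strongly on the dataset size: larger n yields a closer nearest neighbor. They also depend on the random queries, so they may not reproduce the tabulated values. The robust feature is the rise of the ratio toward 1 as d grows.

```python
import numpy as np


def nn_fn_ratio(d, n_points=10_000, n_queries=100, seed=0):
    """E[distance to nearest neighbour] / E[distance to farthest neighbour].

    Data points and query points are drawn from a d-dimensional standard Gaussian.
    """
    rng = np.random.default_rng(seed)
    X = rng.standard_normal((n_points, d))
    nn, fn = [], []
    for _ in range(n_queries):
        q = rng.standard_normal(d)
        dist = np.linalg.norm(X - q, axis=1)  # Euclidean distance to every point
        nn.append(dist.min())
        fn.append(dist.max())
    return float(np.mean(nn) / np.mean(fn))


if __name__ == "__main__":
    for d in (2, 10, 50, 500):
        print(d, round(nn_fn_ratio(d), 3))
```

At d = 500 with 10,000 points, each query creates a temporary array of about 40 MB. Chunking the data or using a GPU library becomes necessary well before library scale.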

Title: Experimental Protocol for Distance Ratio Analysis

Quantitative Analysis of Distance Metric Behavior

Mean, Variance, and Concentration Theorems

For i.i.d. feature vectors, the squared Euclidean distance between points becomes concentrated around its mean with vanishing relative variance.

Table 3: Statistics of Pairwise Euclidean Distances (Unit Cube [0,1]^d)

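| d | Mean Distance (μ) | Standard Deviation (σ) | Coefficient of Variation (σ/μ) |
|---|---|---|---|
| 1 | 0.333 | 0.236 | 0.707 |
| 10 | ≈1.27 | ≈0.24 | ≈0.19 |
| 50 | ≈2.88 | ≈0.24 | ≈0.084 |
| 200 | ≈5.77 | ≈0.24 | ≈0.042 |

These values follow from E[(xᵢ - yᵢ)²] = 1/6 per coordinate. The mean distance grows as μ ≈ √(d/6), while σ levels off near √(7/120) ≈ 0.24. As a result, σ/μ falls roughly as 1/√d.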

Experimental Protocol for Table 3:

- Data Generation: For each d, sample 1,000 points uniformly from the d-dimensional unit hypercube.

- Pairwise Distance Matrix: Compute the full pairwise Euclidean distance matrix for the 1,000 points (excluding self-distances). This yields ~500,000 distance samples.

- Statistical Computation: Calculate the sample mean (μ), sample standard deviation (σ), and the coefficient of variation (σ/μ) of these distances.

- Tools: Use efficient vectorized computation (e.g., `scipy.spatial.distance.pdist`).
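A NumPy-only sketch of this protocol follows. It computes squared distances through the Gram identity |x - y|² = |x|² + |y|² - 2x·y instead of `pdist`, which keeps the dependencies minimal. Memory therefore grows as O(n²). Tiny negative values from floating-point round-off are clipped before the square root.

```python
import numpy as np


def pairwise_distance_stats(d, n_points=1000, seed=0):
    """Mean, standard deviation and CV of all pairwise Euclidean distances in [0,1]^d."""
    rng = np.random.default_rng(seed)
    X = rng.random((n_points, d))                 # uniform samples in the unit hypercube
    sq = (X ** 2).sum(axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T
    iu = np.triu_indices(n_points, k=1)           # unique pairs, self-distances excluded
    dist = np.sqrt(np.clip(d2[iu], 0.0, None))    # clip round-off below zero
    mu, sigma = dist.mean(), dist.std(ddof=1)
    return float(mu), float(sigma), float(sigma / mu)
```

With 1,000 points, each call analyses 499,500 distances. Running it over d = 1, 10, 50, 200 shows the mean growing roughly as √(d/6) while σ stays near 0.24.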

Comparative Performance of Distance Metrics

Not all metrics degrade identically. Lᵖ distances with smaller p, such as Manhattan (p = 1) and fractional distances (p < 1), often retain better contrast than the Euclidean distance.

Table 4: Discriminative Power of Metrics in High-Dimensions

| Metric (Lᵖ) | p | Expression | Relative Contrast (d=100)* | Suitability for Chemical Descriptors |
|---|---|---|---|---|
| Euclidean | 2 | √(Σᵢ (xᵢ - yᵢ)²) | 1.00 (Baseline) | Standard, but suffers concentration |
| Manhattan | 1 | Σᵢ \|xᵢ - yᵢ\| | 1.27 | More robust to noise, less concentrated |
| Fractional | 0.5 | (Σᵢ √\|xᵢ - yᵢ\|)² | 2.15 | Higher contrast, non-convex |
| Cosine | N/A | 1 - (x·y)/(‖x‖‖y‖) | Varies | Effective for normalized, sparse vectors (e.g., fingerprints) |

*Relative Contrast is defined as (Std Dev of Distances) / (Mean Distance), the coefficient of variation, normalized to the Euclidean baseline; higher values mean more contrast. Derived from synthetic data with i.i.d. non-negative features (e.g., molecular descriptor counts).
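The ordering of metrics can be checked with a short NumPy experiment. This sketch assumes i.i.d. uniform features on [0, 1), which is one possible choice of non-negative features. It measures contrast as the coefficient of variation (std/mean) of distances between independent random pairs, normalized to the Euclidean value. Because the exact ratios depend on the feature distribution, this setup gives roughly 1.2 for p = 1 and 1.4 for p = 0.5, not the tabulated 1.27 and 2.15. The ranking p = 0.5 > p = 1 > p = 2 is what the sketch illustrates.

```python
import numpy as np


def lp_distance(X, Y, p):
    """Row-wise (sum |x - y|^p)^(1/p); for p < 1 this is not a true metric."""
    return (np.abs(X - Y) ** p).sum(axis=1) ** (1.0 / p)


def relative_contrast(d=100, n_pairs=20_000, ps=(2.0, 1.0, 0.5), seed=0):
    """CV (std/mean) of L^p distances between random pairs, normalized to L2."""
    rng = np.random.default_rng(seed)
    X = rng.random((n_pairs, d))  # assumed i.i.d. non-negative features in [0, 1)
    Y = rng.random((n_pairs, d))
    cv = {}
    for p in ps:
        dist = lp_distance(X, Y, p)
        cv[p] = dist.std() / dist.mean()
    base = cv[2.0]  # Euclidean baseline (2.0 must be in ps)
    return {p: v / base for p, v in cv.items()}
```

Swapping in count-like or skewed features is a quick way to see how strongly the numbers, though usually not the ranking, depend on the descriptor distribution.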

Implications for Key Drug Discovery Tasks

Virtual Screening and Similarity Searching

Traditional similarity searching using 2D fingerprints (e.g., 1024-bit ECFP4) operates in a ~1000-dimensional Hamming space. The curse implies that similarity scores between random molecules concentrate in a narrow band. The gap between genuine near neighbors and the background therefore shrinks, reducing the signal-to-noise ratio.

Clustering and Diversity Analysis

Clustering algorithms like k-means assume compact, well-separated clusters. In high dimensions, distance concentration means the separation between cluster centers needed for reliable recovery grows with d, so many putative clusters may be artifacts.

Machine Learning Model Generalization

Models trained on high-dimensional descriptors (e.g., the >5000 descriptors available in Dragon) are prone to overfitting because of data sparsity and irrelevant features. This necessitates aggressive dimensionality reduction or regularization.
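A common first step is to drop near-constant descriptors and one member of each highly correlated pair before modeling. Variance thresholds also appear in Table 5. The greedy scan order, the function name, and the 0.95 correlation cut-off below are illustrative assumptions, not a prescribed protocol.

```python
import numpy as np


def prune_descriptors(X, var_tol=1e-8, corr_max=0.95):
    """Return indices of descriptor columns kept after simple redundancy filtering.

    Greedy scan: a column is kept if its variance exceeds `var_tol` and its
    absolute Pearson correlation with every previously kept column is <= `corr_max`.
    """
    X = np.asarray(X, dtype=float)
    keep = []
    for j in range(X.shape[1]):
        col = X[:, j]
        if col.var() <= var_tol:
            continue  # near-constant descriptor carries no information
        if keep:
            block = np.column_stack([X[:, keep], col])
            r = np.corrcoef(block, rowvar=False)[-1, :-1]  # correlations with kept columns
            if np.any(np.abs(r) > corr_max):
                continue  # redundant with an already kept descriptor
        keep.append(j)
    return keep
```

Because the scan is greedy, the result depends on column order. Ranking columns by relevance first, for example by mutual information with the activity, is one way to make the retained set more meaningful.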

Title: Impact and Mitigation of the Curse in Drug Discovery

The Scientist's Toolkit: Research Reagent Solutions

Table 5: Essential Computational Tools for High-Dimensional Chemical Analysis

| Tool / Reagent | Function / Purpose | Key Consideration for High-Dimensions |
|---|---|---|
| ECFP4 / FCFP4 Fingerprints (1024-2048 bit) | Sparse binary vectors representing molecular substructures. | High dimensionality (≈2¹⁰²⁴ possible points) but sparse; cosine/Tanimoto effective. |
| MOE / Dragon Descriptors (1500-5000 cont. vars) | Comprehensive physicochemical & topological descriptors. | Dense, correlated; requires rigorous feature selection (e.g., variance threshold, mutual information). |
| UMAP (Uniform Manifold Approximation and Projection) | Non-linear dimensionality reduction for visualization. | Often preserves global structure better than t-SNE; can also serve as a preprocessing step before ML. |
| PCA (Principal Component Analysis) | Linear dimensionality reduction to orthogonal components. | Retains variance but may lose non-linear structure; determine # components via scree plot. |
| Random Forest / XGBoost with Feature Importance | ML models with built-in feature ranking. | Provides regularization and identifies key dimensions driving activity. |
| Tanimoto (Jaccard) Coefficient | Similarity metric for binary fingerprints: T = \|A ∩ B\| / \|A ∪ B\|. | Standard for fingerprints; less prone to complete concentration than Euclidean on binary data. |
| Scikit-learn `NearestNeighbors` (metric='cosine') | Efficient nearest-neighbor search implementation. | Use for normalized descriptor sets; more stable in high-d. |
| GPU-Accelerated Libraries (e.g., RAPIDS cuML) | For distance matrix computation on massive datasets. | Makes brute-force distance and nearest-neighbor computations feasible on far larger libraries than CPU-only code. |

The search for novel, potent, and safe chemical entities is fundamentally an exploration problem within a vast, high-dimensional chemical space, estimated to contain between 10²³ and 10⁶⁰ synthetically accessible molecules. Navigating this space poses immense challenges: the curse of dimensionality, the multi-objective nature of optimization (efficacy, selectivity, ADMET), and the sparse distribution of desirable properties. This whitepaper charts the evolution of computational paradigms developed to tackle these challenges, from classical Quantitative Structure-Activity Relationship (QSAR) models to modern deep generative models, providing a technical guide to their methodologies and applications.

Paradigm I: Classical QSAR & Pharmacophore Modeling

Classical QSAR establishes a quantitative relationship between the physicochemical descriptors of a congeneric series of molecules and their biological activity using statistical methods.

Core Methodology & Experimental Protocol

A. Data Curation & Descriptor Calculation:

- Compound Library: A congeneric series of 50-500 molecules with measured biological activity (e.g., IC₅₀, Ki).

- Descriptor Generation: Calculate 2D molecular descriptors (e.g., logP, molar refractivity, topological indices) using software like Dragon, RDKit, or MOE.

- Data Preprocessing: Autoscale descriptor values (zero mean, unit variance) and express the biological response on a logarithmic scale (e.g., pIC₅₀ = -log IC₅₀, with IC₅₀ in mol/L).

B. Model Building & Validation:

- Feature Selection: Use stepwise regression, genetic algorithms, or principal component analysis (PCA) to reduce dimensionality.

- Model Training: Apply Multiple Linear Regression (MLR) or Partial Least Squares (PLS) regression.

- Validation: Perform leave-one-out (LOO) or leave-many-out (LMO) cross-validation. Critical metrics: Q² (cross-validated R²) > 0.5, R² (coefficient of determination), and standard error of estimation.

- Applicability Domain: Define the chemical space of the model to flag extrapolations.
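The sketch below makes these validation metrics concrete. It fits an MLR (Hansch-type) model with NumPy and computes Q² by leave-one-out. It assumes the descriptors are already calculated and scaled. The data are synthetic, and the function name `mlr_loo_q2` is illustrative. Instead of refitting the model N times, it uses the standard closed-form LOO residual for least squares, which gives the same PRESS as an explicit loop.

```python
import numpy as np


def mlr_loo_q2(X: np.ndarray, y: np.ndarray) -> tuple[float, float]:
    """Fit a multiple linear regression QSAR model and return (R², Q²_LOO).

    Q² uses the exact leave-one-out identity for ordinary least squares:
    the LOO residual of compound i is e_i / (1 - h_ii), where h_ii is the
    i-th diagonal element of the hat matrix.
    """
    A = np.column_stack([np.ones(len(y)), X])         # prepend an intercept column
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)      # OLS fit on all compounds
    resid = y - A @ coef                               # ordinary residuals
    hat_diag = np.diag(A @ np.linalg.pinv(A.T @ A) @ A.T)
    loo_resid = resid / (1.0 - hat_diag)               # residual if compound i had been left out
    ss_tot = np.sum((y - y.mean()) ** 2)
    r2 = 1.0 - np.sum(resid ** 2) / ss_tot
    q2 = 1.0 - np.sum(loo_resid ** 2) / ss_tot         # Q² = 1 - PRESS / SS_tot
    return float(r2), float(q2)


# Synthetic example: 80 compounds, 3 descriptors standing in for logP, σ and MR.
rng = np.random.default_rng(0)
X_demo = rng.normal(size=(80, 3))
y_demo = X_demo @ np.array([0.9, -0.6, 0.3]) + rng.normal(scale=0.3, size=80)  # pIC50-like
r2_demo, q2_demo = mlr_loo_q2(X_demo, y_demo)
print(f"R2 = {r2_demo:.2f}, Q2(LOO) = {q2_demo:.2f}")
```

Q² can never exceed R² here, because every LOO residual is at least as large as the corresponding fitted residual. A large gap between the two usually indicates overfitting. The check complements an external test set and an applicability-domain analysis; it does not replace them.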

Table 1: Representative QSAR Model Performance Metrics (Hypothetical Case Study)

| Model Type | Training Set (N) | Test Set (N) | R² | Q² (LOO) | RMSE (Test) | Key Descriptors |
|---|---|---|---|---|---|---|
| MLR (Hansch) | 80 | 20 | 0.85 | 0.78 | 0.45 log units | logP, σ (Hammett), MR |
| PLS | 150 | 50 | 0.89 | 0.82 | 0.38 log units | PC1, PC2 (from 200 descriptors) |
| HQSAR (Signature) | 100 | 25 | 0.87 | 0.80 | 0.41 log units | Atom/Bond sequence fragments |

Research Reagent Solutions (Classical QSAR)

| Item | Function & Rationale |
|---|---|
| SYBYL/CODESSA | Legacy software suites for comprehensive descriptor calculation (topological, electronic, geometric). |
| Dragon Software | Calculates >5000 molecular descriptors for robust statistical analysis. |
| PCR/PLS Toolbox (MATLAB) | Statistical toolkits for performing Principal Component Regression and Partial Least Squares regression on high-dimensional descriptor matrices. |
| Congeneric Compound Libraries | Commercially available or custom-synthesized series with systematic structural variations, essential for interpretable model building. |

Diagram: Classical QSAR Model Development Workflow

Paradigm II: Structure-Based Design & Docking

This paradigm leverages 3D protein structures to simulate and score ligand binding, enabling the virtual screening of large libraries.

Core Methodology & Experimental Protocol

A. Structure Preparation & Library Generation:

- Protein Preparation: Obtain a high-resolution X-ray or Cryo-EM structure (PDB). Remove water, add hydrogens, assign protonation states (e.g., using PROPKA), and optimize side chains.

- Ligand Library Preparation: Generate a database of 10⁵–10⁷ commercially available or enumerated compounds. Generate 3D conformers, assign charges (e.g., Gasteiger), and minimize energy.
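The per-molecule part of ligand preparation can be sketched with RDKit, assuming each compound arrives as a SMILES string. This is a minimal version. It embeds one conformer with ETKDG, minimizes it with MMFF94, and assigns Gasteiger charges. It does not enumerate tautomers or assign pH-dependent protonation states; a production pipeline would handle those separately. The helper name `prepare_ligand` is hypothetical.

```python
from rdkit import Chem
from rdkit.Chem import AllChem, rdPartialCharges


def prepare_ligand(smiles: str, seed: int = 42) -> Chem.Mol:
    """Return a 3D, MMFF-minimized molecule carrying Gasteiger partial charges."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles}")
    mol = Chem.AddHs(mol)                        # explicit hydrogens for 3D geometry
    params = AllChem.ETKDGv3()                   # knowledge-based distance-geometry embedding
    params.randomSeed = seed                     # reproducible coordinates
    if AllChem.EmbedMolecule(mol, params) != 0:
        raise RuntimeError(f"3D embedding failed for: {smiles}")
    AllChem.MMFFOptimizeMolecule(mol)            # local energy minimization (MMFF94 by default)
    rdPartialCharges.ComputeGasteigerCharges(mol)  # stored as the "_GasteigerCharge" atom property
    return mol


# Usage: aspirin as a single example ligand.
lig = prepare_ligand("CC(=O)Oc1ccccc1C(=O)O")
charges = [atom.GetDoubleProp("_GasteigerCharge") for atom in lig.GetAtoms()]
```

For a real library, the same function would run over every input SMILES. The results could then be written to an SDF file with `Chem.SDWriter`.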

B. Molecular Docking & Scoring:

- Binding Site Definition: Define the active site from co-crystallized ligand or via computational prediction (e.g., FTMap).

- Docking Execution: Use software like AutoDock Vina, Glide, or GOLD. Key parameters: search exhaustiveness, pose clustering.

- Post-Docking Analysis: Rank poses by scoring function (e.g., ChemPLP, GlideScore). Visually inspect top poses. Apply consensus scoring or rescoring with MM/GBSA.
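Consensus scoring can take several forms. One simple variant, assumed here, is rank averaging: each compound is ranked separately under every scoring function, and its ranks are averaged. The NumPy sketch below uses hypothetical scores. It handles scoring functions with opposite sign conventions, such as an energy-like score where lower is better and a fitness-like score where higher is better.

```python
import numpy as np


def rank_consensus(scores: np.ndarray, lower_is_better: list[bool]) -> np.ndarray:
    """Average per-function ranks; returns one value per ligand (0 = best).

    scores: array of shape (n_ligands, n_scoring_functions).
    lower_is_better: one flag per column (True for energy-like scores).
    Ties are broken arbitrarily by argsort.
    """
    ranks = np.empty(scores.shape, dtype=float)
    for j, lower in enumerate(lower_is_better):
        col = scores[:, j] if lower else -scores[:, j]  # orient so that smaller = better
        ranks[:, j] = np.argsort(np.argsort(col))       # position of each ligand in sorted order
    return ranks.mean(axis=1)


# Hypothetical scores for 3 ligands: column 0 energy-like, column 1 fitness-like.
demo_scores = np.array([[-9.1, 55.0],
                        [-7.2, 70.0],
                        [-8.5, 40.0]])
consensus = rank_consensus(demo_scores, lower_is_better=[True, False])
priority = np.argsort(consensus)  # ligand indices, best consensus rank first
```

Rank averaging ignores the scale of each scoring function. This is convenient when scores have incompatible units, but it discards information about how large the score gaps are.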

Table 2: Performance Benchmark of Docking Programs (Generalized from Recent Reviews)

| Docking Software | Pose Prediction Success Rate (%) | Virtual Screening Enrichment (EF₁%) | Typical Runtime/Ligand | Scoring Function |
|---|---|---|---|---|
| AutoDock Vina | ~70-80 | 10-25 | 1-2 min | Hybrid (Vina) |
| Glide (SP) | ~75-85 | 15-30 | 2-5 min | Empirical (GlideScore) |
| GOLD | ~75-80 | 12-28 | 3-7 min | Empirical (ChemPLP, GoldScore) |
| DiffDock | ~80-90* | N/A (Emerging) | ~1 min* | Diffusion Model |

*DiffDock is a recent AI-based (diffusion-model) method. The figures marked with an asterisk reflect promising but preliminary results.

Research Reagent Solutions (Structure-Based Design)

| Item | Function & Rationale |
|---|---|
| Protein Data Bank (PDB) | Primary repository for experimentally determined 3D structures of proteins and complexes. |
| MOE (Molecular Operating Environment) | Integrated platform for protein preparation, site analysis, docking, and molecular mechanics. |
| Schrödinger Suite (Maestro) | Industry-standard software for advanced protein preparation (Protein Prep Wizard), docking (Glide), and free energy perturbation (FEP+). |
| ZINC20/Enamine REAL Libraries | Publicly available and commercial ultra-large libraries of tangible molecules for virtual screening. |
| MM/GBSA Rescoring Scripts | Post-processing scripts (e.g., in Amber or Schrödinger) to improve binding affinity prediction via more rigorous thermodynamics. |

Diagram: Structure-Based Virtual Screening Pipeline

Paradigm III: Machine Learning & Generative Models

Modern deep learning directly learns complex patterns from data to predict molecular properties or generate novel molecular structures de novo.

Core Methodology: Generative Model Training

A. Data: Large datasets of known molecules (e.g., ChEMBL, ZINC, PubChem) represented as SMILES strings, graphs, or 3D coordinates.

B. Model Architectures & Training Protocols:

VAE (Variational Autoencoder):

- Encoder: Maps input molecule (SMILES) to a latent vector z in a continuous, Gaussian-distributed space.

- Decoder: Reconstructs the molecule from z.

- Loss: Reconstruction loss + KL divergence loss (to regularize the latent space).

- Generation: Sample a random z vector from the prior distribution and decode.

GAN (Generative Adversarial Network):

- Generator: Creates novel molecular structures from noise.

- Discriminator: Distinguishes real (training set) from generated molecules.

- Adversarial Training: Generator learns to fool the discriminator.

Transformer/Autoregressive Models:

- Treats SMILES string as a sequence (like text).

- Trained via next-token prediction (e.g., GPT-style).

- Generation is sequential, token-by-token.

Diffusion Models:

- Forward Process: Gradually adds noise to a molecular graph over many steps.

- Reverse Process: A neural network is trained to denoise, learning to generate molecules from pure noise.

- State-of-the-art for 3D molecule generation (e.g., TargetDiff). Related diffusion approaches are also used for ligand pose prediction (e.g., DiffDock).

C. Conditional Generation & Optimization:

- Goal: Generate molecules with desired properties (e.g., pIC₅₀ > 8, logP < 3).

- Method: Use a conditional VAE/Transformer or apply Bayesian Optimization/Reinforcement Learning (RL) on the latent space. The property predictor (a separate or joint NN) provides the reward signal.

Table 3: Comparison of Modern Generative Model Architectures

| Model Type | Representation | Latent Space | Key Advantage | Key Challenge |
|---|---|---|---|---|
| VAE (e.g., ChemVAE, JT-VAE) | Graph/SMILES | Continuous, Gaussian | Smooth, explorable space. | SMILES-based variants tend to generate invalid structures. |
| GAN (e.g., ORGAN) | SMILES | Implicit (Noise) | Can produce high-quality samples. | Training instability, mode collapse. |
| Transformer (e.g., MolGPT) | SMILES (Sequence) | Attention Weights | Captures long-range dependencies. | Sequential generation can be slow. |
| Graph Diffusion (e.g., GDSS) | Graph (2D/3D) | Noise Levels | State-of-the-art quality, robust. | Computationally intensive sampling. |

Research Reagent Solutions (Generative AI)

| Item | Function & Rationale |
|---|---|
| RDKit | Open-source cheminformatics toolkit essential for converting molecules to features, fingerprinting, and evaluating generated molecules (validity, uniqueness). |
| PyTorch Geometric | Library for deep learning on graphs, implementing graph neural networks (GNNs) for encoders and property predictors. |
| TensorFlow/PyTorch | Core deep learning frameworks for building and training VAEs, GANs, and Transformers. |
| ChEMBL Database | Manually curated database of bioactive molecules with associated targets and ADMET data, crucial for training conditional models. |
| GuacaMol/MOSES Benchmarks | Standardized benchmarks and datasets for evaluating generative models and enabling fair comparison between them. |

Diagram: Conditional Molecule Generation with Deep Learning

Synthesis & Future Trajectory

The evolution from QSAR to generative AI represents a shift from interpolation within known chemical series to extrapolation and de novo creation guided by learned chemical principles. The future lies in hybrid models that integrate physical simulation (docking, FEP) with generative AI for explainable, multi-objective optimization, directly addressing the core challenges of high-dimensional chemical space exploration.

Table 4: Paradigm Comparison Summary

| Exploration Paradigm | Core Principle | Chemical Space Scope | Key Strength | Primary Limitation |
|---|---|---|---|---|
| Classical QSAR | Linear Regression on Descriptors | Very Local (Congeneric) | Highly Interpretable, Fast | Limited Extrapolation, Needs Congeneric Data |
| Structure-Based Docking | Physical Simulation of Binding | Global (Library Screening) | Structure-Rational, Target-Specific | Dependent on Protein Structure, Scoring Errors |
| Generative AI (Deep Learning) | Learn Distribution & Generate | Vast & Unexplored | De Novo Design, Multi-Objective Optimization | "Black Box", Requires Large Data, Synthetic Feasibility |

Mapping the Unknown: Modern Computational and AI Methodologies for Chemical Space Navigation

The exploration of high-dimensional chemical space, estimated to contain over 10^60 synthesizable drug-like molecules, presents a fundamental challenge in modern drug discovery. Traditional virtual screening, predominantly reliant on molecular docking, struggles with this immense complexity due to limitations in scoring function accuracy, conformational sampling, and the simplistic treatment of protein-ligand interactions. This whitepaper frames the evolution to "Virtual Screening 2.0" within the broader thesis that effective navigation of this expansive space requires a paradigm shift: integrating physics-based simulations with data-driven machine learning (ML) classifiers to create more predictive, efficient, and holistic prioritization pipelines.

The Limitations of Docking and the ML Augmentation Rationale

Molecular docking, while computationally efficient, often yields high false-positive rates. Its scoring functions, typically empirical or knowledge-based, fail to capture critical entropic and solvation effects accurately. Machine learning classifiers address these gaps by learning complex, non-linear relationships from historical experimental data (e.g., binding affinities, bioactivity labels). They can integrate diverse feature sets beyond docking scores—such as molecular descriptors, pharmacophore fingerprints, and even interaction fingerprints from docking poses—to distinguish true actives from decoys with superior precision.

Core Machine Learning Classifiers in Virtual Screening 2.0

The following table summarizes the primary ML classifiers employed, their key characteristics, and typical performance benchmarks as reported in recent literature (2023-2024).

Table 1: Key Machine Learning Classifiers for Enhanced Virtual Screening

| Classifier | Principle | Typical Input Features | Reported AUC-ROC Range (Recent Studies) | Key Advantage | Key Limitation |
|---|---|---|---|---|---|
| Random Forest (RF) | Ensemble of decision trees | Docking scores, molecular fingerprints (ECFP), descriptors | 0.75 - 0.92 | Robust to overfitting, provides feature importance. | Can be less interpretable than single trees. |
| Gradient Boosting Machines (GBM/XGBoost/LightGBM) | Sequential ensemble correcting prior errors | Similar to RF, plus protein sequence descriptors. | 0.78 - 0.95 | High predictive accuracy, handles mixed data types. | Prone to overfitting without careful tuning. |
| Deep Neural Networks (DNN) | Multi-layer perceptrons learning hierarchical representations | Raw or pre-processed molecular graphs, 3D voxel grids. | 0.82 - 0.98 | Captures complex, abstract patterns directly from data. | High computational cost, requires large datasets. |
| Graph Neural Networks (GNN) | Operates directly on molecular graph structure | Atom features, bond features, adjacency matrix. | 0.85 - 0.99 | Natively models molecular topology and geometry. | Complex training, data-hungry. |
| Support Vector Machines (SVM) | Finds optimal hyperplane to separate classes | Molecular fingerprints, interaction fingerprints. | 0.70 - 0.88 | Effective in high-dimensional spaces. | Poor scalability to very large datasets. |

Detailed Experimental Protocol: A Hybrid Docking-ML Workflow

This protocol outlines a standard pipeline for building and validating an ML-enhanced virtual screening campaign.

Protocol: Hybrid Docking and Random Forest Classifier for Kinase Inhibitor Screening

A. Objective: To prioritize potential inhibitors of a target kinase from a large commercial library (e.g., ZINC20).

B. Materials & Data Preparation:

- Target Structure: Obtain a high-resolution crystal structure of the kinase domain (PDB ID).

- Active Compounds: Compile a set of known active inhibitors (≥ 100 compounds) from public databases (ChEMBL, BindingDB).

- Decoy Compounds: Generate property-matched decoy molecules (10-50 per active) using tools like DUD-E or DecoyFinder to create the negative set. Note that this ratio produces a deliberately imbalanced, not a balanced, dataset.

- Screening Library: Prepare the million-compound library in a dockable format (e.g., SDF, MOL2), including protonation and energy minimization.

C. Methodology:

Step 1: Molecular Docking

- Software: Use AutoDock Vina, Glide, or rDock.

- Procedure: Define the binding site using co-crystallized ligand coordinates. Dock all active and decoy compounds, plus the screening library. For each molecule, retain the top 3-5 poses by docking score.

- Output: For each molecule: best docking score, interaction fingerprints for the best pose, and the pose coordinates.

Step 2: Feature Engineering

- Calculate 200+ molecular descriptors (e.g., logP, TPSA, number of rotatable bonds) using RDKit or MOE.

- Generate Extended-Connectivity Fingerprints (ECFP4, 2048 bits).

- Extract interaction fingerprints from the top docking pose (e.g., presence/absence of H-bonds, hydrophobic contacts with specific residues).

- Result: A unified feature vector per molecule combining docking score, descriptors, ECFP, and interaction fingerprint.
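Step 2 can be sketched with RDKit as shown below. The sketch assumes the docking score and a binary interaction fingerprint for the best pose were produced upstream. The `ifp_bits` array is a hypothetical placeholder, not the output of any particular tool. Only three descriptors are shown to keep the example short. ECFP4 corresponds to a Morgan radius of 2.

```python
import numpy as np
from rdkit import Chem, DataStructs
from rdkit.Chem import Descriptors, rdFingerprintGenerator

# ECFP4 = Morgan fingerprint with radius 2; 2048 bits as in the protocol above.
_MORGAN = rdFingerprintGenerator.GetMorganGenerator(radius=2, fpSize=2048)


def featurize(smiles: str, docking_score: float, ifp_bits: np.ndarray) -> np.ndarray:
    """Concatenate [docking score | descriptors | ECFP4 bits | interaction fingerprint]."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles}")
    desc = np.array([Descriptors.MolLogP(mol),          # lipophilicity
                     Descriptors.TPSA(mol),             # topological polar surface area
                     Descriptors.NumRotatableBonds(mol)], dtype=float)
    ecfp = np.zeros((2048,), dtype=float)
    DataStructs.ConvertToNumpyArray(_MORGAN.GetFingerprint(mol), ecfp)  # bit vector -> 0/1 array
    return np.concatenate([[docking_score], desc, ecfp, np.asarray(ifp_bits, dtype=float)])


# Usage with a hypothetical docking score and a 4-bit interaction fingerprint.
demo_vec = featurize("c1ccccc1O", docking_score=-6.4, ifp_bits=np.array([1, 0, 1, 0]))
```

In practice the descriptor block would be scaled before training, and the interaction-fingerprint length must be identical for every molecule so that the vectors stack into one matrix.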

Step 3: ML Model Training & Validation (on Active/Decoy Set)

- Algorithm: Scikit-learn RandomForestClassifier.

- Data Split: 80% of active/decoy data for training, 20% held-out for testing, stratified by class so that both sets keep the same active:decoy ratio.

- Training: Use the training set features to train the RF model (typical parameters: n_estimators=500, max_depth=10). The model learns to classify "active" vs. "decoy."

- Validation: Predict on the held-out test set. Evaluate using AUC-ROC, enrichment factors (EF1%, EF10%), precision-recall curves.
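Steps 3 and the evaluation metrics can be sketched with scikit-learn as shown below. The feature matrix is synthetic (100 "actives" and 2000 "decoys"), standing in for the unified vectors from Step 2. `class_weight="balanced"` is an added assumption, meant to offset the imbalanced decoy ratio. The enrichment factor divides the active rate in the top-ranked fraction by the overall active rate.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split


def enrichment_factor(y_true, scores, fraction=0.01):
    """EF at a given fraction: active rate in the top-ranked subset / overall active rate."""
    y_true, scores = np.asarray(y_true), np.asarray(scores)
    n_top = max(1, int(round(fraction * len(scores))))
    top = np.argsort(-scores)[:n_top]              # highest predicted probability first
    return (y_true[top].sum() / n_top) / (y_true.sum() / len(y_true))


# Synthetic stand-in for the active/decoy feature matrix: 100 actives, 2000 decoys.
rng = np.random.default_rng(0)
X = rng.normal(size=(2100, 50))
y = np.r_[np.ones(100, dtype=int), np.zeros(2000, dtype=int)]
X[y == 1, :10] += 1.5                              # actives differ in a few features

X_tr, X_te, y_tr, y_te = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)  # keep the active:decoy ratio
model = RandomForestClassifier(n_estimators=500, max_depth=10,
                               class_weight="balanced", n_jobs=-1, random_state=0)
model.fit(X_tr, y_tr)
p_active = model.predict_proba(X_te)[:, 1]         # probability of the "active" class

auc = roc_auc_score(y_te, p_active)
ef1 = enrichment_factor(y_te, p_active, 0.01)
ef10 = enrichment_factor(y_te, p_active, 0.10)
print(f"AUC-ROC {auc:.3f}  EF1% {ef1:.1f}  EF10% {ef10:.1f}")
```

Step 4 applies the same `predict_proba` call to the library's feature matrix and sorts compounds by the resulting probability. A random split like this one can overestimate performance when actives are close analogs; a scaffold-based split is a stricter alternative.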

Step 4: Virtual Screening Prioritization

- Application: Apply the trained and validated RF model to the feature vectors of the entire million-compound screening library.

- Output: Each compound receives a predicted probability of being "active" (a value between 0 and 1).

- Ranking: Rank the entire library by this ML-predicted probability. The top-ranked compounds (e.g., top 0.1%) constitute the final Virtual Screening 2.0 hit list.

D. Validation: Prospective validation involves purchasing and experimentally testing (e.g., biochemical assay) the top-ranked compounds to determine the true hit rate, comparing it to the hit rate from docking-score ranking alone.

Visualization of Workflows and Data Relationships

Virtual Screening 2.0: Integrated Docking-ML Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Research Reagent Solutions for Virtual Screening 2.0

| Item | Function in VS 2.0 | Example Product/Software | Explanation |
|---|---|---|---|
| High-Quality Protein Structures | Provides the 3D target for docking and interaction fingerprinting. | RCSB PDB, AlphaFold DB | Experimental (X-ray, Cryo-EM) or highly accurate predicted structures are fundamental. |
| Curated Bioactivity Data | Serves as labeled data for training and testing ML models. | ChEMBL, BindingDB, PubChem BioAssay | Large, high-confidence datasets of active/inactive compounds are crucial for supervised learning. |
| Chemical Library | The source of candidate molecules for screening. | ZINC20, Enamine REAL, Mcule | Large, diverse, commercially available compound libraries in ready-to-dock formats. |
| Docking & Simulation Suite | Generates initial poses and interaction features. | Schrödinger Suite, AutoDock Vina, OpenEye, GROMACS | Software for molecular docking, molecular dynamics (MD), and scoring. |
| Cheminformatics Toolkit | Calculates molecular descriptors, fingerprints, and handles file formats. | RDKit, Open Babel, MOE | Essential for feature engineering and data preprocessing. |
| Machine Learning Framework | Platform for building, training, and deploying classifiers. | Scikit-learn, PyTorch, TensorFlow, DeepChem | Libraries providing algorithms from RF to GNNs. |
| High-Performance Computing (HPC) | Provides computational resources for large-scale docking and ML training. | Local GPU clusters, Cloud (AWS, GCP, Azure) | Necessary to process libraries containing millions of compounds in a feasible time. |

Within the broader thesis on the challenges in high-dimensional chemical space exploration research, de novo molecular design emerges as a critical frontier. The vastness of drug-like chemical space, estimated at >10⁶⁰ compounds, presents an intractable search problem for traditional discovery paradigms. Generative Artificial Intelligence (AI) models, specifically Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Diffusion Models, offer a data-driven approach to navigate this combinatorial complexity. These models learn the underlying distribution of known chemical structures and generate novel, synthetically accessible molecules with optimized properties, directly addressing the exploration-exploitation trade-off central to the thesis.

Core Technical Frameworks

Variational Autoencoders (VAEs) for Molecular Generation

VAEs learn a continuous, structured latent space (z) from molecular data. The encoder network compresses a molecular representation (e.g., SMILES string or graph) into a probabilistic latent distribution. The decoder network reconstructs the molecule from a sampled latent vector. By sampling and interpolating within this latent space, novel molecular structures can be generated.

Key Experiment Protocol (Character VAE on SMILES):

- Data Preparation: Curate a dataset (e.g., from ZINC or ChEMBL) of canonicalized SMILES strings.

- Tokenization: Convert each SMILES string into a one-hot encoded matrix (characters x sequence length).

- Model Architecture:

- Encoder: A multi-layer GRU/LSTM or 1D CNN processes the one-hot matrix, outputting mean (μ) and log-variance (log σ²) vectors.

- Latent Sampling: A latent vector z is sampled using the reparameterization trick: z = μ + ε * exp(0.5 * log σ²), where ε ~ N(0, I).

- Decoder: A recurrent network (GRU/LSTM) conditioned on z generates the SMILES string sequentially.

- Training: Optimize the evidence lower bound (ELBO) loss, combining reconstruction loss (cross-entropy) and KL divergence loss (regularizing the latent space).

- Generation: Sample random vectors z from a standard normal prior N(0, I) and decode.
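The encoder and decoder networks depend on the chosen framework. The tokenization, the reparameterization trick, and the ELBO terms described above do not, and the NumPy sketch below shows them. `decoder_probs` stands for the per-position softmax output of a hypothetical decoder. The purely character-level tokenization is a simplification: two-letter atoms such as `Cl` or `Br` are split into separate characters.

```python
import numpy as np


def one_hot_smiles(smiles: str, vocab: list[str], max_len: int) -> np.ndarray:
    """Character-level one-hot matrix of shape (max_len, len(vocab)); ' ' is the padding token."""
    if len(smiles) > max_len:
        raise ValueError("SMILES longer than max_len")
    index = {ch: i for i, ch in enumerate(vocab)}
    mat = np.zeros((max_len, len(vocab)))
    for t, ch in enumerate(smiles.ljust(max_len)):   # right-pad with spaces
        mat[t, index[ch]] = 1.0
    return mat


def reparameterize(mu, log_var, rng):
    """z = mu + eps * exp(0.5 * log_var) with eps ~ N(0, I).

    In an autodiff framework this keeps z differentiable with respect to mu and log_var.
    """
    eps = rng.standard_normal(np.shape(mu))
    return mu + eps * np.exp(0.5 * log_var)


def kl_to_standard_normal(mu, log_var):
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ), summed over latent dimensions."""
    return -0.5 * np.sum(1.0 + log_var - mu ** 2 - np.exp(log_var), axis=-1)


def negative_elbo(target_onehot, decoder_probs, mu, log_var):
    """Reconstruction cross-entropy plus KL term: the quantity minimized during training."""
    recon = -np.sum(target_onehot * np.log(decoder_probs + 1e-12))
    return recon + kl_to_standard_normal(mu, log_var)


# Usage: encode one SMILES with a toy vocabulary.
vocab = [" ", "C", "O", "N", "c", "1", "(", ")", "="]
x = one_hot_smiles("CC(=O)N", vocab, max_len=12)
```

At generation time, the encoder and `reparameterize` are bypassed entirely. Here z is drawn directly from N(0, I) and passed to the decoder.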

Generative Adversarial Networks (GANs)

GANs frame generation as an adversarial game between a Generator (G) that creates molecules and a Discriminator (D) that distinguishes real from generated samples. Through this min-max optimization, G learns to produce increasingly realistic molecules.

Key Experiment Protocol (ORGAN; Guimaraes et al., 2017 - RL-based GAN):

- Generator: A recurrent neural network (RNN) producing SMILES strings.

- Discriminator: A convolutional neural network (CNN) classifying SMILES as real/generated.

- Reinforcement Learning (RL) Fine-tuning: The pre-trained generator is fine-tuned using policy gradient methods (e.g., REINFORCE) to maximize expected rewards from a pre-trained Discriminator and/or desired property predictions (e.g., QED, LogP).

- Training Steps: a. Pre-train G on real SMILES strings via maximum likelihood. b. Train D on mixed batches of real and G-generated molecules. c. Apply RL to update G's policy to "fool" D and meet property objectives.
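Step c relies on a policy-gradient update. The toy sketch below isolates that REINFORCE update; it is not ORGAN itself. The RNN generator is replaced by independent per-position token logits, there is no discriminator, and `reward` is a hypothetical scoring function. A moving-average baseline is subtracted from the reward to reduce gradient variance, which is a common but assumed choice.

```python
import numpy as np

VOCAB = ["C", "N", "O", "F"]   # toy token set (hypothetical)
SEQ_LEN = 6


def reward(tokens: list[str]) -> float:
    """Hypothetical reward: fraction of 'N' tokens. In ORGAN-style training this would
    combine the discriminator's output with property scores such as QED or logP."""
    return tokens.count("N") / len(tokens)


def softmax(logits: np.ndarray) -> np.ndarray:
    e = np.exp(logits - logits.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)


def train_reinforce(steps=150, batch=32, lr=1.0, seed=0):
    """REINFORCE on a per-position categorical policy (a stand-in for an RNN generator)."""
    rng = np.random.default_rng(seed)
    logits = np.zeros((SEQ_LEN, len(VOCAB)))
    baseline, history = 0.0, []
    for _ in range(steps):
        probs = softmax(logits)
        grad = np.zeros_like(logits)
        rewards = []
        for _ in range(batch):
            actions = [rng.choice(len(VOCAB), p=probs[t]) for t in range(SEQ_LEN)]
            r = reward([VOCAB[a] for a in actions])
            rewards.append(r)
            for t, a in enumerate(actions):
                g = -probs[t]                     # gradient of log softmax: onehot(a) - probs
                g[a] += 1.0
                grad[t] += (r - baseline) * g     # advantage-weighted score function
        logits += lr * grad / batch               # gradient ascent on expected reward
        baseline = 0.9 * baseline + 0.1 * float(np.mean(rewards))
        history.append(float(np.mean(rewards)))
    return logits, history


logits, history = train_reinforce()
print(f"mean reward: first 10 steps {np.mean(history[:10]):.2f}, last 10 {np.mean(history[-10:]):.2f}")
```

In a full implementation, `probs[t]` would come from the RNN conditioned on the tokens generated so far. The gradient would then flow through the network parameters rather than through a logits table.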

Diffusion Models

Diffusion models probabilistically generate data by learning to reverse a gradual noising process. In the molecular context, noise is progressively added to molecular graphs (node/edge features) over many steps. A learned neural network then denoises random starting points into valid, novel structures.

Key Experiment Protocol (Hoogeboom et al., 2022 - E(3)-Equivariant Diffusion):

- Forward Diffusion Process: Over T timesteps, add Gaussian noise to the continuous representation of each molecule (3D atom coordinates and atom-type features). This yields a sequence of increasingly noisy samples, culminating in nearly pure noise.

- Reverse Denoising Process: A graph neural network (e.g., EGNN) is trained to predict the noise added at each step, parameterizing the transition p_θ(x_{t-1} | x_t).

- Training Objective: Minimize the mean-squared error between the actual and predicted noise.

- Sampling: Start from pure noise x_T ~ N(0, I) and iteratively apply the learned reverse denoising transitions for T steps to produce a clean molecular graph.
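Training exploits a closed form of the forward process. For a variance schedule β₁…β_T with ᾱ_t = ∏ₛ(1 − β_s), a noisy sample at any step t can be drawn directly as x_t = √ᾱ_t x₀ + √(1 − ᾱ_t) ε. The NumPy sketch below assumes the common linear β schedule from DDPM, which is not necessarily the schedule used by Hoogeboom et al. It also uses a toy array of atom coordinates. The denoising network, equivariance, and any center-of-mass handling are omitted.

```python
import numpy as np


def linear_beta_schedule(T: int = 1000, beta_start: float = 1e-4, beta_end: float = 0.02):
    """Noise variances beta_t and cumulative products alpha_bar_t = prod(1 - beta_s)."""
    betas = np.linspace(beta_start, beta_end, T)
    alpha_bars = np.cumprod(1.0 - betas)
    return betas, alpha_bars


def q_sample(x0: np.ndarray, t: int, alpha_bars: np.ndarray, rng):
    """Draw x_t ~ q(x_t | x_0) in one step (t is a 0-based index here) and return the noise
    that the network is trained to predict."""
    eps = rng.standard_normal(x0.shape)
    x_t = np.sqrt(alpha_bars[t]) * x0 + np.sqrt(1.0 - alpha_bars[t]) * eps
    return x_t, eps


def noise_prediction_loss(eps_true: np.ndarray, eps_pred: np.ndarray) -> float:
    """Training objective: mean-squared error between true and predicted noise."""
    return float(np.mean((eps_true - eps_pred) ** 2))


rng = np.random.default_rng(0)
betas, alpha_bars = linear_beta_schedule()
coords = rng.normal(scale=1.5, size=(9, 3))      # toy 9-atom "molecule" (x, y, z)
t = int(rng.integers(len(alpha_bars)))           # random timestep, as sampled during training
x_t, eps = q_sample(coords, t, alpha_bars, rng)
# A trained network eps_theta(x_t, t) would be scored with noise_prediction_loss(eps, eps_pred).
```

Because ᾱ_t becomes very small at the final step, x_T is essentially standard normal noise. That is why sampling can start from N(0, I).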

Comparative Performance Data

Table 1: Benchmark Performance of Generative Models on Molecular Design Tasks

| Model Type (Representative) | Validity (%) | Uniqueness (%) | Novelty (%) | Optimization Success (Property) | Training Stability |
|---|---|---|---|---|---|
| VAE (Character SMILES) | 60 - 90 | 80 - 99 | 70 - 95 | Moderate (via latent space optimization) | High |
| GAN (SMILES-based RL) | 70 - 95 | 90 - 100 | 80 - 100 | High | Low (mode collapse) |
| Diffusion (Graph-based) | >95 | >99 | >98 | High (conditional generation) | Medium-High |
| Autoregressive (GPT-like) | 85 - 98 | 95 - 100 | 90 - 100 | High (scaffold-constrained) | High |

Note: Ranges are synthesized from recent literature (2022-2024) benchmarking on datasets like QM9 or ZINC. Validity refers to syntactic/chemical validity of generated SMILES or graphs. Uniqueness is the percentage of non-duplicate molecules in a generated set. Novelty is the percentage not found in the training set. Optimization Success measures the hit rate for achieving a desired property profile.

Table 2: Typical Computational Requirements for Training (Modern Benchmarks)

| Model Type | Dataset Size | Typical Training Time (GPU Hours) | Preferred Hardware | Memory (VRAM) |
|---|---|---|---|---|
| SMILES VAE | 1M molecules | 24 - 48 | NVIDIA V100 / A100 | 8 - 16 GB |
| Graph GAN | 250k molecules | 72 - 120 | NVIDIA A100 | 24 - 40 GB |
| 3D Molecular Diffusion | 500k conformers | 120 - 200 | NVIDIA A100 (x4) | 160 GB (total) |
| Large Chem-LM (Pre-training) | 10M+ molecules | 500 - 2000 | TPU v3 / A100 (x8) | 640 GB+ |

Workflow and Logical Pathway Diagrams

VAE Training and Generation Workflow

GAN Adversarial Training Cycle

Diffusion Model Forward and Reverse Process

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational Tools and Libraries for Molecular Generative AI

| Item Name (Library/Platform) | Primary Function | Key Utility in Research |
|---|---|---|
| RDKit | Open-source cheminformatics toolkit. | Molecule manipulation, fingerprint generation, validity checking, property calculation (e.g., LogP, QED). |
| PyTorch / TensorFlow | Deep learning frameworks. | Flexible implementation and training of custom VAE, GAN, and Diffusion model architectures. |
| DeepChem | Open-source framework for deep learning in chemistry. | Provides high-level APIs, molecular datasets, and benchmarked model implementations. |
| JAX | High-performance numerical computing with automatic differentiation. | Enables efficient, accelerated research on novel architectures (esp. Diffusion models). |
| OpenMM | High-performance toolkit for molecular simulation. | Used for generating training data (conformers) and validating/optimizing generated molecules via physics-based calculations. |
| MOSES | Molecular Sets (MOSES) benchmarking platform. | Standardized metrics and datasets (e.g., ZINC-based) for fair comparison of generative models. |
| GuacaMol | Benchmark suite for de novo molecular design. | Provides optimization tasks and scaffolds to assess model performance on goal-directed generation. |
| AutoDock Vina / Gnina | Molecular docking software. | Critical for virtual screening of generated libraries against protein targets (structure-based design). |
| OMEGA / CONFIRM | Conformational ensemble generation. | Prepares 3D structures of generated molecules for downstream docking or property prediction. |
| Streamlit / Dash | Web application frameworks for Python. | Enables rapid building of interactive demos to visualize and sample from trained generative models. |

Generative AI models provide powerful, complementary strategies for addressing the fundamental challenge of exploring high-dimensional chemical space. VAEs offer a stable, continuous latent space for interpolation and optimization. GANs can produce high-fidelity samples but require careful stabilization. Diffusion models represent the state-of-the-art in generating valid, diverse, and novel molecular graphs with fine-grained controllability. The integration of these generative tools with high-throughput simulation and experimental validation forms a closed-loop discovery engine, directly advancing the thesis of overcoming dimensionality in chemical research to accelerate the discovery of novel therapeutics, materials, and catalysts.

The exploration of chemical space for materials science, catalyst design, and drug discovery represents one of the most formidable challenges in modern research. The space of possible molecules is astronomically vast, estimated to exceed 10^60 synthetically accessible compounds, making exhaustive exploration impossible. Traditional high-throughput experimentation, while powerful, remains resource-intensive and often samples this space inefficiently. This whitepaper details the integration of Active Learning (AL) and Bayesian Optimization (BO) as a paradigm-shifting framework for navigating high-dimensional experimental synthesis. It addresses the core thesis challenge: developing efficient, adaptive strategies to discover optimal materials or molecular entities with minimal experimental cost.

Foundational Principles

Active Learning is a machine learning paradigm where the algorithm strategically selects the most informative data points from a pool of unlabeled candidates for experimental labeling (synthesis and testing). The goal is to maximize performance (e.g., discover a high-activity compound) with the fewest queries.

Bayesian Optimization is a probabilistic framework for optimizing expensive-to-evaluate black-box functions. It employs a surrogate model (typically a Gaussian Process) to approximate the unknown landscape (e.g., property vs. molecular descriptors) and an acquisition function to decide which experiment to perform next by balancing exploration (probing uncertain regions) and exploitation (refining known promising regions).

Their integration creates a closed-loop, self-driving laboratory workflow:

- Model Initialization: Train a surrogate model on a small seed dataset.

- Candidate Proposal: Use the acquisition function to score a vast virtual library and select the most promising candidate for synthesis.

- Experiment & Feedback: Synthesize and characterize the proposed candidate.

- Model Update: Incorporate the new experimental result to update the surrogate model.

- Iteration: Repeat steps 2-4 until a performance target or budget is reached.

Diagram 1: The closed-loop experimental optimization workflow.
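The five steps of the loop can be sketched in a few lines. The surrogate is scikit-learn's `GaussianProcessRegressor` and the acquisition function is UCB. Everything about the "laboratory" is assumed. `run_experiment` is a hypothetical stand-in for synthesis plus characterization. The candidate pool is a 1-D grid rather than a featurized virtual library.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern, WhiteKernel


def run_experiment(x: np.ndarray) -> float:
    """Hypothetical stand-in for synthesis + assay of one candidate's feature vector."""
    return float(np.sin(3.0 * x[0]) + 0.5 * x[0])


def closed_loop(pool: np.ndarray, n_seed: int = 3, budget: int = 15,
                kappa: float = 2.0, seed: int = 0):
    rng = np.random.default_rng(seed)
    tested = [int(i) for i in rng.choice(len(pool), size=n_seed, replace=False)]
    y = [run_experiment(pool[i]) for i in tested]             # 1. small seed dataset
    while len(tested) < budget:                                # 5. iterate until the budget is spent
        gp = GaussianProcessRegressor(kernel=Matern(nu=2.5) + WhiteKernel(),
                                      normalize_y=True, random_state=seed)
        gp.fit(pool[tested], np.array(y))                      # 1./4. (re)train the surrogate
        mu, sigma = gp.predict(pool, return_std=True)
        score = mu + kappa * sigma                             # 2. UCB acquisition over the pool
        score[tested] = -np.inf                                # never re-propose a tested candidate
        nxt = int(np.argmax(score))
        tested.append(nxt)
        y.append(run_experiment(pool[nxt]))                    # 3. experiment and feedback
    best = int(np.argmax(y))
    return tested[best], y[best], tested, y


pool = np.linspace(0.0, 3.0, 301).reshape(-1, 1)
best_idx, best_y, tested, y = closed_loop(pool)
print(f"best x = {pool[best_idx, 0]:.2f}, measured {best_y:.2f} after {len(tested)} experiments")
```

Refitting the GP from scratch on every iteration is the simplest form of the "model update" step. Libraries such as BoTorch also support batch proposals, so several candidates can be synthesized per cycle.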

Core Methodologies & Experimental Protocols

Molecular Representation & Featurization

The choice of molecular representation is critical for defining the search space.

- Protocol A: Morgan Fingerprints (ECFP). Generate fixed-length binary bit vectors representing the presence of circular substructures around each atom up to a specified radius (e.g., radius=3, nBits=2048). Implement using RDKit in Python.

- Protocol B: Learned representations. Utilize a pre-trained variational autoencoder (VAE) or graph neural network (GNN) to map molecules to a continuous latent space, enabling smooth interpolation and optimization.

Gaussian Process Regression as a Surrogate Model

Protocol: Model the relationship between a molecular feature vector x and a target property y (e.g., yield, activity) as a Gaussian Process: f ~ GP(m(x), k(x, x')).

- Mean function m(x): Often set to zero after standardizing y.

- Kernel function k(x, x'): Defines covariance. For fingerprints, a Matérn kernel (ν=5/2) is often preferred. For latent spaces, a Radial Basis Function (RBF) kernel is standard.

- Training: Maximize the log marginal likelihood of the observed data to learn kernel hyperparameters (length-scale, noise variance).
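The NumPy sketch below shows what the surrogate computes. It implements the zero-mean GP posterior with an RBF kernel via a Cholesky factorization. It also computes the log marginal likelihood used to train the hyperparameters. A coarse grid search over the length-scale stands in for the gradient-based optimizers that libraries such as GPyTorch or scikit-learn use. The data, the fixed signal variance, and the fixed noise variance are assumptions chosen for illustration.

```python
import numpy as np


def rbf_kernel(A, B, length_scale=1.0, signal_var=1.0):
    """k(x, x') = s^2 * exp(-||x - x'||^2 / (2 l^2))."""
    sq = np.sum(A ** 2, 1)[:, None] + np.sum(B ** 2, 1)[None, :] - 2.0 * A @ B.T
    return signal_var * np.exp(-0.5 * np.maximum(sq, 0.0) / length_scale ** 2)


def _fit(X, y, length_scale, signal_var, noise_var):
    K = rbf_kernel(X, X, length_scale, signal_var) + noise_var * np.eye(len(X))
    L = np.linalg.cholesky(K)                                # K = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))      # alpha = K^-1 y
    return L, alpha


def log_marginal_likelihood(X, y, length_scale, signal_var=1.0, noise_var=1e-4):
    """log p(y | X) = -1/2 y^T K^-1 y - 1/2 log|K| - n/2 log(2 pi)."""
    L, alpha = _fit(X, y, length_scale, signal_var, noise_var)
    return float(-0.5 * y @ alpha - np.sum(np.log(np.diag(L))) - 0.5 * len(y) * np.log(2 * np.pi))


def gp_posterior(X, y, X_new, length_scale, signal_var=1.0, noise_var=1e-4):
    """Posterior mean and variance of f at X_new (y assumed standardized, so m(x) = 0)."""
    L, alpha = _fit(X, y, length_scale, signal_var, noise_var)
    K_s = rbf_kernel(X, X_new, length_scale, signal_var)
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = signal_var - np.sum(v ** 2, axis=0)                # prior variance minus explained part
    return mean, np.maximum(var, 0.0)


rng = np.random.default_rng(0)
X_obs = rng.uniform(0.0, 5.0, size=(12, 1))
y_raw = np.sin(X_obs[:, 0]) + rng.normal(scale=0.05, size=12)
y_obs = (y_raw - y_raw.mean()) / y_raw.std()                 # standardize so m(x) = 0 is sensible
grid = [0.1, 0.3, 1.0, 3.0]
best_ls = max(grid, key=lambda ls: log_marginal_likelihood(X_obs, y_obs, ls))
mean, var = gp_posterior(X_obs, y_obs, np.linspace(0.0, 5.0, 50)[:, None], best_ls)
```

The posterior variance is what the acquisition function uses to reward exploration. It shrinks toward the noise variance at measured points and grows back toward the prior variance far from them.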

Acquisition Functions for Experiment Selection

Protocol: Calculate the acquisition function α(x) for all candidates in the virtual library and select x* = argmax_x α(x).

- Expected Improvement (EI): α_EI(x) = E[max(0, f(x) - f_best)].

- Upper Confidence Bound (UCB): α_UCB(x) = μ(x) + κ * σ(x), where κ controls exploration.

- Implementation: Use libraries like BoTorch or scikit-optimize for efficient batch calculation.

Diagram 2: The exploration-exploitation trade-off governed by the surrogate model.
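Under a Gaussian posterior N(μ(x), σ(x)²), the expectation in EI has a well-known closed form: (μ − f_best − ξ)Φ(z) + σφ(z), where z = (μ − f_best − ξ)/σ. The NumPy/SciPy sketch below implements EI and UCB for maximization. The margin ξ (`xi`, default 0) is an added assumption not stated in the protocol above. The surrogate predictions for five candidates are hypothetical.

```python
import numpy as np
from scipy.stats import norm


def expected_improvement(mu, sigma, f_best, xi=0.0):
    """Closed form of E[max(0, f(x) - f_best - xi)] for a Gaussian posterior N(mu, sigma^2)."""
    mu = np.asarray(mu, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    improvement = mu - f_best - xi
    with np.errstate(divide="ignore", invalid="ignore"):
        z = improvement / sigma
        ei = improvement * norm.cdf(z) + sigma * norm.pdf(z)
    # With zero predictive uncertainty, EI reduces to the (non-negative) improvement itself.
    return np.where(sigma > 0.0, ei, np.maximum(improvement, 0.0))


def upper_confidence_bound(mu, sigma, kappa=2.0):
    """mu + kappa * sigma; a larger kappa favours exploration."""
    return np.asarray(mu, dtype=float) + kappa * np.asarray(sigma, dtype=float)


# Hypothetical surrogate predictions for five library candidates.
mu = np.array([0.20, 0.90, 0.50, 0.85, -0.10])
sigma = np.array([0.60, 0.05, 0.30, 0.20, 0.90])
f_best = 0.80                                          # best value measured so far
next_by_ei = int(np.argmax(expected_improvement(mu, sigma, f_best)))
next_by_ucb = int(np.argmax(upper_confidence_bound(mu, sigma)))
```

On these numbers, EI picks candidate 3, whose mean is close to the incumbent and whose uncertainty is moderate. UCB with κ = 2 picks candidate 4, the most uncertain one. This illustrates how κ shifts the balance toward exploration.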

Key Experimental Results and Data

Recent applications demonstrate the profound efficiency gains of AL/BO over traditional methods. The following table summarizes quantitative findings from key studies (data synthesized from recent literature searches, 2023-2024).

Table 1: Performance Comparison in Chemical Discovery Campaigns

| Target System & Objective | Search Space Size | Traditional Method (Experiments to Target) | AL/BO Method (Experiments to Target) | Efficiency Gain | Key Reference Analogue |
|---|---|---|---|---|---|
| OLED Emitter Discovery (High-efficiency blue emitter) | ~100,000 virtual molecules | Grid-based screening: ~200 | ~24 | ~8.3x | Li et al., Adv. Mater. 2023 |
| Heterogeneous Catalyst (CO2 to methanol conversion yield) | ~3,000 bimetallic alloys | One-at-a-time DOE: ~150 | ~40 | ~3.75x | Wang et al., Science 2023 |
| Antibacterial Peptide (MIC < 2 µg/mL) | ~10^6 sequence space | Rational design & screening: ~500 | ~75 | ~6.7x | Gupta et al., Cell Rep. Phys. Sci. 2024 |
| Organic Photovoltaics (Power Conversion Efficiency > 15%) | Polymer donor-acceptor pairs: ~2,000 | High-throughput screening: ~300 | ~50 | 6.0x | Zhang et al., JACS Au 2024 |
| Metal-Organic Framework (CO2 adsorption capacity) | ~5,000 possible structures | Computational preselection + validation: ~100 | ~20 | 5.0x | Frost et al., Digit. Discov. 2023 |

Table 2: Impact of Molecular Representation on AL/BO Performance

| Representation Method | Model Type | Acquisition Function | Avg. Experiments to Find Top 1% Performer (Mean ± Std Dev over 5 runs) | Suitability |
|---|---|---|---|---|
| ECFP4 Fingerprints | Gaussian Process | Expected Improvement | 58 ± 12 | Small molecule drug-like libraries |
| Graph Neural Network (GNN) | Bayesian Neural Network | Thompson Sampling | 42 ± 8 | Complex molecules with strong structure-property relationships |
| Molecular String (SELFIES) | VAE + GP | Upper Confidence Bound | 65 ± 15 | De novo molecular generation and optimization |
| 3D Pharmacophore Fingerprint | Random Forest + GP | Probability of Improvement | 71 ± 18 | Binding affinity optimization where shape matters |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Computational Tools for Implementing AL/BO

| Item/Category | Specific Example(s) | Function in AL/BO Workflow |
|---|---|---|
| Chemical Space Library | Enamine REAL, ZINC, PubChem, in-house virtual library | Provides the vast pool of candidate molecules (the search space) for the acquisition function to query. |
| Featurization Software | RDKit, Mordred, DeepChem | Converts molecular structures (SMILES, SDF) into numerical feature vectors (fingerprints, descriptors). |
| Surrogate Modeling Library | GPyTorch, scikit-learn, GPflow | Builds and trains the probabilistic model (Gaussian Process) that predicts property and uncertainty. |
| Optimization Engine | BoTorch, Ax, scikit-optimize | Implements acquisition functions (EI, UCB) and manages the sequential optimization loop. |
| Automation Interface | Chemspeed, Opentrons, custom robotic platforms | Enables physical synthesis and characterization of the proposed candidate, closing the experimental loop. |
| Data Management Platform | ELN (Electronic Lab Notebook), Citrination, Materials Platform | Tracks all experimental inputs and outcomes, ensuring data integrity for model retraining. |

Advanced Considerations & Future Directions

- Handling Multiple Objectives: Extending BO to multi-objective optimization (MOBO) using acquisition functions like Expected Hypervolume Improvement (EHVI) to balance trade-offs (e.g., activity vs. solubility).

- Incorporating Failed Experiments: Integrating data from unsuccessful synthesis attempts or invalid measurements to improve model accuracy in under-sampled regions.

- Transfer Learning: Leveraging data from related chemical campaigns to warm-start the surrogate model, reducing the amount of initial random exploration required.

- Hybrid Human-AI Strategies: Designing interfaces where expert knowledge can bias the search space or override proposals, creating a collaborative discovery process.
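
Multi-objective BO rests on the hypervolume indicator: the region of objective space dominated by the current Pareto front, measured relative to a reference point. The sketch below, in stdlib Python, assumes two objectives that are both maximized (e.g., scaled activity and solubility) and independent Gaussian predictions for each candidate. It estimates EHVI by Monte Carlo sampling, which is simpler but noisier than the analytic or batched EHVI implementations in libraries such as BoTorch; all data values are hypothetical.

```python
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, float]  # (objective 1, objective 2), both maximised


def pareto_front(points: Sequence[Point]) -> List[Point]:
    """Keep points not dominated by any other (>= in both, > in at least one)."""
    front = []
    for p in points:
        dominated = any(
            q[0] >= p[0] and q[1] >= p[1] and (q[0] > p[0] or q[1] > p[1])
            for q in points
        )
        if not dominated:
            front.append(p)
    return front


def hypervolume_2d(points: Sequence[Point], ref: Point) -> float:
    """Area dominated by the Pareto front and bounded below by the reference point."""
    # Sorting by objective 1 (descending) makes objective 2 ascending along the front.
    front = sorted(set(pareto_front(points)), key=lambda p: p[0], reverse=True)
    area, prev_y = 0.0, ref[1]
    for x, y in front:
        if x <= ref[0] or y <= prev_y:
            continue
        area += (x - ref[0]) * (y - prev_y)
        prev_y = y
    return area


def mc_ehvi(observed: Sequence[Point], mean: Point, std: Point, ref: Point,
            n_samples: int = 2000, seed: int = 0) -> float:
    """Monte Carlo expected hypervolume improvement for one candidate,
    assuming independent Gaussian predictions for the two objectives."""
    rng = random.Random(seed)
    base = hypervolume_2d(observed, ref)
    total = 0.0
    for _ in range(n_samples):
        sample = (rng.gauss(mean[0], std[0]), rng.gauss(mean[1], std[1]))
        total += hypervolume_2d(list(observed) + [sample], ref) - base
    return total / n_samples


# Hypothetical (activity, solubility) results, both scaled to 0-1.
observed = [(0.9, 0.2), (0.6, 0.6), (0.2, 0.9), (0.5, 0.5)]
print(pareto_front(observed))                              # [(0.9, 0.2), (0.6, 0.6), (0.2, 0.9)]
print(round(hypervolume_2d(observed, ref=(0.0, 0.0)), 3))  # 0.48
print(round(mc_ehvi(observed, mean=(0.7, 0.7), std=(0.1, 0.1), ref=(0.0, 0.0)), 3))
```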

Active Learning guided by Bayesian Optimization is an established and increasingly influential framework for tackling the fundamental challenge of high-dimensional chemical space exploration. By iteratively selecting the most informative experiment to perform next, it replaces brute-force screening with a principled, data-efficient search. As automated synthesis and characterization become more robust, AL/BO is becoming the core decision-making component of self-driving laboratories, with the potential to substantially accelerate the discovery of next-generation functional materials, catalysts, and therapeutics.

Fragment-Based and Scaffold-Hopping Approaches for Focused Exploration

The vastness of chemical space, estimated to encompass more than 10⁶⁰ drug-like molecules, presents a fundamental challenge in modern drug discovery. Traditional high-throughput screening (HTS) can sample only a minute fraction of this high-dimensional landscape; it is resource-intensive, plagued by high false-positive rates, and often yields leads with poor physicochemical properties. Within the broader thesis of this whitepaper, this section details how Fragment-Based Drug Discovery (FBDD) and scaffold-hopping methodologies provide a focused, knowledge-driven strategy for efficient exploration. Both approaches prioritize quality over quantity: FBDD samples small, simple chemical entities (fragments), while scaffold hopping systematically evolves core structures, so that effort is concentrated on the most promising regions of chemical space.

Core Methodologies and Experimental Protocols

Fragment-Based Drug Discovery (FBDD)

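FBDD begins by screening low-molecular-weight fragments (typically 100-250 Da) against a biological target. Fragments bind weakly (µM-mM affinity) but with high ligand efficiency (LE), i.e., a high binding free energy per heavy atom. Because the number of possible molecules grows combinatorially with heavy-atom count, a library of a few thousand fragments samples fragment-sized chemical space far more thoroughly than an HTS collection of millions samples drug-sized space, and each fragment hit reports on a minimal set of interactions within the binding-site pharmacophore.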
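
Ligand efficiency normalizes binding free energy by size: LE = −ΔG / N_heavy, with ΔG = RT ln Kd. The short Python sketch below assumes Kd in mol/L, a temperature of 298.15 K, and energies in kcal/mol. The two compounds are hypothetical, chosen to show why a millimolar fragment can be as efficient a starting point as a nanomolar lead.

```python
import math

R_KCAL = 1.987204e-3  # molar gas constant in kcal/(mol*K)


def binding_free_energy(kd_molar: float, temp_k: float = 298.15) -> float:
    """Standard binding free energy dG = RT ln(Kd / 1 M), kcal/mol (negative for binders)."""
    return R_KCAL * temp_k * math.log(kd_molar)


def ligand_efficiency(kd_molar: float, heavy_atoms: int, temp_k: float = 298.15) -> float:
    """LE = -dG / N_heavy, in kcal/mol per heavy atom."""
    return -binding_free_energy(kd_molar, temp_k) / heavy_atoms


# Hypothetical compounds: a weak fragment and a potent, larger lead.
fragment_le = ligand_efficiency(1e-3, heavy_atoms=12)  # Kd = 1 mM
lead_le = ligand_efficiency(10e-9, heavy_atoms=36)     # Kd = 10 nM
print(f"fragment LE = {fragment_le:.2f}, lead LE = {lead_le:.2f}")
# prints: fragment LE = 0.34, lead LE = 0.30
```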

Key Experimental Protocols:

Fragment Library Design & Curation:

- Purpose: To assemble a diverse, high-quality set of 500-3000 fragments.

- Protocol: Compounds are selected using guidelines such as the "Rule of 3" (MW ≤ 300 Da, cLogP ≤ 3, H-bond donors ≤ 3, H-bond acceptors ≤ 3, rotatable bonds ≤ 3). Synthetic tractability, 3D diversity, and the absence of reactive or pan-assay interference (PAINS) motifs are also enforced, and solubility is verified at screening concentrations to ensure suitability for biophysical assays.
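
The Rule-of-3 criteria reduce to simple threshold checks once descriptors are available. This Python sketch assumes molecular weight, cLogP, donor and acceptor counts, and rotatable bonds have already been calculated (for instance with RDKit, not shown). It does not cover the PAINS, reactivity, 3D-diversity, or solubility checks, and the two fragments and their descriptor values are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FragmentDescriptors:
    """Precomputed descriptors for one fragment (calculated beforehand; values here are hypothetical)."""
    name: str
    mol_weight: float  # Da
    clogp: float
    h_bond_donors: int
    h_bond_acceptors: int
    rotatable_bonds: int


def ro3_violations(d: FragmentDescriptors) -> List[str]:
    """Return the Rule-of-3 criteria (thresholds as stated in the text) that a fragment fails."""
    checks = [
        ("MW > 300", d.mol_weight > 300.0),
        ("cLogP > 3", d.clogp > 3.0),
        ("HBD > 3", d.h_bond_donors > 3),
        ("HBA > 3", d.h_bond_acceptors > 3),
        ("rotatable bonds > 3", d.rotatable_bonds > 3),
    ]
    return [label for label, failed in checks if failed]


library = [
    FragmentDescriptors("frag-A", 151.2, 1.1, 1, 2, 1),
    FragmentDescriptors("frag-B", 342.4, 3.8, 2, 5, 6),
]
for frag in library:
    print(frag.name, ro3_violations(frag) or "passes Rule of 3")
```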

Primary Screening via Biophysical Methods:

- Surface Plasmon Resonance (SPR):

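- Protocol: The target protein is immobilized on a sensor chip and fragment solutions are flowed over the surface. Binding changes the refractive index near the sensor surface, reported in response units (RU). Single-cycle kinetics or multi-concentration analysis is used to identify binders and, where binding is slow enough to resolve, to obtain the association and dissociation rate constants (ka, kd). Because fragments often associate and dissociate too quickly for reliable kinetic fits, affinity is frequently estimated from steady-state (equilibrium) responses instead.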

- Differential Scanning Fluorimetry (Thermal Shift, DSF):

- Protocol: The target protein is mixed with a fluorescent dye (e.g., SYPRO Orange) whose fluorescence increases when it binds hydrophobic patches exposed during thermal unfolding. Fragments are added in 96- or 384-well plates, and the plate is heated through a temperature gradient (e.g., 25-95°C) while fluorescence is monitored. Stabilizing binders raise the protein's melting temperature (Tm), giving a positive thermal shift (ΔTm) relative to the apo protein.

- Ligand-Observed NMR (e.g., Saturation Transfer Difference - STD):

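- Protocol: Protein resonances are selectively saturated at a frequency where the ligand does not absorb. The saturation spreads through the protein by spin diffusion and is transferred to bound ligands through intermolecular dipole-dipole (NOE) interactions; when a ligand dissociates, it carries this saturation into the free ligand pool, attenuating its signals. Subtracting the on-resonance spectrum from an off-resonance reference spectrum leaves only the signals of binding fragments, and their relative intensities indicate which ligand protons contact the protein (epitope mapping).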
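
Two of these primary-screen readouts reduce to short calculations. The Python sketch below assumes a 1:1 binding model for SPR and fits Kd and Rmax to steady-state responses, R_eq = Rmax · C / (Kd + C), by a log-spaced grid search with a closed-form least-squares Rmax at each trial Kd. It also estimates a DSF melting temperature as the point of steepest fluorescence increase. Both are simplifications of what instrument software does (full nonlinear fitting, Boltzmann-model fits), and all data are synthetic.

```python
import math
from typing import List, Optional, Sequence, Tuple


def fit_steady_state_affinity(concs: Sequence[float], responses: Sequence[float],
                              kd_grid: Optional[List[float]] = None) -> Tuple[float, float]:
    """Fit R_eq = Rmax * C / (Kd + C) (1:1 model) to steady-state SPR responses.

    For each trial Kd on a log-spaced grid, the least-squares Rmax has a closed
    form; the (Kd, Rmax) pair with the smallest squared error is returned.
    """
    if kd_grid is None:
        kd_grid = [10 ** (e / 100) for e in range(-700, -99)]  # 100 nM to 100 mM
    best_sse, best_kd, best_rmax = float("inf"), 0.0, 0.0
    for kd_eq in kd_grid:
        occupancy = [c / (kd_eq + c) for c in concs]  # fractional occupancy at each C
        rmax = sum(r * f for r, f in zip(responses, occupancy)) / sum(f * f for f in occupancy)
        sse = sum((r - rmax * f) ** 2 for r, f in zip(responses, occupancy))
        if sse < best_sse:
            best_sse, best_kd, best_rmax = sse, kd_eq, rmax
    return best_kd, best_rmax


def melting_temperature(temps: Sequence[float], signal: Sequence[float]) -> float:
    """Tm estimated as the temperature of steepest fluorescence increase
    (maximum of the central-difference first derivative)."""
    best_t, best_slope = temps[1], float("-inf")
    for i in range(1, len(temps) - 1):
        slope = (signal[i + 1] - signal[i - 1]) / (temps[i + 1] - temps[i - 1])
        if slope > best_slope:
            best_t, best_slope = temps[i], slope
    return best_t


def boltzmann_curve(temps: Sequence[float], tm: float, width: float = 2.0) -> List[float]:
    """Synthetic two-state unfolding curve, used only to exercise the code."""
    return [1.0 / (1.0 + math.exp((tm - t) / width)) for t in temps]


# Synthetic SPR data generated with Kd = 200 uM and Rmax = 50 RU.
concs = [25e-6, 50e-6, 100e-6, 200e-6, 400e-6, 800e-6]
responses = [50.0 * c / (200e-6 + c) for c in concs]
kd_fit, rmax_fit = fit_steady_state_affinity(concs, responses)
print(f"Kd ~ {kd_fit * 1e6:.0f} uM, Rmax ~ {rmax_fit:.1f} RU")

# Synthetic DSF curves: apo Tm = 52.0 C, fragment-bound Tm = 54.5 C.
temps = [25.0 + 0.5 * i for i in range(141)]  # 25-95 C in 0.5 C steps
delta_tm = (melting_temperature(temps, boltzmann_curve(temps, 54.5))
            - melting_temperature(temps, boltzmann_curve(temps, 52.0)))
print(f"dTm = {delta_tm:+.1f} C")  # prints: dTm = +2.5 C
```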

Hit Validation & Characterization (Orthogonal Assays):

- Isothermal Titration Calorimetry (ITC):

- Protocol: The fragment solution is titrated stepwise into a cell containing the target protein, and the heat released or absorbed by each injection is measured directly. Fitting the binding isotherm yields the affinity (Kd), enthalpy (ΔH), and stoichiometry (n); the free energy follows from ΔG = RT ln Kd and the entropy from ΔG = ΔH − TΔS, giving the full thermodynamic profile.

- X-ray Crystallography or Cryo-EM:

- Protocol: The target protein is co-crystallized with the fragment, fragments are soaked into pre-formed crystals, or (for cryo-EM) the complex is vitrified in the presence of the fragment. The resulting structure is solved to determine the precise binding mode and interactions, guiding fragment optimization.
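
The quantities from an ITC fit are linked by ΔG = RT ln Kd = ΔH − TΔS, and whether Kd can be fitted reliably depends on the Wiseman parameter c = n[P]/Kd. The Python sketch below assumes Kd in mol/L, ΔH in kcal/mol, and 298.15 K; the fragment values are hypothetical. A commonly cited guideline is that c should fall roughly between 1 and 1000 for a well-defined sigmoidal isotherm. Weak fragments at practical protein concentrations often give c below 1, which is consistent with the nM-µM range listed for ITC in the screening-technique comparison (Table 1) and is why low-c fragment titrations are usually fitted with n held fixed.

```python
import math

R_KCAL = 1.987204e-3  # molar gas constant in kcal/(mol*K)


def itc_thermodynamics(kd_molar: float, dh_kcal: float, temp_k: float = 298.15) -> dict:
    """Derive dG and the entropic terms from a fitted Kd (M) and dH (kcal/mol)."""
    dg = R_KCAL * temp_k * math.log(kd_molar)  # dG = RT ln Kd
    minus_t_ds = dg - dh_kcal                  # rearranged from dG = dH - T*dS
    return {
        "dG": dg,
        "dH": dh_kcal,
        "-TdS": minus_t_ds,
        "dS_cal_per_mol_K": -minus_t_ds / temp_k * 1000.0,
    }


def wiseman_c(cell_protein_molar: float, kd_molar: float, n: float = 1.0) -> float:
    """Wiseman c-value, c = n [P] / Kd, which governs the shape of the isotherm."""
    return n * cell_protein_molar / kd_molar


# Hypothetical fragment: Kd = 100 uM, fitted dH = -5.0 kcal/mol, 50 uM protein in the cell.
profile = itc_thermodynamics(100e-6, -5.0)
print({key: round(value, 2) for key, value in profile.items()})
print("c =", wiseman_c(50e-6, 100e-6))
```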

Table 1: Comparative Analysis of Primary Fragment Screening Techniques

| Method | Throughput | Sample Consumption | Information Gained | Typical Kd Range | Key Advantage |
|---|---|---|---|---|---|
| SPR | Medium-High | Low (µg protein) | Affinity (Kd), kinetics (ka, kd) | µM - mM | Label-free, real-time kinetics |
| DSF | Very High | Low (µg protein per well) | Thermal stabilization (ΔTm) | µM - mM | Low-cost, high-throughput primary screen |
| STD-NMR | Low-Medium | High (mg protein) | Binding confirmation, epitope mapping | µM - mM | Detects weak binders, provides binding site info |
| ITC | Low | High (mg protein) | Full thermodynamics (Kd, ΔH, ΔS, n) | nM - µM | Gold standard for label-free binding quantification |

Scaffold-Hopping

Scaffold-hopping is a computational and medicinal chemistry strategy for identifying novel chemotypes (scaffolds) that maintain or improve the biological activity of a known lead while changing its core structure. It is used to escape liabilities tied to the original core, such as a crowded intellectual-property position, toxicity, or poor ADMET properties.

Key Experimental/Computational Protocols:

Pharmacophore-Based Hopping:

- Protocol: A pharmacophore model is derived from the active conformation of the lead compound, defining essential features (H-bond donor/acceptor, hydrophobic centroids, aromatic rings, charged groups). This model is used as a 3D query to screen virtual compound libraries for novel scaffolds that match the feature arrangement.
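
Because inter-feature distances do not change under rotation or translation, a candidate conformer can be tested against a pharmacophore query without first aligning it. This Python sketch assumes feature types and 3D coordinates have already been perceived for one candidate conformer (feature perception and conformer generation are not shown) and uses an illustrative tolerance of 1.0 Å. It enumerates feature assignments exhaustively, which is practical only for the handful of features a typical query contains; production tools add conformer enumeration, excluded volumes, and alignment-based scoring.

```python
import itertools
import math
from typing import Optional, Sequence, Tuple

Feature = Tuple[str, Tuple[float, float, float]]  # (feature type, xyz in angstroms)


def match_pharmacophore(query: Sequence[Feature], candidate: Sequence[Feature],
                        tol: float = 1.0) -> Optional[Tuple[Feature, ...]]:
    """Return candidate features whose types and pairwise distances match the
    query within `tol` angstroms, or None if no such assignment exists."""
    k = len(query)
    for combo in itertools.permutations(candidate, k):
        if any(q[0] != c[0] for q, c in zip(query, combo)):
            continue  # feature types must correspond one-to-one
        if all(
            abs(math.dist(query[i][1], query[j][1]) - math.dist(combo[i][1], combo[j][1])) <= tol
            for i in range(k)
            for j in range(i + 1, k)
        ):
            return combo
    return None


# Hypothetical three-point query derived from a lead's bioactive conformation.
query = [("donor", (0.0, 0.0, 0.0)), ("acceptor", (3.0, 0.0, 0.0)), ("aromatic", (0.0, 4.0, 0.0))]
# Hypothetical candidate: same geometry rotated and translated, plus an extra feature.
hit = [("hydrophobe", (20.0, 20.0, 20.0)), ("aromatic", (6.0, 10.0, 10.0)),
       ("acceptor", (10.0, 13.0, 10.0)), ("donor", (10.0, 10.0, 10.0))]
# Hypothetical candidate whose acceptor sits too far from the donor.
miss = [("donor", (0.0, 0.0, 0.0)), ("acceptor", (6.0, 0.0, 0.0)), ("aromatic", (0.0, 4.0, 0.0))]
print(match_pharmacophore(query, hit) is not None)   # True
print(match_pharmacophore(query, miss) is not None)  # False
```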

Shape-Based Similarity Searching:

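- Protocol: The 3D shape of the lead molecule, optionally combined with its chemical-feature or electrostatic profile, is used as a query. Tools like ROCS (Rapid Overlay of Chemical Structures) align database molecules to the query and score them by Gaussian volume overlap plus "color" (chemical-feature) overlap; the sum of the shape and color Tanimoto scores is reported as the TanimotoCombo score. Electrostatic similarity is typically assessed in a follow-up step (e.g., with OpenEye's EON). The aim is to identify scaffolds with different connectivity that nonetheless occupy a similar shape.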
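
Shape Tanimoto scores of the kind ROCS reports are built from Gaussian overlap volumes: with O_AB the overlap of molecules A and B, Tanimoto_shape = O_AB / (O_AA + O_BB − O_AB). The Python sketch below assumes pre-aligned heavy-atom coordinates, represents every atom by a single Gaussian with an assumed radius of 1.7 Å and an assumed amplitude p = 2√2, and keeps only first-order (pairwise) overlap terms. It neither optimizes the alignment nor computes the color term, so it illustrates the score rather than reimplementing ROCS; the coordinates are hypothetical.

```python
import math
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]

P = 2.0 * math.sqrt(2.0)  # Gaussian amplitude (assumption)
RADIUS = 1.7              # one van der Waals radius for every heavy atom (assumption)


def _alpha(radius: float) -> float:
    """Gaussian exponent chosen so each atomic Gaussian integrates to the hard-sphere volume."""
    return math.pi * (3.0 * P / (4.0 * math.pi * radius ** 3)) ** (2.0 / 3.0)


def overlap_volume(a: Sequence[Coord], b: Sequence[Coord], radius: float = RADIUS) -> float:
    """First-order overlap volume between two pre-aligned sets of atomic Gaussians."""
    alpha = _alpha(radius)
    total = 0.0
    for ra in a:
        for rb in b:
            d2 = math.dist(ra, rb) ** 2
            # Integral of the product of two equal-width Gaussians over all space.
            total += P * P * (math.pi / (2.0 * alpha)) ** 1.5 * math.exp(-alpha * d2 / 2.0)
    return total


def shape_tanimoto(a: Sequence[Coord], b: Sequence[Coord]) -> float:
    """Shape Tanimoto O_AB / (O_AA + O_BB - O_AB) for a fixed alignment."""
    o_ab = overlap_volume(a, b)
    return o_ab / (overlap_volume(a, a) + overlap_volume(b, b) - o_ab)


# Hypothetical three-atom fragment and two rigidly translated copies.
lead = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (0.75, 1.3, 0.0)]
shifted = [(x + 1.0, y, z) for x, y, z in lead]
far = [(x + 20.0, y, z) for x, y, z in lead]
print(round(shape_tanimoto(lead, lead), 3))  # 1.0
print(round(shape_tanimoto(lead, shifted), 3))
print(round(shape_tanimoto(lead, far), 3))   # 0.0
```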

Structure-Based Replacement (Bioisosterism):

- Protocol: Using a protein-ligand co-crystal structure, a specific portion (scaffold or substituent) of the lead is identified for replacement. Databases of bioisosteric fragments (e.g., matched molecular pairs, ring replacements) are searched to propose alternatives that maintain key interactions. This is often guided by free-energy perturbation (FEP) calculations to predict affinity changes.
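
FEP results are usually reported as relative binding free energies, ΔΔG = ΔG_new − ΔG_ref, which map onto fold changes in affinity through Kd,new / Kd,ref = exp(ΔΔG / RT). A Python sketch at an assumed 298.15 K follows; the replacement names and predicted values are hypothetical. The final line shows that an error of 1 kcal/mol in ΔΔG, a magnitude often quoted for FEP accuracy, corresponds to roughly a five-fold uncertainty in Kd.

```python
import math

R_KCAL = 1.987204e-3  # molar gas constant in kcal/(mol*K)


def kd_ratio_from_ddg(ddg_kcal: float, temp_k: float = 298.15) -> float:
    """Kd(new) / Kd(reference) implied by ddG = dG(new) - dG(reference)."""
    return math.exp(ddg_kcal / (R_KCAL * temp_k))


# Hypothetical FEP predictions (kcal/mol) for three proposed core replacements.
predictions = {"replacement-1": -1.2, "replacement-2": 0.4, "replacement-3": -2.1}
for name, ddg in sorted(predictions.items(), key=lambda kv: kv[1]):
    print(f"{name}: ddG = {ddg:+.1f} kcal/mol -> Kd x {kd_ratio_from_ddg(ddg):.2f}")

# A 1 kcal/mol error in ddG spans roughly a 5-fold range in Kd at 298 K.
print(round(kd_ratio_from_ddg(1.0), 1))  # 5.4
```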

Machine Learning-Guided Exploration:

- Protocol: A validated QSAR or machine learning model (e.g., Random Forest, Graph Neural Network) trained on known actives/inactives is used to predict the activity of virtual compounds generated through scaffold morphing or generative models. The model prioritizes novel, predicted-active scaffolds for synthesis.
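
The prioritization step can be illustrated with a deliberately simple model. This Python sketch swaps in a similarity-weighted k-nearest-neighbour regressor on binary fingerprints (represented as sets of on-bit indices, as might be obtained from ECFP4) in place of the Random Forest or GNN named above, because it needs no external libraries. The training activities, bit sets, and scaffold names are hypothetical. The maximum Tanimoto similarity to the training set is returned as a crude applicability-domain check, since predictions for novel scaffolds far from all training compounds are the least reliable.

```python
from typing import FrozenSet, Sequence, Tuple

Fingerprint = FrozenSet[int]  # indices of "on" bits


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """Tanimoto (Jaccard) similarity between two binary fingerprints."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def knn_predict(query: Fingerprint, training: Sequence[Tuple[Fingerprint, float]],
                k: int = 3) -> Tuple[float, float]:
    """Similarity-weighted mean activity of the k most similar training compounds,
    plus the highest similarity as an applicability-domain signal."""
    neighbours = sorted(((tanimoto(query, fp), y) for fp, y in training), reverse=True)[:k]
    weight = sum(s for s, _ in neighbours)
    if weight == 0.0:
        return float("nan"), 0.0  # no structural overlap with any training compound
    return sum(s * y for s, y in neighbours) / weight, neighbours[0][0]


# Hypothetical training set: (fingerprint, pIC50).
training = [
    (frozenset({1, 2, 3, 4}), 7.0), (frozenset({1, 2, 3, 5}), 6.5),
    (frozenset({10, 11, 12}), 4.0), (frozenset({10, 11, 13}), 4.2),
]
# Hypothetical virtual scaffolds proposed by scaffold morphing.
candidates = {
    "scaffold-X": frozenset({1, 2, 3}),
    "scaffold-Y": frozenset({10, 11, 20}),
    "scaffold-Z": frozenset({30, 31}),
}
for name, fp in candidates.items():
    pred, max_sim = knn_predict(fp, training, k=2)
    print(f"{name}: predicted pIC50 {pred:.2f}, max similarity {max_sim:.2f}")
```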

Table 2: Key Scaffold-Hopping Techniques and Outputs

| Technique | Core Principle | Primary Input | Key Output | Major Challenge |
|---|---|---|---|---|
| Pharmacophore Search | Matching 3D functional features | Lead structure, bioactive conformation | New scaffolds fitting the pharmacophore | Conformational flexibility; model bias |
| Shape Similarity | Maximizing volume/field overlap | 3D shape/electrostatics of lead | Shape-analogues with different connectivity | May retrieve chemically unrealistic structures |
| Structure-Based Bioisostere Replacement | Interaction conservation | Protein-ligand complex structure | Specific fragment replacements | Requires high-resolution structural data |
| AI/ML-Based Generation | Learning activity patterns from data | Dataset of actives/inactives | Novel, predicted-active scaffolds | "Black box" nature; synthetic accessibility |

Visualization of Core Workflows

Diagram Title: Fragment-Based Drug Discovery Core Workflow

Diagram Title: Scaffold-Hopping Iterative Design Cycle

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials and Reagents for FBDD & Scaffold-Hopping

| Item / Category | Function / Purpose | Example / Specification |
|---|---|---|
| Fragment Libraries | Pre-curated, diverse chemical starting points for screening. | Commercial libraries (e.g., Life Chemicals, Enamine) adhering to the "Rule of 3". Typically supplied as DMSO stock solutions. |
| Stabilized Target Proteins | High-purity, functional protein for biophysical assays and crystallography. | Recombinant proteins with purity >95% (SDS-PAGE), confirmed activity, in stable storage buffers (often with low glycerol). |
| SPR Sensor Chips | Surface for immobilization of target protein for kinetic analysis. | CM5 (carboxymethylated dextran) chips for amine coupling; NTA chips for His-tagged proteins. |
| Thermal Shift Dyes | Fluorescent reporters for protein thermal denaturation in DSF. | SYPRO Orange, an environment-sensitive dye that fluoresces on binding exposed hydrophobic regions; alternative protein-specific dyes for challenging targets. |
| NMR Isotope-Labeled Proteins | Proteins labeled with ¹⁵N and/or ¹³C for protein-observed NMR (HSQC). | Uniformly or selectively labeled proteins expressed in minimal media with isotope sources. |
| Crystallography Plates & Screens | Tools for obtaining protein-ligand co-crystals. | 96-well sitting-drop or LCP plates; commercial crystallization screens (e.g., Morpheus, JCSG+). |
| Bioisostere Databases | Virtual catalogs of functional group replacements for scaffold design. | Databases mined for replacements (e.g., ChEMBL, Reaxys) or commercial tools (e.g., MOE Bioisosteres, Cresset Spark). |
| Virtual Compound Libraries | Large, searchable databases of purchasable or synthesizable compounds. | ZINC20, Enamine REAL, Mcule. Used for virtual screening in scaffold-hopping. |
| Structure Modeling Software | For visualizing, analyzing, and designing compounds and complexes. | Schrödinger Suite, MOE, PyMOL, Cresset Spark/Forge. |

The exploration of chemical space for drug discovery is a problem of immense scale: the space is estimated to contain more than 10⁶⁰ drug-like organic molecules. This vastness makes brute-force screening computationally intractable and biologically naive. The core thesis of modern exploration is that this space must be constrained by biological relevance. Multi-omics data (genomics, transcriptomics, proteomics, metabolomics) provide the contextual framework needed to prioritize regions of chemical space that interact with disease-perturbed biological systems. This guide details the technical integration of these data layers to rationally constrain chemical space.

Foundational Multi-Omics Data Types and Their Informational Value

Each omics layer provides a unique, orthogonal constraint on chemical space.

Table 1: Multi-Omics Data Types and Their Constraining Power on Chemical Space

| Omics Layer | Primary Measurement | Constraint on Chemical Space | Typical Resolution |
|---|---|---|---|
| Genomics | DNA sequence variation (SNVs, CNVs) | Identifies disease-associated genes and pathways as high-priority targets. | Static (per individual) |
| Transcriptomics | RNA expression levels (bulk or single-cell) | Reveals differentially expressed pathways; suggests target activation/repression states. | Dynamic (context-dependent) |
| Proteomics | Protein abundance, post-translational modifications (PTMs), interactors | Defines actual functional units and disease nodes; direct binding partners for chemicals. | Dynamic, functional |
| Metabolomics | Endogenous small-molecule abundance | Maps disease-related biochemical fluxes; identifies substrates/enzymes as targets. | Highly dynamic |
| Epigenomics | Chromatin accessibility, histone marks | Illuminates regulatory mechanisms driving transcriptomic changes. | Stable yet plastic |

Core Integration Methodologies: A Technical Guide