Navigating the Vast Combinatorics: Advanced Strategies for Molecular Optimization in Discrete Chemical Spaces


Abstract

Molecular optimization in discrete chemical spaces represents a fundamental challenge in computational drug discovery and materials science. This article provides a comprehensive analysis of the latest computational strategies designed to efficiently navigate the vast combinatorial complexity of molecular structures. We explore foundational concepts of chemical space, detail innovative methodologies including multi-objective evolutionary algorithms, Bayesian optimization in latent spaces, and fragment-based discrete diffusion models. The content addresses critical troubleshooting aspects such as data scarcity and objective balancing, and provides a rigorous validation framework comparing state-of-the-art approaches. Designed for researchers, scientists, and drug development professionals, this review synthesizes cutting-edge advances that are reshaping how we explore and optimize molecular structures for therapeutic applications.

The Challenge and Conundrum of Discrete Molecular Search Spaces

The concept of discrete chemical space provides a foundational framework for understanding and navigating the vast universe of possible molecules. Defined as the set of all possible molecules described by a multi-dimensional space representing their structural and functional properties, chemical space represents a critical concept in modern drug discovery and materials science. [1] This application note explores the theoretical underpinnings, computational methodologies, and practical protocols for defining and exploring discrete chemical spaces, with particular emphasis on optimization techniques including discrete, gradient, and hybrid approaches. [2] Framed within broader research on molecular optimization, this work provides researchers with structured protocols and visualization tools to advance compound design and discovery in discrete chemical spaces.

Fundamental Concepts and Definitions

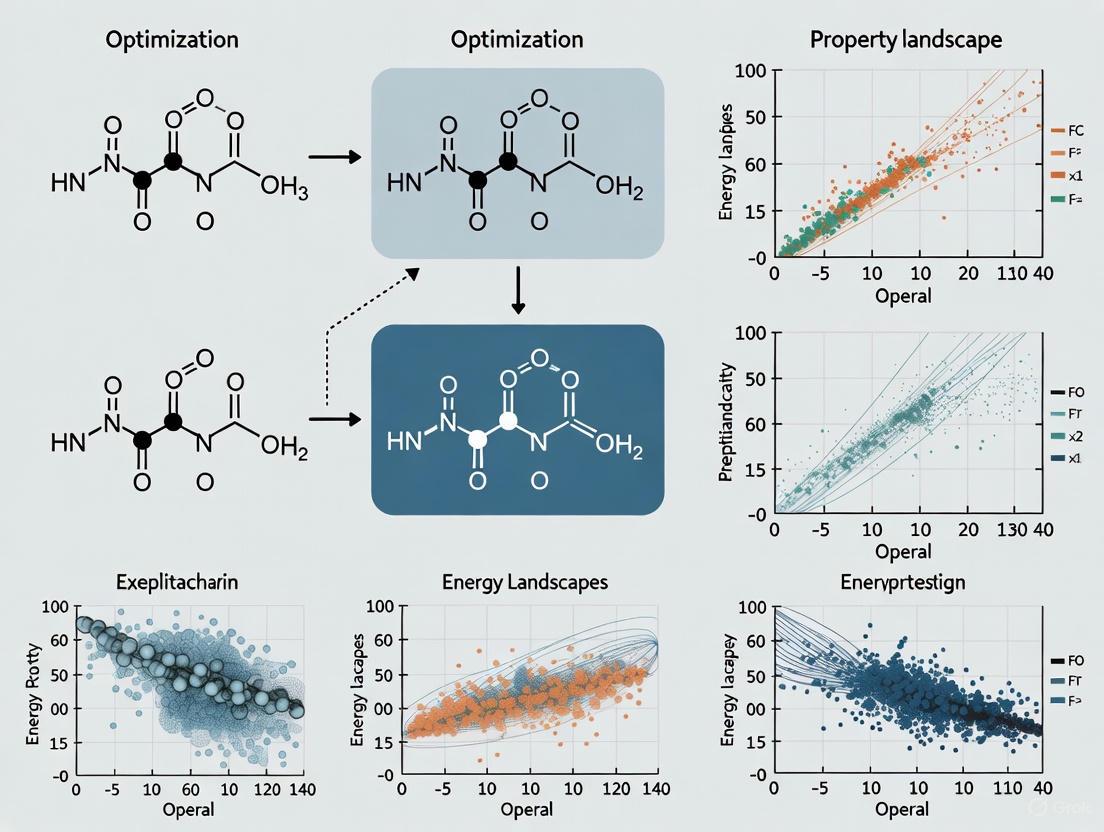

Chemical space represents a multidimensional descriptor space where molecules are positioned based on their structural and physicochemical properties. [1] As depicted in Figure 1, this space can be conceptualized as an M-dimensional Cartesian system where each of the n molecules is described by a numerical vector D containing M descriptors that encode molecular characteristics. [1]

Table 1: Established Definitions of Chemical Space

| Author(s) | Chemical Space Definition | Reference |

|---|---|---|

| Dobson | "All possible small organic molecules, including those present in biological systems" | [1] |

| Reymond et al. | "Ensemble of all known and possible molecules described by their chemical properties" | [1] |

| Varnek and Baskin | "The ensemble of graphs or descriptor vectors forms a chemical space in which some relations between the objects must be defined" | [1] |

| von Lilienfeld et al. | "The combinatorial set of all compounds that can be isolated and constructed from possible combinations and configurations of N_I atoms and N_e electrons in real space" | [1] |

The "chemical multiverse" concept has emerged as a powerful framework, emphasizing that a comprehensive understanding requires analyzing compound datasets through multiple chemical spaces, each defined by different chemical representations. [1] This approach contrasts with single-representation views and enables more robust diversity analysis, virtual screening, and structure-activity relationship studies.

The Challenge of Multi-Objective Optimization in Chemical Space

Identifying novel therapeutics that balance requirements for potency, safety, metabolic stability, and pharmacodynamic profile presents a major challenge in discrete chemical space exploration. [3] This challenge is further exacerbated by recent interest in designing compounds with properties that enable them to engage multiple targets, requiring researchers to balance different, sometimes competing chemical features. [3] Multi-objective optimization methods have shown particular promise in addressing these challenges by helping design novel small molecules optimized for conflicting pharmacological attributes using generative models. [3]

Computational Methodologies for Chemical Space Exploration

Optimization Approaches for Discrete Chemical Spaces

Several computational approaches have been developed for exploring and optimizing molecules within discrete chemical spaces. A comparative analysis of these methods reveals distinct advantages and applications for each approach.

Table 2: Performance Comparison of Chemical Space Optimization Methods

| Optimization Method | Key Characteristics | Molecular Optimization Performance | Cost Effectiveness |

|---|---|---|---|

| Discrete Branch and Bound | Robust strategy for inverse chemical design involving diverse chemical structures | Effective for moderate-sized molecular optimization | More cost-effective than genetic algorithms for moderate-sized problems [2] |

| Gradient Methods | Utilizes gradient information for optimization | Improved performance when combined with discrete methods | Variable depending on implementation |

| Hybrid Discrete-Gradient | Linear combination of atomic potentials significantly improves gradient method performance | Better than dead-end elimination; competes with branch and bound and genetic algorithms [2] | Highly efficient for diverse chemical structures |

| Genetic Algorithms | Evolutionary approach to molecular optimization | Effective but may be outperformed by other methods | Less cost-effective than branch and bound for moderate-sized problems [2] |

Chemical Space Visualization and Navigation

Visual representation of chemical space has become increasingly important for effective navigation and analysis. Dimensionality reduction techniques such as t-distributed stochastic neighbor embedding (t-SNE), principal component analysis (PCA), self-organized maps (SOMs), and generative topographic mapping (GTM) enable researchers to visualize high-dimensional chemical data in two or three dimensions. [1] These visualization approaches implement human-in-the-loop principles, allowing researchers to interactively explore chemical maps and identify promising regions for further investigation. [4]

Experimental Protocols for Chemical Space Exploration

Protocol: Hybrid Discrete-Gradient Optimization for Molecular Property Maximization

Purpose: To identify molecules with optimized properties within discrete chemical spaces using a hybrid discrete-gradient optimization approach.

Background: This protocol implements the hybrid method that significantly improves upon pure gradient methods by incorporating discrete optimization strategies, making it competitive with branch and bound and genetic algorithms for molecular optimization. [2]

Materials and Reagents:

- Computational chemistry software environment (e.g., Python with RDKit)

- Tight-binding model for property calculation (e.g., first electronic hyperpolarizability)

- Molecular descriptor calculation package

- Optimization algorithm library

Procedure:

Problem Formulation:

- Define the target molecular property for optimization (e.g., static first electronic hyperpolarizability)

- Establish constraints on molecular size and composition

- Select appropriate molecular descriptors for chemical space definition

Initial Space Exploration:

- Generate initial diverse set of molecular structures

- Calculate descriptor vectors for all initial molecules

- Map initial molecules into predefined chemical space

Hybrid Optimization Cycle:

- Apply discrete branch and bound methods to identify promising regions

- Utilize gradient methods for local optimization within promising regions

- Implement linear combination of atomic potentials to enhance gradient performance

- Iterate until convergence criteria met (e.g., no significant improvement after 10 cycles)

Validation and Analysis:

- Synthesize top-performing molecules identified through optimization

- Experimentally validate target properties

- Compare experimental results with predictions

Troubleshooting:

- For slow convergence: Adjust balance between discrete and gradient components

- For limited diversity: Increase initial population size or introduce mutation operators

- For computational bottlenecks: Implement hierarchical screening approaches
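The hybrid cycle described in this protocol can be sketched on a toy problem. The snippet below is an illustration only, not the method of [2]: `toy_property`, `POTENTIALS`, `N_SITES`, the learning rate, and the tolerance are all hypothetical stand-ins. Each site value is relaxed to a softmax-weighted linear combination of candidate potentials and improved by gradient ascent. The result is then rounded to a discrete choice and refined with single-site swaps, a simple local search that stands in here for branch and bound. The patience counter mirrors the "no significant improvement after 10 cycles" criterion.

```python
import math

POTENTIALS = [-1.0, 0.0, 1.0]  # hypothetical candidate group "potentials"
N_SITES = 4                    # hypothetical number of substitution sites


def toy_property(values):
    """Toy stand-in for an expensive property calculation."""
    linear = sum(0.5 * v for v in values)
    coupling = sum(values[i] * values[i + 1] for i in range(len(values) - 1))
    return linear - coupling


def softmax(row):
    peak = max(row)
    exps = [math.exp(t - peak) for t in row]
    total = sum(exps)
    return [e / total for e in exps]


def relaxed_values(theta):
    """Each site value is a weighted linear combination of the candidates."""
    return [sum(w * p for w, p in zip(softmax(row), POTENTIALS)) for row in theta]


def gradient_phase(theta, lr=0.5, patience=10, tol=1e-6, max_steps=500):
    """Gradient ascent on the relaxed weights with patience-based stopping."""
    best, stale = toy_property(relaxed_values(theta)), 0
    for _ in range(max_steps):
        values = relaxed_values(theta)
        for i, row in enumerate(theta):
            left = values[i - 1] if i > 0 else 0.0
            right = values[i + 1] if i < len(values) - 1 else 0.0
            dprop_dvalue = 0.5 - (left + right)
            weights = softmax(row)
            for k, p in enumerate(POTENTIALS):
                # Chain rule through the softmax: dv/dtheta_k = w_k * (p_k - v)
                row[k] += lr * dprop_dvalue * weights[k] * (p - values[i])
        score = toy_property(relaxed_values(theta))
        stale = 0 if score > best + tol else stale + 1
        best = max(best, score)
        if stale >= patience:
            break
    return theta


def round_to_discrete(theta):
    """Pick the candidate with the largest weight at every site."""
    return [POTENTIALS[max(range(len(row)), key=row.__getitem__)] for row in theta]


def discrete_refine(values):
    """Accept single-site swaps until none improves the property."""
    values = list(values)
    improved = True
    while improved:
        improved = False
        for i in range(len(values)):
            for p in POTENTIALS:
                trial = values[:i] + [p] + values[i + 1:]
                if toy_property(trial) > toy_property(values) + 1e-12:
                    values, improved = trial, True
    return values


theta = [[0.0] * len(POTENTIALS) for _ in range(N_SITES)]
rounded = round_to_discrete(gradient_phase(theta))
refined = discrete_refine(rounded)
print("rounded:", rounded, toy_property(rounded))
print("refined:", refined, toy_property(refined))
```

The discrete step can only keep or raise the score of the rounded solution, which is the practical reason for pairing the two phases: the relaxed gradient search may stall at a symmetric stationary point that a single discrete swap escapes.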

Protocol: Multi-Objective Optimization for Conflicting Property Balancing

Purpose: To generate de novo compounds predicted to have a good balance between desired, sometimes conflicting pharmacological attributes.

Background: This protocol addresses the critical challenge of balancing multiple, often competing molecular properties, which is essential for designing compounds that engage multiple targets while maintaining favorable ADMET profiles. [3]

Procedure:

Objective Definition:

- Identify primary optimization objectives (e.g., potency against multiple targets, metabolic stability)

- Define relative weights for each objective based on project priorities

- Establish acceptability thresholds for each property

Generative Model Setup:

- Train generative model on relevant chemical space (even with limited public data)

- Implement multi-objective optimization algorithms (e.g., NSGA-II, MOEA/D)

- Define chemical feasibility constraints

Optimization Execution:

- Generate candidate molecules using generative model

- Evaluate candidates against all defined objectives

- Select Pareto-optimal candidates for further exploration

- Iterate until satisfactory balance achieved

Compound Selection and Validation:

- Select compounds from Pareto front representing different property trade-offs

- Synthesize and experimentally validate selected compounds

- Refine models based on experimental results
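The Pareto selection step in this protocol can be illustrated with a short non-dominated filter. This is a minimal sketch rather than NSGA-II or MOEA/D: the molecule names, the predicted scores, and the `THRESHOLDS` values are hypothetical, and both objectives are assumed to be maximised.

```python
def dominates(a, b):
    """True if a is at least as good as b everywhere and strictly better once."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(candidates):
    """Return the names of candidates that no other candidate dominates."""
    return [
        name
        for name, scores in candidates.items()
        if not any(
            dominates(other, scores)
            for other_name, other in candidates.items()
            if other_name != name
        )
    ]


# Hypothetical predicted scores: (potency, metabolic stability), higher is better.
candidates = {
    "mol_A": (0.90, 0.20),
    "mol_B": (0.70, 0.60),
    "mol_C": (0.40, 0.85),
    "mol_D": (0.60, 0.50),  # dominated by mol_B
    "mol_E": (0.30, 0.30),  # dominated by mol_B, mol_C, and mol_D
}

front = pareto_front(candidates)

# Hypothetical minimum acceptable value for each objective.
THRESHOLDS = (0.5, 0.1)
acceptable = [
    name for name in front
    if all(score >= limit for score, limit in zip(candidates[name], THRESHOLDS))
]

print(front)       # ['mol_A', 'mol_B', 'mol_C']
print(acceptable)  # ['mol_A', 'mol_B']
```

Keeping the threshold check separate from the dominance check lets the front preserve every trade-off, while project priorities decide which of those trade-offs move forward to synthesis.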

Visualization Framework for Discrete Chemical Space

Chemical Space Exploration Workflow

The integrated workflow for discrete chemical space exploration and optimization combines the key methodologies discussed in this application note: problem formulation, initial space exploration, iterative optimization, and validation and analysis.

Chemical Multiverse Representation

The chemical multiverse concept emphasizes that comprehensive chemical space analysis requires multiple descriptor sets and representations rather than a single one.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Discrete Chemical Space Exploration

| Research Tool Category | Specific Examples | Function in Chemical Space Exploration |

|---|---|---|

| Chemical Space Enumeration Tools | Chemical Universe Database (GDB) | Generates unbiased insight into entire chemical space through molecular enumeration using simple chemical stability and synthetic feasibility criteria [1] |

| Molecular Descriptor Packages | RDKit, Dragon, MOE | Calculates comprehensive molecular descriptors for positioning compounds in multi-dimensional chemical space [1] |

| Dimensionality Reduction Algorithms | t-SNE, PCA, GTM, SOM | Enables 2D/3D visualization of high-dimensional chemical space for navigation and analysis [1] |

| Multi-Objective Optimization Platforms | NSGA-II, MOEA/D, custom implementations | Balances conflicting molecular properties during optimization in discrete chemical spaces [3] |

| Generative Molecular Models | Deep graph networks, generative AI | Creates novel molecular structures optimized for target properties within defined chemical spaces [5] |

| Target Engagement Assays | CETSA (Cellular Thermal Shift Assay) | Provides quantitative, system-level validation of drug-target engagement in intact cells and tissues [5] |

| Tight-Binding Model Hamiltonians | Custom computational models | Enables efficient property calculation (e.g., first electronic hyperpolarizability) for molecular optimization [2] |

The exploration of discrete chemical spaces through sophisticated computational methodologies represents a transformative approach to molecular design and optimization. By implementing the protocols and frameworks outlined in this application note, researchers can more effectively navigate the vast chemical multiverse to identify compounds with optimized property profiles. The integration of discrete, gradient, and hybrid optimization methods with advanced visualization techniques and multi-objective optimization frameworks provides a comprehensive toolkit for addressing the complex challenges of modern drug discovery and materials science. As the field continues to evolve, approaches that leverage multiple chemical representations and balance conflicting design objectives will become increasingly essential for successful molecular optimization in discrete chemical spaces.

The concept of "chemical space" represents the universe of all possible molecules and compounds, a domain of almost incomprehensible vastness central to cheminformatics and drug discovery [6]. For drug-like molecules adhering to typical constraints such as a molecular weight under 500 Da and composed primarily of carbon, hydrogen, oxygen, nitrogen, and sulfur, this space is estimated to encompass approximately 10^60 to 10^63 viable compounds [7] [6] [8]. This number dramatically exceeds the number of atoms in our solar system, presenting a fundamental "immensity problem" for molecular discovery [8]. Navigating this vastness to find molecules with specific, desirable properties represents one of the most significant challenges in modern computational chemistry and drug development. This document outlines practical protocols and application notes for researchers tackling molecular optimization within these discrete, combinatorial chemical spaces, providing a framework for efficient exploration and identification of candidate compounds.

Quantitative Landscape of Chemical Space

Table 1: Quantifying the Scale and Composition of Chemical Space

| Category | Estimated Scale / Number | Description & Constraints | Data Source |

|---|---|---|---|

| Total Drug-Like Space | 10^60 - 10^63 molecules | Small molecules; MW < 500; elements C, H, O, N, S; max ~30 atoms [7] [6] [8] | Theoretical Estimation |

| Known Drug Space (KDS) | ~1,834 molecules | Defined by molecular descriptors of marketed drugs [7] [6] | ChEMBL34 (Approved Drugs) |

| Public Bioactive Compounds | ~2.4 million molecules | Molecules with recorded biological activities [6] | ChEMBL Database |

| CAS Registered Compounds | 219 million molecules | Assigned CAS Registry Numbers (as of Oct 2024) [6] | Chemical Abstracts Service |

| Commercial Virtual Libraries | 10^10 - 36 billion molecules | Examples: Enamine's REAL Space (36B), WuXi's GalaXi (8B) [7] | Commercial Databases |
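The scale gap in the table can be made concrete with a few lines of arithmetic. The sketch below uses the lower bound of the drug-like space estimate and the 36 billion figure quoted for the largest library. The evaluation rate of one million molecules per second is a purely hypothetical assumption chosen for illustration.

```python
drug_like_lower = 10 ** 60        # lower-bound estimate of drug-like space
largest_library = 36 * 10 ** 9    # 36 billion molecules

# Fraction of the estimated space covered by the largest listed library.
coverage = largest_library / drug_like_lower

# Hypothetical assumption: one million molecules evaluated per second.
rate_per_second = 10 ** 6
seconds_per_year = 365.25 * 24 * 3600
years_to_enumerate = drug_like_lower / rate_per_second / seconds_per_year

print(f"coverage: {coverage:.1e}")
print(f"years to enumerate: {years_to_enumerate:.1e}")
```

Even under this generous rate, exhaustive enumeration is out of reach by dozens of orders of magnitude, which is why the protocols that follow rely on guided search.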

Experimental Protocols for Chemical Space Exploration

Protocol 1: Multi-Level Bayesian Optimization with Hierarchical Coarse-Graining

This protocol uses a multi-resolution active learning strategy to efficiently navigate chemical space for free energy-based molecular optimization, such as enhancing phase separation in phospholipid bilayers [9].

Materials & Software:

- Molecular Dynamics (MD) Simulation Software: e.g., GROMACS, OpenMM.

- Coarse-Graining (CG) Software: Tools for building transferable coarse-grained models.

- Computational Environment: High-performance computing (HPC) cluster.

Procedure:

- Chemical Space Compression: Transform discrete molecular spaces into smooth latent representations using multiple coarse-graining resolutions. This creates a hierarchical view of chemical space, balancing combinatorial complexity and chemical detail.

- Low-Resolution Exploration: Perform initial Bayesian optimization within the lower-resolution, coarse-grained latent spaces. Use molecular dynamics simulations to calculate target free energies of the coarse-grained compounds. This phase prioritizes broad exploration.

- High-Resolution Exploitation: Leverage neighborhood information and promising regions identified during the low-resolution exploration. Guide subsequent Bayesian optimization in higher-resolution, more chemically detailed spaces. This phase focuses on exploitation and refinement.

- Iterative Optimization & Validation: Iterate between resolution levels in a funnel-like strategy. The final output is a set of suggested optimal compounds with insight into relevant neighborhoods in chemical space.

Protocol 2: RNN-Based De Novo Molecular Generation for Kinase Inhibitors

This protocol employs Recurrent Neural Networks (RNNs) to generate novel molecules, specifically applied to discovering new kinase inhibitors (e.g., for Pim1 and CDK4) by exploring spaces near and far from known active compounds [10].

Materials & Software:

- Programming Environment: Python with TensorFlow or PyTorch.

- Cheminformatics Toolkit: RDKit.

- Data Sources: ChEMBL database, DrugBank.

Procedure:

Data Curation & SMILES Preparation:

- Download known active molecules (e.g., inhibitors with IC50 values) from ChEMBL.

- Preprocess molecules using RDKit: sanitize, remove chirality, and remove duplicates.

- Generate both canonical and randomized SMILES sequences from the curated datasets to enhance model robustness.

Model Training & Transfer Learning:

- Architecture: Build an RNN model, typically using two stacked Long Short-Term Memory (LSTM) layers with dropout for regularization.

- Tokenization: Divide SMILES sequences into a defined token set (atoms, bonds, symbols). Use one-hot encoding for input and target tokens.

- Pre-training (Optional): Pre-train the model on a large, general molecular dataset (e.g., DrugBank) to learn fundamental chemical grammar.

- Fine-tuning: Fine-tune the pre-trained model on the target dataset (e.g., specific kinase inhibitors) using transfer learning.

Molecular Generation & Sampling:

- Sample new molecules from the trained model by feeding a starting token and allowing the model to predict subsequent tokens, thereby generating new SMILES strings.

- Use the generated molecules for virtual screening against the target of interest.
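The tokenization and one-hot encoding steps of this protocol can be sketched with the standard library alone. The token pattern, the `<start>` and `<end>` markers, and the four-molecule corpus below are assumptions made for illustration. A production tokenizer would need a fuller token set, and the RDKit curation steps are not shown.

```python
import re

# Assumed token pattern: bracket atoms, two-letter halogens, two-digit
# ring-closure labels (%NN), then any single character.
SMILES_TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|.")


def tokenize(smiles):
    """Split a SMILES string into atom, bond, branch, and ring tokens."""
    return SMILES_TOKEN.findall(smiles)


def build_vocab(smiles_list):
    """Map every token seen in the corpus, plus start/end markers, to an index."""
    tokens = sorted({tok for s in smiles_list for tok in tokenize(s)})
    return {tok: idx for idx, tok in enumerate(["<start>", "<end>"] + tokens)}


def one_hot(smiles, vocab):
    """Encode a SMILES string as a list of one-hot rows, one per token."""
    sequence = ["<start>"] + tokenize(smiles) + ["<end>"]
    return [
        [1 if vocab[tok] == i else 0 for i in range(len(vocab))]
        for tok in sequence
    ]


corpus = ["CCO", "CC(=O)C", "c1ccccc1Cl", "[Au]"]  # illustrative corpus
vocab = build_vocab(corpus)
encoded = one_hot("CC(=O)C", vocab)

# Next-token training pairs: the target is the input shifted by one position.
inputs, targets = encoded[:-1], encoded[1:]

print(tokenize("c1ccccc1Cl"))
print(len(vocab), len(encoded))
```

Matching `Cl` and `Br` before the single-character fallback matters: otherwise chlorine would be split into a carbon token followed by an unknown `l`.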

Table 2: Research Reagent Solutions for Computational Exploration

| Reagent / Resource | Function / Application | Example / Source |

|---|---|---|

| ChEMBL Database | Manually curated database of bioactive molecules with drug-like properties; used for training and validation. | https://www.ebi.ac.uk/chembl/ [10] [7] |

| RDKit | Open-source cheminformatics toolkit; used for molecule sanitization, descriptor calculation, and fingerprint generation. | RDKit [10] [7] |

| Chemical Fingerprints | High-dimensional vector representations of molecular structure for chemical space analysis and similarity search. | Extended Connectivity Fingerprints (ECFPs), PubChem Fingerprints [7] |

| TensorFlow / PyTorch | Open-source machine learning libraries for building and training generative models like RNNs and GNNs. | Google / Meta |

| UMAP | Dimensionality reduction technique for projecting high-dimensional chemical data into 2D/3D for visualization. | Uniform Manifold Approximation and Projection [7] |

Protocol 3: Hybrid Quantum-Classical Generative Modeling

This protocol describes a hybrid approach combining a Quantum Circuit Born Machine (QCBM) with a classical Long Short-Term Memory (LSTM) model to explore chemical space for historically undruggable targets like the KRAS protein [11].

Materials & Software:

- Quantum Computing Simulator/Hardware: Access to a quantum computing resource.

- Classical ML Framework: Python with a deep learning library.

- Scoring Platform: e.g., Chemistry42 or other ML-based scoring platforms.

Procedure:

- Quantum Fragment Generation: Employ the QCBM to create initial, high-quality molecular fragments that serve as the starting tokens for the classical model.

- Classical Sequence Expansion: Feed the quantum-generated fragments into a classical LSTM model. The LSTM builds upon these fragments, progressively adding atoms and bonds to complete the molecules.

- Hybrid Model Training: Train the QCBM using a custom-designed local filter for the specific target during initial epochs. Subsequently, switch the training to optimize based on a reward score provided by a computational platform (e.g., Chemistry42), which evaluates the generated molecules for desired properties.

- Candidate Optimization: The hybrid model is optimized to produce ligand candidates with enhanced potential for binding the challenging target.

High-Throughput Screening (HTS) represents a foundational methodology in early drug discovery, enabling the rapid experimental assessment of thousands to millions of chemical compounds for biological activity [12] [13]. This approach operates within discrete chemical spaces, testing defined libraries of synthesized or acquired compounds to identify initial "hit" molecules that can then be optimized into therapeutic leads [14]. By leveraging automation, miniaturization, and robotics, HTS has addressed critical bottlenecks in traditional drug discovery, allowing researchers to efficiently explore vast chemical territories that would be impractical to investigate through manual methods [13].

The global HTS market, valued at US$28.8 billion in 2024 and projected to reach US$50.2 billion by 2029, reflects its entrenched position in pharmaceutical research and development [15]. Within the context of molecular optimization research, HTS serves as a primary source of initial structure-activity relationship (SAR) data, providing the experimental foundation upon which iterative molecular optimization campaigns are built [14] [12]. This article examines the principles, protocols, and persistent limitations of traditional HTS approaches, with particular focus on their role in informing molecular optimization in discrete chemical spaces.

Core Principles and Methodologies

Fundamental Concepts and Workflow

High-Throughput Screening is defined by its ability to rapidly test large compound libraries using automated, miniaturized assays. A typical HTS workflow can process between 10,000 and 100,000 compounds per day, while Ultra-High Throughput Screening (uHTS) extends this capacity to millions of daily tests [15] [12]. The methodology fundamentally relies on several integrated components: compound library preparation, assay development, automation and robotics, detection technologies, and data analysis systems [15] [12].

The screening process follows a defined sequence, moving from compound library preparation and assay development through automated screening and detection to data analysis.

Key HTS Formats and Their Applications

HTS approaches are broadly categorized into several formats, each with distinct applications and implementation requirements. The table below summarizes the primary HTS types and their characteristics:

Table 1: Classification of High-Throughput Screening Approaches

| Screening Type | Throughput Capacity | Primary Applications | Key Features |

|---|---|---|---|

| Biochemical Screening | 10,000-100,000 compounds/day | Enzyme activity, receptor binding, molecular interactions [15] [12] | Focuses on molecular targets; uses purified proteins [12] |

| Cell-Based Screening | 10,000-100,000 compounds/day | Cellular pathway impact, toxicity assessment, therapeutic potential [15] [12] | Uses live cells; provides physiological context [15] |

| Virtual Screening (In Silico) | Varies significantly | Compound activity prediction, library prioritization [15] | Computational approach; reduces experimental workload [15] |

| Ultra-HTS (uHTS) | >300,000 compounds/day [12] | Massive library screening, primary discovery campaigns [15] [12] | Maximum throughput; requires advanced robotics [12] |

Application Notes: Established HTS Protocols

Representative Protocol: Isomerase Activity Screening

The following detailed protocol for screening L-rhamnose isomerase (L-RI) activity demonstrates a robust, statistically validated HTS methodology applicable to enzyme targets. This protocol exemplifies the key considerations in HTS assay development and validation [16].

Table 2: Key Research Reagent Solutions for Isomerase HTS Protocol

| Reagent/Material | Function/Description | Optimization Notes |

|---|---|---|

| L-Rhamnose Isomerase (L-RI) | Catalyzes isomerization of D-allulose to D-allose [16] | Target enzyme; source: Geobacillus sp. [16] |

| D-allulose Substrate | Enzyme substrate for reaction quantification [16] | Consumption measured via Seliwanoff's reaction [16] |

| Seliwanoff's Reagent | Colorimetric detection of ketose depletion [16] | Enables activity measurement through absorbance changes [16] |

| 96-/384-Well Microplates | Miniaturized assay format for HTS [12] [16] | Optimized for automation and reduced reagent consumption [16] |

| Positive/Negative Controls | Assay validation and quality control [16] | Essential for statistical assessment and hit confirmation [16] |

Experimental Workflow and Optimization

The isomerase screening protocol follows a carefully optimized sequence to ensure reliability and statistical robustness in the HTS context:

Critical Protocol Steps:

Initial Single-Tube Optimization: Reaction conditions were systematically refined in single-tube format to establish optimal parameters while minimizing interfering factors [16].

HPLC Validation: The optimized protocol was validated against high-performance liquid chromatography (HPLC) measurements, confirming its accuracy in quantifying D-allulose depletion [16].

Miniaturization to 96-Well Format: The validated protocol was adapted to 96-well plates with additional optimizations for protein expression and removal of denatured enzymes to reduce assay interference [16].

Interference Reduction: Specific methods including cell harvest, supernatant removal, and filtration were implemented to minimize background interference in the detection system [16].

Quality Control Assessment: The established HTS protocol was evaluated using statistical metrics, yielding a Z'-factor of 0.449, a signal window (SW) of 5.288, and an assay variability ratio (AVR) of 0.551, all of which met the acceptance criteria for high-quality HTS assays [16].
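The Z'-factor used in this quality control step can be computed directly from control-well readings. The sketch below uses the standard definition, Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|, and treats the assay variability ratio as its complement, which is consistent with the reported pair of values (0.449 + 0.551 = 1). The absorbance readings are hypothetical and are not data from [16]. The signal window is left out of this sketch.

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z'-factor: 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    separation = abs(mean(positive) - mean(negative))
    return 1 - 3 * (stdev(positive) + stdev(negative)) / separation


# Hypothetical absorbance readings from control wells.
positive_controls = [0.82, 0.85, 0.80, 0.84, 0.83, 0.81]
negative_controls = [0.21, 0.24, 0.20, 0.23, 0.22, 0.19]

z = z_prime(positive_controls, negative_controls)
avr = 1 - z  # assay variability ratio as the complement of Z'

print(f"Z' = {z:.3f}, AVR = {avr:.3f}")
```

A Z' close to 1 indicates well-separated controls with little scatter, while values near or below 0 mean that the control distributions overlap too much for reliable hit calling.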

HTS Assay Development and Validation Framework

Successful HTS implementation requires rigorous assay development and validation against the parameters listed below.

Key Validation Parameters:

- Robustness and Reproducibility: HTS assays must demonstrate consistent performance under automated screening conditions [12].

- Sensitivity: Detection systems must reliably identify active compounds at relevant physiological concentrations [12].

- Miniaturization Compatibility: Assay formats must maintain performance when scaled down to 384- or 1536-well formats to reduce reagent consumption and costs [12].

- Statistical Quality Control: Assays require validation according to predefined statistical concepts, with methods like Z'-factor calculation essential for quality assessment [12] [16].

Limitations and Challenges in Traditional HTS

Technical and Operational Limitations

Despite its transformative impact on drug discovery, traditional HTS faces several persistent limitations that affect its efficiency and output quality:

Table 3: Key Limitations of Traditional High-Throughput Screening

| Limitation Category | Specific Challenges | Impact on Drug Discovery |

|---|---|---|

| Financial and Resource Barriers | High initial investment in robotics and automation systems [15] [12] | Significant capital expenditure required before screening campaigns |

| Technical Complexity | Requirement for specialized technical expertise for operation and data interpretation [15] [12] | Limited accessibility for organizations with restricted resources |

| Data Quality Issues | Generation of false positives/negatives requiring additional validation [15] [12] | Resource-intensive confirmation processes and potential missed opportunities |

| Assay Interference | Chemical reactivity, metal impurities, autofluorescence, colloidal aggregation [12] | Inaccurate activity assessment and wasted resources on artifact-based hits |

| Compound Library Limitations | Inflated physicochemical properties (high lipophilicity, molecular weight) [12] | Poor aqueous solubility and lowered clinical exposure in humans |

| Physiological Relevance | Limited representation of complex disease biology in reductionist assays [17] | Poor translation of in vitro hits to in vivo efficacy |

Strategic Limitations in Molecular Optimization Context

Within the framework of molecular optimization research in discrete chemical spaces, HTS presents several strategic constraints:

Chemical Space Exploration Boundaries: HTS is inherently limited to testing existing compound libraries, restricting exploration to predefined chemical territories [14]. This contrasts with de novo molecular generation approaches that can explore broader chemical spaces.

High Attrition Rates: Traditional HTS often identifies compounds with favorable in vitro activity but poor drug-like properties, contributing to high attrition rates in clinical development [12].

Limited Structure-Activity Relationship (SAR) Information: While HTS provides initial activity data, it often generates limited structural insight for optimization campaigns, requiring extensive follow-up studies [14] [13].

Incompatibility with Complex Biology: Target-based HTS approaches may oversimplify complex disease biology, potentially missing compounds that act through multi-target mechanisms or complex phenotypic responses [17].

Traditional High-Throughput Screening remains a cornerstone methodology in early drug discovery, providing an unparalleled capacity for empirical testing of compound libraries in discrete chemical spaces. The established protocols, statistical validation frameworks, and miniaturized technologies enable systematic exploration of chemical-biological interactions at scale. However, significant limitations persist—including financial barriers, data quality issues, and constraints in physiological relevance—that impact the efficiency and output quality of HTS campaigns.

Within molecular optimization research, HTS serves as a critical source of initial structure-activity data, yet its value is maximized when integrated with complementary approaches. The emergence of artificial intelligence-driven screening, advanced phenotypic assays, and structure-based design methods represents an evolution beyond traditional HTS paradigms. These integrated approaches address many inherent limitations while leveraging the core strength of HTS: the ability to generate robust experimental data at scale for discrete chemical entities. As drug discovery continues to evolve, traditional HTS methodologies will likely maintain their role as a foundational element in molecular optimization, albeit with increasing integration of computational and targeted approaches to overcome their historical constraints.

Molecular representation is a cornerstone of computational chemistry and drug design, acting as the critical bridge between chemical structures and their biological, chemical, or physical properties [18]. It involves translating molecules into mathematical or computational formats that algorithms can process to model, analyze, and predict molecular behavior [18]. In the context of molecular optimization in discrete chemical spaces, the choice of representation directly influences the efficiency and success of exploring the vast, nearly infinite chemical space to identify compounds with desired biological properties [18]. The rapid evolution of these representation methods has significantly advanced the drug discovery process, with AI-driven strategies now facilitating exploration of broader chemical spaces and accelerating key tasks like scaffold hopping [18].

The transition from traditional, rule-based representations to modern, data-driven approaches marks a paradigm shift in computational chemistry and materials science [19]. This shift enables data-driven predictions of molecular properties, inverse design of compounds, and accelerated discovery of chemical and crystalline materials [19]. This review provides a comprehensive examination of three fundamental representation schemes: string-based notations (SMILES), graph-based representations, and fragment-based encoding, detailing their theoretical foundations, practical applications, and implementation protocols for molecular optimization research.

Fundamental Representation Schemes

SMILES (Simplified Molecular-Input Line-Entry System)

SMILES is a specification in the form of a line notation for describing the structure of chemical species using short ASCII strings [20]. Developed by David Weininger in the 1980s and later extended as OpenSMILES by the open-source chemistry community, SMILES provides a compact and efficient way to encode chemical structures that is both human-readable and machine-processable [20] [18].

The SMILES syntax follows specific grammatical rules:

- Atoms are represented by standard chemical element symbols, typically enclosed in brackets for atoms outside the organic subset (B, C, N, O, P, S, F, Cl, Br, I) or when specifying formal charge, hydrogen count, or stereochemistry [20]. For example, gold is represented as `[Au]`, while ethanol is `CCO` [20] [21].

- Bonds are indicated with specific symbols: single bonds (`-` or omitted), double bonds (`=`), triple bonds (`#`), and aromatic bonds (`:`) [20].

- Branches are specified using parentheses, as in acetone: `CC(=O)C` [21].

- Ring closures are indicated by matching numerals after atoms, with cyclohexane written as `C1CCCCC1` [20].

- Stereochemistry is specified using `@` and `@@` symbols, requiring explicit mention of all four substituents around chiral centers [21].
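
The grammar rules above can be made concrete with a small tokenizer. The following is a minimal sketch in plain Python, not a full SMILES parser: the regular expression and the `tokenize` helper are illustrative assumptions, and they cover only the token classes named in the list (bracket atoms, organic-subset atoms, bonds, branches, ring closures).

```python
import re

# Token classes follow the rules listed above. This pattern is an
# illustrative assumption, not the grammar of any particular toolkit.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+\]"      # bracket atom, e.g. [Au] or [C@@H]
    r"|Br|Cl"           # two-letter organic-subset atoms
    r"|[BCNOPSFI]"      # one-letter organic-subset atoms
    r"|[bcnops]"        # aromatic atoms (lowercase)
    r"|%\d{2}|\d"       # ring-closure labels
    r"|[-=#:/\\]"       # bond symbols
    r"|[().])"          # branch parentheses and disconnection dot
)


def tokenize(smiles: str) -> list[str]:
    """Split a SMILES string into tokens; reject unrecognized characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    # findall silently skips unmatched characters, so compare lengths back.
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in {smiles!r}")
    return tokens


acetone_tokens = tokenize("CC(=O)C")
cyclohexane_tokens = tokenize("C1CCCCC1")
```

Token-level splitting of this kind is also the usual first step before feeding SMILES to a sequence model, since it keeps multi-character atoms such as `Cl` or `[Au]` intact.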

A key advantage of SMILES is its ability to generate canonical forms through algorithms that produce unique string representations for each molecular structure, enabling efficient database indexing and similarity searching [20]. However, a single molecule can have multiple valid SMILES strings (e.g., CCO, OCC, and C(O)C for ethanol), necessitating canonicalization for consistent comparison [20].
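
To illustrate why canonicalization matters, the sketch below maps the three ethanol strings to one key. It is a toy under strong assumptions: it handles only acyclic molecules written with single-letter atoms and branches, and it uses a sorted tree encoding rather than the canonicalization algorithm of any real toolkit. The function names are hypothetical.

```python
def parse_acyclic(smiles: str):
    """Parse a SMILES restricted to single-letter atoms and branches."""
    atoms, bonds, stack, prev = [], [], [], None
    for ch in smiles:
        if ch == "(":
            stack.append(prev)          # remember the branch point
        elif ch == ")":
            prev = stack.pop()          # return to the branch point
        elif ch.isalpha():
            atoms.append(ch)
            if prev is not None:
                bonds.append((prev, len(atoms) - 1))
            prev = len(atoms) - 1
        else:
            raise ValueError(f"unsupported symbol {ch!r}")
    return atoms, bonds


def canonical_key(smiles: str) -> str:
    """Order-independent key: smallest sorted encoding over all root atoms."""
    atoms, bonds = parse_acyclic(smiles)
    nbrs = {i: [] for i in range(len(atoms))}
    for a, b in bonds:
        nbrs[a].append(b)
        nbrs[b].append(a)

    def encode(node, parent):
        # Sorting the child encodings removes dependence on input order.
        children = sorted(encode(n, node) for n in nbrs[node] if n != parent)
        return atoms[node] + "".join(f"({c})" for c in children)

    return min(encode(root, None) for root in nbrs)


ethanol_keys = {canonical_key(s) for s in ("CCO", "OCC", "C(O)C")}
```

Here `ethanol_keys` contains a single element, whereas dimethyl ether (`COC`) yields a different key. Production canonicalizers must additionally handle rings, bond orders, charges, aromaticity, and stereochemistry.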

Graph-Based Representations

Graph-based representations conceptualize molecules as mathematical graphs where atoms correspond to nodes and bonds to edges [19]. This approach explicitly encodes structural relationships and connectivity patterns that are implicit in SMILES strings, providing a more natural abstraction of molecular structure [19].

Graph representations form the backbone for Graph Neural Networks (GNNs), which have demonstrated significant advancements in learning meaningful molecular features directly from raw molecular graphs [19]. These representations are particularly valuable for predicting molecular activity and synthesizing new compounds because they capture structural and dynamic properties that are challenging to represent in linear notations [19].
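
As a rough illustration of how a GNN consumes such a graph, the sketch below stores a molecule as atoms and bonds and applies one sum-aggregation message-passing step. The `MolGraph` class, the one-hot features, and the update rule are simplifying assumptions; real GNN layers add learned weights and nonlinearities.

```python
from dataclasses import dataclass, field


@dataclass
class MolGraph:
    atoms: list[str]                      # node labels (element symbols)
    bonds: list[tuple[int, int]]          # undirected edges as index pairs
    neighbors: dict[int, list[int]] = field(init=False)

    def __post_init__(self):
        self.neighbors = {i: [] for i in range(len(self.atoms))}
        for a, b in self.bonds:
            self.neighbors[a].append(b)
            self.neighbors[b].append(a)


def message_pass(graph: MolGraph, features: list[list[float]]) -> list[list[float]]:
    """One step of h_v' = h_v + sum of neighbor features (no learned weights)."""
    updated = []
    for v, h in enumerate(features):
        agg = list(h)
        for u in graph.neighbors[v]:
            agg = [x + y for x, y in zip(agg, features[u])]
        updated.append(agg)
    return updated


# Ethanol (CCO) with hydrogens left implicit; one-hot features over [C, O].
ethanol = MolGraph(atoms=["C", "C", "O"], bonds=[(0, 1), (1, 2)])
one_hot = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
hidden = message_pass(ethanol, one_hot)
```

After one step each atom's vector counts the carbons and oxygens in its immediate neighborhood (itself included), which is the sense in which connectivity becomes explicit in the learned features.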

Recent extensions include 3D graph representations that incorporate spatial geometry through atomic coordinates, bond lengths, and angles, enabling the modeling of conformational behavior and spatial interactions critical for understanding molecular properties and binding affinities [19] [22]. Methods like 3D Infomax utilize 3D geometries to enhance the predictive performance of GNNs by pre-training on existing 3D molecular datasets [19].

Fragment-Based Encoding

Fragment-based encoding decomposes molecules into chemically meaningful substructures, such as functional groups, rings, or other common molecular motifs [23] [24]. This approach bridges the gap between atomic-level representations and whole-molecule descriptions by focusing on intermediate structural units that often correlate with specific chemical properties or biological activities [24].

In fragment-based drug discovery (FBDD), this strategy has proven particularly valuable for targeting challenging protein classes, with approximately 70 drug candidates currently in clinical trials and at least 7 marketed medicines originating from fragment screens [24]. The method involves screening small molecular fragments (typically 150-300 Da) against biological targets, followed by systematic elaboration or linking of hits to develop higher-affinity ligands [24].

Modern implementations often employ hybrid approaches, such as fragment-based tokenization of SMILES strings or targeted masking of functional groups during pre-training, to incorporate chemical domain knowledge into representation learning [23]. For example, the MLM-FG model randomly masks subsequences corresponding to chemically significant functional groups during pre-training, compelling the model to better infer molecular structures and properties by learning the context of these key units [23].

Table 1: Comparative Analysis of Molecular Representation Schemes

| Representation | Data Structure | Key Advantages | Limitations | Primary Applications |
|---|---|---|---|---|
| SMILES | Linear string | Human-readable, compact, database-friendly, canonicalization possible | Limited structural explicitness, sensitivity to syntax variations | Molecular generation, similarity search, database indexing |
| Molecular Graph | Node-edge graph | Explicit connectivity, natural structure abstraction, stereochemistry handling | Computational complexity, variable-sized inputs | Property prediction, molecular interaction modeling |
| 3D Graph | Geometric graph | Spatial information, conformational awareness, quantum property prediction | 3D data requirement, computational intensity | Quantum chemistry, molecular dynamics, protein-ligand docking |
| Fragment-Based | Substructural units | Chemical intuition, scaffold hopping, functional group focus | Fragment library dependency, reconstruction complexity | Lead optimization, scaffold hopping, medicinal chemistry |

Quantitative Comparison of Representation Performance

Recent benchmarking studies across diverse chemical property prediction tasks provide insights into the relative performance of different representation schemes. The evaluation typically employs metrics such as Area Under the Receiver Operating Characteristic Curve (AUC-ROC) for classification tasks and Mean Absolute Error (MAE) or Root Mean Squared Error (RMSE) for regression tasks, often using scaffold splitting to test model generalizability [23].
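
For readers who want the evaluation terms pinned down, here is a minimal plain-Python sketch of the three metrics and of a scaffold-style split. The `scaffold_split` helper assumes scaffold identifiers have already been computed by some cheminformatics tool (that step is not shown), and the "largest groups to training first" rule is one common convention, not necessarily the one used in [23].

```python
import math


def roc_auc(labels, scores):
    """AUC as the probability that a positive outranks a negative (ties count half)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def scaffold_split(scaffold_ids, test_fraction=0.2):
    """Keep every scaffold group entirely in train or entirely in test."""
    groups = {}
    for idx, sid in enumerate(scaffold_ids):
        groups.setdefault(sid, []).append(idx)
    ordered = sorted(groups.values(), key=len, reverse=True)
    train_target = (1 - test_fraction) * len(scaffold_ids)
    train, test = [], []
    for members in ordered:
        bucket = train if len(train) + len(members) <= train_target else test
        bucket.extend(members)
    return train, test


train_idx, test_idx = scaffold_split(["a", "a", "a", "b", "b", "c", "d", "a", "b", "c"])
```

Because no scaffold appears on both sides of the split, test performance reflects generalization to unseen core structures rather than interpolation among close analogs.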

Notably, advanced representation methods have demonstrated competitive performance across multiple benchmarks. For instance, the MLM-FG model, which employs functional group masking during pre-training, outperformed existing SMILES- and graph-based models in 9 out of 11 benchmark tasks, including BBBP, ClinTox, Tox21, HIV, and MUV datasets [23]. Remarkably, this SMILES-based approach even surpassed some 3D-graph-based models, highlighting its exceptional capacity for representation learning without explicit 3D structural information [23].

Similarly, multi-graph representation approaches like MGRFN (Multi-Graph Representation Fusion Network), which integrates both 2D chemical features and 3D geometric information, have shown superior performance in predicting molecular quantum chemical properties and various conformational properties, particularly for distinguishing stereoisomers that share the same bond connections but different spatial configurations [22].

Table 2: Performance Comparison of Representation Learning Models on Molecular Property Prediction

| Model | Representation Type | BBBP (AUC) | Tox21 (AUC) | HIV (AUC) | QM9 (MAE) | Chiral Dataset Accuracy |
|---|---|---|---|---|---|---|
| MLM-FG (RoBERTa) | SMILES with functional group masking | 0.923 | 0.851 | 0.813 | - | - |
| MLM-FG (MoLFormer) | SMILES with functional group masking | 0.915 | 0.842 | 0.806 | - | - |
| GEM | 3D Graph | 0.901 | 0.831 | 0.794 | - | - |
| MolCLR | 2D Graph | 0.892 | 0.825 | 0.783 | - | - |
| MGRFN | Multi-Graph Fusion | - | - | - | 0.0012 (α) | 94.7% |

Experimental Protocols

Protocol 1: SMILES-Based Pre-Training with Targeted Masking

This protocol details the implementation of MLM-FG, a molecular language model with functional group masking for improved representation learning [23].

Materials and Reagents:

- Large-scale unlabeled molecular dataset (e.g., 100 million molecules from PubChem)

- RDKit or OpenBabel for SMILES parsing and functional group identification

- Transformer-based architecture (RoBERTa or MoLFormer implementation)

- GPU computing resources with ≥16GB memory

Procedure:

- Data Preprocessing: Standardize SMILES representation using canonicalization tools in RDKit to ensure consistent atomic ordering and ring numbering.

- Functional Group Identification: Parse each SMILES string to identify subsequences corresponding to chemically significant functional groups (e.g., carboxylic acids, esters, amines) using SMILES-based pattern matching.

- Masked Pre-Training:

  - For each SMILES string in the training batch, randomly select 15-30% of functional group subsequences for masking.

  - Replace selected subsequences with a special `[MASK]` token.

  - Train the transformer model to predict the masked functional groups based on contextual information from the remaining SMILES string.

  - Employ cross-entropy loss between predicted and actual tokens.

- Model Configuration: Use 12-16 transformer layers, 768-1024 hidden dimensions, and 12-16 attention heads depending on model size requirements.

- Fine-Tuning: Transfer the pre-trained model to downstream tasks using task-specific heads and datasets with standard fine-tuning protocols.
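
The masking step of the procedure above can be sketched as follows. This is an illustration only: the pattern table is a hypothetical, deliberately tiny stand-in for real functional group detection, plain substring matching can mislabel groups (for example, an ester matches the acid pattern), and the rule of masking at least one group per molecule is an assumption rather than a documented detail of MLM-FG.

```python
import random

# Hypothetical pattern table (an assumption for this sketch); a real
# pipeline would detect functional groups with a cheminformatics toolkit.
FUNCTIONAL_GROUPS = {
    "carboxylic_acid": "C(=O)O",
    "nitro": "[N+](=O)[O-]",
    "nitrile": "C#N",
}


def find_group_spans(smiles):
    """Return non-overlapping (start, end, name) spans, left to right."""
    spans = []
    for name, pattern in FUNCTIONAL_GROUPS.items():
        start = smiles.find(pattern)
        while start != -1:
            spans.append((start, start + len(pattern), name))
            start = smiles.find(pattern, start + len(pattern))
    spans.sort()
    kept, end = [], 0
    for s, e, name in spans:
        if s >= end:
            kept.append((s, e, name))
            end = e
    return kept


def mask_groups(smiles, mask_rate=0.3, rng=random):
    """Replace a random subset of group spans with [MASK]; return text and targets."""
    spans = find_group_spans(smiles)
    if not spans:
        return smiles, []
    n_mask = max(1, round(mask_rate * len(spans)))   # assumption: mask at least one
    chosen = sorted(rng.sample(spans, n_mask))
    out, targets, cursor = [], [], 0
    for s, e, _ in chosen:
        out.append(smiles[cursor:s])
        out.append("[MASK]")
        targets.append(smiles[s:e])
        cursor = e
    out.append(smiles[cursor:])
    return "".join(out), targets


masked, targets = mask_groups("CC(=O)O", mask_rate=0.3, rng=random.Random(0))
```

The returned `targets` are what the model would be trained to reconstruct with a cross-entropy loss, while `masked` is the input sequence it sees.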

Troubleshooting:

- For unstable training, reduce the masking percentage or apply a gradual masking strategy.

- If the model fails to converge, check the SMILES standardization and functional group identification steps.

- For memory constraints, reduce batch size or implement gradient accumulation.

Protocol 2: Multi-Graph Representation Fusion

This protocol describes the MGRFN framework for integrating 2D and 3D molecular graph representations [22].

Materials and Reagents:

- Molecular datasets with 2D structures and 3D conformations (e.g., QM9, MD17)

- Graph neural network libraries (PyTorch Geometric, DGL)

- Conformation generation tools (RDKit, Merck Molecular Force Field)

- GPU computing resources with ≥32GB memory for 3D operations

Procedure:

- 2D Graph Construction:

  - Represent molecules as graphs G₂D = (V, E), where nodes V correspond to atoms and edges E to bonds.

  - Initialize node features using atom properties (element type, hybridization, formal charge).

  - Initialize edge features using bond properties (bond type, conjugation, ring membership).

- 3D Graph Construction:

  - Generate molecular conformations using RDKit's MMFF94 implementation or similar force fields.

  - Construct 3D graphs G₃D = (V, E, P), where P represents the 3D coordinates of each atom.

  - Calculate spatial relationships (distances, angles) for edge features.

- Dual-Stream Encoding:

  - Process 2D graphs using a Graph Attention Network (GAT) to capture chemical connectivity patterns.

  - Process 3D graphs using SphereNet or another geometry-aware GNN to extract spatial molecular features.

  - Apply attention mechanisms in both streams to weight important substructures.

- Bilinear Fusion:

  - Combine 2D and 3D representations using a bilinear fusion module: Z_fused = σ(Z₂D × W × Z₃Dᵀ + b), where Z₂D and Z₃D are the representations from the 2D and 3D streams, W is a learnable weight tensor, b is a bias term, and σ is an activation function.

- Property Prediction: Feed the fused representation to task-specific prediction heads (MLP for regression/classification).
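
The bilinear fusion step in the procedure above can be written out directly. The sketch below makes several assumptions that the protocol does not specify: each stream yields one vector per molecule, W is a rank-3 tensor with one matrix per output dimension, b holds one bias per output dimension, and σ is taken to be the logistic sigmoid.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def bilinear_fuse(z2d, z3d, W, b):
    """z_fused[k] = sigmoid(sum_ij z2d[i] * W[k][i][j] * z3d[j] + b[k])."""
    fused = []
    for W_k, b_k in zip(W, b):
        total = b_k
        for i, zi in enumerate(z2d):
            for j, zj in enumerate(z3d):
                total += zi * W_k[i][j] * zj
        fused.append(sigmoid(total))
    return fused


# Illustrative values only: a 2-dim 2D embedding, a 3-dim 3D embedding,
# and a weight tensor producing a 2-dim fused vector.
z2d = [1.0, 2.0]
z3d = [1.0, 0.0, -1.0]
W = [
    [[1.0, 0.0, 0.0], [0.0, 0.0, 0.0]],
    [[0.0, 0.0, 0.0], [0.0, 0.0, 1.0]],
]
b = [0.0, 0.0]
z_fused = bilinear_fuse(z2d, z3d, W, b)
```

Unlike simple concatenation, each fused dimension depends on products of 2D and 3D features, so the model can express interactions between connectivity and geometry at the cost of a parameter count that grows with the product of the two embedding sizes.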

Troubleshooting:

- For conformation generation failures, adjust force field parameters or use alternative methods.

- If fusion results in performance degradation, adjust fusion weights or try alternative fusion strategies.

- For overfitting on small datasets, implement regularization in both GNN streams.

Protocol 3: Fragment-Based Scaffold Hopping Implementation

This protocol outlines a fragment-based approach for scaffold hopping in lead optimization [18] [24].

Materials and Reagents:

- Fragment library with 500-2000 compounds (150-300 Da molecular weight)

- Target protein with known active compound

- Structural biology tools (X-ray crystallography, NMR, or homology modeling)

- Molecular docking software (AutoDock, Glide, or similar)

Procedure:

- Fragment Screening:

  - Screen fragment library against target using biophysical methods (SPR, NMR, or thermal shift).

  - Identify initial hits with weak to moderate binding affinity (μM-mM range).

  - Validate hits through dose-response experiments and competition assays.

- Structural Characterization:

  - Determine co-crystal structures of target protein with fragment hits.

  - Analyze binding modes, key interactions, and fragment growing vectors.

- Fragment Evolution:

  - Systematically grow or merge fragments based on structural information.

  - Explore structure-activity relationships through analog synthesis.

  - Optimize physicochemical properties while maintaining binding interactions.

- Scaffold Hop Evaluation:

  - Assess new scaffolds for maintained target engagement and improved properties.

  - Validate functional activity in relevant biological assays.

  - Confirm scaffold novelty through patent landscape analysis.

Troubleshooting:

- For weak fragment binding, consider linking strategies or fragment merging.

- If synthetic accessibility is problematic, explore alternative growth vectors.

- For poor physicochemical properties, implement property-based design early.

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Molecular Representation Research

| Category | Item | Specifications | Application Function |
|---|---|---|---|
| Chemical Databases | PubChem | 100+ million compounds | Large-scale pre-training data source [23] |
| Chemical Databases | ChEMBL | Bioactivity data for drug-like compounds | Curated bioactivity data for fine-tuning |
| Software Libraries | RDKit | Open-source cheminformatics | SMILES parsing, molecular standardization, descriptor calculation [25] |
| Software Libraries | PyTorch Geometric | Graph neural network library | Implementation of GNNs for molecular graphs [22] |
| Software Libraries | OPSIN | IUPAC name to structure converter | Chemical name resolution in automated workflows [25] |
| Benchmarking Resources | MoleculeNet | Curated molecular property datasets | Standardized benchmarking across representations [23] |
| Benchmarking Resources | TDC | Therapeutic Data Commons | Specialized therapeutic activity prediction tasks |
| Specialized Tools | MoleculeResolver | Multi-source structure resolution | Crosschecked chemical structure validation [25] |
| Specialized Tools | Promethium | Quantum chemistry platform | F-SAPT analysis for protein-ligand interactions [24] |

Implementation Workflows

SMILES Processing and Canonicalization Workflow

This workflow illustrates the sequential steps for processing and canonicalizing SMILES strings, beginning with input standardization to handle various formatting conventions [25]. The parsing stage interprets atomic symbols, bond types, branching, and ring closures according to SMILES specification [20]. Validation checks for syntactic and semantic correctness, while canonicalization generates unique representations through deterministic atom ordering [20] [25]. Invalid structures are flagged for manual inspection or correction, ensuring data quality for subsequent analysis.
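
The validation stage of this workflow can be approximated with a few purely syntactic checks. The sketch below is a simplified assumption of what such a stage might do: it verifies bracket atoms, branch parentheses, and ring-closure pairing, but performs no semantic checks such as valence, and it treats two-digit `%nn` ring labels crudely as individual digits.

```python
def validate_smiles_syntax(smiles: str) -> list[str]:
    """Return a list of syntax problems; an empty list means the checks passed."""
    problems, depth, open_rings, i = [], 0, set(), 0
    while i < len(smiles):
        ch = smiles[i]
        if ch == "[":
            close = smiles.find("]", i)
            if close == -1:
                problems.append("unclosed bracket atom")
                break
            i = close                    # skip isotope/charge digits inside brackets
        elif ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                problems.append("unmatched ')'")
                depth = 0
        elif ch.isdigit():
            open_rings ^= {ch}           # first occurrence opens, second closes
        i += 1
    if depth > 0:
        problems.append("unclosed '('")
    if open_rings:
        problems.append(f"unclosed ring labels: {sorted(open_rings)}")
    return problems


report = {s: validate_smiles_syntax(s) for s in ("C1CCCCC1", "C1CCCCC", "CC(=O")}
```

Strings that return a non-empty problem list correspond to the "flagged for manual inspection" branch of the workflow; strings that pass would move on to canonicalization.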

Multi-Representation Fusion Architecture

This architecture demonstrates the integration of multiple molecular representations for enhanced property prediction [22]. SMILES strings are processed through transformer-based encoders to capture sequential patterns, while 2D graphs are analyzed by GNNs to extract topological features [23] [19]. 3D graphs provide spatial and geometric information through geometry-aware networks [22]. The fusion module combines these complementary representations using attention mechanisms or bilinear fusion, enabling the model to leverage both structural and sequential information for more accurate molecular property prediction [22].

Applications in Molecular Optimization

Scaffold Hopping in Drug Discovery

Scaffold hopping represents a critical application of molecular representations in lead optimization, aimed at discovering new core structures while retaining similar biological activity [18]. This strategy is particularly valuable for addressing issues such as toxicity, metabolic instability, or patent limitations in existing lead compounds [18].

Traditional scaffold hopping approaches typically utilize molecular fingerprinting and structural similarity searches to identify compounds with similar properties but different core structures [18]. However, modern AI-driven methods have greatly expanded the potential for scaffold hopping through more flexible and data-driven exploration of chemical diversity [18]. These approaches leverage advanced molecular representations to capture nuances in molecular structure that may have been overlooked by traditional methods, allowing for more comprehensive exploration of chemical space and the discovery of new scaffolds with unique properties [18].
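
The fingerprint-based similarity search mentioned above usually reduces to a Tanimoto comparison between bit sets. The sketch below assumes fingerprints are already available as sets of on-bit indices; the bit values and compound names are invented for illustration, and the zero returned for two empty fingerprints is a convention chosen here.

```python
def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto (Jaccard) similarity between two sets of on-bit indices."""
    union = fp_a | fp_b
    if not union:
        return 0.0                      # convention chosen for this sketch
    return len(fp_a & fp_b) / len(union)


def rank_by_similarity(query: set[int], library: dict[str, set[int]]):
    """Return (similarity, name) pairs, most similar first."""
    scored = [(tanimoto(query, fp), name) for name, fp in library.items()]
    return sorted(scored, reverse=True)


# Hypothetical fingerprints: indices of bits set by some fingerprinting scheme.
query_fp = {1, 2, 3, 8}
library = {
    "analog_same_scaffold": {1, 2, 3, 9},
    "candidate_new_scaffold": {2, 3, 8, 20, 21},
    "unrelated": {40, 41},
}
ranking = rank_by_similarity(query_fp, library)
```

In a scaffold-hopping setting the interesting hits are often those with intermediate similarity: close enough to share key features with the query, yet different enough to carry a new core.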

Notably, fragment-based encoding has proven particularly effective for scaffold hopping, as demonstrated by the classification of hopping strategies into heterocyclic substitutions, ring opening/closing, peptide mimicry, and topology-based hops [18]. By focusing on conserved molecular interactions rather than overall structure, these methods can identify structurally diverse compounds that maintain key binding features.

Property Prediction and Virtual Screening

Molecular representations form the foundation for predictive models in virtual screening, where the goal is to identify potential drug candidates from vast compound libraries [19]. The transition from traditional descriptors to learned representations has significantly improved prediction accuracy for key drug discovery endpoints, including activity, toxicity, and pharmacokinetic properties [18] [19].

Graph-based representations have demonstrated particular strength in property prediction tasks due to their ability to explicitly model atomic interactions and connectivity patterns [19] [26]. Similarly, SMILES-based transformer models pre-trained on large unlabeled datasets have shown competitive performance across diverse molecular property benchmarks, sometimes even surpassing graph-based approaches despite their simpler input representation [23].

The integration of multiple representation types through fusion architectures has emerged as a promising direction for improving prediction accuracy, especially for properties that depend on both 2D connectivity and 3D geometry [22]. These multi-representation approaches can distinguish between stereoisomers and conformers that share identical 2D structures but exhibit different properties due to spatial arrangements [22].

Future Perspectives

The field of molecular representation continues to evolve rapidly, with several emerging trends shaping future research directions. Self-supervised learning on large unlabeled molecular datasets represents a promising approach for learning transferable representations without extensive labeled data [19]. Similarly, multi-modal learning frameworks that integrate diverse data types—including structural, sequential, and physicochemical information—are likely to yield more comprehensive molecular representations [19].

The development of 3D-aware representations remains an active area of research, with equivariant models and learned potential energy surfaces offering physically consistent, geometry-aware embeddings that extend beyond static graphs [19]. These approaches are particularly valuable for modeling molecular interactions and conformational dynamics that underlie biological activity.

For molecular optimization in discrete chemical spaces, future work will likely focus on better integration of domain knowledge through chemically informed pre-training strategies and attention mechanisms that highlight pharmacophoric features [23] [19]. Additionally, improving the interpretability of representation learning models will be crucial for building trust and facilitating collaboration between computational and medicinal chemists.

As representation methods continue to mature, their impact on drug discovery and materials science is expected to grow, potentially accelerating progress in sustainable chemistry, renewable energy materials, and the development of safer, more effective therapeutics [19].

The exploration of chemical space represents a fundamental challenge in molecular optimization for drug discovery and materials science. Traditional approaches have operated within discrete molecular frameworks, utilizing defined sets of structures and fingerprints. However, recent advances in generative artificial intelligence and Bayesian optimization have catalyzed a paradigm shift toward continuous representations of chemical space. This transition enables more efficient navigation, optimization, and design of synthesizable molecules with tailored properties. This article examines the theoretical foundations, methodological implementations, and practical applications of this conceptual shift, providing application notes and experimental protocols for researchers engaged in molecular optimization.

Molecular optimization requires efficient exploration of extremely large chemical spaces. Historically, this challenge has been approached through discrete methods that treat molecules as distinct entities within a combinatorial space. While these methods have proven valuable, they face significant limitations in scalability and efficiency when dealing with the vastness of possible molecular structures. The conceptual shift from discrete to continuous space navigation represents a transformative approach in computational molecular design. By embedding discrete molecular structures into continuous latent spaces or using continuous-time processes, researchers can apply powerful optimization techniques from machine learning and mathematics to efficiently traverse chemical space. This continuum-based approach has demonstrated particular value in addressing the critical challenge of synthetic accessibility, ensuring that designed molecules can be practically synthesized in laboratory settings [27].

The integration of continuous representations has enabled more sophisticated molecular optimization strategies, including multi-property optimization and constrained design based on textual descriptions or structural requirements. This article explores the theoretical underpinnings of this conceptual shift, provides detailed protocols for implementing these approaches, and demonstrates their application through case studies in synthesizable molecular design.

Theoretical Foundations: From Discrete Structures to Continuous Representations

Discrete Chemical Space Frameworks

Traditional molecular optimization operates in discrete chemical space, where molecules are represented as distinct entities with defined structures. The Markov chain formalism provides a mathematical foundation for many discrete approaches, where molecular transformations follow a stochastic process with transitions dependent only on the current state. This "memoryless" property makes Markov processes particularly suitable for modeling sequential decision-making in molecular design [28]. In discrete-time Markov chains (DTMC), the system transitions between states at discrete time steps, while continuous-time Markov chains (CTMC) allow for transitions at any continuous time point. These formalisms underlie many classical molecular optimization approaches, including Monte Carlo tree search methods and fragment-based molecular generation.
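
A discrete-time Markov chain over molecular states can be illustrated with a transition matrix and a single update rule. The three states and their probabilities below are invented for illustration; only the update rule itself, in which the next distribution depends solely on the current one, reflects the formalism described above.

```python
def step(distribution, transition):
    """One DTMC step: p_next[j] = sum_i p[i] * P[i][j]."""
    n = len(distribution)
    return [
        sum(distribution[i] * transition[i][j] for i in range(n))
        for j in range(n)
    ]


# Hypothetical chain over design stages (illustrative values only):
# state 0 = bare scaffold, 1 = partially grown molecule, 2 = finished molecule.
P = [
    [0.5, 0.5, 0.0],
    [0.0, 0.5, 0.5],
    [0.0, 0.0, 1.0],   # absorbing state: generation has terminated
]

p = [1.0, 0.0, 0.0]     # start from the bare scaffold with certainty
for _ in range(2):
    p = step(p, P)
```

Each row of `P` sums to one, and the memoryless property shows up in the fact that `step` takes only the current distribution as input, never the history of earlier states.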

The discrete representation of chemical space often relies on molecular fingerprints, graphs, or string-based representations such as SMILES (Simplified Molecular-Input Line-Entry System). While conceptually straightforward, these discrete representations create a challenging optimization landscape characterized by combinatorial complexity and discontinuous property functions. Navigating this landscape requires sophisticated algorithms that can efficiently explore the high-dimensional, structured space of possible molecular structures [29].

Continuous Representations and Embeddings

The shift to continuous representations addresses fundamental limitations of discrete approaches by embedding molecular structures into continuous vector spaces. This embedding enables the application of powerful continuous optimization techniques, including gradient-based methods and Bayesian optimization. Variational autoencoders (VAEs), normalizing flows, and deep kernel learning (DKL) represent prominent approaches for learning continuous molecular embeddings that capture structural, electronic, and topological information [29].

Continuous-time discrete diffusion processes provide another mathematical framework for this conceptual shift, characterized by continuous temporal evolution over discrete state spaces. These processes employ integro-differential equations to model probability distributions over molecular states:

\[
\frac{\partial p(x, t)}{\partial t} - \int_0^t g(t - t_1) \frac{\partial p(x, t_1)}{\partial t_1}\, dt_1 = D \int_0^t g(t - t_1) \frac{\partial^2}{\partial x^2} p(x, t_1)\, dt_1
\]

where \(p(x,t)\) represents the probability distribution over discrete states \(x\) at time \(t\), \(g(t)\) is the waiting time distribution between transitions, and \(D\) is the diffusion coefficient. For exponential waiting times, the process becomes Markovian and simplifies to the standard diffusion equation [30].

This mathematical foundation enables the development of generative models that operate in continuous time while producing discrete molecular structures. The reverse process of these models allows for controlled generation of molecules with specific properties by reversing the diffusion process through learned gradients [30] [31].

Methodological Implementations: Protocols for Continuous Space Navigation

Synthesis-Centric Generative Framework

Protocol: SynFormer Implementation for Synthesizable Molecular Design

SynFormer represents a transformative approach that ensures synthetic feasibility by generating synthetic pathways rather than just molecular structures. The framework operates within a chemical space defined by purchasable building blocks and reliable reaction templates, ensuring high likelihood of synthesizability [27].

Experimental Procedure:

Reaction Template Curation: Select 115 reaction templates focusing on bi- and trimolecular couplings, augmented with functional group interconversions. Ensure templates represent robust, reliable transformations with high experimental success rates.

Building Block Selection: Curate 223,244 commercially available building blocks from Enamine's U.S. stock catalog to ensure practical accessibility.

Pathway Representation: Implement postfix notation for linear representation of synthetic pathways using four token types: [START], [END], [RXN] (reaction), and [BB] (building block). Place reactions after reagents in the sequence to accommodate both linear and convergent syntheses.

Model Architecture:

- Employ a scalable transformer architecture with standard transformer layers for sequence processing.

- Implement a multilayer perceptron (MLP) classification head for token type prediction.

- Utilize a denoising diffusion probabilistic module for building block selection from large commercial inventories.

Training Protocol: Train on synthetic pathways generated from the defined reaction network using autoregressive decoding. Optimize parameters to maximize reconstruction accuracy of known synthesizable molecules.

Validation: Evaluate reconstruction rates on both Enamine REAL Space and ChEMBL molecules. Assess synthetic accessibility of generated molecules through expert chemists and computational metrics.
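
The postfix pathway representation described in the procedure can be replayed with a stack, which is why it accommodates both linear and convergent syntheses. The sketch below is an assumption-laden illustration: the `(kind, value)` token encoding, the reaction names, and the building-block names are hypothetical and are not SynFormer's actual vocabulary.

```python
def evaluate_postfix(tokens):
    """Replay a postfix pathway with a stack; return the nested synthesis tree."""
    stack = []
    for kind, value in tokens:
        if kind == "START":
            stack.clear()
        elif kind == "BB":
            stack.append(value)                  # push a building block
        elif kind == "RXN":
            name, arity = value                  # reaction consumes `arity` reactants
            if arity < 1 or len(stack) < arity:
                raise ValueError(f"{name} needs {arity} reactants on the stack")
            reactants = stack[-arity:]
            del stack[-arity:]
            stack.append((name, reactants))      # push the intermediate product
        elif kind == "END":
            break
        else:
            raise ValueError(f"unknown token type {kind!r}")
    if len(stack) != 1:
        raise ValueError("pathway must reduce to a single product")
    return stack[0]


# Hypothetical convergent route: two intermediates are made, then coupled.
pathway = [
    ("START", None),
    ("BB", "acid_1"), ("BB", "amine_1"), ("RXN", ("amide_coupling", 2)),
    ("BB", "halide_1"), ("BB", "boronate_1"), ("RXN", ("suzuki_coupling", 2)),
    ("RXN", ("final_coupling", 2)),
    ("END", None),
]
tree = evaluate_postfix(pathway)
```

Placing each reaction after its reagents means a sequence model can emit the pathway left to right, and any well-formed sequence corresponds to a synthesis tree by construction.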

Application Notes: The encoder-decoder variant (SynFormer-ED) enables local chemical space exploration around query molecules, while the decoder-only variant (SynFormer-D) facilitates global exploration toward property optimization. The framework's scalability allows performance improvement with increased computational resources and training data [27].

Bayesian Optimization in Adaptive Subspaces

Protocol: MolDAIS Framework for Data-Efficient Molecular Optimization

The Molecular Descriptors with Actively Identified Subspaces (MolDAIS) framework enables efficient Bayesian optimization in continuous descriptor spaces by adaptively identifying task-relevant subspaces, particularly valuable in low-data regimes common to molecular discovery [29].

Experimental Procedure:

Molecular Featurization: Compute comprehensive molecular descriptor libraries including:

- Simple atom-level counts (hydrogen bond donors/acceptors, molecular weight)

- Complex graph-derived features (topological indices, connectivity patterns)

- Quantum-informed features (partial charges, polarizability estimates)

Surrogate Modeling: Implement a Gaussian process (GP) surrogate with a sparse axis-aligned subspace (SAAS) prior, which induces sparsity in the descriptor space. The SAAS prior enables automatic relevance determination of descriptors during optimization.

Alternative Screening Methods: For computational efficiency, implement mutual information (MI) and maximal information coefficient (MIC) based screening as alternatives to full Bayesian inference.

Optimization Loop:

- Initialize with random selection or diverse set of molecules from chemical library.

- Train surrogate model on existing property data.

- Optimize acquisition function (expected improvement or upper confidence bound) to identify promising candidates.

- Query expensive oracle (experimental measurement or simulation) for selected candidates.

- Update dataset and surrogate model iteratively.

Convergence Criteria: Terminate after fixed evaluation budget or when improvement falls below threshold for consecutive iterations.
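
Two pieces of the loop above lend themselves to a compact sketch: the expected improvement acquisition function and the convergence check. The code below assumes a maximization problem and takes the surrogate's posterior as a plain dictionary of `(mean, std)` pairs; the GP with SAAS prior is not implemented here, and the helper names, tolerance, and patience values are illustrative assumptions.

```python
import math


def expected_improvement(mean, std, best, xi=0.0):
    """EI for maximization under a Gaussian posterior N(mean, std^2)."""
    if std <= 0.0:
        return max(mean - best - xi, 0.0)
    z = (mean - best - xi) / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mean - best - xi) * cdf + std * pdf


def select_next(posterior, best):
    """posterior maps candidate name -> (mean, std); return the EI maximizer."""
    return max(posterior, key=lambda c: expected_improvement(*posterior[c], best))


def should_stop(history, budget, tol=1e-3, patience=3):
    """Stop at the budget, or when best-so-far gained < tol for `patience` steps."""
    if len(history) >= budget:
        return True
    if len(history) <= patience:
        return False
    best_so_far = [max(history[: i + 1]) for i in range(len(history))]
    gains = [b - a for a, b in zip(best_so_far, best_so_far[1:])]
    return all(g < tol for g in gains[-patience:])


# Hypothetical posterior: "a" is a safe bet near the incumbent, "b" is uncertain.
choice = select_next({"a": (0.50, 0.01), "b": (0.40, 0.50)}, best=0.50)
```

In this example the uncertain candidate is selected even though its predicted mean is lower, which is the exploration behavior that makes Bayesian optimization sample-efficient in low-data regimes.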

Application Notes: MolDAIS demonstrates particular strength in optimizing molecular properties with fewer than 100 evaluations, making it suitable for expensive experimental or computational properties. The framework successfully identifies near-optimal candidates from libraries exceeding 100,000 molecules with minimal evaluation costs [29].

Multi-Modality Molecular Optimization

Protocol: 3DToMolo for Text-Structure Aligned Optimization

3DToMolo represents a multi-modality approach that aligns textual descriptions with molecular structures in continuous space, enabling optimization guided by diverse constraints including qualitative descriptions, quantitative properties, and structural requirements [31].

Experimental Procedure:

Multi-Modality Representation:

- Molecular Representation: Encode both 2D molecular graphs and 3D conformer structures using an SE(3)-equivariant graph transformer to preserve spatial symmetry.

- Text Representation: Encode textual descriptions of target properties or structural constraints using a lightweight large language model (LLM).

Cross-Modality Alignment: Train representation pairs through contrastive learning to align molecular and textual embeddings in shared continuous space.

Diffusion Process:

- Forward Process: Define a Markov chain that gradually adds noise to molecular structures: \[ dM_t = f(M_t, t)\,dt + g(t)\,dW_t \] where \(W_t\) denotes Brownian motion, and \(f\) and \(g\) are smooth functions.

- Reverse Process: Parameterize the denoising process using an SE(3)-equivariant graph transformer \(S_\theta\) to learn the gradient of the log-likelihood: \(\nabla \log p_t(M_t)\).

Conditional Optimization: For goal-directed optimization, use the conditional reverse process: \[ dM_t = \left[ f(M_t, t) - g^2(t)\,\nabla \log p_t(M_t, y) \right] dt + g(t)\,dW_t \] where \(\nabla \log p_t(M_t, y) = \nabla \log p_t(M_t) + \nabla \log p_t(y \mid M_t)\) and \(y\) represents the guidance prompt.
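To make the forward and conditional reverse equations concrete, the following toy sketch integrates them with the Euler-Maruyama scheme in one dimension. Everything specific here is an assumption chosen so the scores are analytic: f = 0, g = 1, a standard normal data distribution, and a hypothetical guidance term that pulls the sample toward a scalar target y. In 3DToMolo the score comes from the learned network and the state is a molecular structure, not a scalar.

```python
import math
import random


def forward_sample(m0, t, rng):
    """Forward process with f = 0 and g = 1, so M_t = M_0 + W_t."""
    return m0 + math.sqrt(t) * rng.gauss(0.0, 1.0)


def prior_score(m, t):
    """grad log p_t(M) for p_t = N(0, 1 + t), the marginal when M_0 ~ N(0, 1)."""
    return -m / (1.0 + t)


def guidance_score(m, y, strength=4.0):
    """Hypothetical grad log p_t(y | M): a Gaussian pull toward the target y."""
    return strength * (y - m)


def guided_reverse(m_T, y, T=1.0, steps=200, rng=None):
    """Integrate the conditional reverse SDE from t = T down to t = 0."""
    if rng is None:
        rng = random.Random(0)
    dt = T / steps
    m = m_T
    for k in range(steps):
        t = T - k * dt
        score = prior_score(m, t) + guidance_score(m, y)  # grad log p_t(M, y)
        drift = 0.0 - 1.0 * score                         # f - g^2 * score
        # Stepping backward in time flips the sign of the drift term.
        m = m - drift * dt + math.sqrt(dt) * rng.gauss(0.0, 1.0)
    return m
```

With this setup, samples denoised under y = 2 concentrate at positive values and samples under y = -2 at negative values, showing how the extra term steers generation without retraining the unconditional score.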

Substructure Constraint Implementation: For scenarios that require a specific substructure to be preserved, fix the atomic coordinates of the target substructure and optimize only the remaining regions of the molecule.
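One simple way to picture the substructure constraint is as a mask applied after every update: atoms in the preserved substructure keep their original coordinates, and all other atoms accept the proposed ones. The helper below is a hypothetical sketch of that idea and is not taken from the 3DToMolo code.

```python
def masked_update(coords, proposal, fixed_idx):
    """Return new coordinates, keeping atoms listed in fixed_idx unchanged.

    coords and proposal are equal-length lists of (x, y, z) tuples;
    fixed_idx holds the indices of atoms in the preserved substructure.
    """
    fixed = set(fixed_idx)
    return [
        old if i in fixed else new
        for i, (old, new) in enumerate(zip(coords, proposal))
    ]
```

Applying such a mask at each denoising step means only the unconstrained region of the molecule is ever modified.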

Application Notes: 3DToMolo enables flexible optimization controlled by natural language prompts, accommodating goals that range from simple property improvement to complex structural constraints. The approach can discover novel molecules containing specified target substructures without prior knowledge of effective modifications [31].

Comparative Analysis: Quantitative Assessment of Methodologies

Table 1: Performance Comparison of Molecular Optimization Frameworks

| Framework | Chemical Space Coverage | Synthetic Accessibility | Sample Efficiency | Multi-Property Optimization | Interpretability |
|---|---|---|---|---|---|
| SynFormer | Billions of synthesizable molecules | Ensured through pathway generation | Moderate (100-1000 evaluations) | Limited to property predictors | Medium (explicit pathways) |
| MolDAIS | Library-dependent (typically 10^4-10^6 molecules) | Not explicitly addressed | High (<100 evaluations) | Single-objective focus | High (descriptor importance) |
| 3DToMolo | Training data-dependent | Not explicitly addressed | Moderate (100-1000 evaluations) | Excellent (textual guidance) | Medium (latent space) |

Table 2: Application Scope and Limitations

| Framework | Optimal Application Context | Computational Requirements | Integration with Experimental Data | Scalability |
|---|---|---|---|---|
| SynFormer | Early-stage lead optimization with synthetic constraints | High (transformer architecture) | Compatible with property predictors | Excellent with compute resources |
| MolDAIS | Data-scarce optimization of expensive properties | Moderate (Bayesian optimization) | Direct incorporation of experimental results | Limited by descriptor computation |
| 3DToMolo | Multi-goal optimization with complex constraints | High (diffusion models + LLMs) | Compatible with various oracles | Moderate for large molecules |

Table 3: Key Research Reagent Solutions for Molecular Optimization

| Resource Category | Specific Examples | Function in Molecular Optimization | Access Information |
|---|---|---|---|
| Building Block Libraries | Enamine REAL Space, GalaXi, eXplore | Provides synthesizable chemical space foundation; ensures practical accessibility of designed molecules | Commercial vendors; >223,000 compounds |
| Reaction Template Sets | Curated 115 reaction templates (SynFormer) | Defines feasible chemical transformations; ensures synthetic tractability | Custom curation from literature and established databases |
| Molecular Descriptor Libraries | RDKit descriptors, Dragon descriptors, quantum-chemical features | Enables featurization for machine learning models; provides structured representation for optimization | Open-source and commercial software |
| Property Prediction Oracles | Quantum chemistry simulations, QSAR models, experimental assays | Provides target property evaluation; guides optimization toward desired objectives | Institutional computational resources or contract research organizations |
| Textual Prompt Databases | Natural language descriptions of molecular properties and constraints | Guides multi-modality optimization; enables incorporation of diverse design criteria | Custom compilation from literature and expert knowledge |

Visualization of Workflows and Signaling Pathways

Diagram 1: Conceptual Framework for Molecular Space Navigation

Diagram 2: MolDAIS Bayesian Optimization Workflow

Diagram 3: 3DToMolo Multi-Modality Optimization Process

Molecular optimization in discrete chemical spaces is a cornerstone of modern computational drug discovery, aiming to identify or design novel compounds with enhanced properties while navigating the intricate trade-offs between multiple, often competing, objectives. This document outlines application notes and detailed experimental protocols for addressing three core challenges in this field: Similarity Constraints, which ensure optimized molecules retain key characteristics of a lead compound; Multi-property Balancing, which involves the simultaneous optimization of several physicochemical or biological properties; and the pursuit of Novelty, which focuses on exploring new regions of chemical space to identify innovative molecular entities. The frameworks discussed herein, including MolDAIS, CMOMO, and SynFormer, provide sophisticated, data-efficient strategies for navigating these complex optimization landscapes [29] [32] [27].

Application Note 1: Adherence to Similarity Constraints

Background and Rationale

Maintaining structural or functional similarity to a known lead molecule is crucial in drug discovery to preserve pre-existing desirable properties, such as pharmacological activity or synthetic accessibility, while improving upon specific liabilities. Bayesian Optimization (BO) frameworks operating on fixed molecular representations are particularly adept at this task, as they can efficiently search vast chemical spaces under explicit similarity boundaries.

Key Methodologies and Data

Table 1: Summary of Similarity-Constrained Optimization Methods

| Method Name | Core Approach | Molecular Representation | Reported Performance (Tanimoto Similarity Constraint ≥ 0.4) |
|---|---|---|---|
| MolDAIS [29] | Bayesian Optimization with adaptive descriptor subspace selection | Precomputed molecular descriptor libraries | Identified near-optimal candidates from >100,000 molecules using <100 property evaluations |
| QMO [33] | Query-based framework using zeroth-order optimization in latent space | SMILES strings via a molecule autoencoder | Achieved superior performance on benchmark tasks (QED, penalized LogP); ~15% higher success rate on QED optimization |
| MOLRL [34] | Reinforcement Learning (PPO) in generative model's latent space | Latent representation from a pre-trained autoencoder (e.g., VAE, MolMIM) | Comparable or superior to state-of-the-art on penalized LogP optimization benchmark |

Detailed Protocol: Similarity-Constrained Bayesian Optimization with MolDAIS

Primary Application: Optimizing a target property (e.g., binding affinity) while maintaining a minimum Tanimoto similarity to a starting lead molecule.

Research Reagent Solutions:

- Software: Python environment with libraries for cheminformatics (e.g., RDKit) and machine learning (e.g., PyTorch, GPyTorch).

- Molecular Database: A discrete library of candidate molecules (e.g., ZINC, Enamine REAL).

- Featurization Tool: RDKit for computing a comprehensive library of molecular descriptors (e.g., topological, electronic, structural).

- Property Predictor: A pre-trained model or a function to compute the target property (e.g., a random forest model for pIC50) and the Tanimoto similarity based on molecular fingerprints.

Workflow Steps:

- Define Search Space: Compile a discrete molecular library (M) from which candidates will be selected.

- Featurize Molecules: For every molecule m in M, compute a high-dimensional vector of molecular descriptors using a tool like RDKit.

- Initialize Data: Select one or more initial lead molecules and add them to the dataset D = {(m_i, y_i)}, where y_i is the measured property value.

- Constrained BO Loop: For a pre-defined number of iterations (n_iter < 100), perform the following:

a. Train Surrogate Model: Train a Gaussian Process (GP) model on the current dataset D. The MolDAIS framework uses a sparsity-inducing prior to adaptively identify the most relevant molecular descriptors for the task [29].

b. Optimize Acquisition Function: Select the next candidate molecule m_candidate to evaluate by maximizing an acquisition function (e.g., Expected Improvement) only over molecules in M that meet the pre-set Tanimoto similarity constraint relative to the lead molecule.

c. Evaluate Candidate: Obtain the property value y_candidate for m_candidate via simulation or a predictive model.

d. Update Dataset: Augment the dataset: D = D ∪ {(m_candidate, y_candidate)}.

- Output Results: After the loop terminates, report the molecule in D with the best y_i that satisfies all constraints.

Figure 1: Workflow for similarity-constrained Bayesian optimization using an adaptive subspace, illustrating the iterative process of model training, candidate acquisition under constraints, and data set expansion.
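The constrained acquisition step can be illustrated with a small, dependency-free sketch. Fingerprints are represented here as sets of on-bit indices, and the acquisition scores are assumed to be precomputed per molecule; the function names and the toy library are hypothetical. In practice the fingerprints and similarity would typically come from a cheminformatics toolkit such as RDKit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def select_candidate(library, lead_fp, acquisition, evaluated, min_sim=0.4):
    """Pick the unevaluated molecule with the highest acquisition score
    among those whose similarity to the lead is at least min_sim.

    library maps molecule name -> fingerprint; acquisition maps name -> score.
    Returns None when no feasible candidate remains.
    """
    feasible = [
        name
        for name, fp in library.items()
        if name not in evaluated and tanimoto(fp, lead_fp) >= min_sim
    ]
    if not feasible:
        return None
    return max(feasible, key=lambda name: acquisition[name])


# Toy usage: "b" has the best score but is too dissimilar to the lead.
library = {"a": {1, 2, 3, 4}, "b": {1, 2, 9, 10}, "c": {7, 8}}
lead_fp = {1, 2, 3, 4}
acquisition = {"a": 0.1, "b": 0.9, "c": 0.5}
choice = select_candidate(library, lead_fp, acquisition, evaluated=set())
```

Filtering before maximizing keeps the similarity requirement a hard constraint, so the surrogate model never has to learn it.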

Application Note 2: Multi-property Balancing

Background and Rationale

Real-world molecular optimization requires satisfying multiple criteria simultaneously, such as high activity, low toxicity, and good solubility. This creates a complex, high-dimensional landscape where improving one property can detrimentally affect another. Frameworks that dynamically handle these constraints are essential for identifying high-quality, well-rounded candidates.

Key Methodologies and Data

Table 2: Summary of Multi-Property Optimization Frameworks

| Method Name | Core Optimization Strategy | Reported Application & Performance |
|---|---|---|
| CMOMO [32] | Constrained Multi-Objective Molecular Optimization; dynamic cooperative handling of constraints | Simultaneously optimized multiple non-biological activity properties under two structural constraints; successfully identified β2-adrenoceptor GPCR ligands and GSK-3β inhibitors under drug-like constraints. |
| MPOGAN [35] | Multi-Property Optimizing GAN with Real-Time Knowledge-Updating (RTKU) | Generated antimicrobial peptides (AMPs) with potent activity, low cytotoxicity, and high diversity; 9 out of 10 synthesized designed peptides showed experimental antimicrobial activity and low cytotoxicity. |
| SAGE-Amine [36] | Scoring-Assisted Generative Exploration for multi-property optimization | Designed novel amines for CO2 capture, simultaneously achieving high basicity with low viscosity and vapor pressure. |

Detailed Protocol: Constrained Multi-Property Optimization with CMOMO

Primary Application: Simultaneously optimizing several target properties while strictly satisfying a set of property or structural constraints.

Research Reagent Solutions:

- Software: Python environment with deep learning frameworks (e.g., TensorFlow, PyTorch).

- Property Predictors: Multiple pre-trained models for each target property and constraint (e.g., activity, solubility, toxicity predictors).

- Molecular Generator: A generative model capable of producing novel molecular structures (e.g., a VAE or a GAN).

- Evaluation Metrics: Defined functions to calculate property values and check constraint satisfaction.

Workflow Steps:

- Define Objectives and Constraints: Formally specify the properties to be optimized (e.g., maximize pIC50, minimize cytotoxicity) and the constraints to be satisfied (e.g., LogP ≤ 5, molecular weight ≤ 500).

- Initialize Population: Generate or select an initial population of molecules, P.

- Evolutionary Optimization Loop: For a set number of generations, proceed as follows:

a. Evaluate Population: Use the property predictors to score all molecules in P for each objective and constraint.

b. Dynamic Constraint Handling: CMOMO dynamically adjusts its focus on constraints based on the current population's performance, prioritizing unsatisfied constraints to guide the search more effectively [32].

c. Selection and Variation: Select parent molecules from P based on a fitness function that incorporates both objective performance and constraint satisfaction. Apply evolutionary operators (e.g., crossover, mutation) in the molecular representation space (e.g., SMILES, graph) to create a new offspring population.

d. Cooperative Search: CMOMO employs a cooperative strategy, evaluating properties within discrete chemical spaces and using the evolution of molecules in an implicit space to guide the search [32].

e. Update Population: Combine parents and offspring to form the population P for the next generation.

- Output Results: Return the set of non-dominated molecules from the final population that satisfy all constraints.

Figure 2: Workflow for constrained multi-property molecular optimization, showing the dynamic handling of constraints and the evolutionary search process.
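The final output step, returning the feasible non-dominated set, can be sketched as follows. This is a generic Pareto filter with upper-bound constraints, not CMOMO's dynamic constraint handling; the dictionary keys, the example limits (LogP ≤ 5, molecular weight ≤ 500), and the function names are illustrative assumptions.

```python
def violation(props, limits):
    """Total amount by which upper-bound constraints are exceeded (0.0 means feasible)."""
    return sum(max(0.0, props[key] - upper) for key, upper in limits.items())


def dominates(a, b):
    """True if score vector a is no worse than b everywhere and better somewhere
    (all objectives are expressed as maximization)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def feasible_pareto_front(population, objectives, limits):
    """Return names of feasible molecules that no other feasible molecule dominates."""
    feasible = [m for m in population if violation(m, limits) == 0.0]
    scores = {m["name"]: tuple(f(m) for f in objectives) for m in feasible}
    return [
        m["name"]
        for m in feasible
        if not any(
            dominates(scores[o["name"]], scores[m["name"]])
            for o in feasible
            if o is not m
        )
    ]


# Toy population: maximize pIC50 and minimize toxicity (negated for maximization).
population = [
    {"name": "A", "pIC50": 7.0, "tox": 0.2, "logp": 3.0, "mw": 350.0},
    {"name": "B", "pIC50": 8.0, "tox": 0.5, "logp": 4.0, "mw": 420.0},
    {"name": "C", "pIC50": 6.5, "tox": 0.3, "logp": 2.0, "mw": 300.0},
    {"name": "D", "pIC50": 9.0, "tox": 0.1, "logp": 6.2, "mw": 480.0},
]
objectives = [lambda m: m["pIC50"], lambda m: -m["tox"]]
limits = {"logp": 5.0, "mw": 500.0}
front = feasible_pareto_front(population, objectives, limits)
```

In this toy population, D is excluded for violating the LogP limit and C is dominated by A, leaving A and B as the trade-off set between potency and toxicity.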

Application Note 3: Ensuring Novelty and Synthesizability

Background and Rationale

A key challenge in generative molecular design is the tendency of models to propose molecules that are either chemically intractable or structurally trivial. True novelty requires a deliberate escape from confined chemical spaces while ensuring that proposed molecules can be synthesized, thereby bridging the gap between in-silico design and real-world application.

Key Methodologies and Data

Table 3: Methods for Novel and Synthesizable Molecular Design

| Method Name | Core Strategy for Synthesizability & Novelty | Key Outcome |

|---|---|---|

| SynFormer [27] | Generative modeling of synthetic pathways (not just structures) using transformers and diffusion models. | Ensures every generated molecule has a viable synthetic pathway, enabling exploration of novel analogs and global optimization while maintaining synthetic feasibility. |