Navigating the Molecular Search Dilemma: Strategies to Balance Exploration and Exploitation in Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical challenge of balancing exploration (searching new chemical space) and exploitation (optimizing known leads) in molecular search and design. We cover the foundational theory from multi-armed bandits to active learning, detail modern methodological implementations like Bayesian optimization and reinforcement learning, address common pitfalls and optimization strategies for real-world projects, and compare validation frameworks to assess algorithmic performance. The synthesis offers a roadmap to accelerate hit identification and lead optimization while managing resource constraints.

The Core Dilemma: Understanding Exploration vs. Exploitation in Chemical Space

Technical Support Center: Troubleshooting Guide & FAQs

Framed within the thesis on Balancing Exploration and Exploitation in Molecular Search Research.

FAQ 1: During a High-Throughput Virtual Screen (Exploration Phase), my hit rate is unacceptably low (<0.1%). What are the primary troubleshooting steps?

Answer: A low hit rate in exploratory virtual screening typically indicates a mismatch between your compound library and the target's binding site. Follow this protocol:

- Re-evaluate Library Composition: Ensure your library is diverse and not biased towards a single chemotype. Use principal component analysis (PCA) on molecular descriptors.

- Validate Docking Protocol: Re-dock a known active ligand (positive control). If the protocol cannot reproduce the known pose within an RMSD < 2.0 Å, recalibrate parameters.

- Check Binding Site Definition: Confirm the pocket definition is correct and allows for reasonable ligand placement. Consider using a consensus from multiple pocket detection algorithms.

- Adjust Scoring Function Rigor: Overly stringent scoring may filter out novel scaffolds. Iteratively relax thresholds.

Experimental Protocol: Validation of Docking Pose (Step 2 above)

- Objective: Reproduce the co-crystallized ligand pose.

- Method:

- Download the PDB file of your target with a bound ligand.

- Prepare the protein (add hydrogens, assign charges) using software like Schrödinger's Protein Preparation Wizard or UCSF Chimera.

- Extract the native ligand, generate a 3D conformation, and re-prepare it.

- Define the grid box centered on the native ligand's centroid.

- Perform docking with your standard settings.

- Calculate the Root-Mean-Square Deviation (RMSD) between the top-scored docked pose and the crystal structure pose.

- Success Criteria: RMSD < 2.0 Å.
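The RMSD check in the last two steps can be sketched in a few lines. This is a minimal illustration, assuming both poses are already in the same receptor coordinate frame and list their heavy atoms in the same order; the function name `pose_rmsd` and the coordinates are hypothetical. It does not handle symmetry-equivalent atoms, which dedicated tools correct for.

```python
import math

def pose_rmsd(docked, crystal):
    """In-place heavy-atom RMSD (in Å) between two poses with identical atom order.

    No superposition is applied: after re-docking, both poses are expected
    to sit in the same receptor frame.
    """
    if len(docked) != len(crystal) or not docked:
        raise ValueError("poses must be non-empty and contain the same number of atoms")
    squared = sum(
        (a - b) ** 2
        for atom_d, atom_c in zip(docked, crystal)
        for a, b in zip(atom_d, atom_c)
    )
    return math.sqrt(squared / len(docked))

# Hypothetical three-atom poses (x, y, z in Å), for illustration only.
crystal_pose = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
docked_pose = [(0.0, 0.0, 1.0), (1.5, 0.0, 1.0), (1.5, 1.5, 1.0)]

rmsd = pose_rmsd(docked_pose, crystal_pose)
protocol_ok = rmsd < 2.0  # success criterion from the protocol
```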

FAQ 2: In the Exploitation (Lead Optimization) phase, my SAR (Structure-Activity Relationship) is becoming erratic and non-linear. How can I resolve this?

Answer: Erratic SAR during optimization often signals underlying issues with compound integrity, assay variability, or the presence of multiple binding modes.

- Verify Compound Purity & Identity: Re-analyze all analogs by LC-MS. Purity should be >95%. See Table 1 for common causes.

- Implement Redundant Assays: Run a secondary, orthogonal assay (e.g., SPR alongside biochemical assay) to confirm activity trends.

- Probe for Conformational Flexibility: Use molecular dynamics (MD) simulations (50-100 ns) to see if modifications induce protein loop movements or alternative binding poses.

Table 1: Common Causes of Erratic SAR During Exploitation

| Cause | Diagnostic Test | Corrective Action |

|---|---|---|

| Compound Degradation | LC-MS analysis after 24h in assay buffer. | Reformulate compounds, use fresh DMSO stocks, add stabilizers. |

| Assay Edge Effects | Review plate heat maps for spatial patterns. | Re-run with plate randomization, use smaller wells. |

| Off-Target Activity | Counter-screen against related protein family members. | Design more selective analogs based on off-target profile. |

| Aggregation | Dynamic light scattering (DLS) of compound in buffer. | Add detergent (e.g., 0.01% Triton X-100) to assay buffer. |

| Covalent Modification | Mass spectrometry of protein after incubation with compound. | Re-evaluate design strategy for reactive groups. |

FAQ 3: When designing a library for "focused exploration" around a novel scaffold, how do I balance novelty with synthesizability?

Answer: Use a computational workflow that integrates generative models with synthetic feasibility filters.

Experimental Protocol: Focused Exploration Library Design

- Input: Your novel hit scaffold (SMILES format).

- Generation: Use a generative AI model (e.g., REINVENT, Lib-INVENT) conditioned on your scaffold to propose analogs. Set a "novelty" threshold (e.g., Tanimoto similarity < 0.4 to known drugs).

- Filtering Pipeline: Apply sequential filters:

- Drug-Likeness: Rule of 5, QED score.

- Synthetic Accessibility: Score using SAscore or SYBA.

- Retrosynthesis: Use AI software (e.g., ASKCOS, AiZynthFinder) to validate a viable route for top candidates.

- Output: A set of 50-200 novel, synthetically tractable candidates for testing.
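The sequential filters can be expressed as a small function over precomputed properties. The sketch below assumes the properties (QED, SAscore, similarity to known drugs) were already calculated elsewhere; the `Candidate` class, the QED cutoff of 0.5, the SAscore cutoff of 4.0, the allowance of one Rule-of-5 violation, and all example values are illustrative assumptions, not values prescribed by the protocol.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Candidate:
    name: str
    mol_weight: float
    logp: float
    h_donors: int
    h_acceptors: int
    qed: float
    sa_score: float          # 1 (easy to make) to 10 (hard to make)
    max_sim_to_known: float  # highest Tanimoto similarity to any known drug

def rule_of_five_violations(c):
    """Count Lipinski Rule-of-5 violations."""
    return sum((c.mol_weight > 500, c.logp > 5, c.h_donors > 5, c.h_acceptors > 10))

def filter_candidates(candidates, max_similarity=0.4, min_qed=0.5, max_sa=4.0, limit=200):
    """Apply novelty, drug-likeness, and synthetic-accessibility filters in sequence."""
    kept = [
        c for c in candidates
        if c.max_sim_to_known < max_similarity   # novelty threshold
        and rule_of_five_violations(c) <= 1      # assumed tolerance: one violation
        and c.qed >= min_qed                     # assumed QED cutoff
        and c.sa_score <= max_sa                 # assumed SAscore cutoff
    ]
    # Easiest-to-make molecules first; retrosynthesis would run on this shortlist.
    return sorted(kept, key=lambda c: c.sa_score)[:limit]

# Illustrative property values only.
pool = [
    Candidate("A", 350, 2.1, 2, 5, 0.70, 3.0, 0.30),
    Candidate("B", 620, 6.2, 1, 4, 0.60, 3.5, 0.20),  # two Rule-of-5 violations
    Candidate("C", 400, 3.0, 1, 6, 0.80, 2.0, 0.55),  # too similar to known drugs
    Candidate("D", 410, 3.3, 2, 6, 0.65, 2.5, 0.35),
]
shortlist = filter_candidates(pool)
```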

Library Design Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Function in Exploration/Exploitation | Example / Notes |

|---|---|---|

| DNA-Encoded Library (DEL) | Enables ultra-high-throughput exploration (10^6-10^9 compounds) against purified protein targets. | Commercially available (e.g., from X-Chem, HitGen) or custom-built. |

| Surface Plasmon Resonance (SPR) Chip | Provides kinetic data (KD, kon, koff) during exploitation for binding optimization. | CM5 sensor chip for amine coupling of target protein. |

| Cryo-EM Grids | Enables structure-based exploitation of difficult targets without crystallization. | UltraFoil R1.2/1.3 gold grids for membrane proteins. |

| Phospholipid Vesicles (Nanodiscs) | Provides a native-like membrane environment for exploring membrane protein ligands. | MSP1E3D1 nanodiscs for GPCR stabilization. |

| Metabolic Stability Microsomes | Critical for exploitation-phase ADME/Tox profiling of lead series. | Human liver microsomes (HLM) for intrinsic clearance assays. |

FAQ 4: My exploitation campaign is stuck; potency gains are plateauing despite extensive analoging. What novel exploration strategies should I consider?

Answer: This is a classic signal to re-initiate exploration. Shift from local to global search.

- Scaffold Hop: Use computational methods (e.g., feature-based pharmacophores, shape similarity) to identify chemically distinct scaffolds that maintain key interactions.

- Allosteric Site Exploration: Perform a fragment-based screen (using X-ray crystallography or NMR) to identify binders in novel pockets.

- Covalent Library Screen: If applicable, screen a targeted covalent library (e.g., acrylamides) against a non-conserved cysteine to unlock new chemical space.

Overcoming Optimization Plateaus

Troubleshooting Guides & FAQs

Common Issues in Experimental Design

Q1: My contextual bandit model for virtual molecular screening is converging too quickly to a suboptimal set of compounds. How can I encourage more meaningful exploration? A: This is a classic sign of insufficient exploration, often due to an improperly tuned exploration parameter (e.g., ε in ε-greedy or the temperature in a softmax policy). First, log the action selection probabilities over time to confirm the issue. Recommended steps:

- Adaptive Epsilon Schedule: Instead of a fixed ε, use a decay schedule: ε_t = ε_initial / (1 + β * t), where t is the iteration and β is a decay rate (e.g., 0.01). Start with a high exploration rate (ε_initial = 0.3-0.5).

- Switch to Upper Confidence Bound (UCB): Implement UCB1 action selection: A_t = argmax_a [ Q_t(a) + c * sqrt( ln(t) / N_t(a) ) ], where c is a tunable confidence parameter (start with c=2.0). This explicitly balances the estimated reward Q and the uncertainty (inversely proportional to selection count N).

- Diagnostic Check: Ensure your reward function is correctly scaled. Normalize rewards (e.g., binding affinity scores) to a [0,1] range to prevent one high-but-accidental early reward from dominating.
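The three recommendations translate into short helper functions. This is a sketch under stated assumptions: the function names are hypothetical, untried arms are selected first (a common convention that avoids dividing by zero), and the normalization assumes that a higher raw reward is better, so docking scores where lower is better would need to be negated first.

```python
import math

def epsilon_at(t, eps_initial=0.4, beta=0.01):
    """Decayed exploration rate: eps_initial / (1 + beta * t)."""
    return eps_initial / (1.0 + beta * t)

def ucb1_select(q_values, counts, t, c=2.0):
    """Return argmax_a of Q(a) + c * sqrt(ln(t) / N(a)); untried arms go first."""
    for arm, n in enumerate(counts):
        if n == 0:
            return arm
    scores = [q + c * math.sqrt(math.log(t) / n) for q, n in zip(q_values, counts)]
    return max(range(len(scores)), key=scores.__getitem__)

def normalize_rewards(raw):
    """Min-max scale rewards to [0, 1]; a constant list maps to 0.5."""
    lo, hi = min(raw), max(raw)
    if hi == lo:
        return [0.5] * len(raw)
    return [(r - lo) / (hi - lo) for r in raw]
```

With equal reward estimates, `ucb1_select` prefers the arm that has been tried less often, which is the behaviour that counteracts premature convergence.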

Q2: When implementing Q-learning for a reaction condition optimization RL environment, the agent's performance collapses after a period of improvement. What could cause this? A: This "catastrophic forgetting" or divergence is often linked to unstable learning or non-stationarity.

- Primary Fix - Experience Replay: Do not learn from consecutive state transitions. Instead, store experiences (s_t, a_t, r_t, s_{t+1}) in a replay buffer (size: 10,000-50,000) and sample random mini-batches for training. This breaks temporal correlations.

- Secondary Fix - Target Network: Use a separate, slowly updated target network to calculate the max Q(s_{t+1}, a) target in the Q-learning update rule. Update this target network every τ steps (e.g., τ=100) by copying the weights from the main network. This stabilizes the learning target.

- Protocol: Implement the Deep Q-Network (DQN) architecture with the following hyperparameters as a starting point:

- Learning rate (α): 0.0001

- Discount factor (γ): 0.99

- Replay buffer size: 50,000

- Target network update frequency (τ): 100 steps

- Batch size: 32
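The two stabilizing components can be sketched without any deep-learning framework. The code below covers only the replay buffer, the target computation, and the sync schedule; the network itself is out of scope. The class and function names are hypothetical, and the `done` flag (marking the end of an episode) is an added assumption beyond the (s_t, a_t, r_t, s_{t+1}) tuple described above.

```python
import random
from collections import deque

class ReplayBuffer:
    """Fixed-capacity store of transitions; the oldest entries are dropped first."""

    def __init__(self, capacity=50_000, seed=None):
        self._buffer = deque(maxlen=capacity)
        self._rng = random.Random(seed)

    def add(self, state, action, reward, next_state, done):
        self._buffer.append((state, action, reward, next_state, done))

    def sample(self, batch_size=32):
        """Uniform random mini-batch, which breaks temporal correlations."""
        return self._rng.sample(list(self._buffer), batch_size)

    def __len__(self):
        return len(self._buffer)

def td_target(reward, next_q_from_target_net, done, gamma=0.99):
    """Q-learning target r + gamma * max_a Q_target(s', a); no bootstrap at episode end."""
    return reward if done else reward + gamma * max(next_q_from_target_net)

def should_sync_target(step, tau=100):
    """True on the steps where the target network copies the main network's weights."""
    return step > 0 and step % tau == 0
```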

Q3: How do I define the "state" for a bandit or RL agent in a real-world molecular design experiment where properties are not instantly known? A: This is a fundamental challenge in moving from simulation to wet-lab integration.

- For Bandits (Contextual): The "state" or "context" can be the computed molecular descriptors (e.g., Morgan fingerprints, molecular weight, logP) of the compound before it is synthesized or tested. The agent selects a molecule based on this pre-computed context.

- For RL with Delayed Reward: The state can be a history vector. For example, at step t, the state s_t could be the concatenation of the descriptors of the last k=3 molecules synthesized, along with their measured outcomes (or placeholders if results are pending). This requires a system to track the experimental pipeline's status.
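One way to build such a history vector is shown below. It is a sketch, not a prescribed encoding: the function name is hypothetical, and the choice to represent a pending result as a zero outcome plus a separate "measured" flag is an assumption made so that a placeholder cannot be confused with a real measurement.

```python
def build_state(history, k=3, n_descriptors=4):
    """Concatenate the last k molecules into one flat state vector.

    history: list of (descriptors, outcome) tuples, oldest first;
             outcome is None while the assay result is still pending.
    Each molecule contributes its descriptors, an outcome value, and a
    0/1 flag saying whether that outcome has actually been measured.
    """
    slot = n_descriptors + 2
    recent = history[-k:]
    # Left-pad with zeros when fewer than k molecules have been made so far.
    state = [0.0] * (slot * (k - len(recent)))
    for descriptors, outcome in recent:
        if len(descriptors) != n_descriptors:
            raise ValueError("unexpected descriptor length")
        measured = outcome is not None
        state.extend(descriptors)
        state.append(outcome if measured else 0.0)
        state.append(1.0 if measured else 0.0)
    return state

# Two molecules so far; the second result is still pending.
example_history = [([0.1, 0.2], 0.7), ([0.3, 0.4], None)]
example_state = build_state(example_history, k=3, n_descriptors=2)
```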

Algorithm Selection & Implementation FAQs

Q4: When should I choose a simple Multi-Armed Bandit (MAB) over a full Reinforcement Learning (RL) setup for my molecular search? A: Use the decision table below.

| Criterion | Multi-Armed Bandit (Contextual) | Full Reinforcement Learning (e.g., DQN, PPO) |

|---|---|---|

| State Definition | Single, static context per choice. | Sequential, evolving state over a "session" or synthetic pathway. |

| Decision Dependency | Each choice is independent; no long-term sequence planning. | Current choice critically affects future options and outcomes. |

| Typical Molecular Task | Selecting the best compound from a fixed library for a single assay. | Optimizing a multi-step process (e.g., designing a synthetic route, iteratively modifying a lead compound's scaffold). |

| Data & Complexity | Lower complexity, faster to implement and train. Suitable for smaller search spaces (<10k compounds) or limited initial data. | Higher complexity, requires more interaction data. Necessary for large, combinatorial chemical spaces or multi-objective optimization. |

| Example | "Which of these 2000 pre-enumerated molecules should I synthesize next for binding assay X?" | "How should I iteratively modify this lead molecule over 5 design cycles to optimize binding, solubility, and synthetic accessibility simultaneously?" |

Q5: What are the most critical hyperparameters to tune for Thompson Sampling in a Bayesian optimization-led bandit, and what are good starting values? A: Thompson Sampling performance hinges on the prior and reward model. Start with the following protocol:

Protocol: Implementing Thompson Sampling for a Continuous Reward (e.g., binding score)

- Model: Assume the reward r_a for arm (molecule) a follows a Gaussian distribution with unknown mean μ_a and known variance σ^2. Use a Gaussian prior for μ_a: N( μ_0, σ_0^2 ).

- Initialization: Set prior parameters. For normalized rewards (mean=0, std=1), use μ_0 = 0, σ_0 = 1. Set the observation noise σ = 1 (so σ^2 = 1).

- Update Rule: After observing reward r from arm a at time t:

- Let n_a be the number of times arm a has been pulled.

- Calculate the posterior for μ_a: N( μ_post, σ_post^2 ), where Σ r_i is the sum of all rewards observed so far from arm a:

μ_post = ( μ_0/σ_0^2 + (Σ r_i)/σ^2 ) / ( 1/σ_0^2 + n_a/σ^2 )

σ_post^2 = 1 / ( 1/σ_0^2 + n_a/σ^2 )

- Action Selection: At each step, for each arm a, sample a value μ_a_sample from its current posterior N( μ_post, σ_post^2 ). Select the arm with the highest sampled value.

- Tuning Focus: The key hyperparameter is the prior variance σ_0^2. A larger σ_0^2 (e.g., 10) implies higher initial uncertainty, encouraging more exploration. A smaller σ_0^2 (e.g., 0.1) makes the algorithm more conservative. Start with σ_0^2 = 1 and adjust based on the observed rate of exploration.
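The protocol maps directly onto a small class. The sketch below uses only the standard library; the class name and the `seed` argument are hypothetical conveniences, while the posterior update follows the Gaussian formulas in the protocol (known observation variance, Gaussian prior on each arm's mean).

```python
import random

class GaussianThompsonSampler:
    """Thompson Sampling with a Gaussian prior and known observation variance."""

    def __init__(self, n_arms, mu0=0.0, sigma0_sq=1.0, sigma_sq=1.0, seed=None):
        self.mu0 = mu0
        self.sigma0_sq = sigma0_sq      # prior variance: the main exploration knob
        self.sigma_sq = sigma_sq        # assumed-known observation variance
        self.counts = [0] * n_arms      # n_a for each arm
        self.reward_sums = [0.0] * n_arms
        self._rng = random.Random(seed)

    def posterior(self, arm):
        """Return (mu_post, sigma_post^2) for one arm."""
        precision = 1.0 / self.sigma0_sq + self.counts[arm] / self.sigma_sq
        mean = (self.mu0 / self.sigma0_sq + self.reward_sums[arm] / self.sigma_sq) / precision
        return mean, 1.0 / precision

    def select_arm(self):
        """Sample one value per arm from its posterior and pick the largest."""
        samples = []
        for arm in range(len(self.counts)):
            mean, var = self.posterior(arm)
            samples.append(self._rng.gauss(mean, var ** 0.5))
        return max(range(len(samples)), key=samples.__getitem__)

    def update(self, arm, reward):
        self.counts[arm] += 1
        self.reward_sums[arm] += reward
```

In a screening loop, `select_arm()` would choose the next molecule, the normalized assay result would be passed to `update()`, and the cycle would repeat. Raising `sigma0_sq` widens every prior and so keeps rarely tested arms competitive for longer.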

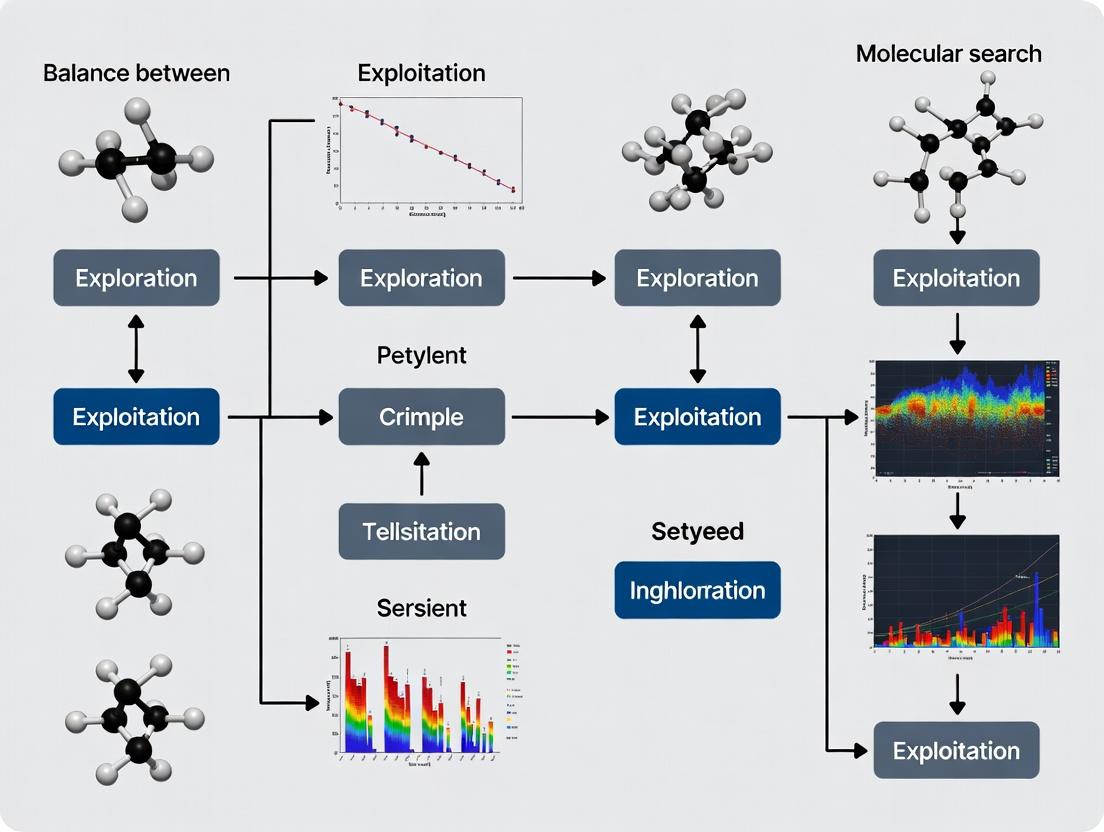

Visualizations

Title: Bandit/RL Molecular Search Iterative Workflow

Title: MAB vs RL Decision Structure in Molecular Search

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Bandit/RL Molecular Experiment |

|---|---|

| High-Throughput Screening (HTS) Assay Kits | Provides the "reward function" environment. Measures biological activity (e.g., binding, inhibition) for selected compounds, generating the quantitative feedback for the agent. |

| Chemical Database & Descriptor Software (e.g., RDKit) | Generates the "state/context" representation. Converts molecular structures into numerical feature vectors (fingerprints, descriptors) usable by the agent's model. |

| Automated Synthesis/Sample Handling Platform | The physical "action" executor. Enables the rapid synthesis or retrieval of the molecule selected by the agent, closing the loop between decision and experimental testing. |

| Bayesian Optimization Library (e.g., BoTorch, GPyOpt) | Implements probabilistic models for Thompson Sampling or Bayesian optimization bandits. Manages priors, posteriors, and acquisition function (exploration policy) calculations. |

| Reinforcement Learning Framework (e.g., Stable-Baselines3, Ray RLlib) | Provides pre-implemented, optimized RL algorithms (DQN, PPO, SAC) and utilities (replay buffers, environment wrappers) for developing sequential design agents. |

| Laboratory Information Management System (LIMS) | Tracks the state of experiments. Crucial for managing delayed rewards by logging compound status (planned, synthesized, under assay, completed) for accurate state representation. |

This technical support center addresses common challenges in navigating chemical space, framed within the essential research paradigm of balancing exploration (searching new regions) and exploitation (optimizing promising leads).

Troubleshooting Guides & FAQs

Q1: My virtual screening of a large library (e.g., 10^6 compounds) yielded zero hits with acceptable binding affinity. Is my docking protocol broken? A: Not necessarily. This often indicates an exploitation failure in a poorly explored region. First, validate your protocol with a known active control against your target. If that works, the issue is likely the chemical space coverage of your library. Shift strategy from pure exploitation to exploration: use a diverse subset screening or apply generative models to propose novel scaffolds outside your initial library's domain.

Q2: My lead compound series shows rapidly diminishing returns during optimization (SAR cliffs). How do I escape this local optimum? A: You are over-exploiting a narrow region. Implement a strategic exploration step:

- Analyze: Perform a matched molecular pair analysis to identify specific modifications causing the activity cliff.

- Pivot: Use a scaffold hop or topology-based search to generate structurally distinct analogs that maintain key pharmacophores but explore new geometry.

Q3: My generative AI model for molecule design keeps proposing similar, non-diverse structures. How do I improve exploration? A: This is a classic mode collapse. Adjust your exploration-exploitation balance within the algorithm.

- Troubleshoot: Check the reward function—it may be overly greedy for a single property (e.g., pIC50). Introduce diversity penalties or multi-objective rewards (e.g., including synthetic accessibility, lipophilicity).

- Protocol: Retrain with a batch-wise diversity filter or implement a reinforcement learning strategy with a curiosity reward for novel structural features.

Q4: Experimental HTS data and computational predictions for the same compound set are in conflict. Which should I trust for directing the next search iteration? A: Use discrepancy as a guide for targeted verification, a key step in active learning loops.

- Protocol:

- Curate Data: Clean both datasets (remove compounds with assay interference flags, check prediction confidence scores).

- Analyze Discrepancies: Tabulate compounds into consensus actives/inactives and disputed compounds.

- Strategic Test: Prioritize experimental re-testing of the disputed compounds. This focused experiment directly informs and improves your predictive model for the next cycle.
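The tabulation step can be sketched as a simple grouping function. It assumes both datasets have already been reduced to an active/inactive call per compound ID; the function name and the example IDs are hypothetical.

```python
def split_by_agreement(measured_active, predicted_active):
    """Group compounds by whether assay and model agree.

    Both arguments map compound ID -> bool (True means active).
    Only compounds present in both datasets are compared.
    """
    groups = {"consensus_active": [], "consensus_inactive": [], "disputed": []}
    for cid in sorted(measured_active.keys() & predicted_active.keys()):
        measured, predicted = measured_active[cid], predicted_active[cid]
        if measured and predicted:
            groups["consensus_active"].append(cid)
        elif not measured and not predicted:
            groups["consensus_inactive"].append(cid)
        else:
            groups["disputed"].append(cid)  # candidates for priority re-testing
    return groups

# Illustrative calls only.
hts_calls = {"cmpd-1": True, "cmpd-2": False, "cmpd-3": True, "cmpd-4": False}
model_calls = {"cmpd-1": True, "cmpd-2": True, "cmpd-3": False, "cmpd-4": False}
agreement = split_by_agreement(hts_calls, model_calls)
```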

Q5: How do I quantitatively decide when to keep optimizing a series and when to abandon it? A: Implement a go/no-go dashboard with key metrics. Continuously compare your current series against project thresholds and the potential of other explored series.

Table 1: Lead Series Progression Dashboard

| Metric | Exploitation Phase Target | Exploration Trigger Threshold | Measurement Protocol |

|---|---|---|---|

| Primary Potency (pIC50) | > 8.0 | < 6.5 for >50 new analogs | Dose-response assay (n=3, triplicate) |

| Selectivity Index | > 100-fold vs. related target | < 10-fold | Parallel assay against anti-target |

| Ligand Efficiency (LE) | > 0.35 | < 0.30 | LE = (1.37 * pIC50) / Heavy Atom Count |

| Synthetic Complexity | SAscore < 4.0 | SAscore > 6.0 | Calculate the synthetic accessibility score (SAscore) using RDKit |

| Patent Space Coverage | > 70 novel analogs | < 20 novel analogs feasible | Substructure search in patent databases |
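The dashboard thresholds can be checked programmatically. The sketch below encodes the "Exploration Trigger Threshold" column as written; the function names are hypothetical, and reading the potency trigger as "best pIC50 still below 6.5 after more than 50 new analogs" is an interpretation of the table entry.

```python
def ligand_efficiency(pic50, heavy_atom_count):
    """LE = (1.37 * pIC50) / heavy atom count, as defined in the dashboard."""
    return 1.37 * pic50 / heavy_atom_count

def exploration_triggers(best_pic50, n_new_analogs, selectivity_fold,
                         le, sa_score, novel_analogs_feasible):
    """Return the names of the dashboard metrics whose exploration trigger has fired."""
    checks = {
        "potency": best_pic50 < 6.5 and n_new_analogs > 50,
        "selectivity": selectivity_fold < 10,
        "ligand_efficiency": le < 0.30,
        "synthetic_complexity": sa_score > 6.0,
        "patent_space": novel_analogs_feasible < 20,
    }
    return [name for name, fired in checks.items() if fired]

# Illustrative series: weak potency after 60 analogs, otherwise acceptable.
series_le = ligand_efficiency(6.2, 32)
fired = exploration_triggers(6.2, 60, 25, series_le, 4.5, 40)
```

An empty list means no trigger has fired and the series can stay in exploitation; how many fired triggers justify abandoning a series remains a project-level decision.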

Experimental Protocols

Protocol 1: Diverse Subset Selection for Initial Exploration Screening Objective: To maximize the coverage of chemical space with a minimal compound set. Methodology:

- Input: Large library (e.g., corporate collection, purchasable set).

- Descriptor Calculation: Compute extended-connectivity fingerprints (ECFP4, radius 2) for all compounds.

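- Clustering: Use the Butina clustering algorithm (RDKit implementation) with a Tanimoto similarity cutoff of 0.6. Note that RDKit's Butina function takes a distance threshold, so this corresponds to a distance cutoff of 1 - 0.6 = 0.4.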

- Selection: From each cluster, select the compound closest to the cluster centroid.

- Output: A diverse subset (~1-5% of the original library) for primary screening.
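To make the clustering and selection steps concrete, here is a dependency-free sketch of Taylor-Butina-style clustering. It is not the RDKit implementation: fingerprints are represented as plain Python sets of "on" bit indices, the function names are hypothetical, and the quadratic pairwise loop is only practical for small sets. In a real workflow the fingerprints would come from a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)

def butina_clusters(fingerprints, similarity_cutoff=0.6):
    """Greedy clustering: the compound with the most neighbors seeds each cluster."""
    n = len(fingerprints)
    neighbors = [[] for _ in range(n)]
    for i in range(n):
        for j in range(i):
            if tanimoto(fingerprints[i], fingerprints[j]) >= similarity_cutoff:
                neighbors[i].append(j)
                neighbors[j].append(i)
    # Process compounds from most to fewest neighbors (ties keep input order).
    order = sorted(range(n), key=lambda i: -len(neighbors[i]))
    assigned = [False] * n
    clusters = []
    for centroid in order:
        if assigned[centroid]:
            continue
        members = [centroid] + [j for j in neighbors[centroid] if not assigned[j]]
        for m in members:
            assigned[m] = True
        clusters.append(members)  # members[0] is the cluster centroid
    return clusters

def diverse_subset(fingerprints, similarity_cutoff=0.6):
    """Pick one representative (the centroid) per cluster."""
    return [cluster[0] for cluster in butina_clusters(fingerprints, similarity_cutoff)]

# Toy fingerprints: two families plus one singleton.
toy_fps = [{1, 2, 3, 4}, {1, 2, 3, 4, 5}, {1, 2, 3, 4, 6},
           {10, 11, 12}, {10, 11, 12, 13}, {20, 21}]
picked = diverse_subset(toy_fps)
```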

Protocol 2: Automated Molecular Optimization with Balanced Multi-Parameter Scoring Objective: To iteratively propose new analogs that balance potency improvement with other key properties. Methodology:

- Define: A starting molecule (lead), a reaction library, and a multi-parameter scoring function (e.g., Score = 0.5 × ΔpIC50 + 0.2 × ΔLE - 0.3 × ΔSAscore).

- Generate: Apply all applicable reactions from the library to the lead to create a virtual progeny (e.g., 200 analogs).

- Predict: Use QSAR models to predict pIC50 and logP for all progeny. Calculate LE and SAscore.

- Score & Rank: Apply the scoring function to all progeny.

- Select: Synthesize and test the top 5 ranked compounds. Use the new data to retrain QSAR models for the next iteration.
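The score-and-rank steps reduce to a weighted sum of differences from the lead. The sketch below uses the example weights from the protocol; the function names, dictionary keys, and property values are hypothetical, and the predicted values are assumed to come from QSAR models that are not shown.

```python
def mpo_score(candidate, lead, weights=(0.5, 0.2, 0.3)):
    """Score = 0.5 * dpIC50 + 0.2 * dLE - 0.3 * dSAscore, each delta taken versus the lead."""
    w_potency, w_le, w_sa = weights
    return (w_potency * (candidate["pic50"] - lead["pic50"])
            + w_le * (candidate["le"] - lead["le"])
            - w_sa * (candidate["sa_score"] - lead["sa_score"]))

def select_for_synthesis(progeny, lead, top_n=5):
    """Rank virtual analogs by score and return the best top_n."""
    return sorted(progeny, key=lambda c: mpo_score(c, lead), reverse=True)[:top_n]

# Illustrative predicted properties only.
lead_props = {"name": "lead", "pic50": 7.0, "le": 0.30, "sa_score": 3.0}
analogs = [
    {"name": "a", "pic50": 7.8, "le": 0.32, "sa_score": 3.5},
    {"name": "b", "pic50": 7.4, "le": 0.33, "sa_score": 2.5},
    {"name": "c", "pic50": 6.8, "le": 0.28, "sa_score": 3.0},
]
chosen = select_for_synthesis(analogs, lead_props, top_n=2)
```

Because ΔLE is typically much smaller in magnitude than ΔpIC50 or ΔSAscore, the terms may need rescaling before the weights reflect the intended priorities.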

Visualizations

Diagram 1: The Strategic Search Cycle in Chemical Space

Diagram 2: Lead Optimization Decision Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Strategic Molecular Search

| Reagent / Tool | Function in Search Strategy | Key Provider Examples |

|---|---|---|

| Diverse Screening Library | Enables broad exploration of chemical space in initial campaigns. | Enamine REAL, ChemBridge DIVERSet, WuXi AppTec Core |

| DNA-Encoded Library (DEL) | Facilitates ultra-high-throughput exploration (10^6-10^9 compounds) against purified protein targets. | X-Chem, DyNAbind, Vipergen |

| Building Blocks for Analogs | Enables exploitation via rapid synthesis of analog series for SAR. | Enamine Building Blocks, Sigma-Aldrich, Combi-Blocks |

| Kinase/GPCR Panel Services | Provides critical selectivity data to exploit safely and avoid off-targets. | Eurofins DiscoverX, Reaction Biology, Cerep |

| Generative Chemistry Software | Uses AI to propose novel molecules, balancing exploration (novelty) and exploitation (property optimization). | BenevolentAI, Iktos, IBM RXN |

| ADMET Prediction Suite | Computational filters to prioritize molecules with higher probability of drug-like properties. | Simulations Plus ADMET Predictor, OpenADMET, Schrödinger QikProp |

Technical Support Center: Troubleshooting Guides & FAQs for Molecular Search

This support center is framed within the thesis of balancing exploration (novel target/compound discovery) and exploitation (optimization of known chemical matter) in modern drug discovery. The following guides address common experimental bottlenecks.

FAQs & Troubleshooting

Q1: Our high-throughput screening (HTS) campaign against Target X yielded an unusually high hit rate (>5%). How do we triage these results to avoid exploitation of assay artifacts? A1: A hit rate this high usually means that many of the hits are false positives. Follow this systematic triage protocol:

- Confirm Activity: Re-test all primary hits in a dose-response format (10-point, 1:3 serial dilution).

- Counter-Screen: Test compounds in an orthogonal assay format (e.g., switch from fluorescence to luminescence readout) to rule out technology interference.

- Assay Interference Check:

- Test for compound aggregation: Add 0.01% v/v Triton X-100. True inhibitors retain activity; aggregators lose it.

- Test for fluorescence quenching/interference: Include compound-only controls at all tested concentrations.

- Prioritize: Apply the steps above sequentially and advance only compounds that pass all of them to follow-up (exploitation). Table 2 shows a worked triage example.

Q2: Our AI/ML model for virtual screening consistently proposes molecules that are synthetically intractable or violate Lipinski's Rule of Five. How can we refine the search? A2: This is an exploration-exploitation balance issue. The model is exploring chemical space without sufficient constraints.

- Apply Hard Filters: Pre-filter the generative model's output with rules for synthetic accessibility (SAscore) and lead-like properties (MW <450, LogP <4).

- Retrain with Feedback: Incorporate a "druggability" penalty term into the model's loss function based on historical compound data from your organization.

- Implement a Hybrid Workflow: Use the AI model for initial exploration, then pass top candidates to a rules-based or fragment-based exploitation pipeline for optimization.

Q3: Our fragment-based lead discovery (FBLD) surface plasmon resonance (SPR) data shows binding, but no functional activity is observed in the cellular assay. What are the next steps? A3: This disconnect between binding and function is common. Follow this diagnostic pathway:

- Validate Binding Affinity: Confirm SPR binding kinetics with Isothermal Titration Calorimetry (ITC).

- Check Cell Permeability: Run a parallel artificial membrane permeability assay (PAMPA) or a cell-based uptake assay (e.g., LC-MS/MS detection).

- Investigate Target Engagement: Use a cellular thermal shift assay (CETSA) to confirm the fragment engages the target in the cellular milieu.

- Evaluate Mechanism: The fragment may bind an allosteric site without modulating function. Consider structural studies (X-ray crystallography/cryo-EM).

Detailed Experimental Protocols

Protocol 1: Orthogonal Assay for HTS Hit Validation (From FAQ A1)

- Objective: To confirm activity of primary HTS hits while eliminating false positives.

- Materials: Primary hit compounds, target protein, assay plates, reagents for primary assay (Fluorescence-based) and orthogonal assay (Luminescence-based).

- Method:

- Prepare compound dilution series in DMSO (10 mM stock, serially diluted).

- Transfer 50 nL of each dilution to a 384-well assay plate.

- For the Primary Assay Re-confirmation: Add fluorescence-based assay reagents according to original HTS protocol. Incubate and read.

- For the Orthogonal Assay: In a separate plate, add luminescence-based assay reagents that measure the same biochemical activity. Incubate and read.

- Calculate IC50/EC50 values for both assays. Prioritize compounds that show potent, congruent dose-response curves in both assays.

Protocol 2: Cellular Target Engagement via CETSA (From FAQ A3)

- Objective: To confirm fragment binding to the intracellular target protein.

- Materials: Live cells expressing target, fragment compound, vehicle control, heating block, qPCR tubes, lysis buffer, Western blot or ELISA kit for target protein.

- Method:

- Treat cells with fragment or vehicle for a predetermined time (e.g., 2 hours).

- Harvest cells, wash, and aliquot equal cell suspensions into PCR tubes.

- Heat each tube at a gradient of temperatures (e.g., 37°C to 67°C, 8 points) for 3 minutes.

- Lyse cells by freeze-thaw cycles.

- Centrifuge to separate soluble protein. Analyze supernatant for target protein abundance via Western blot/ELISA.

- Plot remaining soluble protein vs. temperature. A rightward shift in the melting curve (increased protein stability) for fragment-treated samples indicates cellular target engagement.
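The melting-curve shift in the final step can be quantified with a simple interpolation. This sketch assumes the soluble fraction has already been normalized to the lowest-temperature sample and estimates the apparent melting temperature as the point where the curve first crosses 50%; the function name, temperature grid, and all data values are hypothetical. A sigmoidal curve fit is the more usual choice when enough points are available.

```python
def melting_temperature(temps, fraction_soluble):
    """Temperature where the soluble fraction first drops below 0.5 (linear interpolation)."""
    points = list(zip(temps, fraction_soluble))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= 0.5 > f1:
            return t0 + (f0 - 0.5) * (t1 - t0) / (f0 - f1)
    raise ValueError("curve does not cross 50% soluble fraction")

# Illustrative 8-point gradient (deg C) and normalized soluble fractions.
temps_c = [37, 41, 45, 50, 54, 58, 62, 67]
vehicle = [1.0, 1.0, 0.9, 0.6, 0.4, 0.1, 0.0, 0.0]
treated = [1.0, 1.0, 1.0, 0.9, 0.7, 0.3, 0.1, 0.0]

tm_vehicle = melting_temperature(temps_c, vehicle)
tm_treated = melting_temperature(temps_c, treated)
delta_tm = tm_treated - tm_vehicle  # positive value = stabilization by the fragment
```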

Data Presentation

Table 1: Comparison of Molecular Search Strategies

| Strategy | Primary Goal (Exploration/Exploitation) | Avg. Hit Rate | Typical Timeline | Key Risk |

|---|---|---|---|---|

| High-Throughput Screening (HTS) | Exploration | 0.1% - 1% | 6-12 months | High false positive rate, cost |

| Virtual Screening (AI/ML) | Exploration | 1% - 10% (post-filtering) | 1-3 months | Synthetic tractability, model bias |

| Fragment-Based Lead Discovery (FBLD) | Balanced | >90% (binding), low functional | 12-24 months | Difficulty achieving cellular potency |

| Medicinal Chemistry Optimization | Exploitation | N/A (iterative) | 24+ months | Optimization dead-ends, PK/tox issues |

Table 2: Triage Analysis of Hypothetical HTS Campaign (From FAQ A1)

| Triage Step | Compounds Input | Compounds Output | Attrition Reason | Action |

|---|---|---|---|---|

| Primary HTS | 500,000 | 5,000 (1% hit rate) | N/A | Initial exploration |

| Dose-Response Confirm | 5,000 | 1,000 | Lack of potency/curve | Remove |

| Orthogonal Assay | 1,000 | 400 | Assay technology artifact | Remove |

| Aggregation Test (Triton) | 400 | 300 | Compound aggregation | Remove |

| Viable for Exploitation | 300 | - | - | Advance to lead optimization |

Pathway & Workflow Visualizations

Title: HTS Hit Triage Workflow to Isolate True Leads

Title: Fragment Screening Diagnostic Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Molecular Search Experiments

| Item | Function in Context | Example (Supplier) |

|---|---|---|

| Triton X-100 | Non-ionic detergent used to identify and eliminate compound aggregation-based false positives in biochemical assays. | Thermo Fisher Scientific (AC32737) |

| AlphaScreen/AlphaLISA Kits | Bead-based, no-wash assay technology for orthogonal confirmation of HTS hits (e.g., protein-protein interaction assays). | Revvity (formerly PerkinElmer) |

| CETSA Kits | Pre-optimized kits for cellular target engagement studies, often including specific antibodies and buffers. | Proteintech (K1002) |

| SPR Biosensor Chips (CM5) | Gold-standard sensor chips for measuring binding kinetics (KD, kon, koff) of fragments/hits to immobilized targets. | Cytiva (BR100530) |

| PAMPA Plate System | High-throughput tool to predict passive transcellular permeability of early-stage compounds. | Corning (4515) |

| SAscore Calculator | Computational tool integrated into cheminformatics pipelines to evaluate synthetic accessibility of AI-generated molecules. | RDKit/Pipelinable Component |

Troubleshooting Guides & FAQs

Q1: My molecular diversity sampling appears biased, how can I diagnose and correct this?

A: Bias in exploration breadth often stems from flawed library design or sampling algorithms. To diagnose:

- Calculate Scaffold and Feature Distributions: Compute the frequency of molecular scaffolds (e.g., using Bemis-Murcko skeletons) and key physicochemical property bins (e.g., molecular weight, logP, polar surface area) within your sampled set.

- Compare to Reference: Create a table comparing these distributions to your full virtual library or a known diverse set (e.g., ZINC20). Significant deviation indicates bias.

- Corrective Protocol: Implement a maximum common substructure (MCS) filter or use a diversity-picking algorithm like sphere exclusion or k-means clustering based on molecular fingerprints (ECFP4). Re-sample, ensuring weight is given to underrepresented regions of chemical space.

Q2: During exploitation, my focused library consistently yields compounds with poor synthetic accessibility (SA) scores. What is the issue?

A: This is a common exploitation depth problem where optimization drives scores into synthetically complex regions.

- Diagnosis: Calculate the SAscore (using RDKit or a similar toolkit) for all proposed molecules. A cluster of proposals with SAscore > 6 indicates a problematic trend.

- Solution: Integrate a synthetic accessibility penalty term into your objective function. Use a multi-parameter optimization (MPO) protocol that balances the primary activity score with SAscore and other drug-like properties. Re-run the proposal algorithm with this constrained objective.

Q3: The agent-based search model gets "stuck" optimizing a single scaffold and ignores other promising leads. How do I increase exploration?

A: This is a classic exploitation trap. Implement an "epsilon-greedy" or Upper Confidence Bound (UCB) strategy.

- Protocol: Modify your selection algorithm. For a fraction (epsilon, e.g., 5-10%) of iterations, force the agent to select a molecule at random from a diverse subset of the unexplored region, instead of choosing the top-scoring candidate.

- Metric Monitoring: Track the "scaffold novelty introduced per iteration" metric (see Table 1). This should show periodic spikes corresponding to exploration phases.

Q4: How do I quantitatively know if I am effectively balancing exploration and exploitation in a single campaign?

A: You must track paired metrics simultaneously. See Table 1 for the core metrics. A healthy campaign will show progressive increases in both Cumulative Unique Scaffolds (exploration) and Average Potency of Top-100 Compounds (exploitation) over iterations or time.

Experimental Protocols

Protocol 1: Measuring Exploration Breadth via Chemical Space Coverage Objective: Quantify the diversity of a tested compound set. Materials: Tested compound structures, a reference chemical database (e.g., ChEMBL), computing environment with RDKit/ChemAxon. Steps:

- For both your test set and a large reference set, compute 2D physicochemical descriptors (e.g., MW, LogP, HBD, HBA, TPSA) and ECFP4 fingerprints.

- Perform Principal Component Analysis (PCA) on the descriptor matrix. Use the first two principal components (PC1, PC2) to define a 2D chemical space.

- Draw a convex hull around your test set points in this PC space.

- Calculate Exploration Breadth Metric: Divide the area of your test set's convex hull by the area of the reference set's convex hull. This yields a "Relative Chemical Space Coverage" ratio (0-1).
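The hull-area ratio in the last two steps can be computed without external libraries. The sketch below assumes the PCA projection has already been done and that both sets are expressed in the same PC1/PC2 coordinates; the function names and points are hypothetical. The ratio stays within 0-1 only when the test set lies inside the reference hull, for example when it is a subset of the reference library.

```python
def convex_hull(points):
    """Andrew's monotone chain; returns hull vertices in counter-clockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]

def polygon_area(vertices):
    """Shoelace formula; returns 0 for fewer than three vertices."""
    total = 0.0
    for (x0, y0), (x1, y1) in zip(vertices, vertices[1:] + vertices[:1]):
        total += x0 * y1 - x1 * y0
    return abs(total) / 2.0

def coverage_ratio(test_points, reference_points):
    """Relative Chemical Space Coverage: test hull area / reference hull area."""
    ref_area = polygon_area(convex_hull(reference_points))
    if ref_area == 0:
        raise ValueError("reference set has zero hull area")
    return polygon_area(convex_hull(test_points)) / ref_area

# Illustrative (PC1, PC2) coordinates.
reference_pc = [(0, 0), (4, 0), (4, 4), (0, 4), (2, 2)]
tested_pc = [(0, 0), (2, 0), (2, 2), (0, 2), (1, 1)]
coverage = coverage_ratio(tested_pc, reference_pc)
```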

Protocol 2: Measuring Exploitation Depth via Potency Trend Analysis Objective: Quantify the improvement in compound quality within a focused region. Materials: Time-stamped assay data for a congeneric series, curve-fitting software. Steps:

- Isolate all compounds belonging to the top-3 most frequently sampled molecular scaffolds from your campaign.

- For each scaffold series, plot the measured potency (pIC50, pKi) against the chronological order of synthesis or testing.

- Fit a linear regression line to the data for each series.

- Calculate Exploitation Depth Metric: The slope of the regression line (ΔPotency/Iteration) is the "Local Optimization Rate." A steep positive slope indicates effective exploitation.
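As a worked illustration of the Local Optimization Rate, the slope can be obtained with an ordinary least-squares fit of potency against testing order. The sketch assumes the potencies of one scaffold series are already sorted chronologically and uses the index 0, 1, 2, ... as the iteration axis; the function name is hypothetical.

```python
def local_optimization_rate(potencies):
    """Least-squares slope of potency (e.g., pIC50) against testing order."""
    n = len(potencies)
    if n < 2:
        raise ValueError("need at least two measurements to fit a slope")
    mean_x = (n - 1) / 2.0                      # mean of 0..n-1
    mean_y = sum(potencies) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(potencies))
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    return sxy / sxx

if __name__ == "__main__":
    series = [5.0, 5.5, 6.0, 6.5]               # made-up pIC50 values
    print(local_optimization_rate(series))      # slope in pIC50 units per iteration
```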

Data Presentation

Table 1: Core Metrics for Balancing Molecular Search

| Metric Category | Specific Metric | Formula/Description | Ideal Trend |
|---|---|---|---|
| Exploration Breadth | Unique Scaffold Count | # of distinct Bemis-Murcko scaffolds tested | Increases over time, then plateaus |
| Exploration Breadth | Chemical Space Coverage | Area of convex hull in PCA space (see Prot. 1) | Rapid initial increase |
| Exploration Breadth | Novelty Rate | # of new scaffolds discovered per iteration | High early, decreases later |
| Exploitation Depth | Average Potency (Top-N) | Mean pIC50 of the best N compounds | Monotonically increases |
| Exploitation Depth | Local Optimization Rate | Slope of potency vs. time for a series (see Prot. 2) | Steady positive value |
| Exploitation Depth | Property Profile Success | % of Top-N compounds meeting ADMET criteria | Increases to >80% |
| Balance Metrics | Exploration-Exploitation Ratio | (New Scaffolds Sampled) / (Analogues of Top-Scaffold Sampled) | Decreases from >1 to <1 |
| Balance Metrics | Pareto Front Progress | # of non-dominated solutions in multi-parameter space | Increases steadily |

Table 2: Research Reagent Solutions Toolkit

| Item | Function | Example/Supplier |

|---|---|---|

| Diversity-Oriented Synthesis (DOS) Libraries | Provides broad, scaffold-diverse starting sets for exploration. | ChemDiv DOSet, Life Chemicals NTD |

| DNA-Encoded Library (DEL) Technology | Enables ultra-deep sampling (10^6-9) of chemical space for hit discovery. | X-Chem, Vipergen |

| Fragment Screening Library | Explores fundamental binding motifs with low molecular complexity. | Zenobia, Astex F2X |

| Analogue-Producing Building Blocks | Focused sets of reagents for rapid SAR exploitation around a hit. | Enamine REAL, Sigma-Aldrich |

| In Silico Design Software | Virtual screening & generative models for guided exploration/exploitation. | Schrodinger, OpenEye, REINVENT |

| High-Throughput Screening (HTS) Assays | Provides primary activity data for large, diverse sets (exploration). | Axxam, Eurofins |

| Medium-Throughput SAR Assays | Provides detailed data for focused libraries (exploitation). | Custom biochemical/biophysical |

Diagrams

Title: Molecular Search Strategy Divergence

Title: Exploitation Depth Optimization Pathways

Modern Algorithms and Practical Implementation in Drug Discovery Pipelines

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My Bayesian Optimization (BO) loop appears to get "stuck," repeatedly suggesting similar molecular structures and not exploring the chemical space effectively. How can I improve exploration?

A: This indicates an imbalance favoring exploitation. Implement or adjust the following:

- Increase κ (kappa) in Upper Confidence Bound (UCB): Start with a higher value (e.g., κ=5) to weight uncertainty more heavily, encouraging exploration of regions with high model variance.

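- Switch or modify the acquisition function: Consider using Expected Improvement (EI) with a larger ξ (xi) parameter, which demands a bigger margin over the current best and therefore favors uncertain regions. Probability of Improvement (PI) is usually the most exploitative of the common choices, so it helps only when paired with a similarly enlarged ξ.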

- Diversify the initial design: Ensure your initial dataset for the surrogate model (DoE) is space-filling (e.g., using Sobol sequences) and sufficiently large (>50 points for moderate-dimensional spaces).

- Periodically inject random samples: Introduce a small percentage (e.g., 5%) of purely random candidates into each iteration's batch to break cycles.
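To make the effect of κ and ξ concrete, the sketch below writes out UCB and EI for a maximization problem, given a surrogate mean and standard deviation per candidate. It uses only the standard library; the function names and the two toy candidates are assumptions for illustration.

```python
import math

def ucb(mu, sigma, kappa=2.0):
    """Upper Confidence Bound: larger kappa weights uncertainty more heavily."""
    return mu + kappa * sigma

def expected_improvement(mu, sigma, best, xi=0.01):
    """Expected Improvement over the incumbent `best` (maximization).

    xi > 0 requires a larger margin over the incumbent, which shifts
    the preference toward candidates with high predictive uncertainty.
    """
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mu - best - xi) * cdf + sigma * pdf

if __name__ == "__main__":
    # Candidate A: confident and good. Candidate B: uncertain, slightly worse mean.
    a, b = (1.0, 0.1), (0.8, 0.5)
    for kappa in (0.1, 5.0):
        pick = "A" if ucb(*a, kappa=kappa) > ucb(*b, kappa=kappa) else "B"
        print(f"kappa={kappa}: UCB prefers {pick}")
```

With a small κ the confident candidate wins; raising κ flips the choice to the uncertain one, which is the behavior the first bullet relies on.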

Q2: The optimization converges too quickly to a suboptimal region, likely due to a flawed surrogate model. What are the key diagnostic steps?

A: Follow this diagnostic protocol:

- Check model fit: Plot observed vs. predicted values for a held-out test set. Calculate quantitative metrics.

- Review kernel choice: A standard Matérn kernel is a robust default. For molecular descriptors, consider composite kernels (e.g., Matérn + WhiteKernel to model noise).

- Validate hyperparameters: Re-run the optimization of the Gaussian Process (GP) hyperparameters (length scales, noise) from multiple random starts to avoid poor local minima.

- Assess input features: The problem may lie with your molecular representation. Evaluate the sensitivity of predictions to small perturbations in the feature vector.

Key Diagnostic Metrics Table

| Metric | Formula | Target Value | Indication of Problem |
|---|---|---|---|
| Root Mean Square Error (RMSE) | $\sqrt{\frac{1}{N}\sum_{i=1}^{N}(y_i - \hat{y}_i)^2}$ | Close to measurement noise | High value indicates poor predictive accuracy. |
| Coefficient of Determination (R²) | $1 - \frac{\sum_i (y_i - \hat{y}_i)^2}{\sum_i (y_i - \bar{y})^2}$ | Close to 1.0 | Low or negative value indicates the model explains little variance. |
| Mean Standardized Log Loss (MSLL) | $\frac{1}{N}\sum_{i=1}^{N} \left[\frac{(y_i - \mu_i)^2}{2\sigma_i^2} + \frac{1}{2}\ln(2\pi\sigma_i^2)\right]$, minus the same quantity for a trivial Gaussian predictor that uses the training mean and variance | Negative (lower is better) | High positive values indicate poorly calibrated uncertainty estimates. |
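The three diagnostics can be computed directly from held-out observations and the surrogate's predictive means and variances. The sketch below is a plain-Python illustration with hypothetical function names; for MSLL it follows the usual convention of subtracting the log loss of a trivial Gaussian predictor built from the training mean and variance, so that negative values mean the model beats that baseline.

```python
import math

def rmse(y, y_hat):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(y, y_hat)) / len(y))

def r_squared(y, y_hat):
    mean_y = sum(y) / len(y)
    ss_res = sum((a - b) ** 2 for a, b in zip(y, y_hat))
    ss_tot = sum((a - mean_y) ** 2 for a in y)
    return 1.0 - ss_res / ss_tot

def mean_log_loss(y, mu, var):
    """Average negative log predictive density under Gaussian predictions."""
    return sum(
        (a - m) ** 2 / (2.0 * v) + 0.5 * math.log(2.0 * math.pi * v)
        for a, m, v in zip(y, mu, var)
    ) / len(y)

def msll(y, mu, var, train_mean, train_var):
    """Log loss of the model minus that of a trivial Gaussian baseline."""
    n = len(y)
    baseline = mean_log_loss(y, [train_mean] * n, [train_var] * n)
    return mean_log_loss(y, mu, var) - baseline

if __name__ == "__main__":
    y_test = [1.0, 2.0, 3.0]
    mu_pred = [1.1, 1.9, 3.2]
    var_pred = [0.05, 0.05, 0.05]
    print(rmse(y_test, mu_pred), r_squared(y_test, mu_pred))
    print(msll(y_test, mu_pred, var_pred, train_mean=2.0, train_var=1.0))
```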

Q3: How do I handle discrete and mixed-type variables (e.g., categorical functional groups, integer counts) within a BO framework for molecules?

A: Standard GPs assume continuous inputs. Use these adaptation strategies:

- One-Hot Encoding: Transform categorical variables into binary vectors. Use a kernel that operates on this representation, like a dot product kernel combined with a continuous kernel.

- Specialized Kernels: Implement kernels designed for discrete spaces, such as the Hamming kernel for categorical variables or the Tanimoto kernel for molecular fingerprints.

- Latent Variable Approach: Embed discrete choices into a continuous latent space learned jointly with the GP model.
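As a small illustration of a kernel defined directly on discrete inputs, the Tanimoto kernel on binary fingerprints can be written as follows. Fingerprints are represented here as Python sets of on-bit indices, which is an assumption of this sketch, as is the convention of returning 1.0 for two empty fingerprints.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 1.0  # convention chosen for this sketch
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)

def tanimoto_gram(fingerprints):
    """Kernel (Gram) matrix for a list of fingerprints, as nested lists."""
    return [[tanimoto(x, y) for y in fingerprints] for x in fingerprints]

if __name__ == "__main__":
    fps = [{1, 2, 3}, {2, 3, 4}, {7, 8}]
    for row in tanimoto_gram(fps):
        print(row)
```

The resulting matrix can replace the usual continuous-kernel covariance inside a GP implementation that accepts a precomputed kernel.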

Q4: Batch parallelization is essential for my high-throughput screening. How can I run parallel BO without invalidating the acquisition function?

A: Use batch acquisition strategies that penalize intra-batch similarity:

- Local Penalization: Approximate the acquisition function and then iteratively penalize areas around already-selected points in the same batch.

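- Thompson Sampling: Draw q independent sample functions from the posterior GP and take the maximizer of each sample as one batch member, so that posterior randomness spreads the batch across plausible optima.

- q-Acquisition Functions: Use formal q-EI or q-UCB methods that select a batch of q points by integrating over the joint posterior of their outcomes (principled but computationally intensive; the integral is usually estimated by Monte Carlo sampling).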

Experimental Protocol: Implementing a Balanced BO Cycle for Molecular Property Optimization

Objective: To optimize a target molecular property (e.g., binding affinity prediction score) while maintaining a balance between exploring novel chemical regions and exploiting known high-performance scaffolds.

Materials & Reagents: Research Reagent Solutions Table

| Item | Function & Specification |

|---|---|

| Molecular Dataset | Curated set of molecules with associated property data (e.g., ChEMBL, PubChem). Serves as initial Design of Experiments (DoE). |

| Fingerprint/Descriptor Generator | Software (e.g., RDKit) to convert SMILES strings to numerical features (e.g., ECFP4 fingerprints, physico-chemical descriptors). |

| Gaussian Process Library | Python library (e.g., GPyTorch, scikit-learn) to build the surrogate model that predicts property and uncertainty. |

| Acquisition Function Optimizer | Global optimizer (e.g., L-BFGS-B, DIRECT, or a genetic algorithm) to find the molecule maximizing the acquisition function. |

| Molecular Sampler/Generator | Method to propose new candidate molecules (e.g., a chemical space enumeration tool, a genetic algorithm, or a SMILES generator). |

| Property Evaluation Function | An in silico model (e.g., QSAR, docking score) or an in vitro assay protocol to yield the target property value for new molecules. |

Methodology:

- Initialization (DoE): Select N_init (e.g., 50) diverse molecules from your available space. Compute their target property values to form the initial dataset D = {(x_i, y_i)}.

- Surrogate Model Training: Train a Gaussian Process on D. Standardize the y values. Optimize kernel hyperparameters by maximizing the marginal log-likelihood.

- Acquisition Function Maximization:

  - Define the balance parameter (e.g., κ for UCB).

  - Using your molecular sampler, generate a large candidate pool C.

  - Compute the mean μ(x) and variance σ²(x) for all x in C using the trained GP.

  - Calculate the acquisition function a(x) (e.g., UCB(x) = μ(x) + κ * σ(x)) for each candidate.

  - Select the top q candidates (q = batch size) maximizing a(x).

- Parallel Evaluation: Subject the selected q candidates to the property evaluation function (simulation or experiment) to obtain their true values y_new.

- Data Augmentation & Iteration: Augment the dataset: D = D ∪ {(x_new, y_new)}. Return to Surrogate Model Training (Step 2). Continue for a predefined number of iterations or until performance plateaus.

- Analysis: Plot the best observed property value vs. iteration number to assess the efficiency and balance of the search.
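The control flow of the methodology above can be sketched as a short loop. To stay dependency-free, this example deliberately swaps the Gaussian Process for a crude stand-in surrogate (mean of the nearest labeled neighbors, with distance to the nearest labeled point as the uncertainty) and uses a one-dimensional toy "descriptor" with random rather than diversity-based initialization. Every name here is hypothetical; only the loop structure (fit, score with UCB, take the top q, evaluate, augment) mirrors the protocol.

```python
import random

def knn_surrogate(data, k=3):
    """Stand-in surrogate, NOT a GP: returns a function x -> (mu, sigma)."""
    def predict(x):
        nearest = sorted(data, key=lambda d: abs(d[0] - x))[:k]
        mu = sum(y for _, y in nearest) / len(nearest)
        sigma = abs(nearest[0][0] - x)  # farther from labeled data = less certain
        return mu, sigma
    return predict

def bo_cycle(objective, pool, n_init=5, q=2, n_iter=5, kappa=2.0, seed=0):
    rng = random.Random(seed)
    pool = list(pool)
    rng.shuffle(pool)
    # Step 1: initial design (random here; the protocol asks for a diverse set).
    data = [(x, objective(x)) for x in pool[:n_init]]
    unlabeled = pool[n_init:]
    history = [max(y for _, y in data)]
    for _ in range(n_iter):
        if not unlabeled:
            break
        predict = knn_surrogate(data)            # Step 2: (re)train surrogate

        def acquisition(x):                      # Step 3: UCB = mu + kappa * sigma
            mu, sigma = predict(x)
            return mu + kappa * sigma

        unlabeled.sort(key=acquisition, reverse=True)
        batch, unlabeled = unlabeled[:q], unlabeled[q:]
        data.extend((x, objective(x)) for x in batch)  # Steps 4-5: evaluate, augment
        history.append(max(y for _, y in data))
    return data, history

if __name__ == "__main__":
    toy_objective = lambda x: -(x - 3.7) ** 2    # toy property with optimum at 3.7
    candidates = [i / 10 for i in range(51)]
    _, best_so_far = bo_cycle(toy_objective, candidates)
    print(best_so_far)                           # Step 6: best value per iteration
```

In a real campaign the `knn_surrogate` placeholder would be replaced by a GP from one of the libraries listed in the materials table, and the candidates would be fingerprint or descriptor vectors rather than scalars.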

Visualizations

Diagram 1: BO Cycle for Molecular Design

Diagram 2: The Exploration-Exploitation Trade-off in Acquisition

Active Learning and Diversity Selection for Efficient Exploration

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My active learning loop is selecting very similar molecules in each iteration, leading to poor exploration. How can I improve diversity?

A: This is a classic exploitation bias. Implement a diversity selection module. Use a distance metric (e.g., Tanimoto distance on Morgan fingerprints) to ensure new batches are not only high-scoring but also dissimilar from each other and the training set. A common strategy is to use MaxMin sampling: for each candidate in a pool, calculate its minimum distance to the already-selected batch and the existing training data, then select the candidate with the maximum of these minimum distances.
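A minimal MaxMin selection might look like the sketch below. Fingerprints are modeled as sets of on-bit indices and compared with Tanimoto distance; the function names are illustrative, and ties are broken by pool order, which is an arbitrary choice of this example.

```python
def tanimoto_distance(a, b):
    union = len(a | b)
    return 0.0 if union == 0 else 1.0 - len(a & b) / union

def maxmin_select(pool, reference, batch_size):
    """Greedy MaxMin: returns indices into `pool`.

    pool: candidate fingerprints (sets of on-bit indices).
    reference: fingerprints of the existing training data.
    """
    # Minimum distance of every candidate to anything already "taken".
    min_dist = [
        min((tanimoto_distance(fp, r) for r in reference), default=float("inf"))
        for fp in pool
    ]
    chosen = []
    for _ in range(min(batch_size, len(pool))):
        remaining = (i for i in range(len(pool)) if i not in chosen)
        pick = max(remaining, key=lambda i: min_dist[i])
        chosen.append(pick)
        # The new pick now counts as "taken": tighten the minimum distances.
        for i, fp in enumerate(pool):
            min_dist[i] = min(min_dist[i], tanimoto_distance(fp, pool[pick]))
    return chosen

if __name__ == "__main__":
    training = [{1, 2, 3}]
    candidates = [{1, 2, 3}, {1, 2, 4}, {7, 8, 9}, {7, 8, 10}]
    print(maxmin_select(candidates, training, batch_size=2))
```

In this toy run the first pick is a candidate sharing no bits with the training set, and the second pick avoids its close analogue.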

Q2: The surrogate model's predictions are inaccurate for regions of chemical space far from the training data. How should I handle this?

A: This indicates high model uncertainty in unexplored areas. Use an acquisition function that balances exploration (high uncertainty) and exploitation (high predicted score). Implement Upper Confidence Bound (UCB) or Thompson Sampling. For probabilistic models (e.g., Gaussian Process), query points with the highest predictive variance. For other models, train an ensemble; use the standard deviation of ensemble predictions as an uncertainty metric and select points where this is high.

Q3: My computational budget for property evaluation (e.g., docking, simulation) is very limited. What's the most efficient experimental protocol?

A: Adopt a batch-mode active learning protocol with a diversity-uncertainty hybrid query strategy.

- Initialization: Randomly select and evaluate a small, diverse seed set (50-100 molecules).

- Surrogate Model Training: Train your predictive model (e.g., Graph Neural Network, Random Forest) on all evaluated data.

- Batch Selection: From a large, unlabeled pool (~10k molecules):

  a. Calculate predictions and uncertainty estimates for all molecules.

  b. Shortlist the top 20% by predicted score (exploitation).

  c. From this shortlist, apply MaxMin diversity selection (see Q1) to choose the final batch (e.g., 10-20 molecules) for evaluation.

- Iteration: Evaluate the batch, add data to the training set, retrain the model, and repeat from step 3 until the budget is exhausted.

Q4: How do I quantitatively know if my search strategy is effectively balancing exploration and exploitation?

A: Monitor key metrics throughout the campaign and log them in a table for each iteration.

Table 1: Key Performance Metrics for Active Learning Campaigns

| Metric | Formula/Description | Target | Interpretation |

|---|---|---|---|

| Cumulative Max | Highest activity score found up to iteration t | Monotonically increasing | Measures exploitation success. |

| Average Batch Diversity | Mean pairwise distance within each acquired batch | Stable or slowly decreasing | High values indicate sustained exploration. |

| Exploration Ratio | (Avg. min. distance of batch to training set) / (Avg. intra-batch distance) | ~1.0 (balanced) | >>1: over-exploration. <<1: over-exploitation. |

| Model Uncertainty | Avg. predictive variance/ensemble std. dev. of acquired batch | Initially high, then decreasing | Validates exploration of uncertain regions. |

| Hit Rate | % of molecules in batch exceeding a score threshold S | Ideally increases over time | Measures efficient identification of actives. |
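Several of the metrics in the table reduce to a few lines of arithmetic once fingerprints and scores are available. The sketch below assumes fingerprints are sets of on-bit indices, uses Tanimoto distance throughout, and needs a batch of at least two non-identical molecules; the function names are hypothetical.

```python
def tanimoto_distance(a, b):
    union = len(a | b)
    return 0.0 if union == 0 else 1.0 - len(a & b) / union

def average_batch_diversity(batch):
    """Mean pairwise distance within one acquired batch."""
    pairs = [(i, j) for i in range(len(batch)) for j in range(i + 1, len(batch))]
    return sum(tanimoto_distance(batch[i], batch[j]) for i, j in pairs) / len(pairs)

def exploration_ratio(batch, training):
    """(Avg. min. distance of batch to training set) / (avg. intra-batch distance)."""
    to_training = sum(
        min(tanimoto_distance(b, t) for t in training) for b in batch
    ) / len(batch)
    return to_training / average_batch_diversity(batch)

def hit_rate(scores, threshold):
    """Percentage of batch scores strictly above the threshold S."""
    return 100.0 * sum(s > threshold for s in scores) / len(scores)

if __name__ == "__main__":
    batch = [{1, 2}, {3, 4}]
    training = [{1, 2}]
    print(average_batch_diversity(batch), exploration_ratio(batch, training))
    print(hit_rate([1.0, 5.0, 7.0, 9.0], threshold=6.0))
```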

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for an Active Learning-Driven Molecular Search

| Item | Function in the Experiment |

|---|---|

| Molecular Library (e.g., ZINC20, Enamine REAL) | Large, searchable virtual pool of synthesizable compounds representing the explorable chemical space. |

| Molecular Fingerprints (e.g., ECFP4, Morgan) | Numerical vector representations of molecular structure enabling similarity/distance calculations for diversity selection. |

| Surrogate Model (e.g., Directed Message Passing Neural Network, Gaussian Process Regression) | Machine learning model trained on existing data to predict molecular properties, enabling fast virtual screening. |

| Uncertainty Quantification Method (e.g., Ensemble, Monte Carlo Dropout, Bayesian NN) | Technique to estimate the model's confidence in its predictions, crucial for identifying exploration frontiers. |

| Acquisition Function (e.g., UCB, Expected Improvement) | Algorithmic rule that uses the surrogate model's prediction and uncertainty to score and rank candidate molecules for the next experiment. |

| Diversity Selection Algorithm (e.g., MaxMin, K-Means Clustering, Leaderboard) | Method to ensure selected molecules are structurally diverse, preventing cluster bias and promoting broad exploration. |

| Property Evaluation Engine (e.g., Molecular Docking, MD Simulation, In Vitro Assay) | The (often costly) ground-truth experiment that provides training labels for the surrogate model. |

Experimental Protocol: Batch Active Learning for Virtual Screening

Title: Iterative Batch Selection for Molecular Property Optimization

Methodology:

- Data Preparation: Assemble a curated virtual library (e.g., 50,000 molecules) and compute their Morgan fingerprints (radius=2, nBits=2048).

- Initial Seed Set: Use k-medoids clustering on the fingerprints to select 100 maximally diverse molecules as the initial training set. Obtain their target property values (e.g., docking score) to form labeled data D.

- Iterative Loop (Repeat for N cycles, e.g., 20 cycles):

  a. Model Training: Train an ensemble of 5 Random Forest regressors on D to predict property y from fingerprints x.

  b. Pool Prediction: For all unlabeled molecules in the library U, obtain the mean prediction (μ) and standard deviation (σ) from the ensemble.

  c. Acquisition Scoring: Calculate the Upper Confidence Bound score: UCB(x) = μ(x) + κ * σ(x), where κ is an exploration weight (start with κ=3.0).

  d. Candidate Shortlisting: Rank U by UCB and retain the top 1000 candidates.

  e. Diverse Batch Selection: Apply the MaxMin algorithm on the fingerprints of the shortlist, with reference to D, to select the final batch B of 25 molecules.

  f. Expensive Evaluation: Obtain the true property value for each molecule in batch B via the high-fidelity method (e.g., docking).

  g. Data Augmentation: Add the new (x, y) pairs from B to the training set: D = D ∪ B.

- Analysis: Plot the cumulative maximum property value and average batch diversity versus iteration number to assess performance.
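Steps b through d of the loop (ensemble statistics, UCB scoring, shortlisting) can be sketched as below. The input is assumed to be a list of per-model prediction lists, which is a stand-in for the outputs of the five Random Forest regressors; the population standard deviation is used, though the sample standard deviation would be an equally defensible choice. The shortlist indices would then go to the MaxMin step (step e), which is not repeated here.

```python
import statistics

def ensemble_ucb(predictions, kappa=3.0):
    """UCB score per candidate from ensemble predictions.

    predictions: list with one inner list per ensemble member,
        each holding that member's prediction for every candidate.
    """
    scores = []
    for per_candidate in zip(*predictions):
        mu = statistics.fmean(per_candidate)
        sigma = statistics.pstdev(per_candidate)  # spread across ensemble members
        scores.append(mu + kappa * sigma)
    return scores

def shortlist(scores, top_n):
    """Indices of the top_n candidates by acquisition score, best first."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return ranked[:top_n]

if __name__ == "__main__":
    # Two ensemble members, three candidates (made-up numbers).
    preds = [[1.0, 0.0, 0.5],
             [1.0, 2.0, 0.5]]
    ucb_scores = ensemble_ucb(preds, kappa=3.0)
    print(ucb_scores, shortlist(ucb_scores, top_n=2))
```

The second candidate has the same mean as the first but the members disagree about it, so with κ=3.0 it ranks first.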

Visualizations

Diagram 1: Active Learning Cycle for Molecular Search

Diagram 2: Exploration-Exploitation Trade-off in Acquisition

Reinforcement Learning (RL) for de novo Molecular Generation

Technical Support Center

Troubleshooting Guides

Issue 1: Agent Fails to Generate Valid Molecular Structures

- Problem: The RL agent outputs strings that are not parsable by the chemical representation toolkit (e.g., SMILES, SELFIES), resulting in 100% invalid generation.

- Diagnosis: This is often an exploration-exploitation imbalance where the agent explores syntax spaces too aggressively without exploiting known grammatical rules.

- Solution:

- Implement Curriculum Learning: Start training with a simplified action space or shorter sequence lengths.

- Adjust Reward Shaping: Introduce a small negative reward (-0.1) for each invalid step during early training to guide exploitation of valid syntax.

- Switch Representation: Transition from SMILES to SELFIES, which has a guaranteed 100% validity rate, to isolate policy learning from grammar constraints.

Issue 2: Mode Collapse in Generative Model

- Problem: The generator produces a very limited set of highly similar molecules, despite a diverse training set, indicating failed exploration.

- Diagnosis: The discriminator or reward model over-exploits a specific high-scoring region, causing the generator to collapse.

- Solution:

- Apply Gradient Penalty: Use a Wasserstein GAN with Gradient Penalty (WGAN-GP) to stabilize training and prevent discriminator over-fitting.

- Introduce Stochasticity: Add a diversity-promoting term (e.g., based on Tanimoto similarity) to the reward function: R_total = R_property + λ * Diversity(P_generated).

- Use an Experience Replay Buffer: Maintain a large buffer of past generated molecules. Sample batches from this buffer to prevent the policy from over-optimizing for the current discriminator's preferences.
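One way to realize the diversity-augmented reward R_total = R_property + λ * Diversity is to score each molecule by how dissimilar it is, on average, from the rest of the generated batch. That per-molecule definition of the diversity term is an assumption of this sketch (the formula in the text leaves it open), as are the function names and the set-based fingerprints.

```python
def tanimoto_similarity(a, b):
    union = len(a | b)
    return 1.0 if union == 0 else len(a & b) / union

def diversity(fp, others):
    """1 minus the mean Tanimoto similarity of `fp` to the other batch members."""
    if not others:
        return 0.0
    mean_sim = sum(tanimoto_similarity(fp, o) for o in others) / len(others)
    return 1.0 - mean_sim

def total_reward(r_property, fp, others, lam=0.5):
    """R_total = R_property + lambda * Diversity."""
    return r_property + lam * diversity(fp, others)

if __name__ == "__main__":
    batch = [{1, 2}, {1, 2}, {3, 4}]
    property_scores = [0.8, 0.8, 0.6]
    for i, (fp, r) in enumerate(zip(batch, property_scores)):
        rest = batch[:i] + batch[i + 1:]
        print(i, total_reward(r, fp, rest))
```

Duplicated molecules earn a smaller bonus than the structurally distinct one, which counteracts collapse onto a single high-scoring motif.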

Issue 3: Reward Hacking or Optimization Artifacts

- Problem: Molecules achieve high predicted reward (e.g., QED, binding affinity) but contain chemically meaningless or unstable substructures (e.g., long carbon chains without stabilizing groups).

- Diagnosis: The reward function is incomplete, allowing the agent to exploit the predictive model's weaknesses.

- Solution:

- Multi-Objective Reward: Combine the primary objective with penalizing rewards from a ring-based penalty or a synthetic accessibility (SA) score.

- Adversarial Validation: Train a classifier to distinguish between generated molecules and known drug-like molecules (e.g., from ChEMBL). Use its output as a regularization reward.

- Post-Hoc Filtering: Implement a rule-based filter to remove molecules with undesired substructures (e.g., PAINS) before they are added to the experience buffer.

Issue 4: Unstable or Divergent Training Loss

- Problem: The policy gradient loss exhibits large spikes or diverges, making learning impossible.

- Diagnosis: Typically caused by too high a learning rate, poor normalization of advantages, or extremely large gradient updates.

- Solution:

- Gradient Clipping: Enforce a maximum norm (e.g., 0.5) for policy gradient updates.

- Advantage Normalization: Normalize advantages within each batch to have zero mean and unit variance.

- Tune Hyperparameters Systematically: Follow a protocol to find optimal settings for learning rate, entropy coefficient, and discount factor (γ).
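The first two stabilizers are simple enough to write out. The sketch below operates on plain lists of floats so that it runs without a deep learning framework; in practice the same operations would be applied to tensors through the framework's own utilities. The function names and the epsilon guard are choices made for this example.

```python
import math

def normalize_advantages(advantages, eps=1e-8):
    """Shift and scale a batch of advantages to zero mean and unit variance."""
    n = len(advantages)
    mean = sum(advantages) / n
    std = math.sqrt(sum((a - mean) ** 2 for a in advantages) / n)
    return [(a - mean) / (std + eps) for a in advantages]

def clip_by_global_norm(grads, max_norm=0.5):
    """Rescale the gradient vector if its L2 norm exceeds max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]

if __name__ == "__main__":
    print(normalize_advantages([1.0, 2.0, 3.0]))
    print(clip_by_global_norm([3.0, 4.0], max_norm=0.5))
```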

Frequently Asked Questions (FAQs)

Q1: How do I quantitatively balance exploration and exploitation in molecular RL?

A: Use metrics that separately capture each aspect. Track Exploitation via the average property score of the top 10% generated molecules. Track Exploration via the internal diversity (average pairwise Tanimoto dissimilarity) of a generated batch (e.g., 1000 molecules). Aim for improvements in both over time.

Q2: What is a recommended benchmark setup to compare different RL algorithms for this task?

A: Use the GuacaMol benchmark suite. A standard protocol is:

- Objective: Maximize the Quantitative Estimate of Drug-likeness (QED) score.

- Baselines: Compare against Hill-Climb, Best of 1000, and a random sampler.

- Key Metrics: Report the Score (best QED found), Diversity (average pairwise Tanimoto distance in final population), and Number of Calls to the scoring function (efficiency).

Q3: My agent learns slowly. What are the most impactful speed optimizations?

A: 1) Vectorized Environment: Use parallelized molecular generation (e.g., 64-128 workers) to gather more experience per second. 2) Pre-computed Features: Cache calculated molecular descriptors/fingerprints. 3) Simplified Reward Model: Start with a fast, approximate reward function (like a random forest QSAR model) before switching to a more accurate, slower one (like a docking simulation).

Q4: How do I integrate prior chemical knowledge (biasing) into the RL process without stifling creativity?

A: Implement a Bias-Reward mechanism. Use a pretrained model on a large corpus of known molecules (e.g., a GPT on PubChem SMILES) to assign a likelihood (P_prior) to a generated molecule. The final reward becomes: R = R_objective + β * log(P_prior). Adjust β to control the strength of the bias, balancing prior knowledge exploitation with novel space exploration.
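The effect of β is easy to see numerically. The sketch below evaluates R = R_objective + β * log(P_prior) for a familiar-looking and an unusual molecule; the likelihood values are invented for illustration, and the small floor on P_prior is an added safeguard against log(0) that is not part of the formula in the text.

```python
import math

def biased_reward(r_objective, p_prior, beta=0.1, floor=1e-300):
    """R = R_objective + beta * log(P_prior), with a floor to avoid log(0)."""
    return r_objective + beta * math.log(max(p_prior, floor))

if __name__ == "__main__":
    familiar = {"r": 0.70, "p_prior": math.exp(-20.0)}   # typical under the prior
    unusual = {"r": 0.90, "p_prior": math.exp(-45.0)}    # novel but unlikely
    for beta in (0.0, 0.005, 0.05):
        rf = biased_reward(familiar["r"], familiar["p_prior"], beta)
        ru = biased_reward(unusual["r"], unusual["p_prior"], beta)
        print(f"beta={beta}: familiar={rf:.3f}, unusual={ru:.3f}")
```

With β = 0 the unusual molecule wins on objective score alone; as β grows, the prior penalty eventually reverses the ranking.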

Data Presentation

Table 1: Performance Comparison of RL Algorithms on GuacaMol QED Benchmark

| Algorithm | Best QED Score (↑) | Top 100 Diversity (↑) | Scoring Function Calls (↓) | Key Exploration Mechanism |

|---|---|---|---|---|

| REINVENT | 0.948 | 0.856 | ~5,000 | Augmented Likelihood (Prior) |

| MolDQN | 0.927 | 0.912 | ~15,000 | ε-Greedy & Experience Replay |

| GraphGA | 0.943 | 0.905 | ~20,000 | Genetic Crossover/Mutation |

| Best of 1000 (Baseline) | 0.948 | 0.802 | 1,000 | Random Sampling |

Table 2: Impact of Entropy Coefficient (β) on Exploration-Exploitation Trade-off (Experiment: PPO agent trained for 2,000 steps to maximize Penalized LogP)

| Entropy Coefficient (β) | Avg. Final Reward (↑) | Valid Molecule % (↑) | Unique Molecule % (↑) | Description |

|---|---|---|---|---|

| 0.01 | 2.34 ± 0.41 | 98.5% | 65.2% | High exploitation, lower diversity |

| 0.10 | 3.01 ± 0.52 | 99.1% | 82.7% | Balanced trade-off |

| 1.00 | 1.89 ± 0.87 | 99.4% | 96.3% | High exploration, lower reward |

Experimental Protocols

Protocol 1: Training a REINVENT-style Agent with a Prior

Objective: Generate novel molecules with high ScafHop score (scaffold hopping potential).

- Data Preparation: Curate a set of 10,000 known active molecules from a target family (e.g., kinases). Compute their Morgan fingerprints (radius 2, 2048 bits).

- Prior Training: Train an RNN (1 LSTM layer, 512 hidden units) on SMILES strings from ChEMBL (~1.5M molecules) for 20 epochs. This is the "Prior" agent.

- Agent Initialization: Duplicate the Prior network to create the "Agent" network.

- Reward Definition: R = Σ (Similarity(Agent_mol, Ref_mol) for Ref_mol in 10 nearest neighbors from known actives).

- Rollout & Update: For N epochs:

  - Agent generates a batch of 64 SMILES.

  - Compute reward R for each valid SMILES.

  - Compute augmented likelihood: log(P_prior) + σ * R, where σ is a scalar weight and P_prior is the likelihood of the sequence under the frozen Prior.

  - Update Agent network weights by gradient descent on the squared difference between the augmented likelihood and log(P_agent). Because the target is anchored to the Prior's likelihood, the Agent is pulled toward high-reward molecules without drifting far from the Prior.

- Evaluation: Assess generated molecules for novelty (Tanimoto < 0.4 to training set) and ScafHop score.
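For one generated sequence, the REINVENT-style update target can be written out numerically. In the commonly described formulation, the augmented log-likelihood is built from the Prior's log-likelihood plus the scaled reward, and the Agent is trained to match it through a squared-error loss. The sketch below shows only that arithmetic on scalar log-likelihoods; the value of σ and the function names are illustrative assumptions, and a real implementation would backpropagate this loss through the Agent network.

```python
def augmented_log_likelihood(log_p_prior, reward, sigma=20.0):
    """Target for the Agent: log P_prior + sigma * R."""
    return log_p_prior + sigma * reward

def reinvent_loss(log_p_prior, log_p_agent, reward, sigma=20.0):
    """Squared difference between the augmented target and the Agent's log-likelihood."""
    target = augmented_log_likelihood(log_p_prior, reward, sigma)
    return (target - log_p_agent) ** 2

if __name__ == "__main__":
    # Agent starts as a copy of the Prior, so both log-likelihoods are equal.
    print(reinvent_loss(-30.0, -30.0, reward=0.0))   # zero reward: nothing to learn
    print(reinvent_loss(-30.0, -30.0, reward=0.5))   # rewarded: Agent should raise log P
```

A molecule with zero reward leaves a freshly copied Agent unchanged, while a rewarded molecule produces a loss that pushes the Agent's likelihood for that sequence upward.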

Protocol 2: Implementing a MolDQN Agent (Deep Q-Learning)

Objective: Optimize multiple properties simultaneously (e.g., QED > 0.6, SAS < 4, MW < 500).

- Environment Definition: State s_t = current partial SMILES string. Action a_t = next character from the SMILES vocabulary.

- Multi-Objective Reward: Design a final step reward: R_final = (QED/0.9) + (5/SAS) + (500/MW). Clip each term to a max of 1. Intermediate steps receive R_step = 0.

- Network Architecture: Use a Dueling DQN with three 256-unit dense layers after an embedding layer for the SMILES string.

- Training Loop: For T steps:

- Select action via ε-greedy (ε decays from 1.0 to 0.01).

- Execute action, observe new state and reward.

- Store transition (s_t, a_t, r_t, s_{t+1}) in replay buffer (capacity 1M).

- Sample minibatch of 128, compute Q-targets: r + γ * max_a Q_target(s_{t+1}, a).

- Update online network by minimizing MSE loss against Q-targets.

- Update target network every 100 steps (soft or hard update).

- Evaluation: Monitor the Pareto front of the three objectives over time.
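The scalar pieces of this protocol (clipped multi-objective reward, Q-target, and ε schedule) can be checked in isolation before wiring them into a network. In the sketch below the linear ε decay is an assumption, since the protocol states only the start and end values; the property values are assumed to come from an external calculator, and all function names are hypothetical.

```python
def final_reward(qed, sas, mw):
    """R_final = QED/0.9 + 5/SAS + 500/MW, each term clipped to at most 1."""
    terms = (qed / 0.9, 5.0 / sas, 500.0 / mw)
    return sum(min(t, 1.0) for t in terms)

def q_target(reward, next_q_values, gamma=0.99, terminal=False):
    """Bellman target: r + gamma * max_a Q_target(s', a); just r at episode end."""
    if terminal or not next_q_values:
        return reward
    return reward + gamma * max(next_q_values)

def epsilon_at(step, total_steps, start=1.0, end=0.01):
    """Linear decay of epsilon from `start` to `end` (assumed schedule)."""
    frac = min(step / total_steps, 1.0)
    return start + frac * (end - start)

if __name__ == "__main__":
    print(final_reward(qed=0.72, sas=3.1, mw=420.0))
    print(q_target(0.0, [0.4, 1.2, 0.9], gamma=0.99))
    print([round(epsilon_at(s, 1000), 3) for s in (0, 500, 1000)])
```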

Mandatory Visualization

Title: RL for Molecular Generation Core Loop

Title: Balancing Exploration & Exploitation in Molecular Search

The Scientist's Toolkit

Table 3: Essential Research Reagents & Tools for RL Molecular Generation

| Item | Function & Relevance |

|---|---|

| RDKit | Open-source cheminformatics toolkit. Used for molecule validation, descriptor calculation, fingerprint generation, and substructure analysis. Core to defining the state and reward. |

| GuacaMol Benchmark Suite | Standardized benchmarks and datasets for assessing de novo molecular generation models. Provides objectives (e.g., QED, LogP) and baselines for fair comparison. |

| SELFIES (Self-Referencing Embedded Strings) | A 100% robust molecular string representation. Eliminates the problem of invalid SMILES, allowing RL agents to focus purely on property optimization. |

| DeepChem | A library providing out-of-the-box implementations of molecular featurizers, deep learning models, and hyperparameter tuning tools, useful for building reward models. |

| OpenAI Gym / ChemGym | API for creating custom RL environments. Allows researchers to define their own molecular state, action space, and reward function for specialized tasks. |

| WGAN-GP (Wasserstein GAN with Gradient Penalty) | A stable framework for training the discriminator in adversarial-style RL. Prevents mode collapse, encouraging the generator to explore a wider molecular space. |

| TensorBoard / Weights & Biases | Experiment tracking tools. Critical for visualizing the trade-off between exploration and exploitation metrics (reward vs. diversity) over training time. |

| ChEMBL Database | A large-scale, open database of bioactive molecules with curated property data. Used to train prior models and as a source of known actives for similarity-based rewards. |

Technical Support Center

This center provides troubleshooting guidance for common issues encountered when running multi-objective optimization (MOO) campaigns within the thesis paradigm of Balancing Exploration and Exploitation in Molecular Search.

Troubleshooting Guides & FAQs

Q1: My optimization loop is getting stuck in a local Pareto front, generating structurally similar, non-diverse candidates. How can I improve exploration?

- Problem: The algorithm is over-exploiting a narrow chemical space.

- Solution Checklist:

- Algorithm Tuning: Increase the weight or parameter for diversity metrics (e.g., Tanimoto distance penalty) in your acquisition function. For evolutionary algorithms, increase the mutation rate.

- Initialization: Review your initial candidate set. Ensure it is structurally diverse. If not, supplement with random or maximally dissimilar compounds.

- Descriptor Space: Check if your molecular descriptors are sufficiently expressive. Consider switching from simple fingerprints (ECFP4) to more continuous descriptors (e.g., RDKit descriptors, latent space vectors from a generative model) to smooth the optimization landscape.

- Incorporate an Explicit Exploration Policy: Use an exploration rule (e.g., ε-greedy, UCB) to occasionally select candidates that score poorly on current objectives but are highly dissimilar to the existing archive.

Q2: Predictions for synthesizability (e.g., SA Score, RA Score) and in vitro ADMET endpoints are frequently contradictory. Which should be prioritized?

- Problem: Conflicting objectives lead to optimization paralysis.

- Solution Protocol:

- Tiered Filtering: Implement a sequential, hierarchical protocol. First, apply hard filters for critical failures (e.g., reactive functional groups). Then, optimize within the feasible space.

- Weight Adjustment: Dynamically adjust objective weights based on project phase. Early discovery: weight Potency >> ADMET > Synthesizability. Late-stage selection: weight Synthesizability ~ Key ADMET (e.g., hERG, CYP inhibition) > Potency.

- Pareto Analysis: Explicitly generate and visualize the 3D Pareto surface. Use this to identify the "knee" region where small sacrifices in potency yield large gains in synthesizability/ADMET. The choice is strategic, not purely algorithmic.
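Identifying the Pareto surface starts with a non-dominated filter. The sketch below assumes every objective has been oriented so that larger is better (for example, by negating an SA score where lower is preferable); the tuples of made-up values stand for (potency, ADMET score, synthesizability score), and the function names are illustrative.

```python
def dominates(a, b):
    """True if `a` is at least as good as `b` everywhere and strictly better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(points):
    """Indices of the non-dominated points (all objectives maximized)."""
    return [
        i for i, p in enumerate(points)
        if not any(dominates(q, p) for j, q in enumerate(points) if j != i)
    ]

if __name__ == "__main__":
    candidates = [
        (8.0, 0.2, 0.3),   # potent, poor ADMET and synthesizability
        (7.0, 0.8, 0.6),   # balanced
        (6.0, 0.7, 0.5),   # worse than the balanced one on every objective
        (5.0, 0.9, 0.9),   # easy to make, weak
    ]
    print(pareto_front(candidates))
```

The quadratic scan is adequate for a few thousand candidates; larger pools would call for the sorting routines in a dedicated multi-objective library.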

Q3: My generative model produces molecules with high predicted potency but unrealistic chemistry (e.g., incorrect valence). How do I fix this?

- Problem: The model's exploration exceeds the bounds of chemical reality.

- Solution Guide:

- Validity Enforcement: Use a post-generation valency and ring sanity check. Discard or repair invalid structures.

- Constrained Generation: Retrain or fine-tune your generative model (e.g., VAE, GAN, Transformer) on a corpus pre-filtered for synthetic accessibility. Use reinforcement learning (RL) with a validity penalty.

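- Grammar-Based Approach: Switch to or incorporate a grammar-based method (e.g., a SMILES grammar, SELFIES, or a molecular graph grammar). SELFIES and valence-aware graph grammars yield valid molecules by construction; a plain SMILES grammar guarantees only syntactic correctness, so keep the valence check when using one.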

Q4: The computational cost of evaluating all three objectives (Potency, ADMET, Synthesizability) for each candidate is prohibitive. How can I speed this up?

- Problem: High-dimensional objective evaluation bottlenecks the search.

- Solution Protocol:

- Surrogate Models: Train fast, approximate surrogate models (e.g., Random Forest, Gaussian Process, Graph Neural Networks) for each expensive objective. Update them asynchronously with new experimental data.

- Experimental Design: Use a Batch Bayesian Optimization loop. Select a diverse batch of candidates for parallel evaluation (balancing exploration and exploitation within the batch) to maximize information gain per cycle.

- Objective Selection: In early rounds, use ultra-fast 2D-QSAR or descriptor-based filters for ADMET. Reserve slower, more accurate 3D-QSAR or simulation-based methods for the final shortlist.

Data Presentation: Common Multi-Objective Optimization Algorithms

Table 1: Comparison of MOO Algorithms for Molecular Design

| Algorithm | Key Mechanism | Pros for Exploration/Exploitation | Cons | Best For |

|---|---|---|---|---|

| NSGA-II (Genetic Algorithm) | Non-dominated sorting & crowding distance | Excellent for discovering diverse Pareto fronts (Exploration). | Can be computationally heavy; may require many evaluations. | Global search in large, discrete chemical space. |

| MOEA/D | Decomposes MOO into single-objective subproblems | Efficient convergence (Exploitation) towards specific regions of the Pareto front. | Diversity depends on weight vectors; may miss discontinuous fronts. | Focused search with pre-defined objective preferences. |

| Bayesian Optimization (EHVI) | Models objectives with GPs; selects points maximizing Expected Hypervolume Improvement | Intelligent balance; very sample-efficient (Exploitation-focused). | Scalability to high dimensions & large batches is challenging. | Expensive objectives (e.g., docking, simulations). |

| Thompson Sampling | Draws random samples from posterior surrogate models | Natural stochasticity encourages exploration. | Can be slower to converge precisely. | Maintaining diversity in batch selection. |

Experimental Protocol: A Standard MOO Cycle for Lead Optimization

Protocol Title: Iterative Multi-Objective Molecular Optimization with Surrogate Models

Initialization:

- Input: A starting library of 500-2000 molecules with data for Potency (e.g., pIC50), ADMET predictors (e.g., QikProp logP, PSA), and Synthesizability (e.g., SA Score, RA Score).

- Step: Train initial surrogate models (e.g., Gaussian Process Regressors) for each objective using this data.

Candidate Generation:

- Step: Use a generative model (e.g., JT-VAE) or a large virtual library (e.g., Enamine REAL) to propose 50,000 candidate molecules.

Surrogate Prediction & Multi-Objective Selection:

- Step: Use the surrogate models to predict all three objectives for all candidates.

- Step: Apply the NSGA-II selection algorithm to the predicted objectives to identify the Pareto-optimal set of ~1000 candidates.
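The Pareto filter at the core of this selection step can be sketched in a few lines. This is a minimal illustration rather than NSGA-II itself: it extracts only the first non-dominated front and omits the crowding-distance ranking that NSGA-II adds. It assumes every objective has been oriented so that higher is better, and the `predicted` values are invented for illustration.

```python
from typing import List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if a is no worse than b on every objective and strictly better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(points: List[Sequence[float]]) -> List[int]:
    """Indices of non-dominated points (O(n^2) scan, adequate for a sketch)."""
    return [
        i
        for i, p in enumerate(points)
        if not any(dominates(q, p) for j, q in enumerate(points) if j != i)
    ]


# Hypothetical surrogate predictions: (potency, ADMET score, synthesizability),
# all rescaled so that larger values are better.
predicted = [
    (7.2, 0.6, 0.8),
    (6.5, 0.9, 0.7),
    (7.0, 0.5, 0.7),  # dominated by the first candidate
    (8.1, 0.3, 0.4),
]
front = pareto_front(predicted)
print(front)
```

For 50,000 candidates, a dedicated framework such as pymoo would be a more practical choice than this quadratic scan.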

Acquisition & Batch Selection:

- Step: From the Pareto set, apply the Expected Hypervolume Improvement (EHVI) acquisition function to select a final, diverse batch of 5-20 molecules for synthesis and testing. This balances picking high-performance molecules (exploitation) and uncertain ones (exploration).
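To make the hypervolume idea concrete, the sketch below greedily builds a batch by hypervolume improvement for two maximized objectives. It is a deliberate simplification and not true EHVI: it scores candidates on predicted means only and ignores surrogate uncertainty, so it captures the diversity pressure but not the exploration term. The function names, the reference point, and the example values are assumptions made for illustration.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def hypervolume_2d(points: List[Point], ref: Point) -> float:
    """Area dominated by the points and bounded below by ref (maximization)."""
    pts = sorted((p for p in points if p[0] > ref[0] and p[1] > ref[1]),
                 key=lambda p: -p[0])
    area, best_y = 0.0, ref[1]
    for x, y in pts:
        if y > best_y:  # only points that extend the front add area
            area += (x - ref[0]) * (y - best_y)
            best_y = y
    return area


def greedy_hvi_batch(front: List[Point], candidates: List[Point],
                     ref: Point, k: int) -> List[Point]:
    """Pick up to k candidates, each maximizing the hypervolume gain so far."""
    current, remaining, chosen = list(front), list(candidates), []
    for _ in range(min(k, len(remaining))):
        base = hypervolume_2d(current, ref)
        gains = [hypervolume_2d(current + [c], ref) - base for c in remaining]
        best = max(range(len(remaining)), key=gains.__getitem__)
        if gains[best] <= 0:  # nothing left that improves the front
            break
        chosen.append(remaining.pop(best))
        current.append(chosen[-1])
    return chosen


observed_front = [(3.0, 1.0), (1.0, 3.0)]
candidates = [(2.0, 2.0), (2.5, 1.5), (0.5, 0.5)]
batch = greedy_hvi_batch(observed_front, candidates, ref=(0.0, 0.0), k=2)
print(batch)
```

Because each pick is scored against a front that already includes earlier picks, near-duplicate candidates earn little additional gain. For uncertainty-aware selection over three or more objectives, a library implementation such as the one in BoTorch is the more appropriate tool.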

Experimental Evaluation & Loop Closure:

- Step: Synthesize and experimentally test the batch for true potency and key ADMET endpoints (e.g., metabolic stability, permeability).

- Step: Add the new experimental data to the training set.

- Step: Retrain/update the surrogate models.

- Step: Return to Candidate Generation and repeat the loop for 5-10 cycles.

Visualizations

Diagram 1: MOO Balancing Exploration and Exploitation

Diagram 2: Multi-Objective Optimization Workflow

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Computational MOO

| Item / Software | Function in MOO Cycle | Example / Vendor |

|---|---|---|

| Cheminformatics Toolkit | Handles molecule I/O, descriptor calculation, fingerprinting, and basic filtering. | RDKit, OpenBabel |

| Generative Chemistry Model | Explores chemical space by generating novel molecular structures. | JT-VAE, REINVENT, Generative Graph Networks |

| Surrogate Model Library | Provides algorithms to build fast predictive models for expensive objectives. | scikit-learn (GP, RF), DeepChem (GNN), GPyTorch |

| Multi-Objective Optimization Framework | Implements selection, sorting, and acquisition functions for MOO. | pymoo, BoTorch, DESMART |

| ADMET Prediction Suite | Offers a battery of pre-built or trainable models for key pharmacokinetic properties. | ADMET Predictor (Simulations Plus), StarDrop, QikProp (Schrödinger) |

| Synthesizability Scorer | Quantifies the ease of synthesis via learned rules or fragment complexity. | RAscore, SA Score, SYBA, AiZynthFinder |

| High-Throughput Virtual Library | Provides a vast, commercially accessible space for candidate screening. | Enamine REAL, Mcule, ZINC |

| Laboratory Information Management System (LIMS) | Tracks the experimental results of synthesized batches, closing the digital loop. | Benchling, Dotmatics, self-hosted solutions |

Integration with High-Throughput Screening and Virtual Libraries

Technical Support Center

Troubleshooting Guides & FAQs

FAQ Category 1: Data Integration & Management

Q1: Our HTS hit list and virtual screening (VS) hits show no overlap. How do we reconcile these datasets?

- A: This is a classic exploration (VS) vs. exploitation (HTS) conflict. First, ensure data normalization. Use the table below to compare key metrics and identify biases.

Table 1: Comparative Analysis of HTS vs. Virtual Screening Outputs

| Parameter | HTS Campaign | Virtual Library Screen | Recommended Reconciliation Action |

|---|---|---|---|

| Library Size | 500,000 compounds | 10,000,000 compounds | Prioritize HTS hits for exploitation; sample top VS hits for exploration. |

| Hit Rate | 0.1% | 0.05% | The higher HTS hit rate validates the assay. Use VS to explore novel chemotypes. |

| Avg. Molecular Weight | 450 Da | 380 Da | Filter both sets to a consistent range (e.g., 350-500 Da). |

| Primary Scaffolds | 3 predominant chemotypes | 15+ diverse chemotypes | Cluster VS hits. Select 1-2 representatives from each novel cluster for experimental validation. |

Q2: What is the optimal protocol for integrating real HTS data with virtual library priors?

- A: Protocol for Bayesian Update of Virtual Screening Models.

- Input: Confirmed active/inactive compounds from HTS primary screen.

- Training: Use the HTS data to fine-tune or retrain the initial VS machine learning model (e.g., Random Forest, Deep Neural Net). Weight HTS data points higher than the original virtual library data.

- Rescoring: Re-score the enlarged virtual library (10M+ compounds) with the updated model.

- Selection: Apply a diversity filter to the top 5,000 rescored compounds to ensure novel scaffold exploration alongside similarity to HTS hits.
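The selection step can be illustrated with a simple score-ordered diversity filter. This sketch assumes fingerprints are represented as sets of on-bit indices (in practice they would come from a toolkit such as RDKit) and uses a hypothetical Tanimoto cutoff of 0.7; neither choice comes from the protocol above.

```python
from typing import FrozenSet, List, Tuple


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto similarity between two sets of fingerprint on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def diversity_filter(ranked: List[Tuple[str, FrozenSet[int]]],
                     threshold: float = 0.7) -> List[str]:
    """Walk the list best-first; keep a compound only if it is less similar
    than `threshold` to every compound already kept."""
    kept: List[Tuple[str, FrozenSet[int]]] = []
    for cid, fp in ranked:
        if all(tanimoto(fp, kept_fp) < threshold for _, kept_fp in kept):
            kept.append((cid, fp))
    return [cid for cid, _ in kept]


# Toy rescored list, already sorted by updated model score (best first).
ranked = [
    ("cpd-A", frozenset({1, 2, 3, 4})),
    ("cpd-B", frozenset({1, 2, 3, 4, 5})),  # Tanimoto 0.8 to cpd-A, dropped
    ("cpd-C", frozenset({7, 8, 9})),
]
selected = diversity_filter(ranked)
print(selected)
```

Lowering the threshold pushes the selection toward novel scaffolds; raising it keeps more close analogs of the top-scoring compounds.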

FAQ Category 2: Experimental Validation

Q3: How do we prioritize compounds from a merged HTS/VS list for confirmatory assays?

- A: Implement a multi-parameter scoring system. Calculate a weighted "Priority Score" for each compound:

Priority Score = (0.4 * pActivity) + (0.3 * Synthetic Accessibility) + (0.2 * Novelty Score) + (0.1 * Drug-likeness). Novelty Score is 1 - Tanimoto similarity to nearest HTS hit. Rank compounds and select the top 100 for confirmation.
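A short sketch of the Priority Score calculation follows. It assumes each component has already been scaled to the range 0-1 so that the weights are comparable; that normalization, the function names, and the example compounds are illustrative assumptions rather than part of the scheme above.

```python
from typing import Dict, List, Tuple

# Weights taken from the Priority Score formula.
WEIGHTS = {"activity": 0.4, "synth": 0.3, "novelty": 0.2, "druglike": 0.1}


def priority_score(activity: float, synth: float,
                   nearest_hit_similarity: float, druglike: float) -> float:
    """Weighted score; novelty is 1 - Tanimoto similarity to the nearest HTS hit."""
    novelty = 1.0 - nearest_hit_similarity
    return (WEIGHTS["activity"] * activity
            + WEIGHTS["synth"] * synth
            + WEIGHTS["novelty"] * novelty
            + WEIGHTS["druglike"] * druglike)


def top_n(compounds: Dict[str, Tuple[float, float, float, float]],
          n: int = 100) -> List[str]:
    """Rank compound IDs by descending Priority Score and keep the first n."""
    return sorted(compounds,
                  key=lambda cid: priority_score(*compounds[cid]),
                  reverse=True)[:n]


# Hypothetical inputs: (activity, synthetic accessibility,
# similarity to nearest HTS hit, drug-likeness), all scaled to 0-1.
compounds = {
    "cpd-1": (0.8, 0.5, 0.3, 0.9),
    "cpd-2": (0.9, 0.2, 0.9, 0.6),
    "cpd-3": (0.4, 0.9, 0.1, 0.7),
}
ranked = top_n(compounds, n=2)
print(ranked)
```

Note how cpd-2, the most active compound, drops out of the top two because it is hard to make and nearly identical to an existing HTS hit.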

Q4: Our secondary assay invalidates >80% of primary HTS/VS hits. Is this a workflow issue?

- A: Likely yes. Follow this strict counter-screen protocol to identify false positives.

- Protocol: Orthogonal Assay Cascade for Hit Confirmation.

- Primary Hit: Compound shows >50% activity at 10 µM in target biochemical assay.

- Dose-Response: Generate a 10-point IC50/EC50 curve in the primary assay. Discard compounds with poor curve fit (R² < 0.8) or efficacy <50%.

- Orthogonal Biophysical Assay: Test compounds that pass the dose-response step in a Surface Plasmon Resonance (SPR) or Thermal Shift Assay (TSA). Discard compounds showing no direct binding or stabilization.

- Cell-Based Counter-Screen: Test remaining compounds in a cell viability assay and a reporter assay against an unrelated target to rule out nonspecific cytotoxicity and promiscuous inhibition.
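The cascade above can be expressed as an ordered series of gates, which makes it easy to report where each compound dropped out. The record fields and the `triage` function below are hypothetical names; the thresholds are the ones stated in the protocol.

```python
from dataclasses import dataclass


@dataclass
class HitData:
    primary_activity_pct: float    # % activity at 10 µM in the primary assay
    curve_r2: float                # goodness of fit of the 10-point curve
    efficacy_pct: float            # maximal efficacy from the dose-response
    binds_target: bool             # direct binding or stabilization (SPR / TSA)
    cytotoxic: bool                # flagged in the cell viability assay
    hits_unrelated_reporter: bool  # active against the unrelated reporter


def triage(hit: HitData) -> str:
    """Return 'confirmed' or the first stage at which the compound fails."""
    if hit.primary_activity_pct <= 50:
        return "fail: primary"
    if hit.curve_r2 < 0.8 or hit.efficacy_pct < 50:
        return "fail: dose-response"
    if not hit.binds_target:
        return "fail: biophysical"
    if hit.cytotoxic or hit.hits_unrelated_reporter:
        return "fail: counter-screen"
    return "confirmed"


good = HitData(72.0, 0.95, 88.0, True, False, False)
artifact = HitData(65.0, 0.91, 70.0, False, False, False)
print(triage(good), "|", triage(artifact))
```

Tallying the failure labels across a hit list shows which stage removes the most compounds, which helps distinguish an assay-interference problem from a cytotoxicity problem.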

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Integrated HTS/VS Workflows

| Item | Function & Rationale |

|---|---|

| FRET-based Assay Kit | Enables homogeneous, high-throughput biochemical screening. Provides robust signal-to-noise for primary HTS. |

| SPR Chip with Immobilized Target | Provides label-free, biophysical confirmation of direct compound binding, filtering out assay artifacts. |

| Ready-to-Assay Membrane Protein | For difficult targets (GPCRs, ion channels), these pre-purified proteins ensure consistent performance in binding assays. |

| Diversity-Oriented Synthesis (DOS) Library | A physically available library of synthetically tractable compounds with high scaffold diversity, ideal for testing exploration strategies post-virtual screen. |

| qPCR Reagents for Gene Expression | Critical for cell-based secondary assays to measure functional downstream effects of target modulation. |

Visualizations

Integrated HTS and VL Screening Workflow

Hit Validation Cascade for HTS/VS Integration

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: In a multi-parameter lead optimization campaign (e.g., optimizing for potency, solubility, and metabolic stability), should I use a single composite reward score or a multi-armed bandit for each objective?

A: For most drug discovery campaigns, a single composite reward is recommended. Define a weighted scoring function (e.g., pIC50 > 7.0 = 3 points, CLhep < 15 µL/min/mg = 2 points) that aligns with your target product profile. This simplifies the bandit problem to a single reward, allowing standard Thompson Sampling (TS) or Upper Confidence Bound (UCB) application. Running separate bandits per objective ignores crucial trade-offs and can lead to conflicting compound selections.
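A composite reward of this kind is straightforward to encode. The sketch below uses the two example thresholds from the answer above and adds a solubility criterion (> 50 µM = 1 point) purely as a hypothetical third term; a real scoring function should follow your own target product profile.

```python
def composite_reward(pic50: float, clhep: float, solubility_um: float) -> int:
    """Threshold-based composite score for one compound."""
    score = 0
    if pic50 > 7.0:           # potency criterion from the example above
        score += 3
    if clhep < 15.0:          # hepatic clearance criterion, µL/min/mg
        score += 2
    if solubility_um > 50.0:  # hypothetical solubility criterion, not from the text
        score += 1
    return score


print(composite_reward(7.5, 10.0, 80.0))  # meets all three criteria
print(composite_reward(7.2, 30.0, 10.0))  # potent but cleared too quickly
```

A step function like this is easy to communicate but coarse: two compounds with pIC50 7.1 and 9.0 earn the same points. Smooth desirability functions are a common alternative when finer ranking is needed.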

Q2: My initial compound library is small (< 100 compounds). How do I prevent the algorithm from over-exploiting poor leads due to limited early data?

A: Implement a forced exploration phase. Synthesize and test a diverse subset (e.g., 20-30 compounds) selected via clustering (e.g., fingerprint-based) to establish a prior baseline before activating TS/UCB. During the main campaign, artificially inflate the exploration parameter (the prior variance in TS, c in UCB) by 50-100% for the first 5-10 batches to compensate for high uncertainty.
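The inflated-exploration schedule can be sketched for UCB as follows. The score uses the common form mean + c * sqrt(ln t / n); the base value c = 2.0, the 50% inflation, the 5-batch warm-up, and the toy arm statistics are all illustrative assumptions. The clustering-based seeding step is not shown.

```python
import math
from typing import List


def exploration_c(batch_index: int, base_c: float = 2.0,
                  inflation: float = 1.5, warmup_batches: int = 5) -> float:
    """Confidence multiplier, inflated during the first warmup_batches batches."""
    return base_c * inflation if batch_index < warmup_batches else base_c


def ucb_scores(means: List[float], counts: List[int],
               total_pulls: int, c: float) -> List[float]:
    """UCB score per arm; untested arms get infinite priority."""
    return [
        math.inf if n == 0 else mean + c * math.sqrt(math.log(total_pulls) / n)
        for mean, n in zip(means, counts)
    ]


def pick(means: List[float], counts: List[int], batch_index: int) -> int:
    scores = ucb_scores(means, counts, sum(counts), exploration_c(batch_index))
    return max(range(len(scores)), key=scores.__getitem__)


# Two chemical series: series 0 looks better but series 1 is barely sampled.
means, counts = [6.0, 5.0], [10, 3]
early_choice = pick(means, counts, batch_index=0)  # inflated c = 3.0
late_choice = pick(means, counts, batch_index=7)   # base c = 2.0
print(early_choice, late_choice)
```

With the inflated multiplier the under-sampled series wins the early pick; once the warm-up ends, the same statistics favor the series with the higher observed mean.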

Q3: How do I handle batch synthesis and testing, which introduces a delay between compound selection and reward observation?

A: Use a delayed feedback model. Maintain a "pending" queue for selected but unevaluated compounds. Update the model's posterior (for TS) or confidence intervals (for UCB) only when all data from a batch has been received. For TS, exclude the pending compounds when sampling from the posterior so they are not reselected while awaiting results.
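The bookkeeping for delayed feedback is independent of the bandit algorithm, so it can be sketched on its own. The `PendingQueue` class and `selectable` helper below are hypothetical names; the sketch releases a batch for model updating only once every compound in it has a result.

```python
from typing import Dict, List, Optional, Set


class PendingQueue:
    """Tracks compounds that were selected but not yet evaluated."""

    def __init__(self) -> None:
        self.pending: Dict[str, Dict[str, Optional[float]]] = {}

    def submit(self, batch_id: str, compound_ids: List[str]) -> None:
        self.pending[batch_id] = {cid: None for cid in compound_ids}

    def in_flight(self) -> Set[str]:
        return {cid for batch in self.pending.values() for cid in batch}

    def record(self, batch_id: str, compound_id: str,
               reward: float) -> Optional[Dict[str, Optional[float]]]:
        """Store one result; return the whole batch once it is complete."""
        batch = self.pending[batch_id]
        batch[compound_id] = reward
        if all(r is not None for r in batch.values()):
            return self.pending.pop(batch_id)
        return None


def selectable(candidates: List[str], queue: PendingQueue) -> List[str]:
    """Candidates the bandit may propose: everything not awaiting results."""
    blocked = queue.in_flight()
    return [c for c in candidates if c not in blocked]


queue = PendingQueue()
queue.submit("batch-1", ["cpd-a", "cpd-b"])
available = selectable(["cpd-a", "cpd-b", "cpd-c"], queue)
partial = queue.record("batch-1", "cpd-a", 5.0)   # batch still incomplete
complete = queue.record("batch-1", "cpd-b", 6.0)  # batch released for update
print(available, partial, complete)
```

The dictionary returned by the final `record` call is the point at which the TS posterior or UCB statistics would be updated.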

Q4: The synthetic feasibility of proposed compounds varies greatly. How can I incorporate this cost into the algorithm?

A: Implement a cost-adjusted reward. Define Adjusted Reward = (Predicted Reward) / (Synthetic Complexity Score), where complexity is scored from 1 (easy) to 5 (very difficult). Alternatively, use a constrained bandit variant that selects the arm with the highest reward subject to a complexity threshold per batch.
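Both options can be compared on a toy example. In this sketch the complexity threshold is interpreted as a per-compound cap, which is one possible reading of "per batch"; the arm names and values are invented.

```python
from typing import Dict, Optional, Tuple


def cost_adjusted_reward(predicted_reward: float, complexity: int) -> float:
    """Adjusted Reward = Predicted Reward / Synthetic Complexity Score (1-5)."""
    if not 1 <= complexity <= 5:
        raise ValueError("complexity must be between 1 (easy) and 5 (very difficult)")
    return predicted_reward / complexity


def constrained_pick(arms: Dict[str, Tuple[float, int]],
                     max_complexity: int) -> Optional[str]:
    """Highest predicted reward among arms at or below the complexity cap."""
    feasible = {name: v for name, v in arms.items() if v[1] <= max_complexity}
    if not feasible:
        return None
    return max(feasible, key=lambda name: feasible[name][0])


# Hypothetical arms: name -> (predicted reward, synthetic complexity score)
arms = {"A": (8.0, 4), "B": (6.0, 2), "C": (5.0, 1)}
by_ratio = max(arms, key=lambda name: cost_adjusted_reward(*arms[name]))
by_constraint = constrained_pick(arms, max_complexity=3)
print(by_ratio, by_constraint)
```

The two rules disagree here: the ratio favors the easiest compound, while the constraint keeps the best compound that is still practical to make. The ratio form penalizes difficulty heavily, so it is worth checking that it does not systematically exclude the most promising chemistry.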

Q5: My reward metrics are noisy (high experimental variability). Which algorithm is more robust: Thompson Sampling or UCB?

A: Thompson Sampling generally performs better under high noise conditions, as it samples from the full posterior distribution, naturally incorporating uncertainty. UCB can become overly optimistic. If using UCB, increase the confidence parameter (c) to encourage more exploration. For both, ensure you model the noise explicitly (e.g., using a Gaussian likelihood in TS).
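Explicit noise modeling in TS can be sketched with the standard conjugate update for a Gaussian mean with known noise variance. The prior mean, prior variance, noise variance, and observations below are illustrative assumptions; arms here stand for chemical series.

```python
import random
from typing import Dict, List, Tuple


def gaussian_posterior(prior_mean: float, prior_var: float,
                       observations: List[float],
                       noise_var: float) -> Tuple[float, float]:
    """Posterior mean and variance of an arm's true reward (known noise variance)."""
    precision = 1.0 / prior_var + len(observations) / noise_var
    mean = (prior_mean / prior_var + sum(observations) / noise_var) / precision
    return mean, 1.0 / precision


def thompson_pick(arm_observations: Dict[str, List[float]],
                  prior_mean: float, prior_var: float,
                  noise_var: float, rng: random.Random) -> str:
    """Draw one sample per arm from its posterior and pick the largest draw."""
    draws = {}
    for arm, obs in arm_observations.items():
        mean, var = gaussian_posterior(prior_mean, prior_var, obs, noise_var)
        draws[arm] = rng.gauss(mean, var ** 0.5)
    return max(draws, key=draws.get)


arm_observations = {
    "series-1": [6.8, 7.4, 7.1],  # well characterized
    "series-2": [7.9],            # promising but a single noisy measurement
    "series-3": [],               # untested, falls back to the prior
}
rng = random.Random(0)  # fixed seed so the sketch is reproducible
choice = thompson_pick(arm_observations, prior_mean=5.0, prior_var=4.0,
                       noise_var=1.0, rng=rng)
print(choice)
```

A larger `noise_var` shrinks the influence of each measurement, so the posterior stays wide for longer and the sampler keeps exploring instead of locking onto a lucky result.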

Troubleshooting Guides

Issue: Algorithm Convergence to a Suboptimal Lead Series

Symptoms: After several iterations, the algorithm persistently selects compounds from one chemical series despite predictive models suggesting higher potential in other regions.

Diagnosis & Resolution:

- Check Prior Mis-specification: In TS, incorrectly optimistic priors for the exploited series can trap the search. Fix: Re-initialize priors to be more conservative (centered at lower reward with higher variance) and re-run from iteration 5.

- Check for Reward Stagnation: The scoring function may saturate, failing to differentiate between good and excellent compounds. Fix: Introduce a logarithmic or exponential transform to the reward scale to accentuate high-end differences.

- Check Exploration Parameter: The balance may be too skewed toward exploitation. Fix: For UCB, systematically increase c from 2 to 5. For TS, if using a Beta(α,β) prior, ensure β is not too low relative to α.

Issue: High Variance in Batch Performance

Symptoms: The average reward of selected compounds fluctuates wildly between synthesis batches.

Diagnosis & Resolution:

- Check Batch Size: Batches may be too small (< 5 compounds) for stable reward estimation. Fix: Increase batch size to 8-12 compounds to average out noise.

- Check Contextual Features: The algorithm may be ignoring important molecular descriptors. Fix: Switch from a standard bandit to a contextual bandit (e.g., Linear UCB or Contextual TS) using engineered features (e.g., ECFP6 fingerprints projected via PCA).

- Validate Assay Reliability: Run control compounds in each assay batch to quantify inter-batch experimental noise.

Issue: Infeasible or Long-Synthesis Compounds Being Selected

Symptoms: The algorithm frequently proposes compounds estimated by medicinal chemists to have synthetic timelines > 4 weeks.

Diagnosis & Resolution:

- Implement a Feasibility Filter: Integrate a rule-based or ML-based synthetic accessibility filter (e.g., SAscore, RAscore) as a pre-selection gate. Only compounds below a threshold are passed to the bandit algorithm.

- Use a Cost-Aware Model: Incorporate a "synthesis time" cost layer. Treat quickly made analogs as "cheap arms" and complex ones as "expensive arms," using a bandit strategy optimized for cost (e.g., UCB with a cost penalty).

Table 1: Simulated Performance Comparison of TS vs. UCB in a 1000-Compound Virtual Campaign

| Metric | Thompson Sampling (Gaussian) | UCB (c=2.5) | Random Selection |

|---|---|---|---|

| Mean Reward at Iteration 50 | 8.7 ± 0.4 | 8.2 ± 0.5 | 5.1 ± 0.8 |

| Cumulative Regret (Lower is Better) | 42.3 | 58.7 | 192.5 |

| % of Batches with Top-10% Compounds | 34% | 28% | 9% |

| Time to Identify Best Compound (Iterations) | 31 | 38 | N/A (not guaranteed) |
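For readers unfamiliar with the regret metric, the sketch below shows the usual definition: the running sum of the gap between the best achievable reward and the reward actually obtained at each iteration. The exact setup of the simulation behind the table is not specified, so the numbers here are purely illustrative.

```python
from typing import List


def cumulative_regret(best_reward: float,
                      observed_rewards: List[float]) -> List[float]:
    """Running total of (best achievable reward - obtained reward)."""
    total, curve = 0.0, []
    for reward in observed_rewards:
        total += best_reward - reward
        curve.append(total)
    return curve


# Toy campaign: the best compound in the library scores 9.0.
curve = cumulative_regret(9.0, [5.0, 7.0, 8.5, 9.0])
print(curve)
```

A curve that flattens means the algorithm has started selecting near-optimal compounds; a curve that keeps rising linearly, as random selection typically produces, means it has not.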

Table 2: Key Hyperparameters and Their Typical Ranges

| Algorithm | Parameter | Typical Range | Impact of Increasing Value |

|---|---|---|---|

| Thompson Sampling | Prior Variance (σ²) | 1-10 | Increases initial exploration |

| Thompson Sampling | Likelihood Variance | 0.1-1.0 | Increases sampling noise, more exploration |

| Upper Confidence Bound | Confidence Multiplier (c) | 1.5-3.0 | Increases exploration |