Navigating the Molecular Maze: Strategies for Resolving Multi-Property Optimization Conflicts in Modern Drug Design

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on managing the critical challenge of multi-property optimization (MPO) conflicts in drug discovery. We explore the fundamental origins of property trade-offs, such as potency versus solubility or permeability versus metabolic stability. We detail contemporary methodological frameworks, including weighted scoring, Pareto optimization, and AI-driven approaches, for navigating these conflicts. The article offers practical troubleshooting advice for common optimization dead-ends and compares the validation strategies for emerging de novo design and active learning platforms against traditional methods. The synthesis provides an actionable roadmap for balancing conflicting molecular properties to increase the probability of clinical success.

Understanding the Molecular Compromise: The Inevitable Clash of ADMET, Potency, and Selectivity

Defining the Multi-Property Optimization (MPO) Problem in Drug Discovery

Technical Support Center

FAQs & Troubleshooting Guides

Q1: Our lead compound shows excellent in vitro potency but poor predicted metabolic stability. How do we prioritize which property to optimize first?

A: This is a classic MPO conflict. Follow this protocol:

- Calculate a Quantitative MPO Score: Use a desirability function (e.g., Zhou et al., 2022). For each property (Potency: pIC50, Stability: Clint), define a desirability score (d) from 0 to 1.

- Assign Weights: In your therapeutic area (e.g., chronic treatment), stability may be weighted higher (0.7) than potency (0.3).

- Decision: The weighted MPO score guides prioritization. A compound with high potency but very low stability will have a low overall score, indicating stability optimization is critical.
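The scoring steps above can be sketched in a few lines. This is only an illustration, assuming simple linear-ramp desirability functions; the function names, the example compound, and the threshold values (pIC50 from 6 to 8, Clint from 100 down to 10 µL/min/mg) are hypothetical and not taken from the cited reference. Only the 0.3/0.7 weighting comes from the text.

```python
def linear_desirability(value, low, high):
    """Map a property value to [0, 1]: 0 at `low`, 1 at `high`, linear in between.

    Pass low > high for properties where smaller is better (e.g., Clint).
    """
    fraction = (value - low) / (high - low)
    return max(0.0, min(1.0, fraction))


def weighted_mpo(desirabilities, weights):
    """Weighted arithmetic mean of desirability scores."""
    total_weight = sum(weights.values())
    return sum(weights[k] * desirabilities[k] for k in weights) / total_weight


# Hypothetical compound: very potent but rapidly cleared.
# Thresholds are illustrative assumptions, not recommended cut-offs.
d = {
    "potency": linear_desirability(8.5, low=6.0, high=8.0),        # pIC50, higher is better
    "stability": linear_desirability(90.0, low=100.0, high=10.0),  # Clint, lower is better
}
w = {"potency": 0.3, "stability": 0.7}  # chronic-treatment weighting from the text
score = weighted_mpo(d, w)
print(d, round(score, 3))
```

With these assumed thresholds the potent but unstable compound scores well below 0.5, which is the signal that stability should be optimized first.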

Q2: During scaffold hopping to improve solubility, we observe a sharp drop in target binding affinity. What systematic approaches can rescue the project?

A: This suggests the new scaffold disrupts key pharmacophore interactions.

- Troubleshooting Steps:

- Perform Molecular Dynamics (MD) Simulations: Compare binding poses of old and new scaffolds. Identify lost H-bonds or hydrophobic contacts.

- Analyze Structure-Activity Relationship (SAR) Cliffs: Use matched molecular pair analysis on your chemical library to find modifications that increased solubility without affecting potency.

- Propose Targeted Synthesis: Based on MD and SAR, design new hybrids that reintroduce critical interactions (e.g., a specific hydrogen bond donor) while retaining solubility-enhancing groups.

Q3: Our MPO algorithm suggests conflicting structural changes: one to reduce hERG inhibition and another to increase permeability. How do we resolve this?

A: Conflicting suggestions often arise from models trained on different chemical spaces.

- Resolution Protocol:

- Audit Training Data: Check the chemical space coverage of your hERG and permeability models. If disjoint, the suggestions may not be globally valid.

- Employ a Pareto Front Analysis: Generate a focused library (~50 compounds) that spans the trade-off space between these two properties. Plot them to visualize the Pareto front.

- Experimental Testing: Screen this focused set to find real-world compromises and refine your models with the new data.

Q4: When applying an MPO scoring function from the literature to our internal project, the top-ranked compounds perform poorly in assays. What could be wrong?

A: This indicates a lack of contextual alignment.

- Checklist:

- ✓ Chemical Space Transferability: The published function may be tuned for a specific chemotype (e.g., kinase inhibitors) and fail for yours (e.g., GPCR ligands).

- ✓ Assay Alignment: Ensure your internal assay protocols and endpoints (e.g., kinetic solubility vs. thermodynamic solubility) match those used to train the MPO model.

- ✓ Recalibration: Use a subset of your internal data to recalibrate the weights of the MPO function using Bayesian optimization.

Key MPO Property Ranges & Desirability Functions

Table 1: Common Property Targets and Desirability Thresholds for Oral Drugs.

| Property | Optimal Range | Low Desirability (d=0) | High Desirability (d=1) | Common Assay |
|---|---|---|---|---|
| Potency (pIC50) | > 8.0 | < 6.0 | > 8.0 | Biochemical Assay |
| Microsomal Stability (% remaining) | > 50% | < 20% | > 70% | Human Liver Microsomes |
| Caco-2 Permeability (Papp, 10⁻⁶ cm/s) | > 10 | < 2 | > 20 | Caco-2 Monolayer |
| hERG Inhibition (pIC50) | < 5.0 | > 5.5 | < 4.5 | Patch Clamp / Binding |
| Kinetic Solubility (µM) | > 100 | < 10 | > 500 | Nephelometry |

Table 2: Example Weighted MPO Calculation for a Hypothetical Compound.

| Property | Value | Desirability (dᵢ) | Assigned Weight (wᵢ) | Weighted Score (wᵢ * dᵢ) |
|---|---|---|---|---|
| Potency | pIC50 = 7.2 | 0.60 | 0.25 | 0.15 |
| Stability | 40% remaining | 0.50 | 0.30 | 0.15 |
| Permeability | Papp = 15 | 0.65 | 0.25 | 0.16 |
| hERG Safety | pIC50 = 4.8 | 0.90 | 0.20 | 0.18 |
| Overall MPO Score | | | Sum = 1.00 | 0.64 |
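As a quick check, the arithmetic in Table 2 can be reproduced directly. The sketch below copies the desirability and weight values from the table; the variable names are illustrative.

```python
# (desirability d_i, weight w_i) pairs copied from Table 2
table2 = {
    "Potency": (0.60, 0.25),
    "Stability": (0.50, 0.30),
    "Permeability": (0.65, 0.25),
    "hERG Safety": (0.90, 0.20),
}

weight_sum = sum(w for _, w in table2.values())
mpo_score = sum(d * w for d, w in table2.values())

for name, (d, w) in table2.items():
    print(f"{name:12s} d={d:.2f} w={w:.2f} w*d={d * w:.4f}")
print(f"sum of weights = {weight_sum:.2f}, overall MPO score = {mpo_score:.4f}")
```

The permeability term is 0.1625 before rounding, so the unrounded total is 0.6425, which the table reports as 0.64.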

Experimental Protocols

Protocol 1: Generating a Pareto Front for Two Conflicting Properties

Objective: To empirically map the trade-off between metabolic stability (Clint) and target potency (IC50).

Materials: See "Scientist's Toolkit" below.

Method:

- Library Design: Select 3-5 core scaffolds. For each, plan systematic decoration at the R1 and R2 positions using 5-10 commercially available building blocks known to influence ClogP and aromaticity.

- Parallel Synthesis: Synthesize the planned 50-100 compound library using high-throughput parallel synthesis techniques (e.g., automated microwave reactor).

- High-Throughput Screening: Run all compounds in parallel in:

- A target inhibition assay (e.g., fluorescence polarization).

- A rapid metabolic stability assay (e.g., human liver microsomes with LC-MS/MS readout).

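- Data Analysis: Plot Log(Clint) vs. pIC50 for all compounds. Identify the Pareto frontier: the compounds that no other compound matches or beats on both properties at once, so that moving along the frontier to improve one property necessarily worsens the other. These compounds define the optimal trade-off curve.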
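The data-analysis step can be sketched as a simple dominance check. This is a minimal illustration with made-up compound names and values; it assumes higher pIC50 and lower Log(Clint) are both desirable.

```python
def pareto_front(compounds):
    """Return the compounds that are not dominated by any other compound.

    Each compound is (name, pIC50, log_clint). Compound a dominates b if a is
    at least as good on both properties and strictly better on at least one.
    """
    front = []
    for name, pic50, clint in compounds:
        dominated = any(
            (p2 >= pic50 and c2 <= clint) and (p2 > pic50 or c2 < clint)
            for _, p2, c2 in compounds
        )
        if not dominated:
            front.append((name, pic50, clint))
    # Sort by potency so the trade-off curve reads left to right
    return sorted(front, key=lambda c: c[1])


# Hypothetical screening results: (name, pIC50, log10 Clint)
library = [
    ("cpd-1", 8.5, 2.1),
    ("cpd-2", 7.9, 1.6),
    ("cpd-3", 7.0, 1.0),
    ("cpd-4", 7.5, 1.9),  # dominated by cpd-2 (less potent and less stable)
    ("cpd-5", 6.5, 1.2),  # dominated by cpd-3
]
front = pareto_front(library)
print([name for name, _, _ in front])
```

The quadratic loop is adequate for a 50-100 compound library; larger sets would normally use a sort-based sweep instead.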

Protocol 2: Triaging Compounds Using a Tiered MPO Screen

Objective: To efficiently filter a large virtual library (>10,000 compounds) before synthesis.

Method:

- Tier 1 (Computational Filters): Apply hard filters that remove pan-assay interference compounds (PAINS), compounds with a synthetic accessibility score > 3.5, and compounds with more than one rule-of-5 violation.

- Tier 2 (MPO Scoring): For remaining compounds, predict key ADMET properties (QSPR models for permeability, solubility, hERG). Calculate a unified MPO score using a weighted desirability function.

- Tier 3 (Clustering & Selection): Rank by MPO score. From the top 1000, perform maximum dissimilarity selection to choose 100-200 compounds representing diverse chemotypes for synthesis.

- Tier 4 (Experimental Validation): Test the synthesized compounds using the assays described in Protocol 1.
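The maximum dissimilarity selection in Tier 3 is often implemented as a greedy MaxMin picker. The sketch below is one possible version under simplifying assumptions: fingerprints are represented as plain Python sets of on-bits, distance is 1 minus Tanimoto similarity, and the toy fingerprints are invented for illustration. In practice a cheminformatics toolkit would supply the fingerprints.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints stored as sets of on-bits."""
    if not fp_a and not fp_b:
        return 1.0
    return len(fp_a & fp_b) / len(fp_a | fp_b)


def maxmin_select(fingerprints, n_pick, seed_index=0):
    """Greedy MaxMin picker: repeatedly add the candidate whose nearest
    already-picked neighbour is farthest away (distance = 1 - Tanimoto)."""
    picked = [seed_index]
    # Distance from every candidate to its closest picked compound so far
    min_dist = [1.0 - tanimoto(fp, fingerprints[seed_index]) for fp in fingerprints]
    while len(picked) < min(n_pick, len(fingerprints)):
        next_index = max(
            (i for i in range(len(fingerprints)) if i not in picked),
            key=lambda i: min_dist[i],
        )
        picked.append(next_index)
        for i, fp in enumerate(fingerprints):
            distance = 1.0 - tanimoto(fp, fingerprints[next_index])
            min_dist[i] = min(min_dist[i], distance)
    return picked


# Toy fingerprints (hypothetical on-bit sets), already sorted by MPO score
fps = [
    {1, 2, 3, 4},   # index 0: top-ranked compound, used as the seed
    {1, 2, 3, 5},   # close analogue of index 0
    {10, 11, 12},   # different chemotype
    {10, 11, 13},   # close analogue of index 2
    {20, 21},       # third chemotype
]
selection = maxmin_select(fps, n_pick=3)
print(selection)
```

Seeding with the top-ranked compound keeps the best MPO scorer in the set while the remaining picks spread across chemotypes.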

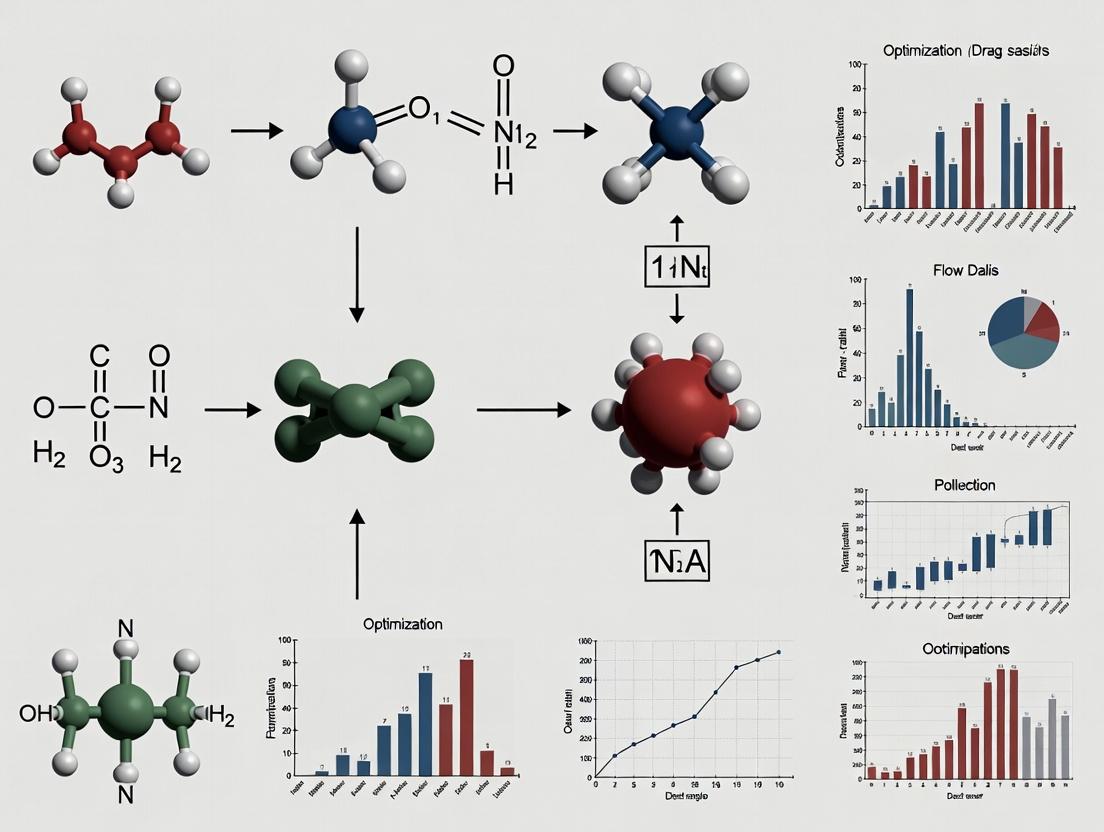

Visualizations

Tiered MPO Screening Workflow

MPO Property Optimization Conflicts

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for MPO-Driven Drug Discovery.

| Item | Function in MPO Experiments | Example Product/Catalog |
|---|---|---|
| Human Liver Microsomes (HLM) | In vitro assessment of Phase I metabolic stability (Clint). | Corning Gentest UltraPool HLM 150-donor |
| Caco-2 Cell Line | Model for predicting intestinal permeability and efflux. | ATCC HTB-37 |
| Phospholipid Vesicles (PLV) | For measuring membrane permeability (PAMPA) as a high-throughput permeability proxy. | Sigma P5358 |
| Recombinant hERG Channel | Key target for in vitro cardiac safety screening. | Eurofins DiscoverX hERG Assay Service |
| Cryopreserved Hepatocytes | For advanced metabolic stability and metabolite identification studies. | BioIVT Human Hepatocytes |
| Multiparameter Assay Plates | Enable simultaneous measurement of cytotoxicity and efficacy in one well. | Corning 3600 Cell Culture Microplates |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My lead compound shows excellent in vitro potency (IC50 < 10 nM) but has very poor aqueous solubility (< 1 µg/mL). What are my primary strategies to improve solubility without destroying potency?

A: This is a classic potency-solubility conflict. High potency often requires strong, lipophilic target binding, which reduces solubility. Your primary strategies are:

- Salt Formation: If the compound has an ionizable group (pKa between 5 and 12), form a salt with an appropriate counterion (e.g., HCl, Na, mesylate). This is the fastest way to increase solubility.

- Prodrug Approach: Attach a solubilizing promoiety (e.g., phosphate ester) that is cleaved in vivo to release the active parent drug.

- Structural Modification: Introduce a minimal, localized polar group (e.g., hydroxyl, amine) or a heteroatom into a lipophilic region not critical for target binding. Physical approaches such as micronization or amorphization can also improve dissolution without changing the structure.

Experimental Protocol: Kinetic Solubility Measurement (UV-plate method)

- Prepare a 10 mM DMSO stock solution of your compound.

- Dilute the stock 1:100 into phosphate-buffered saline (PBS, pH 7.4) in a 96-well plate (final DMSO 1%, compound ~100 µM).

- Shake the plate for 1 hour at room temperature.

- Filter the suspension using a 96-well filter plate (e.g., 0.45 µm low-binding hydrophilic PVDF) into a clean receiver plate.

- Quantify the concentration of the filtrate using a UV-plate reader, comparing to a standard curve of the compound in a known solvent (e.g., 1:1 DMSO:MeOH).

Q2: My compound appears to have good passive permeability, but in Caco-2 assays it shows low apical-to-basolateral apparent permeability (Papp) and a high efflux ratio (ER > 3). What does this indicate, and how can I confirm and address it?

A: This indicates your compound is likely a substrate for efflux transporters, predominantly P-glycoprotein (P-gp). Good passive permeability is being counteracted by active efflux. To confirm and address:

- Confirmatory Experiment: Run the Caco-2 assay in both directions (A-to-B and B-to-A) with and without a selective efflux inhibitor (e.g., 10 µM Elacridar for P-gp). A significant increase in A-to-B Papp and decrease in ER with the inhibitor confirms transporter involvement.

- Mitigation Strategies: Reduce molecular weight and lipophilicity (cLogP). Modify the structure to remove hydrogen bond donors (HBDs), particularly when the HBD count exceeds 5, as HBDs are key recognition elements for P-gp. Consider scaffold hopping to reduce planar aromatic surface area.

Experimental Protocol: Bidirectional Caco-2 Permeability Assay with Inhibitor

- Culture Caco-2 cells on 24-well transwell inserts for 21-23 days to form confluent, differentiated monolayers (TEER > 300 Ω·cm²).

- Prepare transport buffer (HBSS-HEPES, pH 7.4). For inhibitor studies, add Elacridar (10 µM) to both apical and basolateral sides 30 minutes pre-incubation and during the experiment.

- Add compound (typically 10 µM) to the donor compartment (A or B). Take samples from the receiver compartment at 30, 60, 90, and 120 minutes.

- Analyze samples by LC-MS/MS. Calculate Papp and Efflux Ratio (ER = Papp(B-A) / Papp(A-B)).
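The final calculation can be sketched as follows, using the standard relationship Papp = (dQ/dt) / (A × C0) together with the efflux ratio defined above. The receiver volumes, insert area, and concentration readings are hypothetical values chosen for illustration, and the sketch assumes sink conditions with no correction for sample removal.

```python
def slope(x, y):
    """Ordinary least-squares slope of y against x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    return sxy / sxx


def papp_cm_per_s(times_min, receiver_conc_uM, receiver_vol_mL, area_cm2, donor_conc_uM):
    """Papp = (dQ/dt) / (A * C0).

    1 uM equals 1 nmol/mL (1 nmol/cm^3), so Q is in nmol and Papp in cm/s.
    """
    amounts_nmol = [c * receiver_vol_mL for c in receiver_conc_uM]
    times_s = [t * 60.0 for t in times_min]
    dq_dt = slope(times_s, amounts_nmol)  # nmol/s appearing in the receiver
    return dq_dt / (area_cm2 * donor_conc_uM)


times = [30, 60, 90, 120]  # sampling times from the protocol (min)
# Hypothetical receiver readings (uM) for a 10 uM donor on a 0.33 cm^2 insert
papp_ab = papp_cm_per_s(times, [0.010, 0.020, 0.030, 0.040], 0.6, 0.33, 10.0)
papp_ba = papp_cm_per_s(times, [0.24, 0.48, 0.72, 0.96], 0.1, 0.33, 10.0)
efflux_ratio = papp_ba / papp_ab
print(f"Papp(A-B) = {papp_ab:.2e} cm/s, Papp(B-A) = {papp_ba:.2e} cm/s, ER = {efflux_ratio:.1f}")
```

Running the same calculation on the inhibitor-treated wells gives the second ER needed for the with/without comparison.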

Q3: I increased the lipophilicity (cLogP from 2 to 4) of my series to improve permeability, but now I'm seeing signs of off-target toxicity (hERG inhibition, cytotoxicity). How can I dial back toxicity while maintaining permeability?

A: You are facing the lipophilicity-toxicity conflict. High lipophilicity increases membrane partitioning, but it also promotes promiscuous binding to off-target proteins and metabolic instability.

- Strategic De-risking: Reduce cLogP by ~0.5-1.0 unit through introduction of polarity. Focus on adding polarity to the molecule's center or on side chains not involved in permeability, rather than at the ends.

- Reduce Aromaticity: Replace a phenyl ring with a saturated or partially saturated bioisostere (e.g., cyclohexyl, piperidine) to lower planar surface area, which is linked to hERG and cytotoxicity.

- Introduce Metabolic Soft Spots: Purposefully add a site for Phase I metabolism (e.g., aliphatic hydroxyl) to shorten half-life and reduce accumulation-related toxicity.

Experimental Protocol: hERG Inhibition Patch Clamp Assay (Manual)

- Culture hERG-transfected HEK293 or CHO cells on coverslips.

- Use a patch-clamp rig in whole-cell configuration. Establish a stable baseline current by holding at -80 mV, stepping to +20 mV for 4 sec, then to -50 mV for 6 sec (to elicit tail current).

- Perfuse cells with increasing concentrations of test compound (e.g., 0.1, 1, 10 µM). Record tail current amplitude at each concentration.

- Fit the concentration-response data to a Hill equation to calculate IC50.
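The fitting step can be illustrated without external libraries by a brute-force least-squares search over a parameter grid. This is a sketch only: the grid ranges, function names, and data points are assumptions, and a production analysis would normally use a nonlinear optimizer and more than three concentrations.

```python
import math


def hill_fraction_remaining(conc, ic50, hill):
    """Fraction of control tail current remaining at a given concentration."""
    return 1.0 / (1.0 + (conc / ic50) ** hill)


def fit_hill(concs, fractions):
    """Least-squares fit over a log-spaced IC50 grid and a Hill-slope grid."""
    best = None
    for i in range(0, 401):       # IC50 from 0.01 to 1000 uM, 80 steps per decade
        ic50 = 10 ** (-2 + i / 80)
        for j in range(5, 31):    # Hill slope from 0.5 to 3.0 in steps of 0.1
            hill = j / 10
            sse = sum(
                (hill_fraction_remaining(c, ic50, hill) - f) ** 2
                for c, f in zip(concs, fractions)
            )
            if best is None or sse < best[0]:
                best = (sse, ic50, hill)
    return best[1], best[2]


# Hypothetical data: fraction of baseline tail current at 0.1, 1 and 10 uM
concs_uM = [0.1, 1.0, 10.0]
remaining = [0.97, 0.75, 0.23]
ic50, hill = fit_hill(concs_uM, remaining)
pic50 = -math.log10(ic50 * 1e-6)
print(f"IC50 ~ {ic50:.2f} uM, Hill slope ~ {hill:.1f}, pIC50 ~ {pic50:.2f}")
```

Converting the fitted IC50 to pIC50 makes the result directly comparable with the hERG thresholds used in the desirability tables.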

Table 1: Ideal Property Ranges to Balance Key Conflicts

| Property | Optimal Range (General Oral Drugs) | Potency-Solubility Conflict | Permeability-Efflux Conflict | Lipophilicity-Toxicity Conflict |
|---|---|---|---|---|
| cLogP | 1-3 | Often >3 for potency | Often >3 for passive permeability | Keep <4 to reduce toxicity risk |
| Solubility (pH 7.4) | >100 µM | Can be <10 µM | Not primary driver | Can be moderate |
| Permeability (Caco-2 Papp, 10⁻⁶ cm/s) | >5 | Not primary driver | High passive (>10) but low net due to efflux | Must be monitored when reducing LogP |
| Efflux Ratio | <2.5 | Not primary driver | >3 is key indicator | Not primary driver |
| Molecular Weight (Da) | <500 | Can exceed for complex targets | Lower is better (<450) | Lower is better (<500) |
| hERG IC50 | >10 µM | Not primary driver | Not primary driver | Often <10 µM if LogP high |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Key Experiments

| Item | Function & Application |
|---|---|
| PBS (Phosphate Buffered Saline), pH 7.4 | Standard aqueous buffer for solubility and permeability assays, mimicking physiological pH. |
| Caco-2 Cell Line | Human colon adenocarcinoma cell line; the gold standard in vitro model for predicting intestinal permeability and efflux. |
| Transwell Permeable Supports | Polycarbonate membrane inserts for culturing cell monolayers for bidirectional transport assays. |
| Elacridar (GF120918) | Potent, selective dual inhibitor of P-gp and BCRP efflux transporters; used in mechanistic permeability studies. |
| hERG-Transfected Cell Line (e.g., HEK293-hERG) | Cell line stably expressing the hERG potassium channel for cardiac safety screening. |
| LC-MS/MS System | Essential analytical tool for quantifying low compound concentrations in complex matrices like transport buffer or plasma. |

Experimental Workflow & Relationship Diagrams

Title: Strategies to Resolve Potency-Solubility Conflict

Title: Diagnosing and Addressing Efflux Transporter Issues

Title: Lipophilicity-Driven Toxicity and Mitigation Pathways

Technical Support Center: Troubleshooting Multi-Property Optimization in Drug Design

FAQs & Troubleshooting Guides

Q1: Our lead compound shows excellent in vitro potency (IC50 < 10 nM) but suffers from extremely poor aqueous solubility (< 1 µg/mL), halting formulation. What are the primary chemical structural drivers of this conflict, and how can we diagnose them?

A: This is a classic Absorption-Potency conflict. High potency often requires large, planar, lipophilic structures for strong target binding (e.g., in kinase inhibitors), which directly opposes solubility needs. Diagnose using these steps:

- Structural Analysis: Calculate logP (ClogP > 5 is a strong indicator), count aromatic rings (>3 is a risk), and identify planar fused ring systems.

- Thermodynamic Solubility Measurement: Follow the Shake-Flask Protocol below to confirm the intrinsic solubility limit.

- Data Correlation: Use the table below to correlate structural features with your measured properties.

Key Structural Drivers of Low Solubility:

| Structural Feature | Impact on Solubility | Typical Threshold for Conflict |
|---|---|---|
| High Lipophilicity (ClogP/LogD) | Reduces aqueous dissolution | ClogP > 5, LogD7.4 > 4 |
| Molecular Rigidity (Fraction sp3) | Increases melting point, reduces dissolution | Fraction sp3 (Fsp3) < 0.3 |
| Aromatic Ring Count | Increases crystal packing density | Number of Aromatic Rings > 3 |
| Low Ionizability (pKa) | Limits salt formation potential | No ionizable group in pKa range 3-10 |
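The thresholds in the table can be turned into a simple flagging routine for the data-correlation step. This is a sketch that assumes the descriptors have already been calculated elsewhere; the function name and the example values are hypothetical.

```python
def solubility_risk_flags(clogp, logd74, fsp3, n_aromatic_rings, ionizable_pkas):
    """Return the structural risk flags triggered by a compound.

    Thresholds are the "Typical Threshold for Conflict" values from the table;
    descriptor values are assumed to be pre-computed.
    """
    flags = []
    if clogp > 5 or logd74 > 4:
        flags.append("high lipophilicity")
    if fsp3 < 0.3:
        flags.append("low Fsp3 (rigid, flat)")
    if n_aromatic_rings > 3:
        flags.append("high aromatic ring count")
    if not any(3 <= pka <= 10 for pka in ionizable_pkas):
        flags.append("no ionizable group (pKa 3-10)")
    return flags


# Hypothetical kinase-inhibitor-like lead with no ionizable centre
lead_flags = solubility_risk_flags(
    clogp=5.4, logd74=4.2, fsp3=0.18, n_aromatic_rings=4, ionizable_pkas=[]
)
print(lead_flags)
```

Listing the triggered flags next to the measured shake-flask solubility for each analog makes it easier to see which structural driver dominates in a given series.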

Experimental Protocol: Thermodynamic Solubility (Shake-Flask Method)

- Objective: Determine the equilibrium concentration of the compound in aqueous buffer.

- Materials: Excess solid compound, relevant pH buffer (e.g., Phosphate Buffered Saline, pH 7.4), water bath shaker, HPLC system.

- Method:

- Add a 5-10 mg excess of solid compound to 1 mL of buffer in a sealed vial.

- Agitate in a water bath shaker at 25°C for 24 hours to reach equilibrium.

- Filter the suspension through a 0.45 µm low-binding hydrophilic filter (e.g., PVDF) to remove undissolved solid.

- Dilute the filtrate appropriately and quantify concentration using a validated HPLC-UV method against a standard curve.

- Perform in triplicate.

Q2: We are optimizing for metabolic stability (targeting low CYP3A4 clearance) but see a sharp increase in hERG inhibition (cardiotoxicity risk) in the same compound series. What is the structural link?

A: This conflict arises from shared pharmacophores. Blocking metabolically labile sites often involves adding lipophilic, basic amines or incorporating large, planar heteroaromatic systems—features that are also known to bind the hydrophobic/aromatic cavity of the hERG channel pore.

Diagnostic & Mitigation Strategy:

- Calculate pKa and Lipophilicity: Compounds with a basic pKa > 8.0 and high LogD7.4 (>3) are high risk for hERG.

- Introduce Polarity: Strategically add polar groups (e.g., hydroxyl, amide) to reduce LogD without removing the metabolic blocker. Consider carboxylic acids or neutral groups to eliminate the basic center.

- Utilize Predictive Models: Run in silico hERG models early. Use the table below to guide redesign.

Structural Modifications to Balance Stability & hERG:

| Optimization Goal | Typical Structural Change | hERG Risk Consequence | Mitigation Tactic |
|---|---|---|---|
| Block CYP3A4 Oxidation | Add bulky substituent near soft spot | Increases lipophilicity/planarity | Introduce polarity within the bulky group (e.g., morpholine instead of phenylpiperazine) |
| Improve Microsomal Stability | Replace labile group with stable aromatic ring | Increases aromatic count/planarity | Reduce ring count elsewhere or break planarity with sp3 linkers. |

Q3: How do we systematically manage the conflict between achieving high membrane permeability (for CNS targets) and maintaining sufficient solubility for intravenous administration?

A: This Permeability-Solubility conflict is usually framed in terms of the "Rule of 5" and its extensions, and it requires a quantitative balance. The key is to track Lipophilic Efficiency (LipE) and apply property-based design.

Workflow for Balancing Permeability & Solubility:

- Measure/Calculate Key Properties: Determine LogD7.4, Topological Polar Surface Area (TPSA), and intrinsic solubility.

- Calculate LipE: LipE = pIC50 (or pEC50) - LogD. Aim for high LipE (>5), meaning potency is not purely driven by lipophilicity.

- Apply Solubility-Enhancing Modifications Judiciously: Use the toolkit below. The goal is to add just enough polarity to meet solubility criteria without dropping LogD below the permeability threshold (~LogD 1-3 for good passive permeability).
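The LipE calculation in this workflow is simple enough to sketch directly. The two analogues below are hypothetical; the LogD window of 1 to 3 is the approximate passive-permeability range quoted above.

```python
import math


def pic50_from_nM(ic50_nM):
    """Convert an IC50 in nM to pIC50 (-log10 of the molar IC50)."""
    return -math.log10(ic50_nM * 1e-9)


def lipe(ic50_nM, logd):
    """Lipophilic efficiency: LipE = pIC50 - LogD."""
    return pic50_from_nM(ic50_nM) - logd


# Two hypothetical analogues with the same potency but different lipophilicity
for name, ic50, logd in [("analogue A", 10.0, 4.5), ("analogue B", 10.0, 2.5)]:
    value = lipe(ic50, logd)
    in_window = 1.0 <= logd <= 3.0  # approximate passive-permeability window
    print(f"{name}: LipE = {value:.1f}, LogD in 1-3 window: {in_window}")
```

Both analogues have a pIC50 of 8, but only the less lipophilic one clears the LipE > 5 target, showing that its potency is not purely lipophilicity-driven.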

Title: Workflow for Permeability-Solubility Conflict Resolution

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Primary Function | Role in Resolving Property Conflicts |
|---|---|---|
| Chromatographic LogD7.4 Assay Kit | Measures distribution coefficient at physiological pH. | Quantifies lipophilicity, the central driver of permeability/solubility/toxicity conflicts. |
| Artificial Membrane Permeability Assay (PAMPA) | Predicts passive transcellular permeability. | Screens compounds early for permeability before costly cell-based assays. |
| Recombinant CYP Enzymes (e.g., 3A4, 2D6) | Identifies specific metabolic liabilities and soft spots. | Allows targeted structural blocking to improve stability without indiscriminate lipophilicity increase. |
| hERG Channel Expressing Cell Line | In vitro assessment of cardiotoxicity risk (patch-clamp or flux). | Directly tests the metabolic stability vs. hERG inhibition conflict. |
| High-Throughput Thermodynamic Solubility Assay | Measures equilibrium solubility in buffer. | Provides reliable solubility data to correlate with structural changes. |
| Molecular Fragmentation/Library of Bricks | Pre-synthesized fragments (e.g., polar heterocycles, sp3-rich linkers). | Enables rapid "property-scanning" by introducing specific features to modulate LogD, TPSA, pKa. |

Q4: When applying molecular rigidity (e.g., macrocyclization, adding fused rings) to improve selectivity and potency, we observe a catastrophic drop in solubility and synthetic yield. How can this be planned for?

A: This is a Potency/Specificity vs. Developability conflict. Rigidity reduces the entropic penalty upon binding but often maximizes crystal packing. Proactive planning is essential.

Pre-Modification Risk Assessment Checklist:

- Calculate Fraction sp3 (Fsp3). A starting Fsp3 < 0.25 is high risk; rigidity will push it lower.

- Analyze Synthetic Complexity: Count chiral centers and ring strain in the proposed rigid structure.

- Simulate First: Use computational tools to predict the solubilities of virtual rigid analogs before synthesis.

Mitigation Protocol: "Rigidity with a Polar Handle"

- During the rigid scaffold design, intentionally incorporate at least one heteroatom (N, O) directly into the new ring or bridge.

- This atom can serve as a site for salt formation (if ionizable) or hydration.

- Example: When creating a macrocycle from a linear peptide, replace one hydrocarbon linker with a polyethylene glycol (PEG)-type unit or an ester.

Title: Strategic Rigidification to Avoid Developability Failure

Technical Support Center

Troubleshooting Guide: Multi-Property Optimization (MPO) Conflicts in Drug Design

Issue 1: High In Vitro Potency but Poor Metabolic Stability

- Q: My lead compound shows excellent target binding (IC50 < 10 nM) in biochemical assays but suffers from rapid clearance in human liver microsome (HLM) stability tests. What are the primary troubleshooting steps?

- A: This is a classic MPO conflict between potency and metabolic stability. Follow this protocol:

- Analyze Metabolic Soft Spots: Use liquid chromatography-mass spectrometry (LC-MS) to identify major metabolites from the HLM assay. Common metabolic routes include N-dealkylation, O-dealkylation, and aromatic hydroxylation.

- Structure-Guided Mitigation: Employ strategic fluorination or deuteration to block labile sites, or introduce small steric hindrances (e.g., methyl groups) near metabolically labile positions.

- Iterative Design & Testing: Synthesize a focused library of 5-10 analogs carrying the modifications chosen in the structure-guided mitigation step. Re-test in parallel for both potency (binding assay) and stability (HLM half-life).

Issue 2: Achieving Target Engagement but Failing Due to hERG Inhibition

- Q: Our candidate demonstrates robust proof of concept in a disease model but shows concerning hERG channel inhibition in a patch-clamp assay, posing a cardiac safety risk. How can we resolve this?

- A: To navigate the conflict between efficacy and cardiac safety:

- Molecular Determinants Analysis: Perform a computational analysis (e.g., homology modeling, molecular docking) to understand the compound's interaction with the hERG channel's inner cavity, often driven by basic amines and aromatic groups.

- Structural Alert Mitigation: Reduce pKa of basic centers (pKa < 8.0 is often targeted), introduce polarity, or reduce lipophilicity (clogP reduction) to disrupt hydrophobic interactions with hERG.

- Selective Optimization of Side Activities (SOSA): Use the core scaffold but systematically alter the substituent suspected of hERG interaction. Test analogs in a medium-throughput hERG binding assay (in vitro safety panel) early in the optimization cycle.

Issue 3: Optimal Physicochemical Properties but Low In Vivo Efficacy

- Q: A compound series has ideal calculated properties (clogP ~3, TPSA ~80 Ų) but shows weak or no efficacy in the mouse efficacy model. What should I check?

- A: This indicates a potential disconnect between in vitro and in vivo performance.

- Confirm Exposure: First, re-run the in vivo study with robust pharmacokinetic (PK) sampling. Ensure the compound reaches the target site at sufficient concentration (Cmax) and duration (AUC). Low exposure often explains failure.

- Check for Off-Target Binding: If exposure is adequate, profile the compound in a broad in vitro pharmacological panel to identify potential off-target activities that could counteract the intended effect.

- Assess Target Engagement In Vivo: If possible, use a pharmacodynamic (PD) biomarker assay (e.g., phosphorylation status of a downstream protein) in tissues from the dosed animals to confirm that the compound is engaging its intended target in vivo.

Frequently Asked Questions (FAQs)

Q1: What are the most common property conflicts leading to Phase I failure?

A: The primary conflicts leading to early clinical failure are between efficacy/physicochemical properties and safety. Specifically:

- Efficacy vs. Metabolic Stability: Achieving high potency often requires lipophilic, aromatic structures, which are prone to rapid Phase I metabolism.

- Permeability vs. Solubility: Increasing lipophilicity to cross cell membranes (e.g., for CNS targets) often decreases aqueous solubility, compromising oral bioavailability.

- Target Potency vs. Selectivity: Highly potent molecules can bind to off-target proteins with similar active sites, leading to toxicity (e.g., hERG inhibition).

Q2: How can I prioritize which MPO conflict to solve first in a lead series?

A: Prioritize based on clinical attrition risk. Use this decision matrix:

- Address show-stoppers first: Resolve clear safety liabilities (e.g., genotoxicity, strong hERG inhibition) or fatal ADME flaws (e.g., no oral bioavailability) immediately.

- Quantify trade-offs: Use quantitative metrics like Ligand Efficiency (LE) and Lipophilic Ligand Efficiency (LLE). A compound with high potency but very high lipophilicity (low LLE) is a priority for optimization.

- Consider the target product profile (TPP): Align optimization with the intended route of administration and dosing regimen (e.g., a once-daily oral drug requires higher metabolic stability than an injectable).

Q3: What in silico tools are most effective for early MPO conflict prediction?

A: A tiered computational approach is recommended:

- Early Filtering: Use rule-based filters (e.g., RO5, PAINS) and rapid property calculators (clogP, TPSA, HBD/HBA).

- Conflict Prediction: Employ machine learning-based property prediction and MPO scoring models (e.g., logD predictors such as AstraZeneca's AZlogD, or Random Forest models trained on past compound outcomes) to score compounds across multiple parameters simultaneously.

- Deep Dive: For specific conflicts like hERG, use structure-based modeling (e.g., homology models of the hERG channel) or advanced QSAR models.

Q4: What is a practical experimental workflow for managing MPO?

A: Implement an integrated, parallelized workflow to avoid sequential optimization traps.

Title: Integrated MPO Lead Optimization Workflow

Data Presentation: Primary Causes of Attrition in Development

Table 1: Quantitative Analysis of Clinical Phase Attrition Causes (Simplified)

| Development Phase | Primary Cause of Attrition | Estimated Failure Rate | Key MPO Conflict Implicated |
|---|---|---|---|
| Preclinical to Phase I | Poor Pharmacokinetics (PK) / Bioavailability | ~40% | Potency vs. Metabolic Stability; Permeability vs. Solubility |
| Phase II | Lack of Efficacy | ~50-55% | Inadequate in vivo target engagement due to suboptimal physicochemical properties or off-target binding. |
| Phase III | Safety/Toxicity | ~30% | Insufficient selectivity (Potency vs. Selectivity), reactive metabolite formation. |

Table 2: Key Property Ranges for Oral Drug Candidates

| Property | Optimal Range (General Oral Drugs) | "Red Flag" Zone | Measurement Method |
|---|---|---|---|
| clogP | 1 - 3 | >5 | Chromatographic (logD7.4) or computational |
| Molecular Weight (MW) | <500 Da | >600 Da | -- |
| Topological Polar Surface Area (TPSA) | 60 - 140 Ų | <40 or >160 Ų | Computational |
| hERG IC50 | >10 µM | <1 µM | Patch-clamp or binding assay |
| Human Liver Microsome (HLM) Stability | % remaining > 50% | % remaining < 20% | LC-MS/MS analysis |
| Solubility (pH 7.4) | >100 µM | <10 µM | Kinetic or thermodynamic assay |

Experimental Protocol: Integrated MPO Profiling for Lead Series

Protocol Title: Parallel In Vitro Profiling to Identify and Mitigate MPO Conflicts

Objective: To simultaneously evaluate key drug-like properties of a compound series (5-20 compounds) to identify optimization conflicts and guide chemical design.

Materials & Reagents (The Scientist's Toolkit):

- Target Binding Assay Kit: (e.g., fluorescence polarization, TR-FRET). Function: Measures primary pharmacological potency (IC50/Kd).

- Human Liver Microsomes (HLM) Pool: Function: Assess metabolic stability by measuring intrinsic clearance.

- Caco-2 Cell Line: Function: Model for predicting intestinal permeability and potential for oral absorption.

- hERG Inhibition Assay Kit: (e.g., Fluorescent membrane potential dye or patch-clamp cells). Function: Flags potential cardiac safety liabilities.

- Phosphate-Buffered Saline (PBS) at pH 7.4: Function: Medium for thermodynamic solubility measurement.

- LC-MS/MS System: Function: Quantifies compound concentration in stability, permeability, and solubility assays.

Methodology:

- Sample Preparation: Prepare a master stock solution (10 mM in DMSO) of each test compound. Dilute in appropriate assay buffers for each protocol, ensuring final DMSO concentration ≤0.5% (v/v).

- Parallel Assay Execution:

- Potency: Perform dose-response target inhibition assay (n=3). Calculate IC50.

- Metabolic Stability: Incubate 1 µM compound with 0.5 mg/mL HLM + NADPH. Sample at 0, 5, 15, 30, 60 min. Quench with acetonitrile. Use LC-MS/MS to determine parent compound remaining. Calculate half-life (t1/2).

- Permeability: Seed Caco-2 cells on transwell inserts. On day 21, apply compound to donor chamber (apical for A→B, basolateral for B→A). Sample from receiver chamber at 60 and 120 min. Calculate apparent permeability (Papp) and efflux ratio.

- Solubility: Shake excess solid compound in PBS pH 7.4 for 24h at 25°C. Filter and quantify concentration of supernatant by LC-MS/MS.

- hERG Inhibition: Perform a single-point inhibition assay at 10 µM compound concentration. For hits (>50% inhibition), perform a full IC50 determination.

- Data Integration: Compile all results into a single data table. Calculate efficiency indices (LLE = pIC50 - clogP). Normalize and weight scores based on project TPP to generate a ranked list.
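The half-life calculation in the metabolic stability step, and its conversion to intrinsic clearance, can be sketched as below. It assumes first-order loss of parent compound and uses the 0.5 mg/mL microsomal protein concentration from the protocol; the percent-remaining values are synthetic, generated for a 30-minute half-life.

```python
import math


def half_life_min(times_min, percent_remaining):
    """t1/2 from the slope of ln(% remaining) vs. time (first-order decay)."""
    ln_remaining = [math.log(p) for p in percent_remaining]
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_y = sum(ln_remaining) / n
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_min, ln_remaining))
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    k = -sxy / sxx  # elimination rate constant (1/min)
    return math.log(2) / k


def clint_uL_min_mg(t_half_min, protein_mg_per_mL=0.5):
    """In vitro intrinsic clearance: (ln 2 / t1/2) * (uL incubation per mg protein)."""
    return (math.log(2) / t_half_min) * (1000.0 / protein_mg_per_mL)


times = [0, 5, 15, 30, 60]  # sampling times from the protocol (min)
remaining = [100.0 * 0.5 ** (t / 30) for t in times]  # synthetic data, t1/2 = 30 min
t_half = half_life_min(times, remaining)
clint = clint_uL_min_mg(t_half)
print(f"t1/2 = {t_half:.1f} min, Clint = {clint:.1f} uL/min/mg protein")
```

The resulting Clint value can be placed alongside pIC50, Papp, solubility, and hERG data in the integrated table before scores are normalized and weighted.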

Diagram: Key ADME & Safety Pathways in Drug Attrition

Title: Key ADME & Safety Pathways Leading to Attrition

Technical Support Center: Troubleshooting Multi-Property Optimization Conflicts

This support center provides targeted guidance for researchers navigating the complex landscape of multi-property optimization (MPO) in drug design. The FAQs and protocols are framed within the historical analysis of campaigns where competing objectives—such as potency, solubility, metabolic stability, and selectivity—led to success or failure.

FAQ 1: My lead compound has excellent in vitro potency but consistently fails in vivo efficacy models. What are the primary historical conflict points I should investigate?

Answer: Historically, this is one of the most common optimization failures, often caused by a narrow focus on a single property. The conflict typically lies between Target Potency and Drug Metabolism & Pharmacokinetics (DMPK). Retrospective analyses of successful campaigns frame it as a system-level conflict rather than a single-property problem.

- Primary Culprits: Poor metabolic stability (rapid clearance), low solubility (limiting bioavailability), or inadequate permeability.

- Historical Lesson (VEGFR-2 Inhibitors): Early candidates with sub-nanomolar IC50 failed in vivo due to high lipophilicity (cLogP >5), leading to excessive plasma protein binding and low free drug concentration. Successful candidates (e.g., Sorafenib analogs) balanced potency with controlled lipophilicity (cLogP ~3-4).

Key Quantitative Data from Historical Campaigns: Table 1: Comparative Analysis of Failed vs. Successful Optimization Campaigns on Key Parameters

| Campaign / Compound Series | Primary Target (Potency, IC50) | Conflicting Property | Key Compromise / Solution | Outcome |

|---|---|---|---|---|

| Early β-Secretase (BACE1) Inhibitors (Failed) | <10 nM | High Molecular Weight (>700), Poor BBB Permeability (P-gp substrate) | None initially; potency-driven design. | Clinical failure for Alzheimer's. |

| Later BACE1 Inhibitors (Improved Brain Penetrance) | ~10-20 nM | Maintained MW <650, introduced polarity to reduce P-gp efflux. | Sacrificed maximal in vitro potency for brain penetrance. | Reached late-stage clinical trials (e.g., Elenbecestat), but no BACE1 inhibitor has gained approval. |

| Early Kinase Inhibitor (c-Met) (Failed) | <1 nM | Off-target toxicity (hERG inhibition, IC50 < 1µM) | None; project halted. | Terminated due to cardiac risk. |

| Successful c-Met Inhibitor (Capmatinib) | ~0.13 nM | Rigorously screened against hERG and optimized structure to reduce basicity. | Introduced steric hindrance near basic amine to disrupt hERG binding. | Approved for NSCLC. |

| Pre-2010 COX-2 Inhibitors (Failed) | High COX-2 Selectivity | Cardiovascular safety (unforeseen off-target effects). | Optimization for selectivity alone was insufficient. | Market withdrawals (e.g., Rofecoxib). |

| Modern NSAID Design (Lesson Learned) | Balanced COX-1/COX-2 inhibition | Integrated cardiovascular safety panels early in lead optimization. | MPO includes broad in vitro safety pharmacology. | Safer therapeutic window. |

FAQ 2: How can I systematically diagnose the root cause of a solubility-potency conflict in my analog series?

Answer: Implement a Parallel Medicinal Chemistry (PMC) diagnostic protocol. Historical successes show that systematic, hypothesis-driven variation is more effective than serial optimization.

Experimental Protocol: Diagnostic PMC Array

Objective: To decouple the effects of specific structural motifs on solubility (measured by kinetic solubility in PBS pH 7.4) and potency (target enzyme IC50).

- Define your Core Scaffold: Identify the common structure in your active series.

- Select 3-4 "High-Risk" Regions: Choose sites on the scaffold historically linked to lipophilicity (e.g., aromatic rings, halogens) and potency (e.g., hinge-binding motifs).

- Design a Sparse Matrix Library: Synthesize 20-30 analogs where each "risk region" is varied with 2-3 distinct substituents representing a range of calculated properties (e.g., cLogP, H-bond donors/acceptors, topological polar surface area [TPSA]).

- Parallel Measurement: Test all analogs in parallel for:

- In vitro potency (primary target assay).

- Kinetic solubility (shake-flask method, HPLC/UV quantification).

- Calculated cLogP and TPSA (computational).

- Data Analysis: Plot IC50 vs. Solubility. Use color-coding for specific substituent changes. This visual map will identify which specific change improves solubility with minimal potency loss, revealing the actionable structural handle.
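
The data analysis step can be sketched as a simple comparison of each analog against the parent compound. The example below is a hedged illustration: the analog records, the substituent changes, and the thresholds (a solubility gain of at least 0.5 log units with no more than 0.3 log units of potency loss) are all hypothetical and should be replaced by project-specific values.

```python
import math

# Hypothetical parent compound and PMC array results.
parent = {"pIC50": 8.5, "sol_uM": 5.0}
analogs = [
    {"id": "A1", "region": "R1", "change": "Cl -> OMe", "pIC50": 8.4, "sol_uM": 22.0},
    {"id": "A2", "region": "R1", "change": "Cl -> morpholine", "pIC50": 7.2, "sol_uM": 150.0},
    {"id": "A3", "region": "R2", "change": "Ph -> pyridyl", "pIC50": 8.3, "sol_uM": 60.0},
    {"id": "A4", "region": "R3", "change": "H -> CF3", "pIC50": 8.9, "sol_uM": 1.5},
]

def classify(analog, ref, min_log_sol_gain=0.5, max_potency_loss=0.3):
    """Flag analogs that gain solubility with minimal potency loss vs. the parent."""
    d_pot = analog["pIC50"] - ref["pIC50"]
    d_sol = math.log10(analog["sol_uM"] / ref["sol_uM"])
    actionable = d_sol >= min_log_sol_gain and d_pot >= -max_potency_loss
    return {**analog, "d_pIC50": round(d_pot, 2), "d_logS": round(d_sol, 2),
            "actionable": actionable}

results = [classify(a, parent) for a in analogs]
handles = [r["id"] for r in results if r["actionable"]]

for r in results:
    print(r["id"], r["region"], r["change"], r["d_pIC50"], r["d_logS"], r["actionable"])
```

Grouping the flagged analogs by `region` then points to the structural handle worth pursuing.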

Diagram 1: Diagnostic workflow for solubility-potency conflicts.

FAQ 3: My compound shows promising activity and DMPK but has triggered a toxicity flag in a panel. How do I prioritize optimization efforts without losing key properties?

Answer: This is a Safety vs. Efficacy conflict. The critical step is to determine if the toxicity is mechanism-based (on-target) or off-target. Historical failures often misdiagnosed this.

Experimental Protocol: Toxicity De-risking Cascade

- Confirmatory Assay: Repeat the initial toxicity assay (e.g., mitochondrial toxicity, hERG inhibition, genotoxicity) with a fresh sample in a dose-response format to establish a robust IC50 or TC50.

- Selectivity Ratio: Calculate the ratio between the toxic concentration (e.g., hERG IC50) and the target efficacy concentration (e.g., primary target IC50 or projected human Cmax). A ratio <30 is a major red flag.

- Counter-Screen - On-Target vs. Off-Target:

- On-Target Test: In a relevant cell-based model, does the toxic effect (e.g., cytotoxicity) occur at a similar concentration to the primary pharmacological effect? Repeat the experiment after genetic knockdown (e.g., siRNA) of your target. If the compound remains toxic when the target is depleted, the toxicity is likely off-target.

- Off-Target Profiling: Engage a broad-panel in vitro safety pharmacology screen (e.g., against 50+ GPCRs, kinases, ion channels).

- Structural Informatics: If off-target, use computational models (e.g., similarity searching, 3D pharmacophore) to identify the potential offending off-target. Synthesize minimal analogs to break interaction with the off-target while monitoring primary potency.
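
The selectivity ratio and the on-target versus off-target call in the cascade above reduce to a few lines of logic. The sketch below assumes concentrations in µM and uses the 30-fold threshold from the protocol; the function names and example values are illustrative, not part of any established library.

```python
def safety_margin(tox_ic50_um, efficacy_conc_um):
    """Ratio of the toxic concentration to the efficacious concentration."""
    if efficacy_conc_um <= 0:
        raise ValueError("efficacy concentration must be positive")
    return tox_ic50_um / efficacy_conc_um

def triage_toxicity(tox_ic50_um, efficacy_conc_um,
                    tox_persists_after_knockdown, min_margin=30.0):
    """Summarize the de-risking cascade for one compound."""
    margin = safety_margin(tox_ic50_um, efficacy_conc_um)
    return {
        "margin": margin,
        "red_flag": margin < min_margin,
        # Toxicity that survives target knockdown cannot be mechanism-based.
        "origin": "off-target" if tox_persists_after_knockdown else "on-target",
    }

# Hypothetical compounds: hERG IC50 vs. projected free Cmax (both in uM).
flagged = triage_toxicity(3.0, 0.2, tox_persists_after_knockdown=True)
clean = triage_toxicity(30.0, 0.05, tox_persists_after_knockdown=False)
print(flagged)
print(clean)
```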

Diagram 2: Decision cascade for investigating toxicity flags.

The Scientist's Toolkit: Research Reagent Solutions for MPO Conflict Resolution

Table 2: Essential Tools for Multi-Property Optimization Experiments

| Reagent / Tool | Function in MPO Conflict Resolution | Example / Vendor (Illustrative) |

|---|---|---|

| Phospholipid Vesicle (PLV) Assay Kits | Measures membrane permeability independent of active transport, diagnosing passive diffusion limits in potency-PK conflicts. | PAMPA (Parallel Artificial Membrane Permeability) kits. |

| Metabolic Stability Microsomes (Human, Rat, Mouse Liver) | Provides early, high-throughput data on intrinsic clearance, informing the stability-potency trade-off. | Pooled liver microsomes from Xenotech or Corning. |

| Recombinant CYP450 Isozyme Panels | Identifies specific metabolic soft spots driven by structural motifs, guiding targeted synthesis. | Baculosomes (Invitrogen) for CYP3A4, 2D6, etc. |

| hERG Channel Inhibition Assay | Non-negotiable early screen for cardiovascular risk, a common conflict with basic, lipophilic amines in kinase inhibitors. | Patch-clamp or flux-based assays (Eurofins, ChanTest). |

| Kinetic Solubility Assay Plates | Enables high-throughput measurement of thermodynamic/kinetic solubility for diagnostic PMC libraries. | 96-well filter plates with UV quantification. |

| In Silico Property Prediction Suites | Predicts cLogP, TPSA, pKa, metabolic sites, and ligand efficiencies before synthesis, enabling virtual MPO scoring. | Software like StarDrop, Schrodinger's Suite, MOE. |

| Selectivity Screening Panels | Broad profiling against related targets (e.g., kinase panels) or safety targets to identify off-target toxicity sources early. | Eurofins Cerep Profile, DiscoverX ScanMax. |

Frameworks for Balance: Modern Computational and Experimental MPO Strategies

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My weighted scoring function yields a high score for a compound that fails a key in vitro assay. How do I debug this conflict?

A: This indicates a misalignment between your scoring function weights and experimental reality. Follow this protocol:

- Recalibration Check: Re-evaluate your property weights. A common error is over-emphasizing predicted binding affinity (e.g., docking score) while under-weighting ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties.

- Reference Compound Analysis: Score a set of known active and inactive compounds with your function. If actives score poorly or inactives score highly, the function is not capturing the correct property landscape.

- Sensitivity Analysis Protocol:

- Systematically vary each weight in your function (±10%, ±25%).

- Re-rank your compound library for each variation.

- Identify which weight change causes the problematic compound to drop in rank. This property's weight was likely set too high.

- Solution: Adjust weights based on sensitivity analysis and incorporate a penalty term for the failed assay's predicted property. Re-validate with a separate test set.
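
The sensitivity analysis protocol above can be sketched as follows. This is an illustrative example under stated assumptions: the three-compound library, its normalized scores, and the starting weights are invented, and perturbed weights are renormalized so they still sum to 1.

```python
def composite(scores, weights):
    """Weighted sum of normalized scores; weights are renormalized to sum to 1."""
    total = sum(weights.values())
    return sum(weights[p] / total * scores[p] for p in weights)

def rank_of(target, library, weights):
    """1-based rank of `target` when the library is sorted by composite score."""
    ordered = sorted(library, key=lambda cid: composite(library[cid], weights),
                     reverse=True)
    return ordered.index(target) + 1

def weight_sensitivity(target, library, weights,
                       deltas=(-0.25, -0.10, 0.10, 0.25)):
    """Baseline rank plus the rank shift caused by each single-weight change."""
    baseline = rank_of(target, library, weights)
    shifts = {}
    for prop in weights:
        for delta in deltas:
            perturbed = dict(weights)
            perturbed[prop] = weights[prop] * (1.0 + delta)
            shifts[(prop, delta)] = rank_of(target, library, perturbed) - baseline
    return baseline, shifts

# Hypothetical normalized scores (0-1, higher is better).
library = {
    "cpd_X": {"docking": 0.95, "solubility": 0.10, "stability": 0.40},
    "cpd_Y": {"docking": 0.65, "solubility": 0.70, "stability": 0.60},
    "cpd_Z": {"docking": 0.60, "solubility": 0.80, "stability": 0.70},
}
weights = {"docking": 0.6, "solubility": 0.2, "stability": 0.2}

baseline, shifts = weight_sensitivity("cpd_X", library, weights)
for (prop, delta), shift in shifts.items():
    print(f"{prop:>10} {delta:+.0%}: rank shift {shift:+d}")
```

In this toy case, lowering the docking weight by 25% drops `cpd_X` from first to last, which suggests the docking weight is what props up the problematic compound.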

Q2: When using the Derringer-Suich desirability function, how do I choose the appropriate shape (linear vs. non-linear) for individual property transformations?

A: The shape determines the penalty for moving away from the target. Use this decision framework:

| Desired Response | Shape Parameter (s, t) | Typical Use Case |

|---|---|---|

| "Target is Best" (Two-sided) | s and t > 1 | Precisely hitting a target pKa or logP value. |

| "Larger is Better" | s = 1 (Linear) | General case for increasing efficacy (e.g., % inhibition). |

| "Larger is Better" | s > 1 (Convex) | Aggressive penalty for falling below target; for critical efficacy thresholds. |

| "Smaller is Better" | t = 1 (Linear) | General case for reducing toxicity or cost. |

| "Smaller is Better" | t > 1 (Convex) | Aggressive penalty for exceeding limit; for stringent safety limits (e.g., % hERG inhibition at a fixed concentration). |

Experimental Protocol for Determining Shape:

- Define "acceptable" and "ideal" ranges for each property through literature review and preliminary experiments.

- Plot your transformed desirability (d_i) from 0 to 1 against the raw property value.

- If a linear drop from "ideal" to "acceptable" is tolerable, use a linear shape (s or t=1).

- If performance degrades rapidly outside the ideal zone, use a convex shape (s or t > 1). Fit the parameter to match your historical data on property-activity relationships.
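
To make the effect of the shape parameters concrete, here is a minimal sketch of the three Derringer-Suich transformations using the s and t exponents from the table above. The bounds in the example calls are hypothetical; in practice they come from the "acceptable" and "ideal" ranges defined in the first step of the protocol.

```python
def d_larger_is_better(y, low, target, s=1.0):
    """0 at or below `low`, 1 at or above `target`, power-law ramp in between."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return ((y - low) / (target - low)) ** s

def d_smaller_is_better(y, target, high, t=1.0):
    """1 at or below `target`, 0 at or above `high`, power-law ramp in between."""
    if y <= target:
        return 1.0
    if y >= high:
        return 0.0
    return ((high - y) / (high - target)) ** t

def d_target_is_best(y, low, target, high, s=1.0, t=1.0):
    """Two-sided form: 1 at `target`, falling to 0 at `low` and `high`."""
    if y <= low or y >= high:
        return 0.0
    if y <= target:
        return ((y - low) / (target - low)) ** s
    return ((high - y) / (high - target)) ** t

# pIC50 of 7.0 with acceptable = 6.0 and ideal = 8.0 (hypothetical bounds).
linear = d_larger_is_better(7.0, 6.0, 8.0, s=1.0)
convex = d_larger_is_better(7.0, 6.0, 8.0, s=2.0)
print(linear, convex)
```

The same midpoint value scores 0.5 with a linear shape and 0.25 with s = 2, which is the "aggressive penalty" behavior described in the table.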

Q3: How do I handle properties with different units and scales when combining them into a single index, without the composite score being dominated by one property?

A: This requires normalization before applying weights or desirability functions.

Detailed Methodology for Robust Normalization:

- Collect Data: Gather property data for all compounds in your optimization set.

- Choose Normalization Method:

- Min-Max Scaling: (X - X_min) / (X_max - X_min). Sensitive to outliers.

- Z-score Standardization: (X - μ) / σ. Assumes normal distribution.

- Robust Scaling: (X - Median) / IQR. Best for data with outliers.

- Protocol for Min-Max Scaling (Most Common for Desirability):

- For "Larger is Better": Scaled_Score = (Value - Min_Value) / (Max_Value - Min_Value)

- For "Smaller is Better": Scaled_Score = 1 - [(Value - Min_Value) / (Max_Value - Min_Value)]

- Note: Use biologically relevant min/max (e.g., assay limits) or percentiles (e.g., 5th and 95th) instead of absolute dataset min/max to avoid outlier distortion.

- Apply Weighting: Multiply normalized scores by their respective weights (w_i), where Σw_i = 1.

- Combine: Calculate the final composite score: Σ (w_i * Normalized_Score_i).
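
The normalization and weighting steps above can be combined into a short scoring routine. The sketch below assumes fixed, biologically motivated bounds per property and clips scaled values to [0, 1] so that values outside the bounds cannot dominate; the property names, bounds, and weights in `SPEC` are hypothetical.

```python
def minmax_scaled(value, lo, hi, larger_is_better=True):
    """Min-max scale to [0, 1] with clipping; invert for 'smaller is better'."""
    frac = (value - lo) / (hi - lo)
    frac = min(1.0, max(0.0, frac))
    return frac if larger_is_better else 1.0 - frac

# property: (lower bound, upper bound, larger_is_better, weight) - hypothetical
SPEC = {
    "pIC50": (5.0, 9.0, True, 0.5),
    "clint": (5.0, 100.0, False, 0.3),   # uL/min/mg, smaller is better
    "sol_uM": (1.0, 200.0, True, 0.2),
}

def composite_score(compound, spec=SPEC):
    """Weighted sum of normalized scores; weights must sum to 1."""
    if abs(sum(w for *_, w in spec.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(w * minmax_scaled(compound[name], lo, hi, up)
               for name, (lo, hi, up, w) in spec.items())

mid_compound = {"pIC50": 7.0, "clint": 52.5, "sol_uM": 100.5}
top_compound = {"pIC50": 9.5, "clint": 2.0, "sol_uM": 500.0}
print(composite_score(mid_compound), composite_score(top_compound))
```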

Q4: My desirability index gives several compounds a perfect score of 1.0, making them indistinguishable. How can I introduce further discrimination?

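A: This happens because each individual desirability saturates at 1 once a property reaches its target, so the weighted geometric mean (Overall Desirability D = (Π d_i^(w_i))^(1/Σw_i)) cannot separate compounds that meet every target. A penalized desirability index sharpens discrimination among near-perfect compounds, while exact ties at D = 1.0 need an explicit tie-breaking rule.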

Protocol for Penalized Desirability Index:

- Calculate individual desirabilities (d_i) as usual.

- Apply a severity factor (p_i) for critical properties. Instead of D = geometric mean(d_i), use: D_penalized = (Π d_i^(w_i * p_i))^(1/Σ(w_i * p_i)), where p_i >= 1. For a critical property (e.g., solubility), set p_i = 2. This doubles the effective weight of that property, applying a harsher penalty if it is sub-optimal. Note that D_penalized still equals 1.0 when every d_i = 1, so it separates near-perfect compounds rather than exact ties.

- Alternative: Lexicographic Sorting. First, sort all "perfect" compounds (D=1.0) by the value of your single most critical property (e.g., metabolic stability half-life). Then sort by the second most critical property.
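
Both options can be sketched in a few lines. The example below is illustrative only: the desirabilities, weights, severity factors, and compound records are hypothetical, and the geometric mean is computed in log space for numerical stability.

```python
import math

def overall_desirability(d, w, p=None):
    """Weighted geometric mean of desirabilities with optional severity factors p_i."""
    p = p or {}
    if any(v <= 0.0 for v in d.values()):
        return 0.0  # any zero desirability vetoes the compound
    exps = {k: w[k] * p.get(k, 1.0) for k in d}
    total = sum(exps.values())
    return math.exp(sum(e * math.log(d[k]) for k, e in exps.items()) / total)

d = {"potency": 1.0, "solubility": 0.5}
w = {"potency": 0.5, "solubility": 0.5}

plain = overall_desirability(d, w)
penalized = overall_desirability(d, w, p={"solubility": 2.0})
print(round(plain, 3), round(penalized, 3))

# Lexicographic tie-break: D first, then half-life, then solubility (all descending).
compounds = [
    {"id": "c1", "D": 1.0, "t_half_min": 45, "sol_uM": 120},
    {"id": "c2", "D": 1.0, "t_half_min": 90, "sol_uM": 80},
    {"id": "c3", "D": 1.0, "t_half_min": 90, "sol_uM": 150},
    {"id": "c4", "D": 0.8, "t_half_min": 200, "sol_uM": 300},
]
ranked = sorted(compounds,
                key=lambda c: (-c["D"], -c["t_half_min"], -c["sol_uM"]))
order = [c["id"] for c in ranked]
print(order)
```

The penalty lowers the score of the compound with sub-optimal solubility, while the lexicographic sort is what actually separates the three compounds tied at D = 1.0.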

Key Data Tables

Table 1: Example Weighted Scoring Function for Lead Optimization

| Property | Target | Weight (w_i) | Normalization Method | Reason for Weight |

|---|---|---|---|---|

| pIC50 (Potency) | > 8.0 | 0.35 | "Larger is Better", Min-Max | Primary efficacy driver. |

| Clint (Microsomal Stability) | < 10 μL/min/mg | 0.25 | "Smaller is Better", Robust Scaling | Critical for PK half-life. |

| Solubility (pH 7.4) | > 100 μM | 0.20 | "Larger is Better", Min-Max | Limits oral absorption. |

| hERG IC50 (Safety) | > 30 μM | 0.15 | "Larger is Better", Binary Cut-off | Avoids cardiac toxicity. |

| LogP (Lipophilicity) | 2.0 - 4.0 | 0.05 | "Target is Best", Two-sided Linear | Balances permeability/solubility. |

| Composite Score | Maximize | Σ = 1.0 | Weighted Sum | Overall compound quality. |

Table 2: Comparison of Multi-Property Optimization Methods

| Feature | Weighted Sum Scoring | Desirability Index (Derringer-Suich) |

|---|---|---|

| Core Principle | Linear combination of normalized values. | Geometric mean of transformed, bounded functions. |

| Output Range | Depends on normalization: bounded [0, 1] for min-max scaled inputs with Σw_i = 1; unbounded for z-score or robust scaling. | Bounded [0, 1]. |

| Handling "Showstoppers" | Poor. A bad score in one property can be offset by excellent others. | Excellent. A zero desirability (d_i=0) in any property zeros the overall index (D=0). |

| Ease of Interpretation | Intuitive; direct trade-offs. | Less intuitive; requires understanding transformations. |

| Best For | Early-stage filtering, ranking where all properties are "nice-to-have". | Late-stage lead optimization where any property failure is unacceptable. |

Visualizations

Title: Multi-Property Optimization Workflow

Title: Desirability Function Shape Key

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in Optimization | Example / Specification |

|---|---|---|

| Human Liver Microsomes (HLM) | Assess metabolic stability (intrinsic clearance, Clint). | Pooled, 50-donor, gender-balanced. Correlates with in vivo hepatic clearance. |

| hERG-Expressing Cell Line (e.g., HEK293-hERG) | Evaluate cardiac toxicity risk via patch-clamp or flux assays. | Measures compound inhibition of the hERG potassium channel. |

| Caco-2 Cell Monolayers | Predict human intestinal permeability and efflux risk (P-gp substrate). | Measures apparent permeability (Papp) and efflux ratio. |

| Phospholipid Vesicles (PLVs) or PAMPA Plate | High-throughput model for passive membrane permeability. | Alternative to cell-based assays for early-stage screening. |

| LC-MS/MS System | Quantify compound concentrations in all in vitro ADMET assays. | Essential for accurate solubility, metabolic stability, and permeability measurements. |

| Statistical Software (e.g., JMP, R, Python SciPy) | Perform normalization, transformation, weighting, and composite score calculation. | Enables automation of weighted scoring and desirability index workflows. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My computed Pareto frontier shows only a few points clustered together, lacking diversity in solutions. What is the likely cause and how can I fix it?

A: This is often caused by an unbalanced objective function scaling or an inadequate search algorithm configuration.

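- Cause: If one objective (e.g., binding affinity in pM) has a numeric range orders of magnitude larger than another (e.g., synthetic accessibility score from 1-10), any step that aggregates or compares raw objective values (such as scalarization or distance-based diversity measures) can be dominated by the first objective.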

- Solution: Implement objective normalization. Scale all objectives to a comparable range (e.g., 0 to 1) using min-max scaling or z-score standardization before optimization.

- Protocol: For each objective i to be minimized:

- Run a preliminary broad exploration of your chemical space (e.g., 1000 random samples).

- Record the observed minimum (min_i) and maximum (max_i) for each objective.

- For your main optimization, transform each objective value: obj_i_scaled = (obj_i - min_i) / (max_i - min_i).

- Proceed with your multi-objective algorithm (e.g., NSGA-II) using the scaled objectives.

Q2: I am using an algorithm like NSGA-II, but the optimization stalls, failing to converge towards the true Pareto front. What steps should I take?

A: This indicates issues with the evolutionary algorithm's parameters or diversity preservation.

- Check 1: Algorithm Parameters. Increase the population size and the number of generations. For drug-like molecule optimization (e.g., 50-100 variables), a population size of 100-200 is often a minimum starting point.

- Check 2: Genetic Operators. Review your crossover and mutation rates. For molecular representation (like SELFIES or graphs), ensure your mutation operators are diverse enough (e.g., atom change, bond alteration, fragment attachment) to adequately explore the chemical space.

- Protocol for Parameter Tuning:

- Start with standard parameters: population size=100, generations=50, crossover probability=0.9, mutation probability=0.1.

- Run for 20 generations and plot the hypervolume indicator over time. If it plateaus early, increase mutation probability to 0.2.

- If diversity is low (crowding distance is minimal), increase the population size to 200 and ensure your crowding distance computation is correctly implemented for diversity maintenance.
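
Tracking the hypervolume indicator, as suggested in the tuning protocol, requires a way to compute it per generation. The sketch below handles the two-objective minimization case only and adds a simple plateau check; the reference point, window length, and tolerance are assumptions to be tuned per project, and dedicated libraries are preferable for three or more objectives.

```python
def hypervolume_2d(points, ref):
    """Area dominated by `points` and bounded by `ref` (both objectives minimized)."""
    pts = sorted(tuple(p) for p in points if p[0] < ref[0] and p[1] < ref[1])
    hv = 0.0
    best_f2 = ref[1]
    for f1, f2 in pts:
        if f2 < best_f2:  # point is non-dominated among those seen so far
            hv += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return hv

def has_plateaued(history, window=5, rel_tol=0.01):
    """True if hypervolume improved by less than rel_tol over the last `window` steps."""
    if len(history) <= window:
        return False
    old, new = history[-window - 1], history[-1]
    return (new - old) <= rel_tol * abs(old)

front = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0), (3.0, 3.0)]  # last point is dominated
hv = hypervolume_2d(front, ref=(4.0, 4.0))
print(hv)

stalled = has_plateaued([1.0, 4.0, 5.5, 6.0, 6.0, 6.0, 6.0, 6.0, 6.0])
improving = has_plateaued([1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0])
print(stalled, improving)
```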

Q3: How do I effectively visualize a Pareto frontier with more than three objectives for drug design?

A: Direct visualization beyond 3D is impractical. Use parallel coordinates, pairwise projections, or dimensionality reduction.

- Solution 1: Parallel Coordinates Plot. This is the most common method. Each vertical axis represents one objective (e.g., Potency, Selectivity, Solubility, Clearance, Synthesizability). Each candidate molecule is a line crossing all axes at its respective objective values. The Pareto-optimal set will form a "band" of lines, visually revealing trade-offs.

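- Solution 2: Pairwise 2D Scatter Plot Matrix. Create a matrix of 2D scatter plots for every pair of objectives. A point that is Pareto-optimal in the full objective space need not lie on the outer edge of every pairwise projection, so highlight the Pareto set explicitly in each panel. The number of panels grows quadratically with the number of objectives, which makes this approach unwieldy for large objective sets.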

- Experimental Protocol for Parallel Coordinates:

- Normalize all objective values to a [0, 1] range.

- Using a library like Plotly or matplotlib, plot each molecule's property vector.

- Highlight the identified Pareto-optimal molecules in a contrasting color (e.g., red) and non-optimal ones in a neutral color (e.g., light grey).

- Add interactive filters to allow selection of ranges on specific axes.

Q4: After identifying the Pareto frontier, how do I select a single candidate molecule for further development?

A: This requires post-Pareto decision-making, often incorporating domain knowledge or additional criteria. Implement a Multi-Criteria Decision Making (MCDM) method.

- Method: Technique for Order of Preference by Similarity to Ideal Solution (TOPSIS). It selects the solution closest to the "ideal" point (best in all objectives) and farthest from the "nadir" point (worst in all objectives).

- Protocol for TOPSIS:

- From your final Pareto set of m molecules, create an m x n matrix, where n is the number of objectives.

- Normalize the matrix (e.g., vector normalization).

- Apply weights to each objective if some are more important (e.g., Potency weight = 0.4, Solubility weight = 0.3, etc.). Sum of weights must equal 1.

- Determine the ideal (A+) and negative-ideal (A-) solutions.

- Calculate the Euclidean distance of each molecule to A+ and A-.

- Calculate the relative closeness to the ideal solution: C_i = d_i- / (d_i+ + d_i-).

- Rank molecules by C_i (higher is better). The top-ranked molecule represents the best compromise solution given your weights.
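
The TOPSIS steps above can be sketched in plain Python. This is a minimal illustration, assuming vector normalization and a per-objective flag for the optimization direction; the three molecules, their objective values, and the weights are hypothetical.

```python
import math

def topsis(matrix, weights, maximize):
    """Return the relative closeness C_i for each row of an m x n decision matrix."""
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    n = len(weights)
    # Vector normalization per column, then weighting.
    norms = [math.sqrt(sum(row[j] ** 2 for row in matrix)) or 1.0 for j in range(n)]
    v = [[weights[j] * row[j] / norms[j] for j in range(n)] for row in matrix]
    cols = list(zip(*v))
    ideal = [max(c) if maximize[j] else min(c) for j, c in enumerate(cols)]
    anti = [min(c) if maximize[j] else max(c) for j, c in enumerate(cols)]
    closeness = []
    for row in v:
        d_plus = math.dist(row, ideal)
        d_minus = math.dist(row, anti)
        denom = d_plus + d_minus
        closeness.append(d_minus / denom if denom > 0 else 0.5)
    return closeness

names = ["M1", "M2", "M3"]
# Columns: pIC50 (maximize), selectivity in log units (maximize), clearance (minimize).
matrix = [
    [8.5, 1.0, 20.0],
    [7.5, 2.0, 10.0],
    [7.0, 0.5, 30.0],
]
scores = topsis(matrix, weights=[0.5, 0.3, 0.2], maximize=[True, True, False])
ranking = [name for _, name in sorted(zip(scores, names), reverse=True)]
print(dict(zip(names, (round(s, 3) for s in scores))), ranking)
```

Because the ranking depends on the weights, it is worth rerunning with a few plausible weight sets before committing to a single candidate.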

Key Quantitative Data in Drug Design Pareto Frontiers

Table 1: Typical Objective Ranges and Targets in Small Molecule Optimization

| Objective | Common Metric | Desirable Range | Optimization Direction |

|---|---|---|---|

| Potency | IC₅₀ / Kᵢ | < 100 nM | Minimize |

| Selectivity | Selectivity Index (SI) | > 100-fold | Maximize |

| Metabolic Stability | % remaining (human liver microsomes) | > 50% | Maximize |

| Aqueous Solubility | Kinetic Solubility (pH 7.4) | > 100 µM | Maximize |

| CYP Inhibition | IC₅₀ (for 3A4, 2D6) | > 10 µM | Maximize (Minimize Inhibition) |

| Synthesizability | SA Score (from 1 to 10) | < 4.5 | Minimize |

Table 2: Comparison of Multi-Objective Optimization Algorithms

| Algorithm | Type | Pros | Cons | Best For |

|---|---|---|---|---|

| NSGA-II | Evolutionary | Excellent spread, handles non-convex fronts | Computationally heavy, many parameters | Exploratory design, complex spaces |

| MOEA/D | Evolutionary | Efficient for many objectives, uses aggregation | May miss extreme points | >3 objectives, known decomposition |

| ParEGO | Bayesian | Sample-efficient, models uncertainty | Sequential, slower per iteration | Expensive evaluations (e.g., FEP) |

| Random Search | Naive | Simple, parallelizable, no assumptions | Inefficient, no convergence guarantee | Baseline comparison |

Experimental Protocol: Generating a Pareto Frontier for a Kinase Inhibitor Series

Title: Multi-Objective Lead Optimization Workflow

Objective: To identify kinase inhibitor candidates optimizing for potency (pIC₅₀), selectivity (against the anti-target, Kinase B), and predicted human clearance (CL).

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Library Design: Using the core scaffold from HTS hit CP-123, generate a virtual library of 5000 analogues via enumerated R-group substitutions (Br, Cl, F, CH₃, OCH₃, CF₃, etc.) at positions R1 and R2.

- Objective Calculation:

- Obj 1 (Potency): Predict pIC₅₀ using a pre-trained Graph Neural Network (GNN) model on internal kinase data.

- Obj 2 (Selectivity): Calculate as the difference in predicted pIC₅₀ between the primary target (Kinase A) and the anti-target (Kinase B): Selectivity = pIC₅₀(Kinase A) - pIC₅₀(Kinase B).

- Obj 3 (Clearance): Predict human hepatic clearance (mL/min/kg) using a QSAR model (e.g., from Volsurf+ descriptors).

- Multi-Objective Optimization: Configure NSGA-II algorithm.

- Representation: Use SELFIES strings for molecules.

- Population: 200 individuals.

- Generations: 100.

- Genetic Operators: Crossover (80%), Mutation (20% using chemical mutation rules).

- Objectives: Maximize pIC₅₀, Maximize Selectivity, Minimize Predicted Clearance.

- Analysis: After 100 generations, extract the non-dominated front. Visualize using a 3D scatter plot and parallel coordinates. Apply TOPSIS with weights (Potency: 0.5, Selectivity: 0.3, Clearance: 0.2) to select top 5 candidates for synthesis and validation.
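
Extracting the non-dominated front in the analysis step comes down to a pairwise dominance check. The sketch below is a simple O(n²) filter, adequate for a final population of a few hundred molecules; the candidate names and objective values are hypothetical, and clearance is negated so that all three objectives are maximized.

```python
def dominates(a, b):
    """True if `a` is no worse than `b` everywhere and strictly better somewhere."""
    return (all(x >= y for x, y in zip(a, b))
            and any(x > y for x, y in zip(a, b)))

def pareto_front(candidates):
    """Names of candidates not dominated by any other candidate (all maximized)."""
    return [name for name, obj in candidates.items()
            if not any(dominates(other, obj)
                       for other_name, other in candidates.items()
                       if other_name != name)]

# (pIC50, selectivity, -clearance): hypothetical predicted objective values.
candidates = {
    "A": (8.5, 1.0, -20.0),
    "B": (7.5, 2.0, -10.0),
    "C": (7.0, 0.5, -30.0),
    "D": (8.0, 1.5, -15.0),
}
front = pareto_front(candidates)
print(front)
```

Candidate C is worse than A on all three objectives and is removed; A, B, and D each win on at least one objective and remain as trade-off options.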

Mandatory Visualizations

Title: Drug Design Pareto Optimization Workflow

Title: Core Trade-Offs in Multi-Property Drug Optimization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Pareto-Led Drug Design

| Item / Reagent | Function / Purpose | Example Vendor/Category |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule manipulation, descriptor calculation, and library enumeration. | Open Source |

| PyMol / Maestro | Molecular visualization software for analyzing protein-ligand interactions and guiding structural modifications. | Schrödinger, Open Source |

| AutoDock Vina / GOLD | Molecular docking software for rapid in-silico assessment of binding affinity and pose prediction. | Open Source, CCDC |

| Human Liver Microsomes (HLM) | In-vitro system for phase I metabolic stability assessment, a key objective in optimization. | Corning, Xenotech |

| Kinase Profiling Service | Panel-based screening to experimentally determine selectivity across a wide range of kinases. | Eurofins, Reaction Biology |

| NSGA-II / pymoo | Python library implementing NSGA-II and other MOO algorithms for custom optimization workflows. | pymoo (Open Source) |

| Parallel Coordinates Plot (Plotly) | Interactive visualization library for exploring high-dimensional Pareto fronts. | Plotly Technologies |

Frequently Asked Questions (FAQs)

Q1: During multi-property prediction, my model achieves high accuracy for one target property (e.g., solubility) but poor performance for another (e.g., metabolic stability). How can I address this imbalance? A1: This is a classic optimization conflict. Implement a weighted multi-task learning architecture. Adjust the loss function to apply higher weights to tasks with larger prediction errors or higher research priority. Monitor individual task performance per epoch to dynamically adjust these weights if necessary.

Q2: My dataset for a target property is very small (<100 compounds). Can I still effectively train a predictive model? A2: Yes, using transfer learning. Start with a model pre-trained on a large, general chemical dataset (e.g., ChEMBL). Then, perform fine-tuning on your small, specific dataset. Utilize data augmentation techniques like SMILES enumeration to artificially expand your training set.

Q3: The model's predictions are accurate for known chemical scaffolds but fail on novel scaffold structures. How do I improve generalizability? A3: This indicates a domain shift problem. Ensure your training data encompasses broad chemical space. Incorporate diverse molecular representations (e.g., ECFP fingerprints, graph-based features, and 3D descriptors). Use adversarial validation to detect significant differences between your training and novel compound sets.

Q4: How do I handle missing property data in my training dataset, which is common in early-stage design? A4: Do not simply discard compounds with missing values. Use multi-task learning where each property is a separate task; the model can learn from shared representations even when some labels are absent. Alternatively, employ data imputation methods specifically designed for chemical data, but always validate their impact.

Q5: My experimental validation results consistently deviate from model predictions for certain compound classes. What steps should I take? A5: First, perform error analysis to characterize the problematic classes. Retrain your model with additional data from these classes if available. If data is scarce, apply ensemble methods (e.g., Random Forest, Gradient Boosting) which can be more robust for heterogeneous data. Re-evaluate your feature set for relevance to the deviant property.

Troubleshooting Guides

Issue: Model Performance Degradation After Deployment on New Data

Symptoms: High validation accuracy during training, but poor predictive performance on newly synthesized compounds.

Diagnostic Steps:

- Check for Data Drift: Compare the distributions (e.g., molecular weight, logP) of your training set and the new compounds. Use statistical tests (Kolmogorov-Smirnov) or PCA visualization.

- Verify Assay Consistency: Ensure the experimental protocol for generating the new property data is identical to that of the training data.

- Inspect Feature Calculation: Confirm that the molecular descriptor/fingerprint calculation pipeline for new compounds is exactly the same as used in training.

Resolution Protocol:

- If data drift is detected, initiate active learning: select the most informative new compounds (e.g., highest prediction uncertainty) for experimental testing and add them to the training set.

- If assay inconsistency is suspected, curate a small benchmark set of compounds to re-run through the assay and recalibrate the model.

- Implement a continuous monitoring system to track prediction errors and flag emerging discrepancies automatically.
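
The data drift check in the diagnostic steps can be sketched with a two-sample Kolmogorov-Smirnov statistic. The version below is a dependency-free illustration that compares the statistic against the standard large-sample critical value; the molecular weight lists are invented. In practice `scipy.stats.ks_2samp` is the more convenient choice because it also returns a p-value.

```python
import bisect
import math

def ks_statistic(sample_a, sample_b):
    """Maximum distance between the two empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    d = 0.0
    for x in a + b:
        cdf_a = bisect.bisect_right(a, x) / len(a)
        cdf_b = bisect.bisect_right(b, x) / len(b)
        d = max(d, abs(cdf_a - cdf_b))
    return d

def ks_critical_value(n, m, alpha=0.05):
    """Asymptotic critical value; an approximation for small samples."""
    c_alpha = math.sqrt(-0.5 * math.log(alpha / 2.0))
    return c_alpha * math.sqrt((n + m) / (n * m))

def drift_detected(train_values, new_values, alpha=0.05):
    d = ks_statistic(train_values, new_values)
    return d > ks_critical_value(len(train_values), len(new_values), alpha)

# Hypothetical molecular weights for training vs. newly synthesized compounds.
train_mw = [310, 325, 340, 355, 360, 372, 388, 395, 402, 410]
new_mw = [455, 470, 480, 495, 505, 512, 520, 534, 548, 560]

print(ks_statistic(train_mw, new_mw), drift_detected(train_mw, new_mw))
```

Running the same check per descriptor (molecular weight, logP, TPSA) shows which property has shifted.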

Issue: Conflicting Predictions in Multi-Property Optimization

Symptoms: The model suggests Compound A for high potency but predicts poor solubility, while Compound B has good solubility but predicted low potency. No ideal candidate emerges.

Diagnostic Steps:

- Analyze the Pareto Front: Plot the predicted properties against each other to identify the non-dominated set (Pareto front) of compounds.

- Review Objective Function: Examine how the multi-property score (e.g., weighted sum) is formulated. The conflict may arise from inappropriate weightings.

Resolution Protocol:

- Employ Multi-Objective Optimization: Use algorithms like NSGA-II (Non-dominated Sorting Genetic Algorithm II) to generate and explore the Pareto front, presenting a set of optimal trade-offs to the chemist.

- Implement a Penalized Reward: In the scoring function, apply non-linear penalties that severely downgrade compounds falling below critical thresholds (e.g., solubility < 10 µM).

- Enable Interactive Exploration: Provide a tool that allows researchers to dynamically adjust property weights and visualize the resulting changes in top-ranked compounds in real-time.

Data Presentation

Table 1: Performance Comparison of ML Algorithms for Dual Property Prediction

Dataset: 5000 compounds with experimental data for IC50 (Potency) and Clearance (Metabolic Stability). 80/20 Train/Test Split.

| Algorithm | Potency (IC50) RMSE (nM) | Potency R² | Clearance RMSE (mL/min/kg) | Clearance R² | Multi-Task Loss |

|---|---|---|---|---|---|

| Random Forest (Single-Task) | 45.2 | 0.72 | 8.1 | 0.65 | N/A |

| XGBoost (Single-Task) | 41.7 | 0.76 | 7.8 | 0.67 | N/A |

| Neural Network (Multi-Task) | 38.5 | 0.79 | 7.2 | 0.71 | 0.241 |

| Graph Neural Network (Multi-Task) | 39.1 | 0.78 | 7.2 | 0.71 | 0.243 |

Table 2: Impact of Transfer Learning on Small Dataset Performance

Target: hERG inhibition prediction. Base Model: Pre-trained on 200k general ADMET properties.

| Fine-Tuning Dataset Size | Model Type | Accuracy | AUC-ROC | Relative AUC-ROC Improvement vs. Train-From-Scratch |

|---|---|---|---|---|

| 50 compounds | Train-From-Scratch | 0.58 | 0.55 | (Baseline) |

| 50 compounds | Transfer Learning | 0.71 | 0.69 | +25% |

| 200 compounds | Train-From-Scratch | 0.69 | 0.72 | (Baseline) |

| 200 compounds | Transfer Learning | 0.78 | 0.81 | +13% |

Experimental Protocols

Protocol 1: Building a Multi-Task Learning Model for Property Prediction

Objective: Train a single model to predict potency (pIC50) and solubility (logS) simultaneously.

Materials: See "The Scientist's Toolkit" below.

Method:

- Data Curation: Assay a diverse compound library for pIC50 (target binding) and logS (shake-flask method). Standardize values and handle missing labels per FAQ A4.

- Featurization: Compute 2048-bit ECFP4 fingerprints and 200-dimension RDKit 2D descriptors for each compound. Concatenate into a unified feature vector.

- Model Architecture: Implement a neural network with:

- A shared dense input layer (512 neurons, ReLU activation).

- Two separate task-specific output branches, each with a dense layer (128 neurons, ReLU) and a single linear output neuron.

- Training: Use a combined loss function: Total Loss = w1 * MSE(pIC50) + w2 * MSE(logS). Start with equal weights (w1=w2=1.0). Use the Adam optimizer (lr=0.001) and train for 500 epochs with early stopping.

- Conflict Analysis: Post-training, analyze the correlation of prediction errors between tasks. High negative correlation indicates a modeling conflict requiring weighted loss adjustment.
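
The combined loss and the conflict analysis can be illustrated without a deep learning framework. The sketch below is framework-agnostic Python, assuming missing labels are encoded as `None` and skipped per task (one way to handle the missing-data case from FAQ A4); in a real PyTorch or TensorFlow model the same masking would be applied to tensors. All values are hypothetical.

```python
import math

def masked_mse(preds, labels):
    """Mean squared error over the compounds that have a label for this task."""
    pairs = [(p, y) for p, y in zip(preds, labels) if y is not None]
    if not pairs:
        return 0.0
    return sum((p - y) ** 2 for p, y in pairs) / len(pairs)

def total_loss(pred_pic50, true_pic50, pred_logs, true_logs, w1=1.0, w2=1.0):
    """Total Loss = w1 * MSE(pIC50) + w2 * MSE(logS), ignoring missing labels."""
    return w1 * masked_mse(pred_pic50, true_pic50) + w2 * masked_mse(pred_logs, true_logs)

def pearson(xs, ys):
    """Pearson correlation, used here on per-compound prediction errors."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

pred_pic50, true_pic50 = [7.0, 6.0, 8.0], [7.5, None, 8.0]
pred_logs, true_logs = [-4.0, -3.0, -5.0], [-4.0, -3.5, None]

equal = total_loss(pred_pic50, true_pic50, pred_logs, true_logs)
reweighted = total_loss(pred_pic50, true_pic50, pred_logs, true_logs, w2=2.0)
print(equal, reweighted)

# Strongly negative error correlation between tasks suggests a modeling conflict.
print(pearson([0.1, 0.2, 0.3], [-0.1, -0.2, -0.3]))
```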

Protocol 2: Active Learning Loop for Model Improvement

Objective: Efficiently improve model accuracy by selecting the most informative compounds for experimental testing.

Materials: Initial trained model, untested compound library.

Method:

- Uncertainty Sampling: Use the initial model to predict properties for all compounds in the untested library. Calculate prediction uncertainty (e.g., variance across an ensemble of models, or predictive entropy).

- Compound Selection: Rank compounds by highest prediction uncertainty. Select the top N (e.g., 20-50) compounds for experimental validation.

- Experimental Testing: Synthesize and assay the selected compounds for the target properties using standardized protocols.

- Model Retraining: Add the new experimental data to the original training set. Retrain the model following Protocol 1.

- Iteration: Repeat steps 1-4 for 3-5 cycles or until model performance meets a predefined threshold.
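The uncertainty sampling and compound selection steps can be sketched as follows. This is a minimal example assuming uncertainty is measured as the variance of predictions across ensemble members. The helper name `select_most_uncertain`, the compound identifiers, and the prediction values are hypothetical.

```python
from statistics import pvariance

def select_most_uncertain(ensemble_predictions, n_select):
    """Rank compounds by ensemble variance and return the top n_select IDs.

    ensemble_predictions maps a compound ID to the list of predictions
    produced by each model in the ensemble for that compound.
    """
    uncertainty = {
        cid: pvariance(preds) for cid, preds in ensemble_predictions.items()
    }
    ranked = sorted(uncertainty, key=uncertainty.get, reverse=True)
    return ranked[:n_select]

# Toy pIC50 predictions from a 3-member ensemble (invented values).
ensemble_predictions = {
    "cpd_A": [6.1, 6.0, 6.2],
    "cpd_B": [5.0, 7.5, 6.0],
    "cpd_C": [7.0, 7.4, 6.5],
    "cpd_D": [8.0, 8.0, 8.0],
}

batch = select_most_uncertain(ensemble_predictions, n_select=2)
print(batch)
```

The selected batch would then go to synthesis and assay, and the new measurements would be appended to the training set before retraining.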

Visualizations

[Diagram: ML-Guided Molecular Design Workflow]

[Diagram: Common Multi-Property Optimization Conflicts]

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in ML-Based Property Prediction |

|---|---|

| Curated Chemical Databases (e.g., ChEMBL, PubChem) | Source of experimental bioactivity and ADMET data for training and benchmarking models. |

| Molecular Featurization Software (e.g., RDKit, Mordred) | Computes standardized molecular descriptors, fingerprints, and graph representations from chemical structures. |

| Deep Learning Frameworks (e.g., PyTorch, TensorFlow) | Provides flexible environment to build, train, and deploy complex multi-task and graph neural network models. |

| Multi-Objective Optimization Libraries (e.g., pymoo, DEAP) | Implements algorithms (NSGA-II, SPEA2) to navigate property trade-offs and identify Pareto-optimal compounds. |

| Automated Assay Platforms (HTS, LC-MS) | Generates high-quality, consistent experimental data for model training and the active learning loop. |

| Cheminformatics Platforms (e.g., KNIME, Pipeline Pilot) | Enables creation of reproducible data preprocessing, modeling, and analysis workflows without extensive coding. |

Application of Multi-Objective Optimization (MOO) in De Novo Molecular Design

Technical Support Center

FAQs & Troubleshooting

Q1: In a MOO run for a CNS drug candidate, my Pareto front contains only a handful of molecules, and they seem very similar. What is the cause and how can I improve diversity?

A1: This is a common issue known as premature convergence, often due to an imbalance in objective weighting or insufficient exploration in the generative algorithm.

- Troubleshooting Steps:

- Adjust Objective Scalarization: If using a weighted sum method, the weights may overly favor one property. Shift to a true Pareto-based method (e.g., NSGA-II, SPEA2) that explicitly maintains a diverse front.

- Tune Diversity Penalties: Increase coefficients for diversity-promoting terms (e.g., Tanimoto dissimilarity) in your fitness function.

- Modify Sampling Parameters: Increase the mutation rate, exploration budget, or temperature parameter in your reinforcement learning or genetic algorithm to encourage broader chemical space exploration.

- Check Property Gradients: Ensure your property prediction models provide meaningful gradients across a wide range of structures; flat regions can stall optimization.
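A diversity-promoting term of the kind mentioned above can be sketched with Tanimoto dissimilarity on fingerprint bit sets. This is a minimal illustration assuming fingerprints are represented as Python sets of on-bit indices. The function `diversity_adjusted_fitness` and its `diversity_weight` coefficient are hypothetical names, not part of any specific library.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    if not fp_a and not fp_b:
        return 1.0
    return len(fp_a & fp_b) / len(fp_a | fp_b)

def diversity_adjusted_fitness(raw_fitness, fp, selected_fps, diversity_weight=0.5):
    """Add a bonus proportional to dissimilarity from already-selected molecules.

    The bonus uses the distance to the nearest selected molecule, so a
    candidate that is a near-duplicate of any selected one gains little.
    """
    if not selected_fps:
        return raw_fitness
    max_similarity = max(tanimoto(fp, other) for other in selected_fps)
    return raw_fitness + diversity_weight * (1.0 - max_similarity)

# Toy fingerprints (invented bit indices).
selected = [{2, 3, 4}]
near_duplicate = {2, 3, 4}
novel = {1, 2, 3}

print(diversity_adjusted_fitness(1.0, near_duplicate, selected))
print(diversity_adjusted_fitness(1.0, novel, selected))
```

Raising `diversity_weight` makes the optimizer favor structurally distinct candidates even when their raw fitness is slightly lower.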

Q2: My MOO process frequently generates molecules that score as readily synthesizable on computational synthetic accessibility (SA) metrics but are flagged as "unsynthesizable" by experienced medicinal chemists. How do I resolve this disconnect?

A2: This indicates a gap between computational SA scoring functions and real-world synthetic feasibility.

- Troubleshooting Steps:

- Implement a Multi-Faceted SA Score: Combine a standard SA score (e.g., SAscore, RAscore) with additional, stricter rules:

- Retrosynthesis Check: Integrate a retrosynthesis planning tool (e.g., AiZynthFinder, ASKCOS) to flag molecules with no plausible route.

- Complexity Heuristics: Add penalties for specific problematic motifs (e.g., strained macrocycles, multiple stereocenters in complex arrays).

- Incorporate a "Chemist-in-the-Loop" Feedback: Use an active learning protocol where flagged molecules are reviewed by chemists, and this feedback is used to retrain or adjust the SA filter.

- Protocol: Set up a post-generation filter pipeline:

Generated Molecule → SAscore Filter (<5) → Retrosynthesis Filter (>0.8 plausibility) → Structural Alert Filter → Output.
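The post-generation filter pipeline can be sketched as a chain of simple checks. This sketch assumes each score has already been computed upstream: the `Candidate` fields, the 0-1 `route_plausibility` value, and the example scores are hypothetical placeholders, not output from SAscore or any retrosynthesis tool.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    name: str
    sa_score: float            # SAscore-style value, lower means easier to make
    route_plausibility: float  # hypothetical 0-1 score from a retrosynthesis tool
    has_structural_alert: bool

def passes_filters(c, max_sa=5.0, min_plausibility=0.8):
    """Apply the SAscore, retrosynthesis, and structural alert filters in order."""
    if not c.sa_score < max_sa:
        return False
    if not c.route_plausibility > min_plausibility:
        return False
    return not c.has_structural_alert

def filter_pipeline(candidates):
    """Return only the candidates that survive every filter."""
    return [c for c in candidates if passes_filters(c)]

# Placeholder candidates with invented scores.
candidates = [
    Candidate("mol_1", 2.1, 0.95, False),
    Candidate("mol_2", 6.3, 0.90, False),  # fails the SAscore filter
    Candidate("mol_3", 3.0, 0.40, False),  # fails the retrosynthesis filter
    Candidate("mol_4", 2.5, 0.90, True),   # fails the structural alert filter
]

kept = filter_pipeline(candidates)
print([c.name for c in kept])
```

Ordering the checks from cheapest to most expensive keeps the retrosynthesis step, usually the slowest, off molecules that would be rejected anyway.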

Q3: When optimizing for potency (pIC50), solubility (LogS), and permeability (LogPapp) simultaneously, I observe a strong negative correlation between solubility and permeability in the results. How should I handle this fundamental conflict?

A3: This conflict is a classic Pareto trade-off. The goal is not to eliminate it but to find the optimal compromises.

- Troubleshooting Steps:

- Visualize the Trade-off: Generate a 3D scatter plot or parallel coordinates plot of your Pareto front to identify clusters of molecules that balance the objectives differently.

- Employ Constrained Optimization: Redefine the problem. Set potency as the primary objective to maximize, and define solubility and permeability as constraints (e.g., LogS > -5, predicted LogPapp > -5.5). The MOO algorithm then seeks the most potent compounds within that acceptable property window.

- Protocol for Constrained MOO:

- Step 1: Define the objective: Maximize pIC50.

- Step 2: Define the constraints: LogS >= -5.0, LogPapp >= -5.5, MW <= 500.

- Step 3: Run a constrained MOO algorithm (e.g., NSGA-II with constraint dominance).

- Step 4: Analyze the resulting "satisficing" front for the best-potency molecules meeting all criteria.
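The constraint-dominance idea in Step 3 can be sketched for this single-objective case. It is a minimal illustration of the usual feasibility rules (a feasible molecule beats an infeasible one, feasible molecules compare on pIC50, and infeasible ones compare on total violation). The dictionary keys and the unnormalized violation sum are assumptions for the example, and the property values are invented.

```python
def constraint_violation(mol):
    """Total violation of LogS >= -5.0, LogPapp >= -5.5, MW <= 500 (0.0 if feasible).

    Violations are summed without normalization, which is a simplification.
    """
    return (
        max(0.0, -5.0 - mol["logS"])
        + max(0.0, -5.5 - mol["logPapp"])
        + max(0.0, mol["MW"] - 500.0)
    )

def constrained_better(a, b):
    """True if molecule a is preferred over b under constraint dominance."""
    va, vb = constraint_violation(a), constraint_violation(b)
    if va == 0.0 and vb == 0.0:
        return a["pIC50"] > b["pIC50"]   # both feasible: higher potency wins
    if va == 0.0 or vb == 0.0:
        return va == 0.0                 # feasible beats infeasible
    return va < vb                       # both infeasible: smaller violation wins

# Toy molecules with invented property values.
mol_a = {"pIC50": 7.5, "logS": -4.0, "logPapp": -5.0, "MW": 420.0}
mol_b = {"pIC50": 9.0, "logS": -6.2, "logPapp": -5.0, "MW": 480.0}
mol_c = {"pIC50": 8.1, "logS": -4.8, "logPapp": -5.3, "MW": 495.0}

print(constrained_better(mol_a, mol_b))  # feasible vs. infeasible
print(constrained_better(mol_c, mol_a))  # both feasible
```

Here the most potent molecule loses to both others because it breaks the solubility constraint, which is the behavior the constrained formulation is meant to enforce.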

Q4: The computational cost of running MOO with high-fidelity molecular dynamics (MD) simulations for property prediction is prohibitive. What are practical alternatives?

A4: Use a surrogate model-based approach to approximate expensive simulations.

- Troubleshooting Steps:

- Build a Surrogate Model Pipeline:

- Step 1: Curate a diverse training set of 1000-5000 molecules.

- Step 2: Run the high-fidelity MD simulation for key properties (e.g., binding free energy, membrane permeability) on this training set.

- Step 3: Train fast machine learning models (e.g., Graph Neural Networks, Random Forest) on molecular descriptors/features (Morgan fingerprints, RDKit descriptors) to predict the MD-derived properties.

- Step 4: Integrate these surrogate models into the MOO loop for rapid evaluation.

- Implement Active Learning: Periodically re-run MD on promising molecules from the Pareto front to validate and retrain the surrogate models, improving their accuracy iteratively.

Quantitative Data Summary

Table 1: Comparison of Common MOO Algorithms in Molecular Design

| Algorithm | Type | Key Strength | Key Limitation | Best Use Case |

|---|---|---|---|---|

| Weighted Sum | Scalarization | Simple, fast | Misses concave Pareto fronts; sensitive to weight choice | Quick exploration with 2-3 loosely correlated objectives |

| NSGA-II | Pareto-based | Good diversity preservation via crowding distance; no objective weights to tune | Selection pressure weakens as the number of objectives grows; computational cost scales with population size | Standard choice for most de novo design (3-5 objectives) |

| MOEA/D | Decomposition | Efficient for many objectives; uses neighbor information | Parameter tuning for decomposition weight vectors | Problems with >4 highly conflicting objectives |

| SMPSO | Pareto-based (Particle Swarm) | Fast convergence; good for continuous spaces | May require adaptation for discrete molecular space | Optimizing continuous molecular descriptors or latent vectors |
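The weighted-sum limitation listed in Table 1 can be demonstrated on a toy problem. The sketch below assumes two objectives that are both maximized (for example, scaled desirability scores) and uses four invented points. One non-dominated point sits in a concave region of the front and is never picked by any weight setting.

```python
def dominates(a, b):
    """True if a is at least as good as b everywhere and better somewhere (maximize)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(points):
    """Return the non-dominated points."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

def weighted_sum_winners(points, n_weights=101):
    """Collect the best point for each weight w in [0, 1] on a regular grid."""
    winners = set()
    for i in range(n_weights):
        w = i / (n_weights - 1)
        winners.add(max(points, key=lambda p: w * p[0] + (1 - w) * p[1]))
    return winners

# Invented objective values: (objective 1, objective 2), both maximized.
points = [(1.0, 0.0), (0.0, 1.0), (0.4, 0.4), (0.3, 0.3)]

front = pareto_front(points)
winners = weighted_sum_winners(points)
print("Pareto front:", front)
print("Found by weighted sum:", sorted(winners))
```

The balanced compromise (0.4, 0.4) is Pareto-optimal, yet the weighted sum only ever returns the two extremes, which is why a Pareto-based method is preferred when balanced candidates matter.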

Table 2: Typical Target Ranges for Key Drug Properties in MOO

| Property | Target Range | Optimization Goal | Common Prediction Model |

|---|---|---|---|

| Potency (pIC50) | > 8.0 (IC50 < 10 nM) | Maximize | Random Forest/GNN on binding affinity data |

| Solubility (LogS) | > -4.0 | Maximize | ESOL or AqSol ML model |

| Permeability (LogPapp) | > -5.5 (Papp in cm/s) | Maximize | PAMPA-based QSAR or MD simulation |

| Synthetic Accessibility | < 4.0 (SAscore) | Minimize | Rule-based (SAscore) or ML-based (RAscore) |

| hERG Inhibition (pIC50) | < 5.0 | Minimize | Classification model (e.g., SVM, GNN) |

| Lipinski's Rule of 5 | Violations ≤ 1 | Constrain | Rule-based filter |
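Because the potency target in Table 2 is on the logarithmic pIC50 scale, a short conversion helper can make the threshold easier to interpret. This sketch uses the standard definition pIC50 = -log10(IC50 in mol/L), with IC50 given in nM. The function names are illustrative.

```python
import math

def pic50_from_ic50_nM(ic50_nM):
    """pIC50 = -log10(IC50 in mol/L); 1 nM = 1e-9 mol/L, hence the offset of 9."""
    return 9.0 - math.log10(ic50_nM)

def ic50_nM_from_pic50(pic50):
    """Inverse conversion: IC50 in nM for a given pIC50."""
    return 10.0 ** (9.0 - pic50)

print(pic50_from_ic50_nM(10.0))    # 10 nM on the pIC50 scale
print(ic50_nM_from_pic50(5.0))     # pIC50 of 5 expressed in nM
```

A pIC50 above 8.0 therefore corresponds to an IC50 below 10 nM, and the hERG threshold of pIC50 below 5.0 corresponds to an IC50 above 10 µM.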

Experimental Protocols

Protocol 1: Standard Workflow for MOO-Based De Novo Design Using a GA

- Problem Definition: Specify 3-4 objectives (e.g., Max pIC50, Max LogS, Min SAscore, Min hERG pIC50). Define constraints (MW, Ro5).

- Initialization: Generate a population of 100-500 random molecules from a validated fragment library.

- Evaluation: Calculate all objective properties for each molecule using pre-trained QSAR/QSPR models.

- Pareto Ranking: Rank the population using the Non-Dominated Sorting algorithm (part of NSGA-II).

- Selection & Breeding: Select top-ranked molecules. Apply genetic operators:

- Crossover: Perform fragment-based crossover between two parent molecules.

- Mutation: Apply random mutations (atom/bond change, fragment addition/deletion).

- Replacement: Form a new generation by combining elite parents and offspring.

- Iteration: Repeat steps 3-6 for 50-100 generations.

- Analysis: Extract the final Pareto front for further analysis and selection.
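The Pareto ranking step of this protocol can be sketched with a simple non-dominated sort and a crowding distance calculation. This is a straightforward quadratic-time version written for clarity, not the optimized routine used in production NSGA-II implementations. Objectives are assumed to be expressed so that larger is better (minimized properties such as SAscore are negated), and the population values are invented.

```python
def dominates(a, b):
    """True if a is no worse than b in all objectives and better in at least one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def non_dominated_sort(objs):
    """Split indices into fronts; front 0 is the current Pareto front."""
    remaining = set(range(len(objs)))
    fronts = []
    while remaining:
        front = sorted(
            i for i in remaining
            if not any(dominates(objs[j], objs[i]) for j in remaining if j != i)
        )
        fronts.append(front)
        remaining -= set(front)
    return fronts

def crowding_distance(objs, front):
    """Crowding distance per index in a front; boundary points get infinity."""
    dist = {i: 0.0 for i in front}
    for m in range(len(objs[front[0]])):
        ordered = sorted(front, key=lambda i: objs[i][m])
        lo, hi = objs[ordered[0]][m], objs[ordered[-1]][m]
        dist[ordered[0]] = dist[ordered[-1]] = float("inf")
        if hi == lo:
            continue
        for k in range(1, len(ordered) - 1):
            gap = objs[ordered[k + 1]][m] - objs[ordered[k - 1]][m]
            dist[ordered[k]] += gap / (hi - lo)
    return dist

# Invented population: (pIC50, LogS, -SAscore), all to be maximized.
population = [
    (8.0, -5.0, -3.0),
    (7.0, -4.0, -2.5),
    (6.5, -4.5, -3.5),
    (7.5, -4.5, -2.8),
    (6.0, -5.5, -4.0),
]

fronts = non_dominated_sort(population)
distances = crowding_distance(population, fronts[0])
print(fronts)
print(distances)
```

Selection would then prefer lower front index first and, within a front, larger crowding distance, which is how diversity along the front is maintained.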

Protocol 2: Active Learning Loop for Surrogate Model Refinement

- Initial Surrogate Model Training: Train initial models on a base dataset (D~base~) of ~5000 molecules with properties from fast, low-fidelity predictors.

- MOO Run: Execute a MOO cycle (using Protocol 1) employing the surrogate models for evaluation.

- Acquisition: Select a batch of 50-100 diverse molecules from the resulting Pareto front.

- High-Fidelity Validation: Run expensive, high-fidelity simulations (e.g., FEP, MD) on the acquired molecules to obtain "ground truth" properties.

- Dataset Update: Add the newly acquired molecules and their high-fidelity properties to create an updated dataset: D~updated~ = D~base~ ∪ D~acquired~.

- Model Retraining: Retrain the surrogate models on D~updated~.

- Convergence Check: Repeat from Step 2 until the Pareto front stabilizes (e.g., <5% change in hypervolume for 3 cycles).
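The convergence check can be sketched for the two-objective case, where the hypervolume is simply the area dominated by the front relative to a reference point. The sketch assumes both objectives are maximized and that the reference point `ref` is worse than every front member. The function names, the reference point, and the hypervolume history are illustrative assumptions.

```python
def hypervolume_2d(front, ref=(0.0, 0.0)):
    """Area dominated by a 2-objective maximization front relative to ref."""
    pts = sorted(
        (p for p in front if p[0] > ref[0] and p[1] > ref[1]),
        key=lambda p: p[0],
        reverse=True,
    )
    area, best_y = 0.0, ref[1]
    for x, y in pts:
        if y > best_y:  # dominated points add no area
            area += (x - ref[0]) * (y - best_y)
            best_y = y
    return area

def has_converged(hv_history, tol=0.05, patience=3):
    """True if the relative hypervolume change stayed below tol for `patience` cycles."""
    if len(hv_history) < patience + 1:
        return False
    recent = hv_history[-(patience + 1):]
    return all(
        abs(b - a) / max(abs(a), 1e-12) < tol
        for a, b in zip(recent, recent[1:])
    )

# Invented front and hypervolume history over active learning cycles.
front = [(3.0, 1.0), (2.0, 2.0), (1.0, 3.0), (1.5, 1.5)]
hv = hypervolume_2d(front)
print(hv)
print(has_converged([4.0, 5.8, 5.9, 6.0, 6.0]))
print(has_converged([4.0, 5.0, 5.9, 6.0]))
```

For three or more objectives the hypervolume needs a dedicated algorithm, so in practice a library routine would replace `hypervolume_2d`.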

Diagrams

[Diagram: MOO-Driven Molecular Design Workflow]

[Diagram: Common Property Trade-offs in Drug MOO]

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational Tools for MOO in Molecular Design

| Item/Software | Category | Function | Example/Provider |

|---|---|---|---|

| RDKit | Cheminformatics | Core library for molecule manipulation, descriptor calculation, and fragment-based operations. | Open-source (rdkit.org) |

| JAX/DeepChem | ML Framework | Enables gradient-based optimization through molecular networks and differentiable scoring. | Google / DeepChem |

| PyG/DGL | Graph ML | Libraries for building Graph Neural Networks (GNNs) for molecular property prediction. | PyTorch Geometric / Deep Graph Library |

| pymoo | MOO Algorithms | Python library implementing NSGA-II, MOEA/D, and other algorithms for optimization. | pymoo.org |

| REINVENT | Generative Framework | RL-based platform for de novo molecular design, easily adaptable for MOO. | AstraZeneca (Open Source) |

| AutoDock Vina/GOLD | Docking | Provides rapid docking scores as a rough proxy for potency in virtual screening within a MOO loop. | Scripps Research / CCDC |

| Schrödinger Suite | Commercial Platform | Integrated modeling, simulation, and prediction tools for high-fidelity property calculation. | Schrödinger, Inc. |

| AiZynthFinder | SA Tool | Retrosynthesis analysis to assess synthetic feasibility of generated molecules. | AstraZeneca (Open Source) |

Integrating High-Throughput Experimentation (HTE) with MPO Algorithms for Closed-Loop Design

Troubleshooting Guides & FAQs

Q1: During closed-loop optimization, my MPO algorithm stalls and repeatedly suggests similar compounds despite poor performance scores. What could be the issue?

A: This is often a sign of "model collapse" or exploration failure. The algorithm's acquisition function may be overly exploitative. First, check your data for leakage or incorrect labeling from the HTE platform. Verify that the chemical diversity of your initial library is sufficient; a lack of diversity can trap the algorithm. Adjust the algorithm's balance parameter (e.g., β in UCB, ε in ε-greedy) to favor exploration. Incorporating a diversity penalty or switching to a batch selection method like Thompson sampling can help.
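The effect of the exploration parameter can be illustrated with an upper confidence bound (UCB) acquisition score, mean + β × standard deviation. This is a minimal sketch assuming the model supplies a predicted mean and an uncertainty estimate per candidate. The function names and all values are invented for the example.

```python
def ucb_scores(means, stds, beta):
    """UCB acquisition: predicted mean plus beta times predictive std."""
    return [m + beta * s for m, s in zip(means, stds)]

def pick_batch(means, stds, beta, batch_size):
    """Return indices of the batch_size candidates with the highest UCB score."""
    scores = ucb_scores(means, stds, beta)
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return order[:batch_size]

# Invented predictions: two well-known analogs and two uncertain, novel candidates.
means = [0.80, 0.78, 0.50, 0.40]
stds = [0.02, 0.03, 0.30, 0.25]

print(pick_batch(means, stds, beta=0.0, batch_size=2))  # purely exploitative
print(pick_batch(means, stds, beta=2.0, batch_size=2))  # exploration favored
```

With β = 0 the loop keeps proposing the familiar analogs, while a larger β redirects the batch toward uncertain regions, which is the adjustment suggested when the optimizer stalls.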

Q2: Our HTE biological assay results show high intra-plate variability, which corrupts the MPO model training. How can we mitigate this?

A: High variability often stems from edge effects, pipetting inconsistencies, or cell health issues. Apply plate normalization (e.g., Z-score or B-score normalization) before feeding data to the MPO algorithm, and track assay quality on every plate with the Z'-factor. Use randomized plate layouts to avoid confounding compound identity with well position. From an HTE protocol perspective, ensure reagents are equilibrated to room temperature, use larger volume transfers for accuracy, and include replicate controls on every plate. The MPO algorithm can also be made more robust with techniques such as Gaussian Process regression, which can model observation noise explicitly.
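Two of the plate-level checks can be sketched with the standard library: the Z'-factor as a quality metric computed from control wells, and a robust Z-score normalization based on the median and the median absolute deviation (MAD). The well readings below are invented, and the 1.4826 factor is the usual constant that scales the MAD to a standard deviation under normality.

```python
from statistics import mean, median, stdev

def z_prime_factor(pos_controls, neg_controls):
    """Z' = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    spread = stdev(pos_controls) + stdev(neg_controls)
    return 1.0 - 3.0 * spread / abs(mean(pos_controls) - mean(neg_controls))

def robust_z_scores(values):
    """Normalize plate readings with median and MAD; assumes MAD is non-zero."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    scale = 1.4826 * mad
    return [(v - med) / scale for v in values]

# Invented control readings for one plate.
pos = [100.0, 102.0, 98.0, 100.0]
neg = [10.0, 12.0, 8.0, 10.0]
zp = z_prime_factor(pos, neg)
print(f"Z' = {zp:.3f}")

# Invented sample wells with one outlier.
z = robust_z_scores([1.0, 2.0, 3.0, 4.0, 100.0])
print([round(v, 2) for v in z])
```

Plates with a low Z'-factor can be excluded or down-weighted before model training, and the robust Z-scores keep a single outlier well from distorting the scale of the rest of the plate.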