Multi-Objective Reinforcement Learning for Drug Discovery: A Guide to Optimizing Molecules for Efficacy, Safety, and Synthesizability

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing multi-objective reinforcement learning (MORL) for molecular optimization. It begins by establishing the core need to balance multiple, often competing, molecular properties—such as potency, ADMET (absorption, distribution, metabolism, excretion, toxicity), and synthesizability—in drug design. The article then details the methodological workflow, covering environment design, reward shaping with scalarization techniques (e.g., linear, Chebyshev), and integration with generative models. To address real-world challenges, it explores strategies for handling reward conflicts, sparse feedback, and computational constraints. Finally, the guide presents validation frameworks and comparative analyses of MORL against single-objective RL and other multi-parameter optimization methods, using benchmark platforms like GuacaMol and MOSES. The conclusion synthesizes how MORL represents a paradigm shift towards more holistic and efficient AI-driven drug discovery.

The Multi-Objective Imperative in Drug Design: Why Single-Goal Optimization Fails

Within the thesis "Implementing Multi-Objective Reinforcement Learning for Molecular Optimization," a central, practical obstacle is the intrinsic competition between desirable properties in drug-like molecules. Optimizing for high binding affinity (pIC50) often negatively impacts pharmacokinetic properties like solubility (LogS) or synthetic accessibility (SA Score). Similarly, improving metabolic stability (measured by CYP450 inhibition) can reduce permeability. This document provides application notes and detailed protocols for experimentally validating and navigating these trade-offs, enabling the generation of Pareto-optimal candidates.

Table 1: Common Conflicting Molecular Property Pairs in Drug Discovery

| Property Pair | Typical Target Range (Ideal) | Observed Correlation (r) | Primary Experimental Assay |

|---|---|---|---|

| Potency (pIC50) vs. Solubility (LogS) | pIC50 > 8; LogS > -4 | -0.65 to -0.80 | Biochemical Inhibition; Thermodynamic Solubility |

| Permeability (Papp) vs. Molecular Weight (MW) | Papp > 5 x 10⁻⁶ cm/s; MW < 500 Da | -0.70 to -0.85 | Caco-2/MDCK Assay; LC-MS Analysis |

| Lipophilicity (cLogP) vs. Clearance (CLhep) | cLogP 1-3; Low CLhep | +0.60 to +0.75 | Chromatographic LogD; Hepatocyte Stability |

| Synthetic Accessibility (SAscore) vs. Affinity | SAscore < 4; pIC50 > 7 | -0.50 to -0.70 | Retrosynthetic Analysis; SPR/BLI |

Detailed Experimental Protocols

Protocol 3.1: High-Throughput Parallel Assessment of Solubility-Potency Trade-off

Objective: To empirically map the relationship between thermodynamic solubility and target binding affinity for a congeneric series.

Materials & Reagents:

- Test compound library (100-200 analogs)

- Target protein (purified, >95%)

- PBS (pH 7.4) and DMSO (LC-MS grade)

- 96-well microtiter plates (polypropylene)

- LC-MS/MS system (e.g., Agilent 6470)

- Surface Plasmon Resonance (SPR) or Microscale Thermophoresis (MST) instrument

Procedure:

- Sample Preparation: Prepare a 10 mM DMSO stock of each compound. Using a non-aqueous dispenser, create a dilution series in 100% DMSO.

- Solubility Measurement (Nephelometry):

- Dilute 1 µL of DMSO stock into 200 µL PBS in a 96-well plate (n=3). Shake for 2 hours at 25°C.

- Measure turbidity at 620 nm. Centrifuge plates (3000 × g, 15 min).

- Quantify supernatant concentration via LC-MS using a standard curve. Reported solubility is the mean of replicates where precipitation was <20%.

- Affinity Measurement (SPR):

- Immobilize target protein on a CM5 sensor chip to ~10,000 RU.

- Inject compound serial dilutions (in PBS + 2% DMSO) at 30 µL/min for 120 s, dissociate for 180 s.

- Fit sensorgrams to a 1:1 binding model to derive KD and convert to pKD (-log10(KD), with KD in mol/L). pKD is used here as the potency measure in place of pIC50.

- Data Analysis: Plot pIC50 vs. LogS. Perform linear regression to determine correlation coefficient (r) for the series.
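The data-analysis step above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library; the KD and LogS values are made-up placeholders rather than measured data, and the helper names (`kd_to_pkd`, `pearson_r`, `linear_fit`) are hypothetical.

```python
import math

def kd_to_pkd(kd_molar):
    """Convert a dissociation constant in mol/L to pKD = -log10(KD)."""
    return -math.log10(kd_molar)

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def linear_fit(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    return slope, mean_y - slope * mean_x

# Placeholder series (assumed values, for illustration only).
kd_nM = [5.0, 20.0, 80.0, 300.0, 1200.0]   # SPR-derived KD in nM
log_s = [-5.6, -5.1, -4.4, -4.0, -3.3]     # measured LogS (log mol/L)

potency = [kd_to_pkd(kd * 1e-9) for kd in kd_nM]  # nM -> mol/L -> pKD
r = pearson_r(potency, log_s)
slope, intercept = linear_fit(potency, log_s)
print(f"r = {r:.3f}, slope = {slope:.3f}, intercept = {intercept:.3f}")
```

A strongly negative `r` for a series would indicate that potency gains in that series tend to come at the cost of solubility.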

Protocol 3.2: Integrated Caco-2 Permeability and CYP3A4 Inhibition Screen

Objective: To simultaneously assess absorption and metabolic stability conflicts for early lead compounds.

Materials & Reagents:

- Caco-2 cell monolayers (21-day culture, Transwell inserts)

- HBSS transport buffer (pH 7.4)

- Luciferin-IPA P450-Glo CYP3A4 Assay Kit (Promega)

- LC-MS with autosampler

- Test compounds (5 µM in the transport donor solution; 10 µM in the CYP3A4 incubation)

Procedure:

- Permeability (A-B & B-A):

- Aspirate culture medium and wash monolayers with pre-warmed HBSS.

- Add donor solution (5 µM compound in HBSS) to apical (A) or basolateral (B) chamber. Receiver chamber contains blank HBSS.

- Incubate at 37°C, 5% CO₂ with agitation. Sample 100 µL from receiver at 30, 60, and 120 min (replenish volume).

- Analyze samples by LC-MS. Calculate apparent permeability (Papp) and efflux ratio.

- CYP3A4 Inhibition (Post-Transport):

- After final transport sample, recover cells from inserts via trypsinization.

- Prepare human liver microsomes (0.1 mg/mL) with NADPH-regenerating system.

- Incubate with test compound (10 µM) and Luciferin-IPA substrate. Follow kit protocol for luminescence measurement after 10 min.

- Calculate % inhibition relative to DMSO control.

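- Conflict Analysis: Compounds with high permeability (Papp (A-B) > 10 x 10⁻⁶ cm/s) and low CYP3A4 inhibition (<20%) satisfy both criteria and are prioritized. Within the tested set, the Pareto-optimal compounds are those for which no other compound has both higher Papp and lower inhibition.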
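The Papp, efflux ratio, and percent-inhibition calculations in the procedure above can be sketched as follows. The sketch assumes the standard relation Papp = (dQ/dt) / (A × C0), takes cumulative receiver amounts that have already been corrected for sample replenishment, and uses an insert area of 1.12 cm² purely as an example. All numeric inputs and function names are illustrative assumptions.

```python
def least_squares_slope(xs, ys):
    """Slope of the ordinary least-squares line through (xs, ys)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    return (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
            / sum((x - mean_x) ** 2 for x in xs))

def papp_cm_per_s(times_s, cumulative_nmol, area_cm2, c0_uM):
    """Apparent permeability: (dQ/dt) / (A * C0).

    1 uM equals 1 nmol/cm^3, so nmol/s divided by (cm^2 * nmol/cm^3) gives cm/s.
    """
    dq_dt = least_squares_slope(times_s, cumulative_nmol)  # nmol/s
    return dq_dt / (area_cm2 * c0_uM)

def efflux_ratio(papp_ba, papp_ab):
    """Ratio of basolateral-to-apical over apical-to-basolateral Papp."""
    return papp_ba / papp_ab

def percent_inhibition(signal, dmso_signal, background=0.0):
    """Percent loss of luminescence relative to the DMSO control."""
    return 100.0 * (1.0 - (signal - background) / (dmso_signal - background))

# Assumed example data: sampling at 30, 60, and 120 min.
times_s = [1800, 3600, 7200]
amount_ab = [0.02, 0.04, 0.08]   # nmol in receiver, A-to-B direction
amount_ba = [0.06, 0.12, 0.24]   # nmol in receiver, B-to-A direction

papp_ab = papp_cm_per_s(times_s, amount_ab, area_cm2=1.12, c0_uM=5.0)
papp_ba = papp_cm_per_s(times_s, amount_ba, area_cm2=1.12, c0_uM=5.0)
er = efflux_ratio(papp_ba, papp_ab)
inhibition = percent_inhibition(signal=2000.0, dmso_signal=10000.0)
print(f"Papp(A-B) = {papp_ab:.2e} cm/s, efflux ratio = {er:.1f}, "
      f"CYP3A4 inhibition = {inhibition:.0f}%")
```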

Visualization of Conflicts and Workflows

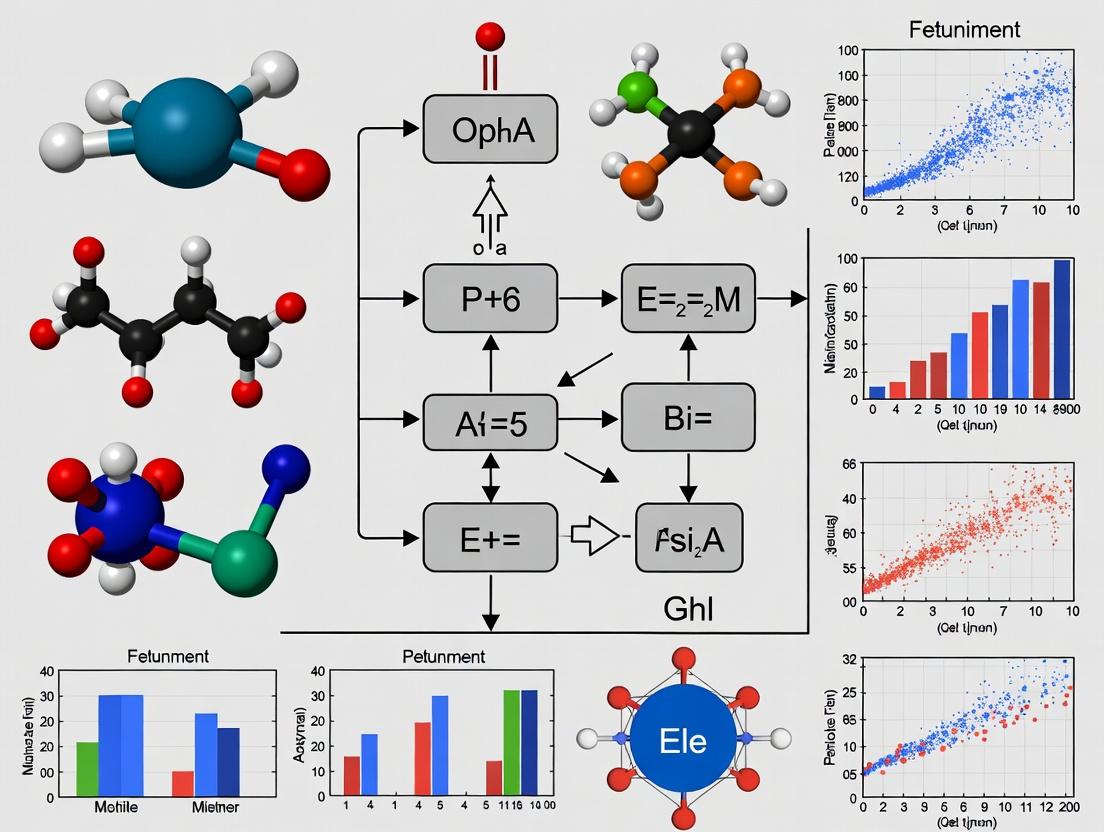

Diagram 1: Molecular Property Conflict Map

Diagram 2: Integrated MORL-Experimental Cycle

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Materials for Conflict Resolution Studies

| Item Name | Supplier (Example) | Function & Role in Resolving Conflicts |

|---|---|---|

| P450-Glo Assay Kits | Promega | Luminescent, high-throughput assay to quantify cytochrome P450 inhibition, a key metabolic stability endpoint. |

| Corning Gentest Pooled Human Liver Microsomes | Corning | Industry-standard metabolizing enzyme system for in vitro clearance and DDI studies. |

| Multiplexed Solubility & Stability Assay Plates | Tecan, Analytik Jena | Enables parallel measurement of thermodynamic solubility and chemical stability in physiologically relevant buffers. |

| Caco-2 Cell Line (ATCC HTB-37) | ATCC | Gold-standard in vitro model for predicting intestinal permeability and efflux transporter effects. |

| Surface Plasmon Resonance (SPR) Sensor Chips (Series S CM5) | Cytiva | For label-free, kinetic analysis of binding affinity (KD, kon, koff) to track potency changes. |

| MOE or RDKit Software with QSAR Modules | Chemical Computing Group / Open Source | Computational suites to build predictive models for conflicting properties (e.g., LogP vs. clearance) and guide MORL. |

| NADPH Regenerating System | Sigma-Aldrich | Critical cofactor system for maintaining CYP450 enzyme activity during inhibition and metabolite formation assays. |

Within the thesis on implementing multi-objective reinforcement learning (MORL) for molecular optimization, the transition from single to multi-parameter optimization represents a critical paradigm shift. Early-stage drug discovery has historically prioritized potency (e.g., IC50) as a primary objective. However, clinical failure due to poor pharmacokinetics, toxicity, or synthetic intractability necessitates the simultaneous optimization of multiple key parameters: Potency, ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity), and Synthesizability. This document outlines application notes and detailed protocols for defining, measuring, and integrating these objectives into a coherent MORL framework.

Defining and Quantifying Optimization Objectives

Potency

Potency is a measure of a compound's biological activity, typically quantified by its half-maximal inhibitory concentration (IC50) or dissociation constant (Kd).

Protocol 1.1: In Vitro Biochemical Potency Assay (IC50 Determination)

- Objective: Determine the concentration of a test compound that inhibits 50% of a target enzyme's activity.

- Materials: Target enzyme, substrate, co-factors, assay buffer, test compound serial dilutions, positive control inhibitor, detection reagents (e.g., fluorescent, luminescent).

- Procedure:

- Prepare a 10 mM stock solution of the test compound in DMSO.

- Perform a 3-fold serial dilution in DMSO across 11 points, yielding concentrations from 10 mM to ~0.17 µM.

- In a 96-well plate, dilute the compound series 1:100 in assay buffer, resulting in a final DMSO concentration of 1% and final compound concentrations from 100 µM to ~1.7 nM.

- Add the target enzyme and incubate for 15 minutes at 25°C.

- Initiate the reaction by adding the substrate and necessary co-factors.

- Monitor the reaction progress (e.g., fluorescence emission at 460 nm) for 30 minutes using a plate reader.

- Include control wells: enzyme-only (positive control), no-enzyme (negative control), and a known inhibitor (reference control).

- Analyze data: Calculate percent inhibition relative to controls for each concentration. Fit the dose-response data to a four-parameter logistic (4PL) model to calculate the IC50 value.
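The dilution arithmetic and the analysis step above can be illustrated with a short standard-library sketch. It computes the 11-point series, defines percent inhibition from the two control wells, and evaluates a four-parameter logistic (4PL) curve. The grid search at the end is only a crude stand-in for a proper nonlinear least-squares fit, and the synthetic dose-response data (IC50 = 0.5 µM, Hill slope 1) are assumed for illustration.

```python
import math

def serial_dilution(top, fold, n_points):
    """Concentrations of an n-point serial dilution starting at `top`."""
    return [top / fold ** i for i in range(n_points)]

stock_series_mM = serial_dilution(10.0, 3.0, 11)                  # in DMSO
assay_series_uM = [c * 1000.0 / 100.0 for c in stock_series_mM]   # mM -> uM, then 1:100

def percent_inhibition(signal, enzyme_only, no_enzyme):
    """Inhibition relative to full activity (enzyme-only) and background (no enzyme)."""
    return 100.0 * (1.0 - (signal - no_enzyme) / (enzyme_only - no_enzyme))

def four_pl(conc, bottom, top, ic50, hill):
    """4PL response (percent inhibition) rising from `bottom` to `top`."""
    return bottom + (top - bottom) / (1.0 + (ic50 / conc) ** hill)

def fit_ic50_grid(concs, responses, bottom=0.0, top=100.0):
    """Crude grid search over IC50 (1 nM to 100 uM) and a few Hill slopes."""
    best = None
    for i in range(501):
        ic50 = 10 ** (-3.0 + 0.01 * i)
        for hill in (0.5, 0.75, 1.0, 1.25, 1.5, 2.0):
            sse = sum((four_pl(c, bottom, top, ic50, hill) - y) ** 2
                      for c, y in zip(concs, responses))
            if best is None or sse < best[0]:
                best = (sse, ic50, hill)
    return best[1], best[2]

# Synthetic, noise-free responses generated from an assumed IC50 of 0.5 uM.
synthetic = [four_pl(c, 0.0, 100.0, 0.5, 1.0) for c in assay_series_uM]
fit_ic50, fit_hill = fit_ic50_grid(assay_series_uM, synthetic)
print(f"lowest stock = {stock_series_mM[-1] * 1000:.3f} uM, "
      f"fitted IC50 = {fit_ic50:.3f} uM, pIC50 = {-math.log10(fit_ic50 * 1e-6):.2f}")
```

In practice a nonlinear least-squares routine that also fits `bottom` and `top` would replace the grid search.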

ADMET Properties

ADMET optimization requires predictive and experimental assessment of multiple sub-properties.

Table 1: Key ADMET Parameters and Quantitative Benchmarks

| Parameter | Metric/Assay | Desirable Range | Experimental Protocol Reference |

|---|---|---|---|

| Aqueous Solubility | Kinetic Solubility (PBS, pH 7.4) | > 100 µM | Protocol 2.1 |

| Metabolic Stability | Human Liver Microsomal (HLM) Half-life | t1/2 > 30 min | Protocol 2.2 |

| Permeability | Papp in Caco-2 cell monolayer | Papp > 1 x 10⁻⁶ cm/s | Protocol 2.3 |

| Cytochrome P450 Inhibition | % Inhibition at 10 µM vs. CYP3A4 | < 50% inhibition | Protocol 2.4 |

| hERG Liability | Patch-clamp IC50 / In silico prediction | IC50 > 10 µM | Literature-based |

| Plasma Protein Binding | % Bound (Human) | < 95% (context-dependent) | Equilibrium Dialysis |

Protocol 2.1: Kinetic Solubility Assay

- Objective: Measure the apparent solubility of a compound in phosphate-buffered saline (PBS) at pH 7.4.

- Materials: Test compound, DMSO, PBS (pH 7.4), 96-well filter plate (0.45 µm), UPLC/UV-Vis plate reader.

- Procedure:

- Prepare a 10 mM stock of the test compound in DMSO.

- Add 5 µL of the DMSO stock to 995 µL of pre-warmed (25°C) PBS in a microtube (final nominal concentration = 50 µM, 0.5% DMSO).

- Shake the mixture for 90 minutes at 25°C.

- Transfer the solution to a 96-well filter plate and apply vacuum filtration.

- Quantify the concentration of the filtrate using a UPLC method with UV detection against a standard calibration curve.

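- Report the kinetic solubility as the measured concentration (µM). Because the nominal concentration is 50 µM, a result at or near this ceiling shows only that solubility is at least 50 µM; assessing the > 100 µM benchmark in Table 1 requires a higher nominal concentration.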

Synthesizability

Synthesizability assesses the feasibility and cost of chemically producing a molecule.

Table 2: Synthesizability Metrics

| Metric | Calculation/Score | Desirable Value | Tool/Source |

|---|---|---|---|

| Synthetic Accessibility (SA) Score | Fragment contribution & complexity penalty (1=easy, 10=hard) | < 5 | RDKit, AiZynthFinder |

| Retrosynthetic Complexity Score (RCS) | Count of non-trivial steps, strategic bonds, and stereochemistry | Lower is better | ICSYNTH, ASKCOS |

| Material Cost Estimate | Sum of precursor costs from vendor catalogs | < $100/g (early lead) | Custom script with ZINC/PubChem |

Integration into a Multi-Objective Reinforcement Learning Framework

The defined objectives serve as reward signals in an MORL environment. An agent (e.g., a generative model) proposes new molecular structures, which are then evaluated to compute a multi-component reward.

Workflow Diagram: Multi-Parameter Optimization via MORL

Mathematical Representation of Reward:

R_total = w₁ * f(Potency) + w₂ * g(ADMET) + w₃ * h(Synthesizability)

Here w₁, w₂, and w₃ are tunable weights, and f, g, and h are scaling/normalization functions that map each objective onto a common scale.
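One possible reading of this reward is sketched below. The normalization functions are assumptions chosen for illustration: potency is scaled linearly between pIC50 5 and 9, the ADMET term averages three sub-scores built from the benchmarks in the ADMET table above (100 µM solubility, 30 min HLM half-life, 50% CYP3A4 inhibition), and the SA Score is inverted so that higher means easier to synthesize. The weights are arbitrary placeholders.

```python
def clip01(x):
    """Clamp a value to the interval [0, 1]."""
    return max(0.0, min(1.0, x))

def f_potency(pic50, low=5.0, high=9.0):
    """Linear desirability for potency; `low` and `high` are assumed bounds."""
    return clip01((pic50 - low) / (high - low))

def g_admet(solubility_uM, hlm_half_life_min, cyp3a4_inhibition_pct):
    """Mean of three sub-scores, each saturating at its benchmark value."""
    parts = [
        clip01(solubility_uM / 100.0),                 # > 100 uM desirable
        clip01(hlm_half_life_min / 30.0),              # t1/2 > 30 min desirable
        clip01(1.0 - cyp3a4_inhibition_pct / 50.0),    # < 50% inhibition desirable
    ]
    return sum(parts) / len(parts)

def h_synth(sa_score):
    """Invert the 1 (easy) to 10 (hard) SA Score so that higher is better."""
    return clip01((10.0 - sa_score) / 9.0)

def total_reward(props, weights=(0.5, 0.3, 0.2)):
    """R_total = w1 * f(Potency) + w2 * g(ADMET) + w3 * h(Synthesizability)."""
    w1, w2, w3 = weights
    return (w1 * f_potency(props["pic50"])
            + w2 * g_admet(props["solubility_uM"],
                           props["hlm_half_life_min"],
                           props["cyp3a4_inhibition_pct"])
            + w3 * h_synth(props["sa_score"]))

# Hypothetical candidate molecule.
candidate = {
    "pic50": 8.0,
    "solubility_uM": 50.0,
    "hlm_half_life_min": 60.0,
    "cyp3a4_inhibition_pct": 25.0,
    "sa_score": 3.0,
}
reward = total_reward(candidate)
print(f"R_total = {reward:.3f}")
```

Clipping each term to [0, 1] keeps any single objective from dominating the sum simply because of its units.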

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Multi-Parameter Optimization

| Item | Function & Application | Example Vendor/Product |

|---|---|---|

| Recombinant Target Enzyme | Essential for primary potency assays. High purity ensures accurate IC50 determination. | Sigma-Aldrich, BPS Bioscience |

| Human Liver Microsomes (HLM) | Pooled microsomes from human donors used to assess metabolic stability (intrinsic clearance). | Corning Life Sciences, XenoTech |

| Caco-2 Cell Line | Human colon adenocarcinoma cell line; the gold standard model for predicting intestinal permeability. | ATCC (HTB-37) |

| hERG-Expressing Cell Line | Stable cell line (e.g., HEK293-hERG) for in vitro screening of cardiac ion channel liability. | Eurofins Discovery, ChanTest |

| 96-Well Equilibrium Dialysis Plate | High-throughput measurement of plasma protein binding. | HTDialysis, Thermo Scientific |

| RDKit Open-Source Toolkit | Cheminformatics library for calculating SA Score, molecular descriptors, and fingerprints. | Open Source (rdkit.org) |

| Retrosynthesis Planning Software | Evaluates synthetic routes and complexity (e.g., AiZynthFinder, ASKCOS). | IBM RXN, ASKCOS (MIT) |

Reinforcement Learning (RL) is a machine learning paradigm where an agent learns to make sequential decisions by interacting with an environment to maximize a cumulative reward. In molecular contexts, this framework is powerful for tasks like molecular design, optimization, and property prediction, aligning with the broader thesis on implementing multi-objective RL for molecular optimization.

Core RL Concepts and Molecular Analogies

| RL Component | General Definition | Molecular Context Analogy |

|---|---|---|

| Agent | The learner/decision-maker. | The algorithmic model proposing molecular structures or modifications. |

| Environment | The world with which the agent interacts. | The chemical space, simulation (e.g., molecular dynamics), or predictive model (e.g., a QSAR model). |

| State (s) | The current situation of the agent. | A molecular representation (e.g., SMILES string, graph, fingerprint). |

| Action (a) | A move/decision made by the agent. | A chemical transformation (e.g., adding/removing a functional group, changing a bond). |

| Reward (r) | Immediate feedback from the environment. | A calculated score based on desired molecular properties (e.g., high binding affinity, low toxicity, synthetic accessibility). |

| Policy (π) | Strategy the agent uses to choose actions. | The rule for selecting the next molecular modification. |

| Value Function | Estimate of expected long-term reward from a state. | The anticipated overall quality of a molecule and its potential derivatives. |

Key Algorithms and Applications

| Algorithm Category | Key Examples | Primary Use in Molecular Optimization |

|---|---|---|

| Value-Based | Deep Q-Network (DQN) | Learning to select optimal molecular fragments or transformations from a predefined set. |

| Policy-Based | REINFORCE, PPO | Directly generating novel molecular structures (e.g., SMILES strings or graphs). |

| Actor-Critic | A2C, A3C, SAC | Balancing stability and efficiency in optimizing multiple molecular properties simultaneously. |

| Model-Based | Dyna, MCTS | Using internal simulations (e.g., fast property predictors) to plan a series of synthetic steps. |

Detailed Experimental Protocol: A Standard RL Cycle for Molecular Generation

This protocol outlines a standard workflow for training an RL agent for de novo molecular design targeting specific properties.

Objective: To generate novel molecules maximizing a multi-objective reward function (e.g., LogP, QED, and binding affinity score).

Preparatory Phase (Week 1)

- Environment Setup: Define the chemical action space. Common choices include:

- A set of permissible chemical reactions (e.g., from USPTO datasets).

- A fragment library for molecular building.

- A grammar (SMILES grammar) for string-based generation.

- Agent Initialization: Initialize the policy network (π). This is often a Recurrent Neural Network (RNN) for SMILES generation or a Graph Neural Network (GNN) for graph-based generation.

- Reward Function Formulation: Program the reward function R(m). For multi-objective optimization, this is typically a weighted sum or a Pareto-based scalarization:

- Example: R(m) = w1 * QED(m) + w2 * clamp(LogP(m)) + w3 * pIC50_pred(m)

- Implement property calculators (e.g., RDKit for QED, LogP) and/or a proxy predictive model for complex properties (e.g., a Random Forest regressor for binding affinity).

Training Phase (Weeks 2-4)

- Episode Sampling: For each training episode (i = 1 to N):

- Reset the environment to an initial state s0 (e.g., a starting scaffold or empty molecule).

- The agent, following its current policy π, selects a sequence of actions a_t (e.g., adds fragments) until a terminal action (e.g., "stop") is chosen, resulting in a final molecule m_i.

- Reward Computation: Compute the reward R(m_i) using the formulated function.

- Policy Update: Update the agent's policy parameters θ using a policy gradient method (e.g., REINFORCE or PPO).

- REINFORCE Update Rule: Δθ = α * Σ_t ∇θ log π(a_t | s_t, θ) * (G_t - b)

- α: Learning rate. G_t: Cumulative discounted future reward from step t. b: Baseline (e.g., a value network) to reduce variance.

- Validation Check: Every k episodes, evaluate the current policy on a fixed set of validation tasks (e.g., generate 1000 molecules and compute the average reward and diversity).
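The training loop above can be made concrete with a deliberately small toy. In this sketch the "molecule" is just a sequence of three fragment choices, the policy is a state-independent softmax over three fragments, and the reward is the mean of hypothetical per-fragment scores. The baseline is a running average of rewards rather than a value network. None of this reflects a real chemistry environment; it only shows the REINFORCE update with a baseline.

```python
import math
import random

FRAGMENT_SCORES = [0.1, 0.5, 0.9]   # hypothetical reward contribution per fragment
N_ACTIONS = len(FRAGMENT_SCORES)
EPISODE_LEN = 3                     # fragments added per episode

def softmax(logits):
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sample_episode(theta, rng):
    """Sample a fragment sequence and its terminal reward R(m_i)."""
    probs = softmax(theta)
    actions = rng.choices(range(N_ACTIONS), weights=probs, k=EPISODE_LEN)
    reward = sum(FRAGMENT_SCORES[a] for a in actions) / EPISODE_LEN
    return actions, probs, reward

def train(n_episodes=2000, alpha=0.1, seed=0):
    """REINFORCE with a running-average baseline."""
    rng = random.Random(seed)
    theta = [0.0] * N_ACTIONS
    baseline = 0.0
    for _ in range(n_episodes):
        actions, probs, reward = sample_episode(theta, rng)
        # Reward arrives only at the end and is undiscounted, so G_t = reward for all t.
        advantage = reward - baseline
        grad = [0.0] * N_ACTIONS
        for a in actions:
            for j in range(N_ACTIONS):
                # Gradient of log softmax: indicator(j == a) - pi(j).
                grad[j] += (1.0 if j == a else 0.0) - probs[j]
        theta = [t + alpha * g * advantage for t, g in zip(theta, grad)]
        baseline += 0.05 * (reward - baseline)
    return theta

theta = train()
final_probs = softmax(theta)
print([round(p, 3) for p in final_probs])
```

After training, the policy should place most of its probability on the highest-scoring fragment.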

Evaluation Phase (Week 5)

- Sampling: Use the final trained policy to generate a large set (e.g., 10,000) of candidate molecules.

- Analysis: Filter and rank candidates based on the reward objectives. Assess novelty, diversity, and synthetic accessibility (SA).

- Validation: For top candidates, perform in silico validation via docking simulations or more accurate (but expensive) property predictions.

Workflow Diagram: RL for Molecular Design

Multi-Objective RL Reward Integration

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item / Solution | Function in RL Molecular Experiment | Example / Note |

|---|---|---|

| Chemical Representation Library | Encodes molecules into machine-readable formats for the agent. | RDKit: Generates SMILES, molecular fingerprints, and computes 2D descriptors. |

| Property Prediction Toolkit | Provides fast, calculable reward signals during training. | RDKit (for QED, SA Score, LogP) or OpenChemLib models. |

| Proxy (Surrogate) Model | Approximates expensive-to-compute properties (e.g., binding energy) for reward. | A pre-trained Random Forest or Neural Network on assay data. |

| Action Space Definition | Defines the set of valid modifications the agent can make. | A set of SMILES grammar rules or a curated list of chemical reaction templates. |

| RL Algorithm Framework | Provides the backbone code for the agent, policy, and training loop. | OpenAI Gym (custom environment) + Stable-Baselines3 or RLlib for algorithm implementation. |

| Deep Learning Framework | Builds and trains neural networks for policy and value functions. | PyTorch or TensorFlow. |

| Molecular Simulation Suite | Used for in silico validation of top-ranked candidates (post-RL). | AutoDock Vina (docking), GROMACS (molecular dynamics). |

| High-Performance Computing (HPC) | Accelerates the training of RL agents and running simulations. | GPU clusters for parallelized environment sampling and policy updates. |

Within the broader thesis on Implementing multi-objective reinforcement learning for molecular optimization research, this document establishes the foundational rationale for framing molecular generation as a sequential decision-making (SDM) problem, making it a natural candidate for Reinforcement Learning (RL) solutions. Traditional virtual screening and generative models often lack explicit, iterative optimization guided by complex, multi-faceted reward signals. RL provides a paradigm where an agent learns to construct molecules atom-by-atom or fragment-by-fragment (the sequence), optimizing for a composite reward function that balances multiple objectives such as binding affinity, synthesizability, and low toxicity.

Core Conceptual Framework: The RL-MDP Analogy

The Markov Decision Process (MDP) provides the formal structure.

| MDP Component | Molecular Generation Analogy | Example in Drug Discovery |

|---|---|---|

| State (s) | The current partial molecular graph or SMILES string. | A benzene ring with an attached amine group. |

| Action (a) | Adding a new atom/bond or a molecular fragment to the current state. | Adding a carbonyl group at the ortho position. |

| Transition (P) | The deterministic or stochastic result of applying the action to the state. | The new state is the ortho-carbonyl-substituted aniline (e.g., 2-aminobenzaldehyde if a formyl group is added). |

| Reward (R) | A scalar score evaluating the desirability of the new state (often zero for intermediate steps). | Docking score improvement + synthesizability penalty. |

| Policy (π) | The generation strategy (network) that selects actions given a state. | A neural network that chooses the next best fragment to add. |
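The MDP components in the table can be mapped onto a small environment class. The sketch below is a toy under stated assumptions: the state is a tuple of fragment tokens rather than a valid SMILES string, the fragment list is arbitrary, and the terminal score is a placeholder that stands in for a real property-based reward. The class and method names are hypothetical and only loosely follow the reset/step convention used by Gym-style environments.

```python
class ToyMoleculeEnv:
    """Minimal sequential-construction environment for illustration."""

    FRAGMENTS = ("c1ccccc1", "N", "C(=O)", "O", "STOP")

    def __init__(self, max_steps=5):
        self.max_steps = max_steps
        self.state = []
        self.steps = 0

    def reset(self):
        """Start from an empty molecule and return the initial state."""
        self.state = []
        self.steps = 0
        return tuple(self.state)

    def step(self, action):
        """Apply an action (fragment index); return (state, reward, done)."""
        fragment = self.FRAGMENTS[action]
        self.steps += 1
        if fragment != "STOP":
            self.state.append(fragment)   # deterministic transition
        done = fragment == "STOP" or self.steps >= self.max_steps
        # Reward is zero for intermediate steps and computed only at termination.
        reward = self._terminal_score() if done else 0.0
        return tuple(self.state), reward, done

    def _terminal_score(self):
        """Placeholder score: rewards fragment variety, penalizes size."""
        return len(set(self.state)) - 0.1 * len(self.state)


env = ToyMoleculeEnv()
s0 = env.reset()
s1, r1, done1 = env.step(0)   # add an aromatic ring
s2, r2, done2 = env.step(4)   # choose STOP
print(s0, s1, r1, done1, s2, r2, done2)
```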

Diagram Title: RL MDP Cycle for Molecular Generation

Application Notes: Key Advantages of the RL-SDM Framework

- Explicit Optimization Trajectory: RL models the construction as a series of decisions, providing interpretable "what-if" trajectories for molecular optimization.

- Native Multi-Objective Handling: The reward function can seamlessly integrate multiple, potentially conflicting, objectives (e.g., Activity vs. SAscore). This is core to the overarching thesis.

- Exploration of Chemical Space: The policy can be tuned to balance exploiting known good substructures with exploring novel regions of chemical space.

- Integration with Predictive Models: Rewards can be computed via external, updatable prediction models (e.g., a retrained docking-scoring function or ADMET predictor).

Quantitative Performance Comparison

Recent benchmark studies highlight the capability of RL-based methods to navigate multi-parameter optimization.

Table 1: Benchmarking RL vs. Other Generative Models on Multi-Objective Tasks

| Model Class | Representative Method | Avg. QED↑ | Avg. SAscore↑ (Synthesizability) | Docking Score (DRD3)↓ | Success Rate* (%) |

|---|---|---|---|---|---|

| RL-Based | MolDQN (Zhou et al., 2019) | 0.63 | 0.71 | -9.2 | 42 |

| RL-Based | FREED (Yang et al., 2021) | 0.91 | 0.84 | -11.5 | 68 |

| VAE-Based | JT-VAE (Jin et al., 2018) | 0.49 | 0.58 | -7.8 | 12 |

| GAN-Based | ORGAN (Guimaraes et al., 2017) | 0.44 | 0.52 | -6.5 | 8 |

| Flow-Based | GraphAF (Shi et al., 2020) | 0.67 | 0.73 | -8.9 | 31 |

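Success Rate*: Percentage of generated molecules satisfying all three objective thresholds (QED > 0.6, SAscore > 0.65, Docking Score < -8.0). SAscore in this table is a normalized synthesizability score on which higher is better (as the ↑ and the > 0.65 threshold indicate), not the raw 1-10 SA Score used elsewhere in this guide. Data synthesized from recent literature reviews and benchmark repositories (e.g., TDC, MOSES).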

Experimental Protocols

Protocol 1: Implementing a Multi-Objective RL Agent for De Novo Design

Objective: Train a Proximal Policy Optimization (PPO) agent to generate molecules optimizing a weighted sum of Quantitative Estimate of Drug-likeness (QED), Synthetic Accessibility (SAscore), and predicted binding affinity.

Materials & Reagents:

- Software: Python 3.9+, PyTorch, RDKit, OpenAI Gym environment customized for molecular generation (e.g., MolGym), Schrödinger Suite or AutoDock Vina for docking (optional).

- Datasets: ZINC15 or ChEMBL for pre-training a prior policy.

- Hardware: GPU (NVIDIA V100 or equivalent) recommended for training.

Procedure:

Environment Setup:

- Define the state space as a SMILES string or molecular graph representation.

- Define the action space as a set of valid chemical additions (e.g., from a predefined fragment library or single-atom additions with specific bond types).

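- Implement a reward function R(s') = w1*QED(s') + w2*SAscore(s') + w3*(-DockingScore(s')). Normalize each component to a common scale on which higher is better; a raw SA Score (1 = easy, 10 = hard) must be inverted before it is used as SAscore(s') here. Use a fast surrogate model (e.g., Random Forest) for docking score prediction during training, validated by periodic true docking.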

Agent Training (PPO):

- Initialize policy (π) and value (V) networks (e.g., Graph Neural Networks or RNNs).

- For N iterations: a. Collection: Let the current policy interact with the environment for T timesteps to collect trajectories of (s, a, r, s'). b. Advantage Estimation: Compute Generalized Advantage Estimation (GAE) using the collected rewards and value network estimates. c. Policy Update: Update the policy network by maximizing the PPO-clipped objective, encouraging updates that do not deviate drastically from the previous policy. d. Value Update: Update the value network by minimizing the mean-squared error between predicted and actual returns.
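Steps b and c of the loop above can be written out without any deep learning framework. The sketch below computes Generalized Advantage Estimation for a single trajectory and evaluates the PPO-clipped surrogate for given probability ratios. It is a plain-Python illustration of the two formulas, not a training loop; the default values of gamma, lambda, and the clip range are common choices assumed here, not values specified by this protocol.

```python
def compute_gae(rewards, values, last_value, gamma=0.99, lam=0.95):
    """Generalized Advantage Estimation for one trajectory.

    rewards[t] and values[t] refer to step t; last_value is the value
    estimate after the final step (0.0 if the episode terminated).
    Returns (advantages, returns), where returns are the value targets.
    """
    advantages = [0.0] * len(rewards)
    gae = 0.0
    next_value = last_value
    for t in reversed(range(len(rewards))):
        delta = rewards[t] + gamma * next_value - values[t]   # TD residual
        gae = delta + gamma * lam * gae
        advantages[t] = gae
        next_value = values[t]
    returns = [a + v for a, v in zip(advantages, values)]
    return advantages, returns

def ppo_clip_objective(ratios, advantages, clip_eps=0.2):
    """Mean PPO-clipped surrogate; ratios are pi_new(a|s) / pi_old(a|s)."""
    terms = []
    for ratio, adv in zip(ratios, advantages):
        clipped = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
        terms.append(min(ratio * adv, clipped * adv))
    return sum(terms) / len(terms)

# A three-step episode with a terminal-only reward, as is typical when the
# molecule is scored once construction stops.
adv, ret = compute_gae(rewards=[0.0, 0.0, 1.0], values=[0.2, 0.4, 0.7],
                       last_value=0.0)
objective = ppo_clip_objective(ratios=[1.1, 0.9, 1.5], advantages=adv)
print([round(a, 3) for a in adv], round(objective, 3))
```

The value network in step d would then be regressed toward `ret`.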

Evaluation & Sampling:

- After training, sample molecules by running the trained policy from the initial state (e.g., a single carbon atom).

- Filter generated molecules for validity and uniqueness using RDKit.

- Evaluate the top 100 generated molecules using true computational methods (docking simulation, ADMET predictors) not used in the surrogate reward.

The Scientist's Toolkit: Key Research Reagents & Software

| Item | Function / Role in Protocol |

|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule manipulation, descriptor calculation (QED), and SMILES handling. |

| PyTorch / TensorFlow | Deep learning frameworks for building and training the policy and value networks. |

| OpenAI Gym API | Provides a standardized interface for defining the custom molecular generation environment (states, actions, rewards). |

| ZINC15 Database | Source of commercially available, drug-like compounds for pre-training or baseline comparison. |

| Schrödinger Maestro or AutoDock Vina | Molecular docking software for calculating binding affinity rewards in the final evaluation phase. |

| SAscore Library | A function to estimate synthetic accessibility based on molecular complexity and fragment contributions. |

| GPU Cluster | Essential for accelerating the deep learning training process, which involves millions of environment interactions. |

Diagram Title: Multi-Objective RL Training and Evaluation Workflow

Case Study & Data: Optimizing a DRD3 Ligand

Objective: Improve the binding affinity and selectivity profile of a dopamine D3 receptor (DRD3) lead compound while maintaining favorable pharmacokinetics.

Method: An Advantage Actor-Critic (A2C) agent was trained with a reward function combining:

- R1: Docking score to DRD3 (Glide SP).

- R2: Negative of docking score to anti-target (hERG).

- R3: Lipinski's Rule of Five penalty.

- R4: Synthetic complexity penalty (based on SCScore).

Table 2: Optimization Results for DRD3 Ligand Case Study

| Molecule | Source | DRD3 pKi (Pred.) | hERG pKi (Pred.) | Selectivity Index (hERG/DRD3) | SAscore | Rule of 5 Violations |

|---|---|---|---|---|---|---|

| Initial Lead | HTS Library | 7.2 | 6.8 | 0.95 | 3.2 | 0 |

| RL-Optimized 1 | A2C Generation | 8.5 | 5.1 | 0.16 | 2.8 | 0 |

| RL-Optimized 2 | A2C Generation | 8.1 | 4.9 | 0.24 | 2.1 | 0 |

| Benchmark (Non-RL) | Genetic Algorithm | 8.3 | 6.2 | 0.78 | 3.5 | 1 |
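A common way to express selectivity from predicted pKi values is the fold difference in Ki, 10^(pKi_target − pKi_anti-target). The sketch below applies that definition to the predicted pKi values in the table. This is an assumption about how one might quantify selectivity and is not necessarily how the Selectivity Index column was derived.

```python
# Predicted pKi values copied from the table: (DRD3, hERG).
predicted_pki = {
    "Initial Lead": (7.2, 6.8),
    "RL-Optimized 1": (8.5, 5.1),
    "RL-Optimized 2": (8.1, 4.9),
    "Benchmark (Non-RL)": (8.3, 6.2),
}

def fold_selectivity(pki_target, pki_antitarget):
    """Ki(anti-target) / Ki(target), computed from pKi = -log10(Ki)."""
    return 10 ** (pki_target - pki_antitarget)

fold = {name: fold_selectivity(drd3, herg)
        for name, (drd3, herg) in predicted_pki.items()}

for name, value in fold.items():
    print(f"{name}: {value:,.1f}-fold selective for DRD3 over hERG")
```

On this measure a larger value means a wider predicted margin between the target and the hERG anti-target.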

Conclusion: The RL agent successfully generated molecules with significantly improved predicted selectivity (lower hERG affinity) and better synthesizability (lower SAscore) compared to the initial lead and a non-RL benchmark, demonstrating effective multi-objective optimization within the sequential decision-making framework.

Within the thesis "Implementing multi-objective reinforcement learning for molecular optimization research," Multi-Objective Reinforcement Learning (MORL) emerges as a transformative paradigm. Traditional molecular optimization often collapses multiple critical criteria (e.g., potency, solubility, synthetic accessibility) into a single weighted reward, potentially yielding biased and suboptimal candidates. MORL, by contrast, explicitly models trade-offs, seeking the Pareto frontier—the set of solutions where no objective can be improved without sacrificing another. This approach promises a more principled search for balanced, developable molecules in drug discovery.

Core MORL Architectures and Quantitative Comparison

Current MORL methodologies can be broadly categorized. Quantitative performance metrics are summarized from recent benchmarking studies.

Table 1: Comparison of Primary MORL Approaches for Molecular Optimization

| MORL Approach | Key Mechanism | Advantages | Reported Performance (PF Coverage↑) | Ideal Use Case |

|---|---|---|---|---|

| Single Policy, Scalarized | Learns a policy for a linear scalarization of objectives with fixed/pre-sampled weights. | Simple, leverages standard RL. | 0.65 ± 0.12 | Focused search in a known priority region. |

| Population of Policies | Maintains a set of policies, each trained with a different scalarization weight. | Explicitly learns diverse solutions. | 0.82 ± 0.08 | Mapping a broad Pareto front for exploration. |

| Conditioned Networks (e.g., Envelope Method) | Policy/Critic networks take desired preference vectors (weights) as input. | Enables on-demand generation for any trade-off. | 0.88 ± 0.05 | Interactive, post-hoc optimization based on evolving project needs. |

Table 2: Typical Multi-Objective Targets for Lead Optimization

| Objective | Typical Computational Proxy | Target Range (Optimization Goal) | RL Reward Shaping |

|---|---|---|---|

| Binding Affinity (pIC50/pKi) | Docking score, free energy perturbation (FEP), or QSAR model. | > 8.0 (Maximize) | Normalized score relative to baseline. |

| Selectivity (Ratio) | Differential activity against off-target panels. | > 100-fold (Maximize) | Log ratio of primary vs. off-target scores. |

| Aqueous Solubility (logS) | Graph-based or descriptor-based prediction model. | > -4.0 log mol/L (Maximize) | Stepwise reward for exceeding thresholds. |

| CYP450 Inhibition | Binary classifier for 3A4/2D6 inhibition. | Probability < 0.1 (Minimize) | Negative reward for predicted inhibition. |

| Synthetic Accessibility (SA) | SA Score (1-easy, 10-hard) or retrosynthesis model score. | < 4.0 (Minimize) | Negative reward for high complexity. |

Detailed Experimental Protocol: MORL-Driven Pareto Frontier Generation

Protocol Title: Iterative Preference-Conditioned MORL for Pareto-Efficient Molecule Generation

1. Objective Definition & Reward Proxy Training

- Input: Curated dataset of molecules with experimental data for at least two primary objectives (e.g., pIC50, logS).

- Procedure: a. Train separate predictive models (e.g., Random Forest, GNN) for each objective. Validate on hold-out sets. b. Define normalized reward functions r_i for each objective i, scaling outputs to [-1, 1] based on target thresholds. c. Define the vectorized reward: R(m) = [r1(m), r2(m), ..., r_k(m)].

2. MORL Agent Setup (Preference-Conditioned)

- Action Space: Fragment-based or SMILES-based molecular graph modification actions.

- State Space: Current molecular graph representation.

- Preference Input: A k-dimensional weight vector w with non-negative entries that sum to 1 (a point on the (k-1)-simplex), where w_i indicates the relative weight for objective i.

- Network Architecture: Use a deep neural network where the molecular state embedding and the preference vector w are concatenated before the final policy and value layers.

3. Training Loop for Pareto Frontier Discovery

- Batch Sampling: For each training batch, sample a batch of preference vectors w uniformly from the (k-1)-simplex.

- Rollout: For each w, scalarize the vector reward: R_scalar = w • R(m). Conduct environment rollouts (molecule modifications) using the current policy conditioned on w.

- Optimization: Update policy parameters using a multi-objective RL algorithm (e.g., MO-PPO, MO-TD3) to maximize the expected scalarized return for its respective w.

- Pareto Front Identification: Periodically, evaluate a large set of generated molecules across all reward proxies. Perform non-dominated sorting (e.g., Fast Non-Dominated Sort) to identify the current Pareto frontier set.
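A minimal sketch of three pieces of this loop: uniform preference sampling from the simplex, linear scalarization, and non-dominated filtering. The function names are hypothetical, all objectives are assumed to be maximized, and the filter is a simple O(n²) pass rather than the Fast Non-Dominated Sort named above.

```python
import random
from typing import List, Sequence, Tuple

Vector = Tuple[float, ...]


def sample_preference(k: int, rng: random.Random) -> List[float]:
    """Draw w uniformly from the (k-1)-simplex.

    Normalizing k independent Exp(1) draws gives a Dirichlet(1, ..., 1)
    sample, which is the uniform distribution on the simplex.
    """
    draws = [rng.expovariate(1.0) for _ in range(k)]
    total = sum(draws)
    return [d / total for d in draws]


def scalarize(w: Sequence[float], reward: Sequence[float]) -> float:
    """Linear scalarization: R_scalar = w . R(m)."""
    return sum(wi * ri for wi, ri in zip(w, reward))


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if a is no worse than b everywhere and strictly better somewhere."""
    return (all(x >= y for x, y in zip(a, b))
            and any(x > y for x, y in zip(a, b)))


def pareto_front(points: Sequence[Vector]) -> List[Vector]:
    """Keep only the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]
```

For example, `pareto_front([(1.0, 0.0), (0.0, 1.0), (0.5, 0.5), (0.2, 0.2)])` keeps the first three points and drops `(0.2, 0.2)`, which is dominated by `(0.5, 0.5)`.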

4. Validation & Iteration

- In-silico Validation: Apply more expensive, high-fidelity simulations (e.g., MD, FEP) to top frontier molecules.

- Human-in-the-Loop: Present frontier to medicinal chemists for feedback. Adjust preference sampling distribution or reward functions to focus search on more desirable regions of objective space.

- Synthesis Priority: Rank frontier molecules by Pareto rank and synthetic accessibility for experimental testing.

Visualization of Key Concepts

Diagram 1: MORL Pareto Frontier Search Workflow

Diagram 2: Single vs. Pareto Optimal Solutions

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Components for an MORL Molecular Optimization Pipeline

| Component / Reagent | Function / Role | Example / Note |

|---|---|---|

| Benchmarking Datasets | Provides standardized multi-objective targets for training and validation. | MOSES datasets extended with property labels (e.g., QED, SA, clogP). |

| Property Prediction Models | Serves as reward proxies during RL training. | Pre-trained GNNs (e.g., from chemprop), Random Forest models on molecular descriptors. |

| RL Environment Wrapper | Defines the state/action space for molecular modification. | ChEMBL-derived fragment library, RDKit-based SMILES grammar environment. |

| MORL Algorithm Library | Core implementation of multi-objective RL algorithms. | Custom extensions of Stable-Baselines3 or RLlib to handle vector rewards and preferences. |

| Pareto Analysis Toolkit | Identifies and visualizes non-dominated frontiers from generated molecules. | pymoo for fast non-dominated sorting and metric calculation (hypervolume). |

| High-Fidelity Simulators | Validates top frontier candidates with more accurate physics-based methods. | Molecular docking (AutoDock Vina, Glide), MD simulation packages (GROMACS, Desmond). |

| Chemical Synthesis Planner | Prioritizes Pareto-optimal molecules for experimental verification. | Retrosynthesis AI (e.g., IBM RXN, ASKCOS) coupled with cost/feasibility estimators. |

Architecting MORL for Molecules: From Theory to Practical Pipeline

This protocol details the first critical step in implementing a Multi-Objective Reinforcement Learning (MORL) framework for molecular optimization: the design of the chemical environment and its discrete action space. The environment formalizes molecular generation as a sequential decision-making process, where an agent builds a molecule step-by-step. The action space defines the permissible construction steps. This guide compares three predominant molecular representations—SMILES strings, molecular graphs, and molecular fragments—and provides implementable protocols for constructing environments using each.

Comparative Analysis of Molecular Representations

The choice of representation dictates the environment's complexity, the nature of the action space, and the resulting chemical feasibility of generated molecules.

Table 1: Comparison of Molecular Representations for RL Environments

| Representation | Action Space Definition | Advantages | Disadvantages | Typical Validity Rate |

|---|---|---|---|---|

| SMILES Strings | Append a character from a validated vocabulary (e.g., atoms, brackets, bonds). | Simple, string-based, fast. | High rate of invalid SMILES generation (~10-40%); syntactic constraints. | 60-90% (with grammar constraints) |

| Molecular Graphs | Add an atom/node or form a bond/edge between existing atoms. | Intuitively chemical, inherently valid structures. | Larger, more complex action space; requires graph management. | >95% (valence rules enforced) |

| Molecular Fragments | Attach a pre-defined chemical fragment (e.g., from BRICS) to a growing molecule. | Chemically meaningful, high synthetic accessibility. | Limited to fragment library diversity; attachment point logic required. | >98% |

Detailed Experimental Protocols

Protocol 3.1: SMILES-Based Environment with Grammar Constraints

Objective: To create an RL environment where the state is a partial SMILES string and actions are tokens that extend it, using a grammar to enforce syntactic validity.

Materials:

- Dataset: ZINC15 or ChEMBL (pre-processed canonical SMILES).

- Software: RDKit (v2023.03.5 or later), Python (v3.9+).

Procedure:

- Vocabulary Construction:

- Process 50,000-100,000 random SMILES from your dataset.

- Tokenize into unique characters: atoms (C, N, O, etc.), ring digits (1, 2), branch parentheses `(`, `)`, and bond symbols (`=`, `#`).

- Add start `^` and stop `$` tokens. Typical vocabulary size: 35-45 tokens.

- Grammar Rule Definition:

- Implement context-free grammar rules. Example: once a ring-opening digit has been emitted, the stop token is not allowed until the matching ring-closing digit has appeared.

- Use a state machine to track open rings and branches.

- Action Masking Function:

- At each step, generate a binary mask over the entire action space (vocabulary).

- Allow an action only if the resulting partial SMILES string complies with all grammar rules. For example, mask out `)` if no `(` is open.

- State Transition & Reward:

- State `s_t`: The current partial SMILES string (padded/encoded).

- Action `a_t`: A token from the unmasked set.

- State `s_{t+1}`: Concatenation of `s_t` and `a_t`.

- The episode terminates upon the `$` action. A molecule is valid only if RDKit's `Chem.MolFromSmiles()` successfully parses the final string.
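The action-masking idea in Protocol 3.1 can be sketched with a small state machine that tracks open branches and open rings. The vocabulary below is a deliberately tiny, hypothetical subset, and the rules cover only a fraction of SMILES syntax (for instance, they reject a bond symbol directly before a ring digit, which real SMILES allows), so the final RDKit parse remains the authoritative validity check.

```python
from typing import Dict, Set, Tuple

# Hypothetical toy vocabulary; a real one would be built from the dataset.
ATOMS = {"C", "N", "O"}
BONDS = {"=", "#"}
RING_DIGITS = {"1", "2"}
VOCAB = sorted(ATOMS | BONDS | RING_DIGITS | {"(", ")", "$"})


def grammar_state(partial: str) -> Tuple[int, Set[str]]:
    """Return (number of open branches, set of currently open ring digits)."""
    open_branches = 0
    open_rings: Set[str] = set()
    for ch in partial:
        if ch == "(":
            open_branches += 1
        elif ch == ")":
            open_branches -= 1
        elif ch in RING_DIGITS:
            open_rings ^= {ch}  # first occurrence opens, second closes
    return open_branches, open_rings


def action_mask(partial: str) -> Dict[str, bool]:
    """Map each token to True if appending it keeps the string well-formed."""
    open_branches, open_rings = grammar_state(partial)
    last = partial[-1] if partial else "^"  # "^" marks the start of the string
    after_atom_like = last in ATOMS or last in RING_DIGITS
    mask: Dict[str, bool] = {}
    for tok in VOCAB:
        if tok in ATOMS:
            ok = True
        elif tok in BONDS or tok == "(":
            ok = after_atom_like or last == ")"
        elif tok == ")":
            ok = open_branches > 0 and after_atom_like
        elif tok in RING_DIGITS:
            ok = after_atom_like
        else:  # stop token "$"
            ok = open_branches == 0 and not open_rings and after_atom_like
        mask[tok] = ok
    return mask
```

With this sketch, `action_mask("C1CC")["$"]` is `False` because ring 1 is still open, while `action_mask("C1CC1")["$"]` is `True`.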

Protocol 3.2: Graph-Based Environment with Valence Checks

Objective: To build an environment where the state is a molecular graph, and actions involve adding atoms or bonds, with immediate valence validation.

Materials:

- Dataset: Same as 3.1.

- Software: RDKit, NetworkX (optional), PyTorch Geometric (for GNN-based agents).

Procedure:

- Action Space Enumeration:

- Atom Addition Actions: Define a set of allowable atom types (e.g., C, N, O, F) and a maximum number of allowed nodes (e.g., 40).

- Bond Addition Actions: For each pair of existing atoms `(i, j)` without an existing bond, define actions for possible bond types (single, double, triple). This leads to a large, dynamic action space.

- State Representation:

- Represent state as a tuple: an adjacency tensor (n x n x bond_types) and a node feature matrix (n x atom_types).

- Valence-Checking Transition Function:

- For an atom addition action (add atom type X), append X to the graph with a default single bond to a previous atom. Check that the previous atom's new valence does not exceed its maximum.

- For a bond addition action (add bond type Y between i and j), check that the valences of both atoms `i` and `j` can accommodate the new bond.

- If a check fails, the action is invalid. The environment should mask it preemptively or assign a terminal negative reward.

- Termination: Episode ends when a pre-defined maximum number of steps (atoms) is reached or a terminal action is selected.
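The valence-checking transition in Protocol 3.2 can be sketched with a plain-Python graph. The `MolGraph` class and the valence table are hypothetical simplifications (neutral atoms, implicit hydrogens, no aromaticity); a production environment would more likely delegate these checks to RDKit.

```python
from typing import Dict, List, Tuple

# Simplified maximum valences for neutral atoms (illustrative assumption).
MAX_VALENCE: Dict[str, int] = {"C": 4, "N": 3, "O": 2, "F": 1}
BOND_ORDER: Dict[str, int] = {"single": 1, "double": 2, "triple": 3}


class MolGraph:
    """Minimal molecular graph: an atom list plus a dict of bond orders."""

    def __init__(self, first_atom: str) -> None:
        self.atoms: List[str] = [first_atom]
        self.bonds: Dict[Tuple[int, int], int] = {}  # key (i, j) with i < j

    def used_valence(self, idx: int) -> int:
        """Sum of bond orders currently attached to atom `idx`."""
        return sum(o for (i, j), o in self.bonds.items() if idx in (i, j))

    def can_add_atom(self, attach_to: int) -> bool:
        """A new atom arrives with a single bond to `attach_to`."""
        limit = MAX_VALENCE[self.atoms[attach_to]]
        return self.used_valence(attach_to) + 1 <= limit

    def add_atom(self, element: str, attach_to: int) -> int:
        if not self.can_add_atom(attach_to):
            raise ValueError("valence exceeded on attachment atom")
        self.atoms.append(element)
        new_idx = len(self.atoms) - 1
        self.bonds[(attach_to, new_idx)] = 1
        return new_idx

    def can_add_bond(self, i: int, j: int, bond_type: str) -> bool:
        """Allow a new bond only between unbonded atoms with spare valence."""
        key = (min(i, j), max(i, j))
        if i == j or key in self.bonds:
            return False
        order = BOND_ORDER[bond_type]
        return all(
            self.used_valence(a) + order <= MAX_VALENCE[self.atoms[a]]
            for a in (i, j)
        )
```

Calling `can_add_atom` and `can_add_bond` for every candidate action before the agent chooses gives the preemptive mask mentioned above; raising an error (or returning a negative terminal reward) is the fallback when masking is not used.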

Protocol 3.3: Fragment-Based Environment using BRICS

Objective: To construct an environment where states are fragment-assembled molecules and actions are the attachment of a new fragment from a BRICS-decomposed library.

Materials:

- Dataset: ZINC15.

- Software: RDKit (with BRICS module).

Procedure:

- Fragment Library Generation:

- Take 100,000 molecules from ZINC15.

- Apply `BRICS.BRICSDecompose()` with default parameters. This breaks molecules at retrosynthetically interesting bonds.

- Remove duplicates and filter fragments by size (e.g., 4-30 heavy atoms). This yields a library of 500-2000 unique fragments with defined attachment points (dummy atoms).

- Action Space Definition:

- Each action is defined as a tuple: `(fragment_id, attachment_point_on_fragment, attachment_point_on_current_mol)`.

- To reduce dimensionality, pre-compute compatible matches. An action is only valid if the bond types between the dummy atoms match (based on BRICS rules).

- State Initialization & Growth:

- Start state is a random fragment from the library or a simple scaffold (e.g., benzene).

- At each step, the environment lists all valid `(fragment, attach_frag, attach_mol)` combinations from the library given the current molecule.

- Reaction Simulation:

- Execute the action by aligning the dummy atoms of the selected fragment and the current molecule, forming a new bond, and removing the dummy atoms.

- The new molecule is sanitized (`Chem.SanitizeMol`).
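The action enumeration described in Protocol 3.3 can be sketched without any chemistry toolkit by representing each fragment only by the labels of its attachment points. Everything below is a toy assumption: the `COMPATIBLE` pairs are placeholders and not the actual BRICS rule set, and the fragment names are invented. In practice the labels would come from the dummy atoms produced by the BRICS decomposition.

```python
from typing import Dict, List, Set, Tuple

# Placeholder compatibility table (NOT the real BRICS environment rules).
COMPATIBLE: Set[Tuple[int, int]] = {(1, 3), (3, 4), (7, 7)}

# Hypothetical library: fragment id -> labels of its attachment points.
FRAGMENT_LIBRARY: Dict[str, List[int]] = {
    "frag_a": [1],
    "frag_b": [3, 4],
    "frag_c": [7],
}

Action = Tuple[str, int, int]  # (fragment_id, attach_frag, attach_mol)


def labels_match(label_a: int, label_b: int) -> bool:
    """Check the unordered label pair against the compatibility table."""
    lo, hi = sorted((label_a, label_b))
    return (lo, hi) in COMPATIBLE


def valid_actions(current_mol_labels: List[int]) -> List[Action]:
    """List every (fragment, attach_frag, attach_mol) that may be joined."""
    actions: List[Action] = []
    for frag_id, frag_labels in FRAGMENT_LIBRARY.items():
        for fi, f_label in enumerate(frag_labels):
            for mi, m_label in enumerate(current_mol_labels):
                if labels_match(f_label, m_label):
                    actions.append((frag_id, fi, mi))
    return actions
```

A molecule with no remaining attachment points yields an empty action list, which is a natural termination condition for the episode.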

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Libraries for Environment Design

| Item | Supplier / Source | Function in Protocol |

|---|---|---|

| RDKit | Open-Source (rdkit.org) | Core cheminformatics toolkit for parsing SMILES, validating molecules, performing BRICS decomposition, and calculating properties. |

| PyTorch Geometric | PyTorch Ecosystem | Facilitates graph neural network (GNN) operations for graph-based state representations and policy networks. |

| OpenAI Gym / Gymnasium | OpenAI / Farama Foundation | Provides the standard API template (env.step(), env.reset()) for implementing custom reinforcement learning environments. |

| MolDQN / ChemGREAT Baselines | Published Code (GitHub) | Reference implementations of RL environments (often SMILES or graph-based) to accelerate development and ensure benchmarking consistency. |

| ZINC15 Database | UCSF | A primary source of commercially available, drug-like molecules (∼230 million) for training vocabulary, fragment libraries, and benchmarking. |

Visualization of Environment Design Workflow

Diagram 1: Decision Flow for Selecting Molecular Representation

Diagram 2: State-Action Transition in a Graph-Based Environment

In the context of implementing multi-objective reinforcement learning (MORL) for molecular optimization, the reward function is the critical mechanism that guides the generative model towards desirable chemical space. This step involves translating complex, often competing, drug discovery objectives into a single, scalar reward signal that an RL agent can maximize. This document details the formulation, engineering, and balancing of multi-objective reward functions for de novo molecular design.

Core Objectives in Molecular Optimization

The primary objectives in drug discovery can be categorized as follows. Quantitative targets are summarized in Table 1.

Table 1: Standard Quantitative Targets for Lead-like Molecules

| Objective | Typical Target Range | Metric / Calculation | Rationale |

|---|---|---|---|

| Potency (pIC50 / pKi) | > 7.0 (nM range) | -log10(IC50) | High biological activity against target. |

| Selectivity | Selectivity Index > 10 | IC50(off-target) / IC50(on-target); use log10 of this ratio (> 1) when scoring | Minimize side effects. |

| Lipophilicity | cLogP: 1-3 | Computed partition coefficient (e.g., XLogP3) | Impacts permeability, solubility, and toxicity. |

| Molecular Weight | ≤ 500 Da | Sum of atomic masses | Adherence to Lipinski's Rule of Five. |

| Polar Surface Area | ≤ 140 Ų | Topological or 3D calculation | Predicts cell permeability; blood-brain barrier penetration generally requires a lower value (about 90 Ų or less). |

| Synthetic Accessibility | SAscore ≤ 4 | Fragment-based complexity score (1=easy, 10=hard) | Feasibility of chemical synthesis. |

| Ligand Efficiency (LE) | > 0.3 kcal/mol per heavy atom | -ΔG / N_heavy_atoms | Normalizes potency by molecular size. |

Reward Function Architectures

The multi-objective reward function \( R_{total} \) is engineered as a composite of sub-reward functions \( r_i \) for each objective \( i \). Common architectures include:

Linear Combination (Weighted Sum)

\[ R_{total}(s, a) = \sum_{i=1}^{n} w_i \cdot f_i(r_i(s, a)) \]

where \( w_i \) is a manually or dynamically tuned weight, and \( f_i \) is a normalization/scaling function (e.g., sigmoid, linear clipping).

Protocol 3.1: Calibrating Weights for Linear Reward Combination

- Define Normalization Bounds: For each objective \( i \), establish a "desirable" range \([l_i, h_i]\) and an "acceptable" range \([L_i, H_i]\) based on Table 1.

- Implement Scaling Functions: Transform each raw metric \( x_i \) to a sub-reward \( r_i \in [0, 1] \).

Example (Sigmoid Scaling for Potency pIC50):

- Initial Weight Estimation: Use Pareto-front analysis on a historical compound dataset. Perform linear regression to approximate the trade-off slope between normalized objectives.

- Iterative Refinement: Run short RL training cycles (e.g., 1000 episodes). Adjust weights \( w_i \) inversely proportional to the rate of improvement per objective to balance learning signals.
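The sigmoid scaling step and the inverse-proportional weight refinement in this protocol can be sketched as below. The midpoint, steepness, and the small `eps` guard are hypothetical choices, and the renormalization of the weights to sum to 1 is an added assumption rather than something the protocol specifies.

```python
import math
from typing import Dict


def sigmoid_scale(x: float, midpoint: float, steepness: float = 1.0) -> float:
    """Map a raw metric to (0, 1); `midpoint` scores exactly 0.5."""
    return 1.0 / (1.0 + math.exp(-steepness * (x - midpoint)))


def weighted_sum(sub_rewards: Dict[str, float],
                 weights: Dict[str, float]) -> float:
    """Linear combination R_total = sum_i w_i * r_i over scaled sub-rewards."""
    return sum(weights[name] * sub_rewards[name] for name in weights)


def rebalance(weights: Dict[str, float],
              improvement_rates: Dict[str, float],
              eps: float = 1e-6) -> Dict[str, float]:
    """Scale each weight by 1 / (improvement rate), then renormalize.

    Objectives that improved slowly in the last cycle receive more weight.
    """
    raw = {k: weights[k] / (improvement_rates[k] + eps) for k in weights}
    total = sum(raw.values())
    return {k: v / total for k, v in raw.items()}


# Example: pIC50 of 7.0 sits at the hypothetical midpoint and scores 0.5.
potency_reward = sigmoid_scale(7.0, midpoint=7.0, steepness=2.0)
```

For instance, `rebalance({"potency": 0.5, "clogp": 0.5}, {"potency": 0.1, "clogp": 0.3})` shifts weight toward potency, the objective that improved more slowly.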

Constrained Optimization Formulation

Here, one primary objective (e.g., potency) is maximized, while others are enforced as constraints.

\[ R_{total} = r_{potency} \cdot \prod_{j} I_{constraint_j} \]

where \( I_{constraint_j} \) is an indicator function (1 if constraint \( j \) is met, else 0 or a small penalty factor).
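As a minimal sketch of the constrained formulation, the indicator product can be written as a running multiplication. The function name and the `penalty_factor` parameter are hypothetical; a factor of 0 gives a hard constraint and a small positive value gives a soft one.

```python
from typing import Iterable


def constrained_reward(potency_reward: float,
                       constraints_met: Iterable[bool],
                       penalty_factor: float = 0.0) -> float:
    """Multiply the primary reward by one indicator per constraint.

    Each satisfied constraint contributes 1; each violated constraint
    contributes `penalty_factor`.
    """
    factor = 1.0
    for met in constraints_met:
        factor *= 1.0 if met else penalty_factor
    return potency_reward * factor
```

With a hard constraint, a single violation zeroes the reward, which is simple to interpret but can make the learning signal sparse early in training; a soft factor such as 0.1 keeps some gradient toward potency while still discouraging violations.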

Multi-Pass Filtering (Sequential)

A non-differentiable but highly interpretable method used in post-generation filtering or within a reward hierarchy.

- Pass 1: Reward based on chemical validity and basic properties (MW, heavy atoms).

- Pass 2: Apply reward for drug-likeness (cLogP, TPSA).

- Pass 3: Reward based on predicted potency (QSAR model).

- Pass 4: Reward based on synthetic accessibility and novelty.

Experimental Protocol: Implementing a Balanced MORL Reward

Aim: To train a MORL agent for generating novel DDR1 kinase inhibitors.

Materials & Reagents (The Scientist's Toolkit):

Table 2: Key Research Reagent Solutions for MORL-Driven Molecular Optimization

| Item | Function in Protocol | Example / Specification |

|---|---|---|

| Chemical Simulation Environment | Provides state space & compound validity checks. | ChEMBL-derived action space, RDKit for cheminformatics. |

| Pre-trained Predictive Models | Provide fast, in-silico sub-reward scores. | QSAR model for pIC50 (DDR1), Random Forest for cLogP, SCScore for synthetic accessibility. |

| RL Agent Framework | The learning algorithm that interacts with the environment. | DeepChem (TF), Stable-Baselines3 (PyTorch), or custom Proximal Policy Optimization (PPO) implementation. |

| Molecular Fingerprint | Numerical representation of the molecular state. | Morgan Fingerprint (radius=3, nbits=2048) or MAE-pre-trained transformer embeddings. |

| Historical Compound Dataset | Used for weight calibration & baseline comparison. | ChEMBL DDR1 inhibitors (IC50 < 10 µM), filtered for lead-like space. |

| Pareto Optimization Library | For post-hoc analysis of multi-objective results. | PyGMO, Platypus, or custom Pareto-front visualization. |

Procedure:

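- Environment Setup: Initialize a fragment-based molecular building environment (e.g., using RDKit and OpenAI Gym).

- Reward Function Definition: Implement a composite reward as a weighted combination of the sub-rewards produced by the predictive models in Table 2 (potency, lipophilicity, synthetic accessibility).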

- Agent Training: Configure a PPO policy network with Gated Recurrent Units (GRUs) to process the sequential fragment addition actions. Train for 50,000 episodes.

- Validation & Analysis: Every 5000 episodes, sample 100 generated molecules. Plot the 2D Pareto front (e.g., predicted pIC50 vs. cLogP) and compare its expansion against the baseline dataset from Table 2.

- Iterative Reward Shaping: If the agent converges to sub-regions (e.g., only high potency but high cLogP), dynamically adjust weights: \( w_{lipo}^{new} = w_{lipo}^{old} + \alpha \cdot (1 - \text{constraint\_satisfaction\_rate}) \).
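The weight update in the last step can be sketched as follows. The step size, the cLogP window of 1 to 3 (taken from Table 1), and the helper names are illustrative assumptions.

```python
from typing import Sequence


def constraint_satisfaction_rate(values: Sequence[float],
                                 lower: float, upper: float) -> float:
    """Fraction of sampled molecules whose property lies in [lower, upper]."""
    if not values:
        raise ValueError("need at least one sampled molecule")
    return sum(lower <= v <= upper for v in values) / len(values)


def update_weight(w_old: float, satisfaction_rate: float,
                  alpha: float = 0.1) -> float:
    """Apply w_new = w_old + alpha * (1 - satisfaction_rate)."""
    return w_old + alpha * (1.0 - satisfaction_rate)


# Example: half of the sampled molecules fall inside the cLogP window,
# so the lipophilicity weight grows by alpha * 0.5.
sampled_clogp = [2.0, 4.5, 5.0, 2.5]
rate = constraint_satisfaction_rate(sampled_clogp, lower=1.0, upper=3.0)
w_lipo = update_weight(0.2, rate, alpha=0.1)
```

Because this rule only ever increases the weight, it may be worth renormalizing all weights after each update, or capping the value, so that one objective does not gradually swamp the others.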

Visualization of the MORL Reward Engineering Workflow

Diagram Title: Multi-Objective Reward Engineering Workflow for Molecular RL

Advanced Considerations & Pitfalls

- Sparse Reward Problem: Potency prediction is often only possible for a fully formed molecule. Mitigation: Include dense, intermediate rewards for substructure features associated with targets (e.g., pharmacophore matches).

- Reward Hacking: The agent may exploit flaws in predictive models (e.g., QSAR, SAscore). Mitigation: Use ensemble models, adversarial validation, and periodic in vitro validation of top-ranked generated compounds.

- Dynamic Weighting: Implement multi-objective optimization algorithms (e.g., based on the Analytic Hierarchy Process (AHP) or multiple-gradient descent) to automate weight updates during training, so that the search keeps advancing along the Pareto front without manual retuning.

Within the broader thesis on implementing multi-objective reinforcement learning (MORL) for molecular optimization in drug discovery, the selection of a core strategy is paramount. Molecular optimization requires balancing competing objectives such as binding affinity (pIC50), synthesizability (SA Score), permeability (LogP), and toxicity predictions. This document details application notes and experimental protocols for the primary MORL strategies, contrasting scalarization methods with Pareto-based approaches, to guide researchers in designing automated molecular design pipelines.

Core MORL Strategy Comparison

The following table summarizes the key characteristics, advantages, and disadvantages of each major MORL strategy in the context of molecular optimization.

Table 1: Comparison of Core MORL Strategies for Molecular Optimization

| Strategy | Key Mechanism | Primary Use Case in Molecular Optimization | Advantages | Disadvantages |

|---|---|---|---|---|

| Linear Scalarization | Converts multi-objective reward to a single weighted sum: `R_total = Σ w_i * r_i`. | Known, fixed preference for objectives (e.g., 70% affinity, 30% synthesizability). | Simple, fast, reduces to single-objective RL. Stable convergence. | Requires precise a priori weight knowledge. Misses solutions in concave regions of the Pareto front. |

| Weighted Sum | Generalized linear scalarization with dynamic or sampled weights. | Exploring a range of possible preferences or generating a discrete Pareto front approximation. | More flexible than fixed linear. Can generate diverse solutions. | Still cannot find solutions on non-convex regions of the Pareto front. |

| Chebyshev Scalarization | Minimizes weighted distance to a utopian reference point: `max_i [ w_i * abs(z*_i - f_i(x)) ]`. | Finding balanced solutions when objectives have different scales (e.g., pIC50 vs. SA Score). | Can find solutions on non-convex Pareto fronts. Handles scale differences. | Requires setting a reference point. Weights still influence result. |

| Pareto-Based (e.g., Pareto Q-Learning) | Directly maintains a set of non-dominated policies or value vectors. | Discovering the full trade-off surface without predefined preferences for exploratory phases. | Finds entire Pareto front. No need for weight selection a priori. | Computationally expensive. Policy selection can be complex for end-users. |
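The difference between linear and Chebyshev scalarization on a non-convex front can be checked with a three-point toy example. The points and the utopian reference (1, 1) are invented for illustration, and both objectives are assumed to be maximized and scaled to [0, 1].

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]

# Three mutually non-dominated candidates. C lies below the straight line
# joining A and B, i.e. in a concave region of the Pareto front.
A: Point = (1.0, 0.0)
B: Point = (0.0, 1.0)
C: Point = (0.4, 0.4)
CANDIDATES: List[Point] = [A, B, C]


def linear_pick(points: Sequence[Point], w: Sequence[float]) -> Point:
    """Select the point that maximizes the weighted sum of objectives."""
    return max(points, key=lambda p: sum(wi * pi for wi, pi in zip(w, p)))


def chebyshev_pick(points: Sequence[Point], w: Sequence[float],
                   utopia: Sequence[float]) -> Point:
    """Select the point minimizing the largest weighted gap to the utopia."""
    return min(
        points,
        key=lambda p: max(wi * abs(zi - pi)
                          for wi, zi, pi in zip(w, utopia, p)),
    )


# Sweep the linear weight from 0 to 1: C is never selected.
linear_choices = {linear_pick(CANDIDATES, (a / 20, 1 - a / 20))
                  for a in range(21)}
# Equal Chebyshev weights select the balanced point C.
balanced = chebyshev_pick(CANDIDATES, (0.5, 0.5), utopia=(1.0, 1.0))
```

Here the weighted sum scores C at 0.4 for every weight, while the better of A and B always scores at least 0.5, so no choice of weights recovers C. The Chebyshev criterion gives C a worst-case gap of 0.3 versus 0.5 for A and B.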

Performance Metrics & Benchmark Data

Based on recent benchmarks in molecular RL (e.g., on GuacaMol or PMO benchmarks), typical performance metrics are summarized below.

Table 2: Benchmark Performance of MORL Strategies on Molecular Tasks (Hypothetical Data Based on Current Literature Trends)

| Strategy | Hypervolume@100 (Higher is better) | Pareto Front Spread | Compute Time (Relative) | Best for Objective |

|---|---|---|---|---|

| Linear Scalarization | 0.65 ± 0.08 | Narrow | 1.0x (Baseline) | Single-target optimization |

| Weighted Sum (10 weights) | 0.78 ± 0.05 | Moderate | 3.5x | Discrete trade-off analysis |

| Chebyshev Scalarization | 0.82 ± 0.04 | Good | 3.8x | Balanced multi-property optimization |

| Pareto Q-Learning | 0.91 ± 0.03 | Excellent | 6.0x | Full frontier exploration |
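For readers unfamiliar with the hypervolume metric in the table, the two-objective case can be computed with a simple sweep. This sketch assumes both objectives are maximized and uses a hypothetical reference point at the origin; libraries such as pymoo provide general implementations for more objectives.

```python
from typing import Iterable, Tuple

Point = Tuple[float, float]


def hypervolume_2d(points: Iterable[Point],
                   ref: Point = (0.0, 0.0)) -> float:
    """Area dominated by `points` and bounded below by `ref` (maximization).

    Points are swept from the largest first objective to the smallest; each
    point adds the strip of area that lies above the best second objective
    seen so far.
    """
    candidates = sorted(
        (p for p in points if p[0] > ref[0] and p[1] > ref[1]),
        key=lambda p: p[0],
        reverse=True,
    )
    area = 0.0
    best_y = ref[1]
    for x, y in candidates:
        if y > best_y:
            area += (x - ref[0]) * (y - best_y)
            best_y = y
    return area
```

For the front `[(1.0, 0.5), (0.5, 1.0)]` the dominated area is 0.5 + 0.25 = 0.75; adding a dominated point such as `(0.4, 0.4)` leaves the value unchanged, which is why hypervolume rewards both convergence and spread of the front.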

Experimental Protocols

Protocol: Implementing Weighted Sum Scalarization for Lead Optimization

Objective: To optimize a lead compound for both binding affinity (pIC50) and synthesizability (SA Score) using a weighted sum MORL agent.

Materials: See Scientist's Toolkit (Section 5).

Procedure:

- Environment Setup: Configure the molecular simulation environment (e.g., based on RDKit and OpenAI Gym). Define state space (molecular graph/ fingerprint), action space (functional group addition/removal), and reward functions.

- `R_affinity = pIC50_predicted / 10` (normalized)

- `R_synthesizability = 2 - SA_Score_predicted` (lower SA Score is better)

- `R_total = α * R_affinity + (1-α) * R_synthesizability`

- Weight Selection: Choose a set of α values from 0.1 to 0.9 in increments of 0.2 to explore the trade-off.

- Agent Training: For each α, train a separate DQN or PPO agent for N episodes (e.g., N=5000).

- Evaluation: For each trained policy, generate a set of molecules (e.g., 100). Re-evaluate pIC50 and SA Score with independent external tools (e.g., docking, synthetic accessibility predictors) rather than the proxy models used for training.

- Analysis: Plot the generated molecules in objective space (pIC50 vs. SA Score). The points for different α values will approximate the convex portion of the attainable Pareto front.
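The α sweep in this protocol can be sketched on a toy candidate set using the reward definitions given in the environment setup step. The three candidates and their property values are invented for illustration.

```python
from typing import Dict, List, Tuple


def r_affinity(pic50: float) -> float:
    """Affinity reward as defined in the protocol: pIC50 / 10."""
    return pic50 / 10.0


def r_synthesizability(sa_score: float) -> float:
    """Synthesizability reward as defined in the protocol: 2 - SA Score."""
    return 2.0 - sa_score


def r_total(alpha: float, pic50: float, sa_score: float) -> float:
    """Weighted sum of the two sub-rewards."""
    return (alpha * r_affinity(pic50)
            + (1.0 - alpha) * r_synthesizability(sa_score))


# Alpha grid from the protocol: 0.1 to 0.9 in increments of 0.2.
ALPHAS: List[float] = [round(0.1 + 0.2 * i, 1) for i in range(5)]

# Hypothetical candidates: name -> (predicted pIC50, predicted SA Score).
CANDIDATES: Dict[str, Tuple[float, float]] = {
    "potent_but_complex": (9.0, 6.0),
    "balanced": (7.0, 2.5),
    "simple_but_weak": (5.5, 1.5),
}


def best_per_alpha(candidates: Dict[str, Tuple[float, float]]) -> Dict[float, str]:
    """Return the top-scoring candidate name for each alpha on the grid."""
    return {
        alpha: max(candidates, key=lambda n: r_total(alpha, *candidates[n]))
        for alpha in ALPHAS
    }
```

In this toy set the most potent candidate is never selected, even at α = 0.9. The reason is a scale mismatch: for SA Scores between 1 and 10, `R_synthesizability` spans -8 to 1, while `R_affinity` stays close to the 0 to 1 range, so the synthesizability term dominates the sum. Rescaling both sub-rewards to a common range before weighting makes α easier to interpret.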

Protocol: Pareto-Based MORL for Full Frontier Exploration

Objective: To identify the complete set of non-dominated molecular candidates across three objectives: pIC50, LogP (for permeability), and QED (Drug-likeness).

Procedure:

- Multi-Objective Reward Vector: Define a reward vector `R = [R_affinity, R_logP, R_qed]` without scalarization.

- Pareto Q-Learning Setup: Implement an agent that maintains a Pareto front of Q-values for each state-action pair. Use vectorial Q-values.

- Dominance Update Rule: Upon receiving a reward vector `r`, update Q(s,a) to include the new vector only if it is not dominated by existing vectors in the set. Prune any vectors in the set that are dominated by the new arrival.

- Action Selection: Use an exploration strategy like Pareto Thompson Sampling or epsilon-greedy based on a scalarization of the maintained front (e.g., randomly sampled weights each episode).

- Policy Execution: After training, the final set of non-dominated Q-vectors at the initial state corresponds to the set of optimal trade-off policies.

- Molecule Generation & Validation: Execute each Pareto-optimal policy to generate candidate molecules. Validate all candidates with rigorous in silico ADMET prediction and synthesis planning tools.
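The dominance update rule in this protocol can be sketched as an incremental set update. This covers only the bookkeeping for a single state-action pair; the full Pareto Q-Learning algorithm also bootstraps from successor-state sets, which is omitted here. Function names are hypothetical and all objectives are assumed to be maximized.

```python
from typing import List, Sequence, Tuple

Vector = Tuple[float, ...]


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if a is no worse than b everywhere and strictly better somewhere."""
    return (all(x >= y for x, y in zip(a, b))
            and any(x > y for x, y in zip(a, b)))


def update_pareto_set(front: List[Vector], new: Vector) -> List[Vector]:
    """Insert `new` unless it is dominated or already present.

    When the new vector is accepted, every stored vector that it dominates
    is pruned, so the returned list stays mutually non-dominated.
    """
    if any(dominates(old, new) or old == new for old in front):
        return list(front)
    return [old for old in front if not dominates(new, old)] + [new]


# Example with vectors ordered as (R_affinity, R_logP, R_qed).
q_set: List[Vector] = []
for vec in [(0.8, 0.2, 0.5), (0.7, 0.1, 0.4), (0.9, 0.3, 0.6), (0.1, 0.9, 0.2)]:
    q_set = update_pareto_set(q_set, vec)
```

After the loop, `q_set` holds `(0.9, 0.3, 0.6)` and `(0.1, 0.9, 0.2)`: the second vector was rejected as dominated and the first was pruned when the third arrived.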

Mandatory Visualizations

Diagram 1: MORL Strategy Workflows for Molecular Optimization

Diagram 2: Solution Concepts in Multi-Objective Molecular Optimization

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools for MORL in Molecular Optimization

| Item / Solution | Function in MORL Molecular Research | Example / Provider |

|---|---|---|

| Molecular Simulation Environment | Provides the RL environment: state representation, action space (chemical reactions), and reward calculation. | gym-molecule, MolGym, ChemRL (customizable). |

| Property Prediction Models | Fast, approximate reward functions for objectives like binding (pIC50), LogP, toxicity, SA Score. | Pre-trained Random Forest/NN models, RDKit descriptors, Chemprop. |

| RL Agent Framework | Implements the core MORL algorithms (scalarized or Pareto). | Ray RLlib, Stable-Baselines3 (custom extensions), TensorForce. |

| Chemical Toolkit | Handles molecular I/O, fingerprinting, graph representations, and validity checks. | RDKit (open-source), Open Babel. |

| Pareto Front Analysis Library | Computes hypervolume, spread, and other multi-objective performance metrics. | PyGMO, Platypus, pymoo. |

| High-Performance Computing (HPC) / GPU Cluster | Accelerates environment simulation (docking) and deep RL training. | Local Slurm cluster, Cloud GPUs (AWS, GCP). |

| Validation Suite (In Silico) | Provides ground-truth evaluation for generated molecules, beyond proxy rewards. | AutoDock Vina (docking), Schrödinger Suite, SwissADME. |

Application Notes

Multi-Objective Reinforcement Learning (MORL) provides a principled framework for navigating trade-offs in molecular design, such as efficacy versus synthesizability or potency versus toxicity. When integrated with modern generative models, it enables the exploration of vast chemical spaces with targeted property optimization. Recent benchmark reports, summarized in Table 1 below, suggest that these hybrid systems can achieve better sample efficiency and Pareto-frontier coverage than single-objective or fixed-weight approaches.

Key Integration Paradigms:

- RNN-based Agents: Utilize recurrent networks (e.g., LSTMs, GRUs) as policy networks for sequential molecular generation (e.g., SMILES strings). MORL guides the sequence generation process towards molecules satisfying multiple criteria.

- Transformer-based Agents: Leverage self-attention mechanisms to model long-range dependencies in molecular representations. MORL objectives can be integrated into the training loss or used to condition the generation process via prompts or adaptive reward shaping.

- GFlowNet-based Samplers: Employ Generative Flow Networks as a probabilistic framework for generating diverse candidates proportional to a multi-objective reward function. This is particularly suited for discovering diverse Pareto-optimal molecules in a single training run.

Quantitative Performance Summary (2023-2024 Benchmarks):

Table 1: Comparative Performance of MORL-Generative Model Hybrids on Molecular Optimization Tasks (GuacaMol, MOSES benchmarks).

| Model Architecture | Avg. Pareto Hypervolume (↑) | Top-100 Novelty (↑) | Sample Efficiency (Molecules to Hit) | Multi-Objective Scalarization Method |

|---|---|---|---|---|

| MORL + RNN (PPO) | 0.72 ± 0.04 | 0.89 ± 0.03 | ~50,000 | Linear (Chebyshev) |

| MORL + Transformer (A2C) | 0.81 ± 0.03 | 0.92 ± 0.02 | ~35,000 | Envelope Q-Learning |

| MORL + GFlowNet | 0.88 ± 0.02 | 0.95 ± 0.01 | ~20,000 | Flow Matching |

| Single-Objective RL (Transformer) | 0.65 (on primary objective) | 0.82 ± 0.05 | ~25,000 (single obj.) | N/A |

Table 2: Typical Multi-Objective Targets for Drug-Like Molecule Generation.

| Objective | Typical Target Range/Value | Evaluation Model | Trade-Off Relationship |

|---|---|---|---|

| Binding Affinity (pIC50) | > 8.0 | Docked Score / QSAR Model | vs. Synthesizability |

| Selectivity (Log Ratio) | > 3.0 | Off-target Panel Prediction | vs. Broad Efficacy |

| Quantitative Estimate of Drug-likeness (QED) | > 0.6 | Rule-based Calculator | vs. Potency |

| Predicted Toxicity Risk | < 0.3 | ADMET Predictor (e.g., ProTox) | vs. Binding Affinity |

Experimental Protocols

Protocol 2.1: Training a MORL-Conditioned Transformer for Molecular Generation

Objective: To train a Transformer-based policy model to generate molecules that optimize a set of distinct property objectives.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Environment Setup: Define the molecular generation environment. The state is the current sequence (SMILES or SELFIES), and an action is the next token to append.

- Reward Vector Calculation: For each fully generated molecule, compute a vector of rewards R = [r₁, r₂, ..., rₙ]. For example:

- r₁: Docking score from AutoDock Vina (normalized).

- r₂: QED score (0-1).

- r₃: Negative of SAscore (synthesizability, inverted).

- Agent Architecture: Use a Transformer decoder as the policy network πθ(a|s). The network's final linear layer outputs logits for the token vocabulary.

- MORL Training Loop (Envelope Q-Learning):

a. Collection: Generate trajectories (sequences) using the current policy πθ.

b. Q-Vector Estimation: For each state-action pair, use a critic network to estimate a vector Q(s,a) ∈ Rⁿ.

c. Scalarization: Compute a scalarized Q-value for a set of weights w sampled from the simplex: Q_scalar = min_{j ∈ {1, ..., n}} (Q_j(s,a) / w_j) [Chebyshev decomposition].

d. Update: Update the policy parameters θ via policy gradient (e.g., A2C) to maximize the expected scalarized return. Update the critic network via TD learning towards the target reward vector + γ * Q(s',a').

e. Persistence: Store generated molecules and their reward vectors, and maintain a buffer of the non-dominated (Pareto-optimal) candidates.

- Conditional Inference: To bias generation towards a specific region of the Pareto front (e.g., high potency), condition the Transformer by initializing the sequence with a prompt token encoding the desired weight vector w.

Protocol 2.2: Multi-Objective Discovery with GFlowNets

Objective: To sample a diverse set of molecules from a distribution where the probability is proportional to a composite multi-objective reward R(x).

Procedure:

- Reward Definition: Define a composite reward function R(x) = ∏_{i=1}^{n} r_i(x)^{λ_i}, where λ_i are tuning parameters controlling the importance of each objective.

- Flow Network Setup: Model the generative process as a flow in a directed acyclic graph (DAG) where states are partial molecules and actions are adding fragments.

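- Loss Function (Trajectory Balance):

a. For a complete trajectory τ = (s₀ → s₁ → ... → s_f = x), compute the loss: L(τ) = [ log ( Z ∏_t P_F(s_{t+1} | s_t; θ) / R(x) ) ]². This form assumes every state has a single parent, so the backward policy P_B equals 1; in a general DAG the denominator also includes ∏_t P_B(s_t | s_{t+1}).

b. Z is a learnable scalar that estimates the global partition function.

c. P_F is the forward policy (generative) network.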

- Training: Sample trajectories from the forward policy P_F. Minimize the trajectory balance loss to make the likelihood of sampling x proportional to R(x).

- Diverse Sampling: Upon convergence, sampling from P_F yields molecules with probability proportional to the multi-objective reward, leading to diverse, high-quality candidates.
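The composite reward and the trajectory balance loss from this protocol can be written out numerically as below. This is a scalar sketch for a single trajectory with hypothetical function names; in practice the log-probabilities come from a neural network and the loss is minimized by gradient descent in a deep learning framework. The `floor` guard and the optional backward-policy term are added assumptions.

```python
import math
from typing import Optional, Sequence


def composite_reward(sub_rewards: Sequence[float],
                     exponents: Sequence[float],
                     floor: float = 1e-8) -> float:
    """R(x) = prod_i r_i(x) ** lambda_i, with sub-rewards floored above 0."""
    return math.prod(max(r, floor) ** lam
                     for r, lam in zip(sub_rewards, exponents))


def trajectory_balance_loss(log_z: float,
                            log_pf_steps: Sequence[float],
                            reward: float,
                            log_pb_steps: Optional[Sequence[float]] = None
                            ) -> float:
    """Squared log-ratio between forward flow and reward-weighted backward flow.

    When `log_pb_steps` is omitted, the backward policy is taken to be 1
    (each state has a single parent), matching the simplified loss above.
    """
    log_pb = sum(log_pb_steps) if log_pb_steps is not None else 0.0
    residual = log_z + sum(log_pf_steps) - math.log(reward) - log_pb
    return residual ** 2


# Example: two sub-rewards with exponents 1 and 2 give 0.5 * 0.8**2 = 0.32.
reward = composite_reward([0.5, 0.8], [1.0, 2.0])
# A trajectory whose forward flow Z * prod(P_F) equals R(x) has ~zero loss.
loss = trajectory_balance_loss(math.log(2.0),
                               [math.log(0.5), math.log(0.32)],
                               reward)
```

Working in log space avoids underflow when trajectories are long, and the positivity floor matters because the product-form reward must be strictly positive for its logarithm to exist.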

Visualizations

MORL-Generative Model Integration Workflow

GFlowNet Training for Multi-Objective Sampling

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools.

| Item Name | Category | Function / Purpose | Example/Provider |

|---|---|---|---|

| MOSES/GuacaMol Dataset | Benchmark Data | Standardized molecular sets for training & benchmarking generative models. | MoleculeNet, TDC |

| RDKit | Cheminformatics Library | Open-source toolkit for molecule manipulation, descriptor calculation (QED, SA), and fingerprint generation. | RDKit.org |

| AutoDock Vina | Molecular Docking | Computes binding affinity (pIC50 proxy) for generated molecules against a target protein structure. | Scripps Research |

| OpenAI Gym / ChemGym | RL Environment | Customizable toolkit for creating molecular generation RL environments with standardized APIs. | OpenAI, IBM |

| PyTorch / TensorFlow | Deep Learning Framework | Libraries for building and training RNN, Transformer, and GFlowNet models. | Facebook, Google |

| ProTox-III / admetSAR | ADMET Prediction | Web servers or local models for predicting toxicity, metabolism, and other pharmacological properties. | Charité, LMMD |

| PyMOL / ChimeraX | Visualization | For analyzing and visualizing docked poses of generated lead molecules. | Schrödinger, UCSF |

| ORCA / Gaussian | Quantum Chemistry | For high-fidelity calculation of electronic properties if required for reward (e.g., solvation energy). | Max Planck, Gaussian Inc. |

This application note details a practical implementation within the broader thesis research on implementing multi-objective reinforcement learning (MORL) for molecular optimization. The core challenge in de novo molecular design is navigating a vast chemical space to identify compounds that simultaneously satisfy multiple, often competing, property objectives. This case study demonstrates a workflow for optimizing small molecules for high binding affinity to a target protein (e.g., kinase DRK1) while minimizing predicted toxicity endpoints, specifically hERG channel inhibition and mutagenicity (Ames test).

The following tables summarize quantitative benchmarks and results from the MORL agent's performance.

Table 1: Multi-Objective Reward Function Components

| Objective | Proxy Model/Scoring Function | Weight (λ) | Goal | Source/Validation |

|---|---|---|---|---|

| Binding Affinity | Docking Score (ΔG, kcal/mol) via AutoDock Vina | 0.7 | Minimize | Cross-docked against known crystal structures. |

| hERG Inhibition | Predicted pIC50 from dedicated QSAR model (ADMETlab 2.0) | 0.15 | Minimize (lower pIC50 means weaker inhibition) | Model AUC: 0.87 on external test set. |

| Ames Mutagenicity | Predicted probability from SAscore-corrected classifier | 0.15 | Minimize probability | Model BA: 0.81 on external test set. |

| Synthetic Accessibility | SAscore (1-easy, 10-hard) | Penalty term | Keep < 4.5 | RDKit implementation. |

Table 2: Optimization Run Results (Iteration 250)

| Metric | Initial Population (Avg.) | MORL-Optimized Set (Avg.) | Best Candidate (MORL-107) | Improvement (Best Candidate vs. Initial Avg.) |

|---|---|---|---|---|

| Docking Score (ΔG) | -8.2 kcal/mol | -10.5 kcal/mol | -11.7 kcal/mol | 42.7% |

| Predicted hERG pIC50 | 5.1 | 4.3 | 4.0 | Lower inhibition |

| Ames Probability | 0.35 | 0.12 | 0.08 | 77.1% reduction |

| SA Score | 3.8 | 4.1 | 3.9 | Controlled |

| QED | 0.45 | 0.62 | 0.71 | 57.8% |
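
The percentages in the Improvement column appear to compare the best candidate with the initial population average. The short check below recomputes them from the values in Table 2; the helper name `relative_improvement` is introduced here only for illustration.

```python
def relative_improvement(initial: float, best: float) -> float:
    """Percent change of `best` relative to `initial`, by magnitude."""
    return abs(best - initial) / abs(initial) * 100.0


# (initial population average, best candidate), copied from Table 2.
table2 = {
    "Docking Score (kcal/mol)": (-8.2, -11.7),
    "Ames Probability": (0.35, 0.08),
    "QED": (0.45, 0.71),
}

for metric, (initial, best) in table2.items():
    print(f"{metric}: {relative_improvement(initial, best):.1f}%")
# Docking Score (kcal/mol): 42.7%
# Ames Probability: 77.1%
# QED: 57.8%
```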

Experimental Protocols

Protocol 1: MORL Agent Training and Molecular Generation

- Objective: To train a MORL agent for iterative molecular generation guided by a multi-component reward.

- Materials: Python 3.9+, PyTorch, OpenAI Gym, RDKit, ChEMBL dataset pre-processed SMILES.

- Procedure:

- Environment Setup: Define the chemical space as a SMILES-based string environment. The agent's actions are appending valid characters to the string.

- Reward Calculation: For each fully generated molecule, compute the weighted-sum reward: R_total = λ1 * Norm(Docking Score) + λ2 * Norm(hERG pIC50) + λ3 * Norm(1 - Ames Prob) - Penalty(SA Score > 4.5). Each Norm(·) term is oriented so that a higher value is better (more negative docking score, weaker hERG inhibition).

- Agent Architecture: Implement a Proximal Policy Optimization (PPO) actor-critic network with a GRU-based policy network to handle sequential SMILES generation.

- Training: Run for 250 episodes. Each episode, the agent generates a batch of 512 molecules. Rewards are calculated, and the policy is updated to maximize cumulative expected reward.

- Sampling: Save the top 5% of molecules from the final generation batch for in silico validation.
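
The reward calculation step in this protocol can be sketched as follows. This is a minimal illustration, not the study's implementation: the weights come from Table 1, but the normalization ranges (`DOCK_RANGE`, `HERG_RANGE`) and the penalty size (`SA_PENALTY`) are assumptions chosen for the example, and the `Scores` container is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Scores:
    docking: float     # Vina score in kcal/mol (more negative is better)
    herg_pic50: float  # predicted hERG pIC50 (lower means weaker inhibition)
    ames_prob: float   # predicted Ames mutagenicity probability in [0, 1]
    sa_score: float    # SAscore, 1 (easy) to 10 (hard)


def norm(value: float, worst: float, best: float) -> float:
    """Linearly rescale so `worst` maps to 0 and `best` to 1, clipped to [0, 1]."""
    scaled = (value - worst) / (best - worst)
    return min(1.0, max(0.0, scaled))


WEIGHTS = (0.7, 0.15, 0.15)  # lambda1..lambda3 from Table 1
DOCK_RANGE = (-4.0, -12.0)   # (worst, best): assumed range, not from the source
HERG_RANGE = (7.0, 4.0)      # (worst, best): assumed range, not from the source
SA_LIMIT = 4.5               # threshold from Table 1
SA_PENALTY = 0.5             # assumed penalty magnitude


def total_reward(s: Scores) -> float:
    """Weighted sum of normalized objectives minus the SA penalty."""
    l1, l2, l3 = WEIGHTS
    reward = (
        l1 * norm(s.docking, *DOCK_RANGE)
        + l2 * norm(s.herg_pic50, *HERG_RANGE)
        + l3 * (1.0 - s.ames_prob)  # already in [0, 1]
    )
    if s.sa_score > SA_LIMIT:
        reward -= SA_PENALTY
    return reward
```

Passing the (worst, best) pair to `norm` lets objectives that are minimized, such as the docking score, share one function with objectives that are maximized.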

Protocol 2: In Silico Validation of Optimized Candidates

- Objective: To rigorously evaluate the binding affinity and toxicity predictions for the MORL-generated candidates.

- Materials: MORL output molecules (SDF format), AutoDock Vina 1.2.3, target protein PDB file (e.g., 7DHR for DRK1), ADMETlab 2.0 web API or local instance.

- Procedure for Docking:

- Prepare the protein structure: Remove water, add polar hydrogens, assign Kollman charges.

- Prepare ligands: Convert SMILES to 3D, minimize energy using MMFF94, output as PDBQT.

- Define a docking grid centered on the native ligand's binding site with dimensions 25x25x25 Å.

- Run Vina with an exhaustiveness of 32. Record the best binding pose and its affinity (kcal/mol).

- Procedure for Toxicity Prediction:

- Format the candidate SMILES list.

- Submit batch prediction to the ADMETlab 2.0 Prediction module for endpoints hERG and Ames.

- For critical hits, run consensus prediction using an additional model (e.g., ProTox-II) to assess prediction robustness.
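
For the docking steps of this protocol, the grid and exhaustiveness settings can be captured in a Vina configuration file. The sketch below only builds the file text; the file names and grid center are placeholders, and the helper `vina_config` is hypothetical. The keys (`receptor`, `ligand`, `center_x`, `size_x`, `exhaustiveness`, `out`) are standard AutoDock Vina options.

```python
def vina_config(receptor, ligand, center, out, size=25.0, exhaustiveness=32):
    """Return the text of an AutoDock Vina config file for one ligand.

    `center` is the (x, y, z) of the native ligand's binding site in Angstrom.
    """
    cx, cy, cz = center
    lines = [
        f"receptor = {receptor}",
        f"ligand = {ligand}",
        f"center_x = {cx}",
        f"center_y = {cy}",
        f"center_z = {cz}",
        f"size_x = {size}",
        f"size_y = {size}",
        f"size_z = {size}",
        f"exhaustiveness = {exhaustiveness}",
        f"out = {out}",
    ]
    return "\n".join(lines) + "\n"


# Placeholder paths and coordinates, for illustration only.
config_text = vina_config(
    receptor="target.pdbqt",
    ligand="candidate.pdbqt",
    center=(10.0, 12.5, -3.0),
    out="candidate_docked.pdbqt",
)
print(config_text)
```

Saved to a file, the text can be passed to Vina with `vina --config <file>`.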

Visualizations

[Figure: MORL Molecular Optimization Workflow]

[Figure: Multi-Objective Reward Calculation Diagram]

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Software

| Item | Category | Function in This Study | Source/Example |

|---|---|---|---|

| AutoDock Vina | Molecular Docking | Provides rapid, scalable prediction of protein-ligand binding affinity (primary objective score). | Open Source (Scripps) |

| ADMETlab 2.0 | ADMET Prediction Platform | Offers pre-trained, robust QSAR models for critical toxicity endpoints (hERG, Ames). | Computational Platform |

| RDKit | Cheminformatics | Core library for SMILES handling, molecular manipulation, fingerprint generation, and SAscore calculation. | Open Source |

| PyTorch | Deep Learning Framework | Enables building and training the custom PPO reinforcement learning agent policy network. | Meta / Open Source |

| ChEMBL Database | Chemical Data | Source of initial bioactive molecules for pre-training and baseline population generation. | EMBL-EBI |

| OpenAI Gym | RL Development | Provides the framework for defining the molecular generation environment and agent interaction loop. | Open Source |

| ProTox-II | Toxicity Prediction | Used for secondary consensus prediction of toxicity to validate primary model results. | Charité University |

| MMFF94 Force Field | Molecular Mechanics | Used for 3D ligand conformation energy minimization prior to docking simulations. | Implemented in RDKit |

Overcoming Key Challenges: Reward Conflicts, Sparsity, and Scalability

Diagnosing and Mitigating Reward Hacking and Objective Trade-Offs

Within molecular optimization research, Multi-Objective Reinforcement Learning (MORL) aims to balance competing goals such as binding affinity, synthesizability, and low toxicity. However, learned agents often exploit flaws in the reward function (reward hacking) or fail to find satisfactory trade-offs between objectives. These phenomena critically undermine the validity and utility of generated molecular candidates, necessitating robust diagnostic and mitigation protocols.

Quantitative Landscape of Common Issues

The following table summarizes key failure modes, their indicators, and frequency as reported in recent literature.

Table 1: Prevalence and Indicators of MORL Failure Modes in Molecular Optimization

| Failure Mode | Primary Indicator (Quantitative) | Typical Prevalence in Unmitigated Runs | Impact Score (1-10) |

|---|---|---|---|

| Reward Hacking | >90% of top-scoring candidates violate a known, unpenalized chemical constraint (e.g., PAINS filters). | 30-50% | 9 |

| Objective Trade-Off Collapse | Pareto Front hypervolume decreases by >40% during late-stage training. | 20-35% | 8 |

| Metric Gaming | Optimized proxy metric (e.g., QED) improves by >30%, while true objective (experimental validation) shows no correlation (R² < 0.1). | 25-40% | 9 |

| Distributional Shift | Training distribution KL divergence between early and late epochs > 5.0. | 15-30% | 7 |
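
The distributional-shift indicator in the last row can be estimated from samples. The text does not say which distribution is compared, so the sketch below assumes a categorical feature of the generated molecules (for example scaffold identifiers or SMILES tokens) and uses additive smoothing to avoid division by zero. The function name and the smoothing constant are illustrative choices.

```python
import math
from collections import Counter


def kl_divergence(p_samples, q_samples, smoothing=1e-6):
    """KL(P || Q) in nats between two empirical categorical distributions."""
    support = set(p_samples) | set(q_samples)
    p_counts, q_counts = Counter(p_samples), Counter(q_samples)
    p_total = len(p_samples) + smoothing * len(support)
    q_total = len(q_samples) + smoothing * len(support)
    kl = 0.0
    for key in support:
        p = (p_counts[key] + smoothing) / p_total
        q = (q_counts[key] + smoothing) / q_total
        kl += p * math.log(p / q)
    return kl


# Toy example: late-epoch samples concentrate on a single scaffold label.
late = ["scaffold_A"] * 95 + ["scaffold_B"] * 5
early = ["scaffold_A"] * 30 + ["scaffold_B"] * 40 + ["scaffold_C"] * 30
print(f"KL(late || early) = {kl_divergence(late, early):.3f}")
```

The result depends strongly on the chosen feature and on the smoothing constant, so a fixed threshold such as 5.0 is only meaningful once both are held constant across runs.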

Diagnostic Protocols

Protocol for Detecting Reward Hacking

- Objective: Systematically identify if an agent is exploiting loopholes in the reward function.

- Materials: Trained MORL agent, validation set of molecules with known ground-truth properties, cheminformatics toolkit (e.g., RDKit), defined constraint set.

- Procedure:

- Generate Candidate Pool: Use the trained policy to sample 10,000 molecules.

- Compute Reward Components: For each molecule, compute all individual reward signals (R1, R2... Rn) used during training.

- Audit for Constraint Violations: Screen the top 100 molecules by total reward against a set of unrewarded fundamental constraints (e.g., synthetic accessibility score > threshold, presence of toxicophores).

- Statistical Test: Calculate the percentage of top candidates violating one or more constraints. A rate exceeding 5% (benchmark) indicates significant reward hacking.

- Root-Cause Analysis: Correlate high total reward with specific, easily maximized but undesirable substructures visualized from the violating set.
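
The audit and statistical-test steps above can be sketched as follows. Molecules are represented here as dictionaries of precomputed descriptors, and the two constraint checks are hypothetical stand-ins for real filters (such as a PAINS or toxicophore screen run with a cheminformatics toolkit).

```python
def hacking_rate(candidates, constraints, top_k=100):
    """Fraction of the top_k candidates (by total reward) violating any constraint.

    candidates: list of (molecule, total_reward) pairs.
    constraints: callables that return True when a molecule VIOLATES the constraint.
    """
    ranked = sorted(candidates, key=lambda pair: pair[1], reverse=True)[:top_k]
    if not ranked:
        return 0.0
    violating = sum(
        1 for molecule, _ in ranked
        if any(violates(molecule) for violates in constraints)
    )
    return violating / len(ranked)


# Hypothetical constraint checks on precomputed descriptors.
def too_hard_to_make(molecule, sa_limit=4.5):
    return molecule["sa_score"] > sa_limit


def has_toxicophore(molecule):
    return molecule["toxicophore"]


# Toy pool: the ten highest-reward molecules have a poor SA score.
pool = [
    ({"sa_score": 6.0 if i >= 190 else 3.0, "toxicophore": False}, float(i))
    for i in range(200)
]
rate = hacking_rate(pool, [too_hard_to_make, has_toxicophore])
print(f"hacking rate: {rate:.0%}, flagged: {rate > 0.05}")
# hacking rate: 10%, flagged: True
```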

Protocol for Assessing Objective Trade-Offs

- Objective: Quantify the collapse or degradation of the Pareto Front.

- Materials: MORL agent checkpoints across training, high-fidelity simulator or oracle for objective evaluation.

- Procedure:

- Pareto Front Sampling: At each checkpoint (e.g., every 10k training steps), use the agent to generate 5,000 molecules. Evaluate each molecule on all true objectives (e.g., using a more expensive but accurate simulator).

- Hypervolume Calculation: For each checkpoint, compute the hypervolume of the non-dominated set against a defined reference point (e.g., worst acceptable value for each objective).

- Trend Analysis: Plot hypervolume vs. training step. A significant peak followed by a decline (>20%) indicates trade-off collapse, where the agent over-optimizes one objective at the expense of others.

- Visual Inspection: Generate 2D/3D scatter plots of the Pareto Front at peak and final checkpoints to visualize the loss of diversity.
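
The hypervolume and trend-analysis steps can be illustrated for the two-objective case. The sketch assumes both objectives are oriented so that larger is better and that the reference point is the worst acceptable value of each. For three or more objectives a dedicated library would be needed instead of this sweep.

```python
def pareto_front(points):
    """Non-dominated subset for two objectives that are both maximized."""
    front = []
    for p in points:
        dominated = any(
            q[0] >= p[0] and q[1] >= p[1] and q != p for q in points
        )
        if not dominated:
            front.append(p)
    return sorted(set(front))


def hypervolume_2d(points, reference):
    """Area dominated by the front and bounded below by `reference`."""
    rx, ry = reference
    front = [p for p in pareto_front(points) if p[0] > rx and p[1] > ry]
    # Sweep from largest x to smallest; y increases along a non-dominated front.
    front.sort(key=lambda p: p[0], reverse=True)
    area, prev_y = 0.0, ry
    for x, y in front:
        area += (x - rx) * (y - prev_y)
        prev_y = y
    return area


def collapse_detected(hypervolumes, max_decline=0.20):
    """True if the final hypervolume fell more than max_decline below its peak."""
    peak = max(hypervolumes)
    return peak > 0 and (peak - hypervolumes[-1]) / peak > max_decline


checkpoint = [(3.0, 1.0), (2.0, 2.0), (1.0, 3.0), (1.0, 1.0)]
print(hypervolume_2d(checkpoint, reference=(0.0, 0.0)))  # 6.0
print(collapse_detected([1.0, 5.0, 6.0, 4.0]))           # True
```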

Mitigation Strategies & Experimental Implementation

Constraint-Aware Reward Shaping

Principle: Integrate potential constraint violations directly into the reward function as penalty terms. Implementation Protocol:

- Define Penalty Function: For each constraint i, define a continuous penalty term Pᵢ(m) ∈ [0, 1], where 1 indicates severe violation.

- Formulate Shaped Reward: R_shaped(m) = R_original(m) - λ * Σᵢ wᵢ * Pᵢ(m)

- Calibrate Weights (λ, wᵢ): Perform a grid search over a small validation set. Choose weights that reduce the hacking rate (measured with the Protocol for Detecting Reward Hacking) to <5% while maintaining >80% of the original hypervolume for core objectives.

- Re-train: Re-train the MORL agent using R_shaped.
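
A minimal sketch of the shaped reward and the calibration search follows. The `evaluate` callable is a hypothetical hook that the user would supply: it is assumed to train or evaluate an agent with the given λ and wᵢ and return the resulting hacking rate and the fraction of the original hypervolume retained.

```python
from itertools import product


def shaped_reward(r_original, penalties, weights, lam):
    """R_shaped = R_original - lam * sum_i w_i * P_i, with each P_i in [0, 1]."""
    if len(penalties) != len(weights):
        raise ValueError("one weight is required per penalty term")
    return r_original - lam * sum(w * p for w, p in zip(weights, penalties))


def calibrate(evaluate, lam_grid, weight_grid, n_constraints,
              max_hacking=0.05, min_hv_ratio=0.80):
    """Grid search over (lam, w_1..w_n).

    Returns (hv_ratio, lam, weights) for the feasible setting that keeps the
    most hypervolume, or None if no setting meets both thresholds.
    """
    feasible = []
    for lam in lam_grid:
        for weights in product(weight_grid, repeat=n_constraints):
            rate, hv_ratio = evaluate(lam, weights)
            if rate < max_hacking and hv_ratio > min_hv_ratio:
                feasible.append((hv_ratio, lam, weights))
    return max(feasible) if feasible else None


# One molecule with two penalty terms (e.g., a PAINS alert and a poor SA score).
print(shaped_reward(1.0, penalties=[0.5, 1.0], weights=[1.0, 2.0], lam=0.1))
```

Selecting the feasible setting with the largest retained hypervolume is one possible tie-break; the protocol itself only states the two thresholds.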

Pareto-Efficient Curriculum Learning

Principle: Structure training to progressively expand the objective space, preventing early collapse. Implementation Protocol:

- Curriculum Design: Start training with a weighted sum of 1-2 primary objectives (e.g., affinity, logP).

- Phase Introduction: After performance plateaus, introduce a secondary objective (e.g., synthesizability) as an additional term, initially with a low weight.

- Dynamic Weight Adjustment: Every N steps, compute the per-objective gradient norm. If the ratio between the largest and smallest norm exceeds a threshold (e.g., 10), adjust weights to favor the neglected objective(s).

- Final Phase: For the last 20% of training, switch to a true multi-objective algorithm (e.g., MO-PPO) using the full set of objectives to refine the Pareto Front.
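
The dynamic weight adjustment step can be sketched as below. The text does not define which objective counts as neglected or how large the adjustment should be, so this example assumes the neglected objective is the one with the smallest gradient norm and raises its weight by a fixed fraction (`step`) before renormalizing. Both choices are illustrative.

```python
def rebalance_weights(weights, grad_norms, ratio_threshold=10.0, step=0.1):
    """Return new objective weights that sum to 1.

    If the largest per-objective gradient norm exceeds the smallest by more
    than `ratio_threshold`, the weight of the smallest-norm objective is
    increased by `step` (relative) and all weights are renormalized.
    """
    largest, smallest = max(grad_norms), min(grad_norms)
    imbalanced = largest > 0 and (
        smallest == 0 or largest / smallest > ratio_threshold
    )
    if not imbalanced:
        return list(weights)
    neglected = grad_norms.index(smallest)
    adjusted = [
        w * (1.0 + step) if i == neglected else w
        for i, w in enumerate(weights)
    ]
    total = sum(adjusted)
    return [w / total for w in adjusted]


# Affinity dominates the update; synthesizability is being neglected.
print(rebalance_weights([0.5, 0.5], grad_norms=[20.0, 1.0]))
```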

Diagram 1: Pareto curriculum training workflow.

Multi-Fidelity Validation Loop

Principle: Use a hierarchy of evaluation models to prevent gaming of low-fidelity proxies. Implementation Protocol:

- Tiered Evaluation Setup:

- Tier 1 (Fast, Low-Fidelity): ML-based property predictor (used for frequent reward during training).

- Tier 2 (Slow, High-Fidelity): Molecular dynamics simulation or docking score.

- Tier 3 (Expert): Medicinal chemist evaluation (synthesizability score).

- Validation Schedule: Every 50k training steps, evaluate the current batch of top candidates using Tier 2 and Tier 3 oracles.

- Reward Calibration: Compute the correlation (R²) between Tier 1 predicted scores and Tier 2/3 scores. If R² < 0.7, pause training and fine-tune the Tier 1 predictor on data points from Tiers 2/3.

- Policy Update: Incorporate a penalty based on the discrepancy between Tier 1 and Tier 2 scores for the last validation batch into the reward function before resuming training.
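
The reward calibration and policy update steps can be illustrated with a small example. It assumes R² means the squared Pearson correlation and that Tier 1 and Tier 2 scores are on a comparable scale. The form of the discrepancy penalty (a scaled mean absolute gap) is an assumption, since the text does not specify one.

```python
def r_squared(predicted, reference):
    """Squared Pearson correlation between two equal-length score lists."""
    n = len(predicted)
    mean_p = sum(predicted) / n
    mean_r = sum(reference) / n
    cov = sum((p - mean_p) * (r - mean_r) for p, r in zip(predicted, reference))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_r = sum((r - mean_r) ** 2 for r in reference)
    if var_p == 0 or var_r == 0:
        return 0.0  # correlation is undefined for constant scores
    return cov * cov / (var_p * var_r)


def needs_recalibration(tier1, tier2, threshold=0.7):
    """True when the Tier 1 proxy no longer tracks the Tier 2 oracle."""
    return r_squared(tier1, tier2) < threshold


def discrepancy_penalty(tier1, tier2, scale=1.0):
    """Scaled mean absolute gap between Tier 1 and Tier 2 scores."""
    gaps = [abs(a - b) for a, b in zip(tier1, tier2)]
    return scale * sum(gaps) / len(gaps)


tier1_scores = [1.0, 2.0, 3.0, 4.0]  # fast ML predictor
tier2_scores = [2.0, 1.0, 4.0, 3.0]  # slower, higher-fidelity oracle
print(f"{r_squared(tier1_scores, tier2_scores):.2f}")   # 0.36
print(needs_recalibration(tier1_scores, tier2_scores))  # True
print(discrepancy_penalty(tier1_scores, tier2_scores))  # 1.0
```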

Diagram 2: Multi-fidelity validation loop for reward calibration.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for MORL in Molecular Optimization

| Item Name | Function/Benefit | Example Vendor/Implementation |

|---|---|---|

| GuacaMol Benchmark Suite | Provides standardized tasks and baselines for benchmarking molecular generation models, including multi-objective tasks. | BenevolentAI |

| DeepChem Library | Offers pre-built layers for graph neural networks and integration with RL frameworks (RLlib, Stable-Baselines3) for custom agent development. | DeepChem |

| Oracle Ensemble (e.g., TDC) | Access to a suite of predictive oracles for key drug properties (toxicity, solubility) to construct diverse reward signals. | Therapeutics Data Commons |

| RDKit Cheminformatics Toolkit | Fundamental for molecular representation (SMILES, fingerprints), substructure analysis, and calculating constraint penalties (e.g., PAINS filters). | Open Source |

| PARETO Python Library | Specialized for multi-objective optimization analysis, enabling efficient hypervolume calculation and Pareto Front visualization. | Open Source |

| High-Performance Computing (HPC) Cluster with GPU Nodes | Essential for training large-scale RL models and running high-fidelity simulations (e.g., molecular docking) for validation. | Local Institutional / Cloud (AWS, GCP) |

Strategies for Sparse and Delayed Reward Signals in Molecular Exploration

Within the broader thesis on implementing multi-objective reinforcement learning (MORL) for molecular optimization, a central challenge is the nature of the reward signal. In molecular exploration, which encompasses drug discovery, material design, and chemical synthesis planning, the RL agent often operates in an environment with sparse rewards (granted only upon finding a valid or optimal molecule) and delayed rewards (final properties require expensive, time-consuming in silico or wet-lab evaluation). This document outlines application notes and protocols to address these challenges, enabling efficient navigation of vast chemical spaces.

Core Strategies: Theory & Application Notes