Molecular Representation in AI: A Comprehensive Guide for Drug Discovery and Biomedical Research

This article provides a comprehensive overview of molecular representation methods for AI models, tailored for researchers, scientists, and drug development professionals.

Abstract

This article provides a comprehensive overview of molecular representation methods for AI models, tailored for researchers, scientists, and drug development professionals. It explores the foundational concepts, including why molecules are better treated as graphs than as strings and how representations evolved from SMILES to graphs, and then covers modern methodological approaches such as graph neural networks (GNNs), 3D conformers, and self-supervised learning. The guide addresses common pitfalls in data quality, model generalization, and computational cost, and offers comparative analyses of representation techniques across key tasks like property prediction and virtual screening. Finally, it synthesizes validation best practices and outlines future directions for biomedical innovation.

Beyond Strings: Why Molecules Are Graphs and the Evolution of AI Representations

The foundational thesis of modern AI-driven molecular science posits that the accurate and information-rich digital representation of a compound's structure is the primary determinant of model performance in downstream tasks. This guide addresses the core technical challenge of that thesis: transforming the multidimensional reality of a molecule—its atoms, bonds, conformations, and electronic properties—into a structured, machine-readable format suitable for computational analysis and model training.

Core Representation Modalities: Quantitative Comparison

The field utilizes several complementary schemas for molecular representation, each with distinct advantages and computational trade-offs.

Table 1: Core Molecular Representation Modalities

| Representation Format | Data Structure | Key Features | Common Use Cases | Dimensionality | Typical File Size (Avg. Small Molecule) |
|---|---|---|---|---|---|
| SMILES | Linear String | Human-readable, compact, lossless 2D representation. | High-throughput screening, database indexing, QSAR. | 1D | ~20-100 bytes |
| InChI/InChIKey | Layered String | Standardized, unique, non-proprietary identifier. | Database deduplication, web search, unambiguous reference. | 1D | 27 bytes (Key) |
| Molecular Graph | Graph (G=(V,E)) | Natural representation of atoms (nodes) and bonds (edges). | Graph Neural Networks (GNNs), property prediction. | 2D (topology) | Variable (tensor) |
| Molecular Fingerprint | Bit Vector (e.g., 1024-bit) | Hashed structural features, fixed length, efficient similarity search. | Virtual screening, similarity-based retrieval, clustering. | 1D (binary) | 128-512 bytes (1024-4096 bits) |
| 3D Coordinate File (e.g., SDF, PDB) | List of Cartesian coordinates + connectivity | Explicit 3D conformation, essential for stereochemistry and docking. | Molecular dynamics, docking simulations, conformational analysis. | 3D | 5-100 KB |
| Quantum Mechanical Descriptors | Tensor/Vector | Electronic properties (e.g., partial charges, orbital energies). | Quantum chemistry, reactivity prediction, high-accuracy modeling. | High-D | >100 KB |

Detailed Methodologies for Key Translation Experiments

Protocol: Generating a Standardized 3D Conformer Set from SMILES

This protocol is critical for creating consistent 3D data for model training.

- Input Sanitization: Validate and canonicalize the input SMILES string using a toolkit like RDKit.

- 2D to 3D Conversion: Use `rdkit.Chem.rdmolfiles.MolFromSmiles()` followed by `rdkit.Chem.rdmolops.AddHs()` and `rdkit.Chem.rdDistGeom.EmbedMolecule()` to generate an initial 3D conformation.

- Force Field Optimization: Refine the initial geometry using a molecular mechanics force field (e.g., MMFF94 or UFF). Execute minimization until a convergence threshold (e.g., gradient norm < 0.01 kcal/mol/Å) is reached.

- Conformer Ensemble Generation: Use a distance geometry or torsion drive method (e.g., the ETKDG algorithm in RDKit) to generate a diverse set of conformers (e.g., 50 per molecule).

- Ensemble Optimization and Ranking: Optimize each conformer with the force field and rank them by energy. Select the lowest-energy conformer as the representative, or retain a diverse subset for conformationally sensitive tasks.

- Output: Write the final 3D structure(s) to a standardized file format (SDF, PDBQT).

Protocol: Constructing a Labeled Molecular Graph for a GNN

This protocol details the featurization process for atoms (nodes) and bonds (edges).

Graph Construction:

- Parse the molecule from a SMILES or SDF file.

- Define atoms as graph nodes (V). Define bonds as graph edges (E).

- For undirected graphs, represent each bond as two directed edges.

Node (Atom) Featurization:

- Extract a feature vector for each atom v_i. Common features include:

  - Atomic number (one-hot encoded)

  - Degree of connectivity

  - Hybridization (sp, sp2, sp3)

  - Formal charge

  - Number of attached hydrogens

  - Membership in a ring

  - Aromaticity

Edge (Bond) Featurization:

- Extract a feature vector for each bond e_ij. Common features include:

  - Bond type (single, double, triple, aromatic) (one-hot)

  - Conjugation

  - Stereochemistry (cis/trans)

  - Presence in a ring

Global Context (Optional): Append a master node connected to all atoms or compute molecular-level descriptors as an additional feature vector.

Output Format: The graph is represented as a tuple of feature tensors:

(V {n_atoms x n_node_features}, E {n_edges x n_edge_features}, Adjacency {n_atoms x n_atoms}).
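The output tuple can be sketched without any cheminformatics dependency. The example below hand-encodes the heavy-atom graph of ethanol; in a real pipeline the atom and bond records would come from a parser such as RDKit. The feature subset, the element vocabulary, and the helper names (`one_hot`, `build_graph`) are illustrative assumptions, not a prescribed featurization.

```python
# Hand-specified heavy-atom graph for ethanol (CH3-CH2-OH).
# Assumption: in practice these records come from a parsed SMILES/SDF file.
ATOMS = [  # (element symbol, attached hydrogens, is aromatic)
    ("C", 3, False),
    ("C", 2, False),
    ("O", 1, False),
]
BONDS = [(0, 1, "single"), (1, 2, "single")]  # (atom i, atom j, bond type)

ELEMENTS = ["C", "N", "O"]  # illustrative vocabulary only
BOND_TYPES = ["single", "double", "triple", "aromatic"]


def one_hot(value, vocabulary):
    """Return a one-hot list marking the position of value in vocabulary."""
    return [1.0 if value == item else 0.0 for item in vocabulary]


def build_graph(atoms, bonds):
    """Return (node_features, edge_index, edge_features, adjacency)."""
    n = len(atoms)
    degree = [0] * n
    for i, j, _ in bonds:
        degree[i] += 1
        degree[j] += 1

    # Node features: element one-hot, degree, hydrogen count, aromaticity flag.
    node_features = [
        one_hot(symbol, ELEMENTS) + [float(degree[k]), float(n_h), float(aromatic)]
        for k, (symbol, n_h, aromatic) in enumerate(atoms)
    ]

    edge_index, edge_features = [], []
    adjacency = [[0] * n for _ in range(n)]
    for i, j, bond_type in bonds:
        # Each undirected bond becomes two directed edges.
        for src, dst in ((i, j), (j, i)):
            edge_index.append((src, dst))
            edge_features.append(one_hot(bond_type, BOND_TYPES))
            adjacency[src][dst] = 1
    return node_features, edge_index, edge_features, adjacency


V, edge_index, E, A = build_graph(ATOMS, BONDS)
print(len(V), "atoms x", len(V[0]), "node features")
print(len(E), "directed edges x", len(E[0]), "edge features")
```

Storing `edge_index` alongside the dense adjacency matrix is redundant for small molecules, but the sparse edge list is the form most GNN libraries consume.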

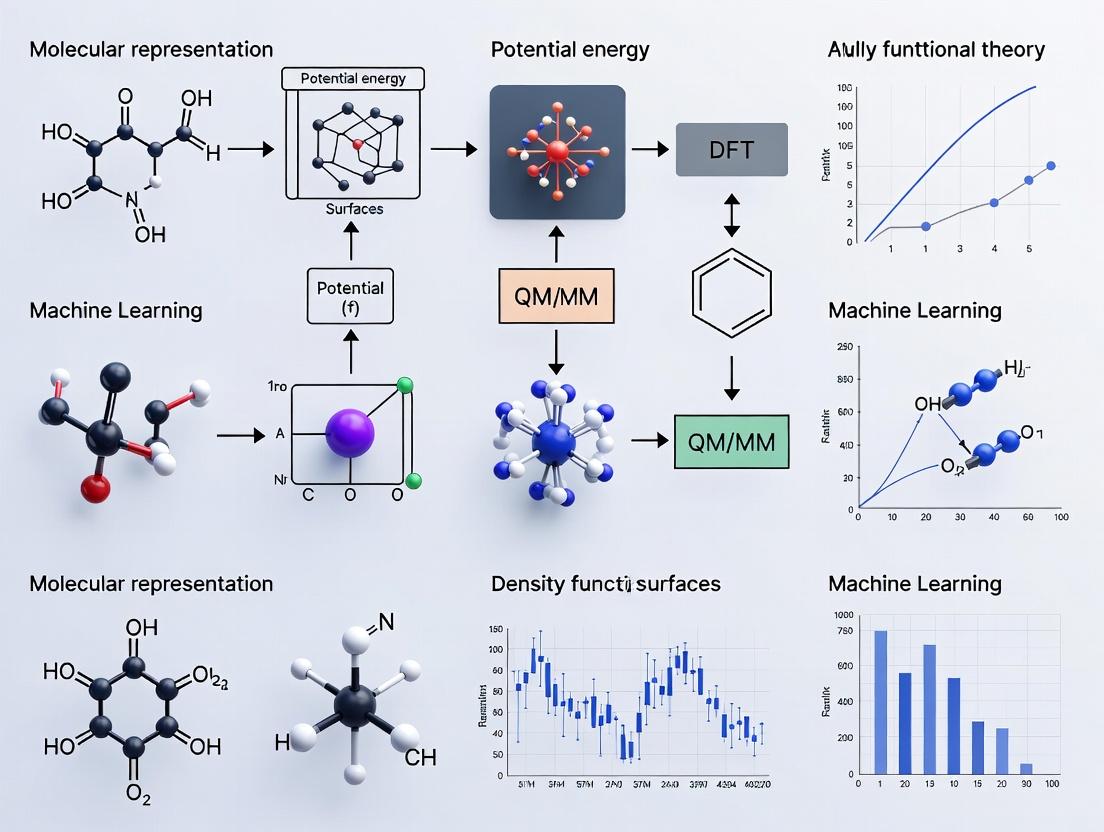

Visualization of Key Workflows

Diagram: Molecular Representation Translation Pipeline

Diagram: Molecular Graph Featurization Process

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Software Tools & Libraries for Molecular Translation

| Tool/Library | Primary Function | Key Capabilities | Language |

|---|---|---|---|

| RDKit | Core cheminformatics | SMILES I/O, 2D/3D operations, fingerprint generation, graph construction, molecular descriptors. | Python, C++ |

| Open Babel | Chemical file conversion | Supports >110 formats, command-line and API access, batch processing, energy minimization. | C++, Python bindings |

| PyTorch Geometric (PyG) / DGL-LifeSci | Deep learning for graphs | Specialized layers for GNNs on molecules, batch handling, dataset utilities. | Python |

| MoleculeNet | Benchmark datasets | Curated datasets (e.g., QM9, Tox21) with standardized splits for model evaluation. | Python |

| CONFLEX or OMEGA | Advanced conformer generation | High-quality, rule-based 3D conformer ensemble generation for drug-like molecules. | Commercial |

| Psi4 or Gaussian | Quantum chemical calculations | Generate high-fidelity electronic structure descriptors (orbitals, charges, energies). | C++ / Fortran |

| MDTraj or MDAnalysis | Molecular dynamics trajectory analysis | Process 3D coordinate time-series data for dynamic feature extraction. | Python |

This paper constitutes a foundational chapter in a broader thesis on the Basics of Molecular Representation in AI Models Research. The evolution from deterministic, rule-based notations to learned, continuous vector embeddings represents the critical enabling paradigm shift for modern computational chemistry, drug discovery, and materials science. Effective representation determines the upper bound of predictive model performance, making its history and technical progression essential knowledge for researchers and practitioners.

The Era of String-Based Representations: SMILES and Beyond

The journey begins with symbolic representations designed for human interpretability and database storage.

SMILES (Simplified Molecular Input Line Entry System)

Developed by David Weininger in the 1980s, SMILES is a line notation using ASCII strings to represent molecular structures via a depth-first traversal of the molecular graph.

Experimental Protocol for SMILES Generation (Canonicalization):

- Input: A molecular structure (e.g., from a 2D drawing or 3D coordinates).

- Hydrogen Suppression: Leave hydrogens implicit wherever they follow standard valence rules.

- Graph Traversal: Apply the Depth-First Search (DFS) algorithm, starting from a chosen atom according to canonical labeling rules (e.g., the Morgan algorithm for atom prioritization).

- Bond & Branch Notation: Write atomic symbols in square brackets for atoms with non-standard valence, formal charge, or isotopic information. Use the symbols `-`, `=`, `#`, and `:` for single, double, triple, and aromatic bonds, respectively (single and aromatic bonds are usually left implicit). Represent branches with parentheses and ring closures with matching digit labels.

- Output: A unique canonical SMILES string, ensuring one representation per molecule.

InChI (International Chemical Identifier)

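InChI is a non-proprietary, layered standard developed by IUPAC and NIST to provide a unique textual identifier for a chemical substance. Its fixed-length hashed form, the 27-character InChIKey, is the variant used for database indexing and web search.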

Key Research Reagent Solutions for String-Based Era

| Reagent / Tool | Function in Molecular Representation |

|---|---|

| Open Babel | An open-source chemical toolbox for converting between file formats and descriptors (e.g., SMILES, InChI, 3D coordinates). |

| RDKit (Cheminformatics Library) | Provides functions for SMILES parsing, canonicalization, fingerprint generation, and molecular substructure searching. |

| CDK (Chemistry Development Kit) | A Java library offering similar functionalities to RDKit for cheminformatics and bioinformatics. |

| CANON Algorithm | The canonicalization algorithm for generating unique SMILES; often implemented via the Morgan atom connectivity index. |

The Quantitative Descriptor & Fingerprint Phase

This phase introduced numerical vectors encoding molecular properties and substructures.

Molecular Descriptors

These are numerical values quantifying physical-chemical properties (e.g., molecular weight, logP, polar surface area) or topological indices (e.g., Wiener index).

Structural Fingerprints

Bit vectors indicating the presence or absence of specific molecular substructures or paths.

- MACCS Keys: A fixed set of 166 structural fragments.

- Circular Fingerprints (ECFP, Morgan): Represent atoms in the context of their circular neighborhoods (radius-based).

Experimental Protocol for Generating ECFP4 Fingerprints (Using RDKit):

- Input: A canonical SMILES string.

- Parsing: Use `rdkit.Chem.rdmolfiles.MolFromSmiles()` to create a molecule object.

- Atom Initialization: Assign each atom an initial integer identifier derived from its local invariant properties (atomic number, degree, etc.). Atoms with identical invariants receive the same identifier.

- Iterative Neighborhood Expansion: For each iteration n from 0 to the specified radius R (e.g., R=2 for ECFP4):

  a. For each atom, generate a string representing the set of identifiers within the radial distance n.

  b. Hash each string to a 32-bit integer.

  c. Collect all hashes from all atoms for this iteration.

- Folding: Combine all hashed identifiers from all iterations and fold into a fixed-length bit vector (e.g., 2048 bits) using modulo operations.

- Output: A binary bit vector representing the molecule's substructural features.
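The iterate-hash-fold pattern of this protocol can be illustrated with a deliberately simplified sketch. It is not RDKit's implementation: the CRC32 hash, the choice of invariants, and the absence of duplicate-substructure removal are all assumptions made to keep the example short, so the resulting bits will not match real ECFP4 output.

```python
import zlib


def hash32(values):
    """Deterministically hash a sequence of ints to a 32-bit integer (CRC32)."""
    return zlib.crc32(repr(tuple(values)).encode("utf-8")) & 0xFFFFFFFF


def circular_fingerprint(atom_invariants, neighbors, radius=2, n_bits=2048):
    """Return the set of 'on' bit positions of a folded circular fingerprint.

    atom_invariants: one tuple of ints per atom (e.g., atomic number, degree).
    neighbors: per-atom list of (neighbor_index, bond_order) pairs.
    """
    # Iteration 0: identifiers come from the atoms' own invariants.
    identifiers = [hash32(inv) for inv in atom_invariants]
    features = set(identifiers)

    for layer in range(1, radius + 1):
        updated = []
        for atom, ident in enumerate(identifiers):
            # Sort neighbor contributions so the result ignores atom ordering.
            env = sorted((order, identifiers[nbr]) for nbr, order in neighbors[atom])
            flat = [layer, ident] + [x for pair in env for x in pair]
            updated.append(hash32(flat))
        identifiers = updated
        features.update(identifiers)

    # Folding: map every 32-bit identifier onto a fixed-length bit vector.
    return {feature % n_bits for feature in features}


# Ethanol heavy atoms; invariants = (atomic number, heavy-atom degree, H count).
ETHANOL_INVARIANTS = [(6, 1, 3), (6, 2, 2), (8, 1, 1)]
ETHANOL_NEIGHBORS = [[(1, 1)], [(0, 1), (2, 1)], [(1, 1)]]

fp = circular_fingerprint(ETHANOL_INVARIANTS, ETHANOL_NEIGHBORS)
print(sorted(fp))
```

Because neighbor contributions are sorted before hashing, the same molecule yields the same bit set regardless of how its atoms are numbered.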

Table 1: Comparison of Key Molecular Fingerprint Methods

| Method | Type | Length | Information Encoded | Key Algorithm/Concept |

|---|---|---|---|---|

| MACCS Keys | Substructure Key | 166 bits | Presence of 166 pre-defined chemical substructures | Structural fragment dictionary |

| ECFP / Morgan FP | Circular | Configurable (e.g., 2048) | Circular atomic neighborhoods up to radius R | Morgan algorithm, hashing, folding |

| Atom Pair FP | Topological | Configurable | Pairs of atoms and the shortest path distance between them | Distance matrix enumeration |

| RDKit Topological Torsion FP | Topological | Configurable | Linear sequences of 4 bonded atoms, each described by its atom type (a 2D descriptor; no 3D torsion angle values are used) | Linear atom path enumeration |

The Deep Learning Revolution: Learned Representations

Deep learning models autonomously learn continuous, task-informed vector representations (embeddings) from data.

Sequence-Based Models (Treating SMILES as Text)

Models like RNNs and Transformers process SMILES strings as sequences of characters/tokens.

Experimental Protocol for SMILES-Based Transformer Pre-training (e.g., ChemBERTa):

- Data Curation: Gather a large corpus of canonical SMILES strings (e.g., from PubChem or ZINC).

- Tokenization: Apply Byte-Pair Encoding (BPE) or WordPiece tokenization to segment SMILES into meaningful subword units (e.g., `"C"`, `"=O"`, `"n1"`).

- Model Architecture: Implement a Transformer encoder stack with multi-head self-attention and feed-forward layers.

- Pre-training Objective: Use Masked Language Modeling (MLM): randomly mask 15% of tokens and train the model to predict them from context.

- Fine-tuning: For a downstream task (e.g., property prediction), replace the output head and train on labeled data, often with a lower learning rate.
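The tokenization and masking steps can be sketched as follows. Note the substitution: instead of BPE or WordPiece, this sketch uses a simple atom-level regular expression, which is an assumption made for brevity. It also masks a fixed 15% of positions rather than sampling each token independently, and it omits the random-replacement variants some MLM recipes use. The names `tokenize` and `mask_tokens` are hypothetical.

```python
import random
import re

# Atom-level SMILES tokens: bracket atoms, two-letter halogens, two-digit ring
# closures, single-letter atoms, ring digits, and bond/branch symbols.
SMILES_TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|[A-Za-z]|\d|[=#\-+\\/:~@.()*$]")


def tokenize(smiles):
    """Split a SMILES string into tokens; fail loudly on unknown characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"could not tokenize SMILES completely: {smiles!r}")
    return tokens


def mask_tokens(tokens, mask_rate=0.15, seed=0):
    """Replace about mask_rate of the tokens with [MASK].

    Returns the masked sequence and a {position: original token} label map,
    which is what the model would be trained to predict.
    """
    rng = random.Random(seed)
    n_mask = max(1, round(mask_rate * len(tokens)))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    masked = list(tokens)
    labels = {}
    for pos in positions:
        labels[pos] = masked[pos]
        masked[pos] = "[MASK]"
    return masked, labels


tokens = tokenize("CC(=O)Oc1ccccc1C(=O)O")  # aspirin
masked, labels = mask_tokens(tokens)
print(" ".join(masked))
print(labels)
```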

Graph-Based Models (Direct Structure Processing)

Graph Neural Networks (GNNs) operate directly on the molecular graph G = (V, E), where nodes V are atoms and edges E are bonds.

Experimental Protocol for a Message-Passing Neural Network (MPNN):

- Graph Construction: Convert SMILES to a graph. Node features: atomic number, hybridization, etc. Edge features: bond type, conjugation.

- Message Passing (Multiple Steps):

  a. Message Function: For each edge, a neural network generates a message m_{vw} from sender node v and edge features.

  b. Aggregation: For each node w, aggregate incoming messages (e.g., sum) to form M_w.

  c. Update Function: A GRU or NN updates node state h_w using M_w and its previous state.

- Readout (Graph Pooling): After k message-passing steps, aggregate all node states into a single graph-level representation using a permutation-invariant function (e.g., sum, mean, or attention-weighted sum).

- Prediction: Pass the graph-level vector through a feed-forward network for property prediction.

Table 2: Performance Comparison of Representation Types on Benchmark Tasks (MoleculeNet)

| Representation Model | Dataset: ESOL (RMSE ↓) | Dataset: BBBP (ROC-AUC ↑) | Dataset: HIV (ROC-AUC ↑) | Key Advantage |

|---|---|---|---|---|

| Classical (ECFP4 + RF) | 0.90 | 0.81 | 0.79 | Interpretability, computational speed |

| SMILES Transformer (ChemBERTa) | 0.58 | 0.85 | 0.82 | Contextual token embeddings, transfer learning |

| Graph Network (MPNN) | 0.53 | 0.90 | 0.84 | Direct 3D capability, structure-awareness |

| Graph Network (Attentive FP) | 0.49 | 0.92 | 0.86 | Attention mechanism for adaptive feature weighting |

Visualization of Evolution and Model Architectures

Diagram Title: Evolution of Molecular Representation Paradigms

Diagram Title: MPNN Workflow for Molecular Property Prediction

The Scientist's Toolkit for Modern Molecular Representation Research

| Essential Tool / Platform | Function & Role in Research |

|---|---|

| RDKit | Core cheminformatics operations: molecule I/O, fingerprint generation, substructure search, 2D/3D coordinate generation. |

| PyTorch Geometric (PyG) / DGL-LifeSci | Specialized libraries for building and training Graph Neural Networks on molecular graphs with standardized datasets and models. |

| Transformers Library (Hugging Face) | Framework for implementing and using Transformer models; adapted for chemistry (e.g., ChemBERTa, MolBERT). |

| MoleculeNet Benchmark | Curated collection of molecular datasets for fair comparison of machine learning models across multiple property prediction tasks. |

| GPU Computing Cluster | Essential for training large deep learning models (Transformers, GNNs) on datasets with hundreds of thousands of molecules. |

| Automated ML Platforms (e.g., DeepChem) | Provides high-level APIs that streamline the process of experimenting with different molecular representations and model architectures. |

The history of molecular representation from SMILES to deep learning embodies a shift from human-designed, sparse, and local descriptors to machine-learned, dense, and holistic embeddings. Within the broader thesis, this evolution underscores a core principle: the representation of a molecule is not a fixed chemical truth but a design choice that fundamentally shapes the capabilities of the AI model. The future lies in geometrically aware representations (3D GNNs), multi-modal models (combining sequences, graphs, and spectra), and self-supervised learning paradigms that leverage vast, unlabeled chemical space to discover representations encoding richer chemical and biological intent.

Within the foundational thesis of molecular representation for AI model research, defining a "good" representation is paramount. Effective representations act as the critical interface between raw chemical data and machine learning algorithms, directly dictating model performance in drug discovery, materials science, and chemistry. This technical guide deconstructs the three core pillars—Invariance, Completeness, and Efficiency—that underpin robust molecular representations for AI.

Invariance

A representation must be invariant to transformations that do not alter the molecule's intrinsic identity or properties. This ensures the model learns fundamental chemistry, not arbitrary input formats.

Key Invariance Requirements:

- Permutation Invariance: The representation must not depend on the arbitrary ordering of atoms or bonds in the input data.

- Rotation/Translation Invariance: For 3D structures, the representation should be unchanged by the molecule's orientation or position in space.

- Symmetry Invariance: The representation must respect the molecule's point group symmetries.

Experimental Protocol for Validating Invariance:

- Dataset Preparation: Curate a set of molecular structures (e.g., from QM9 database). For each molecule, generate multiple "augmented" versions: a) Randomly permute atom indices. b) Apply random 3D rotations and translations. c) Generate tautomers or resonance structures.

- Representation Generation: Compute the candidate representation (e.g., Coulomb matrix, Smooth Overlap of Atomic Positions (SOAP), 3D graph) for both the canonical and all augmented versions.

- Similarity Metric Calculation: For each molecule, compute the pairwise similarity (e.g., cosine similarity, Euclidean distance) between the canonical representation and all its augmented variants.

- Analysis: A perfectly invariant representation will show near-identical similarity scores (cosine similarity ~1.0, Euclidean distance ~0.0). Statistical analysis (mean, variance) across the dataset quantifies the level of invariance.
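A minimal sketch of this validation loop, assuming a toy representation (the sorted list of pairwise interatomic distances) and approximate, illustrative water coordinates. The representation ignores element types and is used only because its invariance is easy to check; the helper names are hypothetical.

```python
import math
import random


def sorted_distances(coords):
    """Toy representation: sorted list of all pairwise interatomic distances."""
    n = len(coords)
    return sorted(
        math.dist(coords[i], coords[j]) for i in range(n) for j in range(i + 1, n)
    )


def rotate_z(coords, angle):
    """Rotate all points about the z-axis by angle (radians)."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in coords]


def translate(coords, shift):
    """Shift all points by the vector shift."""
    return [(x + shift[0], y + shift[1], z + shift[2]) for x, y, z in coords]


def max_abs_diff(a, b):
    """Largest element-wise absolute difference between two equal-length lists."""
    return max(abs(x - y) for x, y in zip(a, b))


# Approximate water geometry in angstroms (illustrative values only).
WATER = [(0.0, 0.0, 0.117), (0.0, 0.757, -0.469), (0.0, -0.757, -0.469)]

# Augment: rotate, translate, then permute the atom order.
augmented = translate(rotate_z(WATER, 1.234), (3.0, -2.0, 0.5))
random.Random(0).shuffle(augmented)

rep_original = sorted_distances(WATER)
rep_augmented = sorted_distances(augmented)
print("max deviation:", max_abs_diff(rep_original, rep_augmented))
```

The deviation should sit at floating-point noise level, whereas the raw coordinate lists differ substantially; repeating this over a dataset gives the mean and variance called for in the analysis step.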

Completeness

The representation must capture all chemically relevant information necessary for the target task. A complete representation uniquely defines the molecular system and allows for the reconstruction of its essential features.

Quantitative Metrics for Completeness:

- Reconstruction Fidelity: Ability to reconstruct atomic coordinates or connectivity from the representation.

- Property Prediction Limit: Theoretical upper bound (e.g., using quantum mechanical calculations as ground truth) on prediction accuracy for a diverse set of molecular properties.

Table 1: Comparison of Representation Completeness

| Representation Type | Typical Dimensionality | Captures 2D Connectivity? | Captures 3D Geometry? | Captures Electronic State? | Known Limitations |

|---|---|---|---|---|---|

| SMILES String | Variable (Sequence) | Yes | No | No | Non-unique, sensitive to syntax. |

| Extended Connectivity Fingerprints (ECFP) | 1024-4096 bits | Yes (Substructures) | No | No | Loss of explicit topology. |

| Coulomb Matrix (Eig.) | Fixed (~30 values) | Implicitly | Yes (for a conformation) | Approximate (via nuclear charge) | Eigenvalue spectrum is permutation-invariant but lossy (distinct structures can share a spectrum); conformation-dependent. |

| Smooth Overlap of Atomic Positions (SOAP) | ~100-5000 descriptors | Implicitly | Yes (Local env.) | No | Describes local, not global, structure. |

| 3D Graph (with Coords) | Variable (Graph) | Yes | Yes (Explicit) | Optional (via node features) | Conformation-dependent. |

| Equivariant Neural Network Features | Variable (Tensor) | Yes | Yes | Yes (if trained on QM data) | Computationally intensive. |

Experimental Protocol for Assessing Completeness (via Reconstruction):

- Target Data: Use a standardized dataset (e.g., GEOM-Drugs) with high-quality 2D and 3D molecular structures.

- Encoding: Generate representations R for all molecules.

- Decoding: Train a separate decoder model (e.g., graph decoder, 3D coordinate generator) to map R back to molecular structure.

- Evaluation: Measure reconstruction accuracy using metrics like:

- Graph Accuracy: Match of reconstructed adjacency matrix vs. original.

- 3D RMSD: Root Mean Square Deviation of atomic positions (for 3D-aware representations).

Efficiency

The representation must be computationally feasible to generate and suitable for model training. This includes the cost of computing the representation itself and the downstream efficiency of the AI model using it.

Table 2: Computational Efficiency of Common Representations

| Representation | Time Complexity (Generation) | Space Complexity (Storage) | Suited for Model Type | Scalability to Large Molecules (>100 atoms) |

|---|---|---|---|---|

| SMILES | O(1) (if pre-stored) | O(n) (string length) | RNN, Transformer | Excellent |

| Molecular Graph (2D) | O(n^2) for full adjacency | O(n^2) | GNN, GCN | Good |

| Coulomb Matrix | O(n^2) | O(n^2) | Dense Neural Network | Poor |

| 3D Graph (with Distances) | O(n^2) (for pairwise dist.) | O(n^2) | Geometric GNN | Moderate |

| SOAP Descriptors | O(n * m^2 * L^3) (m: basis, L: ang. mom.) | O(n * descriptors) | Kernel Methods, DNN | Moderate to Poor |

| Learned Representation (e.g., from GNN) | O(T * (E + V)) (T: GNN layers) | O(E + V) | Task-specific | Good |

Experimental Protocol for Benchmarking Efficiency:

- Hardware Standardization: Perform all experiments on a machine with specified CPU/GPU/RAM.

- Dataset Scaling: Use a dataset (e.g., PubChem) with molecules of varying sizes (10 to 200 heavy atoms). Create subsets binned by atom count.

- Timing/Memory Profiling: For each representation and subset, measure:

- Wall-clock time for batch generation.

- Peak memory usage during representation generation.

- Time per epoch for a standard model (e.g., 3-layer GNN, 2-layer DNN) trained on a fixed task.

- Analysis: Plot time/memory vs. molecule size to establish empirical complexity. Compare trade-offs between accuracy (from a separate validation run) and computational cost.
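The timing and memory profiling step could be set up along these lines. The two generators (`dense_adjacency`, `edge_list`) are toy stand-ins for real representation code operating on a linear chain of atoms, and `profile` is a hypothetical helper. Running `tracemalloc` slows execution, so timing and memory would ideally be measured in separate runs.

```python
import time
import tracemalloc


def dense_adjacency(n_atoms):
    """Toy O(n^2) representation: dense adjacency matrix of a linear chain."""
    adj = [[0] * n_atoms for _ in range(n_atoms)]
    for i in range(n_atoms - 1):
        adj[i][i + 1] = adj[i + 1][i] = 1
    return adj


def edge_list(n_atoms):
    """Toy O(n) representation: directed edge list of the same chain."""
    edges = []
    for i in range(n_atoms - 1):
        edges.append((i, i + 1))
        edges.append((i + 1, i))
    return edges


def profile(generator, sizes, repeats=5):
    """Measure mean wall-clock time and peak traced memory per molecule size."""
    results = []
    for n in sizes:
        tracemalloc.start()
        start = time.perf_counter()
        for _ in range(repeats):
            generator(n)
        elapsed = (time.perf_counter() - start) / repeats
        _, peak = tracemalloc.get_traced_memory()
        tracemalloc.stop()
        results.append({"n_atoms": n, "seconds": elapsed, "peak_bytes": peak})
    return results


SIZES = [10, 50, 200]
dense_results = profile(dense_adjacency, SIZES)
sparse_results = profile(edge_list, SIZES)
for dense, sparse in zip(dense_results, sparse_results):
    print(dense["n_atoms"], dense["peak_bytes"], sparse["peak_bytes"])
```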

Visualization of Core Concepts and Workflows

Diagram Title: The Three Pillars of a Good Molecular Representation

Diagram Title: Invariance Validation Workflow

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Tools for Molecular Representation Research

| Item | Function in Research | Example/Note |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for generating 2D/3D structures, fingerprints (ECFP), and descriptors. | Primary tool for SMILES parsing, graph construction, and feature calculation. |

| PyTorch Geometric (PyG) / DGL | Specialized libraries for Graph Neural Networks (GNNs), enabling easy implementation of graph-based molecular representations. | Essential for building invariant 3D graph models. Includes standard molecular datasets. |

| JAX / Equivariant Libs (e3nn) | Libraries for building machine learning models with built-in symmetry constraints (equivariance). | Critical for developing rotationally equivariant representations for 3D data. |

| Quantum Chemistry Software (Psi4, xtb) | Generate high-fidelity ground-truth data (energies, wavefunctions) for training and evaluating complete representations. | Used to compute target properties and validate representation quality. |

| Standardized Datasets (QM9, GEOM, MoleculeNet) | Curated, benchmark datasets with diverse chemical properties and structures for fair comparison. | Provides the experimental "substrate" for training and evaluation. |

| High-Performance Compute (HPC) Cluster | CPUs/GPUs for generating representations (e.g., SOAP) and training large AI models, especially on 3D data. | Efficiency benchmarks require controlled hardware environments. |

| Visualization Tools (VMD, PyMol, matplotlib) | For inspecting 3D conformations, analyzing model attention, and visualizing representation spaces (via t-SNE/PCA). | Aids in qualitative understanding and debugging. |

This whitepaper addresses a fundamental challenge within the broader thesis on the Basics of Molecular Representation in AI Models Research. The effective application of artificial intelligence in molecular discovery hinges on solving the "Chemical Space Problem": how to computationally represent, navigate, and quantify the relationships between molecules. This document provides an in-depth technical guide to the core methodologies for representing molecular diversity and similarity, which form the foundational layer for predictive AI models in drug development.

Core Concepts and Quantitative Metrics

The chemical space is astronomically large, estimated to contain between 10^60 and 10^100 possible drug-like molecules. Representing this space requires mapping discrete molecular structures into a continuous, feature-rich numerical landscape where meaningful operations can be performed.

Table 1: Key Quantitative Descriptors for Molecular Representation

| Descriptor Category | Specific Examples | Dimensionality | Typical Use Case | Computational Cost |

|---|---|---|---|---|

| 1D: String-Based | SMILES, SELFIES, InChI | Variable (string length) | Database storage, generative model output | Low |

| 2D: Topological | Molecular Fingerprints (ECFP, Morgan), Graph Features | 1024 to 4096 bits/features | Similarity search, QSAR, virtual screening | Low-Medium |

| 3D: Geometric | Coulomb Matrices, Smooth Overlap of Atomic Positions (SOAP), 3D Pharmacophores | 100s to 1000s of features | Conformation-sensitive binding, quantum property prediction | High |

| Quantum Chemical | Partial Charges, HOMO/LUMO energies, Dipole moment | 10s to 100s of features | Reactivity prediction, electronic property modeling | Very High |

Table 2: Common Molecular Similarity/Diversity Metrics

| Metric Name | Formula / Principle | Range | Sensitivity |
|---|---|---|---|
| Tanimoto Coefficient | \( T = \frac{\lvert A \cap B \rvert}{\lvert A \cup B \rvert} \) (for fingerprints) | 0 (dissimilar) to 1 (identical) | High for structural features |
| Cosine Similarity | \( \cos(\theta) = \frac{\mathbf{A} \cdot \mathbf{B}}{\lVert \mathbf{A} \rVert \, \lVert \mathbf{B} \rVert} \) | -1 to 1 | Good for continuous vectors |
| Euclidean Distance | \( d = \sqrt{\sum_{i=1}^{n} (A_i - B_i)^2} \) | 0 to ∞ | Global spatial difference |
| Mahalanobis Distance | \( D_M = \sqrt{(\mathbf{A} - \mathbf{B})^T \mathbf{S}^{-1} (\mathbf{A} - \mathbf{B})} \) | 0 to ∞ | Accounts for feature covariance |
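The first three metrics in the table translate directly into a few lines of Python. This is a minimal sketch: fingerprints are assumed to be stored as sets of "on" bit indices, the empty-versus-empty Tanimoto value of 1.0 is a convention chosen here, and the Mahalanobis distance is omitted because it needs an inverse covariance matrix from a numerical library.

```python
import math


def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient |A ∩ B| / |A ∪ B| for sets of 'on' bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = a | b
    # Convention chosen here: two empty fingerprints count as identical.
    return len(a & b) / len(union) if union else 1.0


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    return dot / (norm_u * norm_v)


def euclidean_distance(u, v):
    """Straight-line distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(u, v)))


print(tanimoto({1, 2, 3}, {2, 3, 4}))
print(cosine_similarity([1.0, 0.0], [0.0, 1.0]))
print(euclidean_distance([0.0, 0.0], [3.0, 4.0]))
```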

Experimental Protocols for Key Analyses

Protocol 3.1: Benchmarking Molecular Similarity Searches

Objective: Evaluate the performance of different fingerprint representations in retrieving active compounds from a decoy database (e.g., DUD-E).

- Dataset Preparation: Select a target (e.g., kinase). Use the actives set and the corresponding decoys.

- Fingerprint Generation: Compute multiple 2D fingerprints (e.g., ECFP4, which corresponds to a Morgan fingerprint of radius 2, and FCFP6) for all actives and decoys.

- Reference Compound Selection: Randomly select 5 known active compounds as queries.

- Similarity Calculation: For each query and fingerprint type, calculate the Tanimoto coefficient to every molecule in the database.

- Ranking & Evaluation: Rank all database molecules by descending similarity. Calculate the enrichment factor (EF) at 1% of the screened database and the area under the ROC curve (AUC).

- Analysis: Compare the EF and AUC across fingerprint types to determine optimal representation for this target class.
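The two evaluation metrics of this protocol can be computed from a ranked list as sketched below. The scores and labels are made-up toy values, not benchmark results, and the AUC uses the pairwise (Mann-Whitney) formulation, which is quadratic in dataset size and intended only for illustration.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """EF = (hit rate in the top fraction) / (hit rate in the whole database)."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    n_top = max(1, int(round(fraction * len(ranked))))
    hits_top = sum(label for _, label in ranked[:n_top])
    total_actives = sum(labels)
    return (hits_top / n_top) / (total_actives / len(labels))


def roc_auc(scores, labels):
    """AUC as the probability that a random active outranks a random decoy.

    Ties between an active and a decoy count as half a win.
    """
    actives = [s for s, label in zip(scores, labels) if label == 1]
    decoys = [s for s, label in zip(scores, labels) if label == 0]
    wins = sum(
        1.0 if a > d else 0.5 if a == d else 0.0 for a in actives for d in decoys
    )
    return wins / (len(actives) * len(decoys))


# Toy data: Tanimoto similarity to a query; 1 = active, 0 = decoy.
SCORES = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]
LABELS = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]

print("EF@10%:", enrichment_factor(SCORES, LABELS, fraction=0.10))
print("AUC:", roc_auc(SCORES, LABELS))
```

With only ten molecules the 1% cutoff would round to a single compound, so the toy call uses 10%; on a DUD-E-sized database the default `fraction=0.01` matches the protocol.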

Protocol 3.2: Assessing Chemical Library Diversity

Objective: Quantify the structural diversity of a corporate screening library or a generated virtual library.

- Library Representation: Encode all library molecules using a consistent fingerprint (e.g., 2048-bit ECFP4).

- Distance Matrix Calculation: Compute the pairwise Tanimoto distance matrix (1 - Tanimoto coefficient).

- Diversity Metrics:

- Average Pairwise Dissimilarity: Mean of all off-diagonal entries in the distance matrix.

- Intra-Cluster Distance: Perform k-means clustering (k=10) on fingerprint vectors. Calculate the mean distance of molecules to their cluster centroid.

- Coverage of Reference Space: Using a principal component analysis (PCA) map of a large reference space (e.g., ChEMBL), calculate the percentage of occupied PCA bins by the library.

- Visualization: Generate a t-SNE or UMAP projection of the fingerprint vectors to visually inspect library spread and clustering.
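The average pairwise dissimilarity metric reduces to a short loop over molecule pairs. A minimal sketch, assuming fingerprints stored as sets of "on" bit indices and a toy three-molecule library; the function names are hypothetical.

```python
from itertools import combinations


def tanimoto_distance(bits_a, bits_b):
    """Tanimoto distance, i.e. 1 - Tanimoto coefficient, for two bit sets."""
    union = bits_a | bits_b
    if not union:
        return 0.0  # convention chosen here for two empty fingerprints
    return 1.0 - len(bits_a & bits_b) / len(union)


def mean_pairwise_dissimilarity(fingerprints):
    """Mean of the off-diagonal entries of the Tanimoto distance matrix."""
    pairs = list(combinations(fingerprints, 2))
    return sum(tanimoto_distance(a, b) for a, b in pairs) / len(pairs)


# Toy library of three fingerprints (made-up bit indices).
LIBRARY = [{1, 2, 3}, {2, 3, 4}, {7, 8}]
print(mean_pairwise_dissimilarity(LIBRARY))
```

Because the distance matrix is symmetric, averaging over unordered pairs gives the same value as averaging all off-diagonal entries. For libraries of realistic size the quadratic pair count usually calls for subsampling.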

Visualization of Core Methodologies

Diagram Title: Molecular Representation Pathways to AI Tasks

Diagram Title: Chemical Diversity Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Chemical Space Analysis Experiments

| Tool / Reagent | Provider (Example) | Function in Experiment | Key Consideration |

|---|---|---|---|

| RDKit | Open Source Cheminformatics | Core library for fingerprint generation, molecule I/O, and similarity calculations. | Python/C++ library; foundation for most workflows. |

| Open Babel | Open Source | Chemical file format interconversion and batch descriptor calculation. | Critical for handling diverse vendor data formats. |

| DUD-E / DEKOIS 2.0 | Public Benchmark Sets | Provide curated sets of active molecules and matched decoys for validation. | Essential for benchmarking virtual screening performance. |

| ChEMBL Database | EMBL-EBI | Large-scale bioactivity data for reference space construction and model training. | Requires careful data curation and standardization. |

| MATLAB Chemoinformatics Toolbox | MathWorks | Integrated environment for prototyping descriptor calculations and statistical analysis. | Commercial license required; useful for robust statistical testing. |

| KNIME Analytics Platform | KNIME AG | Visual workflow builder with cheminformatics nodes (RDKit integration) for pipeline creation. | Low-code environment, excellent for reproducible, documented workflows. |

| DeepChem Library | DeepChem | Provides high-level APIs for deep learning on molecular representations (graphs, grids). | Streamlines the transition from fingerprints to advanced AI models. |

| GPU Computing Resource | (e.g., NVIDIA) | Accelerates training of deep learning models on graph or 3D representations. | Critical for scaling to large datasets and complex models. |

Modern Techniques: Implementing Graph Neural Networks, 3D Conformers, and Transformer Models

This whitepaper is a core chapter in a broader thesis on the Basics of molecular representation in AI models research. A fundamental paradigm shift in computational chemistry and drug discovery has been the move from fixed-dimensional fingerprint-based representations to graph-based representations, where atoms are explicitly modeled as nodes and bonds as edges. This approach natively encodes molecular topology, enabling more expressive and accurate models for property prediction, molecular generation, and reactivity analysis. This document provides an in-depth technical guide to the dominant neural architectures operating on these representations: Message Passing Neural Networks (MPNNs), Graph Convolutional Networks (GCNs), and Graph Attention Networks (GATs).

Foundational Concepts & Mathematical Formalism

A molecule is represented as an undirected graph G = (V, E), where V is the set of n nodes (atoms) and E is the set of edges (bonds). Each node v_i has a feature vector x_i encoding atomic properties (e.g., element type, hybridization, formal charge). Each edge (v_i, v_j) may have a feature vector e_ij encoding bond properties (e.g., type, stereochemistry).
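As a minimal sketch of the definition above, the following stores a molecule as node features plus an edge dictionary and derives N(i) from it. The class name `MolGraph` and the feature layout (atomic number, formal charge) are illustrative assumptions, not part of any library.

```python
from dataclasses import dataclass, field

@dataclass
class MolGraph:
    """Undirected molecular graph G = (V, E) with node and edge features."""
    node_features: list                                  # x_i for each atom i
    edge_features: dict = field(default_factory=dict)    # (i, j) with i < j -> e_ij

    def add_bond(self, i, j, features):
        # Store each undirected edge once, under a sorted key.
        self.edge_features[(min(i, j), max(i, j))] = features

    def neighbors(self, i):
        # N(i): every atom that shares a bond with atom i.
        return sorted(b if a == i else a
                      for (a, b) in self.edge_features if i in (a, b))

# Heavy atoms of ethanol (SMILES "CCO"): C0 - C1 - O2.
# Hypothetical feature layout: (atomic number, formal charge).
ethanol = MolGraph(node_features=[(6, 0), (6, 0), (8, 0)])
ethanol.add_bond(0, 1, {"order": 1})
ethanol.add_bond(1, 2, {"order": 1})

print(ethanol.neighbors(1))
```

In practice a featurizer such as RDKit would populate these structures from a SMILES string; the hand-built example only illustrates the data layout.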

The core operation of all discussed architectures is neighborhood aggregation or message passing. In a layer l, a node's representation h_i^l is updated by combining its previous state with aggregated information from its neighboring nodes N(i).

Key Architectures: Protocols and Methodologies

Graph Convolutional Networks (GCNs)

GCNs perform a simplified, spectral-based convolution operation directly on the graph.

Experimental Protocol (Single GCN Layer):

- Input: Node feature matrix H^(l) ∈ ℝ^(n×d), adjacency matrix A (with self-loops added).

- Normalization: Compute the normalized adjacency matrix Â = D^(-1/2) A D^(-1/2), where D is the degree matrix of A.

- Linear Transformation: Apply a learned weight matrix W^(l).

- Activation: Apply a non-linear activation function σ (e.g., ReLU).

- Output (Node Embeddings): H^(l+1) = σ(Â H^(l) W^(l)).
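The single-layer protocol above can be sketched in plain Python as follows. This is an illustrative toy rather than an optimized implementation: the weight matrix `W` is a hand-picked stand-in for learned parameters, and the helper names are assumptions.

```python
import math

def matmul(A, B):
    """Dense matrix product for lists of lists."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def gcn_layer(A, H, W):
    """One GCN layer: H' = ReLU(A_hat H W), A_hat = D^(-1/2) A D^(-1/2).

    A must already contain self-loops (A[i][i] == 1).
    """
    n = len(A)
    deg = [sum(row) for row in A]                      # diagonal of D
    A_hat = [[A[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
             for i in range(n)]
    Z = matmul(matmul(A_hat, H), W)
    return [[max(0.0, z) for z in row] for row in Z]   # ReLU activation

# Three-atom chain 0-1-2 with self-loops added.
A = [[1, 1, 0],
     [1, 1, 1],
     [0, 1, 1]]
H = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # toy node features, d = 2
W = [[1.0, -1.0], [0.5, 0.5]]              # stand-in for learned weights

H_next = gcn_layer(A, H, W)
print(H_next)
```

Library implementations (for example in PyTorch Geometric or DGL) use sparse operations so that the cost scales with the number of bonds rather than n².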

Message Passing Neural Networks (MPNNs)

MPNNs provide a general framework unifying many graph neural networks through two phases: message passing and readout.

Detailed Experimental Protocol (Forward Pass):

- Initialization: Set node embeddings h_i^0 = x_i.

- Message Passing (for T steps):

- For each node v_i, a message m_i^(t+1) is computed: m_i^(t+1) = ∑_(j ∈ N(i)) M_t(h_i^t, h_j^t, e_ij), where M_t is a learned message function (e.g., a neural network).

- Node Update: The node state is updated: h_i^(t+1) = U_t(h_i^t, m_i^(t+1)), where U_t is a learned update function (e.g., a GRU cell).

- Readout (Graph-Level Prediction): After T steps, a graph-level feature vector is computed: ŷ = R({h_i^T | v_i ∈ V}), where R is a permutation-invariant readout function (e.g., sum, mean, or a more sophisticated set pooling).
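The forward pass above can be sketched with fixed toy functions in place of the learned ones. Here M_t is assumed to be e_ij · h_j, U_t is assumed to be tanh(h_i + m_i), and the readout is a sum; all three are illustrative choices, not the functions of any particular published MPNN.

```python
import math

def mpnn_forward(x, bonds, T=2):
    """Toy MPNN forward pass with fixed (not learned) message/update functions.

    x:     list of node feature vectors (h_i^0 = x_i)
    bonds: dict {(i, j): e_ij} with one scalar feature per undirected bond
    """
    n, d = len(x), len(x[0])
    # Neighbor lists N(i), each entry carrying the edge feature e_ij.
    nbrs = {i: [] for i in range(n)}
    for (i, j), e in bonds.items():
        nbrs[i].append((j, e))
        nbrs[j].append((i, e))

    h = [list(v) for v in x]
    for _ in range(T):
        # Message: m_i = sum_j M(h_i, h_j, e_ij); here M = e_ij * h_j.
        m = [[sum(e * h[j][k] for j, e in nbrs[i]) for k in range(d)]
             for i in range(n)]
        # Update: h_i <- U(h_i, m_i); here U = tanh(h_i + m_i).
        h = [[math.tanh(h[i][k] + m[i][k]) for k in range(d)] for i in range(n)]

    # Readout: permutation-invariant sum over all nodes.
    return [sum(h[i][k] for i in range(n)) for k in range(d)]

x = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
bonds = {(0, 1): 1.0, (1, 2): 2.0}
y = mpnn_forward(x, bonds)

# Relabeling atoms 0 and 2 should leave the graph-level output unchanged.
x_perm = [x[2], x[1], x[0]]
bonds_perm = {(2, 1): 1.0, (1, 0): 2.0}
y_perm = mpnn_forward(x_perm, bonds_perm)
print(y, y_perm)
```

The relabeling check at the end illustrates why the readout must be permutation-invariant: the atom ordering in a file is arbitrary, so the prediction should not depend on it.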

Graph Attention Networks (GATs)

GATs introduce an attention mechanism to weigh the importance of each neighbor's contribution dynamically.

Experimental Protocol (Single GAT Head):

- Input: Node features {h_1, ..., h_n}.

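- Attention Coefficients: For each neighbor j ∈ N(i), compute the unnormalized attention score e_ij = a(W h_i, W h_j), where W is a shared learned weight matrix and a is a learned attention function (e.g., a single-layer feedforward network). Here e_ij denotes an attention score, not the bond feature vector defined earlier.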

- Normalization: Normalize scores using softmax: α_ij = softmax_j(e_ij) = exp(e_ij) / ∑_(k ∈ N(i)) exp(e_ik).

- Aggregation: Compute updated node embedding as weighted sum: h_i' = σ(∑_(j ∈ N(i)) α_ij W h_j).

- Multi-head Attention: Stabilize learning by employing K independent attention heads, concatenating or averaging their outputs.
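A single attention head following the steps above might look like the sketch below. It assumes the attention function is LeakyReLU(a · [W h_i ‖ W h_j]) and uses ReLU for σ; `W` and `a` are hand-picked stand-ins for learned parameters.

```python
import math

def matvec(W, v):
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def leaky_relu(z, slope=0.2):
    return z if z > 0 else slope * z

def gat_head(h, nbrs, W, a):
    """Single GAT head. nbrs[i] lists N(i) (include i itself for a self-loop).

    W: weight matrix; a: attention vector of length 2 * d_out.
    Returns (new embeddings, attention coefficients alpha[i][j]).
    """
    Wh = [matvec(W, hi) for hi in h]
    out, alpha = [], []
    for i, Ni in enumerate(nbrs):
        # e_ij = LeakyReLU(a . [W h_i || W h_j]) for j in N(i)
        scores = [leaky_relu(sum(ak * zk for ak, zk in zip(a, Wh[i] + Wh[j])))
                  for j in Ni]
        # Softmax over the neighborhood (shifted by the max for stability).
        mx = max(scores)
        exps = [math.exp(s - mx) for s in scores]
        total = sum(exps)
        alpha_i = {j: e / total for j, e in zip(Ni, exps)}
        # h_i' = ReLU(sum_j alpha_ij W h_j)
        agg = [sum(alpha_i[j] * Wh[j][k] for j in Ni) for k in range(len(Wh[0]))]
        out.append([max(0.0, v) for v in agg])
        alpha.append(alpha_i)
    return out, alpha

h = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
nbrs = [[0, 1], [0, 1, 2], [1, 2]]     # chain 0-1-2 with self-loops
W = [[0.5, -0.5], [0.25, 0.75]]        # stand-in for learned weights
a = [0.1, -0.2, 0.3, 0.4]              # stand-in for the attention vector
h_new, alpha = gat_head(h, nbrs, W, a)
print(alpha[1])
```

For multi-head attention, K such heads with separate `W` and `a` would be run in parallel and their outputs concatenated or averaged.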

Comparative Performance Data

Table 1: Benchmark Performance on MoleculeNet Datasets (Classification AUC-ROC / Regression RMSE)

| Model | Tox21 (Avg. AUC) | ClinTox (AUC) | ESOL (RMSE ↓) | QM9 (MAE ↓, U0) | Key Distinguishing Feature |

|---|---|---|---|---|---|

| GCN | 0.829 | 0.832 | 1.050 | 43 (meV) | Simplicity, computational efficiency. |

| MPNN | 0.851 | 0.887 | 0.900 | 21 (meV) | Flexible framework, explicit edge features. |

| GAT | 0.843 | 0.870 | 0.965 | 28 (meV) | Adaptive, interpretable neighbor weighting. |

| Weave | 0.856 | 0.854 | 1.105 | N/A | Uses pairwise atom features. |

Table 2: Computational Complexity & Characteristics

| Model | Time Complexity per Layer | Spatial Locality | Explicit Edge Features | Inductive Bias |

|---|---|---|---|---|

| GCN | O(|E|d) | Yes | No | Low-pass spectral filter. |

| MPNN | O(|E|d^2) | Yes | Yes | General message function. |

| GAT | O(|E|d^2 + |V|d^2) | Yes | Can be extended | Adaptive local filter. |

Visual Workflows

Diagram 1: Molecular Graph to Prediction Workflow

Diagram 2: MPNN Message Passing Step

Diagram 3: GAT Attention Weighted Aggregation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Libraries for Molecular GNN Research

| Item Name | Provider / Library | Primary Function in Research |

|---|---|---|

| Molecular Featurizer | RDKit, DeepChem | Converts SMILES strings or molecular files into graph-structured data with node/edge features. Essential for dataset preparation. |

| Graph Neural Network Library | PyTorch Geometric (PyG), DeepGraphLibrary (DGL) | Provides optimized, batched implementations of GCN, MPNN, GAT, and other layers, drastically accelerating model development. |

| Message Passing Framework | JAX + Jraph, TensorFlow GN | Offers flexible, high-performance environments for prototyping custom MPNN variants and novel message functions. |

| Benchmark Suite | MoleculeNet (via DeepChem) | Curated collection of molecular datasets for standardized training, validation, and benchmarking of model performance. |

| Hyperparameter Optimization | Optuna, Ray Tune | Automates the search for optimal model architectures, learning rates, and layer depths to maximize predictive accuracy. |

| Interpretation Tool | GNNExplainer, Captum | Provides post-hoc explanations for model predictions by identifying important subgraph structures and features. |

| High-Performance Compute | NVIDIA CUDA, A100/GPU | Accelerates the training of deep GNNs on large molecular datasets from days to hours, enabling rapid experimentation. |

Within the broader thesis on the Basics of molecular representation in AI models for drug discovery, the evolution from 2D to 3D representations marks a pivotal paradigm shift. Early AI models relied on simplified 2D graph representations (SMILES, molecular fingerprints), which encode topology but ignore the spatial reality of molecules. This whitepaper argues that incorporating 3D conformational geometry is not merely an incremental improvement but a fundamental necessity for accurate molecular property prediction. The 3D conformation dictates intermolecular interactions, binding affinities, and ultimately biological activity, making it a critical data dimension for models predicting pharmacokinetic, thermodynamic, and toxicity endpoints.

The Limits of 2D Representations and the Case for 3D

2D representations treat molecules as topological graphs, losing all spatial information. This leads to the "conformational degeneracy" problem: multiple distinct 3D shapes, with potentially different properties, map to the same 2D representation. For example, the active conformation of a drug bound to a protein target is a specific 3D pose, not an abstract graph. Key properties rooted in 3D geometry include:

- Solvation Energy: Dependent on molecular surface area and shape.

- Membrane Permeability: Influenced by 3D polar surface area.

- Protein-Ligand Binding Affinity: Determined by complementary shape and electrostatic fields (e.g., hydrogen bonding, pi-stacking).

- Spectroscopic Properties: NMR chemical shifts and vibrational spectra are direct reporters of 3D structure.

Methodologies for Incorporating 3D Geometry

Experimental Protocols for Conformational Sampling and Data Generation

Protocol 1: Quantum Mechanics (QM)-Based Conformational Ensemble Generation

- Input: A single 2D molecular structure (e.g., SMILES).

- Initial Sampling: Use a rule-based or distance geometry method (e.g., ETKDG) to generate a diverse set of initial 3D conformers.

- Geometry Optimization: Employ semi-empirical methods (e.g., GFN2-xTB) to optimize each conformer's geometry, minimizing its energy.

- High-Fidelity Optimization and Ranking: Re-optimize low-energy candidates using Density Functional Theory (DFT) with a basis set like def2-SVP. Perform frequency calculations to confirm true minima (no imaginary frequencies).

- Output: A Boltzmann-weighted ensemble of low-energy 3D conformations in standard format (e.g., .sdf, .xyz), with associated electronic properties (partial charges, orbital energies).
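The Boltzmann weighting in the final step can be sketched as follows. The conformer energies and the property values are hypothetical numbers chosen for illustration; energies are assumed to be in kcal/mol.

```python
import math

R_KCAL = 0.0019872041  # gas constant in kcal/(mol*K)

def boltzmann_weights(energies_kcal, temperature=298.15):
    """Population p_i = exp(-dE_i / RT) / sum_k exp(-dE_k / RT)."""
    e_min = min(energies_kcal)                 # shift so the lowest energy is 0
    rt = R_KCAL * temperature
    factors = [math.exp(-(e - e_min) / rt) for e in energies_kcal]
    total = sum(factors)
    return [f / total for f in factors]

def ensemble_average(values, weights):
    """Boltzmann-weighted average of a per-conformer property."""
    return sum(v * w for v, w in zip(values, weights))

# Hypothetical relative conformer energies (kcal/mol) and dipole moments (D).
energies = [0.0, 0.5, 2.0]
dipoles = [1.8, 2.4, 3.1]
weights = boltzmann_weights(energies)
avg_dipole = ensemble_average(dipoles, weights)
print(weights, avg_dipole)
```

Because the weights depend only on energy differences, shifting by the minimum energy avoids overflow without changing the result.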

Protocol 2: Molecular Dynamics (MD) for Solvated Conformational Sampling

- System Preparation: Place a single molecule of interest in a periodic simulation box filled with explicit solvent molecules (e.g., TIP3P water).

- Energy Minimization: Use steepest descent/conjugate gradient algorithms to remove steric clashes.

- Equilibration: Run simulations in NVT and NPT ensembles (100-500 ps) to stabilize temperature and pressure.

- Production Run: Perform an extended MD simulation (10-100 ns) at constant temperature/pressure, saving atomic coordinates at regular intervals (e.g., every 10 ps).

- Trajectory Analysis: Cluster frames based on root-mean-square deviation (RMSD) to identify representative conformations. Calculate time-averaged geometric and electronic properties.
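The RMSD-based clustering in the trajectory analysis step can be illustrated with a simple greedy ("leader") scheme. This sketch assumes the frames share one atom ordering and have already been superimposed; production tools typically align frames first and may use other clustering algorithms. The coordinates are hypothetical.

```python
import math

def rmsd(a, b):
    """RMSD between two conformations given as lists of (x, y, z) tuples.

    Assumes identical atom ordering and pre-aligned frames.
    """
    sq = sum((p - q) ** 2 for u, v in zip(a, b) for p, q in zip(u, v))
    return math.sqrt(sq / len(a))

def leader_cluster(frames, cutoff):
    """Greedy clustering: a frame joins the first cluster whose representative
    lies within `cutoff`; otherwise it starts a new cluster."""
    reps, members = [], []
    for idx, frame in enumerate(frames):
        for c, rep in enumerate(reps):
            if rmsd(frame, frames[rep]) <= cutoff:
                members[c].append(idx)
                break
        else:
            reps.append(idx)
            members.append([idx])
    return reps, members

# Three hypothetical two-atom frames (angstroms).
frames = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (1.1, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (0.0, 2.0, 0.0)],
]
reps, members = leader_cluster(frames, cutoff=0.5)
print(reps, members)
```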

AI Model Architectures for 3D Molecular Data

- 3D Graph Neural Networks (3D-GNNs): Augment standard GNNs by using 3D coordinates to update node and edge features. Edge updates incorporate distance and angle information.

  - Models: SchNet, DimeNet++, SphereNet.

  - Input: Atom types (nodes), bonds (edges), and 3D coordinates.

  - Mechanism: Continuous-filter convolutional layers that generate features invariant to translation and rotation.

- Equivariant Neural Networks (ENNs): Explicitly preserve the geometric symmetries of 3D space (rotation, translation, permutation).

  - Models: SE(3)-Transformers, EGNN.

  - Advantage: Naturally learns from spatial data without requiring extensive data augmentation for rotational invariance.

- Geometric Deep Learning on Point Clouds: Treat atoms as points in 3D space with feature vectors.

  - Models: PointNet++ adapted for molecules.

  - Process: Hierarchical feature learning from local atomic neighborhoods.
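One way to see why distance-based inputs give translation- and rotation-invariant features is to check that the interatomic distance matrix does not change under a rigid motion. The sketch below uses hypothetical coordinates and a rotation about the z-axis only; it illustrates the invariance property, not any specific model.

```python
import math

def pairwise_distances(coords):
    """Matrix of interatomic distances d_ij = ||r_i - r_j||."""
    return [[math.dist(p, q) for q in coords] for p in coords]

def rotate_z(coords, angle):
    """Rotate all atoms about the z-axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in coords]

def translate(coords, shift):
    """Shift all atoms by the same vector."""
    return [tuple(v + d for v, d in zip(p, shift)) for p in coords]

# Hypothetical coordinates (angstroms) for a bent three-atom fragment.
coords = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]
moved = translate(rotate_z(coords, math.radians(40)), (1.5, -2.0, 0.7))

d_before = pairwise_distances(coords)
d_after = pairwise_distances(moved)
max_diff = max(abs(a - b)
               for ra, rb in zip(d_before, d_after)
               for a, b in zip(ra, rb))
print(max_diff)  # numerically zero: distances are unchanged by the rigid motion
```

Raw coordinates, by contrast, do change under the same motion, which is why models that consume them directly need either equivariant layers or rotational data augmentation.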

Quantitative Comparison: 2D vs. 3D Model Performance

The following table summarizes benchmark results on key molecular property prediction tasks, demonstrating the superior performance of 3D-aware models.

Table 1: Performance Comparison of Molecular Representation Models

| Model Class | Model Name | Representation | QM9 Atomization Energy (MAE, meV) | ESOL Solubility (RMSE, log mol/L) | FreeSolv Hydration Free Energy (RMSE, kcal/mol) | PDBbind Binding Affinity (RMSE, pKd) |

|---|---|---|---|---|---|---|

| 2D-Graph | MPNN | Graph (Topology) | ~38 | 0.58 | 1.15 | 1.40 |

| 2D-Graph | AttentiveFP | Graph (Topology) | ~35 | 0.56 | 1.10 | 1.37 |

| 3D-Graph | SchNet | 3D Coordinates | ~14 | 0.48 | 0.96 | 1.30 |

| 3D-Graph | DimeNet++ | 3D Coordinates + Angles | ~6 | 0.42 | 0.84 | 1.19 |

| Equivariant | SE(3)-Transformer | 3D Coordinates (Equivariant) | ~12 | 0.45 | 0.89 | 1.22 |

MAE: Mean Absolute Error; RMSE: Root Mean Square Error. Lower values indicate better performance. Data synthesized from recent literature (2022-2024).

Visualizing the 3D-Aware Prediction Workflow

Diagram: Workflow for 3D-Aware Molecular Property Prediction

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools and Datasets for 3D Molecular Modeling Research

| Item Name | Category | Function/Benefit |

|---|---|---|

| RDKit | Cheminformatics Library | Open-source toolkit for 2D/3D molecular manipulation, conformer generation (ETKDG), and fingerprint calculation. |

| Open Babel | File Format Tool | Converts between >110 chemical file formats, crucial for pipeline interoperability. |

| GFN2-xTB | Computational Chemistry | Fast, semi-empirical quantum method for geometry optimization and conformational search of large molecules. |

| PyMOL | Molecular Visualization | Industry-standard for high-quality 3D visualization and analysis of molecular structures and surfaces. |

| ANI-2x | Machine Learning Potential | A deep learning potential that provides near-DFT accuracy at dramatically lower cost for MD and optimization. |

| PDBbind | Curated Dataset | Provides experimentally determined 3D protein-ligand complexes with binding affinity data for model training/validation. |

| QM9 | Quantum Dataset | Contains DFT-calculated geometric and electronic properties for ~134k small molecules, a standard benchmark. |

| TorchMD-NET | AI Model Framework | PyTorch framework for building state-of-the-art 3D-GNNs and equivariant models for molecular simulation. |

| OpenMM | MD Simulation Engine | High-performance toolkit for running GPU-accelerated molecular dynamics simulations. |

| MoleculeNet | Benchmarking Suite | Curated collection of molecular property prediction tasks for fair model comparison. |

The integration of 3D geometric information addresses a fundamental shortcoming of traditional 2D molecular representations in AI. As demonstrated by superior performance on physics-based and biological property prediction tasks, models that reason over conformation—such as 3D-GNNs and Equivariant Networks—capture the essential physical determinants of molecular behavior. This shift aligns with the core thesis of advancing molecular representation: moving from symbolic, topology-only models towards physically-grounded, geometry-aware AI systems. The future of accurate in silico property prediction in drug development is unequivocally three-dimensional.

Within the foundational thesis of molecular representation for AI models, the evolution from fixed fingerprints to sequence-based representations marks a pivotal shift. The Simplified Molecular-Input Line-Entry System (SMILES) provides a grammatical, sequence-based description of molecular structure, enabling the direct application of sophisticated natural language processing (NLP) architectures. This guide examines the adaptation of Transformer architectures and Large Language Models (LLMs) for molecular property prediction, de novo design, and reaction outcome forecasting, positioning SMILES as a powerful language for chemistry.

Foundational Architectures: From NLP to Chemical Language Models

The core innovation lies in treating SMILES strings as sentences and atoms or substructures as tokens. The Transformer's self-attention mechanism is well suited to capturing long-range dependencies in these strings, such as ring-closure digits and branch parentheses that pair up across many tokens, analogous to syntactic relationships in language.

Key Architectural Adaptations:

- Tokenization: SMILES strings are tokenized using specialized chemical-aware tokenizers (e.g., Byte Pair Encoding adapted for common chemical substrings) rather than simple character-level splitting.

- Positional Encoding: Standard sinusoidal or learned positional encodings are used to inform the model of token order, which is critical for valency and ring closure information in SMILES.

- Pre-training Objectives: Models are often pre-trained using masked language modeling (MLM) on large unlabeled molecular databases (e.g., 10+ million compounds from PubChem). Advanced strategies include using SELFIES (a more robust SMILES alternative) to guarantee validity or incorporating auxiliary objectives like property prediction.
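To illustrate the difference between character-level splitting and chemistry-aware tokenization, the sketch below uses a simplified regular expression that keeps bracket atoms and two-letter elements intact. The pattern is an assumption written for this example (it covers the organic subset and common symbols only) and is typically just the pre-tokenization step before a BPE vocabulary is learned.

```python
import re

# Simplified atom-level pattern: bracket atoms, Br/Cl, two-digit ring closures,
# single-letter organic-subset atoms, bond/branch symbols, ring-closure digits.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+\]|Br|Cl|%\d{2}|[BCNOSPFIbcnosp]|[=#\-+\\/:~@.*$()]|\d)"
)

def tokenize(smiles):
    """Split a SMILES string into chemically meaningful tokens."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in SMILES: {smiles!r}")
    return tokens

print(tokenize("C[C@@H](N)Cl"))
print(tokenize("CC(=O)Oc1ccccc1C(=O)O"))  # aspirin
```

A character-level split would break `[C@@H]` into six pieces and `Cl` into `C` and `l`, discarding the stereocenter and misreading chlorine as carbon.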

Quantitative Performance Benchmarks

Recent studies demonstrate the efficacy of SMILES Transformers across diverse tasks. The following table summarizes key performance metrics from state-of-the-art models.

Table 1: Benchmark Performance of SMILES Transformer Models on MoleculeNet Tasks

| Model / Architecture | Dataset (Task) | Key Metric | Performance | Reference Year |

|---|---|---|---|---|

| ChemBERTa (RoBERTa-based) | BBBP (Classification) | ROC-AUC | 0.923 | 2021 |

| MolFormer (Large-Scale Transformer) | FreeSolv (Regression) | RMSE (kcal/mol) | 0.91 | 2022 |

| SMILES-BERT | ClinTox (Classification) | ROC-AUC | 0.942 | 2023 |

| GPT-3.5 Fine-Tuned | HIV (Classification) | ROC-AUC | 0.802 | 2023 |

| ChemGPT (Generative) | ZINC20 (Reconstruction) | Valid & Novel SMILES | >99% | 2023 |

| T5-Based Reaction Model | USPTO (Yield Prediction) | MAE (%) | 8.5 | 2024 |

Table 2: Comparison of Molecular Representation Paradigms

| Representation | Format | Model Type | Key Advantage | Key Limitation |

|---|---|---|---|---|

| SMILES String | 1D Sequence | Transformer, LSTM | Direct LLM transfer, generative power | Ambiguity, syntactic invalidity |

| SELFIES String | 1D Sequence (Grammar-based) | Transformer, RNN | 100% syntactic validity, robust | Slightly less human-readable |

| Molecular Graph | 2D Graph | GNN, GCN | Explicit structure, invariant | Complex architecture, slower generation |

| Extended-Connectivity Fingerprints (ECFP) | Fixed-length Bit Vector | Random Forest, MLP | Fast, interpretable bits | Information loss, not generative |

Detailed Experimental Protocol: Pre-training a SMILES Transformer

The following protocol outlines a standard methodology for pre-training a base Transformer model on a corpus of SMILES strings.

Objective: To learn general-purpose, contextualized representations of chemical structures via self-supervised learning. Materials: 10-100 million canonical SMILES strings from public databases (e.g., PubChem, ZINC). Software: Hugging Face Transformers, DeepChem, PyTorch or TensorFlow, RDKit for validation.

Data Curation & Cleaning:

- Download SMILES datasets. Filter for unique, canonical representations using RDKit.

- Apply basic chemical sanity filters (e.g., correct atom valency, removal of metals).

- Split data into training (99%) and validation (1%) sets.

Tokenization & Vocabulary Generation:

- Train a Byte Pair Encoding (BPE) tokenizer on the training corpus. Set a vocabulary size of 500 to 1,000 to capture common chemical substrings (e.g., "C=", "c1ccc", "C(=O)O").

Model Architecture Configuration:

- Use a standard Transformer encoder architecture (e.g., BERT-base: 12 layers, 768 hidden dimensions, 12 attention heads, 110M parameters).

- Set maximum sequence length (token limit) to 512.

Pre-training Task - Masked Language Modeling (MLM):

- During training, randomly mask 15% of tokens in each input sequence.

- Replace masked tokens with: [MASK] (80%), random token (10%), or original token (10%).

- The model's objective is to predict the original token for each masked position using the final hidden state.
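The 15% selection and 80/10/10 replacement rule can be sketched as below. Each token is selected independently with probability 0.15, so the masked fraction is 15% in expectation; the function name, the `None` label convention for unselected positions, and the toy vocabulary are assumptions for illustration.

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens, vocab, rng, mask_prob=0.15):
    """Return (inputs, labels) for masked language modeling.

    labels[i] holds the original token at selected positions and None elsewhere,
    so the loss is computed only where a prediction is required.
    """
    inputs, labels = list(tokens), [None] * len(tokens)
    for i, tok in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue                        # position not selected
        labels[i] = tok
        r = rng.random()
        if r < 0.8:
            inputs[i] = MASK                # 80%: replace with [MASK]
        elif r < 0.9:
            inputs[i] = rng.choice(vocab)   # 10%: replace with a random token
        # remaining 10%: keep the original token unchanged
    return inputs, labels

rng = random.Random(0)                      # seeded for reproducibility
vocab = ["C", "c", "N", "O", "(", ")", "=", "1"]
tokens = ["C", "C", "(", "=", "O", ")", "O", "c", "1", "c", "c", "c", "c", "c", "1"]
inputs, labels = mask_tokens(tokens, vocab, rng)
print(inputs)
print(labels)
```

Keeping some selected tokens unchanged or randomized prevents the model from learning that only `[MASK]` positions matter, since `[MASK]` never appears at fine-tuning time.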

Training Specifications:

- Optimizer: AdamW with learning rate of 5e-5, linear warmup for first 10k steps, then linear decay.

- Batch Size: 256-1024 sequences per batch, using gradient accumulation if needed.

- Hardware: Train on 4-8 NVIDIA A100 or V100 GPUs for 5-10 epochs.

- Validation: Monitor MLM accuracy and perplexity on the held-out validation set.
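The learning-rate schedule named above (linear warmup to 5e-5 over 10k steps, then linear decay) can be written as a small function. The total number of training steps is not specified in the protocol, so `total_steps` is an assumed parameter, and decay to zero at the final step is also an assumption.

```python
def lr_at_step(step, total_steps, peak_lr=5e-5, warmup_steps=10_000):
    """Linear warmup to peak_lr, then linear decay to zero at total_steps."""
    if step < warmup_steps:
        return peak_lr * step / warmup_steps
    remaining = max(0, total_steps - step)
    return peak_lr * remaining / max(1, total_steps - warmup_steps)

# Hypothetical run length of 100k optimizer steps.
for s in (0, 5_000, 10_000, 55_000, 100_000):
    print(s, lr_at_step(s, total_steps=100_000))
```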

Downstream Fine-tuning:

- The pre-trained model can be fine-tuned on supervised tasks (e.g., property prediction) by adding a task-specific prediction head (e.g., a multilayer perceptron) on the [CLS] token's output representation.

Visualization of Workflows and Architectures

Diagram 1: SMILES Transformer Pre-training via Masked LM

Diagram 2: Downstream Task Fine-tuning Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for SMILES Transformer Experiments

| Item / Resource | Category | Function & Explanation |

|---|---|---|

| RDKit | Software Library | Open-source cheminformatics toolkit for SMILES canonicalization, validity checking, substructure search, and descriptor calculation. Essential for data preprocessing and post-generation validation. |

| PubChem SQLite | Database | Pre-processed, queryable format of the PubChem database containing millions of SMILES strings and associated bioassay data. Primary source for pre-training corpora. |

| Hugging Face Transformers | Software Library | Provides state-of-the-art implementations of Transformer architectures (BERT, GPT, T5) and easy-to-use APIs for training, fine-tuning, and sharing models. |

| DeepChem | Software Library | An open-source toolkit for AI-driven chemistry, offering curated molecular datasets (MoleculeNet), model layers, and integration with RDKit and Transformers. |

| SELFIES Python Package | Software Library | Encodes molecules into and decodes them from SELFIES strings, a robust alternative to SMILES that guarantees 100% valid molecular structures during generative tasks. |

| NVIDIA A100 GPU Cluster | Hardware | High-performance computing resource with substantial VRAM (40-80GB) necessary for training large Transformer models on millions of sequences. |

| Weights & Biases (W&B) | MLOps Platform | Tracks experiments, logs metrics, hyperparameters, and model predictions in real-time, enabling reproducibility and collaboration. |

| ChEMBL or ZINC20 Dataset | Database | High-quality, curated databases of bioactive molecules or commercially available compounds, used for benchmarking generative and predictive tasks. |

Within the broader treatment of the basics of molecular representation in AI models, the evolution from classic molecular fingerprints to modern neural representations forms a critical narrative. This guide examines the technical transition, benchmark performance, and practical implementation of these methods in contemporary computational chemistry and drug discovery.

From Circular Fingerprints to Continuous Embeddings

The Extended-Connectivity Fingerprint (ECFP) and its variants (FCFP) have long served as the standard for molecular representation. Operating via an iterative neighborhood identification algorithm, they generate a fixed-length, sparse bit vector denoting the presence of specific substructural patterns.

ECFP Generation Algorithm:

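- Initialization: Assign each non-hydrogen atom an initial integer identifier by hashing its local invariants (e.g., atomic number, heavy-atom degree, attached hydrogen count, formal charge, isotopic mass, ring membership). Identifiers are not unique per atom: chemically equivalent atoms receive the same value.

- Iterative Update: For R iterations (R = radius), update each atom's identifier by hashing its own identifier from iteration t-1 together with the bond orders and identifiers of its immediate neighbors at t-1. After t iterations, an identifier therefore encodes a circular substructure of radius t around the atom.

- Folding: The set of integer identifiers collected across all iterations is folded via a modulo operation into a fixed-length bit vector (typically 1024 or 2048 bits).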

While effective, ECFPs are inherently sparse, lack geometric awareness, and cannot be optimized for a downstream task.
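The generation steps above can be sketched as a toy circular fingerprint. This is not RDKit's Morgan implementation: it uses SHA-1 as the hash, takes hand-written atom invariants, and omits the duplicate-substructure removal that real ECFP performs. All names are illustrative assumptions.

```python
import hashlib

def _hash(obj):
    # Deterministic 32-bit hash of a Python literal (via its repr).
    return int.from_bytes(hashlib.sha1(repr(obj).encode()).digest()[:4], "big")

def toy_ecfp(atom_invariants, bonds, radius=2, n_bits=2048):
    """atom_invariants: one tuple per heavy atom; bonds: {(i, j): bond_order}."""
    n = len(atom_invariants)
    nbrs = {i: [] for i in range(n)}
    for (i, j), order in bonds.items():
        nbrs[i].append((order, j))
        nbrs[j].append((order, i))

    ids = [_hash(inv) for inv in atom_invariants]   # initialization (radius 0)
    features = set(ids)
    for layer in range(1, radius + 1):
        new_ids = []
        for i in range(n):
            # Sort the neighbor environment so the result ignores atom ordering.
            env = sorted((order, ids[j]) for order, j in nbrs[i])
            new_ids.append(_hash((layer, ids[i], tuple(env))))
        ids = new_ids
        features.update(ids)

    bits = [0] * n_bits                             # folding via modulo
    for f in features:
        bits[f % n_bits] = 1
    return bits

# Ethanol heavy atoms; hypothetical invariants: (atomic number, heavy-atom degree).
fp = toy_ecfp([(6, 1), (6, 2), (8, 1)], {(0, 1): 1, (1, 2): 1})
# The same molecule with atoms listed in reverse order.
fp_reordered = toy_ecfp([(8, 1), (6, 2), (6, 1)], {(0, 1): 1, (1, 2): 1})
print(sum(fp), fp == fp_reordered)
```

Because folding maps many identifiers onto a limited number of bits, distinct substructures can collide on the same bit, which is one source of the information loss noted above.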

The Deep Learning Shift: Learned Representations

Deep learning models circumvent ECFP's limitations by learning continuous, task-informed vector representations directly from molecular structures or SMILES strings.

Key Architectures:

- Graph Neural Networks (GNNs): Operate directly on the molecular graph. Atoms (nodes) and bonds (edges) are embedded and updated through message-passing layers, aggregating neighborhood information analogous to—but more flexibly than—ECFP's circular neighborhoods.

- Transformer-based Models: Treat SMILES strings as sequences, using self-attention to capture long-range relationships within the molecular structure.

- 3D-Convolutional Networks: Utilize three-dimensional molecular conformations to explicitly model spatial and steric interactions.

Quantitative Performance Comparison

Recent benchmarks illustrate the performance gains of learned representations over traditional fingerprints on standard public datasets.

Table 1: Benchmark Performance on MoleculeNet Classification Tasks

| Representation / Model | BBBP (AUC-ROC) | Tox21 (AUC-ROC) | SIDER (AUC-ROC) | Avg. Training Data Req. |

|---|---|---|---|---|

| ECFP4 + Random Forest | 0.718 | 0.801 | 0.635 | Low |

| ECFP4 + DNN | 0.732 | 0.829 | 0.658 | Medium |

| Directed MPNN | 0.921 | 0.851 | 0.638 | High |

| Attentive FP | 0.893 | 0.861 | 0.682 | High |

| GROVER (Transformer) | 0.936 | 0.886 | 0.691 | Very High |

Data aggregated from recent literature (2022-2024). AUC-ROC scores are dataset averages. MPNN: Message Passing Neural Network.

Table 2: Key Characteristics of Representation Types

| Characteristic | ECFP/FCFP | GNN (e.g., MPNN) | Transformer (e.g., SMILES-based) |

|---|---|---|---|

| Representation | Sparse bit vector | Continuous graph embedding | Continuous sequence embedding |

| Geometry Awareness | None | Explicit (if 3D coords used) | Implicit (learned from SMILES) |

| Differentiable | No | Yes | Yes |

| Interpretability | High (substructure keys) | Medium (attention maps) | Medium (attention maps) |

| Data Efficiency | High | Medium | Low |

Experimental Protocol for Benchmarking Representations

A standardized protocol for evaluating molecular representations ensures comparable results.

Protocol: Model Training & Evaluation for a Classification Task

Dataset Curation:

- Source a benchmark dataset (e.g., from MoleculeNet).

- Apply an 80/10/10 train/validation/test split, using the scaffold or random splitting scheme defined for the benchmark.

- Standardize SMILES representation and remove duplicates.

Feature Generation:

- For ECFP: Use RDKit (`rdkit.Chem.AllChem.GetMorganFingerprintAsBitVect`) with radius=2 (ECFP4), nBits=2048.

- For GNN: Represent molecules as graphs with node features (atomic number, degree, hybridization) and edge features (bond type, conjugation).

Model Training:

- ECFP Baseline: Train a Scikit-learn RandomForestClassifier (n_estimators=500) or a simple DNN (3 fully connected layers, ReLU, dropout).

- GNN Model: Implement a model like AttentiveFP or a basic MPNN using PyTorch Geometric. Use 3-5 message-passing layers, global pooling, and a final classifier head.

- Hyperparameters: Optimize learning rate, dropout rate, and hidden dimension via Bayesian optimization over 50 trials, using the validation set.

Evaluation:

- Report the mean Area Under the Receiver Operating Characteristic Curve (AUC-ROC) over 3 independent training runs with different random seeds on the held-out test set.

- Perform statistical significance testing (e.g., paired t-test) on the results from multiple runs.
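The evaluation step can be sketched without external libraries. AUC-ROC is computed here from its rank interpretation (the probability that a random positive outscores a random negative), and the paired t statistic is computed over per-seed scores. The per-seed AUC values are hypothetical, and converting the statistic into a p-value (for example with SciPy) is left out.

```python
import math
import statistics

def roc_auc(y_true, y_score):
    """AUC as P(score of random positive > score of random negative); ties count 0.5."""
    pos = [s for y, s in zip(y_true, y_score) if y == 1]
    neg = [s for y, s in zip(y_true, y_score) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative label")
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

def paired_t_statistic(a, b):
    """t statistic for paired samples (same seeds/splits for both models)."""
    diffs = [x - y for x, y in zip(a, b)]
    return statistics.fmean(diffs) / (statistics.stdev(diffs) / math.sqrt(len(diffs)))

auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])

# Hypothetical test-set AUCs from three seeds per model.
gnn_auc = [0.85, 0.86, 0.84]
ecfp_auc = [0.80, 0.82, 0.81]
mean_gnn = statistics.fmean(gnn_auc)
t_stat = paired_t_statistic(gnn_auc, ecfp_auc)
print(auc, mean_gnn, t_stat)
```

With only three runs the test has two degrees of freedom, so differences must be large and consistent to reach significance; more seeds give a more reliable comparison.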

Visualization of Key Concepts

Diagram 1: Molecular Representation Learning Pathways

Diagram 2: Standard Benchmarking Protocol

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Libraries for Molecular Representation Research

| Item / Resource | Primary Function | Typical Use Case |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit; generates traditional fingerprints (ECFP), molecular graphs, and handles SMILES I/O. | Featurization for baseline models, molecular standardization, substructure search. |

| PyTorch Geometric (PyG) | A library for deep learning on graphs; implements many state-of-the-art GNN layers and utilities. | Building and training custom GNN models for molecular property prediction. |

| Deep Graph Library (DGL) | Alternative to PyG for building and training GNNs, with a strong focus on performance and scalability. | Large-scale molecular graph learning and batch processing. |

| Hugging Face Transformers | Provides pre-trained Transformer models; increasingly includes chemical models like SMILES-based checkpoints. | Fine-tuning large language models for chemical tasks (e.g., property prediction). |

| MoleculeNet | A benchmark collection of molecular datasets for machine learning. | Standardized dataset access for fair model evaluation and comparison. |

| OMEGA (OpenEye) | Commercial software for generating high-quality, diverse conformational ensembles. | Providing 3D structural inputs for geometric deep learning models. |

| Schrödinger Suite | Commercial platform offering tools for ligand-based (including fingerprint) and structure-based drug design. | Industrial-scale virtual screening and QSAR modeling workflows. |

| Azure Quantum Elements | Cloud platform integrating AI, HPC, and quantum computing for molecular simulation and generative chemistry. | Accelerated discovery of novel materials and molecules using AI-driven pipelines. |

This whitepaper constitutes a core chapter in a broader thesis on the Basics of Molecular Representation in AI Models Research. The fundamental challenge in computational chemistry and drug discovery lies in selecting and integrating representations that capture the complex, multi-faceted nature of molecules. Early AI models relied on single-modality inputs, such as Simplified Molecular Input Line Entry System (SMILES) strings or molecular fingerprints, which provided a limited, often lossy, view of molecular structure and properties. This work argues that robust and predictive models necessitate multi-modal and hybrid approaches that synergistically combine 2D topological graphs, 3D conformational geometries, and explicit physicochemical descriptors. This integration allows AI models to learn complementary information, mirroring the multi-parameter optimization practiced by human medicinal chemists, thereby accelerating the identification and optimization of viable drug candidates.

Feature Modalities: Definitions and Extraction

2D Topological Features

2D representations encode the connectivity and atom/bond types within a molecule, disregarding spatial coordinates.

- Extraction: Derived directly from molecular structure files (e.g., SDF, MOL).

- Common Representations:

  - Molecular Graph: A graph G = (V, E) where vertices V are atoms (featurized by element, hybridization, degree, etc.) and edges E are bonds (featurized by type, conjugation, etc.). This is the native input for Graph Neural Networks (GNNs).

  - SMILES/SELFIES Strings: String-based notations that are processed via natural language processing (NLP) techniques like recurrent neural networks (RNNs) or Transformers.

  - Molecular Fingerprints (e.g., ECFP, Morgan): Bit vectors indicating the presence of specific substructural patterns.

3D Geometric Features

3D representations capture the spatial arrangement of atoms, which is critical for modeling intermolecular interactions (e.g., in docking) and for predicting quantum chemical properties.

- Extraction: Requires 3D conformer generation using tools like RDKit (ETKDG method), OMEGA, or computation via density functional theory (DFT).

- Common Representations:

  - Atomic Coordinates & Distance Matrix: The (x, y, z) coordinates for each atom and the pairwise Euclidean distance matrix.

  - Volumetric Grids: Electron density or potential mapped to a 3D voxel grid for use with 3D Convolutional Neural Networks (3D-CNNs).

  - Geometric Graph: Augments the 2D molecular graph with 3D spatial distances as edge attributes or uses invariant/scalar features (e.g., distances, angles, dihedrals).

  - Surface Meshes: Represent the solvent-accessible surface area for protein-ligand interaction studies.

Explicit Physicochemical Features

These are pre-computed, human-engineered descriptors that encode specific chemical intuitions about molecular properties.

- Extraction: Calculated using libraries like RDKit, Mordred, or PaDEL-Descriptor.

- Categories:

- Constitutional: Molecular weight, atom count, bond count.

- Topological: Connectivity indices (e.g., Wiener index, Zagreb index).

- Electronic: Partial charges, dipole moment, HOMO/LUMO energies (often from DFT).

- Geometrical: Principal moments of inertia, radius of gyration.

- Hybrid: Pharmacophoric features (hydrogen bond donors/acceptors, aromatic rings, hydrophobes).

Hybridization Architectures and Methodologies

The core technical challenge is the fusion of heterogeneous feature spaces. Below are detailed protocols for key integration strategies.

Early Fusion (Feature-Level Concatenation)

Protocol: Features from all modalities are calculated and concatenated into a single, high-dimensional input vector before being fed into a standard machine learning model (e.g., Random Forest, Fully Connected Network).

- Input Preparation:

- Generate a low-energy 3D conformer for each molecule using the ETKDGv3 method in RDKit.

- For each molecule, compute: a) A 2048-bit Morgan fingerprint (radius=2). b) A set of 3D geometric descriptors: radius of gyration, principal moments of inertia, plane of best fit (PBF), and normalized spatial distance histograms. c) A set of 200 Mordred descriptors (filtering out constant and correlated features).

- Feature Standardization: Standardize each feature column (mean=0, variance=1) using a `StandardScaler` fit on the training set only.

- Dimensionality Reduction (Optional): Apply Principal Component Analysis (PCA) to the concatenated vector to reduce noise and computational load.

- Model Training: Train a model (e.g., Gradient Boosted Tree) on the final fused feature vector.
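The concatenate-then-standardize step can be sketched as follows. This is a minimal illustration, not the protocol's reference implementation: the feature blocks are random stand-ins for the fingerprint, 3D, and descriptor arrays (their sizes are arbitrary), the helper names `fit_standardizer` and `early_fuse` are hypothetical, and the scaling is written out in NumPy rather than calling a library scaler. The point it shows is that the mean and standard deviation come from the training rows only and are then reused on the test rows.

```python
import numpy as np

def fit_standardizer(train_features):
    """Return per-column mean and std computed from the training rows only."""
    mean = train_features.mean(axis=0)
    std = train_features.std(axis=0)
    std[std == 0.0] = 1.0  # constant columns: avoid division by zero
    return mean, std

def early_fuse(blocks, mean, std):
    """Concatenate per-modality blocks column-wise, then standardize."""
    fused = np.concatenate(blocks, axis=1).astype(float)
    return (fused - mean) / std

rng = np.random.default_rng(0)

def make_blocks(n):
    # Stand-in feature blocks (assumed sizes; real ones come from a descriptor library)
    fingerprint = rng.integers(0, 2, size=(n, 16))      # binary fingerprint bits
    geometry = rng.normal(5.0, 2.0, size=(n, 4))        # 3D geometric descriptors
    descriptors = rng.normal(100.0, 30.0, size=(n, 6))  # physicochemical descriptors
    return [fingerprint, geometry, descriptors]

train_blocks = make_blocks(8)
test_blocks = make_blocks(3)

# Statistics are fit on the training matrix and reused unchanged for the test matrix
train_raw = np.concatenate(train_blocks, axis=1).astype(float)
mean, std = fit_standardizer(train_raw)
X_train = early_fuse(train_blocks, mean, std)
X_test = early_fuse(test_blocks, mean, std)
```

The fused matrices `X_train` and `X_test` would then be passed to PCA or directly to the downstream model.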

Joint Deep Learning (Late Fusion)

Protocol: Separate neural network branches (encoders) process each modality. The learned latent representations are fused at a later stage, typically before the final prediction layers.

- Branch Architecture:

- 2D Graph Branch: A Message Passing Neural Network (MPNN) or Graph Attention Network (GAT) processes the molecular graph. The final graph-level representation is obtained via global pooling (e.g., global mean pool).

- 3D Geometric Branch: A distance-aware GNN (e.g., SchNet, DimeNet++) or a Transformer operating on point clouds processes atomic coordinates and types.

- Descriptor Branch: A simple multi-layer perceptron (MLP) processes the vector of physicochemical descriptors.

- Fusion Layer: The output vectors from each branch (z_2d, z_3d, z_desc) are concatenated or aggregated via an attention-weighted sum.

- Prediction Head: The fused representation z_fused is passed through a final MLP for property prediction (e.g., pIC50, solubility).
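The two fusion options named above can be sketched in a few lines. This is an illustration under assumptions: the branch outputs are random vectors standing in for trained encoder outputs, all branches are assumed to share one embedding size, and `score_vector` is a stand-in for a learned attention parameter. The function names are hypothetical.

```python
import numpy as np

def softmax(x):
    e = np.exp(x - x.max())  # subtract the max for numerical stability
    return e / e.sum()

def concat_fusion(z_2d, z_3d, z_desc):
    """Option 1: concatenate branch outputs into one longer vector."""
    return np.concatenate([z_2d, z_3d, z_desc])

def attention_fusion(branch_vectors, score_vector):
    """Option 2: weighted sum of branch outputs.

    Each branch gets a scalar score (dot product with score_vector);
    the softmax of those scores gives the mixing weights.
    """
    stacked = np.stack(branch_vectors)  # (n_branches, dim)
    weights = softmax(stacked @ score_vector)
    return weights @ stacked, weights

rng = np.random.default_rng(0)
dim = 8
z_2d, z_3d, z_desc = (rng.normal(size=dim) for _ in range(3))
score_vector = rng.normal(size=dim)  # stand-in for a learned parameter

z_concat = concat_fusion(z_2d, z_3d, z_desc)
z_fused, weights = attention_fusion([z_2d, z_3d, z_desc], score_vector)
```

Concatenation keeps every branch dimension and lets the prediction head learn the mixing; the attention-weighted sum keeps the fused vector at the branch size and exposes per-branch weights that can be inspected.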

Diagram Title: Late Fusion Architecture for Molecular AI

Cross-Modal Attention and Transformer-Based Fusion

Protocol: A Transformer architecture treats features from different modalities as a sequence of tokens, using self-attention to model intra- and inter-modal relationships dynamically.

- Tokenization:

- 2D Tokens: Node embeddings from a shallow GNN or learned embeddings for molecular subgraphs.

- 3D Tokens: Atom embeddings projected from atomic coordinates and numbers via a linear layer.

- Descriptor Tokens: Each significant physicochemical descriptor is embedded as a token.

- Positional Encoding: Modality-type encoding and (for 3D tokens) spatial positional encoding are added.

- Transformer Encoder: The sequence of tokens is passed through a standard Transformer encoder stack. The multi-head self-attention mechanism allows a 3D atom token to attend to relevant 2D substructure tokens and descriptor tokens.

- Pooling and Prediction: The token corresponding to a [CLS] (classification) symbol is used as the final molecular representation for property prediction.
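A single attention layer over a mixed token sequence can be sketched as below. This is a deliberately reduced illustration: one head, random weights in place of trained ones, no residual connections, layer normalization, feed-forward sublayer, or spatial positional encoding. Token counts and the embedding size are arbitrary assumptions.

```python
import numpy as np

def self_attention(tokens, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over a token sequence."""
    q, k, v = tokens @ w_q, tokens @ w_k, tokens @ w_v
    scores = q @ k.T / np.sqrt(k.shape[1])
    scores -= scores.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)
    return weights @ v, weights

rng = np.random.default_rng(0)
dim = 8
tokens_2d = rng.normal(size=(5, dim))    # stand-in substructure tokens
tokens_3d = rng.normal(size=(5, dim))    # stand-in atom tokens
tokens_desc = rng.normal(size=(3, dim))  # stand-in descriptor tokens
cls_token = np.zeros((1, dim))

# Modality-type encoding: one vector per token type, added to every token of that type
modality_emb = rng.normal(scale=0.1, size=(4, dim))  # order: CLS, 2D, 3D, descriptor
sequence = np.concatenate([
    cls_token + modality_emb[0],
    tokens_2d + modality_emb[1],
    tokens_3d + modality_emb[2],
    tokens_desc + modality_emb[3],
])

w_q, w_k, w_v = (rng.normal(scale=dim ** -0.5, size=(dim, dim)) for _ in range(3))
encoded, attn = self_attention(sequence, w_q, w_k, w_v)

# Row 0 is the [CLS] position; its output is the molecule-level representation
molecule_repr = encoded[0]
```

Row 0 of `attn` shows how much the [CLS] position draws from each 2D, 3D, and descriptor token, which is where the cross-modal mixing becomes visible.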

Quantitative Data Comparison

Table 1: Benchmark Performance of Modality Combinations on MoleculeNet Datasets

Performance measured by Mean Absolute Error (MAE) or ROC-AUC. Lower MAE and higher ROC-AUC are better.

| Model Architecture | Modalities Used | ESOL (MAE) ↓ | FreeSolv (MAE) ↓ | HIV (ROC-AUC) ↑ | Avg. Rank |

|---|---|---|---|---|---|

| Random Forest (RF) | 2D (Fingerprints) Only | 0.58 | 1.15 | 0.763 | 5.3 |

| Graph Convolution (GC) | 2D (Graph) Only | 0.51 | 1.06 | 0.801 | 4.0 |

| SchNet | 3D (Geometry) Only | 0.49 | 0.92 | 0.712* | 4.7 |

| Early Fusion (RF) | 2D FP + 3D Desc + PhysChem | 0.48 | 0.98 | 0.822 | 3.0 |

| Late Fusion (GC+MLP) | 2D Graph + PhysChem | 0.45 | 0.89 | 0.845 | 2.0 |

| Multi-modal Transformer | 2D Graph + 3D Coord + PhysChem | 0.42 | 0.81 | 0.868 | 1.0 |

*3D-only models struggle on non-geometry-specific tasks like HIV classification without hybrid features.

Table 2: Computational Cost of Feature Extraction (Avg. Time per Molecule)

| Feature Modality | Tool/Library | CPU Time (s) | GPU Time (s) | Notes |

|---|---|---|---|---|

| 2D Morgan Fingerprint (2048) | RDKit | ~0.001 | N/A | Extremely fast. |

| 2D Graph (Atom/Bond Feats) | RDKit | ~0.005 | N/A | Fast. |

| 3D Conformer Generation (ETKDG) | RDKit | ~0.3 | N/A | Single conformer, fast. |

| 3D Multi-Conformer Ensemble | OMEGA | ~2.5 | N/A | More accurate, slower. |

| Quantum Chemical (DFT) Features | ORCA/Psi4 | 300-3600+ | N/A | Highly accurate, prohibitive for large sets. |

| Mordred Descriptors (1600+) | Mordred | ~0.05 | N/A | Comprehensive, moderate speed. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Software for Multi-Modal Molecular Experiments

| Item / Reagent | Function / Purpose | Example Source / Tool |

|---|---|---|

| Chemical Structure Datasets | Provides standardized molecular structures (SMILES/SDF) and associated property labels for training and testing. | MoleculeNet, ZINC20, ChEMBL, PDBbind |

| 3D Conformer Generator | Generates realistic, low-energy 3D molecular geometries from 2D inputs. Essential for 3D feature extraction. | RDKit (ETKDG), OMEGA (OpenEye), CONFAB |

| Quantum Chemistry Software | Calculates high-fidelity electronic structure properties (HOMO/LUMO, partial charges) for physicochemical descriptors. | ORCA, Psi4, Gaussian, xtb (for semi-empirical) |

| Descriptor Calculation Library | Computes a wide array of pre-defined molecular descriptors from structures. | RDKit, Mordred, PaDEL-Descriptor, Dragon |

| Deep Learning Framework | Provides environment to build and train hybrid neural network models (GNNs, Transformers, MLPs). | PyTorch, PyTorch Geometric (PyG), TensorFlow, Deep Graph Library (DGL) |

| Model Training Infrastructure | Accelerates training of large hybrid models, especially those processing 3D point clouds or graphs. | NVIDIA GPUs (CUDA), Google Colab, AWS/Azure ML Instances |

| Hyperparameter Optimization Suite | Automates the search for optimal model architecture and training parameters across complex multi-modal pipelines. | Weights & Biases (W&B), Optuna, Ray Tune |

Advanced Experimental Protocol: Benchmarking a Hybrid Model

Objective: To evaluate the predictive performance gain of a hybrid 2D/3D/PhysChem model versus unimodal baselines on a quantum property prediction task (HOMO-LUMO gap).

Dataset Curation:

- Source the QM9 dataset (~133k molecules with DFT-calculated properties).

- Split: 80% train, 10% validation, 10% test. Ensure no structural analogs leak across splits.

Feature Extraction Pipeline:

- For each molecule SMILES:

a. Generate a single 3D conformer using RDKit's EmbedMolecule function with useRandomCoords=True and useBasicKnowledge=True.

b. 2D Features: Compute a 1024-bit radius-2 Morgan fingerprint. Also create a graph object with atom features (atomic number, degree, hybridization) and bond features (type, conjugation).

c. 3D Features: Extract the distance matrix and compute the radius of gyration.

d. PhysChem Features: Calculate a curated set of 10 descriptors directly related to electronic structure, including Molecule Polarity Index, BalabanJ, Molar Refractivity (from RDKit), and Max Partial Charge (estimated via Gasteiger method).
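The two 3D features in step c can be computed from atomic coordinates alone. The sketch below uses only the standard library and a hand-typed, approximate linear three-atom geometry in place of a generated conformer; the function names are illustrative, and the mass-weighted definition of the radius of gyration is an assumption about which variant is intended.

```python
import math

def distance_matrix(coords):
    """Pairwise Euclidean distances between atoms (symmetric, zero diagonal)."""
    return [[math.dist(a, b) for b in coords] for a in coords]

def radius_of_gyration(coords, masses):
    """Mass-weighted RMS distance of the atoms from the centre of mass."""
    total = sum(masses)
    center = [
        sum(m * c[axis] for m, c in zip(masses, coords)) / total
        for axis in range(3)
    ]
    weighted_sq = sum(m * math.dist(c, center) ** 2 for m, c in zip(masses, coords))
    return math.sqrt(weighted_sq / total)

# Toy geometry (angstroms): an approximate linear O=C=O arrangement
coords = [(-1.16, 0.0, 0.0), (0.0, 0.0, 0.0), (1.16, 0.0, 0.0)]
masses = [15.999, 12.011, 15.999]

dmat = distance_matrix(coords)
rg = radius_of_gyration(coords, masses)
```

In the actual pipeline the coordinates and masses would be read from the embedded conformer rather than typed in by hand.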


Model Implementation (PyTorch/PyG):

- Baseline 1 (2D): A 5-layer GIN convolutional network with global mean pooling.

- Baseline 2 (3D): A SchNet model operating on atomic numbers and coordinates.

- Hybrid Model: A late-fusion model.

- Branch 1: Identical 5-layer GIN network as Baseline 1.

- Branch 2: A 4-layer MLP processing the concatenated 3D and PhysChem feature vector.

- Fusion: The 128-dimensional outputs from each branch are concatenated, passed through a 2-layer fusion MLP with ReLU activation and dropout (p=0.1), and then to a final linear regressor.

Training & Evaluation:

- Loss: Mean Squared Error (MSE).

- Optimizer: AdamW (lr=1e-3, weight_decay=1e-5).

- Scheduler: ReduceLROnPlateau (patience=10).

- Batch Size: 32.

- Metric: Report MAE (eV) and RMSE (eV) on the held-out test set after training for 300 epochs with early stopping (patience=30).
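The evaluation metrics and the early-stopping rule are framework-independent and can be sketched in plain Python. The class below is a hypothetical helper, not part of PyTorch or PyG, and the validation curve is simulated; a shorter patience than the protocol's 30 is used so the example stays small.

```python
import math

def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

class EarlyStopping:
    """Stop when validation loss has not improved for `patience` consecutive epochs."""

    def __init__(self, patience=30):
        self.patience = patience
        self.best = math.inf
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch, val_loss):
        """Record val_loss; return True when training should stop."""
        if val_loss < self.best:
            self.best, self.best_epoch, self.bad_epochs = val_loss, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

# Simulated validation curve: improves through epoch 4, then plateaus
val_losses = [1.0, 0.8, 0.6, 0.5, 0.4] + [0.45] * 10
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(epoch, loss):
        stopped_at = epoch
        break
```

In a real run the checkpoint saved at `stopper.best_epoch` would be the one evaluated on the held-out test set.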

Diagram Title: QM9 Hybrid Model Experimental Workflow

Integrating 2D, 3D, and physicochemical features gives a model complementary views of the same compound: connectivity, shape, and intrinsic chemical properties. In the benchmarks summarized above, the top-ranked models on every listed dataset are hybrids, which supports treating multi-modal fusion as a core part of molecular representation learning rather than an optional refinement. Future work will focus on more efficient cross-modal alignment techniques, dynamic fusion mechanisms, and the use of these representations for generative tasks in de novo molecular design, closing the loop between AI-driven prediction and actionable drug discovery.

Overcoming Pitfalls: Data Challenges, Model Generalization, and Computational Limits

Every molecular representation discussed in this guide depends on the quality of the data behind it. Molecular datasets, derived from high-throughput screening, computational simulations, or public repositories, are inherently complex and prone to specific artifacts that directly compromise model generalizability and predictive power. This section details three pervasive issues (noise, imbalance, and lack of standardization) and provides technical methodologies for their mitigation.

Noise in Molecular Data

Noise refers to stochastic errors or irrelevant variations that obscure the true signal. In molecular AI, noise manifests as experimental measurement error, molecular representation ambiguity, and label inconsistency.

Quantitative Impact of Noise

Table 1: Reported Impact of Noise on Model Performance for Different Molecular Tasks

| Task | Noise Type | Reported Performance Drop (AUC-ROC/ RMSE) | Primary Source |

|---|---|---|---|

| Activity Prediction | Assay measurement error | 0.08 - 0.15 AUC | High-throughput screening variability studies |

| Quantum Property Prediction | Conformational sampling noise | 10-15% increase in RMSE | Benchmarking on QM9 with noisy conformers |

| Toxicity Classification | Inconsistent labeling (PubChem) | 0.10 - 0.12 AUC | Comparative analysis of curated vs. raw data |

Protocol: Consensus-Based Noise Filtering for Bioactivity Data

- Data Collection: Gather bioactivity measurements (e.g., IC50, Ki) for the same target-molecule pair from multiple public sources (ChEMBL, PubChem BioAssay).

- Threshold Definition: Set a tolerance threshold (e.g., 1 log unit) for acceptable variation between reported values.

- Consensus Calculation: For each unique pair, calculate the median activity value. Discard all outlier measurements that fall outside the defined tolerance from the median.

- Aggregation: Use the median value as the consensus label for model training. This protocol reduces variance introduced by single-lab or single-assay artifacts.
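The filtering steps above can be sketched with the standard library. Two assumptions are made that the protocol leaves open: activities are already on a negative log scale (e.g., pIC50), so a tolerance of 1 log unit is an absolute difference of 1.0, and the consensus label is the median recomputed over the retained measurements. Pairs where no measurement lies within tolerance of the median are dropped. The function name and record layout are illustrative.

```python
from collections import defaultdict
from statistics import median

def consensus_labels(records, tolerance=1.0):
    """Build one consensus activity per (target, molecule) pair.

    records: iterable of (target_id, molecule_id, p_activity) tuples,
    where p_activity is on a -log10 scale.
    """
    grouped = defaultdict(list)
    for target, molecule, value in records:
        grouped[(target, molecule)].append(value)

    consensus = {}
    for pair, values in grouped.items():
        center = median(values)
        kept = [v for v in values if abs(v - center) <= tolerance]
        if kept:  # no agreement at all: leave the pair out
            consensus[pair] = median(kept)
    return consensus

records = [
    ("T1", "M1", 6.0), ("T1", "M1", 6.2), ("T1", "M1", 8.5),  # one outlier
    ("T1", "M2", 5.0), ("T1", "M2", 8.0),                     # two sources disagree
]
labels = consensus_labels(records)
```

For M1 the 8.5 measurement is discarded and the label becomes the median of 6.0 and 6.2. For M2 both values sit 1.5 log units from their median, so the pair is excluded rather than assigned a label neither source supports.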

Class Imbalance

Imbalance is a structural skew in dataset labels, where one class (e.g., inactive compounds) vastly outnumbers another (e.g., active compounds). This leads to models biased toward the majority class.

Imbalance Statistics in Common Repositories

Table 2: Prevalence of Class Imbalance in Standard Molecular Datasets

| Dataset | Prediction Task | Majority:Minority Class Ratio | Typical Baseline Accuracy (Majority Class) |

|---|---|---|---|

| PubChem BioAssay (AID: 1851) | HIV Inhibitor | 99:1 | 99% |

| Tox21 | Stress Response SR-MMP | 95:5 | 95% |

| MUV | Purposely designed for imbalance | 99.7:0.3 | 99.7% |
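The last column follows directly from the class ratio: a model that always predicts the majority class scores an accuracy equal to the majority fraction while learning nothing. The short sketch below makes this concrete for a 99:1 split and contrasts accuracy with balanced accuracy and MCC. The helper names are illustrative, and returning an MCC of 0 when the denominator is zero is a common convention assumed here.

```python
import math

def confusion_counts(y_true, y_pred):
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, tn, fp, fn

def accuracy(tp, tn, fp, fn):
    return (tp + tn) / (tp + tn + fp + fn)

def balanced_accuracy(tp, tn, fp, fn):
    tpr = tp / (tp + fn) if (tp + fn) else 0.0  # recall on actives
    tnr = tn / (tn + fp) if (tn + fp) else 0.0  # recall on inactives
    return (tpr + tnr) / 2

def mcc(tp, tn, fp, fn):
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return 0.0 if denom == 0 else (tp * tn - fp * fn) / denom

# 99:1 imbalance, and a "model" that predicts inactive for everything
y_true = [0] * 990 + [1] * 10
y_pred = [0] * 1000

counts = confusion_counts(y_true, y_pred)
acc = accuracy(*counts)
bal_acc = balanced_accuracy(*counts)
matthews = mcc(*counts)
```

Accuracy comes out at 0.99, while balanced accuracy is 0.5 and MCC is 0, which is why the latter two are preferred for reporting on datasets like these.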

Protocol: Hybrid Sampling for Training Imbalanced Classification Models

- Stratified Split: Perform a stratified train/validation/test split to preserve imbalance in all sets.

- Training Set Resampling:

- SMOTE (Synthetic Minority Over-sampling Technique): Apply SMOTE to the minority class in the training set only to generate synthetic examples in descriptor/embedding space.

- Random Under-Sampling: Randomly reduce the majority class in the training set to a desired ratio (e.g., 3:1).

- Model Training & Evaluation: Train the model on the resampled training set. Use the untouched validation and test sets for hyperparameter tuning and final evaluation, employing metrics like Balanced Accuracy, MCC (Matthews Correlation Coefficient), or Precision-Recall AUC.
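The resampling step can be sketched in NumPy as follows. This is a simplified SMOTE-style interpolation written for illustration, not a replacement for a maintained implementation such as the one in the imbalanced-learn package; the function names, the synthetic data, and the parameter choices are assumptions. It is applied to the training arrays only.

```python
import numpy as np

def smote(minority, n_new, k, rng):
    """Create n_new points by interpolating between a minority sample
    and one of its k nearest minority-class neighbours."""
    dists = np.linalg.norm(minority[:, None, :] - minority[None, :, :], axis=2)
    np.fill_diagonal(dists, np.inf)  # a point is not its own neighbour
    neighbours = np.argsort(dists, axis=1)[:, :k]
    base = rng.integers(0, len(minority), size=n_new)
    partner = neighbours[base, rng.integers(0, k, size=n_new)]
    gap = rng.random((n_new, 1))  # position along the connecting segment
    return minority[base] + gap * (minority[partner] - minority[base])

def hybrid_resample(X, y, n_synthetic, majority_ratio, k=3, seed=0):
    """Over-sample class 1 with SMOTE, then under-sample class 0 to the given ratio."""
    rng = np.random.default_rng(seed)
    X_min, X_maj = X[y == 1], X[y == 0]
    X_min_aug = np.vstack([X_min, smote(X_min, n_synthetic, k, rng)])
    n_keep = min(len(X_maj), int(majority_ratio * len(X_min_aug)))
    keep = rng.choice(len(X_maj), size=n_keep, replace=False)
    X_out = np.vstack([X_maj[keep], X_min_aug])
    y_out = np.concatenate([
        np.zeros(n_keep, dtype=int),
        np.ones(len(X_min_aug), dtype=int),
    ])
    return X_out, y_out

# Synthetic training set in a 4-dimensional descriptor space: 200 inactive, 10 active
data_rng = np.random.default_rng(42)
X_train = np.vstack([data_rng.normal(0, 1, (200, 4)), data_rng.normal(3, 1, (10, 4))])
y_train = np.array([0] * 200 + [1] * 10)

X_res, y_res = hybrid_resample(X_train, y_train, n_synthetic=20, majority_ratio=3)
```

With these settings the minority class grows from 10 to 30 examples and the majority class is cut from 200 to 90, giving the 3:1 ratio mentioned in the protocol. Because each synthetic point lies on a segment between two real actives, it stays inside the region the actives already occupy in descriptor space.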

Workflow for mitigating class imbalance via hybrid sampling.

Lack of Standardization

Standardization encompasses consistent molecular representation (e.g., tautomer, salt, stereochemistry handling) and feature scaling. Inconsistency here introduces systematic bias.

Protocol: Standardization Pipeline for Molecular Input

- Sanitization & Neutralization: Strip salts, remove solvent molecules, and neutralize charges where appropriate using toolkits like RDKit or OpenBabel.

- Tautomer Canonicalization: Apply a consistent tautomerization rule (e.g., CACTVS rules via RDKit) to ensure a single representative structure per molecule.

- Stereochemistry Handling: Explicitly define stereochemistry from 3D coordinates or remove it, documenting the choice.

- Descriptor Calculation (RDKit/Mordred): Generate a comprehensive set of molecular descriptors (2D/3D) from the standardized structures.