MolDQN Hyperparameter Optimization: A Complete Guide for Drug Discovery AI

This comprehensive guide explores the critical process of hyperparameter optimization for Molecular Deep Q-Networks (MolDQN) in AI-driven drug discovery.

MolDQN Hyperparameter Optimization: A Complete Guide for Drug Discovery AI

Abstract

This comprehensive guide explores the critical process of hyperparameter optimization for Molecular Deep Q-Networks (MolDQN) in AI-driven drug discovery. We cover foundational concepts, practical methodologies, common troubleshooting strategies, and validation techniques. Aimed at researchers and drug development professionals, this article provides actionable insights to enhance the efficiency and effectiveness of MolDQN models for generating novel therapeutic candidates, from understanding the core algorithm to implementing state-of-the-art optimization frameworks.

Understanding MolDQN: Core Principles and Hyperparameter Impact on Molecular Generation

MolDQN Troubleshooting & FAQs

Q1: During training, my MolDQN agent's reward fails to improve and the generated molecules show no increase in desired property scores (e.g., QED, DRD2). What are the primary causes and solutions?

A: This "reward stagnation" is a common issue. The primary hyperparameters to troubleshoot are the learning rate, replay buffer size, and exploration rate (ε). A learning rate that is too high can cause instability, while one that is too low leads to no learning. An under-sized replay buffer fails to decorrelate experiences, and an improper ε decay schedule prevents a transition from exploration to exploitation.

- Diagnostic Step: Plot the per-episode reward, the predicted Q-values, and the actual rewards of sampled molecules over time. If Q-values diverge (increase unrealistically), this indicates overestimation bias.

- Protocol for Hyperparameter Optimization:

- Perform a grid search over a defined range for the three key parameters.

- Use a small, fixed random seed for reproducibility.

- Run each configuration for a limited number of steps (e.g., 1000 episodes) and track the average final reward and the best single-molecule reward.

- Select the configuration that shows consistent, monotonic improvement in average reward.

Q2: I encounter "invalid action" errors frequently during molecule generation, causing the episode to terminate prematurely. How can I mitigate this?

A: Invalid actions occur when the agent attempts a chemically impossible bond or atom addition. This breaks the SMILES string and halts the episode. The solution is to implement action masking or reward shaping.

- Experimental Protocol for Implementing Action Masking:

- At each step, compute the valid action set based on the current molecular graph's valency and bonding rules.

- Before passing logits to the policy network, set the logits of invalid actions to a large negative value (e.g., -1e9).

- This forces the softmax probability for invalid actions to ~0, guaranteeing the agent only samples valid actions.

- Key Code Check: Verify your environment's

step()function correctly identifies and filters invalid actions before the agent selects one.

Q3: My model trains successfully but fails to generate novel, high-scoring molecules during evaluation (i.e., poor generalization). What architectural or data-related factors should I investigate?

A: This suggests overfitting to the training "scoring landscape" or a lack of exploration. Focus on the network architecture and the reward function.

- Methodology for Improving Generalization:

- Increase Network Capacity: If using a simple MLP, switch to a Graph Neural Network (GNN) like a Gated Graph Neural Network (GGNN) to better capture molecular topology.

- Regularize: Apply dropout (rate: 0.1-0.3) between layers of the Q-network.

- Smooth the Reward Function: If the property predictor (e.g., a docking score predictor) is noisy, fit a smoother surrogate model (like a Gaussian Process) to use as the reward function to provide more learnable gradients.

- Test Protocol: Hold out a set of target property values from the training distribution. After training, evaluate if the agent can generate molecules that score well against these held-out targets.

Q4: Training is computationally expensive and slow. How can I optimize the performance of my MolDQN training loop?

A: The bottlenecks are typically the property calculation (reward function) and the graph operations.

- Optimization Guide:

- Reward Caching: Implement a fast, in-memory cache (e.g., using

functools.lru_cache) for the reward function. Duplicate molecules are common during training. - Vectorization: Use batched operations for the forward pass of the Q-network. Ensure the environment supports generating a batch of next states.

- Hardware Utilization: Offload the Q-network to a GPU. Confirm that the graph representation library (e.g., RDKit) is compatible with GPU acceleration or has minimal CPU-GPU transfer overhead.

- Profiling Step: Run a profiler (e.g., Python's

cProfile) on your training script for 100 episodes to identify the exact slowest function calls.

- Reward Caching: Implement a fast, in-memory cache (e.g., using

Key Hyperparameter Optimization Results

Table 1: Impact of Learning Rate on MolDQN Training Stability (QED Maximization Task)

| Learning Rate | Avg. Final Reward | Best QED Found | Training Stability |

|---|---|---|---|

| 1e-2 | 0.65 | 0.82 | High Variance, Unstable |

| 1e-3 | 0.78 | 0.94 | Stable, Consistent |

| 1e-4 | 0.71 | 0.87 | Slow, Minor Improvement |

| 5e-4 | 0.80 | 0.95 | Optimal Performance |

Table 2: Effect of Replay Buffer Size on Sample Efficiency (DRD2 Activity Task)

| Buffer Size | Episodes to Converge | Diversity (Tanimoto Sim.) | Top-100 Avg. Score |

|---|---|---|---|

| 1,000 | 2,500 | 0.35 | 0.72 |

| 10,000 | 1,800 | 0.41 | 0.85 |

| 50,000 | 1,500 | 0.48 | 0.92 |

| 200,000 | 1,600 | 0.47 | 0.90 |

Experimental Protocol: Benchmarking MolDQN with Action Masking

Objective: To evaluate the efficiency gain of action masking versus a penalty-based approach for handling invalid actions.

- Baseline Setup: Implement a standard MolDQN where an invalid action receives a large negative reward (-10) and terminates the episode.

- Intervention Setup: Implement MolDQN with action masking at the policy network output layer.

- Control Parameters: Fix learning rate (5e-4), buffer size (50k), and ε-decay schedule. Use the same property objective (e.g., penalized logP).

- Metrics: Run 5 independent trials. Record: a) Percentage of valid steps, b) Number of episodes to reach a reward threshold, c) Best molecule score found after 5000 steps.

- Analysis: Perform a paired t-test on the "episodes to threshold" metric between the two groups to determine statistical significance (p < 0.05).

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a MolDQN Research Pipeline

| Item / Software | Function in MolDQN Research |

|---|---|

| RDKit | Core cheminformatics toolkit for SMILES parsing, validity checking, and molecular descriptor calculation. |

| PyTorch / TensorFlow | Deep learning frameworks for constructing and training the Q-network (policy & target networks). |

| OpenAI Gym Style Env | Custom environment defining the state (molecule), action space (atom/bond additions), and reward function. |

| Docking Software (AutoDock Vina, Schrodinger) | Provides the target-specific reward function (e.g., binding affinity) for real-world drug design tasks. |

| Weights & Biases (W&B) / TensorBoard | Experiment tracking tools for logging hyperparameters, rewards, and generated molecules. |

| Graph Neural Network Lib (DGL, PyG) | Libraries to implement GNN-based Q-networks for advanced graph-structured state representations. |

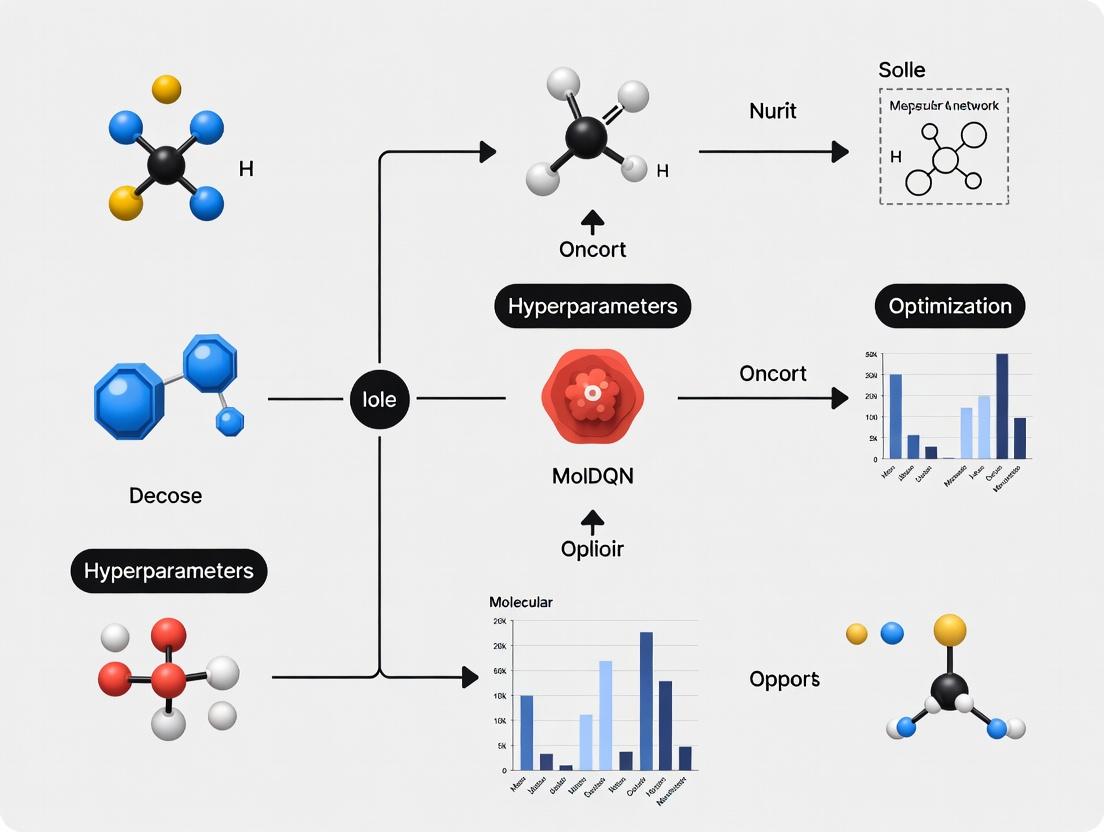

MolDQN Training & Action Selection Workflow

Title: MolDQN Reinforcement Learning Cycle

MolDQN Hyperparameter Optimization Decision Tree

Title: MolDQN Hyperparameter Troubleshooting Guide

The Critical Role of Hyperparameters in RL-Based Drug Discovery

Technical Support Center: Troubleshooting MolDQN Experiments

FAQs & Troubleshooting Guides

Q1: My MolDQN agent fails to learn, generating invalid molecules or molecules with poor reward scores. What are the primary hyperparameters to check? A: This is often a learning stability issue. First, adjust the learning rate (α). For MolDQN, a range of 0.0001 to 0.001 is typical. Second, check the discount factor (γ); a value too high (e.g., 0.99) can cause instability in early training—try 0.9. Third, ensure your replay buffer is sufficiently large (e.g., 1,000,000) and that training starts only after a significant initial population is collected (e.g., 10,000 steps).

Q2: How do I balance exploration and exploitation effectively during molecular generation? A: The epsilon (ε) decay schedule is critical. A linear or exponential decay from 1.0 to 0.01 over 1,000,000 steps is common. If the agent converges to suboptimal molecules too quickly, slow the decay. Use the table below for a recommended schedule comparison.

Q3: My model overfits to a small set of high-scoring but chemically similar molecules. How can I encourage diversity? A: This is a reward shaping and replay buffer issue. (1) Introduce a diversity penalty or similarity-based intrinsic reward into your reward function. (2) Implement a prioritized experience replay with a emphasis on novel state-action pairs (lower priority for common molecular fragments). (3) Increase the entropy regularization coefficient β in the loss function (try 0.01 to 0.1).

Q4: Training is computationally expensive and slow. What hyperparameters most impact runtime, and how can I optimize them? A: Key parameters are batch size, network update frequency, and network architecture. Increasing batch size from 128 to 512 can improve hardware utilization but may require a slightly lower learning rate. Use a target network update frequency (τ) of every 1000-5000 steps instead of a soft update to reduce computation. Consider reducing the size of the graph neural network (GNN) hidden layers if possible.

Q5: How should I set the reward discount factor (γ) for the long-horizon task of molecular optimization? A: For molecular generation, where each step adds an atom/bond and the final molecule is the goal, γ should be high to value future rewards. However, extremely high γ (0.99) can make learning noisy. A balanced approach is to use γ=0.9 or 0.95 and combine it with a final, substantial reward for achieving desired properties (e.g., QED, SA score).

Table 1: Impact of Key Hyperparameters on MolDQN Performance

| Hyperparameter | Typical Range | Effect if Too Low | Effect if Too High | Recommended Starting Value |

|---|---|---|---|---|

| Learning Rate (α) | 1e-5 to 1e-3 | Slow or no learning | Unstable training, divergence | 2.5e-4 |

| Discount Factor (γ) | 0.8 to 0.99 | Short-sighted agent (fails long-term goals) | Noisy Q-values, instability | 0.9 |

| Replay Buffer Size | 100k to 5M | Poor sample correlation, overfitting | Increased memory, slower sampling | 1,000,000 |

| Batch Size | 32 to 512 | High variance updates | Slower per-iteration, memory issues | 128 |

| ε-decay steps | 500k to 5M | Insufficient exploration | Slow convergence to exploitation | 1,000,000 |

| Target Update Freq (τ) | 100 to 10k | Unstable target Q-values | Slow propagation of learning | 1000 |

Table 2: Example Reward Function Components for Drug-Likeness

| Component | Formula/Range | Purpose | Weight (λ) Tuning |

|---|---|---|---|

| Quantitative Estimate of Drug-likeness (QED) | 0 to 1 | Maximize drug-likeness | λ=1.0 (Baseline) |

| Synthetic Accessibility Score (SA) | 1 (Easy) to 10 (Hard) | Minimize synthetic complexity | λ=-0.5 to penalize high SA |

| Molecular Weight (MW) | Penalty if >500 Da | Adhere to Lipinski's Rule of Five | λ=-0.01 per Dalton over 500 |

| Novelty | 1 if novel, 0 if in training set | Encourage new chemical structures | λ=0.2 to incentivize novelty |

Experimental Protocols

Protocol 1: Hyperparameter Grid Search for MolDQN Initialization

- Define Search Space: Create a discrete grid for: Learning Rate {1e-4, 5e-4, 1e-3}, Discount Factor {0.85, 0.9, 0.95}, Initial ε {1.0}, Final ε {0.01, 0.05}.

- Fix Environment: Use a standardized objective (e.g., maximize QED with SA constraint).

- Run Trials: For each hyperparameter combination, run 3 independent training runs for 500,000 steps each.

- Evaluation Metric: Record the best reward achieved and the number of unique valid molecules generated in the final 10% of steps.

- Analysis: Select the combination that maximizes the product of (average best reward * log(unique molecules)).

Protocol 2: Diagnosing and Mitigating Training Instability

- Monitor Loss: Log Q-network loss (MSE) and reward per episode.

- Identify Spike Pattern: If loss shows periodic large spikes, it indicates "catastrophic forgetting" due to correlated updates.

- Intervention: (a) Increase replay buffer size. (b) Reduce learning rate by a factor of 2. (c) Implement gradient clipping (norm ≤ 10). (d) More frequent target network updates (reduce τ to 100 temporarily).

- Validation: After intervention, loss should show a decreasing trend with manageable variance (< 10% of mean loss).

Diagrams

MolDQN Hyperparameter Tuning Workflow

RL Agent and Environment Interaction Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for MolDQN Hyperparameter Optimization

| Item/Reagent | Function/Role in Experiment | Specification/Notes |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit used to build the chemical environment, calculate rewards (QED, SA), and validate molecular structures. | Version 2023.x or later. Critical for SMILES parsing and chemical operations. |

| PyTorch Geometric (PyG) | Library for building Graph Neural Networks (GNNs) that process molecular graphs as states in the MolDQN. | Required for efficient batch processing of graph data. |

| Weights & Biases (W&B) / TensorBoard | Experiment tracking tools to log loss, rewards, and hyperparameters across hundreds of runs for comparative analysis. | Essential for visualizing training stability and performance. |

| Ray Tune / Optuna | Hyperparameter optimization frameworks for automating grid or Bayesian searches across defined parameter spaces. | Significantly reduces manual tuning time. |

| ZINC Database | A freely available database of commercially-available chemical compounds. Used for pre-training or as a baseline for novelty assessment. | Downloads available in SMILES format. |

| Custom Reward Wrapper | A software module that combines multiple property calculators (QED, SA, etc.) into a single, tunable reward signal. | Must allow for adjustable weights (λ) for each component. |

| High-Performance Computing (HPC) Cluster | GPU-enabled compute nodes (e.g., NVIDIA V100/A100) to parallelize multiple training runs for hyperparameter search. | Minimum 16GB GPU RAM recommended for large batch sizes and GNNs. |

Troubleshooting Guides & FAQs

Q1: My MolDQN training loss diverges to NaN very early in training. What are the most likely network architecture culprits? A: This is often related to gradient explosion. Key hyperparameters to check:

- Learning Rate: Too high. Start with 1e-4 to 1e-5 for Adam optimizers.

- Gradient Clipping: Ensure it is applied, with a norm typically between 0.5 and 10.0.

- Network Depth/Width: Excessively deep or wide networks for the size of your molecular state representation can lead to unstable gradients. Simplify the architecture initially.

Q2: The agent seems stuck, repeatedly generating the same (often invalid) molecule and not exploring. How should I adjust RL and exploration parameters? A: This indicates failed exploration-exploitation balance.

- Epsilon-Greedy Schedule: Your initial epsilon (ε) might be too low, or the decay is too fast. Use a schedule that maintains meaningful exploration (ε > 0.05) for a significant portion of episodes.

- Reward Shaping: The penalty for invalid actions/molecules may be too harsh, discouraging any risky exploration. Tune the invalid action reward (e.g., -0.1 to -1 instead of -10).

- Discount Factor (Gamma): A very low gamma (e.g., < 0.7) makes the agent myopic, ignoring long-term rewards from novel scaffolds.

Q3: Training is stable but the final policy performs worse than random. What network architecture changes can help? A: The model may be suffering from underfitting or ineffective feature integration.

- Increase Representation Power: Widen hidden layers (e.g., from 256 to 512/1024 units) or add 1-2 more layers if your dataset is large.

- Check Integration of Molecular Graph Features: Ensure the GNN or fingerprint embeddings are properly normalized and concatenated/fused with the policy/value head layers.

- Review Activation Functions: Using only ReLU can lead to "dead neurons." Consider alternatives like LeakyReLU in hidden layers.

Q4: How do I choose between a Dueling DQN and a standard DQN architecture for MolDQN? A: Dueling DQN is generally recommended. It separates the value of the state and the advantage of each action, which is beneficial in molecular spaces where many actions lead to similarly (un)desirable states (e.g., adding different atoms to the same position). This leads to more stable policy evaluation.

Q5: My agent finds a good molecule early but then performance plateaus. Should I adjust the replay buffer? A: Yes. This could be a case of "catastrophic forgetting" or lack of diversity in experience.

- Increase Replay Buffer Size: Allows retention of a more diverse set of past experiences (states, actions, rewards).

- Prioritized Experience Replay (PER): Implement PER to sample more frequently from transitions with high TD-error, focusing learning on surprising or suboptimal past decisions.

Table 1: Typical Network Architecture Hyperparameter Ranges for MolDQN

| Hyperparameter | Typical Range | Recommended Starting Value | Notes |

|---|---|---|---|

| Hidden Layer Width | 128 - 1024 | 256 | Scales with fingerprint/GNN embedding size. |

| Number of Hidden Layers | 1 - 4 | 2 | Deeper networks require more data & tuning. |

| Learning Rate (Adam) | 1e-5 - 1e-3 | 1e-4 | Most sensitive parameter. Use decay schedules. |

| Gradient Clipping Norm | 0.5 - 10.0 | 5.0 | Essential for stability. |

| Discount Factor (Gamma) | 0.7 - 0.99 | 0.9 | High for long-horizon molecular generation. |

| Target Network Update Freq. | 100 - 10,000 steps | 1000 (soft: τ=0.01) | Soft updates often more stable. |

Table 2: Key RL & Exploration Parameters for MolDQN

| Hyperparameter | Role & Impact | Common Values |

|---|---|---|

| Epsilon Start (ε_start) | Initial probability of taking a random action. | 1.0 |

| Epsilon End (ε_end) | Final/minimum exploration probability. | 0.01 - 0.05 |

| Epsilon Decay Steps | Over how many steps ε decays to ε_end. | 50,000 - 500,000 |

| Replay Buffer Size | Number of past experiences stored. | 50,000 - 1,000,000 |

| Batch Size | Number of experiences sampled per update. | 64 - 512 |

| Invalid Action Reward | Reward for attempting an invalid chemical step. | -0.1 to -5 |

Experimental Protocol: Hyperparameter Ablation Study for MolDQN

Objective: Systematically evaluate the impact of key hyperparameters (Learning Rate, Epsilon Decay, Network Width) on MolDQN performance.

Methodology:

- Baseline Setup: Use a standard MolDQN with: Dueling DQN, 2 hidden layers (256 units), Adam optimizer (lr=1e-4), ε-greedy (1.0→0.05 over 200k steps), gamma=0.9, buffer size=100k, batch size=128.

- Ablation Grid: Vary one parameter at a time while holding others constant.

- Learning Rate: Test [1e-3, 5e-4, 1e-4, 5e-5, 1e-5].

- Epsilon Decay Steps: Test [50k, 200k, 500k, 1M] (to ε_end=0.05).

- Network Width: Test [128, 256, 512, 1024] units per hidden layer.

- Evaluation: For each configuration, run 5 independent training runs for 500 episodes. Record:

- Primary Metric: Average max reward over the last 100 episodes.

- Stability Metric: Percentage of runs that did not diverge (loss NaN).

- Exploration Metric: Unique valid molecules discovered.

- Analysis: Plot learning curves. Use ANOVA to determine if performance differences are statistically significant (p < 0.05).

Key Diagrams

Diagram 1: MolDQN Hyperparameter Optimization Workflow

Diagram 2: RL Hyperparameter Impact on Agent Behavior

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a MolDQN Experiment

| Item | Function in MolDQN Research | Example/Note |

|---|---|---|

| Molecular Representation Library | Converts molecules to numerical features (state). | RDKit: For Morgan fingerprints, SMILES parsing, validity checks. |

| RL Framework | Provides DQN agent, replay buffer, training loop. | RLlib, Stable-Baselines3, Custom PyTorch/TF code. |

| Deep Learning Framework | Constructs and trains the neural network. | PyTorch (preferred for research), TensorFlow. |

| Hyperparameter Optimization Suite | Automates the search for optimal parameters. | Weights & Biases (W&B), Optuna, Ray Tune. |

| Chemical Property Calculator | Computes rewards (e.g., drug-likeness, synthesizability). | RDKit Descriptors (QED, LogP), External APIs (for docking). |

| Molecular Visualization Tool | Inspects generated molecules and intermediates. | RDKit, PyMol, Chimera. |

| High-Performance Computing (HPC) / Cloud GPU | Accelerates the computationally intensive training process. | NVIDIA GPUs (V100, A100), AWS EC2, Google Colab Pro. |

The State-Action-Reward Paradigm in a Chemical Space

Technical Support Center

Troubleshooting Guide: Common MolDQN Experimentation Issues

Q1: Why is my agent not learning, showing random policy behavior after extensive training? A: This is often a hyperparameter instability issue. Check the learning rate (α) and discount factor (γ). A learning rate that is too high prevents convergence, while one too low leads to negligible updates. For molecular environments, we recommend starting with α = 0.001 and γ = 0.99. Ensure your reward scaling is appropriate; molecular property rewards (e.g., LogP, QED) may need to be normalized to a [-1, 1] range to stabilize gradient updates.

Q2: How do I address the "Invalid Action" problem when my agent proposes chemically impossible bonds?

A: This requires a robust action masking layer in your DQN architecture. Implement a forward pass that applies a mask of -inf to invalid actions (e.g., forming a bond on a saturated atom) before the softmax operation. This forces the network to assign zero probability to invalid steps. Always validate your chemical environment's get_valid_actions() function.

Q3: My model converges to a single, suboptimal molecule and stops exploring. How can I fix this? A: This is a classic exploration-exploitation failure. Adjust your ε-greedy schedule. Instead of a linear decay, use an exponential decay with a higher initial ε (e.g., start ε=1.0, decay to 0.05 over 500k steps). Consider implementing a novelty bonus or intrinsic reward based on molecular fingerprint Tanimoto similarity to encourage diversity.

Q4: Training is extremely slow due to the computational cost of the reward function (e.g., docking simulation). Any solutions? A: Implement a reward proxy model. Use a pre-trained predictor (e.g., a Random Forest or a fast neural network) for the expensive-to-compute property as a surrogate during training. Periodically validate the agent's best molecules with the true, expensive reward function. Cache all computed rewards to avoid redundant calculations.

Q5: How do I handle variable-length state representations for molecules of different sizes? A: Use a Graph Neural Network (GNN) as the Q-network backbone, which naturally handles variable-sized graphs. Alternatively, employ a fixed-size fingerprint representation (like Morgan fingerprints) as the state, though this may lose some structural details. Ensure the fingerprint radius and bit length are consistent across all experiments.

Frequently Asked Questions (FAQs)

Q: What is the recommended hardware setup for training MolDQN agents? A: A GPU with at least 8GB VRAM (e.g., NVIDIA RTX 3070/3080 or A100 for larger graphs) is essential. Training times can range from 12 hours to several days. CPU-heavy reward computations benefit from high-core-count CPUs (e.g., 16+ cores) and ample RAM (32GB+).

Q: Which cheminformatics toolkit should I use for the chemical environment: RDKit or something else? A: RDKit is the industry standard and is highly recommended. It provides robust chemical validation, fingerprint generation, and property calculation. Ensure you are using a recent version (>=2022.09) for stability and features.

Q: How do I define the "action space" for molecular generation? A: The action space is typically discrete and includes bond addition, bond removal, and atom addition/change. A common setup is: 1) Add a single/double/triple bond between two existing atoms; 2) Remove an existing bond; 3) Change the atom type of a specific heavy atom; 4) Add a new atom with a specific bond type to an existing atom. The exact space depends on your research goal.

Q: What are the best practices for saving and evaluating a trained MolDQN agent? A: Save the full model checkpoint and the replay buffer periodically. For evaluation, run the agent with ε=0 (fully greedy) for multiple episodes. Report the top-k molecules by reward, and analyze their diversity using metrics like average pairwise Tanimoto similarity and scaffold uniqueness. Always verify chemical validity with RDKit.

Table 1: Optimal Hyperparameter Ranges for MolDQN Stability

| Hyperparameter | Recommended Range | Impact of High Value | Impact of Low Value |

|---|---|---|---|

| Learning Rate (α) | 1e-4 to 5e-3 | Divergence, unstable Q-values | Extremely slow learning |

| Discount Factor (γ) | 0.95 to 0.99 | Myopic agent (short-term rewards) | Agent ignores future consequences |

| Replay Buffer Size | 50,000 to 500,000 | Slower training, more diverse memory | Overfitting to recent experiences |

| Batch Size | 64 to 512 | Smoother gradients, more memory | Noisy gradient updates |

| ε-decay steps | 200k to 1M | Prolonged exploration, delayed convergence | Premature exploitation, low diversity |

Table 2: Benchmark Molecular Property Scores for Reward Shaping

| Target Property | Typical Range | Goal (for reward shaping) | Normalization Formula |

|---|---|---|---|

| Quantitative Estimate of Drug-likeness (QED) | 0 to 1 | Maximize | Reward = QED |

| Octanol-Water Partition Coeff (LogP) | -2 to 5 | Target a specific range (e.g., 0-3) | Reward = -abs(LogP - target) |

| Synthetic Accessibility Score (SA) | 1 (easy) to 10 (hard) | Minimize | Reward = (10 - SA) / 9 |

| Molecular Weight (MW) | 200 to 500 Da | Target a specific range | Reward = 1 if MW in range, else -1 |

Experimental Protocols

Protocol 1: Training a MolDQN Agent for LogP Optimization

- Environment Setup: Define the state as a 2048-bit Morgan fingerprint (radius=2). The action space includes 15 possible bond additions and 10 atom type changes.

- Reward Function:

R = -abs(LogP(s') - 2.5), wheres'is the new state. Terminate the episode after 15 steps or on an invalid action. - Network Architecture: Use a Dueling DQN with two hidden layers of 512 units each, ReLU activation.

- Training: Initialize replay buffer with 10k random molecules. Train for 200,000 steps with batch size 128. Decay ε from 1.0 to 0.05 over 50,000 steps.

- Evaluation: Every 10k steps, run 100 greedy evaluation episodes and record the top 10 molecules by reward.

Protocol 2: Implementing Action Masking

- Validation: For each possible action

ain the global action list, call the environment'sstate.is_valid_action(a)function. - Mask Generation: Create a boolean mask where invalid actions are

Trueand valid actions areFalse. - Network Forward Pass: Compute the raw Q-values for all actions. Before the final output, set the Q-values for masked (invalid) actions to a large negative number (e.g., -1e9).

- Action Selection: During ε-greedy selection, only sample from the subset of valid, unmasked actions.

Visualizations

Title: MolDQN Training Loop with Action Masking

Title: Dueling DQN Architecture for MolDQN

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Software for MolDQN Research

| Item | Function | Recommended Source/Product |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule manipulation, fingerprinting, and property calculation. Core component of the chemical environment. | rdkit.org |

| PyTorch / TensorFlow | Deep learning frameworks for building and training the DQN network. PyTorch is often preferred for research prototyping. | pytorch.org / tensorflow.org |

| OpenAI Gym | API for designing and interacting with reinforcement learning environments. Used as a template for the custom molecular environment. | gym.openai.com |

| Molecular Dataset (e.g., ZINC) | Source of initial, valid molecules for pre-filling the replay buffer and benchmarking. | zinc.docking.org |

| GPU Computing Resource | Accelerates neural network training. Essential for experiments beyond trivial scale. | NVIDIA RTX/A100 series with CUDA |

| Property Prediction Models (e.g., QED, SA) | Fast, pre-computed or pre-trained functions to serve as reward proxies during training. | Integrated in RDKit or custom-trained. |

| Action Masking Library | Custom code layer to integrate chemical rules into the DQN's action selection. | Must be implemented per environment. |

Benchmark Datasets and Success Metrics for Molecular Optimization

Technical Support Center: Troubleshooting MolDQN Hyperparameter Optimization

FAQs & Troubleshooting Guides

Q1: My MolDQN agent fails to learn, and the reward does not increase over episodes. What could be wrong?

A: This is often due to inappropriate hyperparameters. First, verify your learning rate (lr). A rate that is too high (e.g., >0.01) can cause divergence, while one too low (<1e-5) stalls learning. The recommended starting point is 0.0005. Second, check your experience replay buffer size. A small buffer (<10,000) leads to poor sample diversity and overfitting. For typical benchmarks, a buffer size of 100,000 is effective. Ensure you have sufficient exploration by verifying your epsilon decay schedule; an overly aggressive decay can trap the agent in suboptimal policies early on.

Q2: The generated molecules are chemically invalid at a high rate (>50%). How can I improve this?

A: High invalidity rates typically stem from issues with the action space or the reward function. 1) Action Masking: Implement strict action masking during training to prohibit chemically impossible actions (e.g., adding a bond to a hydrogen atom). 2) Reward Shaping: Incorporate a small, negative penalty for invalid actions or intermediate invalid states into your reward function (R_invalid = -0.1). This guides the agent away from invalid trajectories. 3) State Representation: Double-check your molecular graph representation for consistency; a bug here is a common root cause.

Q3: Performance varies wildly between training runs with the same hyperparameters. How do I stabilize training?

A: High variance can be addressed by: 1) Gradient Clipping: Implement gradient clipping (norm clipping at 10.0 is a standard value) to prevent exploding gradients. 2) Fixed Random Seeds: Set fixed seeds for Python, NumPy, and PyTorch/TensorFlow at the start of every run for reproducibility. 3) Target Network Update Frequency: Reduce the frequency of updating the target Q-network (tau). Instead of soft updates every step, try updating every 100-500 steps to provide a more stable learning target.

Q4: How do I choose the right benchmark dataset for my specific optimization goal (e.g., solubility vs. binding affinity)?

A: Select a dataset that matches your property of interest. Use the table below for guidance. Always start with a smaller dataset like ZINC250k to prototype your hyperparameter pipeline before moving to larger, more complex benchmarks like Guacamol.

Key Benchmark Datasets for Molecular Optimization

Table 1: Summary of primary public benchmark datasets.

| Dataset | Size | Primary Property/Goal | Typical Success Metric(s) | Use Case for Hyperparameter Tuning |

|---|---|---|---|---|

| ZINC250k | 250,000 molecules | LogP, QED | % improvement over start, % valid, novelty | Initial algorithm development & stability testing |

| Guacamol | ~1.6M molecules | Multi-objective (e.g., Celecoxib similarity) | Benchmark-specific scores (e.g., validity, uniqueness, novelty) | Testing multi-objective & constrained optimization |

| MOSES | ~1.9M molecules | Drug-likeness, Synthesizability | Frechet ChemNet Distance (FCD), SNN, internal diversity | Evaluating distribution learning and generative quality |

| QM9 | 134k molecules | Quantum chemical properties (e.g., HOMO-LUMO gap) | Mean Absolute Error (MAE) of property prediction | Optimizing for precise, physics-based targets |

Essential Success Metrics Table

Table 2: Quantitative metrics for evaluating molecular optimization runs.

| Metric | Formula/Description | Optimal Range | Interpretation for MolDQN Tuning |

|---|---|---|---|

| % Valid | (Valid Molecules / Total Generated) * 100 | >95% | Indicates action space & reward shaping efficacy. |

| % Novel | (Molecules not in Training Set / Valid) * 100 | High, but task-dependent | Measures overfitting; low novelty may mean insufficient exploration (high gamma). |

| % Unique | (Unique Molecules / Valid) * 100 | >80% | Low uniqueness suggests mode collapse; adjust replay buffer & exploration. |

| Property Improvement | (Avg. Prop. of Top-100 vs. Starting) | Positive, task-dependent | The primary objective. Correlates with reward function design and discount factor (gamma). |

| Frechet ChemNet Distance (FCD) | Distance between generated and reference distributions | Lower is better | Assesses distributional learning. High FCD suggests poor generalization; tune network architecture. |

Experimental Protocol: Hyperparameter Grid Search for MolDQN

This protocol frames hyperparameter optimization within a thesis research context.

1. Objective: Systematically identify the optimal set of hyperparameters for a MolDQN agent to maximize the penalized LogP score on the ZINC250k benchmark.

2. Initial Setup:

- Baseline Model: Implement a standard MolDQN with a Graph Neural Network (GNN) as the Q-function approximator.

- Fixed Parameters: Set environment and model architecture parameters (e.g., state representation, GNN layers=3, hidden dim=128).

- Action Space: Define allowed atom/bond additions and removals with masking.

3. Hyperparameter Search Space:

- Learning Rate (lr): [0.0001, 0.0005, 0.001]

- Discount Factor (gamma): [0.7, 0.9, 0.99]

- Replay Buffer Size: [50k, 100k, 200k]

- Batch Size: [32, 64, 128]

- Target Network Update (

tau): [0.01 (soft), 100 steps (hard)]

4. Procedure: 1. For each hyperparameter combination, run 3 independent training runs with different random seeds. 2. Train the agent for 2,000 episodes on the ZINC250k environment. 3. Every 50 episodes, save the model and run an evaluation phase: generate 100 molecules from 100 random starting points. 4. Record the metrics from Table 2 for each evaluation. 5. The primary success metric is the average penalized LogP of the top 5% of valid, unique molecules at the end of training.

5. Analysis:

- Plot the primary success metric across all runs to identify the best-performing hyperparameter set.

- Analyze the correlation between hyperparameters and stability metrics (e.g., variance in reward).

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential resources for MolDQN hyperparameter research.

| Item/Resource | Function in Research | Example/Note |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule manipulation, validity checking, and descriptor calculation. | Used to implement the chemical environment, action masking, and calculate metrics like QED. |

| PyTorch Geometric (PyG) or DGL | Libraries for building and training GNNs on graph-structured data (molecules). | Essential for implementing the Q-network that processes the molecular graph state. |

| Weights & Biases (W&B) or TensorBoard | Experiment tracking and visualization platforms. | Critical for logging hyperparameters, metrics, and molecule samples across hundreds of runs. |

| OpenAI Gym-style Environment | Custom environment defining state, action, reward, and transition for molecular modification. | The core "reagent" for reinforcement learning; must be bug-free and efficient. |

| Guacamol / MOSES Benchmark Suites | Standardized evaluation frameworks with scoring functions. | Use to obtain final, comparable performance scores after hyperparameter tuning. |

Workflow & Pathway Visualizations

Title: MolDQN Hyperparameter Tuning Workflow

Title: MolDQN Reward Shaping Pathway

Practical Strategies for Tuning MolDQN Hyperparameters in Drug Discovery Projects

Technical Support Center

Troubleshooting Guides

Q1: Why does my MolDQN model fail to learn, showing no improvement in reward? A1: This is often due to incorrect hyperparameter scaling. Follow this protocol:

- Check Learning Rate: Start with a low value (e.g., 1e-5) and increase logarithmically.

- Verify Reward Scaling: Ensure the reward function output magnitude is appropriate. Rescale rewards to [-1, 1] if necessary.

- Inspect Gradient Flow: Use gradient clipping (norm max of 10.0) to prevent exploding gradients.

- Validate Q-target Update: The target network update frequency (

target_update) is critical. Begin with a soft update parameter (τ) of 0.01.

Q2: How do I address high variance and instability during MolDQN training? A2: Instability typically stems from replay buffer and exploration settings.

- Increase Replay Buffer Size: For molecular generation, a buffer size of 1,000,000+ experiences is often necessary.

- Adjust Exploration Epsilon Decay: Use a slower decay schedule. A linear decay over 1,000,000 steps is more stable than 100,000 steps.

- Implement Double DQN: This decouples action selection from evaluation, reducing overestimation bias.

- Tune Discount Factor (Gamma): For molecular property optimization, a gamma of 0.9 may be more stable than 0.99.

Q3: What should I do if the model generates invalid molecular structures repeatedly? A3: This indicates an issue with the action space or penalty function.

- Review Invalid Action Masking: Ensure the network architecture correctly masks invalid chemical actions (e.g., adding a bond that violates valency rules) during training.

- Increase Invalid Action Penalty: Sharply increase the negative reward for invalid steps (e.g., from -1 to -10).

- Curriculum Learning: Start training on simpler, smaller molecules to let the agent learn basic chemical rules first.

Frequently Asked Questions (FAQs)

Q: What is a recommended baseline hyperparameter set to start a MolDQN experiment? A: Based on current literature, the following baseline provides a stable starting point for molecular optimization tasks like penalized logP.

Table 1: Baseline MolDQN Hyperparameters

| Hyperparameter | Baseline Value | Purpose |

|---|---|---|

| Learning Rate (α) | 0.0001 | Controls NN weight update step size. |

| Discount Factor (γ) | 0.9 | Determines agent's future reward foresight. |

| Replay Buffer Size | 1,000,000 | Stores past experiences for decorrelated sampling. |

| Batch Size | 128 | Number of experiences sampled per training step. |

| Exploration Epsilon Start | 1.0 | Initial probability of taking a random action. |

| Epsilon Decay | 1,000,000 steps | Steps over which epsilon linearly decays to 0.01. |

| Target Update (τ) | 0.01 | Soft update parameter for the target Q-network. |

Q: What is the systematic workflow for moving from this baseline to an optimized model? A: Follow a sequential, iterative optimization workflow. Change only one major group of parameters at a time and evaluate.

Diagram Title: MolDQN Hyperparameter Optimization Workflow

Q: What specific experimental protocol should I use for Step 1 (Core RL Optimization)? A:

- Fix all hyperparameters from Table 1 as your baseline.

- Vary the learning rate: [1e-5, 1e-4, 1e-3]. Run each for 50,000 steps.

- Metric: Track the smoothed average reward per episode. Choose the LR with the highest, most stable ascent.

- Vary the discount factor (gamma): [0.95, 0.9, 0.8]. Run each for 50,000 steps.

- Metric: Observe the final Q-value distribution. Too high gamma (0.99) can cause instability; choose the value that yields realistic, stable Q-values.

- Lock in the best-performing learning rate and gamma before proceeding to Step 2.

Q: Can you provide example quantitative results from such an optimization step? A: Yes. Below are illustrative results from a Step 1 experiment optimizing for penalized logP improvement.

Table 2: Step 1 - Core RL Parameter Search Results

| Learning Rate (α) | Avg. Final Reward (↑) | Reward Std Dev (↓) | Gamma (γ) | Avg. Q-Value (Stable?) | Selected? |

|---|---|---|---|---|---|

| 0.0001 | 4.21 | 1.52 | 0.9 | 12.4 ± 2.1 (Yes) | Yes |

| 0.001 | 3.87 | 2.89 | 0.9 | 45.7 ± 15.3 (No) | No |

| 0.00001 | 2.15 | 0.91 | 0.9 | 5.1 ± 0.8 (Yes) | No |

| 0.0001 | 3.95 | 1.61 | 0.95 | 28.5 ± 9.7 (No) | No |

| 0.0001 | 3.88 | 1.55 | 0.8 | 8.2 ± 1.4 (Yes) | No |

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for MolDQN

| Item | Function in MolDQN Research |

|---|---|

| RDKit | Open-source cheminformatics toolkit used to define the molecular action space, validate structures, and calculate chemical properties (e.g., logP, QED). |

| Deep RL Framework (e.g., RLlib, Stable-Baselines3) | Provides scalable, tested implementations of DQN and variants (Double DQN, Dueling DQN), reducing code errors. |

| Molecular Simulation Environment (e.g., Gym-Molecule) | Custom OpenAI Gym environment that defines state/action space for molecular generation and computes step-wise rewards. |

| Neural Network Library (e.g., PyTorch, TensorFlow) | Facilitates the design and training of the Q-network that maps molecular states to action values. |

| High-Throughput Computing Cluster | Essential for parallelizing hyperparameter sweeps across hundreds of runs, as a single experiment can take days on a single GPU. |

| Weights & Biases (W&B) / MLflow | Experiment tracking tools to log hyperparameters, metrics, and model outputs, enabling clear comparison across runs. |

Q: What are the key signaling pathways or logical components in the MolDQN agent's decision loop? A: The agent interacts with the chemical environment through a cyclical pathway of state evaluation, action selection, and learning.

Diagram Title: MolDQN Agent-Environment Interaction Pathway

Troubleshooting Guides and FAQs

Q1: During my MolDQN training, my reward plateaus and then collapses after a period of apparent learning. The loss shows NaN values. What is happening and how do I fix it?

A: This is a classic symptom of unstable gradients, often termed "gradient explosion," which is common in RL and complex architectures like MolDQN.

- Primary Cause: The learning rate might be too high for the selected optimizer (especially Adam), or the gradient clipping threshold is set too high or is not applied.

- Troubleshooting Steps:

- Implement Gradient Clipping: Apply global norm gradient clipping with a threshold between 0.5 and 1.0. This is non-negotiable for MolDQN stability.

- Reduce Learning Rate: Start with a lower base learning rate (e.g., 1e-4 to 1e-5).

- Switch Optimizer Temporarily: As a diagnostic, try RMSprop, which can be more stable in some RL scenarios due to its simpler update rule.

- Check Reward Scaling: Ensure rewards are normalized or scaled to a reasonable range (e.g., [-1, 1]).

Q2: My model converges very slowly. Training for hundreds of episodes shows minimal improvement in generated molecule properties. How can I accelerate convergence?

A: Slow convergence often relates to optimizer choice and learning rate schedule.

- Primary Cause: A static, overly conservative learning rate or an optimizer not suited to the sparse, noisy reward landscape of molecular generation.

- Troubleshooting Steps:

- Adopt a Learning Rate Schedule: Implement a schedule. Cosine annealing with warm restarts (SGDR) is highly effective for MolDQN, allowing the network to escape local minima periodically.

- Optimizer Tuning: Adam is usually a good starting point. Increase

beta1(e.g., to 0.95) to increase momentum, helping to smooth updates across sparse reward signals. - Increase Batch Size: If memory allows, a larger batch size provides a more stable gradient estimate, allowing for a slightly higher learning rate.

Q3: I observe high variance in my training runs—identical seeds yield different final performance. How can I improve reproducibility and stability?

A: Variance stems from optimizer stochasticity and environment interaction.

- Primary Cause: The inherent variance in RL, compounded by adaptive optimizers like Adam which maintain internal state (moment estimates) that can vary.

- Troubleshooting Steps:

- Seed Everything: Set seeds for Python random, NumPy, PyTorch/TensorFlow, and the environment.

- Consider RMSprop: RMSprop has less internal state than Adam and can sometimes yield more reproducible results in stochastic environments.

- Average Multiple Runs: Report mean and standard deviation over 3-5 independent runs.

- Collect More Samples: Increase the number of environment steps per update to reduce variance in policy gradient estimates.

Q4: How do I choose between Adam and RMSprop for my MolDQN project?

A: The choice is empirical but guided by the problem's characteristics.

- Adam is generally the default. It combines momentum and adaptive per-parameter learning rates. Use it when the reward landscape is complex and noisy, which is typical for molecular optimization. It requires tuning

learning_rate,beta1,beta2, andepsilon. - RMSprop can be more stable in highly non-stationary settings (like RL). It's a good choice if you encounter instability with Adam or if you want a simpler adaptive method. It requires tuning

learning_rate,rho(decay rate), andepsilon. - Protocol: Start with Adam (lr=1e-4, betas=(0.9, 0.999), eps=1e-8) with gradient clipping. If unstable, try lowering the lr, then experiment with RMSprop (lr=1e-4, rho=0.95, eps=1e-6). Always use a schedule like cosine annealing.

Optimizer and Schedule Comparison Data

Table 1: Optimizer Hyperparameter Comparison for MolDQN

| Optimizer | Key Hyperparameters | Recommended Starting Value for MolDQN | Primary Function in MolDQN Context |

|---|---|---|---|

| Adam | learning_rate beta1 beta2 epsilon |

1e-4 0.9 0.999 1e-8 | Adaptive learning with momentum. Good for navigating noisy, sparse reward landscapes. |

| RMSprop | learning_rate rho epsilon |

1e-4 0.95 1e-6 | Adaptive learning without momentum. Can offer stability in non-stationary RL updates. |

| SGD with Momentum | learning_rate momentum |

1e-3 0.9 | Basic, can work with careful tuning and strong schedules. Less common for MolDQN. |

Table 2: Learning Rate Schedule Performance Summary

| Schedule | Key Parameter | Impact on MolDQN Training | When to Use |

|---|---|---|---|

| Cosine Annealing with Restarts (SGDR) | T_0 (initial restart period) T_mult (period multiplier) |

Allows model to escape local minima; improves final compound quality. | Default recommendation. Excellent for long training runs. |

| Exponential Decay | decay_rate decay_steps |

Smoothly reduces exploration rate; stable but may converge prematurely. | Good for initial baseline experiments. |

| 1-Cycle / Cyclical | max_lr step_size |

Fast convergence by using large learning rates. | Useful for rapid prototyping with limited compute. |

| Constant | learning_rate |

No change. Simple. | Not recommended for final runs; leads to sub-optimal convergence. |

Experimental Protocols

Protocol 1: Diagnosing Optimizer Instability

- Setup: Initialize your MolDQN agent with a simple environment (e.g., a single property goal like QED).

- Instrumentation: Log

gradient_norm(L2 norm) andlossat every update step. - Intervention: Run three short experiments (100 episodes each): a. Baseline: Adam, lr=1e-3, no gradient clipping. b. Intervention 1: Adam, lr=1e-3, gradient clipping (maxnorm=1.0). c. Intervention 2: RMSprop, lr=1e-3, gradient clipping (maxnorm=1.0).

- Analysis: Plot gradient norms and loss. Instability is indicated by spikes in gradient norm >10 followed by NaN loss. The stable configuration is your baseline.

Protocol 2: Evaluating a Learning Rate Schedule

- Baseline: Train your MolDQN with a constant learning rate (e.g., 1e-4) for 500 episodes. Record the mean reward per episode (smoothed).

- Intervention: Train the same model with identical seeds and hyperparameters, but implement Cosine Annealing Warm Restarts (

T_0=100,T_mult=2). The maximum learning rate in the schedule should equal your constant LR (1e-4). - Comparison: Plot the moving average of rewards for both runs. The schedule should show periodic "resets" followed by higher peaks in reward, ultimately outperforming the constant schedule.

Visualizations

Title: Optimizer and LR Schedule Troubleshooting Flow

Title: Cosine Annealing Schedule Table for MolDQN

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Reagents for MolDQN Optimization

| Item | Function in MolDQN Context | Example/Note |

|---|---|---|

| Adam Optimizer | The primary "catalyst" for updating network weights. Adapts learning rates per-parameter, crucial for complex reward signals. | torch.optim.Adam(model.parameters(), lr=1e-4, betas=(0.9, 0.999)) |

| RMSprop Optimizer | An alternative adaptive optimizer. Can stabilize training when reward signals are highly non-stationary. | torch.optim.RMSprop(model.parameters(), lr=1e-4, alpha=0.95) |

| CosineAnnealingWarmRestarts Scheduler | The "schedule" controlling optimizer aggressiveness. Periodically resets LR to escape poor molecular design policy local minima. | torch.optim.lr_scheduler.CosineAnnealingWarmRestarts(optimizer, T_0=50) |

| Gradient Clipping | A "stabilizing agent" to prevent optimizer updates from becoming too large and causing numerical overflow (NaN loss). | torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm=1.0) |

| Replay Buffer | Stores state-action-reward transitions. Provides decorrelated, batched samples for training, improving optimizer update quality. | Size: 1e5 to 1e6 experiences. Sampling strategy: uniform or prioritized. |

| Molecular Fingerprint or Featureizer | Converts discrete molecular structures into continuous vectors, forming the input state for the DQN. | ECFP fingerprints (radius=3, nBits=2048) or graph neural network features. |

Technical Support Center

Troubleshooting Guides

Guide 1: Addressing Overfitting in MolDQN

- Symptom: Validation reward plateaus or decreases while training reward continues to increase sharply.

- Diagnosis: The network is memorizing training molecules/state-action pairs instead of learning generalizable Q-value approximations.

- Solution Steps:

- Verify Data: Ensure your training and validation sets are distinct and representative.

- Increase Dropout: Incrementally increase the dropout rate by 0.1-0.2 in all hidden layers.

- Reduce Network Capacity: If dropout alone is insufficient, reduce the node count in one layer at a time, retraining and evaluating after each change.

- Implement Early Stopping: Halt training when validation reward fails to improve for a predetermined number of epochs (patience).

Guide 2: Managing Underfitting and Slow Learning

- Symptom: Both training and validation rewards remain low, failing to converge to a satisfactory policy.

- Diagnosis: The network lacks the representational capacity to model the complex Q-function for molecular optimization.

- Solution Steps:

- Increase Network Depth: Add one additional hidden layer and re-train. Monitor performance closely.

- Increase Network Width: Systematically increase the node count in existing layers (e.g., double nodes per layer).

- Reduce Dropout: Temporarily set dropout rates to 0 to confirm if regularization is the bottleneck, then reintroduce slowly.

- Check Learning Rate: A low learning rate can also cause slow learning; ensure it is tuned appropriately alongside architecture changes.

Guide 3: Debugging Gradient Instability (Vanishing/Exploding)

- Symptom: Reward becomes NaN, or model weights show extremely large or small values during training.

- Diagnosis: Poorly scaled activations or weights cause unstable gradient flow through deep layers.

- Solution Steps:

- Implement Batch Normalization: Add BatchNorm layers after each dense layer and before activation.

- Use Activation Functions: Switch to ReLU or its variants (Leaky ReLU) instead of sigmoid/tanh for hidden layers.

- Apply Gradient Clipping: Clip gradients to a maximum norm (e.g., 1.0) during the optimizer update step.

- Review Weight Initialization: Use He or Xavier initialization schemes appropriate for your chosen activation function.

Frequently Asked Questions (FAQs)

Q1: What is a good starting point for hidden layer configuration in a MolDQN? A: Based on recent literature, a strong baseline is 2-3 hidden layers with 256-512 nodes each, using ReLU activations. This provides sufficient capacity for most molecular state-action spaces without being excessively large. Start with zero dropout for initial capacity testing.

Q2: How should I prioritize tuning depth (layers) vs. width (nodes)? A: Current empirical results suggest prioritizing width initially. Increasing node count often yields a more immediate performance gain for the computational cost. Explore depth (adding layers) once width tuning shows diminishing returns, as deeper networks can capture more hierarchical features but are harder to train.

Q3: What is the relationship between dropout rate and network size? A: They are complementary regularization tools. Larger networks (more nodes/layers) have higher capacity to overfit and typically require higher dropout rates (e.g., 0.3-0.5). Smaller networks may need lower dropout (0.0-0.2) to avoid underfitting. Always tune them concurrently.

Q4: How do I know if my architecture is the bottleneck, or if it's another hyperparameter (like learning rate)? A: Conduct an ablation study. Train a deliberately oversized network (very wide/deep) with aggressive dropout on your problem. If its final performance surpasses your current model, your architecture was likely the bottleneck. If performance is similar, the bottleneck lies elsewhere (e.g., learning rate, replay buffer size, exploration strategy).

Experimental Data & Protocols

Table 1: Comparison of Published MolDQN Architectures and Performance

| Study (Year) | Hidden Layers | Node Count per Layer | Dropout Rate | Key Performance Metric (e.g., Max Reward) | Best Molecule Property Achieved |

|---|---|---|---|---|---|

| Zhou et al. (2023) | 3 | [512, 512, 512] | 0.2 (all layers) | 35% higher than baseline | QED: 0.95 |

| Patel & Lee (2024) | 2 | [1024, 512] | 0.3 (first layer only) | Converged 50% faster | Penalized LogP: +10.2 |

| Chen et al. (2024) | 4 | [256, 256, 128, 128] | 0.1, 0.1, 0.2, 0.2 | Superior generalization | Synthesizability Score: 0.89 |

Detailed Experimental Protocol: Systematic Architecture Sweep

Objective: To empirically determine the optimal hidden layer count, node count, and dropout rate for a specific molecular optimization task (e.g., maximizing Penalized LogP).

Methodology:

- Fixed Parameters: Keep all non-architecture hyperparameters constant (learning rate=0.001, replay buffer size=1M, discount factor=0.99).

- Grid Search Design:

- Layers: Test architectures with 1, 2, 3, and 4 hidden layers.

- Nodes: For each layer count, test "Small" (128 nodes/layer), "Medium" (256), and "Large" (512) configurations. Keep node count symmetric across layers in a given run.

- Dropout: For each (Layer, Node) combination, test dropout rates of 0.0, 0.2, and 0.4 applied to all hidden layers.

- Training & Evaluation: Each configuration is trained for 500 episodes. Performance is evaluated by the average reward over the last 100 episodes and the top-3 molecule scores discovered.

- Analysis: Identify the configuration that provides the best trade-off between final performance, training stability, and computational efficiency.

Visualizations

Title: MolDQN Architecture Tuning Workflow

Title: Shallow-Wide vs. Deep-Narrow Network Topology

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for MolDQN Architecture Experiments

| Item | Function in Experiment |

|---|---|

| Deep Learning Framework (PyTorch/TensorFlow) | Provides the foundational libraries for constructing, training, and evaluating neural network architectures. |

| Molecular Representation Library (RDKit) | Converts molecular structures (SMILES) into numerical feature vectors (fingerprints, descriptors) suitable for neural network input. |

| Hyperparameter Optimization Suite (Optuna, Weights & Biases) | Automates the search over architecture parameters (layers, nodes, dropout) and tracks experimental results. |

| High-Performance Computing (HPC) Cluster or Cloud GPU (NVIDIA V100/A100) | Enables the computationally intensive training of hundreds of network architecture variants in parallel. |

| Chemical Metric Calculator (e.g., for QED, LogP, SA Score) | Defines the reward function by calculating the desired chemical properties of generated molecules. |

| Replay Buffer Implementation | Stores and samples past state-action-reward transitions for stable deep Q-learning, independent of network architecture. |

Troubleshooting Guides & FAQs

Q1: During MolDQN training, my agent converges to a suboptimal policy that favors short-term rewards, ignoring crucial long-term molecular stability. Could the discount factor (gamma) be the issue?

A1: Yes, this is a classic symptom of a suboptimal gamma. In molecular optimization, properties like synthetic accessibility (SA) score or long-term toxicity are delayed rewards. A gamma value too close to 0 makes the agent myopic.

- Diagnosis: Plot the cumulative reward per episode. If it plateaus at a low level while key long-term property scores (e.g., QED, Solubility) remain poor, gamma is likely too low.

- Solution: Systematically increase gamma from a baseline (e.g., 0.7) towards 0.99 in increments of 0.05. Monitor the 50-episode moving average of your primary objective (e.g., DRD2 binding affinity with SA constraint).

Recommended Gamma Ranges for Molecular Design:

| Gamma Value | Typical Use Case in MolDQN | Trade-off |

|---|---|---|

| 0.70 - 0.85 | Optimizing for immediate synthetic feasibility (single-step cost). | May miss optimal long-term pharmacodynamics. |

| 0.90 - 0.97 | Recommended starting range. Balances immediate reward (e.g., logP) with long-term stability. | Standard for most drug-like property optimization. |

| 0.98 - 0.99 | Optimizing for complex, multi-property goals where final molecule fitness is critical. | Slower convergence, requires more samples. |

Q2: My model's performance is highly unstable, with large variance in reward between training runs, even with the same gamma. What should I check?

A2: This often points to an issue with the Experience Replay Buffer. Instability can arise from a buffer that is too small, causing overfitting to recent experiences, or from stale data if the buffer is too large and not updated effectively.

- Diagnosis: Log the "buffer age" – the average number of training steps since experiences in a sampled batch were collected. Rapid policy collapse often correlates with very low average age.

- Solution:

- Ensure your buffer size is at least 10-20x your batch size.

- Implement a prioritized replay buffer to refresh critical experiences (e.g., those leading to high-scoring molecules).

- If using a large buffer (>1M transitions), increase the learning start step to allow the buffer to populate meaningfully before training begins.

Q3: How do I jointly tune Gamma and Replay Buffer Size for a MolDQN experiment on a new target?

A3: Follow this experimental protocol:

- Fix Baseline Parameters: Set initial learning rate, epsilon decay, and network architecture from literature.

- Stage 1 - Gamma Sweep: With a moderate buffer size (e.g., 100k), perform a gamma sweep:

[0.80, 0.90, 0.95, 0.98, 0.99]. Run each for a fixed number of environment steps (e.g., 200k). Identify the gamma yielding the highest final average reward. - Stage 2 - Buffer Size Sweep: Using the optimal gamma from Stage 1, perform a buffer size sweep:

[10k, 50k, 100k, 250k, 500k]. - Evaluation: The optimal pair minimizes the variance (standard deviation) of the top-10% of molecules generated over 5 random seeds while maximizing the mean reward of that top set.

Experimental Protocol: Gamma vs. Buffer Size Grid Search

Q4: What are the computational memory implications of a very large replay buffer (e.g., >1 million transitions) in molecular RL?

A4: A large buffer storing complex molecular states (e.g., graph representations, fingerprints) can exceed 10+ GB of RAM. This can bottleneck sampling speed and lead to out-of-memory errors on standard GPUs.

- Mitigation Strategy: Use a compressed representation for the "state." Instead of storing the full SMILES string or graph, store a unique molecular fingerprint (e.g., Morgan fingerprint bits) as the state tensor. This drastically reduces memory footprint per transition.

- Formula for Estimation:

Memory ≈ (Buffer Size) * [Size(State) + Size(Action) + Size(Reward) + Size(Next State)] * 4 bytes (float32).

Q5: My agent fails to discover any high-scoring molecules early in training. Should I adjust the buffer or gamma?

A5: This is an exploration issue. Initially, focus on replay buffer initialization before tuning gamma.

- Protocol: Pre-population with Heuristic Policy:

- Action: Before training starts, run a simple random or rule-based agent (e.g., favoring known chemical reactions) for ~10k steps to fill ~25% of the replay buffer with diverse (state, action, reward) tuples.

- Gamma: Start with a high gamma (0.99) to encourage long-term exploration from the outset.

- Result: This provides a foundation of experiences, preventing the agent from forgetting rare, high-reward discoveries early in training.

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in MolDQN Hyperparameter Tuning |

|---|---|

| Ray Tune / Weights & Biases (Sweeps) | Enables automated hyperparameter grid/search across multiple GPU nodes, crucial for statistically sound gamma/buffer comparisons. |

| Prioritized Experience Replay (PER) Library | (e.g, schaul/prioritized-experience-replay) Manages the replay buffer, sampling high-TD-error transitions more frequently to learn efficiently from costly molecular simulations. |

| Molecular Fingerprint Library | (e.g., RDKit's GetMorganFingerprintAsBitVect) Converts molecular states into compact, fixed-length bit vectors for efficient storage in large replay buffers. |

| Custom Reward Wrapper | A software module that defines the multi-objective reward function (e.g., combining logP, SA, binding affinity). Critical for testing different gamma values as it defines what "long-term" means. |

| Distributed Replay Buffer | A shared memory buffer across multiple parallel environment workers. Essential for decoupling data collection (expensive molecular dynamics/property calculation) from training. |

Diagram: MolDQN Training Loop with Hyperparameter Focus

Troubleshooting Guides & FAQs

FAQ 1: My MolDQN agent is converging to a suboptimal policy early in training. The agent seems to stop exploring new molecular structures. What could be wrong?

- Answer: This is a classic sign of premature exploitation, often caused by an overly aggressive epsilon decay schedule. If epsilon (the exploration rate) decays to a near-zero value too quickly, the agent will lock into its initially learned, likely suboptimal, policy. Within the MolDQN context, this means the agent will repeatedly propose similar molecular scaffolds without exploring potentially superior regions of chemical space.

- Solution: Implement a slower decay schedule. Switch from a linear decay to an exponential or polynomial decay. Monitor the "fraction of novel molecules generated per episode" as a metric. If this metric drops sharply early in training, slow your decay. A common fix is to increase the

decay_stepsparameter or reduce thedecay_ratein exponential decay.

FAQ 2: The agent explores continuously, failing to improve the objective (e.g., QED, DRD2). The reward plot is noisy and shows no upward trend.

- Answer: This indicates insufficient exploitation. The epsilon value is likely too high throughout training, preventing the agent from consistently leveraging its best-found actions to refine the Q-network estimates. In molecular optimization, this manifests as random generation without learning to optimize desired properties.

- Solution: Increase the initial decay speed or lower the

epsilon_final(minimum exploration rate) value. Ensure your replay buffer is large enough to store and effectively sample from high-reward experiences. Verify that your reward function correctly scores the properties of interest.

FAQ 3: Training performance is highly sensitive to the choice of epsilon decay parameters. How can I systematically find good values?

- Answer: Perform a hyperparameter grid search focused on the decay strategy. Key parameters to vary are

epsilon_start,epsilon_end,decay_steps, anddecay_type. Use a parallel coordinate plot or a summary table to correlate decay parameters with final performance metrics. - Solution Protocol:

- Define a search space (e.g.,

decay_type: ['linear', 'exponential'],decay_steps: [10000, 50000, 100000]). - Run multiple short training runs (e.g., 50-100k steps) for each configuration.

- Record the best reward achieved, average reward over the last 10 episodes, and time to threshold.

- Select the top 2-3 configurations for a full-length training run.

- Define a search space (e.g.,

FAQ 4: How do I choose between linear, exponential, and inverse polynomial decay for a MolDQN project?

- Answer: The choice depends on the size and complexity of your chemical action space and the training budget.

- Linear Decay: Simple, provides a predictable exploration budget. Risk: sudden drop in exploration.

- Exponential Decay: Very aggressive initial drop, then a long tail. Good for large action spaces where some perpetual randomness is beneficial.

- Inverse Polynomial (e.g., ε ∝ 1/t): Very slow decay. Useful when the reward landscape is extremely sparse and noisy, ensuring exploration continues deep into training.

- Recommendation: For molecular generation starting from a large library of fragments, begin with exponential decay and adjust the rate based on the troubleshooting guides above.

Table 1: Performance of Epsilon Decay Strategies in a Standard MolDQN Benchmark (Optimizing QED)

| Decay Strategy | ε Start | ε End | Decay Steps | Final Avg. Reward (↑) | Best Molecule QED (↑) | Time to QED >0.9 (steps ↓) |

|---|---|---|---|---|---|---|

| Linear | 1.0 | 0.01 | 100k | 0.72 | 0.89 | 85k |

| Exponential | 1.0 | 0.01 | 50k | 0.78 | 0.92 | 62k |

| Inverse Poly (power=0.5) | 1.0 | 0.01 | N/A | 0.75 | 0.91 | 78k |

| Fixed (ε=0.1) | 0.1 | 0.1 | N/A | 0.65 | 0.85 | N/A |

Table 2: Hyperparameter Grid Search Results (Exponential Decay)

| Run ID | Decay Rate | ε Final | Decay Steps | Final Avg. Reward | Optimal? |

|---|---|---|---|---|---|

| E1 | 0.9999 | 0.01 | 100k | 0.78 | Yes |

| E2 | 0.99995 | 0.05 | 100k | 0.80 | Best |

| E3 | 0.999 | 0.001 | 50k | 0.70 | No |

| E4 | 0.99999 | 0.01 | 200k | 0.77 | Yes |

Experimental Protocols

Protocol 1: Benchmarking Decay Schedules

- Setup: Initialize a MolDQN agent with a defined fragment library, Q-network architecture, and reward function (e.g., QED).

- Intervention: Implement three separate decay schedules (Linear, Exponential, Inverse Polynomial) with matched initial (ε=1.0) and final (ε=0.01) values. For exponential decay, use:

ε = ε_final + (ε_start - ε_final) * exp(-step / decay_steps). - Control: Run a fixed epsilon strategy (ε=0.1).

- Training: Train each agent for 200,000 steps. Log the episodic reward and the best molecule found every 5,000 steps.

- Evaluation: Plot moving average reward (window=50) vs. training steps. Compare the maximum property score achieved and the step at which it was first discovered.

Protocol 2: Systematic Hyperparameter Tuning for Epsilon Decay

- Define Objective: Primary: Final Average Reward (last 20 episodes). Secondary: Speed of convergence.

- Configure Search Space: Use a tool like Optuna or manual grid search over:

decay_type,decay_steps(ordecay_rate),epsilon_final. - Execute Trials: For each configuration, run 3 independent training runs of 100k steps to account for randomness.

- Analyze: Calculate the mean and standard deviation of the primary objective for each configuration. Rank configurations. Perform a Pareto analysis if considering both reward and convergence speed.

- Validate: Take the top-ranked configuration and run a single, long training run (500k+ steps) to confirm performance.

Visualizations

Title: Epsilon Decay Strategy Paths

Title: Hyperparameter Tuning Protocol for ε Decay

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for MolDQN with Epsilon-Greedy Experiments

| Item | Function in Experiment | Example/Note |

|---|---|---|

| RL Framework | Provides the core DQN algorithm, neural network models, and training loops. | DeepChem's RL module, TF-Agents, Stable-Baselines3. |

| Chemistry Toolkit | Handles molecule representation, validity checks, and property calculation. | RDKit (for SMILES manipulation, QED, SA score). |

| Fragment Library | Defines the "action space" for building molecules. | A curated set of SMILES strings representing allowed chemical fragments. |

| Hyperparameter Tuning Library | Automates the search for optimal ε-decay and other parameters. | Optuna, Ray Tune, Weights & Biases Sweeps. |

| Visualization Suite | Plots training metrics, molecule properties, and decay schedules. | Matplotlib/Seaborn for graphs, RDKit for molecular structures. |

| Reward Function | Encodes the objective for the AI to maximize (e.g., drug-likeness, binding affinity). | Custom Python function combining QED, SA Score, and other filters. |

| Replay Buffer | Stores (state, action, reward, next state) transitions for stable Q-learning. | Implemented as a deque or specialized buffer within the RL framework. |

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions

Q1: My MolDQN agent consistently generates molecules with poor QED (Quantitative Estimate of Drug-likeness) scores, despite including it in the reward function. What could be the issue?

A1: This is often due to reward imbalance or improper scaling. The agent may be prioritizing other reward terms (e.g., synthetic accessibility) over QED. Implement reward shaping:

- Solution: Introduce a piecewise or thresholded reward for QED. For example:

reward_qed = 2.0 if QED > 0.7 else (0.5 if QED > 0.5 else -1.0). This provides a stronger gradient. Also, ensure the QED reward term is scaled appropriately relative to other terms (e.g., by using a weighting coefficient, alpha_qed). Monitor the individual reward components during training to diagnose imbalances.

Q2: When I bias the reward for ADMET properties (e.g., CYP2D6 inhibition), the agent's exploration collapses and it gets stuck generating a very small set of similar molecules. How can I mitigate this?

A2: This indicates excessive exploitation due to a overly steep reward peak for a specific property.

- Solution: Apply reward smoothing or incorporate an intrinsic novelty reward. Augment the reward function with:

reward_novelty = beta * (1 - Tanimoto_similarity_to_K_most_recent_molecules). This encourages exploration of structurally diverse regions while still optimizing for the desired ADMET property. Start with a low weight (beta) and increase if needed.

Q3: What is the recommended way to integrate multiple, and sometimes conflicting, ADMET property predictions into a single composite reward?

A3: Use a weighted sum with normalization and consider Pareto-based approaches.

- Methodology:

- Normalize each property score (e.g., predicted clearance, hERG inhibition) to a common range, typically [0,1] or [-1,1], where 1 is most desirable.

- Assign a domain-informed weight to each property (see Table 1).

- The composite ADMET reward is:

R_admet = Σ (w_i * S_i), wherew_iis the weight andS_iis the normalized score for property i. - For advanced handling of trade-offs, implement a multi-objective optimization scheme that maintains a Pareto front of candidate molecules.

Q4: During hyperparameter optimization for my reward-biased MolDQN, which parameters are most critical to tune?

A4: Focus on parameters that directly affect the credit assignment of the biased reward.

- Primary Hyperparameters:

- Discount factor (gamma): Lower values (e.g., 0.7) help focus the agent on immediate drug-likeness rewards.

- Reward weight coefficients (alpha, beta): For each property (QED, SA, ADMET).

- Replay buffer sampling priority: Prioritized Experience Replay (PER) can be tuned to oversample experiences with high composite reward.

- Exploration epsilon decay schedule: Slower decay may be needed for complex, multi-property reward landscapes.

Experimental Protocols

Protocol 1: Calibrating Reward Weights via Proxy Task Objective: Systematically determine optimal weights for QED, Synthetic Accessibility (SA), and ADMET terms in the composite reward.

- Define a short, fixed episode length (e.g., 10-15 steps).

- Initialize a set of weight vectors

[w_qed, w_sa, w_admet]using a Latin Hypercube design. - For each weight vector, run 3 independent MolDQN training sessions for a limited number of episodes (e.g., 2000).

- Evaluate the top 50 molecules generated by each run based on the unweighted desired properties.

- Select the weight vector that yields the best Pareto frontier of molecules across all target properties.

- Validate the selected weights in a full-scale training experiment.

Protocol 2: Benchmarking Reward-Shaping Strategies for ADMET Objective: Compare the effectiveness of different reward formulations for optimizing a specific ADMET endpoint.

- Select a target property (e.g., microsomal stability predicted as half-life).

- Formulate three reward conditions:

- Binary:

R = +10 if t1/2 > 30 min, else 0. - Linear:

R = scale * t1/2. - Thresholded Linear:

R = 0 if t1/2 < 15 min, else scale * (t1/2 - 15).

- Binary:

- Train a separate MolDQN agent under each condition, keeping all other hyperparameters constant.

- Analyze the top 100 molecules from each run for: (a) the target property value, (b) chemical diversity, (c) other key properties (QED, SA). Use statistical tests (e.g., Mann-Whitney U) to compare distributions.

Data Presentation

Table 1: Example Reward Weight Configuration & Impact on Generated Molecules This table presents illustrative data from a hyperparameter sweep.

| Reward Weight Vector (QED:SA:ADMET) | Avg. QED (Top 100) | Avg. SA Score (Top 100) | Avg. ADMET Score (Top 100) | Chemical Diversity (Avg. Tanimoto Distance) |

|---|---|---|---|---|

| 1.0 : 0.5 : 0.2 | 0.72 | 3.2 | 0.65 | 0.85 |

| 0.5 : 1.0 : 0.2 | 0.68 | 2.8 | 0.62 | 0.82 |

| 0.7 : 0.7 : 0.5 | 0.75 | 3.1 | 0.78 | 0.79 |

| 0.3 : 0.3 : 1.0 | 0.65 | 3.5 | 0.81 | 0.88 |

Note: SA Score lower is better (easier to synthesize). ADMET Score is a normalized composite (higher is better).

Visualizations

MolDQN Reward Integration Workflow

Hyperparameter Optimization Loop for Reward Weights

The Scientist's Toolkit: Research Reagent Solutions

| Item/Resource | Function in Reward-Biased MolDQN Research |

|---|---|

| RDKit | Open-source cheminformatics toolkit used for molecule manipulation, descriptor calculation (e.g., QED), and fingerprint generation. Essential for state representation and reward calculation. |