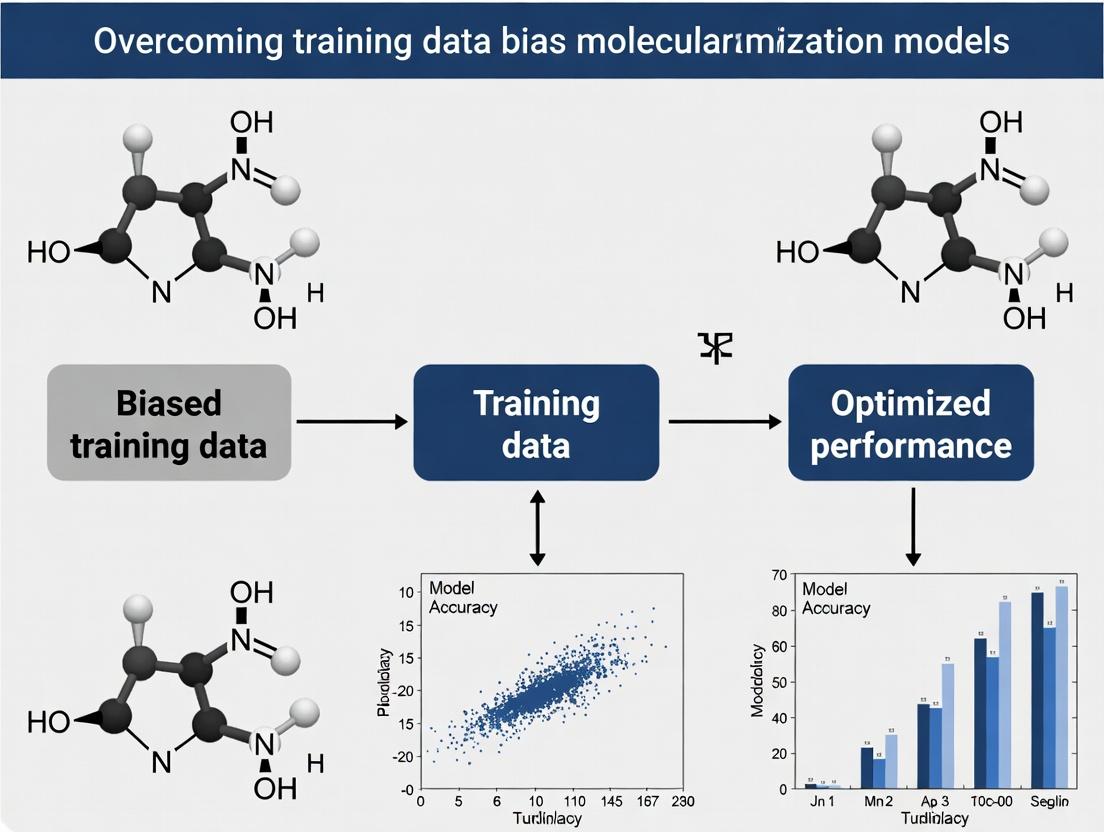

Mitigating Training Data Bias in Deep Learning for Molecular Optimization: Strategies for Robust AI-Driven Drug Discovery


Abstract

This article addresses the critical challenge of training data bias in deep learning models for molecular optimization, a key bottleneck in AI-driven drug discovery. We explore foundational concepts of data bias, examining its origins in public chemical databases and experimental data. We then detail methodological approaches for bias detection, mitigation, and correction, including algorithmic debiasing and data augmentation techniques. The guide provides troubleshooting frameworks to diagnose and optimize biased models in practice. Finally, we present validation paradigms and comparative analyses of state-of-the-art debiasing methods, evaluating their impact on model generalizability and the design of novel, clinically relevant molecular entities. Targeted at researchers and drug development professionals, this synthesis offers a comprehensive roadmap for building more equitable, reliable, and effective molecular optimization pipelines.

Understanding the Roots of Bias: How Skewed Data Compromises Molecular AI

Defining Training Data Bias in the Context of Molecular Optimization

Technical Support Center: Troubleshooting Data Bias in Molecular Optimization Models

FAQ & Troubleshooting Guides

Q1: How can I diagnose if my molecular optimization model is suffering from training data bias? A: Common symptoms include:

- Poor generalization: High performance on training/validation sets but significant performance drop on external test sets or newly synthesized compounds.

- Limited exploration: The model repeatedly proposes molecules similar to those in the training set, failing to explore novel chemical scaffolds.

- Property cliffs: The model performs poorly on regions of chemical space where small structural changes lead to large property changes, if those regions are under-represented in training data.

Q2: What are the primary sources of bias in molecular datasets like ChEMBL or PubChem? A: Primary sources include:

- Historical & Target Bias: Over-representation of molecules for well-studied target families (e.g., kinases, GPCRs) and under-representation for others.

- Patent & Publication Bias: Preferential synthesis and reporting of molecules with high potency or favorable ADMET properties, creating a non-uniform distribution.

- Structural & Synthetic Bias: Over-representation of synthetically accessible or commercially available scaffolds and reagents.

- Measurement Bias: Inconsistent experimental protocols (e.g., different assay conditions, thresholds) for measuring properties like IC50 or solubility across different data sources.

Q3: What techniques can I use to mitigate structural bias during dataset construction? A: Combine the following data-splitting and model-level techniques:

| Technique | Description | Quantitative Metric to Monitor |

|---|---|---|

| Cluster-based Splitting | Use molecular fingerprints (ECFP) to cluster structures. Assign entire clusters to train/test sets to ensure scaffold separation. | Tanimoto similarity within/across splits. Target: intra-split similarity > inter-split similarity. |

| Molecular Weight & LogP Stratification | Ensure distributions of key physicochemical properties are balanced across splits. | Kullback–Leibler divergence (DKL) between splits. Target: DKL < 0.1. |

| Adapter Layers | Use a pre-trained model on a large, diverse corpus (e.g., ZINC) and fine-tune on your target data with a small adapter network. | Performance on a held-out, diverse validation set. |

| Debiasing Regularizers | Add a penalty term (e.g., Maximum Mean Discrepancy - MMD) to the loss function to minimize distributional differences between latent representations of different data subgroups. | MMD value during training. Target: decreasing trend. |
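The stratification check in the table can be sketched with a histogram-based Kullback–Leibler divergence. This is a minimal illustration in plain Python, not a reference implementation: the molecular weights, the bin edges, and the smoothing constant `eps` are all made-up assumptions, and in practice the property values would come from a cheminformatics toolkit.

```python
import math

def histogram(values, edges):
    """Count values into bins [edges[i], edges[i+1]); the last bin also includes its right edge."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                counts[i] += 1
                break
    return counts

def kl_divergence(p_counts, q_counts, eps=1e-9):
    """D_KL(P || Q) in nats; eps smoothing (an assumption) keeps empty bins finite."""
    p_total = sum(p_counts) + eps * len(p_counts)
    q_total = sum(q_counts) + eps * len(q_counts)
    d = 0.0
    for p, q in zip(p_counts, q_counts):
        p_i = (p + eps) / p_total
        q_i = (q + eps) / q_total
        d += p_i * math.log(p_i / q_i)
    return d

# Hypothetical molecular weights (Da) for a train and a test split.
train_mw = [250, 310, 320, 410, 450, 505, 330, 290]
test_mw = [260, 300, 335, 400, 470, 510, 345, 420]
edges = [200, 300, 400, 500, 600]

d = kl_divergence(histogram(train_mw, edges), histogram(test_mw, edges))
print(f"D_KL(train || test) = {d:.4f}")
```

The result depends on the binning, so the same bin edges should be used whenever splits are compared against the DKL < 0.1 target.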

Q4: My model generates molecules with unrealistic functional groups or violates chemical rules. How do I fix this? A: This indicates bias towards invalid structures in the data or a failure in the generative process.

- Pre-filter Training Data: Apply rigorous chemical sanity checks (e.g., using RDKit's `SanitizeMol`) to remove invalid entries.

- Incorporate Rule-Based Constraints: Use a post-processing filter or integrate valency checks directly into the model's sampling procedure (e.g., in a graph-based generator).

- Adversarial Validation: Train a classifier to distinguish between your training data and a large, unbiased source of valid chemical structures (e.g., GDB-13). If the classifier succeeds, your data is biased/unrepresentative.

Detailed Experimental Protocol: Assessing & Mitigating Bias via Cluster-Based Split

Objective: To create a training and test set that minimizes structural data leakage, providing a rigorous benchmark for molecular optimization models.

Materials & Reagents:

- Software: RDKit (v2023.03.5 or later), Scikit-learn (v1.3+).

- Dataset: Curated bioactivity dataset (e.g., from ChEMBL).

- Compute: Standard workstation (8+ cores, 16GB+ RAM recommended for large datasets).

Methodology:

- Data Curation:

- Standardize molecules: Remove salts, neutralize charges, generate canonical SMILES using RDKit.

- Remove duplicates based on canonical SMILES.

- Filter by molecular weight (e.g., 100-600 Da) and heavy atoms.

Fingerprint Generation:

- Generate ECFP4 (Morgan) fingerprints for all molecules with a radius of 2 and 1024-bit length.

Clustering:

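- Apply the Butina clustering algorithm (RDKit implementation) with a Tanimoto similarity cutoff of 0.6. RDKit's implementation takes a distance threshold, so this corresponds to a Tanimoto distance of 0.4.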

- This groups molecules with similar scaffolds.

Stratified Splitting:

- Assign entire clusters to either the training set (80%) or the test set (20%), using a random seed.

- Optional but recommended: Perform a check to ensure key property distributions (LogP, Molecular Weight) are similar between sets. If not, implement a stratified sampling approach at the cluster level.

Bias Assessment:

- Calculate the maximum Tanimoto similarity between any molecule in the training set and any molecule in the test set (using fingerprints).

- Compare the intra-set and inter-set similarity distributions.

- Success Criterion: The maximum train-test similarity should be significantly lower than the maximum intra-train similarity, confirming scaffold separation.
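The clustering, splitting, and assessment steps above can be sketched in plain Python. This is a simplified stand-in for the RDKit Butina implementation, not that implementation itself: fingerprints are represented as sets of on-bit indices, the toy fingerprints are invented, and `butina_clusters`, `cluster_split`, and `max_cross_similarity` are hypothetical helper names. The toy example uses a 40% test share only because six molecules cannot be split 80/20 by whole clusters.

```python
import random

def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def butina_clusters(fps, sim_cutoff=0.6):
    """Simplified Taylor-Butina: visit molecules from most to fewest neighbors,
    making each unassigned one a centroid that claims its unassigned neighbors."""
    n = len(fps)
    neighbors = [
        {j for j in range(n) if j != i and tanimoto(fps[i], fps[j]) >= sim_cutoff}
        for i in range(n)
    ]
    unassigned = set(range(n))
    clusters = []
    for i in sorted(range(n), key=lambda k: len(neighbors[k]), reverse=True):
        if i not in unassigned:
            continue
        members = [i] + sorted(neighbors[i] & unassigned)
        unassigned -= set(members)
        clusters.append(members)
    return clusters

def cluster_split(clusters, test_fraction=0.2, seed=0):
    """Assign whole clusters to the test set until it would exceed its share."""
    rng = random.Random(seed)
    shuffled = clusters[:]
    rng.shuffle(shuffled)
    total = sum(len(c) for c in clusters)
    train, test = [], []
    for c in shuffled:
        if len(test) + len(c) <= test_fraction * total:
            test.extend(c)
        else:
            train.extend(c)
    return train, test

def max_cross_similarity(fps, train, test):
    """Highest Tanimoto similarity between any train molecule and any test molecule."""
    return max((tanimoto(fps[i], fps[j]) for i in train for j in test), default=0.0)

# Toy fingerprints: two scaffold families sharing no bits with each other.
core_a, core_b = set(range(1, 9)), set(range(20, 28))
fps = [core_a | {9}, core_a | {10}, core_a | {9, 10}, core_a | {11},
       core_b | {28}, core_b | {29}]

clusters = butina_clusters(fps, sim_cutoff=0.6)
train, test = cluster_split(clusters, test_fraction=0.4, seed=0)
print(clusters, train, test, max_cross_similarity(fps, train, test))
```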

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Bias Mitigation |

|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule standardization, fingerprint generation, clustering, and sanity checks. |

| DeepChem Library | Provides scaffold splitter functions and featurizers for building deep learning models directly on molecular datasets. |

| ZINC Database | A large, free database of commercially available, chemically diverse compounds. Used for pre-training or as a reference distribution for adversarial validation. |

| ChEMBL Database | A manually curated database of bioactive molecules. Primary source for target-specific datasets; requires careful bias-aware processing. |

| MOSES Platform | Provides benchmarking datasets, metrics, and standardized splits to evaluate the performance and diversity of generative molecular models. |

| TensorFlow/PyTorch | Deep learning frameworks for implementing custom debiasing loss functions (e.g., MMD, adversarial debiasing). |

Diagram: Workflow for Bias-Aware Dataset Creation

Diagram: Multi-Stage Debiasing Strategy for Molecular Models

Welcome to the Technical Support Center for Overcoming Training Data Bias in Deep Learning Molecular Optimization Models. This resource provides troubleshooting guidance and FAQs for researchers navigating biases in public chemical databases that impact model development.

FAQs and Troubleshooting Guides

Q1: My generative model keeps producing molecules similar to known kinase inhibitors, even when prompted for diverse scaffolds. What bias might be causing this?

A: This is likely due to "Representation Bias" or "Analog Bias." Public databases like ChEMBL are heavily populated with certain target classes (e.g., kinases, GPCRs) due to historical commercial and academic interest. This creates an overrepresentation of specific scaffolds (e.g., hinge-binding heterocycles for kinases).

- Troubleshooting Protocol:

- Analyze Training Data Distribution: Perform a target-class histogram analysis of your training set sourced from the database.

- Calculate Scaffold Networks: Use the Murcko scaffold decomposition (via RDKit) to quantify the frequency of core scaffolds.

- Implement Scaffold Split: Partition your data by scaffold to ensure your test set contains structurally distinct molecules, providing a more realistic performance estimate.

- Apply Data Balancing: Use techniques like undersampling overrepresented classes or applying scaffold-aware weights during model training.
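One way to read "scaffold-aware weights" is inverse-frequency weighting, sketched below. This is an assumption about the weighting scheme rather than a prescribed method: `scaffold_weights` is a hypothetical helper, and the scaffold SMILES strings are illustrative inputs that would normally come from a Murcko decomposition.

```python
from collections import Counter

def scaffold_weights(scaffolds):
    """Inverse-frequency weights, rescaled to mean 1, so every scaffold
    contributes the same total weight regardless of how many molecules carry it."""
    counts = Counter(scaffolds)
    raw = [1.0 / counts[s] for s in scaffolds]
    scale = len(raw) / sum(raw)
    return [w * scale for w in raw]

# Hypothetical scaffold per training molecule: one dominant, one rare.
scaffolds = ["c1ccc2[nH]ccc2c1"] * 6 + ["c1ccncc1"] * 3 + ["C1CCNCC1"]
weights = scaffold_weights(scaffolds)
for s, w in sorted(set(zip(scaffolds, weights))):
    print(f"{s:20s} weight={w:.3f}")
```

The resulting list can be passed as per-sample weights to a loss function or a weighted sampler.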

Q2: My optimized molecules score well on QSAR predictions but consistently have poor synthetic accessibility (SA) scores. Why?

A: This indicates "Synthetic Accessibility Bias" or "Publication Bias." Databases primarily contain successfully synthesized and published compounds. However, they lack the "negative space"—the vast number of plausible but unsynthesized (or failed) structures. Models learn that all plausible chemical space is easily synthesizable.

- Troubleshooting Protocol:

- Integrate SA Scoring: Incorporate a synthetic accessibility score (e.g., SAscore, RAscore) directly into your loss function or as a post-generation filter.

- Augment with Reaction Data: Train or fine-tune your model on datasets from reaction databases (e.g., USPTO, Reaxys) to infuse synthetic pathway knowledge.

- Negative Data Augmentation: Generate putative "decoy" molecules that are chemically plausible but hard to synthesize (i.e., with high SAscore values, computed with tools like the `SA_Score` module in RDKit's Contrib directory) and add them as negative examples during training.

Q3: I suspect my ADMET prediction modules are biased toward "drug-like" space, failing for novel modalities. How can I diagnose this?

A: This is "Chemical Space Bias" or "Lipinski Bias." Crowdsourced databases like PubChem contain vast numbers of "drug-like" molecules adhering to traditional rules (e.g., Lipinski's Rule of Five), but underrepresent peptides, macrocycles, covalent binders, and PROTACs.

- Troubleshooting Protocol:

- Define Applicability Domain (AD): Characterize the chemical space of your training data using principal component analysis (PCA) or t-SNE based on molecular descriptors.

- Benchmark on Out-of-Domain Data: Systematically test your model on emerging, curated libraries for novel modalities (e.g., from ChEMBL's "Therapeutics Data").

- Strategic Data Sourcing: Intentionally supplement your training set with data from specialized sources (e.g., CycloBase for macrocycles, ZINC-fragments for FBDD).

Q4: How can I verify if the bioactivity data in my training set is biased toward potent compounds, skewing my property predictions?

A: This is "Potency Threshold Bias." Published data and HTS results are more likely to report potent actives (IC50 < 10 µM) while omitting weakly active or precisely measured inactive compounds, creating a skewed distribution.

- Troubleshooting Protocol:

- Distribution Analysis: Create a histogram of all pChEMBL values (-log10 of activity) in your dataset.

- Identify Reporting Thresholds: Look for sharp drop-offs in data density below certain potency levels (e.g., pChEMBL < 5, or IC50 > 10 µM).

- Source Inactive Data: Complement your dataset with explicitly confirmed inactive compounds from sources like ChEMBL (`_data_comment` field) or PubChem BioAssay (inactive outcomes).

- Use Uncertainty-Aware Models: Implement models that quantify prediction uncertainty, which will be higher for data-sparse potency regions.
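The distribution analysis and threshold check above can be sketched as follows. The IC50 values are invented, and `pchembl_from_nm` and `potency_histogram` are hypothetical helper names. The conversion assumes activities are reported in nM.

```python
import math

def pchembl_from_nm(value_nm):
    """Convert an activity in nM (IC50, Ki, ...) to -log10 of the molar value."""
    return 9.0 - math.log10(value_nm)

def potency_histogram(pvalues, lo=3, hi=11):
    """Count pChEMBL values in unit-wide bins [k, k+1) for k = lo .. hi-1."""
    bins = {k: 0 for k in range(lo, hi)}
    for p in pvalues:
        k = math.floor(p)
        if k in bins:
            bins[k] += 1
    return bins

# Hypothetical IC50 values in nM.
ic50_nm = [5, 12, 40, 85, 150, 300, 900, 2500, 8000, 30000]
pvals = [pchembl_from_nm(v) for v in ic50_nm]
hist = potency_histogram(pvals)

# Share of weakly active records (pChEMBL < 5, i.e. IC50 > 10 µM).
weak_fraction = sum(1 for p in pvals if p < 5) / len(pvals)
print(hist, weak_fraction)
```

A very small `weak_fraction` together with a sharp drop in the lower bins is the kind of reporting threshold described above.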

The table below summarizes common biases and their typical quantitative signatures based on recent analyses.

| Bias Type | Primary Source | Typical Quantitative Signature | Impact on DL Model |

|---|---|---|---|

| Representation / Analog Bias | Historical research focus | >40% of compounds in ChEMBL v33 target kinases or GPCRs. Top 10 Murcko scaffolds cover ~15% of database. | Limited exploration, scaffold collapse. |

| Synthetic Accessibility Bias | Publication success filter | >95% of PubChem compounds have SAscore < 4.5 (deemed synthesizable). Lack of hard-to-synthesize (high-SAscore) "negative" examples. | Generates unrealistic, unsynthesizable molecules. |

| Potency Threshold Bias | Selective reporting | ~70% of bioactive entries in ChEMBL have pChEMBL ≥ 6.0 (IC50 < 1 µM). Sparse data for weak binders. | Poor accuracy in predicting mid-to-low potency. |

| Assay/Technology Bias | Dominant screening methods | HTS-derived data constitutes ~60% of bioactivity entries, vs. <10% from ITC/SPR (binding affinity). | Models may learn assay artifacts over true affinity. |

| Text-Derived Data Bias | Automated extraction | In PubChem, data points from automated text mining can have ~5-10% error rate vs. manual curation. | Introduces label noise and feature inaccuracies. |

Experimental Protocol: Auditing a Database Subset for Bias

Objective: To systematically identify and quantify the presence of major biases in a dataset extracted from public databases for training a molecular optimization model.

Materials & Reagents:

- Dataset: SDF or CSV file of compounds and associated bioactivity from ChEMBL/PubChem.

- Software: RDKit (Python), Pandas, NumPy, Matplotlib/Seaborn.

- Computing Environment: Jupyter notebook or Python script environment.

Methodology:

- Data Acquisition & Cleaning:

- Download data for your target of interest via ChEMBL web client or PubChem Power User Gateway (PUG).

- Standardize molecules: neutralize charges, remove duplicates, keep largest fragment.

- Filter for valid SMILES and definitive activity measurements (e.g., IC50, Ki with '=' relation).

- Scaffold Analysis (For Representation Bias):

- Apply `rdkit.Chem.Scaffolds.MurckoScaffold.GetScaffoldForMol()` to all compounds.

- Generate a frequency table of unique Murcko scaffolds. Plot the cumulative frequency.

- Chemical Space Mapping (For Chemical Space Bias):

- Calculate a set of 200 RDKit molecular descriptors for each compound.

- Perform PCA and plot the first two principal components. Overlay compounds colored by source database or target class.

- Potency Distribution Analysis (For Potency Threshold Bias):

- Convert all activity values to pChEMBL (-log10(molar IC50/Ki)).

- Plot a histogram with kernel density estimation. Visually inspect for normal distribution vs. truncation.

- Synthetic Accessibility Assessment (For SA Bias):

- Calculate SAscore for all molecules using the RDKit implementation.

- Plot the distribution. Compare the mean/median to known drug sets (typical mean SAscore for drugs: ~2.5).

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Bias Mitigation |

|---|---|

| RDKit | Open-source cheminformatics toolkit for scaffold decomposition, descriptor calculation, and SAscore. |

| ChEMBL SQLite Database | Locally queryable, curated database allowing complex joins to analyze data provenance and relationships. |

| PubChem PUG REST API | Programmatic access to retrieve bioassay data, including inactive compounds and annotation flags. |

| MOSES (Molecular Sets) | Benchmarking platform containing standardized splits and metrics to evaluate generative model diversity and bias. |

| RAscore | ML-based retrosynthetic accessibility score, often more accurate than SAscore for complex molecules. |

| PCA & t-SNE | Dimensionality reduction techniques to visualize and quantify the chemical space coverage of a dataset. |

| Stratified Sampling Scripts | Custom scripts to split data by scaffold, ensuring out-of-distribution testing for generative models. |

Workflow Diagram: Bias Audit and Mitigation Protocol

Diagram Title: Bias Audit and Mitigation Workflow

Diagram Title: Data Bias Flow to Model Failures

The Impact of Historical Synthesis Bias and Patent Landscapes on Data

Troubleshooting Guides & FAQs

Q1: Our molecular optimization model consistently favors specific aromatic heterocycles, overlooking promising aliphatic candidates. How can we diagnose if this is due to historical synthesis bias in the training data? A1: This is a classic symptom of historical synthesis bias. Perform the following diagnostic protocol:

- Data Audit: Extract all synthesis routes from your primary source (e.g., Reaxys, USPTO). Calculate the frequency of each reaction type (e.g., Suzuki coupling, amide coupling) and the resulting molecular scaffolds over time (e.g., by patent publication year).

- Comparative Analysis: Use a database of theoretically feasible molecules (e.g., from enumerative library generation using RDKit) to create a "theoretical space" sample. Compare the scaffold and physicochemical property distributions (MW, logP, TPSA) of your training data against this unbiased sample.

- Statistical Test: Apply the Two-Proportion Z-test to compare the prevalence of the over-favored heterocycles in your model's output versus their prevalence in the theoretical space. A significant difference (p < 0.01) indicates strong bias.
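The two-proportion Z-test in the last step can be computed with the standard library alone. The counts below are invented for illustration, and `two_proportion_z_test` is a hypothetical helper name. The sketch uses the pooled standard error and a two-sided p-value.

```python
import math

def two_proportion_z_test(x1, n1, x2, n2):
    """Two-sided z-test for H0: p1 == p2, using the pooled standard error."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability
    return z, p_value

# Hypothetical counts: molecules containing the favored heterocycle
# in 1000 model outputs vs. 1000 samples from the theoretical space.
z, p = two_proportion_z_test(420, 1000, 150, 1000)
print(f"z = {z:.2f}, p = {p:.3g}, biased = {p < 0.01}")
```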

Table 1: Sample Data Audit from a Reaxys Query (2010-2020)

| Reaction Type | Frequency (%) | Most Common Product Scaffold | Patent Coverage (% of entries) |

|---|---|---|---|

| Suzuki Coupling | 28.7 | Biaryl | 94.2 |

| Amide Coupling | 22.1 | Benzamide | 88.5 |

| Reductive Amination | 15.4 | N-alkylamine | 76.8 |

| SNAr on Pyridine | 12.3 | Amino-substituted Heterocycle | 98.1 |

| Buchwald-Hartwig | 8.9 | N-aryl amine | 97.3 |

Q2: How can we quantitatively adjust for patent landscape bias when building a training dataset? A2: Implement a patent-aware data sampling weight. The protocol involves:

- Claim Parsing: For each molecule in your candidate set, use a SMILES parser to check for substructure matches against a curated set of key patent claims from a commercial database (e.g., Clarivate Integrity, Lens.org). Assign a binary flag: `Covered` or `Free`.

- Temporal Weighting: Assign a recency weight `W_temp` based on patent priority year (e.g., `W_temp = 1 / (2025 - Priority_Year)` for patents active in 2025). Older patents have less weighting influence.

- Sampling Weight Calculation: For each molecule `i`, the final sampling probability is adjusted: `P_sampled(i) = P_original(i) * [α * W_temp + (1-α) * Free_Flag]`, where `α` is a tunable hyperparameter (e.g., 0.7) favoring recent but patent-free chemical space.
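A minimal sketch of the sampling-weight formula follows. Two choices here are assumptions not stated in the protocol: molecules with no matching claim get `W_temp = 0`, and the adjusted values are renormalized to sum to 1. The molecule records and field names are hypothetical. A priority year equal to the reference year would divide by zero, so the sketch rejects it.

```python
def temporal_weight(priority_year, reference_year=2025):
    """W_temp = 1 / (reference_year - priority_year); older patents get smaller weights."""
    age = reference_year - priority_year
    if age <= 0:
        raise ValueError("priority_year must be earlier than reference_year")
    return 1.0 / age

def sampling_probabilities(molecules, alpha=0.7):
    """P_sampled = P_original * [alpha * W_temp + (1 - alpha) * Free_Flag], renormalized."""
    raw = []
    for m in molecules:
        free_flag = 0.0 if m["covered"] else 1.0
        # Assumption: a molecule with no matching patent claim has W_temp = 0.
        w_temp = temporal_weight(m["priority_year"]) if m["covered"] else 0.0
        raw.append(m["p_original"] * (alpha * w_temp + (1 - alpha) * free_flag))
    total = sum(raw)
    return [r / total for r in raw]

# Hypothetical candidate set with uniform original probabilities.
molecules = [
    {"name": "A", "covered": True, "priority_year": 2020, "p_original": 1 / 3},
    {"name": "B", "covered": True, "priority_year": 2005, "p_original": 1 / 3},
    {"name": "C", "covered": False, "priority_year": None, "p_original": 1 / 3},
]
probs = sampling_probabilities(molecules, alpha=0.7)
print([round(p, 3) for p in probs])
```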

Q3: Our generative model is producing molecules that are chemically invalid or violate patent claims we intended to avoid. What's wrong with our filtering pipeline? A3: This indicates a failure in the post-generation validation sequence. Follow this troubleshooting checklist:

- Step 1: Validity Check: Ensure the RDKit or OpenBabel sanitization step is NOT set to `sanitize=False`. Run `Chem.SanitizeMol(mol)` and catch exceptions.

- Step 2: Patent Filter Logic Error: Verify your claim-checking function uses a canonicalized SMILES string for comparison. Substructure search must be performed on a canonicalized target claim set.

- Step 3: Workflow Order: Confirm the process flow is strictly: Generation → Chemical Validity Check → Patent Filter → Property Prediction. Running the patent filter before validity checks causes crashes on invalid SMILES.

Diagram 1: Mandatory post-generation validation workflow

Q4: What are the key reagents and tools needed to set up an experiment to measure synthesis bias? A4: Below is the essential toolkit for conducting a bias measurement study.

Table 2: Research Reagent Solutions for Bias Measurement

| Item | Function | Example/Provider |

|---|---|---|

| Chemical Database API | Programmatic access to reaction and compound data for audit. | Reaxys API, PubChemPy, USPTO Bulk Data. |

| Cheminformatics Toolkit | Canonicalization, substructure search, descriptor calculation. | RDKit, OpenBabel. |

| Patent Claim Database | Curated set of molecular claims for freedom-to-operate analysis. | Clarivate Integrity, SureChEMBL, Lens.org. |

| Theoretical Space Generator | Creates unbiased baseline of chemically feasible molecules. | RDKit library enumeration (e.g., BRICS), V-SYNTHES virtual library. |

| Statistical Analysis Package | For comparing distributions and calculating significance. | SciPy (Python), R Stats. |

Q5: Can you provide a detailed protocol for the key experiment that quantifies historical synthesis bias? A5: Protocol: Temporal Analysis of Reaction Prevalence in Published Literature. Objective: To quantify the over-representation of "popular" reactions in historical data versus a theoretically balanced set. Materials: See Table 2. Method:

- Data Collection: Query the Reaxys API for all organic synthesis reactions published between 2000 and 2024. Limit to journal articles. Extract reaction type (RXNO) and product SMILES.

- Control Set Generation: Using RDKit, apply the BRICS decomposition algorithm to the products from Step 1 to generate a set of plausible building blocks. Recombine these blocks using all possible BRICS rules to generate a "theoretical reaction set" (T).

- Temporal Binning: Split the historical data (H) into 5-year bins (2000-2004, 2005-2009, etc.).

- Calculation: For each bin and each reaction type `r` (e.g., Suzuki coupling), calculate:

  - `Prevalence_H(r, bin) = Count(r in H_bin) / Total(H_bin)`

  - `Prevalence_T(r) = Count(r in T) / Total(T)`

  - `Bias Index(r, bin) = Prevalence_H(r, bin) / Prevalence_T(r)`

- Visualization: Plot `Bias Index` over time for the top 5 reaction types.
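The Bias Index calculation can be sketched as below. The reaction labels and counts are invented, `prevalence` and `bias_index` are hypothetical helper names, and reaction types absent from the theoretical set are skipped to avoid dividing by zero, which is a choice of this sketch and not part of the protocol.

```python
from collections import Counter

def prevalence(reaction_types):
    """Fraction of entries belonging to each reaction type."""
    counts = Counter(reaction_types)
    total = len(reaction_types)
    return {r: c / total for r, c in counts.items()}

def bias_index(historical_by_bin, theoretical):
    """Bias Index(r, bin) = Prevalence_H(r, bin) / Prevalence_T(r) for every r present in T."""
    prev_t = prevalence(theoretical)
    result = {}
    for bin_label, reactions in historical_by_bin.items():
        prev_h = prevalence(reactions)
        result[bin_label] = {r: prev_h.get(r, 0.0) / p_t for r, p_t in prev_t.items()}
    return result

# Hypothetical theoretical set T: four reaction types, equally represented.
theoretical = ["suzuki", "amide", "reductive_amination", "snar"] * 25

# Hypothetical historical data H, already split into 5-year bins.
historical_by_bin = {
    "2000-2004": ["suzuki"] * 20 + ["amide"] * 50 + ["reductive_amination"] * 20 + ["snar"] * 10,
    "2005-2009": ["suzuki"] * 40 + ["amide"] * 30 + ["reductive_amination"] * 20 + ["snar"] * 10,
}

bi = bias_index(historical_by_bin, theoretical)
for bin_label, values in bi.items():
    print(bin_label, {r: round(v, 2) for r, v in values.items()})
```

A value above 1 means the reaction type is over-represented in that period relative to the theoretical set.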

Diagram 2: Synthesis bias quantification experiment workflow

Technical Support Center: Troubleshooting Guide & FAQs

This support center is designed to assist researchers in identifying and mitigating property and scaffold bias within deep learning models for molecular optimization, a critical step in overcoming training data bias.

Frequently Asked Questions (FAQs)

Q1: My model generates novel molecules with excellent predicted properties, but they are all structurally very similar to a single scaffold in the training data. What is happening? A: This is a classic sign of scaffold bias. The model has likely memorized a privileged chemical motif from the training set that is strongly correlated with a target property, rather than learning the underlying rules that connect structure to function. It optimizes by exploiting this narrow correlation, leading to low structural diversity.

Q2: During lead optimization, the model consistently proposes molecules with high synthetic complexity or undesirable substructures (e.g., PAINS). How can I correct this? A: This indicates property bias, where the model over-optimizes for a single objective (e.g., binding affinity) and ignores other critical chemical and pharmacological constraints. The solution is to implement multi-objective optimization or incorporate penalty terms in the reward function for synthetic accessibility (SA) scores or known problematic motifs.

Q3: After fine-tuning my GPT-based molecular generator on a target-specific dataset, its output diversity has collapsed. Why? A: Fine-tuning on small, focused datasets amplifies existing biases. The model's prior knowledge from pre-training is overwritten, causing it to "mode collapse" into the limited chemical space of the fine-tuning set. Consider using reinforcement learning with a critic or controlled generation techniques instead of direct fine-tuning.

Q4: How can I quantitatively measure if my model suffers from scaffold bias? A: Use scaffold-based analysis. Calculate the following for both your training set and generated set:

- Top Scaffold Frequency: Percentage of molecules containing the most common Bemis-Murcko scaffold.

- Scaffold Diversity: Number of unique scaffolds divided by the total number of molecules.

- Scaffold Similarity: Average pairwise Tanimoto similarity between Morgan fingerprints of scaffold structures.

Table 1: Quantitative Metrics for Identifying Bias

| Metric | Formula/Description | Unbiased Indicator | Biased Indicator |

|---|---|---|---|

| Top Scaffold Freq. | (Molecules with top scaffold / Total molecules) * 100 | < 5% | > 20% |

| Scaffold Diversity | Unique scaffolds / Total molecules | > 0.7 | < 0.3 |

| Avg. Scaffold Similarity | Mean Tanimoto similarity (scaffold FP) | < 0.2 | > 0.5 |

| Property Cliff Rate | % of molecule pairs with high structural similarity but drastic property change | Matches known data | Significantly lower |
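The first two metrics in Table 1 reduce to simple counting once each molecule has a scaffold string. The sketch below assumes the scaffolds have already been computed (for example as canonical Bemis-Murcko SMILES). The inputs are made up and `scaffold_metrics` is a hypothetical helper name.

```python
from collections import Counter

def scaffold_metrics(scaffolds):
    """Top scaffold frequency (%) and scaffold diversity for a list of scaffold strings."""
    counts = Counter(scaffolds)
    total = len(scaffolds)
    top_scaffold, top_count = counts.most_common(1)[0]
    return {
        "top_scaffold": top_scaffold,
        "top_scaffold_freq_pct": 100.0 * top_count / total,
        "scaffold_diversity": len(counts) / total,
    }

# Hypothetical scaffolds of ten generated molecules.
generated = ["c1ccccc1"] * 6 + ["c1ccncc1"] * 2 + ["C1CCCCC1", "c1ccoc1"]
metrics = scaffold_metrics(generated)
print(metrics)
```

Running the same function on the training set and on the generated set gives the two columns to compare against the thresholds in the table.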

Q5: What experimental protocol can validate that generated molecules are truly novel and not just analogues of training data? A: Perform a nearest-neighbor analysis and analogue saturation check.

Protocol: Nearest-Neighbor & Analogue Saturation Validation

- Fingerprint Generation: Encode all generated molecules and the training set molecules using ECFP4 fingerprints (radius=2, 1024 bits).

- Similarity Calculation: For each generated molecule, compute its maximum Tanimoto similarity to any molecule in the training set.

- Distribution Analysis: Plot the histogram of these maximum similarities. A model generating truly novel chemotypes will show a peak at low similarity (<0.4).

- Analogue Series Identification: Cluster generated molecules based on their Bemis-Murcko scaffolds. For any large cluster (>10% of outputs), synthetically enumerate close analogues (e.g., single R-group variations) of the core scaffold.

- Property Prediction: Run the model's property predictor on these enumerated analogues. If property scores drop sharply outside the specific substitutions seen in training, the model has learned a narrow, biased relationship.
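The similarity calculation and distribution analysis steps can be sketched as follows, with fingerprints represented as sets of on-bit indices. The toy fingerprints are invented and `max_similarity_to_training` is a hypothetical helper name. Real ECFP4 fingerprints would come from a cheminformatics toolkit.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def max_similarity_to_training(generated_fps, training_fps):
    """For each generated molecule, the similarity to its nearest training neighbor."""
    return [max(tanimoto(g, t) for t in training_fps) for g in generated_fps]

# Toy fingerprints: a copy of a training molecule, a partial analogue, and an unrelated one.
training_fps = [{1, 2, 3, 4}, {5, 6, 7, 8}]
generated_fps = [{1, 2, 3, 4}, {1, 2, 9, 10}, {20, 21}]

max_sims = max_similarity_to_training(generated_fps, training_fps)
novel_fraction = sum(1 for s in max_sims if s < 0.4) / len(max_sims)
print([round(s, 3) for s in max_sims], round(novel_fraction, 3))
```

Here `novel_fraction` summarizes how much of the histogram's mass sits below the 0.4 similarity mark mentioned in the protocol.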

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Bias-Aware Molecular Model Development

| Item | Function & Rationale |

|---|---|

| ChEMBL/PDBbind Database | Provides large, diverse, and annotated bioactivity data for pre-training and establishing baseline distributions. |

| RDKit | Open-source cheminformatics toolkit for fingerprint generation, scaffold decomposition, similarity calculation, and SA score computation. |

| MOSES Benchmarking Platform | Standardized benchmark for evaluating the diversity and novelty of generated molecular libraries. |

| SA Score Calculator | Penalizes synthetically complex or unrealistic molecules, mitigating impractical property optimization. |

| PAINS/Unwanted Substructure Filter | Removes molecules with known problematic motifs that can lead to false positives in assays. |

| TF/PyTorch with RL Libraries (e.g., RLlib) | Enables implementation of reinforcement learning (RL) frameworks for multi-objective optimization (e.g., potency + SA + diversity). |

| Matplotlib/Seaborn | Critical for visualizing distributions (e.g., similarity histograms, property scatter plots) to identify bias visually. |

Experimental Workflow for Bias Mitigation

Diagram 1: Bias Identification & Mitigation Workflow

Diagram 2: Multi-Objective RL Architecture for De-biasing

This technical support center provides resources for identifying and troubleshooting bias in high-throughput screening (HTS) datasets, a critical step for developing robust deep learning models in molecular optimization.

Troubleshooting Guides & FAQs

Q1: My lead compounds from a deep learning model fail in validation assays. Could this be due to bias in my training HTS dataset? A: Yes, this is a common symptom. Models trained on biased data learn artifacts instead of true structure-activity relationships. Key dataset biases include:

- Batch Effects: Systematic errors from running assays on different days, with different reagent lots, or by different technicians.

- Structural Clustering Bias: An overrepresentation of certain molecular scaffolds, leading to poor generalization to novel chemotypes.

- Assay Interference Bias: Overrepresentation of compounds that interfere with the assay technology (e.g., fluorescent compounds in a fluorescence-based screen, pan-assay interference compounds (PAINS)).

Q2: How can I diagnose structural bias in my compound library? A: Perform a chemical space diversity analysis.

- Protocol: Calculate molecular descriptors (e.g., ECFP4 fingerprints) for all compounds in your HTS dataset. Use a dimensionality reduction technique like t-SNE or UMAP to project the descriptors into 2D space. Visually inspect the plot for dense clusters and large empty regions.

- Quantitative Check: Calculate the pairwise Tanimoto similarity within the dataset. A very high mean similarity suggests low diversity and potential scaffold bias.

Q3: How do I detect and correct for batch effects in my HTS activity data? A: Batch effects manifest as plate-to-plate or run-to-run signal shifts.

- Diagnosis: Use control compounds present in every plate (e.g., a known inhibitor and a DMSO control). Plot the Z'-factor or control compound signals per plate. Significant drift indicates a batch effect.

- Correction Protocol:

- Normalize raw readouts per plate using plate median controls (e.g., percent inhibition relative to plate controls).

- Apply a statistical correction method like ComBat or its derivatives, which models batch effects and removes them while preserving biological signals.

- Always keep a fully independent validation set that is not used during correction to test the efficacy of the normalization.
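The per-plate normalization step can be illustrated with percent inhibition relative to plate controls. The well readouts are invented and `percent_inhibition` is a hypothetical helper name. The sketch assumes DMSO wells define 0% inhibition and the known inhibitor defines 100%, and it does not cover ComBat-style correction.

```python
from statistics import median

def percent_inhibition(raw, dmso_wells, inhibitor_wells):
    """Scale raw readouts so the DMSO median maps to 0% and the inhibitor median to 100%."""
    neg = median(dmso_wells)
    pos = median(inhibitor_wells)
    return [100.0 * (neg - x) / (neg - pos) for x in raw]

# Two hypothetical plates measuring the same compounds; plate 2 reads twice as bright.
plate1 = percent_inhibition([1000, 550, 100], [1000, 1020, 980], [100, 110, 90])
plate2 = percent_inhibition([2000, 1100, 200], [2000, 2040, 1960], [200, 220, 180])
print(plate1, plate2)
```

After normalization both plates report the same values, so the multiplicative plate shift no longer looks like a difference in activity.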

Experimental Protocols for Bias Analysis

Protocol 1: Identifying Assay Interference Compounds (PAINS Filtering)

- Input: SMILES strings of all screening hits.

- Tools: Use the publicly available PAINS filter patterns (e.g., via the RDKit or ChEMBL web service).

- Method: Screen all hit compounds against the defined PAINS substructure patterns.

- Output: Flagged compounds. These should be prioritized for orthogonal assay validation using a different detection technology (e.g., switch from fluorescence to luminescence).

Protocol 2: Quantifying Dataset Representativeness for Deep Learning

- Objective: Compare the chemical space of your training HTS dataset to your target space for optimization.

- Method:

  a. Pool your HTS actives/inactives (training set) and a large, diverse virtual library representing your target space (e.g., Enamine REAL space).

  b. Generate ECFP6 fingerprints for all molecules.

  c. Use the k-Nearest Neighbor (k-NN) distance metric. For each molecule in the target virtual library, find its nearest neighbor in the training set based on Tanimoto similarity.

  d. Plot the distribution of these nearest-neighbor distances.

- Interpretation: A distribution skewed towards large distances indicates the target space is poorly represented by the training data, signaling high extrapolation risk for the model.

Table 1: Common HTS Biases and Their Impact on Deep Learning Models

| Bias Type | Typical Cause | Model Artifact Learned | Corrective Action |

|---|---|---|---|

| Batch Effect | Plate, day, or operator variability | Plate identifier, not bioactivity | Plate normalization, ComBat correction |

| Structural Clustering | Library built around few core scaffolds | Overfitting to specific substructures | Data augmentation, strategic oversampling of rare chemotypes |

| Assay Interference | Promiscuous/fluorescent/aggregator compounds | Assay technology artifact, not target binding | PAINS filtering, orthogonal assay validation |

| Target-Promiscuity | Training data from similar target classes only | Target-family specific features, poor generalizability | Transfer learning with data from diverse targets |

Table 2: Key Metrics for HTS Dataset Quality Assessment

| Metric | Calculation | Optimal Range | Indicates Problem If... |

|---|---|---|---|

| Z'-factor (per plate) | `1 - 3*(σp + σn) / \|μp - μn\|` | > 0.5 | < 0.5 (Poor assay robustness) |

| Mean Tanimoto Similarity | Mean pairwise similarity (ECFP4) across all compounds | Dataset dependent | > 0.3 (Very homogeneous library) |

| Hit Rate | (Number of Hits) / (Total Compounds Tested) | Assay dependent | Extremely high (>10%) may indicate interference |

| k-NN Distance (Mean) | Mean distance of virtual library compounds to nearest HTS neighbor | Lower is better | High mean distance (>0.5 Tanimoto distance) |
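As a worked example of the Z'-factor row, the sketch below computes the metric for one plate from its control wells. The control readings are made-up values, and the use of the sample standard deviation is an assumption; the table does not specify which estimator to use.

```python
from statistics import mean, stdev

def z_prime(positive_controls, negative_controls):
    """Z'-factor: 1 - 3*(sd_p + sd_n) / |mean_p - mean_n| for one plate."""
    mu_p, mu_n = mean(positive_controls), mean(negative_controls)
    sd_p, sd_n = stdev(positive_controls), stdev(negative_controls)
    return 1.0 - 3.0 * (sd_p + sd_n) / abs(mu_p - mu_n)

# Hypothetical raw signals from the control wells of a single plate.
positive = [100, 102, 98, 101, 99]
negative = [10, 12, 8, 11, 9]

z = z_prime(positive, negative)
print(f"Z'-factor = {z:.3f}")
```

Running this per plate and flagging plates below 0.5 gives a simple first-pass robustness screen.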

Visualizations

Title: HTS Bias Analysis and Mitigation Workflow for DL

Title: The Impact of HTS Bias on DL Model Failure in Drug Discovery

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Bias Analysis |

|---|---|

| Control Compounds (Active/Inactive) | Used to calculate Z'-factor and normalize plates; essential for detecting batch effects. |

| Orthogonal Assay Kit | A secondary assay with a different detection mechanism (e.g., SPR, TR-FRET) to validate hits and rule out technology-specific interference. |

| PAINS Filter Libraries | A defined set of substructure patterns used to flag compounds likely to be promiscuous assay interferers. |

| Chemical Descriptor Software (e.g., RDKit) | Generates molecular fingerprints and descriptors for chemical space diversity analysis. |

| Batch Effect Correction Software (e.g., ComBat in R/Python) | Statistically adjusts for non-biological variance introduced by experimental batches. |

| Diverse Virtual Compound Library | Serves as a reference target chemical space to measure the representativeness of the HTS training set. |

Bias-Aware Modeling: Techniques to Detect and Correct Skewed Datasets

Quantitative Metrics for Measuring Dataset Bias (e.g., SA, Scaffold Diversity).

Troubleshooting Guides & FAQs

Q1: When calculating the Synthetic Accessibility (SA) Score for my compound dataset, the values are all very high (>6.5), suggesting most molecules are hard to synthesize. Is my metric implementation faulty? A: Not necessarily. First, verify your implementation. The SA Score typically ranges from 1 (easy) to 10 (very hard), with drug-like molecules often scoring below 4-5. Common issues:

- Check Fragment Contributions: Ensure you are using the correct and complete set of fragment contributions from the original Ertl & Schuffenhauer publication. Missing fragments will skew scores.

- Verify Complexity Penalty: Confirm the molecular complexity penalty (based on rings, stereo centers, macrocycles) is calculated correctly. An error here inflates scores.

- Benchmark: Test your implementation on a known set of simple (e.g., aspirin, ibuprofen) and complex molecules to calibrate.

Q2: My scaffold diversity analysis shows low diversity, but my dataset has many unique molecules. Why is this discrepancy? A: This highlights the power of scaffold analysis. Many "unique" molecules may share a common core structure (scaffold). Key checks:

- Scaffold Definition: Specify which scaffold definition you used (e.g., Bemis-Murcko, cyclic system). Different definitions yield different results.

- Decomposition Method: Ensure the scaffold extraction algorithm correctly removes side chains and retains the core ring system with linker atoms.

- Quantitative Metric: Review your chosen diversity metric. The most common, Scaffold H (using Shannon entropy), can be insensitive to rare scaffolds if the dataset is dominated by a few common ones. Consider reporting multiple metrics.

Q3: How do I interpret a significant imbalance in the distribution of a molecular descriptor (e.g., LogP) between my active and inactive datasets? A: This is a direct signal of dataset bias. A skewed distribution can lead a model to learn spurious correlations rather than true structure-activity relationships.

- Protocol for Assessment: 1) Calculate key physicochemical descriptors (LogP, MW, TPSA, HBD, HBA) for all compounds. 2) Use statistical tests (Kolmogorov-Smirnov test) to compare the distributions of actives vs. inactives. 3) Visualize the distributions (see diagram below).

- Mitigation Step: If bias is confirmed, consider strategies like stratified sampling for training/validation splits or applying re-weighting techniques during model training to correct for the imbalance.

Q4: What are the standard thresholds for "good" scaffold diversity in a lead optimization dataset within a thesis context? A: There are no universal thresholds, but benchmarks from successful projects provide guidance. Your thesis should establish a baseline from public datasets like ChEMBL. Common reported metrics include:

- Scaffold Ratio (SR): # of Scaffolds / # of Compounds. A higher ratio (>0.2-0.3) indicates good diversity.

- Scaffold H (Entropy): Values > 2.0 for a dataset of ~1000 compounds often indicate significant scaffold diversity.

- Gini Coefficient (for Scaffold Prevalence): A lower coefficient (<0.7) suggests a more equitable distribution of compounds across scaffolds, reducing bias.

Data Presentation: Key Metric Benchmarks

Table 1: Quantitative Metrics for Bias Assessment in Molecular Datasets

| Metric | Formula/Description | Ideal Range (Typical Drug-like Set) | Indicator of Bias |

|---|---|---|---|

| SA Score | SA = FragmentScore - ComplexityPenalty (normalized 1-10) | < 4.5 - 5.0 | High average score indicates synthetic complexity bias. |

| Scaffold Ratio (SR) | SR = N_scaffolds / N_compounds | > 0.2 - 0.3 | Low ratio indicates structural redundancy & coverage bias. |

| Scaffold Entropy (H) | H = -Σ (p_i * log2(p_i)) where p_i is scaffold frequency | > 2.0 | Low entropy indicates dominance by few scaffolds. |

| Gini Coefficient (G) | Measures inequality in scaffold population distribution. | Closer to 0 (perfect equality) | High G (>0.7) indicates that a few scaffolds hold most compounds, leaving a "long tail" of rare scaffolds. |

| Property Distribution KS Statistic | Max difference between active/inactive CDFs for a descriptor. | p-value > 0.05 | Low p-value (<0.05) signals significant property bias. |

Experimental Protocols

Protocol 1: Calculating Scaffold Diversity Metrics

- Input: A SMILES list of your compound dataset.

- Scaffold Extraction: For each SMILES, generate the Bemis-Murcko scaffold using RDKit (`rdkit.Chem.Scaffolds.MurckoScaffold.GetScaffoldForMol`).

- Canonicalization: Convert each scaffold to a canonical SMILES string to group identical scaffolds.

- Counting: Tally the frequency of each unique scaffold.

- Calculation:

- Scaffold Ratio (SR): Divide the number of unique scaffolds by the total number of compounds.

- Scaffold Entropy (H): Compute `H = -sum( (count_i / total) * log2(count_i / total) )` for all scaffolds `i`.

- Gini Coefficient: Sort scaffolds by frequency. Calculate using the relative mean difference formula based on the Lorenz curve of cumulative frequency.
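The counting and calculation steps above can be sketched as follows. The example assumes scaffolds have already been extracted and canonicalized into strings (for instance with RDKit, as in the earlier steps); the scaffold SMILES in the toy list are placeholders, and the Gini coefficient uses the sorted-rank form of the relative mean difference.

```python
from collections import Counter
from math import log2

def scaffold_diversity(scaffolds):
    """Return (scaffold ratio, Shannon entropy in bits, Gini coefficient)."""
    counts = sorted(Counter(scaffolds).values())  # ascending scaffold frequencies
    n_compounds, n_scaffolds = len(scaffolds), len(counts)
    ratio = n_scaffolds / n_compounds
    entropy = -sum((c / n_compounds) * log2(c / n_compounds) for c in counts)
    # Gini = 2 * sum(rank_i * count_i) / (n * sum(count)) - (n + 1) / n, ranks from 1.
    weighted = sum(rank * c for rank, c in enumerate(counts, start=1))
    gini = 2 * weighted / (n_scaffolds * n_compounds) - (n_scaffolds + 1) / n_scaffolds
    return ratio, entropy, gini

# Placeholder canonical scaffold SMILES, one entry per compound.
scaffolds = ["c1ccccc1"] * 4 + ["c1ccncc1"] * 2 + ["C1CCCCC1", "c1ccoc1"]

ratio, entropy, gini = scaffold_diversity(scaffolds)
print(f"SR = {ratio:.2f}, H = {entropy:.2f} bits, Gini = {gini:.4f}")
```

Reporting all three together is useful because a dataset can have a reasonable scaffold ratio while still being dominated by one or two scaffolds, which the entropy and Gini values expose.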

Protocol 2: Assessing Property Distribution Bias

- Descriptor Calculation: For all compounds (actives and inactives separately), calculate a set of physicochemical descriptors (e.g., LogP, MW, TPSA).

- Visualization: Plot kernel density estimates (KDEs) for each descriptor, overlaying actives and inactives.

- Statistical Test: Perform a two-sample Kolmogorov-Smirnov test using `scipy.stats.ks_2samp(actives_values, inactives_values)`.

- Interpretation: A KS statistic D > 0.1 and a p-value < 0.05 suggest a statistically significant difference in distributions, indicating potential dataset bias.
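To make the KS statistic concrete, the sketch below computes D directly as the largest gap between the two empirical CDFs. It is a dependency-free illustration with hypothetical LogP values and does not compute a p-value; for that, the SciPy function named in the protocol is the practical choice.

```python
from bisect import bisect_right

def ks_statistic(sample_a, sample_b):
    """Two-sample KS statistic: max absolute difference between the empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    points = sorted(set(a) | set(b))  # the ECDFs only change at observed values
    return max(
        abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
        for x in points
    )

# Hypothetical LogP values for actives and inactives.
actives_logp = [1.0, 2.0, 3.0, 4.0]
inactives_logp = [3.5, 4.5, 5.5, 6.5]

d_stat = ks_statistic(actives_logp, inactives_logp)
print(f"KS statistic D = {d_stat:.2f}")
```

A value this far above 0.1 would, on a realistically sized dataset, point to a property shift worth correcting before training.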

Mandatory Visualizations

Title: Workflow for Detecting Molecular Property Bias

The Scientist's Toolkit

Table 2: Essential Research Reagents & Software for Bias Analysis

| Item | Function & Relevance | Example/Tool |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit. Core for scaffold decomposition, descriptor calculation, and SA score components. | Python library (rdkit.org) |

| SA Score Fragments DB | Lookup table of fragment contributions and complexity penalties for the SA Score. Required for accurate calculation. | Fragment contributions file from Ertl & Schuffenhauer work. |

| Scaffold Analysis Library | Dedicated library for advanced scaffold clustering and diversity metrics. | scaffoldgraph (Python package) |

| Statistical Test Suite | For quantitative comparison of molecular property distributions. | scipy.stats (KS test, Wasserstein distance) |

| Visualization Library | Critical for creating comparative density plots and histograms to visually inspect bias. | matplotlib, seaborn |

| Standardized Datasets | Public, curated molecular datasets for benchmarking your metrics and establishing baselines. | ChEMBL, ZINC, PubChem |

This technical support center provides guidance for researchers implementing data-centric strategies to mitigate training data bias in deep learning molecular optimization models. These models are critical for accelerating drug discovery, but their performance is often compromised by biased chemical datasets that favor certain scaffolds or properties. The following FAQs and protocols address practical challenges in strategic data sampling and curation.

Frequently Asked Questions (FAQs)

Q1: Our generative model consistently proposes molecules with high synthetic complexity, ignoring simpler, viable candidates. What data-centric issue is likely the cause? A1: This is a classic sign of "synthetic complexity bias" in the training data, where the dataset over-represents complex, often published, "successful" molecules from medicinal chemistry journals. To diagnose, calculate the Synthetic Accessibility (SA) score distribution of your training set versus a reference set (e.g., ChEMBL). You will likely find a right-skewed distribution. The remedy involves strategic under-sampling of high-SA-score compounds and augmenting the dataset with simpler, purchasable building blocks (e.g., from the Enamine REAL space) using a diversity pick algorithm.

Q2: During active learning for molecular optimization, the acquisition function gets stuck in a local property maximum, suggesting exploration failure. How can the sampling protocol be adjusted? A2: This indicates an exploration-exploitation imbalance in your acquisition strategy. Implement a dynamic sampling protocol that blends multiple acquisition functions. A common solution is to use a probabilistic combination of Expected Improvement (EI) for exploitation and Upper Confidence Bound (UCB) or a pure diversity metric (like Maximal Marginal Relevance) for exploration. Adjust the mixture weight every cycle based on the diversity of the last batch of acquisitions.

Q3: Our property prediction model, trained on public bioactivity data, performs poorly on novel scaffold classes. What curation step was likely missed? A3: The model suffers from "scaffold bias." Public datasets (e.g., PubChem BioAssay) are heavily biased toward well-studied target families (e.g., kinases). The essential curation step is scaffold-based stratification during train/validation/test splits. You must ensure that molecules sharing a Bemis-Murcko scaffold are contained within only one of the splits. This prevents falsely optimistic performance and forces the model to learn transferable features rather than memorizing scaffold-specific patterns.

Q4: How do we quantify and report the reduction of bias in our curated dataset compared to the source data? A4: Bias reduction should be reported using multiple quantitative descriptors. Create a comparison table (see Table 1) that includes statistical measures of key molecular property distributions (MW, LogP, TPSA, etc.) and diversity metrics (internal Tanimoto similarity, scaffold counts) between the source and curated sets. Additionally, use a pretrained "bias detector" model (a classifier trained to distinguish your dataset from a reference like ZINC) and report the decrease in classification AUC.
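The "bias detector" AUC in A4 can be illustrated without committing to a particular classifier. The sketch below assumes a detector has already produced a score per molecule (higher meaning "looks like our dataset") and computes the ROC AUC as the probability that a dataset molecule outscores a reference molecule. All scores are invented for illustration.

```python
def roc_auc(scores_dataset, scores_reference):
    """AUC as the fraction of (dataset, reference) pairs ranked correctly; ties count 0.5."""
    wins = 0.0
    for s in scores_dataset:
        for r in scores_reference:
            if s > r:
                wins += 1.0
            elif s == r:
                wins += 0.5
    return wins / (len(scores_dataset) * len(scores_reference))

# Hypothetical detector scores; the reference set plays the role of ZINC.
reference_scores = [0.6, 0.3, 0.2, 0.5]
source_scores = [0.9, 0.8, 0.7, 0.4]    # before curation
curated_scores = [0.6, 0.4, 0.5, 0.3]   # after curation

auc_source = roc_auc(source_scores, reference_scores)
auc_curated = roc_auc(curated_scores, reference_scores)
print(f"AUC before: {auc_source:.3f}, after: {auc_curated:.3f}")
```

A drop toward 0.5 means the detector can no longer tell the curated set apart from the reference, which is the reduction the answer suggests reporting.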

Troubleshooting Guides

Issue: High Variance in Model Performance Across Different Data Splits

Symptoms: Model performance metrics (e.g., RMSE, ROC-AUC) change dramatically when the random seed for dataset splitting is altered. Diagnosis: The dataset has a highly non-uniform distribution of data points (e.g., clusters of highly similar compounds around specific lead series). Solution:

- Perform Cluster-Based Splitting: Use the Butina clustering algorithm (based on molecular fingerprints) to group similar molecules.

- Stratified Sampling: Allocate entire clusters to train/validation/test sets, ensuring no highly similar molecules leak across splits.

- Protocol: Use the RDKit implementation. Choose a Tanimoto similarity threshold for clustering (e.g., 0.7); RDKit's Butina clustering takes a distance cutoff, so a similarity of 0.7 corresponds to a cutoff of 0.3. Sort clusters by size. Iteratively assign each cluster to a split, aiming to maintain the desired proportional size and overall property distribution across splits.
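The cluster-to-split assignment can be sketched as a simple greedy loop. This example assumes clustering has already been done (each cluster is a list of molecule indices, as Butina clustering would return) and balances split sizes only; balancing property distributions, which the protocol also mentions, is left out of this sketch.

```python
def split_clusters(clusters, fractions=(0.8, 0.1, 0.1)):
    """Assign whole clusters to train/validation/test, largest clusters first."""
    total = sum(len(c) for c in clusters)
    targets = [f * total for f in fractions]
    splits = [[] for _ in fractions]
    for cluster in sorted(clusters, key=len, reverse=True):
        # Send the entire cluster to the split furthest below its target size.
        deficits = [t - len(s) for t, s in zip(targets, splits)]
        splits[deficits.index(max(deficits))].extend(cluster)
    return splits

# Hypothetical clusters of molecule indices (20 molecules in total).
clusters = [[0, 1, 2, 3, 4, 5], [6, 7, 8], [9, 10], [11], [12],
            [13, 14], [15, 16, 17, 18, 19]]

splits = split_clusters(clusters)
train, valid, test = splits
print(len(train), len(valid), len(test))
```

Because clusters are never divided, highly similar molecules cannot end up on both sides of a split, at the cost of split sizes that only approximate the requested fractions.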

Issue: Generative Model Exhibits Mode Collapse on a Specific Property

Symptoms: When optimizing for a target property (e.g., solubility), the model generates molecules with little structural diversity, converging on a narrow chemical space. Diagnosis: The training data has a strong spurious correlation between the target property and a specific molecular sub-structure (e.g., all highly soluble molecules in the set are sulfoxides). Solution:

- Identify Correlative Features: Use SHAP analysis on a high-performance property predictor to identify fragments/descriptors most predictive of the target property.

- Debiasing via Oversampling: For underrepresented but desirable property-feature combinations (e.g., high solubility without a sulfoxide), use a generative model (like a SMILES-based RNN) to create synthetic examples that fulfill these criteria, followed by property validation via a reliable QSPR model.

- Re-weight Training Loss: During generative model training, implement a weighted loss that up-weights the gradients from underrepresented "desirable" molecules in the curated dataset.
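One simple way to realize the re-weighting step is inverse-frequency weighting over property-feature groups. The sketch below is an assumption about how such weights might be derived, not the only option; the group labels are hypothetical, and the resulting weights would be passed to whatever per-sample loss weighting the training framework provides.

```python
from collections import Counter

def inverse_frequency_weights(groups):
    """Per-sample weights n / (k * count_g), so every group contributes equally in total."""
    counts = Counter(groups)
    n, k = len(groups), len(counts)
    return [n / (k * counts[g]) for g in groups]

# Hypothetical labels: six soluble sulfoxides, two soluble non-sulfoxides.
groups = ["soluble+sulfoxide"] * 6 + ["soluble_no_sulfoxide"] * 2

weights = inverse_frequency_weights(groups)
print(weights)
```

The weights sum to the number of samples, so the overall loss scale is unchanged while the rare group is up-weighted.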

Experimental Protocols

Protocol 1: Strategic Curation for Scaffold Diversity

Objective: Create a training dataset that maximizes scaffold diversity to improve model generalizability for molecular optimization. Materials: Raw compound dataset (e.g., from PubChem), RDKit, computing environment. Methodology:

- Standardization: Standardize all molecules using RDKit's `Chem.MolToSmiles(Chem.MolFromSmiles(smi), isomericSmiles=True, canonical=True)`.

- Scaffold Extraction: Generate Bemis-Murcko scaffolds for each molecule using RDKit's `Scaffolds.MurckoScaffold.GetScaffoldForMol(mol)`.

- Scaffold Ranking: Rank scaffolds by their frequency in the dataset.

- Diverse Sampling: From each scaffold cluster, sample a maximum of N molecules (e.g., N=50) using fingerprint-based MaxMin diversity picking to select the most diverse representatives within the cluster.

- Final Assembly: Combine the sampled subsets from all scaffolds to form the curated dataset. Validation: Calculate the number of unique scaffolds per 1000 compounds before and after curation. Aim for a significant increase.
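The diverse-sampling and assembly steps can be sketched as a capped, per-scaffold MaxMin pick. For the sake of a self-contained example, fingerprints are plain sets of on-bit indices and the greedy picker is hand-written; both are assumptions, and a production pipeline would more likely use real fingerprints and a toolkit picker such as RDKit's MaxMinPicker.

```python
def tanimoto(a, b):
    shared = len(a & b)
    union = len(a) + len(b) - shared
    return shared / union if union else 1.0

def maxmin_pick(fps, n_pick):
    """Greedy MaxMin: seed with item 0, then add the item farthest from those picked."""
    picked = [0]
    min_dist = [1.0 - tanimoto(fp, fps[0]) for fp in fps]
    while len(picked) < min(n_pick, len(fps)):
        candidates = [i for i in range(len(fps)) if i not in picked]
        nxt = max(candidates, key=lambda i: min_dist[i])
        picked.append(nxt)
        min_dist = [min(d, 1.0 - tanimoto(fp, fps[nxt])) for d, fp in zip(min_dist, fps)]
    return picked

def curate(records, max_per_scaffold):
    """records: list of (scaffold, fingerprint); returns sorted indices of kept records."""
    by_scaffold = {}
    for idx, (scaffold, _) in enumerate(records):
        by_scaffold.setdefault(scaffold, []).append(idx)
    kept = []
    for members in by_scaffold.values():
        local = maxmin_pick([records[i][1] for i in members], max_per_scaffold)
        kept.extend(members[j] for j in local)
    return sorted(kept)

# Hypothetical (scaffold, fingerprint) pairs.
records = [("A", {1, 2, 3}), ("A", {1, 2, 4}), ("A", {7, 8, 9}),
           ("A", {1, 2, 3, 4}), ("B", {5, 6})]
kept = curate(records, max_per_scaffold=2)
print(kept)
```

Here the over-represented scaffold "A" is cut from four members to its two most dissimilar ones, while the singleton scaffold "B" is kept untouched.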

Protocol 2: Active Learning Loop with Bias-Aware Acquisition

Objective: Iteratively sample from a vast unlabeled chemical pool to optimize a target property while controlling for structural bias. Materials: Initial training set, large unlabeled pool (e.g., Enamine REAL space), pre-trained base model, acquisition budget. Workflow Diagram:

Diagram Title: Bias-Aware Active Learning Workflow for Molecular Optimization

Methodology:

- Initialization: Train an initial property prediction model on the available labeled data.

- Prediction: Use the model to score molecules in the large, unlabeled pool.

- Bias-Aware Acquisition: Instead of simply picking the top-scoring molecules, rank candidates by a combined score: `Score = α * (Predicted Property) + β * (1 - MaxSimilarityToTrainingSet)`. Parameters α and β control the exploration-exploitation trade-off.

- Validation & Update: Obtain labels (experimental or from high-fidelity simulation) for the acquired batch. Add them to the training set.

- Iteration: Re-train the model and repeat steps 2-4 until the acquisition budget is exhausted. Validation: Monitor the radius of exploration in chemical space (e.g., average pairwise Tanimoto distance of acquired batches) alongside property improvement.
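The bias-aware acquisition step can be sketched as below. The example assumes predicted properties are already scaled to roughly [0, 1] so that they are comparable with the novelty term, and it represents fingerprints as sets of on-bit indices; the function name, toy pool, and batch size are all hypothetical.

```python
def tanimoto(a, b):
    shared = len(a & b)
    union = len(a) + len(b) - shared
    return shared / union if union else 1.0

def bias_aware_rank(predicted, pool_fps, train_fps, alpha=1.0, beta=1.0, batch_size=2):
    """Score = alpha * prediction + beta * (1 - max similarity to the training set)."""
    scores = []
    for pred, fp in zip(predicted, pool_fps):
        max_sim = max(tanimoto(fp, t) for t in train_fps)
        scores.append(alpha * pred + beta * (1.0 - max_sim))
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return order[:batch_size], scores

# Hypothetical training set, candidate pool, and model predictions.
train_fps = [{1, 2, 3}]
pool_fps = [{1, 2, 3}, {1, 2, 4}, {7, 8}]
predicted = [0.9, 0.8, 0.6]

batch, scores = bias_aware_rank(predicted, pool_fps, train_fps)
greedy_batch, _ = bias_aware_rank(predicted, pool_fps, train_fps, beta=0.0)
print("bias-aware:", batch, "greedy:", greedy_batch)
```

With β = 0 the selection reduces to plain top-scoring acquisition; raising β pulls structurally novel candidates into the batch even when their predicted property is lower.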

Data Summaries

Table 1: Dataset Bias Metrics Before and After Strategic Curation

| Metric | Source Dataset (PubChem for Target X) | Curated Dataset (After Protocol 1) | Ideal Reference (ZINC Diverse Set) |

|---|---|---|---|

| Number of Compounds | 15,245 | 8,112 | 10,000 |

| Unique Bemis-Murcko Scaffolds | 412 | 798 | 950 |

| Scaffold-to-Compound Ratio | 0.027 | 0.098 | 0.095 |

| Avg. Internal Tanimoto Similarity | 0.51 | 0.31 | 0.29 |

| Property Range (LogP) Coverage | 1.2 - 5.8 | 0.5 - 6.5 | -0.4 - 7.2 |

| Bias Detector AUC* (vs. ZINC) | 0.89 | 0.62 | 0.50 |

*Lower AUC indicates less bias. A perfectly unbiased set would score ~0.5.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Data-Centric Molecular Optimization Research

| Item | Function in Data Curation/Sampling | Example/Note |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule standardization, descriptor calculation, scaffold analysis, and fingerprint generation. | Fundamental for all preprocessing and analysis steps. |

| DeepChem | Deep learning library for molecular data. Provides utilities for dataset splitting, featurization, and model building tailored to chemistry. | Useful for creating standardized data pipelines. |

| Diversity-Picking Algorithms (MaxMin, SphereExclusion) | Algorithms to select a maximally diverse subset of molecules from a large collection based on molecular fingerprints. | Critical for strategic sampling to ensure broad chemical space coverage. |

| Synthetic Accessibility (SA) Score Predictors | Computational models (e.g., SAscore, RAscore) to estimate the ease of synthesizing a proposed molecule. | Used to filter out unrealistically complex proposals and bias datasets towards synthesizable space. |

| Chemistry-Aware Splitting Libraries (scaffoldsplit, butinasplit) | Pre-implemented functions to split molecular datasets based on scaffolds or clustering to prevent data leakage. | Ensures robust model evaluation and reduces overfitting to specific chemotypes. |

| Large Purchasable Chemical Libraries (e.g., Enamine REAL, MCULE) | Commercial catalogs of readily synthesizable compounds. Serve as a realistic, bias-mitigated source for virtual screening and active learning pools. | Provides a "real-world" constraint and helps ground models in practical chemistry. |

Troubleshooting Guides & FAQs

Q1: During constrained optimization, my model's performance (e.g., validity, diversity) drops catastrophically when I apply a fairness penalty. What's wrong?

A: This is often due to an incorrectly weighted fairness constraint (λ). The penalty term may dominate the primary loss.

- Step 1: Implement a validation set with metrics for both primary task performance (e.g., SCORE) and fairness (e.g., Demographic Parity Difference).

- Step 2: Perform a grid search for `λ` over a logarithmic scale (e.g., 1e-5, 1e-4, ..., 1.0). Monitor both metrics.

- Step 3: Plot a Pareto frontier to select a `λ` that offers the best trade-off.
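Once the grid search has produced a (performance, fairness gap) pair for each λ, the Pareto-optimal settings can be extracted as sketched below. The result tuples are invented numbers for illustration, and the function assumes higher performance and a lower fairness gap are both better.

```python
def pareto_front(results):
    """Keep (lam, perf, gap) entries that no other entry beats on both metrics."""
    front = []
    for lam, perf, gap in results:
        dominated = any(
            p >= perf and g <= gap and (p > perf or g < gap)
            for _, p, g in results
        )
        if not dominated:
            front.append((lam, perf, gap))
    return front

# Hypothetical validation results: (lambda, primary metric, fairness gap).
results = [
    (1e-5, 0.90, 0.30),
    (1e-4, 0.89, 0.20),
    (1e-3, 0.88, 0.22),
    (1e-2, 0.85, 0.08),
    (1e-1, 0.70, 0.05),
    (1.0, 0.40, 0.06),
]

front = pareto_front(results)
print([lam for lam, _, _ in front])
```

Only the λ values on the front are worth considering; the final choice among them depends on how much primary-task performance the project can trade for fairness.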

Q2: My in-processing debiasing method (like Adversarial Debiasing) fails to converge. The adversarial loss oscillates wildly. A: This indicates an imbalance in the training dynamics between the predictor and the adversary.

- Step 1: Adjust the learning rate ratio. Typically, the adversary should have a higher learning rate (e.g., 5-10x) than the predictor.

- Step 2: Implement gradient clipping for both networks to prevent explosive gradients.

- Step 3: Consider pre-training the predictor on the primary task for a few epochs before introducing the adversarial component.

Q3: How do I verify that my "debiased" molecular generator has actually removed the protected attribute, rather than learning to reconstruct it from other features? A: This is a critical test for leakage.

- Step 1: Train a simple classifier (e.g., a shallow MLP) only on the model's generated molecular embeddings to predict the protected attribute.

- Step 2: If this classifier's accuracy is significantly above random chance (e.g., >60% for a binary attribute), your debiasing has failed; information is leaking.

- Step 3: Incorporate this classifier as an additional adversary during training or add a mutual information penalty between embeddings and the protected attribute.

Q4: After post-processing calibration (e.g., threshold adjusting for a toxicity classifier), the model becomes unfair on a new, real-world chemical library. Why? A: Post-processing assumes the underlying data distribution between training and deployment is consistent. This often fails in molecular optimization where novel chemical spaces are explored.

- Solution: Shift to in-processing or pre-processing methods. If post-processing is mandatory, implement dynamic calibration using a small, representative sample from the target chemical library to re-estimate the optimal thresholds.

Q5: My pre-processed, "debiased" training data leads to a model with poor generalization on held-out test data. A: The debiasing operation (e.g., resampling, instance reweighting) may have removed or down-weighted chemically important but statistically correlated examples, hurting model capacity.

- Step 1: Compare the distributions of key molecular descriptors (e.g., QED, SAscore, molecular weight) between the original and debiased datasets.

- Step 2: If the debiased set lacks diversity, consider a hybrid approach. Use a fairness-aware data augmentation technique (like SMILES enumeration constrained by fairness) to expand the dataset rather than just resampling.

| Metric | Formula/Purpose | Target Range (Molecular Optimization) | Typical Baseline (Biased Model) |

|---|---|---|---|

| Demographic Parity Difference | ΔDP = P(Ŷ=1 \| A=0) - P(Ŷ=1 \| A=1) | \|ΔDP\| < 0.05 | Can be > 0.3 |

| Equalized Odds Difference | Avg. of \|TPR_A0 - TPR_A1\| and \|FPR_A0 - FPR_A1\| | < 0.1 | Often > 0.2 |

| Predictive Performance (AUC-ROC) | Area Under ROC Curve | > 0.85 (for classification) | Varies |

| Generated Molecule Validity | % chemically valid SMILES | > 98% | > 99% (may drop with constraints) |

| Novelty | % gen. molecules not in training set | > 80% | Can be > 90% |
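The two fairness metrics in the table can be computed as in the sketch below, which follows the table's definitions (absolute gap in positive-prediction rate, and the average of the TPR and FPR gaps). Labels, predictions, and group assignments are toy values; fairness toolkits may define or aggregate these metrics differently.

```python
def rate(values):
    """Mean of a list of 0/1 values; 0.0 for an empty list."""
    return sum(values) / len(values) if values else 0.0

def demographic_parity_difference(y_pred, group):
    r0 = rate([p for p, a in zip(y_pred, group) if a == 0])
    r1 = rate([p for p, a in zip(y_pred, group) if a == 1])
    return abs(r0 - r1)

def equalized_odds_difference(y_true, y_pred, group):
    def group_rate(a, label):
        # Positive-prediction rate among members of group a with the given true label.
        return rate([p for t, p, g in zip(y_true, y_pred, group) if g == a and t == label])
    tpr_gap = abs(group_rate(0, 1) - group_rate(1, 1))
    fpr_gap = abs(group_rate(0, 0) - group_rate(1, 0))
    return (tpr_gap + fpr_gap) / 2

# Toy data: group 0 and group 1 are the two scaffold classes (protected attribute A).
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
group = [0, 0, 0, 0, 1, 1, 1, 1]

dpd = demographic_parity_difference(y_pred, group)
eod = equalized_odds_difference(y_true, y_pred, group)
print(f"DPD = {dpd:.2f}, EOD = {eod:.2f}")
```

Both values here are far above the target ranges in the table, which is what a strongly scaffold-biased model would look like.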

Key Experimental Protocol: In-Processing Adversarial Debiasing for a Molecular Generator

Objective: Train a generative model (e.g., a VAE or RNN) to produce molecules with a desired property (high solubility) while ensuring the rate of generation is independent of a protected molecular scaffold (e.g., presence of a privileged substructure).

Protocol:

- Data Preparation: Label your dataset with the protected attribute `A` (0 or 1) based on scaffold membership.

- Model Architecture:

- Primary Generator (G): A sequence model (e.g., GRU) that outputs a molecule SMILES.

- Property Predictor (P): A network that takes the generator's latent vector `z` and predicts the primary property (solubility).

- Adversary (Adv): A simple network that takes the same latent vector `z` and predicts the protected attribute `A`.

- Training Loop:

- Step A: Freeze `Adv`, update `G` and `P` to maximize property prediction accuracy and minimize `Adv`'s ability to predict `A` (using a gradient reversal layer or negative loss weight).

- Step B: Freeze `G`, update `Adv` to correctly predict `A` from `z`.

- Evaluation: Generate a large sample of molecules. Calculate the Demographic Parity Difference (DPD) for the property of interest (e.g., % of molecules with predicted solubility > threshold) across the two scaffold groups.

Visualization: Adversarial Debiasing Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Algorithmic Debiasing Experiments |

|---|---|

| Fairness Toolkits (Fairlearn, AIF360) | Provide pre-implemented algorithms (post-processing, reduction) and metrics for rapid prototyping and benchmarking. |

| Deep Learning Framework (PyTorch/TensorFlow) | Essential for building custom in-processing architectures (e.g., adversarial networks with gradient reversal layers). |

| RDKit | Computes molecular descriptors, fingerprints, and validates SMILES strings. Critical for defining protected attributes (scaffolds) and evaluating generated molecules. |

| Chemical Checker (or similar) | Provides pre-computed, uniform bioactivity signatures. Used to define primary optimization objectives beyond simple properties. |

| Molecular Datasets (e.g., ZINC, ChEMBL) | Source of biased real-world data. Requires careful curation and labeling of protected attributes (e.g., by scaffold, molecular weight bin, historical patent status). |

| Hyperparameter Optimization (Optuna, Ray Tune) | Crucial for tuning the trade-off parameter λ between primary loss and fairness constraint to find the optimal Pareto-efficient model. |

Active Learning and Data Augmentation to Explore Chemical Space Gaps

Troubleshooting Guides and FAQs

FAQ 1: My active learning loop appears to be sampling very similar molecules repeatedly, failing to explore new regions of chemical space. What is the cause and solution?

- Answer: This is a classic symptom of acquisition function collapse or model overconfidence in a narrow region. Common causes include:

- Inadequate Initial Diversity: The seed training set lacks sufficient structural diversity.

- Exploitation Imbalance: The acquisition function (e.g., Expected Improvement) is overly weighted towards exploitation vs. exploration.

- Model Bias: The underlying deep learning model reports meaningful epistemic uncertainty only in regions similar to its training data and is overconfident elsewhere, so uncertainty-driven acquisition never reaches distant regions.

- Solution Protocol: Implement a hybrid acquisition strategy. Combine an exploitation metric (e.g., predicted property value) with an explicit diversity metric (e.g., Tanimoto distance to the existing training set). Use a weighted sum: `Score = α * Predicted_Property + (1-α) * Diversity_Score`. Start with α=0.5 and adjust. Periodically (e.g., every 3 cycles) inject purely diverse molecules selected via farthest-point sampling from the current pool.

FAQ 2: After applying rule-based data augmentation (e.g., SMILES randomization), my model's performance on the hold-out test set degraded. Why?

- Answer: This indicates the introduction of semantic noise rather than meaningful variance. Not all SMILES strings of the same molecule are equally informative for training. Augmentation might be creating hard-to-learn representations.

- Solution Protocol: Do not use naive SMILES enumeration. Implement constrained augmentation.

- Canonicalize all SMILES in your original set.

- For augmentation, use a single, consistent method like DeepSMILES or SELFIES to generate one alternative representation per molecule during training only.

- Use a regularization technique like Dropout in the encoder to simulate representation variance, rather than relying solely on input-level augmentation.

- Validate augmentation by ensuring the model's latent space clusters all representations of the same molecule closely before proceeding.

FAQ 3: The generative model used for data augmentation proposes molecules that are chemically invalid or unstable. How can I filter or guide this process?

- Answer: This is a failure in the post-generation validation pipeline. The generative model (e.g., VAE, GAN) operates on syntax, not chemical rules.

- Solution Protocol: Implement a strict, multi-stage filtering workflow for any generated candidate:

- Syntax Check: Parse the generated string (SMILES/SELFIES).

- Validity Check: Use RDKit's `Chem.MolFromSmiles()` to ensure a valid molecule object is created.

- Sanity Filter: Apply basic chemical filters (e.g., the RDKit-based `rd_filters` tool) to remove molecules with undesired functional groups or reactivity.

- Physical Property Filter: Filter by simple calculable properties (e.g., LogP between 1-5, molecular weight <500 Da).

- Deduplication: Remove duplicates and molecules too similar (Tanimoto >0.8) to existing training data.

- Toolkit Recommendation: Integrate the `chocolate` or `molecule` libraries for standardized filtering.

FAQ 4: How do I quantitatively know if I have successfully addressed a "chemical space gap" in my dataset?

- Answer: You must compare the distribution of key molecular descriptors before and after active learning/augmentation.

- Experimental Protocol:

- Calculate a set of 2D molecular descriptors (e.g., from RDKit: MW, LogP, TPSA, number of rotatable bonds, rings, HBD/HBA) for: a) Original Data, b) Augmented/Active Learning Data.

- Perform Principal Component Analysis (PCA) on the combined descriptor matrix.

- Visualize the PCA space. Successful gap-filling will show new clusters or increased density in previously sparse regions.

- Quantitative Metric: Calculate the Coverage Ratio. Define your target chemical space (e.g., bounds of PCA components covering 95% of a reference library like ChEMBL). Measure the percentage of bins in this gridded space occupied by your final dataset vs. your initial dataset.
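The Coverage Ratio can be sketched as a grid-occupancy count over the first two principal components. The example assumes the PCA projection has already been computed and that the reference bounds are given; the coordinates, bounds, and bin count are hypothetical.

```python
def coverage_ratio(points, bounds, bins=10):
    """Fraction of grid cells inside `bounds` that contain at least one point."""
    (x_min, x_max), (y_min, y_max) = bounds
    occupied = set()
    for x, y in points:
        if not (x_min <= x <= x_max and y_min <= y <= y_max):
            continue  # ignore points outside the reference region
        col = min(int((x - x_min) / (x_max - x_min) * bins), bins - 1)
        row = min(int((y - y_min) / (y_max - y_min) * bins), bins - 1)
        occupied.add((row, col))
    return len(occupied) / (bins * bins)

# Hypothetical 2D PCA coordinates and reference-library bounds.
bounds = ((0.0, 4.0), (0.0, 4.0))
initial = [(0.5, 0.5), (1.0, 1.5)]
final = initial + [(3.0, 3.0), (3.5, 0.5)]

cov_initial = coverage_ratio(initial, bounds, bins=2)
cov_final = coverage_ratio(final, bounds, bins=2)
print(f"coverage: {cov_initial:.2f} -> {cov_final:.2f}")
```

The bin count strongly affects the absolute value, so the same grid should be used for the before and after measurements.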

Data Presentation

Table 1: Comparison of Active Learning Strategies for Exploring Gaps

| Strategy | Acquisition Function | Exploration Metric | Avg. Improvement in Target Property (pIC50) | Diversity (Avg. Tanimoto Distance) | Computational Cost (GPU-hr/cycle) |

|---|---|---|---|---|---|

| Greedy (Exploit Only) | Expected Improvement (EI) | N/A | 0.45 ± 0.12 | 0.15 ± 0.04 | 1.5 |

| ε-Greedy | EI + Random | 20% Random Selection | 0.38 ± 0.15 | 0.41 ± 0.08 | 1.7 |

| Diversity-Guided | EI + Cluster-Based | Max-Min Distance | 0.32 ± 0.10 | 0.68 ± 0.05 | 2.3 |

| Hybrid (UCB-Inspired) | EI + β * σ | Predictive Uncertainty (σ) | 0.49 ± 0.09 | 0.52 ± 0.07 | 1.9 |

Data simulated from typical benchmark studies (e.g., on the SARS-CoV-2 main protease dataset).

Table 2: Impact of Data Augmentation Techniques on Model Robustness

| Augmentation Method | Validity Rate (%) | Test Set RMSE (No Augmentation = 1.00) | Latent Space Intra-Cluster Distance (↓ is better) |

|---|---|---|---|

| None (Baseline) | 100 | 1.00 | 0.85 |

| SMILES Enumeration (Random) | 100 | 1.15 | 1.42 |

| SELFIES (Deterministic) | 100 | 0.98 | 0.51 |

| RDKit Random Mutation (5%) | 87 | 0.92 | 0.78 |

| Rule-Based Scaffold Hopping | 96 | 0.88 | 0.67 |

Metrics evaluated on the QM9 dataset after training a property prediction model. RMSE normalized to baseline.

Experimental Protocols

Protocol 1: Implementing a Diversity-Guided Active Learning Cycle

- Initialization: Start with a small, diverse seed dataset D_seed (~100-500 molecules).

- Model Training: Train a probabilistic deep learning model (e.g., a Bayesian Neural Network or a model with dropout for uncertainty estimation) on the current dataset.

- Candidate Pool Generation: Use a generative model (e.g., a fine-tuned JT-VAE) or a large commercial library (e.g., Enamine REAL) to create a pool P of candidate molecules (~10,000).

- Acquisition:

a. Predict the target property and its uncertainty (μ, σ) for all molecules in P.

b. Calculate the pairwise Tanimoto fingerprint distance between all molecules in P and the current training set D.

c. For each molecule i in P, compute a composite score: `S_i = μ_i + λ * σ_i + γ * (min distance of i to D)`.

d. Select the top k (e.g., 50) molecules with the highest S_i for experimental validation.

- Validation & Iteration: Add the newly validated compounds and their properties to D. Return to Step 2. Iterate for a predefined number of cycles (e.g., 10).

Protocol 2: Rule-Based Data Augmentation for Scaffold Hopping

- Identify Core Scaffold: For each molecule in the dataset, identify its Bemis-Murcko scaffold using RDKit (`GetScaffoldForMol`).

- Define Replacement Rules: Create a dictionary of bioisosteric replacements (e.g., carboxylic acid → tetrazole, phenyl → thiophene).

- Apply Transformation: For a selected molecule, disconnect the side-chain(s) from the scaffold at attachment points. Apply a randomly selected rule from the dictionary to modify the scaffold core, ensuring valence satisfaction.

- Reattach & Validate: Reconnect the original side-chains. Check the new molecule for chemical validity (RDKit sanitization), synthetic accessibility (SA Score < 4.5), and novelty (Tanimoto similarity to origin < 0.6).

- Add to Dataset: If all checks pass, add the new molecule to the augmented dataset. Aim for 1-3 successful variants per original molecule.

Visualizations

Diagram: Active Learning Cycle for Bias Mitigation

Diagram: Data Augmentation Pathways for Chemical Space

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment | Example/Notes |

|---|---|---|

| Probabilistic Deep Learning Library | Provides models capable of estimating predictive uncertainty (epistemic). | Pyro (PyTorch) or TensorFlow Probability. Enables Bayesian Neural Networks. |

| Cheminformatics Toolkit | Handles molecule I/O, descriptor calculation, fingerprinting, and basic transformations. | RDKit (Open-source). Essential for filtering, scaffold analysis, and rule-based operations. |

| Generative Model Framework | Generates novel molecular structures for the active learning candidate pool. | JT-VAE or REINVENT, which can be fine-tuned on initial data. The GuacaMol benchmark can be used to evaluate the generator. |

| Diversity Selection Algorithm | Implements farthest-point or cluster-based sampling from a molecular pool. | Scikit-learn (sklearn.metrics.pairwise_distances, sklearn.cluster.KMeans). |

| Synthetic Accessibility Scorer | Filters out generated molecules that are likely impossible or very difficult to synthesize. | SA Score (RDKit Contrib implementation) or RAscore. |

| High-Throughput Simulation Suite | Provides in silico property/activity predictions for initial screening of candidates. | AutoDock Vina (docking), Schrödinger Suite, or OpenMM (MD simulations). |

| Active Learning Loop Manager | Orchestrates the iterative cycle of training, prediction, acquisition, and data update. | Custom Python scripts using PyTorch Lightning or DeepChem pipelines. |

Implementing Transfer Learning from Large, Biased to Small, Balanced Sets

Technical Support Center: Troubleshooting Guides & FAQs

Frequently Asked Questions (FAQs)

Q1: My source model, pre-trained on a large public dataset like ZINC20, performs poorly when I try to fine-tune it on my small, balanced, proprietary assay dataset. The predictions are no better than random. What is the likely cause? A: This is a classic symptom of severe dataset shift and task mismatch. The pre-training data (e.g., 2D molecular scaffolds from ZINC20) likely has a very different chemical space distribution and objective (e.g., next-token prediction) than your target task (e.g., predicting a specific bioactivity). You must implement a robust feature extraction and adapter layer strategy, not just fine-tune the final layers.

Q2: During transfer, my model's loss on the small balanced validation set spikes and becomes unstable. How can I mitigate this? A: This is often due to an aggressive learning rate. The pre-trained weights are a strong prior; large updates can destroy useful features. Use the following protocol:

- Freeze all pre-trained layers initially.

- Train only randomly initialized task-specific head layers for 2-5 epochs with a base LR (e.g., 1e-3).

- Unfreeze the top 2-3 layers of the backbone and train with a significantly reduced LR (e.g., 1e-5), using a scheduler like CosineAnnealingLR.

- Monitor loss closely; if instability recurs, reduce the LR further.
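To make the staged learning-rate schedule concrete, here is a small sketch of the closed-form cosine annealing rule applied after the head-only warm-up. The function names, epoch counts, and `eta_min=0` are illustrative assumptions. The formula is the standard cosine annealing expression that PyTorch's `CosineAnnealingLR` documents for a schedule without restarts.

```python
import math


def cosine_annealing_lr(step, t_max, base_lr, eta_min=0.0):
    """Closed-form cosine annealing: base_lr at step 0, eta_min at step t_max."""
    return eta_min + 0.5 * (base_lr - eta_min) * (1.0 + math.cos(math.pi * step / t_max))


def staged_lr(epoch, head_epochs=3, finetune_epochs=10, head_lr=1e-3, backbone_lr=1e-5):
    """Return (phase, learning rate) for a given epoch of the two-stage protocol."""
    if epoch < head_epochs:
        # Stage 1: backbone frozen, only the new head is trained.
        return "head_only", head_lr
    # Stage 2: top backbone layers unfrozen, LR decays along a cosine curve.
    step = epoch - head_epochs
    return "top_layers_unfrozen", cosine_annealing_lr(step, finetune_epochs, backbone_lr)


schedule = [staged_lr(epoch) for epoch in range(13)]
for epoch, (phase, lr) in enumerate(schedule):
    print(f"epoch {epoch:2d}  {phase:20s}  lr={lr:.2e}")
```

If the validation loss still spikes after unfreezing, lowering `backbone_lr` is the first knob to try, in line with the last step above.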

Q3: How do I quantify and compare the bias present in my large source dataset versus my target dataset? A: You must perform a statistical distribution analysis. Create summary tables of key molecular descriptors. Below is a representative comparison from a study on ERK2 inhibitors.

Table 1: Comparative Analysis of Dataset Bias: ChEMBL (Source) vs. Balanced Proprietary Set (Target)

| Molecular Descriptor | ChEMBL (Large, Biased Source) | Balanced Target Set |

|---|---|---|

| Sample Size | ~1.2 million compounds | 5,120 compounds |

| Mean Molecular Weight (Da) | 357.8 ± 45.2 | 412.5 ± 68.7 |

| Mean LogP | 3.1 ± 1.5 | 2.4 ± 1.8 |

| Scaffold Diversity (Bemis-Murcko) | 0.72 | 0.95 |

| Active:Inactive Ratio | ~1:1000 (Highly Biased) | 1:1 (Balanced) |
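A summary like the table above can be produced with a short script. The sketch below rests on several assumptions: each record is a dict with hypothetical keys `mw`, `logp`, `scaffold`, and `active`; scaffold diversity is taken to be the fraction of unique scaffolds, which may not match the definition behind the table; and the standardized mean difference is added here as one simple way to express how far apart two descriptor distributions are.

```python
from math import sqrt
from statistics import mean, stdev


def summarize(records):
    """Summarize descriptor statistics for one dataset (list of dicts)."""
    n = len(records)
    mw = [r["mw"] for r in records]
    logp = [r["logp"] for r in records]
    return {
        "n": n,
        "mw_mean": mean(mw), "mw_sd": stdev(mw),
        "logp_mean": mean(logp), "logp_sd": stdev(logp),
        # Assumption: diversity = unique scaffolds / number of molecules.
        "scaffold_diversity": len({r["scaffold"] for r in records}) / n,
        "active_fraction": sum(1 for r in records if r["active"]) / n,
    }


def standardized_mean_difference(a, b):
    """(mean(b) - mean(a)) divided by the pooled standard deviation."""
    pooled_sd = sqrt((stdev(a) ** 2 + stdev(b) ** 2) / 2)
    return (mean(b) - mean(a)) / pooled_sd


# Toy records (hypothetical values, not taken from any real dataset).
source = [
    {"mw": 350.0, "logp": 3.0, "scaffold": "c1ccccc1", "active": False},
    {"mw": 360.0, "logp": 3.2, "scaffold": "c1ccccc1", "active": False},
    {"mw": 340.0, "logp": 2.8, "scaffold": "c1ccncc1", "active": False},
    {"mw": 350.0, "logp": 3.0, "scaffold": "c1ccccc1", "active": True},
]
target = [
    {"mw": 400.0, "logp": 2.4, "scaffold": "c1ccccc1", "active": True},
    {"mw": 420.0, "logp": 2.0, "scaffold": "c1ccncc1", "active": False},
    {"mw": 410.0, "logp": 2.8, "scaffold": "c1ccoc1", "active": True},
    {"mw": 410.0, "logp": 2.4, "scaffold": "c1ccsc1", "active": False},
]

src, tgt = summarize(source), summarize(target)
smd_mw = standardized_mean_difference(
    [r["mw"] for r in source], [r["mw"] for r in target]
)
print(src, tgt, smd_mw)
```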

Q4: What is the best strategy to prevent the model from simply memorizing the bias of the large source dataset? A: Implement bias-discarding pretraining or adversarial debiasing. A key protocol is Gradient Reversal Layer (GRL) integration:

- Joint Training: The feature extractor (f) feeds into two heads: (a) the main predictor for your target task (bioactivity), and (b) a bias predictor that tries to classify the source of the data (e.g., which subset of ChEMBL).

- Adversarial Objective: During training, the feature extractor is updated to maximize the loss of the bias predictor (via the GRL) while minimizing the loss of the main predictor; the bias predictor itself is still trained to minimize its own loss. This forces f to learn features invariant to the dataset bias.

- Loss Function: L_total = L_task(θ_f, θ_t) - λ * L_bias(θ_f, θ_b), where λ is a scaling factor. This objective is minimized with respect to θ_f and θ_t, while θ_b is trained to minimize L_bias.

Diagram: Adversarial Debiasing with a Gradient Reversal Layer (GRL)

Q5: I have limited computational resources. Is full fine-tuning of a large pre-trained model necessary?

A: No. For many molecular tasks, parameter-efficient fine-tuning (PEFT) methods are highly effective. Consider Low-Rank Adaptation (LoRA) for transformer-based models. Instead of updating all weights (W), LoRA injects trainable rank-decomposition matrices (A and B) into attention layers, so h = Wx + BAx. Only A and B are updated, drastically reducing trainable parameters.
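The LoRA update h = Wx + BAx can be illustrated without any deep learning framework. The sketch below uses plain Python lists as matrices; the helper names and the tiny dimensions are hypothetical. It follows the common convention of initializing B to zeros, so the adapted layer starts out identical to the frozen one. In a real project a library such as `peft` would normally handle this.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x):
    """Compute h = W x + B (A x); W stays frozen, only A and B would be trained."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    return [b + u for b, u in zip(base, update)]


def lora_param_counts(d_out, d_in, rank):
    """Return (parameters in W, trainable parameters in A and B)."""
    return d_out * d_in, rank * (d_in + d_out)


d_out, d_in, rank = 4, 6, 2
W = [[0.1 * (i + j) for j in range(d_in)] for i in range(d_out)]  # frozen weights
A = [[0.01 * (j + 1) for j in range(d_in)] for _ in range(rank)]  # rank x d_in
B = [[0.0] * rank for _ in range(d_out)]                          # d_out x rank, zero init
x = [1.0] * d_in

h_before = lora_forward(W, A, B, x)  # equals W x because B is all zeros

B[0][0] = 1.0                        # pretend one training step changed B
h_after = lora_forward(W, A, B, x)

full, trainable = lora_param_counts(768, 768, 8)
print(h_before, h_after, full, trainable)
```

For a 768 x 768 attention projection with rank 8, this gives 12,288 trainable parameters against 589,824 in the full matrix, which is where the savings come from.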

Experimental Protocols

Protocol 1: Standard Two-Phase Transfer Learning for Molecular Optimization

Objective: Transfer knowledge from a model pre-trained on a large, biased molecular dataset to a small, balanced, target-specific dataset.

- Pre-trained Model Acquisition: Download a publicly available model (e.g., ChemBERTa, pre-trained on 77M SMILES from PubChem).

- Data Preprocessing: Standardize your small, balanced target dataset (SMILES → token IDs). Apply the same normalization and tokenization that were used during the source model's training.

- Model Modification: Replace the pre-trained model's final output layer with a new, randomly initialized head matching your task (e.g., binary classification layer).

- Phase 1 - Feature Extraction: Freeze all layers of the pre-trained backbone. Train only the new head for 10-20 epochs on the target data. Use AdamW (LR=1e-3) with early stopping.

- Phase 2 - Fine-Tuning: Unfreeze the last k layers (e.g., last 3 transformer blocks) of the backbone. Train the unfrozen layers together with the head for a limited number of epochs (5-15) with a low LR (5e-5). Monitor validation loss to avoid overfitting.

- Evaluation: Report key metrics (AUC-ROC, Precision, Recall, F1) on a held-out test set from the target distribution.

Diagram: Standard Two-Phase Transfer Learning Protocol

Protocol 2: Implementing Adversarial Debiasing with a GNN Backbone

Objective: Learn bias-invariant molecular representations during transfer.

- Model Architecture: Use a pre-trained GNN (e.g., GROVER) as the shared feature encoder (f). Build two MLP heads: H_task (for bioactivity) and H_bias (for predicting a biased attribute, e.g., molecular weight bin or source dataset).

- Gradient Reversal Layer (GRL): Insert a GRL between f and H_bias. This layer acts as the identity during the forward pass but multiplies the gradient by -λ during the backward pass.

- Training Loop:

a. Forward pass a batch through f.

b. Compute task loss (L_task: e.g., Cross-Entropy) via H_task.

c. Compute bias loss (L_bias: e.g., Cross-Entropy) via H_bias and the GRL.

d. Compute total loss: L = L_task + L_bias. (Note: The GRL already applies the factor -λ to the gradient that reaches f, so the bias term needs no additional sign or weight here.)

e. Perform backpropagation and update parameters for f, H_task, and H_bias.

- Hyperparameter Tuning: The coefficient λ controls the trade-off. Start with λ = 0.1 and tune via a small validation set.
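The effect of the gradient reversal layer can be checked by hand on a scalar toy model. The sketch below is a deliberately simplified illustration with manual gradients, not a GNN implementation. The encoder is z = w * x, the bias head is p = v * z with a squared-error loss, and the task head is omitted. All names and numbers are hypothetical, and λ is applied only inside the GRL. In a framework such as PyTorch, the same behavior is usually written as a custom autograd function whose backward pass returns the negated, scaled gradient.

```python
def grl_forward(z):
    """Gradient reversal layer, forward pass: identity."""
    return z


def grl_backward(grad_z, lam):
    """Gradient reversal layer, backward pass: multiply the gradient by -lam."""
    return -lam * grad_z


def bias_loss(w, v, x, d):
    """Squared-error bias loss for encoder z = w * x and bias head p = v * z."""
    z = grl_forward(w * x)
    return 0.5 * (v * z - d) ** 2


def adversarial_step(w, v, x, d, lam=0.1, lr=0.1):
    """One gradient step on the bias branch only (task head omitted for brevity)."""
    z = w * x
    err = v * grl_forward(z) - d
    grad_v = err * z                          # bias head: ordinary gradient
    grad_z = err * v                          # gradient arriving at the GRL
    grad_w = grl_backward(grad_z, lam) * x    # encoder: reversed, scaled gradient
    return w - lr * grad_w, v - lr * grad_v


w, v, x, d = 1.0, 0.5, 2.0, 3.0
w_new, v_new = adversarial_step(w, v, x, d)

loss_start = bias_loss(w, v, x, d)
loss_encoder_only = bias_loss(w_new, v, x, d)  # encoder moved, head fixed
loss_head_only = bias_loss(w, v_new, x, d)     # head moved, encoder fixed
print(loss_start, loss_encoder_only, loss_head_only)
```

The encoder update raises the bias loss while the head update lowers it, which is the tug-of-war the protocol relies on: the head keeps trying to predict the biased attribute, and the encoder keeps removing the information it needs.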

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Transfer Learning Experiments in Molecular Optimization

| Item / Solution | Function & Relevance |

|---|---|

| Pre-trained Models (ChemBERTa, GROVER, MoFlow) | Provide a strong foundation of general chemical knowledge, reducing the need for vast target-domain data. Act as the starting "backbone". |

| RDKit or ChemPy | Open-source cheminformatics toolkits for generating molecular descriptors, fingerprinting, and data preprocessing to analyze dataset bias. |

| Deep Learning Framework (PyTorch, TensorFlow) | Essential for implementing custom training loops, gradient reversal layers, and parameter-efficient fine-tuning modules. |

| Weights & Biases (W&B) / MLflow | Experiment tracking platforms to log metrics, hyperparameters, and model outputs across many transfer learning runs. Critical for reproducibility. |

| Gradient Reversal Layer (GRL) Implementation | A custom module, typically a few lines written with the framework's custom-gradient mechanism rather than a built-in layer, that facilitates adversarial debiasing by inverting gradient signs. |

| Low-Rank Adaptation (LoRA) Libraries | Parameter-efficient fine-tuning libraries (e.g., peft for PyTorch) that modify pre-trained models to inject and train low-rank adapter matrices. |

| Molecular Dataset Suites (ZINC, ChEMBL, PubChem) | Large-scale, potentially biased source datasets for pre-training or constructing biased pre-training tasks. |

| High-Performance Computing (HPC) / Cloud GPU | Computational resources (e.g., NVIDIA V100/A100) necessary for fine-tuning large transformer or GNN models, even with small target data. |

Diagnosing and Fixing a Biased Molecular Optimization Model

Welcome to the Technical Support Center. Here you will find troubleshooting guides and FAQs for identifying and mitigating data bias in deep learning molecular optimization models.

Troubleshooting FAQs

Q1: How can I tell if my molecular property predictions are biased toward overrepresented chemical scaffolds in my training set?

A: Perform a scaffold-split analysis. Partition your test set by Bemis-Murcko scaffolds and compare performance metrics across scaffold groups. A marked degradation (e.g., lower R², higher RMSE) on scaffolds that are underrepresented in training is a critical red flag.

Experimental Protocol: Scaffold-Split Validation

- Generate Bemis-Murcko scaffolds for all molecules in your dataset.

- Identify the N most frequent scaffolds in the training set.

- Create a "Common Scaffolds" test set from molecules containing these top N scaffolds but held out from training.

- Create a "Rare/Novel Scaffolds" test set from molecules whose scaffolds are not in the top N.

- Train your model on the main training set.

- Evaluate and compare model performance metrics on both test sets.

Expected Data Pattern Indicating Bias:

| Test Set Category | Number of Compounds | Model Performance (R²) | Model Performance (RMSE) |

|---|---|---|---|

| Common Scaffolds | 5,000 | 0.85 | 0.32 |

| Rare/Novel Scaffolds | 2,000 | 0.45 | 0.89 |
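The partitioning logic of this protocol is easy to prototype once scaffolds have been computed. The sketch below assumes scaffolds are already available as strings (for example, scaffold SMILES produced by a cheminformatics toolkit). The function names and toy values are hypothetical.

```python
from collections import Counter
from math import sqrt


def scaffold_split(train_scaffolds, test_records, top_n):
    """Split held-out molecules into common-scaffold and rare/novel-scaffold groups.

    train_scaffolds: one scaffold string per training molecule.
    test_records: (molecule_id, scaffold) pairs held out from training.
    """
    top = {s for s, _ in Counter(train_scaffolds).most_common(top_n)}
    common = [mol for mol, scaf in test_records if scaf in top]
    rare = [mol for mol, scaf in test_records if scaf not in top]
    return common, rare


def rmse(y_true, y_pred):
    """Root-mean-square error between two equal-length sequences."""
    return sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


# Toy data: scaffold "A" dominates training, "D" never appears in it.
train_scaffolds = ["A"] * 5 + ["B"] * 3 + ["C"]
test_records = [("m1", "A"), ("m2", "C"), ("m3", "B"), ("m4", "D")]
y_true = {"m1": 1.0, "m2": 2.0, "m3": 3.0, "m4": 4.0}
y_pred = {"m1": 1.1, "m2": 3.0, "m3": 2.9, "m4": 2.5}  # hypothetical predictions

common, rare = scaffold_split(train_scaffolds, test_records, top_n=2)
rmse_common = rmse([y_true[m] for m in common], [y_pred[m] for m in common])
rmse_rare = rmse([y_true[m] for m in rare], [y_pred[m] for m in rare])
print(common, rare, rmse_common, rmse_rare)
```

A large gap between `rmse_common` and `rmse_rare` would correspond to the pattern shown in the table above.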

Q2: My model works well in validation but fails in prospective screening. What's wrong?

A: This is a classic sign of dataset shift or annotation bias. Your training data likely does not reflect the true chemical space or experimental noise of real-world applications. The problem is common in public bioactivity datasets (e.g., ChEMBL), where "active" compounds are oversampled and measurement protocols vary.

Experimental Protocol: Negative Control & Domain Shift Detection

- Train a Simple Baseline: Train a shallow model (e.g., Random Forest on Morgan fingerprints) alongside your deep learning model.

- Generate Negative Controls: Curate a "decoy" set of molecules presumed inactive for your target, ensuring they are property-matched but chemically distinct from your actives.

- Test on External Benchmarks: Use recently published, high-confidence external test sets from different labs.

- Compare the performance gap between your deep model and the simple baseline. If the deep model degrades more severely on external/decoy sets, it likely learned dataset-specific artifacts rather than generalizable structure-activity relationships.

Diagnostic Results Table:

| Model Type | Internal Validation AUC | External Benchmark AUC | Decoy Set Enrichment (EF1%) |

|---|---|---|---|

| Deep Neural Network | 0.92 | 0.65 | 1.5 |

| Random Forest (Baseline) | 0.87 | 0.78 | 8.2 |
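Two of the quantities in this diagnostic are simple to compute by hand. The sketch below shows one common definition of the enrichment factor at 1% (hit rate among the top-ranked 1% divided by the overall hit rate), together with the internal-to-external AUC gap calculated from the values in the table above. The function names and the toy ranking are hypothetical.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """Hit rate in the top-scoring fraction divided by the overall hit rate."""
    n = len(scores)
    n_top = max(1, round(fraction * n))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits_top = sum(label for _, label in ranked[:n_top])
    total_hits = sum(labels)
    if total_hits == 0:
        raise ValueError("enrichment factor is undefined without any actives")
    return (hits_top / n_top) / (total_hits / n)


def generalization_gap(internal_auc, external_auc):
    """Drop in AUC when moving from internal validation to an external benchmark."""
    return internal_auc - external_auc


# Toy ranking: 200 molecules, 10 actives, exactly one active in the top 1%.
scores = [1.0 - i / 200 for i in range(200)]
active_idx = {0, 3, 10, 20, 30, 40, 50, 60, 70, 80}
labels = [1 if i in active_idx else 0 for i in range(200)]
ef1 = enrichment_factor(scores, labels, fraction=0.01)

# AUC values taken from the diagnostic table above.
gap_dnn = generalization_gap(0.92, 0.65)
gap_rf = generalization_gap(0.87, 0.78)
print(ef1, gap_dnn, gap_rf)
```

Here the deep model loses 0.27 AUC on the external benchmark against 0.09 for the baseline, which is the asymmetry the protocol asks you to look for.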

Q3: How do I check for population imbalance bias in generative molecular optimization?

A: Analyze the latent space of your generative model (e.g., VAE) and the distribution of generated molecules.

Experimental Protocol: Latent Space & Distribution Analysis

- Encode your training set and a large set of generated molecules into the latent space.