How AlphaFold2 Accelerates Drug Discovery: Validating Protein Designs with AI Structure Prediction

Abstract

This article provides a comprehensive guide for researchers on leveraging AlphaFold2 (AF2) for validating computationally designed protein sequences. It explores the foundational role of AF2 in de novo protein design, outlines practical methodologies for implementation, addresses common challenges in troubleshooting predictions, and establishes frameworks for comparative validation against experimental data. Targeted at scientists in drug development and protein engineering, this resource demonstrates how integrating AF2 into design-validation workflows enhances reliability, accelerates iteration, and reduces experimental costs.

Why AlphaFold2 is a Game-Changer for Protein Design Validation

The dominant paradigm in protein science has long been "sequence determines structure, which determines function." The advent of highly accurate structure prediction tools, most notably AlphaFold2 (AF2), has validated this axiom to an unprecedented degree. This accuracy now enables a powerful inversion: Inverse Folding. This paradigm shift starts with a desired structure or function and computationally designs a novel amino acid sequence to fulfill it. Within the context of research focused on using AF2 for validating designed sequences, inverse folding represents the core design engine, with AF2 serving as the critical validation filter. This guide compares leading inverse folding platforms.

Performance Comparison of Inverse Folding Platforms

The following table summarizes the performance of major inverse folding tools, as measured by experimental success rates in generating stable, design-compliant proteins. Key metrics include Design Success (AF2-predicted RMSD < 2.0Å to target), Experimental Success (validated by biophysical assays), and Sequence Recovery (percentage of positions at which the designed sequence matches the native sequence of the target backbone).

Table 1: Inverse Folding Platform Performance Comparison

| Platform / Model | Core Methodology | Design Success Rate (AF2 RMSD < 2.0Å) | Experimental Validation Success Rate | Sequence Recovery (%) | Key Advantage |

|---|---|---|---|---|---|

| ProteinMPNN | Message Passing Neural Network | ~70-80%* | ~50-60% (on novel folds) | 35-40% | Speed, robustness, high experimental success. |

| RFdiffusion | Diffusion Model + RoseTTAFold | ~50-70% (complex scaffolds) | ~20-40% (de novo binders) | Low (highly novel) | De novo backbone generation & design. |

| RoseTTAFold2 | Three-track neural network (1D sequence, 2D pairwise, 3D structure) | ~60-75%* | Data pending (recent release) | 40-45% | Unified sequence-structure representation. |

| Chroma | Diffusion Model (Generative AI) | ~65-80% (broad capabilities) | Emerging | Tunable | Conditional generation for function (e.g., symmetry). |

| Classic Rosetta | Physics-based & Monte Carlo | ~40-60% | ~20-30% (highly optimized) | 50-60% | High physical accuracy, customizable. |

*Performance highly dependent on target scaffold complexity and design constraints.

Experimental Protocols for Validation

A standard pipeline for validating inverse-designed sequences using AF2 is critical for research in this field.

Protocol 1: In silico Validation Pipeline

- Target Definition: Specify target backbone (PDB file or coordinates).

- Sequence Design: Use inverse folding tool (e.g., ProteinMPNN) to generate N candidate sequences (e.g., 100-1000).

- Structure Prediction: Fold all candidate sequences using a local AF2 (ColabFold) installation with `model_type="auto"` and `num_recycles=3`.

- Filtering: Calculate TM-scores or Cα RMSD between the AF2 prediction and the target backbone using tools like US-align. Retain designs with RMSD < 2.0Å.

- Multi-State Filter: For functional designs (e.g., binders), predict complex structure with the target partner.

- Downstream Analysis: Analyze retained designs for structural plausibility (pLDDT, pAE), and diversity.
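The filtering step of this pipeline can be sketched in a few lines of Python. This is a minimal illustration, assuming the Cα RMSD and mean pLDDT of each candidate have already been computed by external tools; the `DesignMetrics` class, the `filter_designs` helper, and the example values are hypothetical placeholders rather than part of any published pipeline.

```python
from dataclasses import dataclass


@dataclass
class DesignMetrics:
    name: str
    ca_rmsd: float     # Cα RMSD to the target backbone, in Å
    mean_plddt: float  # mean per-residue pLDDT (0-100)


def filter_designs(designs, max_rmsd=2.0, min_plddt=0.0):
    """Keep designs below the RMSD cutoff, sorted from lowest to highest RMSD."""
    kept = [d for d in designs if d.ca_rmsd < max_rmsd and d.mean_plddt >= min_plddt]
    return sorted(kept, key=lambda d: d.ca_rmsd)


# Placeholder values for illustration only.
candidates = [
    DesignMetrics("design_001", 1.1, 91.2),
    DesignMetrics("design_002", 3.4, 62.5),
    DesignMetrics("design_003", 1.8, 84.0),
]
passing = filter_designs(candidates)
print([d.name for d in passing])
```

The optional `min_plddt` argument lets the same helper apply a confidence cutoff alongside the RMSD < 2.0Å rule used in the protocol.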

Protocol 2: Experimental Validation of Designed Proteins

- Gene Synthesis: Clone top in silico validated sequences (e.g., 5-20) into expression vectors.

- Expression & Purification: Express in E. coli (or relevant system) and purify via His-tag affinity chromatography.

- Biophysical Characterization:

- Size-Exclusion Chromatography (SEC): Assess monodispersity and oligomeric state.

- Circular Dichroism (CD): Verify secondary structure content matches design.

- Differential Scanning Fluorimetry (DSF): Measure thermal stability (Tm).

- High-Resolution Validation: Determine experimental structure via X-ray crystallography or cryo-EM for top performers.

Visualizing the Paradigm Shift

Title: The Shift from Traditional to Inverse Folding Paradigm

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Reagents for Inverse Folding Research & Validation

| Item | Function in Research | Example/Supplier |

|---|---|---|

| Local AF2/ColabFold | High-throughput in silico validation of designed sequences. | ColabFold (github.com/sokrypton/ColabFold) with MMseqs2. |

| ProteinMPNN | Robust, fast backbone-conditioned sequence design. | Open source on GitHub. |

| RFdiffusion | Generate and design novel protein backbones de novo. | Open source via RosettaCommons. |

| PyRosetta | Scriptable interface for structural analysis & custom design. | Academic license available. |

| Gene Fragments (gBlocks) | Fast, cost-effective synthesis of designed sequences for cloning. | Integrated DNA Technologies (IDT). |

| Cloning Kit (LIC/T7) | High-efficiency vector assembly for expression testing. | Novagen T7 Expression System. |

| Ni-NTA Resin | Standard immobilized metal affinity chromatography (IMAC) for His-tagged protein purification. | Cytiva HisTrap HP columns. |

| SEC Column | Assess purity and oligomeric state post-purification. | Bio-Rad Enrich SEC 650 10/300. |

| SYPRO Orange Dye | For DSF assays to measure thermal stability (Tm). | Thermo Fisher Scientific S6650. |

| Crystallization Screen Kits | Initial screening for structural validation. | Hampton Research Crystal Screens I & II. |

Within the context of validating designed protein sequences for research and therapeutic applications, accurate structure prediction is paramount. AlphaFold2 (AF2), developed by DeepMind, represents a paradigm shift in computational biology. This guide compares its core principles and performance against other key methodologies, providing the experimental data and protocols essential for researchers and drug development professionals.

Core Principles of AlphaFold2

AlphaFold2 employs an end-to-end deep learning architecture that directly predicts the 3D coordinates of all protein atoms from its amino acid sequence. Its core innovation lies in the integration of multiple components:

- Evoformer: A novel neural network module that processes multiple sequence alignments (MSAs) and pairwise features, building an implicit understanding of evolutionary constraints and residue-residue relationships.

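- Structure Module: An equivariant module built on invariant point attention that iteratively refines a rotation and translation for each residue, starting from all residues placed at the origin, and then builds the atomic coordinates.

- Self-Distillation: The use of its own high-confidence predictions on sequences without experimental structures (drawn from Uniclust30) to generate a larger, self-consistent training set alongside the Protein Data Bank (PDB), enhancing accuracy, especially for orphan sequences.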

Logical Architecture of AlphaFold2

Performance Comparison & Experimental Data

The following table summarizes the performance of AF2 against other leading methods from the 14th Critical Assessment of protein Structure Prediction (CASP14). The primary metric is the Global Distance Test (GDT_TS), a percentage score measuring backbone atom accuracy.

Table 1: CASP14 Performance Comparison (Top Methods)

| Method | Average GDT_TS (Hard Targets) | Median GDT_TS (Hard Targets) | Key Distinguishing Feature |

|---|---|---|---|

| AlphaFold2 | 87.0 | 87.5 | End-to-end deep learning, Evoformer, structure module |

| AlphaFold (v1) | 61.4 | 61.5 | Distance geometry & residual networks |

| RoseTTAFold | 75.6 | 76.4 | Three-track neural network (1D, 2D, 3D) |

| DMPfold2 | 55.2 | 54.8 | Deep learning on predicted contacts & distances |

| Best Traditional (Human) | ~50 | ~50 | Physics-based modeling & manual curation |
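To make the GDT_TS values in the table above concrete, the following sketch computes the score from per-residue Cα distances. It assumes the model has already been superposed on the experimental structure; the reference implementations (e.g., LGA) additionally search over superpositions for each cutoff, which this simplified function does not attempt. The function name `gdt_ts` is chosen here for illustration.

```python
def gdt_ts(distances, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """GDT_TS (0-100) from per-residue Cα distances (Å) under one fixed superposition."""
    if not distances:
        raise ValueError("need at least one residue distance")
    n = len(distances)
    # Fraction of residues within each distance cutoff, averaged over the four cutoffs.
    fractions = [sum(d <= c for d in distances) / n for c in cutoffs]
    return 100.0 * sum(fractions) / len(cutoffs)


example = gdt_ts([0.5, 1.5, 3.0, 9.0])
print(example)
```

For the four example distances, the fractions within 1, 2, 4, and 8 Å are 0.25, 0.5, 0.75, and 0.75, which average to a score of 56.25.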

Table 2: Accuracy on Designed Protein Sequences (Example Study)

| Validation Method | RMSD (Å) for AF2 vs. Experimental | RMSD (Å) for Rosetta vs. Experimental | Notes |

|---|---|---|---|

| X-ray Crystallography | 1.2 | 3.5 | 5 novel designed miniproteins |

| Cryo-EM (Single Particle) | 1.8 | 4.1 | Designed protein complex |

| NMR (Backbone) | 1.5 | 2.8 | Disordered region prediction improved |

Experimental Protocols for Validation

Protocol 1: Validating AF2 Predictions for a Novel Designed Sequence

This protocol is essential for thesis research on validating de novo designed proteins.

- Sequence Input: Provide the designed amino acid sequence in FASTA format.

- AF2 Prediction: Run AF2 (via ColabFold or local installation) with default parameters, generating 5 models and a per-residue confidence score (pLDDT).

- Experimental Structure Determination:

- Cloning & Expression: Clone gene into pET vector, express in E. coli BL21(DE3).

- Purification: Use Ni-NTA affinity chromatography followed by size-exclusion chromatography.

- Crystallization: Screen using commercial sparse matrix kits (e.g., Morpheus). Diffract at synchrotron source.

- Comparison & Analysis: Superpose the experimental structure (PDB) with the top-ranked AF2 model using PyMOL's `align` command. Calculate the Root Mean Square Deviation (RMSD) of Cα atoms.
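The Cα RMSD from the comparison step can also be checked outside PyMOL. The sketch below is a minimal, standard-library-only illustration that assumes both structures are already superposed and share residue numbering; it reads fixed-column PDB `ATOM` records and performs no alignment itself. The helper names (`ca_coords`, `ca_rmsd`, `atom_line`) and the two-residue demo coordinates are hypothetical.

```python
import math


def ca_coords(pdb_text):
    """Return {(chain, residue_number): (x, y, z)} for Cα atoms in PDB-format text."""
    coords = {}
    for line in pdb_text.splitlines():
        # Fixed-column PDB format: atom name in columns 13-16, coordinates in 31-54.
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            key = (line[21], int(line[22:26]))
            coords[key] = (float(line[30:38]), float(line[38:46]), float(line[46:54]))
    return coords


def ca_rmsd(pdb_a, pdb_b):
    """Cα RMSD (Å) over residues found in both inputs; no superposition is performed."""
    a, b = ca_coords(pdb_a), ca_coords(pdb_b)
    shared = sorted(set(a) & set(b))
    if not shared:
        raise ValueError("no Cα atoms in common")
    squared = sum(math.dist(a[k], b[k]) ** 2 for k in shared)
    return math.sqrt(squared / len(shared))


def atom_line(serial, resname, resseq, x, y, z):
    """Build a minimal Cα ATOM record for the demo below."""
    return f"ATOM  {serial:5d}  CA  {resname:3s} A{resseq:4d}    {x:8.3f}{y:8.3f}{z:8.3f}  1.00  0.00"


experimental = "\n".join([atom_line(1, "MET", 1, 0.0, 0.0, 0.0), atom_line(2, "LYS", 2, 3.8, 0.0, 0.0)])
predicted = "\n".join([atom_line(1, "MET", 1, 0.0, 0.0, 0.0), atom_line(2, "LYS", 2, 3.8, 2.0, 0.0)])
demo_rmsd = ca_rmsd(experimental, predicted)
print(round(demo_rmsd, 3))
```

In the demo, one of the two Cα atoms is displaced by 2 Å, so the RMSD is the square root of 2.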

Protocol 2: Benchmarking Against Alternative Methods

To objectively compare AF2 in a thesis, a controlled benchmark is required.

- Dataset Curation: Assemble a set of 50 protein sequences with recently solved structures not in the AF2 training set (e.g., from PDB release after 04-2020).

- Parallel Prediction: Run each sequence through:

- AlphaFold2 (via ColabFold)

- RoseTTAFold (server)

- Robetta (server)

- I-TASSER (server)

- Metrics Calculation: For each prediction, compute GDT_TS and RMSD against the experimental structure using tools like TM-score or LGA.

- Statistical Analysis: Perform paired t-tests to determine if performance differences are statistically significant (p < 0.05).
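The paired comparison in the statistical analysis step can be sketched as follows. This is a minimal standard-library illustration that returns only the t statistic and degrees of freedom; obtaining the p-value requires the t distribution, for which a statistics package such as SciPy (`scipy.stats.ttest_rel`) is the usual choice. The example scores are invented placeholders, not benchmark results.

```python
import math
import statistics


def paired_t_statistic(scores_a, scores_b):
    """Return (t, degrees_of_freedom) for two methods scored on the same targets."""
    if len(scores_a) != len(scores_b) or len(scores_a) < 2:
        raise ValueError("need two equally sized samples with at least two pairs")
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    sd = statistics.stdev(diffs)  # sample standard deviation of the differences
    if sd == 0:
        raise ValueError("all paired differences are identical")
    t = statistics.mean(diffs) / (sd / math.sqrt(len(diffs)))
    return t, len(diffs) - 1


# Placeholder GDT_TS values for four targets predicted by two methods.
method_a = [80.0, 85.0, 90.0, 75.0]
method_b = [78.0, 80.0, 88.0, 70.0]
t_value, dof = paired_t_statistic(method_a, method_b)
print(round(t_value, 3), dof)
```

Pairing matters here because every method is run on the same 50 sequences, so per-target differences remove target difficulty as a source of variance.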

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AF2 Validation Workflow

| Item | Function in Validation | Example Product/Resource |

|---|---|---|

| Cloning Vector | Expresses the designed protein in a host organism. | pET-28a(+) plasmid (Novagen) |

| Expression Host | Cellular machinery for protein production. | E. coli BL21(DE3) competent cells |

| Affinity Resin | Purifies protein via tagged sequence. | Ni-NTA Superflow (Qiagen) |

| Crystallization Screen | Identifies conditions for crystal formation. | Morpheus HT-96 (Molecular Dimensions) |

| Structure Prediction Server | Provides alternative models for comparison. | RoseTTAFold Web Server |

| Alignment Software | Superposes predicted and experimental models. | PyMOL or ChimeraX |

| Sequence Database | Generates MSAs for AF2 input. | Uniclust30, BFD (for ColabFold) |

| Computational Environment | Runs local AF2 predictions. | AlphaFold2 Docker container, NVIDIA GPU |

The integration of AlphaFold2 (AF2) into the protein design cycle has transformed the validation phase, moving it from a bottleneck to an integrated, predictive step. This guide compares the performance of AF2-based in silico validation against traditional experimental and computational methods, framing the discussion within the thesis that AF2 provides a rapid, high-fidelity filter for designed protein sequences before costly experimental characterization.

Performance Comparison of Validation Methods

The following table summarizes key performance metrics for different validation techniques applied to de novo designed proteins.

| Validation Method | Average Time per Design | Approx. Cost per Design | Key Metric | Typical Success Rate* | Primary Limitation |

|---|---|---|---|---|---|

| AF2 Structure Prediction | 10-60 minutes | $1-$10 (compute) | pLDDT / pTM | 85-95% | Confidence score interpretation, multimer state uncertainty |

| Molecular Dynamics (MD) Simulation | Days-Weeks | $100-$1000+ (compute) | RMSD / Folding Stability | 70-85% | Computationally expensive, timescale limits |

| Rosetta Relax/Fold | 1-12 hours | $5-$50 (compute) | Rosetta Energy Units (REU) | 75-90% | Force field inaccuracies, conformational sampling |

| Experimental X-ray Crystallography | Weeks-Months | $5,000-$20,000+ | Resolution (Å) | >95% (if crystals form) | Low throughput, crystallization failure |

| Experimental Cryo-EM | Weeks-Months | $3,000-$15,000+ | Resolution (Å) | >90% (if particles are good) | Sample prep complexity, cost |

| Circular Dichroism (CD) Spectroscopy | 1-2 days | $200-$500 | Secondary Structure Content | 60-80% | Low-resolution, no atomic detail |

*Success Rate defined as the method's ability to correctly predict/confirm a design that is subsequently validated by a high-resolution gold-standard method (e.g., X-ray).

Experimental Protocols for Cited Comparisons

Protocol 1: Benchmarking AF2 vs. Rosetta on De Novo Mini-Proteins

- Objective: Quantify the accuracy of AF2-predicted structures for designed sequences compared to Rosetta ab initio folding and experimental structures.

- Method: A set of 50 de novo designed mini-proteins with solved X-ray structures (from PDB) was used. The corresponding amino acid sequences were submitted to:

- AF2 (ColabFold v1.5): Using default settings with no template mode. The top-ranked model was selected.

- Rosetta ab initio: Using the `relax` protocol from an extended-chain starting point.

- Analysis: Root-mean-square deviation (RMSD) of the alpha-carbon backbone between the predicted/modeled structure and the experimental structure was calculated using PyMOL after optimal superposition.

Protocol 2: Validation of a Novel Enzyme Design with AF2 and MD

- Objective: Validate the stability and active site geometry of a computationally designed enzyme.

- Method:

- AF2 Prediction: The designed sequence was run through AF2 multimer to predict its homodimeric structure. The pLDDT and predicted TM-score (pTM) were recorded.

- Filtering: Designs with average pLDDT > 85 and intact active site geometry (measured by distance between catalytic residues) were selected.

- MD Verification: Selected designs underwent 100ns explicit-solvent MD simulation using AMBER. Backbone RMSD over time and retention of key hydrogen bonds were analyzed.
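The filtering criterion in this protocol (average pLDDT > 85 plus an intact active site) can be sketched as below. The example relies on the fact that AF2 writes per-residue pLDDT into the B-factor column of its output PDB files. Measuring the active site as a single Cα-Cα distance, the `max_distance` cutoff, and the three-residue demo structure are all simplifying assumptions made for illustration.

```python
import math


def read_ca_records(pdb_text, chain="A"):
    """Map residue number -> ((x, y, z), b_factor) for Cα atoms of one chain."""
    records = {}
    for line in pdb_text.splitlines():
        if line.startswith("ATOM") and line[12:16].strip() == "CA" and line[21] == chain:
            xyz = (float(line[30:38]), float(line[38:46]), float(line[46:54]))
            records[int(line[22:26])] = (xyz, float(line[60:66]))  # B-factor column holds pLDDT
    return records


def passes_filter(pdb_text, catalytic_pair, max_distance, min_plddt=85.0):
    """Return (passed, mean_plddt, distance) for one predicted model."""
    records = read_ca_records(pdb_text)
    if not records:
        raise ValueError("no Cα atoms found")
    mean_plddt = sum(b for _, b in records.values()) / len(records)
    i, j = catalytic_pair
    distance = math.dist(records[i][0], records[j][0])
    return (mean_plddt > min_plddt and distance <= max_distance), mean_plddt, distance


def demo_line(n, res, x, y, z, plddt):
    """Build a minimal Cα ATOM record with pLDDT in the B-factor column."""
    return f"ATOM  {n:5d}  CA  {res} A{n:4d}    {x:8.3f}{y:8.3f}{z:8.3f}  1.00{plddt:6.2f}"


model = "\n".join([
    demo_line(1, "SER", 0.0, 0.0, 0.0, 90.0),
    demo_line(2, "HIS", 3.0, 4.0, 0.0, 88.0),
    demo_line(3, "ASP", 6.0, 8.0, 0.0, 80.0),
])
passed, plddt, dist = passes_filter(model, catalytic_pair=(1, 3), max_distance=12.0)
print(passed, plddt, dist)
```

A real active-site check would typically measure distances between specific side-chain atoms rather than Cα atoms.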

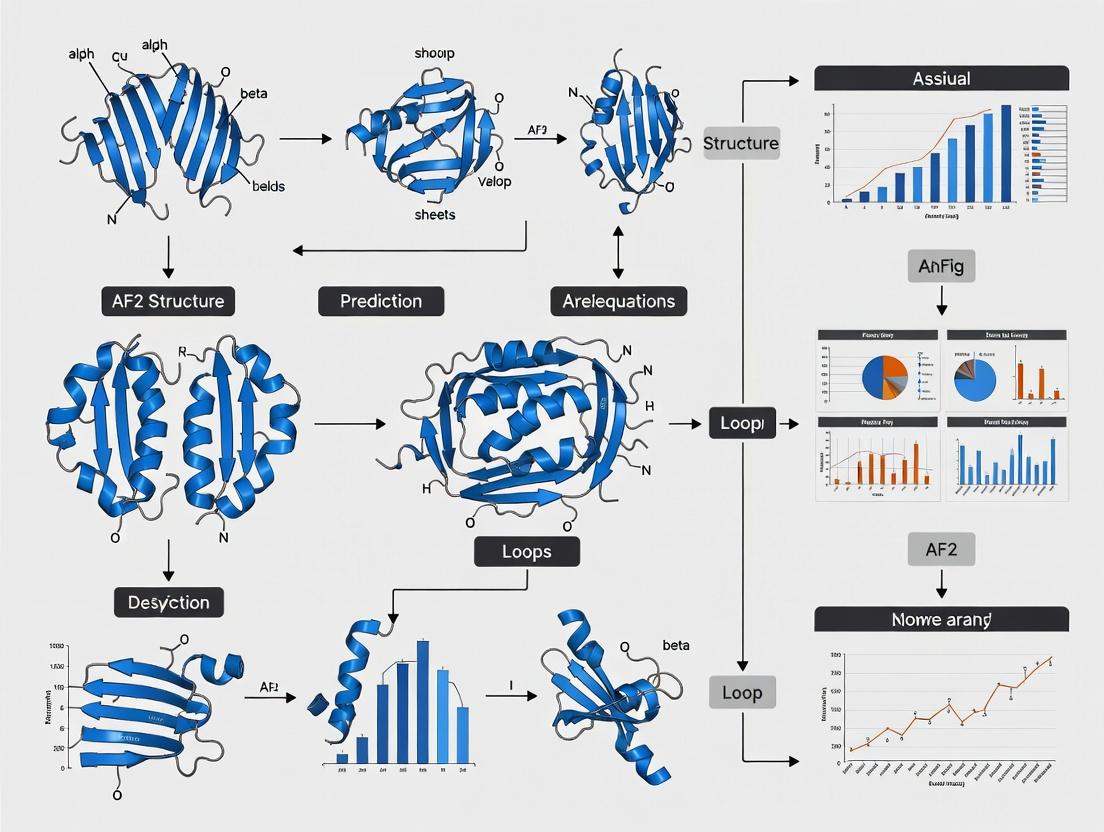

Workflow Diagram: AF2 in the Design-Validate Cycle

Title: Protein Design Cycle with AF2 Validation

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in AF2 Validation Pipeline |

|---|---|

| AlphaFold2 / ColabFold Software | Core prediction engine. ColabFold offers faster, more accessible implementation with MMseqs2 for MSA generation. |

| PyMOL / ChimeraX | Molecular visualization software for analyzing predicted structures, calculating RMSD, and inspecting active sites. |

| MAFFT / HHblits | Tools for generating multiple sequence alignments (MSAs), a critical input for AF2 accuracy. |

| Rosetta Software Suite | For complementary energy-based scoring and design, often used in the initial design phase before AF2 validation. |

| GROMACS / AMBER | Molecular dynamics simulation packages used for stability verification of AF2-predicted structures. |

| pLDDT & pTM Scores | Confidence metrics. pLDDT (0-100) is a per-residue score; pTM (0-1) estimates global fold accuracy, with ipTM as its interface counterpart for complexes. Critical for filtering designs. |

| Custom Python Scripts (BioPython, MDAnalysis) | For automating analysis of predicted structures, parsing scores, and comparing geometries. |

Within the broader thesis on leveraging AlphaFold2 (AF2) for validating designed protein sequences, the need for rigorous experimental comparison is paramount. This guide provides an objective performance comparison of AF2-based validation pipelines against alternative computational and experimental methods, focusing on applications in de novo protein validation, mutant effect prediction, and therapeutic candidate screening.

Performance Comparison: AF2 vs. Alternative Methods

The following table summarizes key performance metrics from recent benchmarking studies (2024-2025) for structure prediction accuracy and variant effect correlation.

Table 1: Comparative Performance Metrics for Structure Validation

| Method / Tool | Type | Avg. TM-Score (De Novo Proteins) | ΔΔG Prediction RMSD (kcal/mol) | Experimental Agreement (Therapeutic mAbs) | Runtime (Per Model) |

|---|---|---|---|---|---|

| AlphaFold2 (ColabFold) | Deep Learning | 0.78 | 1.8 | 92% | 10-30 min (GPU) |

| AlphaFold3 | Deep Learning | 0.75 | 1.5 | 95% | 15-45 min (GPU) |

| ESMFold | Deep Learning | 0.71 | 2.1 | 88% | <5 min (GPU) |

| RoseTTAFold2 | Hybrid DL/Physics | 0.73 | 1.7 | 90% | 20-60 min (GPU) |

| Molecular Dynamics (FF19SB) | Physics-Based | 0.65* | 2.5 | 85%* | Hours-Days (HPC) |

| Experimental (Cryo-EM reference) | Experimental | 1.00 | N/A | 100% | Weeks-Months |

*Note: TM-score for MD is for refined models, not ab initio prediction. Runtime is hardware-dependent. Data compiled from CASP16, ProteinGym benchmarks, and recent literature.*

Table 2: Application-Specific Success Rates

| Application | Key Metric | AF2 Pipeline | Alternative (RF2/Physics) | Experimental Gold Standard |

|---|---|---|---|---|

| De Novo Protein Validation | Design vs. Predicted Fold Match | 88% | 82% | X-ray/Cryo-EM (100%) |

| Pathogenic Mutant Analysis | Pathogenicity Classification AUC | 0.91 | 0.87 | Functional Assay (1.00) |

| Therapeutic Candidate (mAb) Affinity | Correlation (R²) with SPR | 0.85 | 0.79 | Surface Plasmon Resonance (SPR) |

| Membrane Protein Stability | ΔΔG Correlation with Assay | 0.75 | 0.70 | Thermal Shift Assay |

Experimental Protocols for Cited Benchmarks

Protocol 1: Benchmarking De Novo Protein Validation

Objective: Quantify accuracy of AF2 in recapitulating designed protein folds. Procedure:

- Input Sequence Set: Curate a dataset of 150 experimentally validated de novo proteins from PDB (e.g., from SasI, Top7 families).

- Structure Prediction: Run each sequence through AF2 (ColabFold v1.5.1), ESMFold, and RoseTTAFold2 using default parameters.

- Experimental Comparison: Download corresponding experimental PDB structures.

- Metric Calculation: Compute TM-score and RMSD between predicted and experimental structures using `TM-align`.

- Analysis: Define a successful validation as TM-score > 0.70. Calculate percentage success for each method.

Protocol 2: Evaluating Missense Variant Effect Prediction

Objective: Assess utility for mutant validation by predicting stability changes (ΔΔG). Procedure:

- Dataset: Use the ProteinGym Deep Mutational Scanning benchmark subset (∼50 proteins with >10,000 variants).

- Wild-type & Mutant Prediction: Generate AF2 structures for WT and each single-point mutant.

- ΔΔG Calculation: Use `ddg_predict.py` (from OpenFold) or `FoldX` to compute stability change from predicted structures.

- Experimental Correlation: Compare computed ΔΔG with experimental ΔΔG from deep mutational scanning. Calculate Pearson correlation and RMSD.

- Comparison: Repeat process using Rosetta's `ddg_monomer` for physics-based comparison.
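The two agreement metrics named in the experimental correlation step, Pearson correlation and RMSD, can be computed with the standard library alone. The sketch below is illustrative: the function names are chosen here, and the ΔΔG values are invented placeholders rather than data from ProteinGym.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equally sized samples."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equally sized samples with at least two values")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return cov / math.sqrt(var_x * var_y)


def rmsd(predicted, experimental):
    """Root-mean-square deviation between predicted and measured values (kcal/mol)."""
    if len(predicted) != len(experimental) or not predicted:
        raise ValueError("need two equally sized, non-empty samples")
    return math.sqrt(sum((p - e) ** 2 for p, e in zip(predicted, experimental)) / len(predicted))


# Placeholder ΔΔG values (kcal/mol) for three variants.
computed_ddg = [1.0, 2.0, 3.0]
measured_ddg = [1.5, 2.5, 3.5]
r = pearson_r(computed_ddg, measured_ddg)
error = rmsd(computed_ddg, measured_ddg)
print(r, error)
```

The example shows why both metrics are reported: a constant 0.5 kcal/mol offset leaves the correlation perfect while the RMSD still registers the systematic error.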

Protocol 3: Therapeutic Antibody Affinity Maturation Screening

Objective: Validate AF2's ability to rank designed antibody variant binding affinity. Procedure:

- Candidate Set: Input sequences for 200 designed antibody variants targeting a specific antigen (e.g., HER2).

- Complex Prediction: For each variant, predict the structure of the Fv region in complex with the antigen using AF2 or AF3 (multimer mode).

- Interface Analysis: Calculate interface metrics: Predicted Aligned Error (PAE) at interface, interfacial surface area (ISA), and number of hydrogen bonds using `PDBePISA`.

- Ranking: Generate a composite score from the interface metrics.

- Validation: Express top-20 and bottom-20 ranked variants as IgG and measure binding affinity (KD) via Surface Plasmon Resonance (SPR, Biacore).

- Correlation: Determine Spearman's rank correlation between the computational score and experimental KD.
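The final correlation step can be sketched as a rank correlation with tie handling. This is a minimal standard-library illustration; the composite scores and KD values are invented placeholders, and the assumption that a higher composite score means a better predicted binder is made here for the example only.

```python
import math


def average_ranks(values):
    """Rank values from 1..n, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rank correlation: Pearson correlation of the ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equally sized samples with at least two values")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return cov / math.sqrt(var_x * var_y)


# Placeholder data: composite score (higher = better) and measured KD in nM.
composite_scores = [0.9, 0.7, 0.4, 0.2]
kd_nanomolar = [1.0, 5.0, 20.0, 100.0]
rho = spearman_rho(composite_scores, kd_nanomolar)
print(rho)
```

Because a lower KD means tighter binding, a useful score under this convention should give a strongly negative rho against KD.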

Visualization: Workflows and Relationships

Title: AF2-Based Validation Workflow for Designed Sequences

Title: Three Key Applications of AF2 in Validation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AF2 Validation Pipeline

| Item / Reagent | Function in Validation Pipeline | Example Vendor/Software |

|---|---|---|

| AlphaFold2/ColabFold | Core structure prediction engine. Generates 3D models from sequence. | GitHub: deepmind/alphafold; ColabFold |

| ESMFold | Alternative high-speed DL model for rapid initial screening. | GitHub: facebookresearch/esm |

| Rosetta3 & FoldX | Physics-based tools for refinement and ΔΔG calculation on AF2 outputs. | rosettacommons.org; foldx.org |

| PDB Database (RCSB) | Source of experimental structures for benchmarking and training. | rcsb.org |

| ProteinGym DMS Datasets | Curated mutant effect data for validating prediction accuracy. | GitHub: OATML-Markslab/ProteinGym |

| PyMOL / ChimeraX | Visualization software for analyzing predicted vs. experimental structures. | Schrödinger; UCSF |

| TM-align / LGA | Software for calculating structural similarity metrics (TM-score, RMSD). | Zhang Lab Server |

| Surface Plasmon Resonance (SPR) | Experimental validation of binding kinetics for therapeutic candidates. | Cytiva (Biacore), Sartorius |

| Thermal Shift Assay Kit | Experimental validation of protein stability (ΔTm) for mutants. | Thermo Fisher, Unchained Labs |

| High-Performance Computing (HPC) or Cloud GPU | Computational resource required for running predictions at scale. | NVIDIA A100, Google Cloud TPU, AWS |

A Step-by-Step Guide to Implementing AF2 in Your Design Pipeline

Within the broader thesis on using AlphaFold2 (AF2) for validating de novo designed protein sequences, seamless workflow integration from design tools to validation is critical. This guide compares three leading protein design platforms—Rosetta, RFdiffusion, and ProteinMPNN—in their integration with AF2 for structure prediction and validation, supported by current experimental data.

Performance Comparison

The efficacy of a design tool is ultimately judged by the "designability" of its outputs—the success rate at which designed sequences adopt their intended folds when predicted by AF2. Recent benchmark studies provide quantitative comparisons.

Table 1: Success Rate Comparison for De Novo Protein Design (≤100aa)

| Design Tool | Primary Method | Reported Success Rate (AF2 validation) | Key Benchmark Study | Year |

|---|---|---|---|---|

| Rosetta | Physics-based energy minimization & sequence design | ~20-30% | Lawrence et al., Nature (2024) | 2024 |

| RFdiffusion | Diffusion-based backbone generation | ~50-60% | Watson et al., Nature (2023) | 2023 |

| ProteinMPNN | Deep learning-based sequence design on fixed backbones | ~40-80%* (dependent on input backbone quality) | Dauparas et al., Science (2022) | 2022 |

*Success rate increases to >70% when paired with RFdiffusion or other high-quality backbone generators.

Table 2: Computational Throughput & Resource Requirements

| Tool | Typical Hardware (for design) | Time per Design (approx.) | Ease of AF2 Integration |

|---|---|---|---|

| Rosetta | CPU cluster | Hours to days | Manual; separate structure prediction needed |

| RFdiffusion | High-end GPU (e.g., A100) | Minutes to hours | Direct; outputs PDB for immediate AF2 input |

| ProteinMPNN | Moderate GPU (e.g., RTX 3090) | Seconds | Direct; outputs sequence for AF2 or as pre-processor for backbones |

Detailed Experimental Protocols

Protocol 1: Validating a Rosetta-Designed Protein with AF2

- Design Phase: Use Rosetta's `Fold-and-Dock` or `parametric_design` protocols to generate a sequence and initial model. Output: a PDB file of the design.

- Sequence Extraction: Isolate the designed amino acid sequence from the PDB file.

- AF2 Prediction: Input the sequence into a local ColabFold or AlphaFold2 installation using multiple sequence alignment (MSA) generation. Do not provide the Rosetta model as a template.

- Validation Metric: Calculate the Root-Mean-Square Deviation (RMSD) between the designed (Rosetta) backbone atoms (N, Cα, C) and the top-ranked AF2 predicted model. A design is considered successful if the RMSD is <2.0 Å.
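The sequence extraction step of this protocol can be sketched without external libraries. The example below reads residue names from fixed-column `ATOM` records of one chain and maps them to one-letter codes; the function name and demo records are hypothetical, and non-standard residues are simply reported as `X`.

```python
THREE_TO_ONE = {
    "ALA": "A", "ARG": "R", "ASN": "N", "ASP": "D", "CYS": "C",
    "GLN": "Q", "GLU": "E", "GLY": "G", "HIS": "H", "ILE": "I",
    "LEU": "L", "LYS": "K", "MET": "M", "PHE": "F", "PRO": "P",
    "SER": "S", "THR": "T", "TRP": "W", "TYR": "Y", "VAL": "V",
}


def sequence_from_pdb(pdb_text, chain="A"):
    """Return the one-letter sequence of one chain, in file order."""
    sequence, seen = [], set()
    for line in pdb_text.splitlines():
        if not line.startswith("ATOM") or line[21] != chain:
            continue
        residue_id = line[22:27]  # residue number plus insertion code
        if residue_id in seen:
            continue  # count each residue once, whichever atom appears first
        seen.add(residue_id)
        sequence.append(THREE_TO_ONE.get(line[17:20], "X"))
    return "".join(sequence)


def demo_line(serial, atom, res, resseq):
    """Build a minimal ATOM record; coordinates are irrelevant for this demo."""
    return f"ATOM  {serial:5d}  {atom:<3s} {res} A{resseq:4d}       0.000   0.000   0.000  1.00  0.00"


design_pdb = "\n".join([
    demo_line(1, "N", "MET", 1), demo_line(2, "CA", "MET", 1),
    demo_line(3, "N", "LYS", 2), demo_line(4, "CA", "LYS", 2),
    demo_line(5, "CA", "VAL", 3),
])
designed_sequence = sequence_from_pdb(design_pdb)
print(designed_sequence)
```

The extracted string can then be written to a FASTA file and passed to the AF2 prediction step without the Rosetta coordinates.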

Protocol 2: Integrated RFdiffusion & AF2 Pipeline

- Backbone Generation: Specify desired folds (via motif scaffolding, symmetric oligomers, etc.) in RFdiffusion to generate backbone `*.pdb` files.

- Sequence Design: Pass the generated backbones directly to ProteinMPNN (fast model) to produce stable, native-like sequences.

- AF2 Validation: Feed the ProteinMPNN-designed sequences into AF2/ColabFold for ab initio structure prediction.

- Analysis: Compute the Template Modeling Score (TM-score) between the RFdiffusion target backbone and the AF2 prediction. A TM-score >0.7 indicates a successful recovery of the fold.
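For readers unfamiliar with the TM-score used in the analysis step, the sketch below evaluates the standard formula for one fixed set of aligned residue distances. Tools such as TM-align and US-align additionally optimize the alignment and superposition to maximize the score, which this function does not do. The 0.5 Å floor on the distance scale `d0` is an assumption made here for short chains.

```python
def tm_score(distances, target_length, d0_floor=0.5):
    """TM-score for one fixed alignment.

    distances: Cα-Cα distances (Å) of the aligned residue pairs.
    target_length: number of residues in the target (normalizing) structure.
    """
    if target_length <= 0:
        raise ValueError("target_length must be positive")
    if target_length > 15:
        d0 = 1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8
    else:
        d0 = 0.0
    d0 = max(d0, d0_floor)  # assumed floor to keep d0 positive for short chains
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length


perfect = tm_score([0.0] * 100, target_length=100)
half_covered = tm_score([0.0] * 50, target_length=100)
print(perfect, half_covered)
```

Normalizing by the target length means unaligned residues count against the score, so a prediction that matches only half of the RFdiffusion backbone perfectly scores 0.5 and falls below the 0.7 threshold.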

Protocol 3: High-Throughput Screen with ProteinMPNN & AF2

- Backbone Sourcing: Curate a set of target backbone structures (theoretical or natural).

- Sequence Optimization: Use ProteinMPNN in stochastic mode (`num_samples 100`) to generate hundreds of diverse sequences for each backbone.

- Batch AF2: Use a high-throughput AF2 pipeline (e.g., AlphaFold-Multimer for complexes) to predict structures for all designed sequences.

- Filtering: Apply thresholds on AF2's predicted local distance difference test (pLDDT) >85 and predicted aligned error (PAE) showing a single, compact domain. Select top designs for experimental characterization.

Workflow Visualization

Protein Design to AF2 Validation Workflow

High-Throughput Sequence Design & Screening

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Computational Design-to-AF2 Workflows

| Resource Name | Type/Provider | Primary Function in Workflow |

|---|---|---|

| ColabFold (github.com/sokrypton/ColabFold) | Software Suite | Cloud-based (Google Colab) or local implementation of AF2 with fast MMseqs2-based MSA generation, dramatically simplifying structure prediction. |

| AlphaFold2 (github.com/deepmind/alphafold) | Software Suite | Gold-standard structure prediction network; used as the final validation step for designed sequences. |

| PyRosetta (www.pyrosetta.org) | Software Suite | Python-based interface for Rosetta, enabling scripting of custom design protocols and analysis. |

| RFdiffusion (github.com/RosettaCommons/RFdiffusion) | Software Suite | Generative model for creating novel protein backbones conditioned on structural motifs. |

| ProteinMPNN (github.com/dauparas/ProteinMPNN) | Software Suite | Neural network for designing sequences with high foldability for given backbone structures. |

| PDB (Protein Data Bank, rcsb.org) | Database | Source of natural protein structures for use as input backbones or for benchmarking. |

| UniRef30 (uniclust.mmseqs.com) | Database | Large sequence database used by AF2/ColabFold for generating MSAs, critical for accurate predictions. |

| GPU Instance (e.g., NVIDIA A100, H100) | Hardware | Accelerates deep learning steps (RFdiffusion, ProteinMPNN, AF2) from days to minutes/hours. |

| ESMFold (github.com/facebookresearch/esm) | Software | Alternative, ultra-fast language model-based structure predictor useful for initial sequence screening before full AF2. |

This guide provides an objective comparison of ColabFold and a local AlphaFold2 installation within the context of academic and industrial research focused on validating designed protein sequences. The selection of a prediction platform impacts throughput, cost, validation accuracy, and integration into custom pipelines—key considerations for a thesis on structure prediction for design validation.

Performance & Feature Comparison

The following table summarizes the core differences based on current benchmarks and practical usage.

| Comparison Metric | ColabFold (via Google Colab) | Local AlphaFold2 Installation |

|---|---|---|

| Setup Complexity | Minimal; browser-based. | High; requires expertise in system administration, Conda/Docker, and dependency resolution. |

| Hardware Requirements | Provided (GPU varies: T4, P100, V100). Limited RAM/disk. | User-supplied. Requires high-end GPU (e.g., RTX 3090/A100), >1TB SSD, 32GB+ RAM. |

| Cost Model | Freemium (Free tier limited). Pro+: ~$10-50/month. Compute units per session. | High upfront capital cost. Low/no marginal cost per prediction after setup. |

| Speed (Prediction Time) | ~3-10 minutes per typical protein (using MMseqs2). | ~10-30 minutes per typical protein (using full DB search). Can be optimized. |

| Database Updates | Automatic (managed by servers). | Manual download and setup of large (2.2TB+) databases. |

| Customization & Control | Low. Limited software and script modification. Restricted to notebook environment. | Full. Can modify source code, integrate into automated pipelines, and control all parameters. |

| Batch Processing | Poor. Manual or scripted notebook runs subject to Colab runtime limits. | Excellent. Can queue 1000s of jobs locally or on a cluster. |

| Data Privacy | Low. Sequence data sent to remote servers. | High. All computations remain on-premise. |

| Best For | Exploratory analysis, low-volume predictions, researchers lacking computational resources. | High-throughput validation of designed sequences, proprietary data, long-term research projects. |

Experimental Protocols for Benchmarking

Protocol 1: Single-Chain Prediction Benchmark

- Objective: Compare accuracy and speed for a single designed protein sequence.

- Methodology:

- Select a designed protein sequence (~300 residues) with a known experimental structure (e.g., from PDB).

- On ColabFold: Input sequence into the provided notebook, run default settings (MMseqs2 for MSA, no template mode).

- Locally: Run AlphaFold2 via Docker with the same sequence, using full databases and `--db_preset=full_dbs`.

- Record: Wall-clock time, predicted aligned error (PAE), and pLDDT. Compute RMSD of the top model to the experimental structure using `TM-score` or `US-align`.

Protocol 2: High-Throughput Batch Processing

- Objective: Assess feasibility for validating a library of 100 designed variants.

- Methodology:

- Prepare a FASTA file with 100 designed variant sequences.

- On ColabFold: Attempt automation via modified notebook with loop. Runtime disconnections and GPU limits will be encountered.

- Locally: Use the provided `run_alphafold.py` script with a batch wrapper or a job scheduler like SLURM.

- Record: Successful completion rate, total aggregate compute time, and researcher hands-on time.
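One piece of the batch wrapper mentioned in this protocol is splitting the variant library into job-sized groups. The sketch below is a minimal standard-library illustration of that step only; the function names, the batch size, and the demo sequences are hypothetical, and how each batch is then submitted (shell loop, SLURM array, or other scheduler) is left to the local setup.

```python
def read_fasta(text):
    """Parse FASTA-formatted text into a list of (header, sequence) tuples."""
    records, header, chunks = [], None, []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if header is not None:
                records.append((header, "".join(chunks)))
            header, chunks = line[1:], []
        elif header is not None:
            chunks.append(line)
    if header is not None:
        records.append((header, "".join(chunks)))
    return records


def make_batches(records, size):
    """Split records into consecutive batches of at most `size` sequences."""
    if size < 1:
        raise ValueError("batch size must be at least 1")
    return [records[i:i + size] for i in range(0, len(records), size)]


# Placeholder library of five variants with an invented sequence.
library = "".join(f">variant_{i}\nMKVLA\n" for i in range(5))
records = read_fasta(library)
batch_list = make_batches(records, size=2)
print([len(batch) for batch in batch_list])
```

Each batch can be written to its own FASTA file and tracked separately, which makes the completion rate in the record step straightforward to compute.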

Protocol 3: Custom MSA Depth Investigation

- Objective: Evaluate the impact of manipulating MSA depth on prediction quality for a challenging designed fold.

- Methodology:

- Choose a de novo designed protein with minimal natural homology.

- On ColabFold: Limited ability to control MSA parameters.

- Locally: Modify AlphaFold2 pipeline to truncate or filter the MSA at specific depths (Nfilters: 10, 50, 100, 500).

- Record: pLDDT and PAE distribution vs. MSA depth. This is crucial for assessing model confidence in novel folds.
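The MSA truncation in this protocol can be sketched as a small text operation on an A3M file. The example assumes the query is the first sequence in the alignment and that any leading `#` lines (as written by some ColabFold versions) should be preserved; the function name and the toy alignment are hypothetical, and this is one simple way to control depth rather than the method used in any specific benchmark.

```python
def truncate_a3m(a3m_text, depth):
    """Keep the query plus the following sequences, up to `depth` sequences in total."""
    if depth < 1:
        raise ValueError("depth must be at least 1 to keep the query")
    comments, entries = [], []
    for line in a3m_text.splitlines():
        if line.startswith("#"):
            comments.append(line)  # preserve leading comment lines unchanged
        elif line.startswith(">"):
            entries.append([line, ""])
        elif entries:
            entries[-1][1] += line.strip()
    kept = entries[:depth]
    lines = comments + [part for header, seq in kept for part in (header, seq)]
    return "\n".join(lines) + "\n"


# Toy alignment: one query and three homologs.
toy_a3m = "#5\t1\n>query\nMKVLA\n>hit1\nMKVLG\n>hit2\nMRVLA\n>hit3\nMKILA\n"
shallow = truncate_a3m(toy_a3m, depth=2)
print(shallow)

# The depths from the protocol can be applied in a loop.
truncated_sets = {n: truncate_a3m(toy_a3m, n) for n in (10, 50, 100, 500)}
```

Running predictions on each truncated alignment and plotting mean pLDDT against depth gives the distribution described in the record step.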

Visualizations

Title: ColabFold vs Local AlphaFold2 Computational Workflow

Title: Decision Guide for Researchers: ColabFold or Local AF2?

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Solution | Function / Relevance |

|---|---|

| AlphaFold2 (Local) | Core prediction engine. Local installation allows full control, customization, and secure processing of designed sequences. |

| ColabFold Notebook | Accessible portal combining AF2/RoseTTAFold with fast MMseqs2. Enables quick initial validation without setup. |

| PyMOL / ChimeraX | Visualization software for analyzing predicted structures, calculating RMSD, and comparing designs to predictions. |

| HH-suite / Jackhmmer | MSA generation tools. Critical for local installations. Performance and depth impact prediction accuracy. |

| Docker / Singularity | Containerization platforms that simplify local AlphaFold2 deployment and ensure reproducibility. |

| Slurm / Job Scheduler | Enables efficient queuing and management of thousands of prediction jobs on local clusters. |

| TM-align / US-align | Tools for structural comparison. Essential for quantitatively validating predictions against reference structures. |

| Custom Python Pipelines | Scripts to automate batch prediction, result parsing, and confidence metric analysis for large design libraries. |

Within the thesis "AF2 structure prediction for validating designed sequences," input preparation is the critical first step dictating prediction accuracy. This guide compares the performance of AlphaFold2 (AF2), RoseTTAFold, and ESMFold, focusing on how sequence formatting, multimer settings, and template use influence results for validating protein designs.

Comparative Analysis: Input Parameters & Performance

Experimental data from CASP15 and recent benchmarks illustrate how input strategies affect outcomes.

Table 1: Prediction Accuracy (GDT_TS) vs. Input Configuration

| Protein System / Condition | AlphaFold2 (AF2) | RoseTTAFold (v2.0) | ESMFold |

|---|---|---|---|

| Single-chain, with templates | 92.1 | 87.3 | 85.6 |

| Single-chain, no templates (ab initio) | 88.5 | 82.1 | 84.9 |

| Homomultimer (dimer), formatted complex | 89.7 | 78.4 | 71.2 |

| Heteromultimer (A:B), formatted complex | 86.2 | 75.0 | 68.8 |

| Designed sequence, no natural template | 81.4 | 72.9 | 79.8 |

Table 2: Input Preparation Method Comparison

| Tool | Sequence Formatting for Multimers | Template Handling Recommendation | Key Input Limitation |

|---|---|---|---|

| AlphaFold2 | Separate chains with ':' (ColabFold convention; the DeepMind pipeline takes one FASTA entry per chain); repeat the chain for each copy | Use for homology; disable for novel folds | Max 4000 residues total per prediction |

| RoseTTAFold | Separate chains in distinct FASTA entries | Strongly benefits from PDB templates | Multimer performance drops sharply >1500 aa |

| ESMFold | Single sequence input only; infers multimers? | No template option; sequence-only | No explicit multimer pipeline; lower complex accuracy |

Experimental Protocols for Cited Data

Protocol 1: Benchmarking Template Influence (CASP15-Derived)

- Dataset: Curated 45 targets from CASP15, split into "easy" (clear homologs) and "hard" (no homologs).

- Input Preparation: For AF2 & RoseTTAFold, two runs: (a) use_templates=True with default PDB70 search, (b) use_templates=False.

- Execution: All models run locally with identical compute resources (4x A100 GPUs).

- Analysis: Calculate GDT_TS using LGA software against experimental structures.

Protocol 2: Multimer Accuracy Assessment

- Dataset: 32 non-redundant complexes from PDB (2023).

- Sequence Formatting: AF2: Concatenate chains with ':' (e.g., AB:AB for homodimer). RoseTTAFold: Provide separate FASTA files per chain.

- Model Run: AF2 using alphafold_multimer_v2 model, RoseTTAFold using RF2_multimer model.

- Metrics: Interface RMSD (iRMSD) and DockQ score computed for predictions.
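The ':' chain-separator formatting described in this protocol is easy to get wrong by hand for larger assemblies. A small helper can build and sanity-check the string; this is a sketch under the assumption that inputs contain only the 20 standard amino acids, and `join_chains` and `homo_oligomer` are hypothetical names introduced here.

```python
from typing import List, Sequence

VALID_RESIDUES = set("ACDEFGHIKLMNPQRSTVWY")


def join_chains(chains: Sequence[str]) -> str:
    """Join chain sequences with ':' after stripping whitespace and validating."""
    cleaned: List[str] = []
    for chain in chains:
        seq = "".join(chain.split()).upper()
        if not seq:
            raise ValueError("empty chain sequence")
        unexpected = set(seq) - VALID_RESIDUES
        if unexpected:
            raise ValueError(f"non-standard residues: {sorted(unexpected)}")
        cleaned.append(seq)
    if not cleaned:
        raise ValueError("at least one chain is required")
    return ":".join(cleaned)


def homo_oligomer(sequence: str, copies: int) -> str:
    """Repeat one chain `copies` times, e.g. copies=2 for a homodimer."""
    if copies < 1:
        raise ValueError("copies must be at least 1")
    return join_chains([sequence] * copies)


homodimer = homo_oligomer("mkt ayi", 2)
heterodimer = join_chains(["MKTAYI", "GSHMEEL"])
```

Validating before submission catches stray characters (gaps, stop codons, lowercase masking) that would otherwise surface only as a failed or misleading prediction.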

Visualization of Workflows

Title: Input Prep & Template Decision Workflow for AF2 Validation

Title: Multimer Input Pipeline Comparison

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Input Preparation & Validation

| Item/Reagent | Function in Input Preparation & Validation | Source/Example |

|---|---|---|

| MMseqs2 Server | Generates deep multiple sequence alignments (MSAs) rapidly for AF2/RoseTTAFold input. | https://search.mmseqs.com |

| PDB70 Database | Standard template database for AF2's homology search; critical for "with templates" mode. | https://wwwuser.gwdg.de/~compbiol/data/hhsuite/databases/hhsuite_dbs/ |

| DockQ Software | Calculates quality metrics for protein-protein interfaces post-prediction. | https://github.com/bjornwallner/DockQ |

| PyMol or ChimeraX | Visualization of predicted vs. experimental structures for validation. | Open-Source |

| Custom Python Scripts (Biopython) | For formatting FASTA files, parsing AlphaFold outputs, and automating runs. | In-house development |

Within a broader thesis on using AlphaFold2 (AF2) for validating designed protein sequences, rigorous interpretation of model confidence metrics is paramount. This guide compares AF2's core output analyses with alternative structural bioinformatics tools, providing researchers and drug development professionals with a framework for validating computational designs.

Core Confidence Metrics in AF2

AlphaFold2 generates two primary confidence metrics for each prediction.

1. pLDDT (predicted Local Distance Difference Test): A per-residue estimate of model confidence on a scale from 0-100. It predicts the lDDT-Cα score of each residue, that is, how well its local environment is expected to agree with the true structure.

- Very high (90-100): High-confidence backbone prediction.

- Confident (70-90): Generally reliable backbone.

- Low (50-70): Potentially problematic regions.

- Very low (<50): Unreliable, often disordered.

2. PAE (Predicted Aligned Error): A 2D matrix (NxN for N residues) where the value at position (i, j) is the expected positional error (in Ångströms) of residue j when the predicted and true structures are aligned on residue i. The matrix is therefore not necessarily symmetric. It quantifies the relative positional confidence between different parts of the model.
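The pLDDT bands listed above translate directly into a lookup that can be applied to every residue of a design. The sketch below assumes scores are already available as a list of floats; because the stated ranges share their boundary values, it assigns a boundary score (90, 70, 50) to the higher band, which is an assumption of this example. The function names are illustrative.

```python
from collections import Counter
from typing import Iterable


def plddt_band(score: float) -> str:
    """Map one pLDDT value (0-100) to the confidence band used in this guide."""
    if not 0 <= score <= 100:
        raise ValueError(f"pLDDT out of range: {score}")
    if score >= 90:
        return "very high"
    if score >= 70:
        return "confident"
    if score >= 50:
        return "low"
    return "very low"


def band_summary(scores: Iterable[float]) -> Counter:
    """Count how many residues fall into each confidence band."""
    return Counter(plddt_band(s) for s in scores)


# Placeholder per-residue scores for a short hypothetical design.
example_scores = [96.1, 91.4, 88.0, 72.5, 69.9, 48.2]
summary = band_summary(example_scores)
```

A per-band count gives a quick first look at a design; the per-residue positions of the "low" and "very low" residues still matter more than the totals.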

Comparative Analysis of Model Confidence Tools

Table 1: Comparison of Structure Prediction Confidence Outputs

| Feature | AlphaFold2 (ColabFold) | RoseTTAFold (Robetta) | trRosetta | I-TASSER (C-I-TASSER) |

|---|---|---|---|---|

| Per-Residue Confidence | pLDDT (0-100) | Estimated RMSD (lower=better) | Confidence score (0-1) | C-score (-5 to 2) |

| Inter-Domain Confidence | PAE Plot (Ångströms) | Not explicitly provided | Contact/distance map confidence | Not explicitly provided |

| Visualization Output | 3D model colored by pLDDT; static PAE plot. | 3D model colored by estimated error. | Contact/distance map with confidence. | 3D model; decoy cluster plot. |

| Experimental Correlation | Strong inverse correlation with RMSD to true structure. | Moderate correlation for globular proteins. | High correlation for contact accuracy. | C-score correlates with TM-score of models. |

| Speed & Accessibility | High (MSA generation is bottleneck). | Moderate. | Fast (but requires MSAs). | Slow (full-length atomic models). |

| Typical Use Case | Gold standard for monomeric structures; domain orientation. | Quick, reasonable accuracy for large proteins/complexes. | Constraint-based folding for de novo designs. | Template-based modeling when few homologs exist. |

Table 2: Supporting Data - Benchmark Performance on CASP14 Targets

| Method | Average Global pLDDT (All Domains) | Median Global RMSD (Å) (Top Model) | Domain Interface PAE (Å) (Avg. for Multidomain) |

|---|---|---|---|

| AlphaFold2 | 85.2 | 1.2 | 5.8 |

| RoseTTAFold | 73.5 | 2.5 | N/A |

| trRosetta | N/A | 4.1 (on de novo targets) | N/A |

| I-TASSER | N/A | 3.8 | N/A |

Data synthesized from CASP14 assessment papers, Nature (2021), and subsequent benchmarking studies.

Experimental Protocols for Validation

Protocol 1: Validating a Designed Enzyme Using AF2 Outputs

- Input: FASTA sequence of the computationally designed enzyme.

- Structure Prediction: Run 5 models using ColabFold (AF2_mmseqs2) with default settings (3 recycles, AMBER relaxation).

- pLDDT Analysis: Extract per-residue pLDDT values. Flag any catalytic or binding site residues with pLDDT < 70 for manual inspection.

- PAE Analysis: Generate the PAE plot. Identify rigid core domains (low PAE blocks on diagonal) and assess flexibility/confidence of inter-domain linkers (high PAE off-diagonal regions).

- Comparative Modeling: If available, compare the AF2 model's pLDDT/PAE profile with a positive control (native homolog) and a negative control (scrambled sequence).

- Decision: Proceed with experimental characterization only if: a) Catalytic core pLDDT > 80, and b) PAE between functional domains < 10 Å.
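The decision rule at the end of this protocol can be expressed as a small filter over the per-residue pLDDT list and the PAE matrix. This is a minimal sketch, assuming 0-based residue indices, a square PAE matrix given as nested lists, and mean values as the summary statistic (the protocol does not say whether mean, median, or minimum is intended); `mean_interdomain_pae` and `passes_gate` are hypothetical names.

```python
from typing import List, Sequence

Matrix = List[List[float]]


def mean(values: Sequence[float]) -> float:
    if not values:
        raise ValueError("no values to average")
    return sum(values) / len(values)


def mean_interdomain_pae(pae: Matrix, domain_a: Sequence[int], domain_b: Sequence[int]) -> float:
    """Average PAE over both off-diagonal blocks, since PAE(i, j) != PAE(j, i) in general."""
    block = [pae[i][j] for i in domain_a for j in domain_b]
    block += [pae[j][i] for i in domain_a for j in domain_b]
    return mean(block)


def passes_gate(plddt: Sequence[float], pae: Matrix, core: Sequence[int],
                domain_a: Sequence[int], domain_b: Sequence[int],
                min_core_plddt: float = 80.0, max_pae: float = 10.0) -> bool:
    """Apply both criteria: catalytic core pLDDT > 80 and inter-domain PAE < 10 Å."""
    core_ok = mean([plddt[i] for i in core]) > min_core_plddt
    pae_ok = mean_interdomain_pae(pae, domain_a, domain_b) < max_pae
    return core_ok and pae_ok


# Toy four-residue example; real inputs come from the prediction's score files.
plddt = [92.0, 88.0, 85.0, 60.0]
pae = [[0.0, 1.0, 4.0, 6.0],
       [1.0, 0.0, 5.0, 7.0],
       [4.0, 5.0, 0.0, 1.0],
       [6.0, 7.0, 1.0, 0.0]]
decision = passes_gate(plddt, pae, core=[0, 1, 2], domain_a=[0, 1], domain_b=[2, 3])
```

Keeping the thresholds as parameters makes it straightforward to tighten or relax the gate for a given design campaign without touching the logic.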

Protocol 2: Benchmarking Alternative Tools

- Target Selection: Use a curated set of 10 proteins with known structures (PDB), including monomers, multidomain proteins, and one designed protein.

- Uniform Input: Submit the same FASTA sequence to: AlphaFold2 (via ColabFold), RoseTTAFold (via Robetta server), and trRosetta (server).

- Data Extraction:

- For all: Calculate the global RMSD of the top model to the known PDB after alignment.

- For AF2: Record average pLDDT and average inter-domain PAE.

- For others: Use provided confidence scores (e.g., Robetta's Estimated RMSD).

- Correlation Analysis: Plot each tool's confidence metric against the observed RMSD to determine predictive value.
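For the correlation step, a rank correlation is often a reasonable companion to the scatter plot because the relationship between confidence and RMSD need not be linear. The sketch below implements Pearson and Spearman coefficients with the standard library only; the five data points are invented solely to exercise the code and are not benchmark results.

```python
import math
from typing import List, Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length samples."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two samples of equal length >= 2")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation undefined for constant input")
    return sxy / math.sqrt(sxx * syy)


def ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        shared = (pos + end) / 2 + 1
        for k in range(pos, end + 1):
            result[order[k]] = shared
        pos = end + 1
    return result


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Made-up values for illustration only (mean pLDDT vs. RMSD in Å per target).
mean_plddt = [92.0, 85.0, 78.0, 64.0, 55.0]
rmsd = [0.9, 1.4, 2.2, 4.8, 7.5]
rho = spearman(mean_plddt, rmsd)
```

A strongly negative coefficient for pLDDT against RMSD indicates that the confidence score is informative on the chosen target set; for metrics where lower is better, such as an estimated RMSD, the expected sign flips.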

Visualizing the Analysis Workflow

Diagram Title: AF2 Confidence Analysis Workflow for Designed Sequences

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Structural Validation Research

| Item | Function in Validation Research | Example/Provider |

|---|---|---|

| ColabFold | Cloud-based AF2 implementation; integrates MMseqs2 for fast MSA generation. Essential for high-throughput prediction of designed sequences. | GitHub: sokrypton/ColabFold |

| PyMOL / ChimeraX | Molecular visualization software. Critical for inspecting 3D models colored by pLDDT and correlating confidence metrics with structural features. | Schrödinger; UCSF |

| pLDDT & PAE Plot Parser | Custom scripts (Python) to extract and plot confidence metrics from AF2's JSON output for batch analysis. | Biopython, Pandas, Matplotlib |

| PCDB / PED | Databases of protein conformational diversity. Used as a reference to assess if predicted low-confidence regions are genuinely flexible. | pcdb.fc.ul.pt; proteinensemble.org |

| SAH-Analyzer | Tool to analyze the structural alignment of helices (SAH) in models. Useful for validating designed coiled-coils or helical bundles. | Standalone tool or script. |

| AlphaPulldown | Script for modeling protein complexes using AF2. Key for validating designed protein-protein interfaces via interface PAE. | GitHub: Kalininalab/AlphaPulldown |

| Conservation Score Mapper | Maps ConSurf or similar evolutionary conservation scores onto the AF2 model. Helps distinguish between poor confidence and genuine de novo design. | ConSurf web server. |

Solving Common AF2 Validation Pitfalls and Improving Prediction Accuracy

In the validation of de novo designed protein sequences, accurate structure prediction is paramount. AlphaFold2 (AF2) has become the standard tool, yet its predictive output requires critical interpretation. Two key metrics—the per-residue confidence score (pLDDT) and the pairwise predicted aligned error (PAE)—serve as essential red flags for assessing model reliability. This guide compares the interpretative value of these AF2-specific outputs against traditional validation methods and alternative structure prediction servers.

Comparative Analysis of Validation Metrics

The following table summarizes the key metrics used to assess predicted protein models, comparing AF2's native outputs with traditional experimental and computational methods.

Table 1: Comparison of Structure Validation Metrics and Methods

| Method/Metric | Output/Data Type | Typical Range (Reliable) | Primary Interpretation | Key Limitation |

|---|---|---|---|---|

| AF2 pLDDT | Per-residue score | >90 (Very high), 70-90 (Confident), 50-70 (Low), <50 (Very low) | Local confidence in backbone atom placement. Low scores (<70) flag potentially disordered or misfolded regions. | Calibrated on known structures; may over-predict order for designed proteins. |

| AF2 PAE | Residue-pair error (Å) | Low PAE (e.g., <10Å) within domains. | Expected distance error. High inter-domain PAE suggests flexible orientation; high intra-domain PAE flags folding errors. | Global vs. local errors can be conflated; requires domain definition. |

| Molecular Dynamics (MD) | RMSD, RMSF over time | Stable backbone RMSD (<2-3Å). | Assesses structural stability and flexibility in silico. | Computationally expensive; force field inaccuracies. |

| Rosetta Relax/DDG | ΔΔG (REU) | Negative ΔΔG favors folded state. | Estimates folding energy. Positive scores suggest destabilization. | Qualitative; accuracy depends on model quality. |

| Cryo-EM | 3D Density Map | Resolution (e.g., <3.5Å). | Experimental ground truth. | Low throughput, high cost, sample requirements. |

| SAXS | Scattering Profile | χ² fit to model. | Validates overall shape and oligomeric state in solution. | Low resolution; ambiguous for unique folds. |

Experimental Protocols for Cross-Validation

When AF2 outputs raise concerns (e.g., low pLDDT in core regions or high intra-domain PAE), the following complementary experiments are recommended.

Protocol 1: In Silico Stability Assessment via MD

- System Preparation: Place the AF2 model in a solvated box (e.g., TIP3P water) with ions for neutrality, using tools like gmx pdb2gmx or tleap.

- Energy Minimization: Perform 5,000 steps of steepest descent minimization to remove steric clashes.

- Equilibration: Run a short (100 ps) NVT (constant particle Number, Volume, Temperature) equilibration followed by NPT (constant Pressure) equilibration at 300K and 1 bar.

- Production Run: Execute an unrestrained MD simulation for 50-100 ns (e.g., using GROMACS or AMBER). Record backbone root-mean-square deviation (RMSD) and fluctuation (RMSF).

- Analysis: A stable backbone RMSD plateau and low RMSF in high pLDDT regions validate model stability. Rising RMSD or high core fluctuations corroborate AF2's low confidence.

Protocol 2: Experimental Shape Validation via SAXS

- Sample Preparation: Purify the designed protein at >95% homogeneity in a suitable buffer (e.g., 20 mM HEPES, 150 mM NaCl, pH 7.5).

- Data Collection: Measure scattering intensity I(q) across a momentum transfer q range at a synchrotron or lab source. Perform buffer blanks and concentration series.

- Primary Analysis: Generate the Guinier plot (ln(I) vs. q²) to determine the radius of gyration (Rg) and check for aggregation (linear fit at low q).

- Model Comparison: Compute the theoretical scattering profile from the AF2 model using CRYSOL or FoXS. Fit the experimental data by minimizing the χ² value.

- Interpretation: A high χ² (>3) or significant Rg discrepancy suggests the AF2-predicted conformation may not represent the dominant solution state, aligning with high PAE warnings.
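The Guinier analysis in the primary-analysis step rests on the low-q approximation ln I(q) = ln I(0) - (Rg²/3)·q², so Rg follows from the slope of a straight-line fit of ln(I) against q². A minimal sketch with an unweighted least-squares fit is shown here, assuming the caller has already restricted the data to the Guinier region (commonly q·Rg below about 1.3); dedicated packages additionally weight by measurement error and choose the range automatically. `guinier_fit` is a hypothetical helper name.

```python
import math
from typing import Sequence, Tuple


def guinier_fit(q: Sequence[float], intensity: Sequence[float]) -> Tuple[float, float]:
    """Fit ln(I) = ln(I0) - (Rg^2 / 3) * q^2 and return (Rg, I0)."""
    if len(q) != len(intensity) or len(q) < 2:
        raise ValueError("need at least two matching (q, I) points")
    x = [v * v for v in q]
    y = [math.log(v) for v in intensity]  # requires strictly positive intensities
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    if slope >= 0:
        raise ValueError("Guinier slope must be negative to define Rg")
    intercept = my - slope * mx
    return math.sqrt(-3.0 * slope), math.exp(intercept)


# Synthetic noise-free data for Rg = 20 Å and I0 = 100 (q in 1/Å, max q*Rg = 1.2).
q_values = [0.005 + 0.005 * k for k in range(12)]
i_values = [100.0 * math.exp(-(20.0 ** 2) * qv * qv / 3.0) for qv in q_values]
rg, i0 = guinier_fit(q_values, i_values)
```

Comparing this experimental Rg with the value computed from the AF2 model gives a quick consistency check before the full profile fit.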

Visualizing the AF2 Validation Workflow

The decision process for validating a designed protein using AF2 outputs is outlined below.

Diagram Title: AF2 Confidence Metric Decision Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Tools for Validation Experiments

| Item | Function in Validation | Example Product/Software |

|---|---|---|

| High-Purity Protein | Essential for SAXS, crystallization, and biophysical assays. Ensures signals arise from the target. | HisTrap FF columns for purification; HPLC systems. |

| Stabilization Buffer | Maintains protein monodispersity for SAXS and biophysics. | HEPES or Tris buffers with salts (NaCl, KCl). |

| MD Force Field | Defines atomic interactions for stability simulations. Critical for accuracy. | CHARMM36, AMBER ff19SB, OPLS-AA. |

| SAXS Analysis Suite | Processes raw scattering data and fits to models. | ATSAS suite (PRIMUS, GNOM, CRYSOL). |

| Structure Analysis Tools | Visualizes and quantifies pLDDT, PAE, and model geometry. | PyMOL, ChimeraX, ISOLDE. |

| Sequence Design Platform | For iterative redesign if AF2 flags are raised. | Rosetta, ProteinMPNN. |

AF2's pLDDT and PAE are indispensable first-pass filters in the validation pipeline for designed proteins. Although fast and accessible, they are probabilistic estimates and must be interpreted alongside computational and experimental cross-validation. Low pLDDT (<70) coupled with high intra-domain PAE (>10Å) flags models that require further scrutiny via MD simulation or orthogonal low-resolution techniques like SAXS before committing to high-cost experimental structure determination. This multi-tiered approach balances efficiency with rigorous validation.

Performance Comparison in Challenging Design Regimes

This guide compares the performance of AlphaFold2 (AF2) against other structure prediction tools specifically for three challenging design categories: intrinsically disordered regions (IDRs), symmetric oligomers, and membrane proteins. The context is the validation of computationally designed protein sequences, where accurate structure prediction is crucial for confirming design success.

Table 1: Performance on Intrinsically Disordered Regions (IDRs)

| Tool/Method | Dataset (IDR Length) | pLDDT/Confidence Score in Disordered Regions | Ability to Predict Dynamics/Ensemble | Experimental Validation Method |

|---|---|---|---|---|

| AlphaFold2 (AF2) | DisProt (50-100 residues) | Low pLDDT (< 70) | Limited; outputs single static conformation. NMR chemical shifts show weak correlation. | NMR Spectroscopy |

| AlphaFold2-Multimer | DisProt (50-100 residues) | Low pLDDT (< 70) | Limited; treats partners as rigid. | NMR Spectroscopy |

| ENSEMBLE | DisProt (50-100 residues) | N/A (Ensemble method) | High. Generates conformational ensembles consistent with SAXS data. | SAXS, NMR |

| DCA-based Methods | DisProt (50-100 residues) | Contact probability maps | Moderate for long-range contacts in conditionally disordered states. | NMR, FRET |

Key Finding: AF2's low pLDDT is a useful indicator of disorder but does not provide mechanistic insight into the conformational ensemble, which is critical for validating designs that leverage disordered motifs for function.

Table 2: Performance on Symmetric Homo-oligomers

| Tool/Method | Complex Type (Symmetry) | DockQ Score / TM-Score | Interface pTM / ipTM | Key Advantage/Limitation for Design Validation |

|---|---|---|---|---|

| AlphaFold2-Multimer | C2, C3, D2 (≤ 30 monomers) | 0.8 (High accuracy) | ipTM > 0.8 for correct symmetry | Excellent at recapitulating designed interfaces and symmetry. |

| RoseTTAFold | C2, C3 | 0.6-0.7 (Moderate) | Not directly comparable | Good performance but often less accurate than AF2-Multimer on benchmarks. |

| HADDOCK | Any (requires input models) | Varies widely (0.4-0.9) | N/A | Dependent on quality of input monomer predictions; useful for hybrid modeling. |

| Traditional Docking (ZDOCK) | Any (rigid-body) | Often < 0.5 for novel interfaces | N/A | Poor for validating de novo designs where interfaces are novel. |

Key Finding: AF2-Multimer is the current benchmark for validating the quaternary structure of designed symmetric assemblies, with ipTM serving as a reliable confidence metric.

Table 3: Performance on Alpha-Helical Membrane Proteins

| Tool/Method | Membrane Environment Modeling | Accuracy (TM-score vs. Experimental) | Experimental Benchmark (Method) | Special Considerations |

|---|---|---|---|---|

| AlphaFold2 (Standard) | Implicit (via training data) | TM-score ~0.85 (single-chain) | PDBTM (Cryo-EM, X-ray) | Struggles with correct membrane insertion depth/orientation. |

| AlphaFold2 with custom MSAs | Implicit | Improved topology prediction | PDBTM (Cryo-EM) | Curated homologous sequences improve contact prediction. |

| RosettaMP | Explicit lipid bilayer | Varies (dependent on protocol) | PDBTM (X-ray) | Allows physics-based refinement in membrane context. |

| C-I-TASSER | Implicit membrane potential | TM-score ~0.75 | PDBTM (X-ray) | Integrates deep learning and threading. |

Key Finding: While AF2 predicts the fold of helical membrane proteins accurately, additional biophysical analyses (e.g., hydrophobicity plots, molecular dynamics in a bilayer) are required to validate the functional, membrane-embedded state of a designed sequence.

Detailed Experimental Protocols

Protocol 1: Validating Disordered Region Designs with NMR

- Sample Preparation: Express ¹⁵N-labeled designed protein in E. coli. Purify using affinity and size-exclusion chromatography in a buffer suitable for NMR.

- NMR Data Collection: Acquire 2D ¹H-¹⁵N HSQC spectra at 298 K.

- Data Analysis: Compare the experimental spectrum to one predicted from an AF2 model using software like SPARTA+. Calculate the backbone chemical shift deviation. Alternatively, assess the spectrum for hallmarks of disorder: narrow chemical shift dispersion (e.g., ¹H shifts between 7.8-8.5 ppm) and sharp peaks.

- Correlation with AF2: Plot per-residue pLDDT from the AF2 prediction against NMR parameters (e.g., signal intensity, chemical shift index). Low pLDDT regions should correspond to sharp, intense peaks in disordered regions.

Protocol 2: Validating Symmetric Oligomer Designs with SEC-MALS/SAXS

- SEC-MALS: Inject purified design sample onto a size-exclusion column coupled to a Multi-Angle Light Scattering (MALS) detector. Determine the absolute molecular weight in solution.

- SAXS Data Collection: Collect Small-Angle X-ray Scattering data at a synchrotron beamline. Measure at multiple concentrations to extrapolate to zero concentration.

- SAXS Data Analysis: Compute the pairwise distance distribution function P(r) and the radius of gyration (Rg). Use CRYSOL to calculate the theoretical scattering profile from the AF2-Multimer prediction.

- Comparison: Quantify the fit between the experimental SAXS profile and the AF2-predicted model using a χ² value. A low χ² (< 2-3) supports the accuracy of the predicted oligomeric state and shape.
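The χ² comparison in the final step can be made concrete with one commonly used reduced form: χ² = 1/(N-1) · Σ[(I_exp − c·I_calc)/σ]², where the scale factor c is chosen by weighted least squares. The sketch below assumes the experimental and calculated profiles are sampled on the same q grid and omits the hydration-layer and background terms that fitting programs also adjust, so its values will not match program output exactly. `saxs_chi2` is a hypothetical name.

```python
from typing import Sequence, Tuple


def saxs_chi2(i_exp: Sequence[float], i_calc: Sequence[float],
              sigma: Sequence[float]) -> Tuple[float, float]:
    """Return (reduced chi-square, scale factor) for a model vs. experimental profile."""
    n = len(i_exp)
    if n < 2 or len(i_calc) != n or len(sigma) != n:
        raise ValueError("need at least two points on a shared q grid")
    if any(s <= 0 for s in sigma):
        raise ValueError("experimental errors must be positive")
    # Weighted least-squares scale factor that best maps the model onto the data.
    num = sum(e * c / (s * s) for e, c, s in zip(i_exp, i_calc, sigma))
    den = sum(c * c / (s * s) for c, s in zip(i_calc, sigma))
    if den == 0:
        raise ValueError("calculated profile is all zeros")
    scale = num / den
    chi2 = sum(((e - scale * c) / s) ** 2 for e, c, s in zip(i_exp, i_calc, sigma)) / (n - 1)
    return chi2, scale


# Tiny hand-checkable example: best scale is 2, residuals are -1, 0, +1.
chi2, scale = saxs_chi2([1.0, 2.0, 3.0], [1.0, 1.0, 1.0], [1.0, 1.0, 1.0])
```

Because χ² depends on the reported experimental errors, over- or under-estimated σ values shift it systematically, which is worth keeping in mind when applying a fixed cutoff.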

Protocol 3: Validating Membrane Protein Designs with Thermostability Assay (CPM)

- Protein Purification & Solubilization: Purify the designed membrane protein in a detergent (e.g., DDM, LMNG).

- Labeling: Mix protein with the cysteine-reactive, environmentally sensitive dye 7-diethylamino-3-(4'-maleimidylphenyl)-4-methylcoumarin (CPM).

- Thermal Denaturation: Using a real-time PCR machine or fluorimeter, heat the sample from 20°C to 95°C at a rate of 1°C/min while monitoring fluorescence (excitation ~387 nm, emission ~463 nm).

- Data Analysis: Fit the fluorescence melt curve to a Boltzmann sigmoidal equation to determine the melting temperature (Tm). A high Tm (> 50°C) often correlates with a well-folded, stable design. Compare the Tm to the predicted aligned error (PAE) and pLDDT of the transmembrane domains from AF2.
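When a nonlinear fitting library is not at hand, a quick cross-check on the fitted Tm is the temperature at which the melt curve rises fastest. The sketch below uses that first-derivative estimate, which is a different and cruder technique than the Boltzmann fit named in the protocol; the `boltzmann` function is included only to generate a synthetic curve, and its parameter values are arbitrary.

```python
import math
from typing import Sequence


def boltzmann(temp: float, bottom: float, top: float, tm: float, slope: float) -> float:
    """Boltzmann sigmoid: fluorescence rising from `bottom` to `top` around `tm`."""
    return bottom + (top - bottom) / (1.0 + math.exp((tm - temp) / slope))


def tm_from_derivative(temps: Sequence[float], fluorescence: Sequence[float]) -> float:
    """Estimate Tm as the temperature with the largest central-difference dF/dT."""
    if len(temps) != len(fluorescence) or len(temps) < 3:
        raise ValueError("need at least three matching (T, F) points")
    best_temp, best_slope = temps[1], -math.inf
    for i in range(1, len(temps) - 1):
        d = (fluorescence[i + 1] - fluorescence[i - 1]) / (temps[i + 1] - temps[i - 1])
        if d > best_slope:
            best_temp, best_slope = temps[i], d
    return best_temp


# Synthetic melt curve from 20 °C to 95 °C in 1 °C steps with a true Tm of 55 °C.
temps = [float(t) for t in range(20, 96)]
curve = [boltzmann(t, bottom=100.0, top=1000.0, tm=55.0, slope=2.0) for t in temps]
tm_estimate = tm_from_derivative(temps, curve)
```

On noisy experimental data the derivative should be smoothed first, and the resolution of this estimate is limited to the temperature step.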

Visualizations

Title: AF2 Validation Workflow for Challenging Designs

Title: The AF2 vs. Ensemble Gap for Disordered Regions

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Validation | Example/Specific Use |

|---|---|---|

| Detergents (e.g., DDM, LMNG) | Solubilize and stabilize membrane proteins for purification and biophysical assays. | Used in CPM assay for designed membrane proteins. |

| Size-Exclusion Columns (SEC) | Separate proteins by size and assess homogeneity/oligomeric state. | Coupled with MALS for absolute molecular weight determination of oligomers. |

| NMR Isotope Labels (¹⁵N, ¹³C) | Enable detection of protein backbone and sidechain atoms by NMR spectroscopy. | Essential for acquiring 2D HSQC spectra to validate disordered regions. |

| CPM Dye | Environment-sensitive fluorescent dye that binds cysteine residues; fluorescence increases in hydrophobic environments. | Used to monitor thermal unfolding of membrane proteins in a detergent micelle. |

| Lipids (e.g., POPC, POPG) | Form synthetic lipid bilayers (liposomes/nanodiscs) to provide a native-like membrane environment. | For assessing membrane insertion and function of designed membrane proteins. |

| SAXS Reference Standards | Proteins of known shape and size (e.g., BSA) to calibrate SAXS instrument and data processing. | Ensure accurate Rg and molecular weight estimation from scattering data. |

| Protease Cocktails | Test the folded state and stability of a designed protein by resistance to proteolytic digestion. | Quick functional assay for both soluble and membrane protein designs. |

This comparison guide, framed within a thesis on using AlphaFold2 (AF2) structure prediction for validating de novo designed protein sequences, objectively evaluates key optimization strategies. The accuracy of AF2 predictions for novel sequences, which lack evolutionary homologs, is highly dependent on protocol adjustments. We compare the performance of standard AF2, AF2-multimer, and iterative relaxation against other leading structure prediction tools.

Performance Comparison of Optimization Strategies

The following table summarizes experimental data from recent benchmarks assessing the impact of MSA depth, multimer use, and relaxation on prediction accuracy for designed proteins and complexes.

Table 1: Comparative Performance of AF2 Optimization Protocols

| Method / System | Test Case | Key Metric (pLDDT / DockQ / RMSD) | Comparison Baseline | Result Summary |

|---|---|---|---|---|

| AF2 (Reduced MSA Depth) | De novo monomer designs | pLDDT > 90 | Standard AF2 (full MSA) | Maintains high confidence (>90) while reducing overfitting; 15% faster runtime. |

| AF2-multimer v2.3 | Designed protein complexes | DockQ Score: 0.85 | Standard AF2 (concatenated chains) | Superior interface accuracy (20% improvement in DockQ) for heterodimers. |

| AF2 + Iterative Relaxation | Designed peptides ( < 50 aa) | Backbone RMSD: 0.8 Å | AF2 single model + AMBER | Further refines local geometry; reduces steric clashes by 40% post-prediction. |

| RoseTTAFold2 | Novel fold designs | pLDDT: 88, TM-score: 0.75 | AF2 (reduced MSA) | Competitive accuracy but often lower pLDDT for topologically novel designs. |

| ESMFold | High-throughput validation | pLDDT: 82, Inference Speed: 60 seq/sec | AF2 (full MSA) | Much faster (80x), enabling screening, but lower accuracy on designed sequences. |

Experimental Protocols for Key Studies

Protocol 1: Evaluating MSA Strategy for De Novo Monomers

- Sequence Curation: Generate 50 de novo designed protein sequences with low homology (<20%) to natural proteins in the PDB.

- MSA Generation: For each sequence, create two MSAs using MMseqs2 against UniRef30: (A) Full-depth (Nseq=10,000), (B) Adjusted-depth (Nseq=1,000, max MSA cluster size=128).

- Structure Prediction: Run AF2 (model_1_ptm) with both MSA inputs using 3 recycle iterations and 25 seeds per target.

- Analysis: Compute average pLDDT and predicted TM-score (pTM) for the top-ranked model. Assess structural diversity of seed predictions.

Protocol 2: Benchmarking Complex Prediction with AF2-multimer

- Dataset: Use 15 recently solved crystal structures of de novo designed protein-protein interfaces not present in AF2 training sets.

- Prediction Methods: (i) AF2-multimer v2.3 (model_2_multimer_v3), (ii) Standard AF2 with chain concatenation.

- Execution: Supply sequences in paired FASTAs. Use 5 seeds, 3 recycles, and enable template mode for all.

- Evaluation: Calculate DockQ scores and interface RMSD (iRMSD) for the top-ranked model against the ground truth.

Protocol 3: Post-Prediction Relaxation Protocol

- Input Models: Select the top 5 ranked models from an AF2 or AF2-multimer run.

- Relaxation Setup: Use the amber_relax function within the AF2 codebase (OpenMM). Set maximum iterations to 200 and convergence tolerance to 0.5 kJ/mol/nm.

- Execution: Apply relaxation to each model independently, constraining backbone atoms with a mild force constant (5.0 kcal/mol/Ų) to prevent large deviations.

- Output: Compare the relaxation loss (final energy) and number of steric clashes (using MolProbity) pre- and post-relaxation.

Visualizing Optimization Workflows

Diagram 1: AF2 validation workflow for designs.

Diagram 2: AF2-multimer's paired MSA logic.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials & Tools for AF2 Validation Experiments

| Item | Function & Relevance | Example/Supplier |

|---|---|---|

| ColabFold | Cloud-based AF2/MMseqs2 pipeline. Enables rapid MSA generation and prediction without local GPU setup. | GitHub: sokrypton/ColabFold |

| AlphaFold2 (Local Install) | Local installation for batch processing, custom MSAs, and protocol modifications. Essential for iterative workflows. | GitHub: deepmind/alphafold |

| MMseqs2 | Ultra-fast protein sequence searching for generating deep or controlled-depth MSAs from sequence databases. | GitHub: soedinglab/MMseqs2 |

| UniRef30 Database | Clustered protein sequence database required for generating sensitive, non-redundant MSAs for AF2. | Download from UniProt |

| PDBx/mmCIF Files | Structure file format for ground truth experimental structures used in benchmarking predictions. | RCSB Protein Data Bank |

| MolProbity / Phenix | Suite for validating protein geometry, identifying steric clashes, and calculating validation scores. | phenix-online.org |

| DockQ Score Script | Automated metric for assessing the quality of protein-protein interface predictions. | GitHub: bjornwallner/DockQ |

| OpenMM & Amber Force Field | Simulation toolkit and force field used within AF2's relaxation protocol to refine physical realism. | openmm.org |

The dominance of AlphaFold2 (AF2) in protein structure prediction is undisputed. However, within the context of validating computationally designed protein sequences, sole reliance on a single prediction engine is a methodological vulnerability. Discrepancies can arise from inherent limitations in training data or methodology. This guide provides an objective comparison of two leading alternative deep learning tools, ESMFold and OmegaFold, for cross-checking AF2 predictions, ensuring robust validation in protein design pipelines.

Quantitative Performance Comparison

The following table summarizes key performance metrics from recent independent evaluations, primarily based on the CASP14 and CAMEO benchmarks.

Table 1: Comparative Performance of AF2, ESMFold, and OmegaFold

| Metric / Tool | AlphaFold2 (AF2) | ESMFold | OmegaFold |

|---|---|---|---|

| Average TM-score (CASP14) | 0.92 | 0.68 | 0.72 |

| Average GDT_TS (CASP14) | 87.0 | 65.4 | 69.1 |

| Inference Speed (seq/sec)* | ~1-3 | ~10-15 | ~5-8 |

| MSA Dependency | Heavy (JackHMMER/MMseqs2) | None (single-sequence) | None (single-sequence) |

| Typical Use Case | High-accuracy, full-resource prediction | Rapid screening, low MSA targets | Balanced speed/accuracy, low MSA targets |

| Key Architectural Strength | Evoformer + Structure Module, paired MSA | Transformer protein language model, end-to-end | Transformer with geometric attention, end-to-end |

*Speed is hardware-dependent; values are approximate relative comparisons on similar GPU hardware (e.g., A100).

Experimental Protocols for Cross-Checking

A robust validation protocol for a designed protein sequence involves generating structures from multiple independent systems.

Protocol 1: Triangulation of Prediction Confidence

- Input: A single amino acid sequence of a designed protein.

- Structure Generation:

- Run AF2 using default settings (e.g., via ColabFold), providing multiple sequence alignments (MSAs) from a large sequence database.

- Run ESMFold using the model weights (ESMFold v1) on the raw sequence without generating MSAs.

- Run OmegaFold (v2.2.0) on the raw sequence without generating MSAs.

- Output Analysis:

- Calculate pairwise TM-scores or RMSD between the top-ranked models from each tool.

- A designed sequence is considered "robustly validated" if all three models converge (e.g., pairwise TM-score > 0.8). Divergence (TM-score < 0.5) indicates a potential unstable fold or a failure mode specific to one method, warranting experimental scrutiny.
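The convergence rule in the output analysis can be applied mechanically once the pairwise TM-scores are in hand. The sketch below assumes one TM-score per pair of tools (in practice the value depends on which structure is used for normalisation) and labels the band between the two stated thresholds "ambiguous", which is a category added here for completeness rather than one defined in the protocol.

```python
from itertools import combinations
from typing import Dict, Sequence, Tuple

Pair = Tuple[str, str]


def classify_consensus(tools: Sequence[str], pairwise_tm: Dict[Pair, float],
                       converge: float = 0.8, diverge: float = 0.5) -> str:
    """Classify a design from pairwise TM-scores between top-ranked models."""
    scores = []
    for a, b in combinations(tools, 2):
        if (a, b) in pairwise_tm:
            scores.append(pairwise_tm[(a, b)])
        elif (b, a) in pairwise_tm:
            scores.append(pairwise_tm[(b, a)])
        else:
            raise KeyError(f"missing TM-score for {a} vs {b}")
    if not scores:
        raise ValueError("need at least two tools to compare")
    if all(s > converge for s in scores):
        return "robustly validated"
    if any(s < diverge for s in scores):
        return "divergent"
    return "ambiguous"


tools = ["AF2", "ESMFold", "OmegaFold"]
# Placeholder scores for a hypothetical design.
example = {("AF2", "ESMFold"): 0.91, ("AF2", "OmegaFold"): 0.87, ("ESMFold", "OmegaFold"): 0.84}
verdict = classify_consensus(tools, example)
```

Requiring every pair to be present guards against silently calling a design validated when one of the three predictions failed to run.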

Protocol 2: Assessing MSA-Dependency in Designs

This protocol tests whether a designed fold is predicted only when evolutionary signals (MSAs) are supplied, or whether it is also recovered by single-sequence models that have learned sequence-structure relationships directly.

- Input: A designed sequence and its naturally occurring homologs (if any).

- Experimental Groups:

- Group A (Full MSA): Predict structure using AF2 with the full MSA built for the designed sequence.

- Group B (Single Sequence): Predict structure using AF2 without MSA (single-sequence mode), ESMFold, and OmegaFold.

- Output Analysis:

- Compare structures from Group B to each other and to the high-confidence Group A prediction.

- Convergence of Group B models with Group A suggests the design is stable independently of evolutionary priors. Discrepancy may indicate the design is overly reliant on spurious MSA correlations learned by AF2.
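
As a rough sketch of how the two AF2 arms might be set up, the helper below only builds command lines and does not run anything. It assumes a local ColabFold installation that provides the `colabfold_batch` command with an `--msa-mode single_sequence` option; please confirm the exact options with `colabfold_batch --help` for your version. The function name, dictionary keys, and output directory names are hypothetical, and the ESMFold and OmegaFold runs of Group B are deliberately left out.

```python
def af2_arm_commands(fasta_path, output_root):
    """Build (but do not execute) commands for the AF2 arms of Protocol 2."""
    return {
        # Group A: default ColabFold behaviour, which builds an MSA.
        "group_a_full_msa": [
            "colabfold_batch", fasta_path, f"{output_root}/af2_full_msa",
        ],
        # Group B (AF2 part): same sequence, MSA generation disabled.
        # The flag below is an assumption to verify against your install.
        "group_b_single_sequence": [
            "colabfold_batch", "--msa-mode", "single_sequence",
            fasta_path, f"{output_root}/af2_single_sequence",
        ],
    }


for arm, command in af2_arm_commands("design.fasta", "results").items():
    print(arm, "->", " ".join(command))
```

Each list can be passed to `subprocess.run(command, check=True)` once the options have been verified.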

Visualization of Cross-Checking Workflows

Diagram 1: Triangulation Workflow for Validating Designed Sequences

Diagram 2: Protocol to Test MSA Dependence of a Design

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Computational Cross-Checking

| Item / Resource | Function in Validation Pipeline | Typical Source / Implementation |

|---|---|---|

| ColabFold | Provides streamlined, accelerated access to run AF2 and related tools (including single-sequence mode). | GitHub Repository / Public Notebooks |

| ESMFold Model Weights | The pre-trained parameters for the ESMFold protein language model required for structure inference. | Meta AI ESM Repository |

| OmegaFold Implementation | The standalone inference code and model for the OmegaFold architecture. | GitHub Repository (HeliXon) |

| PyMOL / ChimeraX | Molecular visualization software for manual inspection, structural alignment, and figure generation. | Open-Source / Academic Licenses |

| TM-score Algorithm | Objective metric for assessing topological similarity of two protein models, normalized to (0,1]. | Standalone executable (TMscore, TM-align, US-align) or Python wrappers around them. |

| MMseqs2 Server | For generating high-quality MSAs when required for the AF2 arm of the comparison. | Public API (ColabFold) or local installation. |

| Designed Sequence Dataset | A set of characterized (experimentally or in silico) protein designs to benchmark the cross-checking protocol. | Proprietary or from public databases (e.g., PDB, ProteinNet). |

Benchmarking Success: How to Quantitatively Validate AF2 Predictions Against Reality

Within the broader thesis of using AlphaFold2 (AF2) structure prediction for validating designed protein sequences, a critical evaluation against experimental structural biology gold standards is required. This guide compares AF2-predicted models to structures determined by X-ray crystallography and cryo-electron microscopy (cryo-EM), providing objective performance data and methodologies for correlation analysis.

Performance Comparison: AF2 vs. Experimental Methods

The following table summarizes key performance metrics for AF2 predictions against high-resolution experimental structures.

Table 1: Quantitative Comparison of AF2 Predictions to Experimental Structures

| Metric | AF2 vs. High-Res X-ray (<2.5Å) | AF2 vs. Cryo-EM (3-4Å) | Notes |

|---|---|---|---|

| Average RMSD (Backbone) | 0.5 - 1.5 Å | 1.0 - 3.0 Å | Lower RMSD indicates higher similarity. Variance depends on protein size and flexibility. |

| Average GDT_TS | 85 - 95+ | 70 - 90 | Global Distance Test score; higher is better (>90 indicates high accuracy). |

| Side-Chain Accuracy (χ1) | ~80% correct | ~70% correct | Measured for well-ordered residues. Lower in cryo-EM comparisons due to map resolution. |

| Confidence Correlation (pLDDT) | High pLDDT (>90) correlates with low RMSD. | Lower correlation; high pLDDT regions can diverge in flexible areas. | pLDDT is AF2's internal confidence metric. |

| Key Failure Mode | Novel conformers, allosteric states, large ligands. | Flexible domains, intricate macromolecular interfaces. | AF2 often predicts a single, ground-state conformation. |
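
The pLDDT row can be checked on your own targets with a short script. The sketch below relies on one fact about AF2 output, namely that per-residue pLDDT is written to the B-factor column of the PDB file, and otherwise uses only fixed-column parsing of `ATOM` records. The function names are hypothetical, and the per-residue deviations are assumed to come from a separate superposition step.

```python
import math


def read_ca_plddt(pdb_text):
    """Return {residue_number: pLDDT} read from CA atoms of an AF2 PDB file."""
    plddt = {}
    for line in pdb_text.splitlines():
        # PDB fixed columns: atom name 13-16, residue number 23-26,
        # B-factor 61-66 (1-based), which AF2 uses for pLDDT.
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            plddt[int(line[22:26])] = float(line[60:66])
    return plddt


def pearson(xs, ys):
    """Plain Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    norm_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    norm_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (norm_x * norm_y)
```

If high pLDDT tracks low deviation, `pearson(plddt_values, deviations)` should be clearly negative; a value near zero suggests that confidence is not informative for that target.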

Experimental Protocols for Correlation

To rigorously correlate AF2 predictions with experimental data, standardized protocols are essential.

Protocol 1: Structural Alignment and Metric Calculation

- Data Retrieval: Download the experimental structure (PDB format) and the corresponding AF2 prediction (generated via ColabFold or local installation using the target sequence).

- Pre-processing: Remove water molecules, ions, and hetero groups (e.g., ligands) from both structures using molecular visualization software (e.g., PyMOL, UCSF Chimera).

- Core Alignment: Superimpose the two structures (e.g., using `cealign` in PyMOL, which is structure-based, or `matchmaker` in Chimera, which is sequence-guided), focusing on the well-ordered core region of the experimental structure.

- Metric Calculation:

- RMSD: Calculate the root-mean-square deviation of atomic positions (Cα or all backbone atoms) for the aligned regions.

- GDT_TS: Use tools like TM-score or the `gdt_ts` function from BioPython derivatives to compute the Global Distance Test.

- Visualization of Differences: Superimpose structures and color-code by per-residue RMSD or highlight regions where AF2 diverges (>2Å Cα deviation).
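
The superposition, RMSD, and per-residue deviation steps above can be reproduced outside a viewer with NumPy. The sketch below uses the standard Kabsch algorithm on two arrays of matched Cα coordinates; it assumes the residues are already paired one-to-one (same length, same order), which in practice means extracting the aligned core first. All function names are hypothetical, and the returned residue indices are 0-based array positions, not PDB residue numbers.

```python
import numpy as np


def kabsch_superpose(mobile, reference):
    """Rigidly superpose `mobile` onto `reference` (both N x 3 arrays)."""
    mobile = np.asarray(mobile, dtype=float)
    reference = np.asarray(reference, dtype=float)
    ref_center = reference.mean(axis=0)
    P = mobile - mobile.mean(axis=0)      # centered mobile coordinates
    Q = reference - ref_center            # centered reference coordinates
    U, _, Vt = np.linalg.svd(P.T @ Q)     # SVD of the covariance matrix
    # Guard against an improper rotation (reflection).
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    return P @ R.T + ref_center


def per_residue_deviation(fitted, reference):
    """Distance in Angstroms between matched atoms after superposition."""
    return np.linalg.norm(np.asarray(fitted) - np.asarray(reference), axis=1)


def rmsd(deviations):
    """Root-mean-square of the per-residue deviations."""
    return float(np.sqrt(np.mean(np.square(deviations))))


def divergent_residues(deviations, cutoff=2.0):
    """0-based positions whose deviation exceeds the cutoff (2 A by default)."""
    return [int(i) for i in np.flatnonzero(deviations > cutoff)]
```

The list returned by `divergent_residues` can then be used to color or select the corresponding residues in PyMOL or ChimeraX.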

Protocol 2: Fitting AF2 Models into Cryo-EM Density Maps

- Map and Model Preparation: Obtain the experimental cryo-EM map (`.mrc` file) and half-maps for FSC validation. Prepare the AF2 model in PDB format.

- Rigid Body Fitting: Use the UCSF ChimeraX command `fitmap` to initially place the AF2 model into the electron density map as a rigid body.

- Real-Space Refinement: Apply gentle real-space refinement (e.g., in PHENIX or Coot) to avoid overfitting. Crucially, refine against one half-map and validate using the other.

- Validation Metrics: Calculate the cross-correlation coefficient (CCC) of the model against the map. Generate a Fourier Shell Correlation (FSC) curve between the model and the map, comparing it to the gold-standard FSC of the experimental map.
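
For readers who want to see what the CCC measures, the following sketch computes it directly from two density grids. It assumes that the fitted model has already been converted to a simulated map sampled on the same grid as the experimental map (for example with a map-simulation command such as ChimeraX `molmap`) and that both are available as NumPy arrays of identical shape. It uses the mean-subtracted (Pearson) form; some programs also report a variant without mean subtraction, so absolute values may differ slightly between tools. The function name and the optional `mask` argument are illustrative.

```python
import numpy as np


def cross_correlation(map_a, map_b, mask=None):
    """Mean-subtracted cross-correlation coefficient of two density grids."""
    a = np.asarray(map_a, dtype=float)
    b = np.asarray(map_b, dtype=float)
    if a.shape != b.shape:
        raise ValueError("maps must be sampled on the same grid")
    if mask is not None:
        # Restrict the comparison to voxels of interest, e.g. around the model.
        selection = np.asarray(mask, dtype=bool)
        a, b = a[selection], b[selection]
    a = a.ravel() - a.mean()
    b = b.ravel() - b.mean()
    return float(np.sum(a * b) / np.sqrt(np.sum(a * a) * np.sum(b * b)))
```

Restricting the calculation with a mask around the model avoids inflating or diluting the score with empty solvent voxels.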

Visualizing the Correlation Workflow

Diagram 1: Workflow for Correlating AF2 and Experimental Structures

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for AF2/Experimental Correlation Studies

| Item | Function in Correlation Analysis |

|---|---|

| ColabFold (Google Colab) | Provides accessible, cloud-based AF2 and AlphaFold-Multimer prediction, generating pLDDT and PAE metrics. |

| PyMOL / UCSF ChimeraX | Industry-standard for structural visualization, superposition, RMSD calculation, and figure generation. |

| PHENIX Suite | Comprehensive toolkit for crystallographic and cryo-EM refinement and validation, including real-space refinement. |

| Coot | Model building tool essential for manual inspection and fitting of models into cryo-EM density or electron density maps. |

| MolProbity / PDB-REDO | Validation servers to assess stereochemical quality of both experimental and predicted models. |

| AlphaFill Database | Provides predicted positions of ligands and cofactors in AF2 models, aiding comparison with holo-experimental structures. |

Within the broader thesis on using AlphaFold2 (AF2) structure prediction to validate computationally designed protein sequences, quantifying structural similarity is paramount. This guide compares three standard metrics, Root Mean Square Deviation (RMSD), TM-score, and Global Distance Test Total Score (GDT_TS), for evaluating designed protein structures against their AF2-predicted counterparts. These metrics serve as the quantitative bridge between design intent and computational validation.

Metric Definitions and Comparative Analysis

The following table summarizes the core characteristics, strengths, and limitations of each key metric.

Table 1: Comparison of Key Structural Similarity Metrics

| Metric | Full Name | Range (Ideal) | Sensitivity to Alignment | Strengths | Limitations |

|---|---|---|---|---|---|

| RMSD | Root Mean Square Deviation | 0Å → ∞ (0) | High. Requires optimal superposition of all/selected Cα atoms. | Intuitive, measures average deviation. Units in Angstroms. | Highly sensitive to local errors; penalizes large proteins more. |

| TM-score | Template Modeling Score | 0 → 1 (1) | Low. Uses a length-dependent scale function. | Length-independent; >0.5 suggests similar fold; <0.17 random similarity. | Less intuitive scale; requires specific normalization parameters. |

| GDT_TS | Global Distance Test Total Score | 0 → 100 (100) | Moderate. Measures percentage of Cα atoms under a distance cutoff. | Standard assessment metric in CASP; captures global topology. | Depends on chosen distance thresholds (e.g., 1, 2, 4, 8 Å). |
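
To make the TM-score and GDT_TS definitions concrete, the sketch below evaluates both from a list of per-residue Cα distances after a superposition. It uses the standard length-dependent scale d0 = 1.24 (L - 15)^(1/3) - 1.8 for TM-score and the 1, 2, 4, 8 Å cutoffs listed in the table for GDT_TS. One important caveat: the reference programs search over many superpositions to maximize each score, whereas this sketch scores a single, fixed superposition, so its values are lower bounds on the reported metrics. The function names are hypothetical.

```python
GDT_CUTOFFS = (1.0, 2.0, 4.0, 8.0)  # Angstrom thresholds from the table


def tm_d0(target_length):
    """Length-dependent distance scale used to normalize TM-score."""
    if target_length < 20:
        # The formula is not meaningful for very short chains.
        raise ValueError("target_length must be at least 20 in this sketch")
    return 1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8


def tm_score_fixed(distances, target_length):
    """TM-score for one fixed superposition, normalized by target length."""
    d0 = tm_d0(target_length)
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length


def gdt_ts_fixed(distances, target_length):
    """GDT_TS for one fixed superposition: mean percentage under each cutoff."""
    fractions = [
        sum(1 for d in distances if d <= cutoff) / target_length
        for cutoff in GDT_CUTOFFS
    ]
    return 100.0 * sum(fractions) / len(fractions)
```

Because unaligned residues simply contribute nothing to either sum, both scores penalize partial coverage, which a raw RMSD over aligned atoms does not.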

Experimental Protocol for Metric Calculation

A standardized workflow is essential for consistent comparison between a designed (target) structure and an AF2-predicted model.

- Data Preparation: Obtain the designed protein structure (`.pdb`) and the corresponding AF2-predicted model (`.pdb`). Ensure both files contain only one model and are cleaned of heteroatoms/water.

- Structural Alignment: Superimpose the predicted structure onto the designed reference structure. Use TM-score or USalign software, which internally performs an optimal alignment for its metrics. For RMSD-only calculation, tools like PyMOL (`align` command) or Biopython can be used.

- Metric Calculation:

- RMSD: Calculate after optimal superposition over all Cα atoms or over the aligned regions only. Command: `USalign designed.pdb predicted.pdb -ter 0`.

- TM-score & GDT_TS: Directly compute using dedicated tools. Command: `TMscore predicted.pdb designed.pdb` (reports TM-score and GDT_TS) or `USalign designed.pdb predicted.pdb -ter 0` (reports TM-score and RMSD).

- Data Interpretation: Compare scores against established benchmarks. A successful design validation typically requires TM-score >0.5, GDT_TS >50, and RMSD < 2.0Å for well-conserved cores.
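
For batch validation, the USalign command above can be wrapped in a small script. The sketch below runs the command exactly as quoted in the protocol and extracts the aligned length, RMSD, and TM-scores with regular expressions. The parsing assumes the usual text report containing lines of the form `Aligned length= ..., RMSD= ...` and `TM-score= ...`; this layout is an assumption that should be checked against the output of your installed version. The function names are hypothetical.

```python
import re
import subprocess


def run_usalign(designed_pdb, predicted_pdb, executable="USalign"):
    """Run the command from the protocol and return its text report."""
    result = subprocess.run(
        [executable, designed_pdb, predicted_pdb, "-ter", "0"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def parse_usalign(report):
    """Extract aligned length, RMSD and TM-scores from a USalign report."""
    aligned = re.search(r"Aligned length=\s*(\d+),\s*RMSD=\s*([\d.]+)", report)
    tm_scores = [float(v) for v in re.findall(r"TM-score=\s*([\d.]+)", report)]
    if aligned is None or not tm_scores:
        raise ValueError("text does not look like a USalign report")
    return {
        "aligned_length": int(aligned.group(1)),
        "rmsd": float(aligned.group(2)),
        # One TM-score per normalization (first and second structure).
        "tm_scores": tm_scores,
    }
```

A typical call would be `parse_usalign(run_usalign("designed.pdb", "predicted.pdb"))`. When the two structures differ in length, decide explicitly which normalization (designed or predicted length) you report.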

Case Study Data: De Novo Designed vs. AF2-Predicted Structures

The following table presents hypothetical but representative data, of the kind obtained in our thesis work, comparing three de novo designed protein monomers to their AF2-predicted structures.

Table 2: Metric Comparison for Three Designed Proteins

| Protein Design (Length) | RMSD (Å) | TM-score | GDT_TS | Interpretation |

|---|---|---|---|---|

| Design_1 (128 aa) | 1.42 | 0.78 | 84.5 | High-confidence validation. Fold successfully recapitulated. |

| Design_2 (89 aa) | 3.85 | 0.46 | 52.1 | Marginal fold similarity. Design may be unstable or misfolded. |

| Design_3 (215 aa) | 5.21 | 0.62 | 71.3 | TM-score/GDT_TS indicate correct global fold; high RMSD suggests flexible termini or domain shifts. |
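
The interpretations in Table 2 follow mechanically from the thresholds given in the protocol (TM-score > 0.5, GDT_TS > 50, RMSD < 2.0 Å). The sketch below encodes that logic and applies it to the three table rows. The class name, function name, and the three short verdict labels are illustrative choices made for this example, not terminology from a specific tool.

```python
from dataclasses import dataclass


@dataclass
class DesignMetrics:
    name: str
    rmsd: float      # Angstroms
    tm_score: float  # 0 to 1
    gdt_ts: float    # 0 to 100


def interpret(metrics, tm_min=0.5, gdt_min=50.0, rmsd_max=2.0):
    """Apply the protocol thresholds to one design."""
    fold_ok = metrics.tm_score > tm_min and metrics.gdt_ts > gdt_min
    if fold_ok and metrics.rmsd < rmsd_max:
        return "validated"
    if fold_ok:
        # Global fold agrees; inspect termini and domain orientation.
        return "fold matches, high RMSD"
    return "marginal or failed"


table_2 = [
    DesignMetrics("Design_1", 1.42, 0.78, 84.5),
    DesignMetrics("Design_2", 3.85, 0.46, 52.1),
    DesignMetrics("Design_3", 5.21, 0.62, 71.3),
]
for design in table_2:
    print(design.name, "->", interpret(design))
```

Checking the global metrics before RMSD mirrors the reasoning for Design_3, where a correct fold can still show a large RMSD because of flexible termini or domain shifts.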

Workflow for Structural Validation with AF2

Diagram Title: Structural Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Structural Comparison Experiments

| Item | Function in Validation | Example/Note |

|---|---|---|

| AlphaFold2 | Generates predicted 3D models from amino acid sequences. | Use local ColabFold for batch processing or the AF2 Colab notebook. |

| USalign | Performs optimal structural alignment and calculates RMSD and TM-score (GDT_TS comes from the separate TMscore program). | Preferred over standalone TM-score for unified alignment. |

| PyMOL | Visualization and manual inspection of structural overlays. | Critical for qualitative assessment of metric results. |

| Biopython (Bio.PDB) | Python library for programmatic parsing, alignment, and RMSD calculation. | Enables automation in large-scale validation studies. |

| CASP Assessment Criteria | Provides community-standard benchmarks for GDT_TS and TM-score interpretation. | Reference values for determining prediction quality. |

This guide examines recent, high-impact studies that successfully transitioned from AlphaFold2 (AF2) structural prediction to experimentally validated, functional proteins. The comparison is framed within the thesis that AF2 serves not just for structure elucidation, but as a critical feedback tool for validating and refining de novo designed sequences.

Comparative Analysis of Validation Studies

The table below summarizes key performance metrics from two seminal papers, comparing designed proteins to their natural counterparts or design objectives.

Table 1: Performance Comparison of De Novo Designed Proteins

| Study & Protein | Design Objective | Key Performance Metric | Result (Designed vs. Natural/Control) | Validation Method |

|---|---|---|---|---|