Generative AI for Molecule Generation: Core Principles, Methods, and Validation in Drug Discovery

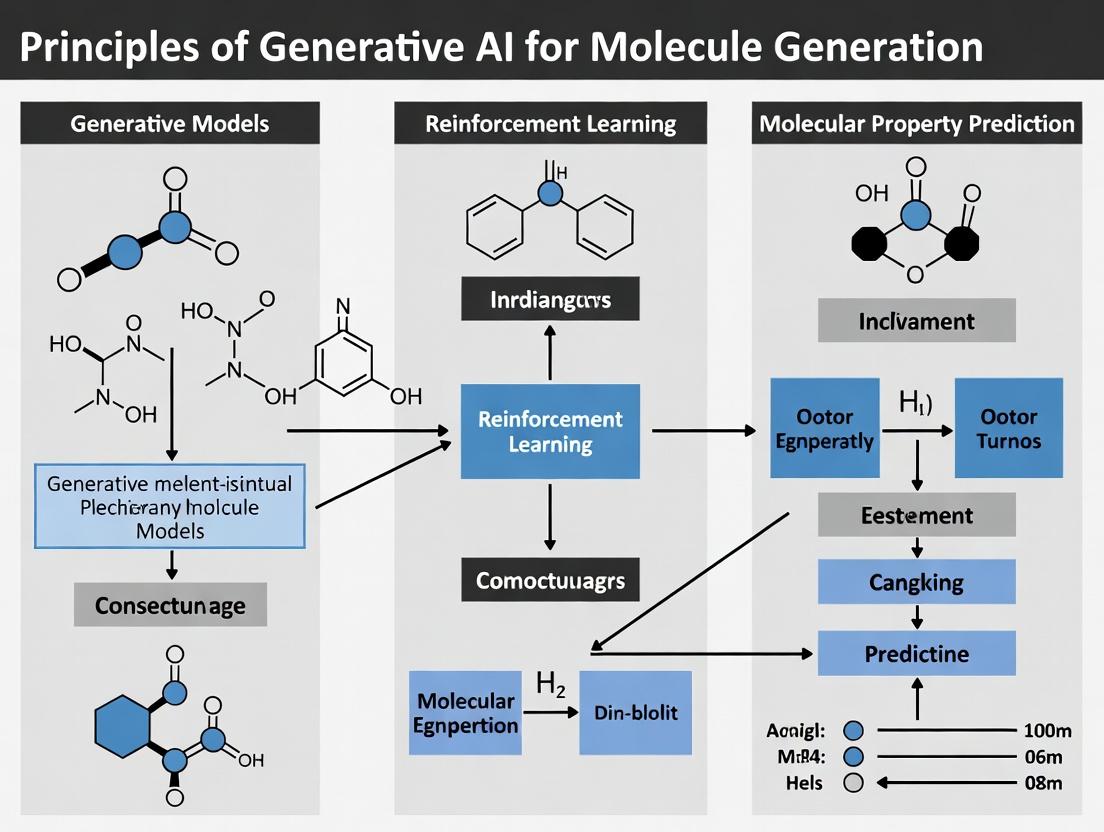

This article provides a comprehensive guide to the principles of generative AI for molecular design, tailored for researchers and drug development professionals.

Generative AI for Molecule Generation: Core Principles, Methods, and Validation in Drug Discovery

Abstract

This article provides a comprehensive guide to the principles of generative AI for molecular design, tailored for researchers and drug development professionals. It covers foundational concepts from molecular representations to core AI architectures like VAEs, GANs, and diffusion models. The guide delves into methodological applications for de novo design and property optimization, addresses common pitfalls in model training and output quality, and compares validation frameworks for assessing novelty, synthesizability, and efficacy. The goal is to equip scientists with the knowledge to implement and critically evaluate generative AI in accelerating therapeutic discovery.

From SMILES to Latent Space: Foundational Principles of AI-Driven Molecular Design

The drug discovery pipeline is a high-risk, capital-intensive, and lengthy process. The core challenge lies in navigating a vast, unexplored chemical space to identify viable candidate molecules. Quantitative data underscores the scale of the problem and the inefficiencies of traditional methods.

Table 1: The Traditional Drug Discovery Bottleneck (2020-2024 Averages)

| Metric | Value | Implication |

|---|---|---|

| Estimated synthesizable drug-like molecules | >10^60 | A search space impossible to exhaust empirically. |

| Average cost to bring a drug to market | ~$2.3B | Costs driven by high failure rates in clinical trials. |

| Average timeline from discovery to approval | 10-15 years | A significant portion spent on early-stage discovery. |

| Clinical trial success rate (Phase I to Approval) | ~7.9% | Attrition often due to lack of efficacy or safety (poor molecule properties). |

| Compound Attrition Rate (Pre-clinical to Phase I) | >90% | Highlights the poor predictive power of early in vitro models for in vivo outcomes. |

Traditional discovery, reliant on high-throughput screening (HTS) and medicinal chemistry optimization, is inherently limited. HTS explores only a tiny, biased fraction of chemical space (corporate libraries), while sequential optimization cycles are slow and prone to local maxima in property optimization.

The Core Problem: Multi-Objective Optimization in Chemical Space

The fundamental task is a constrained multi-objective optimization: generate novel molecular structures that simultaneously satisfy numerous, often competing, criteria.

Table 2: Key Objectives and Constraints in Molecule Generation

| Objective/Constraint Category | Specific Parameters | Traditional Challenge |

|---|---|---|

| Binding Affinity & Potency | pIC50, pKi, ΔG (binding free energy) | Requires expensive computational (e.g., docking) or experimental (e.g., SPR) validation per compound. |

| Drug-Likeness & ADMET | Lipinski's Rule of 5, Solubility, Metabolic Stability, hERG inhibition, Toxicity | Often evaluated late, leading to attrition. Difficult to optimize synthetically. |

| Synthetic Accessibility | Synthetic Accessibility Score (SAS), retrosynthetic complexity | Designed molecules may be impractical or prohibitively expensive to synthesize. |

| Novelty & IP | Tanimoto similarity to known compounds | Must navigate around existing patent landscapes. |

Generative AI as a Paradigm Shift

Generative AI models, framed within the thesis of Principles of generative AI for molecule generation research, address this by learning the joint probability distribution of chemical structures and their properties from data. Instead of searching, they propose.

Key Model Archetypes and Experimental Protocols:

Protocol A: Generative Model Training (e.g., Variational Autoencoder - VAE)

- Data Curation: Assemble a dataset of SMILES strings (e.g., from ZINC15, ChEMBL) and optionally, associated bioactivity or property labels.

- Tokenization: Convert SMILES strings into a sequence of tokens (atoms, bonds, branches).

- Model Architecture: Implement an encoder (maps SMILES to a continuous latent vector

z), a latent spacez, and a decoder (reconstructs SMILES fromz). A regularization term (KL divergence) enforces a structured latent space. - Training Objective: Minimize the reconstruction loss (cross-entropy) between input and output SMILES while regularizing the latent space.

- Interpolation/Generation: Novel molecules are generated by sampling vectors

zfrom the latent space and decoding them.

Protocol B: Goal-Directed Generation (Reinforcement Learning - RL)

- Pre-train a Generative Model: Train a VAE or RNN as a prior policy to generate valid SMILES.

- Define Reward Function:

R(molecule) = w1 * pActivity(molecule) + w2 * QED(molecule) - w3 * SAS(molecule). Proxy models (e.g., a random forest classifier trained on assay data) predictpActivity. - Fine-tune with Policy Gradient: Use an RL algorithm (e.g., REINFORCE, PPO) to update the generative model's parameters to maximize the expected reward. The agent (generator) proposes molecules, receives a reward from the reward function, and adjusts its policy.

- Evaluation: Synthesize and test top-ranked generated molecules in wet-lab assays.

Title: Generative AI-Driven Drug Discovery Workflow

The Scientist's Toolkit: Research Reagent Solutions for AI-Enabled Discovery

Table 3: Essential Materials for Validating Generative AI Output

| Item / Solution | Function in the Validation Workflow |

|---|---|

| DNA-Encoded Library (DEL) Kits | Enables ultra-high-throughput in vitro screening of millions of AI-generated virtual compounds against a protein target, providing experimental binding data for model refinement. |

| Recombinant Target Proteins | Purified, biologically active proteins (e.g., kinases, GPCRs) are essential for biochemical activity assays (e.g., fluorescence polarization, TR-FRET) to validate predicted binding/activity. |

| Cell-Based Reporter Assay Kits | Validates functional cellular activity (e.g., agonist/antagonist effect, pathway modulation) of synthesized AI-generated hits, moving beyond in silico or biochemical predictions. |

| LC-MS/MS Systems | Critical for confirming the chemical structure of synthesized molecules and assessing purity, ensuring the generative model's output is physically realized as intended. |

| Caco-2 Cell Lines | Standard in vitro model for early assessment of a compound's permeability, a key ADMET property predicted by AI models. |

| Liver Microsomes (Human/Rat) | Used in metabolic stability assays to measure clearance rates, providing experimental validation for AI-predicted metabolic liabilities. |

| hERG Channel Assay Kits | In vitro safety pharmacology test to assess potential cardiotoxicity risk, a critical constraint for AI models to learn and avoid. |

Title: Reinforcement Learning Cycle for Molecule Optimization

The problem space of drug discovery is defined by astronomical search complexity and costly sequential optimization. Generative AI, operating on the principle of learning to propose valid, optimized candidates directly, reframes this problem. By integrating predictive models within a closed-loop design-make-test-analyze cycle, it offers a systematic framework to explore chemical space more intelligently, directly addressing the core bottlenecks of cost, time, and attrition quantified in this analysis.

Within the broader thesis on the Principles of Generative AI for Molecule Generation, the choice of molecular representation is foundational. It dictates the architectural design of generative models, influences the physical and chemical validity of outputs, and ultimately determines the feasibility of discovering novel, functional molecules for drug development. This guide provides an in-depth technical analysis of the three predominant representations: SMILES strings, molecular graphs, and 3D coordinate frameworks.

SMILES (Simplified Molecular-Input Line-Entry System)

SMILES is a linear string notation describing molecular structure using ASCII characters. It encodes atoms, bonds, branching, and cyclic structures through a specific grammar.

Key Technical Aspects:

- Syntax: Atoms are represented by their atomic symbols (e.g., C, O, N). Single, double, triple, and aromatic bonds are denoted by

-,=,#, and:, respectively (often omitted for single and aromatic). Branches are enclosed in parentheses, and ring closures are indicated by matching digits. - Challenges for Generative AI: SMILES strings are a sequential, non-unique representation (multiple valid SMILES for one molecule). Generative models (e.g., RNNs, Transformers) must learn complex syntactic and semantic rules to ensure validity. Invalid strings are a common output, requiring post-hoc correction.

Quantitative Data on SMILES-based Generation:

Table 1: Performance Metrics of SMILES-based Generative Models (Representative Studies)

| Model Architecture | Key Metric | Value (Reported) | Dataset | Reference (Type) |

|---|---|---|---|---|

| RNN (RL-based) | % Valid SMILES | >90% | ZINC | Gómez-Bombarelli et al., 2018 |

| Transformer | Novelty (Unique @ 10k) | 100% | ChEMBL | Olivecrona et al., 2017 |

| GPT-style | Syntactic Validity Rate | 98.7% | PubChem | Recent Benchmark (2023) |

Experimental Protocol for SMILES Model Training:

- Data Curation: Assemble a dataset (e.g., from PubChem, ZINC) and canonicalize all SMILES strings using a toolkit like RDKit.

- Tokenization: Convert each character or meaningful substring (e.g.,

Cl,Br) into a discrete token. - Model Training: Train a sequence model (e.g., LSTM, GRU, Transformer decoder) with a language modeling objective (next-token prediction).

- Sampling: Generate new strings via autoregressive sampling from the trained model.

- Validation & Filtering: Parse generated strings with a chemistry toolkit (e.g., RDKit) to check chemical validity and compute properties.

Title: SMILES-based Generative AI Workflow

Molecular Graph Representations

Graphs provide a natural representation, where atoms are nodes and bonds are edges. This is inherently invariant to atom ordering and aligns with the principles of molecular structure.

Key Technical Aspects:

- Representation:

G = (V, E, H), whereVis the set of nodes (atom features: type, charge, hybridization),Eis the set of edges (bond features: type, conjugation), andHis the global context. - Generative Models: Graph Neural Networks (GNNs) are used as encoders. Generative approaches include autoregressive (adding nodes/edges stepwise) and one-shot (generating the entire graph matrix) methods.

Quantitative Data on Graph-based Generation:

Table 2: Performance Comparison of Graph-based Generative Models

| Model Type | Model Name | Validity (%) | Uniqueness (% @ 10k) | Time per Molecule (ms) | Key Advantage |

|---|---|---|---|---|---|

| Autoregressive | GraphINVENT | 95.5 | 99.9 | ~120 | High validity & novelty |

| One-shot (Flow) | GraphNVP | 82.7 | 100 | ~10 | Fast generation |

| One-shot (VAE) | JT-VAE | 100 | 96.7 | ~200 | Chemically valid by construction |

| Diffusion | EDM (2022) | 99.8 | 99.9 | ~50 | State-of-the-art quality |

Experimental Protocol for Graph Autoregressive Generation:

- Graph Construction: Convert all molecules in dataset to graphs with node/edge features using RDKit.

- Traversal Ordering: Define a deterministic algorithm (e.g., breadth-first search) to linearize the graph into a sequence of addition actions (add node, add edge, set attribute).

- Model Architecture: Employ a GNN to encode the partially generated graph and an RNN/Transformer to decode the next action.

- Training: Train via teacher forcing on the action sequences.

- Generation: Iteratively sample actions from the model to construct new graphs from an empty initial state.

Title: Autoregressive Graph Generation Process

3D Coordinate Frameworks

This representation explicitly models the spatial positions of atoms (x, y, z coordinates), which is critical for predicting binding affinity and other quantum chemical properties.

Key Technical Aspects:

- Representation: Set of atomic numbers

{Z_i}and coordinates{r_i}. May include vibrational and rotational degrees of freedom. - Generative Models: Geometry-aware models like SE(3)-equivariant GNNs (e.g., EGNN, GemNet) and diffusion models (e.g., GeoDiff) are state-of-the-art. They respect the physical symmetries of 3D space (rotation and translation invariance/equivariance).

Quantitative Data on 3D Molecule Generation:

Table 3: Metrics for 3D-Constrained Generative Models

| Model | Target | Average RMSD (Å) | Validity (%) | Stable Conformer (%) | Equivariance Guarantee |

|---|---|---|---|---|---|

| GeoDiff | Conformer Generation | 0.28 | N/A | 99.7 | Yes (SE(3)-Invariant) |

| EDM (Equivariant) | De Novo Generation | N/A | 92.4 | 85.2 | Yes (SE(3)-Equivariant) |

| G-SchNet | Conditional Generation | ~1.5 | 97.1 | 78.5 | No |

Experimental Protocol for 3D Diffusion Model Training:

- Dataset Preparation: Use a dataset of molecules with ground-state 3D geometries (e.g., QM9, GEOM-DRUGS). Center and optionally align structures.

- Noise Schedule Definition: Define a forward diffusion process that gradually adds Gaussian noise to atomic coordinates (and possibly atom types) over

Ttimesteps. - Model Design: Implement an equivariant neural network (e.g., using e3nn library) that predicts the denoising step (

ϵ_θ). Inputs are noisy coordinatesx_t, atom features, and timestept. - Training Objective: Minimize the mean-squared error between the predicted noise and the true noise added in the forward process.

- Sampling (Generation): Start from pure Gaussian noise

x_Tand iteratively apply the trained model to denoise forTsteps, yielding a new 3D structurex_0.

Title: 3D Diffusion Model Training and Sampling

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Software Tools for Molecular Representation Research

| Tool Name | Category | Primary Function in Molecule Generation | Key Feature |

|---|---|---|---|

| RDKit | Cheminformatics | Molecule I/O, feature calculation, SMILES parsing/validation, graph conversion. | Open-source, robust, Python API. |

| PyTorch Geometric (PyG) | Deep Learning | Library for GNNs on molecular graphs. Efficient batch processing of graphs. | Large suite of GNN layers & datasets. |

| DGL-LifeSci | Deep Learning | Domain-specific GNN implementations & pre-training for molecules. | Built-in SOTA model architectures. |

| e3nn | Deep Learning | Framework for building E(3)-equivariant neural networks for 3D data. | Implements irreducible representations. |

| Open Babel | Cheminformatics | File format conversion, especially for 3D coordinates (e.g., SDF, PDB). | Supports vast array of formats. |

| Jupyter Lab | Development | Interactive computing environment for prototyping and analysis. | Combines code, visualizations, text. |

| OMEGA (OpenEye) | Conformer Generation | High-quality rule-based 3D conformer generation for benchmarking. | Industry-standard, high accuracy. |

| ANTON2 (D.E. Shaw) | MD Simulations | Ultra-long-timescale simulations for validating generated molecule stability. | Specialized hardware for MD. |

This whitepaper provides an in-depth technical overview of three core generative architectures—Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Diffusion Models—within the critical context of generative AI for molecule generation research. The discovery and design of novel molecular structures with desired properties is a fundamental challenge in drug development. Generative AI offers a paradigm shift from high-throughput screening to de novo design, enabling the exploration of vast, uncharted regions of chemical space. This document details the operational principles, comparative performance, and experimental protocols for applying these architectures to molecular generation, equipping researchers and drug development professionals with the knowledge to select and implement appropriate methodologies.

Architectural Foundations & Comparative Analysis

Variational Autoencoders (VAEs)

VAEs are probabilistic generative models that learn a latent, compressed representation of input data. In molecule generation, they encode molecular structures (e.g., SMILES strings or graphs) into a continuous latent space where interpolation and sampling are possible.

Core Mechanism: A VAE consists of an encoder network ( q\phi(z|x) ) that maps input ( x ) to a distribution over latent variables ( z ), and a decoder network ( p\theta(x|z) ) that reconstructs the input from a sample of ( z ). Training maximizes the Evidence Lower Bound (ELBO): [ \mathcal{L}{\text{ELBO}} = \mathbb{E}{q\phi(z|x)}[\log p\theta(x|z)] - D{\text{KL}}(q\phi(z|x) \| p(z)) ] where ( p(z) ) is typically a standard normal prior. The first term is a reconstruction loss, and the second is a regularization term that encourages the latent space to be well-structured.

Application to Molecules: The decoder is trained to generate valid molecular structures, often using SMILES syntax-aware RNNs or graph neural networks.

Generative Adversarial Networks (GANs)

GANs frame generation as an adversarial game between two networks: a Generator (G) that creates samples, and a Discriminator (D) that distinguishes real data from generated fakes.

Core Mechanism: The generator ( G(z) ) maps noise ( z ) to data space. The discriminator ( D(x) ) outputs the probability that ( x ) is real. They are trained simultaneously via a minimax objective: [ \minG \maxD V(D, G) = \mathbb{E}{x \sim p{\text{data}}(x)}[\log D(x)] + \mathbb{E}{z \sim pz(z)}[\log(1 - D(G(z)))] ] For molecules, sequences or graphs generated by G must adhere to chemical validity rules, which often requires specialized adversarial setups or reinforcement learning rewards.

Diffusion Models

Diffusion models generate data by progressively denoising a variable starting from pure noise. They consist of a forward (diffusion) process and a reverse (denoising) process.

Core Mechanism:

- Forward Process: Gradually adds Gaussian noise to data ( x0 ) over ( T ) steps, producing a sequence of noisy samples ( x1, ..., xT ). The transition is defined as ( q(xt | x{t-1}) = \mathcal{N}(xt; \sqrt{1-\betat} x{t-1}, \betat I) ), where ( \betat ) is a noise schedule.

- Reverse Process: A neural network ( \epsilon\theta(xt, t) ) is trained to predict the noise added at step ( t ). Generation starts from ( xT \sim \mathcal{N}(0, I) ) and iteratively applies: [ x{t-1} = \frac{1}{\sqrt{\alphat}}(xt - \frac{\betat}{\sqrt{1-\bar{\alpha}t}} \epsilon\theta(xt, t)) + \sigmat z ] where ( \alphat = 1 - \betat ), ( \bar{\alpha}t = \prod{s=1}^t \alphas ), and ( z \sim \mathcal{N}(0, I) ).

Application to Molecules: The model operates directly on molecular graph representations (atom types, bonds) or 3D coordinates, learning to invert a noising process applied to the discrete graph structure or continuous conformational space.

Quantitative Comparison of Architectures for Molecule Generation

The following table summarizes key performance metrics and characteristics of the three architectures, as reported in recent literature (2023-2024).

Table 1: Comparative Analysis of VAE, GAN, and Diffusion Models for Molecular Generation

| Metric / Characteristic | VAEs | GANs | Diffusion Models |

|---|---|---|---|

| Training Stability | High (direct likelihood training) | Low (prone to mode collapse, vanishing gradients) | Medium-High (stable but computationally intensive) |

| Sample Diversity | Moderate (can suffer from posterior collapse) | Variable (high if well-trained, but mode collapse reduces it) | High |

| Generation Quality (Validity %) | ~70-95% (depends on decoder and latent space regularization) | ~80-100% (with advanced adversarial or RL techniques) | ~90-100% (especially for 3D conformer generation) |

| Latent Space Interpretability | High (continuous, smooth, enables interpolation) | Low (no direct latent space, interpolation may not be meaningful) | Moderate (latent space is the noise trajectory) |

| Computational Cost (Training) | Moderate | High (requires careful balancing of G and D) | Very High (many denoising steps) |

| Computational Cost (Inference) | Low (single forward pass) | Low (single forward pass) | High (requires many iterative denoising steps) |

| Primary Use Case in Molecule Gen. | Exploration of latent space, property optimization, scaffold hopping. | High-fidelity generation of novel structures conditioned on properties. | High-quality generation of 2D graphs and 3D molecular conformations. |

| Key Challenge in Molecule Gen. | Generating 100% valid SMILES/Graphs; balancing KL loss. | Unstable training; ensuring chemical validity without post-hoc checks. | Slow sampling; modeling discrete graph structures. |

Experimental Protocols for Molecular Generation

Protocol: Training a VAE for SMILES-basedDe NovoDesign

Objective: Train a VAE to generate novel, valid SMILES strings with optimized chemical properties.

Materials: See "The Scientist's Toolkit" (Section 5). Dataset: 1-2 million drug-like SMILES from ZINC or ChEMBL. Preprocessing: Canonicalize SMILES, filter by length (e.g., 50-120 characters), apply tokenization (character or BPE).

Method:

- Model Architecture: Implement encoder (2-layer bidirectional GRU) and decoder (2-layer GRU). Latent dimension: 128.

- Training: a. Use Adam optimizer (lr=0.0005). b. For each batch, encoder computes ( \mu ) and ( \sigma ) for latent distribution ( q\phi(z|x) ). c. Sample ( z ) using reparameterization: ( z = \mu + \sigma \odot \epsilon ), where ( \epsilon \sim \mathcal{N}(0, I) ). d. Decoder reconstructs SMILES sequence from ( z ) using teacher forcing. e. Loss: ( \mathcal{L} = \mathcal{L}{\text{recon}} (\text{CrossEntropy}) + \beta \cdot D{\text{KL}}(q\phi(z|x) \| \mathcal{N}(0, I)) ). Apply KL annealing (increase ( \beta ) from 0 to 1 over epochs).

- Validation: Monitor reconstruction accuracy, validity rate (percentage of decoded SMILES parsable by RDKit), and uniqueness of generated samples.

- Generation & Optimization: Sample ( z \sim \mathcal{N}(0, I) ) and decode. For optimization, use gradient ascent in latent space guided by a property predictor network.

Protocol: Training a Conditional GAN for Target-Specific Molecule Generation

Objective: Train a GAN to generate molecules conditioned on a target protein fingerprint or desired pharmacological profile.

Materials: See "The Scientist's Toolkit." Dataset: Paired data of molecules and their bioactivity (e.g., IC50) or target class (e.g., kinase inhibitor).

Method:

- Model Architecture: Use a Wasserstein GAN with Gradient Penalty (WGAN-GP). Generator: 3-layer fully connected network mapping noise ( z ) and condition vector ( c ) to a molecular fingerprint (e.g., ECFP4). Discriminator/Critic: 3-layer network taking fingerprint and condition ( c ), outputting a scalar.

- Training: a. Optimizers: Adam (lr=0.0001, ( \beta1=0.5, \beta2=0.9 )). b. For each iteration: i. Train Critic ( n_{\text{critic}} ) times (e.g., 5): Sample real fingerprints ( x ), conditions ( c ), generated fingerprints ( \tilde{x} = G(z, c) ), and random interpolation ( \hat{x} ). Compute Wasserstein loss and gradient penalty. ii. Train Generator once: Maximize ( D(G(z, c), c) ). c. Condition ( c ) is concatenated to both noise input (for G) and fingerprint input (for D).

- Post-Processing: Decode generated fingerprints to molecules using a library lookup (e.g., nearest neighbor in training set) or a trained inverse model.

- Validation: Assess condition-specific generation success rate, diversity of outputs, and docking scores against the target protein.

Protocol: Training a Diffusion Model for 3D Molecular Conformer Generation

Objective: Train a diffusion model to generate realistic 3D molecular conformations given a 2D graph.

Materials: See "The Scientist's Toolkit." Dataset: GEOM-DRUGS or QM9 with 3D conformations.

Method:

- Noising Process (Forward): Define noise schedules ( \betat ) for atom coordinates ( \mathbf{x} ) and atom types ( \mathbf{h} ). For coordinates, noise is added as: ( q(\mathbf{x}t | \mathbf{x}{t-1}) = \mathcal{N}(\mathbf{x}t; \sqrt{1-\betat} \mathbf{x}{t-1}, \beta_t I) ). For atom types (categorical), use a discrete diffusion or mask/noise schedule.

- Denoising Network: Implement a Graph Neural Network (e.g., EGNN, Equivariant GNN) that takes noisy graph ( (\mathbf{x}t, \mathbf{h}t) ) and timestep ( t ) to predict the clean features. The network must be equivariant to 3D rotations/translations for coordinates.

- Training: a. Sample a clean molecule graph ( (\mathbf{x}0, \mathbf{h}0) ), a timestep ( t \sim \text{Uniform}(1, T) ), and noise ( \epsilon ). b. Apply the forward process to obtain ( (\mathbf{x}t, \mathbf{h}t) ). c. Train the network ( \epsilon_\theta ) to predict the added noise ( \epsilon ) for coordinates and the denoised atom types for ( \mathbf{h} ). d. Use Adam optimizer with learning rate scheduling.

- Sampling (Reverse Process): a. Start from random noise for coordinates and random/masked atom types. b. For ( t = T ) to 1: Use the trained network ( \epsilon\theta ) to compute ( (\mathbf{x}{t-1}, \mathbf{h}_{t-1}) ). For atom types, sample from the predicted categorical distribution. c. Apply potential corrections (e.g., valency check).

- Validation: Evaluate stability of generated conformers (low energy), faithfulness to distance distributions, and diversity.

Architectural and Workflow Visualizations

Diagram Title: VAE Training and Sampling Workflow

Diagram Title: Adversarial Training Loop of a GAN

Diagram Title: Forward and Reverse Processes in a Diffusion Model

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Computational Tools and Libraries for Generative Molecule Research

| Item / Reagent | Provider / Library | Function in Experiments |

|---|---|---|

| Chemical Dataset | ZINC, ChEMBL, PubChem | Source of millions of known molecular structures for training and benchmarking. |

| 3D Conformer Dataset | GEOM-DRUGS, QM9 | Provides high-quality ground-truth 3D molecular geometries for training diffusion/VAE models. |

| Chemistry Toolkit | RDKit | Open-source cheminformatics toolkit for SMILES parsing, validity checks, fingerprint generation, and molecular property calculation. |

| Deep Learning Framework | PyTorch, TensorFlow | Core frameworks for building and training VAE, GAN, and Diffusion model neural networks. |

| Graph Neural Network Library | PyTorch Geometric, DGL | Specialized libraries for implementing graph-based encoders/decoders (critical for molecules). |

| Equivariant NN Library | e3nn, SE(3)-Transformers | Libraries for building 3D rotation-equivariant networks, essential for 3D diffusion models. |

| Molecular Docking Software | AutoDock Vina, Glide | For in silico validation of generated molecules by predicting binding affinity to a target protein. |

| High-Performance Computing | NVIDIA GPUs (A100/H100) | Essential for training large-scale generative models, especially diffusion models, in a reasonable time. |

| Hyperparameter Optimization | Weights & Biases, Optuna | Tools for tracking experiments, visualizing results, and systematically optimizing model hyperparameters. |

| Generation Evaluation Suite | GuacaMol, MOSES | Standardized benchmarking frameworks to evaluate the quality, diversity, and properties of generated molecules. |

Within the broader thesis on Principles of generative AI for molecule generation research, the latent space serves as the foundational substrate for rational molecular design. It is a compressed, continuous, and structured representation where molecular structures are embedded, enabling operations impossible in discrete structural space. This whitepaper provides an in-depth technical examination of three critical latent space functionalities: smooth interpolation between molecules, the construction and navigation of property landscapes, and the controlled generation of molecules with targeted attributes. Mastery of these concepts is pivotal for advancing generative AI applications in de novo drug design.

The Architecture of Molecular Latent Spaces

Generative models for molecules, such as Variational Autoencoders (VAEs), Adversarial Autoencoders (AAEs), and Graph-based models, learn to map discrete molecular graphs (or SMILES strings) ( M ) into a continuous latent vector ( z \in \mathbb{R}^d ). The encoder ( E ) and decoder ( D ) functions are learned such that ( D(E(M)) \approx M ), with the latent space regularized for continuity and smoothness.

Key Quantitative Performance Metrics for Latent Space Models: The efficacy of a latent space is benchmarked by its reconstruction accuracy, novelty, and validity.

Table 1: Benchmark Performance of Common Molecular Generative Models (Representative Data)

| Model Architecture | Validity (%) | Uniqueness (%) | Reconstruction Accuracy (%) | Latent Dimension (d) |

|---|---|---|---|---|

| VAE (SMILES) | 43.5 - 97.3 | 90.1 - 100 | 53.7 - 90.8 | 196 - 512 |

| AAE (SMILES) | 60.2 - 98.7 | 99.9 - 100 | 76.2 - 94.1 | 256 |

| Graph VAE | 55.7 - 100 | 99.5 - 100 | 75.4 - 100 | 64 - 128 |

| JT-VAE | 100 | 100 | 76.0 - 100 | 56 |

| Characteristic VAEs | 85.0 - 99.9 | 99.6 - 100 | 97.0 - 99.9 | 128 |

Note: Ranges reflect reported values across studies on datasets like ZINC250k and QM9. JT-VAE (Junction Tree VAE) enforces strict syntactic validity.

Interpolation: Traversing Chemical Space

Interpolation defines a continuous path between two latent points ( za ) and ( zb ), corresponding to molecules ( Ma ) and ( Mb ). The simplest method is linear interpolation: ( z(t) = (1-t)za + t zb ), for ( t \in [0,1] ). A successful interpolation yields decoded molecules that are structurally intermediate and valid at all points.

Experimental Protocol for Evaluating Interpolation:

- Model Training: Train a molecular VAE/AAE on a dataset (e.g., ZINC250k).

- Pair Selection: Select seed molecule pairs with known property differences (e.g., LogP, QED).

- Latent Encoding: Encode seeds to ( za ), ( zb ).

- Path Sampling: Sample ( n ) points (e.g., ( n=9 )) along the interpolation vector.

- Decoding & Analysis: Decode each ( z(t) ). Calculate:

- Validity Rate: Percentage of decoded strings that are valid SMILES/graphs.

- Smoothness: Measure of monotonic change in molecular properties (e.g., Molecular Weight, Synthetic Accessibility Score) along the path.

- Intermediate Character: Use molecular fingerprints (ECFP4) to verify that intermediate molecules share increasing/decreasing similarity with the endpoints.

Diagram 1: Molecular Interpolation Workflow (Max 760px)

Property Landscapes: Mapping and Navigation

A property landscape is a continuous surface defined over the latent space by a predictor function ( f: \mathbb{R}^d \rightarrow \mathbb{R} ) that maps a latent vector to a molecular property (e.g., binding affinity, solubility). This enables gradient-based optimization in latent space: ( z{new} = z + \eta \nablaz f(z) ), where ( \eta ) is the step size.

Experimental Protocol for Constructing a Property Landscape:

- Latent Dataset Generation: Encode a large library of molecules (10k-1M) into latent vectors ( Z ).

- Property Labeling: Compute or obtain experimental values for a target property ( y ) for each molecule.

- Predictor Model Training: Train a supervised model (e.g., a shallow Neural Network, Gaussian Process) on ( (Z, y) ) to learn ( f ).

- Landscape Visualization: Use dimensionality reduction (t-SNE, UMAP) on ( Z ) and color points by predicted ( f(z) ) to visualize "hills" (high property) and "valleys" (low property).

- Navigation via Gradient Ascent: Select a starting ( z ), compute the gradient of the predictor ( \nabla_z f(z) ), and iteratively take steps in the direction of increasing predicted property. Decode samples at each step.

Table 2: Common Property Predictors Used in Landscape Navigation

| Predictor Model | Typical Training Set Size | Prediction Target Examples | Key Advantage |

|---|---|---|---|

| Random Forest | 5,000 - 50,000 molecules | LogP, QED, pIC50 | Robust to noise, interpretable feature importance. |

| Feed-Forward NN | 10,000 - 500,000 molecules | Synthetic Accessibility, Toxicity | Captures complex non-linear relationships. |

| Gaussian Process | 1,000 - 10,000 molecules | Expensive quantum properties | Provides uncertainty estimates. |

Control: Guided Generation and Optimization

Control refers to the direct manipulation of the latent space to generate molecules satisfying multiple constraints. This is often formalized as a constrained optimization problem: ( \text{maximize } g(z) \text{ subject to } ci(z) \leq \taui ), where ( g ) is an objective function (e.g., bioactivity) and ( c_i ) are constraint functions (e.g., lipophilicity, molecular weight).

Experimental Protocol for Controlled Latent Space Optimization (Reinforcement Learning Setting):

- Pre-train a Generative Model: A VAE is trained to reconstruct molecules.

- Define Reward/Property Functions: Implement functions ( R_i(M) ) that score a molecule on desired attributes.

- Fine-tune with Policy Gradient: The decoder ( D(z) ) is treated as a stochastic policy ( \pi(M\|z) ). A Prior-Guided Optimization algorithm is used:

- Sample a batch of latent vectors ( z ) from a prior (e.g., Gaussian).

- Decode them to molecules ( M ).

- Compute a composite reward ( R{total} = \sum \lambdai Ri(M) ), penalizing invalid structures.

- Update the encoder and decoder parameters to increase the probability of generating molecules with high ( R{total} ), while keeping latent representations close to the prior to maintain smoothness.

- Evaluation: Assess the percentage of generated molecules that meet all target property thresholds ("success rate") and their diversity.

Diagram 2: RL-based Latent Space Optimization (Max 760px)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Latent Space Research in Molecular Generation

| Tool / Resource | Category | Primary Function | Example Implementation / Source |

|---|---|---|---|

| RDKit | Cheminformatics Library | Molecule parsing, fingerprint generation, property calculation, and 2D rendering. | Open-source Python module (rdkit.org). |

| PyTorch / TensorFlow | Deep Learning Framework | Building, training, and deploying VAEs, AAEs, and property predictors. | Open-source libraries. |

| ZINC Database | Molecular Dataset | Source of commercially available, drug-like molecules for training generative models. | zinc.docking.org |

| ChEMBL | Bioactivity Database | Source of experimental bioactivity data for training property predictors. | www.ebi.ac.uk/chembl/ |

| Gaussian / GAMESS | Quantum Chemistry Software | Computing high-fidelity molecular properties for small sets of generated molecules. | Commercial & open-source packages. |

| t-SNE / UMAP | Dimensionality Reduction | Visualizing high-dimensional latent spaces and property landscapes in 2D/3D. | scikit-learn, umap-learn |

| MOSES | Benchmarking Platform | Standardized toolkit for training and evaluating molecular generative models. | github.com/molecularsets/moses |

| AutoDock Vina / Gnina | Molecular Docking | In silico evaluation of generated molecules' binding affinity to a target protein. | Open-source docking software. |

Within the thesis Principles of Generative AI for Molecule Generation Research, the quality and characteristics of training data are not merely preliminary considerations but foundational determinants of model validity. This guide examines the triad of data curation, inherent bias, and chemical space coverage, establishing the empirical ground truth upon which generative hypotheses are built and evaluated.

Effective curation integrates heterogeneous data sources, each with unique preprocessing demands.

Table 1: Primary Data Sources for Molecular Generative AI

| Source | Example Repositories | Key Data Type | Primary Curation Challenge |

|---|---|---|---|

| Public Bioactivity | ChEMBL, PubChem | SMILES, IC50, Ki, Assay Metadata | Standardization of identifiers, activity thresholds, duplicate removal. |

| Commercial Compounds | ZINC, Enamine REAL | SMILES, Purchasability Flags, 3D Conformers. | License compliance, structural filtering (e.g., PAINS, reactivity). |

| Patent Literature | SureChEMBL, USPTO | SMILES, Claimed Utility, Markush Structures. | Extraction of specific examples from generic claims, text-mining noise. |

| Quantum Chemistry | QM9, ANI-1x | 3D Geometries, Energies, Electronic Properties. | Computational consistency, convergence criteria, format alignment. |

Experimental Protocol: High-Confidence Bioactivity Data Extraction from ChEMBL

- Query & Download: Execute SQL query on ChEMBL to select records for a specific target (e.g.,

CHEMBL240for EGFR). Filter bystandard_type('IC50', 'Ki'),standard_relation('='), anddata_validity_commentis NULL. - Standardization: Standardize molecules using RDKit (

Chem.MolFromSmiles,rdMolStandardize.Standardizer). Remove salts, neutralize charges, and generate canonical SMILES. - Thresholding: Apply an activity threshold (e.g.,

standard_value≤ 100 nM) to define "active" compounds. - Duplicate Resolution: Group by canonical SMILES. For duplicates, retain the median

standard_value. Report the coefficient of variation (CV) for duplicates; exclude entries with CV > 50%. - Assay Consistency Filter: Retain compounds only tested in assays with a consistent format (e.g., all

assay_type= 'B' for binding).

Quantifying and Addressing Dataset Bias

Bias arises from non-uniform sampling of chemical space and research trends.

Table 2: Common Biases in Molecular Training Data

| Bias Type | Quantitative Measure | Mitigation Strategy |

|---|---|---|

| Structural Bias | Distribution of molecular weight, logP, ring counts vs. a reference space (e.g., GDB-13). | Strategic undersampling of overrepresented clusters; augmentation with synthetic negatives. |

| Assay Bias | Over-representation of certain target families (e.g., kinases) vs. others (e.g., GPCRs). | Per-family stratification during train/test split; use of transfer learning from broad to specific sets. |

| Potency Bias | Skew towards highly potent compounds, lacking intermediate/inactive examples. | Explicit inclusion of confirmed inactive data from PubChem AID assays or generative negative sampling. |

| Publication Bias | Prevalence of "successful" hit-to-lead series, avoiding reported failures. | Incorporation of proprietary or crowdsourced negative data (e.g., USPTO rejections). |

Experimental Protocol: Measuring Structural Bias via Principal Component Analysis (PCA)

- Descriptor Calculation: For both the training dataset (Dtrain) and a broad reference set (Dref, e.g., a random sample from PubChem), compute a set of 200-dimensional molecular descriptors (e.g., RDKit fingerprints, or Mordred descriptors).

- PCA Projection: Concatenate descriptors from Dtrain and Dref. Apply StandardScaler, then fit PCA to reduce to 2 principal components (PCs). Transform both sets using the fitted PCA.

- Density Estimation: Perform Kernel Density Estimation (KDE) on the PC space for D_ref to estimate the probability density of the broader chemical space.

- Bias Quantification: For each molecule in Dtrain, calculate its log-likelihood under the KDE model of Dref. The average log-likelihood and its variance quantify how "atypical" the training set is. A significantly lower average indicates high bias.

- Visualization: Generate a 2D scatter plot colored by dataset origin, overlaid with contours from D_ref's KDE.

Title: Protocol for Quantifying Structural Dataset Bias

Chemical Space Coverage and Model Generalization

The ultimate goal is training data that enables extrapolation within a defined region of chemical space.

Title: Model Generalization Zones from Training Data

Table 3: Methods for Assessing Chemical Space Coverage

| Method | Input | Output Metric | Interpretation |

|---|---|---|---|

| t-SNE/UMAP Visualization | Molecular Fingerprints. | 2D/3D Map. | Qualitative cluster identification and gap detection. |

| Sphere Exclusion Clustering | Fingerprints, similarity cutoff (Tanimoto). | Number of clusters, members per cluster. | Quantitative measure of diversity; sparse coverage yields few, dense clusters. |

| PCA Coverage Ratio | Descriptors (as in Bias Protocol). | % of Dref's density contour containing Dtrain points. | Proportion of a defined reference space that is sampled. |

| Property Distribution Stats | MW, logP, HBD, HBA, etc. | Kolmogorov-Smirnov statistic vs. D_ref. | Statistical difference in key property distributions. |

Experimental Protocol: Sphere Exclusion for Training Set Diversity Analysis

- Fingerprint Generation: Encode all molecules in the candidate training set as ECFP4 fingerprints (radius=2, 1024 bits).

- Similarity Matrix: Calculate the pairwise Tanimoto similarity matrix.

- Clustering: Apply the Sphere Exclusion algorithm (max-min picking): a. Randomly select the first molecule as a cluster center. b. For all remaining molecules, calculate the minimum similarity to any existing cluster center. c. Select the molecule with the lowest maximum similarity (i.e., the most dissimilar) as the next center if its similarity is below a threshold (e.g., 0.5 Tanimoto). d. Repeat step b-c until no more molecules meet the criterion.

- Coverage Analysis: Assign all non-center molecules to the cluster of their most similar center. The number of clusters and the size distribution of clusters directly quantify the diversity and uniformity of coverage.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools for Data Curation and Analysis

| Tool / Reagent | Function / Purpose | Key Feature for Curation |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit. | SMILES standardization, descriptor/fingerprint calculation, substructure filtering, 2D depiction. |

| KNIME or Pipeline Pilot | Visual workflow automation platforms. | Orchestrating multi-step curation pipelines from source to cleaned dataset. |

| ChEMBL Web Resource Client / API | Programmatic access to ChEMBL data. | Automated querying and retrieval of large-scale bioactivity data with metadata. |

| Mordred Descriptor Calculator | Computes >1800 molecular descriptors. | Comprehensive chemical characterization for bias and coverage analysis. |

| scikit-learn | Python machine learning library. | Implementation of PCA, clustering, and statistical tests for data analysis. |

| Tanimoto Similarity | Metric for comparing molecular fingerprints. | Core metric for clustering, diversity selection, and similarity searching. |

| PAINS/Unwanted Substructure Filters | Rule-based sets (e.g., RDKit FilterCatalog). | Flagging compounds with potentially problematic reactivity or assay interference. |

Building and Applying Generative Models: From De Novo Design to Lead Optimization

Within the broader principles of generative AI for molecule generation, a central thesis posits that effective generative models must seamlessly integrate the dual objectives of novelty and targeted functionality. Conditional generation is the operational realization of this principle, moving beyond unconditional exploration to steer the molecular design process toward regions of chemical space defined by specific, desirable properties. This technical guide examines the architectures, training paradigms, and experimental protocols that enable this precise steering, with a focus on critical drug discovery parameters such as pIC50 (potency) and LogP (lipophilicity).

Foundational Architectures & Conditioning Mechanisms

Steering requires the model to learn ( P(Molecule | Property) ). Key architectural implementations include:

- Conditional Variational Autoencoders (CVAE): The property condition ( y ) (e.g., pIC50 > 6) is concatenated with the latent vector ( z ) and/or the encoder input. The decoder learns to reconstruct molecules given their latent representation and the target property.

- Conditional Generative Adversarial Networks (cGAN): The generator receives random noise concatenated with a conditioning vector ( y ). The discriminator evaluates both the realism of the molecule and its congruence with the condition.

- Transformer-based Language Models: Conditions are prepended as special tokens (e.g.,

[LogP<3]) to the SMILES or SELFIES string, enabling the model to learn the association between the token and the subsequent molecular sequence. - Graph-Based Models: Conditions are incorporated as global features that modulate the message-passing or graph refinement process.

The following diagram illustrates the core conditional generation workflow within a molecule generation framework.

Experimental Protocols for Model Training & Evaluation

Protocol: Training a Conditional RNN (cRNN) for LogP Optimization

Objective: Train a character-level RNN to generate valid SMILES strings conditioned on a specified LogP range.

Data Curation:

- Source: ChEMBL or ZINC database.

- Preprocessing: Standardize molecules, remove salts, and compute LogP using RDKit's

Crippenmodule. - Binning: Discretize LogP values into bins (e.g., <-1, -1 to 3, >3). Each bin is a conditioning label ( y ).

Model Architecture:

- An embedding layer for SMILES characters and the condition label.

- Two stacked LSTM layers (256 units each).

- A dense output layer with softmax over the character vocabulary.

Training Regime:

- Input Format:

[Condition_Token] + [SMILES_Characters]. - Loss: Categorical cross-entropy for next-character prediction.

- Optimizer: Adam (learning rate = 0.001).

- Validation: Monitor validity (RDKit parsability) and condition satisfaction (% of generated molecules falling within the target LogP bin) on a hold-out set.

- Input Format:

Protocol: Reinforcement Learning (RL) Fine-tuning for pIC50

Objective: Fine-tune a pre-trained unconditional generator to maximize predicted pIC50 against a target protein.

- Base Model: A pre-trained SMILES VAE or GPT model.

- Proxy Predictor: Train a separate feed-forward neural network on assay data to predict pIC50 from molecular fingerprints (ECFP4).

- RL Setup (Policy Gradient):

- Agent: The generative model.

- Action: Selecting the next token in a SMILES sequence.

- State: The current sequence of generated tokens.

- Reward ( R ): A composite reward function applied at the end of generation:

( R = R{valid} + R{novel} + R{pIC50} )

- ( R{valid} = +10 ) if SMILES is valid, else ( -5 ).

- ( R{novel} = +2 ) if molecule is not in training set.

- ( R{pIC50} = \alpha * (\text{predicted pIC50}) ), where ( \alpha ) is a scaling factor.

- Training Loop: Generate a batch of molecules, compute rewards, estimate policy gradient, and update the generator parameters to maximize expected reward.

Quantitative Performance Benchmarks

Recent studies highlight the performance of conditional models against baseline unconditional models. The data below is synthesized from recent literature.

Table 1: Benchmarking Conditional Generation Models on Guacamol and MOSES Datasets

| Model Architecture | Conditioning Property | Validity (%) ↑ | Uniqueness (%) ↑ | Condition Satisfaction (%) ↑ | Novelty (%) ↑ | Fitness (Composite) |

|---|---|---|---|---|---|---|

| Unconditional VAE (Baseline) | N/A | 94.2 | 99.1 | N/A | 80.5 | N/A |

| CVAE (MLP) | LogP | 95.5 | 98.7 | 73.4 | 78.9 | 0.72 |

| cRNN (LSTM) | pIC50 (bin) | 99.8 | 95.2 | 65.1 | 85.2 | 0.68 |

| cGANN (Graph) | QED, TPSA | 93.1 | 99.5 | 89.7 | 75.4 | 0.85 |

| Transformer (RL-tuned) | pIC50 (cont.) | 98.6 | 97.8 | 81.3 | 82.7 | 0.79 |

Table 2: Success Metrics in Prospective Studies (Generated -> Synthesized -> Tested)

| Study (Year) | Target | Generative Model | # Generated | # Synthesized | # Active (pIC50 ≥ 7) | Hit Rate (%) |

|---|---|---|---|---|---|---|

| Olivecrona et al. (2017) | DRD2 | RNN (RL) | 100 | 100 | 15 | 15% |

| Zhavoronkov et al. (2019) | DDR1 | cVAE/RL | 40 | 6 | 4 | 66.7% |

| Moret et al. (2023) | JAK2 | Transformer (Cond.) | 150 | 12 | 3 | 25% |

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Tools for Conditional Molecule Generation Research

| Item / Reagent | Function / Purpose | Example Source/Library |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule manipulation, descriptor calculation (LogP, TPSA), and validity checks. | https://www.rdkit.org |

| DeepChem | ML library for drug discovery. Provides standardized datasets, featurizers (GraphConv, ECPF), and model templates. | https://deepchem.io |

| Guacamol / MOSES | Standardized benchmarks and datasets for training and evaluating generative models. | GitHub Repositories |

| PyTorch / TensorFlow | Core deep learning frameworks for implementing and training conditional architectures (CVAE, GAN, Transformers). | PyTorch.org, TensorFlow.org |

| REINVENT | Specialized framework for RL-based molecular design, simplifying reward shaping and policy gradient implementation. | GitHub: REINVENT |

| ZINC / ChEMBL | Primary public sources for small molecule structures and associated bioactivity data (pIC50, Ki). | https://zinc.docking.org, https://www.ebi.ac.uk/chembl/ |

| Streamlit / Dash | For building interactive web apps to visualize and sample from conditional generative models. | https://streamlit.io |

| OMEGA & ROCS (Commercial) | Conformational generation and shape-based alignment for 3D-property conditioning or post-filtering. | OpenEye Toolkit |

Advanced Conditioning: Pathways and Multi-Objective Optimization

Complex objectives often require multi-parameter conditioning. The logical flow for multi-objective optimization is shown below.

Protocol for Pareto Optimization:

- Use a conditional model to generate an initial diverse set.

- Calculate key properties (Predicted pIC50, LogP, Synthetic Accessibility (SA) score) for each molecule.

- Apply a non-dominated sorting algorithm (e.g., NSGA-II) to identify the Pareto front—molecules where improving one property would worsen another.

- Use points on the Pareto front as conditioning targets for the next generative cycle or for final selection.

Conditional generation represents the critical translational step in the thesis of principled generative AI for molecules, bridging the gap between statistical learning and actionable design. While current methods successfully steer generation using simple properties, future work must address conditioning on complex 3D pharmacophores, predicted metabolic pathways, and multi-target selectivity profiles. The integration of these advanced conditioning signals will further solidify generative AI as a cornerstone of rational molecular design.

Scaffold Hopping and R-group Optimization with Recurrent Neural Networks (RNNs) and Graph Neural Networks (GNNs)

Within the broader thesis on the Principles of Generative AI for Molecule Generation Research, scaffold hopping and R-group optimization represent two critical, interrelated tasks for lead discovery and optimization in drug development. Scaffold hopping aims to discover novel molecular cores (scaffolds) that retain or improve desired biological activity while potentially altering properties like pharmacokinetics or patentability. Concurrently, R-group optimization systematically explores substitutions at specific molecular sites to fine-tune activity and selectivity. The advent of deep generative models, particularly Recurrent Neural Networks (RNNs) and Graph Neural Networks (GNNs), has provided powerful, data-driven paradigms for these tasks, moving beyond traditional library enumeration and virtual screening.

Foundational Models and Architectures

Recurrent Neural Networks (RNNs) for Molecular Generation

RNNs, especially Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) networks, process sequential data and are naturally suited for generating molecular string representations like SMILES (Simplified Molecular-Input Line-Entry System).

- Architecture: An encoder-decoder framework is commonly employed. The encoder RNN maps a SMILES string (or a scaffold) into a fixed-dimensional latent vector. The decoder RNN then generates a new SMILES string from this vector, conditioned on the desired properties or a different scaffold context.

- Application to Scaffold Hopping: The model can be trained to encode an active molecule's scaffold and decode a novel, structurally distinct scaffold while preserving the pharmacophoric pattern in the latent space.

- Application to R-group Optimization: The model can be conditioned on a specific molecular core (the scaffold with defined attachment points) and tasked with sequentially generating optimal R-groups as SMILES substrings.

Diagram: RNN-based Encoder-Decoder for Molecular Generation

Graph Neural Networks (GNNs) for Molecular Representation

GNNs operate directly on molecular graphs, where atoms are nodes and bonds are edges, inherently capturing topology and local chemical environments.

- Architecture: Message Passing Neural Networks (MPNNs) are a prevalent framework. In each layer, nodes aggregate feature vectors from their neighbors ("message passing"), update their own state, and finally, a readout function generates a graph-level (molecule) or subgraph-level (scaffold/R-group) representation.

- Application: GNNs excel at predicting molecular properties and learning meaningful, continuous embeddings of molecular substructures. For generative tasks, they are often paired with variational autoencoders (VAEs) or generative adversarial networks (GANs).

Diagram: Message Passing in a Graph Neural Network

Experimental Protocols & Methodologies

Protocol: Scaffold Hopping via Latent Space Interpolation with a Junction Tree VAE (JT-VAE)

The JT-VAE is a prominent GNN-based model that combines graph and tree representations for robust molecule generation.

- Data Preparation: Curate a dataset of known active molecules (e.g., from ChEMBL) against a specific target. Standardize molecules, remove duplicates, and identify Bemis-Murcko scaffolds.

- Model Training: Train a JT-VAE on a general molecular dataset (e.g., ZINC). The encoder uses a GNN to map a molecule to a latent vector

z. The decoder assembles a molecular graph via a predicted junction tree of scaffolds and subgraphs. - Latent Space Projection: Encode all active molecules from Step 1 into the trained JT-VAE's latent space.

- Scaffold Hop Generation:

- Calculate the centroid (

z_centroid) of the latent vectors of active molecules. - Perform principal component analysis (PCA) on the set of latent vectors. Perturb

z_centroidalong low-variance PCA components (directions of chemical novelty). - Decode the perturbed latent vectors (

z_centroid + Δz) to generate novel molecular structures.

- Calculate the centroid (

- Evaluation: Filter generated molecules for synthetic accessibility (SA), drug-likeness (QED). Dock top candidates in silico to the target and select for synthesis and in vitro testing.

Protocol: R-group Optimization using a Recurrent Conditional Chemical Graph Generator (RCG-G)

This protocol uses an RNN conditioned on a graph context.

- Define Core and Attachment Points: Select a candidate scaffold with one or more defined R-group attachment points (e.g., marked with

[*]). - Model Setup: Employ a conditional graph-to-sequence model. A GNN encoder creates a representation of the core scaffold. An RNN decoder, initialized with this graph representation, generates SMILES strings for the R-group, one token at a time.

- Training: Train the model on a dataset of core-R-group pairs, where the objective is to predict the R-group SMILES given the core graph and desired property constraints (e.g., high logP, low toxicity).

- Optimization & Library Generation: For a new core, sample from the decoder RNN under conditional constraints (e.g., using beam search) to produce a focused virtual library of R-group replacements.

- Screening: Score the generated core-R-group combinations using a predictive activity model (e.g., a Random Forest or a separate GNN classifier) and select the top candidates for experimental validation.

Diagram: Integrated Generative AI Workflow for Lead Optimization

Quantitative Performance Data

Table 1: Comparative Performance of Generative Models on Benchmark Tasks

| Model Class | Model Name | Primary Task | Benchmark Metric (e.g., Vina Dock Score) | Success Rate (%) | Novelty (%) | Reference (Example) |

|---|---|---|---|---|---|---|

| RNN-based | Organ (RL-based) | Scaffold Hopping for DRD2 | Docking Score Improvement vs. Seed | 65% | >80% | Olivecrona et al., 2017 |

| GNN-based | JT-VAE | Constrained Molecule Generation | Reconstruction Accuracy | 76% | N/A | Jin et al., 2018 |

| GNN-based | G-SchNet | R-group/Scaffold Generation | Property Optimization (QED, SA) | -- | High | G-SchNet, 2019 |

| Hybrid | GraphINVENT | Library Generation | FCD Distance to Training Set | Low (Desired) | High | GraphINVENT, 2020 |

Table 2: Typical Computational Requirements for Training Generative Models

| Model | Dataset Size (Molecules) | Training Time (GPU Hours) | Latent Space Dimension | Typical Library Generation Size |

|---|---|---|---|---|

| SMILES LSTM-VAE | 250,000 | 24-48 (NVIDIA V100) | 128 | 10,000 - 100,000 |

| JT-VAE | 250,000 | 48-72 (NVIDIA V100) | 56 | 10,000 - 100,000 |

| Conditional RNN (R-group) | 50,000 (core-R-group pairs) | 12-24 (NVIDIA V100) | 256 (context) | 1,000 - 10,000 per core |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Resources

| Item Name | Category | Function/Brief Explanation |

|---|---|---|

| RDKit | Cheminformatics Library | Open-source toolkit for molecule manipulation, fingerprinting, descriptor calculation, and scaffold analysis. Fundamental for data preprocessing. |

| PyTor / TensorFlow | Deep Learning Framework | Flexible libraries for building and training custom RNN and GNN architectures. |

| PyTorch Geometric (PyG) / DGL | GNN Library | Specialized libraries built on top of PyTorch/TF that provide efficient implementations of GNN layers and message passing. |

| Junction Tree VAE Code | Model Implementation | Reference implementation of the JT-VAE model, often used as a baseline for scaffold hopping research. |

| ZINC / ChEMBL | Molecular Databases | Large, publicly available databases of purchasable compounds (ZINC) and bioactive molecules (ChEMBL) for training and benchmarking. |

| SMILES Enumeration Tool | Utility | Software for systematically generating SMILES strings from a core with defined attachment points (R-group enumeration). |

| AutoDock Vina / Gnina | Molecular Docking | Software for predicting binding poses and affinity of generated molecules to a protein target, a key validation step. |

| SA Score Predictor | Filtering Tool | Algorithm to estimate the synthetic accessibility of a generated molecule, crucial for prioritizing plausible candidates. |

Fragment-Based Generation and Linker Design Strategies

Within the broader thesis on the principles of generative AI for molecule generation, this work addresses the critical paradigm of constructing novel molecules from validated structural fragments. This approach, inspired by fragment-based drug discovery (FBDD), leverages generative AI to intelligently assemble and link chemical fragments, thereby navigating chemical space more efficiently than whole-molecule generation. It combines the robustness of known pharmacophores with the exploratory power of deep learning to accelerate the design of drug-like candidates with optimized properties.

Core Methodological Frameworks

Generative models for fragment-based design typically operate in a multi-step process: 1) Fragment library creation and embedding, 2) Fragment selection or generation, 3) Linker design and assembly, and 4) Property-constrained optimization.

Key Models and Architectures:

- Graph-Based Generative Models (GVAE, GCPN): Treat molecules as graphs where atoms are nodes and bonds are edges. They are extended to handle fragment nodes.

- Transformers & Sequence-Based Models: SMILES strings or SELFIES representations are adapted to include fragment tokens and linker connection points.

- Reinforcement Learning (RL): Used to optimize generated molecules for specific properties (e.g., QED, Synthesizability, binding affinity predictions) post-generation.

- 3D-Contextual Models (DeepFrag, CONFIRM): Utilize 3D protein-ligand interaction information to suggest optimal fragment extensions or linkers.

Quantitative Performance Data

Recent benchmarks highlight the performance of fragment-based generative models versus de novo generation.

Table 1: Benchmarking Fragment-Based vs. De Novo Generative Models

| Model / Framework | Approach | Validty (%) | Uniqueness (%) | Novelty (%) | Synthetic Accessibility (SA Score) | Runtime (s/molecule)* | Key Metric (F1/BCR) |

|---|---|---|---|---|---|---|---|

| GCPN (De Novo) | Graph Completion | 98.5 | 99.8 | 80.1 | 3.2 | ~0.5 | N/A |

| Frag-GVAE | Fragment Assembly | 99.8 | 95.4 | 65.3 | 2.8 | ~0.2 | BCR: 0.72 |

| REINVENT-Frag | RL + Fragment Library | 99.2 | 88.7 | 85.6 | 3.1 | ~1.1 | F1: 0.89 |

| 3DLinker | 3D Conditional Linker | 94.7 | 99.9 | 78.9 | 3.5 | ~3.5 | BCR: 0.81 |

*Approximate average generation time per molecule on standard GPU. BCR: Bemis-Murcko Scaffold Recovery Rate. F1: F1-Score for desired property profile.

Table 2: Impact of Linker Length on Molecular Properties

| Linker Heavy Atom Count | Avg. cLogP | Avg. TPSA (Ų) | % Compounds Passing Ro5 | Avg. Binding Affinity ΔΔG (kcal/mol)* |

|---|---|---|---|---|

| 2-4 | 2.1 | 75 | 92% | -0.5 |

| 5-7 | 2.8 | 95 | 78% | -1.2 |

| 8-10 | 3.5 | 110 | 45% | -0.9 |

| >10 | 4.2 | 130 | 12% | -0.7 |

*Simulated ΔΔG improvement versus initial fragment; negative is better.

Experimental Protocols

Protocol 1: In Silico Fragment-Based Library Generation with a GVAE Objective: To generate a novel, property-optimized chemical library from a curated fragment dataset.

- Fragment Library Curation: Prepare a library of 5,000 validated fragments (MW < 250 Da, heavy atoms ≤ 18). Annotate each fragment with connection vectors (attachment points).

- Data Representation: Convert each fragment to a graph representation with node features (atom type, hybridization) and mark attachment nodes.

- Model Training: Train a Graph Variational Autoencoder (GVAE) on the fragment graphs. The latent space

zencodes fragment structures. - Sampling & Decoding: Sample latent vectors

zfrom a prior distribution (or interpolate between known fragments) and decode them into novel fragment structures using the decoder network. - Linker Proposal: Use a conditional RNN model that takes two fragment embeddings as input and generates a SMILES string for a linker that bridges the predefined attachment points.

- Assembly & Validation: Assemble the full molecule via covalent bonding at attachment points. Validate chemical correctness using RDKit and filter based on calculated properties (cLogP, MW, SA Score).

- Evaluation: Assess the library for validity, uniqueness, and scaffold diversity using the metrics in Table 1.

Protocol 2: Reinforcement Learning (RL) for Linker Optimization Objective: To optimize a linker connecting two fixed fragments for maximal predicted binding affinity.

- Environment Setup: Define the state

s_tas the current (partial) linker SMILES. The actiona_tis the next token to add (atom or bond). - Agent Model: Initialize a Transformer-based policy network

π(a_t | s_t). - Reward Function: Design a composite reward R = Rvalidity + Rsimilarity + R_property.

R_validity: +1 if the final molecule is chemically valid.R_similarity: Score based on Tanimoto similarity to a desired property profile.R_property: Predicted pIC50 or -ΔG from a pre-trained docking surrogate model (e.g., a Random Forest or CNN model).

- Training Loop: Use Proximal Policy Optimization (PPO). The agent generates linkers, receives a reward, and updates its policy to maximize expected cumulative reward.

- Post-Processing: Select top-scoring molecules for in silico docking studies using AutoDock Vina or Glide.

Visualizations

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Datasets for Fragment-Based AI Generation

| Item / Resource | Category | Function & Explanation |

|---|---|---|

| RDKit | Cheminformatics Library | Open-source toolkit for molecule manipulation, descriptor calculation, and SMILES validation. Core for preprocessing and post-processing. |

| ZINC Fragment Library | Fragment Dataset | A curated, commercially available set of small, diverse molecular fragments with defined attachment points for virtual screening. |

| DeepChem | ML Library | Provides high-level APIs for building graph neural networks and pipelines on chemical data, useful for model prototyping. |

| PyTor-Geometric (PyG) | Graph ML Library | Efficient library for implementing Graph Neural Networks (GNNs) essential for fragment and molecule graph processing. |

| AutoDock Vina / Gnina | Docking Software | For in silico evaluation of generated molecules' binding poses and affinities, providing critical feedback for RL reward functions. |

| REINVENT / MolPal | Generative AI Framework | Specialized platforms for RL-based de novo molecular generation, adaptable to fragment-based strategies. |

| ChEMBL / PubChem | Bioactivity Database | Source of known molecules and associated bioactivity data for training predictive models and validating novelty. |

| Synthetic Accessibility (SA) Score | Computational Filter | A score estimating the ease of synthesizing a generated molecule, crucial for prioritizing realistic candidates. |

Reinforcement Learning for Multi-Objective Optimization (Potency, ADMET, Synthesizability)

Within the broader thesis on Principles of Generative AI for Molecule Generation Research, a central challenge is the de novo design of molecules that simultaneously optimize multiple, often competing, objectives. Reinforcement Learning (RL) has emerged as a powerful paradigm to navigate this high-dimensional chemical space. Unlike single-property optimization, the simultaneous pursuit of Potency (e.g., binding affinity, biological activity), favorable ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) profiles, and Synthesizability (ease and cost of chemical synthesis) represents a true multi-objective optimization (MOO) problem. This technical guide details the core RL frameworks, experimental protocols, and computational toolkits driving advances in this field.

Core RL Frameworks for Molecular MOO

RL formulates molecule generation as a sequential decision process: an agent (generator) constructs a molecule step-by-step (e.g., adding atoms or bonds) within an environment. The environment provides rewards based on multiple property calculators. Key frameworks include:

- Multi-Objective Policy Gradient (e.g., PG-MO): Extends policy gradient methods (REINFORCE, PPO) by combining multiple reward signals into a scalarized reward ( R{total} = \sumi wi ri ), where ( w_i ) are tunable weights for potency, ADMET, and synthesizability scores.

- Multi-Objective Deep Q-Learning (MO-DQN): Utilizes a Q-network to estimate the value of actions, with the reward function designed as a linear or non-linear combination of multiple objectives. Pareto-frontier sampling techniques can be integrated.

- Conditional RL: The desired trade-off between objectives is provided as a condition or goal vector to the policy, enabling the generation of molecules along a Pareto front.

- Adversarial & Evolutionary RL: Combines RL with Generative Adversarial Networks (GANs) or genetic algorithms to refine multi-objective policies through competition or population-based optimization.

Quantitative Comparison of RL Frameworks for Molecular MOO

| Framework | Core Algorithm | Multi-Objective Handling | Sample Efficiency | Known for Generating Molecules with... | Key Challenge |

|---|---|---|---|---|---|

| PG-MO | Policy Gradient (PPO/REINFORCE) | Scalarized Reward (( R = w1*Pot + w2ADMET + w_3Syn )) | Moderate | High potency, but variable ADMET | Sensitive to weight tuning; may converge to sub-optimum. |

| MO-DQN | Deep Q-Learning | Vector Reward → Scalar via Chebyshev or Linear Scalarization | Low | Good diversity on Pareto front | High instability; requires careful replay buffer management. |

| Conditional RL | Any (PPO, DQN) | Conditioning vector on policy network | High | Precise trade-off control (on-demand) | Requires predefined and accurate conditioning space. |

| Adversarial RL | RL + GAN | Discriminator rewards "ideal" multi-property profile | Very Low | High realism and synthetic accessibility | Mode collapse; difficult training dynamics. |

Detailed Experimental Protocol: A Standardized RL Pipeline for Molecule Generation

The following protocol outlines a benchmark multi-objective RL experiment for de novo molecule design.

A. Objective Definition & Reward Shaping

- Potency Proxy: Use a pre-trained predictive model (e.g., a Graph Neural Network) on relevant bioassay data (e.g., pIC50 for a target). Reward ( Rp = \text{sigmoid}(pIC50{pred} - \text{threshold}) ).

- ADMET Proxy: Utilize a suite of QSAR models from platforms like ADMETLab 2.0. Calculate a composite score: ( Ra = \frac{1}{N}\sumi Si ), where ( Si ) are normalized scores for Caco-2 permeability, CYP450 inhibition, hERG toxicity, etc.

- Synthesizability Proxy: Apply the Synthetic Accessibility (SA) Score (based on fragment contributions and complexity penalties) or the RAscore (retrosynthetic accessibility) from AiZynthFinder. Reward ( R_s = 1 - \text{normalize}(SA\ score) ).

- Final Scalarized Reward: ( R{total} = \alpha Rp + \beta Ra + \gamma Rs ), with ( \alpha + \beta + \gamma = 1 ).

B. Agent & Environment Setup

- Action Space: Define a vocabulary of chemically valid actions (e.g., add atom/bond, terminate) in a graph-based environment (e.g., MolGraph-Env).

- State Representation: Represent the intermediate molecular graph as a set of node (atom) and edge (bond) feature vectors.

- Policy Network: Implement a Graph Neural Network (GNN) or a Transformer architecture that maps the state to a probability distribution over actions.

C. Training Loop

- Initialization: Initialize policy network parameters θ randomly.

- Rollout: For N episodes, the agent interacts with the environment, generating a trajectory τ = (s₀, a₀, r₀, ..., s_T) of states, actions, and rewards until termination.

- Gradient Calculation: Compute the policy gradient to maximize expected reward. For REINFORCE: ( \nablaθ J(θ) ≈ \frac{1}{N} \sum{n=1}^N \sum{t=0}^{Tn} \nablaθ \log \piθ(at^n | st^n) (R_{total}^n - b) ), where b is a baseline (e.g., moving average reward) for variance reduction.

- Parameter Update: Update θ using gradient ascent (e.g., Adam optimizer).

- Validation: Every K iterations, evaluate the top 100 generated molecules (by reward) using docking simulations (for potency) and retrosynthesis analysis (for synthesizability). Monitor the Pareto front evolution.

D. Evaluation Metrics

- Multi-Objective Performance: Hypervolume Indicator (HV) of the generated molecule set in the 3D objective space (Potency, ADMET, Synthesizability).

- Diversity: Internal diversity (average pairwise Tanimoto dissimilarity) of the top 100 molecules.

- Novelty: Fraction of generated molecules not present in the training data (e.g., ZINC database).

- Pareto Front: Visualize the trade-off surface between the three objectives.

Workflow and Pathway Visualization

Title: RL Training Loop for Multi-Objective Molecule Generation

The Scientist's Toolkit: Essential Research Reagents & Solutions

| Item / Solution | Function in RL for Molecular MOO | Example / Implementation |

|---|---|---|

| Molecular Simulation Environment | Provides the "gym" for agent interaction, defines state/action space, and enforces chemical validity. | MolGraph-Env, ChEMBL-RL, Gym-Molecule |

| Property Prediction Models | Serve as the reward function proxies for Potency and ADMET. Must be fast and accurate for online evaluation. | Random Forest/CNN/GNN QSAR models, ADMETLab 2.0, pkCSM, Chemprop |

| Synthesizability Scorer | Critical reward component to ensure practical utility of generated molecules. | SA Score, RAscore, AiZynthFinder, ASKCOS, Retro |

| Policy Network Architecture | The core "brain" of the agent that learns the generation strategy. | Graph Neural Network (GNN), Transformer, Message Passing Neural Network (MPNN) |

| RL Algorithm Library | Provides tested, optimized implementations of core RL algorithms. | Stable-Baselines3, Ray RLlib, TF-Agents, Custom PPO/REINFORCE |

| Chemical Database | Source of prior knowledge for pre-training, benchmarking, and novelty assessment. | ZINC, ChEMBL, PubChem, DrugBank |

| Docking Software | Validation tool for computationally assessing binding affinity (potency) of generated hits. | AutoDock Vina, Glide, GOLD |

| Retrosynthesis Planner | Validation tool for in-depth analysis of synthetic routes and cost. | AiZynthFinder, ASKCOS, IBM RXN, Spaya |

This document presents a technical analysis of generative AI applications in therapeutic discovery, framed within the core principles of AI-driven molecule generation research. The field leverages deep generative models to explore the vast chemical and biological space, aiming to accelerate the discovery of novel small molecules and protein-based therapeutics with desired properties.

Generative AI Principles in Therapeutic Design

The foundational thesis for applying generative AI in this domain rests on several principles: learning from high-dimensional probability distributions of known molecules, enabling de novo design through sampling, conditioning generation on specific properties (e.g., binding affinity, solubility), and iterative optimization via closed-loop experimentation.

Case Study 1: Small Molecule Drug Discovery

A prominent case involves using a Chemical Variational Autoencoder (VAE) to generate novel inhibitors for the Dopamine D2 Receptor (DRD2), a target for neurological disorders.

Experimental Protocol: DRD2 Inhibitor Generation

- Data Curation: A dataset of 1.4 million known drug-like molecules from ChEMBL was pre-processed using RDKit. SMILES strings were canonicalized and invalid structures were removed.

- Model Architecture: A VAE with a bidirectional GRU encoder and GRU decoder was implemented. The latent space dimension was set to 196.

- Training: The model was trained to reconstruct input SMILES strings, learning a continuous latent representation of chemical space.

- Conditioned Generation: A property predictor neural network (trained on a separate labeled dataset) was used to map latent vectors to predicted DRD2 activity. Latent space vectors were optimized using gradient ascent to maximize predicted activity.

- Sampling & Filtering: New latent vectors were decoded into molecular structures. Outputs were filtered for synthetic accessibility (SA Score < 4.5) and drug-likeness (QED > 0.5).

- Experimental Validation: Top-ranked generated molecules were synthesized and tested in vitro for DRD2 binding affinity (Ki).

Quantitative Results

Table 1: Performance Metrics of Generative AI for DRD2 Inhibitors

| Metric | Model Performance | Traditional Virtual Screening (Baseline) |

|---|---|---|

| Novelty (Tanimoto < 0.4) | 85% | 10% |

| Synthetic Accessibility (SA Score) | 3.2 (mean) | 3.5 (mean) |

| Hit Rate (Ki < 10 μM) | 12% | 1.5% |

| Best Compound Ki | 4.2 nM | 8.7 nM |

| Number Generated | 5,000 | 50,000 (from library) |

Title: Small Molecule AI Generation Workflow

Case Study 2: Protein Therapeutics Design

A key case study employs a Protein Language Model (pLM) fine-tuned with Reinforcement Learning (RL) to design novel broadly neutralizing antibodies (bnAbs) against a conserved influenza hemagglutinin epitope.

Experimental Protocol: De Novo Antibody Design

- Sequence Embedding: A pre-trained transformer pLM (e.g., ESM-2) was used to generate embeddings for 500,000 antibody heavy-chain variable region (VH) sequences.

- Fine-Tuning Dataset: A curated dataset of 1,200 known anti-influenza bnAb VH sequences was used for supervised fine-tuning of the pLM's output layers.

- Reinforcement Learning Loop:

- Actor: The fine-tuned pLM served as the policy network (actor) generating new sequences.