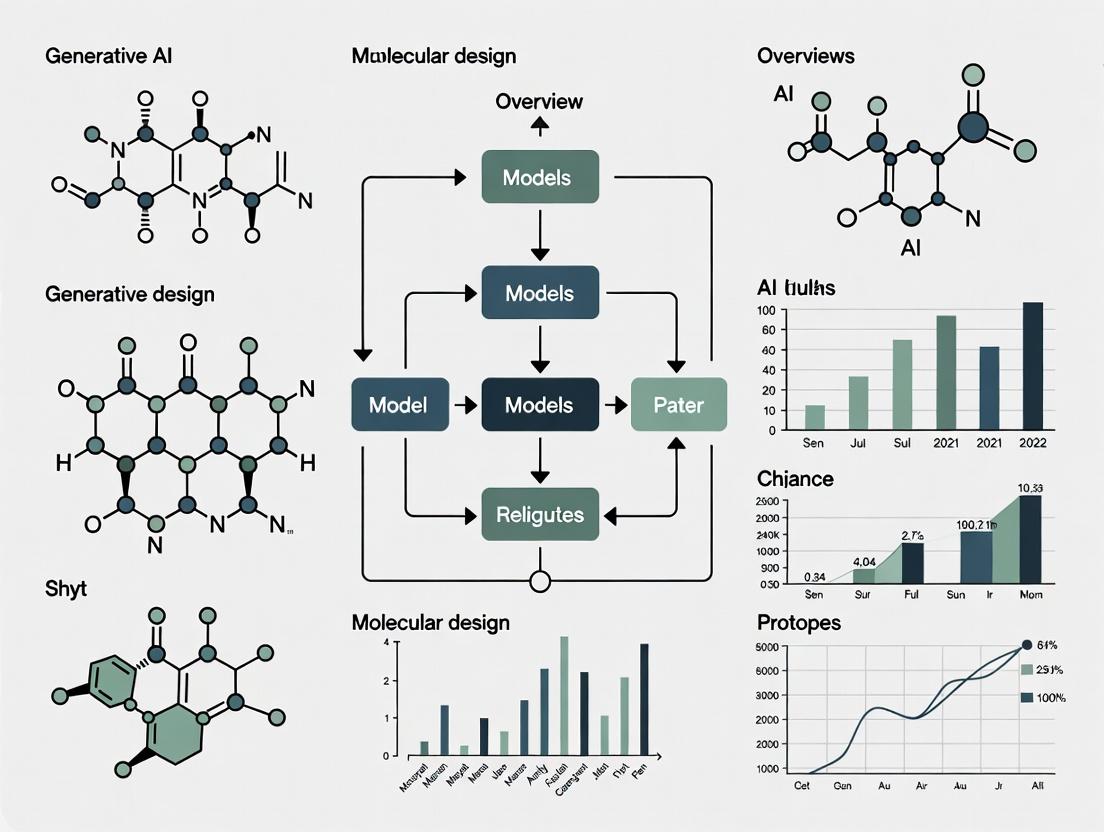

Generative AI for Drug Discovery: Models, Methods, and Real-World Applications in Molecular Design



Abstract

This comprehensive guide provides researchers, scientists, and drug development professionals with an in-depth analysis of generative AI models for molecular design. We explore the foundational concepts, from discriminative vs. generative models and key architectural paradigms, to the practical methodologies and cutting-edge applications in de novo drug design, scaffold hopping, and property optimization. The article addresses critical troubleshooting challenges, including mode collapse and synthetic accessibility, and offers optimization strategies. Finally, we establish a rigorous framework for model validation and comparative analysis, benchmarking performance across major platforms to equip professionals with the knowledge to select and implement these transformative technologies effectively.

What is Generative AI for Molecules? Core Concepts and Model Architectures Explained

This section, part of an overview of generative AI models for molecular design research, provides a technical definition and framework for generative artificial intelligence (AI) in chemistry. Discriminative models classify or predict properties of known molecules, so they can only evaluate candidates that already exist or have been enumerated. Generative AI goes further by learning the underlying probability distribution of chemical structures and sampling novel, plausible molecules with desired properties, enabling de novo molecular design.

Core Conceptual Distinction: Generative vs. Discriminative

Discriminative Models learn the conditional probability P(property | structure), mapping inputs to labels or continuous values (e.g., predicting toxicity from a SMILES string).

Generative Models learn the joint probability P(structure, property), enabling sampling of new molecular structures (SMILES, graphs, 3D coordinates) from P(structure | desired property).
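The link between the two views follows from the product rule. As a short worked derivation, writing X for the structure and Y for the property (the symbol y for the desired property value is introduced here for illustration):

```latex
P(X, Y) = P(Y \mid X)\, P(X),
\qquad
P(X \mid Y = y) = \frac{P(X, Y = y)}{P(Y = y)}
                = \frac{P(Y = y \mid X)\, P(X)}{\sum_{X'} P(Y = y \mid X')\, P(X')}
```

Read this way, a discriminative model supplies only the factor P(Y | X), while a generative model must also capture P(X), the distribution over structures themselves.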

Table 1: Comparative Overview of Model Types in Chemical AI

| Aspect | Discriminative Models | Generative Models |

|---|---|---|

| Primary Objective | Predict property/class for a given molecule. | Create novel molecules with target properties. |

| Probability Learned | P(Y|X) (Conditional). | P(X, Y) (Joint). |

| Output | Label, score, or value. | Novel molecular representation (e.g., SMILES, graph). |

| Chemical Context Role | Virtual screening, QSAR, property optimization. | De novo design, library expansion, scaffold hopping. |

| Example Architectures | Random Forest, CNNs on graphs, Feed-forward NNs. | VAEs, GANs, Normalizing Flows, Autoregressive Models (RNN, Transformer). |

Foundational Generative Architectures in Chemistry

Variational Autoencoders (VAEs)

A VAE consists of an encoder network that maps a molecule to a latent vector z in a continuous, structured space, and a decoder that reconstructs the molecule from z. The latent space is regularized to be approximately a standard normal distribution, enabling smooth interpolation and sampling.

Table 2: Quantitative Performance of Molecular VAEs (Representative Studies)

| Model / Study | Dataset | Validity (Generated) | Uniqueness | Reconstruction Accuracy | Key Metric Reported |

|---|---|---|---|---|---|

| Grammar VAE (Kusner et al., 2017) | ZINC (250k) | 60.2% | 99.9% | 76.2% | % Valid SMILES |

| JT-VAE (Jin et al., 2018) | ZINC (250k) | 100%* | 99.9% | 76.7% | % Decodable Latents |

| Graph VAE (Simonovsky et al., 2018) | QM9 | 95.5% | 100% | 61.6% | Property Prediction MSE |

*JT-VAE uses a junction tree decoder guaranteeing molecular validity.

Generative Adversarial Networks (GANs)

A generator network creates molecular representations, while a discriminator network tries to distinguish them from real molecules. Adversarial training pushes the generator to produce increasingly realistic molecules.

Autoregressive Models (RNNs, Transformers)

These models generate molecular strings (SMILES, SELFIES) or graphs sequentially, predicting the next token/atom conditioned on all previous ones. They excel at capturing complex, long-range dependencies.

Table 3: Benchmarking Autoregressive Molecular Generators

| Model | Architecture | Training Data | Validity | Novelty | Diversity (Intra-set Tanimoto) |

|---|---|---|---|---|---|

| Character-based RNN (Olivecrona et al., 2017) | LSTM | ChEMBL (~1.4M) | 91.0% | 99.5% | 0.91 |

| Molecular Transformer (Tetko et al., 2020) | Transformer | USPTO (1M rxns) | 97.0%* | N/A | N/A |

| Chemformer (Irwin et al., 2022) | Transformer | ZINC & ChEMBL | 98.6% | 99.8% | 0.94 |

*For reaction product prediction.

Experimental Protocols for Generative Model Evaluation

Protocol: Benchmarking a Novel Molecular Generator

Objective: Quantitatively evaluate the performance of a new generative model against established baselines.

Materials: Standard dataset (e.g., ZINC250k, QM9), computational environment (Python, RDKit, PyTorch/TensorFlow), GPU resources.

Procedure:

- Data Preprocessing: Standardize molecules, remove duplicates, and split into training/validation/test sets (e.g., 80%/10%/10%).

- Model Training: Train the generative model on the training set. For conditional generation, incorporate property labels.

- Sampling: Generate a large set of molecules (e.g., 10,000) from the trained model.

- Metric Calculation:

  - Validity: Percentage of generated strings/graphs that correspond to chemically valid molecules (checked via RDKit).

  - Uniqueness: Percentage of valid molecules that are not exact duplicates within the generated set.

  - Novelty: Percentage of valid, unique molecules not present in the training set.

  - Diversity: Average pairwise Tanimoto dissimilarity (1 - similarity) based on Morgan fingerprints among generated molecules.

  - Fréchet ChemNet Distance (FCD): Statistical similarity between the generated set and a reference set (e.g., test set), measured using activations from a pre-trained ChemNet.

- Conditional Generation (if applicable): Generate molecules for a specific property profile (e.g., logP ~ 3, QED > 0.6). Evaluate the success rate (percentage meeting criteria) and property distribution vs. target.
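The validity, uniqueness, novelty, and diversity calculations in this protocol can be sketched in a few lines. This is a minimal illustration, not a reference implementation: `is_valid` and `fingerprint` are hypothetical callables supplied by the caller (in practice they would wrap RDKit parsing and Morgan fingerprints, as the protocol states), and the toy stand-ins at the bottom exist only so the sketch runs without any cheminformatics dependency.

```python
from itertools import combinations


def tanimoto(fp_a, fp_b):
    """Tanimoto similarity of two fingerprints stored as sets of on-bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 1.0


def generation_metrics(generated, training_set, is_valid, fingerprint):
    """Compute validity, uniqueness, novelty, and diversity as fractions in [0, 1]."""
    valid = [m for m in generated if is_valid(m)]
    unique = set(valid)
    novel = unique - set(training_set)
    # Diversity: mean pairwise Tanimoto dissimilarity over the unique valid molecules.
    fps = [fingerprint(m) for m in sorted(unique)]
    pairs = list(combinations(fps, 2))
    diversity = sum(1.0 - tanimoto(a, b) for a, b in pairs) / len(pairs) if pairs else 0.0
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
        "diversity": diversity,
    }


# Toy stand-ins (assumptions for illustration only, not real chemistry checks).
def toy_is_valid(smiles):
    return smiles.count("(") == smiles.count(")")


def toy_fingerprint(smiles):
    return set(smiles)  # the set of characters stands in for fingerprint bits


metrics = generation_metrics(
    ["CCO", "CCO", "CCN", "C(C", "CCC"], ["CCO"], toy_is_valid, toy_fingerprint
)
```

Note the denominators: uniqueness is measured over valid molecules and novelty over unique valid molecules, matching the definitions in the protocol.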

Protocol: Latent Space Interpolation and Property Prediction

Objective: Validate the smoothness and structure of a VAE's latent space.

Procedure:

- Encode two distinct, valid molecules (A and B) into latent vectors z_A and z_B.

- Linearly interpolate between them: z_i = α * z_A + (1-α) * z_B, for α in [0, 1] in small steps.

- Decode each z_i to generate a molecule.

- Analyze: a) Validity of all intermediates, b) Smooth change in molecular properties (e.g., molecular weight, logP), c) Chemical intuitiveness of the transformation.
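The interpolation step can be sketched as follows. The function name and the choice of evenly spaced α values are assumptions for illustration; encoding and decoding are left to whatever VAE is being studied.

```python
def interpolate_latents(z_a, z_b, n_steps=10):
    """Return n_steps + 1 latent vectors along the line between z_b and z_a.

    Each point is alpha * z_a + (1 - alpha) * z_b with alpha = 0, 1/n, ..., 1,
    so the path starts at z_b (alpha = 0) and ends at z_a (alpha = 1).
    """
    if n_steps < 1:
        raise ValueError("n_steps must be at least 1")
    if len(z_a) != len(z_b):
        raise ValueError("latent vectors must have the same dimension")
    path = []
    for k in range(n_steps + 1):
        alpha = k / n_steps
        path.append([alpha * a + (1.0 - alpha) * b for a, b in zip(z_a, z_b)])
    return path


# Example with two-dimensional latent vectors.
path = interpolate_latents([0.0, 2.0], [4.0, -2.0], n_steps=4)
```

Each vector in `path` would then be passed to the decoder, and the decoded molecules checked for validity and property smoothness as described in the final step.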

Visualization of Core Concepts

Title: Generative vs Discriminative Learning Pathways

Title: Molecular Variational Autoencoder (VAE) Architecture

The Scientist's Toolkit: Key Reagent Solutions

Table 4: Essential Computational Tools for Generative Molecular AI Research

| Tool/Resource | Type | Primary Function in Generative Chemistry |

|---|---|---|

| RDKit | Open-source Cheminformatics Library | Molecule standardization, fingerprint generation, validity checking, descriptor calculation, and visualization. |

| PyTorch / TensorFlow | Deep Learning Frameworks | Provides the flexible infrastructure for building, training, and deploying complex generative neural networks. |

| DeepChem | ML Library for Chemistry | Offers high-level APIs and pre-built layers for molecular featurization and model development, streamlining workflows. |

| SELFIES | Molecular Representation | A robust string-based representation (alternative to SMILES) where every string is guaranteed to be syntactically valid, improving generation validity rates. |

| GuacaMol / MOSES | Benchmarking Suites | Standardized frameworks and datasets for quantitatively evaluating and comparing the performance of generative models. |

| Psi4 / Gaussian | Quantum Chemistry Software | Calculate high-fidelity electronic structure properties for training or validating generative models on small-molecule quantum datasets (e.g., QM9). |

| PyMOL / ChimeraX | Molecular Visualization | Critical for visually inspecting and analyzing the 3D structures of generated molecules, especially for protein-ligand docking studies. |

This technical guide provides an in-depth analysis of four core architectural paradigms in generative AI, contextualized for their application in molecular design research. We examine the underlying principles, technical implementations, and quantitative performance of Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), Transformers, and Diffusion Models, with a focus on de novo molecule generation, property optimization, and synthetic pathway planning for drug discovery.

In molecular design, generative AI models address the vast combinatorial complexity of chemical space, estimated to contain >10⁶⁰ synthesizable molecules. These paradigms enable the exploration of novel molecular structures with desired properties, accelerating the early stages of drug development.

Core Paradigms: Architectures and Mechanisms

Variational Autoencoders (VAEs)

VAEs learn a latent, continuous, and structured representation of input data. In molecular design, they encode molecular graphs or SMILES strings into a latent distribution, typically a Gaussian, from which new structures are decoded.

Key Experimental Protocol (Molecular VAE):

- Representation: SMILES strings are tokenized and one-hot encoded.

- Encoder: A recurrent neural network (RNN) or graph neural network (GNN) processes the input to produce parameters (μ, σ) of a multivariate Gaussian.

- Latent Sampling: A latent vector z is sampled: z = μ + σ ⋅ ε, where ε ~ N(0, I).

- Decoder: A second RNN decodes z sequentially to reconstruct the SMILES string.

- Loss: Combination of reconstruction loss (cross-entropy) and Kullback–Leibler (KL) divergence loss to regularize the latent space: L = L_recon + β * L_KL.
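The sampling and loss steps above can be made concrete with a small, framework-free sketch. It assumes a diagonal Gaussian posterior and an encoder that outputs the log-variance (log σ²) rather than σ directly, which is a common convention but not something the protocol specifies; all function names are illustrative.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, I), where sigma = exp(0.5 * log_var)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """Closed-form KL( N(mu, diag(sigma^2)) || N(0, I) ), summed over latent dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - lv - 1.0 for m, lv in zip(mu, log_var))


def reconstruction_loss(token_probs, target_ids):
    """Cross-entropy: sum over positions of -log p(correct token)."""
    return -sum(math.log(probs[t]) for probs, t in zip(token_probs, target_ids))


def vae_loss(token_probs, target_ids, mu, log_var, beta=1.0):
    """L = L_recon + beta * L_KL."""
    return reconstruction_loss(token_probs, target_ids) + beta * kl_to_standard_normal(mu, log_var)


# With a near-zero variance the sample collapses onto mu.
z_demo = reparameterize([1.0, -1.0], [-100.0, -100.0], random.Random(0))
```

Setting β above 1 weights the regularizer more heavily, and values below 1 favor reconstruction.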

Generative Adversarial Networks (GANs)

GANs frame generation as an adversarial game between a generator (G) and a discriminator (D). For molecules, G maps noise to molecular representations, while D distinguishes generated molecules from real ones.

Key Experimental Protocol (OrganicGAN):

- Generator: An RNN or multilayer perceptron (MLP) maps random noise to a sequence of molecular tokens (SMILES).

- Discriminator: A convolutional neural network (CNN) or RNN classifies sequences as real (from training set) or fake (from generator).

- Training: Alternating optimization. D is trained to maximize log(D(x)) + log(1 - D(G(z))). G is trained to minimize log(1 - D(G(z))) or maximize log(D(G(z))).

- Reinforcement Learning (RL) Fine-tuning: Often augmented with RL using a reward function (e.g., quantitative estimate of drug-likeness, QED) to optimize for specific properties.
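To make the two training objectives concrete, the sketch below evaluates them on batches of discriminator outputs. It assumes the discriminator returns probabilities strictly between 0 and 1; the function names are illustrative and no particular GAN library is implied.

```python
import math


def discriminator_objective(d_real, d_fake):
    """Batch mean of log D(x) + log(1 - D(G(z))); the discriminator maximizes this."""
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss_saturating(d_fake):
    """Batch mean of log(1 - D(G(z))); the generator minimizes this."""
    return sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)


def generator_loss_non_saturating(d_fake):
    """Batch mean of -log D(G(z)); minimizing it maximizes log D(G(z))."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)


# A discriminator that cannot tell real from fake outputs 0.5 everywhere.
confused = discriminator_objective([0.5, 0.5], [0.5, 0.5])
```

The non-saturating form is often preferred in practice because it gives larger gradients early in training, when the discriminator easily rejects generated samples.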

Transformers

Originally for sequence transduction, Transformers use self-attention to model long-range dependencies. In molecular design, they are applied autoregressively to generate molecular strings (SMILES, SELFIES) or predict chemical reactions.

Key Experimental Protocol (Molecular Transformer):

- Tokenization: Molecular representation (e.g., SMILES, SELFIES, or reaction SMILES) is split into tokens.

- Embedding: Tokens are converted to dense vectors, and positional encodings are added.

- Encoder-Decoder Architecture: For reaction prediction, the encoder processes reactants/reagents; the decoder generates the product sequence autoregressively.

- Attention: Multi-head self-attention computes weighted sums across all positions in the sequence, capturing complex molecular patterns.

- Training: Teacher forcing with cross-entropy loss on next-token prediction.
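The attention step can be illustrated with a single-head, dependency-free sketch. It is a simplification under stated assumptions: there are no learned projection matrices, only one head, and a causal mask is applied so that each position attends to itself and earlier tokens, as in an autoregressive decoder.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    peak = max(xs)
    exps = [math.exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def causal_self_attention(queries, keys, values):
    """Scaled dot-product attention where position i sees only positions 0..i."""
    d_k = len(keys[0])
    outputs = []
    for i, q in enumerate(queries):
        scores = [
            sum(a * b for a, b in zip(q, keys[j])) / math.sqrt(d_k)
            for j in range(i + 1)
        ]
        weights = softmax(scores)
        outputs.append([
            sum(w * values[j][c] for j, w in enumerate(weights))
            for c in range(len(values[0]))
        ])
    return outputs


# Zero queries give uniform weights, so each output is a running mean of the values.
attended = causal_self_attention(
    [[0.0, 0.0], [0.0, 0.0]], [[1.0, 0.0], [0.0, 1.0]], [[1.0], [3.0]]
)
```

A multi-head layer runs several such computations in parallel on different learned projections of the token embeddings and concatenates the results.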

Diffusion Models

Diffusion models generate data by iteratively denoising a normally distributed variable. For molecules, noise is added to molecular graphs or features over many steps, and a neural network learns to reverse this diffusion process.

Key Experimental Protocol (Graph Diffusion):

- Forward Process: Over T steps (e.g., 1000), Gaussian noise is gradually added to node and edge features of a molecular graph x₀ to produce a sequence of noisy graphs x₁, ..., x_T.

- Reverse Process: A graph neural network (e.g., MPNN) is trained to predict the noise or the original graph x₀ at each step, parameterizing p_θ(x_{t-1} | x_t).

- Sampling: Starting from pure noise x_T ~ N(0, I), the trained model iteratively denoises for T steps to generate a novel graph x₀.
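The forward process can be sketched for a flat feature vector. This assumes a DDPM-style variance-preserving formulation with a linear noise schedule, in which x_t can be sampled directly as sqrt(ᾱ_t) x₀ + sqrt(1 - ᾱ_t) ε; the schedule endpoints and function names are illustrative choices, not values taken from the protocol.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances beta_1..beta_T, linearly spaced (assumed schedule)."""
    if num_steps < 2:
        raise ValueError("num_steps must be at least 2")
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + step * t for t in range(num_steps)]


def cumulative_alpha_bar(betas):
    """alpha_bar_t = product over s <= t of (1 - beta_s)."""
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out


def q_sample(x0, t, alpha_bars, rng):
    """Draw x_t given x0 in one shot; t is 1-indexed. Returns (x_t, eps)."""
    a_bar = alpha_bars[t - 1]
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [math.sqrt(a_bar) * x + math.sqrt(1.0 - a_bar) * e for x, e in zip(x0, eps)]
    return x_t, eps


betas = linear_beta_schedule(1000)
alpha_bars = cumulative_alpha_bar(betas)
x_T, eps_T = q_sample([1.0, 0.0, -1.0], 1000, alpha_bars, random.Random(0))
```

By the final step almost no signal from x₀ remains, which is why sampling can start from pure Gaussian noise. The returned `eps` is the regression target when the network is trained to predict the noise.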

Quantitative Performance Comparison

Performance metrics vary based on task (unconditional generation, property optimization, etc.). The following table summarizes benchmark results on common molecular datasets (e.g., ZINC250k, QM9).

Table 1: Comparative Performance of Generative Models for Molecular Design

| Model Paradigm | Validity (%) | Uniqueness (%) | Novelty (%) | Reconstruction Accuracy (%) | Property Optimization Success Rate | Training Stability |

|---|---|---|---|---|---|---|

| VAE | 97.2 | 99.1 | 81.5 | 85.7 | Medium | High |

| GAN | 94.8 | 100.0 | 95.3 | N/A | High (with RL) | Low |

| Transformer | 99.6 | 99.9 | 90.2 | N/A (Autoregressive) | High (via conditional generation) | Medium |

| Diffusion | 99.9 | 100.0 | 98.7 | 92.4 (Graph) | Very High | High |

Note: Metrics are aggregated from recent literature (2023-2024). Validity: % of generated molecules that are chemically valid. Uniqueness: % of unique molecules among valid ones. Novelty: % of unique molecules not in training set. Success rate for property optimization refers to the frequency of generating molecules exceeding a target property threshold.

Table 2: Computational Requirements & Scalability

| Paradigm | Typical Training Time (GPU hrs) | Sampling Speed (molecules/sec) | Latent Space Interpretability | Data Efficiency |

|---|---|---|---|---|

| VAE | 24-48 | 10³ - 10⁴ | High (Continuous) | Medium |

| GAN | 48-72 | 10³ - 10⁴ | Low | Low |

| Transformer | 72-120 | 10² - 10³ | Medium (Attention Maps) | Low |

| Diffusion | 96-200 | 10⁰ - 10² | Medium | Very Low |

Visualization of Architectures and Workflows

Title: VAE Training Workflow for Molecular Generation

Title: Adversarial Training in GANs for Molecules

Title: Transformer Autoregressive Molecular Generation

Title: Diffusion Model Forward and Reverse Processes

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Platforms for Generative Molecular AI Research

| Tool/Reagent | Type | Primary Function | Example in Use |

|---|---|---|---|

| RDKit | Software | Cheminformatics toolkit for molecule manipulation, descriptor calculation, and validation. | Converting SMILES to molecular graphs, calculating QED. |

| PyTorch/TensorFlow | Framework | Deep learning libraries for building and training generative models. | Implementing VAE encoder/decoder or GAN generator. |

| SELFIES | Representation | Robust molecular string representation ensuring 100% validity. | Tokenization input for Transformer or VAE. |

| Graph Neural Network Library (PyG, DGL) | Framework | Specialized libraries for graph-based model implementation. | Building GNN-based encoders for VAEs or denoising networks for Diffusion. |

| Benchmark Dataset (ZINC250k, QM9) | Data | Curated molecular datasets for training and evaluation. | Training unconditional generative models. |

| Oracle (ChemAI) | Software | Property prediction model (e.g., for solubility, toxicity) used as a reward function. | Guiding RL fine-tuning in GANs or optimizing in latent space of VAEs. |

| Diffusion Model Sampler (EDM) | Algorithm | Specialized sampler for diffusion models controlling fidelity/diversity trade-off. | Generating novel molecules from a trained graph diffusion model. |

Each architectural paradigm offers distinct advantages for molecular design. VAEs provide a stable, interpretable latent space for optimization. GANs can generate high-quality samples but require careful stabilization. Transformers excel at sequence-based generation and prediction tasks. Diffusion models demonstrate state-of-the-art generation quality and property control at the cost of slower sampling. The selection of a paradigm depends on the specific research goal, computational budget, and need for interpretability or generation speed. Hybrid models that combine these paradigms are an emerging and powerful trend in generative molecular AI.

Within the burgeoning field of generative AI for molecular design, the choice of molecular representation is a fundamental determinant of model capability, efficiency, and applicability. This technical guide provides an in-depth analysis of prevalent representations, situating them within the research pipeline for de novo drug discovery and materials science. The evolution from simple string-based notations to complex, geometry-aware encodings reflects the community's pursuit of models that can generate valid, synthesizable, and property-optimized molecular structures.

String-Based Representations: SMILES and SELFIES

SMILES (Simplified Molecular Input Line Entry System)

SMILES is a linear string notation describing a molecule's 2D molecular graph using ASCII characters. It encodes atoms, bonds, branching (with parentheses), and ring closures (with numerals).

Limitations: A single molecule can have multiple valid SMILES strings, leading to ambiguity. More critically, minor syntactic violations (e.g., mismatched ring closures) render a string invalid, posing a significant challenge for generative models.
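The fragility is easy to demonstrate with a toy checker. The sketch below tests only two syntactic conditions (balanced parentheses and paired ring-closure labels) and skips bracket atoms so that isotope and hydrogen counts are not mistaken for ring labels. It is not a SMILES parser and says nothing about chemical validity; a real workflow would use a cheminformatics toolkit.

```python
DIGITS = "0123456789"


def smiles_syntax_ok(smiles):
    """Toy check: balanced parentheses and every ring-closure label opened and closed."""
    depth = 0
    open_rings = set()
    i = 0
    while i < len(smiles):
        ch = smiles[i]
        if ch == "[":
            # Skip bracket atoms such as [13CH4]; digits inside are not ring labels.
            close = smiles.find("]", i)
            if close == -1:
                return False
            i = close + 1
            continue
        if ch == "]":
            return False
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
        elif ch == "%":
            # Two-digit ring-closure label, e.g. %12.
            label = smiles[i + 1:i + 3]
            if len(label) != 2 or any(c not in DIGITS for c in label):
                return False
            open_rings ^= {int(label)}
            i += 3
            continue
        elif ch in DIGITS:
            open_rings ^= {int(ch)}  # first occurrence opens, second closes
        i += 1
    return depth == 0 and not open_rings
```

Dropping a single character from benzene (`c1ccccc1` to `c1ccccc`) is enough to fail the check, which is the kind of error a character-level generator can easily make.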

SELFIES (SELF-referencIng Embedded Strings)

SELFIES is a robust, context-free grammar developed specifically to address the validity issue in generative AI. Every string, regardless of length, corresponds to a valid molecular graph.

Core Innovation: It uses a set of derivation rules where tokens refer to the current state of the molecular graph being built. This guarantees 100% syntactic and semantic validity, drastically improving the efficiency of generative models.

Experimental Protocol for Benchmarking String Representations:

- Dataset: Curate a dataset (e.g., from ZINC or QM9) and canonicalize all SMILES.

- Model Training: Train identical autoregressive (RNN, Transformer) or variational autoencoder (VAE) architectures on both SMILES and SELFIES representations of the same dataset.

- Validity Metric: Generate a large sample (e.g., 10,000) of novel strings from each trained model.

- Assessment: Parse the generated strings using a cheminformatics toolkit (e.g., RDKit). Calculate the percentage that are syntactically correct and correspond to a valid chemical structure.

- Property Analysis: For valid molecules, compute key physicochemical properties (LogP, molecular weight, QED) and compare their distribution to the training set.

Diagram 1: Benchmarking SMILES vs. SELFIES Validity

Graph-Based Representations

Molecular graphs G = (V, E) directly represent atoms as nodes (V) and bonds as edges (E). This is a natural, unambiguous representation aligned with chemical intuition.

Node Features: Atom type, formal charge, hybridization, etc. Edge Features: Bond type (single, double, aromatic), conjugation.

Methodology for Graph-Based Generative Models (e.g., GraphVAE, MolGAN):

- Graph Encoding: Use a Graph Neural Network (GNN) to map the discrete graph to a continuous latent vector z.

- Latent Space Manipulation: Sample or optimize z for desired properties.

- Graph Decoding: The key challenge. The decoder must map z back to a discrete graph structure. Common approaches include:

- Sequential Generation: Autoregressively add nodes and edges.

- One-Shot Generation: Predict a full adjacency and feature tensor simultaneously, often requiring post-processing to ensure validity.
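A minimal data structure for a molecular graph, together with the kind of valence check used as post-processing after one-shot decoding, might look like the sketch below. The class, the valence table, and the treatment of hydrogens as implicit are all simplifying assumptions; aromatic bonds and formal charges are not handled.

```python
from dataclasses import dataclass, field

# Assumed maximum valences for neutral atoms (illustrative subset).
MAX_VALENCE = {"H": 1, "C": 4, "N": 3, "O": 2, "F": 1}


@dataclass
class MolGraph:
    atoms: list                                  # node features: element symbols
    bonds: dict = field(default_factory=dict)    # edge features: (i, j) with i < j -> bond order

    def add_bond(self, i, j, order=1):
        if i == j:
            raise ValueError("self-bonds are not allowed")
        self.bonds[(min(i, j), max(i, j))] = order

    def valence_ok(self):
        """True if no atom's summed bond orders exceed its maximum valence."""
        used = [0] * len(self.atoms)
        for (i, j), order in self.bonds.items():
            used[i] += order
            used[j] += order
        return all(u <= MAX_VALENCE[a] for u, a in zip(used, self.atoms))


# Ethanol heavy-atom skeleton C-C-O (hydrogens implicit).
ethanol = MolGraph(["C", "C", "O"])
ethanol.add_bond(0, 1)
ethanol.add_bond(1, 2)

# An oxygen bonded to three carbons violates the valence limit.
bad = MolGraph(["C", "O", "C", "C"])
for neighbor in (0, 2, 3):
    bad.add_bond(1, neighbor)
```

A one-shot decoder that emits `bad` would need its output rejected or repaired, whereas a sequential decoder can run the same check after each added bond and mask out invalid actions.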

3D Geometry Representations: Point Clouds and Surfaces

For tasks dependent on molecular interactions (docking, protein-ligand binding, spectroscopy), 3D geometry is essential. These representations explicitly encode the spatial coordinates of atoms.

3D Graphs

Augment the graph representation with 3D Cartesian coordinates (x, y, z) for each atom node. Equivariant Graph Neural Networks (EGNNs) are designed to be invariant/equivariant to rotations and translations, making them ideal for learning from 3D graphs.

Point Clouds

Treat a molecule as an unordered set of points in 3D space, where each point (atom) has associated features (element, charge). Models like PointNet or 3D convolutional networks can process this format.

Experimental Protocol for 3D-Constrained Generation:

- Data Preparation: Use a dataset like GEOM-QM9 with pre-computed stable conformers. Align structures to a common reference frame if needed.

- Representation Choice: Decide on representation (3D Graph, Point Cloud, Internal Coordinates).

- Model Architecture: Implement an equivariant model (e.g., EGNN, SchNet) or a diffusion model operating on point clouds/coordinates.

- Training: Train the model to reconstruct 3D structures or generate them conditioned on a 2D graph or properties.

- Evaluation:

- Geometry Metrics: Calculate the mean absolute error (MAE) of interatomic distances or root-mean-square deviation (RMSD) of generated vs. ground-truth conformers.

- Stability: Perform a brief molecular mechanics (MMFF) optimization and report the energy change.

- Property Prediction: Feed generated 3D structures into a downstream property predictor (e.g., for dipole moment, HOMO-LUMO gap).
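The interatomic-distance metric can be computed without any structural alignment, because pairwise distances do not change under rotation or translation. The sketch below assumes both conformers list the same atoms in the same order; the function names are illustrative.

```python
import math
from itertools import combinations


def pairwise_distances(coords):
    """All interatomic distances for a list of (x, y, z) coordinates."""
    return [math.dist(a, b) for a, b in combinations(coords, 2)]


def distance_mae(generated, reference):
    """Mean absolute error between corresponding interatomic distances."""
    if len(generated) != len(reference):
        raise ValueError("conformers must have the same number of atoms")
    d_gen = pairwise_distances(generated)
    d_ref = pairwise_distances(reference)
    if not d_gen:
        return 0.0
    return sum(abs(g - r) for g, r in zip(d_gen, d_ref)) / len(d_gen)


reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
shifted = [(x + 5.0, y + 5.0, z + 5.0) for x, y, z in reference]
stretched = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 1.0, 0.0)]

mae_shifted = distance_mae(shifted, reference)      # translation only
mae_stretched = distance_mae(stretched, reference)  # one bond lengthened
```

RMSD, by contrast, requires superimposing the two structures first, so it is usually computed with an alignment routine from a cheminformatics toolkit.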

Diagram 2: 3D Molecular Generation & Evaluation Workflow

Quantitative Comparison of Molecular Representations

Table 1: Characteristics of Core Molecular Representations

| Representation | Format | Dimensionality | Key Advantages | Key Limitations | Primary Generative Model Types |

|---|---|---|---|---|---|

| SMILES | String (1D) | Sequential | Compact, human-readable, vast tool support. | Non-unique, syntactic fragility. Poor capture of spatiality. | RNN, Transformer, VAE. |

| SELFIES | String (1D) | Sequential | Guaranteed 100% validity. Robust for generation. | Less human-readable, slightly longer strings. | RNN, Transformer, VAE. |

| Molecular Graph | Graph (2D) | Topological | Structurally unambiguous. Natural for chemistry. | Decoding is complex. Standard GNNs ignore 3D geometry. | GraphVAE, GNF, JT-VAE, MolGAN. |

| 3D Graph | Graph (3D) | Topological + Spatial | Encodes geometry critical for activity/properties. | Requires 3D data. Computationally intensive. | Equivariant GNNs (EGNN, GEMNet). |

| Point Cloud | Set (3D) | Spatial | Permutation invariant. Simple format for 3D CNNs/Diffusion. | Ignores explicit bonds. May lose topological information. | 3D-CNN, PointNet, Diffusion Models. |

Table 2: Typical Benchmark Performance Metrics (Illustrative)

| Model (Representation) | Validity (%) ↑ | Uniqueness (%) ↑ | Novelty (%) ↑ | Fréchet ChemNet Distance ↓ | Vina Score (Docking, kcal/mol) ↓ § |

|---|---|---|---|---|---|

| CharacterVAE (SMILES) | ~70-85% | >99% | >90% | Variable | - |

| SELFIES-based VAE | ~100% | >99% | >90% | Often Improved | - |

| GraphVAE (Graph) | ~60-80% † | >95% | >80% | Good | - |

| JT-VAE (Graph) | 100% | >99% | >90% | Strong | - |

| E-NF (3D Graph) | 100% ‡ | >99% | N/A | N/A | -8.5 to -9.0 |

| Diffusion Model (Point Cloud) | 100% ‡ | >99% | N/A | N/A | -7.9 to -8.4 |

Notes: ↑ Higher is better, ↓ Lower is better. † Graph decoders often have explicit validity checks. ‡ Validity is inherent to 3D structure generation from a seed graph. § Docking scores are target-dependent; values are illustrative for a specific protein.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Molecular Representation Research

| Item / Resource | Function & Explanation |

|---|---|

| RDKit | Open-source cheminformatics toolkit. Core functions: SMILES/SELFIES parsing, molecular graph manipulation, fingerprint generation, 2D/3D coordinate generation, and property calculation. |

| Open Babel / Pybel | Tool for converting between numerous chemical file formats, handling 3D conformer generation, and force field calculations. |

| PyTorch Geometric (PyG) | A library built upon PyTorch for easy implementation of Graph Neural Networks (GNNs), including 3D/equivariant graph layers. |

| DGL-LifeSci | A toolkit for graph neural networks in chemistry and biology, providing pre-built models and pipelines for molecular property prediction. |

| SELFIES Python Library | Official library for converting between SMILES and SELFIES, and for generating randomized SELFIES strings. Essential for SELFIES-based projects. |

| QM9, GEOM, ZINC Datasets | Standardized, publicly available molecular datasets. QM9/GEOM provide quantum properties and 3D geometries. ZINC provides large-scale commercially available compounds for drug discovery. |

| AutoDock Vina / Gnina | Molecular docking software. Critical for evaluating the potential binding affinity of generated 3D molecules to a protein target, linking generation to a key downstream task. |

| Jupyter Notebook / Colab | Interactive computing environments essential for rapid prototyping, data visualization, and sharing reproducible research workflows. |

The trajectory from SMILES to 3D point clouds reflects the generative AI for molecular design field's increasing sophistication, moving from prioritizing mere validity to capturing the intricate 3D structural determinants of function. The optimal representation is task-dependent: SELFIES ensures robust de novo generation, molecular graphs enable topology-aware design, and 3D representations are indispensable for geometry-sensitive applications like drug binding. Future progress hinges on the seamless integration of these representations, creating multi-faceted models that concurrently reason across symbolic, topological, and geometric views of matter.

Within the expanding field of generative AI for molecular design, the rigorous evaluation of model performance is paramount. Benchmarks and standardized datasets provide the critical foundation for comparing methodologies, tracking progress, and ensuring generated molecules are not only novel but also chemically valid and biologically relevant. This guide details three cornerstone resources: GuacaMol, MOSES, and MoleculeNet, framing them within the essential workflow of generative molecular AI research.

The table below summarizes the core objectives, domains, and key metrics of each benchmark.

Table 1: Core Characteristics of Molecular Benchmarks

| Feature | GuacaMol | MOSES | MoleculeNet |

|---|---|---|---|

| Primary Goal | Benchmark generative models on a wide range of chemical property and distribution-learning tasks. | Provide a standardized benchmarking platform for molecular generation models with a focus on drug-like molecules. | Benchmark predictive machine learning models on quantum mechanical, physicochemical, and biophysical datasets. |

| Core Domain | Generative Model Evaluation | Generative Model Evaluation | Predictive Model Evaluation |

| Source Data | ChEMBL (v.24) | ZINC Clean Leads collection | Multiple sources (e.g., QM9, Tox21, clinical trial datasets) |

| Molecule Count | ~1.6 million (for benchmark tasks) | ~1.9 million (training set: 1.6M) | Varies by sub-dataset (e.g., QM9: 133k, Tox21: ~8k) |

| Key Metrics | Distribution-learning: Validity, Uniqueness, Novelty. Goal-directed: Similarity, scores for specific properties (e.g., QED, LogP). | Validity, Uniqueness, Novelty, Fréchet ChemNet Distance (FCD), Similarity to a Nearest Neighbor (SNN), Fragment similarity, Scaffold similarity. | Task-specific metrics: e.g., RMSE (regression), ROC-AUC (classification). |

| Typical Use Case | Assessing a generative model's ability to cover chemical space and optimize for explicit objectives. | Comparing the quality and diversity of molecules generated by different generative architectures. | Training and evaluating models for predicting molecular properties or activities. |

In-Depth Technical Guide

GuacaMol (Goal-directed Generative Chemistry Model)

GuacaMol establishes a suite of benchmarks to evaluate both distribution-learning and goal-directed generation.

Experimental Protocol for Benchmarking:

- Model Training: Train the generative model on the curated ChEMBL dataset (~1.6M molecules).

- Distribution-Learning Benchmark:

- Generate a large set of molecules (e.g., 10,000).

- Calculate validity (SMILES parsable without syntax errors), uniqueness (fraction of valid molecules that are unique), and novelty (fraction of unique molecules not present in the training set).

- Goal-Directed Benchmark:

- Execute a series of defined tasks (e.g., maximize similarity to a target while maintaining drug-likeness).

- For each task, generate a ranked list of molecules and compute the task's specific scoring function (e.g., a weighted sum of Tanimoto similarity and QED score).

- Report the score of the top molecule and the average score of the top 100 molecules.
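The reporting step of the goal-directed benchmark reduces to a ranking and two summary numbers. The sketch below assumes the scores have already been computed by the task's scoring function and that duplicate molecules should count once; the function name and return format are illustrative and not part of the GuacaMol package.

```python
def goal_directed_summary(scored_molecules, k=100):
    """Summarize (smiles, score) pairs by best score and mean of the top-k scores."""
    best = {}
    for smiles, score in scored_molecules:
        # Keep one entry per molecule so duplicates cannot inflate the top-k mean.
        best[smiles] = max(score, best.get(smiles, float("-inf")))
    if not best:
        raise ValueError("no molecules to score")
    ranked = sorted(best.values(), reverse=True)
    top_k = ranked[:k]
    return {"top_1": ranked[0], "top_k_mean": sum(top_k) / len(top_k)}


summary = goal_directed_summary(
    [("A", 0.9), ("B", 0.5), ("C", 0.7), ("A", 0.9)], k=2
)
```

Reporting both numbers distinguishes a model that finds one excellent molecule from one that reliably produces many good ones.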

MOSES (Molecular Sets)

MOSES provides a reproducible pipeline for training, filtering, and evaluating generative models on drug-like molecules.

Experimental Protocol for Benchmarking:

- Data Splitting: Use the provided split to obtain Training, Test, and Scaffold Test sets. The Scaffold Test set evaluates generalization to novel molecular scaffolds.

- Model Training: Train the generative model on the MOSES Training set.

- Generation & Filtering: Generate a large sample (e.g., 30,000 molecules). Apply the MOSES Basic Filters (removing molecules with unusual atoms/charges, using RDKit's "Cleanup" procedure).

- Metric Computation: Calculate the suite of metrics on the filtered set.

- FCD: Embed generated and test set molecules using the ChemNet model and compute the Fréchet distance between the two multivariate Gaussian distributions.

- SNN: For each generated molecule, find its nearest neighbor in the test set based on ECFP4 fingerprints and compute the Tanimoto similarity. Report the average.

- Fragment/Scaffold Similarity: Compute the frequency of BRICS fragments and Bemis-Murcko scaffolds in the generated set and compare each frequency vector to that of the test set using cosine similarity.
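As an illustration of the SNN step, the sketch below computes the average nearest-neighbor Tanimoto similarity from precomputed fingerprints. Fingerprints are represented as sets of on-bit indices, which is an assumption made to keep the example dependency-free; it is not the MOSES implementation.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity of two fingerprints stored as sets of on-bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 1.0


def snn(generated_fps, reference_fps):
    """Average, over generated molecules, of the similarity to the closest reference molecule."""
    if not generated_fps or not reference_fps:
        raise ValueError("both fingerprint lists must be non-empty")
    return sum(
        max(tanimoto(g, r) for r in reference_fps) for g in generated_fps
    ) / len(generated_fps)


generated_fps = [{1, 2, 3}, {7}]
reference_fps = [{1, 2, 3, 4}, {5, 6}]
snn_value = snn(generated_fps, reference_fps)
```

A very high SNN suggests the model is reproducing reference-like molecules, while a very low value suggests it has drifted away from the reference chemical space.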

MoleculeNet

MoleculeNet is a collection of diverse datasets for molecular machine learning, categorized by the type of prediction task.

Experimental Protocol for Benchmarking (e.g., on Tox21):

- Dataset Selection: Choose a specific dataset (e.g., Tox21, 12 assays for nuclear receptor signaling and stress response).

- Data Splitting: Use the splitting method recommended for the chosen dataset (random, stratified, scaffold-based, or time-based) to create training, validation, and test sets.

- Featurization: Represent molecules using the chosen method (e.g., molecular graphs, ECFP fingerprints, SMILES strings).

- Model Training & Evaluation: Train a predictive model (e.g., Random Forest, Graph Neural Network) on the training set. Tune hyperparameters on the validation set. Evaluate final performance on the held-out test set using the appropriate metric (e.g., mean ROC-AUC across the 12 tasks for Tox21).
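The final metric, mean ROC-AUC across tasks, can be sketched without external libraries. The pairwise formulation below is quadratic in the number of samples and is meant only to show what is being computed. Multi-task toxicity datasets typically have missing labels for some compound and assay combinations, so this sketch assumes missing labels are encoded as `None` and skipped.

```python
def roc_auc(labels, scores):
    """ROC-AUC as the fraction of (positive, negative) pairs ranked correctly; ties count 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def mean_roc_auc(task_labels, task_scores):
    """Average the per-task ROC-AUC, ignoring entries whose label is None."""
    aucs = []
    for labels, scores in zip(task_labels, task_scores):
        kept = [(y, s) for y, s in zip(labels, scores) if y is not None]
        aucs.append(roc_auc([y for y, _ in kept], [s for _, s in kept]))
    return sum(aucs) / len(aucs)


single_task = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
two_tasks = mean_roc_auc(
    [[0, 0, 1, 1], [1, None, 0, 1]],
    [[0.1, 0.4, 0.35, 0.8], [0.9, 0.5, 0.2, 0.7]],
)
```

In a real pipeline a library routine would replace `roc_auc`, but the per-task masking and averaging logic stays the same.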

Visualizing the Benchmarking Workflows

MOSES Evaluation Pipeline

MoleculeNet Predictive Modeling Protocol

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Molecular AI Benchmarking

| Tool / Reagent | Primary Function | Application Context |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit. | Fundamental for SMILES parsing, molecular standardization, descriptor/fingerprint calculation, scaffold decomposition, and applying chemical filters in MOSES/GuacaMol. |

| DeepChem | Open-source framework for deep learning in chemistry. | Provides data loaders for MoleculeNet datasets, molecular featurizers, and implementations of graph-based and other deep learning models. |

| PyTorch / TensorFlow | Deep learning frameworks. | Essential for building, training, and evaluating both generative (for GuacaMol/MOSES) and predictive (for MoleculeNet) neural network models. |

| MOSES Benchmarking Scripts | Standardized evaluation pipeline. | Provides the code to compute all MOSES metrics (FCD, SNN, etc.) ensuring reproducibility and fair comparison between published models. |

| GuacaMol Benchmarking Suite | Collection of scoring functions and tasks. | Provides the exact implementation of the distribution-learning and goal-directed benchmarks for evaluating generative models. |

| ZINC Database | Publicly accessible repository of commercially available compounds. | Source of the curated "Clean Leads" subset used by MOSES as a realistic, drug-like chemical space for training generative models. |

| ChEMBL Database | Manually curated database of bioactive molecules. | Source of the diverse, bioactivity-annotated compounds used to train and evaluate models in the GuacaMol benchmark. |

The application of generative artificial intelligence (AI) to molecular design represents a paradigm shift in computational drug discovery. Framed within the broader thesis of generative AI for molecular design research, these models learn the underlying probability distribution of known chemical structures and their properties to generate novel, optimized candidates. This moves beyond traditional virtual screening of finite libraries into the de novo exploration of a vast chemical space, commonly estimated at around 10^60 drug-like small molecules. This whitepaper details the technical implementation, experimental validation, and practical toolkit for leveraging generative AI to accelerate hit discovery.

Core Generative Model Architectures & Performance Data

Current generative models employ diverse architectures, each with distinct advantages for molecular design. The table below summarizes key quantitative benchmarks from recent literature.

Table 1: Performance Comparison of Key Generative Model Architectures

| Model Architecture | Representative Model (Reference) | Novelty (%) | Validity (%) | Uniqueness (%) | Key Strength |

|---|---|---|---|---|---|

| VAE (Variational Autoencoder) | VAE (Gómez-Bombarelli et al.) | 87.4 | 94.2 | 98.1 | Smooth latent space interpolation. |

| GAN (Generative Adversarial Network) | ORGAN (Guimaraes et al.) | 89.7 | 92.6 | 97.3 | High-quality, sharp molecular distributions. |

| Transformer | MolGPT (Bagal et al.) | 91.5 | 98.6 | 99.5 | Captures long-range dependencies in SMILES. |

| Flow-Based | GraphNVP (Madhawa et al.) | 93.1 | 96.8 | 99.8 | Exact latent density estimation. |

| Reinforcement Learning (RL) | REINVENT (Olivecrona et al.) | N/A* | >99.9 | N/A* | Direct optimization of custom reward functions. |

| Diffusion Model | GeoDiff (Xu et al.) | 95.2 | 99.1 | 99.9 | State-of-the-art on 3D conformation generation. |

Note: RL models are typically benchmarked on specific property optimization tasks (e.g., penalized logP, QED) rather than standard Guacamol benchmarks. Novelty/Uniqueness are context-dependent on the training set used for the RL agent.

Detailed Experimental Protocol for a Benchmark Study

This protocol outlines a standard workflow for training and validating a generative model for a target-specific hit discovery campaign.

Protocol: Benchmarking a Molecular Generative Model

Objective: To generate novel, synthetically accessible molecules predicted to inhibit a specified protein target (e.g., KRAS G12C).

Materials: See "Scientist's Toolkit" section.

Method:

Data Curation & Preprocessing:

- Assemble a training set of known actives (IC50 < 10 µM) from public databases (ChEMBL, BindingDB).

- Apply standardization (e.g., using RDKit): neutralize charges, remove salts, and generate canonical SMILES.

- For graph-based models, convert SMILES to graph representations with atom and bond features.

- Split data: 80% training, 10% validation, 10% test.

Model Training:

- Architecture: Implement a Recurrent Neural Network (RNN)-based VAE.

- Encoder: A 3-layer GRU network encodes the SMILES string into a latent vector `z` (dimension = 256).

- Latent Space: The `z` vector is sampled from a Gaussian distribution defined by the encoder's output (mean `μ` and log-variance `log σ²`). The Kullback-Leibler (KL) divergence loss encourages a structured latent space.

- Decoder: A second 3-layer GRU network decodes the latent vector `z` back into a SMILES string.

- Training Loop: Train for 100 epochs using the Adam optimizer (lr = 0.0005). The total loss is `L = L_reconstruction + β * L_KL`, where `β` is gradually increased (KL annealing).
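The loss with KL annealing can be sketched in plain Python as below. The closed-form KL term is the standard expression for a diagonal Gaussian against a standard normal prior; the linear warm-up length (`warmup_epochs=20`) and the ceiling (`beta_max=1.0`) are illustrative assumptions, since the protocol only states that β is gradually increased.

```python
import math

def kl_diag_gaussian(mu, logvar):
    """KL( N(mu, diag(exp(logvar))) || N(0, I) ), summed over latent dimensions."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar))

def beta_schedule(epoch, warmup_epochs=20, beta_max=1.0):
    """Linear KL annealing: beta rises from 0 to beta_max over warmup_epochs (assumed values)."""
    return beta_max * min(1.0, epoch / warmup_epochs)

def vae_loss(recon_loss, mu, logvar, epoch):
    """Total loss L = L_reconstruction + beta * L_KL for one example."""
    return recon_loss + beta_schedule(epoch) * kl_diag_gaussian(mu, logvar)
```

Starting with β near zero lets the decoder learn to reconstruct before the KL term pulls the posterior toward the prior, which is the usual motivation for annealing.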

Molecular Generation & Latent Space Interpolation:

- After training, sample random vectors `z` from the prior distribution (Standard Normal) and decode to generate new molecules.

- To explore chemical space between two known actives, encode them to `z1` and `z2`, linearly interpolate between the vectors, and decode the intermediates.
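The interpolation step reduces to simple vector arithmetic. The sketch below assumes latent vectors are plain lists of floats; `interpolate` is a hypothetical helper, and decoding each intermediate vector back to SMILES would be done by the trained decoder, which is not shown.

```python
def interpolate(z1, z2, n_steps):
    """Return n_steps latent vectors evenly spaced from z1 to z2, endpoints included."""
    if n_steps < 2:
        raise ValueError("n_steps must be at least 2")
    points = []
    for i in range(n_steps):
        t = i / (n_steps - 1)  # t runs from 0.0 (z1) to 1.0 (z2)
        points.append([(1 - t) * a + t * b for a, b in zip(z1, z2)])
    return points
```

Each returned vector would then be passed to the decoder; invalid or duplicate decodings along the path are common and are typically filtered out.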

In Silico Validation:

- Filters: Pass all generated molecules through rule-based filters (e.g., PAINS, REOS) and synthetic accessibility (SA) score thresholds.

- Docking: Use molecular docking (e.g., AutoDock Vina, Glide) to score generated molecules against the target's crystal structure (PDB: 5V9U). Retain top 1000 compounds by docking score.

- Property Prediction: Employ pre-trained models (e.g., Random Forest, CNN) to predict ADMET properties for the top candidates.

Hit Selection & Experimental Validation:

- Cluster top docking candidates by scaffold and select 50-100 representatives for synthesis.

- Proceed to in vitro biochemical and cellular assays (see the validation protocol that follows).

Diagram: Generative Model Workflow for Hit Discovery

Experimental Protocol for Validating AI-Generated Hits

This protocol details the biochemical and cellular assays used to validate the activity of AI-generated compounds.

Protocol: Biochemical & Cellular Assay for KRAS G12C Inhibition

Objective: To determine the half-maximal inhibitory concentration (IC50) of AI-generated compounds against KRAS G12C in biochemical and cellular settings.

Materials: See "Scientist's Toolkit" section.

Method:

A. Biochemical GTPase Assay:

- In a 96-well plate, prepare 50 nM recombinant KRAS G12C protein in reaction buffer (50 mM HEPES, 150 mM NaCl, 5 mM MgCl2, 0.01% Triton X-100, pH 7.5); the 10 µM fluorescent GTP analogue (BODIPY FL-GTP) is added only after compound pre-incubation.

- Pre-incubate test compounds (11-point, 3-fold serial dilution) with the protein for 15 minutes at 25°C before adding nucleotide.

- Initiate the reaction by adding nucleotide and monitor fluorescence polarization (λex = 485 nm, λem = 535 nm) every minute for 60 minutes using a plate reader.

- Calculate the rate of GTP hydrolysis for each well. Fit the dose-response curve to a 4-parameter logistic model to determine IC50 values.
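The dilution series and the 4-parameter logistic model used in this assay can be written down directly. In the sketch below the top concentration is a free parameter (the protocol does not specify one), and the function names are hypothetical. Fitting the four parameters to measured rates would normally be delegated to a nonlinear least-squares routine, which is omitted here.

```python
def serial_dilution(top_conc, fold, n_points):
    """Concentrations of an n-point serial dilution, starting at top_conc."""
    return [top_conc / fold ** i for i in range(n_points)]

def four_pl(conc, bottom, top, ic50, hill):
    """4-parameter logistic: response falls from `top` to `bottom` as conc increases."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)
```

For example, `serial_dilution(10.0, 3, 11)` gives the 11-point, 3-fold series for an assumed 10 µM top concentration, and `four_pl` evaluated at `conc == ic50` returns the midpoint between `top` and `bottom`.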

B. Cell Viability Assay (CellTiter-Glo):

- Seed NCI-H358 cells (KRAS G12C mutant) in a 384-well plate at 1000 cells/well in RPMI-1640 + 10% FBS. Incubate for 24 hours.

- Treat cells with test compounds (10-point, 4-fold serial dilution) in duplicate. Include DMSO vehicle and a positive control (e.g., AMG 510).

- Incubate for 72 hours at 37°C, 5% CO2.

- Equilibrate plate to room temperature for 30 minutes. Add an equal volume of CellTiter-Glo 2.0 reagent to each well.

- Shake for 2 minutes, then incubate in the dark for 10 minutes. Record luminescence.

- Normalize luminescence to DMSO controls and calculate % viability. Fit data to determine IC50 values.
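The normalization in the last step is a simple ratio against the vehicle wells. The sketch below assumes raw luminescence is grouped by concentration with replicate wells in a list; the function name and data layout are illustrative only.

```python
from statistics import mean

def percent_viability(raw_by_conc, dmso_wells):
    """Map each concentration to mean % viability relative to the mean DMSO signal."""
    vehicle = mean(dmso_wells)
    return {conc: 100.0 * mean(reps) / vehicle for conc, reps in raw_by_conc.items()}
```

The resulting concentration/viability pairs are what the dose-response fit consumes to estimate IC50.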

Diagram: KRAS G12C Inhibition Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Generative AI-Driven Hit Discovery

| Category | Item/Reagent | Supplier Examples | Function in Workflow |

|---|---|---|---|

| Computational Software | RDKit | Open Source | Open-source cheminformatics toolkit for molecular manipulation, descriptor calculation, and filtering. |

| Generative Modeling | PyTorch / TensorFlow | Meta / Google | Deep learning frameworks for building and training custom generative models (VAEs, GANs). |

| Benchmarking Suite | GuacaMol / MOSES | BenevolentAI / Insilico Medicine | Standardized benchmarks and metrics for evaluating generative model performance. |

| Cloud/Compute | NVIDIA V100/A100 GPU | AWS, Google Cloud, Azure | High-performance computing for training large generative models on millions of compounds. |

| Chemical Databases | ChEMBL, BindingDB | EMBL-EBI (ChEMBL) | Public repositories of bioactive molecules with associated assay data for model training. |

| Docking Software | AutoDock Vina, Glide | Scripps, Schrödinger | Molecular docking suites for virtual screening and ranking of generated molecules. |

| Assay Reagents | Recombinant KRAS G12C Protein | Reaction Biology, BPS Bioscience | Purified target protein for primary biochemical screening assays. |

| Assay Reagents | BODIPY FL-GTP | Thermo Fisher Scientific | Fluorescent GTP analogue for monitoring GTPase activity in real-time. |

| Cell Line | NCI-H358 (CRL-5807) | ATCC | Human non-small cell lung carcinoma cell line harboring the KRAS G12C mutation. |

| Cell Viability Assay | CellTiter-Glo 2.0 | Promega | Luminescent assay to quantify viable cells based on ATP content post-compound treatment. |

| Compound Management | Echo 655T Liquid Handler | Beckman Coulter | Acoustic dispenser for precise, non-contact transfer of compound solutions for dose-response assays. |

How Generative AI Designs New Drugs: From de novo Design to Lead Optimization

This technical guide details de novo molecule generation, a pivotal component within the broader thesis on generative AI models for molecular design research. Moving beyond virtual screening of known chemical libraries, de novo generation leverages deep generative models to create novel, synthetically accessible molecular structures with optimized properties from scratch. This paradigm shift accelerates the exploration of vast, uncharted chemical space for therapeutic and material applications.

Core Generative Architectures: Methods & Protocols

Methodologies and Key Experiments

A. Recurrent Neural Network (RNN) / Long Short-Term Memory (LSTM) Based Generation

- Protocol: Models are trained on string-based representations (e.g., SMILES, SELFIES) to learn the grammatical syntax of chemical structures. Generation proceeds autoregressively, token-by-token.

- Key Experiment (Gómez-Bombarelli et al., 2018):

- Data Preparation: Curate a dataset of drug-like molecules (e.g., from ZINC15) in canonical SMILES format.

- Model Training: Train a variational autoencoder (VAE) with an RNN encoder and decoder. The encoder maps a SMILES string to a continuous latent vector (z); the decoder reconstructs the SMILES from z.

- Latent Space Optimization: Train a separate property predictor (e.g., for solubility, binding affinity) on the latent vectors.

- Generation: Sample new points in the latent space, guided by the property predictor, and decode them into novel SMILES strings.

- Validation: Use chemical validity checks (e.g., RDKit parsers) and synthetic accessibility (SA) scoring.

B. Generative Adversarial Networks (GANs)

- Protocol: A generator network creates molecular graphs or fingerprints, while a discriminator network evaluates their authenticity against a training dataset. Adversarial training refines the generator.

- Key Experiment (De Cao & Kipf, 2018 - MolGAN):

- Representation: Represent molecules as graphs (atom types, bond types).

- Model Architecture: Implement a generator (graph neural network) producing probabilistic graphs. A discriminator and a reinforcement learning (RL) reward network (for target properties) are used.

- Training: Use a Wasserstein GAN objective with gradient penalty. The RL reward (e.g., for QED, solubility) is incorporated via policy gradient.

- Sampling: Generate discrete graphs from the generator's output probabilities.

- Evaluation: Compute metrics like validity, uniqueness, and novelty.
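Validity, uniqueness, and novelty are simple ratios once a validity check is available. The sketch below takes that check as a caller-supplied function (in practice it might wrap an RDKit parse) and assumes all strings are already canonicalized so that string equality means molecule equality. Both are assumptions of this illustration, and benchmark suites differ in the exact denominators they use.

```python
def generation_metrics(generated, training_set, is_valid):
    """Return validity, uniqueness (among valid), and novelty (among unique valid)."""
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }
```

A low uniqueness alongside high validity, as reported for some GAN-based generators, is a typical signature of mode collapse.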

C. Flow-Based Models (GraphCNF)

- Protocol: Invertible neural networks learn a bijective mapping between a simple prior distribution (e.g., Gaussian) and the complex distribution of molecular graphs, allowing exact likelihood calculation.

- Key Experiment (Lippe & Gavves, 2021 - GraphCNF):

- Data Encoding: Represent molecules as graphs with categorical node and edge features.

- Model Design: Construct a conditional continuous normalizing flow that generates nodes sequentially and edges conditionally.

- Training: Maximize the exact log-likelihood of training molecules under the model.

- Generation & Optimization: Sample new graphs via ancestral sampling. Perform gradient-based optimization in the latent space for property improvement.

D. Transformer-Based Models

- Protocol: Leverage attention mechanisms to process SMILES/SELFIES sequences or graph fragments, capturing long-range dependencies.

- Key Experiment (a composite workflow pairing ChemBERTa-style pre-training with GPT-style generation as in MolGPT, Bagal et al., 2021):

- Pre-training: Pre-train a Transformer (e.g., BERT architecture) on a large corpus of SMILES (e.g., from PubChem) for context-aware representation.

- Fine-tuning: Fine-tune a GPT-like decoder on task-specific datasets for controlled generation.

- Prompt-based Generation: Use target property prompts (e.g., "high LogP") to condition the generation process.

Comparative Performance Data

Table 1: Quantitative Comparison of Key Generative Architectures (Benchmark Summary)

| Model Architecture | Primary Representation | Key Metric: Validity (%) | Key Metric: Uniqueness (%) | Key Metric: Novelty (%) | Optimization Method | Notable Strength |

|---|---|---|---|---|---|---|

| RNN-VAE (Gómez-Bombarelli) | SMILES | 94.6 | 87.5 | 100* | Latent Space Gradient | Smooth, explorable latent space |

| GAN (MolGAN) | Molecular Graph | 98.1 | 10.4 | 94.2 | RL Reward | Fast, single-step generation |

| Flow (GraphCNF) | Molecular Graph | 100.0 | 83.4 | 100* | Exact Likelihood | Exact likelihood, efficient sampling |

| Transformer (ChemGPT) | SELFIES | 99.7 | 95.2 | 100* | Prompt Conditioning | High-quality, conditioned sequences |

Note: Novelty can approach 100% when generating from scratch but is dataset-dependent.

Visualization of Workflows and Relationships

Diagram: De Novo Molecule Generation and Screening Workflow

Diagram: Generative AI Models in Molecular Design Thesis

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Platforms for De Novo Molecule Generation Research

| Item / Tool Name | Function / Purpose | Key Provider / Library |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for handling molecular data, validity checks, descriptor calculation, and visualization. | RDKit Community |

| PyTorch / TensorFlow | Deep learning frameworks for building, training, and deploying generative models (VAEs, GANs, Transformers). | Meta / Google |

| DeepChem | Open-source ecosystem integrating deep learning with chemistry, offering benchmark datasets and model layers. | DeepChem Community |

| GuacaMol | Benchmarking suite for de novo molecular generation, providing standardized metrics and baselines. | BenevolentAI |

| MOSES | Benchmarking platform (Molecular Sets) for training and evaluation of generative models. | Insilico Medicine |

| OpenNMT | Toolkit for sequence-based models (RNN, Transformer) applicable to SMILES/SELFIES generation. | OpenNMT |

| TorchDrug | A PyTorch-based framework for drug discovery, including graph-based generative tasks. | MilaGraph (Mila) |

| AutoDock Vina / Gnina | Molecular docking software for in-silico validation of generated molecules against protein targets. | Scripps Research (Vina) / University of Pittsburgh (Gnina) |

| SAscore / RAscore | Synthetic Accessibility and Retrosynthetic Accessibility predictors to filter generated structures. | Various (e.g., RDKit) |

| Property Oracles | Molecular property predictors (e.g., QED, Solubility, Toxicity) used as reward functions, typically trained on data from public databases. | ChEMBL, ZINC, etc. |

Scaffold Hopping and Molecular Optimization with Conditional Generation

Within the broader thesis on Generative AI Models for Molecular Design Research, conditional deep generative models represent a pivotal advancement. They enable precise navigation of chemical space, moving beyond mere novel molecule generation to the targeted discovery of compounds with predefined optimal properties. This guide details the technical implementation of these models for the specific tasks of scaffold hopping—discovering novel molecular cores with preserved bioactivity—and multi-parameter molecular optimization.

Core Architectures and Models

Current methodologies leverage conditional variants of established generative architectures. The conditioning signal, often a vector encoding desired properties or a reference scaffold, guides the generation process.

| Model Architecture | Conditioning Mechanism | Typical Output Format | Key Advantage | Reported Performance (Property Prediction RMSE) |

|---|---|---|---|---|

| Conditional VAE (CVAE) | Concatenation of latent vector & condition vector | SMILES, SELFIES, Graph | Stable training, smooth latent space interpolation | 0.07 - 0.15 (on QM9 datasets) |

| Conditional GAN (cGAN) | Condition input to both generator and discriminator | SMILES, Graph | High sample fidelity, sharp property distributions | 0.05 - 0.12 (on DRD2 activity) |

| Conditional Diffusion Models | Guidance via classifier or classifier-free guidance | 3D Coordinates, Graph | State-of-the-art sample quality, excellent for 3D | 0.03 - 0.08 (on binding affinity) |

| Conditional Transformer (CT) | Condition tokens prepended to sequence | SMILES, SELFIES | Captures long-range dependencies, transfer learning | 0.08 - 0.14 (on LogP, QED) |

Experimental Protocol: Conditional Scaffold Hopping

This protocol outlines a standard workflow using a Conditional Graph VAE.

Step 1: Data Preparation and Conditioning

- Source: ChEMBL or proprietary HTS data.

- Processing: For each active molecule, identify the Bemis-Murcko scaffold. Define the condition as a fingerprint (ECFP4) of this reference scaffold or a one-hot encoded vector of scaffold class.

- Target Representation: Encode the full molecule (including side chains) as a graph with atom features (atomic number, hybridization) and bond features (type, conjugation).

Step 2: Model Training

- Architecture: Use a Graph Neural Network (GNN) encoder. Concatenate the latent vector `z` with the condition vector `c`. The decoder is a graph generator that uses `[z|c]` to reconstruct the molecular graph.

- Loss Function: `L = L_recon + β * L_KL`, where `L_recon` is the graph reconstruction loss and `L_KL` is the Kullback-Leibler divergence penalty.

- Hyperparameters: β annealed from 0 to 0.01 over epochs, learning rate = 1e-3, batch size = 128.

Step 3: Generation and Validation

- Sampling: Input a novel scaffold fingerprint as condition `c` and sample `z` from a prior distribution. The decoder generates novel decorated scaffolds.

- Validation: Pass generated molecules through a pre-trained predictive model (e.g., for binding affinity) and filter for those maintaining predicted activity. Validate top candidates via in silico docking (e.g., Glide, AutoDock Vina).

Diagram 1: Conditional Scaffold Hopping Workflow

Experimental Protocol: Multi-Objective Optimization

This protocol uses a Conditional Transformer with Reinforcement Learning (RL) fine-tuning.

Step 1: Pre-training

- Model: Transformer decoder-only architecture.

- Task: Train on large-scale molecular corpus (e.g., ZINC) to predict the next token in a SELFIES string.

- Conditioning: Prepend property control tokens (e.g., `[LogP>5][QED>0.6]`) to the SELFIES sequence.
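One way to build such conditioned training sequences is sketched below. The two positive tokens come from the example above; the complementary tokens (`[LogP<=5]`, `[QED<=0.6]`), the thresholds as function defaults, and the function names are assumptions made for this illustration. SELFIES strings are tokenized by their bracketed symbols.

```python
import re

TOKEN_RE = re.compile(r"\[[^\]]+\]")  # one match per bracketed SELFIES symbol

def property_tokens(logp, qed, logp_cut=5.0, qed_cut=0.6):
    """Map measured or predicted properties to control tokens (token names are illustrative)."""
    logp_tok = "[LogP>5]" if logp > logp_cut else "[LogP<=5]"
    qed_tok = "[QED>0.6]" if qed > qed_cut else "[QED<=0.6]"
    return [logp_tok, qed_tok]

def build_training_sequence(selfies_string, logp, qed):
    """Prepend the control tokens to the tokenized SELFIES string."""
    return property_tokens(logp, qed) + TOKEN_RE.findall(selfies_string)
```

At generation time the desired control tokens are supplied as the prompt and the model continues the sequence from there.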

Step 2: Reinforcement Learning Fine-tuning

- Agent: The pre-trained Conditional Transformer.

- Environment: Property prediction models (e.g., for Synthesizability (SA), Lipinski rules).

- Reward: `R = w1 * P(activity) + w2 * SA_score + w3 * step_penalty`. Weights (w) are tuned for the campaign.

- Algorithm: Proximal Policy Optimization (PPO) or REINFORCE with baseline. The policy gradient updates the model to maximize expected reward for a given condition.

Step 3: Pareto-Optimal Selection

- Process: Generate a large library (~10,000 molecules) across a grid of condition values.

- Analysis: Apply Pareto front analysis to identify molecules optimally balancing multiple properties (e.g., potency vs. solubility).
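The Pareto front analysis can be illustrated with a small non-dominated filter. This sketch assumes every objective has been oriented so that larger is better (for example, predicted potency and predicted solubility), and the quadratic scan is only suitable for libraries of modest size.

```python
def pareto_front(candidates):
    """candidates: list of (name, objectives); returns names not dominated by any other."""
    def dominates(a, b):
        # a dominates b if it is at least as good everywhere and strictly better somewhere
        return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

    return [
        name
        for name, obj in candidates
        if not any(dominates(other, obj) for _, other in candidates)
    ]
```

Molecules on the returned front represent different trade-offs; choosing among them remains a project-level decision rather than something the filter resolves.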

Diagram 2: RL Fine-Tuning for Molecular Optimization

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Experiment | Example / Specification |

|---|---|---|

| Chemical Databases | Source of training data and baseline compounds. | ChEMBL, ZINC20, PubChem, proprietary corporate databases. |

| Molecular Representation Library | Converts molecules to model inputs. | RDKit (for SMILES/Graph), SELFIES (for robust generation), Deep Graph Library (DGL). |

| Deep Learning Framework | Infrastructure for building and training models. | PyTorch or TensorFlow, with extensions like PyTorch Geometric for graphs. |

| Conditional Generative Model Code | Core algorithm for scaffold hopping/optimization. | Open-source implementations (e.g., MolGym, PyMolDG), or custom CVAE/cGAN scripts. |

| Property Prediction Suite | Provides reward signals and validation metrics. | Pre-trained models for QED, SA, LogP, pChEMBL values, or in-house ADMET predictors. |

| In Silico Validation Suite | Filters and prioritizes generated molecules. | Docking software (AutoDock Vina, Glide), molecular dynamics (GROMACS, Desmond). |

| High-Performance Computing (HPC) | Provides necessary compute for training and sampling. | GPU clusters (NVIDIA V100/A100), cloud compute (AWS, GCP). |

Quantitative Benchmarking

Performance benchmarks on public datasets are critical for model comparison.

| Benchmark Dataset | Task | Best Model (Current) | Key Metric | Reported Value |

|---|---|---|---|---|

| Guacamol | Goal-directed generation | Conditional Diffusion (Graph-based) | Hit Rate (Top 100) for Med. Chem. objectives | 0.89 - 0.97 |

| MOSES | Unconditional generation & filtering | Conditional Transformer (RL-tuned) | Valid, Unique, Novel (VUN) @ 10k samples | 0.92, 0.99, 0.79 |

| PBBM (PDBbind-based) | Scaffold hopping for binding | 3D Conditional VAE | Success Rate (ΔpKi < 1 log unit) | 41% |

| LEADS (Proprietary-like) | Multi-parameter optimization | Pareto-conditioned GAN | Pareto Front Density (Mols per μ-point) | 3.8 |

This whitepaper details the methodology of Property-Guided Design (PGD), a paradigm that integrates predictive models and generative algorithms to design molecules with predefined Absorption, Distribution, Metabolism, Excretion, Toxicity (ADMET), and potency profiles. Positioned within the broader thesis on generative AI for molecular design, PGD represents a critical shift from mere generation of novel structures to the targeted creation of optimized drug candidates. It directly addresses the high attrition rates in drug development by frontloading key property optimization in the discovery phase.

Core Methodological Framework

PGD operates on a closed-loop cycle of prediction, generation, and validation. The core workflow integrates several computational and experimental modules.

Diagram Title: Property-Guided Design Closed-Loop Workflow

Key Experimental Protocols & Data

Protocol for Building a Multi-Task Deep Learning ADMET Predictor

Objective: To train a single neural network capable of predicting a suite of ADMET and potency endpoints from molecular structure.

- Data Curation: Gather standardized experimental data from public sources (e.g., ChEMBL, PubChem) and internal assays. Ensure consistent units and activity thresholds.

- Descriptor/Feature Generation: Represent molecules as either:

- Graph-based: Atom and bond features for a Graph Neural Network (GNN).

- Fingerprint-based: Extended-connectivity fingerprints (ECFP4).

- Descriptors: Calculated physicochemical and topological descriptors (e.g., using RDKit).

- Model Architecture: Implement a multi-task deep learning model with shared hidden layers and task-specific output heads. Dropout and batch normalization are used for regularization.

- Training: Use a combined loss function (e.g., weighted sum of binary cross-entropy for classification tasks, mean squared error for regression tasks). Employ k-fold cross-validation.

- Validation: Hold out a test set (20%). Evaluate using task-appropriate metrics: ROC-AUC, precision-recall for classification; R², RMSE for regression.
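The combined training loss mentioned above can be sketched for a single molecule as follows. Task names, weights, and the convention that a missing label is `None` are assumptions of this example; in a real model the same logic would be applied to batched tensors inside the deep learning framework.

```python
import math

def bce(p, y, eps=1e-7):
    """Binary cross-entropy for one predicted probability p and label y in {0, 1}."""
    p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(y * math.log(p) + (1 - y) * math.log(1.0 - p))

def multitask_loss(preds, targets, task_types, weights):
    """Weighted sum of per-task losses; tasks without a label for this molecule are skipped."""
    total = 0.0
    for name, kind in task_types.items():
        y = targets.get(name)
        if y is None:
            continue
        if kind == "classification":
            per_task = bce(preds[name], y)
        else:  # regression
            per_task = (preds[name] - y) ** 2
        total += weights[name] * per_task
    return total
```

Skipping unlabeled tasks matters because public ADMET data are sparse: most molecules have measurements for only a few endpoints.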

Table 1: Performance of a Representative Multi-Task ADMET/Potency Predictor (Test Set Metrics)

| Property Endpoint | Type | Metric | Model Performance | Typical Target for Lead Optimization |

|---|---|---|---|---|

| hERG Inhibition | Classification (pIC50 > 5) | ROC-AUC | 0.88 | pIC50 < 5 (Low Risk) |

| CYP3A4 Inhibition | Classification (pIC50 > 6) | ROC-AUC | 0.82 | pIC50 < 6 |

| Human Liver Microsome Stability | Regression (% remaining) | R² | 0.75 | > 50% remaining |

| Caco-2 Permeability | Regression (Papp, 10⁻⁶ cm/s) | R² | 0.78 | > 10 |

| Kinase X pIC50 | Regression | RMSE | 0.45 log units | > 8.0 |

Protocol for a Reinforcement Learning (RL)-Based Molecular Generator

Objective: To fine-tune a generative model using property predictors as reward functions to bias generation towards the desired profile.

- Pre-training: A generative model (e.g., SMILES-based RNN or Graph-based GAN) is trained on a large corpus of drug-like molecules (e.g., ZINC) to learn chemical syntax and space.

- Reward Function Definition: Define a composite reward R(m) for a generated molecule m: `R(m) = w₁ * f_potency(m) + w₂ * f_solubility(m) - w₃ * f_toxicity(m)`, where the f terms are normalized outputs from the predictive models described in the preceding protocol.

- Policy Optimization: Use a policy gradient method (e.g., REINFORCE, PPO). The generator's likelihood of producing favorable molecules is iteratively increased by maximizing the expected reward.

- Sampling and Diversity: Incorporate techniques like experience replay and intrinsic diversity rewards to avoid mode collapse and generate a diverse set of optimized structures.

- Output: The RL-fine-tuned generator produces a focused virtual library enriched for the target profile.
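To make the policy-optimization step concrete, the toy sketch below applies a REINFORCE-style update with a moving-average baseline to a softmax policy over three hypothetical "molecule classes", each with a fixed reward standing in for R(m). It is a deliberately simplified, single-step stand-in for a sequence generator. The learning rate, step count, baseline momentum, and seed are arbitrary choices for illustration.

```python
import math
import random

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def reinforce(rewards, steps=4000, lr=0.05, seed=0):
    """Train a softmax policy over len(rewards) actions; returns final action probabilities."""
    rng = random.Random(seed)
    logits = [0.0] * len(rewards)
    baseline = 0.0  # moving average of observed rewards, used to reduce variance
    for _ in range(steps):
        probs = softmax(logits)
        action = rng.choices(range(len(rewards)), weights=probs)[0]
        advantage = rewards[action] - baseline
        for k in range(len(logits)):
            # gradient of log pi(action) with respect to logit k for a softmax policy
            grad_log_prob = (1.0 if k == action else 0.0) - probs[k]
            logits[k] += lr * advantage * grad_log_prob
        baseline = 0.9 * baseline + 0.1 * rewards[action]
    return softmax(logits)
```

Calling `reinforce([0.1, 0.9, 0.3])` shifts probability mass toward the highest-reward class; the same collapse onto a single favored mode is why the diversity techniques in the protocol are needed for real generators.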

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Experimental Assays for Validating Property-Guided Designs

| Reagent/Kit/Platform | Provider Examples | Function in PGD Validation |

|---|---|---|

| hERG Inhibition Assay Kit | Eurofins, ChanTest | Measures compound blockade of the hERG potassium channel, a key predictor of cardiac toxicity (TdP). |

| P450-Glo CYP450 Assays | Promega | Luminescent assays to quantify inhibition of major cytochrome P450 enzymes (CYP3A4, 2D6, etc.), predicting drug-drug interaction risk. |

| Human Liver Microsomes (HLM) | Corning, Xenotech | Used in metabolic stability assays to measure intrinsic clearance, informing hepatic first-pass effect and half-life. |

| Caco-2 Cell Line | ATCC | Model of human intestinal epithelium for predicting oral absorption and permeability (Papp). |

| Phospholipidosis Prediction Kit | Cayman Chemical | High-content imaging assay to detect phospholipid accumulation, a marker of lysosomal toxicity. |

| Thermofluor (TSA) Stability Assay | Malvern Panalytical | Biophysical assay to measure target protein thermal shift upon ligand binding, confirming target engagement and potency. |

| AlphaScreen/AlphaLISA Assay Kits | Revvity | Bead-based proximity assays for high-sensitivity measurement of biochemical potency (e.g., kinase activity, protein-protein interaction inhibition). |

Pathway and Decision Logic Visualization

Integrated Property Optimization Decision Logic

Diagram Title: Key ADMET/Potency Decision Cascade for Lead Advancement

Property-Guided Design represents the maturation of generative AI in molecular discovery. By embedding predictive ADMET and potency models directly into the generative process, it enables the direct exploration of chemical space regions that satisfy complex, multi-parameter optimization goals. This paradigm, situated within the broader generative AI thesis, shifts the focus from quantity of molecules to quality by design, offering a robust computational framework to increase the probability of clinical success and streamline the early drug discovery pipeline.

Within the broader thesis on the Overview of generative AI models for molecular design research, goal-oriented generation represents a paradigm shift from passive exploration to directed invention. While generative models like VAEs and GANs can produce novel molecular structures, Reinforcement Learning (RL) provides a framework for steering the generation process toward molecules with optimized properties. This technical guide details the core methodologies, experimental protocols, and practical toolkit for implementing RL in molecular design.

Core RL Frameworks and Architectures

RL formulates molecular generation as a sequential decision-making process. An agent (generator) interacts with an environment (molecular simulation or predictive model) by taking actions (adding atoms or bonds) to build a molecule, receiving rewards based on the properties of the final structure.

Key Frameworks:

- Policy Gradient Methods (e.g., REINFORCE): Directly optimize the policy (generation model) to maximize the expected reward. Commonly used with RNN or Graph Neural Network (GNN)-based generators.

- Deep Q-Networks (DQN): Learn a Q-function to estimate the value of actions in a given state (partial molecular graph). More suitable for discrete, graph-based actions.

- Actor-Critic Methods: Combine a policy (actor) and a value function (critic) for more stable training. Proximal Policy Optimization (PPO) is a prevalent algorithm.

- Model-Based RL: Incorporate an internal predictive model of the environment (e.g., a fast property predictor) to reduce costly external evaluations.

Quantitative Comparison of RL Frameworks:

Table 1: Comparison of Key RL Frameworks for Molecular Design

| Framework | Generator Architecture | Typical Action Space | Training Stability | Sample Efficiency | Common Reward Metrics |

|---|---|---|---|---|---|

| Policy Gradient (REINFORCE) | RNN, SMILES-based | Discrete (Characters) | Moderate | Low | QED, SA, LogP, Target Activity |

| Deep Q-Network (DQN) | GNN, Graph-based | Discrete (Atom/Bond types) | Low | Moderate | Docking Score, Synthetic Accessibility |

| Actor-Critic (PPO) | GNN, Transformer | Discrete/Graph | High | Moderate-High | Multi-objective (e.g., Activity + Solubility) |

| Model-Based RL | Any (with separate world model) | Varies | High | High | Predicted binding affinity, ADMET |

Detailed Experimental Protocol

Below is a generalized yet detailed protocol for conducting an RL-based molecular generation experiment targeting a specific protein.

Protocol: Goal-Oriented Molecular Generation with an Actor-Critic Agent

Objective: To generate novel molecules with high predicted binding affinity for a target protein and desirable pharmacokinetic properties.

Materials: See "The Scientist's Toolkit" section.

Procedure:

Environment Setup:

- Define the state space (S) as the current partial or complete molecular graph.

- Define the action space (A). For graph-based generation: {Add atom of type X, Add bond of type Y, Terminate}.

- Initialize the reward function (R). A common multi-objective reward is `R(m) = w1 * pIC50_pred(m) + w2 * QED(m) - w3 * SA_Score(m)`, where `pIC50_pred` is from a docking simulation or a pre-trained predictor, QED is the Quantitative Estimate of Drug-likeness, and SA_Score is the Synthetic Accessibility score. Weights (w) balance objectives.
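Because the three terms live on different scales (QED lies in 0 to 1, the SA score in 1 to 10, and pIC50 typically spans several log units), a practical reward usually rescales them before weighting. The sketch below shows one such scheme; the assumed pIC50 range of 4 to 10 and the weights are illustrative choices, not values from the protocol.

```python
def clip01(x):
    return max(0.0, min(1.0, x))

def reward(pic50_pred, qed, sa_score, w=(0.6, 0.3, 0.1)):
    """R(m) = w1 * potency + w2 * QED - w3 * SA penalty, each term rescaled to 0..1."""
    potency = clip01((pic50_pred - 4.0) / (10.0 - 4.0))  # assumed useful pIC50 range: 4..10
    sa_penalty = clip01((sa_score - 1.0) / 9.0)          # SA score: 1 (easy) to 10 (hard)
    return w[0] * potency + w[1] * qed - w[2] * sa_penalty
```

Without such rescaling the pIC50 term would dominate the sum simply because its numeric range is larger.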

Agent and Model Initialization:

- Initialize the Actor Network (Policy π): A Graph Neural Network that takes the current graph state and outputs a probability distribution over possible actions.

- Initialize the Critic Network (Value V): A GNN that estimates the expected cumulative reward from the current state.

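- Initialize a Replay Buffer (D) to store state-action-reward-next_state trajectories for training. Because PPO is an on-policy algorithm, D should hold only trajectories collected under the current policy and be cleared after each update.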

Rollout Phase (Data Collection):

- For N epochs:

  - Reset the environment to an initial state (e.g., a single atom or empty graph).

  - Until a "Terminate" action is selected or a maximum step limit is reached:

    - The Actor network observes the current state s_t.

    - It selects an action a_t (e.g., "Add a carbon atom").

    - The environment executes the action, yielding a new state s_{t+1}.

    - Upon termination, the final molecule m is evaluated to compute the reward r_t.

    - Store the trajectory (s_t, a_t, r_t, s_{t+1}) in the replay buffer D.
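The rollout phase can be sketched with a toy environment. Everything here is a simplifying assumption for illustration: the state is just a tuple of atom symbols rather than a molecular graph, the `random_policy` stands in for the Actor network, and `toy_reward` stands in for R(m). The sketch is meant only to show the control flow and the sparse, end-of-episode reward.

```python
import random
from typing import Callable, List, Tuple

State = Tuple[str, ...]                    # toy state: atoms added so far
Transition = Tuple[State, str, float, State]
ACTIONS = ["add_C", "add_N", "add_O", "terminate"]


def step(state: State, action: str) -> Tuple[State, bool]:
    """Apply an action; return the next state and whether the episode ended."""
    if action == "terminate":
        return state, True
    return state + (action.split("_")[1],), False


def rollout(policy: Callable[[State], str],
            reward_fn: Callable[[State], float],
            max_steps: int = 10) -> List[Transition]:
    """Collect one episode of (s_t, a_t, r_t, s_{t+1}) transitions."""
    state: State = ()
    transitions: List[Transition] = []
    for t in range(max_steps):
        action = policy(state)
        next_state, done = step(state, action)
        done = done or t == max_steps - 1   # enforce the step limit
        # Sparse reward: non-zero only once the molecule is complete.
        reward = reward_fn(next_state) if done else 0.0
        transitions.append((state, action, reward, next_state))
        state = next_state
        if done:
            break
    return transitions


rng = random.Random(0)
random_policy = lambda s: rng.choice(ACTIONS)   # stand-in for the Actor network
toy_reward = lambda s: float(s.count("N"))      # stand-in for R(m)

buffer: List[Transition] = []                   # the buffer D
for _ in range(5):                              # N = 5 epochs
    buffer.extend(rollout(random_policy, toy_reward))
```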

Learning Phase (Parameter Update):

- Sample a batch of trajectories from D.

- Update Critic: Minimize the loss between the Critic's predicted value V(s_t) and the observed discounted return.

- Update Actor: Use the PPO objective to update the Actor's parameters, maximizing the probability of actions that led to high "advantage" (observed return - baseline value from Critic). This includes clipping to ensure stable updates.

- Periodically update a target network for the Critic to further stabilize training.
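The quantities used in the learning phase can be written out in plain Python. This is a minimal numerical sketch, assuming a discount factor `gamma` and a clipping range `eps` of 0.2 (a commonly used value, not one stated in this protocol). A real implementation would compute the same expressions on tensors and backpropagate through the networks.

```python
import math
from typing import List


def discounted_returns(rewards: List[float], gamma: float = 0.99) -> List[float]:
    """G_t = r_t + gamma * G_{t+1}, computed backwards over one episode."""
    returns: List[float] = []
    running = 0.0
    for r in reversed(rewards):
        running = r + gamma * running
        returns.append(running)
    return returns[::-1]


def critic_loss(values: List[float], returns: List[float]) -> float:
    """Mean squared error between V(s_t) and the observed discounted return."""
    return sum((v - g) ** 2 for v, g in zip(values, returns)) / len(values)


def ppo_clip_objective(new_logp: List[float], old_logp: List[float],
                       advantages: List[float], eps: float = 0.2) -> float:
    """Mean of min(ratio * A, clip(ratio, 1 - eps, 1 + eps) * A); maximized."""
    total = 0.0
    for lp_new, lp_old, adv in zip(new_logp, old_logp, advantages):
        ratio = math.exp(lp_new - lp_old)            # pi_new(a|s) / pi_old(a|s)
        clipped = max(1.0 - eps, min(1.0 + eps, ratio))
        total += min(ratio * adv, clipped * adv)
    return total / len(advantages)


# One toy episode with a sparse terminal reward.
rewards = [0.0, 0.0, 1.0]
values = [0.5, 0.6, 0.7]                             # Critic estimates V(s_t)
returns = discounted_returns(rewards, gamma=0.9)
advantages = [g - v for g, v in zip(returns, values)]  # return minus baseline
```

The `min` with the clipped term is what limits how far a single update can move the policy: once the probability ratio leaves the range [1 - eps, 1 + eps] in the direction favored by the advantage, the objective stops rewarding further movement.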

Validation and Iteration:

- Every K epochs, freeze the policy and generate a set of candidate molecules.

- Evaluate candidates using more rigorous (and computationally expensive) methods, such as molecular dynamics simulations or in vitro assays.

- Optionally, use these high-fidelity results to fine-tune the reward predictor (an active learning loop).

- Iterate the rollout, learning, and validation phases until convergence or a satisfactory set of molecules is obtained.

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for RL-Driven Molecular Design

| Item / Tool | Category | Function / Purpose |
|---|---|---|
| RDKit | Cheminformatics Library | Core library for molecule manipulation, descriptor calculation, and visualization. Essential for building the environment. |
| OpenAI Gym / ChemGym | Environment Framework | Provides a standardized API for defining custom RL environments for chemistry. |
| PyTorch / TensorFlow | Deep Learning Framework | For building and training the Actor and Critic neural networks (GNNs, Transformers). |
| DeepChem | ML for Chemistry Library | Offers pre-trained models for property prediction (reward computation) and molecular featurization. |
| AutoDock Vina / Schrödinger Suite | Molecular Docking | Provides high-fidelity binding affinity estimates for reward calculation or final validation. |
| ZINC / ChEMBL | Chemical Database | Source of initial training data for pre-training the generator or training proxy prediction models. |
| PPO Implementation (e.g., Stable-Baselines3) | RL Algorithm Library | Provides robust, optimized implementations of core RL algorithms like PPO. |
| Synthetic Accessibility (SA) Score Predictor | Reward Component | Penalizes chemically complex or hard-to-synthesize structures during generation. |
| Molecular Dynamics Software (e.g., GROMACS) | Validation Tool | For advanced in silico validation of top-generated candidates beyond simple docking. |

Current Challenges and Future Directions

Despite its promise, RL for molecular design faces significant hurdles: reward sparsity (the reward is given only at the end of generation), high-dimensional action spaces, and the bottleneck of accurate reward evaluation (e.g., slow physics-based simulations). Future research is focused on hybrid models (e.g., pre-trained generative models fine-tuned with RL), more efficient exploration strategies, and the integration of human-in-the-loop feedback for iterative, multi-parameter optimization. This positions RL not as a replacement for other generative models, but as a powerful, goal-directed complement within the generative AI ecosystem for molecular invention.

Within the broader overview of generative AI models for molecular design research, this whitepaper examines the translational application of generative AI in three critical therapeutic areas. The progression from abstract model architectures to tangible pipeline assets is demonstrated through specific case studies in oncology, central nervous system (CNS) disorders, and infectious diseases. These case studies highlight the shift from target-centric optimization to generative, multi-parameter optimization in drug discovery.

Oncology: Generating Novel KRAS G12C Inhibitors

Experimental Protocol

A published methodology from Insilico Medicine and other groups involves a multi-step generative process:

- Data Curation: A dataset of known kinase and GTPase inhibitors, including published KRAS G12C binders, was assembled from ChEMBL and proprietary sources. Molecular structures were standardized and annotated with biochemical activity (IC50/Kd).

- Initial Generation: A conditional generative adversarial network (cGAN) was trained to produce novel molecular structures conditioned on desired properties (e.g., scaffold similarity to a known binder, predicted pKi > 8.0).

- Optimization Cycle: Generated molecules were passed through a recurrent neural network (RNN)-based generator for scaffold decoration and optimization. Properties were predicted via a convolutional neural network (CNN) quantitative structure-activity relationship (QSAR) model.

- Synthesis & Validation: Top-ranking virtual molecules (n ≈ 200) were assessed for synthetic accessibility. A shortlist (n = 7) was synthesized and tested in biochemical assays for KRAS G12C inhibition and in cellular assays for antiproliferative activity in NCI-H358 cells.

Quantitative Outcomes

Table 1: Key Results from Generative AI-Driven KRAS G12C Program

| Metric | Pre-Generative AI Benchmark (Sotorasib analogue) | Generative AI Lead Candidate (INS018_055) |
|---|---|---|
| Biochemical IC50 (KRAS G12C) | 12 nM | 8.4 nM |
| Cellular IC50 (NCI-H358) | 48 nM | 36 nM |
| Selectivity Index (vs. WT KRAS) | 95-fold | >200-fold |
| Predicted LogP | 4.1 | 3.2 |
| Synthetic Steps (estimated) | 9 | 6 |
| In vivo Efficacy (Tumor Growth Inhibition) | 67% at 50 mg/kg | 78% at 50 mg/kg |
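Potency thresholds in generative workflows are usually stated on a negative log scale, while assay results such as those in the table are reported in nM. The conversion is simple arithmetic, sketched below using the two biochemical IC50 values from the table. The function name is an illustrative choice, and pIC50 and pKi are related but distinct quantities, so these values are not directly comparable to a pKi threshold.

```python
import math


def pic50_from_nm(ic50_nm: float) -> float:
    """pIC50 = -log10(IC50 in mol/L); since 1 nM = 1e-9 mol/L this is 9 - log10(nM)."""
    return 9.0 - math.log10(ic50_nm)


for label, ic50_nm in [("benchmark", 12.0), ("generated lead", 8.4)]:
    print(f"{label}: IC50 = {ic50_nm} nM -> pIC50 = {pic50_from_nm(ic50_nm):.2f}")
```

On this scale the two compounds come out at roughly 7.92 and 8.08, a difference of about 0.15 log units.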

Diagram 1: Generative AI workflow for KRAS inhibitor design.

CNS: Designing Blood-Brain Barrier Permeant mGluR5 Negative Allosteric Modulators

Experimental Protocol

A study by BenevolentAI detailed a protocol for CNS-targeted generation:

- BBB Permeability Model Training: A graph neural network (GNN) classifier was trained on a curated dataset of ~8,000 molecules with reliable in vivo logBB (brain/blood concentration ratio) data.

- Target-Specific Generation: A variational autoencoder (VAE) was trained on known mGluR5 modulators. The latent space was sampled using a Bayesian optimization loop, guided by the combined objective of predicted mGluR5 activity (pIC50 > 7.0) and predicted logBB > -0.3.

- In Silico Safety Profiling: Generated molecules were screened in silico against a panel of 44 CNS off-target models (e.g., hERG, 5-HT2B) to de-risk early.

- In Vitro Validation: Selected compounds were assessed in mGluR5 calcium mobilization assays and parallel artificial membrane permeability assay (PAMPA-BBB). Select hits were progressed to in vivo pharmacokinetics (PK) studies in rodents to measure actual brain penetration.
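The combined objective and the off-target screen in this protocol can be sketched as follows. The two thresholds (pIC50 > 7.0 and logBB > -0.3) come from the protocol above. The `Prediction` container, the way the two criteria are folded into one score, and the off-target cutoff of 5.0 are assumptions made for illustration, since the protocol does not specify how the objectives were combined.

```python
from dataclasses import dataclass, field
from typing import Dict

MIN_PIC50 = 7.0           # predicted mGluR5 activity threshold (from the protocol)
MIN_LOGBB = -0.3          # predicted logBB threshold (from the protocol)
MAX_OFF_TARGET_PIC50 = 5.0  # hypothetical cutoff for off-target liability


@dataclass
class Prediction:
    pic50: float                                  # predicted mGluR5 pIC50
    logbb: float                                  # predicted logBB
    off_target_pic50: Dict[str, float] = field(default_factory=dict)


def combined_objective(p: Prediction) -> float:
    """Hypothetical scalarization: the smaller margin over the two thresholds.

    The score is positive only when both criteria are exceeded, so an
    optimizer cannot trade brain penetration away for potency (or vice versa).
    """
    return min(p.pic50 - MIN_PIC50, p.logbb - MIN_LOGBB)


def passes_safety_panel(p: Prediction) -> bool:
    """True when every predicted off-target pIC50 is below the cutoff."""
    return all(v < MAX_OFF_TARGET_PIC50 for v in p.off_target_pic50.values())


candidate = Prediction(pic50=7.5, logbb=0.1,
                       off_target_pic50={"hERG": 4.8, "5-HT2B": 4.2})
keep = combined_objective(candidate) > 0 and passes_safety_panel(candidate)
```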

Quantitative Outcomes

Table 2: Generative AI-Derived mGluR5 NAM Properties vs. Traditional Lead

| Parameter | Traditional Lead (Baseline) | Generative AI Candidate (BAI-110) |
|---|---|---|
| mGluR5 Ca2+ Flux IC50 | 15.2 nM | 9.8 nM |
| PAMPA-BBB Pe (10^-6 cm/s) | 2.1 | 8.7 |
| In Vivo LogBB (Rat) | -0.9 | 0.15 |
| In Silico hERG pIC50 | 6.2 (risk) | <5.0 (low risk) |
| Ligand Efficiency (LE) | 0.32 | 0.41 |
| Fraction of Sp3 Carbons (Fsp3) | 0.25 | 0.48 |
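Because logBB is the base-10 logarithm of the brain/blood concentration ratio, the in vivo values in the table are easier to interpret once converted back to a plain ratio. The short sketch below does that conversion for the two reported values. The function name is an illustrative choice.

```python
def brain_blood_ratio(logbb: float) -> float:
    """Invert logBB = log10(C_brain / C_blood) to recover the concentration ratio."""
    return 10.0 ** logbb


baseline = brain_blood_ratio(-0.9)    # traditional lead
candidate = brain_blood_ratio(0.15)   # generative AI candidate
fold_change = candidate / baseline

print(f"baseline ratio:  {baseline:.2f}")
print(f"candidate ratio: {candidate:.2f}")
print(f"fold change:     {fold_change:.1f}")
```

A logBB of -0.9 corresponds to a brain concentration of roughly 13% of the blood concentration, while 0.15 corresponds to a ratio of about 1.4, so the difference of 1.05 log units is an approximately 11-fold change in relative brain exposure.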

Diagram 2: CNS drug design workflow with integrated BBB prediction.

Infectious Disease: Accelerating Broad-Spectrum Antiviral Discovery

Experimental Protocol

An initiative by IBM Research and Mount Sinai for pan-coronavirus inhibitors used:

- Multi-Target Activity Representation: Activity data for compounds against SARS-CoV-2 3CLpro, MERS-CoV PLpro, and other viral proteases were encoded into a multi-task deep learning model.

- Generative Foundation Model: A chemical language model (CLM), pre-trained on 10+ million molecules from ZINC, was fine-tuned on the multi-target activity data.

- Active Learning Loop: The fine-tuned CLM generated a focused library (~5,000 virtual molecules). Top predictions were tested in vitro. The resulting new activity data was fed back into the model in an iterative loop over 3 cycles.

- Experimental Validation: Compounds were tested in enzyme inhibition assays for multiple coronaviruses and in cytopathic effect (CPE) assays in Vero E6 cells infected with live virus.
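The generate, test, and feed-back cycle described in this protocol can be sketched with toy components. This is only a schematic of the loop structure: candidates are plain numbers rather than molecules, random sampling stands in for the fine-tuned chemical language model, a nearest-neighbor lookup stands in for the multi-task activity model, and `toy_assay` stands in for the in vitro measurement. All names and sizes are assumptions for illustration.

```python
import random
from typing import Callable, Dict


def nearest_neighbor_predict(x: float, measured: Dict[float, float]) -> float:
    """Toy surrogate: predict the activity of the closest measured candidate."""
    if not measured:
        return 0.0
    nearest = min(measured, key=lambda known: abs(known - x))
    return measured[nearest]


def active_learning_loop(assay: Callable[[float], float],
                         n_cycles: int = 3,
                         library_size: int = 50,
                         n_tested: int = 5,
                         seed: int = 0) -> Dict[float, float]:
    """Run generate -> rank -> test -> feed back for a fixed number of cycles."""
    rng = random.Random(seed)
    measured: Dict[float, float] = {}
    for _ in range(n_cycles):
        # Stand-in for the generator producing a focused virtual library.
        library = [rng.random() for _ in range(library_size)]
        # Rank the library with the current surrogate model.
        ranked = sorted(library,
                        key=lambda x: nearest_neighbor_predict(x, measured),
                        reverse=True)
        # "Test" the top predictions and feed the results back.
        for candidate in ranked[:n_tested]:
            measured[candidate] = assay(candidate)
    return measured


toy_assay = lambda x: 1.0 - abs(x - 0.7)   # hidden activity landscape
results = active_learning_loop(toy_assay)
best = max(results, key=results.get)
```

Each cycle spends only `n_tested` measurements, which reflects the practical constraint behind active learning: experimental capacity is far smaller than the size of the generated library, so the model's job is to decide where those measurements are spent.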

Quantitative Outcomes

Table 3: Performance of Generative AI-Derived Broad-Spectrum Antiviral Candidates

| Assay / Property | Candidate AI-234-1 (SARS-CoV-2 Focus) | Candidate AI-234-5 (Broad-Spectrum) |
|---|---|---|
| SARS-CoV-2 3CLpro IC50 | 11 nM | 28 nM |
| MERS-CoV PLpro IC50 | >10,000 nM | 52 nM |
| Human Cathepsin L IC50 | >5,000 nM | >5,000 nM |
| SARS-CoV-2 CPE (EC90) | 45 nM | 120 nM |
| MERS-CoV CPE (EC90) | N/A | 180 nM |
| Cytotoxicity (CC50) | >50 µM | >50 µM |
| Selectivity Index (SI) | >1,100 | >400 |
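The selectivity index values in the table can be reproduced from the other rows. The sketch below assumes SI is defined as CC50 divided by the SARS-CoV-2 CPE EC90. The table does not state the definition, but this choice is consistent with both reported values. Because CC50 is reported only as a lower bound (>50 µM), the resulting SI is also a lower bound.

```python
def selectivity_index(cc50_um: float, ec90_nm: float) -> float:
    """SI = CC50 / EC90, after converting CC50 from µM to nM."""
    return (cc50_um * 1000.0) / ec90_nm


# CC50 lower bound of 50 µM and SARS-CoV-2 CPE EC90 values from the table.
si_focused = selectivity_index(cc50_um=50.0, ec90_nm=45.0)    # AI-234-1
si_broad = selectivity_index(cc50_um=50.0, ec90_nm=120.0)     # AI-234-5

print(f"AI-234-1: SI > {si_focused:.0f}")
print(f"AI-234-5: SI > {si_broad:.0f}")
```

These lower bounds (about 1,111 and 417) match the ">1,100" and ">400" entries in the table.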

Diagram 3: Active learning loop for broad-spectrum antiviral generation.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Materials and Tools for Generative AI-Driven Molecular Design Experiments

| Item / Solution | Function in the Workflow | Example Vendor/Software |
|---|---|---|
| Curated Bioactivity Database | Provides high-quality structured data for model training and validation. | ChEMBL, GOSTAR, proprietary databases |
| Generative AI Software Platform | Core engine for molecular generation and optimization. | REINVENT, MolGPT, PyTorch/TensorFlow custom models |
| ADMET Prediction Suite | Predicts pharmacokinetic and toxicity properties in silico. | Schrödinger's QikProp, Simulations Plus ADMET Predictor, OpenADMET |
| Synthetic Accessibility Scorer | Estimates the feasibility of chemical synthesis for generated molecules. | RDKit (SA Score), SYBA, AiZynthFinder |
| High-Throughput Virtual Screening Suite | Enables rapid docking or pharmacophore screening of generated libraries. | OpenEye FRED, Cresset Flare, AutoDock Vina |
| Target-Specific Biochemical Assay Kit | Validates the predicted activity of generated compounds. | Reaction Biology, BPS Bioscience (enzyme kits), cell-based reporter assays |
| In Vivo PK/PD Study Services | Provides critical in vivo validation of brain penetration or efficacy. | Charles River, Pharmaron, WuXi AppTec |

These case studies demonstrate that generative AI is no longer a speculative technology but a functional engine within molecular design pipelines. In oncology, it enables rapid exploration around intractable targets like KRAS. In CNS, it directly engineers for complex multi-parameter success (potency + BBB penetration). In infectious disease, it accelerates the response to emerging threats by targeting conserved viral elements. The consistent theme is the integration of generative models with predictive tools and iterative experimental validation, creating a new paradigm for drug discovery that is faster, more guided, and more ambitious in its molecular objectives.

Overcoming Challenges in AI-Driven Molecular Design: Pitfalls and Best Practices

Addressing Mode Collapse and Lack of Diversity in Generated Libraries

Within the broader thesis on generative AI models for molecular design, a central technical challenge is the propensity of models to suffer from mode collapse—the generation of a limited set of similar molecular structures—and a consequent lack of diversity in the virtual libraries they produce. This undermines the primary goal of exploring a broad, novel chemical space for drug discovery. This guide details the technical roots of these issues and provides experimental protocols for their diagnosis and mitigation.

Quantitative Analysis of Diversity Metrics

The following table summarizes key quantitative metrics used to assess molecular diversity and detect mode collapse in generated libraries.

Table 1: Key Metrics for Assessing Generative Model Diversity & Mode Collapse

| Metric | Formula/Description | Ideal Range (Higher is better) | Threshold for Potential Collapse |

|---|---|---|---|

| Internal Diversity (IntDiv) | 1 - (Average pairwise Tanimoto similarity within generated set) | 0.7 - 0.9 (varies by target) | < 0.5 |