From SAR to AI: A Comprehensive Guide to Modern Molecular Optimization Algorithms

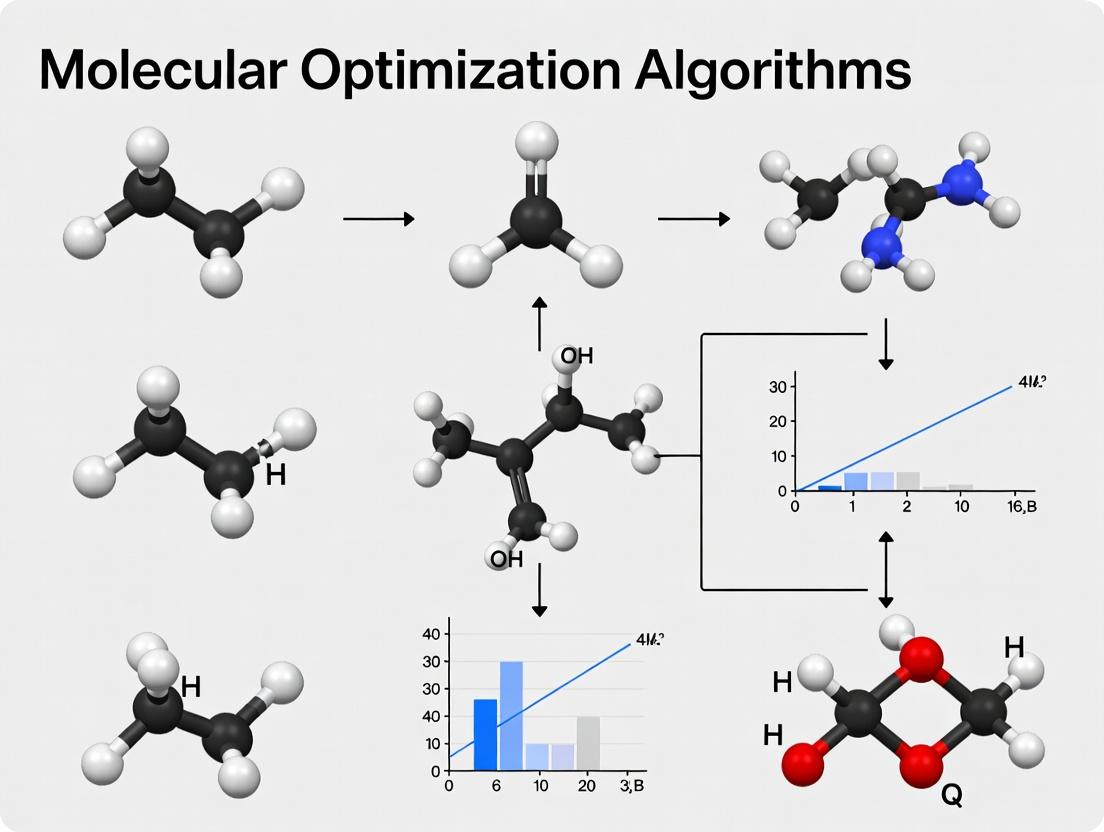

Abstract

This article provides a detailed overview of the algorithms driving modern molecular optimization, a critical process in drug discovery and materials science. We begin by establishing the foundational principles of the molecular optimization problem, including property prediction and chemical space navigation. We then delve into core methodological categories, from traditional Quantitative Structure-Activity Relationship (QSAR) models to cutting-edge deep generative and reinforcement learning techniques. The guide addresses common challenges in algorithm deployment, such as data scarcity and synthetic feasibility, offering practical troubleshooting and optimization strategies. Finally, we present a framework for validating and comparing these algorithms, examining key benchmarks, metrics, and real-world case studies. Designed for researchers and drug development professionals, this resource synthesizes current knowledge to inform the selection and implementation of effective optimization strategies for biomedical research.

What is Molecular Optimization? Defining the Problem and Chemical Space

Research on molecular optimization algorithms is driven by the need to navigate high-dimensional chemical spaces toward compounds that satisfy multiple, often competing, criteria. This whitepaper addresses the core challenge of the field: simultaneously optimizing a suite of molecular properties (potency, selectivity, solubility, metabolic stability, and low toxicity) to arrive at viable drug candidates. Traditional sequential optimization often fails because improving one property can degrade another. This guide details computational and experimental strategies for balancing these properties effectively.

Quantitative Landscape of Molecular Property Goals

Successful drug candidates must reside within a narrowly defined multi-property space. The following tables summarize typical target thresholds for small-molecule therapeutics drawn from the medicinal chemistry literature and common industry practice; exact cut-offs vary by target class, indication, and route of administration.

Table 1: Core Physicochemical & ADMET Property Targets

| Property | Optimal Range/Target | Critical Threshold (Typical) | Measurement Assay |
|---|---|---|---|
| Lipophilicity (cLogP/LogD) | 1-3 | <4 | Chromatographic (e.g., HPLC) |
| Molecular Weight (MW) | ≤500 Da | ≤600 Da | Calculated |
| Polar Surface Area (PSA) | 60-140 Ų | N/A | Calculated |
| Solubility (PBS, pH 7.4) | >100 µM | >10 µM | Kinetic or Thermodynamic Solubility |
| Metabolic Stability (HLM CLint) | <30 µL/min/mg | <50 µL/min/mg | Human Liver Microsome Incubation |
| hERG Inhibition (IC₅₀) | >10 µM | >1 µM | Patch-clamp or binding assay |
| CYP Inhibition (IC₅₀) | >10 µM (3A4, 2D6) | >1 µM | Fluorescent or LC-MS/MS probe assay |

Table 2: In Vitro Potency & Selectivity Targets

| Property | Ideal Target | Minimum Acceptable | Key Experimental Model |
|---|---|---|---|
| Primary Target Potency (IC₅₀/EC₅₀) | <100 nM | <1 µM | Cell-based or biochemical assay |
| Selectivity Index (vs. closest related off-target) | >100-fold | >30-fold | Counter-screening panel |
| Cytotoxicity (CC₅₀ in HEK293/HepG2) | >30 µM | >10 µM | Cell viability assay (e.g., MTT) |
| Plasma Protein Binding (%) | <95% (moderate) | N/A | Equilibrium dialysis or ultrafiltration |
| Passive Permeability (Papp, Caco-2/MDCK) | >5 × 10⁻⁶ cm/s | >1 × 10⁻⁶ cm/s | Cell monolayer assay |

Methodologies for Multi-Objective Optimization (MOO)

Computational Pareto Front Identification

Protocol: To identify compounds balancing potency (pIC₅₀) and solubility (LogS):

- Library Design: Generate a focused library of 10,000 analogs around a lead using enumerated structural modifications (e.g., R-group variations on core).

- Property Prediction: Calculate pIC₅₀ using a validated QSAR model and LogS using an empirical method such as the General Solubility Equation (GSE), which estimates LogS from logP and melting point.

- Pareto Analysis: Plot all compounds in a 2D space (pIC₅₀ vs. LogS) and identify the Pareto front: the set of non-dominated compounds, for which no other compound is at least as good on both properties and strictly better on one. Along the front, improving one property necessarily worsens the other.

- Selection: Prioritize compounds on the Pareto front for synthesis. Dominated compounds, especially those far from the front, are sub-optimal.
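The Pareto analysis step can be sketched as a dominance check. This is a minimal illustration, assuming both properties are to be maximized (higher pIC₅₀, higher LogS) and that predictions are already available as `(compound_id, pIC50, LogS)` tuples; the compound IDs and values are hypothetical. The pairwise check is O(n²), which is fine for illustration but slow in pure Python for a 10,000-member library, where a sort-based sweep would be preferable.

```python
from typing import List, Tuple


def pareto_front(points: List[Tuple[str, float, float]]) -> List[str]:
    """Return IDs of non-dominated compounds when both properties are maximized.

    points: (compound_id, pIC50, LogS) tuples; higher is better for both.
    A compound is dominated if another is >= on both properties and > on one.
    """
    front = []
    for cid, p, s in points:
        dominated = any(
            p2 >= p and s2 >= s and (p2 > p or s2 > s)
            for cid2, p2, s2 in points
            if cid2 != cid
        )
        if not dominated:
            front.append(cid)
    return front


# Hypothetical predicted values for five analogs.
library = [
    ("A1", 8.2, -5.5),
    ("A2", 7.5, -4.0),
    ("A3", 6.8, -3.1),
    ("A4", 7.0, -4.5),  # dominated by A2 (lower pIC50 and lower LogS)
    ("A5", 8.2, -6.0),  # dominated by A1 (same pIC50, lower LogS)
]
print(pareto_front(library))  # ['A1', 'A2', 'A3']
```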

Diagram Title: Pareto Front for Two Molecular Properties

Integrated Machine Learning-Guided Design Cycle

Protocol: A closed-loop iterative optimization.

- Initial Data: Start with 50-100 compounds with measured data for 3+ key properties.

- Model Training: Train multi-task deep neural networks or Bayesian models to predict all properties from molecular structure.

- Virtual Exploration: Use the models to score a large virtual library (e.g., 10⁶ compounds). Apply a scalarization function (e.g., weighted sum) or a multi-objective genetic algorithm (e.g., NSGA-II) to propose 50-100 new compounds predicted to improve the property balance.

- Synthesis & Testing: Synthesize and test the proposed compounds.

- Data Augmentation: Add the new data to the training set and retrain the models. Repeat the cycle from Model Training through Data Augmentation for 3-5 iterations.
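The weighted-sum scalarization mentioned in the Virtual Exploration step can be sketched as follows. This assumes model predictions are already available per compound; min-max normalization across the scored library, the property names, the weights, and the data are all illustrative choices, not a prescribed scheme. Minimized properties (here `CLint`) are flipped so that 1.0 always means "best".

```python
from typing import Dict, List


def min_max(values: List[float]) -> List[float]:
    """Scale values to [0, 1]; a constant column maps to 0.5."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.5] * len(values)
    return [(v - lo) / (hi - lo) for v in values]


def select_batch(preds: Dict[str, Dict[str, float]], weights: Dict[str, float],
                 maximize: Dict[str, bool], k: int) -> List[str]:
    """Rank compounds by a weighted sum of min-max-normalized predicted properties."""
    ids = list(preds)
    scores = {cid: 0.0 for cid in ids}
    for prop, w in weights.items():
        norm = min_max([preds[cid][prop] for cid in ids])
        for cid, v in zip(ids, norm):
            # Flip minimized properties so that 1.0 is always the best value.
            scores[cid] += w * (v if maximize[prop] else 1.0 - v)
    return sorted(ids, key=scores.get, reverse=True)[:k]


# Hypothetical model predictions for four virtual compounds.
preds = {
    "C1": {"pIC50": 8.0, "LogS": -5.0, "CLint": 40.0},
    "C2": {"pIC50": 7.0, "LogS": -3.0, "CLint": 10.0},
    "C3": {"pIC50": 6.0, "LogS": -4.0, "CLint": 20.0},
    "C4": {"pIC50": 7.5, "LogS": -6.0, "CLint": 60.0},
}
weights = {"pIC50": 0.5, "LogS": 0.3, "CLint": 0.2}
maximize = {"pIC50": True, "LogS": True, "CLint": False}
print(select_batch(preds, weights, maximize, k=2))  # ['C2', 'C1']
```

Because the scores are normalized against the current library, they are relative rankings; the chosen weights encode project priorities and strongly shape which compounds are proposed.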

Diagram Title: Closed-Loop Multi-Objective Optimization Cycle

Experimental Protocols for Key Parallel Assessments

High-Throughput Parallel Metabolic Stability & CYP Inhibition

Protocol:

- Stock Solutions: Prepare test compound (10 mM in DMSO).

- Metabolic Stability Incubation:

- Dilute compound to 1 µM in 0.1 M phosphate buffer (pH 7.4) containing 0.5 mg/mL human liver microsomes (HLM).

- Initiate reaction with 1 mM NADPH. Run in triplicate.

- Aliquot 50 µL at T=0, 5, 15, 30, 45, 60 min into 100 µL acetonitrile (containing internal standard) to stop reaction.

- Centrifuge, analyze supernatant via LC-MS/MS. Calculate intrinsic clearance (CLint).

- CYP Inhibition (Cocktail Assay):

- In separate wells, incubate HLM with compound (5 concentrations, 0.3-30 µM) and a cocktail of CYP-specific probe substrates (e.g., phenacetin for 1A2, bupropion for 2B6, amodiaquine for 2C8, diclofenac for 2C9, S-mephenytoin for 2C19, dextromethorphan for 2D6, testosterone for 3A4).

- Quantify metabolite formation by LC-MS/MS. Determine IC₅₀ for each CYP enzyme.
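The CLint calculation from the metabolic stability time course can be sketched with a substrate-depletion fit. This minimal version assumes first-order loss of parent compound, so ln(% remaining) falls linearly with time with slope −k; the function name and the synthetic data are illustrative. CLint in µL/min/mg follows from k divided by the microsomal protein concentration (0.5 mg/mL above), converted to µL.

```python
import math
from typing import Dict, List


def microsomal_clint(times_min: List[float], pct_remaining: List[float],
                     protein_mg_per_ml: float = 0.5) -> Dict[str, float]:
    """Substrate-depletion estimate of half-life and in vitro intrinsic clearance.

    Fits ln(% remaining) vs. time by ordinary least squares; slope = -k (1/min).
    CLint (uL/min/mg) = k / protein (mg/mL) * 1000 (uL/mL).
    """
    ys = [math.log(p) for p in pct_remaining]
    n = len(times_min)
    t_mean = sum(times_min) / n
    y_mean = sum(ys) / n
    sxx = sum((t - t_mean) ** 2 for t in times_min)
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times_min, ys))
    k = -sxy / sxx  # elimination rate constant, 1/min
    if k <= 0:
        # No measurable depletion over the incubation window.
        return {"k_per_min": k, "t_half_min": math.inf, "clint_uL_min_mg": 0.0}
    return {"k_per_min": k,
            "t_half_min": math.log(2) / k,
            "clint_uL_min_mg": k / protein_mg_per_ml * 1000.0}


# Synthetic time course for a compound with a 30-min half-life.
times = [0, 5, 15, 30, 45, 60]
k_true = math.log(2) / 30
remaining = [100 * math.exp(-k_true * t) for t in times]
result = microsomal_clint(times, remaining)
print(round(result["t_half_min"], 1), round(result["clint_uL_min_mg"], 1))  # 30.0 46.2
```

A compound with these values would sit between the optimal (<30) and critical (<50 µL/min/mg) thresholds in Table 1.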

Parallel Solubility-Permeability Assessment (PSP)

Protocol:

- High-Throughput Solubility (μSol):

- Dispense compound (as DMSO stock) into a 96-well plate. Evaporate DMSO under N₂.

- Add PBS buffer (pH 7.4) and shake for 24 h at 25°C.

- Filter through a 96-well filter plate (e.g., 0.45 µm hydrophilic PTFE).

- Quantify the concentration in the filtrate by UV spectroscopy (against a calibration curve) or by chemiluminescent nitrogen detection (CLND).

- Parallel Artificial Membrane Permeability Assay (PAMPA):

- Use a 96-well PAMPA plate system. Coat filter membranes with a lipid-oil-lipid trilayer (e.g., phosphatidylcholine in dodecane).

- Fill acceptor wells with PBS (pH 7.4). Add compound from solubility step to donor wells.

- Incubate for 4-16 hours. Quantify compound in both compartments by HPLC-UV.

- Calculate effective permeability (Pe).
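The Pe calculation can be sketched with a commonly used end-point PAMPA expression, Pe = −[V_D·V_A / ((V_D + V_A)·A·t)]·ln(1 − C_A/C_eq), where C_eq is estimated from the measured donor and acceptor concentrations. This is one standard form, assumed here rather than taken from the protocol above; vendor kits may apply membrane-retention or pH corrections. The volumes, filter area, and concentrations below are hypothetical.

```python
import math


def pampa_pe(c_donor: float, c_acceptor: float, v_donor_ml: float,
             v_acceptor_ml: float, area_cm2: float, time_s: float) -> float:
    """Effective permeability (cm/s) from end-point donor/acceptor concentrations.

    Pe = -[Vd*Va / ((Vd + Va) * A * t)] * ln(1 - Ca / Ceq),
    with Ceq = (Cd*Vd + Ca*Va) / (Vd + Va). Concentrations need only consistent
    units; volumes are in mL (= cm^3), area in cm^2, time in seconds.
    """
    c_eq = (c_donor * v_donor_ml + c_acceptor * v_acceptor_ml) / (v_donor_ml + v_acceptor_ml)
    ratio = c_acceptor / c_eq
    if not 0.0 <= ratio < 1.0:
        raise ValueError("acceptor concentration must be below the equilibrium value")
    factor = (v_donor_ml * v_acceptor_ml) / ((v_donor_ml + v_acceptor_ml) * area_cm2 * time_s)
    return -factor * math.log(1.0 - ratio)


# Hypothetical 16-h incubation: 0.3 mL donor, 0.2 mL acceptor, 0.3 cm^2 filter area.
pe = pampa_pe(c_donor=80.0, c_acceptor=10.0, v_donor_ml=0.3,
              v_acceptor_ml=0.2, area_cm2=0.3, time_s=16 * 3600)
print(f"{pe:.2e} cm/s")  # 1.48e-06 cm/s
```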

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Multi-Property Optimization

| Reagent/Material | Function in Optimization | Example Vendor/Product |
|---|---|---|
| Human Liver Microsomes (Pooled) | In vitro assessment of Phase I metabolic stability and CYP inhibition. | Corning Gentest, XenoTech |
| Caco-2 or MDCK-II Cells | Cell-based model for predicting intestinal permeability and efflux transport (P-gp). | ATCC, ECACC |
| Recombinant CYP Enzymes | Isoform-specific cytochrome P450 inhibition studies. | Sigma-Aldrich, Becton Dickinson |
| PAMPA Plate System | High-throughput, cell-free assessment of passive transcellular permeability. | pION, Corning |
| Phospholipid Vesicles (e.g., POPC) | For membrane binding assays and modeling cellular partition coefficients. | Avanti Polar Lipids |
| Cryopreserved Human Hepatocytes | Gold-standard for in vitro assessment of intrinsic clearance and metabolite ID. | BioIVT, Lonza |
| hERG-Expressing Cell Line | Essential for screening potassium channel blockade linked to cardiotoxicity. | ChanTest, Eurofins |
| 96-Well Equilibrium Dialysis Block | High-throughput measurement of plasma protein binding. | HTDialysis, Thermo Fisher |

This article serves as a foundational component of a broader survey of molecular optimization algorithms. The chemical space introduced below is the immense search space these algorithms must navigate to identify compounds with desired properties.

The Conceptual and Quantitative Dimensions of Chemical Space

Chemical space is the abstract, multidimensional domain encompassing all possible organic molecules. Its size is astronomically large, and estimates vary with the stability and synthesizability rules applied.

Table 1: Estimated Sizes of Chemical Space

| Description | Estimated Number of Molecules | Key Constraints | Source/Reference |
|---|---|---|---|
| Drug-like (Ro5) | ~10⁶⁰ | Rule of 5, MW ≤ 500 Da | Bohacek et al. (1996) |
| Enumerated (GDB-17) | ~1.7 × 10¹¹ (166.4 billion) | Up to 17 heavy atoms (C, N, O, S, halogens) | Ruddigkeit et al. (2012), GDB-17 |
| Small Organic Molecules | ~10⁸⁰ | Stable, synthesizable, ≤ 30 atoms | Kirkpatrick & Ellis (2004) |
| All Possible Organic | >10¹⁸⁰ | All plausible combinations of atoms | Theoretical maximum |

The dimensions of this space are defined by molecular descriptors, which can be:

- 1D: Molecular weight, molecular formula, atom and fragment counts.

- 2D: Structural fingerprints (ECFP), graph-based invariants, topological indices.

- 3D: Conformer energies, surface area, volume, quantum mechanical properties.

- 4D & Beyond: Incorporating protein-ligand interactions, dynamics, and ensemble representations.

Experimental Protocol: Mapping a Local Chemical Space via High-Throughput Screening (HTS)

A primary method for empirically exploring chemical space is HTS.

Objective: To experimentally determine the bioactivity of a defined library of compounds against a specific biological target.

Materials:

- Target protein (purified, recombinant).

- Chemical compound library (e.g., 100,000 diversity-oriented compounds).

- Microtiter plates (384- or 1536-well).

- Automated liquid handling robotics.

- Fluorescence/luminescence plate reader.

- Assay reagents (substrate, cofactors, detection dyes).

Procedure:

- Library Preparation: Dissolve compounds in DMSO to create 10 mM master stocks. Using an acoustic dispenser or pin tool, transfer nanoliter volumes to assay plates, creating a final test concentration (e.g., 10 µM).

- Assay Setup: Dilute the target protein in assay buffer and dispense into each well of the compound-containing assay plate. Incubate for 30-60 minutes to allow binding.

- Reaction Initiation: Add the fluorogenic or luminogenic substrate to initiate the enzymatic reaction. Because product formation generates the signal, inhibition lowers it: the stronger the inhibition, the weaker the signal.

- Signal Detection: Incubate for a defined period, then measure the signal intensity using a plate reader.

- Data Analysis: Normalize signals to the no-compound control (maximum signal, 0% inhibition) and the no-enzyme control (background signal, 100% inhibition), then calculate percent inhibition for each compound. Compounds exceeding a threshold (e.g., >70% inhibition) are designated "hits."
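The normalization and hit-calling step can be sketched as below. This assumes a signal-decrease readout in which the no-compound wells give the maximum signal (0% inhibition) and the no-enzyme wells give background (100% inhibition); the well IDs and raw counts are hypothetical.

```python
from statistics import mean
from typing import Dict, List


def percent_inhibition(signal: float, high_mean: float, low_mean: float) -> float:
    """0% at the no-compound (high) control, 100% at the no-enzyme (low) control."""
    return 100.0 * (high_mean - signal) / (high_mean - low_mean)


def call_hits(signals: Dict[str, float], high_ctrls: List[float],
              low_ctrls: List[float], threshold: float = 70.0) -> Dict[str, float]:
    """Return compounds whose percent inhibition exceeds the hit threshold."""
    hi, lo = mean(high_ctrls), mean(low_ctrls)
    hits = {}
    for cid, s in signals.items():
        inh = percent_inhibition(s, hi, lo)
        if inh > threshold:
            hits[cid] = inh
    return hits


# Hypothetical raw plate-reader counts for three test wells.
hits = call_hits({"cmpd1": 2000, "cmpd2": 6000, "cmpd3": 3000},
                 high_ctrls=[10000, 10200, 9800], low_ctrls=[500, 520, 480])
print({k: round(v, 1) for k, v in hits.items()})  # {'cmpd1': 84.2, 'cmpd3': 73.7}
```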

Title: HTS Experimental Workflow for Chemical Space Screening

Navigating Chemical Space: From Mapping to Optimization

Mapping reveals active regions; optimization algorithms guide efficient traversal. Key algorithmic families include:

- Similarity Search: Exploits the "neighborhood principle" using Tanimoto coefficients on ECFP4 fingerprints.

- De Novo Design: Generative models (VAEs, GANs, Transformers) propose novel structures within desired property bounds.

- Bayesian Optimization: Builds a probabilistic model of the structure-activity relationship to suggest the most informative compounds for synthesis.
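The similarity-search idea can be illustrated with the Tanimoto coefficient computed on sets of "on" fingerprint bits. In practice, ECFP4-like bit vectors are usually generated with a cheminformatics toolkit (in RDKit, as Morgan fingerprints of radius 2, compared with `DataStructs.TanimotoSimilarity`); to keep this sketch dependency-free, the fingerprints below are hypothetical hand-written bit sets.

```python
from typing import Dict, FrozenSet, List, Tuple


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto (Jaccard) coefficient on sets of 'on' fingerprint bits."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def nearest_neighbors(query: FrozenSet[int], library: Dict[str, FrozenSet[int]],
                      k: int = 2) -> List[Tuple[str, float]]:
    """Rank library members by similarity to the query and keep the top k."""
    scored = [(cid, tanimoto(query, fp)) for cid, fp in library.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Hypothetical bit sets standing in for ECFP4 fingerprints.
query = frozenset({1, 2, 3, 4, 5})
library = {"m1": frozenset({1, 2, 3, 4, 6}),
           "m2": frozenset({1, 2, 7, 8}),
           "m3": frozenset({1, 2, 3, 4, 5, 9})}
print([(cid, round(s, 2)) for cid, s in nearest_neighbors(query, library)])
# [('m3', 0.83), ('m1', 0.67)]
```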

Table 2: Comparison of Chemical Space Navigation Algorithms

| Algorithm Type | Core Principle | Typical Step | Advantage | Limitation |
|---|---|---|---|---|
| Similarity Search | Neighborhood Behavior | Identify nearest neighbors to a known hit. | Simple, interpretable, high success rate. | Limited exploration; scaffold hopping not guaranteed. |
| Genetic Algorithm | Evolutionary Selection | Crossover, mutation, fitness selection. | Good at scaffold hopping, explores diverse regions. | Can get stuck in local optima, requires many evaluations. |
| Bayesian Optimization | Surrogate Model & Acquisition | Select compound maximizing Expected Improvement. | Sample-efficient, balances exploration/exploitation. | Model-dependent, performance degrades in very high dimensions. |
| Deep Generative | Learn Distribution & Sample | Train model on known actives, sample from latent space. | Can design truly novel scaffolds, high throughput in silico. | Can generate unrealistic molecules, requires large training data. |
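The Expected Improvement acquisition named in the Bayesian Optimization row can be written in closed form when the surrogate returns a Gaussian predictive mean and standard deviation (as a Gaussian process does). This is a minimal sketch for maximization; the exploration margin `xi` and the candidate predictions are illustrative assumptions.

```python
import math
from typing import Dict, Tuple


def expected_improvement(mu: float, sigma: float, best: float, xi: float = 0.01) -> float:
    """EI for maximization under a Gaussian predictive distribution N(mu, sigma^2)."""
    improvement = mu - best - xi
    if sigma <= 0.0:
        return max(improvement, 0.0)
    z = improvement / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))       # standard normal CDF
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)  # standard normal PDF
    return improvement * cdf + sigma * pdf


# Hypothetical surrogate predictions (mean, std) of pIC50; best measured so far is 7.5.
candidates: Dict[str, Tuple[float, float]] = {
    "C1": (7.6, 0.1), "C2": (7.0, 0.8), "C3": (7.4, 0.05)}
ei = {cid: expected_improvement(m, s, best=7.5) for cid, (m, s) in candidates.items()}
print(sorted(ei, key=ei.get, reverse=True))  # ['C2', 'C1', 'C3']
```

Here the uncertain candidate C2 outranks C1 despite a lower predicted mean, which is how EI trades exploration against exploitation.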

Title: Algorithmic Strategies for Navigating Chemical Space

The Scientist's Toolkit: Research Reagent Solutions for Chemical Space Exploration

Table 3: Essential Materials and Reagents

| Item | Function/Application | Example Vendor/Product |
|---|---|---|
| Diversity-Oriented Synthesis (DOS) Libraries | Provides broad, scaffold-diverse coverage of chemical space for initial screening. | ChemDiv, Enamine REAL, WuXi AppTec |
| Focused/Targeted Libraries | Covers chemical space around known pharmacophores for specific target families (e.g., kinases, GPCRs). | Selleckchem, Tocris, MedChemExpress |
| DNA-Encoded Libraries (DELs) | Enables ultra-high-throughput (millions to billions) in vitro screening by tagging each molecule with a unique DNA barcode. | X-Chem, Vipergen, DyNAbind |
| Fragment Libraries | Covers chemical space with low-MW, high-ligand-efficiency compounds for identifying weakly binding starting points. | Zenobia, Astex, Charles River |
| Assay Kits (HTS-ready) | Validated biochemical kits for common target classes (kinases, proteases, epigenetic targets) to rapidly initiate screening. | Promega, Cisbio, PerkinElmer |
| Microtiter Plates (1536-well) | Standardized format for ultra-high-throughput screening to maximize throughput and minimize reagent use. | Greiner, Corning, Agilent |
| Automated Liquid Handlers | Robotics for precise, high-speed dispensing of compounds, reagents, and cells in nanoliter volumes. | Beckman Coulter (Biomek), Hamilton, Labcyte Echo |
| Chemical Descriptor & Modeling Software | Computes molecular fingerprints, descriptors, and models to quantify and visualize chemical space. | RDKit, OpenEye, Schrödinger |

This section details the four pillars of modern molecular optimization in drug discovery: Potency, Selectivity, ADMET, and Synthesizability. Within the broader survey of molecular optimization algorithms, these objectives represent the core multi-parameter optimization challenge that computational algorithms, from QSAR and molecular docking to generative AI and multi-objective reinforcement learning, are designed to address. The evolution of these algorithms is driven by the need to balance these often-competing objectives to produce viable clinical candidates.

The Core Optimization Objectives: Definitions and Metrics

Potency

Potency refers to the concentration or amount of a drug required to produce a given biological effect, typically reported as the IC50 (half-maximal inhibitory concentration) or Ki (inhibition constant); lower values indicate higher potency. High potency is desirable because it allows lower doses, which can reduce the risk of off-target effects.

Key Experimental Protocol: Determination of IC50 via Biochemical Assay

- Plate Setup: Serially dilute the test compound (e.g., 10 mM starting in DMSO, 1:3 dilutions, 8-12 points) in assay buffer. Include DMSO-only control wells (0% inhibition) and a control inhibitor well (100% inhibition).

- Reaction Initiation: In a 96-well plate, combine enzyme, cofactors, and diluted compound in buffer, then start the enzymatic reaction by adding the substrate.

- Incubation: Incubate at room temperature or 37°C for a predetermined time (e.g., 30-60 minutes) to allow product formation.

- Detection: Quench the reaction if necessary. Detect product using fluorescence, luminescence, or absorbance. For a kinase assay, ADP-Glo or a coupled enzyme system is common.

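- Data Analysis: Convert signal to percent inhibition, plot it against log[compound], and fit the data to a four-parameter logistic (4PL) curve:

$$Y = \mathrm{Bottom} + \frac{\mathrm{Top} - \mathrm{Bottom}}{1 + 10^{(\log \mathrm{IC}_{50} - X)\cdot \mathrm{HillSlope}}}$$

where X is the log10 of the compound concentration and Y is percent inhibition. The IC50 is the compound concentration at the curve's inflection point, where Y lies midway between Bottom and Top.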
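The 4PL fit can be sketched without external dependencies. Nonlinear least squares is normally done with a library routine (for example SciPy's `curve_fit`); this sketch instead grid-searches log IC50 and the Hill slope and, for each grid point, solves Bottom and Top in closed form, because the model is linear in those two parameters once the other two are fixed. The grid ranges and step size are assumptions, and the data are synthetic (IC50 = 100 nM, Hill slope 1) from a 10 µM, 1:3, 8-point series.

```python
import math
from typing import Dict, List, Optional, Tuple


def four_pl(x: float, bottom: float, top: float, log_ic50: float, hill: float) -> float:
    """4PL model as written above; x is log10 of the molar concentration."""
    return bottom + (top - bottom) / (1.0 + 10 ** ((log_ic50 - x) * hill))


def _best_bottom_top(xs: List[float], ys: List[float], log_ic50: float,
                     hill: float) -> Optional[Tuple[float, float, float]]:
    # With log_ic50 and hill fixed, Y = bottom * (1 - g) + top * g is linear in
    # (bottom, top), so least-squares values follow from 2x2 normal equations.
    g = [1.0 / (1.0 + 10 ** ((log_ic50 - x) * hill)) for x in xs]
    a11 = sum((1 - gi) ** 2 for gi in g)
    a12 = sum((1 - gi) * gi for gi in g)
    a22 = sum(gi * gi for gi in g)
    b1 = sum((1 - gi) * y for gi, y in zip(g, ys))
    b2 = sum(gi * y for gi, y in zip(g, ys))
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        return None
    bottom = (b1 * a22 - a12 * b2) / det
    top = (a11 * b2 - a12 * b1) / det
    sse = sum((bottom + (top - bottom) * gi - y) ** 2 for gi, y in zip(g, ys))
    return sse, bottom, top


def fit_4pl(xs: List[float], ys: List[float], step: float = 0.01) -> Dict[str, float]:
    """Least-squares 4PL fit: grid over log IC50 and Hill slope, closed-form Bottom/Top."""
    lo, hi = min(xs) - 1.0, max(xs) + 1.0
    hills = [0.5 + 0.1 * i for i in range(26)]  # Hill slopes 0.5 to 3.0
    best = None
    for i in range(int(round((hi - lo) / step)) + 1):
        log_ic50 = lo + i * step
        for h in hills:
            sol = _best_bottom_top(xs, ys, log_ic50, h)
            if sol is not None and (best is None or sol[0] < best[0]):
                best = (sol[0], sol[1], sol[2], log_ic50, h)
    if best is None:
        raise ValueError("no valid fit found")
    _, bottom, top, log_ic50, h = best
    return {"bottom": bottom, "top": top, "log_ic50": log_ic50, "hill": h,
            "ic50_nM": 10 ** log_ic50 * 1e9}


# Synthetic percent-inhibition data: 10 uM top concentration, 1:3 dilutions, 8 points.
conc_M = [10e-6 / 3 ** i for i in range(8)]
xs = [math.log10(c) for c in conc_M]
ys = [four_pl(x, 0.0, 100.0, -7.0, 1.0) for x in xs]
fit = fit_4pl(xs, ys)
print(round(fit["ic50_nM"], 1), round(fit["hill"], 2))
```

The grid resolution (0.01 log units) limits IC50 precision to about 2%; a gradient-based refinement from the best grid point would be the usual next step.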

Selectivity

Selectivity is the degree to which a compound acts on a given target relative to other targets. It is crucial for minimizing adverse effects. It is quantified using selectivity indices (e.g., IC50(off-target)/IC50(primary target)) or profiling against panels of related proteins (e.g., kinase panels).

Key Experimental Protocol: Selectivity Screening via Kinome-Wide Profiling

- Platform Selection: Utilize a commercial service or platform (e.g., DiscoverX KINOMEscan, Eurofins KinaseProfiler) offering >400 human kinases.

- Compound Submission: Provide test compound at a single high concentration (e.g., 10 µM) in DMSO.

- Competition Binding Assay (e.g., KINOMEscan): Each kinase is produced as a T7 phage fusion protein. The compound competes with an immobilized, active-site directed ligand. Binding is detected via quantitative PCR of phage DNA.

- Data Output: Results are reported as percent control (%Ctrl), where lower %Ctrl indicates stronger binding/displacement. A compound's binding to each kinase is quantified.

- Analysis: Calculate selectivity scores such as S(35) or S(10): the fraction of tested kinases with %Ctrl below 35 or 10 at the test concentration. Generate a kinome tree visualization.
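A selectivity score can be computed directly from a panel's %Ctrl readout. This sketch computes S(cutoff) as the fraction of tested kinases whose %Ctrl falls below the cutoff, the form in which such scores are commonly reported; vendor reports may restrict the denominator (for example, to non-mutant kinases). The ten-kinase panel is hypothetical.

```python
from typing import Dict


def s_score(pct_ctrl: Dict[str, float], cutoff: float) -> float:
    """Fraction of tested kinases whose %Ctrl falls below `cutoff` (e.g., 35 or 10)."""
    bound = sum(1 for v in pct_ctrl.values() if v < cutoff)
    return bound / len(pct_ctrl)


# Hypothetical %Ctrl values at 10 uM; lower %Ctrl means stronger binding.
panel = {"K1": 2, "K2": 8, "K3": 30, "K4": 50, "K5": 90,
         "K6": 100, "K7": 95, "K8": 60, "K9": 12, "K10": 99}
print(s_score(panel, 35), s_score(panel, 10))  # 0.4 0.2
```

Lower scores indicate a more selective compound; on a real panel of several hundred kinases, the same function applies unchanged.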

ADMET

ADMET encompasses Absorption, Distribution, Metabolism, Excretion, and Toxicity. These properties determine a compound's pharmacokinetic and safety profile.

Key Experimental Protocols Summary Table:

| Property | Primary Assay | Protocol Summary | Key Output |
|---|---|---|---|
| Absorption | Caco-2 Permeability | Grow Caco-2 cells on Transwell inserts for 21 days. Apply compound to the donor side (apical for A→B, basolateral for B→A). Sample the receiver side at intervals (e.g., 30, 60, 120 min) and measure concentration by LC-MS/MS. | Apparent Permeability (Papp, A→B and B→A), Efflux Ratio. |
| Metabolic Stability | Human Liver Microsome (HLM) Incubation | Incubate compound (1 µM) with HLM (0.5 mg/mL) and NADPH in phosphate buffer. Take timepoints (0, 5, 15, 30, 60 min). Quench with cold acetonitrile. Analyze by LC-MS/MS. | Half-life (t1/2), Intrinsic Clearance (CLint). |
| CYP Inhibition | Fluorescent Probe Assay | Incubate CYP isoform (e.g., 3A4) with test compound and isoform-specific fluorogenic probe. Measure fluorescence increase over time. Test multiple concentrations. | IC50 for each major CYP (1A2, 2C9, 2C19, 2D6, 3A4). |
| Toxicity | hERG Channel Binding | Use a competitive binding assay (e.g., Predictor hERG, Invitrogen). Incubate test compound with membrane expressing hERG channel and a radio- or fluorescence-labeled hERG ligand. | % Inhibition at 10 µM; IC50. |
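The Caco-2 outputs can be sketched with the standard apparent-permeability relation Papp = (dQ/dt) / (A · C0) and efflux ratio = Papp(B→A) / Papp(A→B). In this minimal version, dQ/dt is the least-squares slope of cumulative receiver amount against time; sampling-volume replacement and mass-balance corrections are deliberately ignored. Insert area, volumes, and concentrations are hypothetical. Note that 1 µM equals 1 nmol/cm³, so the units reduce to cm/s.

```python
from typing import List


def slope(xs: List[float], ys: List[float]) -> float:
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)


def papp_cm_per_s(times_s: List[float], receiver_uM: List[float], receiver_vol_mL: float,
                  area_cm2: float, donor_c0_uM: float) -> float:
    """Papp = (dQ/dt) / (A * C0), ignoring sampling-volume replacement."""
    amounts_nmol = [c * receiver_vol_mL for c in receiver_uM]  # uM * mL = nmol
    dq_dt = slope(times_s, amounts_nmol)  # nmol/s
    return dq_dt / (area_cm2 * donor_c0_uM)  # uM = nmol/cm^3, so result is cm/s


# Hypothetical 12-well Transwell run: 1.12 cm^2 insert, 10 uM donor concentration.
t = [1800, 3600, 7200]
papp_ab = papp_cm_per_s(t, [0.05, 0.10, 0.20], receiver_vol_mL=1.5, area_cm2=1.12, donor_c0_uM=10)
papp_ba = papp_cm_per_s(t, [0.30, 0.60, 1.20], receiver_vol_mL=0.5, area_cm2=1.12, donor_c0_uM=10)
er = papp_ba / papp_ab
print(f"{papp_ab:.2e} {papp_ba:.2e} ER={er:.1f}")  # 3.72e-06 7.44e-06 ER=2.0
```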

Synthesizability

Synthesizability assesses the feasibility and ease of chemically synthesizing a molecule. It is predicted computationally via retrosynthetic analysis and scored based on complexity, step count, and availability of building blocks.

Computational Protocol: Retrosynthetic Analysis with AI

- Input: SMILES string of target molecule.

- Algorithm Processing: Use a tool such as ASKCOS, IBM RXN, or Synthia. The algorithm applies reaction rules (expert-coded or learned from reaction databases) in reverse, recursively breaking the target into available starting materials.

- Scoring: Routes are scored based on predicted yield, step count, cost of materials, and safety/hazard considerations.

- Output: A ranked list of suggested synthetic routes with diagrams and commercially available precursors.
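The recursive decomposition at the heart of retrosynthetic analysis can be illustrated as a depth-limited AND/OR search. This toy sketch is not how ASKCOS, IBM RXN, or Synthia are implemented: molecules are hypothetical placeholder names rather than SMILES, the `rules` table stands in for reaction templates, and the only scoring criterion is the number of linear steps (the longest chain from a purchasable input).

```python
from typing import Dict, List, Optional, Set, Tuple

Route = Dict[str, Tuple[str, ...]]


def plan_route(target: str, rules: Dict[str, List[Tuple[str, ...]]],
               purchasable: Set[str], max_depth: int = 10) -> Optional[Tuple[int, Route]]:
    """Depth-limited AND/OR search returning the route with the fewest linear steps.

    rules maps a molecule to candidate disconnections; each disconnection is a tuple
    of precursors that must ALL be made or bought (AND), while alternative
    disconnections are OR choices.
    """
    def search(mol: str, depth: int) -> Optional[Tuple[int, Route]]:
        if mol in purchasable:
            return 0, {}
        if depth == 0 or mol not in rules:
            return None  # dead end: not buyable and no (further) disconnection allowed
        best = None
        for precursors in rules[mol]:
            subs = [search(p, depth - 1) for p in precursors]
            if any(s is None for s in subs):
                continue
            steps = 1 + max(s[0] for s in subs)
            if best is None or steps < best[0]:
                route: Route = {mol: precursors}
                for _, sub_route in subs:
                    route.update(sub_route)
                best = (steps, route)
        return best

    return search(target, max_depth)


# Hypothetical placeholder names; a real tool would operate on SMILES and templates.
rules = {"T": [("I1", "C"), ("I2",)], "I1": [("A", "B")],
         "I2": [("I3",)], "I3": [("A",)]}
print(plan_route("T", rules, purchasable={"A", "B", "C"}))
# (2, {'T': ('I1', 'C'), 'I1': ('A', 'B')})
```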

Table 1: Benchmark Targets for Optimized Drug Candidates

| Objective | Ideal Range/Value | Warning Zone | Assay Type |
|---|---|---|---|
| Potency (IC50) | < 100 nM (enzyme); < 10 nM (cell) | > 1 µM | Biochemical / Cellular |
| Selectivity Index | > 100x vs. closest related off-target | < 10x | Panel Screening |
| Caco-2 Papp (10⁻⁶ cm/s) | > 10 (high) | < 1 (low) | In vitro permeability |
| HLM CLint (µL/min/mg) | < 15 (low clearance) | > 30 (high clearance) | Metabolic Stability |
| hERG IC50 | > 30 µM | < 10 µM | In vitro toxicity |
| Synthetic Steps | < 10 linear steps | > 15 linear steps | Retrosynthetic Analysis |

Table 2: Example Compound Profiling Data

| Compound ID | Target IC50 (nM) | Anti-target IC50 (nM) | Selectivity Index | HLM t1/2 (min) | hERG %Inh @ 10 µM | Papp (10⁻⁶ cm/s) |
|---|---|---|---|---|---|---|
| Lead-001 | 25 | 250 (Kinase A) | 10 | 12 | 85 | 5 |
| Opt-001 | 15 | 4500 (Kinase A) | 300 | 45 | 15 | 18 |
| Clinical Candidate | 8 | >10,000 | >1250 | 60 | 5 | 22 |

Visualizing the Molecular Optimization Workflow

Title: Iterative Molecular Optimization Feedback Cycle

Title: Integrated Multi-Objective Candidate Screening Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Molecular Optimization Experiments

| Item/Category | Example Product/Provider | Function in Optimization |
|---|---|---|
| Kinase Enzyme Panels | DiscoverX KINOMEscan Panel, Eurofins KinaseProfiler | Provides broad selectivity profiling against hundreds of kinases in a consistent assay format. |
| Human Liver Microsomes | Corning Gentest HLM, Xenotech HLM | Pooled from multiple donors for standardized assessment of metabolic stability and metabolite ID. |
| Caco-2 Cell Line | ATCC HTB-37 | The gold-standard in vitro model for predicting intestinal absorption and efflux. |
| hERG Inhibition Assay Kit | Invitrogen Predictor hERG Fluorescence Polarization Assay | High-throughput, non-radioactive screening for cardiac toxicity liability. |
| Retrosynthesis Software | Synthia (Merck), ASKCOS (MIT), IBM RXN | AI-driven analysis of synthetic accessibility and route suggestion. |
| Multi-Parameter Optimization (MPO) Software | Optibrium StarDrop | Computationally scores and ranks compounds by balancing potency, ADMET, and physicochemical properties. |

The transition from initial screening "hits" to viable "leads" represents the most critical optimization phase in the drug discovery pipeline. This stage is governed by molecular optimization algorithms, a core research area within computational chemistry and chemoinformatics. These algorithms systematically modify chemical structures to simultaneously enhance multiple properties—primarily potency, selectivity, and pharmacokinetics—while reducing toxicity. This whitepaper provides a technical guide to the optimization frameworks and experimental protocols that underpin this transformative process.

The Molecular Optimization Paradigm: Algorithms and Objectives

Hit-to-lead optimization is a multi-objective problem. The primary goal is to evolve a compound with confirmed activity (a "hit") into a "lead" candidate suitable for preclinical development. This requires balancing often competing parameters through iterative design-make-test-analyze (DMTA) cycles.

Key Optimization Algorithms and Their Applications

| Algorithm Class | Primary Function | Typical Use-Case in Hit-to-Lead | Key Advantage |
|---|---|---|---|
| Matched Molecular Pairs (MMP) | Identifies common structural transformations and their associated property changes. | Predicting the effect of a specific R-group substitution on solubility. | Data-driven, interpretable transformations. |
| Quantitative Structure-Activity Relationship (QSAR) | Builds regression/classification models linking molecular descriptors to biological activity. | Prioritizing analogues for synthesis based on predicted pIC50. | Can model complex, non-linear relationships. |
| Free-Wilson Analysis | Deconstructs activity contributions of specific substituents at defined molecular positions. | Optimizing a scaffold by selecting the best combination of substituents at R1 and R2. | Additive, highly interpretable. |
| Multi-Objective Optimization (MOO) | Simultaneously optimizes multiple parameters (e.g., potency, lipophilicity, metabolic stability). | Balancing potency (pIC50 > 8) with ligand lipophilicity efficiency (LLE > 5). | Exposes the full trade-off as a set of Pareto-optimal solutions rather than a single weighted compromise. |
| De Novo Design & Generative Models | Generates novel molecular structures from scratch conditioned on desired properties. | Exploring novel chemical space around a hit scaffold to improve intellectual property (IP) position. | Explores vast chemical space beyond analogue libraries. |

Quantitative Target Profile for a Lead Candidate

| Property | Hit (Typical Range) | Lead (Target Range) | Optimization Goal |
|---|---|---|---|
| Potency (IC50/Ki) | 1 µM – 10 µM | < 100 nM | Increase affinity by 10-100x. |
| Selectivity (Fold vs. off-target) | < 10x | > 30x | Minimize off-target binding via structural tweaks. |
| Lipophilicity (clogP) | Often > 3.5 | Ideally < 3 | Lower to reduce toxicity and clearance risk. |
| Ligand Lipophilicity Efficiency (LLE = pIC50 - clogP) | < 5 | > 5 | Improve efficiency of lipophilic interactions. |
| Solubility (PBS, pH 7.4) | < 10 µM | > 100 µM | Enhance for reliable in vivo dosing. |
| Microsomal Stability (% remaining) | < 30% after 30 min | > 50% after 30 min | Reduce metabolic lability. |
| CYP Inhibition (IC50) | < 10 µM for major CYPs | > 10 µM | Structural modification to avoid CYP binding. |
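The LLE definition in the table can be checked with a few lines of arithmetic. This sketch converts an IC50 in nM to pIC50 (−log10 of the molar value) and flags a subset of the lead target ranges; the function names and example values are illustrative, and only four of the tabulated criteria are included.

```python
import math
from typing import Dict


def lle(ic50_nM: float, clogp: float) -> float:
    """Ligand lipophilicity efficiency: LLE = pIC50 - clogP, with pIC50 = -log10(IC50 in M)."""
    return -math.log10(ic50_nM * 1e-9) - clogp


def lead_profile_flags(ic50_nM: float, clogp: float, solubility_uM: float) -> Dict[str, bool]:
    """Check a subset of the lead target ranges tabulated above."""
    return {
        "potency_lt_100nM": ic50_nM < 100,
        "clogp_lt_3": clogp < 3,
        "lle_gt_5": lle(ic50_nM, clogp) > 5,
        "solubility_gt_100uM": solubility_uM > 100,
    }


print(round(lle(50, 2.5), 2))  # 4.8
print(lead_profile_flags(10, 2.5, 150))
```

A 50 nM compound at clogP 2.5 meets the potency and lipophilicity targets individually but still falls short of LLE > 5, which is why LLE is tracked as its own objective.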

Experimental Protocols for Key Optimization Cycles

Protocol 1: Parallel Medicinal Chemistry (PMC) for Rapid SAR Exploration

Objective: To efficiently map structure-activity relationships (SAR) around a hit core.

- Design: Use combinatorial chemistry principles to generate a virtual library of 500-2000 analogues. Apply simple property filters (MW < 450, clogP < 4) and cluster for diversity.

- Synthesis: Employ solid-phase or solution-phase parallel synthesis techniques in 96-well plates. Utilize a set of commercially available building blocks (e.g., carboxylic acids, amines, boronic acids) and robust coupling reactions (e.g., amide bond formation, Suzuki coupling).

- Purification: Automated high-throughput purification via reverse-phase HPLC-MS.

- Primary Assay: Test all compounds in a high-throughput target binding or functional assay (e.g., fluorescence polarization, TR-FRET) at a single concentration (e.g., 10 µM). Confirm actives with dose-response (8-point curve) to determine IC50.

- Data Analysis: Perform Free-Wilson or group contribution analysis to identify favorable substituents. Iterate design for a second focused library.
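Free-Wilson analysis can be sketched as ordinary least squares on indicator variables: activity = μ + Σ (contribution of each substituent at each position), with one reference substituent per position fixed at zero so the model is identifiable. This dependency-free version solves the normal equations by Gauss-Jordan elimination; the positions, substituents, and activities are hypothetical and constructed to be exactly additive so the recovered contributions can be checked.

```python
from typing import Dict, List, Tuple


def _solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a small dense linear system by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [bi] for row, bi in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        if abs(m[col][col]) < 1e-12:
            raise ValueError("singular design: a substituent is confounded or never varied")
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= f * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def free_wilson(compounds: List[Dict[str, str]], activity: List[float],
                reference: Dict[str, str]) -> Tuple[float, Dict[Tuple[str, str], float]]:
    """Fit activity = mu + sum of substituent contributions (reference substituents = 0)."""
    features = sorted({(pos, sub) for c in compounds for pos, sub in c.items()
                       if sub != reference[pos]})
    rows = [[1.0] + [1.0 if c[pos] == sub else 0.0 for pos, sub in features]
            for c in compounds]
    p = len(rows[0])
    # Normal equations: (X^T X) beta = X^T y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * y for r, y in zip(rows, activity)) for i in range(p)]
    beta = _solve(xtx, xty)
    return beta[0], dict(zip(features, beta[1:]))


# Hypothetical, exactly additive pIC50 data for a 3 x 2 R1/R2 matrix.
r1 = {"H": 0.0, "Cl": 0.8, "Me": 0.3}
r2 = {"H": 0.0, "OMe": 0.5}
compounds = [{"R1": a, "R2": b} for a in r1 for b in r2]
activity = [6.0 + r1[c["R1"]] + r2[c["R2"]] for c in compounds]
mu, contrib = free_wilson(compounds, activity, reference={"R1": "H", "R2": "H"})
print(round(mu, 2), {k: round(v, 2) for k, v in contrib.items()})
# 6.0 {('R1', 'Cl'): 0.8, ('R1', 'Me'): 0.3, ('R2', 'OMe'): 0.5}
```

With real data the fit is not exact, and the residuals indicate where substituent effects are non-additive.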

Protocol 2: In Vitro ADMET Profiling Cascade

Objective: To prioritize leads based on absorption, distribution, metabolism, excretion, and toxicity (ADMET) properties.

- Metabolic Stability: Incubate compound (1 µM) with human or rat liver microsomes (0.5 mg/mL protein) for 0, 5, 15, 30, and 45 minutes. Quench with acetonitrile. Measure % parent compound remaining via LC-MS/MS. Calculate intrinsic clearance.

- CYP450 Inhibition: Using pooled human liver microsomes, measure the inhibition of major CYP isoforms (3A4, 2D6, 2C9) via fluorescent or LC-MS/MS probe substrate assays. Report IC50.

- Permeability (PAMPA): Perform the Parallel Artificial Membrane Permeability Assay. Use a 96-well filter plate coated with a lipid-infused artificial membrane. Donor well: compound in pH 7.4 buffer. Receiver well: pH 7.4 buffer. Measure compound appearance in receiver compartment by UV after 4-16 hours. Calculate effective permeability (Pe).

- Solubility (Thermodynamic): Prepare a saturated solution of the compound in phosphate-buffered saline (PBS, pH 7.4) by shaking for 24 hours. Filter through a 0.45 µm filter and quantify concentration by HPLC-UV against a standard curve.

- hERG Liability (Patch Clamp): For prioritized leads, test inhibition of the hERG potassium channel expressed in mammalian cells using automated patch clamp electrophysiology to assess cardiac safety risk (IC50 target > 30 µM).

Signaling Pathway & Workflow Visualizations

Title: The Iterative Hit-to-Lead Optimization Workflow

Title: Lead Compound Mechanism: Inhibiting a Signaling Pathway

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent/Tool Category | Specific Example | Function in Optimization |
|---|---|---|
| Building Block Libraries | Enamine REAL Space, WuXi LabNetwork Fragments | Provides diverse, high-quality chemical matter for parallel synthesis and rapid SAR exploration. |
| Assay Kits for Primary Target | Cisbio Kinase TracerBind, BPS Bioscience Enzyme Activity Kits | Enables high-throughput, robust biochemical potency screening of synthesized analogues. |
| In Vitro ADMET Screening Panels | Corning Gentest Liver Microsomes, Solvo Transporter Assays | Provides standardized systems for profiling metabolic stability, CYP inhibition, and transporter interactions. |
| Cell-Based Phenotypic Assays | Promega CellTiter-Glo (Viability), Essen Incucyte (Proliferation/Migration) | Confirms functional cellular activity and monitors for cytotoxicity early in the lead series. |
| Analytical & Purification | Waters Acquity UPLC-H-Class with SQD2 MS, Biotage Isolera Prime | Essential for compound purity analysis (>95%) and purification post-synthesis. |
| Molecular Modeling Software | Schrödinger Suite (Maestro), OpenEye Toolkits, MOE | Platforms for applying QSAR, molecular docking, free-energy perturbation (FEP), and de novo design algorithms. |

The overarching thesis of modern molecular optimization algorithms research posits that the systematic encoding of chemical intuition into computational rules, and subsequently into self-improving algorithms, represents a paradigm shift in drug discovery. This evolution moves the discipline from a craft, reliant on individual expertise and serendipity, to an engineering science driven by prediction, multi-parameter optimization, and generative exploration.

The Manual MedChem Era: Foundations and Limitations

Traditional medicinal chemistry was an iterative, experience-driven cycle: design-make-test-analyze (DMTA). Optimization relied on heuristic rules (e.g., Lipinski's Rule of Five) and analog synthesis, focusing primarily on potency and simple physicochemical properties.

Key Experimental Protocol (Historical SAR by Analogue):

- Design: Based on a hit from high-throughput screening (HTS), a chemist designs analogues by modifying one substituent at a time (e.g., on an aromatic ring).

- Synthesis: Compounds are synthesized manually or using solid-phase techniques, often taking weeks per compound.

- Testing: Compounds are assayed for primary target potency (e.g., IC50 in an enzymatic assay).

- Analysis: The chemist interprets the structure-activity relationship (SAR) table to plan the next round of synthesis.

Table 1: Typical Output from a Manual MedChem Cycle

| Compound ID | R-Group Modification | IC50 (nM) | ClogP | Molecular Weight (Da) |
|---|---|---|---|---|
| Lead-0 | -H | 1000 | 2.1 | 350 |
| Lead-1 | -CH3 | 500 | 2.5 | 364 |
| Lead-2 | -OCH3 | 250 | 2.3 | 380 |
| Lead-3 | -CF3 | 50 | 3.0 | 418 |

The Rise of Algorithmic Design: Core Paradigms

Algorithmic design introduces predictive models and search algorithms into the DMTA loop, enabling proactive design and multi-objective optimization.

3.1. Quantitative Structure-Activity Relationship (QSAR)

QSAR represents the first major computational shift, using statistical methods to correlate molecular descriptors (e.g., ClogP, polar surface area) with biological activity.

Experimental Protocol for 2D-QSAR Model Development:

- Data Curation: Assemble a consistent dataset of 50-500 compounds with measured activity (e.g., pIC50).

- Descriptor Calculation: Compute molecular descriptors (e.g., using RDKit or Dragon software) for each compound.

- Model Training: Apply a regression algorithm (e.g., Partial Least Squares, Random Forest) on a training set (70-80% of data).

- Validation: Test the model on a held-out validation set. Critical metrics include R² (goodness of fit on the training set), Q² (cross-validated predictive ability), and the R² and RMSE obtained on the held-out set.
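To make the distinction between R² and Q² concrete, the sketch below fits an ordinary least-squares model on a single descriptor and computes both: R² on the training data and leave-one-out Q², where each compound is predicted by a model fitted without it. Simple linear regression stands in here for PLS or Random Forest, and the descriptor and pIC50 values are made-up illustrative numbers.

```python
"""Training R² versus leave-one-out Q² for a one-descriptor linear QSAR model (sketch)."""
from statistics import mean


def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least squares for y = a + b*x; returns (a, b)."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    return my - b * mx, b


def r_squared(xs: list[float], ys: list[float]) -> float:
    """Coefficient of determination of the model fitted on all data."""
    a, b = fit_line(xs, ys)
    my = mean(ys)
    ss_res = sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - my) ** 2 for y in ys)
    return 1.0 - ss_res / ss_tot


def q_squared_loo(xs: list[float], ys: list[float]) -> float:
    """Leave-one-out Q² = 1 - PRESS / SS_tot; each point is predicted by a model fitted without it."""
    press = 0.0
    for i in range(len(xs)):
        a, b = fit_line(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        press += (ys[i] - (a + b * xs[i])) ** 2
    my = mean(ys)
    ss_tot = sum((y - my) ** 2 for y in ys)
    return 1.0 - press / ss_tot


# Illustrative (made-up) data: a ClogP-like descriptor versus pIC50.
clogp = [1.2, 1.8, 2.1, 2.5, 2.9, 3.3, 3.8, 4.1]
pic50 = [5.1, 5.6, 5.8, 6.3, 6.4, 6.9, 7.4, 7.3]

print(f"R2 = {r_squared(clogp, pic50):.3f}, Q2(LOO) = {q_squared_loo(clogp, pic50):.3f}")
```

For least squares, Q² can never exceed R², so a large gap between the two is an early warning of overfitting.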

3.2. Structure-Based Design and Molecular Docking

With protein structures available, algorithms could predict ligand binding poses and score them.

Experimental Protocol for Molecular Docking:

- Protein Preparation: Obtain a 3D protein structure (PDB). Remove water, add hydrogens, assign charges (e.g., using Schrödinger's Protein Preparation Wizard).

- Ligand Preparation: Generate 3D conformers for the ligand and assign charges.

- Grid Generation: Define the binding site and create a scoring grid.

- Docking & Scoring: Use an algorithm (e.g., Glide, AutoDock Vina) to sample ligand poses and rank them with a scoring function (e.g., force field-based, empirical).
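The grid-generation step is often seeded from a co-crystallized ligand. A minimal sketch, assuming the ligand's heavy-atom coordinates have already been extracted from the PDB file, computes an axis-aligned box center and edge lengths with a padding margin. The 8 Å total padding and the coordinates are illustrative assumptions, not program defaults; the output uses the `center_x/y/z` and `size_x/y/z` settings that AutoDock Vina accepts.

```python
"""Derive a docking search box from a reference ligand's coordinates (sketch)."""
from dataclasses import dataclass


@dataclass
class Box:
    center: tuple[float, float, float]
    size: tuple[float, float, float]


def box_from_ligand(coords: list[tuple[float, float, float]], padding: float = 8.0) -> Box:
    """Axis-aligned box enclosing the ligand, enlarged by `padding` Å in total per axis."""
    if not coords:
        raise ValueError("need at least one atom coordinate")
    lows = [min(c[i] for c in coords) for i in range(3)]
    highs = [max(c[i] for c in coords) for i in range(3)]
    center = tuple((lo + hi) / 2.0 for lo, hi in zip(lows, highs))
    size = tuple((hi - lo) + padding for lo, hi in zip(lows, highs))
    return Box(center=center, size=size)


def vina_config(box: Box) -> str:
    """Format the box as AutoDock Vina configuration lines."""
    names = ("x", "y", "z")
    lines = [f"center_{n} = {v:.3f}" for n, v in zip(names, box.center)]
    lines += [f"size_{n} = {v:.3f}" for n, v in zip(names, box.size)]
    return "\n".join(lines)


# Hypothetical heavy-atom coordinates (Å) of a co-crystallized ligand.
ligand = [(10.0, 4.0, -2.0), (12.0, 5.5, -1.0), (14.0, 3.0, 0.0), (11.0, 6.0, 1.0)]
print(vina_config(box_from_ligand(ligand)))
```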

3.3. Multi-Parameter Optimization (MPO) and de novo Design

This phase integrated multiple properties (potency, selectivity, ADMET) into a single score. De novo algorithms (e.g., LEGO, SMOG) began generating novel structures in silico.
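One common way to collapse several properties into a single MPO score is a desirability function: each property is mapped to [0, 1] and the results are combined, here by a weighted geometric mean so that any fully undesirable property drives the total to zero. The property ranges, weights and compound values below are illustrative assumptions, not a published MPO scheme.

```python
"""Multi-parameter optimization (MPO) score from desirability functions (sketch)."""
import math


def ramp(value: float, bad: float, good: float) -> float:
    """Linear desirability: 0 at `bad`, 1 at `good`, clipped to [0, 1] (works in either direction)."""
    t = (value - bad) / (good - bad)
    return min(1.0, max(0.0, t))


def mpo_score(desirabilities: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted geometric mean of desirabilities; any zero desirability gives a zero score."""
    if any(d == 0.0 for d in desirabilities.values()):
        return 0.0
    total_w = sum(weights[k] for k in desirabilities)
    log_sum = sum(weights[k] * math.log(d) for k, d in desirabilities.items())
    return math.exp(log_sum / total_w)


# Hypothetical compound profile and property ranges.
compound = {"pIC50": 7.5, "clogp": 3.2, "solubility_uM": 40.0}
d = {
    "pIC50": ramp(compound["pIC50"], bad=5.0, good=8.0),            # higher is better
    "clogp": ramp(compound["clogp"], bad=5.0, good=3.0),             # lower is better
    "solubility_uM": ramp(compound["solubility_uM"], bad=1.0, good=100.0),
}
w = {"pIC50": 2.0, "clogp": 1.0, "solubility_uM": 1.0}
print({k: round(v, 3) for k, v in d.items()}, round(mpo_score(d, w), 3))
```

A weighted arithmetic mean is the other common choice; it lets a strong property compensate for a failing one, which is usually not what a medicinal chemist wants.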

Modern Algorithmic Landscape: Machine Learning & Generative AI

Current research focuses on deep learning models that learn directly from data, bypassing manual descriptor selection.

4.1. Key Algorithm Classes

- Predictive Models: Graph Neural Networks (GNNs) for property prediction.

- Generative Models: Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Transformers for de novo molecule generation.

- Reinforcement Learning (RL): Agents learn to generate molecules optimized for a reward function combining multiple properties.

Experimental Protocol for a Generative VAE in Molecular Optimization:

- Data Encoding: Train a VAE on a large corpus of SMILES strings (e.g., from ChEMBL). The encoder learns a continuous latent space representation (z) of molecules.

- Latent Space Optimization: Improve a compound by adjusting its latent vector z with gradient ascent so as to increase the output of a property predictor trained on latent vectors (e.g., predicted potency).

- Decoding: The decoder converts the optimized latent vector z* back into a novel SMILES string.

- Validation: Synthesize and test the top-generated compounds in biological assays.
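The latent-space optimization step can be illustrated without a trained network. In the sketch below, a hypothetical property predictor f(z) = -||z - z_opt||² (a quadratic surrogate with a known gradient, standing in for a neural predictor trained on latent vectors) is maximized by gradient ascent, z ← z + η∇f(z), starting from an assumed encoding of the lead compound. The decoder is only indicated in a comment.

```python
"""Gradient ascent on a surrogate property predictor in a VAE latent space (toy sketch)."""

# Hypothetical optimum of the surrogate predictor (stands in for a trained network).
Z_OPT = [0.8, -0.3, 1.2]


def predicted_property(z: list[float]) -> float:
    """Toy surrogate f(z) = -||z - Z_OPT||^2, maximal (0) at Z_OPT."""
    return -sum((zi - oi) ** 2 for zi, oi in zip(z, Z_OPT))


def gradient(z: list[float]) -> list[float]:
    """Analytic gradient of the surrogate: df/dz_i = -2 (z_i - Z_OPT_i)."""
    return [-2.0 * (zi - oi) for zi, oi in zip(z, Z_OPT)]


def optimize_latent(z0: list[float], lr: float = 0.1, steps: int = 50) -> list[float]:
    """Plain gradient ascent: z <- z + lr * grad f(z)."""
    z = list(z0)
    for _ in range(steps):
        g = gradient(z)
        z = [zi + lr * gi for zi, gi in zip(z, g)]
    return z


z_lead = [0.0, 0.0, 0.0]  # hypothetical encoding of the lead compound
z_star = optimize_latent(z_lead)
print(round(predicted_property(z_lead), 3), round(predicted_property(z_star), 6))
# In a real pipeline: decode z_star to SMILES, then check validity and re-score the molecule.
```

With a real VAE, unconstrained ascent can wander into low-density regions of the latent space that decode poorly, so the search is usually kept close to the prior.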

Table 2: Comparison of Algorithmic Design Paradigms

| Paradigm | Key Methodology | Primary Input | Optimization Goal | Typical Throughput (compounds/cycle) |
|---|---|---|---|---|
| Manual MedChem | Analogue Synthesis | Chemist's Heuristics | Potency, Lipinski Rules | 10 - 100 |
| QSAR | Statistical Modeling | 2D Molecular Descriptors | Predicted Activity | In silico: 1,000 - 10,000 |
| Structure-Based | Molecular Docking | Protein 3D Structure | Docking Score, Binding Pose | In silico: 10,000 - 1,000,000 |
| Generative AI | Deep Learning (VAE, GAN, RL) | Chemical Library (SMILES) | Multi-parameter Reward Function | In silico: 1,000,000+ |

The Scientist's Toolkit: Essential Research Reagents & Solutions

| Item/Category | Function & Explanation |
|---|---|
| ChEMBL Database | A curated database of bioactive molecules with annotated properties; a primary source of training data for predictive and generative models. |
| RDKit | Open-source cheminformatics toolkit for descriptor calculation, fingerprint generation, and molecule manipulation. |
| Schrödinger Suite | Commercial software platform for protein preparation (Protein Preparation Wizard in Maestro), molecular docking (Glide), and free energy perturbation (FEP+). |
| AutoDock Vina | Open-source, widely used program for molecular docking and virtual screening. |
| PyTorch/TensorFlow | Deep learning frameworks used to build and train GNNs, VAEs, and other generative models. |
| REINVENT | A popular open-source framework for molecular design using Reinforcement Learning. |
| ACD/Percepta | Software for predicting physicochemical properties and ADMET parameters. |
| Enzo Life Sciences SCREEN-WELL library | A curated library of known bioactive compounds used for initial HTS and model validation. |
| CYP450 Assay Kits (e.g., from Promega) | Experimental kits to assess cytochrome P450 inhibition, a key ADMET liability, for validating computational predictions. |

Visualization of the Evolutionary Workflow

Diagram 1 Title: Evolution from Manual to Algorithmic MedChem Workflow

Diagram 2 Title: Closed-Loop AI-Driven Molecular Optimization Cycle

A Taxonomy of Optimization Algorithms: From QSAR to Generative AI

Within the broader thesis on molecular optimization algorithms, traditional and interpretable methods remain foundational. These approaches provide chemically intuitive insights that guide the modification of lead compounds to enhance potency, selectivity, and pharmacokinetic properties. This technical guide details three core methodologies: Matched Molecular Pairs (MMP), Quantitative Structure-Activity Relationships (QSAR), and Pharmacophore Modeling.

Matched Molecular Pairs (MMP)

Core Concept & Algorithm

MMP analysis identifies a pair of molecules that differ only by a single, well-defined structural transformation at a specific site. The method correlates this transformation with a change in a biological or physicochemical property.

- Formal Definition: An MMP is defined as two compounds (A, B) that share a common core (C) once one acyclic single bond is cleaved in each, leaving different terminal fragments (F1 in A, F2 in B). The transformation is denoted as F1 → F2.

- Algorithmic Steps:

- Fragmentation: Systematically cleave every acyclic single bond in each molecule in the dataset.

- Canonicalization: Generate canonical SMILES for the core and fragment to ensure consistent representation.

- Hashing & Indexing: Use the canonical core as a key to index pairs of molecules that share it.

- Transformation Extraction: For each core, the difference between fragments defines the transformation.

- Statistical Analysis: Aggregate all transformations and, for each one, compute the mean property change (mean ΔP) and its standard deviation (e.g., for pIC50 or LogP).
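The indexing, extraction and aggregation steps can be sketched independently of the fragmentation code. The example assumes single-cut fragmentation has already been done (for instance with RDKit's rdMMPA module) and that each record is a hypothetical (compound ID, core, fragment, property) tuple. The core and fragment strings are illustrative rather than real toolkit output. Compounds sharing a core form pairs, each pair yields a transformation F1 → F2 with its ΔP, and the ΔP values are then summarized per transformation.

```python
"""Index matched molecular pairs by shared core and aggregate transformations (sketch)."""
from collections import defaultdict
from itertools import combinations
from statistics import mean, stdev

# Hypothetical single-cut fragmentation output: (compound_id, core, fragment, pIC50).
# "[*]" marks the attachment point; strings are illustrative, not real canonical output.
records = [
    ("A1", "c1ccc([*])cc1C(=O)N", "[H][*]", 6.0),
    ("A2", "c1ccc([*])cc1C(=O)N", "FC(F)(F)[*]", 6.9),
    ("B1", "c1ccc([*])nc1OC", "[H][*]", 5.5),
    ("B2", "c1ccc([*])nc1OC", "FC(F)(F)[*]", 6.2),
    ("B3", "c1ccc([*])nc1OC", "C[*]", 5.8),
]


def mine_transformations(rows):
    """Group by core (the index key), form all pairs, collect ΔP = P(B) - P(A) per F1 -> F2."""
    by_core = defaultdict(list)
    for _cid, core, frag, prop in rows:
        by_core[core].append((frag, prop))
    deltas = defaultdict(list)
    for members in by_core.values():
        for (f1, p1), (f2, p2) in combinations(members, 2):
            if f1 == f2:
                continue
            deltas[(f1, f2)].append(p2 - p1)
            deltas[(f2, f1)].append(p1 - p2)  # record the reverse direction too
    return deltas


def summarize(deltas):
    """Mean ΔP, standard deviation (NaN when n < 2) and count per transformation."""
    return {
        t: (mean(v), stdev(v) if len(v) > 1 else float("nan"), len(v))
        for t, v in deltas.items()
    }


for (f1, f2), (m, s, n) in sorted(summarize(mine_transformations(records)).items()):
    print(f"{f1} -> {f2}: mean dP = {m:+.2f}, sd = {s:.2f}, n = {n}")
```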

Experimental Protocol for MMP Analysis

Objective: To derive actionable design rules from a corporate compound database.

- Data Curation: Assay data (e.g., potency, solubility) for >10,000 compounds are standardized (units, error filtering).

- Preprocessing: Molecules are neutralized, salts are stripped, and tautomers are standardized.

- Fragmentation: Execute MMP fragmentation using an algorithm (e.g., Hussain-Rea algorithm) with parameters: max heavy atoms in fragment = 10, exclude stereochemistry.

- Transformation Mining: Apply a minimum occurrence filter (n ≥ 5) for a transformation to be considered statistically relevant.

- Delta Calculation: For property P, calculate ΔP = P(B) - P(A) for each pair. Compute the mean ΔP and its confidence interval for each transformation.

- Rule Generation: Transformations with |mean ΔP| > a predefined significance threshold (e.g., 0.5 log units for potency) and a low standard deviation are codified as design rules.
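The mining and rule-generation filters can be expressed compactly. The sketch below applies the n ≥ 5 occurrence filter and the 0.5 log-unit threshold from the protocol and, as one possible reading of "low standard deviation", additionally requires the 95% confidence interval of mean ΔP to exclude zero. It uses a normal approximation (1.96 × SD/√n) rather than a t-distribution, an assumption that is optimistic for small n. The transformation labels and ΔP lists are made up.

```python
"""Turn per-transformation ΔP lists into design rules (sketch)."""
import math
from statistics import mean, stdev


def derive_rules(deltas: dict[str, list[float]], min_n: int = 5, min_effect: float = 0.5,
                 z: float = 1.96) -> dict[str, tuple[float, float, float]]:
    """Keep transformations with n >= min_n, |mean ΔP| > min_effect and a CI excluding zero."""
    rules = {}
    for transform, values in deltas.items():
        n = len(values)
        if n < min_n:
            continue  # minimum occurrence filter
        m = mean(values)
        half_width = z * stdev(values) / math.sqrt(n)
        lo, hi = m - half_width, m + half_width
        if abs(m) > min_effect and (lo > 0 or hi < 0):
            rules[transform] = (m, lo, hi)
    return rules


# Hypothetical ΔpIC50 observations per transformation.
observed = {
    "H>>CF3": [0.9, 0.7, 1.1, 0.6, 0.8, 0.95],
    "CH3>>CONH2": [-0.8, -0.5, -0.9, -0.7, -0.6],
    "Cl>>F": [0.4, -0.3, 0.6, 0.1, -0.2, 0.3],   # effect too small
    "H>>OH": [1.2, 1.0, 0.9],                     # too few observations
}
for t, (m, lo, hi) in derive_rules(observed).items():
    print(f"{t}: mean {m:+.2f} (95% CI {lo:+.2f} to {hi:+.2f})")
```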

Table 1: Example MMP Transformations and Their Impact on pIC50 (Hypothetical Dataset)

| Core Structure | Transformation (F1 → F2) | Frequency (n) | Mean ΔpIC50 | Std. Dev. | Interpretation |
|---|---|---|---|---|---|
| Phenyl | -H → -CF3 | 28 | +0.85 | 0.22 | Potency increase likely due to enhanced hydrophobic interaction. |
| Piperidine | -CH3 → -CONH2 | 15 | -0.72 | 0.31 | Potency decrease; in a cell-based assay, reduced membrane permeability could contribute. |
| Benzothiazole | -Cl → -N(CH3)2 | 9 | +1.50 | 0.18 | Significant gain, suggesting a key H-bond acceptor or electronic role. |

Diagram Title: MMP Analysis Workflow

Quantitative Structure-Activity Relationships (QSAR)

Core Methodology

QSAR models mathematically relate a set of molecular descriptors (independent variables) to a biological activity (dependent variable) using statistical or machine learning techniques.

- Descriptor Calculation: Numerical representation of molecular properties (e.g., logP, molar refractivity, topological indices, quantum-chemical properties).

- Model Building: Application of regression (Linear, PLS) or classification (SVM, Random Forest) algorithms.

- Validation: Critical use of internal (cross-validation) and external (hold-out test set) validation to assess predictive power and avoid overfitting.

Experimental Protocol for 2D-QSAR Modeling

Objective: Build a predictive model for cyclooxygenase-2 (COX-2) inhibition.

- Dataset Preparation: Curate 150 compounds with reliable IC50 values. Convert IC50 to pIC50. Apply a 70/30 split for training and external test sets.

- Descriptor Generation: Calculate ~2000 2D molecular descriptors (e.g., topological, electronic, physicochemical) using software like RDKit or PaDEL-Descriptor. Standardize the data (mean-centering, scaling).

- Descriptor Selection: Perform univariate correlation filtering to remove non-informative descriptors. Apply a multivariate method (e.g., Genetic Algorithm or Stepwise Regression) to select the final descriptor subset (5-10 descriptors).

- Model Development: Use Partial Least Squares (PLS) regression on the training set. Determine the optimal number of latent variables via 5-fold cross-validation.

- Model Validation:

- Internal: Report Q² (cross-validated R²) and RMSE from cross-validation.

- External: Predict the held-out test set. Report R²pred and RMSEtest.

- Interpretation: Analyze the PLS loadings plot to understand which descriptors drive activity and propose a chemical interpretation.
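The standardization and univariate filtering steps of this protocol can be sketched in a few lines. The descriptor matrix is a hypothetical dict mapping descriptor names to per-compound values. Constant descriptors are dropped, descriptors whose absolute Pearson correlation with pIC50 falls below a cut-off are removed, and the rest are autoscaled (mean-centred, divided by standard deviation). The 0.3 cut-off is an illustrative assumption. In a real workflow the scaling parameters are computed on the training set only and applied unchanged to the test set.

```python
"""Autoscale descriptors and apply a univariate correlation filter (sketch)."""
import math
from statistics import mean, stdev


def pearson(x: list[float], y: list[float]) -> float:
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def filter_and_scale(descriptors: dict[str, list[float]], activity: list[float],
                     min_abs_r: float = 0.3, min_sd: float = 1e-8) -> dict[str, list[float]]:
    """Drop constant and weakly correlated descriptors; autoscale the rest."""
    kept = {}
    for name, values in descriptors.items():
        sd = stdev(values)
        if sd < min_sd:
            continue  # constant descriptor carries no information
        if abs(pearson(values, activity)) < min_abs_r:
            continue  # weak univariate relationship with activity
        mu = mean(values)
        kept[name] = [(v - mu) / sd for v in values]
    return kept


# Hypothetical training-set descriptors for five compounds.
X = {
    "ALogP": [1.5, 2.0, 2.8, 3.1, 3.9],
    "TPSA": [95.0, 80.0, 72.0, 60.0, 48.0],
    "nHalogen": [1.0, 1.0, 1.0, 1.0, 1.0],   # constant -> removed
    "nRotB": [4.0, 2.0, 5.0, 3.0, 4.0],      # weakly correlated -> removed
}
y = [5.2, 5.9, 6.4, 6.8, 7.5]
print(sorted(filter_and_scale(X, y)))
```

A multivariate selector such as a genetic algorithm or stepwise regression would then pick the final 5-10 descriptors from what survives.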

Table 2: Performance Metrics for a Hypothetical COX-2 Inhibition QSAR Model

| Model Type | Training R² | Cross-Val. Q² | Test Set R²_pred | RMSE (pIC50) | Key Descriptors |
|---|---|---|---|---|---|
| PLS (3 LVs) | 0.82 | 0.78 | 0.75 | 0.45 | ALogP, Topological Polar Surface Area, HOMO Energy |
| Random Forest | 0.95 | 0.80 | 0.72 | 0.48 | (Multiple, complex) |

Diagram Title: QSAR Model Development Workflow

Pharmacophore Modeling

Core Concept

A pharmacophore is an abstract description of the molecular features necessary for biological activity and their spatial arrangement. It represents the essential interaction capabilities of a ligand.

- Features: Hydrogen Bond Donor (HBD), Hydrogen Bond Acceptor (HBA), Hydrophobic (H), Positive/Negative Ionizable (PI/NI), Aromatic Ring (AR).

- Generation Methods:

- Ligand-Based: From a set of active molecules (common features approach).

- Structure-Based: From a protein-ligand complex (extraction of interaction points).
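A pharmacophore query can be represented as a list of typed points with distance tolerances. The sketch below rests on simplifying assumptions: the ligand conformer is already aligned into the pharmacophore frame, features are points without direction vectors, and each tolerance is a sphere. Under those assumptions it checks whether every query feature is matched by a distinct ligand feature of the same type. Real tools also handle alignment, directionality and partial matches, and all coordinates here are hypothetical.

```python
"""Check an aligned ligand against a point pharmacophore with distance tolerances (sketch)."""
import math
from typing import NamedTuple


class Feature(NamedTuple):
    kind: str                         # e.g. "HBA", "HBD", "H", "AR", "PI", "NI"
    xyz: tuple[float, float, float]
    tol: float = 1.0                  # tolerance radius in Å (used for query features only)


def matches(query: list[Feature], ligand: list[Feature]) -> bool:
    """True if each query feature can be assigned a distinct same-type ligand feature within tol."""

    def assign(i: int, used: frozenset[int]) -> bool:
        if i == len(query):
            return True
        q = query[i]
        for j, f in enumerate(ligand):
            if j in used or f.kind != q.kind:
                continue
            if math.dist(q.xyz, f.xyz) <= q.tol and assign(i + 1, used | {j}):
                return True
        return False  # backtrack: no consistent assignment for feature i

    return assign(0, frozenset())


query = [
    Feature("HBA", (0.0, 0.0, 0.0), 1.0),
    Feature("AR", (4.5, 1.0, 0.0), 1.5),
    Feature("H", (7.0, -2.0, 1.0), 1.5),
]
ligand_ok = [Feature("HBA", (0.4, 0.3, 0.0)), Feature("AR", (4.0, 1.5, 0.5)),
             Feature("H", (7.5, -1.0, 1.0))]
ligand_bad = [Feature("HBA", (0.4, 0.3, 0.0)), Feature("AR", (4.0, 1.5, 0.5))]
print(matches(query, ligand_ok), matches(query, ligand_bad))
```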

Experimental Protocol for Structure-Based Pharmacophore Generation

Objective: Create a pharmacophore model for kinase inhibition using a known co-crystal structure.

- Protein-Ligand Complex Preparation: Obtain PDB structure (e.g., 1M17). Process the structure: add hydrogens, correct protonation states, optimize side chains.

- Interaction Analysis: Manually or automatically analyze ligand-protein interactions (e.g., using MOE, Discovery Studio). Identify key H-bonds, ionic interactions, and hydrophobic contacts.

- Feature Mapping: Translate identified interactions into pharmacophore features.

- A ligand carbonyl forming H-bond with backbone NH → HBA feature.

- A ligand phenyl ring engaging in pi-stacking → Aromatic feature.

- A ligand alkyl chain in a hydrophobic pocket → Hydrophobic feature.

- Model Definition: Define the spatial constraints (tolerances, angles, distances) for each feature based on the observed geometry.

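- Model Validation: Screen a validation set of known actives seeded among decoys (presumed inactives). Quantify early enrichment (e.g., the enrichment factor at 1%, EF1%) and overall ranking quality (e.g., the ROC curve and its AUC) to assess the model's ability to prioritize active compounds.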
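The validation step reduces to two standard calculations on a ranked screening list. The sketch below computes the enrichment factor at a fraction x of the ranked database, EF_x = (actives in top x / compounds in top x) / (total actives / total compounds), and the ROC AUC as the probability that a randomly chosen active is ranked above a randomly chosen decoy (ties count one half). The scores and labels are synthetic.

```python
"""Enrichment factor and ROC AUC for a pharmacophore or docking screen (sketch)."""
import math


def enrichment_factor(scores: list[float], is_active: list[bool], fraction: float) -> float:
    """EF at the top `fraction` of the list ranked by descending score."""
    ranked = sorted(zip(scores, is_active), key=lambda t: t[0], reverse=True)
    n_top = max(1, math.ceil(fraction * len(ranked)))
    hits_top = sum(active for _, active in ranked[:n_top])
    total_actives = sum(is_active)
    return (hits_top / n_top) / (total_actives / len(ranked))


def roc_auc(scores: list[float], is_active: list[bool]) -> float:
    """Probability that an active outscores a decoy (Mann-Whitney form; ties count 0.5)."""
    act = [s for s, a in zip(scores, is_active) if a]
    dec = [s for s, a in zip(scores, is_active) if not a]
    wins = sum(1.0 if a > d else 0.5 if a == d else 0.0 for a in act for d in dec)
    return wins / (len(act) * len(dec))


# Synthetic screen: 2 actives hidden among 198 decoys; higher score = better fit.
scores = [0.95, 0.401] + [0.5 - i * 0.002 for i in range(198)]
labels = [True, True] + [False] * 198
print(round(enrichment_factor(scores, labels, 0.01), 2), round(roc_auc(scores, labels), 3))
```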

Table 3: Example Pharmacophore Features from a Kinase Inhibitor Complex (Illustrative Residue Labels)

| Pharmacophore Feature | Protein Interaction Partner | Distance Constraint (Å) | Role in Binding |
|---|---|---|---|
| Hydrogen Bond Acceptor | Backbone NH of Met-318 | 2.9 ± 0.5 | Key hinge-binding interaction |
| Hydrogen Bond Donor | Side-chain O of Asp-381 | 3.1 ± 0.5 | H-bond to carboxylate (a salt bridge if the ligand group is cationic) |
| Hydrophobic (Sphere) | Side-chains of Val-339, Ala-481 | 4.5 ± 1.0 | Occupies selectivity pocket |
| Aromatic Ring (Plane) | Side-chain of Phe-517 (pi-stacking) | 4.0 ± 1.0 (plane-to-plane) | Stabilizes DFG-out conformation |

Diagram Title: Structure-Based Pharmacophore Generation

The Scientist's Toolkit

Table 4: Key Research Reagent Solutions & Tools

| Item / Software | Category | Primary Function |
|---|---|---|
| RDKit | Cheminformatics Library | Open-source toolkit for descriptor calculation, fingerprint generation, MMP-like fragmentation, and molecular operations. |
| MOE (Molecular Operating Environment) | Integrated Software Suite | Comprehensive platform for QSAR (model building, validation), pharmacophore modeling (creation, screening), and molecular docking. |
| Schrödinger Suite | Integrated Software Suite | Industry standard for structure-based design; includes tools for QSAR (e.g., AutoQSAR), pharmacophore modeling (Phase), and advanced simulations. |
| KNIME / Python (scikit-learn) | Data Analytics Platform | Workflow orchestration and machine learning model development for building and validating advanced QSAR models. |
| PyMOL / Maestro | Molecular Visualization | Critical for inspecting protein-ligand complexes to derive structure-based pharmacophores and validate hypotheses. |
| ChEMBL / PubChem | Public Database | Sources of bioactivity data for building training sets for QSAR and finding analogs for MMP analysis. |
| CORINA Classic | 3D Structure Generator | Converts 2D structures to 3D conformations, a prerequisite for 3D-QSAR and pharmacophore alignment. |
| GOLD / Glide | Docking Software | Used to generate protein-ligand complexes when experimental structures are unavailable, informing pharmacophore creation. |

Within the broader thesis on the Overview of Molecular Optimization Algorithms Research, this paper examines two foundational and synergistic paradigms for discovering and optimizing molecules, primarily for drug development. The first paradigm leverages existing chemical knowledge through Library-Based Virtual Screening (VS), a fast, knowledge-driven approach. The second employs Evolutionary Algorithms (EAs), such as Genetic Algorithms (GAs) and Particle Swarm Optimization (PSO), which are adaptive, population-based search methods for de novo molecular design and optimization. This guide details the technical principles, methodologies, and integration of these approaches, providing researchers with a comprehensive framework for modern computational molecular discovery.

Virtual Screening: Principles and Protocols

Virtual Screening computationally evaluates large libraries of compounds to identify those most likely to bind to a target biological macromolecule (e.g., a protein). It is typically categorized into Ligand-Based and Structure-Based methods.

2.1 Core Methodologies

- Ligand-Based VS: Uses information derived from known active compounds (e.g., a pharmacophore or a quantitative structure-activity relationship, QSAR, model) to search for structurally or physicochemically similar molecules. Common techniques include:

- Similarity Searching: Uses molecular fingerprints (e.g., ECFP4, MACCS keys) and similarity metrics (e.g., Tanimoto coefficient).

- Pharmacophore Modeling: Identifies essential 3D arrangements of functional groups necessary for activity.

- Structure-Based VS (Molecular Docking): Predicts the preferred orientation (pose) and binding affinity (score) of a small molecule within a protein's binding site.
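For fingerprint-based similarity searching, the Tanimoto coefficient between two binary fingerprints is the number of shared on-bits divided by the number of bits set in either, T(A, B) = |A ∩ B| / |A ∪ B|. The sketch below represents fingerprints as sets of on-bit indices and ranks a small library against a query. The bit indices are made up; in practice they would come from, e.g., RDKit Morgan (ECFP4-like) fingerprints, and RDKit's `DataStructs.TanimotoSimilarity` computes the same quantity. The 0.4 threshold is an illustrative assumption.

```python
"""Tanimoto similarity search over fingerprints stored as sets of on-bits (sketch)."""


def tanimoto(a: set[int], b: set[int]) -> float:
    """|A ∩ B| / |A ∪ B|; defined as 0.0 when both fingerprints are empty."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def similarity_search(query: set[int], library: dict[str, set[int]],
                      threshold: float = 0.4) -> list[tuple[str, float]]:
    """Return (compound_id, similarity) pairs at or above threshold, most similar first."""
    hits = [(cid, tanimoto(query, fp)) for cid, fp in library.items()]
    return sorted((h for h in hits if h[1] >= threshold), key=lambda h: h[1], reverse=True)


# Hypothetical on-bit indices for a known active and three library compounds.
query_fp = {1, 5, 9, 14, 22, 37}
library = {
    "cpd-1": {1, 5, 9, 14, 22, 40},
    "cpd-2": {1, 5, 70, 81},
    "cpd-3": {2, 3, 4},
}
print(similarity_search(query_fp, library))
```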

2.2 Detailed Experimental Protocol for a Structure-Based VS Workflow

- Target Preparation:

- Obtain the 3D protein structure from PDB (Protein Data Bank).

- Process using software (e.g., Schrödinger's Protein Preparation Wizard, UCSF Chimera): add hydrogens, assign protonation states, fix missing side chains, optimize hydrogen bonds, and minimize structure.

- Ligand Library Preparation:

- Source compounds from databases (e.g., ZINC20, ChEMBL, Enamine REAL).

- Prepare ligands: generate tautomers and stereoisomers, perform conformational sampling, and assign correct ionization states at physiological pH (e.g., using Open Babel, OMEGA).

- Docking Grid Generation:

- Define the binding site coordinates (often from a co-crystallized ligand) and create a scoring grid (e.g., using AutoDock Tools or Glide's receptor grid generation).

- Molecular Docking Execution:

- Run docking simulations (e.g., using AutoDock Vina, Glide, FRED). Each ligand is posed and scored.

- Post-Docking Analysis:

- Rank compounds by docking score. Visually inspect top poses for key interactions (hydrogen bonds, hydrophobic contacts, pi-stacking).

- Apply filters (e.g., drug-likeness via Lipinski's Rule of Five, and PAINS filters to flag pan-assay interference compounds that frequently appear as false-positive hits).
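Property-based post-docking filters are simple to encode once descriptors are available. The sketch below applies Lipinski's Rule of Five (MW ≤ 500 Da, cLogP ≤ 5, H-bond donors ≤ 5, H-bond acceptors ≤ 10), tolerates at most one violation as is common practice, and then ranks the survivors by docking score. The hit records and property values are hypothetical and would normally come from a toolkit such as RDKit. PAINS filtering needs substructure matching and is not shown.

```python
"""Lipinski Rule-of-Five filter applied to docking hits (sketch)."""
from dataclasses import dataclass


@dataclass
class Hit:
    name: str
    dock_score: float   # more negative = better (Vina-style, kcal/mol)
    mw: float
    clogp: float
    hbd: int
    hba: int


def ro5_violations(h: Hit) -> int:
    """Count how many of the four Rule-of-Five criteria the hit violates."""
    return sum([h.mw > 500, h.clogp > 5, h.hbd > 5, h.hba > 10])


def post_filter(hits: list[Hit], max_violations: int = 1) -> list[Hit]:
    """Keep hits with at most `max_violations` violations, best docking score first."""
    kept = [h for h in hits if ro5_violations(h) <= max_violations]
    return sorted(kept, key=lambda h: h.dock_score)


hits = [
    Hit("lig-07", -10.2, 612.7, 6.1, 2, 9),   # two violations -> removed
    Hit("lig-12", -9.1, 438.5, 3.4, 2, 6),
    Hit("lig-03", -9.8, 521.0, 4.2, 1, 8),    # one violation -> kept
]
print([h.name for h in post_filter(hits)])
```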

Table 1: Common Virtual Screening Software and Databases

| Tool/Database | Type | Key Features/Description |
|---|---|---|
| AutoDock Vina | Docking Software | Open-source, fast, widely used for flexible ligand docking. |
| Schrödinger Glide | Docking Software | High-performance, tiered precision (SP, XP), robust scoring. |
| RDKit | Cheminformatics Toolkit | Open-source, for fingerprint generation, descriptor calculation, and molecule manipulation. |
| ZINC20 | Compound Library | >230 million commercially available compounds for virtual screening. |
| ChEMBL | Bioactivity Database | Manually curated database of bioactive molecules with drug-like properties. |

Title: Virtual Screening Workflow Diagram

Evolutionary Algorithms: Genetic Algorithms and PSO

Evolutionary Algorithms mimic natural selection and collective behavior to optimize molecular structures towards a desired property profile.

3.1 Genetic Algorithms (GAs) for Molecular Optimization

GAs treat molecules as "individuals" encoded by a representation (e.g., SMILES string, graph). A population evolves over generations via:

- Evaluation: Fitness is computed (e.g., predicted binding affinity, synthesizability score).

- Selection: High-fitness individuals are selected to "reproduce."

- Crossover: Pairs of parents exchange genetic material to create offspring.

- Mutation: Random modifications are introduced to maintain diversity.

3.2 Particle Swarm Optimization (PSO) for Molecular Optimization

In PSO, each "particle" represents a candidate molecule in a multi-dimensional chemical space. Particles move through this space, updating their position based on:

- Their personal best position (pBest).

- The global best position (gBest) found by the swarm.

PSO is often applied to optimize real-valued molecular descriptors or within a continuous chemical space defined by a generative model's latent space.
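The canonical PSO update combines these terms: v ← ωv + c1·r1·(pBest − x) + c2·r2·(gBest − x), followed by x ← x + v, with r1 and r2 drawn uniformly from [0, 1]. A minimal global-best sketch on a toy continuous objective (a hypothetical stand-in for a property predictor defined over a latent space) is shown below. ω = 0.7, c1 = c2 = 1.5 and the ±5 bounds are illustrative settings, not recommendations.

```python
"""Global-best particle swarm optimization on a toy continuous objective (sketch)."""
import random


def objective(x: list[float]) -> float:
    """Hypothetical score to maximize; optimum at (1.0, -2.0)."""
    return -((x[0] - 1.0) ** 2 + (x[1] + 2.0) ** 2)


def pso(f, dim: int = 2, n_particles: int = 20, iters: int = 100,
        w: float = 0.7, c1: float = 1.5, c2: float = 1.5,
        bound: float = 5.0, seed: int = 0) -> tuple[list[float], float]:
    rng = random.Random(seed)
    pos = [[rng.uniform(-bound, bound) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_val = [f(p) for p in pos]
    g = max(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]

    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]                                  # inertia
                             + c1 * r1 * (pbest[i][d] - pos[i][d])          # cognitive pull
                             + c2 * r2 * (gbest[d] - pos[i][d]))            # social pull
                pos[i][d] = min(bound, max(-bound, pos[i][d] + vel[i][d]))  # stay in bounds
            val = f(pos[i])
            if val > pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val > gbest_val:  # gBest is updated as soon as a particle improves on it
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val


best_x, best_val = pso(objective)
print([round(v, 3) for v in best_x], round(best_val, 6))
```

Larger inertia ω favours exploration, while larger social weight c2 pulls the swarm toward gBest faster, which mirrors the exploration/exploitation split summarized in Table 2.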

3.3 Detailed Protocol for a GA-driven De Novo Design Experiment

- Initialization:

- Generate an initial population of N valid molecules (e.g., 100-1000) using a random SMILES generator or from a seed list.

- Fitness Evaluation:

- For each molecule, calculate a multi-objective fitness function, e.g., Fitness = w1 * pIC50 + w2 * SA_Score + w3 * QED, where pIC50 is the predicted activity, SA_Score is the synthetic accessibility score (from 1, easy, to 10, hard, so w2 is negative to penalize synthetic complexity), and QED rewards drug-likeness.

- Selection:

- Perform tournament selection: randomly select k individuals from the population and choose the one with the highest fitness to be a parent.

- Variation (Crossover & Mutation):

- Crossover: For selected parent pairs, perform a substring crossover on their SMILES representations and discard offspring that fail to parse and sanitize (e.g., RDKit's Chem.MolFromSmiles returns None for an invalid SMILES string).

- Mutation: Apply random mutations: atom/bond change, ring addition/removal, or fragment replacement from a curated library.

- Replacement:

- Form a new generation by replacing the worst-performing individuals with the newly created offspring.

- Termination & Analysis:

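- Repeat fitness evaluation, selection, variation, and replacement for a set number of generations (e.g., 100-500) or until convergence. Analyze the top-ranked individuals and the trade-offs among the individual objectives (e.g., the Pareto front of the final population).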
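The loop above can be sketched end to end on a deliberately simplified representation. Here a hypothetical "molecule" is a fixed-length list of fragment labels drawn from a small made-up library. The fitness is the weighted sum from the protocol, computed from made-up per-fragment contributions that stand in for predicted pIC50, SA score and QED, with a negative SA weight as a penalty. A real implementation would use SMILES or graphs with RDKit validity checks and trained predictors. Tournament selection, one-point crossover, point mutation and replace-the-worst steady-state replacement follow the protocol.

```python
"""Steady-state genetic algorithm over fragment-list 'molecules' (toy sketch)."""
import random

# Hypothetical per-fragment contributions: (potency, sa_penalty, qed); all values are made up.
FRAGMENTS = {
    "phenyl": (0.6, 0.1, 0.7), "pyridyl": (0.8, 0.2, 0.8), "CF3": (0.9, 0.3, 0.5),
    "amide": (0.5, 0.1, 0.9), "spiro": (1.0, 0.9, 0.4), "methyl": (0.2, 0.0, 0.6),
}
WEIGHTS = (1.0, -0.5, 0.5)   # w1 (potency), w2 (SA, negative = penalty), w3 (QED)
LENGTH = 4


def fitness(mol: list[str]) -> float:
    """w1*potency + w2*SA + w3*QED, each property averaged over the fragments."""
    props = [sum(FRAGMENTS[f][k] for f in mol) / len(mol) for k in range(3)]
    return sum(w * p for w, p in zip(WEIGHTS, props))


def tournament(pop: list[list[str]], rng: random.Random, k: int = 3) -> list[str]:
    """Pick k random individuals and return the fittest."""
    return max(rng.sample(pop, k), key=fitness)


def crossover(a: list[str], b: list[str], rng: random.Random) -> list[str]:
    cut = rng.randint(1, LENGTH - 1)   # one-point crossover
    return a[:cut] + b[cut:]


def mutate(mol: list[str], rng: random.Random, rate: float = 0.2) -> list[str]:
    """Replace each fragment with a random library fragment with probability `rate`."""
    return [rng.choice(list(FRAGMENTS)) if rng.random() < rate else f for f in mol]


def run_ga(pop_size: int = 30, generations: int = 100, n_offspring: int = 10,
           seed: int = 1) -> tuple[list[str], list[float]]:
    rng = random.Random(seed)
    pop = [[rng.choice(list(FRAGMENTS)) for _ in range(LENGTH)] for _ in range(pop_size)]
    history = [max(map(fitness, pop))]
    for _ in range(generations):
        children = [mutate(crossover(tournament(pop, rng), tournament(pop, rng), rng), rng)
                    for _ in range(n_offspring)]
        pop.sort(key=fitness)              # worst individuals first
        pop[:n_offspring] = children       # replace the worst with the offspring
        history.append(max(map(fitness, pop)))
    return max(pop, key=fitness), history


best, history = run_ga()
print(best, round(fitness(best), 3))
```

Because only the worst individuals are replaced, the best fitness in the population can never decrease from one generation to the next.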

Table 2: Comparison of Evolutionary Algorithm Parameters

| Parameter | Genetic Algorithm (GA) | Particle Swarm Optimization (PSO) |
|---|---|---|
| Representation | String (SMILES), Graph, Tree | Real-valued vector (Descriptors, Latent Vector) |
| Core Operators | Selection, Crossover, Mutation | Velocity Update, Position Update |
| Key Coefficients | Crossover Rate, Mutation Rate | Inertia Weight (ω), Cognitive (c1), Social (c2) |
| Exploration Driver | Mutation, Diversity-preserving selection | Inertia, Personal Best (pBest) |
| Exploitation Driver | Fitness-proportionate selection | Global Best (gBest), Social component |
| Typical Application | Discrete structural optimization, scaffold hopping | Optimizing in continuous chemical space, hybrid with VAEs. |

Title: Genetic Algorithm Molecular Optimization Cycle

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Resources for Molecular Optimization

| Item / Solution | Category | Function / Purpose |
|---|---|---|
| RDKit | Open-Source Cheminformatics | Core library for molecule I/O, fingerprint calculation, descriptor generation, and substructure operations. Essential for pre- and post-processing. |
| Open Babel / ChemAxon | Chemical Format Toolkits | Convert chemical file formats, calculate properties, and perform standardizations. |
| AutoDock Vina / GNINA | Docking Engine | Open-source software for performing structure-based virtual screening and pose prediction. |
| Schrödinger Suite / OpenEye Toolkit | Commercial Software Platforms | Integrated platforms offering high-accuracy docking (Glide), force fields, and ligand-based design tools. |
| ZINC20 / Enamine REAL | Compound Libraries | Sources of purchasable or virtual compounds for screening and fragment libraries for de novo design. |
| JT-VAE / MolGPT | Generative Models | Deep generative models: latent-variable models such as JT-VAE provide a continuous latent space that PSO or a GA can search, while autoregressive models such as MolGPT generate molecules token by token. |
| Python (NumPy, pandas) | Programming Environment | The de facto language for scripting workflows, data analysis, and integrating diverse computational tools. |
| High-Performance Computing (HPC) Cluster | Computational Infrastructure | Necessary for large-scale virtual screens (10^6-10^9 compounds) and running parallelized evolutionary algorithm generations. |

Integration and Future Outlook

The convergence of library-based and evolutionary approaches represents the cutting edge. Current research integrates VS as a fast pre-filter or fitness evaluator within EA loops. More profoundly, generative models like VAEs or GANs create a continuous, smooth chemical latent space. Evolutionary algorithms like PSO can efficiently navigate this space to optimize compounds for multiple objectives, effectively blending the explorative power of EAs with the learned chemical intuition of deep learning. This hybrid paradigm, framed within the comprehensive study of molecular optimization algorithms, promises to accelerate the discovery of novel, synthetically accessible, and potent therapeutic agents.

This technical guide provides an in-depth analysis of three pivotal deep generative models—Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Transformers—within the context of molecular optimization algorithms for drug discovery. Molecular optimization is a core challenge in modern pharmaceutical research, requiring the generation of novel, synthetically accessible compounds with optimized properties such as binding affinity, solubility, and low toxicity. Generative models provide a data-driven approach to explore the vast chemical space beyond the constraints of traditional library-based screening, enabling de novo molecular design. This document details their core architectures, experimental implementations, and comparative performance in generating optimized molecular structures.

Core Architectural Principles

Variational Autoencoders (VAEs)

VAEs are probabilistic generative models that learn a compressed, continuous latent representation (latent space, z) of input data. In molecular optimization, the input is typically a molecular structure represented as a string (e.g., SMILES) or graph. The encoder network q_φ(z|x) maps a molecule to a distribution over the latent space, while the decoder network p_θ(x|z) reconstructs the molecule from a sampled latent vector. The training objective is the Evidence Lower Bound (ELBO), which balances reconstruction loss and the Kullback-Leibler (KL) divergence between the learned latent distribution and a prior (usually a standard normal distribution). This continuous latent space allows for smooth interpolation and optimization via gradient-based search.
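Two pieces make the ELBO trainable. The reparameterization trick, z = μ + σ ⊙ ε with ε ~ N(0, I), lets gradients flow through sampling. The KL term for a diagonal Gaussian posterior against a standard normal prior has a closed form, KL = −½ Σ (1 + log σ² − μ² − σ²). The sketch below computes both for a single latent vector in plain Python, assuming the encoder outputs μ and log σ². The `beta` weight is an optional addition borrowed from β-VAE (β = 1 recovers the standard ELBO), and the reconstruction term is passed in as a precomputed, hypothetical number.

```python
"""Reparameterized sampling and the KL term of the VAE ELBO (sketch)."""
import math
import random


def reparameterize(mu: list[float], logvar: list[float], rng: random.Random) -> list[float]:
    """z = mu + sigma * eps with eps ~ N(0, 1) and sigma = exp(0.5 * logvar)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, logvar)]


def kl_to_standard_normal(mu: list[float], logvar: list[float]) -> float:
    """KL(N(mu, diag(sigma^2)) || N(0, I)) = -0.5 * sum(1 + logvar - mu^2 - exp(logvar))."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar))


def negative_elbo(recon_nll: float, mu: list[float], logvar: list[float],
                  beta: float = 1.0) -> float:
    """Loss to minimize: reconstruction negative log-likelihood + beta * KL."""
    return recon_nll + beta * kl_to_standard_normal(mu, logvar)


rng = random.Random(0)
mu, logvar = [0.5, -1.0], [0.0, math.log(0.25)]    # hypothetical encoder outputs
z = reparameterize(mu, logvar, rng)
print(z, round(kl_to_standard_normal(mu, logvar), 4), round(negative_elbo(12.3, mu, logvar), 4))
```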

Key VAE-based Molecular Models:

- CharacterVAE/JT-VAE: Encodes SMILES strings or molecular junction trees, enabling generation and property optimization by moving in the latent space toward regions associated with desired properties.

Generative Adversarial Networks (GANs)

GANs frame generation as an adversarial game between two neural networks: a Generator (G) and a Discriminator (D). G learns to map random noise (z) to synthetic data (e.g., a molecular string), while D learns to distinguish real data from G's outputs. The networks are trained concurrently, with G aiming to "fool" D. For discrete sequences like SMILES, reinforcement learning (RL) techniques such as Policy Gradient are often incorporated (as in SeqGAN or ORGAN) to provide a gradient signal to G.
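The adversarial game can be written as two losses on the discriminator's output probabilities. The sketch below evaluates the standard discriminator loss, −[log D(x) + log(1 − D(G(z)))], and the commonly used non-saturating generator loss, −log D(G(z)), averaged over hypothetical discriminator outputs. It shows the objectives only, not a training loop; for SMILES-based models such as ORGAN, the generator's learning signal instead comes through a policy-gradient reward.

```python
"""Standard GAN discriminator loss and non-saturating generator loss (sketch)."""
import math


def discriminator_loss(d_real: list[float], d_fake: list[float]) -> float:
    """Mean of -log D(x) over real samples plus mean of -log(1 - D(G(z))) over generated ones."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake: list[float]) -> float:
    """Non-saturating generator loss: mean of -log D(G(z))."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)


# Hypothetical discriminator probabilities that a sample is real.
d_real, d_fake = [0.9, 0.8], [0.2, 0.1]      # D is currently winning
print(round(discriminator_loss(d_real, d_fake), 4), round(generator_loss(d_fake), 4))
```

When D outputs 0.5 everywhere (it cannot tell real from generated), the discriminator loss equals 2 ln 2, the value at the game's theoretical equilibrium.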

Key GAN-based Molecular Models:

- MolGAN: Directly generates molecular graphs using a generator that produces an adjacency matrix and node attribute tensor, with a discriminator and a reward network guiding property optimization.

Transformers

Originally designed for sequence-to-sequence tasks, Transformers have become dominant generative models. They rely on a self-attention mechanism to capture long-range dependencies in sequential data. In molecular generation, Transformer decoders (like GPT architecture) are trained autoregressively to predict the next token in a molecular string (SMILES, SELFIES). Their ability to model complex, high-dimensional distributions makes them powerful for de novo design. Conditional generation can be guided by property tags or desired scaffolds.
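The autoregressive behaviour comes from a causal mask in self-attention: position t may attend only to positions ≤ t, so next-token prediction never sees future tokens. The plain-Python sketch below implements single-head scaled dot-product attention, softmax(QKᵀ/√d)V, with that mask on tiny hand-written matrices. Real models add learned projections, many heads and tensor libraries, and the token labels in the comment are only illustrative.

```python
"""Single-head causal scaled dot-product attention in plain Python (sketch)."""
import math

Matrix = list[list[float]]


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def causal_attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Row t of the output mixes value rows 0..t only (future positions are masked out)."""
    d = len(q[0])
    out = []
    for t, q_t in enumerate(q):
        # Scores are computed only for s <= t, which is equivalent to a -inf mask on s > t.
        scores = [sum(a * b for a, b in zip(q_t, k[s])) / math.sqrt(d) for s in range(t + 1)]
        weights = softmax(scores)
        out.append([sum(w * v[s][j] for s, w in enumerate(weights)) for j in range(len(v[0]))])
    return out


# Three token positions (e.g. embeddings of "C", "C", "O"), d = 2; values are made up.
Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
V = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
for row in causal_attention(Q, K, V):
    print([round(x, 3) for x in row])
```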

Key Transformer-based Molecular Models:

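- Chemformer/MolGPT: Pre-trained on large molecular corpora. MolGPT, a GPT-style decoder, generates molecules and supports property- or scaffold-conditioned generation; Chemformer, a BART-style encoder-decoder, is fine-tuned for tasks such as reaction prediction and property prediction.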

Comparative Performance in Molecular Optimization

The following table summarizes the quantitative performance of representative models from each architecture class on benchmark molecular generation and optimization tasks. Metrics assess the quality, diversity, and property satisfaction of generated molecules.

Table 1: Quantitative Comparison of Generative Models on Molecular Tasks

| Model (Architecture) | Benchmark / Task | Validity (%) | Uniqueness (%) | Novelty (%) | Property Optimization (e.g., QED, DRD2) | Reference |
|---|---|---|---|---|---|---|
| JT-VAE (VAE) | ZINC250K Optimization | 100 | 100 | 100 | Success Rate: 76.7% (QED), 92.5% (DRD2)* | Jin et al., 2018 |
| MolGAN (GAN) | QM9 Generation | 98.3 | 10.3 | 94.2 | Property scores match target distribution | De Cao & Kipf, 2018 |
| ChemicalVAE (VAE) | Latent Space Interpolation | 96.0 | 94.0 | 85.0 | Smooth property gradients observed | Blaschke et al., 2020 |
| REINVENT (RL+Prior) | De Novo Design | >99 | >90 | >80 | Significant improvement in target properties (e.g., solubility) | Olivecrona et al., 2017 |
| MolGPT (Transformer) | MOSES Benchmark | 99.6 | 98.2 | 91.5 | High FCD Diversity & Scaffold Similarity | Bagal et al., 2022 |
| GraphINVENT (GNN) | Guacamol v1 | 99.9 | 99.9 | N/A | Top-1 on 7/20 benchmarks | Mercado et al., 2021 |

Note: Metrics are illustrative from key literature. Validity: % of chemically valid structures. Uniqueness: % of unique molecules among valid. Novelty: % not in training set. QED: Quantitative Estimate of Drug-likeness. DRD2: dopamine receptor D2 activity. DRD2 optimization success rate defined as generating molecules with pIC50 > 6.

Diagram Title: Core Architectures of VAE, GAN, and Transformer for Molecular Generation

Detailed Experimental Protocols

Protocol: Benchmarking Molecular Generation with MOSES

The MOSES (Molecular Sets) platform provides a standardized benchmark for evaluating generative models.

- Data Preparation: Use the curated MOSES dataset (derived from ZINC Clean Leads), which contains ~1.9M molecules split into training (~1.6M), test, and scaffold-test sets, and train on the training split. Pre-process SMILES strings using canonicalization and salt stripping.

- Model Training: Train the generative model (e.g., VAE, GAN, Transformer) on the training set. For VAEs, use a character-based or graph-based encoder/decoder. For GANs, use a reinforcement learning objective for sequence generation. For Transformers, train via teacher-forced maximum likelihood.

- Generation: Sample 30,000 molecules from the trained model (novelty is assessed afterwards as one of the metrics).

- Evaluation:

- Metrics Calculation: Use the MOSES package to compute:

- Validity: Fraction of chemically valid SMILES (RDKit parsable).

- Uniqueness: Fraction of unique molecules among valid ones.

- Novelty: Fraction of unique, valid molecules not present in the training set.

- Filters: Fraction passing medicinal chemistry filters (e.g., PAINS).

- Frechet ChemNet Distance (FCD): Measures distribution similarity between generated and test sets using a pre-trained ChemNet.

- Scaffold Similarity: Measures the similarity of Bemis-Murcko scaffolds between generated and test sets.

- Visualization: Plot distributions of key molecular descriptors (e.g., logP, molecular weight) for generated vs. test sets.

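The validity, uniqueness and novelty metrics have simple set-based definitions. A minimal sketch is shown below, assuming a `canonicalize` function that returns a canonical SMILES string or None for an invalid input. In practice this would be built from RDKit's `Chem.MolFromSmiles` (which returns None on failure) and `Chem.MolToSmiles`; the toy canonicalizer used here is purely illustrative. The MOSES package itself computes these together with FCD, filter and scaffold metrics and should be used for reported numbers.

```python
"""Validity, uniqueness and novelty of generated molecules (sketch of the definitions)."""
from typing import Callable, Optional


def generation_metrics(generated: list[str], training: set[str],
                       canonicalize: Callable[[str], Optional[str]]) -> dict[str, float]:
    canon = [canonicalize(s) for s in generated]
    valid = [c for c in canon if c is not None]
    unique = set(valid)
    novel = unique - training                  # training set assumed already canonical
    return {
        "validity": len(valid) / len(generated),
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }


def toy_canonicalize(smiles: str) -> Optional[str]:
    """Illustrative stand-in: rejects strings containing '?' and maps 'OCC' to 'CCO'."""
    if "?" in smiles:
        return None
    return {"OCC": "CCO"}.get(smiles, smiles)


train = {"CCO", "c1ccccc1"}
sample = ["CCO", "OCC", "CCN", "CCN", "C?C", "c1ccncc1"]
print(generation_metrics(sample, train, toy_canonicalize))
```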

Protocol: Goal-Directed Optimization with the Guacamol Benchmark

Guacamol defines specific objective functions for property optimization.

- Task Selection: Choose a specific goal-directed benchmark (e.g., improve "Celecoxib" similarity and QED, or optimize dopamine receptor DRD2 activity).

- Model Setup: Implement a generative model with a guiding mechanism.

- For VAE: Use Bayesian optimization or a genetic algorithm to search the latent space, scoring proposals with the objective function.

- For RL-based (GAN or Transformer): Define a reward function combining the Guacamol objective with a prior likelihood (to maintain chemical realism). Train the generator policy with a policy gradient method (e.g., PPO).

- Optimization Run: Generate molecules iteratively, with the objective score guiding the search. Track the best score achieved over a fixed number of steps (e.g., 5,000).

- Evaluation: Report the top score achieved and the percentage of runs achieving a threshold (if applicable). Analyze the top-ranked generated molecules for structural novelty and synthetic accessibility.
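One concrete way to combine an objective score with a prior likelihood is the augmented-likelihood loss introduced with REINVENT (Olivecrona et al., 2017), sketched here as an alternative to PPO: log P_aug = log P_prior(seq) + σ·S(seq), and the agent is trained to minimize (log P_aug − log P_agent(seq))². The sketch evaluates this loss for a hypothetical batch of per-sequence log-likelihoods and objective scores in [0, 1]. σ = 60 is an illustrative value, and in practice the log-likelihoods come from the prior and agent networks.

```python
"""REINVENT-style augmented-likelihood loss for score-guided generation (sketch)."""


def augmented_likelihood_loss(prior_logp: list[float], agent_logp: list[float],
                              scores: list[float], sigma: float = 60.0) -> float:
    """Batch mean of (log P_prior + sigma * S - log P_agent)^2."""
    losses = []
    for lp_prior, lp_agent, s in zip(prior_logp, agent_logp, scores):
        augmented = lp_prior + sigma * s      # prior likelihood shifted by the objective
        losses.append((augmented - lp_agent) ** 2)
    return sum(losses) / len(losses)


# Hypothetical batch of three sampled SMILES: log-likelihoods and objective scores in [0, 1].
prior = [-35.0, -42.0, -28.0]
agent = [-33.0, -40.0, -30.0]
score = [0.8, 0.2, 0.5]
print(round(augmented_likelihood_loss(prior, agent, score), 2))
```

Because the target is the prior likelihood shifted by the score, the agent is pulled toward high-scoring sequences while being penalized for drifting far from what the prior considers plausible chemistry.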

Diagram Title: Molecular Optimization Workflow with Generative Models

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Libraries for Molecular Generative Modeling Research

| Item / Reagent | Provider / Library | Primary Function in Experiments |
|---|---|---|
| RDKit | Open-Source Cheminformatics | Core toolkit for molecule I/O (SMILES), descriptor calculation, substructure searching, chemical validity checks, and rendering. |
| PyTorch / TensorFlow | Meta / Google | Deep learning frameworks for building and training VAE, GAN, and Transformer models. |
| DeepChem | DeepChem Community | Provides high-level APIs for molecular datasets, featurization (graphs, grids), and pre-built model architectures. |
| Guacamol | BenevolentAI | Benchmark suite for goal-directed molecular generation, providing standardized objective functions and scoring. |
| MOSES | Insilico Medicine | Standardized benchmarking platform for evaluating distribution-learning generative models, including metrics and datasets. |
| SELFIES | University of Toronto | Robust molecular string representation (alternative to SMILES) guaranteeing 100% validity, useful for autoregressive models. |
| Molecular Docking Software (e.g., AutoDock Vina) | Scripps Research | For physics-based property evaluation within an optimization loop, estimating binding affinity. |
| Psi4 / Gaussian | Open-Source / Commercial | Quantum chemistry packages for calculating precise electronic properties (e.g., HOMO-LUMO, dipole moment) of generated molecules. |
| REINVENT | AstraZeneca (Open-Source) | A comprehensive, production-ready framework for molecular design using RL and recurrent neural networks (RNNs). |
| Jupyter Notebook | Project Jupyter | Interactive development environment for prototyping, data analysis, and visualization of model outputs. |

This technical guide is situated within the comprehensive thesis Overview of Molecular Optimization Algorithms Research, which systematically reviews computational strategies for automating and accelerating the discovery of novel molecular entities. The thesis delineates a spectrum of approaches, from traditional quantitative structure-activity relationship (QSAR) models and genetic algorithms to contemporary deep generative models. Reinforcement Learning (RL) emerges as a pivotal paradigm within this landscape, framing molecular design as a sequential decision-making problem where an agent learns to construct molecules with optimized properties through interaction with a simulated environment.

Core RL Framework in Molecular Design

In RL for molecular design, the process is modeled as a Markov Decision Process (MDP):

- State (s_t): The current partial molecular graph or string representation (e.g., SMILES).

- Action (a_t): The next step in constructing the molecule, such as adding a specific atom or bond, or selecting a molecular fragment.

- Policy (π(a|s)): A neural network (the Policy Network) that defines the probability distribution over possible actions given the current state. It is the primary learnable component that guides the design strategy.

- Reward (r_t): A scalar feedback signal received upon completing a molecule (or at intermediate steps). Reward shaping is critical to provide meaningful guidance toward desired chemical properties.

- Environment: A simulator that validates the chemical legality of actions, computes properties, and dispenses rewards.

The objective is to train the policy network to maximize the expected cumulative reward, thereby generating molecules with high scores for target properties like drug-likeness (QED), synthetic accessibility (SA), or binding affinity (docking score).
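The MDP components above can be made concrete with a toy, string-based environment. This is a minimal sketch under two assumptions: a hypothetical three-atom vocabulary, and a made-up terminal reward in place of a real property oracle. A production environment would also check chemical validity (for example, by parsing the string with RDKit) before scoring.

```python
import random

class ToySmilesEnv:
    """Hypothetical string-building MDP.
    State s_t: the partial string; action a_t: index of the next token.
    The '$' token (or reaching max_len) ends the episode."""
    VOCAB = ["C", "O", "N", "$"]

    def __init__(self, max_len=10):
        self.max_len = max_len
        self.state = ""

    def reset(self):
        self.state = ""
        return self.state

    @staticmethod
    def score(s):
        # Made-up property oracle: reward up to two 'O' atoms, penalize 'N'.
        return min(s.count("O"), 2) / 2 - 0.1 * s.count("N")

    def step(self, action):
        token = self.VOCAB[action]
        if token != "$":
            self.state += token
        done = token == "$" or len(self.state) >= self.max_len
        # Sparse reward: only a completed molecule is scored.
        reward = self.score(self.state) if done else 0.0
        return self.state, reward, done

# Roll out one episode with a uniform random policy.
env = ToySmilesEnv()
rng = random.Random(0)
state, done = env.reset(), False
while not done:
    state, reward, done = env.step(rng.randrange(len(env.VOCAB)))
```

A learned policy network would replace `rng.randrange` with a sample from \( \pi(a \mid s) \). Intermediate rewards (reward shaping) would be returned from `step` before `done`.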

Diagram Title: RL Agent-Environment Interaction Loop

Policy Network Architectures

Policy networks encode the state (partial molecule) and output action probabilities. Common architectures include:

1. Recurrent Neural Networks (RNNs): Treat molecule generation as a sequence prediction task over SMILES tokens. The state is the RNN's hidden vector summarizing the tokens generated so far.

2. Graph Neural Networks (GNNs): Operate directly on the molecular graph. The state is a graph representation, and actions add nodes (atoms) or edges (bonds). Because message passing does not depend on the order in which atoms are listed, this approach respects the permutation symmetry of molecular graphs.

3. Transformer Networks: Apply self-attention over a sequence of tokens representing atoms or molecular fragments, capturing long-range dependencies.

Table 1: Comparison of Policy Network Architectures

| Architecture | State Representation | Action Space | Key Advantage | Key Limitation |
|---|---|---|---|---|
| RNN (LSTM/GRU) | Hidden vector of SMILES sequence | Next character in SMILES | Simple, fast iteration. | May generate invalid SMILES; ignores graph topology. |
| Graph Neural Network | Latent graph embedding | Add atom/bond or fragment | Enforces valence rules; inherent chemistry awareness. | Computationally heavier; complex action masking. |
| Transformer | Contextual token embeddings | Next fragment or token | Captures long-range patterns via attention. | Requires large datasets; pre-training beneficial. |
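The action masking listed as a GNN limitation can be sketched as follows. The policy's raw logits for candidate bond additions are masked so that actions violating valence receive zero probability. This is a minimal sketch with three simplifying assumptions: a valence table for neutral C, N, and O only, single-bond additions only, and hypothetical logits in place of a real GNN output.

```python
import math

MAX_VALENCE = {"C": 4, "N": 3, "O": 2}  # simplified neutral-atom valences

def remaining_valence(atoms, bonds):
    """atoms: list of element symbols; bonds: dict {(i, j): bond order}."""
    used = [0] * len(atoms)
    for (i, j), order in bonds.items():
        used[i] += order
        used[j] += order
    return [MAX_VALENCE[a] - u for a, u in zip(atoms, used)]

def masked_bond_policy(atoms, bonds, logits, order=1):
    """Softmax over candidate (i, j) bond additions with illegal actions masked.
    logits: dict {(i, j): float}, e.g. produced by a (hypothetical) policy network."""
    free = remaining_valence(atoms, bonds)
    masked = {}
    for (i, j), logit in logits.items():
        legal = (i != j and (i, j) not in bonds and (j, i) not in bonds
                 and free[i] >= order and free[j] >= order)
        masked[(i, j)] = logit if legal else -math.inf
    m = max(masked.values())
    if m == -math.inf:
        raise ValueError("no legal actions in this state")
    exps = {a: math.exp(v - m) for a, v in masked.items()}  # exp(-inf) == 0.0
    z = sum(exps.values())
    return {a: e / z for a, e in exps.items()}

# Carbonyl fragment C=O plus a free carbon: only the C-C bond remains legal.
probs = masked_bond_policy(["C", "O", "C"], {(0, 1): 2},
                           {(0, 1): 5.0, (1, 2): 1.0, (0, 2): 0.0})
```

Masking at the logit level keeps the distribution normalized over legal actions only, so the policy never wastes probability mass on chemically invalid moves.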

Reward Shaping Strategies

The reward function is the primary conduit for embedding design objectives. A complex objective \( R \) is often decomposed into weighted components:

\[ R(m) = \sum_i w_i \, f_i(m) \]

Table 2: Common Reward Components for Molecular Design

| Component | Function (\( f_i \)) | Typical Goal | Computational Method |
|---|---|---|---|
| Drug-Likeness | Quantitative Estimate of Drug-likeness (QED) | Maximize (0 to 1) | Weighted geometric mean of desirability functions over eight molecular properties (available in RDKit). |
| Synthetic Accessibility | SA Score | Minimize (1 to 10) | Fragment-based scoring (RDKit, SYBA). |
| Target Activity | pIC50 / Docking Score | Maximize | Predictive model (e.g., Random Forest, CNN) or molecular docking simulation (e.g., AutoDock Vina). |
| Novelty | Tanimoto similarity to known set | Minimize (for novelty) or maximize (to stay close to a reference series) | Fingerprint comparison (ECFP4). |
| Pharmacokinetics | Predicted LogP, TPSA | Optimize within range | Rule-based or ML-predicted values. |
| Structural Constraints | Penalty for undesired substructures | Minimize (0/1 penalty) | SMARTS pattern matching. |
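The novelty component can be computed from fingerprints represented as sets of on-bit indices. This is a minimal sketch with made-up bit sets. In practice the fingerprints would be ECFP4-like Morgan fingerprints (radius 2) generated with RDKit, and RDKit's `DataStructs.TanimotoSimilarity` computes the same similarity on its bit-vector objects.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto (Jaccard) similarity of two fingerprints given as sets of on-bit indices."""
    union = fp_a | fp_b
    if not union:
        return 0.0  # convention chosen here for two all-zero fingerprints
    return len(fp_a & fp_b) / len(union)

def novelty(fp, reference_fps):
    """1 - (max Tanimoto similarity to the reference set); higher means more novel."""
    return 1.0 - max((tanimoto(fp, ref) for ref in reference_fps), default=0.0)

known = [{2, 3, 4}, {10, 11}]    # hypothetical fingerprints of known compounds
candidate = {1, 2, 3}            # hypothetical fingerprint of a generated molecule
score = novelty(candidate, known)
```

Using the maximum similarity (nearest neighbour) means a molecule counts as novel only if it is dissimilar to every compound in the reference set.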

Critical Technique: Multi-objective Scalarization. Weights \( w_i \) balance competing objectives; adaptive weighting or Pareto-frontier search methods are advanced alternatives. Penalized Rewards: A common shaped reward is \( R = \text{Activity} - \lambda \cdot \text{SAScore} + \text{QED} \).
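The weighted sum and the penalized reward can be written as plain functions over precomputed property values. This is a minimal sketch. It assumes the properties have already been computed, for example QED via RDKit's `rdkit.Chem.QED.qed` and the SA score via the `sascorer` module in RDKit's Contrib directory. It also assumes activity has been pre-scaled to a comparable range. The linear SA rescaling, the example weights, and the default \( \lambda = 0.1 \) are choices made here, not values prescribed by the text.

```python
def sa_to_unit(sa):
    """Rescale an SA score from [1 (easy), 10 (hard)] to [0, 1] with 1 = easiest."""
    return (10.0 - sa) / 9.0

def scalarized_reward(props, weights):
    """R(m) = sum_i w_i * f_i(m), with every f_i oriented so that higher is better."""
    f = {
        "qed": props["qed"],               # already in [0, 1]
        "sa": sa_to_unit(props["sa"]),     # inverted: minimizing SA -> maximizing f
        "activity": props["activity"],     # assumed pre-scaled (hypothetical)
    }
    return sum(w * f[name] for name, w in weights.items())

def penalized_reward(activity, sa_score, qed, lam=0.1):
    """R = Activity - lambda * SAScore + QED."""
    return activity - lam * sa_score + qed

r = scalarized_reward({"qed": 0.8, "sa": 1.0, "activity": 0.5},
                      {"qed": 0.5, "sa": 0.25, "activity": 0.25})
```

Orienting every component so that higher is better lets all weights stay positive. The penalized form instead encodes the minimization of SA through the sign of its term.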

Diagram Title: Multi-Objective Reward Shaping Pipeline

Experimental Protocols & Training Algorithms

Standard Training Workflow (REINFORCE with Baseline)

Objective: Maximize \( J(\theta) = \mathbb{E}_{\tau \sim \pi_\theta}[R(\tau)] \), where \( \tau \) is a trajectory (complete molecule).

Protocol:

1. Initialization: Initialize the policy network parameters \( \theta \). Initialize a predictive baseline network (e.g., a value network) to estimate the expected reward and reduce gradient variance.

2. Rollout Generation: For N epochs:
   a. Use the current policy \( \pi_\theta \) to generate a batch of M molecules (sequences of actions).
   b. For each molecule, compute its total reward \( R \) using the shaped reward function.

3. Baseline Fitting: Train the baseline network on the generated molecules to predict their reward from their initial state/representation.

4. Policy Update: Apply the REINFORCE-with-baseline update:
   a. For each molecule trajectory \( \tau^i \), calculate the advantage \( A^i = R(\tau^i) - V(s_0^i) \), where \( V \) is the baseline prediction.
   b. Compute the policy gradient estimate \( \nabla_\theta J(\theta) \approx \frac{1}{M} \sum_{i=1}^{M} \sum_{t=0}^{T^i} A^i \, \nabla_\theta \log \pi_\theta(a_t^i \mid s_t^i) \).
   c. Update the parameters: \( \theta \leftarrow \theta + \alpha \, \nabla_\theta J(\theta) \), where \( \alpha \) is the learning rate.

5. Validation: Periodically sample molecules from the policy without updating its parameters, and evaluate them on the true objectives using independent validation scripts or simulations.
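The REINFORCE-with-baseline protocol can be sketched end to end on a toy problem. This is a minimal sketch under several assumptions:

- The policy is tabular, and its state s_t is simply the position t, so it ignores the prefix.
- Each "molecule" is a fixed-length sequence of three tokens.
- The reward is hypothetical: the fraction of 'O' tokens.
- A running-mean baseline replaces the value network. It is updated once per batch from earlier batches, which keeps the gradient estimate unbiased.

The gradient uses the softmax identity: the derivative of log pi(a) with respect to logit k equals 1[k = a] minus pi(k).

```python
import math
import random

VOCAB = ["C", "O", "N"]
SEQ_LEN = 3  # fixed number of actions per toy "molecule"

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    return [e / z for e in exps]

def toy_reward(tokens):
    # Hypothetical objective standing in for QED, docking, etc.
    return tokens.count("O") / len(tokens)

def train_reinforce(epochs=300, batch=16, lr=0.5, seed=0):
    rng = random.Random(seed)
    theta = [[0.0] * len(VOCAB) for _ in range(SEQ_LEN)]  # logits per position
    baseline = 0.0  # running mean of past rewards (stand-in for V(s_0))
    for _ in range(epochs):
        grad = [[0.0] * len(VOCAB) for _ in range(SEQ_LEN)]
        batch_rewards = []
        for _ in range(batch):
            # Rollout: sample one action per step from the current policy.
            actions = [rng.choices(range(len(VOCAB)), weights=softmax(theta[t]))[0]
                       for t in range(SEQ_LEN)]
            reward = toy_reward([VOCAB[a] for a in actions])
            batch_rewards.append(reward)
            advantage = reward - baseline
            for t, a in enumerate(actions):
                probs = softmax(theta[t])
                for k in range(len(VOCAB)):
                    # d log pi(a | s_t) / d logit_k = 1[k == a] - pi(k | s_t)
                    grad[t][k] += advantage * ((k == a) - probs[k]) / batch
        # Gradient ascent: theta <- theta + lr * grad J(theta)
        for t in range(SEQ_LEN):
            for k in range(len(VOCAB)):
                theta[t][k] += lr * grad[t][k]
        baseline = 0.9 * baseline + 0.1 * sum(batch_rewards) / batch
    return theta

final_probs = [softmax(row) for row in train_reinforce()]
```

In a neural implementation, the inner loops collapse to computing `-(advantage * log_prob).mean()` and calling backward in an autograd framework. The tabular form simply makes each term of the gradient estimate visible.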

Advanced Algorithm: Proximal Policy Optimization (PPO)

PPO is widely adopted for its stability and sample efficiency. It constrains policy updates to prevent destructive large steps.

Key Modification to Protocol (Step 4 above):

- The objective function becomes \( L^{CLIP}(\theta) = \mathbb{E}_t\big[\min\big(r_t(\theta) A_t, \text{clip}(r_t(\theta), 1-\epsilon, 1+\epsilon) A_t\big)\big] \), where \( r_t(\theta) = \frac{\pi_\theta(a_t \mid s_t)}{\pi_{\theta_{old}}(a_t \mid s_t)} \).

- This requires storing the old policy \( \pi_{\theta_{old}} \) and computing the probability ratio \( r_t(\theta) \) during the update.
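The clipped surrogate can be evaluated directly from per-step log-probabilities. This is a minimal, framework-free sketch that only computes the empirical \( L^{CLIP} \) for given numbers. In training, the same expression would be built from tensors so that an autograd framework such as PyTorch can differentiate it. The value \( \epsilon = 0.2 \) is a commonly used default, assumed here.

```python
import math

def ppo_clip_objective(logp_new, logp_old, advantages, eps=0.2):
    """Empirical L^CLIP: mean over timesteps of
    min(r_t * A_t, clip(r_t, 1 - eps, 1 + eps) * A_t),
    with r_t = pi_theta(a_t|s_t) / pi_theta_old(a_t|s_t) computed from log-probs."""
    total = 0.0
    for lp_new, lp_old, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(lp_new - lp_old)            # probability ratio r_t(theta)
        clipped = min(max(ratio, 1.0 - eps), 1.0 + eps)
        total += min(ratio * adv, clipped * adv)     # pessimistic (lower) bound
    return total / len(advantages)

# Ratio 1.5 with a positive advantage is capped at 1 + eps = 1.2;
# ratio 0.5 with a negative advantage is held at the more pessimistic 0.8 * A.
value = ppo_clip_objective([math.log(1.5), math.log(0.5)], [0.0, 0.0], [1.0, -1.0])
```

Taking the minimum removes any incentive to push the ratio beyond the clip range when that would increase the objective. This is how PPO limits the size of each policy update.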

Table 3: Comparison of RL Training Algorithms for Molecular Design

| Algorithm | Update Rule | Key Feature | Sample Efficiency | Stability |
|---|---|---|---|---|
| REINFORCE | Monte Carlo gradient | Simple to implement. | Low | High variance, unstable. |
| REINFORCE with Baseline | \( \nabla J \propto A_t \nabla \log \pi(a_t \mid s_t) \) | Reduced variance via baseline. | Medium | More stable than REINFORCE. |
| PPO | Clipped surrogate objective | Constrained updates; robust. | High | High, industry standard. |
| Deep Q-Network (DQN) | Q-value maximization | Off-policy; uses replay buffer. | Medium | Can be unstable, requires tuning. |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Software Tools and Libraries for RL Molecular Design

| Item (Software/Library) | Category | Function/Brief Explanation |
|---|---|---|
| RDKit | Cheminformatics | Open-source toolkit for molecule manipulation, descriptor calculation, fingerprint generation, and chemical reaction processing. Essential for state representation and reward calculation (QED, SA). |
| OpenAI Gym / ChemGym | RL Environment | Provides a standardized API for creating RL environments. Custom molecular design environments (e.g., MolGym, ChemGym) build upon this. |
| PyTorch / TensorFlow | Deep Learning Framework | Libraries for building and training neural network-based policy and value functions. Autograd functionality is crucial for gradient-based policy updates. |
| Stable-Baselines3 / RLlib | RL Algorithm Library | High-quality implementations of state-of-the-art RL algorithms (PPO, DQN, SAC), allowing researchers to focus on environment and reward design. |
| AutoDock Vina / GNINA | Molecular Docking | Software for simulating and scoring the binding pose and affinity of a small molecule to a protein target. Used for computationally expensive but high-fidelity reward signals. |
| ZINC / ChEMBL | Molecular Database | Public repositories of commercially available and bioactive compounds. Used for pre-training prior policies, defining similarity metrics for novelty, and benchmarking. |
| DeepChem | Deep Learning for Chemistry | Provides layers (GraphConv), featurizers, and model architectures tailored for chemical data, facilitating the integration of ML-based property predictors into the RL loop. |

This whitepaper provides an in-depth technical guide on hybrid and emerging architectures, including diffusion models, graph-based generation, and Large Language Model (LLM) applications. It is framed within the broader thesis context of molecular optimization algorithms research, a field critical for accelerating drug discovery and materials science. The convergence of these architectures represents a paradigm shift in generative modeling, offering unprecedented capabilities for designing novel molecular structures with optimized properties.

Core Architectures: Technical Foundations

Denoising Diffusion Probabilistic Models (DDPMs) for Molecular Generation

Diffusion models learn a data distribution by gradually denoising a variable sampled from a Gaussian distribution. In molecular optimization, the forward process corrupts a molecular structure (e.g., atom types and coordinates) over time t by adding Gaussian noise. The reverse process learns to iteratively denoise and thereby generate novel, valid structures. It is parameterized by a neural network: commonly an equivariant graph neural network when 3D coordinates are modeled, or a transformer (U-Nets are the usual choice for images).

Key Algorithm (Training):

- Input: A dataset of molecules with desired properties.

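- Forward Process (Fixed): For \( t = 1, \dots, T \), compute \( q(x_t \mid x_{t-1}) = \mathcal{N}\big(x_t; \sqrt{1-\beta_t}\, x_{t-1}, \beta_t I\big) \). The variance schedule \( \beta_t \) increases linearly from \( \beta_1 = 10^{-4} \) to \( \beta_T = 0.02 \).

- Reverse Process (Learned): Train a neural network \( \epsilon_\theta \) to predict the added noise. The loss function is \( L(\theta) = \mathbb{E}_{t, x_0, \epsilon}\big[\lVert \epsilon - \epsilon_\theta(\sqrt{\bar{\alpha}_t}\, x_0 + \sqrt{1-\bar{\alpha}_t}\, \epsilon, t) \rVert^2\big] \), where \( \bar{\alpha}_t = \prod_{s=1}^{t} (1-\beta_s) \).

- Conditioning: For property-guided generation, the network is conditioned on a scalar property value \( y \): \( \epsilon_\theta(x_t, t, y) \).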
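The fixed forward process and the training target can be sketched without any deep-learning framework. This is a minimal sketch under three assumptions:

- T = 1000 steps (the text gives only the endpoints of the schedule).
- A molecule is flattened into a plain list of floats, for example atom coordinates.
- A placeholder stands in for the learned network \( \epsilon_\theta \).

It uses the closed form \( x_t = \sqrt{\bar{\alpha}_t}\, x_0 + \sqrt{1-\bar{\alpha}_t}\, \epsilon \), which is the noised input inside the loss above.

```python
import math
import random

def linear_beta_schedule(T=1000, beta_1=1e-4, beta_T=0.02):
    """beta_t rising linearly from beta_1 to beta_T; index 0 holds beta_1."""
    return [beta_1 + (beta_T - beta_1) * i / (T - 1) for i in range(T)]

def cumulative_alphas(betas):
    """alpha_bar_t = prod_{s=1}^{t} (1 - beta_s); index 0 holds alpha_bar_1."""
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out

def q_sample(x0, t, alpha_bar, rng):
    """Draw x_t ~ q(x_t | x_0) in closed form for 1-indexed t; returns (x_t, eps)."""
    a = alpha_bar[t - 1]
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, eps)]
    return x_t, eps

def noise_prediction_loss(eps, eps_pred):
    """Single-sample term ||eps - eps_theta(x_t, t)||^2 of L(theta)."""
    return sum((e - p) ** 2 for e, p in zip(eps, eps_pred))

# One training-style draw: uniform t, noised sample, loss against a placeholder predictor.
rng = random.Random(0)
betas = linear_beta_schedule()
alpha_bar = cumulative_alphas(betas)
x0 = [0.1, -0.3, 0.7]                  # hypothetical flattened coordinates
t = rng.randint(1, len(betas))         # t ~ Uniform{1, ..., T}
x_t, eps = q_sample(x0, t, alpha_bar, rng)
loss = noise_prediction_loss(eps, [0.0] * len(x0))  # placeholder for eps_theta(x_t, t)
```

Because \( \bar{\alpha}_T \) is close to zero under this schedule, \( x_T \) is nearly pure Gaussian noise. This is what allows sampling to start from \( \mathcal{N}(0, I) \).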

Graph-Based Generative Models

Molecules are inherently graph-structured data (atoms as nodes, bonds as edges). Graph Neural Networks (GNNs) are the backbone of generative models like Graph Convolutional Policy Networks (GCPN) and Molecular Graph Sparse Transformer (MGST).

Experimental Protocol for Graph-Based Generation (GCPN):

- State Representation: Represent the molecular graph as G = (V, E), where V is the node feature matrix (atom type, formal charge) and E is the adjacency tensor (bond type).

- Action Space: Define actions as graph modifications: add/remove atom, add/remove bond, or terminate generation.

- Policy Network: A Graph Convolutional Network (GCN) maps state G to a probability distribution over actions. The GCN update rule for layer \( l \) is \( H^{(l+1)} = \sigma\big(\tilde{A} H^{(l)} W^{(l)}\big) \). Here \( \tilde{A} \) is the normalized adjacency matrix, \( W^{(l)} \) is the layer's learnable weight matrix, and \( \sigma \) is an elementwise nonlinearity such as ReLU.

- Reinforcement Learning: Use Proximal Policy Optimization (PPO) with a reward function \( R = R_{\text{property}} + \lambda R_{\text{validity}} \). Training runs for 1,000 episodes with a learning rate of 0.001.
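The GCN update rule can be checked on a three-atom graph. This is a minimal sketch under four assumptions:

- The common symmetric normalization with self-loops, \( \tilde{A} = D^{-1/2}(A + I)D^{-1/2} \). The text only says "normalized".
- ReLU as \( \sigma \).
- A single bond type. GCPN itself aggregates over one adjacency matrix per bond type.
- Hand-picked one-hot features and identity weights, for illustration only.

```python
import math

def normalized_adjacency(adj):
    """A_tilde = D^{-1/2} (A + I) D^{-1/2}, where D is the degree matrix of A + I."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]
    return [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]

def matmul(a, b):
    """Plain nested-list matrix product."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def gcn_layer(a_tilde, h, w):
    """H^{(l+1)} = ReLU(A_tilde @ H^{(l)} @ W^{(l)})."""
    return [[max(0.0, x) for x in row] for row in matmul(matmul(a_tilde, h), w)]

# Heavy-atom graph of ethanol (C-C-O) with one-hot [is_C, is_O] node features.
adj = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
h0 = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
w0 = [[1.0, 0.0], [0.0, 1.0]]  # identity weights, for illustration only
h1 = gcn_layer(normalized_adjacency(adj), h0, w0)
```

After one layer, each atom's embedding mixes its own features with its neighbours'. The terminal carbon now carries no oxygen signal, while the oxygen row retains its own feature (0.5) plus a contribution from the central carbon.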

Large Language Models for Molecular SMILES and Beyond

LLMs, trained on massive corpora of text (including SMILES strings and scientific literature), learn rich representations of chemical space. They can be adapted for molecular generation and optimization via fine-tuning.

Methodology for LLM Fine-tuning on Molecular Tasks:

- Pre-trained Model: Start with a base model (e.g., GPT-3 architecture, 125M parameters).