From Molecules to Medicine: How Deep Reinforcement Learning is Revolutionizing Drug Discovery and Molecule Optimization


Abstract

This article provides a comprehensive guide to deep reinforcement learning (DRL) for molecule optimization, tailored for researchers, scientists, and drug development professionals. We begin by establishing the fundamental concepts, contrasting DRL with traditional methods, and outlining its unique value proposition. Next, we delve into core algorithms, agent-environment frameworks, and real-world application case studies in drug discovery. We then address critical challenges, including reward function design, exploration-exploitation trade-offs, and computational efficiency. Finally, we cover validation strategies, benchmark comparisons to other AI methods, and metrics for assessing real-world impact. The article concludes by synthesizing the transformative potential of DRL for accelerating and de-risking the pipeline from preclinical research to clinical candidates.

Demystifying Deep Reinforcement Learning: The AI Paradigm Set to Transform Molecule Design

Traditional drug discovery is a high-cost, high-failure endeavor, often described by Eroom's Law (Moore's Law reversed), which observes that the inflation-adjusted R&D cost per approved drug has roughly doubled every nine years. The central challenge is the astronomical size of chemical space, estimated at roughly 10^60 drug-like organic molecules, which makes exhaustive exploration impossible. This whitepaper frames Deep Reinforcement Learning (DRL) as a transformative methodology for de novo molecule design and optimization, one that directly addresses the core bottleneck of identifying viable lead compounds with desired pharmacokinetic and pharmacodynamic properties.

Quantitative Landscape of the Bottleneck

The following tables summarize the quantitative challenges in traditional drug discovery and the performance metrics of AI-driven approaches.

Table 1: The Traditional Drug Discovery Bottleneck (2020-2024 Averages)

| Metric | Value | Source/Notes |
|---|---|---|
| Average Cost per Approved Drug | $2.3 Billion | Includes cost of failures (Tufts CSDD) |
| Average Timeline from Discovery to Approval | 10-15 Years | FDA/Cognizant Reports |
| Clinical Phase Transition Success Rates | Phase I: 52.0%, Phase II: 28.9%, Phase III: 57.8% | BIO, Informa, QLS 2024 Analysis |
| Chemical Space Size (Est.) | ~10^60 drug-like molecules | Based on organic chemistry rules |
| Typical High-Throughput Screening Library Size | 10^5 - 10^6 compounds | Major pharmaceutical benchmarks |

Table 2: Performance of AI-Driven Molecule Optimization (Selected Studies)

| Model/Approach | Key Achievement | Benchmark/Validation |
|---|---|---|
| Deep Reinforcement Learning (DRL) with Policy Gradient | 100% validity rate of generated molecules; >100% improvement over target property (e.g., solubility) | ZINC250k dataset, property optimization tasks (Olivecrona et al., 2017) |
| Deep Q-Network with graph-modification actions (MolDQN) | Outperformed Bayesian optimization in multi-property optimization (QED, SA, MW) | GuacaMol benchmark suite |
| Fragment-based DRL (REINVENT 2.0) | Successfully generated novel compounds with high predicted activity against DRD2 and JAK2 | In-silico target-specific scoring functions |
| Generative Pre-trained Transformer (GPT) for Molecules | High novelty (90%) and synthetic accessibility for kinase inhibitors | Conditional generation on specific protein targets |

Core DRL Framework for Molecule Optimization

Deep Reinforcement Learning formulates molecule design as a sequential decision-making process. An agent (the AI model) interacts with an environment (the chemical space and property prediction models) by taking actions (adding a molecular fragment or atom) to build a molecular graph, receiving rewards based on the predicted properties of the intermediate or final molecule.
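The agent-environment loop described above can be sketched in a few lines of Python. This is a minimal, illustrative sketch rather than a production environment: the `ToyMoleculeEnv` class, its four-token action set, and its heteroatom-fraction score are hypothetical stand-ins for a valence-checked action space and a trained property model.

```python
from dataclasses import dataclass, field

# Hypothetical action vocabulary: append an atom token, or stop.
# A real environment would validate each action (e.g., valence rules via RDKit).
ACTIONS = ["C", "O", "N", "STOP"]


@dataclass
class ToyMoleculeEnv:
    max_tokens: int = 5
    tokens: list = field(default_factory=list)

    def reset(self, start: str = "C") -> str:
        """Begin a new episode from a starting atom or scaffold."""
        self.tokens = [start]
        return "".join(self.tokens)

    def score(self, smiles: str) -> float:
        """Placeholder property: fraction of heteroatoms (stand-in for a QSAR model)."""
        return sum(ch in "ON" for ch in smiles) / len(smiles)

    def step(self, action: str):
        """Apply one action and return (state, reward, done)."""
        if action not in ACTIONS:
            raise ValueError(f"unknown action: {action}")
        if action != "STOP":
            self.tokens.append(action)
        state = "".join(self.tokens)
        done = action == "STOP" or len(self.tokens) >= self.max_tokens
        # Sparse reward: only the completed molecule is scored.
        reward = self.score(state) if done else 0.0
        return state, reward, done


env = ToyMoleculeEnv()
env.reset()
print(env.step("O"))     # ('CO', 0.0, False)
print(env.step("STOP"))  # ('CO', 0.5, True)
```

The sparse, end-of-episode reward mirrors the "final reward only upon molecule completion" option; a progressive reward would instead score intermediate states inside `step`.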

Experimental Protocol: A Standard DRL Workflow

Protocol Title: End-to-End DRL for De Novo Molecule Design with Multi-Objective Reward

Objective: To generate novel molecules that maximize a composite reward function balancing drug-likeness (QED), synthetic accessibility (SA), and target binding affinity (docking score).

Materials & Environment Setup:

- Chemical Action Space: Defined as a set of valid chemical reactions (e.g., from USPTO datasets) or fragment additions compliant with valency rules.

- State Representation: Molecules are represented as SMILES strings or, preferably, as graphs using Graph Neural Networks (GNNs).

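- Reward Function (R): $R(m) = w_1 \cdot \mathrm{QED}(m) + w_2 \cdot (10 - \mathrm{SA}(m)) + w_3 \cdot \mathrm{pChEMBL}(m)$, where the weights $w_i$ are tuned and pChEMBL is a predicted activity proxy. Because the SA score runs from 1 (easy) to 10 (hard), the term $10 - \mathrm{SA}(m)$ rewards molecules that are easier to synthesize.

- Agent Architecture: A Policy Network (Actor) implemented as a Recurrent Neural Network (RNN) for SMILES or a GNN for graphs, paired with a Value Network (Critic) for stability (Actor-Critic method).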
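A direct transcription of the composite reward $R(m)$ follows as a hedged sketch. It assumes QED, SA, and the predicted activity have already been computed upstream (for example, QED and SA with RDKit and its contributed SA scorer); the default weights are hypothetical placeholders, not recommended values.

```python
def composite_reward(qed: float, sa: float, p_activity: float,
                     weights=(1.0, 0.1, 0.2)) -> float:
    """R(m) = w1*QED(m) + w2*(10 - SA(m)) + w3*pChEMBL(m).

    qed: drug-likeness in [0, 1]; sa: synthetic accessibility in [1, 10]
    (1 = easy); p_activity: predicted pChEMBL-style activity value.
    The default weights are illustrative placeholders.
    """
    if not 0.0 <= qed <= 1.0:
        raise ValueError("QED must lie in [0, 1]")
    if not 1.0 <= sa <= 10.0:
        raise ValueError("SA score must lie in [1, 10]")
    w1, w2, w3 = weights
    return w1 * qed + w2 * (10.0 - sa) + w3 * p_activity


print(composite_reward(qed=0.8, sa=3.0, p_activity=7.0))  # approximately 2.9
```

Because the three terms live on different scales (QED in [0, 1], $10 - \mathrm{SA}$ in [0, 9], and pChEMBL as a -log10 molar potency), the weights partly act as scale normalizers; rescaling each term to a common range first makes the weights easier to interpret.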

Procedure:

- Initialization: Pre-train the policy network on a large corpus of known molecules (e.g., ChEMBL) via supervised learning to learn grammatical rules of chemical structures.

- Episode Simulation: For each training episode:

  a. The agent starts with an initial state (e.g., a single carbon atom or a core scaffold).

  b. At each step $t$, the agent selects an action (the next fragment) according to its current policy $\pi$.

  c. The environment updates the molecular state and provides an intermediate reward (if using a progressive reward) or a final reward only upon molecule completion.

  d. The episode terminates when a "stop" action is chosen or a maximum length is reached.

- Policy Optimization: Trajectories (state-action-reward sequences) are collected, and a policy-gradient method (e.g., Proximal Policy Optimization, PPO) is used to update the agent's parameters, increasing the probability of actions that lead to high-reward molecules.

- Evaluation: Generated molecules are validated using independent quantitative structure-activity relationship (QSAR) models, docking simulations, and assessment of novelty and synthetic accessibility.
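For the Policy Optimization step, the quantity PPO maximizes is the clipped surrogate objective of Schulman et al. (2017), $L^{\text{CLIP}} = \mathbb{E}_t[\min(\rho_t A_t, \operatorname{clip}(\rho_t, 1-\epsilon, 1+\epsilon) A_t)]$ with $\rho_t = \pi_\theta(a_t|s_t)/\pi_{\text{old}}(a_t|s_t)$. The plain-Python sketch below evaluates it for a batch of logged actions. It assumes advantage estimates $A_t$ are already available (e.g., from the critic); in practice the same expression is written with autograd tensors so it can be differentiated.

```python
import math


def ppo_clipped_objective(new_logp, old_logp, advantages, eps=0.2):
    """Mean PPO clipped surrogate objective (to be maximized) over a batch.

    new_logp / old_logp: log-probabilities of the taken actions under the
    current and the data-collecting policy; advantages: estimates A_t.
    """
    total = 0.0
    for lp_new, lp_old, adv in zip(new_logp, old_logp, advantages):
        ratio = math.exp(lp_new - lp_old)               # pi_theta / pi_old
        clipped = min(max(ratio, 1.0 - eps), 1.0 + eps)
        total += min(ratio * adv, clipped * adv)         # pessimistic bound
    return total / len(advantages)


# A positive-advantage action whose probability rose by 50% is capped at 1 + eps.
print(ppo_clipped_objective([math.log(0.6)], [math.log(0.4)], [1.0]))
```

Taking the minimum of the clipped and unclipped terms removes any incentive to move the policy ratio far outside $[1-\epsilon, 1+\epsilon]$ in a single update, which is what makes PPO comparatively stable on sparse molecular rewards.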

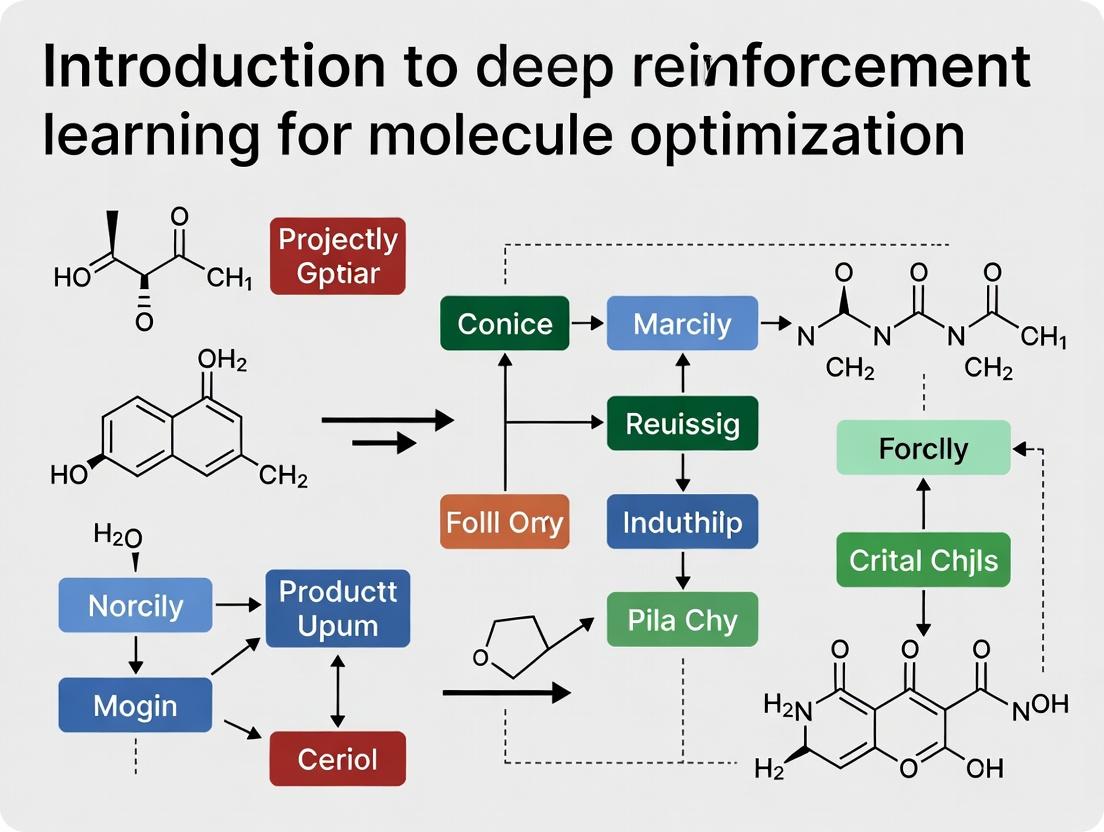

Diagram Title: DRL Molecule Optimization Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials & Tools for AI-Driven Molecule Optimization Research

| Item | Function & Relevance in Experiment | Example/Provider |
|---|---|---|
| Chemical Databases | Provide structured data for pre-training and benchmarking. Essential for defining the "universe" of known chemistry. | ChEMBL, PubChem, ZINC, GOSTAR |
| Molecular Representation Libraries | Convert chemical structures into machine-readable formats (numerical vectors/graphs). | RDKit (SMILES, fingerprints), DeepChem (featurizers) |
| Property Prediction Models | Act as surrogate reward functions during RL training. Predict ADMET, activity, etc. | Random Forest/QSAR models, Pre-trained GNNs (e.g., Attentive FP) |
| DRL Frameworks | Provide optimized, stable implementations of reinforcement learning algorithms. | RLlib, Stable-Baselines3, custom TensorFlow/PyTorch code |
| Generative Model Toolkits | Offer benchmarked implementations of state-of-the-art molecular generation models. | REINVENT (AstraZeneca Molecular AI), GuacaMol |
| Cheminformatics Suites | For post-generation analysis: novelty, diversity, synthetic accessibility, and clustering. | RDKit, Schrödinger Suite, OpenEye Toolkit |
| In-Silico Validation Suites | Perform computational validation via docking or free-energy calculations on generated hits. | AutoDock Vina, Schrödinger Glide, OpenMM |

Advanced Architectures & Signaling Pathways in AI-Driven Discovery

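Modern DRL integrates with other neural architectures. A key paradigm combines several property predictors (e.g., activity, toxicity, and synthesizability models) into a single multi-objective reward, which a hybrid agent, typically a GNN or RNN encoder feeding an RL policy, uses to trade off conflicting properties.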

Diagram Title: Multi-Objective Reward Signaling Pathway

AI-driven molecule optimization, particularly through Deep Reinforcement Learning, presents a paradigm shift from serendipitous screening to intentional, goal-directed molecular generation. By integrating multi-faceted chemical intelligence into a closed-loop design process, DRL directly attacks the fundamental bottleneck of navigating vast chemical space. This approach promises to drastically reduce the time and cost associated with the early discovery phase, enabling a more efficient and targeted pipeline for bringing new therapeutics to patients in need. The future lies in integrating these generators with automated synthesis and testing platforms, closing the loop between in-silico design and empirical validation.

This technical guide provides a foundational overview of reinforcement learning (RL) concepts specifically framed for application in molecular optimization, a critical subfield in drug discovery and materials science. It details the core RL triad—Agent, Environment, and Reward—within chemical reaction and property prediction contexts, serving as an introductory component to a broader thesis on deep reinforcement learning for molecule optimization research.

In molecule optimization, the RL paradigm is mapped directly onto chemical processes:

- Agent: The computational algorithm that proposes molecular modifications.

- Environment: The simulated or real-world chemical system (e.g., a predictive Quantitative Structure-Activity Relationship (QSAR) model, a virtual reaction flask, or a laboratory automation system).

- Reward: A numerical signal quantifying the desirability of a generated molecule, based on target properties like binding affinity, solubility, or synthetic accessibility.

The agent learns a policy (a strategy for molecular modification) to maximize the cumulative reward over a sequence of actions, thereby navigating chemical space towards optimal compounds.

Core Components: A Detailed Technical Breakdown

The Agent: Molecular Architect

The agent is typically a deep neural network. Its design is crucial for handling complex, structured chemical representations.

Common Architectures:

- Recurrent Neural Networks (RNNs)/GRUs/LSTMs: Operate on molecular string representations (e.g., SMILES) sequentially.

- Graph Neural Networks (GNNs): Directly process molecular graphs, naturally capturing topology and features of atoms and bonds.

- Transformer-based Models: Operate on tokenized SMILES or molecular fragments with attention mechanisms.

Policy: The agent's strategy, often parameterized as $\pi_\theta(a|s)$, representing the probability of taking action $a$ (e.g., adding a functional group) given the current state $s$ (the current molecule).
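A common way to parameterize $\pi_\theta(a|s)$ over a discrete action set is a softmax over network logits, with chemically invalid actions (e.g., bonds that would exceed an atom's valence) masked out before sampling. The sketch below assumes the logits and the validity mask are produced elsewhere (by the agent network and a valence checker, respectively); `masked_policy` and `sample_action` are illustrative names.

```python
import math
import random


def masked_policy(logits, valid_mask):
    """Softmax over action logits with invalid actions given zero probability."""
    if not any(valid_mask):
        raise ValueError("no valid action available in this state")
    masked = [x if ok else float("-inf") for x, ok in zip(logits, valid_mask)]
    m = max(masked)                             # subtract max for numerical stability
    exps = [math.exp(x - m) for x in masked]    # exp(-inf) evaluates to 0.0
    z = sum(exps)
    return [e / z for e in exps]


def sample_action(probs, rng=random):
    """Draw one action index a ~ pi(.|s)."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


probs = masked_policy([1.0, 2.0, 3.0], [True, False, True])
print(probs)  # the masked middle action has probability 0.0
print(sample_action(probs, random.Random(0)))
```

Masking keeps every sampled molecule chemically valid without spending reward signal on teaching the agent valence rules.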

The Environment: Chemical Simulator

The environment must evaluate the agent's actions. In early research, this is predominantly a computationally efficient surrogate model.

Environment Types:

- Virtual Molecular Simulators: Software like RDKit or Open Babel provides calculated properties (cLogP, molecular weight, etc.) and reaction rules.

- Predictive QSAR/QSPR Models: Pre-trained machine learning models that predict target biological activity or physicochemical properties from molecular structure.

- Multi-objective Environments: Combine multiple reward signals (e.g., activity, toxicity, synthesizability) into a single, Pareto-informed reward.
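One way to make a multi-objective reward "Pareto-informed" is to rank candidates by non-dominance before (or instead of) collapsing objectives into a weighted sum. This minimal sketch orients all objectives so that larger is better (for example, using one minus predicted toxicity) and returns the indices of the non-dominated molecules; the score vectors are hypothetical.

```python
def dominates(a, b):
    """True if objective vector a Pareto-dominates b (every objective maximized)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(scores):
    """Indices of candidates that no other candidate dominates."""
    return [
        i for i, s in enumerate(scores)
        if not any(dominates(t, s) for j, t in enumerate(scores) if j != i)
    ]


# (predicted activity, 1 - predicted toxicity) for four hypothetical molecules
scores = [(0.9, 0.2), (0.6, 0.6), (0.5, 0.5), (0.2, 0.9)]
print(pareto_front(scores))  # [0, 1, 3]
```

The pairwise check is quadratic in the batch size, which is acceptable for per-batch reward shaping; a rank-based reward can then be assigned from front membership, in the spirit of NSGA-II-style non-dominated sorting.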

The Reward Function: Objective Quantification

The reward function $R(s, a, s')$ is the most critical design element, as it encapsulates the entire research goal.

Typical Reward Components:

- Primary Objective: e.g., predicted IC50 against a target protein.

- Physicochemical Constraints: Penalties/rewards for adhering to Lipinski's Rule of Five or other drug-likeness metrics.

- Synthetic Accessibility Score (SA): Rewards molecules that are easier to synthesize (e.g., based on retrosynthetic complexity).

- Novelty/Uniqueness: Encourages exploration of chemical space by rewarding molecules distant from a known set.

Table 1: Common Reward Function Components in Molecule Optimization

| Component | Typical Metric | Goal | Weight Range (Relative) |
|---|---|---|---|
| Target Activity | pIC50, pKi | Maximize | High (1.0 - 0.7) |
| Selectivity | Ratio against off-target | Maximize | Medium (0.5 - 0.3) |
| Toxicity | Predicted LD50, hERG inhibition | Minimize toxicity (i.e., maximize LD50, minimize hERG inhibition) | High (1.0 - 0.7) |
| Solubility | cLogS | Maximize | Medium (0.4 - 0.2) |
| Synthetic Accessibility | SA Score (1=easy, 10=hard) | Minimize | Medium (0.5 - 0.3) |
| Drug-likeness | QED Score (0 to 1) | Maximize | Low-Medium (0.3 - 0.1) |
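Because the components in Table 1 have different units and optimization directions, they are often mapped onto a common [0, 1] desirability scale before weighting. The sketch below uses simple linear desirability functions; the clipping ranges are hypothetical, and the weights are picked from within the relative ranges listed in the table.

```python
def desirability(value, low, high, maximize=True):
    """Linearly map a raw property onto [0, 1]; flip it for 'Minimize' goals."""
    d = (value - low) / (high - low)
    d = min(max(d, 0.0), 1.0)
    return d if maximize else 1.0 - d


def weighted_reward(props, spec):
    """Weighted mean of desirabilities.

    spec maps property name -> (low, high, maximize, weight).
    """
    total_w = sum(weight for *_, weight in spec.values())
    score = sum(
        weight * desirability(props[name], low, high, maximize)
        for name, (low, high, maximize, weight) in spec.items()
    )
    return score / total_w


# Hypothetical ranges; weights chosen from the relative ranges in Table 1
spec = {
    "pIC50": (4.0, 10.0, True, 1.0),   # target activity: maximize, high weight
    "SA": (1.0, 10.0, False, 0.4),     # synthetic accessibility: minimize
    "QED": (0.0, 1.0, True, 0.2),      # drug-likeness: maximize, low weight
}
print(weighted_reward({"pIC50": 7.0, "SA": 1.9, "QED": 0.6}, spec))
```

Dividing by the total weight keeps the reward in [0, 1] regardless of how many components are enabled, which helps when comparing runs with different objective sets.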

Experimental Protocols & Methodologies

Protocol 1: Benchmarking an RL Agent with a Public Dataset

Objective: To train and validate an RL agent for generating molecules with high predicted DRD2 (Dopamine Receptor D2) activity.

Environment Setup:

- Use the ZINC250k dataset or a ChEMBL-derived dataset filtered for DRD2 activity.

- Implement a pre-trained predictive model (e.g., a random forest or GCN) for DRD2 activity as the environment's core.

- Integrate RDKit for calculating property-based penalties (cLogP, molecular weight).

Agent Training:

- Initialize a policy network (e.g., a GRU-based sequence generator).

- Use Policy Gradient (REINFORCE) or Proximal Policy Optimization (PPO) algorithms.

- Hyperparameters: Learning rate: 0.0001 to 0.001; Discount factor (γ): 0.9 to 0.99; Batch size: 64 to 128.

- Allow the agent to perform a maximum of 40 steps (modifications) per episode, starting from a random valid SMILES.

Validation:

- Generate a set of molecules from the trained agent.

- Filter for validity and uniqueness using RDKit.

- Evaluate the top candidates through the same predictive model and report the percentage meeting a defined activity threshold (e.g., pIC50 > 7).
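The validation metrics above can be computed with a small helper. In this sketch, `is_valid` and `predict_pic50` are caller-supplied callables (for example, a check that RDKit's `Chem.MolFromSmiles` returns a molecule, and the same DRD2 model used in the environment); uniqueness is only meaningful if the SMILES are canonicalized first, which is assumed here.

```python
def validation_report(samples, is_valid, predict_pic50, threshold=7.0):
    """Validity, uniqueness, and fraction of unique valid molecules above a pIC50 threshold."""
    if not samples:
        raise ValueError("no generated samples")
    valid = [s for s in samples if is_valid(s)]
    unique = list(dict.fromkeys(valid))  # drop duplicates, keep first-seen order
    hits = [s for s in unique if predict_pic50(s) > threshold]
    return {
        "validity": len(valid) / len(samples),
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "active_fraction": len(hits) / len(unique) if unique else 0.0,
    }


# Stub checker and predictor standing in for RDKit and a trained DRD2 model
fake_pic50 = {"CCO": 7.5, "CCN": 6.0}
report = validation_report(
    ["CCO", "CCO", "C1CC", "CCN"],
    is_valid=lambda s: s in fake_pic50,
    predict_pic50=fake_pic50.get,
)
print(report)
```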

Table 2: Representative Benchmark Results (Synthetic Data)

| Study (Example) | Agent Algorithm | Environment/Task | Key Metric | Result (Top 100 Molecules) |
|---|---|---|---|---|
| Zhou et al., 2019 | DQN (MolDQN) | QED + SA Optimization | Avg. QED | 0.93 |
| You et al., 2018 | PG (Graph-based) | Penalized LogP Optimization | Avg. Improvement | +4.85 |
| Benchmark Run (DRD2) | REINFORCE | DRD2 Activity Prediction | % with pIC50 > 7 | 72% |

Visualizing the RL Cycle for Molecule Optimization

Title: The Reinforcement Learning Cycle in Molecular Design

Title: Full RL-Driven Molecular Optimization Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Libraries

| Item (Software/Library) | Primary Function | Key Utility in RL for Chemistry |
|---|---|---|
| RDKit | Open-source cheminformatics toolkit. | Core environment component. Calculates molecular descriptors, fingerprints, properties (cLogP, SA), validates chemical structures, and performs basic reactions. |
| PyTorch / TensorFlow | Deep learning frameworks. | Used to build and train the neural network components of the RL agent (policy & value networks) and predictive environment models. |
| OpenAI Gym / ChemGym | Toolkit for developing and comparing RL algorithms. | Provides a standardized API for creating custom chemical reaction environments, enabling benchmark comparisons. |
| Stable-Baselines3 | Set of reliable RL algorithm implementations. | Offers pre-built, tuned RL algorithms (PPO, DQN, SAC) that can be integrated with custom chemical environments, accelerating development. |
| ChEMBL / PubChem | Public databases of bioactive molecules. | Primary sources of structured chemical and bioactivity data for training predictive environment models and providing initial compound sets. |
| SMILES | Simplified Molecular-Input Line-Entry System. | The standard string-based representation for molecules, enabling the use of sequence-based neural networks (RNNs, Transformers) as agents. |

This whitepaper serves as a core technical chapter within a broader thesis introducing deep reinforcement learning (DRL) for molecule optimization research. Optimizing molecules for desired properties (e.g., drug efficacy, synthetic accessibility) via DRL requires the agent to navigate an astronomically vast chemical space. A fundamental bottleneck is the representation of the molecular "state." Traditional fingerprint-based or descriptor-based methods are often lossy and lack the granularity needed for sequential decision-making in a DRL loop. This guide details the integration of deep neural networks (NNs), specifically graph neural networks (GNNs), to learn continuous, informative, and predictive representations of molecular states, forming the critical perceptual system for a DRL agent in molecular design.

Core Neural Architectures for Molecular Representation

The state-of-the-art approach represents a molecule as a graph $G = (V, E)$, where atoms are nodes $V$ and bonds are edges $E$. Neural networks process this structure to produce a fixed-size latent vector $h_G$, the molecular state representation.

Key Architecture: Message Passing Neural Networks (MPNNs)

The predominant framework is the Message Passing Neural Network, which operates through iterative steps of message passing, aggregation, and node updating.

Detailed Protocol for MPNN-based State Representation:

- Input Encoding: Each node $v_i$ is initialized with a feature vector $h_i^0$ encoding atom properties (atomic number, degree, hybridization, etc.). Each edge $e_{ij}$ is initialized with a feature vector encoding bond properties (type, conjugation, stereo).

- Message Passing ($T$ steps): For $t = 1$ to $T$:

  - Message Function $M_t$: For each pair of connected nodes $(v_i, v_j)$, a message is computed as $m_{ij}^{t} = M_t(h_i^{t-1}, h_j^{t-1}, e_{ij})$, where $M_t$ is typically a neural network (e.g., a Multi-Layer Perceptron, MLP).

  - Aggregation $A_t$: For each node $v_i$, incoming messages from its neighborhood $N(i)$ are aggregated as $\bar{m}_i^{t} = A_t(\{m_{ij}^{t} \mid j \in N(i)\})$, typically with a permutation-invariant operation such as sum, mean, or max.

  - Update Function $U_t$: The node's state is updated from its previous state and the aggregated message, $h_i^{t} = U_t(h_i^{t-1}, \bar{m}_i^{t})$, where $U_t$ is another trainable NN (e.g., a Gated Recurrent Unit, GRU).

- Readout/Graph Pooling: After $T$ steps, a graph-level representation is computed from the set of final node embeddings as $h_G = R(\{h_i^T \mid i \in V\})$. $R$ is a readout function, which can be a simple global pooling (sum) followed by an MLP, or a more advanced hierarchical pooling layer.
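To make the three MPNN steps concrete, the dependency-free sketch below runs $T$ rounds of message passing on a tiny graph and applies a sum readout. It is deliberately simplified and hypothetical: the message uses only the neighbor state and a scalar bond order (a special case of $M_t(h_i, h_j, e_{ij})$), the update is a single ReLU layer standing in for the GRU, and the weights are fixed numbers rather than trained parameters. The sum aggregation and sum readout are what make $h_G$ independent of atom ordering.

```python
def matvec(W, x):
    """Matrix-vector product for small nested lists."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def relu(v):
    return [max(0.0, x) for x in v]


def mpnn_readout(node_feats, edges, W_msg, W_upd, T=2):
    """Minimal MPNN forward pass returning a graph embedding h_G.

    node_feats: initial vectors h_i^0; edges: {(i, j): bond_order} (undirected).
    Message   M_t: relu(bond_order * W_msg @ h_j)
    Aggregate A_t: sum over neighbors
    Update    U_t: relu(W_upd @ [h_i ; m_i])  (simple stand-in for a GRU)
    Readout   R:   sum over final node states
    """
    h = [list(x) for x in node_feats]
    dim = len(h[0])
    nbrs = {i: [] for i in range(len(h))}
    for (i, j), bond in edges.items():
        nbrs[i].append((j, bond))
        nbrs[j].append((i, bond))
    for _ in range(T):
        new_h = []
        for i in range(len(h)):
            m = [0.0] * dim
            for j, bond in nbrs[i]:
                msg = relu([bond * x for x in matvec(W_msg, h[j])])
                m = [a + b for a, b in zip(m, msg)]
            new_h.append(relu(matvec(W_upd, h[i] + m)))  # list concat = [h_i ; m_i]
        h = new_h
    return [sum(col) for col in zip(*h)]


# Heavy-atom graph of ethanol (C-C-O); features = [is_carbon, is_oxygen]
feats = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
bonds = {(0, 1): 1.0, (1, 2): 1.0}
W_msg = [[1.0, 0.5], [0.0, 1.0]]
W_upd = [[0.5, 0.0, 0.5, 0.0], [0.0, 0.5, 0.0, 0.5]]
print(mpnn_readout(feats, bonds, W_msg, W_upd))
```

Relabeling the atoms (and remapping the bond keys accordingly) yields the same $h_G$, which is the property a DRL state encoder needs so that one molecule never maps to two different states.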

Diagram: MPNN Workflow for Molecular State Encoding

Alternative and Advanced Architectures

- Graph Attention Networks (GATs): Use attention mechanisms to weigh neighbor contributions during aggregation.

- Graph Isomorphism Networks (GINs): Provably as powerful as the Weisfeiler-Lehman graph isomorphism test, offering strong discriminative capacity.

- 3D Geometric GNNs: Incorporate spatial (3D) molecular geometry, such as conformer coordinates and interatomic distances, by using invariant/equivariant neural layers.
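For reference, the GIN node update (Xu et al.) specializes the generic $M_t$, $A_t$, $U_t$ of the MPNN protocol to sum aggregation followed by an MLP, where $\epsilon^{t}$ is a learnable or fixed scalar. Sum aggregation, unlike mean or max, can distinguish different multisets of neighbor features, which underlies the Weisfeiler-Lehman equivalence. In the notation used above:

$$h_i^{t} = \mathrm{MLP}^{t}\Big( (1 + \epsilon^{t})\, h_i^{t-1} + \sum_{j \in N(i)} h_j^{t-1} \Big)$$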

Quantitative Performance of Representation Models

The quality of a learned representation $h_G$ is typically evaluated by its performance in downstream predictive tasks.

Table 1: Performance of GNN Architectures on MoleculeNet Benchmark Datasets (Classification AUC-ROC / Regression RMSE)

| Model Architecture | HIV (AUC-ROC) | BBBP (AUC-ROC) | ESOL (RMSE, log mol/L) | FreeSolv (RMSE, kcal/mol) | Key Characteristic |
|---|---|---|---|---|---|
| MPNN (Gilmer et al.) | 0.783 | 0.720 | 1.150 | 2.043 | General framework, widely adaptable. |
| GIN (Xu et al.) | 0.801 | 0.768 | 1.060 | 1.990 | High expressive power (WL-test equivalent). |
| GAT (Veličković et al.) | 0.792 | 0.739 | 1.110 | 2.120 | Learns importance of neighbor nodes. |
| 3D-GNN (Schütt et al.) | - | - | 0.890 | 1.600 | Incorporates spatial distance/geometry. |
| Molecular Fingerprint (ECFP4) | 0.761 | 0.695 | 1.290 | 2.390 | Traditional baseline, non-learned. |

Data is representative from recent literature (MoleculeNet benchmarks). Performance varies with specific hyperparameters and training regimes.

Experimental Protocol: Training a State Representation Model

This protocol outlines supervised training of a GNN to predict molecular properties, yielding a pre-trained state representation encoder.

Title: End-to-End Supervised Training of a GNN for Property Prediction

Detailed Methodology:

- Data Curation: Acquire a dataset of molecules with associated target properties (e.g., solubility, biological activity). Standardize structures, compute features (using toolkits like RDKit), and split into training/validation/test sets (80/10/10%).

- Model Configuration: Implement a GNN encoder (e.g., 3-5 message passing layers, hidden dimension 300). Append a task-specific prediction head (e.g., a 2-layer MLP with dropout).

- Training Loop: For $N$ epochs:

  - Sample a batch of molecular graphs.

  - Forward pass: encode the graphs to $h_G$ and pass them through the predictor to get predictions $\hat{y}$.

  - Compute the loss (e.g., Mean Squared Error for regression, Cross-Entropy for classification) between $\hat{y}$ and the true labels $y$.

  - Backpropagate gradients and update model weights using an optimizer (e.g., Adam).

- Output: The trained GNN encoder can now produce $h_G$ for any input molecule. This encoder can be frozen and used as the state representation module within a DRL agent for molecule optimization.
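The 80/10/10 split in the Data Curation step can be done with a seeded shuffle so that runs are reproducible. This is a minimal random split, and `random_split` is an illustrative name; for molecular property benchmarks, a scaffold split (grouping molecules by Bemis-Murcko scaffold) is often preferred because it gives a harder, less optimistic estimate of generalization.

```python
import random


def random_split(items, fractions=(0.8, 0.1, 0.1), seed=0):
    """Shuffle with a fixed seed and split into train/validation/test lists."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    order = list(range(len(items)))
    random.Random(seed).shuffle(order)
    n_train = int(fractions[0] * len(items))
    n_val = int(fractions[1] * len(items))
    train = [items[i] for i in order[:n_train]]
    val = [items[i] for i in order[n_train:n_train + n_val]]
    test = [items[i] for i in order[n_train + n_val:]]  # remainder goes to test
    return train, val, test


train, val, test = random_split([f"mol_{i}" for i in range(100)])
print(len(train), len(val), len(test))  # 80 10 10
```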

Integration with Deep Reinforcement Learning

In the DRL framework for molecule optimization, the state $s_t$ is the current molecule. The GNN encoder $f_{\text{GNN}}(s_t) = h_{s_t}$ provides the state representation for the policy network $\pi(a_t \mid h_{s_t})$, which selects an action $a_t$ (e.g., add a functional group).

Diagram: GNN-State within the DRL Loop for Molecule Optimization

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Developing Neural Molecular State Representations

| Item / Solution | Function in Research | Example / Implementation |
|---|---|---|
| Molecular Featurization Library | Converts raw molecular formats (SMILES, SDF) into graph-structured data with node/edge features. | RDKit: Open-source cheminformatics. `mol = Chem.MolFromSmiles(smiles)`. |
| Deep Learning Framework | Provides flexible, auto-differentiable environment to build and train GNN models. | PyTorch with PyTorch Geometric (PyG), or TensorFlow with Deep Graph Library (DGL). |
| Graph Neural Network Library | Offers pre-implemented, optimized GNN layers (MPNN, GAT, GIN) and graph utilities. | PyTorch Geometric (PyG), Deep Graph Library (DGL), Jraph (JAX). |
| Benchmark Datasets | Standardized datasets for training and fair evaluation of representation models. | MoleculeNet (collection), QM9, PCBA, Tox21. Accessed via `torch_geometric.datasets`. |
| High-Performance Computing (HPC) | Accelerates training of large GNNs on extensive chemical databases (GPU/TPU clusters). | NVIDIA A100 GPUs, Google Cloud TPU v4, Amazon EC2 P4d instances. |
| Hyperparameter Optimization Suite | Automates the search for optimal model architecture and training parameters. | Weights & Biases (W&B) Sweeps, Optuna, Ray Tune. |
| Chemical Simulation & Scoring | Provides the "environment" for DRL, calculating rewards (e.g., docking scores, QSAR predictions). | AutoDock Vina (docking), Schrödinger Suite, OpenMM (MD simulations). |
| Visualization Toolkit | Enables interpretation of learned representations and model decisions. | UMAP/t-SNE (for $h_G$ projection), RDKit (structure rendering), Captum (for GNN explainability). |

Deep Reinforcement Learning (DRL) represents a paradigm shift in computational molecule optimization, a core subtask within drug discovery. Unlike traditional methods constrained by linear exploration or brute-force sampling, DRL agents learn to navigate the vast chemical space through sequential decision-making, optimizing for complex, multi-objective reward functions. This guide details the technical advantages of DRL over Structure-Activity Relationship (SAR) analysis and High-Throughput Screening (HTS), contextualized within modern research workflows.

Quantitative Comparison of Core Methodologies

Table 1: Performance Comparison of Molecule Optimization Approaches

| Metric | Traditional SAR | High-Throughput Screening (HTS) | Deep Reinforcement Learning (DRL) |
|---|---|---|---|
| Chemical Space Explored | Local around hit series (~10²-10³ compounds) | Large but finite library (~10⁵-10⁶ compounds) | Vast combinatorial space (~10⁶⁰ potential drug-like compounds) |
| Cycle Time per Iteration | Weeks to months (synthesis-driven) | Days to weeks (assay-driven) | Minutes to hours (computation-driven) |
| Primary Optimization Driver | Medicinal chemist intuition & heuristic rules | Random physical sampling | Learned policy from reward maximization |
| Multi-Objective Optimization | Sequential, often subjective | Limited to primary assay hits | Explicit, quantifiable (e.g., QED, SA, binding affinity) |
| Average Success Rate* | ~30% (lead identified from hit) | <0.01% (hit rate from library) | 40-60% (in-silico generation of valid leads) |
| Typical Cost per Campaign | \$1M - \$5M | \$500K - \$2M+ (library & assays) | <\$100K (compute time) |

Representative estimates from published literature (2020-2024). *For DRL, success is defined by in-silico metrics (e.g., synthetic accessibility, drug-likeness, docking score), so this figure is not directly comparable with the experimentally determined SAR and HTS rates.

Technical Advantages & Detailed Protocols

Overcoming the Limitations of Sequential SAR

Traditional SAR relies on a one-dimensional, cycle-by-cycle modification of a core scaffold. DRL replaces this with a multidimensional search.

DRL Protocol for Scaffold Hopping:

- Environment Definition: The chemical space is defined by a SMILES-based grammar or molecular graph representation.

- Agent & Policy Network: A Recurrent Neural Network (RNN) or Graph Neural Network (GNN) serves as the policy network (π), predicting the next action (e.g., add a fragment, change a bond).

- State ($s_t$): The current partial or complete molecular structure.

- Action ($a_t$): A defined chemical transformation (e.g., add methyl, replace carbonyl).

- Reward ($r_t$): A composite function computed at the end of an episode (a complete molecule): R = α · pIC₅₀(predicted) + β · QED + γ · SAscore + δ · Lipinski, where α, β, γ, δ are weighting coefficients. Because a higher SAscore means a harder synthesis, γ must be negative (or SAscore replaced by 10 - SAscore); this γ is a reward weight, not the RL discount factor.

- Training: Using Proximal Policy Optimization (PPO) or REINFORCE with baseline, the agent is trained over millions of simulated episodes to maximize expected cumulative reward.
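Because this reward arrives only when the molecule is complete, every step in an episode shares the same discounted terminal reward, and a baseline $b$ (here, an exponential moving average of past episode rewards) reduces gradient variance. The sketch below shows the arithmetic of REINFORCE with baseline using plain floats; the helper names are illustrative, and in a real agent `log_probs` would be autograd tensors so that minimizing the returned loss yields the policy-gradient update.

```python
def terminal_returns(final_reward, n_steps, gamma=0.99):
    """Discounted returns G_t when the only nonzero reward arrives at the last step."""
    return [gamma ** (n_steps - 1 - t) * final_reward for t in range(n_steps)]


class MovingAverageBaseline:
    """Exponential moving average of episode rewards, used as the baseline b."""

    def __init__(self, decay=0.9):
        self.decay = decay
        self.value = 0.0

    def update(self, reward):
        # Read self.value as b *before* calling update for the current episode.
        self.value = self.decay * self.value + (1.0 - self.decay) * reward
        return self.value


def reinforce_loss(log_probs, returns, baseline):
    """Loss whose gradient is the REINFORCE-with-baseline estimator:
    -sum_t log pi(a_t|s_t) * (G_t - b)."""
    return -sum(lp * (g - baseline) for lp, g in zip(log_probs, returns))


G = terminal_returns(final_reward=1.0, n_steps=3, gamma=0.5)
print(G)  # [0.25, 0.5, 1.0]
print(reinforce_loss([-1.0, -1.0, -1.0], G, baseline=0.5))  # 0.25
```

Episodes scoring above the baseline get their actions reinforced and those below it get suppressed, which is why a reasonable baseline matters more than its exact form.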

Surpassing the Stochastic Nature of HTS

HTS is fundamentally a stochastic sampling method. DRL introduces directed, intelligent exploration.

DRL Protocol for Directed Exploration:

- Pre-training with a Prior: The policy network is pre-trained via supervised learning on large databases (e.g., ChEMBL) to generate drug-like molecules, providing a strong initial bias.

- Exploration-Exploitation Balance: The agent uses stochastic policy output to try novel modifications (exploration) while favoring actions that led to high rewards historically (exploitation).

- Transfer Learning: An agent pre-trained on a general compound library can be fine-tuned with a small set of actives from a target-specific HTS, effectively amplifying the informational value of the HTS data.
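The exploration-exploitation balance of a stochastic policy can be illustrated with a softmax temperature, a common sampling knob assumed here for illustration: dividing the logits by a temperature above 1 flattens the action distribution and raises its entropy (more exploration), while a temperature below 1 sharpens it (more exploitation). The logits in this sketch are arbitrary.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax over action logits."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    z = sum(exps)
    return [e / z for e in exps]


def entropy(probs):
    """Shannon entropy in nats; the maximum for n actions is log(n)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


logits = [2.0, 1.0, 0.1]
for temp in (0.5, 1.0, 2.0):
    print(temp, round(entropy(softmax(logits, temp)), 3))
```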

Visualization of Workflows

Diagram 1: DRL Molecule Optimization Closed Loop

Diagram 2: Contrasting Molecule Discovery Pathways

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for a DRL-Based Optimization Pipeline

| Item/Reagent | Function in DRL for Molecules | Example/Tool |
|---|---|---|
| Chemical Representation | Encodes molecular structure as machine-readable input for the DRL agent. | SMILES, DeepSMILES, SELFIES, Molecular Graph (via RDKit). |
| DRL Algorithm Framework | Provides the optimization algorithm for training the agent. | OpenAI Spinning Up, Stable-Baselines3, Ray RLlib. |
| Policy Network Architecture | The neural network that decides which action to take. | RNN (LSTM/GRU), Graph Neural Network (GNN), Transformer. |
| Reward Function Components | Quantitative metrics that define the optimization goals. | pIC₅₀ Predictor (e.g., trained Random Forest, CNN), QED (Drug-likeness), SAscore (Synthetic Accessibility), CLogP (Lipophilicity). |
| Molecular Simulation/Docking | Provides in-silico potency and binding mode estimates for the reward function. | AutoDock Vina, GNINA, Molecular Dynamics (OpenMM). |
| Benchmarking Datasets | Standardized sets for training and comparing model performance. | GuacaMol, MOSES, ZINC20. |
| Wet-Lab Validation Kit | Essential for final experimental confirmation of DRL-generated leads. | Target Protein (purified), Cell-Based Assay (for functional activity), LC-MS (for compound characterization). |

This technical guide provides a formal introduction to the core mathematical frameworks of reinforcement learning (RL)—Markov Decision Processes (MDPs), policies, and value functions—within the context of molecule optimization research. By establishing this foundation, we bridge the conceptual gap between computational decision theory and experimental chemistry, enabling researchers to design, interpret, and implement deep RL agents for molecular design.

In molecule optimization, an RL agent learns to perform sequential decision-making—such as adding a functional group or modifying a scaffold—to maximize a reward signal, often a predicted or computed molecular property. This process is formally described by an MDP.

Core Terminology & Mathematical Definitions

Markov Decision Process (MDP)

An MDP is a 5-tuple $(S, A, P, R, \gamma)$ that provides a mathematical model for sequential decision-making under uncertainty, directly analogous to a stepwise synthetic or design process.

| MDP Component | Mathematical Symbol | Chemical Research Analogy | Typical Quantitative Range/Example |
|---|---|---|---|
| State ($S$) | $s_t \in S$ | Representation of the current molecule (e.g., SMILES string, molecular graph, descriptor vector). | State space size: $10^3$ to $10^{60}$+ for virtual libraries. |
| Action ($A$) | $a_t \in A$ | A valid chemical transformation (e.g., "add methyl," "open ring," "change atom type"). | Discrete action sets of 10-1000+ possible steps. |
| Transition Dynamics ($P$) | $P(s_{t+1} \mid s_t, a_t)$ | The deterministic or stochastic outcome of applying a reaction rule or transformation. | Often modeled as deterministic ($P=1$) in de novo design. |
| Reward ($R$) | $r_t = R(s_t, a_t, s_{t+1})$ | The feedback signal (e.g., predicted binding affinity, synthetic accessibility score, logP improvement). | Scalar, e.g., -10 to +10, or normalized [0,1]. |
| Discount Factor ($\gamma$) | $\gamma \in [0, 1]$ | Controls preference for immediate vs. long-term rewards (e.g., final product property vs. intermediate stability). | Commonly $\gamma = 0.9$ to $0.99$. |
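As a worked example of the discount factor, a reward received $k$ steps in the future is weighted by $\gamma^k$. For a reward arriving ten steps later:

$$0.9^{10} \approx 0.349, \qquad 0.99^{10} \approx 0.904$$

So with $\gamma = 0.9$ the agent values a final-molecule reward ten edits away at roughly a third of its face value, whereas $\gamma = 0.99$ keeps about 90% of it. A common rule of thumb treats $1/(1-\gamma)$ as the effective horizon: about 10 steps for $\gamma = 0.9$ and 100 steps for $\gamma = 0.99$.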

Policy ($\pi$)

A policy $\pi$ is the agent's strategy, defining the probability of taking any action from a given state. It is the core object of optimization.

- Mathematical Definition: $\pi(a \mid s) = P(a_t = a \mid s_t = s)$. A policy can also be deterministic, $a = \mu(s)$.

- Chemical Interpretation: The "synthetic protocol" or "design heuristic" the AI uses. A stochastic policy explores; an optimized, deterministic policy exploits known high-yielding steps.

Value Functions

Value functions estimate the long-term desirability of states or state-action pairs, guiding the policy.

State-Value Function $V^{\pi}(s)$

The expected cumulative reward starting from state $s$ and following policy $\pi$ thereafter: $V^{\pi}(s) = \mathbb{E}_{\pi}\left[\sum_{k=0}^{\infty} \gamma^k r_{t+k} \mid s_t = s\right]$

Action-Value Function $Q^{\pi}(s, a)$

The expected cumulative reward after taking action $a$ in state $s$ and subsequently following policy $\pi$: $Q^{\pi}(s, a) = \mathbb{E}_{\pi}\left[\sum_{k=0}^{\infty} \gamma^k r_{t+k} \mid s_t = s, a_t = a\right]$

| Value Function | Interpretation in Molecule Optimization | Key Equation (Bellman Expectation) |
|---|---|---|
| $V^{\pi}(s)$ | "How good is it to have this current intermediate molecule, given my design strategy $\pi$?" | $V^{\pi}(s) = \sum_a \pi(a \mid s) \sum_{s'} P(s' \mid s,a)\,[R(s,a,s') + \gamma V^{\pi}(s')]$ |
| $Q^{\pi}(s, a)$ | "How good is it to perform this specific chemical transformation on the current molecule, then continue with strategy $\pi$?" | $Q^{\pi}(s,a) = \sum_{s'} P(s' \mid s,a)\,[R(s,a,s') + \gamma \sum_{a'} \pi(a' \mid s') Q^{\pi}(s',a')]$ |

The optimal Q-function $Q^*(s,a)$ obeys the Bellman optimality equation: $Q^*(s,a) = \sum_{s'} P(s' \mid s,a)\left[R(s,a,s') + \gamma \max_{a'} Q^*(s',a')\right]$. An optimal policy is then $\pi^*(s) = \arg\max_a Q^*(s,a)$.
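To make the Bellman optimality equation concrete, the sketch below runs tabular Q-value iteration on a tiny, hand-built MDP. Transitions are deterministic ($P = 1$), so the sum over $s'$ collapses to a single successor. The state names, edit names, and rewards are hypothetical placeholders, not real chemistry.

```python
# Hypothetical toy MDP: states stand in for molecules, actions for edits.
# Deterministic transitions (P = 1): (state, action) -> (next_state, reward).
TRANSITIONS = {
    ("A", "add_methyl"): ("B", 0.0),
    ("A", "stop"): ("END", 0.1),
    ("B", "add_ring"): ("C", 0.0),
    ("B", "stop"): ("END", 0.4),
    ("C", "stop"): ("END", 1.0),
}
TERMINAL = "END"
GAMMA = 0.9


def q_value_iteration(transitions, gamma, n_sweeps=50):
    """Repeatedly apply the Bellman optimality backup to a Q-table."""
    q = {sa: 0.0 for sa in transitions}
    for _ in range(n_sweeps):
        for (s, a), (s_next, r) in transitions.items():
            if s_next == TERMINAL:
                future = 0.0  # no value after the episode ends
            else:
                future = max(q[(s2, a2)] for (s2, a2) in transitions if s2 == s_next)
            # Q*(s, a) = R(s, a, s') + gamma * max_a' Q*(s', a')
            q[(s, a)] = r + gamma * future
    return q


def greedy_policy(q):
    """pi*(s) = argmax_a Q*(s, a)."""
    policy = {}
    for (s, a), value in q.items():
        if s not in policy or value > q[(s, policy[s])]:
            policy[s] = a
    return policy


q_star = q_value_iteration(TRANSITIONS, GAMMA)
print(greedy_policy(q_star))  # {'A': 'add_methyl', 'B': 'add_ring', 'C': 'stop'}
```

The agent forgoes the immediate 0.1 reward for stopping at state A. Continuing is worth more, because the discounted value of reaching C is 0.9² × 1.0 = 0.81.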

Experimental Protocols for RL in Molecule Optimization

A standard workflow for training a deep RL agent for molecular design involves the following detailed methodology:

Protocol 1: Policy Gradient Training with a Predictive Reward Model

- Objective: Learn a stochastic policy $\pi_\theta(a \mid s)$ (e.g., a Graph Neural Network) to generate molecules maximizing a property predicted by a pre-trained reward model $R_\phi(s)$.

- Initialization:

  - Initialize policy network parameters $\theta$ randomly.

  - Load a pre-trained property predictor $R_\phi$ (e.g., a Random Forest or NN regressor trained on QSAR data).

- Episode Simulation:

  - For episode = 1 to N:

    - Start from an initial state $s_0$ (e.g., a simple scaffold).

    - For t = 0 to T (max steps):

      - Sample an action $a_t \sim \pi_\theta(\cdot \mid s_t)$.

      - Apply the action deterministically to get the new molecule $s_{t+1}$.

      - If $s_{t+1}$ is invalid, terminate with a large negative reward.

      - If a terminal action (e.g., "stop") is chosen, proceed to reward computation.

    - The final state $s_{final}$ is the generated molecule.

- Reward Computation (at the end of each episode):

  - Compute reward $r = R_\phi(s_{final}) + \lambda \cdot \text{SAscore}(s_{final})$, where SAscore is the synthetic accessibility score (1 = easy, 10 = hard to synthesize) and $\lambda < 0$ so that the term acts as a penalty.

- Policy Update (REINFORCE):

  - Compute returns $G_t = \sum_{k=t}^{T} \gamma^{k-t} r_k$. Because the reward is received only at termination ($r_k = 0$ for $k < T$ and $r_T = r$), this reduces to $G_t = \gamma^{T-t} r$.

  - Estimate the policy gradient: $\nabla_\theta J(\theta) \approx \sum_{t=0}^{T} G_t \nabla_\theta \log \pi_\theta(a_t \mid s_t)$.

  - Update parameters: $\theta \leftarrow \theta + \alpha \nabla_\theta J(\theta)$.

- Validation: Evaluate the policy by sampling a batch of final molecules, assessing their properties with the predictor, and running computational chemistry methods (e.g., docking) on the top candidates.
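The REINFORCE update with a terminal-only reward can be sketched without a deep learning framework by using a tabular softmax policy. This is a minimal sketch under simplifying assumptions:

- The "state" is reduced to the step index.
- The token vocabulary is hypothetical.
- `toy_reward` is a placeholder for the pre-trained predictor $R_\phi$.

A real implementation would condition a neural policy on the full partial molecule.

```python
import math
import random

ACTIONS = ["C", "N", "O"]  # hypothetical token vocabulary
N_STEPS = 3                # fixed episode length (simplification)
GAMMA = 0.99
ALPHA = 0.1


def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    return [e / z for e in exps]


def toy_reward(molecule):
    """Placeholder for R_phi(s_final): fraction of nitrogen tokens."""
    return molecule.count("N") / len(molecule)


def run_episode(theta, rng):
    """Sample a_t ~ pi_theta(.|s_t) at each step; the reward arrives only at the end."""
    actions = []
    for t in range(N_STEPS):
        probs = softmax(theta[t])
        actions.append(rng.choices(range(len(ACTIONS)), weights=probs)[0])
    molecule = "".join(ACTIONS[a] for a in actions)
    return actions, toy_reward(molecule)


def reinforce_update(theta, actions, r):
    """theta <- theta + alpha * sum_t G_t * grad log pi_theta(a_t|s_t)."""
    last = len(actions) - 1
    for t, a in enumerate(actions):
        g_t = GAMMA ** (last - t) * r  # G_t = gamma^(T - t) * r (terminal-only reward)
        probs = softmax(theta[t])
        for b in range(len(ACTIONS)):
            # Gradient of log softmax w.r.t. logit b: 1[a == b] - pi(b|s_t).
            theta[t][b] += ALPHA * g_t * ((1.0 if a == b else 0.0) - probs[b])


def train(n_episodes=2000, seed=0):
    rng = random.Random(seed)
    theta = [[0.0] * len(ACTIONS) for _ in range(N_STEPS)]  # logits per step
    for _ in range(n_episodes):
        actions, r = run_episode(theta, rng)
        reinforce_update(theta, actions, r)
    return theta


theta = train()
print([ACTIONS[max(range(len(ACTIONS)), key=row.__getitem__)] for row in theta])
```

Because every reward here is non-negative and no baseline is subtracted, sampled actions are always pushed up. This is the high-variance behavior that baselines are meant to reduce.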

Protocol 2: Q-Learning for Molecular Optimization

- Objective: Learn the optimal $Q^*(s,a)$ function using a deep Q-network (DQN).

- Replay Buffer: Initialize an experience replay buffer $D$ with capacity $C$ (e.g., $C=10^5$ transitions).

- Network Initialization: Initialize the Q-network $Q_\theta$ and a target network $Q_{\theta^-}$ with $\theta^- = \theta$.

- Training Loop (for many episodes):

  - Generate a molecule trajectory using an $\epsilon$-greedy policy derived from $Q_\theta$.

  - Store each transition $(s_t, a_t, r_t, s_{t+1}, \text{done})$ in $D$.

  - For update step = 1 to M:

    - Sample a random mini-batch of transitions from $D$.

    - Compute the target: $y = r + \gamma (1 - \text{done}) \max_{a'} Q_{\theta^-}(s', a')$.

    - Minimize the loss: $\mathcal{L}(\theta) = \mathbb{E}_{(s,a,r,s')}\left[(y - Q_\theta(s,a))^2\right]$.

    - Update $\theta$ via gradient descent.

    - Periodically soft-update the target network: $\theta^- \leftarrow \tau \theta + (1-\tau)\theta^-$, with $\tau \ll 1$.

- Inference: The final policy is $\pi(s) = \arg\max_a Q_\theta(s, a)$.
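The DQN bookkeeping steps (replay buffer, target $y$, loss step, soft target update) can be sketched with dictionaries standing in for $Q_\theta$ and $Q_{\theta^-}$. This is an assumption made so the example runs without a deep learning library. In the tabular case, a gradient step on $\tfrac{1}{2}(y - Q(s,a))^2$ reduces to moving $Q(s,a)$ toward $y$. The state and action names are hypothetical.

```python
import random
from collections import deque

GAMMA = 0.9


class ReplayBuffer:
    """Fixed-capacity buffer; the oldest transitions are evicted first."""

    def __init__(self, capacity):
        self.buffer = deque(maxlen=capacity)

    def push(self, s, a, r, s_next, done):
        self.buffer.append((s, a, r, s_next, done))

    def sample(self, batch_size, rng):
        # Uniform sampling decorrelates consecutive steps of a trajectory.
        return rng.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)


def td_target(r, s_next, done, q_target, actions):
    """y = r + gamma * (1 - done) * max_a' Q_target(s', a')."""
    best_next = max(q_target.get((s_next, a), 0.0) for a in actions)
    return r + GAMMA * (1.0 - float(done)) * best_next


def dqn_update(q_online, q_target, batch, actions, lr=0.1):
    """Tabular stand-in for one gradient step on (y - Q(s, a))^2."""
    for s, a, r, s_next, done in batch:
        y = td_target(r, s_next, done, q_target, actions)
        q = q_online.get((s, a), 0.0)
        q_online[(s, a)] = q + lr * (y - q)


def soft_update(q_target, q_online, tau=0.01):
    """Polyak averaging: theta_minus <- tau * theta + (1 - tau) * theta_minus."""
    for key in set(q_online) | set(q_target):
        q_target[key] = tau * q_online.get(key, 0.0) + (1.0 - tau) * q_target.get(key, 0.0)


rng = random.Random(0)
buffer = ReplayBuffer(capacity=100_000)
buffer.push("A", "add", 0.0, "B", False)  # one stored transition
q_online, q_target = {}, {("B", "stop"): 1.0}
dqn_update(q_online, q_target, buffer.sample(1, rng), actions=["add", "stop"])
soft_update(q_target, q_online, tau=0.01)
```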

Visualizing the RL-MDP Framework for Chemistry

Diagram Title: RL-MDP Cycle for Molecular Design

The Scientist's Toolkit: Key Research Reagent Solutions

This table details essential computational "reagents" for implementing RL for molecule optimization.

| Tool/Component | Function in the RL Experiment | Example Libraries/Software |
|---|---|---|
| Molecular Representation | Encodes the chemical structure (state $s_t$) into a machine-readable format for the RL agent. | RDKit (SMILES, fingerprints), Deep Graph Library (DGL) for graphs, SELFIES. |
| Action Space Definition | Defines the set of permissible chemical transformations ($A$) the agent can perform. | Molecular editing rules (e.g., BRICS), reaction templates, fragment libraries. |
| Reward Model/Predictor | Provides the reward signal $r_t$, often a surrogate for expensive experimental assays. | Pre-trained QSAR models (scikit-learn, XGBoost), docking scores (AutoDock Vina), physical property calculators. |
| RL Algorithm Core | The implementation of the policy or value function optimization algorithm. | Stable-Baselines3, Ray RLlib, custom PyTorch/TensorFlow implementations of DQN, PPO, etc. |
| Environment Simulator | The computational engine that applies actions, checks validity, and returns new states, enforcing $P(s' \mid s,a)$. | Custom Python environment using RDKit for chemical validity, conformer generation, and property calculation. |
| Experience Replay Buffer | Stores past transitions $(s_t, a_t, r_t, s_{t+1})$ for stable off-policy training, decorrelating sequential data. | Custom circular buffer or implementation within RL libraries. |
| Policy/Value Network | The parameterized function approximator (e.g., neural network) representing $\pi_\theta$ or $Q_\theta$. | Multilayer Perceptrons (MLPs), Graph Neural Networks (GNNs), Transformers. |
| Orchestration & Analysis | Manages training loops, hyperparameter sweeps, logs results, and visualizes generated molecular series. | MLflow, Weights & Biases (W&B), Jupyter Notebooks, matplotlib, seaborn. |

Building a Molecular AI: A Step-by-Step Guide to DRL Frameworks and Real-World Applications

This document constitutes a core chapter in the broader thesis, Introduction to Deep Reinforcement Learning for Molecule Optimization Research. It provides an in-depth technical exposition of three pivotal Reinforcement Learning (RL) algorithms—Policy Gradients, Actor-Critic, and Proximal Policy Optimization (PPO)—and their specific adaptations and applications in the domain of de novo molecular generation and optimization. The focus is on framing molecular design as a sequential decision-making process, where an agent (the "chemist") constructs a molecule step-by-step (e.g., atom by atom or fragment by fragment) to maximize a reward signal encoding desired chemical properties.

Foundational Concepts: Molecular Design as an MDP

In RL-based molecular generation, the process is formalized as a Markov Decision Process (MDP):

- State (s_t): The partially constructed molecular graph or its representation (e.g., SMILES string, fingerprint, graph embedding) at step t.

- Action (a_t): The next step in construction (e.g., adding a specific atom/bond, attaching a predefined fragment, or terminating generation).

- Policy (π(a|s)): A stochastic strategy, parameterized by a neural network, that defines the probability of taking action a in state s. This is the generative model.

- Reward (R): A (often sparse) scalar signal provided upon completion of a molecule (episode termination). It quantifies the success of the generated molecule against objectives like drug-likeness (QED), synthetic accessibility (SA), binding affinity (docking score), or multi-objective combinations.

The objective is to find the optimal policy π* that maximizes the expected cumulative reward, J(θ) = E_{τ∼π_θ}[R(τ)], where τ is a trajectory (sequence of states and actions) culminating in a complete molecule.

Algorithmic Deep Dive

Policy Gradients (REINFORCE)

Core Idea: Directly optimize the policy parameters θ by ascending the gradient of the expected reward. The gradient is estimated from sampled trajectories.

Algorithm (REINFORCE for Molecules):

- Initialize policy network π_θ (e.g., an RNN for SMILES generation or a Graph Neural Network).

- For each of N iterations:

  a. Generate a batch of M molecule trajectories τ^i by sampling actions from π_θ until termination.

  b. For each trajectory τ^i, compute the total reward R(τ^i).

  c. Estimate the policy gradient: ∇_θ J(θ) ≈ (1/M) Σ_i [R(τ^i) · Σ_t ∇_θ log π_θ(a_t^i | s_t^i)].

  d. Update parameters: θ ← θ + α · ∇_θ J(θ).

Molecular Adaptation: The key challenge is the high variance of the gradient estimate, caused by the vast action space and the sparse reward. Reward shaping (e.g., intermediate rewards for valid substructures) and baseline subtraction are critical.
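Baseline subtraction replaces R(τ^i) in the gradient estimate with the advantage R(τ^i) − b. The sketch below uses an exponential moving average of past batch-mean rewards as b, one common and simple choice. The momentum value is an assumption. Because b is computed only from earlier batches, it does not depend on the actions being scored, so the estimate stays unbiased.

```python
def advantages_with_baseline(rewards, baseline, momentum=0.9):
    """Return (advantages, new_baseline) for one batch of trajectory rewards.

    advantage_i = R(tau_i) - b, where b was built from earlier batches only.
    The baseline is then updated as an exponential moving average of batch means.
    """
    advantages = [r - baseline for r in rewards]
    batch_mean = sum(rewards) / len(rewards)
    new_baseline = momentum * baseline + (1.0 - momentum) * batch_mean
    return advantages, new_baseline


# Each advantage then takes the place of R(tau^i) in the REINFORCE estimate.
advs, b = advantages_with_baseline([0.2, 0.6, 0.9], baseline=0.5)
```

Molecules scoring below the running average now receive negative weight, so their actions are made less likely instead of merely less reinforced.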

Actor-Critic Methods

Core Idea: Extend Policy Gradients by introducing a Critic network (value function V_ϕ(s)) to reduce variance. The Critic evaluates the "goodness" of a state, providing a baseline for the Actor (the policy π_θ).

Algorithm (Basic Actor-Critic):

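- Initialize Actor π_θ and Critic V_ϕ.

- For each step in a trajectory:

  a. In state s_t, sample action a_t ∼ π_θ(·|s_t).

  b. Execute a_t and observe the next state s_{t+1} and reward r_t. The reward is zero except at the terminal step, where it equals the molecule's reward R.

  c. Compute the temporal difference (TD) error: δ_t = r_t + γV_ϕ(s_{t+1}) − V_ϕ(s_t), where γ is the discount factor and V_ϕ(s_{t+1}) = 0 if s_{t+1} is terminal.

  d. Critic Update: Minimize the TD loss L(ϕ) = δ_t², treating the target r_t + γV_ϕ(s_{t+1}) as a constant.

  e. Actor Update: Adjust θ using the TD error as the advantage estimate: ∇_θ J(θ) ≈ δ_t · ∇_θ log π_θ(a_t | s_t).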
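A single actor-critic step can be sketched with a tabular softmax Actor and a tabular Critic. The dictionaries stand in for the networks π_θ and V_ϕ, and the state and action labels are hypothetical. The TD error δ_t drives both updates.

```python
import math


def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    return [e / z for e in exps]


def actor_critic_step(theta, v, s, a, r, s_next, done,
                      gamma=0.99, lr_actor=0.1, lr_critic=0.1):
    """One tabular actor-critic update; returns the TD error delta_t.

    theta: dict state -> list of action logits (Actor, softmax policy)
    v:     dict state -> value estimate (Critic)
    """
    v_next = 0.0 if done else v.get(s_next, 0.0)
    delta = r + gamma * v_next - v.get(s, 0.0)  # delta_t = r_t + gamma*V(s_{t+1}) - V(s_t)
    # Critic: semi-gradient step that shrinks delta_t^2 (target held fixed).
    v[s] = v.get(s, 0.0) + lr_critic * delta
    # Actor: theta += lr * delta_t * grad log pi(a_t|s_t);
    # for softmax logits the gradient is 1[a == b] - pi(b|s_t).
    probs = softmax(theta[s])
    for b, p in enumerate(probs):
        theta[s][b] += lr_actor * delta * ((1.0 if b == a else 0.0) - p)
    return delta


theta = {"s0": [0.0, 0.0]}  # one hypothetical state with two actions
v = {}
delta = actor_critic_step(theta, v, "s0", a=1, r=1.0, s_next=None, done=True)
```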

Molecular Adaptation: The Critic learns to predict the expected final reward from any intermediate molecular state, guiding the Actor more efficiently than a monolithic trajectory reward. Advanced variants use Advantage Actor-Critic (A2C) for parallel exploration.

Proximal Policy Optimization (PPO)

Core Idea: An Actor-Critic variant that constrains policy updates to prevent destructively large steps. This makes training more stable and more sample-efficient than vanilla policy gradients. It is one of the most widely used algorithms in molecular RL.

Key Innovation: The PPO-Clip objective function. It clips the probability ratio between the new policy (π_θ) and the old policy (π_θ_old), so the surrogate objective gains nothing from moving the policy too far in a single update.

Algorithm (PPO-Clip for Molecular Generation):

- Collect trajectories using the current policy π_θ_old.

- Compute advantage estimates Â_t (e.g., using Generalized Advantage Estimation, GAE) based on the Critic V_ϕ.

- Optimize the clipped surrogate objective over K epochs on the sampled data: L^{CLIP}(θ) = E_t[min(r_t(θ)Â_t, clip(r_t(θ), 1−ε, 1+ε)Â_t)], where r_t(θ) = π_θ(a_t|s_t) / π_θ_old(a_t|s_t) and ε is a small hyperparameter (e.g., 0.2).

- Simultaneously update the Critic by minimizing the MSE between V_ϕ(s_t) and the target returns.
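The clipped surrogate L^{CLIP} and the Critic's MSE loss can be computed directly from per-step log-probabilities, advantages, and returns. The sketch below does this for a plain Python batch. In a deep learning implementation the negative of L^{CLIP} would be minimized with automatic differentiation; here only the objective values are computed.

```python
import math


def ppo_clip_objective(new_logp, old_logp, advantages, eps=0.2):
    """Mean of min(r_t * A_t, clip(r_t, 1 - eps, 1 + eps) * A_t) over a batch.

    new_logp / old_logp hold log pi_theta(a_t|s_t) and log pi_theta_old(a_t|s_t).
    This quantity is maximized; training code usually minimizes its negative.
    """
    total = 0.0
    for lp_new, lp_old, adv in zip(new_logp, old_logp, advantages):
        ratio = math.exp(lp_new - lp_old)  # r_t(theta)
        clipped = min(max(ratio, 1.0 - eps), 1.0 + eps)
        total += min(ratio * adv, clipped * adv)
    return total / len(advantages)


def value_loss(values, returns):
    """MSE between Critic predictions V_phi(s_t) and target returns."""
    return sum((v - g) ** 2 for v, g in zip(values, returns)) / len(values)


# Ratio 1.5 with a positive advantage is clipped to 1.2; ratio 0.5 with a
# negative advantage is clipped to 0.8, and the pessimistic term -0.8 is kept.
obj = ppo_clip_objective([math.log(1.5), math.log(0.5)], [0.0, 0.0], [1.0, -1.0])
```

Taking the minimum makes the objective a pessimistic bound. The policy is not rewarded for pushing the ratio past 1 ± ε, but it is still penalized when a bad action becomes more likely.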

Why It Is Popular in Molecular RL: PPO is relatively robust to hyperparameters, can perform multiple optimization steps on each batch of molecules, and protects against catastrophic policy collapse. Together these make it well suited to noisy, expensive-to-evaluate molecular reward landscapes.

Comparative Analysis & Quantitative Data

Table 1: Algorithm Comparison for Molecular Generation

| Feature | REINFORCE | Actor-Critic (A2C) | PPO |
|---|---|---|---|
| Core Mechanism | Direct policy gradient using full Monte Carlo returns. | Policy gradient using the TD error as an advantage estimate. | Clipped objective to constrain policy update steps. |
| Sample Efficiency | Low (high variance). | Medium. | High (can reuse data for multiple epochs). |
| Training Stability | Low, sensitive to step size. | Medium. | High, less sensitive to hyperparameters. |
| Variance Reduction | Relies on a simple baseline (e.g., moving average). | Uses value function (Critic). | Uses value function + clipping. |
| Common Molecular Metric (e.g., QED) | Can reach high scores, but with high run-to-run variance. | More consistent improvement over epochs. | Often achieves the highest median scores in reported benchmark tasks. |
| Typical Use Case | Foundational proof of concept. | More efficient than REINFORCE for smaller action spaces. | Standard choice for de novo design with complex property objectives. |

Table 2: Typical Performance on the GuacaMol Benchmark (Simplified)

| Algorithm | Avg. Score (Top-100) on 'Medicinal Chemistry' Tasks | Time to Convergence (Relative) | Notes |
|---|---|---|---|
| REINFORCE | 0.45 - 0.65 | 1.0x (Baseline) | Highly task-dependent; requires careful reward tuning. |
| A2C | 0.60 - 0.75 | 0.7x | Faster per-epoch learning than REINFORCE. |
| PPO | 0.70 - 0.85 | 0.9x | Slower per iteration but needs fewer total iterations; robust. |

Experimental Protocol: Benchmarking PPO for Molecular Generation

Objective: Train a PPO agent to generate molecules that maximize the Quantitative Estimate of Drug-likeness (QED) score.

Materials & Model Architecture:

- Agent: SMILES-based RNN (LSTM) or Graph Neural Network (GIN).

- Action Space: Vocabulary of atoms/bonds or set of molecular fragments.

- State Representation: Hidden state of the RNN or node embeddings of the partial graph.

- Reward Function: R(molecule) = QED(molecule) + λ * ValidityPenalty. (λ tunes penalty for invalid SMILES/graphs).

- Critic Network: A separate but similar network that maps the state representation to a scalar value.

Procedure:

- Initialization: Initialize Actor (policy πθ) and Critic (Vϕ) networks with random weights.

- Data Collection: For N episodes (e.g., N=1000):

  a. Start with an empty molecule (or start token).

  b. The Actor network sequentially selects actions (next token/fragment) until a "stop" action is chosen.

  c. Store the trajectory for the complete molecule (states, actions, and rewards, which are 0 at intermediate steps).

  d. Compute the final QED reward for the valid molecule and assign it to the terminal step (or propagate the discounted reward backward).

- Advantage Estimation: For all collected trajectories, compute advantages Â_t using GAE with the current Critic network. The GAE decay parameter λ is distinct from the penalty weight λ in the reward function.

- Optimization: For K epochs (e.g., K=4):

  a. Shuffle the collected trajectory data.

  b. Compute the PPO-Clip loss for the Actor and the value function loss for the Critic on mini-batches.

  c. Update both networks using the Adam optimizer.

- Iteration: Repeat Data Collection, Advantage Estimation, and Optimization for a set number of iterations or until convergence (a plateau in average reward).

- Evaluation: Sample 1000 molecules from the final policy and report the mean/median QED, uniqueness, and novelty.
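The Advantage Estimation step can be sketched as a backward pass over one finished episode. Each TD error is accumulated with decay γλ_GAE, and the returns used as Critic targets are advantages plus values. The reward and value numbers in the usage line are hypothetical.

```python
def compute_gae(rewards, values, gamma=0.99, lam=0.95):
    """Generalized Advantage Estimation for one finished episode.

    rewards[t]: reward at step t (here 0 except at the terminal step)
    values[t]:  Critic estimate V_phi(s_t); the value after termination is 0.
    lam is the GAE decay parameter, not the reward's penalty weight.
    Returns (advantages, returns); returns are the Critic's regression targets.
    """
    advantages = [0.0] * len(rewards)
    gae = 0.0
    for t in reversed(range(len(rewards))):
        next_value = values[t + 1] if t + 1 < len(values) else 0.0
        delta = rewards[t] + gamma * next_value - values[t]  # TD error delta_t
        gae = delta + gamma * lam * gae  # A_t = delta_t + gamma * lam * A_{t+1}
        advantages[t] = gae
    returns = [a + v for a, v in zip(advantages, values)]
    return advantages, returns


# Three-step episode whose final molecule scored QED = 0.8 (hypothetical values).
adv, ret = compute_gae(rewards=[0.0, 0.0, 0.8], values=[0.3, 0.4, 0.6])
```

With λ_GAE = 1 the advantages become full Monte Carlo returns minus the baseline. With λ_GAE = 0 they reduce to one-step TD errors. Intermediate values trade bias against variance.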

Visualizations

Diagram Title: REINFORCE Workflow for Molecule Generation

Diagram Title: Actor-Critic Molecular Design Loop

Diagram Title: PPO Training Cycle for Molecules

The Scientist's Toolkit: Research Reagents & Solutions

Table 3: Essential Tools for RL-Based Molecular Generation Research

| Item / Solution | Function / Purpose | Example (Open Source) | Notes for Researchers |
|---|---|---|---|
| RL Environment | Defines the MDP: state/action spaces and reward function. | ChEMBL, ZINC (for initial libraries), GuacaMol (benchmark suite), OpenAI Gym custom env. | Must be tailored to the specific representation (SMILES, graph). |
| Policy Network | The parameterized generative model (Actor). | PyTorch/TensorFlow RNNs, DGL or PyG for Graph Neural Networks (GNNs). | GNNs are widely used for graph-based generation. |
| Value Network | The Critic that estimates state value for the baseline. | Typically a simpler feed-forward network or GNN readout layer. | Shares some feature layers with the Actor in many implementations. |
| Reward Calculator | Computes the property-based reward signal. | RDKit (for QED, SA, LogP, etc.), AutoDock Vina/gnina (for docking). | Bottleneck: docking is computationally expensive, so scaling often requires surrogate models (oracles). |
| RL Algorithm Library | Provides optimized, tested implementations of PG, A2C, PPO. | Stable-Baselines3, RLlib, Tianshou. | Stable-Baselines3 offers a well-tested PPO implementation for out-of-the-box use. |
| Molecular Metrics | Evaluates the quality, diversity, and success of generated molecules. | Internal Diversity, Novelty, Fréchet ChemNet Distance, Success Rate (@ top-k). | Crucial for reporting beyond simple reward maximization. |
| (Optional) Surrogate Model | A fast proxy (e.g., neural network) for expensive reward functions. | Custom Random Forest or DNN trained on property data. | Key for practical application when real-world evaluation is slow or costly. |

This whitepaper serves as a technical guide to designing the molecular environment for deep reinforcement learning (DRL), a cornerstone of modern molecule optimization research. The objective is to formalize the core components—action spaces, state representations, and transition rules—that enable an RL agent to navigate the vast chemical space towards molecules with optimized properties. This framework is foundational to the broader thesis of applying DRL to accelerate therapeutic discovery.

State Representations: Encoding Molecular Information

The state representation defines how a molecule is presented to the RL agent. The choice of representation significantly impacts the model's ability to learn valid and complex chemical structures.

SMILES Strings

The Simplified Molecular-Input Line-Entry System (SMILES) is a line notation encoding molecular structure as a string of ASCII characters.

- Advantages: Simple, compact, and compatible with many cheminformatics tools. Amenable to sequence-based models (e.g., RNNs, Transformers).

- Disadvantages: A single molecule can have multiple valid SMILES, creating redundancy. Small edits to the string can yield syntactically invalid SMILES or large, unintended structural changes.

Molecular Graphs

A molecule is represented as a graph $G = (V, E)$, where atoms are nodes $V$ and bonds are edges $E$.

- Advantages: Naturally captures molecular topology. Suitable for graph neural networks (GNNs), which excel at learning over relational data.

- Disadvantages: Requires more complex neural network architectures and processing.
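A minimal sketch of the graph representation is shown below, assuming heavy atoms only with hydrogens left implicit. This is the usual convention, but the `MolGraph` class is hypothetical rather than any library's API. In a GNN pipeline, per-node and per-edge feature vectors would be attached to this structure.

```python
from dataclasses import dataclass, field


@dataclass
class MolGraph:
    """Heavy-atom molecular graph G = (V, E); hydrogens are left implicit."""

    atoms: list = field(default_factory=list)  # V: element symbol per node index
    bonds: dict = field(default_factory=dict)  # E: frozenset({i, j}) -> bond order

    def add_atom(self, element):
        self.atoms.append(element)
        return len(self.atoms) - 1

    def add_bond(self, i, j, order=1):
        self.bonds[frozenset((i, j))] = order

    def neighbors(self, i):
        return sorted(j for edge in self.bonds if i in edge for j in edge if j != i)

    def adjacency_matrix(self):
        n = len(self.atoms)
        adj = [[0] * n for _ in range(n)]
        for edge, order in self.bonds.items():
            i, j = tuple(edge)
            adj[i][j] = adj[j][i] = order
        return adj


# Ethanol (SMILES "CCO") as a graph: C0-C1-O2.
ethanol = MolGraph()
c0, c1, o2 = ethanol.add_atom("C"), ethanol.add_atom("C"), ethanol.add_atom("O")
ethanol.add_bond(c0, c1)
ethanol.add_bond(c1, o2)
print(ethanol.adjacency_matrix())  # [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
```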

3D Geometric Representations

Encodes the spatial coordinates (conformation) of atoms, providing information on bond angles, torsions, and non-covalent interactions.

- Advantages: Critical for predicting properties dependent on 3D structure, such as binding affinity or solubility.

- Disadvantages: Computationally expensive. A molecule has many possible conformers, complicating state definition.

Table 1: Comparison of Primary Molecular State Representations

| Representation | Data Format | Typical Model Architecture | Key Advantage | Primary Limitation |
|---|---|---|---|---|
| SMILES | Sequential string (ASCII) | RNN, Transformer | Simplicity & speed | Non-unique; syntactic fragility |
| Molecular Graph | Attributed graph (V, E) | Graph Neural Network (GNN) | Natural topology encoding | Higher computational cost |
| 3D Geometry | Point cloud/tensor (coordinates, features) | SE(3)-Equivariant Network | Captures stereochemistry & shape | Conformer ambiguity; high cost |

Action Spaces: Defining Molecular Modifications

The action space defines the set of operations an agent can perform to modify the current molecular state. Design choices balance expressivity, validity, and learning complexity.

Bond-based Actions

The agent modifies existing bonds (e.g., change bond order from single to double) or adds/removes bonds between existing atoms.

- Protocol: The action is typically a tuple `(atom_i_index, atom_j_index, action_type)`, where `action_type` ∈ {`add_single`, `add_double`, `remove_bond`, etc.}. Validity checks must ensure atoms exist and actions respect valency rules.

Atom-based Actions

The agent adds a new atom (with a specified element) to the existing structure or removes an existing atom.

- Protocol: For addition, the action can be `(new_atom_type, connected_atom_index, new_bond_type)`. A canonicalization step (e.g., using RDKit) is often applied post-modification to ensure a standard representation.
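The bond-based and atom-based action tuples described above can be sketched as functions that return a new structure or `None` when the valency check fails. The `MAX_VALENCE` table is a simplifying assumption: it covers only a few neutral elements and ignores charges, aromaticity, and hypervalent S/P. Canonicalization is omitted. A real environment would delegate these checks to RDKit sanitization.

```python
# Hypothetical maximum valences for a few neutral elements.
MAX_VALENCE = {"C": 4, "N": 3, "O": 2, "F": 1}
BOND_ORDER = {"add_single": 1, "add_double": 2, "add_triple": 3}


def used_valence(bonds, i):
    """Sum of explicit bond orders at atom i (implicit H fill the rest)."""
    return sum(order for edge, order in bonds.items() if i in edge)


def valence_ok(atoms, bonds, indices):
    return all(used_valence(bonds, k) <= MAX_VALENCE[atoms[k]] for k in indices)


def apply_bond_action(atoms, bonds, i, j, action_type):
    """Bond-based action (i, j, action_type); returns new bonds or None if invalid."""
    if i == j or not (0 <= i < len(atoms) and 0 <= j < len(atoms)):
        return None
    key = frozenset((i, j))
    new_bonds = dict(bonds)
    if action_type == "remove_bond":
        if key not in new_bonds:
            return None
        del new_bonds[key]
        return new_bonds
    if action_type not in BOND_ORDER:
        return None
    new_bonds[key] = BOND_ORDER[action_type]  # create the bond or change its order
    return new_bonds if valence_ok(atoms, new_bonds, (i, j)) else None


def apply_atom_action(atoms, bonds, new_atom_type, connected_atom_index, new_bond_type):
    """Atom-based action; new_bond_type is a bond order (1, 2 or 3)."""
    if (new_atom_type not in MAX_VALENCE or new_bond_type not in (1, 2, 3)
            or not 0 <= connected_atom_index < len(atoms)):
        return None
    new_atoms = atoms + [new_atom_type]
    new_index = len(new_atoms) - 1
    new_bonds = dict(bonds)
    new_bonds[frozenset((connected_atom_index, new_index))] = new_bond_type
    if not valence_ok(new_atoms, new_bonds, (connected_atom_index, new_index)):
        return None
    return new_atoms, new_bonds


atoms = ["C", "C", "O"]  # ethanol heavy atoms
bonds = {frozenset((0, 1)): 1, frozenset((1, 2)): 1}
print(apply_bond_action(atoms, bonds, 1, 2, "add_double") is not None)  # True (C=O)
print(apply_bond_action(atoms, bonds, 0, 2, "add_triple"))  # None (O over valence)
```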

Scaffold-based / Fragment-based Actions

The agent performs larger, pharmacophorically meaningful changes by attaching, linking, or replacing predefined molecular fragments or scaffolds.

- Protocol: A library of validated fragments (e.g., from BRICS fragmentation) is defined. An action selects a fragment and a specific attachment point on the current molecule. This improves synthetic accessibility and exploration efficiency.

Table 2: Characteristics of Action Space Paradigms

| Action Space | Granularity | Chemical Validity Rate | Exploration Efficiency | Synthetic Accessibility (SA) |
|---|---|---|---|---|
| Bond-based | Atomic | Low (requires strict rules) | Low (small steps) | Variable |
| Atom-based | Atomic | Medium | Medium | Often Low |
| Scaffold-based | Macro | High (if fragments are valid) | High (large steps) | High (if fragments are SA-friendly) |

Transition Rules: Ensuring Validity and Guiding Exploration

Transition rules govern the application of an action to a state to produce a new state. They are crucial for enforcing chemical rules and incorporating domain knowledge.

Validity Enforcement

A deterministic function applies the action and then checks/adjusts the resulting molecule.

- Methodology:

- Apply Action: Attempt the structural change in memory.

- Sanitize: Use a toolkit like RDKit to sanitize the molecule (adjust hydrogens, check valencies, aromatization).

- Validity Check: If sanitization fails or creates an impossible structure (e.g., radical atoms), the transition is invalid. The episode may then terminate, or a negative reward may be given.

- Canonicalize: Convert the valid molecule to a canonical representation (e.g., canonical SMILES) to define the new state uniquely.

Reward Shaping as a Soft Rule

Reward functions incorporate domain knowledge to guide transitions toward desirable regions.

- Protocol: The reward $R(s, a, s')$ is computed as a weighted sum of multiple objectives:

$$R = w_1 \cdot \text{PropertyScore}(s') + w_2 \cdot \text{SAScore}(s') - w_3 \cdot \text{SimilarityPenalty}(s, s')$$

Here PropertyScore is the primary objective (e.g., QED, binding energy). SAScore rewards synthetic accessibility; it must be scaled so that higher values mean easier synthesis, the reverse of the raw 1-10 SA score. SimilarityPenalty encourages or discourages drastic exploration, depending on how it is defined.
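The shaped reward can be sketched as a small function. Two choices here are assumptions, not prescriptions: SimilarityPenalty is taken as 1 − Tanimoto(s, s'), which penalizes large jumps away from the current molecule, and the weights are hypothetical defaults. The raw SA score is mapped so that higher means easier to make.

```python
def normalize_sa(sa_raw):
    """Map the raw SA score (1 = easy ... 10 = hard) to [0, 1], higher = easier."""
    return 1.0 - (sa_raw - 1.0) / 9.0


def shaped_reward(property_score, sa_raw, tanimoto_to_previous, w1=1.0, w2=0.5, w3=0.2):
    """R = w1 * PropertyScore(s') + w2 * SAScore(s') - w3 * SimilarityPenalty(s, s').

    Hypothetical choice: SimilarityPenalty = 1 - Tanimoto(s, s'), which
    discourages large jumps away from the current molecule.
    """
    similarity_penalty = 1.0 - tanimoto_to_previous
    return w1 * property_score + w2 * normalize_sa(sa_raw) - w3 * similarity_penalty


# A drug-like (QED 0.8), easy-to-make (SA 2.5) edit that stays close to s (Tanimoto 0.7).
r = shaped_reward(property_score=0.8, sa_raw=2.5, tanimoto_to_previous=0.7)
```

Flipping the sign of `w3`, or defining the penalty as the Tanimoto similarity itself, would instead reward exploration away from $s$.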

Title: DRL Molecular Environment Transition Logic

Experimental Protocol: A Standardized DRL Molecule Optimization Workflow

A typical experimental pipeline integrating the above components is outlined below.

- Environment Setup: Implement the molecular environment class (e.g., using the OpenAI Gym interface) with `step()` and `reset()` methods.

- State Initialization: `reset()` returns the initial molecular state (e.g., a random valid SMILES or a specific scaffold).

- Action Selection: The agent (e.g., a PPO or DQN policy) processes the state and selects an action from the defined space.

- State Transition: The environment's `step(action)` function:

  a. Applies the action using the chosen chemistry toolkit.

  b. Runs sanitization and validity checks (transition rules).

  c. If the result is invalid, terminates the episode with a negative reward.

  d. If valid, canonicalizes the new molecule to create $s'$.

- Reward Calculation: Calculates the multi-objective reward $R(s, a, s')$.

- Termination Check: Checks whether the episode length exceeds the maximum or a target property threshold is met.

- Learning: The tuple `(s, a, r, s', done)` is stored and used to update the agent's policy network. Off-policy agents such as DQN use a replay buffer; on-policy agents such as PPO use a rollout buffer.

- Evaluation: Periodically, the trained policy is run from novel starting points to generate new molecules, which are evaluated on held-out property predictors and for diversity.
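The `reset()`/`step()` contract from this workflow can be sketched without RDKit or Gym installed. The "molecule" is a toy character string, not real SMILES. `is_valid` and `score` are hypothetical placeholders for sanitization and the multi-objective reward. `step` returns the classic Gym 4-tuple `(state, reward, done, info)`; Gymnasium splits `done` into `terminated` and `truncated`.

```python
class ToyMoleculeEnv:
    """Gym-style reset()/step() over a toy string 'molecule' (not real SMILES)."""

    actions = ["C", "N", "O", "STOP"]

    def __init__(self, start="C", max_steps=5, invalid_penalty=-1.0):
        self.start = start
        self.max_steps = max_steps
        self.invalid_penalty = invalid_penalty
        self.state, self.steps = start, 0

    @staticmethod
    def is_valid(mol):
        # Placeholder for RDKit sanitization: forbid adjacent heteroatoms.
        return all(not (a in "NO" and b in "NO") for a, b in zip(mol, mol[1:]))

    @staticmethod
    def score(mol):
        # Placeholder for the multi-objective property score.
        return 0.5 * mol.count("N") + 0.3 * mol.count("O")

    def reset(self):
        self.state, self.steps = self.start, 0
        return self.state

    def step(self, action_index):
        """Return (next_state, reward, done, info)."""
        action = self.actions[action_index]
        self.steps += 1
        if action == "STOP":
            return self.state, 0.0, True, {}
        candidate = self.state + action  # a. apply the action
        if not self.is_valid(candidate):  # b./c. reject invalid results
            return self.state, self.invalid_penalty, True, {"invalid": True}
        reward = self.score(candidate) - self.score(self.state)  # improvement in score
        self.state = candidate  # d. canonicalization would happen here
        done = self.steps >= self.max_steps  # termination check
        return self.state, reward, done, {}


env = ToyMoleculeEnv()
state = env.reset()
state, reward, done, info = env.step(1)  # append "N" -> "CN", reward 0.5
```

Rewarding the per-step improvement in score makes the undiscounted episode return equal to the final score minus the starting score. This gives the agent denser feedback than a terminal-only reward.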

Title: DRL Molecule Optimization Experimental Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software Tools & Libraries for DRL in Molecule Optimization

| Item Name (Software/Library) | Category | Primary Function in Research |
|---|---|---|
| RDKit | Cheminformatics | Core chemistry operations: reading/writing SMILES, molecule sanitization, fragmentation, descriptor calculation, and 2D/3D rendering. |
| OpenAI Gym | RL Framework | Provides the standard API (reset, step, action_space, observation_space) for defining custom environments, ensuring compatibility with RL agent libraries. |
| Stable-Baselines3 | RL Algorithm | Offers reliable, PyTorch-based implementations of state-of-the-art RL algorithms (PPO, SAC, DQN) for training agents on custom environments. |
| PyTorch Geometric | Deep Learning | A library for building and training Graph Neural Networks (GNNs) on irregular graph data, essential for graph-based state/action representations. |
| DeepChem | Cheminformatics & ML | Provides high-level APIs for molecular featurization (graphs, grids), property prediction models, and molecular dataset handling. |
| BRICS | Fragmentation Method | A method for decomposing molecules into chemically meaningful, synthetically accessible fragments, used to build scaffold-based action spaces. |
| RAscore / SAscore | Synthetic Accessibility | Scores that estimate the synthetic accessibility of generated molecules (RAscore is a trained ML model; SAscore is a fragment-contribution heuristic), often used as a term in the reward function. |
| MOSES | Benchmarking Platform | A benchmarking platform with standardized datasets, metrics, and baselines to evaluate and compare generative models for molecules. |

Deep Reinforcement Learning (DRL) has emerged as a transformative paradigm in de novo molecular design. Within this framework, an agent iteratively proposes molecular structures (actions) to maximize a cumulative reward, guided by a policy network. The core challenge lies in the formulation of the reward function, which must succinctly encode the complex, multi-faceted objectives of modern drug discovery. A poorly crafted reward can lead to reward hacking and mode collapse (e.g., generating only high-potency but toxic molecules) or to a failure to learn. This guide details the technical construction of a multi-objective reward function that balances the quintessential drug discovery criteria: potency (against a target), selectivity (over anti-targets), ADMET properties (Absorption, Distribution, Metabolism, Excretion, Toxicity), and synthesizability.

Decomposing the Reward Function

The aggregate reward $R(m)$ for a molecule $m$ is typically a weighted sum of sub-rewards, a worst-case (minimum) or product scalarization of them, or a Pareto-based formulation:

$$R(m) = \sum_{i} w_i \, r_i(m) \quad \text{or} \quad R(m) = \min_{i} r_i(m) \quad \text{or} \quad R(m) = \prod_{i} r_i(m)$$

where $r_i(m)$ are normalized sub-scores for each objective and $w_i$ are tunable weights reflecting priority.
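The three aggregation rules behave very differently when one objective fails. The sketch below compares them on two hypothetical molecules with sub-scores in [0, 1] for potency, selectivity, and ADMET. The weights are illustrative only.

```python
import math


def weighted_sum(scores, weights):
    return sum(w * r for w, r in zip(weights, scores))


def worst_case(scores):
    return min(scores)


def product(scores):
    return math.prod(scores)


# Sub-scores in [0, 1] for potency, selectivity, ADMET (hypothetical values).
potent_but_toxic = [1.0, 1.0, 0.0]
balanced = [0.7, 0.7, 0.7]
weights = [0.5, 0.3, 0.2]
for name, s in [("potent_but_toxic", potent_but_toxic), ("balanced", balanced)]:
    print(name, weighted_sum(s, weights), worst_case(s), product(s))
```

The weighted sum ranks the potent but toxic molecule above the balanced one (0.8 vs. 0.7), because high potency compensates for a failed ADMET profile. The minimum and product both assign it 0. This is why the weighted sum is the form most exposed to reward hacking of the kind described earlier.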

Table 1: Core Objectives and Their Quantitative Benchmarks

| Objective | Key Metric(s) | Ideal Range (Typical Drug-like) | Normalization Function | Data Source |
|---|---|---|---|---|
| Potency | pIC50, pKi, pKd | > 7 (i.e., < 100 nM) | $r_{pot} = \text{sigmoid}\left(\frac{pXC_{50} - \text{threshold}}{\text{scale}}\right)$ | In vitro assay (e.g., SPR, biochemical) |
| Selectivity | Selectivity Index (SI = IC50(off-target)/IC50(target)), fold difference | SI > 30-fold | $r_{sel} = 1 - \exp(-\text{SI} / \text{scale})$ | Panel of related target assays |
| ADMET | | | | |
| - Solubility | LogS (aq. sol.) | LogS > -4 (log mol/L) | Piecewise linear clamp | Thermodynamic measurement |
| - Permeability | PAMPA, Caco-2, LogP | LogP 1-3, Papp > 10 × 10⁻⁶ cm/s | Gaussian kernel around optimum | In vitro permeability models |
| - Metabolic Stability | Microsomal half-life, CLint | t1/2 > 30 min, CLint < 15 µL/min/mg | Linear scaling up to threshold | Human liver microsome assays |
| - Toxicity | hERG pIC50, Ames test, HepG2 viability | hERG pIC50 < 5; Ames negative | Step/penalty function (e.g., -1 if toxic) | In vitro safety panels |
| Synthesizability | SA Score (1-10), RA Score, Accessible Synthetic Routes | SA Score < 4.5, RA Score > 0.5 | $r_{syn} = 1 - (\text{SA Score} - 1)/9$ | Retrospective synthetic analysis (RDKit, AiZynthFinder) |
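The normalization column of Table 1 can be sketched as one small function per objective. The thresholds follow the table. Every `scale`, `width`, `low`, and `high` default is a hypothetical choice that would need tuning for a real project.

```python
import math


def r_potency(pxc50, threshold=7.0, scale=0.5):
    """Sigmoid centred on the potency threshold (pXC50 = 7, i.e. 100 nM)."""
    return 1.0 / (1.0 + math.exp(-(pxc50 - threshold) / scale))


def r_selectivity(si, scale=30.0):
    """Saturating reward: approaches 1 as the selectivity index grows."""
    return 1.0 - math.exp(-si / scale)


def r_solubility(log_s, low=-6.0, high=-4.0):
    """Piecewise linear clamp: 0 at or below `low`, 1 at or above `high`."""
    return min(1.0, max(0.0, (log_s - low) / (high - low)))


def r_logp(logp, optimum=2.0, width=1.0):
    """Gaussian kernel around the permeability optimum (LogP 1-3)."""
    return math.exp(-((logp - optimum) ** 2) / (2.0 * width ** 2))


def r_herg(herg_pic50, limit=5.0):
    """Step penalty: -1 if the predicted hERG pIC50 reaches the limit, else 0."""
    return -1.0 if herg_pic50 >= limit else 0.0


def r_synth(sa_score):
    """Linear map of the SA score (1 easy ... 10 hard) to [0, 1]."""
    return 1.0 - (sa_score - 1.0) / 9.0
```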

Detailed Experimental Protocols for Reward Component Validation

Protocol 1: In Vitro Potency & Selectivity Assay (Enzyme Inhibition)

Objective: Generate quantitative pIC50 data for the primary target and related anti-targets. Reagents: See the Scientist's Toolkit (Table 2). Method:

- Prepare serial dilutions of test compound in DMSO, then in assay buffer.

- In a 384-well plate, combine enzyme, substrate, and co-factors in appropriate buffer.

- Initiate the reaction by adding pre-diluted compound. Include no-compound (full activity) and no-enzyme (background) controls.

- Incubate at RT for 30-60 min. Quench reaction as needed.

- Detect product formation via fluorescence, luminescence, or absorbance.

- Fit dose-response curves using a 4-parameter logistic model (e.g., in GraphPad Prism) to derive IC50. Convert to pIC50 = -log10(IC50), with IC50 expressed in mol/L.

- Calculate Selectivity Index (SI) for each off-target.
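The data-reduction arithmetic at the end of Protocol 1 is sketched below: the 4-parameter logistic model, the molar pIC50 conversion, and the selectivity index. Fitting the 4PL parameters to measured data would be done in curve-fitting software as described above; only the model function is shown. The unit table and example values are illustrative.

```python
import math

UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9}


def four_pl(conc, bottom, top, ic50, hill):
    """4-parameter logistic dose-response: activity at compound concentration conc."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)


def pic50(ic50, unit="nM"):
    """pIC50 = -log10(IC50 in mol/L)."""
    return -math.log10(ic50 * UNIT_TO_MOLAR[unit])


def selectivity_index(ic50_off_target, ic50_target):
    """SI = IC50(off-target) / IC50(target); both in the same unit."""
    return ic50_off_target / ic50_target


print(round(pic50(100, "nM"), 6))  # 7.0
print(selectivity_index(3000.0, 100.0))  # 30.0
```

Equivalently, SI = 10^(pIC50(target) − pIC50(off-target)). For example, a 30-fold window corresponds to a pIC50 gap of about 1.48 log units.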

Protocol 2: High-Throughput Metabolic Stability Assay (Human Liver Microsomes)

Objective: Determine intrinsic clearance (CLint) and half-life (t1/2). Method:

- Prepare incubation mix: 0.5 mg/mL HLM, 1 µM test compound in PBS with Mg2+.

- Pre-incubate for 5 min at 37°C. Initiate reaction with 1 mM NADPH.

- Aliquot samples at t = 0, 5, 15, 30, 45, 60 min into quenching solution (acetonitrile with internal standard).

- Centrifuge, analyze supernatant via LC-MS/MS.

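- Plot ln(peak area ratio) vs. time. The slope of the linear fit equals $-k$, where $k$ is the first-order elimination rate constant (min⁻¹).

- Calculate $t_{1/2} = 0.693 / k$ and scale to $\text{CLint} = k \times V_{inc} / m_{prot}$ (µL/min/mg), where $V_{inc}$ is the incubation volume (µL) and $m_{prot}$ the microsomal protein (mg). At 0.5 mg/mL HLM this is $k \times 2000$.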
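The last two steps of Protocol 2 can be sketched as a least-squares fit of ln(ratio) against time, followed by the half-life and CLint scaling. The time course below is synthetic and noise-free (k = 0.023 min⁻¹), generated only to show the arithmetic. The default protein concentration matches the 0.5 mg/mL used in the protocol.

```python
import math


def microsomal_stability(times_min, peak_area_ratios, protein_mg_per_ml=0.5):
    """Return (k, t_half, clint) from a substrate-depletion time course.

    k:      first-order elimination rate constant (1/min) = -slope of ln(ratio) vs t
    t_half: ln(2) / k in minutes (ln 2 is about 0.693)
    clint:  k * incubation volume / protein amount, in uL/min/mg,
            i.e. k * 1000 / protein_mg_per_ml
    """
    ys = [math.log(r) for r in peak_area_ratios]
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_y = sum(ys) / n
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_min, ys))
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    k = -sxy / sxx  # ordinary least-squares slope, sign flipped
    t_half = math.log(2) / k
    clint = k * 1000.0 / protein_mg_per_ml
    return k, t_half, clint


times = [0, 5, 15, 30, 45, 60]
ratios = [math.exp(-0.023 * t) for t in times]  # synthetic, noise-free data
k, t_half, clint = microsomal_stability(times, ratios)
print(round(k, 4), round(t_half, 1), round(clint, 1))  # 0.023 30.1 46.0
```

With these numbers the compound would just meet the t1/2 > 30 min criterion in Table 1. It would fail the CLint < 15 µL/min/mg criterion, which illustrates why both thresholds are tracked.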

Reward Function Architectures & Implementation

The integration of sub-rewards can follow several patterns, each with trade-offs.

Diagram Title: Multi-Objective Reward Function Architecture

Workflow for DRL-Based Optimization with Multi-Objective Reward

Diagram Title: DRL Molecule Optimization Cycle

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Experimental Validation

| Item / Reagent | Function in Context | Example Supplier / Tool |
|---|---|---|
| Recombinant Target Protein | Primary protein for potency/biochemical assays. | Thermo Fisher, Sino Biological |
| Selectivity Panel Proteins | Related off-target proteins for selectivity indexing. | Eurofins DiscoverX, Reaction Biology |
| Human Liver Microsomes (HLM) | In vitro system for metabolic stability assessment. | Corning, Xenotech |
| Caco-2 Cell Line | In vitro model for intestinal permeability prediction. | ATCC |
| hERG-Expressing Cell Line | Key cardiac safety assay for early toxicity screening. | ChanTest (Eurofins), Thermo Fisher |
| RDKit | Open-source cheminformatics toolkit for SA Score, descriptors. | Open Source |
| AiZynthFinder | Toolkit for retrosynthetic route analysis and RA Score. | Open Source (MIT) |
| PPO/DDPG Implementation | DRL algorithms for policy optimization (e.g., in Ray RLlib). | OpenAI, DeepMind frameworks |

1. Introduction and Thesis Context

This case study is situated within the broader thesis that Deep Reinforcement Learning (DRL) represents a paradigm shift in de novo molecular design, offering a principled framework for navigating vast chemical spaces toward multi-parameter optimization. Traditional virtual screening is limited to pre-enumerated libraries, while generative models often lack explicit goal-directed optimization. DRL, by framing molecule generation as a sequential decision-making process, enables the direct exploration of chemical space to discover novel, synthetically accessible kinase inhibitors with tailored properties.

2. Core DRL Framework for Molecule Design

The design process is modeled as a Markov Decision Process (MDP).

- State (s_t): The partial molecular graph or SMILES string at step t.

- Action (a_t): Adding a specific atom, bond, or molecular fragment to the current state.

- Reward (r_t): A computed score based on the final molecule's properties. A common reward shaping is:

  R(m) = w1 * pKi + w2 * SA + w3 * QED - w4 * SIM(existing)

  Here pKi is the predicted binding affinity, SA is a synthetic accessibility term (scaled so that higher values mean easier synthesis), QED is the quantitative estimate of drug-likeness, and SIM penalizes excessive similarity to known inhibitors.

- Agent: Typically a deep neural network (e.g., RNN, Graph Neural Network) trained via policy gradient methods (e.g., REINFORCE, PPO) or actor-critic architectures to maximize the expected cumulative reward.

Diagram Title: DRL Agent-Environment Loop for Molecule Generation

3. Experimental Protocol: A Standardized Workflow

- Step 1 - Problem Formulation: Define target kinase (e.g., EGFR T790M mutant). Set desired property thresholds: pKi > 8.0, SA Score < 3, QED > 0.6.

- Step 2 - Agent Initialization: Initialize a policy network (e.g., a 3-layer GRU for SMILES generation or a Message Passing Neural Network for graph generation) with random weights.

- Step 3 - Simulation & Rollout: The agent generates a batch of molecules (e.g., 1024) step-by-step from scratch.

- Step 4 - Reward Computation: Each completed molecule is evaluated using computational models.

  - Docking & Scoring: Each molecule is docked into the kinase's active site (e.g., using AutoDock Vina or Glide). The docking score is converted into a pKi prediction via a pre-calibrated linear model.

  - Property Prediction: SA Score and QED are calculated using RDKit.

  - Similarity Penalty: Tanimoto fingerprint similarity to a reference set of known inhibitors is computed.

- Step 5 - Policy Update: The policy gradient is calculated based on the rewards, and the agent's network parameters are updated to increase the probability of generating high-reward molecules.

- Step 6 - Iteration: Steps 3-5 are repeated for thousands of iterations, each processing a fresh batch of episodes, until the average reward plateaus.
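Steps 3 to 5 can be illustrated with a toy REINFORCE loop. This is a sketch, not the article's implementation: the "policy" is a tabular softmax with one logit vector per generation step (standing in for the GRU or MPNN of Step 2), the four-token vocabulary and `toy_reward` are hypothetical stand-ins for the docking and property-based reward of Step 4, and the batch-mean reward is used as a simple baseline to reduce gradient variance.

```python
import math
import random

VOCAB = ["C", "N", "O", "F"]  # hypothetical action set (tokens or fragments)
LENGTH = 3                    # fixed episode length for this toy MDP


def toy_reward(tokens):
    """Hypothetical stand-in for the property-based reward: fraction of 'N' tokens."""
    return tokens.count("N") / LENGTH


def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train(iterations=300, batch_size=32, lr=0.5, seed=0):
    rng = random.Random(seed)
    # Tabular policy: one logit vector per generation step (stand-in for an RNN or GNN).
    theta = [[0.0] * len(VOCAB) for _ in range(LENGTH)]
    for _ in range(iterations):
        # Step 3: roll out a batch of episodes from scratch.
        batch = []
        for _ in range(batch_size):
            actions = [rng.choices(range(len(VOCAB)), weights=softmax(theta[t]))[0]
                       for t in range(LENGTH)]
            batch.append((actions, toy_reward([VOCAB[a] for a in actions])))
        # Step 4: score each episode; the batch-mean reward serves as a baseline.
        baseline = sum(r for _, r in batch) / batch_size
        # Step 5: REINFORCE gradient, using d log pi(a)/d theta_k = 1[k == a] - pi(k).
        grads = [[0.0] * len(VOCAB) for _ in range(LENGTH)]
        for actions, r in batch:
            advantage = r - baseline
            for t, a in enumerate(actions):
                probs = softmax(theta[t])
                for k in range(len(VOCAB)):
                    grads[t][k] += advantage * ((1.0 if k == a else 0.0) - probs[k])
        for t in range(LENGTH):
            for k in range(len(VOCAB)):
                theta[t][k] += lr * grads[t][k] / batch_size  # gradient ascent
    return theta


theta = train()
print([round(softmax(theta[t])[VOCAB.index("N")], 2) for t in range(LENGTH)])
```

After training, the probability of emitting the rewarded token approaches 1 at every step. PPO or actor-critic methods replace the plain REINFORCE update with clipped or critic-based advantage estimates, but the rollout, score, update cycle is the same.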

Diagram Title: DRL Kinase Inhibitor Design Workflow
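Step 4 converts docking scores into pKi predictions through a pre-calibrated linear model. A minimal sketch of such a calibration is an ordinary least-squares fit on known actives. The calibration pairs below are made-up numbers chosen only to illustrate the arithmetic, and `docking_to_pki` is a hypothetical helper name.

```python
from statistics import mean


def fit_linear(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


# Hypothetical calibration set: docking scores (kcal/mol, more negative = better)
# paired with experimental pKi values for known actives of the same kinase.
dock_scores = [-7.0, -8.0, -9.0, -10.0]
measured_pki = [6.0, 6.8, 7.6, 8.4]

slope, intercept = fit_linear(dock_scores, measured_pki)


def docking_to_pki(score):
    """Map a raw docking score onto the pKi scale used by the reward."""
    return slope * score + intercept


print(round(docking_to_pki(-11.0), 2))  # prints 9.2
```

The negative slope reflects that more negative docking scores indicate stronger predicted binding. Extrapolating well beyond the calibration range, as in the usage line, should be treated with caution.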

4. Key Research Reagent Solutions (In-silico Toolkit)

| Tool/Reagent | Function in the DRL Pipeline |
|---|---|
| RDKit | Open-source cheminformatics toolkit for molecule manipulation, descriptor calculation (QED), and SA Score estimation. |
| OpenMM | GPU-accelerated molecular dynamics engine for advanced binding free energy calculations (MM/PBSA, MM/GBSA). |
| AutoDock Vina / Glide | Molecular docking software for predicting binding poses and generating initial affinity scores. |
| PyTorch / TensorFlow | Deep learning frameworks for building and training the DRL agent's policy and value networks. |
| RLlib / OpenAI Gym | Libraries for scalable reinforcement learning implementations and environment standardization. |
| ZINC / ChEMBL | Public molecular databases used for pre-training the agent or as a source of known inhibitors for similarity analysis. |
| Schrödinger Suite | Commercial software platform offering integrated solutions for high-throughput docking (Glide) and physics-based scoring. |
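Environment standardization means exposing the molecule-building MDP through a fixed reset/step interface that RL libraries can drive. The sketch below mirrors the Gymnasium conventions (`reset` returns an observation and an info dict; `step` returns observation, reward, terminated, truncated, info) without importing Gymnasium. The class name, action set and scorer are hypothetical, and states are plain token strings rather than validated SMILES.

```python
from typing import Any, Callable, Dict, Optional, Tuple


class ToyMoleculeEnv:
    """Toy molecule-building MDP that mirrors the Gymnasium reset/step conventions.

    It does not import gymnasium; states are plain token strings, not validated SMILES.
    """

    ACTIONS = ["C", "N", "O", "<end>"]  # hypothetical action set

    def __init__(self, max_steps: int = 10,
                 scorer: Optional[Callable[[str], float]] = None):
        self.max_steps = max_steps
        # Hypothetical scorer standing in for docking/QED/SA models.
        self.scorer = scorer or (lambda s: s.count("N") / max(len(s), 1))
        self.state = ""
        self.steps = 0

    def reset(self, seed: Optional[int] = None) -> Tuple[str, Dict[str, Any]]:
        # seed is accepted for interface compatibility; this toy env is deterministic.
        self.state, self.steps = "", 0
        return self.state, {}

    def step(self, action: int) -> Tuple[str, float, bool, bool, Dict[str, Any]]:
        token = self.ACTIONS[action]
        self.steps += 1
        terminated = token == "<end>"
        if not terminated:
            self.state += token
        truncated = not terminated and self.steps >= self.max_steps
        # Sparse reward: only the finished molecule is scored.
        reward = self.scorer(self.state) if (terminated or truncated) else 0.0
        return self.state, reward, terminated, truncated, {}


env = ToyMoleculeEnv()
obs, info = env.reset()
for a in [1, 0, 1]:  # N, C, N
    obs, reward, terminated, truncated, info = env.step(a)
obs, reward, terminated, truncated, info = env.step(3)  # <end>
print(obs, round(reward, 3), terminated)  # prints NCN 0.667 True
```

Separating `terminated` (the agent chose to stop) from `truncated` (the step budget ran out) lets the training code treat the two endings differently when bootstrapping value estimates.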

5. Quantitative Results & Benchmarking

The following table summarizes hypothetical but representative results from a DRL study targeting EGFR, benchmarked against a conventional virtual screening (VS) approach on a library of 1M compounds.

Table 1: Performance Comparison: DRL vs. Virtual Screening for EGFR Inhibitors

| Metric | DRL-Generated Set (1000 molecules) | Virtual Screening Top-1000 | Notes |
|---|---|---|---|
| Avg. Predicted pKi | 8.7 (± 0.5) | 7.2 (± 1.1) | Higher mean & lower variance. |
| Success Rate (pKi > 8.0) | 84% | 22% | Percentage of molecules meeting primary affinity goal. |
| Avg. SA Score | 2.1 (± 0.4) | 3.5 (± 1.2) | Lower score indicates better synthetic accessibility. |
| Avg. QED | 0.78 (± 0.08) | 0.65 (± 0.15) | Higher score indicates better drug-likeness. |
| Structural Novelty | High (Tanimoto < 0.3) | Low (Tanimoto > 0.6) | Max similarity to training set/VS library. |
| In-silico Validation (MM/GBSA) | -45.2 kcal/mol (± 3.1) | -38.9 kcal/mol (± 5.6) | More favorable predicted binding free energy. |
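Summary statistics like those in Table 1 can be computed from per-molecule predictions. The sketch below, which uses a small made-up set of candidates purely for illustration, reports mean and sample standard deviation, the affinity-only success rate used in the table, and a stricter rate that requires all of the Step 1 thresholds (pKi > 8.0, SA Score < 3, QED > 0.6) at once. The `Candidate` type and function names are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean, stdev
from typing import List, Tuple


@dataclass
class Candidate:
    pki: float  # predicted pKi
    sa: float   # SA score (1 = easy, 10 = hard)
    qed: float  # QED in [0, 1]


def mean_sd(values: List[float]) -> Tuple[float, float]:
    """Mean and sample standard deviation, as in the 'mean (± SD)' cells."""
    return mean(values), stdev(values)


def affinity_success_rate(cands: List[Candidate], pki_min: float = 8.0) -> float:
    """Fraction of molecules meeting the primary affinity goal."""
    return sum(c.pki > pki_min for c in cands) / len(cands)


def all_thresholds_rate(cands: List[Candidate], pki_min: float = 8.0,
                        sa_max: float = 3.0, qed_min: float = 0.6) -> float:
    """Fraction meeting every Step 1 threshold simultaneously."""
    ok = [c.pki > pki_min and c.sa < sa_max and c.qed > qed_min for c in cands]
    return sum(ok) / len(cands)


# Made-up example set, for illustration only.
cands = [Candidate(8.5, 2.0, 0.70), Candidate(7.9, 2.5, 0.80),
         Candidate(9.1, 3.4, 0.65), Candidate(8.2, 2.9, 0.50)]
print(mean_sd([c.pki for c in cands]))
print(affinity_success_rate(cands), all_thresholds_rate(cands))  # prints 0.75 0.25
```

The gap between the two rates shows why a single-objective success rate can overstate how many molecules satisfy a multi-parameter design brief.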

6. Signaling Pathway Context for Kinase Inhibition

The therapeutic objective is to disrupt the target kinase's role in its pathogenic signaling cascade.

Diagram Title: Kinase Inhibition Blocks Pro-Survival Signaling

7. Conclusion

This case study illustrates how DRL provides a powerful and flexible framework for the de novo design of novel kinase inhibitors, directly addressing the multi-objective challenges of drug discovery. By integrating predictive models within a reward function, DRL agents can efficiently explore chemical space beyond known scaffolds, generating structurally novel candidates with optimized binding, drug-like properties, and synthetic accessibility. This approach supports the core thesis that DRL is a transformative methodology for goal-directed molecule optimization in medicinal chemistry.

This case study is framed within the broader thesis of introducing deep reinforcement learning (DRL) for molecule optimization research. A primary challenge in modern drug discovery is the optimization of lead compounds that show promising target affinity but suffer from suboptimal pharmacokinetic (PK) properties, such as poor solubility, metabolic instability, or low permeability. Traditional medicinal chemistry approaches are resource-intensive and iterative. DRL offers a paradigm shift, enabling the de novo design or systematic modification of molecular structures to satisfy multiple property objectives at once, with PK parameters serving as key terms in the agent's reward function. This guide details the technical strategies and experimental validation protocols for PK optimization, positioning DRL as an engine for navigating the vast chemical space toward drug-like candidates.

Core Pharmacokinetic Parameters & Optimization Targets

The key ADME (Absorption, Distribution, Metabolism, Excretion) properties targeted for optimization are summarized below.

Table 1: Key PK/ADME Parameters and Target Ranges for Oral Drugs

| Parameter | Description | Typical Optimization Goal | Common Experimental Assay |
|---|---|---|---|
| Aqueous Solubility | Concentration in aqueous solution at physiological pH. | >100 µM (pH 7.4) | Kinetic Solubility (UV-plate), Thermodynamic Solubility (HPLC) |
| Lipophilicity (logP/D) | Partition coefficient between octanol and water/buffer. | LogD₇.₄: 1-3 | Shake-flask method, HPLC-derived logP/D |
| Metabolic Stability | Half-life or intrinsic clearance in liver microsomes/hepatocytes. | Low CLint, t₁/₂ > 30 min | Microsomal/Hepatocyte Stability Assay |
| Permeability | Rate of compound crossing biological membranes (e.g., gut). | Caco-2 Papp (A-B) > 10 × 10⁻⁶ cm/s | Caco-2 Monolayer Assay, PAMPA |
| CYP Inhibition | Potential to inhibit major Cytochrome P450 enzymes. | IC₅₀ > 10 µM (for CYP3A4, 2D6) | Fluorescent or LC-MS/MS Probe Substrate Assay |
| Plasma Protein Binding (PPB) | Fraction of compound bound to plasma proteins. | Moderate to low (fraction unbound, fu > 5%) | Equilibrium Dialysis, Ultracentrifugation |

Deep Reinforcement Learning Framework for PK Optimization

The DRL agent is trained to modify molecular structures through a defined set of chemical transformations to improve a composite reward function (R) based on predicted PK properties.

- State (s): A representation of the current molecular graph (e.g., SMILES, fingerprint, or graph neural network embedding).

- Action (a): A predefined set of chemically valid reactions (e.g., add methyl, replace -OH with -F, form amide) applied to a specific site on the molecule.

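- Reward (R):

$$R = w_1 f(\text{Solubility}) + w_2 f(\log D) + w_3 f(\text{Metabolic Stability}) + w_4 f(\text{Synthetic Accessibility}) - P_{\text{sim}}$$

  - f(·) scales experimental or predicted values to a normalized score (for synthetic accessibility, lower raw SA scores map to higher values).

  - P_sim is a penalty applied when similarity to the starting lead falls below a threshold, which keeps proposed modifications anchored to the lead series.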
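One way to realize the normalizing function f(·) is with piecewise-linear desirability functions derived from the goals in Table 1. The sketch below is an assumption-laden illustration: the ramp and window shapes, the weights, the similarity threshold and the penalty size are all hypothetical choices, and the similarity penalty is applied when similarity to the lead falls below the threshold, following the Penalty(Similarity < Threshold) term. Solubility is scored on a log scale so that going from 1 µM to 10 µM counts as much as going from 10 µM to 100 µM.

```python
import math


def ramp(x: float, lo: float, hi: float) -> float:
    """0 at or below lo, 1 at or above hi, linear in between."""
    if x <= lo:
        return 0.0
    if x >= hi:
        return 1.0
    return (x - lo) / (hi - lo)


def window(x: float, lo: float, hi: float, margin: float) -> float:
    """1 inside [lo, hi], falling linearly to 0 over `margin` outside the window."""
    if lo <= x <= hi:
        return 1.0
    dist = lo - x if x < lo else x - hi
    return max(0.0, 1.0 - dist / margin)


def pk_reward(sol_um, logd, t_half_min, sa, sim_to_lead,
              w=(1.0, 1.0, 1.0, 0.5), sim_threshold=0.4, penalty=1.0):
    """Hypothetical composite PK reward; all shapes and constants are assumptions."""
    f_sol = ramp(math.log10(max(sol_um, 1e-3)), 0.0, 2.0)  # 1 µM -> 0, 100 µM -> 1
    f_logd = window(logd, 1.0, 3.0, margin=1.0)             # LogD7.4 target 1-3
    f_stab = ramp(t_half_min, 0.0, 30.0)                    # t1/2 >= 30 min -> 1
    f_sa = 1.0 - ramp(sa, 1.0, 10.0)                        # lower SA score is better
    r = w[0] * f_sol + w[1] * f_logd + w[2] * f_stab + w[3] * f_sa
    # Similarity penalty, applied when similarity to the lead is below threshold.
    if sim_to_lead < sim_threshold:
        r -= penalty
    return r


print(round(pk_reward(85.0, 2.5, 40.0, 3.0, sim_to_lead=0.5), 3))  # prints 3.354
```

Clipping each term to [0, 1] keeps any single property from dominating the sum once its goal is met, so the weights w₁ to w₄ express relative priorities rather than unit conversions.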

Diagram 1: DRL Agent for Molecule Optimization

Experimental Protocols for Validating DRL-Optimized Compounds

Candidate molecules generated by the DRL agent must be synthesized and experimentally validated.

Protocol 4.1: High-Throughput Kinetic Solubility Assay

- Preparation: Prepare a 10 mM DMSO stock solution of the test compound.

- Dilution: Using a liquid handler, dilute 1 µL of stock into 100 µL of phosphate-buffered saline (PBS, pH 7.4) in a 96-well plate (final [DMSO] = 1%).

- Incubation: Shake plate at 25°C for 1 hour.

- Filtration: Transfer the solution to a 96-well filter plate (e.g., 0.45 µm hydrophilic PVDF) and apply vacuum.

- Quantification: Dilute filtrate 1:1 with acetonitrile containing internal standard. Analyze by UPLC-UV at λmax of the compound. Calculate solubility from a standard curve.
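The dilution and back-calculation arithmetic of Protocol 4.1 can be checked with a short script. This sketch assumes a linear UV standard curve of the form area = slope × concentration + intercept, with standards expressed as filtrate concentrations before the 1:1 acetonitrile dilution is undone. The slope and peak area in the usage lines are made-up values.

```python
def final_concentration_um(stock_mm: float, stock_ul: float, buffer_ul: float) -> float:
    """Nominal in-well concentration (µM) after spiking DMSO stock into buffer."""
    return stock_mm * 1000.0 * stock_ul / (stock_ul + buffer_ul)


def dmso_percent(stock_ul: float, buffer_ul: float) -> float:
    """Final DMSO content (% v/v) in the well."""
    return 100.0 * stock_ul / (stock_ul + buffer_ul)


def solubility_um(peak_area, slope, intercept, dilution_factor=2.0):
    """Back-calculate filtrate concentration from a linear standard curve
    (area = slope * conc + intercept) and undo the 1:1 acetonitrile dilution."""
    return (peak_area - intercept) / slope * dilution_factor


print(round(final_concentration_um(10.0, 1.0, 100.0), 1))  # prints 99.0
print(round(dmso_percent(1.0, 100.0), 2))                  # prints 0.99
print(solubility_um(425.0, slope=10.0, intercept=0.0))     # prints 85.0
```

With 1 µL of 10 mM stock in 100 µL of buffer, the nominal top concentration is about 99 µM, so this format cannot resolve solubilities above that level; a compound that stays fully in solution reads out near the nominal concentration.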

Protocol 4.2: Metabolic Stability in Liver Microsomes

- Reaction Mix: In a 96-well incubation plate, combine:

  - 0.5 mg/mL human liver microsomes (HLM) in 100 mM potassium phosphate buffer (pH 7.4).

  - 1 µM test compound (from 100x DMSO stock).

- Pre-incubation: Pre-incubate at 37°C for 5 min.

- Initiation: Start reaction by adding NADPH regenerating system (1 mM NADP⁺, 5 mM glucose-6-phosphate, 1 U/mL G6PDH, 5 mM MgCl₂).

- Time Points: Aliquot 50 µL at t = 0, 5, 15, 30, 45, 60 min into a stop plate containing 100 µL of cold acetonitrile with internal standard.

- Analysis: Centrifuge, dilute supernatant, and analyze by LC-MS/MS. Plot ln(peak area ratio) vs. time. Calculate half-life (t₁/₂) and intrinsic clearance (CLint).
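The half-life and CLint calculation in the analysis step follows from first-order substrate depletion: the slope of ln(peak area ratio) versus time gives −k, t₁/₂ = ln 2 / k, and CLint = k × incubation volume / microsomal protein, which at 0.5 mg/mL equals k × 2000 µL/min/mg. The sketch below fits the slope by least squares; the time course is synthetic, noise-free data generated with k = 0.0175 min⁻¹, a rate that works out to the CLint of 35 µL/min/mg listed for the DRL-optimized candidate in Table 3.

```python
import math
from statistics import mean


def fit_slope(x, y):
    """Least-squares slope of y against x."""
    mx, my = mean(x), mean(y)
    return sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)


def microsomal_stability(times_min, area_ratios, protein_mg_per_ml=0.5):
    """Return (k, t_half, CLint) from a substrate-depletion time course.

    k      : first-order depletion rate (1/min) = -slope of ln(ratio) vs time
    t_half : ln(2) / k (min)
    CLint  : k * incubation volume / protein amount, in µL/min/mg
             (1000 µL of incubation per protein_mg_per_ml mg of protein)
    """
    k = -fit_slope(times_min, [math.log(r) for r in area_ratios])
    t_half = math.log(2) / k
    clint = k * 1000.0 / protein_mg_per_ml
    return k, t_half, clint


times = [0, 5, 15, 30, 45, 60]
ratios = [math.exp(-0.0175 * t) for t in times]  # synthetic, noise-free data
k, t_half, clint = microsomal_stability(times, ratios)
print(round(t_half, 1), round(clint, 1))  # prints 39.6 35.0
```

With real data, points below the assay's quantification limit are usually excluded before fitting, since they flatten the log-linear decay and bias k low.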

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for PK Property Assays

| Item | Function/Brief Explanation |
|---|---|
| Human Liver Microsomes (HLM) | Pooled subcellular fractions containing CYP enzymes for in vitro metabolic stability and inhibition studies. |
| Caco-2 Cell Line | Human colon adenocarcinoma cells that differentiate into monolayers with tight junctions, modeling intestinal permeability. |
| HT-PAMPA Lipid Membrane Plate | Pre-formulated plates for high-throughput parallel artificial membrane permeability assay, a non-cell-based permeability model. |
| NADPH Regenerating System | Enzymatic system to maintain constant NADPH levels, essential for CYP-mediated oxidation reactions in microsomal assays. |
| Equilibrium Dialysis Device | Apparatus with semi-permeable membranes to separate protein-bound and free drug for plasma protein binding studies. |
| LC-MS/MS System | Triple quadrupole mass spectrometer coupled to UPLC for sensitive, specific quantification of compounds in biological matrices. |
| Chemical Synthesis Toolkit | Automated synthesizers, solid-phase chemistry equipment, and purification systems (HPLC, flash chromatography) to produce DRL-designed compounds. |

Diagram 2: Experimental PK Screening Workflow

Case Study: Optimization of a PDE4 Inhibitor Lead

A lead compound for Phosphodiesterase 4 (PDE4) inhibition had high potency (IC₅₀ = 5 nM) but poor solubility (<1 µM) and high metabolic clearance (HLM CLint > 200 µL/min/mg).

- DRL Strategy: The reward function heavily weighted solubility and metabolic stability predictions. The agent explored fluorination, pyridine N-oxidation, and introduction of small polar groups.

- Results: After 15 policy update cycles, the top candidate showed:

  - Improved Solubility: 85 µM (pH 7.4).

  - Reduced Clearance: HLM CLint = 35 µL/min/mg.

  - Retained Potency: PDE4 IC₅₀ = 8 nM.

Table 3: Comparative Data for PDE4 Lead Optimization

| Property | Initial Lead | DRL-Optimized Candidate | Assay Method |
|---|---|---|---|
| PDE4 IC₅₀ (nM) | 5 | 8 | Enzyme Inhibition (FRET) |
| Kinetic Solubility (µM) | <1 | 85 | UV-plate, PBS pH 7.4 |
| HLM CLint (µL/min/mg) | 210 | 35 | LC-MS/MS, 0.5 mg/mL HLM |
| Caco-2 Papp (10⁻⁶ cm/s) | 15 | 22 | LC-MS/MS |
| CYP3A4 IC₅₀ (µM) | 2.5 | >20 | Fluorescent Probe |
| Predicted Human CL (mL/min/kg) | High (>25) | Moderate (15) | In vitro-in vivo extrapolation |

Integrating deep reinforcement learning into the lead optimization pipeline provides a powerful, data-driven strategy to simultaneously address multiple, often competing, pharmacokinetic objectives. By framing chemical modification as a sequential decision-making process guided by a reward function informed by both predictive models and experimental data, researchers can accelerate the discovery of compounds with a higher probability of in vivo success. This case study exemplifies the transition from heuristic-based design to an AI-optimized workflow, a core tenet of the encompassing thesis on DRL for molecular optimization.

This guide is framed within the broader thesis of applying deep reinforcement learning (DRL) to molecule optimization for drug discovery. The core challenge is to efficiently search vast chemical spaces to identify compounds with optimized properties (e.g., binding affinity, solubility, synthetic accessibility). DRL, which combines the representational power of deep learning with the decision-making framework of reinforcement learning, is emerging as a powerful paradigm for this iterative design task. This document provides a practical, technical guide to three foundational open-source toolkits—DeepChem, RLlib, and TorchDrug—that together form a robust pipeline for conducting state-of-the-art molecular optimization research.

The following table summarizes the primary function, key features, and role within the DRL-for-molecules workflow for each toolkit.

Table 1: Core Toolkit Comparison for Molecular DRL

| Toolkit | Primary Purpose | Key Features | Role in Molecular DRL Pipeline |
|---|---|---|---|
| DeepChem | Democratizing Deep Learning for Life Sciences | Curated molecular datasets (e.g., QM9, PCBA), featurization methods (GraphConv, Coulomb Matrix), standard model implementations, hyperparameter tuning. | Data Preprocessing & Initial Modeling: Handles molecule featurization, dataset splitting, and provides baseline predictive models for property estimation (the "reward" function). |