De Novo Design vs. Molecular Optimization: A Strategic Guide for AI-Driven Drug Discovery

Abstract

This article provides a comprehensive comparison of molecular optimization and de novo molecular generation for researchers and drug development professionals. It explores the foundational concepts, core methodologies, and practical applications of each paradigm. The content addresses common challenges, validation strategies, and comparative insights to guide strategic decision-making in hit-to-lead optimization, scaffold hopping, and novel chemical space exploration. By synthesizing current trends in generative AI and machine learning, this guide aims to equip scientists with the knowledge to select and implement the most effective approach for their specific drug discovery objectives.

Core Concepts Demystified: Defining Optimization and De Novo Generation in Drug Design

This technical whitepaper examines the core methodologies of molecular optimization and de novo molecular generation within computational drug discovery. These approaches represent two fundamentally different philosophies in the quest for novel therapeutic candidates.

Conceptual Framework & Core Definitions

Molecular Optimization (Iterative Refinement) is a directed search process. It begins with a known molecule (a "hit" or "lead") possessing desirable properties but requiring improvement in specific areas, such as potency, selectivity, or metabolic stability. The process involves making incremental, rational modifications to the molecular structure.

De Novo Molecular Generation (Creation from Scratch) is a constructive process. It generates entirely novel molecular structures from first principles (e.g., atomic components or molecular fragments) based solely on a set of predefined constraints and objectives, without a specific starting template.

Quantitative Comparison of Methodological Outputs

The following table synthesizes data from recent benchmarking studies (2023-2024) comparing the performance of leading optimization and generation platforms.

Table 1: Performance Metrics of Optimization vs. De Novo Generation Approaches

| Metric | Molecular Optimization (e.g., SAR Analysis, RL-based Optimization) | De Novo Generation (e.g., Generative AI, Fragment-Based Assembly) |
|---|---|---|
| Primary Objective | Improve 2-3 key parameters of a lead compound. | Explore vast chemical space for novel scaffolds meeting multi-parameter goals. |
| Typical Output Novelty | Low to Moderate (analogs, close derivatives). | High (novel scaffolds, unprecedented chemotypes). |
| Success Rate (Clinical Candidate) | ~8-12% (from lead); higher due to the known starting point. | ~1-3% (to clinical candidate); high initial attrition. |
| Computational Throughput | 10² - 10⁴ compounds evaluated per campaign. | 10⁵ - 10⁷ compounds generated per campaign. |
| Key Strength | High interpretability; preserves the known pharmacophore. | Unlocks unexplored chemical space; well suited to undrugged targets. |
| Key Limitation | Limited by the "innovation ceiling" of the starting scaffold. | Generated molecules are often synthetically intractable (only a small fraction are easily made). |
| Docking Score Improvement | +20-40% over starting lead (target-specific). | Can achieve native-like scores, but with a wider distribution. |
| QED / SA Score Profile | Incremental improvement (+0.1-0.2 in QED). | Can generate molecules with high QED (>0.9) and good SA Score (<3.5) de novo. |

Detailed Experimental Protocols

Protocol A: Iterative Refinement via Deep Reinforcement Learning (RL)

Title: Multi-Objective Lead Optimization using an Actor-Critic RL Agent.

Objective: To improve the binding affinity (ΔG) and predicted metabolic stability (HLM t₁/₂) of a lead compound over 10 design cycles.

- Environment Setup: Define the chemical space as a set of permitted structural transformations (e.g., R-group replacements at 3 sites, scaffold hopping via defined bioisosteres).

- Agent Initialization: Initialize an actor neural network (policy) and a critic network (value function). The state (Sₜ) is the current molecule's fingerprint (ECFP6) and property vector.

- Action Space: The set of all valid chemical transformations (e.g., "replace -CH₃ at R₁ with -CF₃").

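- Reward Function (R):

R = -w₁ * Δ(ΔG) + w₂ * Δ(HLM t₁/₂) - w₃ * (SA Score Penalty). Weights (w) are normalized and non-negative. Δ(ΔG) is the change in predicted binding free energy; because a more negative ΔG means tighter binding, this term is subtracted so that improved affinity increases the reward. The SA penalty is likewise subtracted.

- Iteration: For each episode (molecule):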

- Agent (Actor) selects an action (transformation) based on policy π(A|S).

- A new molecule is created, and its properties are predicted via oracle models (e.g., Random Forest for HLM, docking for ΔG).

- Reward is calculated.

- The critic network estimates the state value V(Sₜ), which serves as the baseline for the policy update.

- Policy gradients are used to update the actor network to maximize cumulative reward.

- Termination: Stop after 10 cycles or when the reward plateaus. The top 50 molecules are selected for synthesis and in vitro validation.
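The reward and update rules of Protocol A can be sketched in a few lines of Python. This is a toy under stated assumptions, not the protocol's agent: the state is collapsed to a single lead molecule, the action set is three hypothetical transformations, and a hypothetical oracle returns fixed (Δ(ΔG), Δ(HLM t₁/₂), SA penalty) values. With one state, the critic reduces to a single value estimate used as the baseline, so this is a one-step actor-critic rather than an ECFP6-based neural agent. The weights are placeholder values that sum to 1.

```python
import math
import random


def reward(d_dG, d_t_half, sa_penalty, weights=(0.5, 0.3, 0.2)):
    """R = -w1*d(dG) + w2*d(HLM t1/2) - w3*SA_penalty.

    d_dG: change in predicted binding free energy (negative = tighter binding).
    d_t_half: change in predicted HLM half-life.
    sa_penalty: non-negative synthetic accessibility penalty.
    Default weights are placeholders (normalized to sum to 1).
    """
    w1, w2, w3 = weights
    return -w1 * d_dG + w2 * d_t_half - w3 * sa_penalty


def softmax(prefs):
    m = max(prefs)  # subtract the max for numerical stability
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def train_actor_critic(oracle, n_actions, steps=500, lr_actor=0.1, lr_critic=0.1, seed=0):
    """One-step actor-critic collapsed to a single state (toy setting).

    Actor: softmax preferences over transformations.
    Critic: a single value estimate V, used as the baseline.
    """
    rng = random.Random(seed)
    prefs = [0.0] * n_actions
    value = 0.0
    for _ in range(steps):
        probs = softmax(prefs)
        action = rng.choices(range(n_actions), weights=probs)[0]
        r = reward(*oracle(action))
        td_error = r - value  # one-step episodes: the TD target is just r
        value += lr_critic * td_error  # critic update
        for a in range(n_actions):
            # d log pi(action) / d prefs[a] = 1[a == action] - pi(a)
            grad = (1.0 if a == action else 0.0) - probs[a]
            prefs[a] += lr_actor * td_error * grad  # actor (policy-gradient) update
    return softmax(prefs), value


# Hypothetical oracle outputs per transformation: (d_dG, d_t_half, sa_penalty).
# Illustrative numbers only, not predictions for any real molecule.
TOY_ORACLE = {
    0: (-1.5, 5.0, 0.5),  # e.g. "replace -CH3 at R1 with -CF3"
    1: (-0.2, 1.0, 0.2),
    2: (0.8, -2.0, 1.0),
}

probs, value = train_actor_critic(TOY_ORACLE.__getitem__, n_actions=3)
```

In a full implementation the preferences would come from a neural policy over the molecule's fingerprint and property vector, and the oracle would be the Random Forest and docking models named above.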

Protocol B: De Novo Generation via Conditional Generative Model

Title: Target-Aware De Novo Design using a Conditional Variational Autoencoder (cVAE).

Objective: To generate 10,000 novel molecules predicted to inhibit kinase X with an IC₅₀ < 100 nM and a LogP between 2 and 4.

- Data Curation: Assemble a dataset of 500,000 diverse drug-like molecules and, if available, known actives against kinase X.

- Model Training: Train a cVAE in which the encoder (E) maps a molecule (SMILES) to a latent vector (z) and the decoder (D) reconstructs it. A conditioning vector (c) encoding the target properties (e.g., predicted pIC₅₀ for kinase X, calculated LogP) is concatenated with z before decoding.

- Conditional Sampling: To generate molecules:

- Define the condition vector c = [pIC₅₀_target: >7.0, LogP_target: 3.0]. Because c is concatenated with z, each entry must be a numeric value (e.g., pIC₅₀ = 7.5 to satisfy the >7.0 goal).

- Sample random latent vectors (z) from the standard Gaussian prior, N(0, I).

- Decode the concatenated vector [z | c] to produce novel SMILES strings.

- Post-Generation Filtering: Pass generated molecules through a cascade filter:

- Step 1: Validity and uniqueness (RDKit).

- Step 2: Property filter (2 < LogP < 4, 200 < MW < 500).

- Step 3: Structural alert filter (e.g., PAINS).

- Step 4: Docking against kinase X structure (PDB: XXXX).

- Output: The top 100 ranked molecules by docking score are subject to synthetic accessibility (SA) scoring. The top 20 with SA Score < 4 are proposed for procurement or synthesis.
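The post-generation cascade and final selection can be sketched in Python as below. This assumes every property is already computed upstream: validity checking and canonicalization (e.g., with RDKit), the PAINS match, docking, and SA scoring are represented as precomputed fields of a hypothetical `Candidate` record, and docking scores follow the common convention that more negative is better.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Candidate:
    smiles: str        # assumed valid and already canonicalized (e.g., with RDKit)
    logp: float
    mw: float
    has_alert: bool    # precomputed structural-alert (e.g., PAINS) match
    dock_score: float  # assumed convention: more negative = better
    sa_score: float


def cascade(cands: List[Candidate], top_dock: int = 100, top_final: int = 20,
            sa_max: float = 4.0) -> List[Candidate]:
    # Step 1 (uniqueness): keep the first occurrence of each canonical SMILES.
    seen, unique = set(), []
    for c in cands:
        if c.smiles not in seen:
            seen.add(c.smiles)
            unique.append(c)
    # Steps 2-3: property window and structural alerts.
    passed = [c for c in unique
              if 2 < c.logp < 4 and 200 < c.mw < 500 and not c.has_alert]
    # Step 4 and Output: rank by docking score, keep the top N, then apply the SA cut.
    ranked = sorted(passed, key=lambda c: c.dock_score)[:top_dock]
    return [c for c in ranked if c.sa_score < sa_max][:top_final]
```

Applying the SA cut after docking, as the protocol does, spends docking time on molecules that may later be discarded; reversing the order is cheaper but changes which 100 molecules reach SA scoring.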

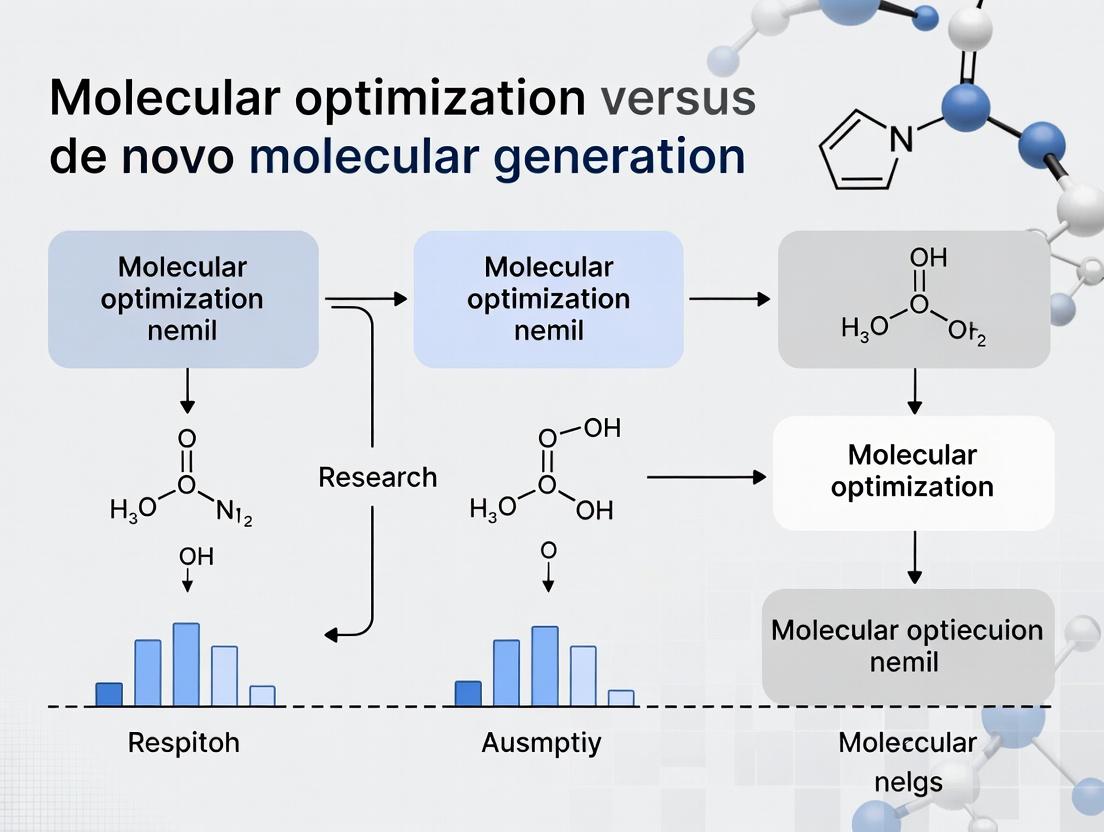

Visualizing the Core Workflows

Title: Iterative Molecular Optimization Feedback Loop

Title: De Novo Generation Linear Pipeline

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents & Resources for Molecular Design Experiments

| Item / Solution | Function / Role | Example Vendor/Resource |
|---|---|---|
| ChEMBL or PubChem BioAssay Data | Provides high-quality, structured SAR data for model training and validation. | EMBL-EBI, NCBI |
| RDKit or OpenEye Toolkit | Open-source or commercial cheminformatics libraries for molecule manipulation, fingerprinting, and descriptor calculation. | Open Source, OpenEye |
| MOE, Schrödinger Suite, or SeeSAR | Integrated molecular modeling platforms for force-field calculations, docking, and property prediction. | CCG, Schrödinger, BioSolveIT |
| Target Protein Structure (e.g., Kinase) | 3D atomic coordinates (experimental or AlphaFold2 model) essential for structure-based design and docking. | PDB, AlphaFold DB |
| REINVENT or MolDQN | Specialized open-source frameworks implementing RL for molecular design. | GitHub Repositories |
| AutoDock-GPU, Glide, or FRED | Docking software to predict ligand binding pose and affinity. | Scripps, Schrödinger, OpenEye |
| SYBA or SYNOPSIS | Synthetic accessibility predictors to triage generated molecules. | Open Source, Elsevier |
| Enamine REAL, Mcule, or Molport | Commercial libraries for virtual compound sourcing and "make-on-demand" synthesis of proposed molecules. | Enamine, Mcule, Molport |

The evolution of computational chemistry from traditional Structure-Activity Relationship (SAR) analysis to modern generative AI models represents a paradigm shift in molecular design. This paper frames this progression within the core thesis: molecular optimization is an iterative, constraint-driven refinement of a known scaffold, while de novo molecular generation is the creation of novel chemical structures from scratch, often with minimal initial constraints.

Core Conceptual Differences: Optimization vs. Generation

The table below summarizes the fundamental distinctions between the two research paradigms.

| Aspect | Molecular Optimization | De Novo Molecular Generation |
|---|---|---|
| Primary Goal | Improve specific properties (e.g., potency, selectivity) of a lead compound. | Generate entirely novel chemical structures that meet a set of desired criteria. |
| Starting Point | Requires a known active molecule or scaffold (hit/lead). | Often starts from random or seed distributions (e.g., a latent space); no explicit scaffold required. |
| Chemical Space | Explores a confined local region around the initial scaffold. | Can explore vast, uncharted regions of chemical space, potentially beyond known bioactive motifs. |
| Typical Constraints | High similarity to parent molecule, synthetically feasible modifications (e.g., R-group replacements). | Broad property profiles (QED, SA), target-specific docking scores, and novel chemical patterns. |
| Dominant Historical Methods | QSAR, Matched Molecular Pairs, Analogue-by-Catalogue, Pharmacophore modeling. | Genetic Algorithms, Fragment-based assembly, Generative AI (VAEs, GANs, Transformers). |
| Key Challenge | The "scaffold hop" limitation; inability to escape local chemical maxima. | Ensuring synthetic accessibility and realistic physicochemical profiles of generated molecules. |

Historical Progression of Methodologies

Traditional SAR & QSAR Analysis

SAR analysis involves qualitative assessment of how structural changes affect biological activity. Quantitative SAR (QSAR) formalizes this relationship via statistical models.

Experimental Protocol for a Classic 2D-QSAR Study:

- Data Curation: Assemble a congeneric series of molecules (50-500 compounds) with measured biological activity (e.g., IC50, Ki). Convert activity to pIC50/pKi.

- Descriptor Calculation: Compute molecular descriptors (e.g., logP, molar refractivity, topological indices, electronic parameters) for each compound using software like Dragon or RDKit.

- Model Building: Use multivariate regression (e.g., PLS, MLR) to correlate descriptors with activity. Split data into training (~80%) and test sets (~20%).

- Validation: Assess model performance via metrics: R² (goodness-of-fit), Q² (cross-validated predictive power), and RMSE on the external test set.

- Interpretation: Analyze model coefficients to infer which physicochemical properties enhance activity, guiding the design of the next synthetic batch.
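The activity conversion and the MLR variant of the model-building and validation steps can be sketched in plain Python. This is a minimal illustration: ordinary least squares is solved through the normal equations so the block has no dependencies, while PLS, descriptor calculation (Dragon, RDKit), and cross-validated Q² are not shown. Descriptor values in any example data would be synthetic.

```python
import math


def pic50_from_nM(ic50_nM):
    """pIC50 = -log10(IC50 in mol/L); input given in nanomolar."""
    return -math.log10(ic50_nM * 1e-9)


def fit_mlr(X, y):
    """Multiple linear regression (with intercept) via the normal equations (X^T X) b = X^T y."""
    rows = [[1.0] + list(x) for x in X]  # prepend the intercept column
    p = len(rows[0])
    # Augmented matrix [X^T X | X^T y]
    A = [[sum(r[i] * r[j] for r in rows) for j in range(p)]
         + [sum(r[i] * t for r, t in zip(rows, y))] for i in range(p)]
    # Gauss-Jordan elimination with partial pivoting
    for col in range(p):
        piv = max(range(col, p), key=lambda k: abs(A[k][col]))
        A[col], A[piv] = A[piv], A[col]
        scale = A[col][col]
        A[col] = [v / scale for v in A[col]]
        for k in range(p):
            if k != col:
                f = A[k][col]
                A[k] = [vk - f * vc for vk, vc in zip(A[k], A[col])]
    return [A[i][p] for i in range(p)]  # [intercept, b1, b2, ...]


def predict(coef, x):
    return coef[0] + sum(b * xi for b, xi in zip(coef[1:], x))


def r2_rmse(coef, X, y):
    """Coefficient of determination and RMSE of the model on (X, y)."""
    preds = [predict(coef, x) for x in X]
    mean_y = sum(y) / len(y)
    ss_res = sum((t - q) ** 2 for t, q in zip(y, preds))
    ss_tot = sum((t - mean_y) ** 2 for t in y)
    return 1.0 - ss_res / ss_tot, math.sqrt(ss_res / len(y))
```

Fitting on the ~80% training split and calling `r2_rmse` on the held-out ~20% gives the external-test metrics; the signs and magnitudes of the returned coefficients are what the interpretation step reads.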

Title: The Classic QSAR Modeling Workflow

The Rise of Generative AI Models

Generative AI models learn the probability distribution of chemical structures from large datasets and sample novel molecules from this distribution.

Experimental Protocol for Training a Conditional Molecule Generator (e.g., cVAE):

- Data Preparation: Curate a dataset (e.g., from ChEMBL) of SMILES strings (1M-10M compounds). Clean and canonicalize. Define property labels (e.g., logP, molecular weight, target activity).

- Model Architecture: Implement a Conditional Variational Autoencoder (cVAE). The encoder (RNN/Transformer) compresses a SMILES string and its properties into a latent vector z. The decoder reconstructs the SMILES from z and a target property condition.

- Training: Train the model to minimize the reconstruction loss (cross-entropy over SMILES tokens) and the Kullback–Leibler divergence loss, ensuring a smooth, regular latent space. Conditioning is enforced by concatenating property vectors to the latent codes.

- Sampling & Optimization: For de novo generation, sample random latent vectors and decode with desired property conditions. For optimization, encode a lead molecule, then interpolate in latent space or perform gradient ascent on the latent vector towards improved property predictions.

- Post-processing & Validation: Filter generated molecules for validity, uniqueness, and synthetic accessibility (SA Score). Virtually screen via docking or property predictors. Select a subset for synthesis and experimental validation.
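The training objective and conditioning described above can be written out as plain functions. In a real cVAE, `mu`, `logvar`, and `token_probs` come from the encoder and decoder networks; here they are ordinary lists so the terms can be checked by hand. The `beta` weight on the KL term (as in β-VAE) is an optional knob added for illustration, not part of the protocol.

```python
import math
import random


def reparameterize(mu, logvar, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, I) (the reparameterization trick)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, logvar)]


def kl_to_standard_normal(mu, logvar):
    """Closed-form KL( N(mu, diag(exp(logvar))) || N(0, I) )."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar))


def reconstruction_ce(token_probs, target_ids):
    """Cross-entropy of the target SMILES tokens under the decoder's per-position distributions."""
    return -sum(math.log(dist[t]) for dist, t in zip(token_probs, target_ids))


def decoder_input(z, condition):
    """Conditioning by concatenation: the decoder receives [z | c]."""
    return list(z) + list(condition)


def vae_loss(token_probs, target_ids, mu, logvar, beta=1.0):
    """Reconstruction loss + beta * KL; beta = 1 gives the standard negative ELBO."""
    return reconstruction_ce(token_probs, target_ids) + beta * kl_to_standard_normal(mu, logvar)
```

For de novo sampling, z is drawn from N(0, I) instead of from the encoder, and `decoder_input(z, c)` is passed to the decoder with the desired property values in c.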

Title: Architecture of a Conditional Molecular Generator (cVAE)

The Scientist's Toolkit: Key Research Reagents & Solutions

| Tool/Reagent | Category | Function in Experimentation |
|---|---|---|
| ChEMBL Database | Data Resource | Public repository of bioactive molecules with drug-like properties, used as the primary source for training generative models and SAR analysis. |
| RDKit | Software Library | Open-source cheminformatics toolkit for descriptor calculation, molecule manipulation, fingerprint generation, and model integration. |
| AutoDock Vina/GOLD | Software Suite | Molecular docking programs used to virtually screen generated/optimized molecules against a protein target, providing a binding affinity score. |
| SA Score | Computational Metric | Synthetic Accessibility Score (1 = easy to 10 = hard) estimates the ease of synthesizing a generated molecule, filtering out overly complex structures. |
| pIC50/pKi | Assay Metric | Negative base-10 logarithm of the half-maximal inhibitory concentration (IC50) or the inhibition constant (Ki), standardizing bioactivity data for QSAR modeling and objective functions in AI models. |
| Directed Diversity Library | Chemical Reagents | Commercially available sets of building blocks (e.g., amino acids, heterocycles) designed for rapid analog synthesis in lead optimization campaigns. |
| qPCR/ELISA Assay Kits | Biological Reagents | Standardized kits for medium-throughput biological validation of compound activity on target pathways in cellular or biochemical assays. |

Quantitative Comparison of Modern Methods

Recent benchmarking studies (2023-2024) highlight the performance of different approaches. The table below summarizes key metrics on standard tasks like optimizing DRD2 activity or QED while maintaining similarity.

| Model Type | Success Rate* (%) | Novelty | Diversity | Synthetic Accessibility (SA) Score |
|---|---|---|---|---|
| Reinforcement Learning (REINVENT) | 85-95 | Medium | Low-Medium | 2.5 - 3.5 |
| Conditional VAE | 70-85 | High | High | 3.0 - 4.0 |
| Generative Transformer (GPT-based) | 80-90 | High | High | 2.8 - 3.8 |
| Flow-Based Models | 75-88 | High | Medium-High | 3.2 - 4.2 |
| Traditional Genetic Algorithm | 60-75 | Low-Medium | Medium | 3.0 - 3.8 |

*Success Rate: Percentage of generated molecules meeting all specified objectives (e.g., activity threshold, similarity constraint). Results aggregated from benchmarks on GuacaMol, MOSES, and related frameworks.
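The success-rate definition above, together with the usual validity, uniqueness, novelty, and diversity metrics, can be sketched in Python. The sketch assumes each generated molecule arrives as a canonical SMILES (or `None` if it failed to parse) plus a fingerprint stored as a set of on-bit indices; diversity here is simply 1 minus the mean pairwise Tanimoto similarity, and is not claimed to match any benchmark suite's exact formula.

```python
from itertools import combinations


def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def benchmark_metrics(generated, training_smiles, meets_all_objectives):
    """generated: list of (canonical SMILES or None if invalid, fingerprint set)."""
    valid = [(s, fp) for s, fp in generated if s is not None]
    unique = {}
    for s, fp in valid:
        unique.setdefault(s, fp)  # first fingerprint seen for each unique SMILES
    novel = [s for s in unique if s not in training_smiles]
    pairs = list(combinations(unique.values(), 2))
    mean_sim = sum(tanimoto(a, b) for a, b in pairs) / len(pairs) if pairs else 0.0
    # Success rate: share of all generated molecules that are valid and meet every objective.
    hits = sum(1 for s, _ in valid if meets_all_objectives(s))
    n_gen, n_valid, n_unique = len(generated), len(valid), len(unique)
    return {
        "validity": n_valid / n_gen if n_gen else 0.0,
        "uniqueness": n_unique / n_valid if n_valid else 0.0,
        "novelty": len(novel) / n_unique if n_unique else 0.0,
        "internal_diversity": 1.0 - mean_sim if pairs else 0.0,
        "success_rate": hits / n_gen if n_gen else 0.0,
    }
```

Whether duplicates count toward the success rate is a design choice; this sketch counts every generated sample, matching the footnote's "percentage of generated molecules".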

Conclusion: The historical trajectory from SAR to generative AI underscores a shift from local, human-guided interpolation to global, AI-driven exploration. Molecular optimization remains crucial for lead development, operating as a precision tool. In contrast, de novo generation is a discovery engine for novel scaffolds, fundamentally expanding the accessible medicinal chemistry universe. The future lies in hybrid models that strategically combine the constraints of optimization with the creative potential of generation.

Molecular optimization and de novo molecular generation represent two fundamental, complementary paradigms in computational drug discovery. Optimization refers to the systematic modification of a known starting molecule (a "hit" or "lead") to improve its properties, such as potency, selectivity, or pharmacokinetics. De novo generation involves creating novel chemical structures from scratch, typically guided by desired target properties, without a predefined scaffold. The core thesis is that optimization is a local search within a constrained chemical space, while de novo generation is a global search across a vast, unexplored chemical universe. The choice between them hinges on the project's stage, objectives, and available data.

Quantitative Comparison of Paradigms

Table 1 summarizes the key distinctions, derived from recent literature and benchmark studies (2019-2024).

Table 1: Comparative Analysis of Molecular Optimization vs. De Novo Generation

| Aspect | Molecular Optimization | De Novo Generation |
|---|---|---|
| Primary Objective | Improve specific properties of a known scaffold. | Generate novel, drug-like structures satisfying target criteria. |
| Starting Point | One or several existing lead molecules. | Empty or seed fragments; target structure or pharmacophore. |
| Chemical Space | Explores local neighborhood of starting scaffold. | Explores vast, global chemical space (e.g., >10^60 possibilities). |
| Key Algorithms | Matched molecular pairs, QSAR, scaffold hopping, evolutionary algorithms. | Generative models (VAEs, GANs, Transformers, Diffusion Models), reinforcement learning. |
| Success Metrics | Property delta (e.g., ΔpIC50, ΔLogP), synthetic accessibility (SA) score. | Novelty, diversity, quantitative estimate of drug-likeness (QED), docking scores. |
| Typical Use Case | Lead series progression, mitigating a specific liability (e.g., hERG inhibition). | Hit identification for novel targets, scaffold discovery for undruggable targets. |
| Major Risk | Getting trapped in local minima; limited novelty. | Generating unrealistic, unsynthesizable molecules. |
| Recent Benchmark (MOSES/GuacaMol) | Focused optimization tasks show >80% success in improving 2+ properties. | Top models achieve >0.9 novelty and ~0.5 validity on standard benchmarks. |

Methodological Deep Dive

Core Experimental Protocol for Molecular Optimization

Protocol: Multi-Objective Lead Optimization using a Genetic Algorithm

- Input: A lead compound with associated property data (e.g., IC50, LogD, metabolic stability).

- Representation: Encode the molecule as a SMILES string or a graph.

- Initialization: Create a population of variants via defined mutation operations (e.g., atom/bond change, ring addition/removal, functional group replacement).

- Evaluation: Score each variant using predictive models (e.g., QSAR for activity, ADMET predictors) for key objectives (Obj1: pIC50, Obj2: -LogD, Obj3: Synthetic Accessibility).

- Selection & Evolution: Apply a multi-objective selection algorithm (e.g., NSGA-II) to select parents for the next generation. Perform crossover and mutation.

- Iteration: Repeat the Evaluation and Selection & Evolution steps for a set number of generations (e.g., 100).

- Output: A Pareto front of optimized compounds representing the best trade-offs between objectives.
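The ranking core of the NSGA-II selection step, fast non-dominated sorting plus crowding distance, can be sketched in Python. The sketch assumes every objective is oriented so that larger is better (e.g., pIC50, -LogD, -SA Score); mutation, crossover, and the predictive models are not shown.

```python
from typing import Dict, List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """a dominates b if it is no worse on every objective and strictly better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def non_dominated_fronts(scores: List[Sequence[float]]) -> List[List[int]]:
    """Fast non-dominated sorting (NSGA-II); returns fronts as lists of indices."""
    n = len(scores)
    dominated_by = [[] for _ in range(n)]  # solutions that p dominates
    domination_count = [0] * n             # how many solutions dominate p
    fronts: List[List[int]] = [[]]
    for p in range(n):
        for q in range(n):
            if dominates(scores[p], scores[q]):
                dominated_by[p].append(q)
            elif dominates(scores[q], scores[p]):
                domination_count[p] += 1
        if domination_count[p] == 0:
            fronts[0].append(p)  # first Pareto front
    i = 0
    while fronts[i]:
        nxt = []
        for p in fronts[i]:
            for q in dominated_by[p]:
                domination_count[q] -= 1
                if domination_count[q] == 0:
                    nxt.append(q)
        i += 1
        fronts.append(nxt)
    return fronts[:-1]  # drop the trailing empty front


def crowding_distance(scores: List[Sequence[float]], front: List[int]) -> Dict[int, float]:
    """Crowding distance within one front; boundary points get infinity."""
    dist = {i: 0.0 for i in front}
    for m in range(len(scores[front[0]])):
        ordered = sorted(front, key=lambda i: scores[i][m])
        lo, hi = scores[ordered[0]][m], scores[ordered[-1]][m]
        dist[ordered[0]] = dist[ordered[-1]] = float("inf")
        if hi == lo:
            continue
        for k in range(1, len(ordered) - 1):
            dist[ordered[k]] += (scores[ordered[k + 1]][m] - scores[ordered[k - 1]][m]) / (hi - lo)
    return dist
```

Parents are then chosen by front rank first and larger crowding distance second, which keeps the final Pareto front spread across the trade-offs rather than clustered.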

Core Experimental Protocol for De Novo Molecular Generation

Protocol: Target-Conditioned Molecule Generation with a Diffusion Model

- Input: A 3D protein target structure (e.g., from PDB or AlphaFold2).

- Conditioning: Extract the binding site's 3D pharmacophoric or geometric features.

- Generation: A 3D diffusion model (e.g., TargetDiff, DiffSBDD) denoises a random point cloud within the binding-site coordinates over a series of timesteps, guided by the target conditioning.

- Sampling: Multiple molecules are sampled from the generative process.

- Post-processing & Filtering: Generated 3D molecular graphs are converted to 2D structures. Apply rules-based filters (e.g., PAINS, medicinal chemistry alerts) and property filters (e.g., 200 < MW < 500, QED > 0.6).

- Validation: Top candidates are evaluated via molecular docking, binding affinity prediction (e.g., using a trained ΔΔG model), and visual inspection.

- Output: A set of novel, synthetically accessible candidate molecules predicted to bind the target.
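The forward (noising) and reverse (denoising) steps that such a model relies on can be sketched for atom coordinates alone. This follows the standard DDPM formulation with a linear β schedule and is an illustration, not the procedure of any particular model: the learned, pocket-conditioned network that predicts the noise is replaced by an `eps_hat` argument, coordinates are assumed to be flattened and expressed relative to the pocket center, and atom types, which target-aware diffusion models also generate, are ignored.

```python
import math
import random


def linear_beta_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    """Linear noise schedule; returns betas and cumulative products alpha_bar_t."""
    betas = [beta_start + (beta_end - beta_start) * t / (T - 1) for t in range(T)]
    alpha_bars, running = [], 1.0
    for b in betas:
        running *= 1.0 - b
        alpha_bars.append(running)
    return betas, alpha_bars


def noise_coords(x0, t, alpha_bars, rng):
    """Forward process q(x_t | x_0): x_t = sqrt(abar_t) x_0 + sqrt(1 - abar_t) eps."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    ab = alpha_bars[t]
    xt = [math.sqrt(ab) * a + math.sqrt(1.0 - ab) * e for a, e in zip(x0, eps)]
    return xt, eps


def reverse_step(xt, t, eps_hat, betas, alpha_bars, rng):
    """One DDPM denoising step; eps_hat would come from a trained network in practice."""
    beta, ab = betas[t], alpha_bars[t]
    mean = [(x - beta / math.sqrt(1.0 - ab) * e) / math.sqrt(1.0 - beta)
            for x, e in zip(xt, eps_hat)]
    if t == 0:
        return mean  # no noise is added at the final step
    sigma = math.sqrt(beta)  # sigma_t^2 = beta_t, one of the standard DDPM choices
    return [m + sigma * rng.gauss(0.0, 1.0) for m in mean]
```

Generation starts from pure Gaussian noise at t = T - 1 and applies `reverse_step` down to t = 0; the resulting coordinates (with predicted atom types) are what the post-processing step converts to 2D structures.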

Strategic Decision Framework: When to Use Which Approach

The decision is governed by the state of available chemical matter and project goals (see Figure 1).

Figure 1: Decision Workflow for Selecting Molecular Design Paradigm

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Research Tools for Molecular Design Experiments

| Tool/Reagent Category | Specific Example(s) | Function in Experiment |
|---|---|---|
| Commercial Compound Libraries | Enamine REAL, Mcule, ChemDiv | Source of purchasable compounds for virtual screening or validation of generated structures. |
| Benchmark Datasets | ZINC20, ChEMBL, MOSES, GuacaMol | Provide standardized training and testing data for model development and comparison. |
| Cheminformatics Toolkits | RDKit, Open Babel, OEChem | Core libraries for molecule manipulation, descriptor calculation, and fingerprint generation. |
| Generative Model Platforms | REINVENT, MolDQN, DiffLinker | Open-source or proprietary frameworks for implementing de novo generation algorithms. |
| Optimization Suites | OpenChem, DeepChem, proprietary vendor software | Provide algorithms for focused library design and lead optimization. |
| Property Prediction Services | SwissADME, pkCSM, ADMET Predictor | Web servers or software for in silico prediction of key pharmacokinetic and toxicity endpoints. |
| Synthesis Planning Tools | AiZynthFinder, ASKCOS, Reaxys | Evaluate synthetic feasibility and propose routes for generated or optimized molecules. |

Integrated Workflow and Future Outlook

The future lies in hybrid systems. An integrated workflow (Figure 2) begins with de novo generation to explore novelty, then switches to optimization for fine-tuning.

Figure 2: Hybrid De Novo Generation & Optimization Feedback Loop

Conclusion: Molecular optimization is the precision tool for refining known chemical matter, whereas de novo generation is the discovery engine for uncharted territory. The strategic integration of both, powered by the latest AI and fed by high-quality experimental data, defines the cutting edge of modern molecular design. The key is to apply global generation when novelty is paramount and local optimization when efficiency and specific property enhancement are the critical paths to a candidate.

The central thesis framing this discussion posits that molecular optimization and de novo molecular generation, while both operating within the chemical space, are fundamentally distinguished by their initial search space definition and subsequent strategic balance between exploitation and exploration.

- Molecular Optimization begins with a defined, narrow chemical subspace anchored by one or more known starting points (e.g., a hit or lead compound). The strategy is inherently exploitative, focusing on iterative modifications to improve specific properties while maintaining core structural motifs.

- De Novo Molecular Generation initiates from a vastly broader, often underspecified, region of chemical space, typically constrained only by basic chemical rules or desired properties. The strategy is exploratory, aiming to discover novel scaffolds without direct reference to existing templates.

This whitepaper provides a technical guide to the methodologies defining these search spaces and the algorithms governing the exploitation-exploration trade-off.

Quantitative Landscape of Chemical Space

The effective search space is defined by both its theoretical size and the practical constraints applied by researchers.

Table 1: Scale and Constraints of Molecular Search Spaces

| Parameter | Theoretical Chemical Space (Exploration Context) | Typical Optimization Subspace (Exploitation Context) | Common Constraints Applied |
|---|---|---|---|
| Estimated Size | >10^60 drug-like molecules | 10^2 to 10^6 analogs | N/A |
| Starting Point | Random or seed-based sampling | Defined lead compound(s) | N/A |
| Structural Diversity | High; novel scaffolds sought | Low to moderate; core scaffold preserved | Syntactic (SMILES grammar), structural (substructure filters) |
| Primary Goal | Discover novel chemotypes | Improve ADMET, potency, selectivity | Property-based (QED, SA Score, LogP ranges) |
| Key Algorithms | Generative Models (VAEs, GANs, Transformers), Genetic Algorithms | Similarity Search, Matched Molecular Pairs, Scaffold Hopping | Predictive Models (QSAR, ML Potency/ADMET) |
| Exploration/Exploitation | Exploration-heavy: Broad sampling of uncharted regions. | Exploitation-heavy: Local search near known optima. | Guides both strategies towards feasible regions. |

Methodologies & Experimental Protocols

Protocol for Exploitative Optimization (SAR Expansion)

This protocol details a standard structure-activity relationship (SAR) exploration cycle for lead optimization.

- Define Chemical Neighborhood: Using the lead molecule as centroid, generate a virtual library using enumerated reactions (e.g., amide couplings, Suzuki-Miyaura) on available sites or via matched molecular pair analysis.

- In-Silico Filtering: Apply property filters (e.g., -1 < LogP < 5, 200 < MW < 500, TPSA < 140 Ų) and structural alerts to remove undesirable chemotypes.

- Priority Ranking: Score filtered compounds using a pre-trained QSAR model for the primary target activity. Select top-ranked compounds for synthesis (typically 20-50).

- Synthesis & Assaying: Execute parallel synthesis. Subject compounds to primary in vitro assay (e.g., enzyme inhibition IC₅₀).

- Iterative Analysis: Feed new SAR data into the predictive model to refine it. Use the updated model to guide the next round of library design, focusing on regions of property space showing improvement.
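The first three steps (define the neighborhood, filter, rank) can be sketched in Python. Two substitutions keep the sketch self-contained and are assumptions rather than part of the protocol: the virtual library is a Cartesian product of hypothetical R-group lists at two sites, and properties come from an additive, Free-Wilson-style model with invented contribution values instead of a trained QSAR model. Only the LogP window is applied; MW, TPSA, and structural alerts would be further conditions in the same filter.

```python
from itertools import product

# Hypothetical Free-Wilson-style additive model: property = core value + sum of
# R-group contributions. All numbers are invented for illustration.
CORE = {"pIC50": 6.0, "LogP": 3.0}
R1 = {"H": (0.0, 0.0), "F": (0.3, 0.1), "CF3": (0.6, 1.2)}             # (d_pIC50, d_LogP)
R2 = {"OMe": (0.2, 0.0), "Cl": (0.5, 0.9), "morpholine": (0.4, -0.4)}


def enumerate_library(r1, r2):
    """Yield (substituents, predicted pIC50, predicted LogP) for every R1 x R2 combination."""
    for (n1, (p1, l1)), (n2, (p2, l2)) in product(r1.items(), r2.items()):
        yield (n1, n2), CORE["pIC50"] + p1 + p2, CORE["LogP"] + l1 + l2


def select_for_synthesis(r1, r2, logp_window=(-1.0, 5.0), top_n=20):
    """In-silico filter on the LogP window, then rank by predicted pIC50."""
    lo, hi = logp_window
    passed = [m for m in enumerate_library(r1, r2) if lo < m[2] < hi]
    return sorted(passed, key=lambda m: m[1], reverse=True)[:top_n]
```

With these made-up values, the highest predicted-potency combination (CF3 with Cl) is removed by the LogP filter, which is the kind of trade-off the iterative-analysis step is meant to surface.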

Protocol for Exploratory De Novo Generation (Generative Model Training & Sampling)

This protocol outlines the training and application of a deep generative model for de novo design.

- Data Curation: Assemble a large (>100,000 compounds), cleaned dataset of drug-like molecules (e.g., from ChEMBL) in SMILES format. Standardize and canonicalize all structures.

- Model Architecture Selection: Implement a Recurrent Neural Network (RNN), Variational Autoencoder (VAE), or a Transformer model. The model learns the probability distribution of the training set.

- Conditioning Strategy: For goal-directed generation, condition the model on desired properties using a reinforcement learning (RL) framework or a conditional VAE architecture. The property predictor (a separate neural network) provides rewards or gradients.

- Training: Train the model to reconstruct or generate valid SMILES strings. For RL-based methods, fine-tune the model using policy gradients (e.g., REINFORCE) to maximize a composite reward (e.g., R = p(Activity) + QED - SA_Score).

- Sampling & Validation: Generate a large sample of molecules (e.g., 10,000) from the trained model. Filter for novelty (maximum Tanimoto similarity to any training-set molecule < 0.3), synthetic accessibility (SA Score < 4.5), and drug-likeness. Select top candidates for in silico docking or in vitro screening.
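The composite reward, the REINFORCE objective, and the post-sampling filter in this protocol can be sketched in Python. The sketch assumes fingerprints are precomputed as sets of on-bit indices (e.g., from an ECFP implementation, not shown) and that each sample carries a precomputed SA Score. One deliberate deviation is flagged in the code: because SA Score runs from 1 to 10 while p(Activity) and QED lie in [0, 1], the sketch rescales SA Score to [0, 1] before subtracting it, whereas the formula as written uses the raw score.

```python
def composite_reward(p_active, qed, sa_score):
    # Assumption: SA Score (1 = easy, 10 = hard) is rescaled to [0, 1] so that it
    # does not swamp the other two terms, which already lie in [0, 1].
    sa_norm = (sa_score - 1.0) / 9.0
    return p_active + qed - sa_norm


def reinforce_loss(token_logprobs, reward, baseline=0.0):
    """REINFORCE loss for one sampled SMILES: -(R - b) * sum_t log pi(token_t | prefix)."""
    return -(reward - baseline) * sum(token_logprobs)


def tanimoto(a, b):
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def novelty_filter(samples, training_fps, sim_max=0.3, sa_max=4.5):
    """Keep samples whose nearest training-set neighbour has Tanimoto < sim_max and SA < sa_max."""
    kept = []
    for s in samples:
        nearest = max((tanimoto(s["fp"], t) for t in training_fps), default=0.0)
        if nearest < sim_max and s["sa"] < sa_max:
            kept.append(s)
    return kept
```

Minimizing `reinforce_loss` raises the log-probability of token sequences whose reward exceeds the baseline; the baseline (often a running mean of recent rewards) only reduces gradient variance.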

Diagrams of Core Concepts

Exploitation-Centric Molecular Optimization Cycle

Exploration-Driven De Novo Generation Workflow

Relationship: Exploration, Exploitation & Blended Strategies

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational & Experimental Tools

| Category | Tool/Reagent | Function / Purpose |
|---|---|---|
| Computational Library Design | Enamine REAL Space, WuXi GalaXi | Ultra-large, commercially accessible virtual libraries for virtual screening and idea mining. |
| Chemical Data Source | ChEMBL, PubChem | Public repositories of bioactive molecules and associated assay data for model training. |
| Generative Modeling | REINVENT, ChemBERTa, GuacaMol | Open-source tools for deep generative modeling: REINVENT (RL-driven molecule generation), ChemBERTa (pretrained chemical language model), and GuacaMol (benchmarking suite). |
| Property Prediction | RDKit (Descriptors), SwissADME, pkCSM | Calculates key molecular descriptors and predicts pharmacokinetic properties. |
| Synthesis Enabling | Building Blocks (e.g., amino acids, boronic acids), DNA-encoded libraries | High-quality reagents for rapid analog synthesis; technology for ultra-high-throughput screening. |
| In Vitro Profiling | Biochemical Assay Kits (e.g., Kinase-Glo), Caco-2 cells, hERG patch clamp | Standardized kits for primary activity screening; assays for early ADMET assessment (permeability, cardiotoxicity). |

The central thesis distinguishing molecular optimization from de novo molecular generation lies in the role of the starting point. Optimization is an iterative, knowledge-driven process that begins with a known chemical entity—a hit molecule or a privileged scaffold—and refines it toward a target product profile. In contrast, de novo generation typically starts from a blank slate or a minimal constraint set, using generative models to explore vast chemical space ab initio. This guide delves into the critical importance of the starting point in optimization campaigns, examining how initial hits, core scaffolds, and defined property profiles dictate the strategy, trajectory, and ultimate success of lead discovery and development.

Defining the Starting Point: Hits, Scaffolds, and Profiles

Hit Molecules

A hit is a compound identified through screening that exhibits a predefined level of activity against a biological target. Hits are the primary output of high-throughput screening (HTS) or virtual screening campaigns.

Scaffolds

A scaffold is the core structural framework of a molecule. Privileged scaffolds are chemotypes recurring across known bioactive compounds, offering a versatile starting point for generating novel analogs with optimized properties.

Property Profiles

A property profile is a multi-parameter set of desired characteristics, including potency (e.g., IC50), selectivity, solubility, metabolic stability, permeability, and lack of toxicity. It defines the objective function for optimization.

Table 1: Comparative Analysis of Starting Point Strategies

| Starting Point Type | Definition | Typical Source | Key Advantage | Primary Risk |
|---|---|---|---|---|
| Hit Molecule | A confirmed active from a screen. | HTS, Virtual Screen, Fragment Screen. | Validated pharmacological activity. | Often poor "drug-likeness"; requires significant optimization. |
| Privileged Scaffold | A core structure with known bioactivity relevance. | Medicinal chemistry literature, known drugs. | Higher probability of success; synthetically tractable. | Potential for lack of novelty or IP issues. |
| Property Profile | A set of target values for key parameters. | Therapeutic area requirements, prior knowledge. | Goal-oriented; reduces late-stage attrition. | May be difficult to achieve all parameters simultaneously. |

Experimental Methodologies for Hit-to-Lead Optimization

Protocol: Structure-Activity Relationship (SAR) Expansion

Objective: Systematically explore chemical space around a hit to understand SAR and improve potency.

- Analog Library Design: Using the hit's structure, generate a virtual library of analogs focusing on R-group variations, core ring modifications, and bioisosteric replacements.

- Synthesis or Sourcing: Procure compounds via parallel synthesis, purchased libraries, or contract research organizations.

- Primary Assay: Test all analogs in the primary biochemical or cell-based assay to determine IC50/EC50.

- Data Analysis: Plot potency changes against structural modifications to identify key pharmacophores and detrimental moieties.

- Iteration: Select the most promising leads (typically 10-100x more potent than the original hit) for the next round of design and synthesis.
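The fold-change criterion in the last step translates directly into pIC₅₀ units, which is how potency gains are usually tracked across SAR rounds. Since pIC₅₀ = -log₁₀(IC₅₀):

$$\Delta\mathrm{pIC_{50}} = \mathrm{pIC_{50}^{lead}} - \mathrm{pIC_{50}^{hit}} = \log_{10}\frac{\mathrm{IC_{50}^{hit}}}{\mathrm{IC_{50}^{lead}}}$$

so a 10-fold potency gain is ΔpIC₅₀ = 1 and a 100-fold gain is ΔpIC₅₀ = 2. For example, the illustrative Hit-1 to Lead-10 step in Table 2 (pIC₅₀ 5.2 to 7.1) is ΔpIC₅₀ = 1.9, roughly a 79-fold gain.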

Protocol: Scaffold Hopping

Objective: Identify novel chemotypes with similar bioactivity to a known lead, potentially improving properties or circumventing IP.

- Pharmacophore Model: Define the essential steric and electronic features responsible for biological activity from the known lead.

- Virtual Screening: Use the pharmacophore model to query large chemical databases (e.g., ZINC, Enamine REAL) for structurally distinct molecules that match the feature set.

- Similarity Searching: Employ 2D/3D molecular similarity methods (e.g., ECFP4 fingerprints, shape similarity) to find diverse matches.

- Experimental Validation: Test top virtual hits in biological assays to confirm activity transfer.
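This sketch illustrates the similarity-filtering step. It represents each fingerprint as a set of on-bit indices; in practice these could come from a cheminformatics toolkit, for example as ECFP4-like Morgan bits. It keeps pharmacophore matches that are 2D-dissimilar to the query. The 0.4 Tanimoto cutoff and the toy bit sets are assumptions for illustration only.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto (Jaccard) similarity between two sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)

def rank_hops(query_fp: set, library: dict, max_sim: float = 0.4) -> list:
    """Keep pharmacophore-matched hits that are structurally distinct from the query
    (similarity below `max_sim`), most similar first."""
    scored = [(cid, tanimoto(query_fp, fp)) for cid, fp in library.items()]
    distinct = [item for item in scored if item[1] < max_sim]
    return sorted(distinct, key=lambda item: item[1], reverse=True)

# Toy fingerprints for hits that already matched the pharmacophore query
query = {1, 2, 3, 4, 5, 6}
library = {"X": {1, 2, 3, 4, 5, 7}, "Y": {1, 2, 8, 9}, "Z": {10, 11}}
print(rank_hops(query, library))  # [('Y', 0.25), ('Z', 0.0)]
```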

Protocol: Multi-Parameter Optimization (MPO)

Objective: Balance multiple property constraints simultaneously during lead optimization.

- Profile Definition: Establish target ranges for all key parameters (e.g., pIC50 > 7, logD 2-3, clearance < 15 mL/min/kg, hERG IC50 > 10 µM).

- High-Throughput ADME/Tox Screening: Implement parallel assays for permeability (PAMPA, Caco-2), metabolic stability (microsomal/hepatocyte clearance), and early toxicity flags (hERG, cytotoxicity).

- Scoring: Apply an MPO scoring function, e.g., $\text{MPO Score} = \sum_i w_i \cdot S_i$, where $w_i$ is the weight and $S_i$ is the normalized score for property $i$, to rank compounds.

- Design Cycle: Use MPO scores to guide the next round of chemical design, prioritizing compounds with the best balanced profile.
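Below is a minimal sketch of the weighted-sum score $\sum_i w_i \cdot S_i$. It assumes simple linear desirability ramps that map each property to $S_i \in [0, 1]$. The thresholds echo the example profile (pIC50 > 7, logD 2-3, hERG IC50 > 10 µM). The ramp widths and weights are arbitrary illustrative choices, so the absolute scale will not match any particular published MPO scheme.

```python
def ramp_up(x, low, high):
    """0 below `low`, 1 above `high`, linear in between (higher is better)."""
    if x <= low:
        return 0.0
    if x >= high:
        return 1.0
    return (x - low) / (high - low)

def window(x, lo, hi, margin):
    """1 inside [lo, hi], falling linearly to 0 at `margin` outside the window."""
    if lo <= x <= hi:
        return 1.0
    dist = lo - x if x < lo else x - hi
    return max(0.0, 1.0 - dist / margin)

def mpo_score(props, weights):
    """MPO Score = sum_i w_i * S_i, with each S_i in [0, 1]."""
    scores = {
        "pIC50": ramp_up(props["pIC50"], 6.0, 7.0),
        "logD": window(props["logD"], 2.0, 3.0, margin=1.0),
        "hERG_uM": ramp_up(props["hERG_uM"], 1.0, 10.0),
    }
    return sum(weights[k] * scores[k] for k in scores)

weights = {"pIC50": 2.0, "logD": 1.0, "hERG_uM": 1.0}  # hypothetical weights
lead = {"pIC50": 7.1, "logD": 2.5, "hERG_uM": 30.0}
hit = {"pIC50": 5.2, "logD": 4.8, "hERG_uM": 2.1}
print(mpo_score(lead, weights))  # 4.0
```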

Table 2: Representative Quantitative Data from a Hit-to-Lead Campaign (Illustrative)

| Compound ID | Core Scaffold | pIC50 | LogD (pH 7.4) | Human Microsomal Stability (% Remaining) | Caco-2 Papp (10⁻⁶ cm/s) | hERG IC50 (µM) | MPO Score |
|---|---|---|---|---|---|---|---|
| Hit-1 | Aminopyridine | 5.2 | 4.8 | 12 | 5 | 2.1 | 3.1 |
| Lead-10 | Aminopyridine | 7.1 | 2.5 | 85 | 18 | >30 | 6.8 |
| Lead-22 | Pyrazolopyridine | 7.8 | 2.1 | 92 | 22 | >30 | 7.5 |
| Target Profile | - | >7.0 | 2.0 - 3.0 | >70 | >15 | >10 | >6.5 |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagent Solutions for Molecular Optimization

| Reagent/Material | Supplier Examples | Function in Optimization |
|---|---|---|
| Kinase/GPCR Assay Kits | Cisbio, Thermo Fisher, Promega | Provide standardized, cell-based or biochemical assays for rapid potency and selectivity screening of analog series. |
| Ready-to-Assay Frozen Cells | Eurofins, Reaction Biology | Express the target protein of interest, enabling consistent functional assays without cell culture variability. |
| Human Liver Microsomes & Hepatocytes | Corning, BioIVT, XenoTech | Critical for in vitro assessment of metabolic stability and metabolite identification. |
| PAMPA Plate Systems | pION, Corning | Enable high-throughput, low-cost prediction of passive membrane permeability. |
| Caco-2 Cell Lines | ATCC, Sigma-Aldrich | The gold-standard cell model for assessing intestinal permeability and active transport. |
| hERG Channel-Expressing Cells | ChanTest (Charles River), MilliporeSigma | Used in patch-clamp or flux assays to evaluate cardiac safety risk early in optimization. |
| Fragment Libraries | Enamine, Life Chemicals, Maybridge | Provide small, diverse chemical fragments for growing or linking from a hit via structural biology. |
| DNA-Encoded Library (DEL) Kits | X-Chem, HitGen | Enable ultra-high-throughput screening of billions of compounds against purified protein targets to identify novel hits/scaffolds. |

Visualizing Workflows and Relationships

Diagram 1: The Molecular Optimization Cycle from Diverse Starting Points.

Diagram 2: Convergent Pathways from Hits, Scaffolds, and Profiles.

Tools of the Trade: AI Methods, Algorithms, and Real-World Applications

This whitepaper details core molecular optimization techniques, framed by the thesis that molecular optimization and de novo molecular generation represent distinct, complementary paradigms in computational drug discovery. Molecular optimization is an iterative, guided search within a known chemical space, starting from a lead compound to improve specific properties. In contrast, de novo generation is a constructive process that designs novel molecular structures from scratch, often guided by generative models. Optimization is typically applied after high-throughput screening to refine potency, selectivity, and ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties, whereas de novo generation aims to explore vast, uncharted chemical spaces for novel scaffolds.

Core Techniques and Methodologies

Matched Molecular Pairs (MMP) Analysis

Definition: An MMP is defined as two molecules that differ only by a single, well-defined structural transformation (e.g., -Cl → -OCH₃).

Experimental Protocol for MMP Identification:

- Data Curation: Assemble a dataset of molecules with associated property data (e.g., pIC50, LogP, solubility).

- Fragmentation: Apply the Hussain-Rea algorithm to systematically cleave acyclic (non-ring) single bonds in each molecule, splitting it into a constant part (the shared context, or core) and a variable fragment.

- MMP Identification: Group molecules that share an identical constant part but differ in their variable fragments. Each pair within a group forms an MMP.

- Delta Calculation: For each MMP, calculate the property difference (Δ) between the two molecules (ΔpIC50, ΔLogP, etc.).

- Statistical Analysis: Aggregate all MMPs sharing the same transformation. Compute the mean, median, and standard deviation of the property Δ. A large mean Δ with a small standard deviation indicates a robust, largely context-independent Structure-Property Relationship (SPR).
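The following sketch covers the delta-calculation and aggregation steps. It assumes fragmentation and pairing have already produced `(transformation, property_before, property_after)` records. The transformation labels and pIC50 values are hypothetical.

```python
import statistics
from collections import defaultdict

def summarize_transformations(pairs):
    """pairs: iterable of (transformation, prop_a, prop_b), one record per MMP.
    Returns {transformation: (mean_delta, median_delta, stdev_delta, n_pairs)}."""
    deltas = defaultdict(list)
    for transform, prop_a, prop_b in pairs:
        deltas[transform].append(prop_b - prop_a)  # delta = after - before
    summary = {}
    for transform, d in deltas.items():
        sd = statistics.stdev(d) if len(d) > 1 else None  # undefined for a single pair
        summary[transform] = (statistics.mean(d), statistics.median(d), sd, len(d))
    return summary

# Hypothetical matched pairs: (transformation label, pIC50 before, pIC50 after)
pairs = [
    ("H>>F", 6.0, 6.4), ("H>>F", 5.5, 5.8), ("H>>F", 7.0, 7.35),
    ("Cl>>C#N", 6.2, 6.0),
]
summary = summarize_transformations(pairs)
print(summary["H>>F"][3], round(summary["H>>F"][0], 2))  # 3 0.35
```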

Quantitative Data Summary: Table 1: Example MMP Transformations and Mean Property Shifts (Hypothetical, Illustrative Data)

| Transformation (R → R') | Mean ΔpIC50 | Std Dev | N (Pairs) | Property Interpretation |
|---|---|---|---|---|
| -H → -F | +0.35 | 0.21 | 150 | Moderate potency gain |
| -CH₃ → -CF₃ | +0.60 | 0.45 | 89 | Potency gain, high variance |
| -Cl → -CN | -0.20 | 0.30 | 120 | Slight potency loss |
| -OCH₃ → -NH₂ | +0.80 | 0.25 | 65 | Strong potency gain |

R-Group Decomposition

Definition: A method to dissect a congeneric series into a common core scaffold and variable substituents (R-groups) at specified attachment points.

Experimental Protocol:

- Series Alignment: Align a set of analogous compounds from a screening campaign using maximum common substructure (MCS) algorithms.

- Core Definition: Define the invariant core structure shared by all molecules in the series.

- R-Group Assignment: Assign all non-core atoms to specific R-group positions (R1, R2, etc.).

- Data Matrix Creation: Populate a table with compounds as rows and R-group descriptors (e.g., Morgan fingerprints, physicochemical properties) for each position as columns. The target property (e.g., activity) is the dependent variable.

- Analysis: Use the matrix for SAR visualization, linear free-energy relationship (LFER)-based analyses such as Craig plots or the Topliss scheme, or as input for machine learning models.
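This sketch covers the data-matrix step. It assumes R-group assignment is already done, for example with an MCS-based decomposition in a cheminformatics toolkit. It encodes substituent identity as 0/1 indicator columns, a Free-Wilson-style representation, instead of the fingerprint or physicochemical descriptors mentioned above. The compound IDs and substituents are hypothetical.

```python
def rgroup_matrix(decomposition, positions):
    """Build a one-hot (Free-Wilson-style) matrix from an R-group table.

    decomposition: {compound_id: {"R1": "Cl", "R2": "OMe", ...}}
    Returns (column_names, {compound_id: [0/1 indicators]}).
    """
    columns = []
    for pos in positions:
        substituents = sorted({r[pos] for r in decomposition.values()})
        columns.extend(f"{pos}={s}" for s in substituents)
    rows = {}
    for cid, rgroups in decomposition.items():
        present = {f"{pos}={rgroups[pos]}" for pos in positions}
        rows[cid] = [1 if col in present else 0 for col in columns]
    return columns, rows

series = {
    "C1": {"R1": "H", "R2": "OMe"},
    "C2": {"R1": "Cl", "R2": "OMe"},
    "C3": {"R1": "Cl", "R2": "NH2"},
}
cols, X = rgroup_matrix(series, ["R1", "R2"])
print(cols)     # ['R1=Cl', 'R1=H', 'R2=NH2', 'R2=OMe']
print(X["C3"])  # [1, 0, 1, 0]
```

Each row can then be paired with the compound's activity to fit an additive model or to feed an ML model.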

Title: R-Group Decomposition Workflow

Quantitative Structure-Activity Relationship (QSAR)

Definition: A quantitative model that relates a set of molecular descriptors (independent variables) to a biological or physicochemical activity (dependent variable).

Experimental Protocol for QSAR Modeling:

- Dataset Preparation: Curate a homogeneous set of 50-500 molecules with reliable, continuous activity data. Apply chemical standardization.

- Descriptor Calculation: Compute numerical descriptors (e.g., topological, electronic, geometric) for each molecule using software like RDKit, PaDEL, or Dragon.

- Dataset Splitting: Split data into training (70-80%), validation (10-15%), and test sets (10-15%) using chemical diversity or time-based splits.

- Feature Selection: Reduce dimensionality using methods like Variance Threshold, Pearson Correlation, or LASSO to select the most relevant descriptors.

- Model Building: Train a model (e.g., Partial Least Squares (PLS), Random Forest, or Support Vector Machine) on the training set.

- Validation & Optimization: Tune hyperparameters using the validation set and cross-validation. Follow the OECD principles for QSAR validation (e.g., goodness-of-fit, robustness, predictivity, and a defined applicability domain).

- External Testing: Evaluate the final model on the held-out test set. Report key metrics: R², Q² (cross-validated R²), RMSE, and MAE.
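This pure-Python sketch computes the external-test metrics so the definitions are explicit. Q² uses the same R² formula, applied to cross-validated (out-of-fold) predictions. The pIC50 values are hypothetical.

```python
import math

def regression_metrics(y_true, y_pred):
    """R^2, RMSE and MAE for a held-out test set (or Q^2 if y_pred are CV predictions)."""
    n = len(y_true)
    mean_y = sum(y_true) / n
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return {
        "R2": 1.0 - ss_res / ss_tot,
        "RMSE": math.sqrt(ss_res / n),
        "MAE": sum(abs(t - p) for t, p in zip(y_true, y_pred)) / n,
    }

print(regression_metrics([5.0, 6.0, 7.0, 8.0], [5.5, 5.5, 7.5, 7.5]))
# {'R2': 0.8, 'RMSE': 0.5, 'MAE': 0.5}
```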

Quantitative Data Summary: Table 2: Performance Metrics for Common QSAR Modeling Algorithms (Generalized from Recent Studies)

| Algorithm | Typical R² (Test) | Typical RMSE (pIC50) | Key Strengths | Key Weaknesses |
|---|---|---|---|---|
| Partial Least Squares | 0.65 - 0.75 | 0.50 - 0.70 | Robust, handles collinearity | Linear, may miss complex patterns |
| Random Forest | 0.70 - 0.80 | 0.45 - 0.65 | Captures non-linearity, feature importance | Can overfit without tuning |
| Support Vector Machine | 0.72 - 0.82 | 0.40 - 0.60 | Effective in high-dimensional spaces | Sensitive to kernel/parameters |
| Graph Neural Network | 0.75 - 0.85 | 0.35 - 0.55 | Learns from raw structure, high potential | High data/compute requirements |

The Integrated Optimization Workflow

These techniques are synergistically integrated in modern lead optimization campaigns. R-Group Decomposition provides an organized view of the SAR. MMP analysis extracts localized, interpretable transformation rules from this data. These rules, along with R-group descriptors, feed into a QSAR model that predicts the effect of new, unexplored combinations, creating a closed-loop design-make-test-analyze (DMTA) cycle.

Title: Integrated Molecular Optimization Cycle

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Computational Tools for Molecular Optimization

| Item/Category | Example Solutions | Primary Function in Optimization |
|---|---|---|
| Cheminformatics Toolkit | RDKit, OpenEye Toolkit, Schrödinger Canvas | Core library for molecule handling, fragmentation, descriptor calculation, and MMP analysis. |
| QSAR Modeling Platform | scikit-learn, KNIME, Orange, MOE | Environment for building, validating, and deploying machine learning QSAR models. |
| Descriptor Software | PaDEL-Descriptor, Dragon, Mordred | Calculate thousands of molecular descriptors for QSAR input. |
| Visualization & Analysis | Spotfire, DataWarrior, Matplotlib (Python) | Visualize R-group matrices, SAR landscapes, and model results. |
| Database & Curation | ChEMBL, corporate DB, ICliDo, Pipeline Pilot | Source of historical compound data for MMP mining and model training. |
| High-Performance Compute | Local GPU clusters, Cloud (AWS, GCP) | Accelerate computationally intensive tasks like GNN-QSAR or large library enumeration. |

Within computational drug discovery, de novo molecular generation and molecular optimization are distinct but interrelated research paradigms. De novo generation aims to create novel, chemically valid molecular structures from scratch, often targeting broad chemical space exploration or generating structures with a desired property profile. In contrast, molecular optimization typically starts with a known lead compound and seeks to iteratively improve specific properties (e.g., potency, solubility, synthetic accessibility) while maintaining core desirable features. The architectures discussed herein are fundamental to both tasks but are applied with differing objectives and constraints.

Core Architectures and Technical Foundations

Variational Autoencoders (VAEs)

VAEs provide a probabilistic framework for generating continuous latent representations of molecular structures, usually encoded as SMILES strings or graphs.

Core Methodology: A molecular structure is encoded into a latent vector z sampled from a learned distribution (typically Gaussian). The decoder reconstructs the molecule from z. Generation involves sampling a new z from the prior distribution and decoding it.

Key Experimental Protocol (Characteristic VAE Training):

- Data Preparation: Assemble a dataset of canonical SMILES strings. Apply tokenization (atom-wise or via a vocabulary).

- Encoder Construction: Implement a Recurrent Neural Network (RNN) or Graph Neural Network (GNN) to map the input molecule to latent parameters μ and log(σ²).

- Latent Sampling: Sample a latent vector $z = \mu + \exp(\log\sigma^2 / 2) \odot \varepsilon$, where $\varepsilon \sim \mathcal{N}(0, I)$.

- Decoder Construction: Implement an RNN decoder to reconstruct the SMILES string from $z$.

- Loss Optimization: Minimize the combined loss $\mathcal{L} = \mathcal{L}_{\text{reconstruction}}\ (\text{cross-entropy}) + \beta \cdot D_{\mathrm{KL}}\big(\mathcal{N}(\mu, \sigma^2) \,\|\, \mathcal{N}(0, I)\big)$, where $\beta$ is a weighting coefficient.
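The sampling and KL terms are the only non-standard arithmetic in this training loop. The sketch below writes them out element-wise for a diagonal Gaussian (the reparameterization trick plus the closed-form KL to $\mathcal{N}(0, I)$). A real model would compute the same quantities on tensors inside a deep-learning framework. The reconstruction cross-entropy is passed in as a precomputed number here.

```python
import math
import random

def reparameterize(mu, log_var, rng=random):
    """z = mu + exp(log_var / 2) * eps with eps ~ N(0, 1), element-wise."""
    return [m + math.exp(lv / 2.0) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """Closed-form D_KL(N(mu, sigma^2) || N(0, I)) for a diagonal Gaussian."""
    return 0.5 * sum(m * m + math.exp(lv) - lv - 1.0 for m, lv in zip(mu, log_var))

def vae_loss(recon_ce, mu, log_var, beta=1.0):
    """L = reconstruction cross-entropy + beta * KL."""
    return recon_ce + beta * kl_to_standard_normal(mu, log_var)

print(kl_to_standard_normal([1.0], [0.0]))  # 0.5
```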

Quantitative Performance Data (Representative Studies):

| Model (VAE Variant) | Dataset | Validity (%) | Uniqueness (%) | Novelty (%) | Property Optimization Result (e.g., QED) |
|---|---|---|---|---|---|
| Grammar VAE (Kusner et al.) | ZINC | 60.2 | 99.9 | 81.7 | Successfully generated molecules with higher logP and QED |
| JT-VAE (Jin et al.) | ZINC | 100 | 99.9 | 99.9 | Optimized for penalized logP: +4.02 avg improvement |
| Graph VAE (Simonovsky et al.) | QM9 | 87.5 | 98.5 | 95.2 | N/A |

Generative Adversarial Networks (GANs)

GANs train a generator and a discriminator in an adversarial game, where the generator learns to produce realistic molecules that fool the discriminator.

Core Methodology: The generator (G) maps noise vectors to molecular structures. The discriminator (D) distinguishes real molecules from generated ones. Training alternates between improving G to fool D and improving D to correctly classify real vs. fake.

Key Experimental Protocol (ORGANIC-Style GAN with RL Fine-tuning):

- Adversarial Pretraining: Train a GAN where G is an RNN and D is a CNN/RNN on SMILES strings from a corpus like ChEMBL.

- Policy Gradient Fine-tuning: Use a Reinforcement Learning (RL) paradigm. The pre-trained G acts as an agent. After generating a molecule, a reward (e.g., predicted activity, QED) is provided by an external scoring function.

- Objective Maximization: Update G using the REINFORCE or PPO algorithm to maximize the expected reward, often with a pre-training likelihood penalty to maintain chemical realism.
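Real policy-gradient fine-tuning runs over whole generated sequences. To keep the REINFORCE update small enough to read, the sketch below collapses generation into a single categorical choice among hypothetical candidate building blocks, each with a fixed hypothetical score. It uses a running-mean baseline and omits the likelihood penalty. It shows only the update rule $\theta \leftarrow \theta + \eta\,(R - b)\,\nabla_\theta \log \pi(a)$, not a full generator.

```python
import math
import random

def softmax(logits):
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def reinforce(reward_fn, n_actions, steps=2000, lr=0.1, seed=0):
    """REINFORCE on a single-step categorical policy with a running-mean baseline."""
    rng = random.Random(seed)
    logits = [0.0] * n_actions
    baseline = 0.0
    for _ in range(steps):
        probs = softmax(logits)
        a = rng.choices(range(n_actions), weights=probs)[0]
        r = reward_fn(a)
        advantage = r - baseline
        baseline += 0.05 * (r - baseline)
        # gradient of log pi(a) w.r.t. the logits is one_hot(a) - probs
        for i in range(n_actions):
            logits[i] += lr * advantage * ((1.0 if i == a else 0.0) - probs[i])
    return softmax(logits)

scores = [0.2, 0.5, 0.9, 0.1]  # hypothetical reward per candidate block
final_probs = reinforce(lambda a: scores[a], n_actions=4)
print([round(p, 3) for p in final_probs])
```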

Reinforcement Learning (RL)

RL frames molecular generation as a sequential decision-making process, where an agent builds a molecule step-by-step and receives rewards based on the final structure's properties.

Core Methodology: The agent (a generative model) interacts with an environment (chemical space). Actions are adding an atom or bond. States are partial molecular graphs. The policy (π) is updated to maximize the cumulative reward from a critic or direct property calculator.

Key Experimental Protocol (Deep Q-Network for Molecular Design):

- Environment Definition: Define the action space (e.g., add atom type X, add bond type Y, terminate) and state representation (e.g., molecular graph).

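- Reward Shaping: Design a final reward function $R(m)$ combining multiple objectives: $R(m) = w_1 \cdot \text{Activity}(m) + w_2 \cdot \text{SA}(m) + w_3 \cdot \text{QED}(m)$, with each term scaled so that higher is better (the raw SA score runs from 1, easy, to 10, hard, so it must be inverted or given a negative weight).

- Q-Learning: Train a Deep Q-Network (DQN) with experience replay. The Q-network estimates the future discounted reward for each action in a given state.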

- Exploration: Use an ε-greedy policy to balance exploration of new chemical space and exploitation of known high-reward actions.
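This tabular stand-in isolates the ε-greedy choice and the one-step Q-learning target. A Python dictionary replaces the neural Q-network, and there is no experience replay. The two-step "molecule-building" environment is hypothetical: action 1 adds a favorable fragment, action 0 a neutral one, and the reward arrives only when the molecule is finished.

```python
import random

def epsilon_greedy(q_values, epsilon, rng):
    """Explore with probability epsilon, otherwise take the highest-valued action."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])

def td_target(reward, next_q_values, gamma, terminal):
    """One-step Q-learning target: r + gamma * max_a' Q(s', a'), or r at a terminal state."""
    return reward if terminal else reward + gamma * max(next_q_values)

def train_toy(episodes=500, alpha=0.5, gamma=1.0, epsilon=0.2, seed=0):
    """Tabular Q-learning on a hypothetical 2-step build; state = (step, favorable_count)."""
    rng = random.Random(seed)
    Q = {}
    for _ in range(episodes):
        step, favorable = 0, 0
        while step < 2:
            q = Q.setdefault((step, favorable), [0.0, 0.0])
            a = epsilon_greedy(q, epsilon, rng)
            step, favorable = step + 1, favorable + a
            terminal = step == 2
            r = float(favorable) if terminal else 0.0  # final reward only
            next_q = Q.setdefault((step, favorable), [0.0, 0.0])
            q[a] += alpha * (td_target(r, next_q, gamma, terminal) - q[a])
    return Q

Q = train_toy()
print(Q[(0, 0)], Q[(1, 1)])
```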

Quantitative Performance Data (RL in Optimization):

| RL Algorithm | Benchmark Task | Starting Point | Optimization Target | Performance Gain |
|---|---|---|---|---|
| REINFORCE (Olivecrona et al.) | Penalized logP | Random | Maximize penalized logP | Achieved scores > 5 in 80% of runs |
| PPO (Zhou et al.) | DRD2 activity & QED | Random SMILES | Multi-objective: DRD2 pXC50 > 7.5 & QED > 0.6 | Success rate: 73.4% for desired profile |
| DQN (Liu et al.) | JAK2 inhibition | Known lead | Improve pIC50 & maintain SA | Generated novel analogs with pIC50 > 8.0 |

Transformers

Adapted from NLP, Transformer models treat molecular generation as a sequence-to-sequence task, leveraging self-attention to capture long-range dependencies in SMILES or SELFIES strings.

Core Methodology: A Transformer decoder (auto-regressive) or encoder-decoder architecture is trained to predict the next token in a molecular string given the previous tokens. Attention mechanisms weight the importance of all previous tokens when generating the next.

Key Experimental Protocol (Transformer-based De Novo Generation):

- Tokenization: Convert SMILES or SELFIES strings into a vocabulary of tokens.

- Model Architecture: Implement a multi-layer Transformer decoder with masked self-attention.

- Training: Use teacher forcing to minimize cross-entropy loss on next-token prediction over a large corpus (e.g., PubChem).

- Conditional Generation: For property-guided generation, prepend a property-valued token or use a conditional encoder to bias the generation towards desired attributes.
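This sketch covers the tokenization and conditioning steps. The regular expression follows the atom-level SMILES pattern popularized by the Molecular Transformer work. It splits out bracket atoms, two-letter halogens, ring-closure digits, bonds, and branches. The special tokens (`<bos>`, `<eos>`) and the property label `<logP_2-3>` are hypothetical names chosen for illustration.

```python
import re

SMILES_TOKEN_RE = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|\/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)

def tokenize(smiles: str) -> list:
    """Split a SMILES string into atom-level tokens; fail loudly on unknown characters."""
    tokens = SMILES_TOKEN_RE.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens

def with_condition(tokens, condition_token):
    """Prepend a property token so an autoregressive decoder is conditioned on it."""
    return ["<bos>", condition_token] + tokens + ["<eos>"]

print(tokenize("ClC[C@@H](Br)N"))  # ['Cl', 'C', '[C@@H]', '(', 'Br', ')', 'N']
print(with_condition(tokenize("CCO"), "<logP_2-3>"))
```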

Quantitative Performance Data (Transformer Models):

| Model | Training Data | Params | Validity | Novelty (%) | Use Case Highlight |
|---|---|---|---|---|---|
| Chemformer (Irwin et al.) | ZINC & PubChem | ~100M | 99.6% | 99.8 | Transfer learning for reaction prediction |
| MoLeR (Maziarz et al.) | ZINC | - | 99.9% (Graph-based) | - | Scaffold-constrained generation |
| Galactica (Taylor et al.) | Scientific Corpus | 120B | High (implicit) | - | Zero-shot molecule generation from text |

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Experimental Workflow |
|---|---|
| RDKit | Open-source cheminformatics toolkit for molecule manipulation, descriptor calculation, and validation. |
| PyTorch / TensorFlow (e.g., with DeepChem) | Deep learning frameworks with specialized libraries for molecular graph representation and model building. |
| ZINC / ChEMBL / PubChem | Primary databases for sourcing training data (commercial compounds, bioactive molecules, general chemistry). |
| SELFIES (Self-Referencing Embedded Strings) | Robust molecular string representation that guarantees 100% syntactic validity, used as an alternative to SMILES. |
| Oracle Functions (e.g., AutoDock Vina, QSAR models) | External scoring functions used as reward signals in RL or for filtering generated libraries (docking, property prediction). |
| GPU Computing Cluster | Essential hardware for training large-scale generative models (VAEs, Transformers) in a feasible timeframe. |
| SMILES/SELFIES Tokenizer | Converts molecular strings into discrete tokens suitable for sequence-based models (RNNs, Transformers). |

Architectural Comparison and Application Context

Decision Workflow for Architecture Selection

Core Technical Comparison of Architectures

| Architecture | Typical Molecular Representation | Key Strength | Key Limitation | Best Suited For |
|---|---|---|---|---|
| VAE | SMILES, Graph, SELFIES | Continuous, interpolatable latent space. | Can generate invalid structures (SMILES). | Exploring neighborhoods of known actives. |
| GAN | SMILES, Graph | Can produce highly realistic samples. | Training instability, mode collapse. | Generating molecules resembling a target distribution. |
| RL | SMILES, Graph (step-wise) | Direct optimization of complex reward functions. | Reward shaping is critical; can be sample-inefficient. | Multi-property lead optimization. |
| Transformer | SELFIES, SMILES (tokenized) | Captures long-range dependencies, state-of-the-art quality. | Large data requirements, autoregressive generation can be slow. | De novo generation from large, diverse corpora. |

Integrated Pipeline for Molecular Design

A modern pipeline often integrates multiple architectures.

The selection and application of VAEs, GANs, RL, and Transformers are fundamentally guided by the overarching research question: is the goal de novo generation or molecular optimization? De novo research prioritizes novelty, diversity, and fundamental model capacity, favoring Transformers and VAEs. Optimization research prioritizes directed improvement under constraints, favoring RL and conditioned VAEs. The ongoing synthesis of these architectures—such as Transformer-based policy networks for RL or VAEs with Transformer decoders—represents the frontier of the field, aiming to harness the explorative power of de novo generation with the precise control required for lead optimization.

Within the domain of computational drug discovery, molecular optimization and de novo molecular generation represent two distinct research paradigms with overlapping yet divergent goals. This guide focuses on the Hit-to-Lead and Lead Optimization phase, which is quintessentially an optimization problem. The core thesis is that optimization research iteratively refines known starting points against a multi-parametric objective, whereas de novo generation research aims to create novel chemical matter from scratch, often with a stronger emphasis on fundamental chemical novelty and exploration of vast chemical space without a specific starting scaffold.

Core Principles of Lead Optimization

Lead Optimization (LO) is a multiparameter, iterative process aimed at improving the profile of a confirmed hit or lead series. The goal is to enhance potency, selectivity, metabolic stability, pharmacokinetics (PK), and safety while reducing off-target activities. It is a constrained optimization problem where chemical modifications are made to a core scaffold.

Quantitative Optimization Parameters & Data

The success of LO is measured by a battery of in vitro and in vivo assays. Key quantitative parameters are summarized below.

Table 1: Key Quantitative Parameters in Lead Optimization

| Parameter | Target Range | Typical Assay | Optimization Goal |
|---|---|---|---|
| Biochemical IC₅₀ | < 100 nM | Enzyme/Receptor Inhibition | Increase potency (lower IC₅₀) |
| Cellular EC₅₀ | < 1 µM | Cell-based functional assay | Improve cellular activity |
| Selectivity Index | > 10-100x | Counter-screening vs. related targets | Enhance specificity |
| Microsomal Stability (HLM/RLM) | % remaining > 30% (30 min) | Liver microsome incubation | Improve metabolic stability |
| Permeability (Papp) | Caco-2: > 10 x 10⁻⁶ cm/s | Caco-2 assay | Ensure adequate absorption |
| CYP Inhibition | IC₅₀ > 10 µM | Cytochrome P450 assay | Reduce drug-drug interaction risk |
| hERG Inhibition | IC₅₀ > 10 µM | Patch-clamp / binding assay | Mitigate cardiac toxicity risk |
| Kinetic Solubility | > 100 µM | Nephelometry | Ensure sufficient solubility |
| Plasma Protein Binding | % Free > 1% | Equilibrium dialysis | Optimize free drug concentration |
| In Vivo Clearance | Well below liver blood flow | Rodent PK study | Reduce clearance for longer half-life |
| Oral Bioavailability (F) | > 20% | Rodent PK study | Maximize fraction of dose reaching systemic circulation |

Detailed Methodologies for Key Experiments

Protocol: Structure-Activity Relationship (SAR) Expansion via Parallel Synthesis

Objective: Systematically explore chemical space around a lead scaffold to establish SAR.

- Design: Use reagent-based enumeration. Select 3-5 variable sites (R1-R5) on the core scaffold. Curate building blocks (BBs) for each site focusing on diverse physicochemical properties (e.g., logP, H-bond donors/acceptors, size). Use 96-well plate format for design.

- Synthesis: Employ automated solid-phase (SP) or solution-phase parallel synthesis. For an amide coupling example:

  1. Pre-load resin with the core scaffold (if SP).
  2. In a 96-well plate, dispense the core (0.1 mmol/well).
  3. Add coupling agent (HATU, 1.1 eq) and base (DIPEA, 2 eq) to each well.
  4. Add a unique carboxylic acid BB (1.2 eq) to each well according to the design matrix.
  5. Agitate at room temperature for 12 hours.
  6. Quench, wash, and cleave (if SP).
  7. Purify via automated reverse-phase HPLC.

- Analysis: Confirm identity/purity via LC-MS (UV214/254 nm, ESI+). Compounds with >90% purity proceed to screening.
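This sketch covers the plate-based design bookkeeping: a two-dimensional design laid onto one 96-well plate, plus per-well reagent amounts from the equivalents quoted in the protocol. It assumes rows index amine cores (at most 8) and columns index carboxylic acid building blocks (at most 12). The core and acid names are hypothetical.

```python
from itertools import product
from string import ascii_uppercase

def plate_map(cores, acids):
    """Assign each (core, acid) combination to a well A1..H12 (rows = cores, columns = acids)."""
    if len(cores) > 8 or len(acids) > 12:
        raise ValueError("design exceeds one 96-well plate (8 x 12)")
    return {
        f"{ascii_uppercase[r]}{c + 1}": (core, acid)
        for (r, core), (c, acid) in product(enumerate(cores), enumerate(acids))
    }

def reagent_mmol(core_mmol=0.1, hatu_eq=1.1, dipea_eq=2.0, acid_eq=1.2):
    """Per-well reagent amounts (mmol), from equivalents relative to the core."""
    return {"core": core_mmol, "HATU": core_mmol * hatu_eq,
            "DIPEA": core_mmol * dipea_eq, "acid": core_mmol * acid_eq}

plate = plate_map(["core-1", "core-2"], ["acid-1", "acid-2", "acid-3"])
print(plate["B3"])  # ('core-2', 'acid-3')
```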

Protocol: In Vitro ADMET Profiling (Microsomal Stability & CYP Inhibition)

Objective: Assess metabolic stability and cytochrome P450 inhibition potential.

A. Human Liver Microsome (HLM) Stability:

- Incubation: Prepare test compound (1 µM) in 0.1 M phosphate buffer (pH 7.4) with 0.5 mg/mL HLM. Pre-warm for 5 min at 37°C.

- Initiation: Start reaction by adding NADPH regenerating system (1 mM NADP⁺, 3.3 mM G6P, 0.4 U/mL G6PDH, 3.3 mM MgCl₂). Final volume: 100 µL.

- Time Points: Aliquot 10 µL at t=0, 5, 15, 30, 45, 60 min into 40 µL of stop solution (acetonitrile with internal standard).

- Analysis: Centrifuge (3000xg, 10 min). Analyze supernatant via LC-MS/MS. Quantify parent compound peak area.

- Data Processing: Plot ln(peak area) vs. time; the elimination rate constant k is the negative slope of the linear fit. Calculate half-life (t₁/₂ = 0.693/k) and intrinsic clearance (CLint = (0.693 / t₁/₂) × (Incubation Volume / Protein Amount)).
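This sketch carries out the data-processing step with a plain least-squares fit. Its defaults follow the incubation above: 100 µL at 0.5 mg/mL HLM, so 0.05 mg protein, which gives CLint in µL/min/mg. The peak areas are synthetic, generated from an assumed first-order decay with k = 0.0231 min⁻¹.

```python
import math

def microsomal_clearance(times_min, peak_areas, volume_ul=100.0, protein_mg=0.05):
    """Fit ln(peak area) vs. time; return k (1/min), t1/2 (min), CLint (uL/min/mg protein)."""
    y = [math.log(area) for area in peak_areas]
    n = len(times_min)
    t_mean, y_mean = sum(times_min) / n, sum(y) / n
    sxy = sum((t - t_mean) * (v - y_mean) for t, v in zip(times_min, y))
    sxx = sum((t - t_mean) ** 2 for t in times_min)
    k = -sxy / sxx                       # elimination rate constant = -slope
    t_half = math.log(2) / k             # ln 2 = 0.693
    cl_int = (math.log(2) / t_half) * (volume_ul / protein_mg)
    return k, t_half, cl_int

times = [0, 5, 15, 30, 45, 60]
areas = [1.0e6 * math.exp(-0.0231 * t) for t in times]  # synthetic first-order decay
k, t_half, cl_int = microsomal_clearance(times, areas)
print(round(t_half, 1), round(cl_int, 1))  # 30.0 46.2
```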

B. CYP450 Inhibition (Fluorometric):

- Incubation: In black 96-well plate, add 50 µL of human CYP isoform (e.g., 3A4) with substrate (e.g., BzResorufin for CYP3A4) in buffer.

- Inhibitor Addition: Add 25 µL of test compound (at 8 concentrations, e.g., 0.03-30 µM) or control (buffer for 0% inhibition, ketoconazole for 100% inhibition).

- Initiation: Add 25 µL of NADPH regenerating system to start reaction. Incubate at 37°C for 30 min.

- Detection: Stop with stop solution. Measure fluorescence (Ex/Em specific to metabolite, e.g., 530/590 nm for resorufin).

- Data Processing: Calculate % inhibition relative to controls. Determine IC₅₀ using a 4-parameter logistic curve fit.
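This sketch covers control normalization and a deliberately simplified logistic fit. It fixes the bottom and top at 0% and 100% and grid-searches only IC₅₀ and the Hill slope. A full four-parameter fit would normally use a nonlinear least-squares routine instead. The concentrations span 0.03-30 µM in 8 log-spaced points, and the responses are synthetic, generated with IC₅₀ = 1 µM.

```python
def percent_inhibition(signal, signal_0pct, signal_100pct):
    """Normalize raw fluorescence to % inhibition using the two plate controls."""
    return 100.0 * (signal_0pct - signal) / (signal_0pct - signal_100pct)

def four_pl(conc, bottom, top, ic50, hill):
    """4-parameter logistic: % inhibition as a function of concentration."""
    return bottom + (top - bottom) / (1.0 + (ic50 / conc) ** hill)

def fit_ic50(concs, inhibition, bottom=0.0, top=100.0):
    """Crude grid-search fit of IC50 and Hill slope with bottom/top held fixed."""
    best = None
    for i in range(401):                                  # log10(IC50) from -3 to +3
        ic50 = 10 ** (-3 + i * 0.015)
        for hill in (0.5 + 0.05 * j for j in range(51)):  # Hill slope 0.5 .. 3.0
            sse = sum((four_pl(c, bottom, top, ic50, hill) - y) ** 2
                      for c, y in zip(concs, inhibition))
            if best is None or sse < best[0]:
                best = (sse, ic50, hill)
    return best[1], best[2]

concs = [0.03 * 1000 ** (i / 7) for i in range(8)]        # 0.03 .. 30 uM
data = [four_pl(c, 0.0, 100.0, 1.0, 1.0) for c in concs]  # synthetic responses
ic50, hill = fit_ic50(concs, data)
print(round(ic50, 2), round(hill, 2))
```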

Computational Approaches in Optimization

Optimization relies on QSAR, molecular modeling, and free energy perturbation (FEP) to guide synthesis. Unlike de novo generation's generative models, optimization uses predictive models trained on project-specific data.

Table 2: Core Computational Methods in Optimization vs. De Novo Generation

| Method | Role in Optimization | Role in De Novo Generation |
|---|---|---|
| QSAR/QSPR | Predict ADMET/Potency for congeneric series. Primary Tool. | Used for post-generation scoring/filtering. |
| Molecular Docking | Propose binding modes to explain SAR; suggest targeted modifications. | Used to score/validate generated structures for target binding. |
| Free Energy Perturbation (FEP) | Predict relative binding affinities for close analogs, with errors typically around 1 kcal/mol. Gold Standard. | Computationally prohibitive for vast virtual libraries. |
| Generative AI (VAE, GAN) | Can be used for limited "scaffold morphing" or R-group suggestion. | Primary Tool for creating novel scaffolds from latent space. |
| Reinforcement Learning | Can be applied with multi-parameter reward functions (e.g., QED, SA, potency). | Used to generate molecules optimizing single/multi-objective rewards. |
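The 1 kcal/mol scale quoted for FEP becomes easier to interpret as a fold-change in affinity, using $\Delta G = RT \ln K_d$. This is a worked conversion at 298.15 K with $R = 1.987 \times 10^{-3}$ kcal mol⁻¹ K⁻¹:

$$\Delta\Delta G_{A \to B} = \Delta G_B - \Delta G_A = RT \ln\frac{K_{d,B}}{K_{d,A}} = RT \ln 10 \,\big(\mathrm{p}K_{d,A} - \mathrm{p}K_{d,B}\big)$$

$$RT \ln 10 \approx 0.5925 \times 2.3026 \approx 1.364 \ \text{kcal/mol per log unit}$$

$$1\ \text{kcal/mol} \;\Rightarrow\; \Delta \mathrm{p}K_d \approx \frac{1}{1.364} \approx 0.73 \;\Rightarrow\; 10^{0.73} \approx 5.4\text{-fold}$$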

Visualizing the Lead Optimization Workflow

Diagram 1: LO Iterative Cycle

Diagram 2: Multiparameter Optimization

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Lead Optimization Experiments

| Item & Example Supplier | Function in LO |
|---|---|
| Human Liver Microsomes (HLM) (Corning, XenoTech) | In vitro system to assess Phase I metabolic stability and metabolite identification. |
| CYP450 Isoenzymes & Substrates (Reaction Biology, Thermo Fisher) | Profiling inhibition potential against key drug-metabolizing enzymes (CYP3A4, 2D6, etc.). |
| Caco-2 Cell Line (ATCC) | Model for predicting intestinal permeability and absorption potential. |
| hERG-Expressing Cell Line (ChanTest, Eurofins) | In vitro safety assay to assess risk of QT interval prolongation. |
| Kinase/GPCR Profiling Panels (Eurofins, DiscoverX) | Broad selectivity screening to identify off-target interactions. |
| NADPH Regenerating System (Promega, Sigma) | Essential cofactor for oxidative metabolism assays with microsomes or cytosol. |
| Solid-Phase Synthesis Resins & Building Blocks (Sigma-Aldrich, Combi-Blocks, Enamine) | Enables high-throughput parallel synthesis for SAR exploration. |
| LC-MS/MS Systems (Sciex, Agilent, Waters) | Core analytical platform for compound purity analysis, metabolic identification, and bioanalysis. |

The central thesis of modern computational molecular design distinguishes between two paradigms. Molecular Optimization operates on a known chemical starting point (a hit or lead), aiming to improve specific properties (e.g., potency, selectivity, ADMET) through iterative, localized modifications. In contrast, De Novo Molecular Generation constructs molecules atom-by-atom or fragment-by-fragment from scratch, guided by target constraints and objective functions, with no requirement for a pre-existing scaffold. This guide focuses on the latter's application in scaffold hopping and novel target exploration, where the goal is to discover structurally novel chemotypes with desired bioactivity.

Core Methodologies and Protocols

1. Generative Model Architectures

The field is dominated by deep generative models trained on vast chemical libraries (e.g., ZINC, ChEMBL).

Protocol for Training a Recurrent Neural Network (RNN) / Long Short-Term Memory (LSTM) Model for SMILES Generation:

- Data Curation: Assemble a dataset of >1 million canonical SMILES strings. Filter for drug-like properties (e.g., MW < 500, LogP < 5).

- Tokenization: Convert each SMILES string into a sequence of unique tokens (atoms, bonds, rings).

- Model Architecture: Implement an encoder-decoder LSTM. The encoder maps the token sequence to a latent vector; the decoder reconstructs the sequence.

- Training: Train using teacher forcing with cross-entropy loss (Adam optimizer, learning rate 0.001) until validation loss plateaus.

- Conditioning: For target-specific generation, integrate a conditioning layer (e.g., a dense network) that takes target descriptors (e.g., ECFP fingerprints of known binders, protein sequence features) as input, influencing the latent space.

Protocol for Training a Generative Adversarial Network (GAN) with Reinforcement Learning (RL) Fine-Tuning:

- Generator (G): A network that produces molecular graphs or SMILES from noise.

- Discriminator (D): A network that distinguishes real molecules (from training set) from generated ones.

- Adversarial Training: Train G and D concurrently. G aims to fool D; D aims to correctly classify. Use Wasserstein loss with gradient penalty for stability.

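- RL Fine-Tuning (e.g., Policy Gradient): After adversarial training, fine-tune G using a reward function $R(m)$ that combines multiple objectives:

- $R(m) = w_1 \cdot \text{QED}(m) + w_2 \cdot \text{SA}(m) + w_3 \cdot \text{Dock}(m, \text{Target})$, where QED is drug-likeness, SA is synthetic accessibility, and Dock is the docking score against the target. Raw SA scores (1 = easy to 10 = hard) and docking scores (more negative = stronger predicted binding) both improve as they decrease, so they must be inverted or normalized so that every term increases with desirability.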

- Sampling: Generate novel molecules by sampling noise vectors and passing them through the fine-tuned generator.
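The sketch below orients every term of the reward so that higher is better before weighting. The mapping ranges are assumptions: SA on the 1-10 scale with 1 easiest, and a docking window from 0 to −12 kcal/mol. The weights are also illustrative. In a real pipeline, QED, SA, and docking values would come from external tools such as a cheminformatics toolkit and a docking program like AutoDock Vina.

```python
def normalized_sa(sa_score):
    """Map an SA score (1 = easy ... 10 = hard) to [0, 1], with 1 = easiest."""
    return (10.0 - sa_score) / 9.0

def normalized_docking(score_kcal, worst=0.0, best=-12.0):
    """Map a docking score (more negative = better) to [0, 1], clipped at both ends."""
    x = (score_kcal - worst) / (best - worst)
    return min(1.0, max(0.0, x))

def reward(qed, sa_score, docking_kcal, w=(1.0, 0.5, 2.0)):
    """R(m) = w1*QED + w2*SA' + w3*Dock', with every term oriented so higher is better."""
    return (w[0] * qed
            + w[1] * normalized_sa(sa_score)
            + w[2] * normalized_docking(docking_kcal))

print(round(reward(0.8, 3.0, -9.0), 2))  # 2.69
```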

2. Scaffold Hopping via Latent Space Interpolation

- Protocol:

  - Encode two known active scaffolds (A and B) into the latent space ($z_A$, $z_B$) of a trained variational autoencoder (VAE).

  - Perform linear interpolation: $z_{\text{new}} = \alpha z_A + (1 - \alpha) z_B$, for $\alpha \in [0, 1]$.

  - Decode the intermediate vectors $z_{\text{new}}$ to generate novel molecular structures that hybridize features of the parent scaffolds.

  - Filter generated structures using a predictive activity model (e.g., a Random Forest or CNN classifier trained on active/inactive data for the target).
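This sketch shows the interpolation and filtering loop. It treats latent vectors as plain lists. The `decode` and `is_active` arguments are hypothetical stand-ins for a trained VAE decoder and a target activity model. `decode` is assumed to return `None` when a point does not decode to a valid molecule. Plain linear interpolation is used, as described in the protocol.

```python
def interpolate(z_a, z_b, n_steps=5):
    """z_new = alpha * z_A + (1 - alpha) * z_B for n_steps evenly spaced alpha in [0, 1]."""
    if n_steps < 2:
        raise ValueError("need at least the two endpoints")
    path = []
    for i in range(n_steps):
        alpha = i / (n_steps - 1)
        path.append([alpha * a + (1.0 - alpha) * b for a, b in zip(z_a, z_b)])
    return path

def hop_candidates(z_a, z_b, decode, is_active, n_steps=11):
    """Decode interior points and keep valid structures the activity model accepts."""
    interior = interpolate(z_a, z_b, n_steps)[1:-1]  # drop the two parent scaffolds
    decoded = (decode(z) for z in interior)
    return [m for m in decoded if m is not None and is_active(m)]

print(interpolate([0.0, 0.0], [1.0, 2.0], n_steps=3))  # [[1.0, 2.0], [0.5, 1.0], [0.0, 0.0]]
```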

3. Exploration for Novel or "Dark" Targets

- Protocol (Ligand-Based, No Known Structure):

  - Input Definition: Compile the sparse set of known actives or use the pharmacophore of a natural ligand.

  - Constraint Definition: Use a generative model conditioned on:

    - A predicted bioactivity profile (from a proteochemometric model).

    - A 3D pharmacophore query (if available).

    - Required molecular interaction fingerprints.

  - Generation & Validation: Generate molecules satisfying the constraints. Prioritize candidates using in silico off-target profiling (against a panel of pharmacologically relevant targets), then synthesize the top candidates and test them in phenotypic screens.

Data Presentation: Comparative Performance of Generative Models

Table 1: Benchmarking Metrics for De Novo Generative Models in Scaffold Hopping

| Model Type | Novelty (vs. Training Set) | Validity (% Chemically Valid) | Uniqueness (% Unique in Set) | Diversity (Avg. Tanimoto Distance) | Success Rate in Identified Scaffold Hops* |
|---|---|---|---|---|---|
| RNN/LSTM | 70-85% | 80-95% | 60-80% | 0.70-0.85 | ~15% |
| VAE | 75-90% | 85-98% | 70-90% | 0.75-0.90 | ~20% |
| GAN | 80-95% | 90-99% | 85-95% | 0.80-0.95 | ~25% |
| Graph-based (GCPN) | 85-99% | 95-100% | 90-99% | 0.85-0.98 | ~30% |

*Success rate: Percentage of generated molecules predicted active (by a robust QSAR model) and representing a Bemis-Murcko scaffold not present in the training actives.
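This sketch shows how the table's metrics can be computed. It assumes `generated` holds canonical SMILES, with `None` where decoding failed. `scaffold_of` and `is_active` are hypothetical stand-ins for a Bemis-Murcko scaffold function and a QSAR classifier. Computing the success rate over the unique valid set is an assumption, since the footnote does not fix the denominator.

```python
def generation_metrics(generated, training_smiles, train_scaffolds, scaffold_of, is_active):
    """Validity, uniqueness, novelty and scaffold-hop success rate for one sampled batch."""
    valid = [s for s in generated if s is not None]
    unique = set(valid)
    novel = unique - set(training_smiles)
    hops = [s for s in unique if is_active(s) and scaffold_of(s) not in train_scaffolds]
    return {
        "validity": len(valid) / len(generated),
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
        "success_rate": len(hops) / len(unique) if unique else 0.0,
    }

# Toy placeholder strings; the first character plays the role of the scaffold
metrics = generation_metrics(
    ["A1", "A1", "B2", None, "C3"], ["A1"], {"A"},
    scaffold_of=lambda s: s[0], is_active=lambda s: s != "C3",
)
print(metrics)
```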

Table 2: Key Software/Tools for De Novo Generation & Evaluation

| Tool Name | Type | Primary Function | Key Metric Output |
|---|---|---|---|
| REINVENT | RL-based Generative | Multi-parameter optimization from scratch. | Custom Reward Score, Internal Diversity |
| MolGPT | Transformer-based | Conditional generation via SMILES. | Perplexity, Synthesizability Score |
| DeepScaffold | Graph-based | Scaffold-constrained generation. | Scaffold Recovery Rate, Property Deviation |
| GuacaMol | Benchmarking Suite | Evaluating generative model performance. | Fréchet ChemNet Distance, KL Divergence |
| MOSES | Benchmarking Suite | Standardized benchmarking of generative models. | Novelty, Uniqueness, Filters, SAscore |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Experimental Validation of De Novo Generated Hits

| Item/Reagent | Function/Benefit |
|---|---|
| DNA-Encoded Library (DEL) Screening | Enables ultra-high-throughput experimental screening of billions of DNA-tagged compounds against a purified protein target; libraries can be built around de novo designed scaffolds when their chemistry is DEL-compatible. |
| Covalent Fragment Libraries | For exploring novel binding pockets in "undruggable" targets; generated molecules can be designed to incorporate warheads. |
| Cryo-Electron Microscopy (Cryo-EM) Services | Critical for novel target exploration, providing structural insights for targets without crystal structures to inform generation. |
| Chemically Diverse Building Block Sets (e.g., from Enamine REAL Space) | Provides synthetic feasibility grounding; in silico generation can be filtered for compounds synthesizable from available blocks. |
| Phenotypic Screening Assay Kits (e.g., for oncology, neurodegeneration) | Essential for validating molecules generated de novo for novel targets with complex or unknown biology. |
| Selectivity Screening Panels (e.g., kinase, GPCR panels) | Evaluates the off-target profile of novel scaffolds early in the validation process. |

Visualizations

Figure: De Novo Scaffold Generation & Prioritization Workflow

Figure: Optimization vs. De Novo Design Paradigm

The pursuit of novel molecular entities in drug discovery is guided by two distinct but complementary paradigms. Within the broader thesis of this guide, de novo molecular generation research focuses on creating novel, chemically valid structures from scratch, often with deep generative models (e.g., VAEs, GANs, Transformers) trained on large chemical libraries; its primary metrics are structural novelty and diversity. In contrast, molecular optimization research is an iterative refinement process. It starts from one or more lead compounds and aims to improve specific properties (such as potency, selectivity, or ADMET) while preserving the lead's core desirable features. Its central challenge is navigating the constrained chemical space around the lead.

Hybrid approaches represent the synthesis of these paradigms, integrating continuous optimization loops within generative frameworks. This creates a feedback-driven cycle where generative models propose candidates, which are evaluated via predictive models or simulations, and the results are used to steer subsequent generation toward optimal regions of chemical space.

Core Technical Architecture

The architecture of a hybrid system typically involves three interconnected components:

- A Generative Model: Proposes candidate molecular structures.

- An Evaluation Function: Scores candidates based on multi-parametric objectives (e.g., QSAR model, docking score, synthetic accessibility).

- An Optimization Controller: Maps evaluation feedback to updates for the generative model, closing the loop.
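The three components and the feedback cycle can be made concrete with a deliberately small example. In this sketch, everything is a stand-in under stated assumptions: the "molecule" is a hypothetical 12-bit design vector, the generative model is an independent Bernoulli distribution per bit, the evaluation function is an invented score, and the controller is the cross-entropy method, a simple optimizer swapped in here for illustration (the text names Bayesian optimization, RL, and genetic algorithms as typical choices).

```python
import random
from typing import List, Tuple

Design = List[int]  # toy binary encoding standing in for a molecule


class Generator:
    """Generative model (toy): independent Bernoulli probability per design feature."""

    def __init__(self, n_features: int, rng: random.Random):
        self.p = [0.5] * n_features
        self.rng = rng

    def propose(self, k: int) -> List[Design]:
        return [[1 if self.rng.random() < pj else 0 for pj in self.p] for _ in range(k)]


def evaluate(x: Design) -> float:
    """Evaluation function (hypothetical): features 0-5 add 'potency'; designs with more
    than six features switched on pay a size penalty."""
    return sum(x[:6]) - 0.5 * max(0, sum(x) - 6)


def controller_update(gen: Generator, batch: List[Design], scores: List[float],
                      elite_frac: float = 0.2, smoothing: float = 0.7) -> None:
    """Optimization controller (cross-entropy method): move probabilities toward the elite set."""
    n_elite = max(1, int(len(batch) * elite_frac))
    ranked = sorted(zip(scores, batch), key=lambda t: t[0], reverse=True)
    elite = [x for _, x in ranked[:n_elite]]
    for j in range(len(gen.p)):
        freq = sum(x[j] for x in elite) / n_elite
        gen.p[j] = smoothing * freq + (1 - smoothing) * gen.p[j]


def closed_loop(n_rounds: int = 30, batch_size: int = 50, seed: int = 0) -> Tuple[float, Design]:
    gen = Generator(12, random.Random(seed))
    best: Tuple[float, Design] = (float("-inf"), [])
    for _ in range(n_rounds):
        batch = gen.propose(batch_size)        # 1. generative model proposes candidates
        scores = [evaluate(x) for x in batch]  # 2. evaluation function scores them
        for s, x in zip(scores, batch):
            if s > best[0]:
                best = (s, x)
        controller_update(gen, batch, scores)  # 3. controller feeds results back
    return best
```

Replacing `Generator` with a real generative model and `evaluate` with a QSAR or docking score keeps the same loop structure; only the update rule in `controller_update` would need to match the chosen optimizer.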

Table 1: Comparison of Generative and Optimization Research Paradigms

| Feature | De Novo Molecular Generation | Molecular Optimization | Hybrid Approach |
|---|---|---|---|
| Primary Goal | Explore vast chemical space for novel scaffolds. | Improve specific properties of a lead series. | De novo generation biased toward optimal property regions. |
| Starting Point | Random noise or broad chemical distributions. | One or more known lead molecules. | Can be either, with iterative feedback. |
| Key Metrics | Validity, Uniqueness, Novelty, Diversity. | Property Delta (e.g., ΔpIC50, ΔLogP), Similarity. | Multi-objective Pareto efficiency, Success Rate (%). |
| Typical Methods | JT-VAE, REINVENT, GPT-based SMILES generators. | Matched Molecular Pairs, Analogue-by-Catalogue, SMILES-based RNNs with transfer learning. | Bayesian Optimization over latent space, Reinforcement Learning (e.g., Policy Gradient), Genetic Algorithms coupled with deep generators. |
| Risk | High risk of non-developable molecules. | Limited exploration, potential for local minima. | Balances exploration and exploitation. |

Experimental Protocols & Methodologies

Protocol 3.1: Latent Space Bayesian Optimization (LS-BO)

This protocol integrates a variational autoencoder (VAE) with Bayesian Optimization (BO).

- Training: Train a VAE (e.g., using SMILES or Graph representations) on a large dataset (e.g., ChEMBL) to learn a continuous latent space z.

- Initial Sampling: Encode a set of known actives and inactives, score them with the objective function, and use these {z, score} pairs to seed the surrogate model. Define an acquisition function (e.g., Expected Improvement).

- Optimization Loop:

  a. Use the BO algorithm to select the next latent point z* that maximizes the acquisition function.

  b. Decode z* to generate a molecular structure.

  c. Evaluate the molecule using the objective function (e.g., a docking score from AutoDock Vina, negated because more negative Vina scores indicate stronger predicted binding, or a predicted pIC50 from a random forest QSAR model).

  d. Update the BO surrogate model (a Gaussian process) with the new {z*, score} pair.

- Iteration: Repeat steps a–d for a set number of cycles (typically 50–500).

- Output: A set of proposed molecules ranked by the objective function.
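The loop can be sketched end to end in dependency-free Python. This is a minimal sketch under stated assumptions, not a production implementation: `decode_and_score` is a hypothetical stand-in for decoding z* with the VAE and scoring the molecule, the latent space is two-dimensional so the example runs quickly, and the acquisition function is maximized over randomly sampled candidate points rather than by continuous optimization. In practice a library such as BoTorch would normally handle the Gaussian process and acquisition optimization.

```python
import math
import random


def rbf(a, b, length_scale=1.0):
    """Squared-exponential kernel between two latent vectors."""
    return math.exp(-0.5 * sum((x - y) ** 2 for x, y in zip(a, b)) / length_scale ** 2)


def cholesky(m):
    """Lower-triangular L with m = L L^T for a small symmetric positive-definite matrix."""
    n = len(m)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(m[i][i] - s) if i == j else (m[i][j] - s) / L[j][j]
    return L


def solve_lower(L, b):
    """Forward substitution: solve L x = b."""
    x = []
    for i, bi in enumerate(b):
        x.append((bi - sum(L[i][k] * x[k] for k in range(i))) / L[i][i])
    return x


def solve_upper_t(L, b):
    """Back substitution: solve L^T x = b."""
    n = len(b)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (b[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


class GaussianProcess:
    """GP surrogate on standardized scores (zero prior mean, unit signal variance, RBF kernel)."""

    def __init__(self, X, y, noise=1e-4):
        self.X = X
        self.mu = sum(y) / len(y)
        var = sum((v - self.mu) ** 2 for v in y) / len(y)
        self.sd = math.sqrt(var) if var > 0 else 1.0
        K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
             for i, a in enumerate(X)]
        self.L = cholesky(K)
        yn = [(v - self.mu) / self.sd for v in y]
        self.alpha = solve_upper_t(self.L, solve_lower(self.L, yn))  # K^-1 y

    def predict(self, z):
        k = [rbf(x, z) for x in self.X]
        mean = sum(ki * ai for ki, ai in zip(k, self.alpha))
        v = solve_lower(self.L, k)
        var = max(1.0 - sum(vi * vi for vi in v), 1e-12)
        return self.mu + self.sd * mean, self.sd * math.sqrt(var)


def expected_improvement(gp, z, best, xi=0.01):
    """Expected Improvement (for maximization) under the GP posterior at latent point z."""
    mu, sigma = gp.predict(z)
    imp = mu - best - xi
    u = imp / sigma
    cdf = 0.5 * (1.0 + math.erf(u / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * u * u) / math.sqrt(2.0 * math.pi)
    return imp * cdf + sigma * pdf


def decode_and_score(z):
    """HYPOTHETICAL stand-in for 'decode z* with the VAE, then score the molecule'."""
    return -sum((zi - 0.5) ** 2 for zi in z)


def ls_bo(seed_z, seed_scores, n_iter=20, n_candidates=100, bounds=(-2.0, 2.0), seed=0):
    rng = random.Random(seed)
    X, y = [list(z) for z in seed_z], list(seed_scores)
    dim = len(X[0])
    for _ in range(n_iter):
        gp = GaussianProcess(X, y)  # (d) refit the surrogate on all {z, score} pairs so far
        best = max(y)
        cands = [[rng.uniform(*bounds) for _ in range(dim)] for _ in range(n_candidates)]
        z_star = max(cands, key=lambda z: expected_improvement(gp, z, best))  # (a)
        X.append(z_star)
        y.append(decode_and_score(z_star))  # (b) decode and (c) evaluate
    return sorted(zip(y, X), key=lambda t: t[0], reverse=True)  # output: ranked proposals
```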

Protocol 3.2: Reinforcement Learning (RL) Scaffold Decorator

This protocol uses an RNN as a policy network to decorate a core scaffold.

- Environment Setup: Define the core scaffold (e.g., from a known inhibitor) and the allowed attachment points and substituents.

- Agent & Policy: An RNN agent generates a SMILES string representing the decorated molecule, one token at a time.

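- Reward Function: Design a composite reward $R = w_1 S_{\text{activity}} + w_2 S_{\text{SA}} + w_3 \mathrm{QED} - w_4 P_{\text{sim}}$, where the activity score $S_{\text{activity}}$ can come from a predictive model and $P_{\text{sim}}$ is a similarity penalty. Because the raw synthetic accessibility score (SAScore) runs from 1 (easy to make) to 10 (hard to make), it must be rescaled so that higher is better before it enters the reward, for example $S_{\text{SA}} = (10 - \mathrm{SAScore})/9$.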

- Training Loop: Use a policy gradient method (e.g., REINFORCE or PPO) to update the RNN parameters. The agent generates a batch of molecules, receives rewards, and gradients are calculated to increase the probability of actions leading to high rewards.

- Evaluation: Monitor the increase in average reward and the properties of the top-performing generated molecules over training epochs.
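The training loop can be illustrated with a toy REINFORCE implementation. This sketch makes several assumptions that depart from the protocol: the RNN token-by-token policy is replaced by an independent softmax policy per attachment point, the predictive models are replaced by hypothetical lookup tables (`ACTIVITY`, `SA_RAW`), and QED is omitted because computing it requires a chemistry toolkit (RDKit provides one in `rdkit.Chem.QED`). The reward follows the composite form above, with the SA term rescaled so that higher is better and a penalty for reproducing a hypothetical known inhibitor.

```python
import math
import random

SUBSTITUENTS = ["H", "F", "Cl", "OMe", "NH2", "CF3"]  # hypothetical R-group vocabulary
N_SITES = 2                                           # attachment points on the core scaffold
ACTIVITY = {"H": 0.1, "F": 0.4, "Cl": 0.6, "OMe": 0.3, "NH2": 0.5, "CF3": 0.9}  # toy predictor
SA_RAW = {"H": 1.0, "F": 1.5, "Cl": 1.5, "OMe": 2.0, "NH2": 2.0, "CF3": 3.5}    # toy 1-10 SAScores
KNOWN_INHIBITOR = ("CF3", "CF3")                      # hypothetical decoration to move away from


def reward(decoration, w_act=1.0, w_sa=0.5, w_sim=1.0):
    activity = sum(ACTIVITY[s] for s in decoration) / len(decoration)
    sa_term = sum((10.0 - SA_RAW[s]) / 9.0 for s in decoration) / len(decoration)  # higher = easier
    sim_penalty = 1.0 if tuple(decoration) == KNOWN_INHIBITOR else 0.0
    return w_act * activity + w_sa * sa_term - w_sim * sim_penalty


def softmax(logits):
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train(epochs=200, batch_size=32, lr=1.0, seed=0):
    rng = random.Random(seed)
    n = len(SUBSTITUENTS)
    logits = [[0.0] * n for _ in range(N_SITES)]
    history = []
    for _ in range(epochs):
        probs = [softmax(row) for row in logits]  # policy used for this whole batch
        batch = []
        for _ in range(batch_size):
            actions = [rng.choices(range(n), weights=p)[0] for p in probs]
            batch.append((actions, reward([SUBSTITUENTS[a] for a in actions])))
        baseline = sum(r for _, r in batch) / batch_size  # mean reward as variance-reducing baseline
        grads = [[0.0] * n for _ in range(N_SITES)]
        for actions, r in batch:
            advantage = r - baseline
            for site, a in enumerate(actions):
                for k in range(n):
                    # gradient of log softmax(logits)[a] with respect to logit k
                    grads[site][k] += advantage * ((1.0 if k == a else 0.0) - probs[site][k])
        for site in range(N_SITES):
            for k in range(n):
                logits[site][k] += lr * grads[site][k] / batch_size  # gradient ascent on reward
        history.append(baseline)
    return logits, history
```

The returned `history` holds the average batch reward per epoch, which is the quantity the Evaluation step monitors.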


The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Resources for Hybrid Method Development

| Item / Resource | Function in Hybrid Approaches | Example / Provider |
|---|---|---|
| Curated Chemical Libraries | Training data for generative models; benchmarking. | ChEMBL, ZINC, Enamine REAL. |
| Chemistry Toolkits | Handle molecular representation, featurization, and basic transformations. | RDKit (Open Source), OEChem (OpenEye). |
| Deep Learning Frameworks | Build and train generative (VAE, GAN) and predictive models. | PyTorch, TensorFlow, JAX. |
| Optimization Libraries | Implement Bayesian Optimization, RL, and evolutionary algorithms. | BoTorch (PyTorch), DEAP (GA), RLlib. |
| Molecular Simulation/Docking | Provide in silico evaluation functions for the optimization loop. | AutoDock Vina, Schrödinger Suite, OpenMM. |
| Cloud/High-Performance Compute | Manage computationally intensive training and sampling loops. | AWS, Google Cloud, Slurm clusters. |
| Specialized Software Platforms | Integrated environments for molecular design with some hybrid capabilities. | Atomwise, BenevolentAI, Schrödinger's AutoDesigner. |

Quantitative Performance Data

Benchmark studies published between 2017 and 2019 illustrate the efficacy of hybrid methods. The following table summarizes key results.

Table 3: Benchmark Performance of Hybrid Methods on Molecular Optimization Tasks

| Method (Study) | Base Generative Model | Optimization Engine | Task & Benchmark | Key Quantitative Result |
|---|---|---|---|---|
| Chemical VAE + BO (Gómez-Bombarelli et al., 2018) | VAE | Bayesian Optimization | Optimizing LogP & QED for generated molecules. | 80% of latent space points decoded to valid molecules after tuning. BO achieved target LogP >90% success rate. |
| REINVENT (Olivecrona et al., 2017) | RNN (SMILES) | Reinforcement Learning (Policy Gradient) | DRD2 activity optimization starting from a ChEMBL-trained prior. | >95% of generated molecules predicted active after 500 RL steps. Novelty ~70%. |
| Graph GA (Jensen, 2019) | Graph-Based Crossover/Mutation | Genetic Algorithm | Optimizing solubility and activity per the GuacaMol benchmark. | State-of-the-art performance on several GuacaMol multi-property benchmarks (e.g., Median score >0.8 for Isometric Multiproperty Optimization). |
| MolDQN (Zhou et al., 2019) | Atom/bond-level editing (no separate generative network) | Deep Q-Network (DQN) | De novo design with multiple property constraints (cLogP, MW, TPSA). | Achieved all property targets for >75% of generated molecules, significantly outperforming simple generation. |
| JT-VAE BO (Jin et al., 2018) | Junction Tree VAE | Bayesian Optimization | Optimizing penalized LogP (model trained on ZINC). | Improved penalized LogP by >4 points on average over starting set, maintaining high validity. |

Integrating optimization loops within generative frameworks creates a powerful paradigm that directly addresses the core objective of molecular optimization research: the iterative, goal-directed improvement of compounds. It moves beyond pure de novo generation by incorporating a critical feedback mechanism, aligning the creative process with complex, real-world objectives. As predictive models (for ADMET, potency) and generative architectures improve, these hybrid systems are poised to become central to computational drug discovery, effectively bridging the gap between initial hit generation and lead optimization. The future lies in developing more sample-efficient optimizers, handling more complex and noisy biological objectives, and integrating synthetic feasibility directly into the loop.

Benchmark Datasets and Commonly Used Platforms (e.g., REINVENT, MolGPT)

The development of generative artificial intelligence for chemistry necessitates a clear conceptual distinction between two related but divergent research paradigms: de novo molecular generation and molecular optimization. This guide frames the discussion of benchmark datasets and platforms within this critical distinction.

- De Novo Molecular Generation aims to produce novel, chemically valid molecular structures from scratch, typically by learning from a broad distribution of chemical space. The primary objective is diversity, novelty, and fundamental validity.

- Molecular Optimization starts from one or more existing lead compounds with a defined property profile (e.g., moderate activity) and iteratively modifies them to improve specific, often multiple, objective functions (e.g., potency, solubility, synthetic accessibility). The objective is targeted, stepwise improvement.

While both utilize generative models, their success metrics, benchmark datasets, and software platforms are tailored to their respective goals. This guide provides a technical deep dive into the datasets for evaluation and the platforms for implementation central to both fields.

Core Benchmark Datasets

The performance of generative models is quantified against standardized datasets. The tables below categorize them by their primary research paradigm.

Table 1: Foundational Datasets for Training & Benchmarking De Novo Generation

| Dataset Name | Source & Size | Primary Use | Key Metrics Assessed |
|---|---|---|---|
| ZINC20 | Public, ~1.3B commercially available compounds | Training and validation for broad chemical space learning. | Chemical validity, uniqueness, internal diversity, fidelity to chemical space. |
| ChEMBL | Public, >2M bioactive molecules with annotations | Training conditional generators or benchmarking bio-like property distributions. | Ability to generate molecules with bio-relevant property ranges (MW, LogP, etc.). |
| GuacaMol | Benchmark Suite (based on ChEMBL) | Standardized benchmarks for de novo generation. | Validity, uniqueness, novelty, diversity, and distribution-learning for specific properties. |
| MOSES | Benchmark Suite (based on ZINC) | Standardized benchmarks for drug-like molecular generation. | Similar to GuacaMol, with emphasis on penalizing unrealistic molecules. |
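Distribution-learning metrics compare property distributions of generated molecules against a reference set. The sketch below follows the spirit of GuacaMol's KL-divergence benchmark, which averages exp(-KL) over several physicochemical descriptors; it is a simplified, hedged version using fixed histogram bins, and GuacaMol's own implementation differs in details such as how continuous descriptors are binned or smoothed. Property values (for example LogP computed with RDKit) are assumed to be computed upstream, and the bin edges are an arbitrary illustrative choice.

```python
import math
from typing import Dict, List, Sequence


def histogram(values: Sequence[float], edges: Sequence[float]) -> List[int]:
    """Count values per bin [edges[i], edges[i+1]); the last bin also includes its right edge.
    Values outside the edges are ignored."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(edges) - 1):
            last = i == len(edges) - 2
            if edges[i] <= v < edges[i + 1] or (last and v == edges[-1]):
                counts[i] += 1
                break
    return counts


def kl_divergence(p_counts: Sequence[int], q_counts: Sequence[int], eps: float = 1e-10) -> float:
    """KL(P || Q) between two histograms on the same bins; eps guards empty bins in Q."""
    p_total, q_total = sum(p_counts), sum(q_counts)
    kl = 0.0
    for pc, qc in zip(p_counts, q_counts):
        p = pc / p_total
        if p > 0:
            kl += p * math.log(p / max(qc / q_total, eps))
    return kl


def distribution_score(reference: Dict[str, Sequence[float]],
                       generated: Dict[str, Sequence[float]],
                       edges: Dict[str, Sequence[float]]) -> float:
    """Average of exp(-KL(reference || generated)) over properties; 1.0 means identical histograms."""
    per_prop = [math.exp(-kl_divergence(histogram(reference[k], e), histogram(generated[k], e)))
                for k, e in edges.items()]
    return sum(per_prop) / len(per_prop)
```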

Table 2: Key Datasets for Benchmarking Molecular Optimization