Cost-Effective Quantum Chemistry: Modern Strategies for Accelerating Molecular Property Calculations in Drug Discovery

This article provides a comprehensive guide for researchers and drug development professionals on reducing the computational cost of evaluating molecular properties.


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on reducing the computational cost of evaluating molecular properties. We explore the foundational bottlenecks in traditional quantum chemical methods, detail cutting-edge algorithmic and hardware-accelerated solutions, offer practical troubleshooting for cost/accuracy trade-offs, and present validation frameworks for comparing method performance. The content synthesizes the latest advancements in machine learning potentials, transfer learning, and cloud-scale computing to enable faster, cheaper, and scalable in-silico screening and property prediction in biomedical research.

Why Molecular Simulations Are So Expensive: Understanding the Computational Bottlenecks

Technical Support Center: Troubleshooting Guides and FAQs

Q1: My Hartree-Fock (HF) calculation is failing to converge with a "SCF convergence failure" error for a medium-sized organic molecule (~50 atoms). What are the primary troubleshooting steps?

A: This is a common issue. Follow this protocol:

- Check Initial Guess: Use a better initial density matrix. If the default `Hückel` guess fails, try an alternative such as `Core Hamiltonian` or, preferably, a `Fragment` guess if your code supports it.

- Modify SCF Algorithm: Increase the `DIIS` space size (e.g., from 6 to 10) and consider enabling `ADIIS` or `EDIIS` for difficult cases. Alternatively, switch to a slower but more robust `GDM` (Geometric Direct Minimization) algorithm.

- Damping: Apply a damping factor (e.g., 0.5) for the first few iterations to prevent large oscillations in the density.

- Basis Set: Verify your basis set is appropriate. Using a large, diffuse basis set on all atoms can cause linear dependence issues. Consider removing diffuse functions on non-critical atoms.

- Geometry: Re-check your molecular geometry for unrealistic bond lengths or angles.
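The effect of damping can be illustrated with a toy fixed-point iteration. The sketch below is not an SCF implementation: `toy_update` is a hypothetical stand-in for the density update, chosen so that the undamped iteration oscillates with growing amplitude, and `scf_like_iteration` is an illustrative name.

```python
def scf_like_iteration(update, x0, damping=0.0, max_iter=50, tol=1e-8):
    """Fixed-point iteration x <- update(x) with simple linear damping.

    damping = 0.0 is the undamped iteration; damping = 0.5 mixes in half
    of the previous value. Returns (final_value, converged, iterations).
    """
    x = x0
    for iteration in range(1, max_iter + 1):
        # Mix the previous value with the freshly computed one.
        x_new = damping * x + (1.0 - damping) * update(x)
        if abs(x_new - x) < tol:
            return x_new, True, iteration
        x = x_new
    return x, False, max_iter


def toy_update(x):
    # Hypothetical update with fixed point x = 1 and slope -2 there,
    # so the plain iteration overshoots more on every step.
    return 3.0 - 2.0 * x


undamped = scf_like_iteration(toy_update, x0=0.9)
damped = scf_like_iteration(toy_update, x0=0.9, damping=0.5)
print("undamped converged:", undamped[1])
print("damped converged:", damped[1], "value:", round(damped[0], 6))
```

With a damping factor of 0.5 the same update converges to the fixed point at 1.0, which mirrors how mixing in part of the previous density suppresses oscillations in early SCF cycles.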

Q2: When attempting a CCSD(T) calculation on a transition metal cluster, I receive an "out of memory" error. How can I reduce the memory footprint?

A: The compute time of CCSD(T) scales as O(N⁷), while its memory and disk requirements grow as O(N⁴); the latter is usually what triggers this error. Implement these strategies:

- Integral Direct/Disk: Use a `direct` or `semi-direct` algorithm that recomputes electron repulsion integrals (ERIs) on the fly rather than storing them in memory. This trades memory for increased CPU time.

- Frozen Core Approximation: Freeze the innermost molecular orbitals (e.g., 1s for C, N, O; the inner shells below the valence 3d/4s for first-row transition metals). This substantially reduces the number of correlated orbitals (N).

- Local Correlation Methods: If available, switch to a local coupled cluster method (e.g., LCCSD(T)). These methods scale asymptotically lower (O(N⁵) or better) by exploiting the locality of electron correlation.

- Parallelization & Chunking: Distribute the calculation across more compute nodes. Some codes allow "chunking" the T3 amplitude calculation.

- Basis Set Reduction: Use a smaller basis set for the initial correlation treatment, then apply a correction (e.g., MP2) with a larger basis.

Q3: My DFT calculation for a large protein-ligand system (>5000 basis functions) is proceeding extremely slowly. What are the key performance optimizations?

A: For large systems in DFT (formally O(N³)), focus on linear-scaling techniques:

- Enable Linear-Scaling DFT: Use a linear-scaling algorithm such as `LinK` for exact exchange, or a dedicated linear-scaling code such as `ONETEP`. Ensure the "linear scaling" or "sparse matrix" option is activated.

- Adjust Cutoffs: Increase (loosen) integral screening thresholds (`GRID_XC`, `SCHWARZ`) and adjust density fitting (`RI-J`, `RIJK`) auxiliary basis set cutoffs. Looser thresholds run faster but lose accuracy, so a balanced setting is crucial.

- Parallelization Strategy: Use `MPI` for distributed-memory parallelization across multiple nodes, not just OpenMP on a single node. Ensure efficient load balancing.

- Sparse Solver: Confirm the use of a sparse linear algebra solver for the Kohn-Sham equations.

- Solvent Model: If using an implicit solvent model (e.g., PCM), switching to a cheaper formulation or a linear-scaling `COSMO` implementation can help. Note that `SMD` is built on a PCM-type electrostatic solver, so it is not inherently faster than PCM.

Q4: For Full CI or near-exact approximations to it (e.g., selected CI, DMRG, FCIQMC), the wavefunction file size is unmanageable. How is this handled?

A: The exact wavefunction grows combinatorially (roughly exponentially) with the number of active electrons and orbitals.

- Truncation: Use a `weight` or `energy` threshold to truncate the configuration space. For example, keep only determinants with |coefficient| > 1e-5.

- Compressed Storage: Use methods such as `SHCI` or `DMRG`, whose implementations store the wavefunction in a compressed form (a sparse list of important determinants for SHCI, a matrix product state for DMRG).

- On-the-Fly Generation: Methods like `FCIQMC` do not store the full wavefunction but sample it stochastically with a population of walkers.

- Active Space Selection: Carefully restrict the active space (number of electrons and orbitals) using CASSCF or automated tools. The table below shows the explosive growth.

Quantitative Data on Configuration Interaction Scaling

| Method | Formal Scaling | Approx. # of Determinants, (10e,10o) | Approx. # of Determinants, (16e,16o) |
|---|---|---|---|
| CISD | O(N⁶) | ~ 8.8 x 10² | ~ 5.8 x 10³ |
| Full CI | Combinatorial (roughly exponential) | ~ 6.4 x 10⁴ | ~ 1.7 x 10⁸ |

Counts are M_S = 0 Slater determinants within the active space, relative to a closed-shell reference and without spatial symmetry.
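The growth of the configuration space can be checked by direct counting. The sketch below assumes a closed-shell reference, M_S = 0 Slater determinants, and no spatial symmetry; the function names are illustrative.

```python
from math import comb


def fci_determinants(n_electrons, n_orbitals):
    """Number of M_S = 0 determinants in a full CI expansion."""
    n_alpha = n_electrons // 2
    n_beta = n_electrons - n_alpha
    # Alpha and beta strings are chosen independently.
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


def cisd_determinants(n_electrons, n_orbitals):
    """Reference + singles + doubles from a closed-shell determinant."""
    occ = n_electrons // 2
    virt = n_orbitals - occ
    singles = 2 * occ * virt                           # alpha or beta excitation
    same_spin = 2 * comb(occ, 2) * comb(virt, 2)       # alpha-alpha, beta-beta
    opposite_spin = (occ * virt) ** 2                  # alpha-beta
    return 1 + singles + same_spin + opposite_spin


for electrons, orbitals in [(10, 10), (16, 16)]:
    print((electrons, orbitals),
          "CISD:", cisd_determinants(electrons, orbitals),
          "FCI:", fci_determinants(electrons, orbitals))
# (10, 10) CISD: 876 FCI: 63504
# (16, 16) CISD: 5793 FCI: 165636900
```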

Experimental Protocol: Benchmarking Computational Cost Reduction

- Objective: Compare the accuracy vs. cost trade-off between a canonical CCSD(T) calculation and a local LCCSD(T) approximation for a model system (e.g., a hydrocarbon chain).

- Software: Use a quantum chemistry package with both capabilities (e.g., `Psi4`, `PySCF`, or `ORCA`).

- Procedure:

1. System Generation: Optimize the geometry of octane (C₈H₁₈) and hexadecane (C₁₆H₃₄) at the B3LYP/6-31G* level.

2. Baseline Calculation: Run a canonical `CCSD(T)/cc-pVDZ` calculation on octane. Record the total wall time, peak memory usage, and correlation energy.

3. Local Calculation: Run an `LCCSD(T)/cc-pVDZ` calculation on octane with default local thresholds. Record the same metrics.

4. Accuracy Check: Compute the relative energy error (%) of the local method vs. the canonical result.

5. Scaling Test: Repeat steps 2-4 for hexadecane.

- Analysis: Plot wall time vs. number of atoms (or basis functions) for both methods to visualize the scaling difference.
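For the analysis step, an effective scaling exponent can be estimated from the two system sizes. This is a minimal sketch, assuming the wall time follows a power law t ∝ N^p between the two measurements; the function names are illustrative, the timings in the example are placeholders rather than measured data, and two points give only a rough estimate.

```python
from math import log


def scaling_exponent(size_small, time_small, size_large, time_large):
    """Effective exponent p in t ~ N**p from two (size, wall time) points."""
    return log(time_large / time_small) / log(size_large / size_small)


def relative_error_percent(approximate, reference):
    """Relative error (%) of an approximate energy against a reference."""
    return 100.0 * abs(approximate - reference) / abs(reference)


# Placeholder numbers: doubling the size multiplies the time by 128,
# which corresponds to an exponent of 7.
print(round(scaling_exponent(100, 1.0, 200, 128.0), 3))
print(round(relative_error_percent(-0.999, -1.000), 3))
```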

Research Reagent Solutions (Computational Toolkit)

| Item/Software | Function | Key Consideration for Cost Reduction |
|---|---|---|
| Basis Set Library (e.g., EMSL, Basis Set Exchange) | Pre-defined mathematical functions representing atomic orbitals. | Use polarized double/triple-zeta (e.g., cc-pVDZ/TZ) for accuracy; employ basis set extrapolation. |
| Pseudopotentials/Effective Core Potentials (ECPs) | Replace core electrons with an effective potential, reducing the number of explicit electrons. | Essential for heavy atoms (beyond Kr). Use small-core ECPs for higher accuracy in valence properties. |
| Density Fitting (RI) Auxiliary Basis Sets | Approximate 4-center electron repulsion integrals using 3-center integrals, reducing O(N⁴) steps. | Must be matched to the primary basis set (e.g., cc-pVDZ → cc-pVDZ-RI). Critical for DFT and MP2. |
| Linear-Scaling SCF Solver (e.g., in ONETEP, CP2K) | Solves Kohn-Sham equations with O(N) effort using sparse matrix algebra and localization. | Required for systems >10,000 atoms. Performance depends on system bandgap. |
| Local Correlation Module (e.g., DLPNO-CCSD(T) in ORCA, LCCSD in Psi4) | Limits correlation treatment to local electron pairs, reducing scaling to near O(N). | Accuracy controlled by TCut (pair) and TCutDO (domain) thresholds. Benchmark for your system type. |
| Fragment-Based Method Code (e.g., FMO in GAMESS, MFCC) | Divides a large system into smaller fragments, computed separately and combined. | Ideal for non-covalent interactions in very large systems like proteins. Error depends on fragmentation scheme. |

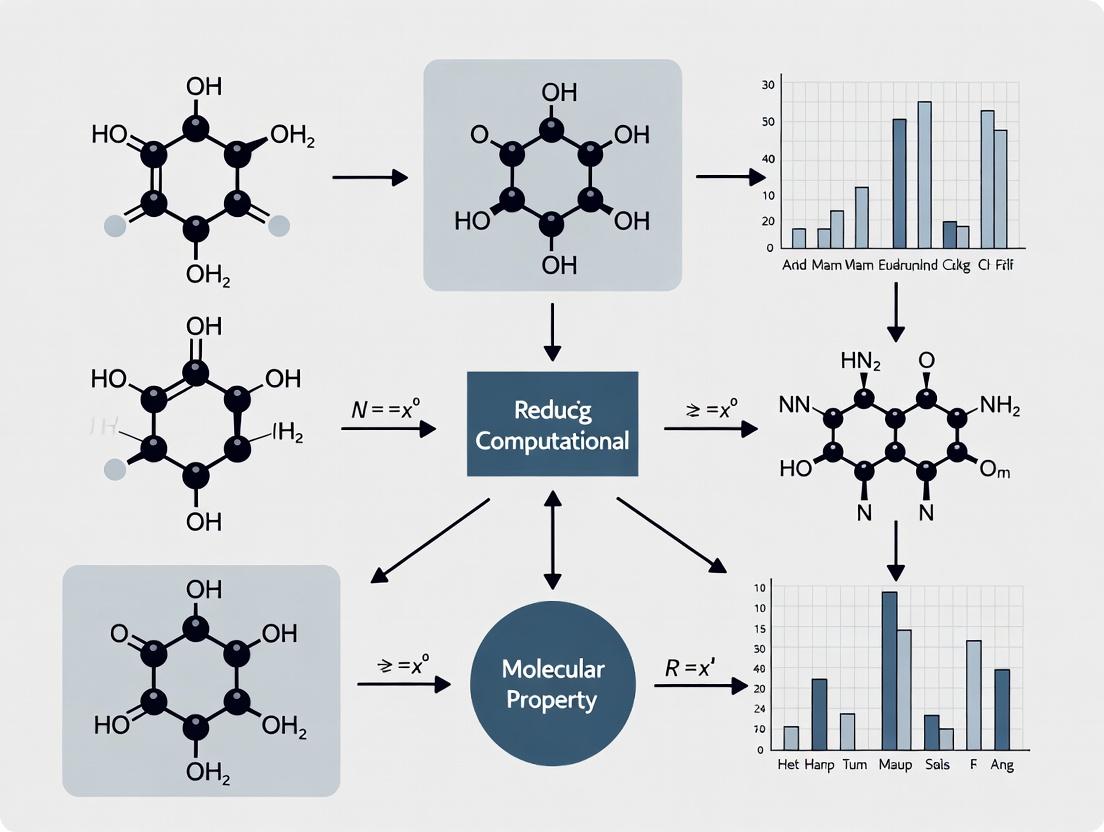

Diagram: Workflow for Tackling the Scaling Problem

Diagram: Hierarchy of Ab Initio Method Scalings

Troubleshooting Guides & FAQs

Q1: My CCSD(T) calculation fails with an "out of memory" error, even for a small molecule. What are the most effective ways to reduce the memory cost? A: This error stems from the steep scaling (N⁷) of the coupled-cluster method. First, verify your basis set. Using a large basis like aug-cc-pVQZ on a 20-atom system is prohibitive. Implement these steps:

- Basis Set Reduction: Switch to a smaller, correlation-consistent basis (e.g., from aug-cc-pVQZ to aug-cc-pVTZ). The memory requirement scales with the number of basis functions (N) to the 4th power.

- Frozen Core Approximation: By default, freeze the core electrons. This significantly reduces the number of active orbitals.

- Use a Local Correlation Method: If available, employ domain-based local coupled-cluster (DLPNO-CCSD(T)), which reduces scaling to near linear.

Protocol for Memory-Efficient CCSD(T):

- Perform a HF/DFT optimization with a moderate basis (e.g., cc-pVDZ).

- Run a single-point energy calculation at CCSD(T) level with `frozen_core = on`.

- Start with the cc-pVTZ basis. If memory fails, step down to cc-pVDZ for a cost reference.

- Consider composite approaches such as `CCSD(T)/cc-pVDZ // DFT/cc-pVTZ`, that is, a CCSD(T)/cc-pVDZ single point on a DFT/cc-pVTZ geometry.

Q2: When calculating Gibbs free energy, how do I choose between conformational sampling and a higher-level theory for my limited computational budget? A: This is a key trade-off. For flexible molecules, neglecting conformational sampling often introduces errors (>2 kcal/mol) that dwarf the electronic energy error from a moderate method. A systematic protocol is recommended.

Protocol for Balanced Accuracy/Cost in Free Energy:

- Conformational Search: Use a fast molecular mechanics (MM) or semi-empirical (PM7, GFN2-xTB) method to generate an ensemble. Apply an energy window (e.g., 10 kcal/mol).

- Low-Level Optimization: Optimize all conformers with a DFT functional (e.g., B3LYP-D3(BJ)) and a small basis (e.g., 6-31G*).

- Boltzmann Population: Calculate single-point energies at a better level (e.g., ωB97X-D/def2-SVP) and compute Boltzmann weights at your target temperature.

- High-Level Refinement: For the top 2-3 conformers contributing >90% population, perform a final single-point at your highest affordable theory (e.g., DLPNO-CCSD(T)/def2-TZVP).
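The Boltzmann weighting and the selection of conformers for high-level refinement can be sketched as follows. This assumes relative energies in kcal/mol; the function names are illustrative, and the energies in the example are placeholders rather than computed values.

```python
from math import exp

GAS_CONSTANT_KCAL = 0.0019872041  # kcal/(mol*K)


def boltzmann_weights(energies_kcal, temperature=298.15):
    """Normalized Boltzmann populations from conformer energies."""
    lowest = min(energies_kcal)
    rt = GAS_CONSTANT_KCAL * temperature
    # Shift by the lowest energy so the exponentials stay well scaled.
    factors = [exp(-(energy - lowest) / rt) for energy in energies_kcal]
    total = sum(factors)
    return [factor / total for factor in factors]


def conformers_covering(weights, coverage=0.90):
    """Indices of the most populated conformers reaching the coverage."""
    order = sorted(range(len(weights)), key=lambda i: weights[i], reverse=True)
    chosen, cumulative = [], 0.0
    for index in order:
        chosen.append(index)
        cumulative += weights[index]
        if cumulative >= coverage:
            break
    return chosen


weights = boltzmann_weights([0.0, 0.5, 3.0])  # placeholder energies
print([round(w, 3) for w in weights])
print(conformers_covering(weights))
```

Only the conformers returned by `conformers_covering` would then go on to the expensive single-point step.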

Q3: For DFT calculations, when does increasing the basis set size yield diminishing returns compared to the computational cost? A: The cost of a DFT calculation scales formally as O(N³), but practically with N²–N³, where N is the number of basis functions. The error reduction levels off as the basis approaches completeness, so each step up in basis size buys less accuracy for more cost. The table below summarizes the trade-off for a typical organic molecule.

Table 1: Basis Set Cost-Accuracy Trade-off for DFT (Example: C₇H₁₀O₂)

| Basis Set | Approx. No. of Functions | Relative CPU Time | Expected Error in E vs. CBS (kcal/mol) | Best For |

|---|---|---|---|---|

| 6-31G* | ~150 | 1x (Reference) | 10 - 20 | Geometry optimization, initial scans |

| def2-SVP | ~200 | 2x | 5 - 10 | Standard single-point, vibrational freq |

| def2-TZVP | ~400 | 8x | 2 - 5 | Accurate single-point, property calc |

| def2-QZVP | ~700 | 30x | ~1 | Benchmarking, charge density |

Protocol for Systematic Basis Set Selection:

- Optimize geometry with a double-zeta basis (e.g., 6-31G* or def2-SVP).

- Perform single-point energy calculations with a series of basis sets (e.g., SVP, TZVP, QZVP).

- Fit the results to a completion function (e.g., 1/X³) to extrapolate to the Complete Basis Set (CBS) limit and quantify convergence.
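A two-point version of the 1/X³ extrapolation can be written in closed form. The sketch below assumes the model E(X) = E_CBS + A·X⁻³, where X is the cardinal number of the basis set (2 for double-zeta, 3 for triple-zeta, 4 for quadruple-zeta). This form is most commonly applied to correlation energies; the function name and the example numbers are illustrative.

```python
def cbs_two_point(energy_small, x_small, energy_large, x_large, power=3.0):
    """Extrapolate to the CBS limit assuming E(X) = E_CBS + A * X**(-power)."""
    weight_small = x_small ** power
    weight_large = x_large ** power
    # Eliminating A from the two equations gives this weighted difference.
    return (weight_large * energy_large - weight_small * energy_small) / (
        weight_large - weight_small
    )


# Synthetic check: energies generated from the model with E_CBS = -100.0.
e_tz = -100.0 + 0.5 / 3**3
e_qz = -100.0 + 0.5 / 4**3
print(cbs_two_point(e_tz, 3, e_qz, 4))
```

Comparing the extrapolated value with the largest-basis result gives a direct measure of the remaining basis set error.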

Q4: My drug-like molecule has many rotatable bonds. What is a cost-effective workflow to ensure my calculated binding affinity is conformationally robust? A: The greatest error often comes from using a single, non-representative conformation. A multi-level filtering workflow is essential.

Diagram Title: Multi-Level Conformational Sampling Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Method Tools for Cost-Reduced Calculations

| Tool Name | Type | Primary Function | Key Benefit for Cost Reduction |

|---|---|---|---|

| GFN-xTB | Software | Semi-empirical quantum mechanics | Ultra-fast conformational search and pre-optimization. |

| CREST | Software | Conformer-Rotamer Ensemble Sampling | Automated, physics-based sampling using GFN-xTB. |

| DLPNO-CCSD(T) | Method | Local correlation coupled-cluster | Near-chemical-accuracy for large systems (100+ atoms). |

| RI/DF-JK Approx. | Approximation | Resolution of Identity, Density Fitting | Speeds up DFT integral evaluation by 10x or more. |

| Frozen Core Approximation | Methodological Setting | Excludes core electrons from correlation | Reduces active space size in post-HF methods. |

| implicit Solvent (SMD, PCM) | Model | Continuum solvation | Avoids costly explicit solvent sampling for bulk effects. |

| Composite Methods (e.g., CBS-QB3) | Multi-level Scheme | Extrapolates to high accuracy | Strategically combines theory levels for best cost/accuracy. |

Welcome to the Technical Support Center for Computational Molecular Evaluation. This guide provides troubleshooting advice and FAQs for researchers navigating the trade-off between high-throughput screening (HTS) and high-accuracy calculation (HAC), with the overall goal of reducing computational cost in molecular property evaluation.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My high-throughput virtual screening (HTVS) campaign is returning an unmanageably high number of false-positive hits. What are the primary causes and mitigation strategies?

A1: This is a classic symptom of the speed/accuracy trade-off. Common causes and solutions are summarized below.

| Cause | Diagnostic Check | Recommended Action |

|---|---|---|

| Low-fidelity force field/score function | Compare a random subset of hits with a higher-level method (e.g., DFT vs. MM-GBSA). | Implement a multi-tiered screening funnel. Use fast methods first, then re-score top hits with more accurate (but slower) calculations. |

| Overly simplistic conformational sampling | Check if hit molecules have strained geometries or improbable binding poses. | Integrate brief MD simulations (10-50 ps) or enhanced sampling for top HTVS hits before progression. |

| Inadequate chemical space filtering | Analyze hit list for pan-assay interference compounds (PAINS) or undesirable properties. | Apply strict ligand-based filters (e.g., Lipinski's rules, PAINS filters, toxicophore alerts) before the primary HTVS run. |

Q2: When moving from semi-empirical (high-throughput) to DFT (high-accuracy) calculations for excitation energies, my results diverge significantly. How should I validate and correct this?

A2: This indicates a potential failure of the lower-level method for your specific chemical class. Follow this protocol:

- Validation Set Protocol: Select a benchmark set of 20-30 small molecules with experimentally known excitation energies from databases like NIST.

- Multi-level Calculation: Compute excitation energies for this set using both your HT method (e.g., DFTB, ZINDO) and your high-accuracy target method (e.g., TD-DFT with a tuned functional).

- Calibration: Create a correlation plot and calculate systematic errors (Mean Absolute Error, MAE). If a consistent offset is observed, a linear correction can be applied to future HT results for similar compounds.
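The calibration step can be sketched as an ordinary least-squares line fitted on the benchmark set and then applied to new high-throughput results. The function names are illustrative and the numbers are placeholders, not benchmark data.

```python
def fit_linear_correction(cheap_values, reference_values):
    """Least-squares slope and intercept mapping cheap results to reference."""
    n = len(cheap_values)
    if n != len(reference_values) or n < 2:
        raise ValueError("need two equally long lists with at least 2 points")
    mean_x = sum(cheap_values) / n
    mean_y = sum(reference_values) / n
    sxx = sum((x - mean_x) ** 2 for x in cheap_values)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(cheap_values, reference_values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mean_absolute_error(predicted, reference):
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(predicted)


# Placeholder excitation energies (eV) showing a constant 0.5 eV offset.
cheap = [2.0, 3.0, 4.0]
reference = [2.5, 3.5, 4.5]
slope, intercept = fit_linear_correction(cheap, reference)
corrected = [slope * value + intercept for value in cheap]
print("MAE before:", mean_absolute_error(cheap, reference))
print("MAE after:", mean_absolute_error(corrected, reference))
```

A correction fitted this way should only be applied to compounds from the same chemical class as the benchmark set.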

Q3: My molecular dynamics (MD) simulations for protein-ligand binding are computationally expensive, limiting throughput. What are the best methods to reduce cost while maintaining reliability?

A3: Implement a hybrid workflow that combines speed and accuracy.

| Strategy | Typical Cost Reduction | Potential Accuracy Impact | Best For |

|---|---|---|---|

| GPU-accelerated MD (e.g., OpenMM, AMBER GPU) | 5-10x faster than CPU | No impact | All production MD. |

| Coarse-grained (CG) simulations (e.g., MARTINI) | 100-1000x faster | Loss of atomic detail, good for large assemblies. | Initial binding events, membrane protein dynamics. |

| Enhanced Sampling (e.g., Well-Tempered Metadynamics) | Reduces required simulation time by driving sampling. | Correctly implemented, it improves accuracy per unit time. | Calculating binding free energies (ΔG), conformational changes. |

Experimental Protocol: Multi-Tiered Binding Affinity Funnel

This protocol is designed to maximize the discovery rate while managing computational cost.

Tier 1: Ultra-High-Throughput Docking.

- Method: Use a fast docking program (e.g., AutoDock Vina, FRED).

- Library: Screen an ultra-large library (1M+ compounds).

- Action: Retain the top 10,000 compounds based on docking score.

Tier 2: MM-GBSA/PBSA Re-scoring.

- Method: Perform constrained minimization and single-point MM-GBSA calculations on the top 10,000 docking poses.

- Action: Re-rank compounds. Retain the top 1,000 based on MM-GBSA ΔG.

Tier 3: Short, Explicit-Solvent MD & Re-scoring.

- Method: Solvate the top 1,000 complexes. Run a short MD simulation (5-10 ns per system) to relax poses. Perform MM-GBSA on multiple snapshots.

- Action: Identify the top 100 stable complexes with consistent favorable binding scores.

Tier 4: High-Accuracy Free Energy Calculation.

- Method: Apply alchemical free energy methods (e.g., FEP, TI) or longer, well-equilibrated MD simulations to the top 100 hits.

- Output: A final ranked list of 20-30 compounds with predicted binding affinities within ~1 kcal/mol of accuracy.
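The funnel logic itself is simple to express in code: each tier scores the current survivors and passes only the best ones on. In the sketch below, `run_funnel` is an illustrative name and the two scoring functions are hypothetical stand-ins for docking and re-scoring; lower scores are treated as better.

```python
def run_funnel(candidates, tiers):
    """Apply (name, score_function, keep) tiers in order.

    Returns the final survivors and the number of evaluations per tier,
    which is what determines the total cost of the campaign.
    """
    survivors = list(candidates)
    evaluations = {}
    for name, score_function, keep in tiers:
        evaluations[name] = len(survivors)
        scored = [(score_function(candidate), candidate) for candidate in survivors]
        scored.sort(key=lambda pair: pair[0])  # lower score = better
        survivors = [candidate for _, candidate in scored[:keep]]
    return survivors, evaluations


# Hypothetical scoring functions on integer "compound IDs".
tiers = [
    ("cheap", lambda c: abs(c - 50), 10),
    ("accurate", lambda c: abs(c - 52), 3),
]
final_hits, evaluation_counts = run_funnel(range(100), tiers)
print(final_hits, evaluation_counts)
```

The expensive scorer is called on only 10 of the 100 candidates, which is the source of the cost savings in a tiered protocol.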

Title: Multi-Tier Computational Screening Funnel Workflow

Title: HTS vs. HAC: Attribute Comparison & Hybrid Strategy Direction

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Software | Category | Primary Function in Cost-Reduction Research |

|---|---|---|

| AutoDock Vina/QuickVina 2 | Docking Software | Provides a very fast, open-source docking engine for initial Tier 1 screening of massive libraries. |

| GPU Computing Cluster | Hardware | Essential for accelerating MD simulations and quantum chemistry calculations, directly reducing wall-clock time. |

| Generalized Born (GB) Model | Implicit Solvent | Enables rapid MM-GBSA/PBSA calculations for Tier 2 re-scoring, avoiding explicit solvent cost. |

| OpenMM | MD Engine | A highly optimized, GPU-first MD toolkit for running fast, production-level simulations (Tiers 3 & 4). |

| Alchemical Free Energy Software (e.g., FEP+, CHARMM) | Calculation Method | Provides high-accuracy binding free energies (Tier 4) with controlled error, replacing costly wet-lab screening. |

| Benchmark Dataset (e.g., PDBbind, SAMPL) | Validation Data | Critical for calibrating and validating multi-tier workflows, ensuring accuracy is maintained despite cost-cutting. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My DFT (PBE0/def2-SVP) calculation on a 50-atom drug-like molecule is taking over 72 hours on a standard 28-core node. What are the primary bottlenecks and immediate mitigation steps?

A: The primary bottlenecks are typically:

- Fock (Kohn-Sham) Matrix Diagonalization (O(N³) scaling): Grows in importance for systems with >1000 basis functions.

- Dense Grid Integration for XC: Cost scales linearly with grid points, which is high for large, flexible molecules.

- SCF Convergence Issues: Common for molecules with small HOMO-LUMO gaps, leading to wasted cycles.

Immediate Mitigation Protocol:

- Increase SCF Convergence Aids: Use `ADIIS` or `Fermi` broadening (e.g., `0.05 eV`) in the initial cycles.

- Employ Density Fitting for Coulomb and Exchange: Switch to `RI-JK` with appropriate auxiliary basis sets (`def2/JK` for def2-SVP). This sharply reduces the prefactor of the Coulomb and exchange builds; it is a density-fitting approximation rather than a true linear-scaling method.

- Reduce Integration Grid: Temporarily switch from `Grid5` to `Grid4` for testing. The error introduced (< 0.1 kcal/mol for energies) is often acceptable for geometry steps.

- Utilize Resolution-of-the-Identity (RI) for Coulomb (RI-J): Strongly recommended for DFT, especially with large or diffuse basis sets. For a hybrid functional such as PBE0, the exchange term still needs its own approximation, as in the `RI-JK` step.

Q2: When moving from DFT to CCSD(T)/def2-TZVP for binding energy validation, the job fails with "Out of Memory" on a node with 512 GB RAM for a 30-atom complex. How can I estimate memory needs and reduce them?

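A: The CCSD(T) doubles amplitudes require O(o²v²) memory, where o and v are the numbers of occupied and virtual orbitals, and storing the full two-electron integral tensor requires up to O(N_basis⁴). A rough upper-bound estimate for one such array in double precision is: Memory (GiB) ≈ (N_basis⁴ × 8 bytes) / 1024³. With ~500 basis functions, this is about 466 GiB for a single array, and because implementations typically hold several large arrays at once, the total can exceed the 512 GB available.

Reduction Protocol:

- Employ Density Fitting or Cholesky Decomposition (CD) of the Integrals: Use the auxiliary basis matched to your orbital basis (e.g., `cc-pVTZ-RI` for cc-pVTZ). This approximates the electron repulsion integrals and reduces integral storage from O(N⁴) to O(N²·N_aux).

- Utilize Frozen Core Approximation: Freeze core orbitals (e.g., `ccsd(t)/def2-tzvp frozen_core = 5`). This reduces `o` significantly.

- Switch to Local Correlation Methods (DLPNO-CCSD(T)): This is the most effective step. Use the protocol: `! DLPNO-CCSD(T) def2-TZVPP def2-TZVPP/C TightPNO`
  - Set `TightPNO` thresholds for chemical accuracy (< 1 kcal/mol error).
  - This reduces scaling to near-linear and memory to O(N).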
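A quick back-of-the-envelope estimate helps decide whether a canonical calculation is feasible before submitting it. The sketch below assumes 8-byte double-precision values and counts only a single array of each kind; real programs hold several such arrays and use different storage schemes, so these are rough bounds. The function names and the orbital counts in the example are illustrative.

```python
BYTES_PER_DOUBLE = 8


def array_gib(n_elements):
    """Size in GiB of a dense double-precision array."""
    return n_elements * BYTES_PER_DOUBLE / 1024**3


def t2_amplitudes_gib(n_occ, n_virt):
    """One set of doubles amplitudes, o^2 * v^2 elements."""
    return array_gib(n_occ**2 * n_virt**2)


def full_integral_tensor_gib(n_basis):
    """Full four-index integral tensor without symmetry, N^4 elements."""
    return array_gib(n_basis**4)


# Illustrative system: 500 basis functions, 50 correlated occupied orbitals.
print(round(full_integral_tensor_gib(500), 1), "GiB for N^4 integrals")
print(round(t2_amplitudes_gib(50, 450), 2), "GiB for one T2 array")
```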

Q3: For high-throughput virtual screening of 10,000 compounds, what is the optimal cost/accuracy trade-off between GFN2-xTB, PM7, and low-cost DFT (r²SCAN-3c)?

A: The choice depends on the target property. Below is a quantitative comparison based on recent benchmarks (2023-2024).

Table 1: Cost vs. Accuracy for High-Throughput Methods

| Method | Avg. Time per Molecule (50 atoms) | Avg. Error vs. CCSD(T)/CBS (Geometry) | Avg. Error vs. Exp. (ΔG_solv) | Primary Use Case in Screening |

|---|---|---|---|---|

| GFN2-xTB | 5-30 sec | ~0.05 Å (RMSD) | > 3 kcal/mol | Geometry pre-optimization, conformer generation |

| PM7 | 10-60 sec | ~0.10 Å (RMSD) | > 5 kcal/mol | Rapid crude filtering, very large libraries (>100k) |

| r²SCAN-3c | 10-30 min | ~0.02 Å (RMSD) | ~1.5 kcal/mol | Lead series refinement, final ranking |

| ωB97X-D4/def2-mSVP | 20-60 min | ~0.01 Å (RMSD) | ~1.0 kcal/mol | High-accuracy ranking for top 100-1000 hits |

Recommended Protocol:

- Stage 1 (Filtering): Use GFN2-xTB to pre-optimize all 10,000 structures and filter based on crude scoring.

- Stage 2 (Refinement): Re-optimize and score the top 1000 hits with r²SCAN-3c in implicit solvent (e.g., SMD).

- Stage 3 (Validation): Apply DLPNO-CCSD(T) or DFT-D4 to the top 100 hits for critical binding/interaction energies.

Q4: My alchemical free energy perturbation (FEP) simulations for protein-ligand binding are prohibitively expensive. What are the key cost drivers and proven strategies to improve throughput?

A: The main cost drivers are: 1) System size (>100,000 atoms), 2) Long equilibration times, 3) Many lambda windows (12-24), 4) Need for replica exchange.

Accelerated FEP Protocol (using OpenMM or GROMACS):

- Reduce System Size: Use a strict 10-12 Å cutoff from the ligand, not the entire protein.

- Employ Hydrogen Mass Repartitioning (HMR): Allows a 4 fs integration timestep. Use the `parmed` tool to apply HMR to your topology.

- Use a Soft-Core Potential: This allows fewer lambda windows (e.g., 12 instead of 24). Implement `sc-alpha=0.5, sc-power=1, sc-sigma=0.3` in your molecular dynamics (MD) engine.

- Leverage GPUs: This is non-negotiable for throughput. A single RTX 4090 can be 20x faster than a 28-core CPU for explicit solvent FEP.

- Run Multiple Lambda Windows Concurrently: Use a job array to run all windows in parallel across a cluster, not sequentially.

Experimental & Computational Protocols

Protocol 1: DLPNO-CCSD(T) Binding Energy Benchmark

- Purpose: Obtain "gold-standard" interaction energy for a ligand-fragment complex.

- Software: ORCA 5.0+

- Steps:

  - Input Prep: Use `def2-TZVPP` and `def2-TZVPP/C` basis sets. Ensure the geometry is optimized at the `r²SCAN-3c` level.

  - Calculation Block: Use the keyword line `! DLPNO-CCSD(T) def2-TZVPP def2-TZVPP/C TightPNO`, as in Q2 above.

  - Post-Processing: Use a text-search command such as `grep` to extract the `Total E(CCSD(T))` value from the output, and apply the basis set superposition error (BSSE) correction via the counterpoise procedure.
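As a minimal sketch of how this protocol could be scripted, the helper below writes an ORCA input using the DLPNO keyword line from Q2 above, and a second function combines three single-point energies into a counterpoise-corrected interaction energy. The function names, file name, core count, and memory setting are illustrative assumptions; the energies in the example are placeholders.

```python
HARTREE_TO_KCAL = 627.509474


def orca_dlpno_input(xyz_file, charge=0, multiplicity=1, n_procs=8, maxcore_mb=4000):
    """Return the text of an ORCA input for a DLPNO-CCSD(T) single point."""
    return (
        "! DLPNO-CCSD(T) def2-TZVPP def2-TZVPP/C TightPNO\n"
        f"%maxcore {maxcore_mb}\n"          # memory per core in MB (assumed value)
        f"%pal nprocs {n_procs} end\n"      # number of parallel processes (assumed)
        f"* xyzfile {charge} {multiplicity} {xyz_file}\n"
    )


def counterpoise_interaction_energy(e_complex, e_monomer_a, e_monomer_b):
    """Interaction energy in kcal/mol from energies in Hartree.

    Both monomer energies must be computed in the full dimer basis
    (with ghost atoms for the partner) for the counterpoise correction.
    """
    return (e_complex - e_monomer_a - e_monomer_b) * HARTREE_TO_KCAL


input_text = orca_dlpno_input("complex.xyz")
print(input_text)
print(round(counterpoise_interaction_energy(-10.01, -5.0, -5.0), 3))
```

The same input generator can be reused for the two ghost-atom monomer calculations by pointing it at the corresponding geometry files.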

Protocol 2: r²SCAN-3c Geometry Optimization for Drug-like Molecules

- Purpose: Reliable, low-cost structure optimization.

- Software: Any (ORCA, Gaussian, Q-Chem)

- Steps:

  - Start from a `GFN2-xTB` pre-optimized structure.

  - Use the `r²SCAN-3c` composite method (r²SCAN functional + `def2-mTZVPP` basis + `gCP`/`D4` corrections).

  - Employ `RI-J` with the `def2/J` auxiliary basis.

  - Convergence Criteria: `OPT(tight)`, `SCF(tight)`, and `Grid6` for final energy.

Visualizations

Diagram 1: Computational cost hierarchy in drug discovery.

Diagram 2: Multi-fidelity screening workflow for cost reduction.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Hardware Solutions for Cost-Effective Quantum Chemistry

| Item | Function / Purpose | Example/Note |

|---|---|---|

| ORCA | Quantum chemistry suite with efficient DLPNO, DFT, and semi-empirical methods. | Free for academics. Excellent for single-node calculations. |

| Psi4 | Open-source quantum chemistry, strong in CCSD(T) and automatic derivative code. | Free. Good for scripting complex workflows. |

| Gaussian 16 | Industry-standard for DFT, stable, wide range of methods and solvents. | Commercial license required. Robust for production work. |

| xtb (GFNn-xTB) | Semi-empirical extended tight-binding program for very fast geometry optimizations. | Free. Critical for pre-optimizing thousands of structures. |

| OpenMM | GPU-accelerated MD & FEP library. Dramatically reduces sampling cost. | Free. Python API. Integrates with TorchMD for ML. |

| GPU (NVIDIA) | Critical hardware accelerator for FEP, MD, and ML inference. | RTX 4090 (consumer) or A100/A6000 (datacenter). |

| def2 Basis Sets | Balanced Gaussian-type orbital basis sets for elements H-Rn. | def2-SVP for screening, def2-TZVPP for final. |

| CCCBDB / NIST | Computational Chemistry Comparison & Benchmark Database. | Essential for validating methods and error expectations. |

| ChemCompute | Web-based platform for managing computational chemistry jobs and workflows. | Free. Reduces setup overhead and improves reproducibility. |

| ANI-2x / TorchANI | Machine learning potentials for near-DFT accuracy at MD speed. | Free. For long-time MD where ab initio MD is impossible. |

Practical Strategies for Cost Reduction: Algorithms, Hardware, and Hybrid Approaches

Leveraging Machine Learning Potentials (MLPs) and Force Fields for Pre-Screening

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During MD simulation setup with an MLP, the energy minimization fails with a "NaN" (Not a Number) error in the forces. What are the likely causes and solutions? A: This is often caused by extrapolation into unsampled regions of chemical space or poor initial geometry.

- Cause 1: The atomic configuration is far outside the training data distribution of the MLP.

- Solution: Pre-optimize the initial molecular structure using a traditional force field (e.g., GAFF2, UFF) before applying the MLP. This brings the configuration closer to a reasonable energy basin.

- Cause 2: A clash or unrealistically short bond in the initial structure.

- Solution: Visually inspect the initial coordinates. Use a protocol with steepest descent minimization first, followed by a more advanced algorithm.

- Cause 3: Incompatibility between the system's atom types and the MLP's supported chemical elements.

- Solution: Verify that all elements (e.g., transition metals, specific halogens) in your system are explicitly listed as supported in the MLP's documentation (e.g., MACE, NequIP, ANI).

Q2: When using a classical force field for pre-screening, how do I handle ligands or residues with missing parameters? A: Missing parameters are a common bottleneck. Follow this systematic protocol:

- Identify: The simulation engine (e.g., GROMACS, AMBER) will log the exact missing atom/bond type.

- Search Repositories: Check if parameters exist in the CGenFF (for CHARMM), GAFF2 (for AMBER), or ATB databases.

- Generate: Use automated tools like `antechamber` (for GAFF) or the `CGenFF server` to generate initial parameters. Always note the penalty scores; high penalties indicate poor analogy and unreliable parameters.

- Validate: Perform a short, gas-phase geometry optimization and vibrational frequency calculation on the isolated molecule. Compare the resulting conformational energy and bond lengths with a quick DFT (e.g., ωB97X-D/6-31G*) calculation as a benchmark. Significant deviations (>5% in key bonds) require manual refinement.

Q3: My MLP inference is unexpectedly slow, negating the pre-screening efficiency gains. How can I improve performance? A: MLP inference speed depends on hardware and software setup.

- Check Hardware Utilization: Ensure you are using a GPU if the MLP implementation supports it (most modern ones like Allegro, MACE do). Use `nvidia-smi` to confirm GPU usage and memory.

- Optimize Batch Size: For screening thousands of configurations, batch them into the largest size your GPU memory allows. This dramatically improves throughput.

- Model Format: Use the optimized, deployed version of the model (e.g., TorchScript for PyTorch models) rather than the standard training checkpoint.

- Software Stack: Update to the latest versions of the MLP package (e.g., `nequip`, `mace`) and PyTorch/CUDA drivers for performance fixes.
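The batching advice can be sketched independently of any particular MLP package. In the example below, `predict_batch` is a hypothetical stand-in for a model call that takes a list of configurations and returns one value per configuration; the function names are illustrative.

```python
def iter_batches(items, batch_size):
    """Yield consecutive slices of at most batch_size items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


def screen_in_batches(configurations, predict_batch, batch_size):
    """Run a batched predictor over all configurations, preserving order."""
    results = []
    for batch in iter_batches(configurations, batch_size):
        results.extend(predict_batch(batch))
    return results


# Hypothetical predictor: doubles each input instead of calling a real model.
def fake_predictor(batch):
    return [2 * value for value in batch]


batches = list(iter_batches(list(range(10)), 4))
energies = screen_in_batches(list(range(10)), fake_predictor, batch_size=4)
print(batches)
print(energies)
```

In practice the batch size is increased until GPU memory is nearly full, since each model call carries a fixed overhead.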

Q4: How do I rigorously validate that a faster, pre-screening method (FF or low-fidelity MLP) maintains correlation with high-fidelity reference data (e.g., CCSD(T), DLPNO-CCSD(T))? A: Implement a standardized validation protocol for your chemical space of interest.

- Curate a Benchmark Set: Select 50-100 diverse, representative molecular structures or reaction intermediates relevant to your study.

- Calculate Reference Data: Compute single-point energies or key geometric parameters (bond lengths, angles) using your high-fidelity method for all structures.

- Calculate Screening Data: Compute the same properties using the pre-screening method (FF or MLP).

- Statistical Analysis: Calculate the following metrics and populate a validation table (see example below). The Mean Absolute Error (MAE) should be within an acceptable threshold for your screening purpose (e.g., < 3 kcal/mol for rough energy ranking).

Data Presentation: Validation Metrics for Pre-Screening Methods

Table 1: Example Performance Metrics of Different Methods on a Benchmark Set of Small Organic Molecules (Energy in kcal/mol, Distance in Å).

| Method | Type | Speed (ms/calc) | Energy MAE vs. CCSD(T) | Bond Length MAE | Max Energy Error | Suitable for Phase |

|---|---|---|---|---|---|---|

| ANI-2x | MLP | ~10 (GPU) | 1.2 | 0.012 | 5.1 | Gas-Phase Pre-Screen |

| GFN2-xTB | Semi-empirical QM | ~100 (CPU) | 4.5 | 0.025 | 12.3 | Large System Geometry |

| GAFF2 | Classical FF | ~1 (CPU) | N/A | 0.045 | N/A | Solvated MD Pre-Screen |

| DFT (ωB97X-D) | Ab-initio | ~3600 (CPU) | 0.8 (Ref) | 0.008 (Ref) | - | Reference/Validation |

*N/A: Classical FFs do not provide quantum electronic energies directly comparable to CCSD(T).
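The statistics in such a validation table can be computed with a few lines of code. A minimal sketch, assuming paired lists of pre-screening and reference values in the same units; the function names are illustrative and the example numbers are placeholders, not benchmark results.

```python
from math import sqrt


def validation_metrics(predicted, reference):
    """MAE, RMSE, and maximum absolute error for paired values."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [p - r for p, r in zip(predicted, reference)]
    abs_errors = [abs(error) for error in errors]
    n = len(errors)
    return {
        "mae": sum(abs_errors) / n,
        "rmse": sqrt(sum(error * error for error in errors) / n),
        "max_error": max(abs_errors),
    }


def passes_threshold(metrics, mae_limit=3.0):
    """True if the MAE is below the chosen screening threshold."""
    return metrics["mae"] < mae_limit


metrics = validation_metrics([1.0, 2.0, 6.0], [0.0, 2.0, 4.0])
print(metrics, passes_threshold(metrics))
```

Reporting the maximum error alongside the MAE matters for screening, because a single large outlier can eliminate a good candidate.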

Experimental Protocols

Protocol: Two-Tiered Pre-Screening for Catalyst Candidate Selection Objective: To identify the most promising ligand candidates for a transition-metal catalyzed reaction from a library of 10,000 compounds.

Materials: Ligand library (SMILES strings), MLP (e.g., MACE-MP-0), semi-empirical code (xTB), DFT software (ORCA, Gaussian), high-performance computing cluster.

Methodology:

- Tier 1 - Ultra-Fast Filtering (MLP):

- Generate 3D conformers for each ligand SMILES string using RDKit.

- Use the MLP (e.g., MACE-MP-0, trained on inorganic materials) to perform a single-point energy calculation on each conformer. Note: This step assumes the MLP's training data covers relevant metal-ligand motifs.

- Filter out the bottom 80% of ligands ranked by the relative stability (energy) of their most stable conformer. Pass the top 20% (~2000 ligands) to Tier 2.

- Tier 2 - Geometry Refinement & Property Calculation (Semi-empirical QM):

- For each surviving ligand, generate a simplified model complex with the metal center (e.g., Pd(II) square-planar).

- Perform a full geometry optimization and vibrational frequency calculation using the GFN2-xTB method with the --gfn 2 flag to confirm minima (no imaginary frequencies).

- Extract key properties: HOMO/LUMO energy, metal-ligand bond length, and ligand binding energy.

- Rank the ~2000 complexes by ligand binding energy. Select the top 5% (~100 complexes) for final high-fidelity DFT evaluation.

- Validation (High-Fidelity DFT):

- On the final 100 complexes, perform a rigorous DFT geometry optimization and energy calculation (e.g., using B3LYP-D3(BJ)/def2-SVP with SDD pseudopotential for the metal).

- Compare the ranking from Tier 2 (xTB) with the final DFT ranking. Calculate the Spearman correlation coefficient (ρ). A ρ > 0.7 indicates a robust pre-screening protocol.
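The rank comparison in the validation step can be sketched as follows. This is a minimal, standard-library implementation of the Spearman coefficient (Pearson correlation of average ranks, which also handles ties); the binding energies are hypothetical values chosen only to illustrate the calculation.

```python
def average_ranks(values):
    """Rank values from 1..n in ascending order, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def spearman_rho(x, y):
    """Spearman correlation computed as the Pearson correlation of the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)

# Hypothetical binding energies (kcal/mol) for five complexes.
xtb_energies = [-25.1, -22.4, -30.2, -18.7, -27.9]
dft_energies = [-21.0, -23.5, -28.3, -15.2, -24.8]
rho = spearman_rho(xtb_energies, dft_energies)
protocol_is_robust = rho > 0.7
```

Because only ranks enter the calculation, systematic offsets between xTB and DFT energies do not affect ρ.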

Mandatory Visualization

Diagram Title: Two-Tiered Computational Screening Workflow for Reduced Cost

Diagram Title: Troubleshooting Decision Tree for Common Pre-Screening Issues

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Tools for MLP/FF Pre-Screening Research.

| Item Name | Type/Provider | Primary Function in Pre-Screening |

|---|---|---|

| ANI-2x / ANI-2xt | MLP (Roitberg et al.) | Fast, general-purpose MLP for organic molecules and drug-like compounds. Good for initial energy ranking. |

| MACE / MACE-MP | MLP (Batatia et al.) | State-of-the-art MLP for materials and molecules with high accuracy across the periodic table. |

| GFN2-xTB | Semi-empirical QM Code (Grimme) | Rapid geometry optimization and property calculation for systems with thousands of atoms. |

| GAFF2 (General AMBER Force Field) | Classical FF | Standard FF for organic molecules. Used in AMBER and OpenMM for solvated MD pre-screening. |

| OpenMM | MD Simulation Toolkit | Flexible, GPU-accelerated engine for running MD with both classical FFs and imported MLPs. |

| RDKit | Cheminformatics Library | Handles molecule I/O (SMILES), conformation generation, and basic molecular manipulation. |

| ASE (Atomic Simulation Environment) | Python Library | Universal interface for setting up, running, and analyzing calculations from many codes (DFT, MLP, xTB). |

| Pymatgen | Python Library | Advanced structure analysis and generation, particularly robust for periodic materials systems. |

| LAMMPS | MD Simulator | High-performance MD code with growing support for on-the-fly MLP inference via plugins. |

Troubleshooting Guides & FAQs

FAQ: Core Concepts & Selection

Q1: What is the fundamental computational advantage of fragment-based methods over whole-molecule simulation? A: Fragment-based methods decompose a large molecular system into smaller, chemically meaningful fragments (e.g., functional groups, rings). The property of the whole molecule is then approximated by summing the contributions of these fragments and their interactions. Because each fragment has a bounded size, the total cost grows with the number of fragments rather than with a high power of the full system size: O(N³) or worse for ab initio methods on the whole molecule becomes nearly linear, O(N), where N is the size of the system (e.g., the number of atoms).

Q2: When should I use a molecular embedding method versus a classical fragment decomposition? A: Use classical fragment decomposition (like QSAR descriptors or group contribution methods) for high-throughput screening of known chemical spaces for properties like LogP or molar refractivity. Use modern neural network-based molecular embedding methods when dealing with complex, non-linear property prediction (e.g., biological activity) where the relationship between structure and function is not easily captured by additive fragment rules.

Q3: My fragment-based calculation yields large errors for conjugated systems or molecules with strong intramolecular interactions. What's the likely issue? A: This is a common pitfall. Your fragment definition likely ignores critical inter-fragment interactions (e.g., π-orbital overlap across fragment boundaries, strong hydrogen bonds, or steric strain). You must include interaction correction terms between connected fragments in your model. See the protocol for "Including Pairwise Interaction Corrections" below.

Troubleshooting: Technical Implementation

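Q4: During embedding generation, my graph neural network (GNN) fails to distinguish obvious stereoisomers. How can I fix this? A: Standard GNNs that operate on the 2D molecular graph (atoms as nodes, bonds as edges) receive identical inputs for stereoisomers and therefore produce identical embeddings. You must encode stereochemistry explicitly.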

- Solution 1: Add node features that specify the Cahn-Ingold-Prelog (CIP) descriptor (R/S) or use 3D coordinate information as an initial node feature.

- Solution 2: Use a message-passing framework that incorporates directional information, such as using bond angles or torsion angles in the edge update function.
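The effect of Solution 1 can be illustrated with a toy model. The sketch below is not a real GNN: it uses a simple neighbor-label refinement (in the spirit of Weisfeiler-Lehman hashing) as a stand-in for permutation-invariant message passing, and the function `graph_embedding` and the R/S tags are hypothetical. In practice the tags would come from a cheminformatics toolkit's CIP assignment.

```python
from collections import Counter

def graph_embedding(node_labels, edges, rounds=2):
    """Toy permutation-invariant embedding: each round, a node's label becomes
    (own label, sorted neighbor labels); the result is the multiset of labels."""
    neighbors = {i: [] for i in range(len(node_labels))}
    for a, b in edges:
        neighbors[a].append(b)
        neighbors[b].append(a)
    labels = list(node_labels)
    for _ in range(rounds):
        labels = [
            (labels[i], tuple(sorted(labels[j] for j in neighbors[i])))
            for i in range(len(labels))
        ]
    return Counter(labels)

# CHFClBr: a central carbon bonded to four different substituents.
elements = ["C", "H", "F", "Cl", "Br"]
edges = [(0, 1), (0, 2), (0, 3), (0, 4)]

def node_features(center_tag=""):
    """Node features as (element, chirality tag); only atom 0 is a stereocenter."""
    tags = [center_tag, "", "", "", ""]
    return list(zip(elements, tags))

# Without a chirality feature both enantiomers are the same graph.
plain_r = graph_embedding(node_features(), edges)
plain_s = graph_embedding(node_features(), edges)

# With an explicit R/S tag on the stereocenter the embeddings differ.
tagged_r = graph_embedding(node_features("R"), edges)
tagged_s = graph_embedding(node_features("S"), edges)
```

The same reasoning applies to learned message passing: if the inputs are identical, no amount of training can separate the two isomers.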

Q5: The property prediction from my fragment additive model shows systematic bias for certain molecular weights. What should I check? A: Perform the following diagnostic steps:

- Check Fragment Library Coverage: Ensure your fragment library was trained on a dataset containing similarly sized molecules. A library trained only on small drug fragments may fail for larger macrocycles.

- Validate the Additivity Assumption: Plot residual error vs. molecular weight. A trend indicates non-additive effects scale with size. You may need to introduce a global size-dependent correction term (e.g., a simple polynomial in heavy atom count).

- Audit for Missing Fragment Types: Identify fragments in your test molecules not present in your training set and flag them for manual evaluation.
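The additivity check in the second diagnostic step can be sketched as a straight-line fit of residuals against molecular size. This is a minimal illustration assuming a first-order (linear) correction in heavy atom count; the residual values are hypothetical.

```python
def linear_fit(x, y):
    """Least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx

# Hypothetical residuals (predicted minus experimental) that grow with size.
heavy_atoms = [10, 20, 30, 40]
residuals = [0.05, 0.15, 0.25, 0.35]

slope, intercept = linear_fit(heavy_atoms, residuals)

# A clearly non-zero slope signals size-dependent, non-additive error.
# Subtracting the fitted trend gives a simple global size correction.
corrected = [r - (slope * n + intercept) for n, r in zip(heavy_atoms, residuals)]
```

The correction should be fitted on training data only and then checked on held-out molecules, otherwise it can mask rather than fix the bias.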

Experimental Protocols

Protocol 1: Building a Simple Group Contribution Model for LogP

Objective: Predict the octanol-water partition coefficient (LogP) using additive atomic/fragment contributions. Method:

- Data Curation: Obtain a curated dataset of experimental LogP values (e.g., from PHYSPROP). Split into training (80%) and test (20%) sets.

- Fragment Definition: Decompose each molecule into predefined atom/fragment types (e.g., -CH3, >CH2, -OH, -NH2, aromatic C-H).

- Matrix Assembly: Create a matrix X where each row is a molecule, each column is a fragment type, and values are the count of that fragment. The vector y contains experimental LogP values.

- Regression: Solve the linear equation y = Xβ + ε for the contribution vector β using least-squares regression with L2 regularization (ridge regression) to prevent overfitting. Include a column of ones in X so that a constant intercept β₀ is fitted along with the fragment contributions.

- Prediction: For a new molecule, decompose it into fragments, sum the β value of each fragment type multiplied by its count, and add the intercept β₀.
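The regression and prediction steps of Protocol 1 can be sketched with NumPy. This is a minimal closed-form ridge fit under stated assumptions: the fragment counts and LogP values are synthetic (generated from known contributions so the fit can be checked), and the function names are hypothetical.

```python
import numpy as np

def fit_group_contributions(counts, y, l2=1e-6):
    """Ridge regression with an unpenalized intercept.
    Returns beta with beta[0] = intercept, beta[1:] = fragment contributions."""
    X = np.hstack([np.ones((counts.shape[0], 1)), counts])
    penalty = l2 * np.eye(X.shape[1])
    penalty[0, 0] = 0.0  # do not shrink the intercept
    return np.linalg.solve(X.T @ X + penalty, X.T @ y)

def predict_logp(beta, counts):
    """Sum of count * contribution per fragment type, plus the intercept."""
    return beta[0] + counts @ beta[1:]

# Columns: counts of -CH3, -OH, aromatic C-H (synthetic molecules).
counts = np.array([
    [1, 0, 0],
    [0, 1, 0],
    [2, 1, 0],
    [1, 0, 5],
    [0, 1, 5],
    [1, 1, 5],
    [2, 0, 0],
], dtype=float)

# Synthetic "experimental" values built from known contributions.
true_beta = np.array([0.2, 0.5, -1.4, 0.2])
y = true_beta[0] + counts @ true_beta[1:]

beta = fit_group_contributions(counts, y)
new_molecule = np.array([1.0, 1.0, 4.0])  # one -CH3, one -OH, four aromatic C-H
predicted = predict_logp(beta, new_molecule)
```

On real data the regularization strength `l2` would be chosen by cross-validation on the training set rather than fixed.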

Protocol 2: Including Pairwise Interaction Corrections

Objective: Improve a basic fragment model by accounting for interactions between adjacent fragments. Method:

- Run Base Model: Generate predictions using the simple additive model from Protocol 1.

- Identify Adjacent Fragments: For each molecule, list all pairs of fragments that are directly bonded (1,2-interactions) or separated by one intervening fragment (1,3-interactions).

- Create Interaction Matrix: Augment the training matrix X with new columns for each observed interaction pair type (e.g., -OH next to aromatic ring). Populate with counts.

- Refit Model: Perform a new regression on the augmented matrix. The resulting β coefficients for interaction terms will correct for non-additive effects.

- Validation: Compare the Mean Absolute Error (MAE) on the test set before and after adding interaction terms. A significant decrease confirms their importance.
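The matrix augmentation in Protocol 2 can be sketched as follows. This minimal example counts only directly bonded (1,2) fragment pairs; 1,3 pairs would require an additional path search. The fragment labels, the bond list format, and the function names are hypothetical.

```python
from collections import Counter

def interaction_counts(fragments, bonds):
    """Count unordered pairs of fragment types that are directly bonded.
    fragments: type label per fragment instance; bonds: (i, j) index pairs."""
    pairs = Counter()
    for i, j in bonds:
        pairs[tuple(sorted((fragments[i], fragments[j])))] += 1
    return pairs

def augmented_row(fragments, bonds, fragment_types, pair_types):
    """One row of the augmented matrix: fragment counts, then pair counts."""
    frag_counts = Counter(fragments)
    pair_counts = interaction_counts(fragments, bonds)
    return ([frag_counts[t] for t in fragment_types]
            + [pair_counts[p] for p in pair_types])

# Column definitions shared by all molecules in the dataset.
fragment_types = ["-CH3", "-OH", "aro_ring"]
pair_types = [("-OH", "aro_ring")]

# A phenol-like molecule: an aromatic ring bonded to a hydroxyl group.
row = augmented_row(["aro_ring", "-OH"], [(0, 1)], fragment_types, pair_types)
```

Stacking such rows for every training molecule gives the augmented matrix that is refitted in the next step.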

Data Presentation

Table 1: Comparison of Computational Cost for Property Prediction Methods

| Method | Typical Scaling | Time for 1k Molecules* | Accuracy (MAE, log units) for LogP | Best Use Case |

|---|---|---|---|---|

| DFT (Full Molecule) | O(N³) | ~100-500 hours | ~0.10-0.20 | High-accuracy single-molecule |

| Classical Force Field | O(N²) | ~1-5 hours | ~0.50-1.00 | Conformational sampling |

| Group Contribution (This Guide) | O(N) | < 1 second | ~0.40-0.60 | High-throughput screening |

| GNN Embedding (Inference) | O(N) | ~10-30 seconds | ~0.20-0.35 | Balanced accuracy & throughput |

*Estimated time on a standard research compute node. Accuracy is method-dependent and shown for illustrative comparison.

Table 2: Example Fragment Contributions (β) for LogP Prediction (Hypothetical Data)

| Fragment Type | Contribution (β) | Interpretation |

|---|---|---|

| cH (aromatic C-H) | +0.23 | Increases hydrophobicity |

| -CH3 | +0.55 | Significant hydrophobic contribution |

| -OH | -1.43 | Strong hydrophilic contribution |

| -NH2 | -1.15 | Hydrophilic contribution |

| -COOH | -0.85 | Hydrophilic (ionizable) |

| Interaction: -OH/aro | -0.35 | H-bonding to π-system reduces hydrophobicity |

Diagrams

Title: Fragment-Based Method Computational Workflow

Title: Molecular Embedding via Graph Neural Network

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Libraries

| Item / Software | Function / Purpose | Key Feature for Cost Reduction |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit. | Performs fast molecular fragmentation, descriptor calculation, and fingerprint generation, replacing costly quantum calculations for initial screening. |

| PyTorch Geometric / DGL | Libraries for Graph Neural Networks (GNNs). | Enable efficient batch processing of molecular graphs on GPU, dramatically speeding up embedding generation vs. sequential methods. |

| Psi4 | Open-source quantum chemistry package. | Can be used to calculate accurate electronic properties for a curated set of fragments to build a fragment library, avoiding full-molecule DFT. |

| ALFABET (Model) | Pre-trained graph neural network for predicting bond dissociation enthalpies of organic molecules. | Provides near-instant predictions from learned molecular representations, eliminating runtime quantum chemical calculations for this property. |

| Fragment Library Database | A curated database of pre-computed fragment properties (e.g., energies, partial charges). | The core reagent of fragment-based design. Look-up is O(1), replacing O(N³) calculations for every new molecule. |

| High-Throughput Computing Cluster | Orchestrates parallel calculation of thousands of fragments or molecules. | Enables the "embarrassingly parallel" nature of fragment and embedding methods to be fully exploited. |

Cloud HPC, GPU Acceleration, and Quantum Computing Readiness

Technical Support & Troubleshooting Center

FAQs & Troubleshooting Guides

Q1: My molecular dynamics simulation on a cloud HPC cluster is failing with an "out of memory" error during the energy minimization phase, even with a large instance. What could be the cause? A: This is often due to improper domain decomposition in parallel simulations. The problem size per core exceeds available memory.

- Troubleshooting Steps:

- Check your simulation input file and launch command (e.g., for GROMACS, AMBER). Ensure the domain decomposition options are set appropriately. Start by letting the software determine the decomposition automatically (in GROMACS mdrun, this is the default when -dd is not specified).

- Manually adjust the number of grid cells (-dd x y z) so that the system size divided by the number of cells is manageable per MPI rank.

- Monitor memory usage per node using cloud monitoring tools (e.g., AWS CloudWatch, GCP Monitoring) to identify imbalance.

- Consider using a cloud instance type with more memory per core (e.g., memory-optimized instances).

Q2: After migrating my density functional theory (DFT) calculations to a GPU-accelerated cloud instance, I see no performance improvement. How do I diagnose this? A: The software must be specifically compiled for GPU offload and configured correctly at runtime.

- Diagnostic Protocol:

- Verify GPU Detection: Run nvidia-smi on the instance to confirm GPU presence and activity.

- Check Software Build: Ensure your quantum chemistry package (VASP, Quantum ESPRESSO, etc.) is the GPU-enabled variant. Check the build information or the header of the output file for GPU-related entries.

- Inspect Input/Config: Some codes also require explicit input keywords or runtime settings to enable GPU usage. The exact option is package- and version-specific, so consult your software's GPU documentation.

- Profile: Use nsys (NVIDIA Nsight Systems) or, on older CUDA toolkits, nvprof to see if kernels are executing on the GPU. Idle GPU time indicates a CPU-bound step or misconfiguration.
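The GPU detection step can be automated at the start of a job. The sketch below is a minimal wrapper around the nvidia-smi query interface; the helper names are hypothetical, and the function returns an empty list when nvidia-smi is not installed or fails.

```python
import shutil
import subprocess

def parse_gpu_csv(text):
    """Parse 'name, utilization' lines as printed by nvidia-smi in CSV mode."""
    gpus = []
    for line in text.strip().splitlines():
        if "," not in line:
            continue
        name, util = [field.strip() for field in line.rsplit(",", 1)]
        try:
            utilization = float(util)
        except ValueError:
            utilization = None  # e.g. '[N/A]' on some configurations
        gpus.append({"name": name, "utilization_pct": utilization})
    return gpus

def query_gpus():
    """Return one dict per visible GPU, or an empty list if none can be queried."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,utilization.gpu",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=False,
    )
    return parse_gpu_csv(result.stdout) if result.returncode == 0 else []

gpus = query_gpus()
```

Polling this during a run gives a quick first indication of whether the GPU is doing any work before moving on to a full profile; a utilization that stays at 0% points to a CPU-only build or a missing runtime setting.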

Q3: When submitting hybrid MPI/OpenMP jobs to a cloud HPC scheduler (Slurm, AWS ParallelCluster), some nodes remain idle. What's wrong with my job script? A: This is typically a mismatch between the resources requested and the tasks launched.

- Solution Guide:

- For Slurm-based systems, ensure --nodes, --ntasks-per-node, and --cpus-per-task multiply to your total desired CPU count.

- Example: for 4 nodes with 32 cores each (128 cores total), request 4 MPI tasks per node with 8 OpenMP threads per task (--nodes=4, --ntasks-per-node=4, --cpus-per-task=8), since 4 × 4 × 8 = 128.

- Check Scheduler Logs: Use squeue or sacct to examine job state. Review the instance type in your cloud cluster configuration to confirm core count matches your script assumptions.
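The resource arithmetic for a hybrid MPI/OpenMP job can be checked before submission. The sketch below is a hypothetical helper that validates the request against the per-node core count and renders the matching #SBATCH directives; the launch line for your application is intentionally left out.

```python
def sbatch_header(nodes, ntasks_per_node, cpus_per_task, cores_per_node):
    """Return (script header, total cores used, idle cores per node)."""
    used_per_node = ntasks_per_node * cpus_per_task
    if used_per_node > cores_per_node:
        raise ValueError(
            f"requested {used_per_node} cores per node, "
            f"but only {cores_per_node} are available"
        )
    lines = [
        "#!/bin/bash",
        f"#SBATCH --nodes={nodes}",
        f"#SBATCH --ntasks-per-node={ntasks_per_node}",
        f"#SBATCH --cpus-per-task={cpus_per_task}",
        # One OpenMP thread per core allocated to each MPI task.
        "export OMP_NUM_THREADS=$SLURM_CPUS_PER_TASK",
    ]
    return "\n".join(lines), nodes * used_per_node, cores_per_node - used_per_node

# 4 nodes, 32 cores each, 4 MPI tasks per node, 8 OpenMP threads per task.
header, total_cores, idle_per_node = sbatch_header(4, 4, 8, 32)
```

A non-zero idle count per node is the usual cause of under-used nodes: the job runs, but part of every node is never assigned work.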

Q4: What are the first steps to prepare my molecular evaluation algorithm for potential future quantum computing hardware? A: Focus on algorithm design and quantum resource estimation using classical simulators.

- Readiness Protocol:

- Identify Subroutine: Isolate a computationally expensive subroutine (e.g., ground state energy calculation of a small active site).

- Algorithm Mapping: Reformulate the problem into a form amenable to quantum algorithms (e.g., Quantum Phase Estimation, Variational Quantum Eigensolver).

- Classical Simulation: Use development kits (IBM Qiskit, Google Cirq, Amazon Braket) to simulate the quantum circuit classically on small, toy problem sizes.

- Resource Estimation: The simulator will report the estimated number of logical qubits, gate depth, and coherence time required. This highlights the hardware scale needed for a real advantage.

Comparative Performance & Cost Data

Table 1: Benchmark: Free Energy Perturbation (FEP) Simulation for Protein-Ligand Binding (500 ns total)

| Compute Configuration | Instance Type (Sample) | Total Wall-clock Time | Estimated Cloud Cost (USD)* | Key Advantage |

|---|---|---|---|---|

| CPU-only Cluster (Baseline) | c5n.18xlarge (72 vCPU) | 48 hours | ~$350 | Broad software compatibility |

| GPU-Accelerated Single Node | p3.2xlarge (1x V100) | 6 hours | ~$45 | Highest cost-performance for scalable MD |

| GPU-Accelerated Multi-Node (Strong Scaling) | 4x p3.2xlarge | 1.8 hours | ~$55 | Fastest time-to-solution for urgent results |

| Spot/Preemptible Instances (GPU) | p3.2xlarge (Spot) | 6 hours | ~$12 | Lowest absolute cost for fault-tolerant jobs |

*Cost estimates are illustrative based on list pricing in US-East-1 region as of 2023-2024. Actual costs vary by provider, region, and discounts.

Table 2: Quantum Algorithm Resource Estimation for Molecular Orbital Calculation (H₂O)

| Algorithm | Target Molecule | Logical Qubits Required | Estimated Gate Depth | Classical Simulator Runtime (on c6g.16xlarge) |

|---|---|---|---|---|

| Variational Quantum Eigensolver (VQE) | H₂O (min. basis) | 10 | ~1,000 | 15 seconds |

| Quantum Phase Estimation (QPE) | H₂O (min. basis) | 10 | ~1,000,000 | 8 hours (approximation) |

| Classical DFT (Reference) | H₂O (min. basis) | N/A | N/A | < 1 second |

Note: This table highlights the significant overhead of simulating quantum algorithms classically and the nascent stage of quantum advantage for chemistry.

Experimental Protocols

Protocol 1: Benchmarking GPU-Accelerated Molecular Dynamics for Cost Reduction

Objective: To determine the optimal cloud GPU instance type for throughput of protein-ligand simulations.

- System Preparation: Prepare a standardized simulation system (e.g., a solvated protein-ligand complex from the PDB).

- Software Setup: Install GPU-enabled MD software (e.g., GROMACS, AMBER) on selected cloud instances (e.g., AWS p3, p4, g5; Azure NCv3/NCv4; Google Cloud a2).

- Benchmark Run: Execute a fixed-length MD simulation (e.g., 10 ns NPT production) using identical input parameters.

- Metrics Collection: Record (a) nanoseconds-per-day performance, (b) total job runtime, (c) instance cost per hour.

- Analysis: Calculate cost-per-nanosecond. Plot performance vs. instance hourly rate to identify the "sweet spot" for your research budget.
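The analysis step reduces to a one-line formula: cost per nanosecond equals the hourly rate times 24, divided by the measured ns/day. A minimal sketch follows; the instance names, throughputs, and prices are hypothetical placeholders for your own benchmark results.

```python
def cost_per_ns(ns_per_day, hourly_rate_usd):
    """USD spent per simulated nanosecond at a given throughput and price."""
    return hourly_rate_usd * 24.0 / ns_per_day

# Hypothetical benchmark results: (ns/day, USD per hour).
benchmarks = {
    "instance_a": (100.0, 3.0),
    "instance_b": (250.0, 10.0),
    "instance_c": (60.0, 1.2),
}

costs = {name: cost_per_ns(*values) for name, values in benchmarks.items()}
cheapest = min(costs, key=costs.get)
```

The fastest instance is not necessarily the cheapest per nanosecond, which is why both numbers are worth recording.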

Protocol 2: Hybrid Quantum-Classical Workflow Simulation for Energy Evaluation

Objective: To prototype and assess a variational quantum algorithm for calculating the bond dissociation energy of a diatomic molecule.

- Problem Definition: Select a molecule (e.g., H₂, LiH). Obtain reference geometry.

- Classical Pre/Post-Processing: Use a classical library (e.g., PySCF) to generate the molecular Hamiltonian in a qubit basis (e.g., via Jordan-Wigner transformation).

- Quantum Circuit Construction: Design a parameterized quantum circuit (ansatz) using Qiskit/Cirq.

- Classical Optimization Loop:

- Run the quantum circuit on a noisy circuit simulator.

- Measure the expectation value of the Hamiltonian (energy).

- Use a classical optimizer (e.g., COBYLA, SPSA) to update circuit parameters to minimize energy.

- Validation: Compare the final, optimized energy with a classical full configuration interaction (FCI) reference, which can be computed with the same classical library used in Step 2 (e.g., PySCF), to assess algorithm accuracy.
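The structure of the optimization loop can be illustrated without any quantum SDK. The sketch below is a deliberately small stand-in, not the protocol itself: it uses a hypothetical one-qubit Hamiltonian H = A·Z + B·X instead of a molecular Hamiltonian, an exact (noise-free) state vector instead of a noisy simulator, and gradient descent with the parameter-shift rule instead of COBYLA or SPSA. The exact ground-state energy, -sqrt(A² + B²), plays the role of the FCI reference.

```python
import math

A, B = 0.5, 0.3  # hypothetical Hamiltonian coefficients, H = A*Z + B*X

def energy(theta):
    """Expectation value of H in the ansatz state Ry(theta)|0>."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)  # amplitudes of |0>, |1>
    exp_z = c * c - s * s
    exp_x = 2 * c * s
    return A * exp_z + B * exp_x

def parameter_shift_gradient(theta):
    """Exact gradient from two shifted energy evaluations."""
    return 0.5 * (energy(theta + math.pi / 2) - energy(theta - math.pi / 2))

def run_vqe(theta=0.1, learning_rate=0.4, steps=200):
    """Classical loop: evaluate the energy, update the circuit parameter, repeat."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, energy(theta)

theta_opt, e_vqe = run_vqe()
e_exact = -math.hypot(A, B)  # lowest eigenvalue of A*Z + B*X
error = abs(e_vqe - e_exact)
```

With a real molecular Hamiltonian the energy function is replaced by circuit executions, but the evaluate, update, and compare-to-reference structure stays the same.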


The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Cloud-Enabled Computational Chemistry Research

| Item/Category | Example Specific Solutions | Function in Research |

|---|---|---|

| Cloud HPC Orchestration | AWS ParallelCluster, Azure CycleCloud, Google Cloud HPC Toolkit | Automates deployment and management of scalable, custom HPC clusters in the cloud. |

| Job Scheduler | Slurm, AWS Batch, Azure Batch | Manages distribution and queuing of computational workloads across the cluster. |

| GPU-Accelerated MD Software | GROMACS (CUDA), AMBER (pmemd.cuda), NAMD (CUDA) | Drastically speeds up molecular dynamics and free energy calculations. |

| Quantum Chemistry Packages | Quantum ESPRESSO (GPU), VASP (GPU), PySCF, Q-Chem | Performs ab initio electronic structure calculations; some offer GPU acceleration. |

| Quantum Computing SDKs | IBM Qiskit, Google Cirq, Amazon Braket SDK | Provides tools to design, simulate, and test quantum algorithms for chemistry. |

| Containerization | Docker, Singularity/Apptainer | Ensures software portability and reproducibility across different cloud environments. |

| Data & Workflow Management | Nextflow, Snakemake, AWS Step Functions | Automates multi-step computational pipelines, handling software and data dependencies. |

| Cost Monitoring & Optimization | Cloud Provider Cost Explorer, NetApp Cloud Insights | Tracks spending, identifies cost drivers, and recommends use of spot/ preemptible instances. |

Technical Support Center

Troubleshooting Guide & FAQs

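Q1: During fine-tuning of a pre-trained molecular property model, my validation loss is decreasing but my test set performance is poor. What could be the cause? A: When validation loss improves but test performance is poor, the validation set is usually not independent of the training data (for example, close analogs leak across a random split), or model selection has overfit to a small fine-tuning dataset. Recommended actions: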

- Check Dataset Splits: For small datasets (< 1000 samples), use stratified splitting or scaffold splitting to ensure chemical space is represented in both training and test sets. Random splitting can lead to data leakage.

- Increase Regularization: Apply dropout with a rate of 0.2-0.5, weight decay (L2 regularization), or early stopping with a patience of 10-20 epochs.

- Reduce Model Complexity: Freeze more layers of the pre-trained model. Start by only unfreezing and fine-tuning the final 1-2 classification/regression layers.

Q2: When loading a pre-trained model (e.g., from Hugging Face Transformers), I get a shape mismatch error for the output layer. How do I resolve this? A: This is expected. Pre-trained models have an output layer sized for their original training task. You must replace it for your new task (e.g., predicting a different molecular property).

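- Protocol: Identify the final layer (often named output, head, or predictor) and replace it with a newly initialized layer whose output dimension matches your task, keeping the pre-trained weights of all other layers.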
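A minimal PyTorch sketch of the head replacement is shown here. The class `PretrainedRegressor` is a hypothetical stand-in for a real pre-trained model, and the attribute names `encoder` and `head` are assumptions; inspect your own model to find the actual name of its final layer.

```python
import torch
from torch import nn

class PretrainedRegressor(nn.Module):
    """Hypothetical stand-in for a pre-trained model with a 12-output head."""
    def __init__(self, in_dim=16, hidden=32, n_out=12):
        super().__init__()
        self.encoder = nn.Sequential(nn.Linear(in_dim, hidden), nn.ReLU())
        self.head = nn.Linear(hidden, n_out)

    def forward(self, x):
        return self.head(self.encoder(x))

model = PretrainedRegressor()
# In practice the pre-trained weights would be loaded here.

# Freeze the pre-trained encoder so only the new head is trained at first.
for param in model.encoder.parameters():
    param.requires_grad = False

# Replace the head with a freshly initialized layer sized for the new task
# (a single regression target), reusing the old layer's input width.
model.head = nn.Linear(model.head.in_features, 1)

output = model(torch.randn(4, 16))  # batch of 4 hypothetical feature vectors
```

When loading a checkpoint into a model whose head has already been resized, the mismatched head weights must be dropped from the state dict first; replacing the head after loading, as above, avoids that step.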

Q3: My fine-tuning process is unstable, with large fluctuations in loss. How can I stabilize training? A: This often stems from an inappropriate learning rate.

- Protocol: Implement a learning rate schedule. Use a low learning rate (e.g., 1e-5 to 1e-4) for the pre-trained layers and a higher one (e.g., 1e-4 to 1e-3) for the newly added head. Use cosine annealing or linear warmup.

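A learning rate schedule with linear warmup followed by cosine annealing can be sketched in plain Python. The function `lr_at` and the group names are hypothetical; the base rates are taken from the ranges suggested above. In PyTorch, the same multipliers could be applied through optimizer parameter groups and a lambda-based scheduler.

```python
import math

def lr_at(step, base_lr, warmup_steps, total_steps):
    """Linear warmup to base_lr, then cosine decay to zero at total_steps."""
    if step < warmup_steps:
        return base_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return 0.5 * base_lr * (1.0 + math.cos(math.pi * progress))

# Lower rate for pre-trained layers, higher rate for the new head.
base_rates = {"backbone": 1e-5, "head": 1e-3}
warmup_steps, total_steps = 10, 100

schedule = {
    name: [lr_at(s, base, warmup_steps, total_steps) for s in range(total_steps + 1)]
    for name, base in base_rates.items()
}
```

The warmup phase keeps the first updates small, so the randomly initialized head cannot push large gradients into the pre-trained weights.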

Q4: How do I choose which layers of a pre-trained model to freeze versus fine-tune? A: This depends on dataset size and similarity to the pre-training data. A standard experimental protocol is:

- Freeze all layers, train only the new head for 5 epochs. This establishes a baseline.

- Unfreeze the last n transformer blocks (e.g., last 2 blocks). Train for 10-20 epochs.

- Unfreeze the entire model with a very low learning rate (1e-5) for final "full-model" fine-tuning if data allows.

- Compare validation performance at each stage. See table below for a guideline.

Comparative Performance of Fine-Tuning Strategies

| Strategy | Layers Unfrozen | Dataset Size Required | Typical Use Case | Expected Relative Computational Cost (vs. Training from Scratch) |

|---|---|---|---|---|

| Feature Extraction | 0 (Only new head) | Very Small (50-200) | Target vastly different from pre-training task. | ~5-10% |

| Partial Fine-Tuning | Last 1-4 Blocks | Small to Medium (200-2000) | Target related but not identical to pre-training. | ~15-40% |

| Full Fine-Tuning | All Layers | Medium to Large (2000+) | Target very similar to pre-training task. | ~60-90% |

Q5: I have a very small proprietary dataset (<100 molecules). Can transfer learning still help? A: Yes, but a rigorous protocol is essential to avoid overfitting and obtain reliable estimates.

- Protocol: Nested Cross-Validation with Fixed Hyperparameters:

- Outer Loop (Performance Estimation): Perform 5-fold scaffold split on your full dataset.

- Inner Loop (Model Selection): For each training set of the outer fold, run a separate 3-4 fold split to decide the best fine-tuning strategy (e.g., freeze all vs. last 2 layers). Use a fixed, low learning rate and early stopping.

- Train & Evaluate: Train the model with the selected strategy on the outer training fold and evaluate on the held-out outer test fold.

- Report: The mean performance across all 5 outer test folds is your final estimate. This method provides a more realistic performance assessment on tiny datasets.
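The nested protocol can be sketched with the standard library alone. In this minimal illustration the scaffold labels are given as plain strings (in practice they would be Bemis-Murcko scaffolds from a cheminformatics toolkit), and `evaluate` is a hypothetical callback that trains with a given strategy and returns a validation error (lower is better).

```python
from collections import defaultdict

def scaffold_folds(scaffolds, n_folds):
    """Assign whole scaffold groups to folds, largest groups first."""
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    folds = [[] for _ in range(n_folds)]
    for members in sorted(groups.values(), key=len, reverse=True):
        min(folds, key=len).extend(members)
    return folds

def nested_cv(scaffolds, evaluate, strategies, outer_k=5, inner_k=3):
    """Return (mean outer error, per-fold errors)."""
    outer_scores = []
    for test_idx in scaffold_folds(scaffolds, outer_k):
        held_out = set(test_idx)
        train_idx = [i for i in range(len(scaffolds)) if i not in held_out]
        inner = scaffold_folds([scaffolds[i] for i in train_idx], inner_k)

        def inner_error(strategy):
            errors = []
            for val_positions in inner:
                if not val_positions:
                    continue
                val_idx = [train_idx[p] for p in val_positions]
                val_set = set(val_idx)
                fit_idx = [i for i in train_idx if i not in val_set]
                errors.append(evaluate(strategy, fit_idx, val_idx))
            return sum(errors) / len(errors)

        # Model selection uses only the outer training fold.
        best = min(strategies, key=inner_error)
        outer_scores.append(evaluate(best, train_idx, test_idx))
    return sum(outer_scores) / len(outer_scores), outer_scores

# Hypothetical data: 40 molecules spread over 10 scaffolds.
scaffolds = [f"scaffold_{i % 10}" for i in range(40)]

def evaluate(strategy, fit_idx, val_idx):
    """Placeholder for 'fine-tune on fit_idx, return error on val_idx'."""
    return {"freeze_all": 0.5, "unfreeze_last_2": 0.4}[strategy]

mean_error, fold_errors = nested_cv(
    scaffolds, evaluate, ["freeze_all", "unfreeze_last_2"]
)
```

Keeping whole scaffold groups together is what prevents close analogs from appearing on both sides of a split.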

Key Experimental Protocol: Benchmarking Fine-Tuning Efficiency

Objective: Quantify the computational savings and performance gain of transfer learning vs. training from scratch for a novel molecular property.

- Models: Select a pre-trained model (e.g., ChemBERTa, MGNN) and an equivalent randomly initialized architecture.

- Data: Prepare your small target dataset (D_target, e.g., 500 samples) and identify the large source dataset used for pre-training (D_source, e.g., 1M samples like ZINC15).

- Baseline (From Scratch): Train the random model on D_target. Record total GPU hours (T_scratch) and best validation metric (P_scratch).

- Transfer Learning:

- Load the pre-trained model.

- Replace the output head.

- Fine-tune on D_target using a low learning rate (e.g., 1e-5) and early stopping.

- Record fine-tuning GPU hours (T_ft) and best validation metric (P_ft).

- Calculation:

- Performance Delta: ΔP = P_ft - P_scratch

- Computational Cost Saving: Cost Saved = 1 - (T_ft + T_pretrain_load) / T_scratch.

- Note: T_pretrain_load is the one-time cost of pre-training, amortized across many users. For a single lab, assume T_pretrain_load = 0, emphasizing the benefit of using publicly available models.
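The two quantities in the calculation step can be worked through with a short example. The GPU hours and validation metrics below are hypothetical numbers chosen only to show the arithmetic.

```python
def cost_saved(t_ft, t_scratch, t_pretrain_load=0.0):
    """Fraction of GPU time saved by fine-tuning relative to training from scratch."""
    if t_scratch <= 0:
        raise ValueError("t_scratch must be positive")
    return 1.0 - (t_ft + t_pretrain_load) / t_scratch

# Hypothetical measurements.
t_scratch, t_ft = 10.0, 2.0   # GPU hours
p_scratch, p_ft = 0.62, 0.71  # best validation R^2

saving = cost_saved(t_ft, t_scratch)  # pre-training cost taken as 0
delta_p = p_ft - p_scratch
```

Here fine-tuning uses one fifth of the GPU time, so the saving is 80%, and the validation metric improves by 0.09.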

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Transfer Learning for Molecular Property Prediction |

|---|---|

| Pre-trained Model Repositories (Hugging Face, Chemformer) | Source of large, foundational models pre-trained on massive chemical corpora (e.g., PubChem, ZINC), providing initialized feature representations. |

| Scaffold Splitting Scripts (e.g., from DeepChem) | Ensures chemically distinct molecules are separated into training/test sets, providing a rigorous evaluation for small datasets and preventing optimistic bias. |

| Learning Rate Finder/Linear Warmup Scheduler | Critical for stabilizing fine-tuning. Gradually increases the learning rate at the start of training to prevent early divergence from the pre-trained weights. |

| Molecular Featurizer Alignment Tool | Ensures the input representation (e.g., SMILES, graph) for your data exactly matches the format the pre-trained model was trained on. |

| Low-Rank Adaptation (LoRA) Libraries | Advanced technique that injects trainable rank-decomposition matrices into transformer layers, drastically reducing the number of parameters to fine-tune and memory footprint. |

Visualizations

Title: Transfer Learning and Fine-Tuning Workflow for Molecular Models

Title: Rigorous Evaluation Protocol for Small Dataset Fine-Tuning

Technical Support Center: Troubleshooting & FAQs

This support center addresses common issues encountered when implementing cost-reduction strategies for molecular property predictions. All content is framed within the broader thesis of reducing computational cost in molecular property evaluation research.

Frequently Asked Questions (FAQs)

Q1: My ligand-based solubility prediction model shows high accuracy on the training set but poor generalization to new chemical series. What could be the cause and how can I fix it?

A: This is often a case of overfitting due to a small or non-diverse training dataset, compounded by high-dimensional feature vectors. To resolve:

- Apply Feature Selection: Use mutual information or SHAP analysis to identify and retain only the top 25-50 most predictive molecular descriptors (e.g., logP, topological polar surface area, number of rotatable bonds). This reduces noise and computational load.

- Implement Data Augmentation: Use open-source tools like RDKit to generate valid, subtle variations (tautomers, low-energy conformers, stereoisomers) of your existing training molecules to improve dataset diversity without new experimental costs.

- Switch to a Simpler Model: If using a deep neural network, try a more interpretable and less parameter-heavy model like Gradient Boosting (e.g., XGBoost) for initial testing. It often generalizes better on smaller datasets and trains faster.

Q2: When performing ensemble docking to improve binding affinity prediction, the run time has become prohibitively long. How can I reduce this cost?

A: Ensemble docking (docking against multiple protein conformations) is costly. Optimize with these steps:

- Pre-Filter Conformations: Cluster your protein conformational ensemble (from MD simulations or crystal structures) by binding site RMSD. Select only 1-3 representative conformations from the largest clusters instead of docking against all 20+.

- Utilize Hybrid Workflows: Perform high-throughput, lower-accuracy docking (e.g., using Vina or QuickVina 2) across the filtered ensemble to identify top 1000 hits. Then, subject only these top hits to more accurate, expensive methods (e.g., MM/GBSA, FEP+) on a single, most-likely protein conformation.

- Leverage GPU Acceleration: Ensure your docking software (e.g., AutoDock-GPU, PLANTS) is configured to use available GPU resources, which can speed up calculations by an order of magnitude.

Q3: The predicted ADMET properties (e.g., CYP inhibition) from my QSAR model conflict with later, more expensive experimental results. How should I audit my pipeline?

A: A systematic audit of the prediction pipeline is required.

- Check Applicability Domain: Use distance-based methods (e.g., Euclidean distance in descriptor space) or leverage-based methods (e.g., the diagonal of the hat matrix) to verify whether the new experimental compounds fall within the chemical space your model was trained on. Predictions for compounds outside this domain are unreliable.

- Review Data Quality: Re-examine the public data (e.g., from ChEMBL) used to train the model. Check for conflicting annotations, inappropriate aggregation of data from different assay types, and correct unit normalization. Clean the label noise.

- Validate with a Hold-Out Test Set: Ensure your original model validation used a stringent temporal or structural cluster hold-out test set, not a simple random split, to better simulate real-world performance.
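A distance-based applicability domain check can be sketched with the standard library. This minimal example assumes descriptors have already been scaled to comparable ranges, and uses one common heuristic: a query is in-domain if its mean distance to its k nearest training compounds does not exceed the mean plus z standard deviations of the same quantity within the training set. The values of k and z, and the 2D descriptor vectors, are hypothetical.

```python
import math
from statistics import mean, pstdev

def knn_mean_distance(point, others, k):
    """Mean Euclidean distance from point to its k nearest neighbors in others."""
    return mean(sorted(math.dist(point, o) for o in others)[:k])

def domain_threshold(train, k=2, z=2.0):
    """Leave-one-out kNN distances within the training set define the cutoff."""
    loo = [
        knn_mean_distance(p, train[:i] + train[i + 1:], k)
        for i, p in enumerate(train)
    ]
    return mean(loo) + z * pstdev(loo)

def in_domain(query, train, threshold, k=2):
    return knn_mean_distance(query, train, k) <= threshold

# Hypothetical scaled descriptor vectors for the training compounds.
train = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0), (0.5, 0.5)]
threshold = domain_threshold(train)

similar_compound = in_domain((0.4, 0.6), train, threshold)
novel_compound = in_domain((5.0, 5.0), train, threshold)
```

Predictions flagged as out-of-domain are the first place to look when a model disagrees with later experiments.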

Q4: I need to run large-scale virtual screening but my compute budget is limited. What is the most effective tiered approach to reduce cost?

A: Implement a sequential filtering funnel to minimize the use of expensive methods.

- Apply Rapid Physicochemical Filters: Use rule-based filters (e.g., PAINS, REOS) and simple property calculations (MW, logP) to remove clearly undesirable compounds first. This is nearly free computationally.

- Utilize Ultra-Fast Methods: Employ very fast machine learning models (like random forests or shallow nets) trained on public data for coarse-grained solubility and toxicity scoring.

- Reserve High-Fidelity Methods: Use docking, MD, and FEP only for the final few hundred prioritized compounds.

Table 1: Comparative Cost & Performance of Prediction Methods

| Method Category | Example Technique | Approx. Computational Cost (CPU/GPU hrs per 1k compounds) | Typical Performance Metric (Task) | Best Use Case |

|---|---|---|---|---|

| Rule-Based | Lipinski's Ro5, PAINS filters | < 0.1 | Qualitative Pass/Fail (Early ADMET) | Initial library triage |

| Classical QSAR/QSPR | Random Forest, XGBoost on 2D descriptors | 1-5 | R² ~ 0.6-0.8 (Solubility, LogD) | Medium-throughput prioritization |

| 2D Deep Learning | Graph Neural Networks (GNNs) | 5-20 (requires GPU) | R² ~ 0.7-0.85 (ADMET endpoints) | High-accuracy prediction where data is abundant |

| Molecular Dynamics | Explicit Solvent MD (100 ns) | 200-1000 (per compound) | RMSD, Binding Free Energy (ΔG) | Detailed mechanism & binding pose validation |

| Free Energy | Alchemical FEP/MM-PBSA | 500-2000 (per compound pair) | ΔG error ~ 0.5-1.0 kcal/mol (Affinity) | Lead optimization for critical compounds |

Table 2: Public Dataset Utility for Cost Reduction

| Dataset Name | Primary Property | Number of Data Points | Key Benefit for Cost Reduction | Access Link |

|---|---|---|---|---|

| ChEMBL | Bioactivity, ADMET | >20 million | Eliminates cost of primary assay data collection | https://www.ebi.ac.uk/chembl/ |

| ESOL | Aqueous Solubility | ~1,000 | High-quality curated data for model benchmarking | 10.1021/ci034243x (DOI) |

| PDBbind | Protein-Ligand Binding Affinity | ~23,000 complexes | Provides structures & measured Kd/Ki for affinity models | http://www.pdbbind.org.cn/ |

| Tox21 | Toxicology | ~12,000 compounds | Multi-target toxicity data for parallel QSAR training | https://tripod.nih.gov/tox21/ |

Detailed Experimental Protocols

Protocol 1: Building a Cost-Effective Solubility Prediction Model Using Public Data

Objective: Train a machine learning model to predict logS (molar solubility) using only open-source tools and data.

- Data Curation: Download the ESOL dataset or extract solubility data from ChEMBL using specific assay filters. Clean the data: remove duplicates, standardize units to logS (mol/L), and handle salts by generating the parent neutral compound.

- Descriptor Calculation: Use RDKit (rdkit.Chem.Descriptors) to compute a set of 200+ 2D molecular descriptors (e.g., MolWt, MolLogP, TPSA, NumRotatableBonds).

- Feature Selection & Splitting: Remove low-variance descriptors. Split data using a scaffold split (based on Bemis-Murcko frameworks) to evaluate generalization, not a random split.

- Model Training & Validation: Train an XGBoost regressor. Optimize hyperparameters (max_depth, n_estimators) via Bayesian optimization on the validation set. Evaluate final model on the held-out test set using R² and RMSE.

Protocol 2: Tiered Virtual Screening for Hit Identification

Objective: Identify potential binders for a target from a 1-million compound library with a limited compute budget.

- Stage 1 - Physicochemical Filtering (Cost: ~$0): Apply hard filters (e.g., 150 < MW < 500, -2 < LogP < 5, TPSA < 150) and remove pan-assay interference compounds (PAINS) using RDKit or OpenEye toolkits. Expected attrition: ~60%.

- Stage 2 - Fast Pre-Scoring (Cost: Low): Dock the remaining ~400k compounds using a fast, simplified scoring function (e.g., SMINA with Vinardo scoring) against a single rigid receptor conformation. Take the top 10,000 (2.5%) ranked by score.

- Stage 3 - Standard-Precision Docking (Cost: Medium): Re-dock the 10,000 compounds using a more accurate method (e.g., AutoDock Vina or Glide SP) with flexible side chains in the binding site. Use a consensus score from multiple software if possible. Select top 500 compounds.

- Stage 4 - Experimental Prioritization (Cost: High but focused): Apply an accurate but costly MM/GBSA rescoring or a short MD simulation (10 ns) to the top 500. Visually inspect the top 50-100 poses. Select 20-50 for experimental purchase and testing.
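The attrition through the four stages can be tabulated with a short script before any compute is spent. The sketch below uses the numbers quoted in the protocol; the helper name `funnel_counts` and the choice of 50 as the final selection (the upper end of the 20-50 range) are illustrative assumptions.

```python
def funnel_counts(library_size, stages):
    """Return (name, n_in, n_out) for each stage of a screening funnel.

    stages: list of (name, keep) pairs. A float keep is the fraction of
    incoming compounds retained; an int keep is an absolute count.
    """
    rows = []
    n_in = library_size
    for name, keep in stages:
        if isinstance(keep, float):
            n_out = round(n_in * keep)
        else:
            n_out = min(keep, n_in)
        rows.append((name, n_in, n_out))
        n_in = n_out
    return rows


stages = [
    ("Stage 1: physicochemical filters", 0.40),   # ~60% attrition
    ("Stage 2: fast pre-scoring", 10_000),
    ("Stage 3: standard-precision docking", 500),
    ("Stage 4: MM/GBSA or short MD", 50),          # assumed upper end of 20-50
]
rows = funnel_counts(1_000_000, stages)
for name, n_in, n_out in rows:
    print(f"{name}: {n_in:>9,} -> {n_out:>7,} ({n_out / n_in:.2%} kept)")
```

Multiplying each stage's input count by a measured per-compound cost then gives a budget estimate per stage.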

Diagrams

Title: Tiered Virtual Screening Funnel to Reduce Computational Cost

Title: Low-Cost QSAR Model Development & Deployment Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Cost-Effective Computational Predictions

| Item/Category | Example(s) | Function & Role in Cost Reduction |

|---|---|---|

| Cheminformatics Toolkit | RDKit (Open Source), OpenEye Toolkit (Commercial) | Calculates molecular descriptors, fingerprints, and performs substructure filtering. Open-source RDKit eliminates license costs. |

| Machine Learning Library | scikit-learn, XGBoost, DeepChem (TensorFlow/PyTorch) | Provides efficient algorithms for building QSAR/QSPR models. Optimized libraries reduce development time and compute time. |

| Docking Software | AutoDock Vina, SMINA (Open Source); Schrödinger Glide, CCDC GOLD (Commercial) | Predicts binding pose and affinity. Open-source options remove per-job licensing fees for screening. |

| Molecular Dynamics Engine | GROMACS (Open Source), AMBER, OpenMM | Simulates dynamic protein-ligand interactions. GROMACS is highly scalable and free, reducing simulation costs. |

| Free Energy Calculation | PMX, FEP+ (Commercial), OpenMM for MM/PBSA | Calculates relative binding free energies. Open-source tools like PMX enable FEP without suite licensing. |

| Workflow Manager | Nextflow, Snakemake | Automates multi-step pipelines (e.g., tiered screening), ensuring reproducibility and efficient resource use on HPC/clusters. |

| Public Data Repository | ChEMBL, PubChem, PDBbind | Provides free, high-quality experimental data for training and validation, eliminating primary data generation costs. |

Balancing Speed and Accuracy: A Troubleshooting Guide for Computational Workflows

Technical Support Center: Troubleshooting Computational Cost in Molecular Property Evaluation

Troubleshooting Guides

Guide 1: Identifying Bottlenecks in Simulation Workflows

Issue: My molecular dynamics (MD) simulations are consuming more core-hours than budgeted, causing project delays.

Diagnosis & Steps:

- Profile the Job: Use profiling tools (e.g., `gprof`, `vtune`, or built-in MD engine profilers) to identify the most time-consuming functions (e.g., PME, bond calculations).

- Check System Setup: Verify that the simulation box size is appropriate (not excessively large with solvent) and that the time step is optimally set (typically 2 fs for classical MD).

- Analyze Hardware Utilization: Use cluster monitoring commands (e.g., `htop`, `sacct`) to check if all allocated CPUs/GPUs are being fully utilized (>90%). Low usage indicates poor parallel scaling.

- Review I/O Operations: Excessive trajectory writing frequency (e.g., saving coordinates every 1 ps) can consume >15% of runtime. Increase interval where possible.

- Solution Path: Based on profiling, consider switching to a GPU-accelerated PME solver, increasing the time step (typically to about 4 fs) using hydrogen mass repartitioning, or adjusting cutoffs. Reduce trajectory output to one frame every 10-100 ps unless the analysis needs finer time resolution.
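To see how much the output interval matters, the number of frames and the raw trajectory size can be estimated up front. The following is a rough sketch: it assumes uncompressed single-precision coordinates (4 bytes each), so compressed formats will be smaller, and the 100,000-atom, 100 ns system is a hypothetical example.

```python
def trajectory_frames(sim_ns, save_interval_ps):
    """Number of frames written for a run of sim_ns nanoseconds."""
    return sim_ns * 1000 // save_interval_ps


def trajectory_size_gb(n_atoms, n_frames, bytes_per_coord=4):
    """Raw size in GB: 3 coordinates per atom per frame, no compression."""
    return n_atoms * 3 * bytes_per_coord * n_frames / 1e9


# Hypothetical system: 100,000 atoms simulated for 100 ns.
for interval_ps in (1, 10, 100):
    frames = trajectory_frames(100, interval_ps)
    size = trajectory_size_gb(100_000, frames)
    print(f"every {interval_ps:>3} ps: {frames:>7,} frames, ~{size:.1f} GB")
```

Under these assumptions, moving from 1 ps to 10 ps output cuts both the frame count and the disk footprint by a factor of ten.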

Guide 2: Managing High Costs in Quantum Chemistry Calculations

Issue: Density Functional Theory (DFT) calculations for large molecular systems (50+ atoms) are prohibitively expensive.

Diagnosis & Steps:

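- Method/Basis Set Audit: The formal cost of conventional DFT scales with system size (N, the number of basis functions) as O(N³) for pure functionals and as O(N⁴) for hybrid functionals that include exact exchange, so basis set size greatly impacts cost. A double-zeta basis set is far cheaper than a triple-zeta one.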

- Check for Redundant Calculations: Are you re-running single-point energy calculations on nearly identical geometries? Consider using a cheaper method for geometry optimization.

- Evaluate Convergence Settings: Overly tight SCF or geometry convergence criteria (e.g., energy delta of 1e-7 vs. 1e-5) can double computation time for marginal gain.

- Parallelization Efficiency: Confirm that the calculation is efficiently parallelized across available cores. Performance often plateaus after a core count specific to the method and system.

- Solution Path: Implement a multi-level approach: Optimize geometry with a lower-cost method/basis set (e.g., GFN2-xTB), then run a single-point energy calculation at a higher level. Use machine-learned force fields for preliminary screening.
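The effect of basis set size on cost can be approximated from the formal scaling exponent alone. The sketch below is illustrative only: the basis-function counts (500 and 1,000) are hypothetical, and production codes with integral screening or density fitting usually scale better than the formal exponent suggests.

```python
def relative_cost(n_small, n_large, exponent):
    """Cost ratio between two basis sizes under formal O(N**exponent) scaling."""
    return (n_large / n_small) ** exponent


# Hypothetical: doubling the number of basis functions (500 -> 1000).
for label, exponent in (("O(N^3)", 3), ("O(N^4)", 4)):
    ratio = relative_cost(500, 1000, exponent)
    print(f"{label}: about {ratio:.0f}x more expensive")
```

This is why running the geometry optimization at a cheaper level and reserving the large basis set for a single-point calculation saves so much time: the expensive step runs once instead of at every optimization cycle.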

Frequently Asked Questions (FAQs)

Q1: My model training for molecular property prediction is taking weeks. How can I accelerate it?

A: The issue likely stems from dataset size, model complexity, or hyperparameter search. First, ensure your dataset is curated and free of redundancies. Use a subset for rapid prototyping. Consider switching from a graph neural network (GNN) to a lighter-weight model like Random Forest for initial feature importance analysis. Implement early stopping during training and use Bayesian optimization for more efficient hyperparameter tuning compared to grid search.
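Early stopping is simple to add to any training loop. The following is a framework-independent sketch; the class name `EarlyStopping`, the patience of 3, and the validation losses are made up for illustration, and most ML libraries ship their own equivalent.

```python
class EarlyStopping:
    """Stop training when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record a validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Illustrative validation losses, one per epoch.
val_losses = [0.90, 0.70, 0.60, 0.61, 0.62, 0.60, 0.59, 0.63]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(loss):
        stopped_at = epoch
        break
print(f"stopped at epoch {stopped_at}, best loss {stopper.best}")
```

A small patience saves the most compute but can stop before a late improvement (as with the 0.59 at epoch 6 here), so it is worth tuning on a short pilot run.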

Q2: I'm running virtual screening on a library of 1M compounds. How can I estimate the cost and reduce it?

A: Cost is driven by the method used per compound. Perform a pilot study on a representative 1,000-compound subset. Extrapolate the time/cost to the full library.

Table: Virtual Screening Method Cost-Benefit Analysis

| Method | Approx. Time per Compound | Relative Cost | Best Use Case |

|---|---|---|---|

| Classical Force Field (MD) | 10-60 min | High | Binding affinity (with careful setup) |

| DFT (Geometry Opt) | 5-30 min | Very High | Accurate electronic properties |

| Semi-empirical (e.g., PM7) | 10-60 sec | Medium | Large library pre-screening |

| Machine Learning Model | < 1 sec | Very Low | Ultra-high-throughput initial screening |

| 2D Fingerprint Similarity | < 0.1 sec | Negligible | Identify structural analogs |

To reduce cost, implement a tiered funnel: use the fastest method (ML or 2D similarity) to filter the 1M compounds down to 100k, apply a mid-tier method (semi-empirical) to reduce these to 10k, and reserve high-cost methods (DFT, MD) for the final top 100-1000 candidates.
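The pilot extrapolation and the saving from a tiered funnel can both be estimated in a few lines. This sketch assumes per-compound times taken from within the ranges in the table above (1 s for ML, 30 s for semi-empirical, 15 min for DFT); these are placeholders to be replaced with timings measured in your own pilot study.

```python
def core_hours(n_compounds, seconds_per_compound):
    """Extrapolate a per-compound timing to a total in core-hours."""
    return n_compounds * seconds_per_compound / 3600


# Assumed per-compound timings (seconds); replace with pilot measurements.
ML, SEMI_EMPIRICAL, DFT = 1, 30, 900

tiers = [
    ("ML screen", 1_000_000, ML),
    ("Semi-empirical", 100_000, SEMI_EMPIRICAL),
    ("DFT", 1_000, DFT),
]
tiered_total = sum(core_hours(n, t) for _, n, t in tiers)
brute_force = core_hours(1_000_000, SEMI_EMPIRICAL)

for name, n, t in tiers:
    print(f"{name:<15} {n:>9,} compounds: {core_hours(n, t):8.1f} core-hours")
print(f"Tiered total: {tiered_total:.0f} core-hours")
print(f"Semi-empirical on the full library: {brute_force:.0f} core-hours")
```

Under these assumptions the funnel, including a final DFT tier, costs a fraction of running even the mid-tier method on the whole library.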

Q3: My free energy perturbation (FEP) calculations are unstable and failing, wasting computational resources. What should I do?

A: FEP failures are often due to poor overlap between intermediate states. Follow this protocol:

- System Preparation: Ensure identical atom ordering and alchemical region setup for ligand A and B. Use a robust solvation and neutralization protocol.

- Lambda Scheduling: Increase the number of intermediate λ windows (e.g., from 12 to 16-24), especially near end-states (λ=0, λ=1) where changes are more dramatic.

- Simulation Length: Extend equilibration time at each λ window. For production, ensure sufficient sampling (≥ 5 ns/window is often needed for convergence).

- Monitoring: Analyze the energy difference time series per window. If the variance is extremely high or the series shows jumps, overlap is poor and you need more windows or longer simulation time.
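One way to place more λ windows near the end-states is a cosine-spaced schedule. The sketch below is only one possible heuristic and is not prescribed by any particular FEP package; the function name `lambda_schedule` is hypothetical, and a good schedule ultimately depends on the system and should be checked against the measured overlap.

```python
import math


def lambda_schedule(n_windows):
    """Cosine-spaced lambda values in [0, 1], denser near both end-states."""
    return [
        0.5 * (1 - math.cos(math.pi * i / (n_windows - 1)))
        for i in range(n_windows)
    ]


lambdas = lambda_schedule(16)
spacings = [b - a for a, b in zip(lambdas, lambdas[1:])]
print([round(x, 3) for x in lambdas])
print(f"first spacing {spacings[0]:.3f}, middle spacing {spacings[7]:.3f}")
```

Compared with 16 evenly spaced windows, this layout spends more windows where the alchemical change is steepest, without increasing the total count.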

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Computational Tools for Cost-Effective Molecular Research

| Tool / Reagent | Category | Function in Reducing Computational Cost |

|---|---|---|

| GPU-Accelerated MD Code (e.g., OpenMM, Amber, NAMD) | Software | Drastically reduces time for molecular dynamics simulations compared to CPU-only codes. |

| Machine-Learned Force Fields (e.g., ANI, MACE) | Method/Model | Provides near-DFT accuracy for energies and forces at orders-of-magnitude lower cost, usable in MD. |

| Extended Tight-Binding (xTB) Methods | Software/Method | Fast quantum mechanical method for geometry optimization and pre-screening (GFN2-xTB). |

| Equivariant Graph Neural Networks (e.g., MACE, NequIP) | Model | State-of-the-art ML models for accurate property prediction, trained once then used for instant predictions. |

| Alchemical FEP Software (e.g., FEP+, PMX) | Software/Protocol | Provides robust, automated workflows for relative binding free energy calculations, reducing setup errors and waste. |

| High-Throughput Screening Workflow (e.g., HTMD, Schrödinger Glide) | Software Pipeline | Automates the setup, running, and analysis of thousands of simulations, improving throughput and reproducibility. |

Experimental Protocols

Protocol 1: Multi-Fidelity Screening for Hit Identification

Objective: To identify potential binders from a large compound library with optimal computational budget allocation.

- Library Preparation: Standardize and curate the initial library (e.g., 1M compounds) using RDKit. Generate 2D molecular fingerprints.

- Tier 1 - Ultra-Fast Screening: Screen against a pharmacophore model or perform similarity search (Tanimoto similarity >0.7) against known actives. Retain top 100,000 compounds.

- Tier 2 - Machine Learning Screening: Use a pre-trained QSAR or GNN model to predict binding affinity or activity. Retain top 10,000 compounds.

- Tier 3 - Molecular Docking: Dock the 10,000 compounds using a fast docking program (e.g., AutoDock Vina or FRED). Cluster results and retain top 1,000 diverse poses.

- Tier 4 - Mid-Fidelity Scoring: Re-score the top 1,000 docked poses using a more rigorous method (e.g., MM-GBSA with implicit solvent) or a short MD simulation (1-5 ns). Select top 100 compounds.

- Tier 5 - High-Fidelity Validation: Subject the final 100 compounds to alchemical FEP or long-timescale MD (≥100 ns) for definitive ranking.
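The Tier 1 similarity search reduces to a Tanimoto comparison between fingerprints. The following sketch represents each fingerprint as a set of on-bit indices so that it runs without a cheminformatics toolkit; in practice the fingerprints would come from RDKit, and the compound names and bit patterns here are invented for illustration.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def similarity_filter(library, actives, threshold=0.7):
    """Keep compounds whose best similarity to any known active exceeds threshold."""
    return [
        name
        for name, fp in library.items()
        if max(tanimoto(fp, ref) for ref in actives) > threshold
    ]


# Invented fingerprints: one known active and two library compounds.
actives = [{1, 2, 3, 4, 5, 6, 7, 8}]
library = {
    "cpd_a": {1, 2, 3, 4, 5, 6, 7, 9},   # close analog of the active
    "cpd_b": {1, 2, 3, 20, 21},          # mostly different bits
}
hits = similarity_filter(library, actives)
print(hits)
```

Because each comparison is a handful of set operations, this tier can process millions of compounds at negligible cost before any docking is run.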

Protocol 2: Profiling an MD Simulation for Performance Bottlenecks

Objective: To identify the components consuming the most time in an MD run.

- Instrument the Run: Launch your MD simulation (e.g., using GROMACS) with verbose progress output (e.g., `gmx mdrun -v -stepout 1000`). For detailed profiling, compile GROMACS/AMBER with internal timers enabled.

- Run a Benchmark: Execute a short simulation (e.g., 1000 steps) on a representative, isolated test system.

- Collect Timing Data: At the end of the run, the log file will output a detailed breakdown of time spent in different modules (Pair Search, PME, Bonded Forces, etc.).

- Analyze Parallel Efficiency: Run the same benchmark on 1, 2, 4, 8, 16, and 32 cores/GPUs. Record the total simulation time and compute the parallel efficiency: $E_N = T_1 / (N \cdot T_N)$, where $T_1$ is the wall time on one core and $T_N$ is the wall time on $N$ cores.

- Identify Bottleneck: If PME takes >40% of time, consider adjusting cutoff or using GPU PME. If parallel efficiency drops below 70% at high core counts, the system may be too small for good scaling.
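The parallel efficiency analysis can be automated once the benchmark timings are collected. In this sketch the wall times are hypothetical and the helper names are illustrative; the 70% threshold is the one suggested in the protocol.

```python
def parallel_efficiency(timings):
    """Map core count -> efficiency T_1 / (N * T_N) for one benchmark.

    timings: dict mapping core count to wall time in seconds; must include 1.
    """
    t1 = timings[1]
    return {n: t1 / (n * t) for n, t in sorted(timings.items())}


def max_efficient_cores(timings, threshold=0.70):
    """Largest core count whose efficiency is still at or above threshold."""
    eff = parallel_efficiency(timings)
    return max(n for n, e in eff.items() if e >= threshold)


# Hypothetical wall times (s) for the same 1000-step benchmark.
timings = {1: 1600.0, 2: 820.0, 4: 430.0, 8: 240.0, 16: 150.0, 32: 110.0}
for n, e in parallel_efficiency(timings).items():
    print(f"{n:>2} cores: efficiency {e:.2f}")
best = max_efficient_cores(timings)
print(f"Largest core count above 70% efficiency: {best}")
```

With these example numbers efficiency falls below 70% beyond 8 cores, so requesting more would mostly burn core-hours without shortening the run proportionally.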

Visualizations

Title: MD Performance Bottleneck Diagnosis Workflow

Title: Multi-Tier Computational Screening Funnel

Troubleshooting Guides & FAQs

Q1: My single-point energy calculation fails with a segmentation fault when using a large basis set (e.g., aug-cc-pVQZ) on a high-core-count node. What should I check?

A: This is often a memory distribution issue in parallelized calculations. First, verify that the total available RAM is sufficient for the basis set size. For correlated methods (e.g., CCSD(T)), memory scales as O(N⁴). Use the formula in Table 1 to estimate needs. Ensure your computational chemistry suite (e.g., Gaussian, GAMESS, ORCA) is configured so that the memory requested per process, multiplied by the number of processes, fits within the node's RAM. A common fix is to reduce the number of processes and increase the memory per core in the input file (e.g., in ORCA: `%pal nprocs 24 end` and `%maxcore 8000`, where `%maxcore` is the per-core memory limit in MB).
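Before submitting, it helps to check that the requested memory actually fits on the node. The sketch below treats `%maxcore` as a per-core limit in MB, as in ORCA; the 75% headroom factor and the node sizes are assumptions chosen for illustration, and the helper names are hypothetical.

```python
def requested_memory_gb(nprocs, maxcore_mb):
    """Total memory requested across all processes, in GB (1 GB = 1024 MB)."""
    return nprocs * maxcore_mb / 1024


def max_procs(node_ram_gb, maxcore_mb, headroom=0.75):
    """Largest process count that keeps the request within headroom * node RAM."""
    return int(headroom * node_ram_gb * 1024 // maxcore_mb)


# The ORCA example from the text: 24 processes x 8000 MB each.
request = requested_memory_gb(24, 8000)
print(f"Requested: {request:.1f} GB")
for node_ram in (192, 256):
    fits = request <= 0.75 * node_ram
    print(f"{node_ram} GB node: fits={fits}, "
          f"max processes at 8000 MB each = {max_procs(node_ram, 8000)}")
```

Under these assumptions the 24-process request fits a 256 GB node but not a 192 GB one, where dropping to 18 processes would keep the job within the headroom.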