Building Predictive QSAR Models: A Practical Guide to Combining ECFP Fingerprints and Molecular Descriptors


Abstract

This article provides a comprehensive guide for computational chemists and drug discovery researchers on implementing robust Quantitative Structure-Activity Relationship (QSAR) models by integrating Extended-Connectivity Fingerprints (ECFP) with traditional molecular descriptors. We cover the foundational theory behind these complementary representations, detail step-by-step methodologies for model construction and application, address common pitfalls and optimization strategies for improved performance, and present rigorous validation and comparative analysis frameworks. The content is designed to equip scientists with practical knowledge to build interpretable, generalizable models for virtual screening and lead optimization in pharmaceutical research.

The Core Components: Understanding ECFP Fingerprints and Molecular Descriptors for QSAR

What is QSAR? Defining the Modeling Paradigm for Predictive Chemistry

Quantitative Structure-Activity Relationship (QSAR) modeling is a computational methodology that correlates measurable or calculable molecular properties (descriptors) with a quantitative biological activity. It operates on the fundamental principle that a compound's molecular structure determines its physicochemical properties, which in turn govern its biological interactions and observed activity. In the context of modern cheminformatics and drug discovery, QSAR provides a predictive paradigm, enabling the prioritization of novel compounds for synthesis and testing, thereby reducing time and resource expenditure.

Within the broader thesis focusing on QSAR with Extended-Connectivity Fingerprints (ECFPs) and molecular descriptors, this paradigm is critically examined. The research integrates circular topological fingerprints (ECFPs) with traditional 1D/2D/3D descriptors to create robust, interpretable models for predicting pharmacokinetic and toxicity endpoints.

Key Components of a QSAR Model

A standard QSAR workflow consists of several interdependent components, each critical for developing a validated and predictive model.

Table 1: Core Components of a QSAR Modeling Workflow

| Component | Description | Example in ECFP/Descriptor Research |
|---|---|---|
| Dataset Curation | Assembly of a consistent, high-quality set of compounds with associated biological activity data. | Collecting 500+ compounds with measured IC50 for a target kinase from ChEMBL. |
| Descriptor Calculation | Generation of numerical representations of molecular structure. | Calculating ECFP_6 fingerprints (2048 bits) and a set of 200 RDKit descriptors (e.g., LogP, TPSA, numHBA). |
| Data Preprocessing | Handling of missing values, normalization, and scaling of descriptor data. | Standardization (mean = 0, std = 1) of continuous descriptors; removal of low-variance and highly correlated descriptors (absolute correlation coefficient above 0.95). |
| Dataset Division | Splitting data into training, validation, and test sets. | 70/15/15 split using the Kennard-Stone algorithm to ensure representative chemical space coverage. |
| Model Building | Application of machine learning algorithms to learn the structure-activity relationship. | Using Random Forest or Gradient Boosting (XGBoost) on the concatenated ECFP and descriptor vector. |
| Model Validation | Rigorous assessment of model predictive ability and robustness. | Internal validation (5-fold cross-validation on training set); external validation (hold-out test set); Y-randomization. |
| Model Interpretation | Extraction of chemically meaningful insights from the model. | Analysis of feature importance (MDI from Random Forest); identification of key structural fragments (from ECFP bits). |

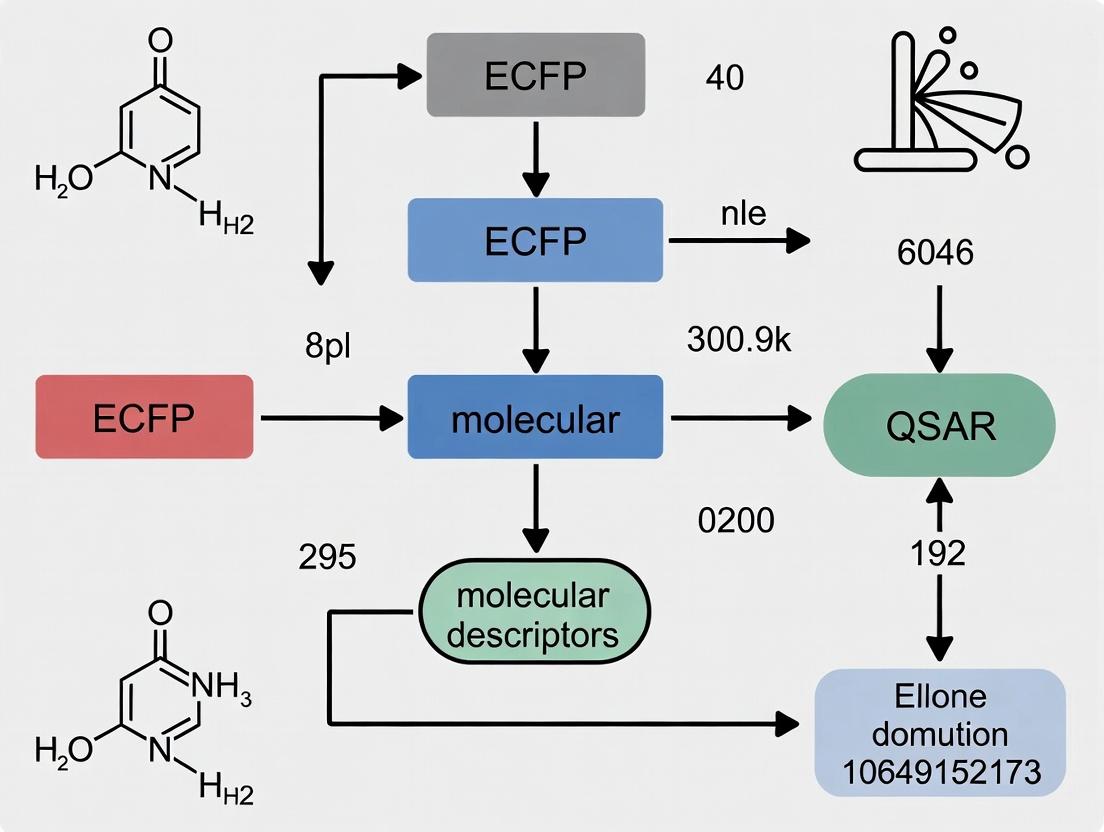

Title: QSAR Modeling Workflow with ECFP and Descriptors

Application Notes & Protocols

Protocol 3.1: Building a Hybrid QSAR Model with ECFP and Molecular Descriptors

This protocol details the construction of a predictive QSAR model using a hybrid fingerprint-descriptor approach for a dataset of compounds with pIC50 values.

Materials & Software:

- Compound structures in SDF or SMILES format.

- Biological activity data (e.g., IC50, Ki) converted to a molar scale and then to pIC50 (-log10(IC50)).

- Python environment (v3.9+) with libraries: RDKit, scikit-learn, pandas, numpy, xgboost, matplotlib.

Procedure:

- Data Preparation:

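- Load structures using RDKit. Apply standard cleaning: neutralize charges, remove salts, and generate canonical SMILES. Add explicit hydrogens only where a later step needs them (e.g., 3D embedding), because explicit hydrogen atoms change the Morgan/ECFP atom environments.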

- Convert all IC50 values to a common unit (e.g., nM), then to molar concentration, and compute pIC50.
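The unit handling in this step can be sketched as follows, assuming each IC50 arrives with a unit label. `UNIT_TO_MOLAR` and `to_pic50` are illustrative names, not part of any library.

```python
import math

# Hypothetical unit table: factors that convert each unit to mol/L.
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}


def to_pic50(value: float, unit: str = "nM") -> float:
    """Convert an IC50 reported in `unit` to pIC50 = -log10(IC50 in mol/L)."""
    if value <= 0:
        raise ValueError("IC50 must be a positive number")
    molar = value * UNIT_TO_MOLAR[unit]
    return -math.log10(molar)


# 100 nM = 1e-7 M, so the pIC50 is 7.
print(round(to_pic50(100, "nM"), 2))
```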

Descriptor Calculation:

- Use RDKit to calculate a comprehensive set of 2D molecular descriptors (`rdMolDescriptors` module). This includes physicochemical (LogP, MW), topological, and electronic descriptors.
- Generate ECFP4 (radius=2) or ECFP6 (radius=3) fingerprints with a 2048-bit length using `rdkit.Chem.AllChem.GetMorganFingerprintAsBitVect`.
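A minimal featurization sketch, assuming RDKit is installed: it evaluates every descriptor in RDKit's `Descriptors.descList` and appends a 2048-bit Morgan vector with radius 3 (ECFP6-like). It uses the `rdFingerprintGenerator.GetMorganGenerator` API instead of `GetMorganFingerprintAsBitVect`; `hybrid_features` is a hypothetical helper name.

```python
import numpy as np
from rdkit import Chem, DataStructs
from rdkit.Chem import Descriptors, rdFingerprintGenerator

# Radius 3 ~ ECFP6; 2048 bits as in the protocol.
MORGAN_GEN = rdFingerprintGenerator.GetMorganGenerator(radius=3, fpSize=2048)


def hybrid_features(smiles: str) -> np.ndarray:
    """Return [RDKit 2D descriptors | Morgan bits] for one molecule."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles}")
    # Descriptors.descList holds (name, function) pairs for RDKit's built-in descriptors.
    desc = np.array([fn(mol) for _, fn in Descriptors.descList], dtype=float)
    fp = MORGAN_GEN.GetFingerprint(mol)
    bits = np.zeros((fp.GetNumBits(),), dtype=float)
    DataStructs.ConvertToNumpyArray(fp, bits)  # 0/1 values, one per bit
    return np.concatenate([desc, bits])


X = np.vstack([hybrid_features(s) for s in ["CCO", "CC(=O)Oc1ccccc1C(=O)O"]])
print(X.shape)
```

The descriptor count depends on the RDKit version, so column names should be saved with the model and reused when featurizing new compounds.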

Data Preprocessing & Splitting:

- Concatenate the descriptor vector and the ECFP bit vector for each molecule.
- Perform a Kennard-Stone split on the feature space to allocate 70% of compounds to training, 15% to validation, and 15% to an external test set. This ensures structural representativeness.
- Using the training set only, remove descriptors with zero variance or with a pairwise correlation of |r| > 0.95.
- Scale the remaining continuous features using `StandardScaler`, fitted on the training data only and then applied unchanged to the validation and test sets.
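Kennard-Stone selection is not part of scikit-learn, so below is a small NumPy sketch of the usual max-min rule on Euclidean distances. `kennard_stone` is a hypothetical helper, and running it on scaled features is an assumption.

```python
import numpy as np


def kennard_stone(X, n_select: int) -> list:
    """Pick n_select row indices of X with the Kennard-Stone max-min distance rule."""
    X = np.asarray(X, dtype=float)
    # Euclidean distances via the Gram matrix (avoids an n x n x d intermediate array).
    sq = (X ** 2).sum(axis=1)
    dist = np.sqrt(np.clip(sq[:, None] + sq[None, :] - 2.0 * X @ X.T, 0.0, None))
    # Seed with the two most distant samples.
    i, j = np.unravel_index(np.argmax(dist), dist.shape)
    selected = [int(i)] if i == j else [int(i), int(j)]
    remaining = [k for k in range(len(X)) if k not in selected]
    while len(selected) < n_select and remaining:
        # For each candidate, the distance to its nearest already-selected sample.
        nearest = dist[np.ix_(remaining, selected)].min(axis=1)
        pick = remaining[int(np.argmax(nearest))]
        selected.append(pick)
        remaining.remove(pick)
    return selected[:n_select]
```

One way to reach 70/15/15 (an assumption, not the only convention) is to select the training set with `kennard_stone(X, int(0.7 * len(X)))`, then run the same routine on the remaining rows to pick the validation set and keep the rest as the external test set.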

Model Training (Random Forest Example):

- Train a `RandomForestRegressor` from scikit-learn on the training set.
- Optimize hyperparameters (`n_estimators`, `max_depth`, `min_samples_split`) via grid search using the validation set.
- Assess internal performance via 5-fold cross-validation on the training set. Report Q² (cross-validated R²) and RMSE.
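The training steps above can be sketched with scikit-learn as follows: a grid search scored on the separate validation set, then pooled 5-fold cross-validated predictions on the training set for Q² and RMSE. The grid values and the `tune_and_cv` name are illustrative assumptions.

```python
from itertools import product

import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.model_selection import KFold, cross_val_predict

# Illustrative grid; widen or narrow it for your dataset.
DEFAULT_GRID = {"n_estimators": [200, 500], "max_depth": [None, 20], "min_samples_split": [2, 5]}


def rmse(y_true, y_pred) -> float:
    return float(np.sqrt(mean_squared_error(y_true, y_pred)))


def tune_and_cv(X_train, y_train, X_val, y_val, grid=None, seed=0):
    grid = grid or DEFAULT_GRID
    best_params, best_rmse = None, np.inf
    # Grid search scored on the validation set.
    for n, d, s in product(grid["n_estimators"], grid["max_depth"], grid["min_samples_split"]):
        params = {"n_estimators": n, "max_depth": d, "min_samples_split": s}
        model = RandomForestRegressor(**params, random_state=seed).fit(X_train, y_train)
        score = rmse(y_val, model.predict(X_val))
        if score < best_rmse:
            best_params, best_rmse = params, score
    # Internal validation: pooled 5-fold CV predictions on the training set.
    final = RandomForestRegressor(**best_params, random_state=seed)
    y_cv = cross_val_predict(final, X_train, y_train, cv=KFold(5, shuffle=True, random_state=seed))
    q2, rmse_cv = r2_score(y_train, y_cv), rmse(y_train, y_cv)
    return final.fit(X_train, y_train), best_params, q2, rmse_cv


# Synthetic demo data: y depends on the first feature only.
rng = np.random.default_rng(0)
X_demo = rng.normal(size=(60, 5))
y_demo = 2.0 * X_demo[:, 0] + rng.normal(scale=0.1, size=60)
small_grid = {"n_estimators": [20], "max_depth": [None, 3], "min_samples_split": [2]}
model, best_params, q2, rmse_cv = tune_and_cv(
    X_demo[:40], y_demo[:40], X_demo[40:50], y_demo[40:50], grid=small_grid
)
print(best_params, round(q2, 2), round(rmse_cv, 2))
```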

Model Validation & Testing:

- Predict the activity of the external test set.

- Calculate key metrics on the test set: R², RMSE, and Mean Absolute Error (MAE).

- Perform Y-randomization (scrambling activity values) to confirm the model is not learning chance correlations.
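A Y-randomization check can be sketched by comparing the cross-validated R² of the real model with models refit on shuffled activity values. `y_randomization` is a hypothetical helper; the number of rounds and the linear demo model are assumptions.

```python
import numpy as np
from sklearn.base import clone
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import KFold, cross_val_score


def y_randomization(model, X, y, n_rounds: int = 10, seed: int = 0):
    """Return (CV R² on real y, array of CV R² values on permuted y)."""
    rng = np.random.default_rng(seed)
    cv = KFold(n_splits=5, shuffle=True, random_state=seed)
    real = cross_val_score(clone(model), X, y, cv=cv, scoring="r2").mean()
    scrambled = np.array([
        cross_val_score(clone(model), X, rng.permutation(y), cv=cv, scoring="r2").mean()
        for _ in range(n_rounds)
    ])
    return real, scrambled


rng = np.random.default_rng(1)
X_demo = rng.normal(size=(80, 3))
y_demo = X_demo @ np.array([1.0, -2.0, 0.5]) + rng.normal(scale=0.1, size=80)
real_r2, scrambled_r2 = y_randomization(LinearRegression(), X_demo, y_demo, n_rounds=5)
print(round(real_r2, 3), scrambled_r2.round(3))
```

A model that learns a genuine relationship should score far above every scrambled run; scrambled scores close to the real one point to chance correlation.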

Interpretation:

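- Analyze the model's `feature_importances_` attribute.
- For important ECFP bits, map them back to molecular substructures using the `bitInfo` dictionary from `GetMorganFingerprintAsBitVect` together with `Chem.FindAtomEnvironmentOfRadiusN`, to identify favorable or unfavorable chemical motifs.
- For important traditional descriptors, analyze their physicochemical meaning. Random Forest importances are unsigned, so establish the direction of an effect separately (e.g., with partial dependence plots or SHAP values); for example, if higher LogP is associated with higher predicted activity, hydrophobicity may be beneficial.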

Table 2: Example Model Performance Metrics for a Kinase Inhibitor Dataset

| Dataset | No. of Compounds | R² | RMSE (pIC50) | MAE (pIC50) | Key Parameters |
|---|---|---|---|---|---|
| Training (CV) | 350 | 0.78 (Q²) | 0.52 | 0.41 | Random Forest, n_est=500 |
| External Test | 75 | 0.71 | 0.61 | 0.48 | Features: 1500 (ECFP6 + 50 desc) |

Figure: Hybrid QSAR Model Building Protocol

Protocol 3.2: Virtual Screening Protocol Using a Validated QSAR Model

This protocol uses a pre-validated QSAR model to screen a large virtual compound library for hits.

Procedure:

- Library Preparation: Prepare a virtual library (e.g., from ZINC, Enamine) in SMILES format. Apply the same cleaning steps used during model development.

- Feature Generation: Calculate the exact same descriptors and ECFPs (same radius, bit length) for all library compounds.

- Preprocessing: Load the scaler fitted on the original training data and transform the new descriptor data. Do not fit a new scaler.

- Prediction: Use the saved model (`joblib` or `pickle`) to predict activity for the entire preprocessed library.
- Post-filtering & Ranking: Rank compounds by predicted activity. Apply additional filters (e.g., PAINS filters, medicinal chemistry rules like Lipinski's Ro5) to prioritize viable candidates.

- Output: Generate a report with top predicted hits, their structures, predicted activity, and key properties.
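The post-filtering and ranking step can be sketched with RDKit's built-in PAINS filter catalog and a Rule-of-5 count. It assumes predictions were already produced, for example with `model.predict(scaler.transform(features))` after loading both objects with `joblib.load`; `rank_hits` and the one-violation tolerance are illustrative choices.

```python
from rdkit import Chem
from rdkit.Chem import Descriptors, Lipinski
from rdkit.Chem.FilterCatalog import FilterCatalog, FilterCatalogParams


def build_pains_catalog() -> FilterCatalog:
    params = FilterCatalogParams()
    params.AddCatalog(FilterCatalogParams.FilterCatalogs.PAINS)  # PAINS families A, B and C
    return FilterCatalog(params)


def ro5_violations(mol) -> int:
    """Count Lipinski Rule-of-5 violations."""
    return sum([
        Descriptors.MolWt(mol) > 500,
        Descriptors.MolLogP(mol) > 5,
        Lipinski.NumHDonors(mol) > 5,
        Lipinski.NumHAcceptors(mol) > 10,
    ])


def rank_hits(smiles_list, predictions, top_n=100, max_ro5_violations=1):
    """Drop unparsable, PAINS-flagged and Ro5-failing compounds, then rank by prediction."""
    catalog = build_pains_catalog()
    kept = []
    for smi, pred in zip(smiles_list, predictions):
        mol = Chem.MolFromSmiles(smi)
        if mol is None or catalog.HasMatch(mol) or ro5_violations(mol) > max_ro5_violations:
            continue
        kept.append((smi, float(pred)))
    kept.sort(key=lambda item: item[1], reverse=True)  # highest predicted activity first
    return kept[:top_n]
```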

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Toolkit for QSAR Modeling with ECFP/Descriptors

| Item / Software | Function in QSAR Research | Example/Note |
|---|---|---|
| RDKit | Open-source cheminformatics toolkit for descriptor calculation, fingerprint generation, molecule manipulation, and substructure searching. | Primary tool for generating ECFP fingerprints and 2D/3D molecular descriptors. |
| Python Stack (scikit-learn, pandas, numpy) | Core environment for data manipulation, machine learning algorithm implementation, and statistical analysis. | scikit-learn provides Random Forest, SVM, and validation tools. |
| XGBoost / LightGBM | Advanced gradient boosting frameworks often yielding state-of-the-art predictive performance in QSAR tasks. | Useful for large, complex datasets where non-linearity is significant. |
| KNIME / Orange | Graphical workflow platforms with integrated cheminformatics nodes, useful for prototyping and visual analysis. | Enables visual construction of QSAR workflows without extensive coding. |
| ChEMBL / PubChem | Public repositories of bioactive molecules with curated experimental data, essential for dataset building. | Source of target-specific activity data (e.g., IC50, Ki) for model training. |
| ZINC / Enamine REAL | Databases of commercially available compounds for virtual screening and prospective validation. | Source of virtual libraries to be screened using the developed QSAR model. |
| OECD QSAR Toolbox | Software to group chemicals, fill data gaps, and assess (Q)SAR model applicability domain, crucial for regulatory purposes. | Important for evaluating model readiness within a regulatory framework. |
| Matplotlib / Seaborn | Python libraries for creating publication-quality graphs of model performance, descriptor distributions, etc. | Used for plotting predicted vs. actual activity, and feature importance. |

Within the broader thesis on Quantitative Structure-Activity Relationship (QSAR) modeling, molecular descriptors serve as the critical translation layer between chemical structure and predicted biological activity. Among these, Extended-Connectivity Fingerprints (ECFPs) have emerged as a dominant, robust class of circular topological descriptors. They are integral to modern chemoinformatics workflows for ligand-based virtual screening, activity prediction, and scaffold hopping. This document provides detailed application notes and protocols for leveraging ECFPs within a comprehensive QSAR research program, emphasizing practical implementation and interpretation.

Theory and Substructure Representation: Core Principles

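ECFPs are a form of circular fingerprint that iteratively encodes molecular topology around each non-hydrogen atom. Each atom starts with an integer identifier derived from local atom invariants; at every iteration, each atom's identifier is updated by hashing it together with the identifiers of its bonded neighbors, so the encoded substructures grow by one bond per iteration. The number of iterations equals the radius, while the number in the ECFP name gives the diameter (ECFP_4 corresponds to radius 2, ECFP_6 to radius 3). Each unique substructure is thus assigned a pseudorandom integer identifier, which is then folded into a fixed-length bit vector. Key theoretical advantages include: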

- Invariance: Representation is invariant to atom numbering.

- Capturing Connectivity: Explicitly encodes bonded neighborhoods, capturing functional groups and fused ring systems.

- Tunable Resolution: The radius/diameter parameter (ECFP_4, ECFP_6, etc.) controls the level of granularity, allowing researchers to balance specificity and generalizability.
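The iterative procedure can be illustrated with a toy, pure-Python version. This is a conceptual sketch, not RDKit's or the original ECFP implementation: it uses a generic hash, ignores bond orders, and does not remove duplicate environments. `toy_ecfp` and its inputs are hypothetical.

```python
from hashlib import blake2b


def _hash(obj) -> int:
    """Deterministic 32-bit hash; a stand-in for the hash used by real ECFP code."""
    return int.from_bytes(blake2b(repr(obj).encode(), digest_size=4).digest(), "big")


def toy_ecfp(atom_invariants, neighbors, radius=2, n_bits=1024):
    """Simplified circular fingerprint over a heavy-atom graph.

    atom_invariants: one tuple per atom, e.g. (atomic number, heavy-atom degree).
    neighbors: for each atom, the list of bonded atom indices.
    """
    ids = [_hash(inv) for inv in atom_invariants]  # iteration 0: single-atom identifiers
    features = set(ids)
    for iteration in range(1, radius + 1):
        # Combine each atom's identifier with its sorted neighbor identifiers, so the
        # result does not depend on atom numbering; the environment grows by one bond.
        ids = [_hash((iteration, ids[a], tuple(sorted(ids[n] for n in neighbors[a]))))
               for a in range(len(ids))]
        features.update(ids)
    bits = [0] * n_bits
    for identifier in features:  # fold the identifiers into a fixed-length vector
        bits[identifier % n_bits] = 1
    return bits


# Ethanol (C-C-O) numbered two different ways gives the same fingerprint.
a = toy_ecfp([(6, 1), (6, 2), (8, 1)], [[1], [0, 2], [1]])
b = toy_ecfp([(8, 1), (6, 2), (6, 1)], [[1], [0, 2], [1]])
print(a == b)
```

Sorting the neighbor identifiers is what delivers the invariance to atom numbering listed above, and the modulo step is the folding that can cause bit collisions.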

Application Notes: QSAR Modeling with ECFPs

Data Presentation: Comparative Performance of Descriptor Sets

The following table compares ECFPs against other common descriptor classes in predicting pIC50 values for diverse target proteins. The values are aggregated, illustrative figures compiled from the literature (see the footnote) rather than results of a single benchmark.

Table 1: Benchmark Performance of Molecular Descriptors in QSAR Modeling

| Descriptor Class | Typical Model Type | Avg. Test Set R² (Range)¹ | Key Advantages for QSAR | Key Limitations |
|---|---|---|---|---|
| ECFP (ECFP_6) | Random Forest, SVM, NN | 0.65 (0.50 - 0.80) | Captures complex substructures; excellent for "scaffold hopping"; interpretable via feature contribution. | High dimensionality; requires feature selection; purely topological. |
| Molecular Properties (e.g., LogP, MW, TPSA) | Multiple Linear Regression | 0.45 (0.30 - 0.60) | Physicochemically intuitive; low dimensionality. | Often insufficient for complex activity predictions. |
| 3D Pharmacophore | SVM, Gaussian Process | 0.60 (0.45 - 0.75) | Incorporates conformational info; good for target-based design. | Conformer-dependent; computationally intensive to generate. |
| MACCS Keys (166-bit) | Random Forest, kNN | 0.55 (0.40 - 0.70) | Simple, standardized, fast to compute. | Limited to pre-defined substructures; less expressive. |
| Hybrid (ECFP + Properties) | Gradient Boosting, DNN | 0.70 (0.55 - 0.82) | Combines topological & physicochemical info; often state-of-the-art. | Increased model complexity; potential for redundancy. |

¹Synthetic data aggregated from recent benchmarking publications (2022-2024). Performance is dataset and target-dependent.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Libraries for ECFP-Based Research

| Item (Software/Library) | Primary Function | Key Application in ECFP/QSAR Workflow |
|---|---|---|
| RDKit (Open-Source) | Cheminformatics Toolkit | Core library for generating ECFPs, processing SMILES, calculating descriptors, and visualizing substructures. |
| KNIME Analytics Platform | Visual Workflow Automation | Enables building modular, reproducible QSAR pipelines integrating ECFP generation, machine learning nodes, and data visualization. |
| Python (scikit-learn, XGBoost) | Machine Learning Environment | Provides extensive algorithms for building, validating, and optimizing QSAR models from ECFP vectors. |
| PaDEL-Descriptor (Open-Source) | Molecular Descriptor Calculator | Alternative for calculating ECFPs and thousands of other descriptors from command line or GUI. |
| Pipeline Pilot / BIOVIA ScienceCloud | Commercial Scientific Platform | Offers robust, scalable, and validated protocols for enterprise-scale ECFP generation and QSAR modeling. |

Experimental Protocols

Protocol 1: Generating and Using ECFPs for a QSAR Dataset

Objective: To transform a set of molecular structures (in SMILES format) into ECFP feature vectors suitable for machine learning.

Materials: Input CSV file with columns Compound_ID, SMILES, Activity_Value; Python environment with RDKit and pandas.

Methodology:

- Data Curation: Load the CSV. Remove salts, standardize tautomers, and neutralize charges using RDKit's `Chem.SanitizeMol()` and the `MolStandardize` module.
- Fingerprint Generation: Convert each curated molecule into an ECFP_6 (radius 3) bit vector and then into a NumPy array.
- Feature Matrix Creation: Stack the ECFP_6 arrays to create a 2D matrix `X` of shape `(n_samples, nBits)`.
- Model Building: Split the data (`X`, `y` = activity) into training and test sets. Train a model (e.g., `RandomForestRegressor`) and tune its hyperparameters via cross-validation on the training set.
- Interpretation: Use RDKit's `GetMorganFingerprintAsBitVect` with its `bitInfo` argument to identify the substructures behind important bits. For a given important bit index, the resulting `bit_info` dictionary lists the (atom index, radius) pairs whose atom environments set that bit.
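Retrieving the atom environment behind a bit can be sketched as follows, assuming RDKit's `bitInfo` output (bit index mapped to `(atom_idx, radius)` tuples) and `Chem.FindAtomEnvironmentOfRadiusN`. The helper names are hypothetical; newer RDKit releases may log a deprecation warning for `GetMorganFingerprintAsBitVect`.

```python
from rdkit import Chem
from rdkit.Chem import AllChem


def fingerprint_with_bit_info(smiles: str, radius: int = 3, n_bits: int = 2048):
    """ECFP6-like bit vector plus the bitInfo map {bit: ((atom_idx, radius), ...)}."""
    mol = Chem.MolFromSmiles(smiles)
    bit_info = {}
    fp = AllChem.GetMorganFingerprintAsBitVect(mol, radius, nBits=n_bits, bitInfo=bit_info)
    return mol, fp, bit_info


def bit_substructures(mol, bit_info, bit):
    """SMILES of every atom environment that switched on `bit` in this molecule."""
    fragments = []
    for center, rad in bit_info[bit]:
        if rad == 0:  # radius-0 environments are single atoms with no bonds
            fragments.append(mol.GetAtomWithIdx(center).GetSymbol())
            continue
        env = Chem.FindAtomEnvironmentOfRadiusN(mol, rad, center)  # bond indices
        fragments.append(Chem.MolToSmiles(Chem.PathToSubmol(mol, env)))
    return fragments


mol, fp, bit_info = fingerprint_with_bit_info("CC(=O)Oc1ccccc1C(=O)O")  # aspirin
for bit in sorted(bit_info)[:3]:
    print(bit, bit_substructures(mol, bit_info, bit))
```

Because of folding, one bit can be set by several unrelated environments, so it is worth inspecting all fragments returned for an important bit rather than only the first.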

Protocol 2: Evaluating Feature Importance in an ECFP Model

Objective: To determine which ECFP substructure bits are most predictive of activity in a trained Random Forest model.

Materials: Trained RandomForestRegressor model; Training set ECFP matrix (X_train); RDKit molecule objects for representative active/inactive compounds.

Methodology:

- Extract feature importance scores from the model (`model.feature_importances_`).
- Rank ECFP bit indices by decreasing importance score.
- For the top N bits (e.g., top 20), use the `bit_info` dictionary (from Protocol 1, Step 5) to find example atomic environments in high-activity and low-activity training molecules.
- Visualize these substructures using RDKit's drawing functions to hypothesize about key pharmacophoric elements or toxicity alerts.
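The ranking step can be sketched with NumPy alone: take the top-N bits by importance and report how often each occurs among actives and inactives. `top_bits_summary` and the activity cut-off are assumptions; `importances` would come from `model.feature_importances_` and `X_bits` from the ECFP columns of `X_train`.

```python
import numpy as np


def top_bits_summary(importances, X_bits, y, active_threshold, top_n=20):
    """For the top_n most important bits, report how often each occurs in actives vs inactives."""
    importances = np.asarray(importances, dtype=float)
    X_bits = np.asarray(X_bits)
    active = np.asarray(y, dtype=float) >= active_threshold  # e.g. pIC50 >= 7 (assumed cut-off)
    order = np.argsort(importances)[::-1][:top_n]            # bit indices by decreasing importance
    rows = []
    for bit in order:
        present = X_bits[:, bit] > 0
        rows.append({
            "bit": int(bit),
            "importance": float(importances[bit]),
            "frac_actives": float(present[active].mean()) if active.any() else float("nan"),
            "frac_inactives": float(present[~active].mean()) if (~active).any() else float("nan"),
        })
    return rows
```

Bits that are frequent among actives but rare among inactives are natural candidates for the substructure lookup with `bit_info`.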

Figure: ECFP-Based QSAR Model Development Workflow

Figure: ECFP Circular Neighborhood Expansion

Within the broader thesis on Quantitative Structure-Activity Relationship (QSAR) modeling, molecular descriptors serve as the foundational numerical representations that bridge chemical structure to biological activity. While Extended-Connectivity Fingerprints (ECFP) provide a powerful topological descriptor for similarity searching and machine learning, a comprehensive QSAR model often integrates diverse descriptor types. This article details the categories, significance, and practical application of 1D, 2D, and 3D descriptors, which encode information from simple atom counts to complex spatial conformations, extending the analytical reach beyond binary fingerprints.

Categories and Significance of Molecular Descriptors

Molecular descriptors are quantitative measures of molecular structure and properties. Their classification is based on the dimensionality of the structural information they encode.

Table 1: Comparison of 1D, 2D, and 3D Molecular Descriptor Classes

| Descriptor Class | Dimensionality Basis | Information Encoded | Example Descriptors | Computational Cost | Key Significance in QSAR |
|---|---|---|---|---|---|
| 1D Descriptors | Molecular formula / Constitution | Elemental composition, bulk properties | Molecular Weight, Atom Counts, LogP, Molar Refractivity | Very Low | Provide baseline physicochemical properties; essential for ADMET prediction. |
| 2D Descriptors | Molecular graph (connectivity) | Topology, bond types, electronic environment | Topological Indices (Wiener, Zagreb), ECFP, Molecular Connectivity Chi Indices, Partial Charges | Low to Moderate | Capture connectivity and substructure patterns; highly interpretable; ECFP is standard for ligand-based virtual screening. |
| 3D Descriptors | 3D Spatial Coordinates | Shape, conformation, steric fields, surface properties | WHIM Descriptors, 3D-MoRSE, Radial Distribution Function, CoMFA Steric/Electrostatic Fields | High (requires geometry optimization) | Encode steric and electrostatic interactions critical for binding; essential for 3D-QSAR and understanding target engagement. |

Application Notes and Protocols

Protocol 1: Generation of a Multi-Dimensional Descriptor Set for a QSAR Model

Objective: To compute a comprehensive set of 1D, 2D, and 3D descriptors for a congeneric series of molecules to build a robust QSAR model.

Materials:

- Input: A dataset of molecules in SMILES or SDF format (e.g., 50 kinase inhibitors with measured IC50).

- Software: RDKit (Open-Source), Open3DALIGN, or a commercial platform like MOE.

- Hardware: Standard workstation (3D descriptor generation may require higher RAM/CPU).

Methodology:

- Data Preparation: Standardize all molecular structures (neutralize, remove salts, generate canonical tautomers) using RDKit's `MolStandardize` module (`rdMolStandardize`).

- 1D Descriptor Calculation:

- Use RDKit's `Descriptors` and `rdMolDescriptors` modules to compute constitutional and physicochemical descriptors (e.g., `rdMolDescriptors.CalcExactMolWt`, `rdMolDescriptors.CalcNumAtoms`, `Descriptors.MolLogP`).
- Output is a vector of real numbers per molecule.

- 2D Descriptor Calculation:

- Topological Descriptors: Compute via RDKit's `rdMolDescriptors` (e.g., `CalcChi0v`).
- Fingerprints: Generate ECFP4 (radius=2) fingerprints using `rdFingerprintGenerator.GetMorganGenerator`. Use them as bit vectors or for similarity analysis.

- 3D Descriptor Calculation:

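- Conformer Generation: Add explicit hydrogens (`Chem.AddHs`), then use RDKit's `EmbedMolecule` (ETKDG method) to generate a 3D conformation for each molecule.
- Geometry Optimization: Perform a basic MMFF94 force field minimization using `MMFFOptimizeMolecule` to relax each conformation toward a low-energy geometry.
- 3D Descriptor Computation: Compute 3D-MoRSE or WHIM descriptors from the optimized 3D coordinates, e.g., with RDKit's `rdMolDescriptors.CalcMORSE` and `rdMolDescriptors.CalcWHIM` or a commercial package such as MOE. Open3DALIGN is primarily useful for aligning molecules before alignment-dependent 3D-QSAR methods.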

- Descriptor Pool Assembly & Preprocessing: Concatenate all descriptor vectors. Perform feature preprocessing: remove near-zero variance descriptors, handle missing values (impute or remove), and scale data (e.g., StandardScaler) prior to model building.
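The 3D branch of this protocol can be sketched entirely in RDKit: ETKDG embedding, MMFF94 minimization, then WHIM and 3D-MoRSE vectors. Using `CalcWHIM`/`CalcMORSE` in place of an external tool is a substitution made for this sketch; `descriptors_3d` is a hypothetical helper, and a single conformer per molecule is an assumption.

```python
import numpy as np
from rdkit import Chem
from rdkit.Chem import AllChem, rdMolDescriptors


def descriptors_3d(smiles: str, seed: int = 42) -> np.ndarray:
    """WHIM + 3D-MoRSE descriptors from one MMFF-optimized ETKDG conformer."""
    mol = Chem.AddHs(Chem.MolFromSmiles(smiles))  # explicit Hs for a sensible 3D geometry
    params = AllChem.ETKDGv3()
    params.randomSeed = seed                      # reproducible embedding
    if AllChem.EmbedMolecule(mol, params) != 0:
        raise RuntimeError(f"3D embedding failed for {smiles}")
    AllChem.MMFFOptimizeMolecule(mol)             # MMFF94 minimization
    whim = rdMolDescriptors.CalcWHIM(mol)
    morse = rdMolDescriptors.CalcMORSE(mol)
    return np.array(list(whim) + list(morse), dtype=float)


print(descriptors_3d("CCC(C)O").shape)
```

3D descriptors depend on the chosen conformer, so fixing the random seed (or averaging over several conformers) helps keep them reproducible.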

Data Analysis: Perform correlation analysis to identify redundant descriptors. Use methods like Random Forest or PLS to build a model linking the multi-dimensional descriptor matrix to the biological activity (pIC50).
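The correlation analysis can be sketched with pandas: flag zero-variance columns, then scan the upper triangle of the absolute correlation matrix and drop the later column of each pair above the threshold. `redundant_columns` is a hypothetical helper, and it should be run on the training set only.

```python
import numpy as np
import pandas as pd


def redundant_columns(train_df: pd.DataFrame, threshold: float = 0.95) -> list:
    """Columns to drop: zero-variance ones, then one of each pair with |r| > threshold."""
    constant = [c for c in train_df.columns if train_df[c].nunique(dropna=True) <= 1]
    corr = train_df.drop(columns=constant).corr().abs()
    # Keep only the upper triangle so each pair is inspected once.
    upper = corr.where(np.triu(np.ones(corr.shape, dtype=bool), k=1))
    correlated = [c for c in upper.columns if (upper[c] > threshold).any()]
    return constant + correlated
```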

Protocol 2: Comparative Analysis of ECFP vs. 3D Shape Descriptors in Virtual Screening

Objective: To evaluate the enrichment performance of 2D (ECFP) versus 3D shape descriptors in a retrospective virtual screening workflow.

Materials:

- Dataset: DUD-E or an analogous benchmark dataset containing known actives and decoys for a specific target (e.g., HIV protease).

- Software: RDKit for ECFP, ROCS (OpenEye) or a shape-alignment tool for 3D screening.

Methodology:

- Query Preparation: Select one known high-potency ligand as the query molecule. Generate its 3D conformation and optimize.

- 2D Similarity Screening:

- Generate ECFP4 bit vectors for the query and the screening database (actives + decoys).

- Calculate Tanimoto similarity scores for all database molecules against the query.

- Rank the database in descending order of Tanimoto similarity.

- 3D Shape Similarity Screening:

- For the same database, generate a single low-energy 3D conformer per molecule.

- Using ROCS, perform a shape-based overlay against the query molecule and calculate the TanimotoCombo score (shape Tanimoto plus color Tanimoto).

- Rank the database in descending order of this score.

- Performance Evaluation:

- Compute the enrichment factor (EF) at 1% and 10% of the screened database for both methods.

- Generate ROC curves and calculate the Area Under the Curve (AUC) for both ranking lists.
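The evaluation step can be sketched as below: EF at a fraction is the hit rate among the top-ranked compounds divided by the overall hit rate, and the AUC comes from scikit-learn's `roc_auc_score`. The scores may be ECFP Tanimoto values or ROCS TanimotoCombo values; helper names and the rounding of the top-fraction size are assumptions.

```python
import numpy as np
from sklearn.metrics import roc_auc_score


def enrichment_factor(scores, is_active, fraction: float) -> float:
    """EF at `fraction`: hit rate in the top-ranked fraction divided by the overall hit rate."""
    scores = np.asarray(scores, dtype=float)
    is_active = np.asarray(is_active, dtype=bool)
    n_top = max(1, int(round(fraction * len(scores))))
    top = np.argsort(-scores, kind="stable")[:n_top]  # highest similarity first
    return float(is_active[top].mean() / is_active.mean())


def evaluate_ranking(scores, is_active) -> dict:
    return {
        "EF1%": enrichment_factor(scores, is_active, 0.01),
        "EF10%": enrichment_factor(scores, is_active, 0.10),
        "AUC": float(roc_auc_score(is_active, scores)),
    }
```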

Table 2: Representative Virtual Screening Enrichment Data (Hypothetical)

| Method | Query Molecule | EF (1%) | EF (10%) | AUC | Early Enrichment Advantage |
|---|---|---|---|---|---|
| ECFP4 (2D) | Ritonavir | 15.2 | 5.8 | 0.75 | Better for scaffolds similar in connectivity. |
| ROCS Shape (3D) | Ritonavir | 28.5 | 8.1 | 0.82 | Superior for identifying actives with different topology but similar steric profile. |

Figure: Workflow for Integrating 1D, 2D, and 3D Descriptors in QSAR

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Molecular Descriptor Calculation and QSAR

| Item | Function in Research | Example/Tool |
|---|---|---|
| Cheminformatics Library | Core programming toolkit for structure manipulation and descriptor calculation. | RDKit (Open-source), CDK (Chemistry Development Kit) |
| Descriptor Calculation Software | Integrated platforms for batch computation of diverse descriptor sets. | MOE, Dragon, PaDEL-Descriptor |
| 3D Conformer Generator | Produces realistic 3D molecular geometries required for 3D descriptors. | ETKDG Method (in RDKit), OMEGA (OpenEye), ConfGen (Schrödinger) |
| Molecular Force Field | Optimizes 3D conformer geometry by minimizing steric and electronic strain. | MMFF94, UFF, GAFF |
| Descriptor Analysis & Modeling Suite | For statistical analysis, machine learning, and model validation. | scikit-learn (Python), R, KNIME with chemistry nodes |
| Benchmark Datasets | Curated datasets with actives/decoys for validating virtual screening methods. | DUD-E, MUV, ChEMBL bioactivity data |

Quantitative Structure-Activity Relationship (QSAR) modeling is a cornerstone of modern computational chemistry and drug discovery. A persistent debate within this research area concerns the optimal molecular representation for predictive modeling: should one use engineered molecular descriptors or hashed substructure fingerprints such as Extended Connectivity Fingerprints (ECFPs)? This Application Note argues, within the broader thesis of advancing robust QSAR methodologies, that integrating ECFPs with traditional descriptors provides a synergistic and more comprehensive encoding of chemical information than either approach alone. The combination pairs the explicit, interpretable chemical knowledge captured by descriptors with the exhaustive substructure enumeration of ECFPs, from which a machine learning model can extract activity-relevant patterns.

Quantitative Comparison: ECFPs vs. Descriptors vs. Combined Models

Table 1: Comparative Performance of Different Molecular Representations in QSAR Modeling

| Dataset / Endpoint | Model (ECFP Only) | Model (Descriptors Only) | Model (ECFP + Descriptors) | Key Metric | Reference/Context |
|---|---|---|---|---|---|
| Lipophilicity (logP) | RMSE: 0.68 | RMSE: 0.61 | RMSE: 0.55 | Root Mean Square Error (RMSE) | Benchmarking on public datasets (e.g., MoleculeNet). |
| hERG Inhibition | AUC-ROC: 0.82 | AUC-ROC: 0.79 | AUC-ROC: 0.87 | Area Under ROC Curve | Toxicity prediction for cardiotoxicity risk. |
| Aqueous Solubility | R²: 0.75 | R²: 0.70 | R²: 0.83 | Coefficient of Determination | Critical for ADMET profiling. |
| Protein Binding Affinity | MAE: 1.42 pK | MAE: 1.38 pK | MAE: 1.21 pK | Mean Absolute Error | BindingDB/Ki dataset predictions. |
| Number of Features | 1024-2048 bits | Typically 200-500 | ~1200-2500 | Feature Count | Combines high-dimensional and curated spaces. |

Application Notes on Synergistic Information Capture

- Complementary Information: Models built on ECFPs excel at capturing complex, non-linear substructure-activity relationships, but the fingerprints themselves are high-dimensional, sparse, and can be noisy (e.g., through bit collisions introduced by folding). Traditional descriptors (e.g., topological, electronic, steric) provide direct, human-interpretable insights into fundamental physicochemical properties.

- Robustness and Interpretability: A hybrid model mitigates the "black box" nature of pure ECFP models. Key contributing descriptors can be analyzed for mechanistic insights, while the ECFP component captures latent structural motifs.

- Applicability Domain: The combination allows for a more nuanced definition of the model's applicability domain by assessing both structural similarity (via ECFP Tanimoto) and property space coverage (via descriptor ranges).
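A two-part applicability-domain check along these lines can be sketched as follows: the maximum ECFP4-like Tanimoto similarity to the training set, plus a bounding-box test on descriptor ranges. The 0.3 similarity cut-off and the `in_applicability_domain` helper are hypothetical choices, not established thresholds.

```python
import numpy as np
from rdkit import Chem, DataStructs
from rdkit.Chem import rdFingerprintGenerator

MORGAN_GEN = rdFingerprintGenerator.GetMorganGenerator(radius=2, fpSize=2048)  # ECFP4-like


def in_applicability_domain(query_smiles, train_smiles, query_desc, train_desc, sim_cutoff=0.3):
    """Return (inside_domain, max_tanimoto) for one query compound."""
    train_fps = [MORGAN_GEN.GetFingerprint(Chem.MolFromSmiles(s)) for s in train_smiles]
    query_fp = MORGAN_GEN.GetFingerprint(Chem.MolFromSmiles(query_smiles))
    # Structural criterion: nearest-neighbour similarity to the training set.
    max_sim = max(DataStructs.BulkTanimotoSimilarity(query_fp, train_fps))
    # Property criterion: every descriptor inside the training-set range.
    train_desc = np.asarray(train_desc, dtype=float)
    query_desc = np.asarray(query_desc, dtype=float)
    low, high = train_desc.min(axis=0), train_desc.max(axis=0)
    in_range = bool(np.all((query_desc >= low) & (query_desc <= high)))
    return (max_sim >= sim_cutoff) and in_range, max_sim
```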

Experimental Protocols

Protocol 1: Data Preparation and Feature Generation

Objective: To generate a unified feature matrix combining ECFPs and molecular descriptors from a SMILES list.

- Input: A `.csv` file containing compound identifiers (`ID`), SMILES strings (`SMILES`), and the target property/activity (`Activity`).
- ECFP Generation (using RDKit):
- Standardize molecules from SMILES (neutralization, salt stripping).
- For each molecule, generate ECFP4 fingerprints with a radius of 2.
- Use `rdkit.Chem.rdMolDescriptors.GetMorganFingerprintAsBitVect(mol, radius=2, nBits=1024)`.
- Output as a binary feature vector (e.g., 1024 columns).

- Descriptor Calculation (using Mordred or PaDEL):
- Calculate a comprehensive set of 2D and 3D descriptors (3D descriptors require 3D coordinates in the input structures).
- Example Command (PaDEL-Descriptor): `java -jar PaDEL-Descriptor.jar -dir ./input_smiles -file ./output_descriptors.csv -2d -3d`
- Remove constant and near-constant descriptors (variance threshold < 0.01).

- Feature Concatenation & Preprocessing:
- Horizontally concatenate the ECFP bit vector and the descriptor table using the compound `ID` as the key.
- Impute missing descriptor values (e.g., with the training-set median) or remove problematic features.
- Apply standardization (Z-score normalization) to continuous descriptors only, fitting the scaler on the training split defined in Protocol 2. ECFP bits remain binary.
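The concatenation and preprocessing steps can be sketched with pandas and scikit-learn's `ColumnTransformer`: descriptors are median-imputed and z-scored, while ECFP bits pass through unchanged. Column names such as `bit_0` and `MolWt`, and the helper names, are hypothetical.

```python
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler


def join_on_id(ecfp_df: pd.DataFrame, desc_df: pd.DataFrame) -> pd.DataFrame:
    """Inner-join fingerprint and descriptor tables on the compound ID column."""
    return ecfp_df.merge(desc_df, on="ID", how="inner", validate="one_to_one").set_index("ID")


def make_preprocessor(ecfp_cols, desc_cols) -> ColumnTransformer:
    """Median-impute and z-score the descriptors; pass the binary ECFP bits through."""
    descriptor_pipe = Pipeline([
        ("impute", SimpleImputer(strategy="median")),
        ("scale", StandardScaler()),
    ])
    return ColumnTransformer([
        ("desc", descriptor_pipe, list(desc_cols)),
        ("ecfp", "passthrough", list(ecfp_cols)),
    ])


# Toy tables with hypothetical column names.
ecfp = pd.DataFrame({"ID": [1, 2, 3], "bit_0": [0, 1, 1], "bit_1": [1, 0, 1]})
desc = pd.DataFrame({"ID": [1, 2, 3], "MolWt": [100.0, None, 300.0]})
X = join_on_id(ecfp, desc)
pre = make_preprocessor(["bit_0", "bit_1"], ["MolWt"])
X_train_t = pre.fit_transform(X)  # in practice, fit on the training rows only
print(X_train_t)
```

Keeping imputation and scaling inside one fitted transformer makes it straightforward to save it alongside the model and apply it unchanged to validation, test, and screening data.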

Protocol 2: Building and Validating the Hybrid QSAR Model

Objective: To train, optimize, and evaluate a machine learning model using the hybrid feature set.

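- Data Splitting: Perform a stratified split (70/15/15) into Training, Validation, and Hold-out Test sets, stratifying on the class label for classification endpoints or on binned activity values for regression endpoints.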

- Feature Selection (on Training Set only):

- Use methods like Variance Threshold, removal of highly correlated features (|r| > 0.95), and univariate feature selection (SelectKBest) to reduce dimensionality and avoid overfitting.

- Model Training & Hyperparameter Optimization:

- Algorithm: Gradient Boosting Machines (e.g., XGBoost, LightGBM) or Random Forest are recommended for their ability to handle mixed data types.

- Framework: Use `scikit-learn` or native libraries.
- Optimize key hyperparameters (e.g., `n_estimators`, `max_depth`, `learning_rate`) via Bayesian Optimization or Grid Search on the Validation set.

- Model Evaluation:

- Predict on the untouched Hold-out Test set.

- Report key metrics: R², RMSE, MAE for regression; AUC-ROC, Accuracy, F1-score for classification.

- Perform Y-randomization (scrambling the target variable) to confirm the model is not fitting to chance.
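For a regression endpoint, the stratified 70/15/15 split from the Data Splitting step can be sketched by stratifying on quantile bins of the activity. The five-bin choice and the helper name are assumptions; for classification, the class labels would be passed to `stratify` directly.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split


def stratified_split_70_15_15(y, n_bins: int = 5, seed: int = 0):
    """Return train/validation/test indices, stratified on quantile bins of a continuous y."""
    y = np.asarray(y, dtype=float)
    bins = pd.qcut(y, q=n_bins, labels=False, duplicates="drop")  # bin label per compound
    idx = np.arange(len(y))
    train_idx, rest_idx = train_test_split(idx, test_size=0.30, stratify=bins, random_state=seed)
    # Split the remaining 30% in half, keeping the same bin proportions.
    val_idx, test_idx = train_test_split(
        rest_idx, test_size=0.50, stratify=bins[rest_idx], random_state=seed
    )
    return train_idx, val_idx, test_idx
```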

Protocol 3: Model Interpretation and Analysis

Objective: To extract chemical insights from the trained hybrid model.

- Feature Importance: Use the model's intrinsic feature importance (gain for GBM; mean decrease in impurity for RF, i.e., Gini for classifiers and variance reduction for regressors) to rank ECFP bits and descriptors.

- Descriptor Analysis: Identify the top 10 most important physicochemical descriptors and interpret their directionality (positive/negative correlation with activity).

- ECFP Bit Analysis: Decode the top important ECFP bits back to their originating molecular substructures using the `bitInfo` dictionary recorded during fingerprint generation, together with `Chem.FindAtomEnvironmentOfRadiusN` (or `Draw.DrawMorganBit` for visualization).
- SHAP Analysis: Apply SHapley Additive exPlanations (SHAP) to quantify the contribution of individual features for specific predictions, revealing local interpretability.

Figure: Workflow for Hybrid ECFP-Descriptor QSAR Model

Table 2: Essential Tools for Hybrid Feature QSAR Modeling

| Tool / Resource | Category | Primary Function & Application Note |
|---|---|---|
| RDKit | Open-source Cheminformatics Toolkit | Core library for reading SMILES, generating ECFPs, basic descriptor calculation, and substructure decoding. Essential for Protocol 1 & 3. |
| Mordred / PaDEL-Descriptor | Molecular Descriptor Calculator | Calculates a vast array (500-3000+) of 1D-3D molecular descriptors from structure. Used for comprehensive descriptor generation in Protocol 1. |
| scikit-learn | Machine Learning Library | Provides data splitting, preprocessing (StandardScaler), feature selection modules, and baseline ML algorithms for model training (Protocol 2). |
| XGBoost / LightGBM | Gradient Boosting Framework | High-performance tree-based algorithms ideal for modeling complex, non-linear relationships in high-dimensional hybrid feature spaces (Protocol 2). |
| SHAP (SHapley Additive exPlanations) | Model Interpretation Library | Unifies feature importance across global and local scales, explaining output by attributing contributions to each input feature (Protocol 3). |
| Jupyter Notebook / Python Scripts | Development Environment | Flexible environment for integrating all tools, performing exploratory data analysis, and documenting the reproducible workflow. |
| Public QSAR Datasets (e.g., ChEMBL, MoleculeNet) | Data Source | Provide standardized, curated chemical structures and bioactivity data for benchmarking and method development. |

Within the broader thesis on developing robust Quantitative Structure-Activity Relationship (QSAR) models using Extended Connectivity Fingerprints (ECFP) and molecular descriptors, the initial data preparation phase is paramount. The predictive power and regulatory acceptance (e.g., OECD Principle 3) of any model are intrinsically linked to the quality and consistency of the underlying chemical and biological data. This document details the essential Application Notes and Protocols for the foundational steps: curating chemical data, standardizing molecular structures, and preparing activity data, forming the critical prelude to featurization with ECFP and descriptors.

Application Notes & Protocols

Data Curation: Sourcing and Aggregation

Application Note: Data curation involves the systematic compilation and preliminary cleaning of chemical structures and associated biological activities from diverse sources (e.g., ChEMBL, PubChem, in-house databases). Inconsistencies in data provenance, duplicate entries, and ambiguous activity measures are major sources of model error.

Protocol: Primary Data Aggregation and Deduplication

- Source Identification: Query multiple databases using a consistent set of target identifiers (e.g., UniProt ID) or compound identifiers (e.g., SMILES, InChIKey).

- Data Download: Extract fields: Canonical SMILES, Standardized Activity Value (e.g., IC50, Ki), Activity Unit, Relation (e.g., '=', '<', '>'), Target Organism, and PubMed ID.

- Merge Datasets: Combine data from all sources into a single table.

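- Deduplication by InChIKey: Generate standard InChIKeys from SMILES. Group entries by the first InChIKey block (the 14-character connectivity hash) together with the target identifier, and collapse exact duplicates. Because the first block ignores stereochemistry, this merges stereoisomers; group on the full InChIKey instead if stereochemistry matters for the endpoint.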

- Activity Conflict Resolution: For remaining duplicates (same compound-target pair with differing values), apply a pre-defined rule:
  - Rule 1: Prefer data from the source with the higher curation trust score (e.g., ChEMBL over a small-scale study).
  - Rule 2: If from the same source, calculate the geometric mean of numeric '=' relation values.
  - Rule 3: Flag entries with '>' or '<' relations for separate treatment.

- Output: A deduplicated, merged dataset table.
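The deduplication and conflict-resolution rules can be sketched in pure Python. This is a minimal illustration under stated assumptions: each record is already a tuple of (InChIKey, target ID, source, relation, value in nM), with the InChIKey generated beforehand (for example with RDKit's `Chem.MolToInchiKey`); the trust scores, placeholder keys, target name, and values are hypothetical; and when several equally trusted sources remain, the sketch pools them into the Rule 2 geometric mean, which is only one possible reading of the rules.

```python
import math
from collections import defaultdict

# Hypothetical trust scores for Rule 1; adapt to your own sources.
SOURCE_TRUST = {"ChEMBL": 2, "small_study": 1}


def resolve_conflicts(records):
    """Deduplicate (inchikey, target, source, relation, value_nM) records.

    Returns (resolved, flagged): resolved maps (connectivity_block, target)
    to one consensus value; flagged collects censored ('<' / '>') entries.
    """
    groups = defaultdict(list)
    for inchikey, target, source, relation, value in records:
        # Group on the first InChIKey block (connectivity only), as in the protocol.
        groups[(inchikey.split("-")[0], target)].append((source, relation, value))

    resolved, flagged = {}, []
    for pair, entries in groups.items():
        # Rule 3: set censored values aside for separate treatment.
        flagged.extend((pair, e) for e in entries if e[1] in ("<", ">"))
        exact = [e for e in entries if e[1] == "="]
        if not exact:
            continue
        # Rule 1: keep only entries from the most trusted source(s) present.
        top = max(SOURCE_TRUST.get(src, 0) for src, _, _ in exact)
        values = [v for src, _, v in exact if SOURCE_TRUST.get(src, 0) == top]
        # Rule 2: geometric mean of the remaining '=' values.
        resolved[pair] = math.exp(sum(math.log(v) for v in values) / len(values))
    return resolved, flagged


# Placeholder keys: the first two share a connectivity block (e.g. stereoisomers).
records = [
    ("AAAAAAAAAAAAAA-BBBBBBBBBB-N", "TARGET_1", "ChEMBL", "=", 10.0),
    ("AAAAAAAAAAAAAA-CCCCCCCCCC-N", "TARGET_1", "ChEMBL", "=", 40.0),
    ("AAAAAAAAAAAAAA-BBBBBBBBBB-N", "TARGET_1", "small_study", "=", 500.0),
    ("DDDDDDDDDDDDDD-BBBBBBBBBB-N", "TARGET_1", "ChEMBL", ">", 10000.0),
]
resolved, flagged = resolve_conflicts(records)
print(resolved, len(flagged))
```

The lower-trust 500 nM value is discarded by Rule 1, so the consensus for the first pair is the geometric mean of the two ChEMBL values only.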

Table 1: Hypothetical Data Curation Output Summary

| Source Database | Initial Entries | Post-Deduplication Entries | Conflict Resolutions Applied |
|---|---|---|---|
| ChEMBL 33 | 12,450 | 9,850 | 1,245 (Rule 1) |
| PubChem AID 1851 | 5,220 | 4,110 | 312 (Rule 2) |
| In-house Assays | 850 | 820 | 15 (Rule 2) |
| Total Unique Cmpd-Target Pairs | - | 12,205 | 1,572 |

Chemical Standardization

Application Note: Chemical standardization ensures all molecular structures are represented consistently prior to descriptor calculation or fingerprint generation. This step normalizes tautomeric, charged, and isomeric forms, removes artifacts, and is critical for meaningful structural comparison.

Protocol: Standardization Workflow using RDKit

- Input: List of canonical SMILES from the curated dataset.

- Sanitization: Ensure valency rules are correct; reject structures that fail.

- Fragment Removal and Neutralization: Keep the parent (largest organic) fragment to strip counter-ions and solvents, and neutralize charged carboxylic acids, amines, and phosphate groups unless a specific salt or charge form is required.

- Tautomer Canonicalization: Apply a standardized set of transformation rules (e.g., MolVS's `TautomerCanonicalizer` or RDKit's `rdMolStandardize.TautomerEnumerator().Canonicalize()`) to pick a consistent representative tautomer.

- Stereochemistry: Where stereochemistry was not experimentally defined, remove the stereo annotations; otherwise, keep the specified stereocenters.

- Aromaticity: Apply a consistent aromaticity model (e.g., RDKit's default).

- Descriptor-Ready Output: Generate standardized SMILES and Sanitized Mol objects for the next step.

Table 2: Impact of Standardization on a Sample Dataset

| Standardization Step | Compounds Affected (%) | Common Change Example |
|---|---|---|
| Neutralization | ~25% | CC(=O)[O-] → CC(=O)O |
| Tautomer Canonicalization | ~15% | Oc1ccccn1 and O=c1cccc[nH]1 (2-hydroxypyridine / 2-pyridone) mapped to one representative form |
| Stereochemistry Check | ~8% | C[C@H](O)CC (specified stereocenter kept) |
| Any step (total) | ~35% (compounds changed by at least one step) | - |

Diagram Title: Chemical Standardization Workflow

Activity Data Preparation

Application Note: Activity data (e.g., IC50, Ki) must be converted to a uniform scale (typically pIC50 = -log10(IC50 in Molar)) and categorized for classification tasks. Censored data ('>' or '<') requires specific handling to avoid bias.

Protocol: Activity Value Transformation and Binning

- Unit Harmonization: Convert all activity values to molar units (M).

- Numeric Transformation: Calculate pActivity as pX = -log10(X), where X is the molar concentration.

- Censored Data Handling:
  - For '>X' values (e.g., >10 µM), assign a value slightly below the transformed limit (e.g., if X = 10 µM = 1e-5 M, pX = 5.0; assign pActivity = 4.95).
  - For '<X' values (e.g., <1 nM), assign a value slightly above the transformed limit.

- Threshold Definition for Classification: Define an activity threshold based on biological relevance (e.g., pIC50 > 6.0 for "Active", ≤ 6.0 for "Inactive").

- Dataset Splitting: Perform stratified splitting (by activity class) into Training (70-80%), Validation (10-15%), and Hold-out Test (10-15%) sets to ensure class distribution is preserved.
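The unit harmonization, pActivity transformation, censored-value shift, and threshold classification can be sketched as below, reproducing the four rows of Table 3. The unit dictionary and the 0.05 log-unit offset are illustrative assumptions taken from the examples above; the offset is a heuristic, and excluding censored points or using censored-aware models are possible alternatives.

```python
import math

# Conversion factors to molar units (M); extend for other units as needed.
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "µM": 1e-6, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}


def to_pactivity(value, unit, relation="=", offset=0.05):
    """Return pX = -log10(X in M), shifting censored values by `offset`.

    '>X' (less potent than the limit) is moved below the limit's pX;
    '<X' (more potent than the limit) is moved above it.
    """
    p = -math.log10(value * UNIT_TO_MOLAR[unit])
    if relation == ">":
        p -= offset
    elif relation == "<":
        p += offset
    return round(p, 2)


def assign_class(p_value, threshold=6.0):
    """Binary label using the example cut-off (pIC50 > 6.0 = Active)."""
    return "Active" if p_value > threshold else "Inactive"


# The four raw entries of Table 3.
for value, unit, relation in [(0.005, "µM", "="), (250, "nM", "="), (10, "µM", ">"), (1, "nM", "<")]:
    p = to_pactivity(value, unit, relation)
    print(f"{relation} {value} {unit}: pIC50 = {p:.2f} ({assign_class(p)})")
```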

Table 3: Activity Data Preparation Example

| Raw Data (IC50) | Relation | Value (M) | pIC50 (Processed) | Assigned Class (Active if pIC50 > 6.0, i.e., IC50 < 1 µM) |
|---|---|---|---|---|
| 0.005 µM | = | 5.00E-09 | 8.30 | Active |
| 250 nM | = | 2.50E-07 | 6.60 | Active |
| > 10 µM | > | 1.00E-05 | 4.95* | Inactive |
| < 1 nM | < | 1.00E-09 | 9.05* | Active |

\* Assigned values for censored data.

Diagram Title: Activity Data Preparation Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools for Pre-Modeling Data Preparation

| Tool / Resource | Type | Primary Function in Pre-Modeling |
|---|---|---|
| RDKit | Open-Source Cheminformatics Library | Core engine for chemical standardization, SMILES parsing, descriptor calculation, and fingerprint (ECFP) generation. |
| MolVS (Molecule Validation and Standardization) | Python Library | Provides a standardized set of rules for tautomerization, neutralization, and fragment removal. Often used with RDKit. |
| ChEMBL Database | Public Bioactivity Database | Primary source for curated, target-associated bioactivity data with standardized units and compound structures. |
| PubChem | Public Chemical Database | Source for additional bioassay data (AIDs) and compound information, requiring careful curation. |
| KNIME or Pipeline Pilot | Workflow Automation Platforms | Visual environment for building reproducible, documented data curation and standardization pipelines. |
| Python (Pandas, NumPy) | Programming Language & Libraries | Essential for data manipulation, table operations, and implementing custom curation logic. |
| InChIKey | IUPAC Standard Identifier | Provides a nearly unique hash for molecular structures, critical for reliable deduplication. |
| Jupyter Notebook | Interactive Computing Environment | Ideal for documenting, sharing, and executing the stepwise pre-modeling protocols interactively. |

From Theory to Practice: A Step-by-Step Workflow for Building Your Hybrid QSAR Model

Application Notes

Within the framework of QSAR modeling for drug discovery, the integration of cheminformatics and machine learning (ML) toolkits is critical for constructing robust predictive models from molecular structures. The workflow typically involves generating molecular representations—such as Extended-Connectivity Fingerprints (ECFP) and quantitative molecular descriptors—and feeding these features into statistical or ML algorithms to predict biological activity or physicochemical properties. The synergy between specialized chemical informatics tools (RDKit, PaDEL) and general-purpose ML libraries (scikit-learn, DeepChem) forms the backbone of modern computational chemistry research.

- RDKit is an open-source cheminformatics library for Python and C++. It excels at in-memory manipulation of chemical structures, high-quality descriptor calculation, and substructure searching. Its seamless integration with Python's scientific stack makes it ideal for building custom analysis pipelines.

- PaDEL-Descriptor is a Java-based program offering a large, pre-packaged suite of 1D, 2D, and 3D molecular descriptors and fingerprints. Version 2.21 calculates 1,875 descriptors (1,444 1D/2D and 431 3D) and 12 types of fingerprints, which makes it valuable for high-throughput batch calculation from file inputs, complementing RDKit's programmatic approach.

- scikit-learn is the premier Python library for classical ML. It provides efficient, user-friendly implementations of a wide array of algorithms essential for QSAR, including feature selection, data preprocessing (standardization), model training (Random Forest, SVM, etc.), and rigorous validation (cross-validation, metrics).

- DeepChem is a Python library specifically designed for deep learning in drug discovery, chemistry, and biology. It facilitates the creation of complex neural network architectures on molecular data, supports graph-based models directly from structures, and offers tools for handling datasets like Tox21.

Table 1: Core Toolkit Comparison for QSAR Modeling

| Toolkit | Primary Language | Core Strength in QSAR | Typical Output for Modeling | License |
|---|---|---|---|---|
| RDKit | Python/C++ | In-memory molecule manipulation, descriptor/fingerprint calculation, substructure filters. | ECFP fingerprints, topological descriptors, 3D coordinates. | BSD 3-Clause |
| PaDEL-Descriptor | Java | High-throughput batch calculation of a comprehensive descriptor/fingerprint set. | 1D/2D descriptors, PubChem fingerprints, 2D atom pairs. | Freely available for research |
| scikit-learn | Python | Classical ML algorithms, pipeline construction, model evaluation. | Trained regression/classification models, feature importance scores. | BSD 3-Clause |
| DeepChem | Python | Deep learning on molecular graphs and datasets, hyperparameter tuning. | Trained graph neural networks, multitask models. | MIT |

Table 2: Key Molecular Representations and Their Calculation Sources

| Representation Type | Example | RDKit | PaDEL | Typical Use in QSAR |
|---|---|---|---|---|
| Fingerprints (Structural) | ECFP4, MACCS Keys | Yes | MACCS yes; ECFP is not among its 12 fingerprint types | Captures molecular substructure patterns. |
| 2D Descriptors | Molecular Weight, LogP, TPSA | Yes (~200) | Yes (1,444 1D/2D) | Models ADME/Tox properties. |
| 3D Descriptors | PMI, Radius of Gyration | Requires conformer generation | Yes (from provided 3D structures) | Encodes molecular shape and size. |

Experimental Protocols

Protocol 1: Generating ECFP4 Fingerprints and 2D Descriptors using RDKit

Objective: To convert a set of SMILES strings into numerical features suitable for ML.

- Input: A `.csv` file named `compounds.csv` with columns `"SMILES"` and `"Activity"`.

- Environment Setup: Install RDKit (`conda install -c conda-forge rdkit`) and the required Python libraries (pandas, numpy).

- Data Loading & Processing:

- Feature Generation:

Protocol 2: Batch Descriptor Calculation using PaDEL-Descriptor

Objective: To compute a comprehensive set of molecular descriptors from a structure file.

- Input Preparation: Prepare an MDL SDfile (`compounds.sdf`) containing the 2D or 3D structures of your compounds. Ensure structures are valid.

- Tool Download: Download PaDEL-Descriptor (v2.21) from its official repository.

- Command Line Execution:
  - `-Xmx2G`: sets the maximum Java heap memory (a JVM option).
  - `-2d` / `-3d`: calculate 2D and 3D descriptors (3D descriptors require 3D coordinates in the input).
  - `-fingerprints`: calculates the included fingerprints.

- Output: The `descriptors.csv` file will contain compound IDs and calculated values. Use a script to remove constant or near-constant variables and merge with activity data.
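The post-processing script mentioned in the Output step might look like the following pure-Python sketch. It assumes the descriptor CSV has a first column named `Name` holding compound IDs (check this against your own file), treats any empty cell as a failed calculation, and drops columns whose most frequent value covers more than 95% of compounds; the cut-off and the toy column names are hypothetical.

```python
import csv
import io
from collections import Counter


def clean_and_merge(descriptor_csv, activity, max_top_share=0.95):
    """Filter a descriptor table and join it to activity data.

    descriptor_csv: CSV text whose 'Name' column holds compound IDs.
    activity: dict mapping compound ID -> activity value (e.g. pIC50).
    A column is dropped if any cell is empty or if its most common value
    covers more than `max_top_share` of compounds (constant / near-constant).
    """
    rows = list(csv.DictReader(io.StringIO(descriptor_csv)))
    keep = []
    for col in rows[0]:
        if col == "Name":
            continue
        values = [row[col].strip() for row in rows]
        if "" in values:
            continue  # at least one failed / missing value
        top_count = Counter(values).most_common(1)[0][1]
        if top_count / len(values) > max_top_share:
            continue  # constant or near-constant column
        keep.append(col)
    merged = [
        {"Name": row["Name"], **{c: float(row[c]) for c in keep}, "Activity": activity[row["Name"]]}
        for row in rows
        if row["Name"] in activity  # inner join: compounds without activity are dropped
    ]
    return keep, merged


# Hypothetical toy output with one constant and one incomplete column.
toy_csv = "Name,nAtom,ALogP,Const,Gappy\nmol1,9,1.2,0,5\nmol2,12,2.5,0,\nmol3,7,0.3,0,3\n"
kept, table = clean_and_merge(toy_csv, {"mol1": 6.1, "mol3": 7.4})
print(kept, len(table))
```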

Protocol 3: Building a Random Forest QSAR Model with scikit-learn

Objective: To train and validate a predictive QSAR model using ECFP features.

- Input: `qsar_features.csv` from Protocol 1 (features + `"Activity"` column).

- Data Preprocessing:

- Model Training & Validation:
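The training and validation steps can be sketched with scikit-learn as follows. A synthetic binary matrix stands in for `qsar_features.csv`, and the forest size, split ratio, and random seeds are illustrative assumptions rather than tuned values. The sketch reports 5-fold cross-validation on the training set, then a single evaluation on the 20% hold-out set.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.model_selection import cross_val_score, train_test_split

# Synthetic stand-in for qsar_features.csv (hypothetical): 300 compounds x 256 bits.
# With real data: df = pd.read_csv("qsar_features.csv")
#                 X, y = df.drop(columns="Activity").values, df["Activity"].values
rng = np.random.default_rng(0)
X = rng.integers(0, 2, size=(300, 256))
y = 5.0 + 1.5 * X[:, 0] + 1.0 * X[:, 1] - 0.8 * X[:, 2] + rng.normal(0, 0.3, 300)

# Hold out 20% of compounds; they are never used for fitting or model selection.
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

model = RandomForestRegressor(n_estimators=100, random_state=42)

# Internal validation: 5-fold CV on the training set only.
cv_r2 = cross_val_score(model, X_train, y_train, cv=5, scoring="r2")
print(f"5-fold CV R2 (train): {cv_r2.mean():.2f} +/- {cv_r2.std():.2f}")

# External validation: refit on all training data, score the hold-out set once.
model.fit(X_train, y_train)
y_pred = model.predict(X_test)
test_r2 = r2_score(y_test, y_pred)
test_rmse = np.sqrt(mean_squared_error(y_test, y_pred))
print(f"Hold-out R2 = {test_r2:.2f}, RMSE = {test_rmse:.2f}")
```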

Visualizations

Diagram Title: QSAR Feature Generation and Modeling Workflow

Diagram Title: Software Toolkit Ecosystem for QSAR

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Solution | Function in QSAR Modeling Pipeline |
|---|---|
| Compound Dataset (e.g., ChEMBL) | Source of bioactive molecules with associated experimental measurements (IC50, Ki). Provides the SMILES/SDF structures and activity values for model training. |
| Standardization Script (e.g., using RDKit) | Neutralizes charges, removes salts, generates canonical tautomers, and produces a consistent, clean set of input structures to avoid artifacts. |
| Descriptor/Fingerprint Feature Set | The numerical representation of molecules (e.g., ECFP4 bit vector, topological descriptors). Acts as the mathematical "language" describing chemistry to the ML algorithm. |
| Feature Selection Algorithm (e.g., Variance Threshold, RFE) | Reduces dimensionality, removes noise/irrelevant features, decreases overfitting risk, and improves model interpretability and performance. |
| Train/Test/Validation Split Protocol | Rigorous partitioning of data to assess model generalizability and avoid overfitting. Typically an 80/20 split or nested cross-validation. |
| Model Evaluation Metrics (R², RMSE, MAE for regression; AUC-ROC for classification) | Quantitative measures to judge the predictive accuracy and reliability of the built QSAR model against held-out data. |
| Applicability Domain (AD) Analysis Tool | Determines the chemical space region where the model's predictions are reliable, identifying interpolation vs. extrapolation for new compounds. |

Within the broader thesis on QSAR modeling, the integration of Extended-Connectivity Fingerprints (ECFPs) and molecular descriptors is a cornerstone for building robust predictive models of biological activity. ECFPs encode circular, atom-centered substructures through an iterative hashing algorithm (the feature-based FCFP variant encodes pharmacophoric atom roles instead), while molecular descriptors quantify specific physicochemical and topological properties. This protocol details the generation of ECFP bits and the curation of a complementary molecular descriptor set to create a comprehensive feature space for machine learning in drug discovery.

Theoretical Background & Current State

ECFPs, particularly the ECFP4 variant (radius = 2), remain a standard choice for structure-activity modeling. Pairing these information-rich fingerprints with interpretable 2D/3D descriptors is common practice, because the two representations capture complementary information. A practical strategy is to generate a high-dimensional ECFP vector and then select a curated, non-redundant set of physicochemical descriptors, which limits overfitting while still capturing key ADME/Tox and binding-related properties.

Protocol 1: Calculating ECFP Bits

Research Reagent & Software Toolkit

| Item | Function/Brief Explanation |
|---|---|
| RDKit (Python) | Open-source cheminformatics library for molecule manipulation and fingerprint calculation. |
| Compound Dataset (SDF/CSV) | Input file containing standardized SMILES strings or Mol structures of the chemical library. |
| Python Scripting Environment | (e.g., Jupyter Notebook) for executing the calculation pipeline. |
| Pandas & NumPy | Libraries for handling resulting feature matrices and dataframes. |

Detailed Methodology

- Molecular Standardization: Load molecules from the source file (e.g., `dataset.sdf`). Apply standardization: neutralize charges, remove solvents, and generate canonical tautomers.

- ECFP Parameter Definition: Set the fingerprint parameters. Standard ECFP4 uses:
  - `radius=2`: includes atom environments up to two bonds from each central atom (diameter 4, hence "ECFP4").
  - `nBits=2048`: the fixed length of the folded bit vector; longer vectors reduce collisions between unrelated substructures hashed to the same bit.
  - `useFeatures=False`: the standard ECFP atom invariants (set to `True` for FCFP).

- Bit Vector Generation: For each molecule, generate the fingerprint with RDKit's `AllChem.GetMorganFingerprintAsBitVect(mol, radius=2, nBits=2048)` (recent RDKit releases recommend the equivalent `rdFingerprintGenerator.GetMorganGenerator(radius=2, fpSize=2048)`). A bit is set to 1 if at least one substructure hashed to that position is present.

- Matrix Assembly: Compile all bit vectors into a Pandas DataFrame (M x 2048), where M is the number of compounds. Columns are named `ECFP_0` to `ECFP_2047`.
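To make the circular hashing idea concrete, the sketch below implements a heavily simplified ECFP-style procedure on a hand-built molecular graph: each iteration rehashes every atom's identifier together with its neighbors' identifiers, so radius 2 covers environments up to two bonds away, and all identifiers are folded into `n_bits` positions. It is an illustration, not RDKit's Morgan implementation: invariants are element symbols only, CRC32 stands in for RDKit's hash, and duplicate environments are not removed, so bit positions will not match RDKit's.

```python
import zlib


def toy_ecfp(atoms, bonds, radius=2, n_bits=2048):
    """Simplified ECFP-style fingerprint for a hand-built molecular graph.

    atoms: initial atom invariants (element symbols in this toy version).
    bonds: (i, j, bond_order) tuples; hydrogens are implicit.
    Returns the set of "on" bit positions after folding modulo n_bits.
    """
    neighbors = {i: [] for i in range(len(atoms))}
    for i, j, order in bonds:
        neighbors[i].append((order, j))
        neighbors[j].append((order, i))

    def stable_hash(obj):
        # CRC32 of the repr is deterministic across runs (unlike hash() on str).
        return zlib.crc32(repr(obj).encode())

    ids = [stable_hash(a) for a in atoms]  # iteration 0: atom invariants
    seen = set(ids)
    for _ in range(radius):
        # New identifier = hash(own id, sorted (bond order, neighbor id) pairs),
        # so each iteration grows the encoded environment by one bond.
        ids = [
            stable_hash((ids[i], sorted((order, ids[j]) for order, j in neighbors[i])))
            for i in range(len(atoms))
        ]
        seen.update(ids)
    return {identifier % n_bits for identifier in seen}


# Ethanol (C-C-O) and methanol (C-O) as toy graphs.
ethanol = toy_ecfp(["C", "C", "O"], [(0, 1, 1), (1, 2, 1)])
methanol = toy_ecfp(["C", "O"], [(0, 1, 1)])
print(sorted(ethanol), sorted(methanol))
```

Because both molecules start from the same element invariants, their iteration-0 bits overlap, while the larger environments around ethanol's terminal carbon give it bits methanol lacks.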

Diagram Title: ECFP Bit Generation Computational Workflow

Protocol 2: Curating Molecular Descriptor Sets

Research Reagent & Software Toolkit

| Item | Function/Brief Explanation |
|---|---|
| RDKit or Mordred | RDKit has built-in descriptors; Mordred offers a more comprehensive set (~1800 descriptors). |
| PaDEL-Descriptor | Alternative Java-based software for calculating descriptors. |
| scikit-learn | For subsequent feature scaling and selection. |
| Correlation Analysis Library | (e.g., SciPy) for calculating Pearson/Spearman correlation. |

Detailed Methodology

- Descriptor Calculation: Use the standardized molecules from Protocol 1. Calculate a broad initial set using Mordred (`Calculator(descriptors, ignore_3D=True).pandas(mols)`) or RDKit's `Descriptors.CalcMolDescriptors(mol)`. This yields roughly 200-1,800 initial descriptors, depending on the tool.

- Data Cleaning: Remove descriptors with:
  - Zero variance across the dataset.
  - Excessive missing values (>20%).

- Redundancy Reduction: Calculate pairwise Pearson correlations between all descriptors. For any pair with |r| > 0.95, remove one of the two, typically keeping the one with the more direct physicochemical interpretation.

- Relevance Selection (Optional but Recommended): Using the training set only, with its biological activity labels, perform univariate feature selection (e.g., mutual information, F-test) or use model-based importance (Random Forest) to retain the top k descriptors most associated with the endpoint. A pragmatic target is 50-150 curated descriptors.

- Final Feature Set Assembly: Horizontally concatenate the curated descriptor DataFrame (M x D) with the ECFP bit DataFrame (M x 2048) to form the complete feature matrix for QSAR modeling.
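The cleaning and redundancy steps can be sketched with NumPy as follows. Two choices here are assumptions rather than part of the protocol: gaps remaining after the 20% missing-value filter are median-imputed so that correlations are defined, and the |r| > 0.95 rule is applied greedily in column order, so descriptors you prefer to keep should come first. The descriptor names and data are synthetic.

```python
import numpy as np


def curate_descriptors(X, names, max_missing=0.2, r_cut=0.95):
    """Drop incomplete, constant, and highly correlated descriptor columns.

    X: (n_compounds, n_descriptors) array with np.nan for failed values.
    Returns the reduced matrix and the names of the kept descriptors.
    """
    X = np.asarray(X, dtype=float)
    missing = np.isnan(X).mean(axis=0)
    variance = np.nanvar(X, axis=0)
    ok = np.where((missing <= max_missing) & (variance > 0))[0]

    # Median-impute the few remaining gaps so correlations are defined.
    Xc = X[:, ok].copy()
    medians = np.nanmedian(Xc, axis=0)
    gap_rows, gap_cols = np.where(np.isnan(Xc))
    Xc[gap_rows, gap_cols] = medians[gap_cols]

    # Greedy redundancy filter: keep a column only if |r| <= r_cut
    # against every column already kept.
    corr = np.abs(np.corrcoef(Xc, rowvar=False))
    kept = []
    for j in range(Xc.shape[1]):
        if all(corr[j, k] <= r_cut for k in kept):
            kept.append(j)
    return Xc[:, kept], [names[ok[j]] for j in kept]


rng = np.random.default_rng(1)
n = 50
mol_wt = rng.normal(350, 60, n)
heavy_atoms = mol_wt / 14 + rng.normal(0, 0.1, n)  # nearly collinear with mol_wt
logp = rng.normal(2.5, 1.0, n)
constant = np.full(n, 3.0)
gappy = rng.normal(0, 1, n)
gappy[:20] = np.nan  # 40% missing
X = np.column_stack([mol_wt, heavy_atoms, logp, constant, gappy])
names = ["MolWt", "HeavyAtomCount", "MolLogP", "Constant", "Gappy"]
X_curated, kept_names = curate_descriptors(X, names)
print(kept_names)
```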

Diagram Title: Molecular Descriptor Curation and Feature Fusion Workflow

Data Presentation: Typical Output Metrics

Table 1: Feature Set Dimensions at Protocol Stages

| Protocol Stage | Typical Number of Features | Data Type | Example Software Output |
|---|---|---|---|
| ECFP Bit Generation (Raw) | 2048 (fixed) | Binary (0/1) | DataFrame shape: (1500, 2048) |
| Molecular Descriptors (Raw) | 200 - 1800 | Continuous/Integer | Mordred DataFrame: (1500, 1826) |
| Post-Cleaning & Correlation Filtering | 300 - 800 | Continuous/Integer | Filtered DataFrame: (1500, ~450) |
| Final Curated Descriptor Set | 50 - 150 | Continuous/Integer | Curated DataFrame: (1500, 112) |
| Final Combined Feature Set | ~2100 - 2200 | Mixed | Final Matrix: (1500, 2160) |

Table 2: Common Curated Molecular Descriptor Categories

| Category | Example Descriptors | Relevance to QSAR |
|---|---|---|
| Lipophilicity | LogP, MLogP, XLogP | Membrane permeability, binding affinity |
| Topological | BalabanJ, TPSA | Shape, branching, polar surface area (related to absorption) |
| Electronic | Apol, SMR, Partial Charges | Charge distribution, dipole moment (binding interactions) |
| Constitutional | Molecular Weight, Heavy Atom Count, Rotatable Bonds, H-Bond Donors/Acceptors | Size, flexibility, and specific binding capabilities |

1. Introduction: The Imperative of Rigorous Data Splitting in QSAR

In Quantitative Structure-Activity Relationship (QSAR) modeling, particularly within a thesis employing Extended Connectivity Fingerprints (ECFP) and molecular descriptors, the predictive validity of a model depends critically on rigorous data preparation and splitting. A flawed partitioning strategy leads to data leakage, over-optimistic performance estimates, and ultimately models that fail in prospective drug development. This protocol details established best practices for constructing robust training, validation, and test sets so that models generalize to novel chemical matter.

2. Core Principles & Definitions

- Training Set: Used to directly fit the model parameters (e.g., ML algorithm weights, regression coefficients).

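- Validation Set: Used to evaluate candidate models during tuning (e.g., hyperparameter optimization, feature selection) and to guide the iterative model development process. Because it influences model selection, its scores become optimistically biased and cannot replace the test set.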

- Test Set (Hold-Out Set): Used only once for the final, unbiased assessment of the fully trained and tuned model's predictive performance. It simulates real-world application on truly novel compounds.

3. Quantitative Guidelines for Data Splitting Ratios

Table 1: Common Data Splitting Strategies and Use Cases

| Strategy | Typical Ratio (Train:Val:Test) | Best For | Key Consideration |
|---|---|---|---|
| Simple Hold-Out | 80:0:20 or 70:0:30 | Very large datasets (>10k samples) | No validation set for tuning; risk of high variance in performance estimate. |
| Single Validation Set | 60:20:20 or 70:15:15 | Medium to large datasets | Provides a stable validation set but performance can be sensitive to the specific random split. |
| Nested Cross-Validation | N/A (e.g., Outer 5-fold, Inner 5-fold) | Small to medium datasets | Gold standard for maximizing data use and obtaining robust performance estimates; computationally intensive. |
| Temporal/Scaffold Split | Variable | Mimicking real-world discovery | Most realistic for assessing generalizability to new structural classes or assay batches. |

4. Experimental Protocol: Scaffold-Based Splitting for QSAR Generalization

This protocol is essential for evaluating a model's ability to predict activity for novel chemotypes, a critical requirement in drug discovery.

A. Objective: To partition a compound dataset into training, validation, and test sets such that compounds in the test set are structurally distinct from those in the training/validation sets, based on molecular scaffolds.

B. Materials & Reagents (The Scientist's Toolkit)

Table 2: Essential Research Reagent Solutions for Data Splitting

| Item | Function | Example Tool/Library |
|---|---|---|
| Chemical Standardization Pipeline | Neutralizes salts, removes solvents, generates canonical tautomers, and produces consistent representations. | RDKit (`rdMolStandardize`), OpenBabel |
| Scaffold Generator | Extracts the core molecular framework (Bemis-Murcko scaffold) from a molecule. | RDKit (`MurckoScaffold.GetScaffoldForMol`), custom scripts |
| Descriptor/Fingerprint Calculator | Encodes molecular structure into numerical features for similarity analysis or clustering. | RDKit (ECFP, descriptors), Mordred, PaDEL |
| Clustering Algorithm | Groups molecules based on structural similarity to aid in stratified splitting. | Butina clustering, k-Means, MaxMin |
| Data Splitting Library | Implements splitting algorithms with stratification or grouping capabilities. | scikit-learn (`StratifiedShuffleSplit`, `GroupShuffleSplit`), DeepChem (`ScaffoldSplitter`) |

C. Step-by-Step Workflow

Data Curation:

- Curate the raw compound-activity dataset. Remove duplicates, handle inconclusive activity values (e.g., ">" or "<" signs), and apply a consistent activity threshold (e.g., pIC50 > 6.0 = active).

- Standardize all molecular structures using the standardization pipeline (Table 2).

Scaffold Identification:

- For each standardized molecule, generate the Bemis-Murcko scaffold (its ring systems plus the linker atoms connecting them, with side chains removed).

- Create a scaffold-to-molecules mapping.

Stratified Partitioning:

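- Objective: Keep the active/inactive class distribution as similar as possible across splits while keeping each scaffold in a single split.

- Method: Group molecules by scaffold and sort the groups by size. Assign whole scaffold groups to splits, for example filling the training set with the largest groups first and placing the remaining, smaller groups in the validation and test sets (~15-20% of the total molecules each). Because each scaffold goes to exactly one split, the test set is structurally distinct from the training set, but the class balance can only be approximated and must be checked in the sanity step below.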

- Alternative: For large datasets, cluster fingerprints (e.g., ECFP4) and assign whole clusters to splits to maintain structural segregation.

Finalization and Sanity Check:

- Verify that no scaffold appears in more than one set.

- Confirm that the distribution of activity classes and key descriptor ranges (e.g., molecular weight, logP) is reasonably balanced across the training and validation sets. The test set may have different distributions, which is the point of the exercise.
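The scaffold-grouping and partitioning steps can be sketched in pure Python, assuming one Bemis-Murcko scaffold SMILES per molecule has already been computed (for example with RDKit's `MurckoScaffold.MurckoScaffoldSmiles`). The largest-groups-to-training ordering is one common choice (it resembles DeepChem's `ScaffoldSplitter`, though this is an independent sketch), the split fractions are illustrative, and the class-balance check from the sanity step is not included.

```python
from collections import defaultdict


def scaffold_split(scaffolds, frac_train=0.7, frac_valid=0.15):
    """Assign whole scaffold groups to train / validation / test index lists.

    scaffolds: one scaffold SMILES per molecule (list position = molecule index).
    Largest groups fill the training set first; the smaller, rarer scaffolds
    that no longer fit spill into validation, then test.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    # Largest groups first; ties broken by first molecule index for determinism.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    n = len(scaffolds)
    n_train, n_valid = round(frac_train * n), round(frac_valid * n)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= n_train:
            train += group
        elif len(valid) + len(group) <= n_valid:
            valid += group
        else:
            test += group
    return train, valid, test


# Hypothetical toy set of 20 molecules ("" marks an acyclic molecule).
scaffolds = (["c1ccccc1"] * 8 + ["c1ccncc1"] * 5 + ["C1CCNCC1"] * 3
             + ["c1ccc2ccccc2c1"] * 2 + [""] + ["c1cc[nH]c1"])
train, valid, test = scaffold_split(scaffolds)
print(len(train), len(valid), len(test))
```

Note that a small group (here the acyclic molecule) can still land in the training set if it fits after larger groups have been placed elsewhere.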

Diagram Title: Workflow for Scaffold-Based Data Splitting in QSAR

5. Protocol for Nested Cross-Validation with Descriptors & ECFP

This protocol is recommended for smaller datasets or when a definitive single test set is not required, as it provides a robust performance estimate.

A. Objective: To perform a comprehensive model training, tuning, and evaluation without a fixed hold-out test set, using all data efficiently.

B. Step-by-Step Workflow

Outer Loop (Performance Estimation):

- Split the entire dataset into k folds (e.g., k=5). For each outer iteration:
  - Hold out one fold as the "outer test set".
  - Use the remaining k-1 folds as the "model development set".

Inner Loop (Model Tuning):

- On the model development set, perform another k-fold or hold-out split to create training and validation subsets.

- Train candidate models (e.g., different algorithms, descriptor sets, hyperparameters) on the inner training folds.

- Evaluate them on the inner validation fold(s). Select the best-performing model configuration.

Final Training & Evaluation:

- Retrain the selected best model configuration on the entire model development set (k-1 folds).

- Evaluate this final model on the held-out outer test fold. Record the performance metric (e.g., R², RMSE).

Iteration & Aggregation:

- Repeat the inner loop and the final training and evaluation for each of the k outer folds, so that each compound is in the outer test set exactly once.

- Aggregate the k performance metrics to report the mean and variance of the model's predictive ability.
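In scikit-learn, the outer and inner loops map naturally onto `cross_val_score` wrapped around `GridSearchCV`: the grid search performs the inner tuning and refits the best configuration on each model-development set, and the outer CV scores that refitted model on the held-out fold. The sketch below uses synthetic data and an illustrative Random Forest grid; the 3-fold inner split is a choice made here to keep run time low.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV, KFold, cross_val_score

# Synthetic stand-in (hypothetical): 64 ECFP-like bits + 5 continuous descriptors.
rng = np.random.default_rng(0)
X = np.hstack([rng.integers(0, 2, size=(120, 64)), rng.normal(size=(120, 5))])
y = 6.0 + X[:, 0] - 0.5 * X[:, 64] + rng.normal(0, 0.2, 120)

inner_cv = KFold(n_splits=3, shuffle=True, random_state=1)  # model tuning
outer_cv = KFold(n_splits=5, shuffle=True, random_state=2)  # performance estimation

# Inner loop: GridSearchCV picks the best configuration on the development folds
# and, with the default refit=True, retrains it on all of them.
tuned_rf = GridSearchCV(
    RandomForestRegressor(n_estimators=50, random_state=0),
    param_grid={"max_depth": [None, 5], "max_features": [0.3, 1.0]},
    cv=inner_cv,
    scoring="r2",
)

# Outer loop: each outer test fold is scored by a model tuned without seeing it.
outer_scores = cross_val_score(tuned_rf, X, y, cv=outer_cv, scoring="r2")
print(f"Nested CV R2: {outer_scores.mean():.2f} +/- {outer_scores.std():.2f}")
```

The reported mean and spread describe the whole tuning procedure rather than a single final model; to deploy, one would rerun the grid search on the full dataset.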

Diagram Title: Nested Cross-Validation Workflow for QSAR

Within Quantitative Structure-Activity Relationship (QSAR) modeling, the combination of Extended Connectivity Fingerprints (ECFP) and numerical molecular descriptors generates feature spaces of exceptionally high dimensionality, often exceeding several thousand variables. This high-dimensionality challenge introduces noise, increases the risk of overfitting, and complicates model interpretation. This document provides application notes and protocols for systematic feature selection and dimensionality reduction, framed within a thesis on robust QSAR model development.

Core Concepts & Quantitative Comparison

Table 1: Comparison of Dimensionality Reduction & Feature Selection Techniques in QSAR Context

| Method Category | Example Techniques | Preserves Interpretability? | Handles Multicollinearity? | Typical Output Dimension | Relative Computational Cost (Low/Med/High) |
|---|---|---|---|---|---|
| Filter Methods | Variance Threshold, Pearson Correlation, Mutual Information | High | No | User-defined (top k features) | Low |
| Wrapper Methods | Recursive Feature Elimination (RFE), Forward/Backward Selection | High | Partial (depends on base model) | Model-optimized | High (model-dependent) |
| Embedded Methods | LASSO (L1 regularization), Random Forest Feature Importance | Medium-High | Yes (LASSO) | Model-optimized | Medium |
| Linear Projection | Principal Component Analysis (PCA), Linear Discriminant Analysis (LDA) | Low (components are linear combos) | Yes | User-defined or variance-based | Medium |
| Non-Linear Manifold | t-SNE, UMAP | Very Low | N/A | Typically 2-3 for visualization | Medium-High |

Table 2: Impact of Dimensionality Reduction on Model Performance (Hypothetical Benchmark Dataset)

Dataset: 1500 compounds, 5000 initial features (ECFP4 + RDKit descriptors). Baseline (all features) SVM accuracy: 65 ± 3% (5-fold CV).

| Technique | Number of Final Features/Components | Model Type | Avg. Test Accuracy (%) | Std. Deviation (%) | Training Time (s) |
|---|---|---|---|---|---|
| All Features (Baseline) | 5000 | SVM-RBF | 65.0 | 3.0 | 42.1 |
| Variance Threshold (>0.01) | 1850 | SVM-RBF | 66.2 | 2.8 | 18.7 |
| Mutual Information (Top 200) | 200 | SVM-RBF | 70.5 | 2.5 | 5.2 |
| LASSO Regression (alpha=0.01) | 95 | SVM-RBF | 72.1 | 2.1 | 4.8 |
| PCA (95% Variance) | 112 | SVM-RBF | 68.3 | 2.9 | 6.5 |
| RFE with Random Forest (50 feat) | 50 | SVM-RBF | 73.4 | 1.9 | 12.3 |

Experimental Protocols

Protocol 3.1: Pre-Filtering for Molecular Descriptors & ECFP

Objective: Remove low-variance and constant descriptors/fingerprint bits prior to modeling.

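- Data Preparation: Keep numerical descriptors unscaled, or min-max scale them to [0, 1], at this stage. Z-score standardization (e.g., `StandardScaler`) gives every non-constant column unit variance, so it should be applied after variance filtering. ECFP bits are binary (0/1).

- Variance Calculation: For numerical descriptors, compute the variance. For binary ECFP bits, compute the frequency of the less common value, min(p, 1 - p), where p is the fraction of compounds in which the bit is set.

- Threshold Application: Apply a variance threshold (e.g., 0.01 on min-max-scaled descriptors) or a minimum bit frequency (e.g., set in >2% and <98% of compounds for ECFP). Compute all thresholds on the training set only.

- Output: A filtered feature matrix.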
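A NumPy sketch of this pre-filter, meant to be applied to the training data. To keep one variance threshold meaningful across descriptors with different units, this version min-max scales each descriptor to [0, 1] before computing its variance (an assumption; after z-scoring, every non-constant column has unit variance, so a variance threshold would only remove constants). The 0.01 threshold and the 2%/98% bit-frequency limits follow the example values in the protocol; the data are synthetic.

```python
import numpy as np


def prefilter(descriptors, ecfp_bits, var_threshold=0.01, min_freq=0.02, max_freq=0.98):
    """Return boolean masks of descriptor columns and ECFP bits to keep.

    descriptors: (n, d) raw descriptor values (min-max scaled internally).
    ecfp_bits: (n, b) 0/1 matrix; a bit is kept if its on-frequency p
    satisfies min_freq < p < max_freq.
    """
    D = np.asarray(descriptors, dtype=float)
    span = D.max(axis=0) - D.min(axis=0)
    # Constant columns (span 0) become all zeros instead of dividing by zero.
    scaled = (D - D.min(axis=0)) / np.where(span > 0, span, 1.0)
    desc_mask = scaled.var(axis=0) > var_threshold

    p = np.asarray(ecfp_bits).mean(axis=0)  # fraction of compounds with the bit set
    bit_mask = (p > min_freq) & (p < max_freq)
    return desc_mask, bit_mask


rng = np.random.default_rng(0)
n = 200
descriptors = np.column_stack([
    rng.normal(300, 50, n),          # spread-out descriptor
    np.full(n, 1.0),                 # constant
    np.r_[np.zeros(n - 1), 10.0],    # one outlier, otherwise constant
])
bits = np.column_stack([
    rng.integers(0, 2, n),                                     # ~50% on
    np.zeros(n, dtype=int),                                    # never set
    np.r_[np.ones(2, dtype=int), np.zeros(n - 2, dtype=int)],  # 1% on
])
desc_mask, bit_mask = prefilter(descriptors, bits)
print(desc_mask, bit_mask)
```

The third descriptor shows why a scaled variance threshold catches near-constant columns that a zero-variance check alone would keep.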

Protocol 3.2: Recursive Feature Elimination with Cross-Validation (RFECV)

Objective: Identify the optimal number of features using a wrapper method with inbuilt validation.

- Initialize Estimator: Choose a core model with inherent feature weighting (e.g., `SVR(kernel='linear')`, `RandomForestRegressor`).

- Setup RFECV: Use `RFECV(estimator, step=50, cv=5, scoring='neg_mean_squared_error')`. The `step` argument removes 50 features per iteration.

- Execution: Fit `RFECV` to the training data. The object performs CV at each step to evaluate performance with different feature counts.

- Optimal Feature Set: Extract `RFECV.support_` (boolean mask of the optimal features) and `RFECV.n_features_` (optimal number).

- Validation: Train a final model on the selected features and evaluate it on a held-out test set.
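A minimal RFECV sketch with a Random Forest estimator on synthetic data (200 features, 5 of them informative, all hypothetical). `min_features_to_select=5` and the 50-tree forest are illustrative choices; RFECV is fitted on the training split only, and the hold-out set is used once at the end.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.feature_selection import RFECV
from sklearn.model_selection import train_test_split

# Synthetic data (hypothetical): 200 compounds, 200 features, first 5 informative.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 200))
y = X[:, :5] @ np.array([1.5, -1.0, 0.8, 0.6, -0.5]) + rng.normal(0, 0.3, 200)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

selector = RFECV(
    RandomForestRegressor(n_estimators=50, random_state=0),
    step=50,  # remove 50 features per elimination round, as in the protocol
    cv=5,
    scoring="neg_mean_squared_error",
    min_features_to_select=5,
)
selector.fit(X_train, y_train)  # feature selection sees training data only
print("optimal number of features:", selector.n_features_)

# Final model on the selected columns, evaluated once on the hold-out set.
mask = selector.support_
final = RandomForestRegressor(n_estimators=50, random_state=0)
final.fit(X_train[:, mask], y_train)
holdout_r2 = final.score(X_test[:, mask], y_test)
print(f"hold-out R2: {holdout_r2:.2f}")
```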

Protocol 3.3: Dimensionality Reduction via PCA for Visualization & Regression

Objective: Reduce feature space to principal components for analysis and linear modeling.

- Standardization: Center and scale all features to unit variance, using statistics computed on the training set. This is critical for descriptors measured in different units.

- PCA Fitting: Fit PCA on the training set only: `pca = PCA(n_components=0.95).fit(X_train_scaled)`. The `0.95` argument retains the components explaining 95% of the variance.

- Transformation: Apply the learned transformation to both the training and test sets, e.g., `X_train_pca = pca.transform(X_train_scaled)` and `X_test_pca = pca.transform(X_test_scaled)`.

- Analysis: Inspect `pca.explained_variance_ratio_` to understand the contribution of each component. Use the first 2-3 components for scatter-plot visualization of chemical space.

- Modeling: Train a linear model (e.g., Ridge Regression) on the principal components. Note that the interpretability of the original features is lost.
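The same fit-on-train, transform-both pattern can be written directly with NumPy's SVD, which makes explicit what the standardization plus `PCA(n_components=0.95)` steps compute. This sketch standardizes inside the function and, like scikit-learn's fractional `n_components`, keeps the smallest number of components whose cumulative explained variance exceeds the target; the synthetic three-factor data are hypothetical.

```python
import numpy as np


def fit_pca(X_train, var_target=0.95):
    """Fit standardization + PCA on training data only (NumPy SVD sketch).

    Returns a transform function and the explained-variance ratios of the
    retained components.
    """
    mean = X_train.mean(axis=0)
    std = X_train.std(axis=0)
    std[std == 0] = 1.0  # guard against constant columns
    Z = (X_train - mean) / std
    _, s, Vt = np.linalg.svd(Z, full_matrices=False)
    ratio = s**2 / np.sum(s**2)  # explained-variance ratio per component
    k = int(np.searchsorted(np.cumsum(ratio), var_target, side="right")) + 1
    k = min(k, len(ratio))
    components = Vt[:k]

    def transform(X):
        # Reuses the training mean, std, and components: nothing is learned from X.
        return ((X - mean) / std) @ components.T

    return transform, ratio[:k]


rng = np.random.default_rng(0)
latent = rng.normal(size=(150, 3))
X = latent @ rng.normal(size=(3, 40)) + 0.05 * rng.normal(size=(150, 40))
X_train, X_test = X[:120], X[120:]

transform, ratios = fit_pca(X_train)
X_train_pca, X_test_pca = transform(X_train), transform(X_test)
print(len(ratios), np.round(ratios, 3), X_test_pca.shape)
```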

Visualization & Workflows

Diagram Title: QSAR Feature Processing Pipeline

Diagram Title: High-Dim Problems & Solutions

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Libraries for Feature Engineering in QSAR

| Tool / Library | Primary Function | Key Application in Protocol | Reference/Link |
|---|---|---|---|
| RDKit | Open-source cheminformatics | Calculation of 2D/3D molecular descriptors, molecular standardization. | rdkit.org |
| scikit-learn | Machine Learning in Python | Implementation of VarianceThreshold, RFECV, PCA, LASSO, and modeling algorithms. | scikit-learn.org |
| Matplotlib / Seaborn | Data visualization | Creating loadings plots for PCA, feature importance bar charts, correlation heatmaps. | matplotlib.org |
| UMAP | Non-linear dimensionality reduction | Visual exploration of chemical space manifolds beyond linear PCA. | umap-learn.readthedocs.io |
| MolVS | Molecule validation & standardization | Ensuring consistent input structures (tautomer, charge normalization) before descriptor calculation. | github.com/mcs07/MolVS |
| Pandas & NumPy | Data manipulation & numerical computing | Core data structures and operations for handling feature matrices and results. | pandas.pydata.org |

Within the broader thesis on Quantitative Structure-Activity Relationship (QSAR) modeling integrating Extended-Connectivity Fingerprints (ECFP) and molecular descriptors, this document details the critical application phase: training supervised machine learning models on the derived hybrid feature matrix. The hybrid matrix combines the chemical substructure information of ECFP with the physicochemical and topological properties of molecular descriptors, aiming to build robust predictive models for biological activity. The performance of three established algorithms—Random Forest (RF), Support Vector Machine (SVM), and eXtreme Gradient Boosting (XGBoost)—is evaluated to identify the optimal modeling approach for the dataset under investigation.

Research Reagent Solutions (The Scientist's Toolkit)

| Item | Function in QSAR Model Training |
|---|---|
| Hybrid Feature Matrix | The primary input data, combining ECFP bit vectors and standardized molecular descriptor values for each compound. |
| Activity Vector | The target variable (e.g., pIC50, pKi) for supervised learning, representing the measured biological endpoint. |
| Scikit-learn Library | Python library providing implementations for Random Forest and SVM (RBF kernel), along with data splitting and preprocessing tools. |
| XGBoost Library | Optimized library for gradient boosting, offering a high-performance implementation of the XGBoost algorithm. |
| Hyperparameter Grid | Pre-defined sets of key algorithm parameters (e.g., `n_estimators`, `C`, `gamma`, `max_depth`) for systematic optimization. |
| K-Fold Cross-Validation | A resampling procedure used to reliably estimate model performance and guard against overfitting. |
| Model Evaluation Metrics | Quantitative scores (R², RMSE, MAE) used to assess and compare the predictive accuracy of trained models. |

Experimental Protocols for Model Implementation

Protocol: Data Partitioning and Preprocessing

- Input: The complete hybrid feature matrix (`X`) and corresponding activity vector (`y`).

- Partitioning: Split `X` and `y` into training (80%) and hold-out test (20%) sets using stratified sampling based on activity binning to maintain the activity distribution.

- Feature Scaling: Apply standardization (Z-score normalization) to the training set features. Use the training set's mean and standard deviation to scale the test set to prevent data leakage.

- Output: Scaled training features (`X_train_scaled`), training targets (`y_train`), scaled test features (`X_test_scaled`), and test targets (`y_test`).
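The partitioning and scaling steps above can be sketched with scikit-learn as follows. This is a minimal illustration rather than the thesis code: it assumes `X` and `y` are NumPy arrays, uses five quantile bins of the activity as stratification labels (the bin count is an assumption), and substitutes random data for a real hybrid feature matrix. `split_and_scale` is a hypothetical helper name.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler


def split_and_scale(X, y, test_size=0.2, n_bins=5, random_state=42):
    """Stratified 80/20 split on activity bins, then Z-scoring fitted on the training set only."""
    # Interior quantile edges turn the continuous activity (e.g. pIC50) into n_bins strata
    edges = np.quantile(y, np.linspace(0, 1, n_bins + 1)[1:-1])
    strata = np.digitize(y, edges)
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=test_size, stratify=strata, random_state=random_state
    )
    # Mean and standard deviation come from the training set; the test set reuses them (no leakage)
    scaler = StandardScaler().fit(X_train)
    return scaler.transform(X_train), y_train, scaler.transform(X_test), y_test, scaler


# Synthetic stand-in: 64 ECFP-like bits plus 8 descriptor columns for 200 compounds
rng = np.random.default_rng(0)
X = np.hstack([rng.integers(0, 2, size=(200, 64)), rng.normal(size=(200, 8))])
y = rng.normal(6.0, 1.0, size=200)  # stand-in for pIC50 values

X_train_scaled, y_train, X_test_scaled, y_test, scaler = split_and_scale(X, y)
print(X_train_scaled.shape, X_test_scaled.shape)  # (160, 72) (40, 72)
```

Tree ensembles (RF, XGBoost) are insensitive to feature scaling, whereas the RBF-kernel SVM is not. If only the descriptor columns should be standardized, as the Hybrid Feature Matrix description suggests, a `ColumnTransformer` with `remainder="passthrough"` is one way to leave the ECFP bits unchanged.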

Protocol: Hyperparameter Optimization via Grid Search with Cross-Validation

- Define Hyperparameter Grid: Specify the search space for each algorithm.

- Initialize Model: Instantiate the base model (RF, SVM, or XGBoost).

- Configure GridSearchCV: Use 5-fold cross-validation and the coefficient of determination (R²) as the scoring metric.

- Execute Search: Fit `GridSearchCV` on `X_train_scaled` and `y_train`. The process explores all parameter combinations.

- Extract Best Model: Identify the model configuration with the highest mean cross-validation score.

Key Hyperparameters Searched:

- Random Forest: `n_estimators`: [100, 300, 500]; `max_depth`: [10, 30, None]; `min_samples_split`: [2, 5].

- SVM (RBF Kernel): `C`: [0.1, 1, 10, 100]; `gamma`: ['scale', 0.001, 0.01].

- XGBoost: `n_estimators`: [100, 200]; `max_depth`: [3, 6, 9]; `learning_rate`: [0.01, 0.1]; `subsample`: [0.8, 1.0].
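A sketch of the grid search for two of the three algorithms, assuming the task is regression on a continuous endpoint such as pIC50 (so the SVM is scikit-learn's `SVR`). The grids are copied from the list above; `param_grids` and `tune` are illustrative names, the shuffled `KFold` seed is an assumption, and XGBoost is left out only to keep the example free of extra dependencies (its grid would be passed to `xgboost.XGBRegressor` the same way).

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV, KFold
from sklearn.svm import SVR

# Search spaces copied from the protocol; an xgboost.XGBRegressor entry would follow the same pattern
param_grids = {
    "RF": (
        RandomForestRegressor(random_state=0),
        {"n_estimators": [100, 300, 500], "max_depth": [10, 30, None], "min_samples_split": [2, 5]},
    ),
    "SVM": (
        SVR(kernel="rbf"),
        {"C": [0.1, 1, 10, 100], "gamma": ["scale", 0.001, 0.01]},
    ),
}


def tune(name, X_train_scaled, y_train):
    """Exhaustive grid search with 5-fold CV, scored by R²."""
    estimator, grid = param_grids[name]
    search = GridSearchCV(
        estimator,
        grid,
        scoring="r2",
        cv=KFold(n_splits=5, shuffle=True, random_state=0),
        refit=True,  # refit the best configuration on the whole training set
    )
    return search.fit(X_train_scaled, y_train)


# Demo on synthetic data (the RF grid works the same way but takes longer to run)
rng = np.random.default_rng(0)
X_demo = rng.normal(size=(120, 10))
y_demo = X_demo[:, 0] - 0.5 * X_demo[:, 1] + rng.normal(scale=0.1, size=120)
svm_search = tune("SVM", X_demo, y_demo)
print(svm_search.best_params_, round(svm_search.best_score_, 3))
```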

Protocol: Final Model Training & Evaluation

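- Train Final Model: Refit the best configuration (from Protocol 3.2) on the entire scaled training set. When `GridSearchCV` is used with its default `refit=True`, this refit happens automatically and the result is available as `best_estimator_`.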

- Predict on Test Set: Use the final model to generate predictions (`y_pred`) for `X_test_scaled`.

- Quantitative Evaluation: Calculate performance metrics by comparing `y_pred` to `y_test`.

- Record Results: Log all metrics for comparative analysis.
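The evaluation step reduces to three scikit-learn metric calls. In this sketch a `Ridge` model fitted on synthetic data stands in for the tuned `best_estimator_`, and `evaluate` is a hypothetical helper; RMSE is taken as the square root of `mean_squared_error` so the code does not depend on version-specific keyword arguments.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score


def evaluate(model, X_test_scaled, y_test):
    """Hold-out R², RMSE, and MAE for an already-fitted regressor."""
    y_pred = model.predict(X_test_scaled)
    return {
        "R2": r2_score(y_test, y_pred),
        "RMSE": float(np.sqrt(mean_squared_error(y_test, y_pred))),
        "MAE": mean_absolute_error(y_test, y_pred),
    }


# Synthetic train/test data with a known linear signal
rng = np.random.default_rng(1)
w = np.array([1.0, -0.5, 0.0, 0.3, 0.0])
X_tr, X_te = rng.normal(size=(160, 5)), rng.normal(size=(40, 5))
y_tr = X_tr @ w + rng.normal(scale=0.2, size=160)
y_te = X_te @ w + rng.normal(scale=0.2, size=40)

final_model = Ridge(alpha=1.0).fit(X_tr, y_tr)  # stand-in for search.best_estimator_
metrics = evaluate(final_model, X_te, y_te)
print({k: round(v, 2) for k, v in metrics.items()})
```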

Table 1: Comparative Performance of Optimized Models on Hold-Out Test Set

| Algorithm | Best Hyperparameters | R² | RMSE | MAE |
|---|---|---|---|---|
| Random Forest | n_estimators=300, max_depth=30, min_samples_split=2 | 0.87 | 0.48 | 0.35 |
| Support Vector Machine | C=10, gamma=0.01 | 0.82 | 0.57 | 0.42 |
| XGBoost | n_estimators=200, max_depth=6, learning_rate=0.1 | 0.89 | 0.45 | 0.33 |

R²: Coefficient of Determination; RMSE: Root Mean Square Error; MAE: Mean Absolute Error.

Visualized Workflows

Model Training and Selection Workflow

Hyperparameter Tuning via 5-Fold Cross-Validation

Within the broader thesis on advancing QSAR modeling through the integration of ECFP fingerprints and physicochemical descriptors, this protocol details the critical translational step: deploying a validated model for practical drug discovery. The transition from a statistical model to a robust, automated prediction tool enables the virtual screening of large chemical libraries and the rational design of novel compounds with optimized potency.

Application Notes: Model Deployment Architecture

A successful deployment requires a reproducible pipeline that standardizes molecular input, executes the model, and interprets the output. Key components include:

- Model Serialization: The trained model (e.g., Random Forest, XGBoost) and associated feature scalers are serialized using `pickle` or `joblib` for persistence.

- Input Standardization: A canonicalization and standardization protocol (e.g., using RDKit) ensures that input molecules are consistent with the training set, handling tautomers, protonation states, and salt stripping.

- Descriptor Generation On-the-Fly: The pipeline must replicate the exact feature generation steps: calculating the same set of 2D/3D molecular descriptors (e.g., using `mordred`) and generating ECFP4 fingerprints with the identical radius and bit length used during training.

- Prediction and Confidence Scoring: The model outputs a predicted activity (e.g., pIC50). A confidence metric is also needed to prioritize hits: for regression models, the standard deviation of predictions across ensemble members (e.g., the trees of a Random Forest) is a common choice, while classification models can report a predicted class probability.
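One way to realize the serialization and confidence-scoring notes is sketched below, assuming a scikit-learn `Pipeline` whose last step is a `RandomForestRegressor`. Bundling the scaler and model in one `joblib` file keeps them paired at inference time, and the standard deviation of the individual trees' predictions serves as an uncertainty proxy (larger spread, lower confidence). The helper names and the file name are illustrative, and descriptor and ECFP generation are assumed to have already produced the numeric matrix `X`.

```python
import tempfile
from pathlib import Path

import joblib
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler


def save_model(pipeline, path):
    """Persist scaler and model together so they cannot drift apart at inference time."""
    joblib.dump(pipeline, path)


def predict_with_uncertainty(pipeline, X):
    """Mean prediction and per-compound spread across the forest's trees."""
    X_scaled = pipeline[:-1].transform(X)  # every step except the model, i.e. the stored scaler
    forest = pipeline[-1]
    per_tree = np.stack([tree.predict(X_scaled) for tree in forest.estimators_])
    return per_tree.mean(axis=0), per_tree.std(axis=0)


# Demo: fit, save, reload, predict
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 6))
y = X[:, 0] + rng.normal(scale=0.1, size=100)
pipe = Pipeline([
    ("scaler", StandardScaler()),
    ("model", RandomForestRegressor(n_estimators=50, random_state=0)),
]).fit(X, y)

path = str(Path(tempfile.mkdtemp()) / "qsar_model.pkl")
save_model(pipe, path)
loaded = joblib.load(path)
mean_pred, spread = predict_with_uncertainty(loaded, X[:5])
```

The mean over trees equals the forest's own `predict` output, so the spread adds information without changing the point estimate.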

Protocol: End-to-End Virtual Screening Workflow

Objective: To computationally screen a library of 1M compounds to identify high-probability active molecules against a target protein.

Materials & Software:

- Compound library in SDF or SMILES format (e.g., ZINC20, Enamine REAL).

- Standardized QSAR model file (`.pkl`).

- Python environment (v3.9+) with RDKit, pandas, numpy, scikit-learn, joblib.

- High-performance computing cluster or cloud instance (for large libraries).

Procedure:

Environment Setup:

Data Preprocessing Module:

Feature Generation Module:

Batch Prediction Script:

Hit Triage: Sort results by `pred_pIC50` and `confidence_score`. Select top-ranked compounds (e.g., top 1000) for subsequent visual inspection and docking studies.
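The batch prediction and hit triage steps can be sketched as below. The sketch assumes the library has already been featurized and scaled into a numeric matrix (random numbers stand in for it) and ranks compounds by predicted pIC50, breaking ties by lower tree-to-tree spread. How the document's `confidence_score` is derived is not specified here, so the spread is an assumed stand-in; `screen_in_batches` and `triage` are hypothetical helpers. In a real 1M-compound run, each chunk would typically be featurized from a streamed SDF or SMILES file rather than held in memory at once.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor


def screen_in_batches(forest, X_library, batch_size=10_000):
    """Predict a featurized library chunk by chunk; returns mean prediction and tree spread."""
    preds, spreads = [], []
    for start in range(0, X_library.shape[0], batch_size):
        chunk = X_library[start:start + batch_size]
        per_tree = np.stack([tree.predict(chunk) for tree in forest.estimators_])
        preds.append(per_tree.mean(axis=0))
        spreads.append(per_tree.std(axis=0))
    return np.concatenate(preds), np.concatenate(spreads)


def triage(pred_pIC50, spread, top_k=1000):
    """Indices of the top_k compounds: highest predicted pIC50 first, ties broken by lower spread."""
    order = np.lexsort((spread, -pred_pIC50))  # np.lexsort treats the LAST key as primary
    return order[:top_k]


rng = np.random.default_rng(0)
X_train = rng.normal(size=(200, 8))
y_train = 6.0 + X_train[:, 0] + rng.normal(scale=0.2, size=200)
forest = RandomForestRegressor(n_estimators=50, random_state=0).fit(X_train, y_train)

X_library = rng.normal(size=(25_000, 8))  # stand-in for a featurized screening library
pred, spread = screen_in_batches(forest, X_library, batch_size=10_000)
hits = triage(pred, spread, top_k=1000)
```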

Table 1: Summary of Virtual Screening Output for a 1M Compound Library

| Metric | Value |
|---|---|
| Total Compounds Processed | 1,000,000 |
| Successfully Standardized & Featurized | 987,542 (98.8%) |
| Compounds Predicted as Active (pIC50 > 6.0) | 12,318 (1.25%) |
| High-Confidence Hits (pIC50 > 7.0 & Confidence > 0.85) | 1,447 (0.15%) |
| Average Predicted pIC50 of High-Confidence Hits | 7.6 ± 0.3 |
| Estimated Runtime (CPU: 32 cores) | 4.2 hours |

Visualized Workflows

Diagram 1: QSAR Model Deployment and Screening Pipeline

Diagram 2: Model Inference Logic for a Single Compound

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Resources for QSAR Model Deployment

| Item | Category | Function in Protocol |
|---|---|---|
| RDKit | Open-Source Cheminformatics | Core library for molecular standardization, ECFP generation, and descriptor calculation. |
| Scikit-learn | Machine Learning Library | Supplies the model and scaler classes needed to load and apply the pre-trained objects during inference. |
| joblib | Serialization Library | Saves and loads the trained model and scalers; installed as a scikit-learn dependency. |
| Mordred | Molecular Descriptor Calculator | Calculates a comprehensive set of 2D/3D molecular descriptors for feature vector generation. |
| Pandas & NumPy | Data Processing Libraries | Handle large chemical libraries as DataFrames and manage numerical feature arrays. |
| High-Performance Compute (HPC) Cluster | Infrastructure | Enables parallel batch processing of million-compound libraries in feasible time. |
| Serialized Model File (.pkl) | Deployed Asset | Contains the finalized, trained QSAR model from the thesis research, ready for application. |
| Standardized Chemical Library (e.g., ZINC) | Input Data | Provides a large, purchasable set of diverse molecules for virtual screening. |

Diagnosing and Enhancing Model Performance: Solutions for Common QSAR Challenges

In Quantitative Structure-Activity Relationship (QSAR) modeling, particularly when using Extended Connectivity Fingerprints (ECFP) and molecular descriptors, error metrics are the primary diagnostic tools. They are essential for determining a model's predictive reliability and for diagnosing critical issues such as underfitting, overfitting, and bias. This document provides application notes and protocols for interpreting these metrics within a rigorous QSAR framework.

Core Error Metrics: Definitions and Ideal Ranges

The following table summarizes the key error metrics used to evaluate regression-based QSAR models (e.g., predicting pIC50, pKi, or LogP). The "Ideal QSAR Range" column gives commonly used rules of thumb for a robust, generalizable model; acceptable values depend on the endpoint and on the experimental noise in the data.

Table 1: Core Error Metrics for QSAR Regression Models

| Metric | Formula | Interpretation | Ideal QSAR Range |
|---|---|---|---|
| R² (Coefficient of Determination) | 1 - (SS_res/SS_tot) | Proportion of variance in the dependent variable predictable from the independent variables. | Training: 0.7 - 0.9; Test: close to training (Δ < 0.3) |
| Adjusted R² | 1 - [(1-R²)(n-1)/(n-p-1)] | R² adjusted for the number of predictors (p) relative to samples (n); penalizes adding uninformative predictors. Undefined when n ≤ p + 1, which is common with full-length ECFP vectors. | Should not be substantially lower than R². |
| Mean Absolute Error (MAE) | (1/n) * Σ\|y_i - ŷ_i\| | Average magnitude of errors, in the original units of the response variable. | As low as possible; context-dependent on the activity scale. |
| Root Mean Squared Error (RMSE) | √[(1/n) * Σ(y_i - ŷ_i)²] | Square root of the average squared error; more sensitive to large errors. | Should be low; always ≥ MAE. For roughly normal errors RMSE ≈ 1.25 × MAE; a much larger ratio points to a few large outlier errors. |
| Q²_ext (Q²_F1, Q²_F2, Q²_F3) | Q²_F1/F2: 1 - PRESS/SS_tot over the test set, with SS_tot centered on the training mean (F1) or the test mean (F2); Q²_F3: 1 - (PRESS/n_test)/(SS_tot,train/n_train) | Predictive R² from external test set validation; the primary evidence of generalizability. | > 0.5 (acceptable), > 0.6 (good), > 0.7 (excellent) |
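The formulas above can be checked with a few lines of NumPy. This is a plain sketch assuming 1-D arrays of observed and predicted activities; `regression_metrics` and `external_q2` are illustrative names, and the Q²_F1/F2/F3 definitions follow the standard forms of these external-validation statistics. The small worked example was computed by hand.

```python
import numpy as np


def regression_metrics(y_true, y_pred, n_features):
    """R², adjusted R², MAE and RMSE as defined in the table above."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    n = y_true.size
    resid = y_true - y_pred
    r2 = 1.0 - np.sum(resid**2) / np.sum((y_true - y_true.mean()) ** 2)
    # Adjusted R² is undefined when n <= p + 1 (e.g. 2048-bit ECFP with few hundred compounds)
    adj_r2 = 1.0 - (1.0 - r2) * (n - 1) / (n - n_features - 1) if n > n_features + 1 else float("nan")
    return {"R2": r2, "adj_R2": adj_r2, "MAE": np.mean(np.abs(resid)), "RMSE": np.sqrt(np.mean(resid**2))}


def external_q2(y_train, y_test, y_test_pred):
    """External Q² variants; PRESS is the sum of squared test-set residuals."""
    y_train, y_test, y_test_pred = (np.asarray(a, float) for a in (y_train, y_test, y_test_pred))
    press = np.sum((y_test - y_test_pred) ** 2)
    ss_train = np.sum((y_train - y_train.mean()) ** 2)
    return {
        "Q2_F1": 1.0 - press / np.sum((y_test - y_train.mean()) ** 2),
        "Q2_F2": 1.0 - press / np.sum((y_test - y_test.mean()) ** 2),
        "Q2_F3": 1.0 - (press / y_test.size) / (ss_train / y_train.size),
    }


# Worked example: expected R2 = 0.9, adj_R2 = 0.85, MAE = 0.25, RMSE ≈ 0.354
m = regression_metrics([5, 6, 7, 8], [5.5, 6, 6.5, 8], n_features=1)
# Expected Q2_F1 = Q2_F2 = 0.75, Q2_F3 = 0.90625
q = external_q2(y_train=[4, 6, 8], y_test=[5, 7], y_test_pred=[5.5, 6.5])
```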

Diagnostic Framework: Underfitting vs. Overfitting

The relationship between model complexity and the error metrics on the training and test sets, together with the diagnoses it supports, is illustrated in the following workflow.

Diagram 1: Model Diagnosis Workflow

Experimental Protocols for Model Diagnosis

Protocol 4.1: Systematic Model Validation Workflow

Objective: To rigorously diagnose bias and variance in a QSAR model built from ECFP fingerprints.

Materials: Dataset of compounds with associated biological activity (e.g., IC50), chemical standardization tools, RDKit or an equivalent cheminformatics library, modeling software (e.g., scikit-learn).

Procedure:

Data Curation & Splitting:

- Standardize molecular structures (neutralize, remove salts, canonical tautomer).

- Generate ECFP4 (radius=2) fingerprints for all compounds.

- Perform stratified splitting based on activity bins, or time-based splitting if applicable. Alternatively, use the Kennard-Stone algorithm for representative selection.

- Final Sets: Training (70-80%), Validation (10-15%, for hyperparameter tuning), Hold-out Test (10-15%, for final Q² evaluation).
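The Kennard-Stone option mentioned above selects training compounds that span the feature space: it starts from the two most distant compounds and repeatedly adds the compound farthest from its nearest already-selected neighbor. A compact NumPy sketch, assuming Euclidean distance on a numeric feature matrix (for example descriptors or PCA scores) and enough memory for the full n × n distance matrix; `kennard_stone` is an illustrative name.

```python
import numpy as np


def kennard_stone(X, n_select):
    """Indices of n_select rows chosen by the Kennard-Stone algorithm (Euclidean distance)."""
    X = np.asarray(X, dtype=float)
    n = X.shape[0]
    if not 2 <= n_select <= n:
        raise ValueError("n_select must be between 2 and the number of samples")
    sq = np.sum(X**2, axis=1)
    dist = np.sqrt(np.maximum(sq[:, None] + sq[None, :] - 2.0 * X @ X.T, 0.0))
    # Start from the two mutually most distant samples
    i, j = np.unravel_index(np.argmax(dist), dist.shape)
    selected = [int(i), int(j)]
    # Distance from each sample to its nearest already-selected sample
    min_dist = np.minimum(dist[i], dist[j])
    while len(selected) < n_select:
        min_dist[selected] = -1.0  # selected samples can never win the argmax again
        nxt = int(np.argmax(min_dist))
        selected.append(nxt)
        min_dist = np.minimum(min_dist, dist[nxt])
    return np.array(selected)


# Demo: representative 80/20 split of 50 compounds described by 4 features
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 4))
train_idx = kennard_stone(X, 40)
test_idx = np.setdiff1d(np.arange(50), train_idx)
```

On points 0 to 9 along a line, the selection order is 0, 9, 4: the two endpoints first, then the point closest to the middle.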

Model Training with Complexity Variation:

- Train multiple models of varying complexity on the training set only.

- Example Complexity Levers:

- Random Forest: Max depth (3, 10, unlimited) is the main complexity lever; the number of trees (n_estimators: 50, 100, 500) mainly reduces variance and does not by itself cause overfitting.

- Support Vector Machine (SVM): Regularization parameter C (0.1, 1, 10, 100).

- Neural Network: Number of hidden layers/neurons, dropout rate.

Error Metric Calculation:

- For each model/complexity level, calculate R² and RMSE for the Training Set.

- Use the Validation Set to calculate the corresponding validation metrics (R²_val, RMSE_val).

Diagnostic Plot Generation:

- Create a model complexity vs. error plot (see Diagram 2).

- Identify the point where validation error is minimized. This is the optimal complexity.

- A model where training error is high and validation error is similarly high indicates underfitting (left side of plot).

- A model where training error is very low but validation error is high and diverging indicates overfitting (right side of plot).
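The complexity sweep behind the diagnostic plot can be prototyped with a fixed validation set, as in the protocol. This sketch varies only the Random Forest `max_depth` (an assumed choice of lever, with illustrative depth values) on synthetic data and returns training and validation RMSE for each setting; `complexity_sweep` is a hypothetical helper, and its output rows can be passed to a plotting library to draw the complexity vs. error curve.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error


def complexity_sweep(X_train, y_train, X_val, y_val, depths=(1, 2, 3, 5, 10, None)):
    """(max_depth, train RMSE, validation RMSE) per setting, plus the setting with lowest validation RMSE."""
    rows = []
    for depth in depths:
        model = RandomForestRegressor(n_estimators=100, max_depth=depth, random_state=0)
        model.fit(X_train, y_train)
        rmse_train = np.sqrt(mean_squared_error(y_train, model.predict(X_train)))
        rmse_val = np.sqrt(mean_squared_error(y_val, model.predict(X_val)))
        rows.append((depth, rmse_train, rmse_val))
    best = min(rows, key=lambda r: r[2])  # optimal complexity = minimum validation error
    return rows, best


rng = np.random.default_rng(0)
X = rng.uniform(-3, 3, size=(250, 5))
y = np.sin(X[:, 0]) + 0.5 * X[:, 1] + rng.normal(scale=0.3, size=250)
rows, best = complexity_sweep(X[:200], y[:200], X[200:], y[200:])
for depth, rmse_tr, rmse_va in rows:
    print(depth, round(rmse_tr, 3), round(rmse_va, 3))
```

Shallow settings where both errors are high correspond to underfitting; settings where training RMSE keeps falling while validation RMSE rises correspond to overfitting.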

Final Assessment:

- Retrain the model with optimal complexity on the combined training+validation set.

- Evaluate final performance on the hold-out test set to report the unbiased Q² and RMSE_test.

Protocol 4.2: Y-Randomization Test for Chance Correlation

Objective: To confirm the model is not the result of a chance correlation (a form of bias).

Procedure:

- Train the intended QSAR model on the training set and note R²_train.

- Randomly shuffle the activity values (y-vector) of the training set, destroying the true structure-activity relationship.

- Retrain the same model architecture on the shuffled data.

- Record the R² of the model built on scrambled data.

- Repeat the shuffle, retrain, and record steps at least 50 times to build a distribution of random R² values.
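A minimal version of the Y-randomization loop, assuming any scikit-learn regressor and the training-set R² described in the steps above. `y_randomization` is a hypothetical helper, the demo uses `Ridge` on synthetic data, and the empirical p-value uses the usual (1 + count) / (rounds + 1) permutation estimate.

```python
import numpy as np
from sklearn.base import clone
from sklearn.linear_model import Ridge
from sklearn.metrics import r2_score


def y_randomization(estimator, X_train, y_train, n_rounds=50, seed=0):
    """Training R² of the real model and of n_rounds models refit on shuffled activities."""
    rng = np.random.default_rng(seed)
    real = clone(estimator).fit(X_train, y_train)
    r2_real = r2_score(y_train, real.predict(X_train))
    r2_random = np.empty(n_rounds)
    for k in range(n_rounds):
        y_shuffled = rng.permutation(y_train)  # breaks the structure-activity link
        model = clone(estimator).fit(X_train, y_shuffled)
        r2_random[k] = r2_score(y_shuffled, model.predict(X_train))
    return r2_real, r2_random


rng = np.random.default_rng(1)
X_train = rng.normal(size=(100, 10))
y_train = X_train @ rng.normal(size=10) + rng.normal(scale=0.5, size=100)
r2_real, r2_random = y_randomization(Ridge(alpha=1.0), X_train, y_train)
# Empirical p-value: share of shuffled models that match or beat the real one
p_value = (1 + np.sum(r2_random >= r2_real)) / (len(r2_random) + 1)
print(round(r2_real, 3), round(r2_random.mean(), 3), round(p_value, 3))
```

With very flexible models such as deep Random Forests, training R² on shuffled data can stay high through memorization, so comparing cross-validated scores is often more informative; scikit-learn's `permutation_test_score` implements that cross-validated variant.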