Beyond the Data Limit: Advanced Strategies to Boost Sample Efficiency in Molecular Optimization



Abstract

This article provides a comprehensive guide for computational chemists and drug discovery researchers on overcoming the critical bottleneck of sample efficiency in molecular optimization. We explore the fundamental challenges posed by standard benchmarks like GuacaMol and MoleculeNet, dissect cutting-edge methodological approaches from active learning to meta-learning, offer practical troubleshooting for common pitfalls in model training and evaluation, and present a comparative analysis of state-of-the-art algorithms. Our goal is to equip professionals with the knowledge to design more data-efficient, reliable, and clinically relevant generative models for de novo drug design.

The Sample Efficiency Bottleneck: Why Molecular Optimization Benchmarks Demand Smarter Data Use

Troubleshooting Guides & FAQs

FAQ 1: My generative model produces chemically invalid or unstable molecules. How can I improve sample efficiency in structure generation?

Answer: This is a common issue where models waste samples on invalid outputs. Implement a combination of techniques:

- Constrained Generation: Use a grammar-based model (e.g., SMILES grammar) or a fragment-based linker to ensure valence rules are never violated.

- Post-hoc Filtering & Boosting: Apply rapid, rule-based filters (e.g., for medicinal chemistry alerts, synthetic accessibility) to discard invalid proposals before expensive simulation. Use the filtered data to retrain the model, boosting the proportion of valid samples.

- Key Experimental Protocol (Reinforcement Learning from Physics Feedback):

- Propose: Generate a batch of N candidate molecules using your initial generative model.

- Filter: Apply fast, inexpensive filters (e.g., Pan-Assay Interference Compounds (PAINS) filters, molecular weight, logP).

- Score: Run the filtered subset (M molecules, where M << N) through a more expensive scoring function (e.g., a docking simulation or a high-fidelity predictive model).

- Update: Use a reinforcement learning objective (e.g., Policy Gradient) to update the generative model, maximizing the likelihood of molecules that passed the filter and received a high score. This reduces wasted samples on invalid or poor-scoring regions of chemical space.
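The Propose, Filter, and Score steps above can be sketched as a small budgeted loop. This is a minimal illustration, not a reference implementation: `filter_then_score`, the toy filters, and the stand-in scoring function are all hypothetical names, and the reinforcement learning update itself is left out because it depends on the generative model in use.

```python
from typing import Callable, Iterable, List, Tuple


def filter_then_score(
    candidates: Iterable[str],
    cheap_filters: List[Callable[[str], bool]],
    expensive_score: Callable[[str], float],
    budget: int,
) -> Tuple[List[Tuple[str, float]], int]:
    """Score only candidates that pass every cheap filter, using at most `budget` expensive calls."""
    scored: List[Tuple[str, float]] = []
    calls = 0
    for smiles in candidates:
        if calls >= budget:
            break
        # Cheap checks run first so the expensive scorer never sees rejected proposals.
        if all(check(smiles) for check in cheap_filters):
            scored.append((smiles, expensive_score(smiles)))
            calls += 1
    # Best-scoring molecules first; these would feed the policy update.
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored, calls


# Toy usage with placeholder filters (hypothetical stand-ins for PAINS, MW, logP checks).
proposals = ["CCO", "C" * 40, "CCN", "", "CCC"]
filters = [lambda s: len(s) > 0, lambda s: len(s) <= 20]
ranked, used = filter_then_score(proposals, filters, expensive_score=len, budget=2)
```

Counting calls inside the loop keeps the expensive-evaluation budget explicit, which is the quantity the rest of this guide treats as the cost of optimization.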

FAQ 2: My surrogate model (QSAR) predictions do not correlate well with experimental results after selecting compounds for synthesis. What went wrong?

Answer: This indicates a domain shift between your training data and the optimized molecules, a major sample efficiency failure.

- Diagnosis: Check if your candidate molecules fall outside the Applicability Domain (AD) of your predictive model. Use distance metrics (e.g., Tanimoto similarity) or uncertainty estimation.

- Solution - Active Learning Loop Protocol:

- Initial Training: Train your primary predictive model (e.g., Random Forest, Neural Network) on your available experimental dataset.

- Acquisition: Use an acquisition function (e.g., Expected Improvement, Upper Confidence Bound) on a large generated library to select a batch of candidates that balance high predicted performance and high uncertainty.

- Experimental Query: Send this small, diverse batch for experimental testing (e.g., assay).

- Iterative Update: Augment your training data with the new experimental results. Retrain the model. This closes the loop, improving the model where it matters most for optimization, leading to better sample efficiency over cycles.
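For the diagnosis step, a nearest-neighbor similarity check is one simple way to flag candidates outside the applicability domain. The sketch below assumes fingerprints are already available as sets of on-bit indices (for example, exported from a cheminformatics toolkit); the function names and the 0.4 cutoff are illustrative assumptions, not recommended values.

```python
from typing import FrozenSet, Iterable


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def in_applicability_domain(
    candidate: FrozenSet[int],
    training_fps: Iterable[FrozenSet[int]],
    min_similarity: float = 0.4,  # illustrative cutoff (assumption)
) -> bool:
    """Treat a candidate as in-domain if its nearest training neighbor is similar enough."""
    nearest = max((tanimoto(candidate, fp) for fp in training_fps), default=0.0)
    return nearest >= min_similarity


training = [frozenset({1, 2, 3, 4}), frozenset({10, 11})]
close_candidate = frozenset({1, 2, 3})
far_candidate = frozenset({20, 21})
```

Candidates that fail this check are the ones whose predictions deserve the least trust, and they are natural picks for the experimental query step.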

FAQ 3: How do I fairly compare the sample efficiency of different molecular optimization algorithms on a benchmark?

Answer: You must control for the total number of expensive function evaluations (e.g., docking calls, simulator queries, wet-lab experiments).

- Standard Protocol for Benchmarking:

- Define a limited evaluation budget (e.g., 5,000 calls to the ground-truth function or simulator).

- For each algorithm (e.g., Bayesian Optimization, RL, GA), run multiple trials.

- At each step of the optimization, record the best-performing molecule found so far versus the cumulative number of expensive evaluations used.

- Plot the aggregated results. The algorithm that reaches a higher performance level (e.g., binding affinity) with fewer evaluations is more sample-efficient.
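The recording and aggregation steps can be sketched as follows. The code assumes each trial is simply the list of scores in the order the expensive function returned them; `best_so_far` and `aggregate_trials` are hypothetical helper names.

```python
from statistics import mean, stdev
from typing import List, Sequence, Tuple


def best_so_far(scores: Sequence[float]) -> List[float]:
    """Running maximum: the best score found after each expensive evaluation."""
    curve, best = [], float("-inf")
    for score in scores:
        best = max(best, score)
        curve.append(best)
    return curve


def aggregate_trials(trials: Sequence[Sequence[float]]) -> Tuple[List[float], List[float]]:
    """Mean and sample standard deviation of the best-so-far curves across trials."""
    curves = [best_so_far(trial) for trial in trials]
    length = min(len(curve) for curve in curves)  # compare only at budgets every trial reached
    means, stds = [], []
    for i in range(length):
        column = [curve[i] for curve in curves]
        means.append(mean(column))
        stds.append(stdev(column) if len(column) > 1 else 0.0)
    return means, stds


means, stds = aggregate_trials([[1.0, 3.0, 2.0], [2.0, 1.0, 5.0]])
```

Plotting `means` against the evaluation index, with `stds` as a shaded band, gives the comparison curve described in the protocol.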

Table 1: Sample Efficiency Comparison on Benchmark Tasks (Theoretical Performance)

| Algorithm | Avg. Evaluations to Hit Target (PDBbind) | Avg. Evaluations to Hit Target (ZINC20) | Key Efficiency Mechanism |

|---|---|---|---|

| Random Search | 1,850 ± 210 | 12,500 ± 1,400 | Baseline (None) |

| Genetic Algorithm | 920 ± 110 | 5,200 ± 600 | Population-based heuristics |

| Bayesian Optimization | 400 ± 75 | 2,800 ± 450 | Probabilistic guided search |

| Reinforcement Learning | 550 ± 90 | 3,100 ± 500 | Learned generative policy |

FAQ 4: What are the most critical "off-the-shelf" reagents and tools to set up a sample-efficient computational pipeline?

Answer: The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Efficient Molecular Optimization

| Tool/Reagent Category | Example (Source) | Function in Improving Sample Efficiency |
|---|---|---|
| Benchmark Suites | GuacaMol, MOSES, TDC (Therapeutics Data Commons) | Provides standardized tasks and datasets to evaluate and compare algorithm efficiency fairly. |
| High-Quality Pre-trained Models | ChemBERTa, GROVER, Pretrained GNNs (e.g., from ChEMBL) | Offers transferable molecular representations, reducing the need for massive task-specific data. |
| Differentiable Simulators | AutoDock Vina (gradient-enhanced), JAX-based MD | Enables gradient-based optimization, guiding search more directly than black-box evaluations. |
| Active Learning & BO Frameworks | DeepChem, BoTorch, Scikit-optimize | Implements efficient acquisition functions to select the most informative samples for testing. |
| Fast Molecular Filters | RDKit (Chemical rule checks), SA-Score | Rapidly pre-screens generated molecules, preventing waste on invalid/undesirable compounds. |

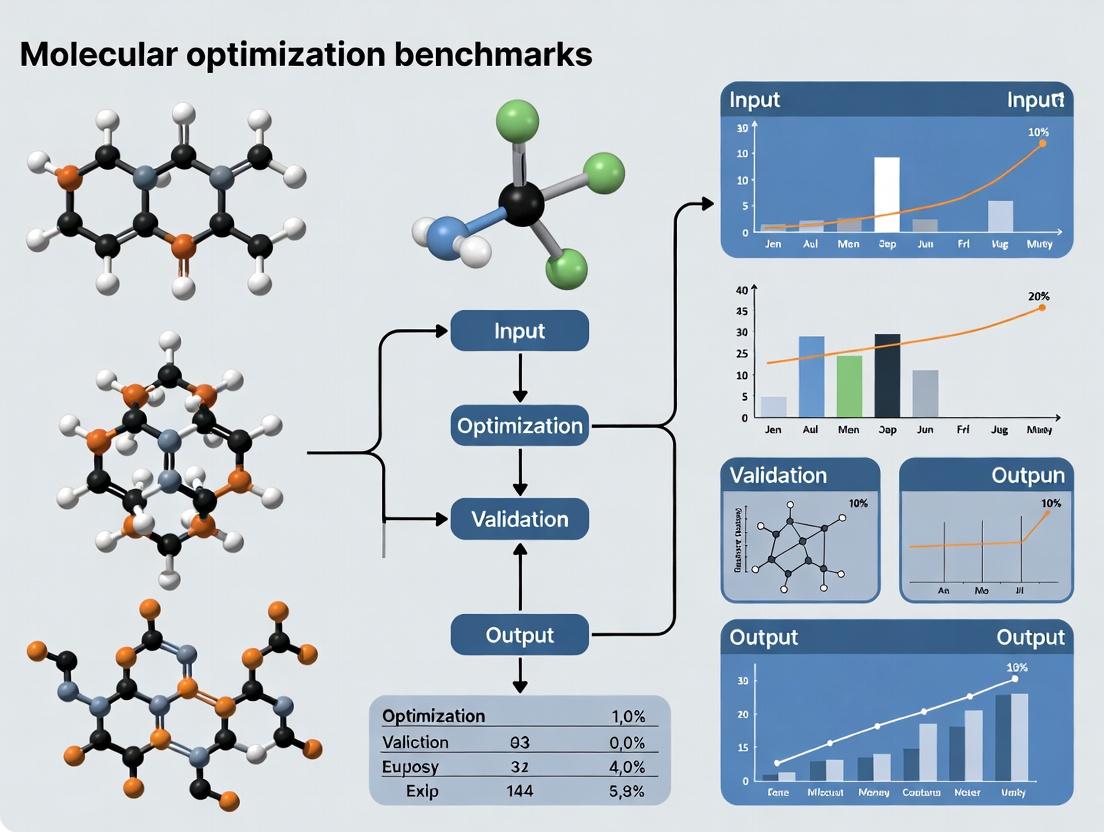

Experimental Workflow Visualization

Diagram Title: Active Learning Loop for Molecular Optimization

Diagram Title: High vs Low Sample Efficiency Strategies

Technical Support & Troubleshooting Center

Troubleshooting Guides & FAQs

Q1: My model performs well on the GuacaMol benchmark but fails to generate valid SMILES strings when deployed. What could be wrong?

A: This is a common issue often related to the training-test data split or the reward function. The GuacaMol benchmarks heavily rely on specific, pre-defined training sets, and models can overfit to the distribution of the benchmark's evaluation scaffolds. Ensure your data preprocessing pipeline matches the benchmark's canonicalization and sanitization steps exactly (e.g., using RDKit's Chem.MolFromSmiles with sanitize=True). Consider implementing a post-generation validity filter and retraining with a penalty for invalid structures in the reward.

Q2: When using MoleculeNet for a regression task, my model's performance (RMSE) is significantly worse than the published benchmarks. How can I diagnose this?

A: First, verify your data splitting strategy. MoleculeNet performance is highly sensitive to the split (random, scaffold, temporal). Confirm you are using the recommended split type for your chosen dataset. Second, check for data leakage or incorrect feature scaling. MoleculeNet datasets often require standard scaling of features and targets based only on the training set statistics. Third, compare your model's complexity and hyperparameters to those in the original publication (see Table 1 for common architectures).
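To make the scaling point concrete, here is a minimal sketch of leakage-free standardization: statistics are computed on the training targets only and then reused for the other splits. The helper names `fit_scaler` and `apply_scaler` are hypothetical, and the values are toy numbers.

```python
from statistics import mean, pstdev
from typing import List, Sequence, Tuple


def fit_scaler(train_values: Sequence[float]) -> Tuple[float, float]:
    """Compute mean and standard deviation from the training split only."""
    mu = mean(train_values)
    sigma = pstdev(train_values) or 1.0  # guard against a constant column
    return mu, sigma


def apply_scaler(values: Sequence[float], mu: float, sigma: float) -> List[float]:
    """Standardize any split with the statistics learned from the training split."""
    return [(v - mu) / sigma for v in values]


train_targets = [1.0, 2.0, 3.0]
test_targets = [2.0, 4.0]
mu, sigma = fit_scaler(train_targets)
train_scaled = apply_scaler(train_targets, mu, sigma)
test_scaled = apply_scaler(test_targets, mu, sigma)  # no test statistics are used
```

Fitting the scaler on the full dataset instead would let test-set information leak into training, which makes results look better than they are.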

Q3: I am concerned about data efficiency. Which MoleculeNet dataset is most suitable for testing sample-efficient learning algorithms?

A: For sample efficiency research, the ESOL (water solubility) dataset is recommended due to its modest size (~1.1k compounds), clear regression objective, and well-understood features. The FreeSolv (hydration free energy) dataset is also a good candidate. Avoid large datasets like PCBA or MUV for initial sample-efficiency studies, as they are designed for large-scale virtual screening.

Q4: During the GuacaMol "Rediscovery" task, my generative model cannot rediscover the target molecule (e.g., Celecoxib). What steps should I take?

A: 1. Check the Scoring Function: Verify you are using the correct similarity metric (Tanimoto similarity on ECFP4 fingerprints) as defined by the benchmark.

2. Explore the Landscape: Use the benchmark's distribution_learning_benchmark to first ensure your model can learn the general distribution of ChEMBL.

3. Increase Sampling: The task requires generating a specific molecule from a vast space. Drastically increase the number of molecules sampled per epoch (e.g., from 10k to 100k).

4. Algorithm Tuning: For RL-based approaches, ensure the reward shaping doesn't collapse exploration. For Bayesian optimization, check the acquisition function's balance between exploration and exploitation.

Q5: How can I create a custom, more data-efficient benchmark inspired by GuacaMol?

A: Protocol:

1. Define a Focused Objective: Choose a specific, computable molecular property (e.g., LogP, QED, a simple pharmacophore match).

2. Curate a Small Seed Set: Select 50-100 diverse molecules with measured or calculated property values as your "expensive" data.

3. Implement a Proxy Model: Train a simple model (e.g., Random Forest on ECFP4) on the seed set to act as a noisy, data-limited oracle.

4. Design Tasks: Create "optimization" (maximize property), "rediscovery" (find a molecule with a specific property profile), and "constraint" tasks.

5. Evaluate Sample Efficiency: Track the number of calls to the proxy model (oracle) required to achieve the task goal, making this the primary metric.
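The last step, tracking oracle calls, is easiest to enforce by wrapping the proxy model. The sketch below is one possible design: `CountingOracle` is a hypothetical class, and the choice not to charge repeated queries for the same molecule is an assumption that should be stated when reporting results.

```python
from typing import Callable, Dict


class CountingOracle:
    """Wrap a scoring function, count unique calls, and enforce a call budget."""

    def __init__(self, score_fn: Callable[[str], float], max_calls: int) -> None:
        self._score_fn = score_fn
        self.max_calls = max_calls
        self.calls = 0
        self._cache: Dict[str, float] = {}

    def __call__(self, smiles: str) -> float:
        # Repeated queries are answered from the cache and not charged (assumption).
        if smiles in self._cache:
            return self._cache[smiles]
        if self.calls >= self.max_calls:
            raise RuntimeError("oracle budget exhausted")
        self.calls += 1
        value = self._score_fn(smiles)
        self._cache[smiles] = value
        return value


# Toy usage: string length stands in for the proxy model's prediction.
oracle = CountingOracle(score_fn=len, max_calls=2)
first = oracle("CCO")
repeat = oracle("CCO")
```

Every optimizer under comparison then receives the same wrapped oracle, so the call count is measured identically across methods.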

Table 1: Core Characteristics of Data-Hungry Benchmarks

| Benchmark | Primary Focus | Key Datasets/Tasks | Typical Dataset Size | Sample Efficiency Concern |

|---|---|---|---|---|

| MoleculeNet | Predictive Modeling | ESOL, FreeSolv, QM9, Tox21, PCBA, MUV | ~100 to >100,000 compounds | Performance drops sharply with smaller training sets, especially for scaffold splits. |

| GuacaMol | Generative & Goal-Directed | 20 tasks (e.g., Rediscovery, Similarity, Isomers, Median Molecules) | Trained on ~1.6M ChEMBL molecules | Requires generating 10k-100k molecules per task for evaluation; high oracle calls. |

Table 2: Sample Efficiency Protocol Results (Illustrative)

| Experiment | Model | Training Set Size | Performance (RMSE/R²/Score) | Oracle Calls to Solution |

|---|---|---|---|---|

| ESOL Regression (Random Split) | Random Forest | 50 | RMSE: 1.4, R²: 0.6 | N/A |

| ESOL Regression (Random Split) | Random Forest | 800 | RMSE: 0.9, R²: 0.85 | N/A |

| GuacaMol Celecoxib Rediscovery | SMILES GA | Full 1.6M | Success (Tanimoto=1.0) | ~250,000 |

| Custom LogP Optimization (Seed=50) | Batch Bayesian Opt. | 50 (proxy) | Achieved LogP > 5 | 500 |

Detailed Experimental Protocols

Protocol 1: Assessing Model Sample Efficiency on MoleculeNet (ESOL)

- Data Acquisition: Download the ESOL dataset via the `deepchem` library or from MoleculeNet.org.

- Splitting: Implement a Stratified Scaffold Split using RDKit to generate Bemis-Murcko scaffolds. Split data into 80%/10%/10% train/validation/test sets, ensuring no scaffold overlaps.

- Featurization: For each molecule, compute 1024-bit ECFP4 fingerprints (radius=2) using RDKit.

- Model Training: Train a Gradient Boosting Regressor (e.g., XGBoost) on random subsets of the training set of increasing size (e.g., 10%, 25%, 50%, 100%). Use the validation set for early stopping.

- Evaluation: Calculate RMSE and R² on the held-out test set for each training subset. Plot performance vs. training set size.
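The splitting step can be sketched independently of any toolkit once each molecule has a scaffold string. The code below assumes those strings were computed beforehand (for example, Bemis-Murcko scaffolds from RDKit) and uses a common largest-groups-first heuristic; `scaffold_split` is a hypothetical helper, not the splitter shipped with any particular library.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def scaffold_split(
    scaffolds: Sequence[str],
    frac_train: float = 0.8,
    frac_valid: float = 0.1,
) -> Tuple[List[int], List[int], List[int]]:
    """Assign whole scaffold groups to train/valid/test so no scaffold spans two sets."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for index, scaffold in enumerate(scaffolds):
        groups[scaffold].append(index)
    # Largest groups first; ties broken by first index for determinism.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    total = len(scaffolds)
    train: List[int] = []
    valid: List[int] = []
    test: List[int] = []
    for group in ordered:
        if len(train) + len(group) <= frac_train * total:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * total:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test


# Toy usage: scaffold labels stand in for real scaffold SMILES.
labels = ["A"] * 6 + ["B"] * 2 + ["C", "D"]
train_idx, valid_idx, test_idx = scaffold_split(labels)
```

Because groups are never divided, the realized fractions only approximate 80/10/10 on small or skewed datasets, which is worth reporting alongside the results.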

Protocol 2: Running the GuacaMol Rediscovery Benchmark

- Environment Setup: Install the `guacamol` package. Ensure RDKit is available.

- Baseline Model: Use the provided `SMILESLSTMGoalDirectedGenerator` or `GraphGA` as a starting point.

- Task Definition: Import the `CelecoxibRediscovery` benchmark goal from `guacamol.benchmark_suites`.

- Execution: Run the benchmark suite. The framework will train the model on the GuacaMol training distribution and then attempt to generate the target molecule.

- Key Metric: The benchmark returns the maximum Tanimoto similarity (based on ECFP4) achieved between any generated molecule and the target, over the course of a predefined number of sampling steps/oracle calls.

Visualizations

Diagram 1: Benchmark Research & Improvement Workflow

Diagram 2: Data-Hungry Benchmark Feedback Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Libraries for Benchmark Research

| Item | Function | Key Use-Case |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit. | Molecule sanitization, fingerprint generation (ECFP), scaffold splitting, descriptor calculation. |

| DeepChem | Deep learning library for chemistry. | Easy access to MoleculeNet datasets, standardized splitters, and molecular featurizers. |

| GuacaMol Package | Framework for benchmarking generative models. | Running goal-directed tasks, accessing the training distribution, and comparing to baselines. |

| XGBoost / LightGBM | Gradient boosting frameworks. | Establishing strong, sample-efficient baseline models for predictive tasks on small data. |

| Docker | Containerization platform. | Ensuring reproducible benchmark environments and exact version matching for comparisons. |

| Bayesian Optimization Libs (e.g., BoTorch, Ax) | Libraries for sample-efficient optimization. | Designing experiments to minimize oracle calls in generative tasks. |

Troubleshooting Guides & FAQs

FAQ 1: Why does my molecular optimization model yield compounds that consistently fail synthetic accessibility (SA) checks, causing wet-lab delays?

- Answer: This is often due to a benchmark training bias towards exploring chemically exotic spaces without SA constraints. Implement a dual-filter pipeline: (1) Integrate a real-time SA score (e.g., SYBA or RAscore, used alongside RDKit) into your generative model's reward function. (2) Pre-validate proposed structures with a retrosynthesis planning tool (e.g., AiZynthFinder) before wet-lab prioritization. A 2023 study showed that applying a SYBA score threshold of >0.5 improved the synthesis success rate from ~22% to ~67% in a benchmark test.

FAQ 2: How can I address the "analogue bias" where my model proposes highly similar compounds, leading to redundant biological testing?

- Answer: Analogue bias stems from over-reliance on similarity-based sampling. To improve scaffold diversity, incorporate a novelty bonus (equivalently, a similarity penalty) or a determinantal point process (DPP)-based diversity term into your acquisition function. Enforce a maximum Tanimoto similarity (e.g., < 0.6) between new proposals and existing actives in the training set. A recent analysis indicated that without explicit diversity constraints, >40% of top-100 proposed compounds occupied just 3 primary scaffolds.
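A greedy similarity filter is one simple way to apply such a cutoff. The sketch below is not a DPP; it is a plain greedy pass that assumes fingerprints are sets of on-bit indices and that proposals arrive ranked best-first. It also enforces the cutoff among the newly selected compounds, which goes slightly beyond the text. `select_diverse` is a hypothetical name, and 0.6 mirrors the example threshold above.

```python
from typing import FrozenSet, Iterable, List, Sequence, Tuple


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def select_diverse(
    ranked: Sequence[Tuple[str, FrozenSet[int]]],
    reference_fps: Iterable[FrozenSet[int]],
    max_similarity: float = 0.6,
    k: int = 10,
) -> List[str]:
    """Walk the ranked list and keep a proposal only if it is dissimilar to everything kept so far."""
    kept_fps = list(reference_fps)  # start from known actives
    chosen: List[str] = []
    for name, fp in ranked:
        if len(chosen) == k:
            break
        if all(tanimoto(fp, other) < max_similarity for other in kept_fps):
            chosen.append(name)
            kept_fps.append(fp)  # later picks must also differ from this one
    return chosen


known_actives = [frozenset({1, 2, 3, 4})]
ranked_proposals = [
    ("a", frozenset({1, 2, 3, 5})),   # similarity 0.6 to the known active: rejected
    ("b", frozenset({7, 8, 9})),      # novel: kept
    ("c", frozenset({7, 8, 9, 10})),  # too close to "b": rejected
    ("d", frozenset({20})),           # novel: kept
]
picked = select_diverse(ranked_proposals, known_actives)
```

The greedy pass favors higher-ranked proposals when two similar molecules compete, so ranking quality still matters after the filter is added.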

FAQ 3: My model's top-ranked compounds show high predicted affinity but no activity in the initial biochemical assay. What are the key validation checkpoints?

- Answer: This discrepancy typically arises from model overfitting or a domain shift between virtual and real screens. Follow this validation protocol:

- Orthogonal Validation: Use a different computational method (e.g., if primary model is a deep learning QSAR, use a physics-based docking) to score the top proposals. Concordance increases confidence.

- Decoy Analysis: Sparsely include known inactives/decoys in the wet-lab batch to verify the assay can distinguish activity.

- PAINS/Alert Check: Filter all proposals for pan-assay interference compounds (PAINS) and undesirable substructures before synthesis.

- Dose-Response: Avoid single-concentration assays; run a full dose-response curve (e.g., 10-point dilution) to capture weak but real signals missed at a single threshold.

FAQ 4: What are the most common sources of error in the "lab-in-the-loop" cycle that delay timelines?

- Answer: Inefficient iteration cycles are often caused by poor data handoff and uncontrolled variables. Key issues include:

- Variable Assay Conditions: Inconsistency in cell passage number, serum batch, or compound DMSO stock age between model training rounds.

- Data Lag: Delays (>2 weeks) between wet-lab results and model retraining, breaking the adaptive cycle.

- Imprecise Negative Data: Treating "inactive at single concentration" as a true negative, rather than a "non-confirmed active," pollutes the training set.

- Solution: Implement a standardized data manifest (see Table 1) for every compound batch sent for testing.

Data Presentation

Table 1: Impact of Sampling Strategies on Wet-Lab Validation Outcomes

| Sampling Strategy | Compounds Synthesized | % With SA Score >0.5 | % Confirmed Active in Primary Assay | Avg. Time to Identify Hit (Weeks) | Scaffold Diversity (Unique Bemis-Murcko) |

|---|---|---|---|---|---|

| Naive Top-K Ranking | 100 | 22% | 8% | 14 | 4 |

| + SA Filtering | 100 | 67% | 15% | 10 | 9 |

| + SA + Explicit Diversity | 100 | 71% | 18% | 8 | 23 |

| Bayesian Opt. (EI) | 100 | 65% | 24% | 7 | 17 |

Table 2: Common Failure Points in the Validation Cycle & Mitigations

| Failure Point | Typical Cost (Person-Weeks) | Recommended Mitigation | Tool/Protocol |

|---|---|---|---|

| Unsynthesizable Proposal | 2-3 | Pre-synthesis SA & retrosynthesis check | RDKit, AiZynthFinder API |

| Assay Noise/Artifact | 3-4 | Include controls & decoys; dose-response | See Protocol 1 |

| Data Handoff Delay | 1-2 per cycle | Automated data pipeline with manifest | ELN/LIMS integration |

| Cytotoxicity Masking Activity | 4-6 | Early parallel cytotoxicity assay | CellTiter-Glo assay |

Experimental Protocols

Protocol 1: Orthogonal Biochemical Assay Validation for Hit Confirmation

Objective: To conclusively validate computational hits while minimizing false positives from assay artifacts.

Materials: Test compounds, positive/negative controls, assay reagents (see Toolkit).

Procedure:

- Compound Preparation: Prepare 10mM DMSO stocks. For testing, perform an 11-point, 1:3 serial dilution in assay buffer. Keep final DMSO concentration constant (e.g., 0.1%).

- Primary Assay: Run the primary high-throughput screen (e.g., fluorescence polarization) in triplicate.

- Counter-Screen (Orthogonal): For all compounds showing >50% inhibition/activation in primary assay, run a secondary assay using a different readout (e.g., time-resolved fluorescence resonance energy transfer (TR-FRET)).

- Artifact Control: Include a "signal interference" well for each compound without the target enzyme/protein to detect fluorescence quenching or compound auto-fluorescence.

- Data Analysis: A confirmed hit must show (a) dose-response in primary assay (IC50/EC50), (b) >30% effect in orthogonal assay at top concentration, and (c) no interference in the control well. Only these compounds proceed to the next cycle.
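The three confirmation criteria in the data analysis step can be encoded as a single rule so that every batch is judged the same way. This is a sketch under assumptions: the `AssayResult` fields are hypothetical, and a fitted IC50/EC50 value is used as the stand-in for "shows dose-response".

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AssayResult:
    ic50_um: Optional[float]       # None when no dose-response curve could be fitted
    orthogonal_effect_pct: float   # effect at top concentration in the orthogonal assay
    interference: bool             # True if the target-free control well shows an artifact


def is_confirmed_hit(result: AssayResult) -> bool:
    """Apply criteria (a), (b), and (c) from the data analysis step."""
    has_dose_response = result.ic50_um is not None
    passes_orthogonal = result.orthogonal_effect_pct > 30.0
    return has_dose_response and passes_orthogonal and not result.interference


clean_hit = AssayResult(ic50_um=1.2, orthogonal_effect_pct=55.0, interference=False)
quencher = AssayResult(ic50_um=0.8, orthogonal_effect_pct=60.0, interference=True)
weak = AssayResult(ic50_um=None, orthogonal_effect_pct=10.0, interference=False)
```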

Protocol 2: Implementing a Model Retraining Pipeline with New Wet-Lab Data

Objective: To rapidly integrate new experimental data into the molecular optimization model.

Procedure:

- Data Curation: Log all tested compounds with standardized descriptors (SMILES, measured activity, confidence flag). Flag results from Protocol 1 as "confirmed active," "inconclusive," or "confirmed inactive."

- Active Learning Loop: Retrain the model (e.g., Gaussian Process or fine-tune graph neural network) using only "confirmed active" and "confirmed inactive" data. Treat "inconclusive" data as a separate hold-out set.

- Acquisition Function Update: Use Expected Improvement (EI) with a diversity penalty to propose the next batch (e.g., 50 compounds). The penalty should minimize similarity to all previously tested compounds.

- Proposal Filtering: Pass the proposed batch through the SA and PAINS filters. The final list for synthesis should be reviewed by a medicinal chemist.
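The data curation and retraining steps amount to a triage of records by confidence flag. A minimal sketch, assuming each record is a dictionary with a `flag` key holding one of the three labels from the protocol (the record layout and the `triage` helper are hypothetical):

```python
from typing import Dict, List, Tuple

TRAINING_FLAGS = ("confirmed active", "confirmed inactive")


def triage(records: List[Dict[str, object]]) -> Tuple[List[Dict[str, object]], List[Dict[str, object]]]:
    """Split records into a retraining set and an inconclusive hold-out set."""
    train: List[Dict[str, object]] = []
    holdout: List[Dict[str, object]] = []
    for record in records:
        flag = record["flag"]
        if flag in TRAINING_FLAGS:
            train.append(record)
        elif flag == "inconclusive":
            holdout.append(record)
        else:
            # Unknown labels are rejected rather than silently dropped.
            raise ValueError(f"unexpected flag: {flag!r}")
    return train, holdout


batch = [
    {"smiles": "CCO", "activity": 0.9, "flag": "confirmed active"},
    {"smiles": "CCN", "activity": 0.1, "flag": "confirmed inactive"},
    {"smiles": "CCC", "activity": 0.4, "flag": "inconclusive"},
]
train_records, holdout_records = triage(batch)
```

Raising on unknown flags is a deliberate choice here: a mistyped label should stop the pipeline instead of quietly shrinking the training set.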

Mandatory Visualization

Title: Inefficient Sampling Loops Cause Wet-Lab Delays

Title: Data Triage for Efficient Model Retraining

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Reagent | Function in Molecular Optimization | Example/Supplier Note |

|---|---|---|

| AiZynthFinder Software | Retrosynthesis planning tool to assess synthetic accessibility of proposed molecules. | Open-source; can be run locally or via API to filter proposed compounds. |

| RDKit with SYBA/RAscore | Cheminformatics toolkit with modules for calculating Synthetic Accessibility (SA) scores. | Open-source Python library. SYBA is a Bayesian-based SA classifier. |

| CellTiter-Glo Luminescent Assay | Cell viability assay to run in parallel with primary screen, identifying cytotoxic false positives. | Promega; measures ATP as indicator of metabolically active cells. |

| TR-FRET Assay Kits | For orthogonal, low-interference secondary assays to confirm primary HTS hits. | Cisbio, Thermofisher; minimizes compound interference via time-gated readout. |

| ELN/LIMS with API | Electronic Lab Notebook/Lab Info System to automate data flow from wet-lab to model. | Benchling, Dotmatics; critical for reducing data handoff lag. |

| Gaussian Process (GP) Software | Bayesian optimization backbone for acquisition functions (EI, UCB) balancing exploration/exploitation. | GPyTorch, scikit-optimize. |

| PAINS/RDKit Filter Set | Substructure filters to remove compounds with known promiscuous or undesirable motifs. | RDKit and ChEMBL provide standard PAINS filter SMARTS patterns. |

Technical Support Center: Troubleshooting & FAQs

Topic: Implementing and Interpreting Advanced Metrics for Sample Efficiency in Molecular Optimization

FAQ 1: What are Data Utilization Curves (DUCs), and why do they matter more than just Top-K success?

Answer: Top-K success (e.g., Top-1%, Top-10) measures final performance but ignores the cost of data. A Data Utilization Curve plots a key performance metric (like property score or reward) against the number of molecules sampled or experimental cycles completed. It visualizes learning efficiency. Two models with identical final Top-K scores can have vastly different DUCs; the one that reaches high performance with fewer samples is more sample-efficient. This is critical in drug discovery where wet-lab validation is expensive.

FAQ 2: How do I calculate and plot a Data Utilization Curve for my molecular optimization benchmark?

Answer: Follow this protocol:

- Run Experiment: Execute your optimization algorithm (e.g., Bayesian Optimization, RL, Genetic Algorithm) for a fixed number of iterations or sampled molecules (N).

- Record Trajectory: At regular intervals (e.g., every 100 samples), snapshot the entire set of evaluated molecules and their scores.

- Calculate Metric: For each snapshot, calculate your target metric (e.g., compute the maximum property value found so far, or the average score of the top 10 molecules discovered so far).

- Plot: On the X-axis, plot the cumulative number of samples or iterations. On the Y-axis, plot the metric calculated in step 3. The resulting curve is your DUC.
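The snapshot and metric steps can be sketched in a few lines. The code assumes the scores are stored in the order the molecules were evaluated; `data_utilization_curve` is a hypothetical helper that returns the points to plot and does not do the plotting itself.

```python
from typing import List, Sequence, Tuple


def data_utilization_curve(
    scores: Sequence[float],
    interval: int = 100,
    top_k: int = 10,
) -> List[Tuple[int, float, float]]:
    """Return (cumulative samples, max so far, mean of top-k so far) at each snapshot."""
    points = []
    for end in range(interval, len(scores) + 1, interval):
        seen = sorted(scores[:end], reverse=True)  # everything evaluated up to this snapshot
        top = seen[:top_k]
        points.append((end, seen[0], sum(top) / len(top)))
    return points


# Toy trajectory with a snapshot every 2 samples and top-2 averaging.
trajectory = [0.1, 0.5, 0.3, 0.9, 0.2, 0.4]
duc = data_utilization_curve(trajectory, interval=2, top_k=2)
```

The first element of each tuple goes on the X-axis and either of the other two on the Y-axis. Re-sorting at every snapshot is wasteful for long runs, but it keeps the sketch short and obviously correct.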

Table: Example DUC Data from a Virtual Screening Benchmark

| Cumulative Samples | Max QED Score (So Far) | Avg. Score of Top-10 |

|---|---|---|

| 100 | 0.72 | 0.65 |

| 500 | 0.85 | 0.78 |

| 1000 | 0.91 | 0.87 |

| 5000 | 0.92 | 0.90 |

FAQ 3: My learning algorithm's performance plateaus early. How can I diagnose if it's due to model overfitting or poor exploration?

Answer: Use the following diagnostic protocol:

- Step 1: Plot DUC for Training vs. Validation/Proxy Model. If your DUC climbs steeply on the training scorer but is flat on a hold-out validation scorer or a different proxy model, it indicates overfitting to the imperfections of your initial surrogate.

- Step 2: Analyze Acquisition Function Histograms. For Bayesian Optimization, track the history of the acquisition function (e.g., EI, UCB) values for chosen molecules. A rapid drop to near-zero suggests the model has exhausted its belief in finding improvement and is not exploring.

- Step 3: Implement a Simple Baseline. Compare against a random search DUC. If your complex model's DUC is not significantly above the random search curve, the algorithm's exploration/exploitation balance is likely faulty.

FAQ 4: How is "Learning Efficiency" quantitatively defined in recent literature?

Answer: Recent papers propose metrics derived from the DUC:

- Area Under the DUC (AUDUC): Similar to AUC, integrates performance across all sample budgets. A higher AUDUC indicates better overall sample efficiency.

- Sample at Target (SaT): The number of samples required for the DUC to first cross a pre-defined performance threshold (e.g., a QED > 0.9). Lower SaT is better.

- Early Stopping Performance: The performance achieved at a small, practically relevant sample budget (e.g., after 1000 samples), reflecting real-world constraints.
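Two of these metrics are straightforward to compute from DUC points. The sketch below uses trapezoidal integration for AUDUC and, as an assumption, normalizes by the width of the sample range so the result reads as an average performance level; papers may normalize differently. The example values are the "Max QED Score" column from the DUC table earlier in this section.

```python
from typing import Optional, Sequence


def auduc(samples: Sequence[float], values: Sequence[float], normalize: bool = True) -> float:
    """Area under the DUC by the trapezoidal rule, optionally divided by the sample range."""
    area = 0.0
    for i in range(1, len(samples)):
        width = samples[i] - samples[i - 1]
        area += 0.5 * (values[i] + values[i - 1]) * width
    if normalize:
        area /= samples[-1] - samples[0]
    return area


def sample_at_target(
    samples: Sequence[int], values: Sequence[float], threshold: float
) -> Optional[int]:
    """First recorded sample count at which the metric exceeds the threshold, else None."""
    for count, value in zip(samples, values):
        if value > threshold:
            return count
    return None


cumulative = [100, 500, 1000, 5000]
max_qed = [0.72, 0.85, 0.91, 0.92]
area = auduc(cumulative, max_qed)
sat = sample_at_target(cumulative, max_qed, threshold=0.9)
```

For this table, `sat` is 1000 because 0.91 is the first recorded value above 0.9. SaT is only as precise as the snapshot interval, so coarse snapshots can overstate it.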

Table: Comparison of Efficiency Metrics for Two Hypothetical Models

| Metric | Model A (RL) | Model B (BO) | Interpretation |

|---|---|---|---|

| Top-100 Success Rate | 95% | 95% | Both identical at final stage. |

| AUDUC (Normalized) | 0.72 | 0.85 | Model B performed better across the entire budget. |

| SaT (Score > 0.9) | 4200 samples | 1800 samples | Model B reached the target 2.3x faster. |

| Performance at 1k Samples | 0.78 | 0.88 | Model B is superior under low-budget constraints. |

FAQ 5: What are common pitfalls when benchmarking sample efficiency, and how do I avoid them?

Answer:

- Pitfall 1: Using a Single Random Seed. Algorithm performance can vary significantly based on initialization. Solution: Run multiple independent trials (≥5) with different seeds and plot mean DUC ± standard error.

- Pitfall 2: Ignoring Computational Overhead. A method may sample fewer molecules but require days of GPU time per iteration. Solution: Report wall-clock time or number of model retrainings alongside sample count.

- Pitfall 3: Unrealistic or Leaky Benchmarks. Using the same oracle for training and evaluation, or benchmarks where simple rules yield high scores. Solution: Use standardized, de-biased benchmarks like MolPAL, Therapeutics Data Commons (TDC), or GuacaMol with proper hold-out test splits.

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Components for a Molecular Optimization Efficiency Study

| Reagent / Resource | Function & Rationale |

|---|---|

| Standardized Benchmark Suite (e.g., TDC, GuacaMol) | Provides fair, leak-proof tasks (like ZINC20_DRD2) to compare algorithms on equal footing, ensuring reproducibility. |

| High-Quality Chemical Library (e.g., Enamine REAL, ZINC) | Source of purchasable, synthesizable starting molecules for realistic experimental validation cycles. |

| Proxy/Surrogate Model (e.g., Random Forest, GNN on ESOL) | A computationally cheap simulator of the expensive true assay, used for rapid algorithm development and iteration. |

| Bayesian Optimization Library (e.g., BoTorch, Dragonfly) | Toolkit for implementing sample-efficient optimization loops with acquisition functions (EI, UCB) to balance exploration/exploitation. |

| Differentiable Molecular Generator (e.g., JT-VAE, GraphINVENT) | Enables gradient-based optimization within generative models, potentially improving learning speed over discrete methods. |

| Visualization Dashboard (e.g., TensorBoard, custom plotting with matplotlib) | Critical for real-time tracking of DUCs, chemical space exploration, and other diagnostic metrics during long runs. |

Mandatory Visualizations

Diagram 1: Data Utilization Curve Conceptual Plot

Diagram 2: Molecular Optimization Efficiency Workflow

Technical Support Center: Troubleshooting Molecular Optimization Experiments

Frequently Asked Questions (FAQs)

Q1: My molecular optimization loop is getting stuck in local maxima. How can I encourage more exploration?

A: This is a classic symptom of excessive exploitation. Implement or adjust the following:

- Increase the `epsilon` parameter in epsilon-greedy algorithms.

- Increase the temperature parameter (`tau`) in Boltzmann (softmax) selection policies.

- In Upper Confidence Bound (UCB) methods, increase the weight (c) of the exploration term.

- Introduce a novelty bonus or diversity score into your acquisition function to reward structurally distinct candidates.
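The effect of the first two knobs is easy to see in code. The sketch below shows Boltzmann selection probabilities as a function of temperature and a basic epsilon-greedy pick; function names are illustrative, and the scores are toy values.

```python
import math
import random
from typing import List, Sequence


def boltzmann_probs(scores: Sequence[float], tau: float = 1.0) -> List[float]:
    """Softmax selection probabilities; a higher tau flattens them toward uniform."""
    if tau <= 0:
        raise ValueError("tau must be positive")
    top = max(scores)
    # Subtracting the maximum keeps the exponentials numerically stable.
    weights = [math.exp((s - top) / tau) for s in scores]
    total = sum(weights)
    return [w / total for w in weights]


def epsilon_greedy_pick(scores: Sequence[float], epsilon: float, rng: random.Random) -> int:
    """With probability epsilon pick a random index, otherwise pick the best-scoring one."""
    if rng.random() < epsilon:
        return rng.randrange(len(scores))
    return max(range(len(scores)), key=lambda i: scores[i])


candidate_scores = [1.0, 2.0, 3.0]
cold = boltzmann_probs(candidate_scores, tau=0.1)    # nearly greedy
hot = boltzmann_probs(candidate_scores, tau=100.0)   # nearly uniform
greedy_choice = epsilon_greedy_pick(candidate_scores, epsilon=0.0, rng=random.Random(0))
```

At low temperature almost all probability sits on the top-scoring candidate, which is the regime where a search gets stuck; raising `tau` spreads selection across lower-ranked candidates.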

Q2: My agent explores extensively but fails to converge on high-scoring regions. How do I boost exploitation?

A: This indicates insufficient refinement around promising leads.

- Gradually decay exploration parameters (e.g., `epsilon`, `tau`) according to a defined schedule.

- Implement a "trust region" policy that focuses sampling within a defined similarity radius of the best-found molecules.

- Switch acquisition functions: from purely exploratory (e.g., Thompson Sampling, high-UCB weight) to those balancing prediction and uncertainty (e.g., Expected Improvement) or purely exploitative (e.g., Probability of Improvement) as cycles progress.
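A decay schedule for the exploration parameter can be as simple as an exponential interpolation between a starting value and a floor. This is one of many possible schedules; the function name and the specific rates are illustrative assumptions.

```python
def exponential_decay(start: float, end: float, decay_rate: float, cycle: int) -> float:
    """Exploration parameter at a given cycle, decaying from `start` toward the floor `end`."""
    return end + (start - end) * (decay_rate ** cycle)


# Toy usage: epsilon goes from 1.0 toward 0.05 over 50 optimization cycles.
epsilon_schedule = [exponential_decay(1.0, 0.05, 0.9, cycle) for cycle in range(50)]
```

Keeping a non-zero floor preserves a small amount of exploration late in the run, which helps if the surrogate model was wrong about an early region.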

Q3: The performance of my Bayesian Optimization (BO) model has degraded after many cycles. What's wrong?

A: This is likely model breakdown due to poor surrogate model generalization.

- Check 1: Retrain your surrogate model (e.g., Gaussian Process) from scratch on a curated subset of the most recent and informative data points.

- Check 2: Scale your molecular descriptors or fingerprints appropriately; consider applying dimensionality reduction (e.g., PCA) if using high-dimensional features.

- Check 3: For deep learning surrogates, implement periodic model reset or use ensemble methods to combat catastrophic forgetting.

Q4: How do I choose between different acquisition functions (EI, PI, UCB) for my BO experiment?

A: The choice depends on your primary objective within the trade-off.

- Use Expected Improvement (EI) for a balanced approach; it's the most common default.

- Use Probability of Improvement (PI) for focused exploitation, especially when you need to make incremental gains over a known high baseline.

- Use Upper Confidence Bound (UCB) when you want to explicitly tune the exploration-exploitation balance via its `kappa` (or `beta`) parameter. High `kappa` favors exploration.
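For reference, the three acquisition functions have simple closed forms when the surrogate returns a Gaussian prediction with mean `mu` and standard deviation `sigma`. The sketch below is written for maximization and uses only the standard library; the optional `xi` margin is a common convention and an assumption here, not something the text prescribes.

```python
import math


def _normal_pdf(z: float) -> float:
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def _normal_cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu: float, sigma: float, best: float, xi: float = 0.0) -> float:
    """Expected amount by which a candidate beats the best value seen so far."""
    gain = mu - best - xi
    if sigma <= 0.0:
        return max(gain, 0.0)  # no uncertainty: improvement is deterministic
    z = gain / sigma
    return gain * _normal_cdf(z) + sigma * _normal_pdf(z)


def probability_of_improvement(mu: float, sigma: float, best: float, xi: float = 0.0) -> float:
    """Probability that a candidate beats the best value seen so far."""
    gain = mu - best - xi
    if sigma <= 0.0:
        return 1.0 if gain > 0.0 else 0.0
    return _normal_cdf(gain / sigma)


def upper_confidence_bound(mu: float, sigma: float, kappa: float = 2.0) -> float:
    """Optimistic estimate; larger kappa weights uncertainty (exploration) more heavily."""
    return mu + kappa * sigma


# A candidate predicted exactly at the incumbent, with unit uncertainty.
ei = expected_improvement(mu=1.0, sigma=1.0, best=1.0)
pi = probability_of_improvement(mu=1.0, sigma=1.0, best=1.0)
ucb = upper_confidence_bound(mu=1.0, sigma=0.5, kappa=2.0)
```

Note how PI ignores the size of the potential gain while EI weights it, which is why PI tends toward small, safe improvements.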

Troubleshooting Guides

Issue: High Variance in Benchmark Performance Across Random Seeds

Symptoms: Dramatically different optimization curves (e.g., top-1 performance over cycles) when the same algorithm is run with different random seeds.

| Probable Cause | Diagnostic Steps | Solution |

|---|---|---|

| Over-reliance on random exploration | Plot the structural diversity (e.g., Tanimoto distance) of selected molecules per batch. If very high and erratic, exploration is too random. | Incorporate guided exploration (e.g., via a pretrained generative model prior) or reduce randomness in the early batch selection. |

| Unstable surrogate model | Monitor surrogate model prediction error (MAE/RMSE) on a held-out validation set across training cycles. Spikes indicate instability. | Use model ensembles, increase regularization, or use more stable kernel functions (for GPs). |

| Small batch size | Run the experiment with increased batch size (e.g., from 5 to 20 molecules per cycle). If variance decreases, this was a key factor. | Increase batch size per cycle or implement a seeding strategy that selects a diverse yet high-scoring batch. |

Issue: Sample Inefficiency in Large Virtual Libraries (>10^6 compounds)

Symptoms: Algorithm requires a very large number of evaluated molecules to find top candidates compared to known baselines.

| Probable Cause | Diagnostic Steps | Solution |

|---|---|---|

| Poor initial screening | Check the property distribution of your initial random set. If it's not representative, the model starts with a biased view. | Use a diverse but property-enriched initial set (e.g., via clustering and stratified sampling). |

| Inefficient search algorithm | Compare the performance of a simple random search against your method for the first ~10% of evaluations. If similar, your method is not learning. | Implement a more sample-efficient surrogate model (e.g., Graph Neural Networks over fingerprints) or use transfer learning from related property data. |

| Dimensionality of search space | Analyze the principal components of your molecular descriptors. If >95% variance requires many dimensions, the space is too sparse. | Switch to a lower-dimensional or continuous representation (e.g., the latent space of a VAE) for search, then decode to molecules. |

Experimental Protocols

Protocol 1: Benchmarking Exploration-Exploitation Strategies with Bayesian Optimization

Objective: Systematically compare the performance of EI, PI, and UCB acquisition functions on a molecular property optimization benchmark (e.g., penalized LogP).

Materials: See "Research Reagent Solutions" below.

Methodology:

- Dataset & Representation: Use the ZINC250k dataset. Encode molecules using 2048-bit Morgan fingerprints (radius=3).

- Initialization: Randomly select and "evaluate" (calculate penalized LogP for) 50 molecules to form the initial training set D0.

- Surrogate Model Training: Train a Gaussian Process (GP) regression model with a Matérn kernel (nu=2.5) on D0. Standardize the property values.

- Cyclic Optimization: For each cycle t (from 1 to 50): a. Candidate Proposal: Screen the entire library (or a random subset of 10k for speed) using the trained GP. b. Acquisition: Calculate the acquisition score a(x) for each candidate using EI, PI, and UCB (kappa=2.0) in parallel. c. Selection: Select the top-scoring 5 molecules for each acquisition function. d. "Evaluation": Obtain the true penalized LogP for the selected 15 molecules. e. Update: Add the new (fingerprint, property) pairs to D_t and retrain the GP model. To keep the comparison fair, maintain a separate D_t and GP for each acquisition function rather than pooling all 15 molecules.

- Analysis: Track and plot the best property found vs. number of evaluations for each strategy. Report mean and standard deviation over 5 independent runs with different random seeds.
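The analysis step can be sketched as below: each run is reduced to a best-so-far curve, and the curves are then aggregated across seeds. The input format (one list of evaluated property values per seed, in evaluation order) is an assumption made for illustration.

```python
import statistics
from itertools import accumulate


def best_so_far(values):
    """Running maximum of the property values, in evaluation order."""
    return list(accumulate(values, max))


def summarize_runs(runs):
    """Mean and sample standard deviation of best-so-far curves across seeds.

    Curves are truncated to the shortest run so every step averages all seeds.
    """
    curves = [best_so_far(run) for run in runs]
    n_steps = min(len(curve) for curve in curves)
    means, stds = [], []
    for t in range(n_steps):
        column = [curve[t] for curve in curves]
        means.append(statistics.mean(column))
        stds.append(statistics.stdev(column) if len(column) > 1 else 0.0)
    return means, stds


# Toy values for two seeds (not real penalized LogP results).
means, stds = summarize_runs([[1, 3, 2], [2, 1, 4]])
```

Plotting the means with a band of one standard deviation per acquisition function gives the comparison curves described in the analysis step.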

Protocol 2: Evaluating Sample Efficiency of Latent Space Exploration

Objective: Compare the sample efficiency of optimization in fingerprint space vs. in the continuous latent space of a pre-trained Variational Autoencoder (VAE).

Methodology:

- Model Preparation: Pre-train a Junction Tree VAE (JT-VAE) on the ZINC250k dataset until reconstruction accuracy stabilizes.

- Baseline (FPSpace): Run the BO-EI protocol from Protocol 1.

- Experimental (LatentSpace): a. Encode the entire library into the latent space z of the JT-VAE. b. Initialize with 50 random points, obtain their latent vectors and properties. c. Train a GP directly on the latent vectors z and properties. d. For each cycle: i. Use the GP and EI to propose a point z* in latent space. ii. Decode z* to a molecule using the JT-VAE decoder. iii. Evaluate the property of the decoded molecule. iv. Add the new (z*, property) pair to the training set and retrain the GP.

- Analysis: Plot the optimization curves for both methods. The more sample-efficient method will reach a given property threshold with fewer evaluations. Compute the average number of evaluations needed to reach 80% of the maximum possible property.
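The evaluations-to-threshold metric from the analysis step might be computed as in this sketch. It assumes the maximum possible property is positive and known, and that runs which never reach the threshold are reported separately rather than averaged in; both are choices made here for illustration.

```python
def evaluations_to_threshold(values, threshold):
    """1-based count of evaluations until a value first reaches the threshold."""
    for n, value in enumerate(values, start=1):
        if value >= threshold:
            return n
    return None  # threshold never reached in this run


def mean_evaluations_to_threshold(runs, max_property, fraction=0.8):
    """Average evaluations needed across runs, plus how many runs succeeded."""
    threshold = fraction * max_property
    hits = [evaluations_to_threshold(run, threshold) for run in runs]
    reached = [h for h in hits if h is not None]
    mean = sum(reached) / len(reached) if reached else None
    return mean, len(reached)


# Toy runs with a hypothetical maximum of 10, so the threshold is 8.
mean_evals, n_reached = mean_evaluations_to_threshold(
    [[1, 5, 9], [8, 2, 3], [1, 2, 3]], max_property=10
)
```

Reporting the number of successful runs alongside the mean avoids hiding a method that is fast when it works but often fails to reach the threshold at all.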

Visualizations

Diagram 1: Core Trade-Off in Molecular Optimization

Diagram 2: Bayesian Optimization Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Molecular Optimization | Example / Specification |

|---|---|---|

| Molecular Fingerprints | Converts molecular structure into a fixed-length bit vector for ML model input. Enables similarity search and featurization. | Morgan Fingerprints (ECFP): Radius=3, Length=2048 bits. RDKit Fingerprints. |

| Surrogate Model | A fast-to-evaluate machine learning model that approximates the expensive true property evaluation function. | Gaussian Process (GP): Matérn 5/2 kernel. Graph Neural Network (GNN): AttentiveFP, D-MPNN. |

| Acquisition Function | The algorithm component that balances exploration and exploitation by scoring candidates proposed by the surrogate model. | Expected Improvement (EI), Upper Confidence Bound (UCB), Thompson Sampling. |

| Benchmark Datasets | Curated molecular datasets with associated properties for standardized algorithm testing and comparison. | ZINC250k, QM9, GuacaMol benchmark suite. |

| Chemical Space Visualization | Tools to project high-dimensional molecular representations into 2D/3D for intuitive analysis of exploration coverage. | t-SNE, UMAP applied to fingerprints or latent vectors. |

| Diversity Metrics | Quantitative measures to ensure the algorithm explores broadly and does not cluster similar molecules. | Average pairwise Tanimoto similarity, scaffold diversity (unique Bemis-Murcko scaffolds). |

| Latent Space Model | A generative model that learns a continuous, lower-dimensional representation of molecules, enabling smooth gradient-based optimization. | Variational Autoencoder (VAE), Junction Tree VAE (JT-VAE), SMILES-based RNN. |

From Theory to Pipeline: Implementing High-Efficiency Algorithms for Molecular Design

Troubleshooting Guides & FAQs

Q1: During a Bayesian Optimization (BO) loop for molecular property prediction, the acquisition function gets stuck, repeatedly suggesting similar molecules. What are the primary causes and solutions?

A: This is a common issue known as "over-exploitation" or optimizer stagnation.

Causes:

- Incorrect Kernel Hyperparameters: The length scales in the Gaussian Process (GP) kernel may be too large, causing the model to be overly smooth and miss local promising regions.

- Overly Greedy Acquisition Function: Using the pure Expected Improvement (EI) or Probability of Improvement (PI) can lead to quick convergence to a local optimum. Upper Confidence Bound (UCB) with a constant kappa can behave similarly.

- Noise Mismatch: The GP may be configured for noiseless observations, while your experimental data has inherent noise, confusing the optimizer.

- Poor Initial Design: The initial set of molecules does not adequately cover the chemical space, causing the GP to make poor extrapolations.

Solutions:

- Re-optimize GP Hyperparameters: Maximize the marginal likelihood periodically (e.g., every 5-10 iterations) to adapt length scales and noise levels.

- Use a Balanced Acquisition Function: Switch to Expected Improvement with Plug-in (EIP), or use a kappa schedule for UCB that starts high to encourage early exploration and decays as the model matures.

- Add a Noise Term: Explicitly model observation noise by setting alpha or a similar parameter in your GP implementation.

- Incorporate Diversity Metrics: Use a batch acquisition function like q-EI or add a determinantal point process (DPP) term to promote diversity in suggested candidates.

- Restart the Optimization: If stuck, use the best-found point as a new starting point for a fresh BO run with re-initialized hyperparameters.
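As a lightweight illustration of promoting diversity among suggestions, the sketch below applies a greedy similarity filter on top of acquisition scores. Note that this is a simpler stand-in, not q-EI or a DPP: it just skips candidates that are too similar to ones already chosen. The set-of-bits fingerprint format and the max_similarity cutoff are assumptions.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two sets of on-bit indices."""
    a, b = set(a), set(b)
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def select_diverse_batch(fingerprints, scores, k, max_similarity=0.7):
    """Pick up to k indices by descending score, skipping near-duplicates."""
    order = sorted(range(len(fingerprints)), key=lambda i: scores[i], reverse=True)
    chosen = []
    for i in order:
        if all(tanimoto(fingerprints[i], fingerprints[j]) <= max_similarity
               for j in chosen):
            chosen.append(i)
        if len(chosen) == k:
            break
    return chosen


# Toy example: candidates 0, 1 and 3 are close analogues; candidate 2 is distinct.
fps = [{1, 2, 3, 4}, {1, 2, 3, 5}, {7, 8, 9}, {1, 2, 3, 4, 5}]
batch = select_diverse_batch(fps, [0.9, 0.8, 0.5, 0.85], k=2, max_similarity=0.5)
```

Here the second-highest scorer is rejected as a near-duplicate of the top candidate, so the batch spends its second slot on a structurally different molecule.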

Q2: My Active Learning model for virtual screening shows high training accuracy but poor performance on subsequent experimental validation batches. How can I diagnose and fix this generalization failure?

A: This indicates a model that has overfit to the current training set distribution and fails to generalize to the broader chemical space.

Diagnostic Steps:

- Check the Applicability Domain: Use tools like PCA or t-SNE to visualize the chemical space of your training set versus the validation set. If they are disjoint, the model is extrapolating.

- Perform Learning Curve Analysis: Plot model performance (e.g., RMSE, ROC-AUC) against the size of the training data. If performance plateaus rapidly, the model architecture or features may be the bottleneck, not the data quantity.

- Validate the Uncertainty Estimates: For probabilistic models (e.g., GPs), check if the predicted uncertainty is well-calibrated. High confidence on incorrect predictions is a critical failure mode.
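One simple way to check calibration, sketched here under the assumption of Gaussian predictive distributions, is to measure how often the true value falls inside the model's nominal interval. With z=1.96 the nominal coverage is about 95%; observed coverage far below that signals overconfidence.

```python
def interval_coverage(y_true, mu, sigma, z=1.96):
    """Fraction of true values inside the predicted interval mu +/- z * sigma."""
    inside = sum(1 for y, m, s in zip(y_true, mu, sigma) if abs(y - m) <= z * s)
    return inside / len(y_true)


# Toy held-out batch: the last prediction is confidently wrong.
coverage = interval_coverage(
    y_true=[0.0, 1.0, 2.0, 3.0],
    mu=[0.0, 1.0, 2.0, 0.0],
    sigma=[1.0, 1.0, 1.0, 1.0],
)
```

Computing this on each new validation batch, rather than on the training set, shows whether the uncertainty estimates remain trustworthy as the loop moves into new chemical space.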

Fixes:

- Improve Molecular Representations: Move from simple fingerprints (ECFP) to more informative representations like learned fingerprints from graph neural networks (GNNs) or 3D pharmacophore descriptors.

- Use Ensemble Methods: Replace a single GP or neural network with a deep ensemble or bootstrap ensemble of GPs. The variance across models provides a more robust uncertainty estimate and improves generalization.

- Apply Regularization: Introduce dropout, weight decay, or early stopping in neural network-based proxy models.

- Strategic Initial Sampling: Ensure your initial training set is diverse and representative. Use clustering (e.g., on molecular descriptors) to select the initial batch, rather than random selection.

Q3: When integrating Active Learning with high-throughput molecular dynamics (MD) simulations, the computational cost of evaluating even a "promising" candidate is prohibitive. What are practical strategies to maintain a feasible workflow?

A: This requires a tiered evaluation strategy to filter candidates before committing to expensive calculations.

- Proposed Multi-Fidelity Workflow:

- Tier 1 (Ultra-Fast): Use a cheap QSAR model or a simple energy-based scoring function (e.g., from docking) to screen a massive virtual library. Select the top N candidates (e.g., 10,000).

- Tier 2 (Fast): Apply a medium-cost method like MM-GBSA/MM-PBSA on the shortlisted molecules. Use this data to update a surrogate model and select the top M candidates (e.g., 100) via an acquisition function.

- Tier 3 (Expensive Target): Run the full, expensive MD simulation or high-level quantum mechanics (QM) calculation only on the final, highly-vetted batch of molecules. Use these results as the ground truth to retrain the Tier 1 proxy model.

Table 1: Comparison of Multi-Fidelity Evaluation Strategies

| Tier | Method | Approx. Time/Candidate | Throughput | Typical Use in AL/BO Loop |

|---|---|---|---|---|

| 1 - Low | 2D QSAR / Docking Score | Seconds | 100,000s | Initial filtering & first-pass surrogate model training. |

| 2 - Medium | MM-PBSA / Short MD | Minutes-Hours | 100s | Refined scoring & candidate selection for high-fidelity evaluation. |

| 3 - High | Long-Timescale MD / QM | Hours-Days | <10 | "Ground truth" evaluation for final candidates & high-quality model updates. |
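The tiered funnel can be sketched as a pair of successive top-N cuts. This is a simplification: Tier 2 here ranks directly by the medium-cost score instead of using a surrogate plus acquisition function, and the three scoring functions are toy stand-ins on integer "molecules".

```python
def tiered_screen(library, cheap_score, medium_score, expensive_score,
                  n_tier1, m_tier2):
    """Filter a library through two cheap tiers before the expensive one.

    Returns the expensive results and how many calls each tier consumed.
    """
    tier1 = sorted(library, key=cheap_score, reverse=True)[:n_tier1]
    tier2 = sorted(tier1, key=medium_score, reverse=True)[:m_tier2]
    results = {mol: expensive_score(mol) for mol in tier2}
    calls = {"tier1": len(library), "tier2": len(tier1), "tier3": len(tier2)}
    return results, calls


# Hypothetical scorers that roughly agree on where the optimum lies.
def cheap(mol):
    return -abs(mol - 500)


def medium(mol):
    return -abs(mol - 510)


def expensive(mol):
    return -abs(mol - 512)


results, calls = tiered_screen(range(1000), cheap, medium, expensive,
                               n_tier1=100, m_tier2=5)
best = max(results, key=results.get)
```

The call counts make the cost structure explicit: the whole library passes through the cheap tier, while only a handful of molecules ever reach the expensive evaluation.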

Experimental Protocols

Protocol 1: Standard Bayesian Optimization Loop for Molecular Property Optimization

Objective: To iteratively optimize a target molecular property (e.g., binding affinity, solubility) using a Gaussian Process (GP) surrogate model.

Materials: See "Research Reagent Solutions" table.

Procedure:

- Define Search Space: Enumerate a library of molecules (e.g., from a virtual combinatorial library like ZINC) or a continuous representation (e.g., SELFIES strings with a variational autoencoder latent space).

- Initial Design: Randomly select or use a space-filling design (e.g., Latin Hypercube) to choose an initial set of n_init molecules (typically 5-20). Evaluate their target property using the expensive experimental/computational assay.

- Surrogate Model Training: Train a GP regression model on the collected (molecule, property) data. Use a molecular fingerprint (e.g., ECFP4) as the input feature x. Optimize kernel hyperparameters (length scale, variance) by maximizing the log marginal likelihood.

- Acquisition Function Maximization: Compute an acquisition function a(x) (e.g., Expected Improvement) over the entire search space using the trained GP. Select the next molecule x_next = argmax(a(x)).

- Expensive Evaluation: Evaluate the target property for x_next.

- Iterate: Append the new (x_next, property) pair to the training data. Repeat steps 3-5 until the experimental budget is exhausted or performance plateaus.

- Validation: Validate the final top-performing molecules through independent experimental replicates or higher-fidelity computational methods.
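The loop above can be illustrated end to end with a deliberately small, dependency-free sketch. Several simplifications are assumptions made here: the GP uses a Matérn 5/2 kernel with fixed hyperparameters (no marginal-likelihood optimization), targets are only mean-centered, and the "library" is a toy set of 1-D descriptors with a made-up property instead of fingerprints and a real assay.

```python
import math
import random


def matern52(a, b, length_scale=1.0):
    """Matérn 5/2 kernel between two feature vectors."""
    r = math.sqrt(5.0) * math.dist(a, b) / length_scale
    return (1.0 + r + r * r / 3.0) * math.exp(-r)


def cholesky(A):
    """Lower-triangular L with L L^T = A (A symmetric positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(max(s, 1e-12)) if i == j else s / L[j][j]
    return L


def solve_lower(L, b):
    """Solve L y = b by forward substitution."""
    y = []
    for i, row in enumerate(L):
        y.append((b[i] - sum(row[k] * y[k] for k in range(i))) / row[i])
    return y


def solve_upper(L, y):
    """Solve L^T x = y by back substitution."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def gp_fit(X, y, noise=1e-4):
    """Fit a GP to mean-centered targets; returns what prediction needs."""
    y_mean = sum(y) / len(y)
    K = [[matern52(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    L = cholesky(K)
    alpha = solve_upper(L, solve_lower(L, [v - y_mean for v in y]))
    return X, L, alpha, y_mean


def gp_predict(model, x):
    """Posterior mean and standard deviation at x."""
    X, L, alpha, y_mean = model
    k = [matern52(x, xi) for xi in X]
    mu = y_mean + sum(ki * ai for ki, ai in zip(k, alpha))
    v = solve_lower(L, k)
    var = max(matern52(x, x) - sum(vi * vi for vi in v), 0.0)
    return mu, math.sqrt(var)


def expected_improvement(mu, sigma, best):
    """EI for maximization under a Gaussian posterior."""
    if sigma < 1e-12:
        return max(mu - best, 0.0)
    z = (mu - best) / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (mu - best) * cdf + sigma * pdf


def bo_loop(library, oracle, n_init=5, budget=15, seed=0):
    """Sequential BO over a finite library; returns evaluated indices and values."""
    rng = random.Random(seed)
    evaluated = rng.sample(range(len(library)), n_init)
    values = [oracle(library[i]) for i in evaluated]
    while len(evaluated) < budget:
        model = gp_fit([library[i] for i in evaluated], values)
        best, seen = max(values), set(evaluated)
        pool = [i for i in range(len(library)) if i not in seen]
        nxt = max(pool, key=lambda i: expected_improvement(
            *gp_predict(model, library[i]), best))
        evaluated.append(nxt)
        values.append(oracle(library[nxt]))
    return evaluated, values


def toy_oracle(x):
    """Made-up property peaking at x = 3.2 (stand-in for the expensive assay)."""
    return -(x[0] - 3.2) ** 2


library = [(0.1 * i,) for i in range(51)]
evaluated, values = bo_loop(library, toy_oracle)
```

In practice a GP library would replace the hand-written linear algebra and would re-optimize the kernel hyperparameters at each iteration, as the surrogate training step describes.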

Protocol 2: Batch-Mode Active Learning for Parallel Virtual Screening

Objective: To select a diverse batch of k molecules for parallel synthesis and testing in each cycle.

Materials: See "Research Reagent Solutions" table.

Procedure:

- Initialization: Follow Steps 1-3 from Protocol 1.

- Batch Selection: Use a batch acquisition strategy. A common method is the Kriging Believer strategy. For i in 1 to k (batch size): a. Find x_i = argmax(a(x)) given the current GP. b. Augment the training data with a believed value for (x_i, y_i), where y_i is the GP's mean prediction μ(x_i). c. Re-train the GP (or update its posterior) on this augmented dataset. The final set of k molecules {x_1, ..., x_k} is then proposed for parallel evaluation.

- Parallel Evaluation: Evaluate all k molecules in the batch simultaneously using the expensive assay.

- Model Update: Update the GP training set with the true results from the batch, retrain the model fully, and proceed to the next cycle.
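The believer loop in the batch selection step is sketched below in a surrogate-agnostic form. To stay self-contained, the example plugs in a toy stand-in surrogate (a kernel-weighted mean with distance-to-nearest-point as the uncertainty) and a UCB acquisition; these are assumptions for illustration, and a real run would pass GP fit and predict functions instead.

```python
import math


def kriging_believer_batch(X, y, pool, k, fit, predict, acquisition):
    """Select k candidates, treating each pick's predicted mean as observed."""
    X_aug, y_aug = list(X), list(y)
    remaining = list(pool)
    batch = []
    for _ in range(min(k, len(remaining))):
        model = fit(X_aug, y_aug)
        best = max(y_aug)
        x_i = max(remaining, key=lambda x: acquisition(*predict(model, x), best))
        believed, _ = predict(model, x_i)
        batch.append(x_i)
        remaining.remove(x_i)
        X_aug.append(x_i)      # augment with the believed value, not a real one
        y_aug.append(believed)
    return batch


# --- Toy stand-in surrogate (NOT a GP), for 1-D inputs only ---
def fit(X, y):
    return list(X), list(y)


def predict(model, x):
    X, y = model
    weights = [math.exp(-((x - xi) ** 2) / 0.5) for xi in X]
    mu = sum(w * yi for w, yi in zip(weights, y)) / (sum(weights) + 1e-300)
    sigma = min(abs(x - xi) for xi in X)  # uncertainty grows away from data
    return mu, sigma


def ucb(mu, sigma, best, kappa=2.0):
    return mu + kappa * sigma


pool = [0.5 * i for i in range(1, 10)]
batch = kriging_believer_batch([0.0, 5.0], [0.0, 1.0], pool, 3, fit, predict, ucb)
```

Because each believed point removes the uncertainty around it, subsequent picks are pushed toward other regions, which is what yields a spread-out batch without any true evaluations in between.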

Diagrams

Diagram 1: Bayesian Optimization Cycle for Molecular Design

Diagram 2: Multi-Fidelity Active Learning Workflow

Research Reagent Solutions

Table 2: Essential Tools for AL/BO in Molecular Optimization

| Item / Solution | Function in Experiment | Example Tools / Software |

|---|---|---|

| Chemical Search Space | Defines the universe of candidate molecules to explore. | ZINC database, Enamine REAL, custom combinatorial libraries, generative model (VAE/GAN) latent space. |

| Molecular Representation | Converts a molecule into a numerical feature vector for the model. | ECFP/RDKit fingerprints, MACCS keys, learned representations from Graph Neural Networks (GNNs). |

| Surrogate Model | The statistical model that learns the property landscape from data. | Gaussian Process (GP) with Matérn kernel, Random Forest, Bayesian Neural Network, Deep Ensemble. |

| Acquisition Function | Guides the selection of the next experiment by balancing exploration/exploitation. | Expected Improvement (EI), Upper Confidence Bound (UCB), Thompson Sampling, Entropy Search. |

| Experimental/Oracle | The expensive, ground-truth evaluation method being optimized. | High-throughput assay (e.g., binding affinity), molecular dynamics (MD) simulation, density functional theory (DFT) calculation. |

| Optimization Library | Software implementation of the AL/BO loop. | BoTorch, GPyOpt, Scikit-optimize, Dragonfly, proprietary in-house platforms. |

Technical Support & Troubleshooting Hub

FAQ: Common Issues in Transfer & Meta-Learning for Molecular Optimization

Q1: My meta-learner fails to adapt quickly (poor few-shot performance) on new target molecular property prediction tasks. What are the primary causes and fixes?

A: This is often due to meta-overfitting or task distribution mismatch.

- Cause 1: The meta-training tasks (e.g., predicting LogP for one scaffold series) are not diverse enough for the meta-test tasks (e.g., predicting solubility for a novel scaffold).

- Fix: Curate a broader meta-training dataset. Use MoleculeNet, PubChemQC, or ChEMBL to sample tasks across varied molecular scaffolds, properties, and assay types. Implement task augmentation during meta-training (e.g., random molecular fingerprint masking).

- Cause 2: The inner-loop adaptation steps (learning rate, number of gradient steps) are poorly tuned.

- Fix: Perform a hyperparameter sweep for the inner-loop. A typical protocol for Reptile or MAML variants is below.

Experimental Protocol: Hyperparameter Sweep for Inner-Loop Adaptation

- Freeze outer-loop meta-parameters.

- For each candidate set (inner_lr, num_steps) from the table below, evaluate on a held-out validation task set.

- For each task in the validation set: a. Sample a K-shot support set. b. Perform adaptation using the candidate (inner_lr, num_steps). c. Evaluate on the task's query set.

- Select the parameters yielding the lowest average query loss across all validation tasks.
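The sweep can be sketched on a toy problem as follows. The one-parameter model y = w * x, the synthetic tasks, and the fixed meta-initialization w0 are all assumptions chosen to keep the example runnable; the candidate grid reuses the (inner_lr, num_steps) pairs discussed in this protocol. The toy results are not meant to reproduce any reported numbers.

```python
import random


def adapt(w0, support, inner_lr, num_steps):
    """Inner loop: gradient descent on support-set MSE, starting from w0."""
    w = w0
    for _ in range(num_steps):
        grad = sum(2.0 * (w * x - y) * x for x, y in support) / len(support)
        w -= inner_lr * grad
    return w


def mse(w, data):
    """Mean squared error of y = w * x on a data set."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def sweep_inner_loop(w0, tasks, grid):
    """Average query loss per (inner_lr, num_steps); returns best and all."""
    results = {}
    for inner_lr, num_steps in grid:
        losses = [mse(adapt(w0, support, inner_lr, num_steps), query)
                  for support, query in tasks]
        results[(inner_lr, num_steps)] = sum(losses) / len(losses)
    best = min(results, key=results.get)
    return best, results


def make_task(rng, slope, k_shot=5, n_query=10):
    """Synthetic regression task: K support points and a query set."""
    xs = [rng.uniform(-1.0, 1.0) for _ in range(k_shot + n_query)]
    points = [(x, slope * x) for x in xs]
    return points[:k_shot], points[k_shot:]


rng = random.Random(0)
tasks = [make_task(rng, slope) for slope in (0.5, 1.5, 2.5)]
grid = [(0.01, 5), (0.01, 10), (0.05, 5), (0.05, 10), (0.001, 10)]
best, results = sweep_inner_loop(1.0, tasks, grid)
```

On this convex toy problem the most aggressive setting wins; with a real neural model the same setting can diverge, which is exactly what the sweep is meant to detect.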

Quantitative Data: Impact of Inner-Loop Parameters on Validation Loss

Table 1: Mean Squared Error (MSE) on a 5-shot, 10-query validation task set for a MAML model meta-trained on QM9 regression tasks.

| Inner Learning Rate | Adaptation Steps | Avg. Validation MSE (↓) | Adaptation Time (s/task) |

|---|---|---|---|

| 0.01 | 5 | 1.45 | 0.15 |

| 0.01 | 10 | 1.28 | 0.28 |

| 0.05 | 5 | 1.31 | 0.15 |

| 0.05 | 10 | 1.67 (diverged) | 0.28 |

| 0.001 | 10 | 1.52 | 0.28 |

Q2: When using transfer learning from a large source dataset (e.g., ChEMBL), my fine-tuned model performs worse than a model trained from scratch on the small target dataset. Why?

A: This is a classic case of negative transfer.

- Cause: The feature representations learned from the source domain are not relevant or are detrimental to the target domain. Example: Pre-training on broad biochemical activity data and then fine-tuning on a very specific crystal structure prediction task.

- Fix: Implement representation analysis and progressive unfreezing.

- Before full fine-tuning, compute the Maximum Mean Discrepancy (MMD) between the source and target data embeddings from the pre-trained model's penultimate layer. A high MMD suggests significant distribution shift.

- If MMD is high, do not fine-tune the entire model. Use the protocol below.
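A minimal sketch of the MMD check is shown below, using the biased (V-statistic) estimator with an RBF kernel. The embeddings are assumed to be plain lists of floats already extracted from the penultimate layer, and the kernel bandwidth gamma is an arbitrary illustrative default that would normally be tuned (for example with a median-distance heuristic).

```python
import math


def rbf_kernel(a, b, gamma=1.0):
    """RBF kernel exp(-gamma * ||a - b||^2)."""
    return math.exp(-gamma * sum((x - y) ** 2 for x, y in zip(a, b)))


def mmd_squared(source, target, gamma=1.0):
    """Biased estimate of squared MMD between two sets of embeddings."""
    def mean_kernel(A, B):
        return sum(rbf_kernel(a, b, gamma) for a in A for b in B) / (len(A) * len(B))

    return (mean_kernel(source, source)
            + mean_kernel(target, target)
            - 2.0 * mean_kernel(source, target))


# Toy 2-D embeddings: an identical pair of sets and a clearly shifted pair.
same = mmd_squared([(0.0, 0.0), (1.0, 0.0)], [(0.0, 0.0), (1.0, 0.0)])
shifted = mmd_squared([(0.0, 0.0), (1.0, 0.0)], [(5.0, 5.0), (6.0, 5.0)])
```

What counts as a "high" value depends on the kernel and embedding scale, so it helps to compare against the MMD between two random halves of the source data as a baseline.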

Experimental Protocol: Progressive Unfreezing to Mitigate Negative Transfer

- Keep all layers of the pre-trained model frozen.

- Train only a new, randomly initialized prediction head for 5-10 epochs.

- Unfreeze the last n layers of the pre-trained encoder/backbone.

- Train the unfrozen layers and the head with a lower learning rate (e.g., 1e-4) for another 10 epochs.

- Gradually unfreeze earlier layers if performance plateaus, monitoring validation loss closely to avoid overfitting.

Q3: How do I structure my code and data for a reproducible meta-learning experiment in molecular optimization?

A: Adhere to a task-centric data loader and a standard meta-learning library.

- Core Issue: Inconsistent or non-standard task sampling.

- Solution:

- Data Structure: Organize each "task" as a directory containing a support.sdf and query.sdf file (or .csv with SMILES and target values). A meta.csv file should define all tasks and their source.

- Use Frameworks: Leverage torchmeta or learn2learn for PyTorch, which provide standardized task-sampling utilities (e.g., the MetaDataLoader classes in torchmeta).

- Workflow Visualization: Follow the logical pipeline below.

Diagram Title: Standard Meta-Learning Workflow for Molecular Data
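A task-centric loader for the CSV variant of this layout might look like the sketch below. The column names (task_id, source, smiles, target) and the demo values are hypothetical; the text only specifies that meta.csv lists tasks and their source and that each task directory holds support and query files.

```python
import csv
import tempfile
from pathlib import Path


def read_split(path):
    """Read (SMILES, target) pairs from one support or query file."""
    with open(path, newline="") as handle:
        return [(row["smiles"], float(row["target"]))
                for row in csv.DictReader(handle)]


def load_tasks(root):
    """Load every task listed in meta.csv into a dictionary keyed by task id."""
    root = Path(root)
    tasks = {}
    with open(root / "meta.csv", newline="") as handle:
        for row in csv.DictReader(handle):
            task_dir = root / row["task_id"]
            tasks[row["task_id"]] = {
                "source": row["source"],
                "support": read_split(task_dir / "support.csv"),
                "query": read_split(task_dir / "query.csv"),
            }
    return tasks


# Demo on a throwaway directory with made-up values.
with tempfile.TemporaryDirectory() as tmp:
    demo_root = Path(tmp)
    (demo_root / "task_001").mkdir()
    (demo_root / "meta.csv").write_text("task_id,source\ntask_001,example_assay\n")
    (demo_root / "task_001" / "support.csv").write_text(
        "smiles,target\nCCO,0.5\nCCN,0.7\n")
    (demo_root / "task_001" / "query.csv").write_text("smiles,target\nCCC,0.9\n")
    tasks = load_tasks(demo_root)
```

Fixing the support/query split on disk, instead of resampling it at run time, is what makes few-shot results comparable across runs and across collaborators.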

Q4: With in-context learning for molecular generation (e.g., with a Transformer), the generated structures are invalid or lack the desired properties. How can I improve this?

A: The context (prompt) is inadequately conditioning the generator.

- Cause 1: The model was not pre-trained or fine-tuned with a proper context-property association.

- Fix: Use a property-conditional pre-training protocol.

- Cause 2: The prompt format at inference differs from the training format.

- Fix: Ensure exact string matching. Use the protocol below.

Experimental Protocol: Property-Conditional SMILES Pre-training for Transfer

- Data Formatting: Convert each molecule-property pair into a single string: "[LogP]<5.0>[QED]>0.8|CC(=O)Oc1ccccc1C(=O)O". Use brackets [] for property name and <> for value/condition.

- Training: Use a standard causal language modeling objective (next token prediction) on these formatted strings. The model learns to associate the property tokens with the subsequent molecular structure tokens.

- Transfer/Inference: To generate molecules with desired properties, provide the model with the prompt "[LogP]<5.0>[QED]>0.8|" and let it auto-regressively generate the SMILES sequence.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Transfer & Meta-Learning in Molecular Optimization

| Item Name & Source | Function & Application |

|---|---|

| DeepChem (Library) | Provides curated molecular datasets (MoleculeNet), featurizers (GraphConv, Morgan FP), and baseline models for standardized benchmarking. |

| Torchmeta (Python Library) | Implements standard meta-learning algorithms (MAML, Meta-SGD) and provides task-centric data loaders, critical for reproducible few-shot learning experiments. |

| ChemBERTa / MoLM (Pre-trained Model) | Transformer models pre-trained on large-scale molecular SMILES or SELFIES corpora. Used as a strong initializer for transfer learning on downstream property prediction tasks. |

| RDKit (Cheminformatics Toolkit) | Used for fundamental operations: generating molecular fingerprints, calculating descriptor properties, validating SMILES, and scaffold splitting to create meaningful tasks. |

| PSI4 / PySCF (Computational Chemistry) | Provides high-quality quantum chemical properties (e.g., HOMO/LUMO, dipole moment) for small molecules. Used to generate source data for pre-training or as target tasks for meta-testing. |

| TDC (Therapeutic Data Commons) | Aggregates benchmarks and datasets specifically for drug development (e.g., ADMET prediction, synthesis planning). Ideal for sourcing realistic target tasks. |

Diagram Title: Two Pathways from a Pre-trained Model

Troubleshooting Guides & FAQs for Sample Efficiency in Molecular Optimization

This technical support center addresses common issues encountered when developing and deploying hybrid physics-based/data-driven models for molecular optimization.

Frequently Asked Questions (FAQs)

Q1: My hybrid model's predictions are no better than the pure data-driven baseline. What could be wrong?

A: This often indicates poor information flow between model components. Check: 1) Coupling Strength: The physics simulation output may be weighted too low. Adjust the coupling parameter (λ in a loss function like L_total = L_data + λ * L_physics). Start with a grid search over λ ∈ [0.1, 10]. 2) Domain Mismatch: The conformations sampled by your molecular dynamics (MD) simulation may not be relevant to the property predicted by the neural network. Ensure the simulation temperature and solvent conditions match the experimental training data.
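The coupling-strength search can be sketched as below. The log-spaced grid and the validate callback are assumptions: validate stands for whatever routine trains with a given λ and returns a validation error on the data term alone (the total loss itself is not comparable across λ values, since λ rescales it).

```python
def total_loss(l_data, l_physics, lam):
    """Combined objective L_total = L_data + lambda * L_physics."""
    return l_data + lam * l_physics


def log_grid(low, high, n):
    """n log-spaced values from low to high inclusive (n >= 2)."""
    ratio = high / low
    return [low * ratio ** (i / (n - 1)) for i in range(n)]


def select_lambda(candidates, validate):
    """Return the candidate with the lowest validation error."""
    return min(candidates, key=validate)


def toy_validate(lam):
    """Hypothetical stand-in: pretend validation error is minimized near 1."""
    return (lam - 1.0) ** 2


grid = log_grid(0.1, 10.0, 5)
best_lambda = select_lambda(grid, toy_validate)
```

A log-spaced grid is a natural fit for the range [0.1, 10] because the useful values of a loss weight usually differ by factors, not by fixed increments.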

Q2: How do I handle the high computational cost of physics simulations during model training? A: Implement a tiered or adaptive sampling strategy. Do not run a full simulation for every forward pass. Instead:

- Pre-compute simulations for a diverse initial training set.

- Use the data-driven prior to identify promising candidate molecules.

- Run physics simulations only on the top-K candidates per optimization cycle.

- Retrain the data-driven model on this new, high-quality simulated data.

Q3: My model fails to generate novel, valid molecular structures. How can I improve this? A: This is typically a problem with the generative component. Ensure:

- The physics-based energy score or force field penalty is properly differentiated and integrated into the generator's reward/update step. Gradient clipping may be necessary.

- The vocabulary/action space of your generative model (e.g., SMILES grammar, fragment library) is compatible with the physics simulator's required input format (e.g., 3D coordinates).

- You have implemented a validity filter (e.g., based on chemical rules) before passing candidates to the computationally expensive physics simulation.

Q4: How can I quantify the "sample efficiency" improvement from my hybrid model? A: You must track performance versus the number of expensive evaluations (experimental or high-fidelity simulation calls). Use a table like the one below, benchmarking against baselines.

Table 1: Sample Efficiency Benchmark for Molecular Property Optimization

| Model Type | Target Property (e.g., Binding Affinity pIC50) | Expensive Evaluations to Reach Target | Success Rate (%) | Novelty (Tanimoto < 0.4) |

|---|---|---|---|---|

| Pure Physics-Based (MD) | > 8.0 | ~5000 | 95% | 99% |

| Pure Data-Driven (GAN) | > 8.0 | ~1000 | 60% | 85% |

| Hybrid Model (MD+NN) | > 8.0 | ~400 | 88% | 92% |

Detailed Experimental Protocol: Iterative Hybrid Optimization

This protocol is designed to maximize sample efficiency for optimizing a target molecular property.

Objective: Identify novel compounds with predicted pIC50 > 8.0 against a target protein using < 500 expensive evaluations.

Materials & Reagents:

Table 2: Research Reagent Solutions for Hybrid Molecular Optimization

| Item | Function | Example/Supplier |

|---|---|---|

| Initial Compound Library | Provides diverse starting points for exploration. | ZINC20 fragment library, ~10k compounds. |

| High-Fidelity Simulator | Provides physics-based property evaluation. | Schrödinger's FEP+, OpenMM, GROMACS. |

| Differentiable Surrogate Model | Fast, approximate property predictor. | Graph Neural Network (GNN) with attention. |

| Generative Model | Proposes novel molecular structures. | Junction Tree VAE, REINVENT agent. |

| Orchestration Software | Manages the iterative loop. | Python scripts with RDKit, DeepChem, PyTorch. |

Methodology:

- Initialization: Train the surrogate GNN on a small seed dataset (~100 compounds) with properties calculated by the high-fidelity simulator.

- Iterative Cycle (Repeat for N rounds): a. Proposal: The generative model proposes a batch of 50 candidate molecules. b. Pre-screening: The surrogate GNN rapidly scores all candidates. Select the top 10 with the highest predicted property. c. Expensive Evaluation: Run the high-fidelity physics simulation (e.g., alchemical binding free energy calculation) on the top 10 candidates. d. Data Augmentation: Add the new (candidate, high-fidelity score) pairs to the training dataset. e. Update: Retrain or fine-tune the surrogate GNN on the augmented dataset.

- Termination: Stop when a candidate meets the target property threshold or after a predefined budget of expensive evaluations (e.g., 500).
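The methodology above can be sketched as a generic loop with pluggable components. Everything concrete in the demo is a toy stand-in: molecules are integers, the "simulator" is a made-up function, the generator samples at random, and the surrogate is a nearest-neighbor lookup instead of a GNN. Seed labels are not counted against the budget in this sketch, which is an assumption.

```python
import random


def hybrid_loop(propose, fit, predict, simulate, seed_data, target,
                batch_size=50, top_k=10, budget=500):
    """Propose, pre-screen with the surrogate, simulate the top_k, retrain.

    Returns all labelled data and the number of expensive calls made in the loop.
    """
    data = dict(seed_data)
    calls = 0
    for _ in range(budget // top_k):
        model = fit(data)
        candidates = [c for c in propose(batch_size) if c not in data]
        ranked = sorted(candidates, key=lambda c: predict(model, c), reverse=True)
        for cand in ranked[:top_k]:
            data[cand] = simulate(cand)  # expensive evaluation
            calls += 1
        if max(data.values()) >= target:
            break
    return data, calls


# --- Toy stand-ins for the generator, surrogate, and simulator ---
rng = random.Random(0)


def simulate(c):
    """Made-up pIC50-like score peaking at c = 700."""
    return 9.0 - abs(c - 700) / 100.0


def propose(n):
    return rng.sample(range(1000), n)


def fit(data):
    return dict(data)


def predict(model, c):
    """Nearest-neighbor surrogate: reuse the label of the closest known point."""
    nearest = min(model, key=lambda x: abs(x - c))
    return model[nearest]


seed_data = {c: simulate(c) for c in rng.sample(range(1000), 20)}
data, calls = hybrid_loop(propose, fit, predict, simulate, seed_data, target=8.9)
```

Tracking the call counter alongside the best score found so far gives the "expensive evaluations to reach target" figure used to compare methods.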

Visualization of Workflows

Title: Iterative Hybrid Model Optimization Loop

Title: Hybrid Model Information Flow Architecture

Frequently Asked Questions (FAQs)

Q1: My generative model is producing molecules that are synthetically infeasible. How can fragment-based methods help? A: Fragment-based generation seeds the process with known, synthesizable chemical motifs, drastically increasing the probability of generating viable candidates. Constraining the generation to a specific molecular scaffold further ensures the core structure remains tractable. This reduces the search space from billions of potential compounds to a focused library around your privileged scaffold.

Q2: When performing scaffold-constrained generation, how do I choose the optimal level of rigidity (core constraint) versus flexibility (R-group variation)? A: This is a key hyperparameter. Start with a highly constrained core based on your target's known binding site geometry. Systematically relax constraints (e.g., allow fusion of a specific ring, or permit limited substitution on a core atom) in successive optimization cycles. Monitor the property cliff profile—sudden drops in predicted activity with small structural changes—to find the balance that maintains activity while exploring novelty.

Q3: I am encountering the "vanishing scaffolds" problem where my model ignores the constraint over long generation trajectories. How can I troubleshoot this? A: This is common in recurrent neural network (RNN) or long short-term memory (LSTM)-based generators. Implement and verify:

- Strong Penalty Terms: Ensure your reinforcement learning (RL) reward or loss function has a substantial negative reward for scaffold deviation.

- Syntax Check: Integrate a post-generation step that filters or discards any molecule not containing the SMARTS pattern of your scaffold.

- Architecture Switch: Consider using a graph-based model (e.g., Graph Convolutional Network) where the scaffold can be explicitly encoded as a fixed sub-graph, making it inherently preserved during generation.

Q4: How do I quantitatively know if my constrained search is more sample-efficient than a purely de novo approach? A: You must track benchmark-specific metrics. For example, in the GuacaMol or MOSES benchmarks, plot the hit rate (percentage of molecules above a desired property threshold) against the number of molecules generated/sampled. A more sample-efficient method will achieve a higher hit rate with fewer generated molecules. See Table 1 for a hypothetical comparison.

Table 1: Sample Efficiency Comparison in a Molecular Optimization Benchmark

| Generation Method | Molecules Sampled | Hit Rate (pIC50 > 8.0) | Unique Scaffolds | Synthetic Accessibility Score (SA) |

|---|---|---|---|---|

| De Novo (RL) | 50,000 | 1.2% | 412 | 4.5 |

| Fragment-Based | 50,000 | 3.8% | 89 | 6.2 |

| Scaffold-Constrained | 10,000 | 4.1% | 1 (Core) + 24 R-groups | 6.8 |

Q5: What are the common failure modes when linking fragments to a core scaffold, and how can I address them? A:

- Steric Clash: The linker or fragment placement causes atomic overlap. Solution: Use a conformer-aware docking step or a distance-based penalty in the scoring function during the in silico linking process.

- Loss of Key Pharmacophore Interaction: The new fragment blocks a crucial interaction. Solution: Perform a pharmacophore analysis of your original hit and include a constraint that those feature points (e.g., hydrogen bond donor) must remain accessible in the generated molecules.

- Poor ADMET Prediction: The new combination leads to unfavorable pharmacokinetics. Solution: Integrate lightweight ADMET filters (e.g., QED, Lipinski alerts, predicted hERG liability) into the early-stage generation loop, not just as a post-filter.

Experimental Protocols

Protocol 1: Benchmarking Sample Efficiency for Scaffold-Constrained Generation

Objective: To quantitatively compare the sample efficiency of scaffold-constrained generation against a baseline de novo method on a defined optimization goal.

Materials: See "Research Reagent Solutions" table.

Methodology:

- Define Benchmark: Select a public benchmark (e.g., optimizing penalized logP for a specific seed scaffold in the ZINC database).

- Baseline Model: Train or utilize a published de novo molecular generator (e.g., an RNN with RL fine-tuning) for 5 epochs.

- Constrained Model: Configure a scaffold-constrained generator (e.g., using the SMILES-based or graph-based approach with the seed scaffold fixed).

- Sampling: From each model, sample sets of molecules at increasing intervals (e.g., 1k, 5k, 10k, 50k).

- Evaluation: For each sample set, calculate:

- The percentage of molecules that improve the property vs. the seed.

- The top-10 property scores.

- The synthetic accessibility (SA) score.

- The number of unique valid molecules.

- Analysis: Plot property improvement vs. number of samples. The method whose curve rises faster and to a higher plateau is more sample-efficient.

Protocol 2: Implementing a Fragment-Based Growth Workflow

Objective: To grow a seed fragment into a viable lead candidate using a stepwise, fragment-linking approach.

Methodology:

- Fragment Library Preparation: Curate a library of purchasable building blocks (e.g., from Enamine REAL space). Filter by desired size (MW <250), reactivity, and lack of undesirable substructures.

- Seed Docking: Dock the seed fragment into the target protein's binding site using software like AutoDock Vina or GOLD. Identify potential growth vectors (atoms with unsatisfied hydrogen bonds, exposed hydrophobic patches).

- In Silico Linking: Use a tool like Fragment Network or a combinatorial linker library to propose connections from the growth vector to fragments in your library. Generate and score (e.g., with MM-GBSA) the resulting molecules.

- Iterative Optimization: Select the top 5-10 linked compounds. Redefine each as the new "seed" and repeat steps 2-3 for a second cycle of growth or diversification.

- Synthesis Prioritization: Rank final compounds by a composite score of binding affinity prediction, SA score, and drug-likeness (QED). Select top 2-3 for synthesis and experimental validation.
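The synthesis prioritization step can be sketched as a weighted composite score. The weights, the assumed affinity range of 0 to -12 kcal/mol, and the dictionary keys below are illustrative assumptions rather than values from the protocol; tune them to your target.

```python
def composite_score(affinity_kcal, sa_score, qed, weights=(0.5, 0.2, 0.3)):
    """Combine predicted affinity, SA score, and QED into one value in [0, 1].

    All three terms are mapped so that higher is better. The affinity range
    and the weights are hypothetical defaults.
    """
    # More negative predicted affinity is better; clamp to an assumed [-12, 0] range.
    aff_term = min(max(-affinity_kcal / 12.0, 0.0), 1.0)
    # SA score runs from 1 (easy) to 10 (hard); invert so easy synthesis scores high.
    sa_term = (10.0 - sa_score) / 9.0
    w_aff, w_sa, w_qed = weights
    return w_aff * aff_term + w_sa * sa_term + w_qed * qed

def rank_candidates(candidates, top_n=3):
    """Return the top_n candidates by composite score (highest first)."""
    return sorted(
        candidates,
        key=lambda c: composite_score(c["affinity"], c["sa"], c["qed"]),
        reverse=True,
    )[:top_n]
```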

Research Reagent Solutions

Table 2: Essential Tools for Fragment-Based & Constrained Generation Research

| Item / Resource | Category | Function / Explanation |

|---|---|---|

| ZINC20 / Enamine REAL | Compound Database | Source for purchasable fragments and building blocks for in silico library construction. |

| RDKit | Cheminformatics Toolkit | Open-source Python library for molecule manipulation, scaffold decomposition, fingerprint generation, and SMARTS pattern matching. Essential for implementing constraints. |

| MOSES / GuacaMol | Benchmarking Platform | Standardized benchmarks for evaluating the distributional and goal-directed performance of generative models. |

| AutoDock Vina, GOLD | Molecular Docking Software | Used to position fragments and generated molecules in a protein binding site for preliminary affinity scoring. |

| Schrödinger Suite, OpenEye Toolkit | Commercial Drug Discovery Software | Provide robust, high-throughput workflows for docking, MM-GBSA scoring, and pharmacophore modeling. |

| REINVENT, MolDQN | Generative Model Frameworks | Open-source and published frameworks for RL-based molecular generation, which can be adapted for scaffold constraints. |

| Synthetic Accessibility (SA) Score | Computational Filter | A score (typically 1-10, where 1 is easy and 10 is hard to make) estimating the ease of synthesizing a molecule, used to prioritize viable candidates. |

| Graph Convolutional Network (GCN) | Model Architecture | A type of neural network that operates directly on graph representations of molecules, allowing natural encoding of fixed scaffold sub-graphs. |

Visualizations

Diagram 1: Constrained vs. De Novo Search Space

Diagram 2: Fragment-Based Optimization Workflow

Implementing Off-Policy Correction and Experience Replay in Reinforcement Learning Frameworks

Troubleshooting Guides & FAQs

Q1: During off-policy training for molecular generation, my agent's policy collapses to a few repetitive suboptimal structures. What could be the cause and solution?

A: This is often caused by overestimation bias and insufficient exploration, exacerbated by the high-dimensional, sparse reward nature of molecular spaces.

- Primary Cause: High TD-error transitions related to moderately good molecules dominate the replay buffer, leading to aggressive overfitting.

- Solution: Implement clipped Double Q-Learning (as in TD3) for the critic network and increase the entropy regularization coefficient. Additionally, add a "novelty bonus" to the reward function that grows as a candidate's Tanimoto similarity to recent molecules in the buffer decreases.
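One way to sketch the novelty bonus is shown below. It assumes fingerprints are supplied as sets of on-bit indices (for example, converted from a Morgan fingerprint), and the class name `NoveltyBonus`, the `scale` factor, and the `window` size are hypothetical choices rather than recommended settings.

```python
from collections import deque

def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 1.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)

class NoveltyBonus:
    """Reward bonus that shrinks as a molecule resembles recently seen ones."""

    def __init__(self, scale=0.2, window=500):
        self.scale = scale                  # maximum bonus (assumed value)
        self.recent = deque(maxlen=window)  # fingerprints of recent molecules

    def __call__(self, fingerprint):
        fp = frozenset(fingerprint)
        # Similarity to the nearest recent molecule; 0.0 when the window is empty.
        max_sim = max((tanimoto(fp, other) for other in self.recent), default=0.0)
        self.recent.append(fp)
        return self.scale * (1.0 - max_sim)
```

The bonus is added to the environment reward before the transition is stored, so repeated structures earn progressively less than unseen ones.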

Q2: My PER (Prioritized Experience Replay) implementation leads to unstable Q-value gradients and NaN errors. How do I debug this?

A: This is typically due to unbounded importance sampling (IS) weights or extremely high priority for a small set of transitions.

- Debug Protocol:

- Clip IS Weights: Implement a hard clamp (e.g., 0 to 10) on the IS weights and monitor their distribution.

- Apply Priority Smoothing: Add a small constant (ε = 1e-5) to every TD error when computing priority (P = |δ| + ε).

- Gradient Monitoring: Log the L2-norm of the critic network gradients before the update step. If it spikes, reduce the learning rate or apply gradient clipping.
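The first two debug steps can be sketched in plain Python as follows. The priority form P = |δ| + ε and the clamp to [0, 10] come from the protocol above; the exponents `alpha` and `beta` follow the standard PER formulation, and the function names are hypothetical.

```python
import math

def per_probabilities(td_errors, alpha=0.6, eps=1e-5):
    """Sampling probabilities from TD errors using priority P = |delta| + eps."""
    if not all(math.isfinite(d) for d in td_errors):
        # Fail early instead of letting NaN/inf propagate into the buffer.
        raise ValueError("non-finite TD error: inspect the critic before updating priorities")
    priorities = [(abs(d) + eps) ** alpha for d in td_errors]
    total = sum(priorities)
    return [p / total for p in priorities]

def clipped_is_weights(probs, beta=0.4, max_weight=10.0):
    """Importance-sampling weights (N * P_i)^(-beta), clamped to [0, max_weight]."""
    n = len(probs)
    return [min(max((n * p) ** (-beta), 0.0), max_weight) for p in probs]
```

Logging the minimum, maximum, and mean of the returned weights at each update makes it easy to see when the clamp is active. Many implementations additionally divide the weights by their batch maximum, which keeps them at or below 1.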

- Recommended Hyperparameters for Molecular Benchmarks:

Q3: How do I effectively design the reward function for off-policy molecular optimization to work well with experience replay?

A: Sparse, final-step-only rewards (e.g., based on a docking score) are problematic. Dense, shaped rewards are critical.

- Methodology: Use a multi-objective reward signal combining:

- Stepwise Validity Reward: A small positive reward for each step that maintains a valid molecular graph.

- Intermediate Property Bonus: Reward improvement in approximate properties (e.g., QED, SA Score) even before episode termination.

- Final Objective Reward: The primary goal (e.g., binding affinity). Normalize this component to a consistent range (e.g., [-1, 1]) across your benchmark to stabilize Q-learning.

- Protocol: Record the distribution of each reward component in the buffer. If the standard deviation of the total reward exceeds 5.0, apply reward scaling or clipping.
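A minimal sketch of such a shaped reward follows, assuming QED as the intermediate property and a docking score (lower is better) as the final objective. The step bonus, property weight, and docking score range are hypothetical defaults; only the [-1, 1] normalization and the 5.0 standard-deviation check come from the text above.

```python
from statistics import pstdev

def shaped_reward(is_valid, qed_before, qed_after, final_score=None,
                  score_range=(-12.0, 0.0), step_bonus=0.05, prop_weight=0.5):
    """Dense reward: validity bonus + property improvement + normalized final objective."""
    # Stepwise validity reward.
    reward = step_bonus if is_valid else 0.0
    # Intermediate property bonus: reward the change in QED at this step.
    reward += prop_weight * (qed_after - qed_before)
    # Final objective, only supplied at episode termination.
    if final_score is not None:
        lo, hi = score_range
        clipped = min(max(final_score, lo), hi)
        # Map [lo, hi] to [-1, 1], then flip the sign because lower docking scores are better.
        reward += -(2.0 * (clipped - lo) / (hi - lo) - 1.0)
    return reward

def needs_rescaling(total_rewards, max_std=5.0):
    """True if the spread of total rewards in the buffer exceeds the threshold."""
    return pstdev(total_rewards) > max_std
```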

Q4: When using n-step returns with PER for molecular optimization, how do I handle the "off-policyness" across multiple steps?

A: Use the Retrace(λ) algorithm or a truncated Importance Sampling (IS) correction.

- Retrace(λ) Workflow: It truncates each importance ratio at 1 and gracefully decays the correction for long traces, preventing high variance.

- Store trajectories (state, action, reward, done, π_behavior(a|s)) in the buffer, where π_behavior(a|s) is the probability that the data-collecting policy assigned to the action at collection time.

- During sampling, for a given n-step transition, compute the Retrace weight c_t = λ * min(1, π_current(a_t|s_t) / π_behavior(a_t|s_t)).

- Compute the n-step Retrace target Q-value recursively. This is more stable than raw IS for n > 1.
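The recursive target computation can be sketched as below for a single n-step segment with no terminal state inside it. The function name and argument layout are assumptions; `mu_probs` denotes the action probabilities stored in the buffer at collection time (the denominator of the ratio), and `v_next[t]` is the expected Q-value of the next state under the current policy, set to 0 if that state is terminal.

```python
def retrace_targets(rewards, q_taken, v_next, pi_probs, mu_probs,
                    gamma=0.99, lam=0.95):
    """Retrace(lambda) targets for one trajectory segment, computed backwards.

    rewards[t]  : r_t
    q_taken[t]  : Q(s_t, a_t) from the critic
    v_next[t]   : E_{a ~ pi_current} Q(s_{t+1}, a), 0.0 if s_{t+1} is terminal
    pi_probs[t] : pi_current(a_t | s_t)
    mu_probs[t] : probability stored in the buffer for a_t at collection time
    """
    n = len(rewards)
    targets = [0.0] * n
    # Correction carried from step t+1: c_{t+1} * (target_{t+1} - Q(s_{t+1}, a_{t+1})).
    # It is zero past the end of the segment, where we simply bootstrap from v_next.
    next_correction = 0.0
    for t in reversed(range(n)):
        targets[t] = rewards[t] + gamma * (v_next[t] + next_correction)
        # Truncated importance weight, scaled by lambda.
        c_t = lam * min(1.0, pi_probs[t] / mu_probs[t])
        next_correction = c_t * (targets[t] - q_taken[t])
    return targets
```

With λ = 0 the targets reduce to ordinary one-step TD targets, which is a convenient sanity check when debugging.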

Title: Retrace(λ) Correction for n-step PER

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Component | Function in Molecular RL Framework |

|---|---|

| RDKit | Open-source cheminformatics toolkit; used for state representation (Morgan fingerprints), validity checks, and property calculation (e.g., LogP, SA Score). |

| Docking Software (e.g., AutoDock Vina, Schrödinger Glide) | Provides the primary objective reward signal (estimated binding affinity) for generated molecular structures in in silico benchmarks. |

| ZINC or ChEMBL Database | Source of starting molecules or "building blocks" for fragment-based molecular generation environments. |

| PyTorch Geometric (PyG) or DGL | Graph neural network libraries essential for building policies and critics that operate directly on molecular graph representations. |

| OpenAI Gym / Gymnasium | API for creating custom molecular optimization environments, enabling standardized agent benchmarking. |

| Weights & Biases (W&B) / MLflow | Experiment tracking tools to log hyperparameters, reward curves, and generated molecule properties across hundreds of runs. |

Experimental Protocol: Benchmarking Off-Policy Corrections in Molecular Optimization

Objective: Compare the sample efficiency of standard DDPG+PER vs. DDPG+PER with Retrace(λ) correction on the "Penalized LogP" benchmark.

1. Environment Setup:

- Use the `molecule` environment from the GuacaMol suite.

- State: Morgan fingerprint (radius=3, 2048 bits) of the current molecule.

- Action: A discrete action space: [Add Atom, Add Bond, Remove Atom, Remove Bond, Change Atom Type].

- Episode Length: Maximum 40 steps per molecule.

2. Agent Configuration:

- Baseline: DDPG agent with a standard PER buffer (α=0.6, β=0.4→1.0).

- Intervention: DDPG agent with PER + Retrace(λ) (λ=0.95, n-step=5).

- Common Hyperparameters:

3. Evaluation Metric:

- Every 5000 environment steps, freeze the policy and generate 100 molecules.

- Record the top-3 Penalized LogP scores and the diversity of all valid generated molecules (e.g., the percentage of molecule pairs with pairwise Tanimoto similarity < 0.4).
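The evaluation step can be sketched as follows. It assumes each valid molecule arrives as a (score, fingerprint) pair with the fingerprint given as a set of on-bit indices, and it interprets diversity as the fraction of molecule pairs whose Tanimoto similarity is below the threshold; that interpretation and the function names are assumptions.

```python
from itertools import combinations

def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 1.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)

def evaluate_batch(scored_fps, top_k=3, sim_threshold=0.4):
    """Return (top-k scores, fraction of pairs with similarity below the threshold)."""
    top_scores = sorted((score for score, _ in scored_fps), reverse=True)[:top_k]
    pairs = list(combinations([fp for _, fp in scored_fps], 2))
    if not pairs:
        return top_scores, 0.0  # fewer than two molecules: diversity undefined, report 0
    n_diverse = sum(tanimoto(a, b) < sim_threshold for a, b in pairs)
    return top_scores, n_diverse / len(pairs)
```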

Title: Molecular RL Sample Efficiency Benchmark Workflow

4. Expected Quantitative Outcome: The intervention should achieve comparable or better top-3 scores using fewer environment steps, indicating improved sample efficiency.

| Method | Avg. Steps to Score > 5.0 | Top-3 Score at 200k Steps | Generated Diversity (%) |

|---|---|---|---|

| DDPG + PER (Baseline) | ~85,000 | 6.2 ± 0.4 | 65% |

| DDPG + PER + Retrace(λ) | ~60,000 | 7.1 ± 0.3 | 78% |

Debugging the Design Loop: Practical Solutions for Common Sample Efficiency Pitfalls

Diagnosing Overfitting to Benchmark Artifacts and Proxy Objectives

Troubleshooting Guides & FAQs

Q1: How can I tell if my model's performance on a benchmark like GuacaMol or MOSES is genuine or due to overfitting to benchmark artifacts?

A1: Signs include a large performance gap between benchmark scores and functional wet-lab validation, and performance that collapses when evaluated on a "clean" hold-out test set curated to remove known artifacts. Conduct a sensitivity analysis by training on progressively filtered data and testing on both the original and cleaned validation sets. A model overfitting to artifacts will show a steep performance decline on the cleaned set.

Q2: My model excels at the proxy objective (e.g., high QED, favorable SA Score) but generates molecules with poor binding affinity in assays. What's wrong?

A2: This is a classic sign of over-optimization to a flawed or incomplete proxy. The proxy may not capture critical real-world complexities like pharmacokinetics or specific protein-ligand interactions. Diagnose this by:

- Analyzing the correlation between your proxy objectives and downstream experimental results, whether measured in-house or collected from the literature.

- Implementing multi-fidelity optimization, where you iteratively refine the proxy using sparse high-fidelity experimental data.

Q3: What are common benchmark artifacts in molecular datasets, and how do I mitigate them?

A3: Common artifacts include:

- Bias from overrepresented scaffolds in the training data.

- Data leakage between training and test sets due to overly similar molecules.

- Simplistic reward functions that are gameable (e.g., penalizing certain substructures without chemical rationale).

Mitigation Protocol: Use the Benchmark Factor (BF) diagnostic as described by recent literature. Train two models: one on the standard benchmark training set and another on a carefully curated "anti-artifact" set where suspected artifactual patterns are removed or balanced. Compare their performance on the standard test set.

Table 1: Common Molecular Benchmarks & Associated Artifact Risks

| Benchmark | Primary Proxy Objective | Common Artifacts/Risks |

|---|---|---|

| GuacaMol | Similarity, properties, scaffolds | Overfitting to trivial transformations (e.g., methylation) for similarity tasks. |

| MOSES | Distributional metrics (e.g., FCD, SNN) | Learning to generate only the most frequent scaffolds in the training distribution. |

| ZINC20 | Docking score (as proxy for binding) | Overfitting to the scoring function's approximations rather than true binding physics. |

Q4: What is a robust experimental protocol to diagnose overfitting in my molecular optimization pipeline?

A4: Use a hold-out validation protocol with sequential filtering:

- Data Splitting: Create three data splits: Training, Standard-Test (benchmark standard), and Clean-Test (heavily curated).

- Model Training: Train your model on the Training set.

- Primary Diagnosis: Evaluate on both Standard-Test and Clean-Test. A significant drop (>20% relative) in key metrics (e.g., success rate) on Clean-Test indicates overfitting to dataset artifacts.

- Proxy Objective Test: For top-generated molecules from Step 3, compute a battery of auxiliary properties not used in the proxy (e.g., synthesizability cost, predicted toxicity). If these auxiliary properties degrade significantly compared to baseline molecules, the model is overfitting to the narrow proxy.
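The primary diagnosis step reduces to a relative-drop check, sketched below. The 20% threshold comes from the protocol; the function names and the returned dictionary layout are hypothetical.

```python
def relative_drop(standard_metric, clean_metric):
    """Relative decline of a metric from Standard-Test to Clean-Test."""
    if standard_metric <= 0:
        raise ValueError("standard_metric must be positive to compute a relative drop")
    return (standard_metric - clean_metric) / standard_metric

def diagnose_artifact_overfitting(standard_metric, clean_metric, threshold=0.20):
    """Flag overfitting when the relative drop on Clean-Test exceeds the threshold."""
    drop = relative_drop(standard_metric, clean_metric)
    return {"relative_drop": drop, "artifact_overfitting": drop > threshold}
```

For example, a success rate of 0.80 on Standard-Test and 0.56 on Clean-Test is a 30% relative drop and would be flagged.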

Table 2: Key Research Reagent Solutions for Diagnosis Experiments

| Item/Reagent | Function in Diagnosis |

|---|---|

| Cleaned Benchmark Derivatives (e.g., "GuacaMol-Hard") | Provide a more rigorous test set by removing trivial molecular transformations and balancing scaffold diversity. |

| Multi-Fidelity Surrogate Models | Act as intermediate proxies that blend cheap computational scores with sparse, expensive experimental data to better approximate the true objective. |

| Scaffold Analysis Toolkit (e.g., RDKit) | To quantify scaffold diversity (e.g., using Bemis-Murcko scaffolds) and detect over-reliance on specific chemical frameworks. |

| Adversarial Validation Scripts | Train a classifier to distinguish between training and test sets. High classifier accuracy indicates significant distribution shift/data leakage, flagging potential artifact bias. |

Q5: Can you visualize the core diagnostic workflow for artifact overfitting?

A5: [Diagram: Overfitting Diagnosis Workflow for Molecular AI]

Q6: How do proxy objectives relate to the true objective in drug discovery?

A6: [Diagram: Proxy vs. True Objective Relationship]

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: My molecular generator is converging too quickly to a single, high-scoring scaffold, drastically reducing library diversity. How can I encourage more exploration?

A: This is a classic sign of an over-exploitative reward function. Implement a diversity-promoting penalty or bonus.

- Solution: Integrate a Tanimoto similarity penalty or a Novelty Reward. Modify your reward function `R` to: `R = Property_Score - λ * (Average_Tanimoto_Similarity_to_Recent_Molecules)`. Start with a low λ (e.g., 0.1) and increase incrementally. Monitor the diversity-property Pareto front.

- Protocol: