Beyond SMILES: Next-Gen AI Molecular Representations Transforming Drug Discovery

Abstract

This article explores the critical limitations of traditional molecular representations (such as SMILES strings and molecular fingerprints) in AI-driven drug discovery and cheminformatics. We analyze how these limitations, including data inefficiency, neglect of 3D structure, and poor generalization, hinder model performance. We then detail cutting-edge methodological advances that overcome these barriers, including geometric deep learning (3D GNNs), equivariant models, and language model adaptations. We provide a troubleshooting guide for common implementation challenges and a comparative validation framework for assessing new representation techniques. Finally, we synthesize the implications of these advances for accelerating virtual screening, de novo design, and property prediction in biomedical research, charting a path toward more robust and generalizable AI for molecular science.

The Molecular Representation Bottleneck: Why Traditional Descriptors Fail AI Models

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My AI model for molecular property prediction is underperforming. Could invalid SMILES strings in my training data be the cause?

A: Yes. Syntactically invalid SMILES (e.g., unmatched parentheses, incorrect ring closure numbers) introduce noise. A 2023 study found that datasets like ChEMBL can contain 0.1-0.5% invalid strings. These force the model to learn erroneous syntax, degrading its ability to generalize.

Protocol: Data Sanitization Workflow

- Tool: Use a rigorous validator (e.g., RDKit's Chem.MolFromSmiles, which returns None for any string it cannot parse).

- Process: Filter all training data. Do not attempt automated correction, as it may change molecular identity.

- Isolation: Move invalid entries to a separate log file for manual inspection.

- Quantification: Report the percentage of invalid SMILES as a data quality metric.
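The sanitization steps above can be sketched as a small function that takes the parser as an argument. Passing RDKit's Chem.MolFromSmiles gives the workflow described. The function name sanitize and the toy parser are hypothetical; the toy parser only checks parenthesis balance, so the sketch runs without RDKit.

```python
from typing import Callable, Iterable, Optional


def sanitize(smiles: Iterable[str],
             parse: Callable[[str], Optional[object]],
             log_path: Optional[str] = None):
    """Split SMILES into valid and invalid entries using `parse`.

    `parse` must return None for unparseable input (as Chem.MolFromSmiles does).
    Invalid entries are never corrected, only isolated and optionally logged.
    """
    valid, invalid = [], []
    for i, smi in enumerate(smiles):
        (valid if parse(smi) is not None else invalid).append((i, smi))
    if log_path is not None:
        # One "index<TAB>smiles" line per rejected entry, for manual inspection.
        with open(log_path, "w") as fh:
            for i, smi in invalid:
                fh.write(f"{i}\t{smi}\n")
    total = len(valid) + len(invalid)
    pct_invalid = 100.0 * len(invalid) / total if total else 0.0  # data-quality metric
    return [s for _, s in valid], invalid, pct_invalid


def toy_parse(smi: str):
    """Hypothetical stand-in parser: rejects unbalanced parentheses only."""
    depth = 0
    for c in smi:
        depth += (c == "(") - (c == ")")
        if depth < 0:
            return None
    return smi if depth == 0 else None


valid, invalid, pct = sanitize(["CCO", "CC(C", "c1ccccc1"], toy_parse)
```

With RDKit installed, the real call would be sanitize(data, Chem.MolFromSmiles, "invalid_smiles.tsv"), where the log-file name is illustrative.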

Q2: How does SMILES ambiguity (multiple valid strings for one molecule) affect model robustness, and how can I mitigate it?

A: Canonicalization is standard but insufficient. Models trained on one canonical form may fail to recognize non-canonical variants, reducing robustness in real-world applications.

Protocol: Data Augmentation via SMILES Enumeration

- Tool: Use RDKit's MolToRandomSmilesVect function.

- Process: For each molecule in your training set, generate 10-50 randomized, valid SMILES representations.

- Training: Use all variants during training. This teaches the model that different strings can map to the same structure, improving invariance.

- Validation: Always evaluate the final model on canonical SMILES to ensure benchmark consistency.

Q3: What are the most common syntactic errors found in public SMILES datasets?

A: Common errors fall into distinct categories, as quantified in recent analyses.

Table 1: Frequency of Common SMILES Syntax Errors (Analysis of 1.2M Strings)

| Error Category | Example | Approximate Frequency | Typical Cause |
|---|---|---|---|
| Invalid Valence | C(=O)(O)O (valid) vs. C(=O)(O)(O)O (pentavalent carbon) | 0.15% | Parser or manual entry error. |
| Ring Closure | Mismatched ring numbers (e.g., C1CC1 vs. C1CC2). | 0.08% | Truncation or copy-paste error. |
| Parenthesis Mismatch | Extra or missing parentheses for branches. | 0.05% | Automated generator bugs. |
| Chiral Specification | Invalid placement of @ or @@ symbols. | 0.03% | Legacy format conversion. |
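A cheap pre-check can flag the two purely syntactic categories in Table 1 (parenthesis mismatch and ring closure) before full parsing. The helper below is hypothetical and is not a replacement for RDKit. It skips bracket atoms wholesale, does not check valence or chirality, and does not support the %(nnn) ring-label extension.

```python
def syntax_issues(smiles: str) -> list:
    """Return parenthesis / ring-closure problems found in `smiles`."""
    issues = []
    depth = 0            # current branch nesting level
    open_rings = set()   # ring labels opened but not yet closed
    i = 0
    while i < len(smiles):
        ch = smiles[i]
        if ch == "[":
            # Digits inside bracket atoms (isotopes, H counts, charges) are not ring labels.
            end = smiles.find("]", i)
            if end == -1:
                issues.append("unclosed bracket atom")
                break
            i = end + 1
            continue
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                issues.append("unmatched ')'")
                depth = 0
        elif ch == "%":
            label = smiles[i + 1:i + 3]
            if len(label) == 2 and label.isdigit():
                open_rings ^= {"%" + label}  # toggle: first use opens, second closes
                i += 3
                continue
            issues.append("malformed %nn ring label")
        elif ch.isdigit():
            open_rings ^= {ch}
        i += 1
    if depth > 0:
        issues.append(f"{depth} unclosed '('")
    if open_rings:
        issues.append("unclosed ring label(s): " + ", ".join(sorted(open_rings)))
    return issues
```

For example, syntax_issues("C1CC2") reports that ring labels 1 and 2 are never closed. Strings that pass this check still need full parsing to catch valence and chirality errors.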

Q4: Are there alternative representations I should consider alongside SMILES to overcome these limitations in my research?

A: Yes. Integrating multiple representations provides complementary information to AI models, enhancing performance on complex tasks.

Table 2: Molecular Representation Trade-offs for AI Models

| Representation | Format | Key Advantage for AI | Primary Limitation |
|---|---|---|---|
| DeepSMILES | String | Simplified syntax reduces invalid generation by ~60% (reported in 2020). | Still a linear string; not fully standardized. |
| SELFIES | String | 100% syntactically valid; guaranteed parseable. | Can be less human-readable; longer strings. |
| Molecular Graph | Graph (Nodes/Edges) | Native 2D/3D structure; no ambiguity. | Requires graph-based models (GNNs); more complex. |
| InChI/InChIKey | String | Standardized, canonical identifier. | Not designed for generative models; non-invertible (InChIKey). |

Experimental Protocols

Protocol: Benchmarking Representation Invariance

Objective: Quantify an AI model's sensitivity to SMILES ambiguity.

- Model: Train a standard graph neural network (GNN) as the invariant baseline.

- Comparator: Train an LSTM or Transformer model on canonical SMILES of the same training molecules and targets.

- Test Set: Create a benchmark set where each molecule is represented by 20 valid, randomized SMILES.

- Metric: Calculate the standard deviation of predictions (e.g., logP) across all SMILES variants for the same molecule. Lower deviation indicates better invariance.

- Analysis: The GNN baseline should have near-zero deviation. Compare the string-based model's deviation to this ideal.
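The invariance metric from this protocol can be computed as shown below. The sketch assumes you have already collected each model's predictions for the 20 randomized SMILES of every test molecule. The input layout (a dictionary from molecule ID to a list of predictions) is an assumption.

```python
from statistics import mean, pstdev


def invariance_report(preds_by_molecule):
    """Per-molecule spread of predictions across randomized SMILES variants.

    preds_by_molecule: {molecule_id: [prediction for each SMILES variant]}.
    Returns (per-molecule population std, mean std over molecules).
    A perfectly invariant model (e.g., a GNN on the graph) scores 0.
    """
    per_mol = {mol: pstdev(p) for mol, p in preds_by_molecule.items()}
    return per_mol, mean(per_mol.values())


# Hypothetical logP predictions for two molecules.
gnn = {"mol_a": [1.2] * 20, "mol_b": [0.5] * 20}
seq = {"mol_a": [1.0, 1.4], "mol_b": [0.5, 0.5]}
_, gnn_score = invariance_report(gnn)
per_mol_seq, seq_score = invariance_report(seq)
```

A string-based model whose mean spread is far above the GNN's near-zero value has not learned that different SMILES can encode the same structure.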

Protocol: Systematic Error Injection Study

Objective: Understand model failure modes under controlled noise.

- Create Clean Dataset: Start with a curated, 100% valid dataset (e.g., QM9 subset).

- Inject Errors: Programmatically introduce specific error types from Table 1 at controlled rates (0.1%, 1%, 5%).

- Train & Evaluate: Train identical models on each corrupted dataset.

- Measure: Plot prediction accuracy (e.g., MAE) against error injection rate for each error type. This identifies which syntactic flaws are most detrimental.
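The error-injection step can be sketched for two of the Table 1 categories: ring closure and parenthesis mismatch. The corruption functions are hypothetical illustrations, not a standard library API. Entries a corruption cannot apply to (for example, acyclic SMILES when breaking rings) are left intact and not counted. A fixed seed keeps corrupted datasets reproducible across the 0.1%, 1% and 5% runs.

```python
import random


def _ring_digit_positions(s):
    """Indices of single-digit ring labels outside [...] and not part of %nn."""
    pos, inside = [], False
    for i, c in enumerate(s):
        if c == "[":
            inside = True
        elif c == "]":
            inside = False
        elif not inside and c.isdigit() and "%" not in s[max(0, i - 2):i]:
            pos.append(i)
    return pos


def break_ring_closure(s, rng):
    """Replace one ring-closure digit with an unused digit, leaving rings unmatched."""
    pos = _ring_digit_positions(s)
    used = {s[i] for i in pos}
    free = [d for d in "123456789" if d not in used]
    if not pos or not free:
        return None
    i = rng.choice(pos)
    return s[:i] + rng.choice(free) + s[i + 1:]


def drop_close_paren(s, rng):
    """Delete one ')' to create a parenthesis mismatch."""
    pos = [i for i, c in enumerate(s) if c == ")"]
    if not pos:
        return None
    i = rng.choice(pos)
    return s[:i] + s[i + 1:]


def inject_errors(smiles, rate, corrupt, seed=0):
    """Corrupt round(rate * N) randomly chosen entries; return (new_list, corrupted_indices)."""
    rng = random.Random(seed)
    out = list(smiles)
    k = round(rate * len(out))
    hit = []
    for i in sorted(rng.sample(range(len(out)), k)):
        bad = corrupt(out[i], rng)
        if bad is not None:
            out[i] = bad
            hit.append(i)
    return out, hit
```

Training one model per (error type, rate) combination on the outputs of inject_errors gives the curves called for in the Measure step.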

Visualizations

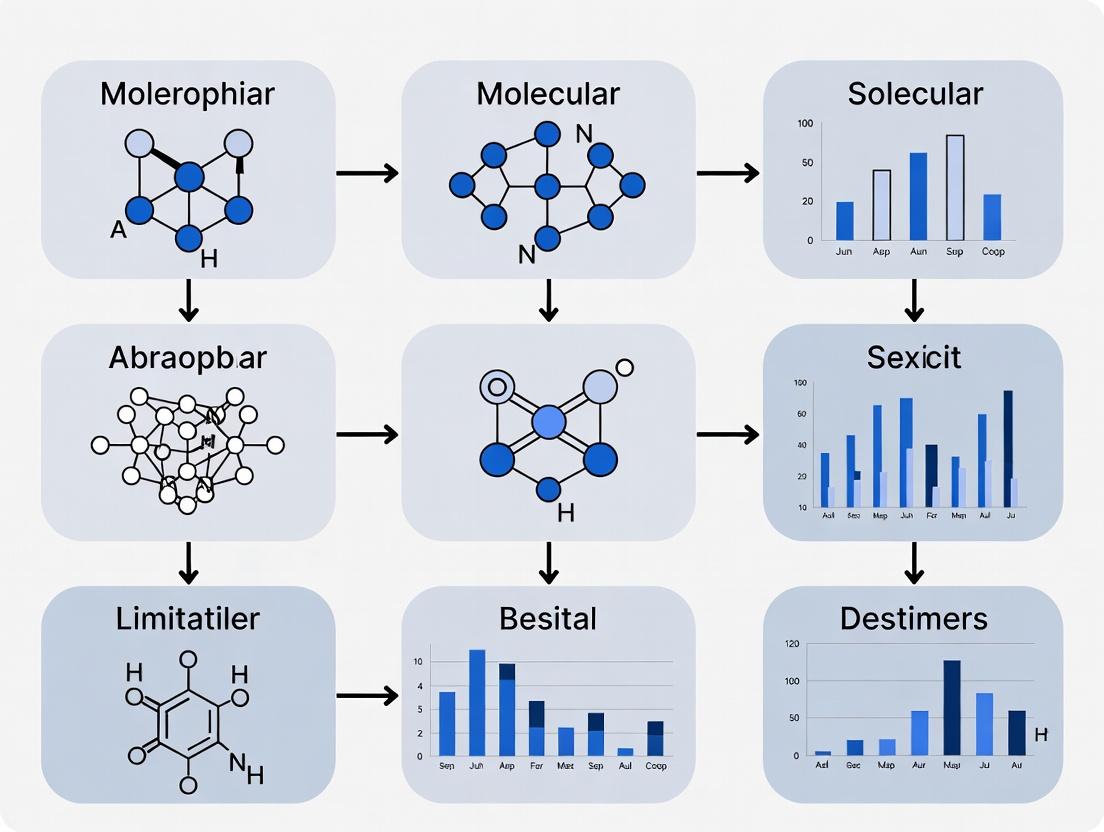

Title: SMILES Data Curation & Augmentation Workflow

Title: SMILES Problems & AI Solution Pathways

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for SMILES & Molecular Representation Research

| Tool / Resource | Type | Primary Function | Key Consideration |
|---|---|---|---|
| RDKit | Open-source Cheminformatics Library | SMILES parsing, validation, canonicalization, and graph conversion. | The industry standard; essential for any preprocessing pipeline. |
| DeepSMILES | Linear Representation | Simplified SMILES syntax with reduced rule set, lowering invalid generation rate. | Use for sequence-based generative models to reduce error frequency. |
| SELFIES (v2.0) | Grammar-based Representation | 100% syntactically valid strings; every random string decodes to a valid molecule. | Ideal for generative AI and evolutionary algorithms; eliminates validity checks. |
| Standardized Datasets (e.g., MoleculeNet, QM9) | Benchmark Data | Provide clean, curated molecular data with associated properties for fair model comparison. | Always validate and canonicalize even "clean" datasets before use. |
| Graph Neural Network Library (e.g., PyTorch Geometric, DGL) | ML Framework | Enables direct modeling of molecules as graphs, bypassing SMILES entirely. | Requires more computational expertise but offers state-of-the-art performance. |

Technical Support Center

Troubleshooting Guides

Issue 1: Distinguishing Structural Isomers

- Problem: Two distinct molecules (e.g., cyclohexane and cyclooctane) generate identical ECFP4 bit vectors, leading to model confusion.

- Root Cause: ECFP4 only encodes atom environments up to radius 2. Molecules whose differences lie beyond that radius (here, ring size) therefore share every substructure identifier. Folding identifiers into a fixed-length vector adds further collisions, where different environments map to the same bit.

- Diagnosis: Calculate and compare the fingerprints of the isomers. If they are identical, this limitation is the cause.

- Solution: Implement a hybrid representation. Supplement the fingerprint with an explicit molecular graph descriptor (e.g., an adjacency matrix) or with a string representation (e.g., SELFIES) encoded by a learned sequence model.
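The radius limit can be shown with a simplified, Morgan-style identifier iteration. This is a hypothetical teaching sketch, not RDKit's implementation. The initial invariants are only (element, heavy-atom degree), bond orders are ignored, and RDKit's duplicate-environment removal is omitted. Even so, it reproduces the ring-size blind spot: every atom in a cycloalkane ring has the same radius-2 environment, whatever the ring size.

```python
import hashlib


def _h(obj) -> int:
    """Deterministic 32-bit hash (Python's built-in str hash is salted per process)."""
    return int.from_bytes(hashlib.sha1(repr(obj).encode()).digest()[:4], "little")


def morgan_ids(atoms, bonds, radius=2):
    """Set of simplified circular-substructure IDs up to `radius`.

    atoms: element symbols; bonds: (i, j) index pairs.
    """
    nbrs = [[] for _ in atoms]
    for i, j in bonds:
        nbrs[i].append(j)
        nbrs[j].append(i)
    ids = [_h((a, len(nbrs[k]))) for k, a in enumerate(atoms)]
    seen = set(ids)
    for _ in range(radius):
        # Each atom's new ID combines its previous ID with its neighbours' previous IDs.
        ids = [_h((ids[k], tuple(sorted(ids[j] for j in nbrs[k]))))
               for k in range(len(atoms))]
        seen.update(ids)
    return seen


def ring(n):
    """Carbon skeleton of a cycloalkane with n atoms."""
    return ["C"] * n, [(k, (k + 1) % n) for k in range(n)]


def fold(ids, n_bits=1024):
    """Fold identifiers into a fixed-length bit set."""
    return {x % n_bits for x in ids}


same = fold(morgan_ids(*ring(6))) == fold(morgan_ids(*ring(8)))  # True: indistinguishable
```

Supplementing the fingerprint with a graph-level descriptor (for example, ring sizes or the adjacency matrix) breaks this tie.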

Issue 2: Loss of 3D Spatial and Stereochemical Information

- Problem: Standard ECFP and MACCS keys cannot differentiate between enantiomers (R vs. S configuration) or capture 3D conformational data critical for binding affinity.

- Root Cause: These fingerprints are generated from 2D molecular graphs. MACCS keys contain no stereochemical bits, and RDKit's Morgan (ECFP) implementation ignores chirality unless useChirality=True is set. Neither encodes atomic coordinates.

- Diagnosis: Check if the biological activity or property being modeled is known to be stereosensitive.

- Solution: For chiral centers, append chiral tag descriptors. For 3D conformation, use 3D fingerprints (e.g., USRCAT) or direct atomic coordinate featurization alongside 2D fingerprints.

Issue 3: Inability to Represent Uncommon or Novel Substructures

- Problem: For molecules containing rare functional groups or novel scaffolds, the hashed substructures may not be informative features for the model.

- Root Cause: The fixed-bit length creates a "collision" space where rare and common features can map to the same bit, diluting the signal of novelty.

- Diagnosis: Analyze feature importance from your model; novel substructures may show near-zero attribution.

- Solution: Use an unfolded (sparse) fingerprint, preferably count-based, so that rare substructures keep their own identifiers. Alternatively, transition to a graph neural network (GNN) that operates directly on the graph structure without predefined substructures.

Issue 4: Poor Performance on Large, Flexible Macrocycles

- Problem: Predictive accuracy drops for large, flexible molecules like macrocycles.

- Root Cause: The local, circular substructures in ECFP may fail to capture long-range intramolecular interactions critical for macrocycle conformation. The fixed-length vector also compresses molecules of very different sizes into the same number of bits, so large molecules with many substructures suffer more bit collisions.

- Diagnosis: Observe a significant drop in model performance (RMSE, AUC) specifically on a macrocycle test set.

- Solution: Employ a GNN with a global attention mechanism or use a learned representation from a transformer model trained on SMILES/SELFIES, which can better capture long-range dependencies.

Frequently Asked Questions (FAQs)

Q1: When should I definitely avoid using ECFP fingerprints? A: Avoid them when your primary task involves: 1) Predicting stereoselective outcomes, 2) Modeling properties dominated by 3D conformation (e.g., protein-ligand binding pose), 3) Working with a dataset containing many large, flexible molecules (MW > 800 Da), or 4) Cases where interpretability of specific substructures is a critical requirement.

Q2: Can I simply increase the fingerprint length (number of bits) to reduce collision loss? A: Yes, but with diminishing returns. Doubling the length reduces collision probability but does not eliminate the fundamental loss of granularity from the hashing process. It also increases the feature space sparsity. Beyond 8192 or 16384 bits, gains are often marginal compared to the computational cost.
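The diminishing returns can be estimated under a simplifying assumption: k distinct substructure IDs fall uniformly at random into N bits. The expected number of occupied bits is then N(1 - (1 - 1/N)^k), and the collision rate is defined as in the protocol below, (k - occupied) / k. The dataset size of 5,000 unique IDs is hypothetical.

```python
def expected_collision_rate(k: int, n_bits: int) -> float:
    """Expected (k - occupied_bits) / k when k distinct IDs are folded uniformly into n_bits."""
    occupied = n_bits * (1.0 - (1.0 - 1.0 / n_bits) ** k)
    return (k - occupied) / k


for n in (1024, 2048, 4096, 8192, 16384):
    print(n, round(expected_collision_rate(5000, n), 3))
```

Each doubling of N roughly halves the collision rate once N is well above k, while the vector becomes proportionally sparser. This is the trade-off described above.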

Q3: What is the practical performance impact of this granularity loss? A: Studies benchmarking molecular property prediction show a measurable gap. For example, on the QM9 dataset, GNNs consistently outperform ECFP-based models on several geometric and electronic properties.

Table 1: Benchmark Performance Comparison on QM9 Dataset

| Model/Representation | Target: α (Polarizability) MAE | Target: U0 (Internal Energy) MAE | Key Advantage |
|---|---|---|---|
| ECFP (2048 bits) + MLP | ~0.085 | ~0.019 | Fast, simple |
| Graph Neural Network | ~0.012 | ~0.006 | Captures explicit topology |
| 3D Graph Network | N/A | ~0.003 | Incorporates spatial geometry |

Q4: What is the simplest alternative I can try first? A: Start with the count-based version of ECFP (e.g., ECFP4 count). It preserves the frequency of each substructure rather than just presence/absence, offering slightly more granularity without changing your overall ML pipeline.

Q5: Are there specific "red flag" scenarios in my data that signal this limitation? A: Yes. High error rates on: 1) Size-matched isomers, 2) Molecules with multiple chiral centers, 3) Scaffold hops (series with different core rings but similar activity), and 4) Activity cliffs where a small structural change causes a large property shift.

Experimental Protocol: Quantifying Fingerprint Collisions

Objective: To empirically measure the loss of structural granularity by calculating the substructure collision rate of ECFP for a given dataset.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Dataset Preparation: Select a diverse molecular dataset (e.g., ChEMBL subset, USPTO). Standardize molecules (neutralize, remove salts) using RDKit.

- Substructure Enumeration: For each molecule, collect the integer identifiers of its circular substructures before they are folded to a fixed length. In RDKit, rdMolDescriptors.GetMorganFingerprint(mol, 2) returns an unfolded sparse vector. The keys of its GetNonzeroElements() dictionary are the substructure IDs for that molecule.

- Collision Analysis:

- Pool all unique substructure IDs across the entire dataset.

- Simulate folding to a fixed length: for a target bit length N (e.g., 1024), map each ID to bit ID % N.

- Tally how many unique substructure IDs map to each bit.

- Calculate Collision Rate: (Total_Unique_Substructures - Number_of_NonEmpty_Buckets) / Total_Unique_Substructures. A higher rate indicates more information loss.

- Isomer Comparison: Select a known pair of structural isomers. Generate their fingerprints folded to 1024 bits and check whether they are identical. Then compare their unfolded substructure ID sets from Step 2 to identify which distinct features collided.
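The collision analysis and isomer comparison steps can be sketched as follows. The sketch assumes folding by modulo, as in the protocol, and takes one iterable of integer substructure IDs per molecule. The function names are hypothetical.

```python
from collections import Counter


def collision_rate(id_sets, n_bits=1024):
    """Empirical collision rate when pooled substructure IDs are folded to n_bits."""
    pooled = set().union(*map(set, id_sets))        # unique IDs across the dataset
    buckets = Counter(x % n_bits for x in pooled)   # number of IDs landing in each bit
    if not pooled:
        return 0.0, buckets
    return (len(pooled) - len(buckets)) / len(pooled), buckets


def collided_features(ids_a, ids_b, n_bits=1024):
    """IDs unique to one molecule that fold onto a bit set by the other molecule."""
    a, b = set(ids_a), set(ids_b)
    bits_a = {x % n_bits for x in a}
    bits_b = {x % n_bits for x in b}
    return (sorted(x for x in a - b if x % n_bits in bits_b),
            sorted(x for x in b - a if x % n_bits in bits_a))
```

With RDKit, each molecule's ID set could come from rdMolDescriptors.GetMorganFingerprint(mol, 2).GetNonzeroElements().keys().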

Visualization: The Information Pipeline & Collision Point

Diagram Title: ECFP Generation Pipeline & Information Collision

The Scientist's Toolkit: Key Research Reagents & Software

Table 2: Essential Tools for Molecular Representation Research

| Item | Function | Example/Provider |
|---|---|---|
| RDKit | Open-source cheminformatics toolkit for fingerprint generation, substructure enumeration, and molecule manipulation. | rdkit.org |
| DeepChem | Library providing high-level APIs for ECFP, graph featurization, and benchmark molecular ML models. | deepchem.io |
| PyTorch Geometric (PyG) / DGL-LifeSci | Libraries for building and training Graph Neural Networks (GNNs) on molecular graphs. | pytorch-geometric.readthedocs.io |
| Standardized Benchmark Datasets | Curated datasets for fair comparison of representations (e.g., QM9, MoleculeNet, PDBBind). | moleculenet.org |
| 3D Conformer Generator | Software to generate realistic 3D molecular conformations for 3D representation. | RDKit (ETKDG), OMEGA (OpenEye) |
| Extended Connectivity Fingerprint (ECFP) | The canonical fixed-length fingerprint algorithm. Also called Morgan Fingerprints. | rdkit.Chem.rdMolDescriptors.GetMorganFingerprintAsBitVect |
| MACCS Keys | A fixed 166-bit fingerprint based on a predefined dictionary of structural fragments. | rdkit.Chem.MACCSkeys.GenMACCSKeys |
| SELFIES | A 100% robust string representation for molecules, useful as an alternative to SMILES for deep learning. | selfies.ai |
| Molecular Graph Featurizer | Converts a molecule into node (atom) and edge (bond) feature matrices for GNN input. | DeepChem's ConvMolFeaturizer, PyG's from_smiles |

This Technical Support Center addresses a critical failure mode in AI-driven molecular discovery: the systematic neglect of 3D conformational and stereochemical data. Within the broader thesis of overcoming molecular representation limitations in AI models, this guide provides troubleshooting steps and methodologies to help researchers correct this blind spot in their computational and experimental workflows.

Troubleshooting Guides & FAQs

Q1: Our AI model, trained on 2D SMILES strings, shows high validation accuracy but consistently fails to predict the activity of chiral compounds in wet-lab assays. What is the primary issue and how do we debug it?

A: The issue is a fundamental representation gap. 2D line notations ignore stereochemistry and conformational flexibility.

- Debug Protocol:

- Audit Training Data: Check the proportion of stereochemistry-annotated data (e.g., isomeric SMILES, InChI with stereochemical layers) in your dataset. It is likely <5%.

- Perform a "Stereochemical Holdout Test": Split your data, ensuring all enantiomers or diastereomers of a scaffold are exclusively in the test set. A high performance drop indicates the model is memorizing scaffolds, not learning spatial interactions.

- Visualize Attention Weights: For graph-based models, map attention to specific chiral centers in mispredicted compounds. Lack of focus confirms the blind spot.
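The stereochemical holdout split from the debug protocol can be sketched by grouping records on a stereo-free key. All stereoisomers of a selected group then go to the test set together. The default key here is a hypothetical, crude string strip of @, / and \ marks. It only groups isomers written with the same atom ordering. A more robust key is RDKit's Chem.MolToSmiles(mol, isomericSmiles=False), which can be passed as key.

```python
import random
from collections import defaultdict


def strip_stereo(smiles):
    """Crude stereo-free key: drop tetrahedral (@) and double-bond (/ and \\) marks."""
    return smiles.replace("@", "").replace("/", "").replace("\\", "")


def stereo_holdout_split(records, test_fraction=0.2, key=strip_stereo, seed=0):
    """Put every stereoisomer of the selected groups in the test set only.

    records: (isomeric_smiles, label) pairs. Only groups with more than one
    stereoisomer are eligible, so each test group contains isomers to compare.
    """
    groups = defaultdict(list)
    for smi, y in records:
        groups[key(smi)].append((smi, y))
    eligible = sorted(k for k, v in groups.items() if len(v) > 1)
    n_test = round(test_fraction * len(eligible))
    test_keys = set(random.Random(seed).sample(eligible, n_test))
    train = [r for k, v in groups.items() if k not in test_keys for r in v]
    test = [r for k in sorted(test_keys) for r in groups[k]]
    return train, test


records = [("F/C=C/F", 1), ("F/C=C\\F", 0),
           ("[C@H](F)(Cl)Br", 1), ("[C@@H](F)(Cl)Br", 0), ("CCO", 0.5)]
train, test = stereo_holdout_split(records, test_fraction=0.5)
```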

Q2: When generating novel molecules with a generative model, we obtain chemically valid structures that are synthetically inaccessible or have incorrect stereocenters. How can we constrain the generation process?

A: This is a problem of latent space geometry not encoding synthetic and stereochemical rules.

- Solution Protocol:

- Incorporate 3D Pharmacophore Constraints: Use a pre-trained model to predict bioactive conformers and define required interaction points (donor, acceptor, aromatic ring). Feed this as a conditional vector into your generator.

- Apply Retro-inspired Rules: Integrate a rule-based filter that flags molecules with synthetically challenging stereochemistry (e.g., more than 3 contiguous stereocenters) during generation, not after.

- Fine-tune on 3D-Aware Representations: Continue training your generator on embeddings from a 3D-informative model (like GeoMol or a well-trained MMFF94-optimized graph network).
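The rule-based filter from step 2 could check the size of the largest cluster of directly bonded stereocenters. The input format is an assumption: an adjacency mapping plus a list of stereocenter atom indices. With RDKit, those indices could come from Chem.FindMolChiralCenters(mol, includeUnassigned=True). The threshold of 3 follows the text.

```python
def largest_contiguous_stereo_block(adjacency, stereocenters):
    """Size of the largest group of stereocenters linked by direct bonds."""
    centers = set(stereocenters)
    seen, best = set(), 0
    for start in centers:
        if start in seen:
            continue
        # Depth-first search restricted to stereocenter atoms.
        stack, size = [start], 0
        seen.add(start)
        while stack:
            atom = stack.pop()
            size += 1
            for nb in adjacency[atom]:
                if nb in centers and nb not in seen:
                    seen.add(nb)
                    stack.append(nb)
        best = max(best, size)
    return best


def flag_stereo_complexity(adjacency, stereocenters, max_contiguous=3):
    """True if the molecule exceeds the contiguous-stereocenter limit."""
    return largest_contiguous_stereo_block(adjacency, stereocenters) > max_contiguous


chain = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3, 5], 5: [4]}
```

To apply the rule during generation, the check would run inside the sampling loop. There it can reject or penalize a candidate before it is added to the output set, rather than filtering afterwards.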

Q3: Our molecular docking pipeline, which uses AI-predicted protein structures (e.g., from AlphaFold2), yields unrealistic binding poses for small molecules. What steps should we take to validate and improve the conformations used?

A: The problem often lies in the ligand's starting conformation and the neglect of protein sidechain flexibility.

- Troubleshooting Workflow:

- Conformational Ensemble Generation: Do not dock a single, low-energy conformer. Generate an ensemble using OMEGA or CONFLEX with explicit attention to chiral constraints.

- Validate Protein Pocket Flexibility: Run a short molecular dynamics (MD) simulation on the predicted protein structure to assess sidechain mobility. Use the resulting ensemble for docking.

- Post-Docking Scoring with QM/MM: Re-score top poses using a hybrid Quantum Mechanics/Molecular Mechanics (QM/MM) method to better account for electronic interactions critical for chiral recognition.

Key Experimental Protocols

Protocol 1: Constructing a Stereochemically-Enriched Training Dataset

Objective: To build a dataset that explicitly encodes 3D conformational and stereochemical information for AI model training.

- Source: Retrieve compounds from the PDBbind database and ChEMBL, filtered for entries with associated IC50/Ki values and crystallographic structures (resolution < 2.5 Å).

- Ligand Preparation: For each entry, extract the co-crystallized ligand. Assign stereochemistry from the 3D coordinates (e.g., RDKit's Chem.AssignStereochemistryFrom3D(mol)). Then generate isomeric SMILES (Chem.MolToSmiles(mol, isomericSmiles=True)) and InChI with full stereochemical layers.

- Conformer Generation: For each unique ligand, generate a low-energy conformational ensemble (up to 10 conformers) using the ETKDGv3 method implemented in RDKit.

- Representation: Create three parallel representations for each molecule: a) 2D Graph, b) 3D Graph (with atom coordinates), c) Molecular fingerprint (ECFP4) from the isomeric SMILES, computed with chirality enabled, since ECFP ignores stereochemistry by default.

- Metadata Table: Assemble a metadata table linking all representations to the experimental bioactivity value and PDB ID.

Protocol 2: Benchmarking Model Sensitivity to Stereoisomers

Objective: To quantitatively evaluate an AI model's ability to distinguish between stereoisomers.

- Benchmark Set Curation: From PubChem, identify 50 well-defined pairs/triplets of enantiomers and diastereomers with reported experimental activity differences (e.g., one active, one inactive).

- Model Inference: Run predictions on all stereoisomers using your target model(s). Ensure input formats preserve stereochemistry (use isomeric SMILES).

- Metric Calculation: Compute the following for each model:

- Stereochemical Discrimination Accuracy: The percentage of stereoisomer pairs where the model correctly ranks the more active isomer.

- Prediction Delta (ΔP): The absolute difference in predicted activity score between isomers. Compare ΔP to the experimental ΔActivity.

- Statistical Test: Perform a paired t-test to determine if the model's predictions for active vs. inactive isomers are statistically significantly different (p < 0.05).
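The metrics in this protocol can be computed as sketched below. The sketch assumes each stereoisomer pair is ordered so that the experimentally more active isomer comes first. The R² term corresponds to the "ΔP vs. ΔActivity" column in Table 1. Only the t statistic is computed here; the p-value needs a t-distribution.

```python
from math import sqrt
from statistics import mean, stdev


def _pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def stereo_metrics(pairs, exp_deltas=None):
    """pairs: (pred_for_more_active, pred_for_less_active), one per stereoisomer pair.
    exp_deltas: optional experimental |ΔActivity| per pair."""
    diffs = [a - b for a, b in pairs]
    result = {
        # Share of pairs where the model ranks the more active isomer higher.
        "discrimination_accuracy_pct": 100.0 * sum(d > 0 for d in diffs) / len(diffs),
        "delta_p": [abs(d) for d in diffs],
        # Paired t statistic with n - 1 degrees of freedom.
        "paired_t": mean(diffs) / (stdev(diffs) / sqrt(len(diffs))),
    }
    if exp_deltas is not None:
        result["r2_deltaP_vs_deltaActivity"] = _pearson(result["delta_p"], exp_deltas) ** 2
    return result


pairs = [(0.9, 0.2), (0.8, 0.7), (0.3, 0.6)]  # hypothetical predictions
```

If SciPy is available, scipy.stats.ttest_rel(active_preds, inactive_preds) returns both the statistic and the p-value for the p < 0.05 check.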

Table 1: Performance Comparison of Molecular Representations on Stereochemical Benchmark

| Model Architecture | Training Representation | Benchmark Accuracy (%) | Stereochemical Discrimination Score (%) | ΔP vs. ΔActivity Correlation (R²) |
|---|---|---|---|---|
| Graph Neural Network (GNN) | 2D Graph (no stereo) | 92.1 | 12.4 | 0.05 |
| Graph Neural Network (GNN) | 3D Graph (with coords) | 88.7 | 84.6 | 0.71 |
| Random Forest (RF) | ECFP4 Fingerprint | 90.3 | 51.2 | 0.32 |
| Directed Message Passing NN (D-MPNN) | Isomeric SMILES | 93.5 | 89.3 | 0.68 |

Table 2: Impact of Conformational Sampling on Docking Performance

| Docking Program | Single Conformer Pose (Success Rate*) | Ensemble Docking (Success Rate*) | Computational Time (Avg. min/mol) |
|---|---|---|---|
| AutoDock Vina | 42% | 71% | 2.1 |
| GLIDE (SP) | 58% | 82% | 8.5 |
| rDock | 37% | 65% | 1.8 |
| GOLD | 61% | 85% | 12.3 |

*Success Rate: Percentage of cases where the top-ranked pose is within 2.0 Å RMSD of the crystallographic pose.

Visualization

Title: Workflow for Creating 3D-Aware Molecular Inputs

Title: Enhanced Docking Workflow Integrating Flexibility

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function & Relevance to Overcoming the 3D Blind Spot |
|---|---|
| RDKit (Open-Source Cheminformatics) | Core library for parsing stereochemical SMILES, generating 3D conformers (ETKDG method), and calculating 3D molecular descriptors. Essential for data preprocessing. |
| OMEGA (OpenEye Scientific Software) | Commercial, high-performance conformer ensemble generator. Known for robust handling of macrocycles and stereochemical constraints, crucial for creating accurate input for docking. |
| GeoMol (Deep Learning Model) | A deep learning model that predicts local 3D structures and complete molecular conformations directly from 2D graphs. Used to generate informative 3D priors for AI models. |
| Force Fields (MMFF94, GAFF) | Molecular mechanics force fields used for geometry optimization and energy minimization of generated 3D conformers, ensuring physicochemically realistic structures. |
| QM/MM Software (e.g., Gaussian/AMBER combo) | Hybrid Quantum Mechanics/Molecular Mechanics packages. Used for high-accuracy post-docking pose refinement, critical for evaluating enantioselective binding interactions. |
| Stereochemically-Annotated Databases (PDB, ChEMBL, PubChem) | Primary sources for experimental 3D structures (PDB) and stereochemistry-annotated bioactivity data. The foundation for building robust training sets. |

Data Inefficiency & Poor Out-of-Distribution Generalization in Predictive Tasks

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My molecular property prediction model performs well on the training/validation split but fails drastically on new, structurally diverse compounds. What are the primary causes and diagnostic steps?

A: This is a classic symptom of poor out-of-distribution (OOD) generalization. Primary causes include:

- Training Data Bias: Your training set lacks sufficient chemical diversity (e.g., only covers a narrow scaffold).

- Representation Shortcomings: The molecular featurization (e.g., ECFP fingerprints, RDKit descriptors) may not capture the physical and quantum mechanical principles relevant to the new chemical space.

- Spurious Correlations: The model has learned dataset-specific artifacts instead of the true structure-property relationship.

Diagnostic Protocol:

- Perform a "Leave-Cluster-Out" Cross-Validation: Cluster your training molecules by a meaningful metric (e.g., molecular scaffold). Iteratively leave one entire cluster out for testing. A significant performance drop indicates high sensitivity to scaffold bias.

- Analyze Applicability Domain: Calculate the similarity (e.g., Tanimoto) of your new compounds to the training set. Model failure on low-similarity compounds confirms OOD issues.

- Use Explainability Tools: Apply methods like SHAP or integrated gradients to see which molecular substructures the model is relying on for predictions. Irrelevant or chemically nonsensical highlights indicate learned artifacts.
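The first two diagnostics can be sketched as follows. Cluster labels could be, for example, Bemis-Murcko scaffold SMILES from RDKit's rdkit.Chem.Scaffolds.MurckoScaffold module. Fingerprints are assumed to be sets of on-bit indices, such as set(fp.GetOnBits()) for an RDKit bit vector. Model training itself is left out.

```python
def tanimoto(a: set, b: set) -> float:
    """Tanimoto similarity of two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def leave_cluster_out(cluster_ids):
    """Yield (cluster, train_idx, test_idx), holding out one whole cluster at a time."""
    for c in sorted(set(cluster_ids)):
        test = [i for i, k in enumerate(cluster_ids) if k == c]
        train = [i for i, k in enumerate(cluster_ids) if k != c]
        yield c, train, test


def max_train_similarity(query_fps, train_fps):
    """Nearest-neighbour Tanimoto of each query molecule to the training set.
    Low values mark compounds outside the model's applicability domain."""
    return [max(tanimoto(q, t) for t in train_fps) for q in query_fps]


folds = list(leave_cluster_out(["scaf_1", "scaf_1", "scaf_2"]))
```

Comparing each fold's error with the random-split error quantifies the scaffold sensitivity. Plotting per-compound error against max_train_similarity shows whether failures concentrate at low similarity.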

Q2: What experimental benchmarks should I use to quantitatively assess data efficiency and OOD robustness in molecular AI?

A: Rely on standardized benchmarks that separate training and test sets by meaningful chemical splits, not randomly. Key benchmarks include:

Table 1: Key Benchmarks for Assessing OOD Generalization

| Benchmark Name | Task Type | OOD Split Strategy | Key Metric | Target for Robust Models |
|---|---|---|---|---|
| MoleculeNet (LSC subsets) | Property Prediction | By Scaffold | RMSE, MAE | Low gap between random & scaffold split performance |
| PDBbind (refined set) | Protein-Ligand Affinity | By protein family | Pearson's R | High R on unseen protein structures |
| DrugOOD | ADMET Prediction | By scaffold, size, or assay | AUROC, AUPRC | >0.8 AUROC on challenging OOD splits |
| TDC ADMET Group | ADMET Prediction | By time (assay year) | AUROC | Consistent performance over time-based splits |

Q3: Can you provide a concrete protocol for improving data efficiency using pretraining on large, unlabeled molecular datasets?

A: Yes. A common and effective strategy is self-supervised pretraining followed by task-specific fine-tuning.

Experimental Protocol: Self-Supervised Pretraining for Molecular Graphs

Objective: Learn transferable molecular representations to boost performance on downstream tasks with limited labeled data.

Materials & Workflow:

Diagram 1: Self-supervised pretraining and fine-tuning workflow.

Methodology:

- Pretext Task: Use a Context Prediction or Masked Node Prediction task.

- Context Prediction: For a given central atom, the model embeds its K-hop neighborhood and must identify the true surrounding context subgraph against negative contexts sampled from other atoms or molecules.

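- Implementation (PyTorch Geometric): PyTorch Geometric supplies the GIN layers (GINConv) and graph batching. The context-subgraph extraction and negative sampling for this pretext task usually have to be written or adapted from published reference implementations. Train for 50-100 epochs on the ZINC-15 or PubChem dataset.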

- Encoder Architecture: Use a standard Graph Isomorphism Network (GIN) as the backbone encoder. Configure with 5 convolutional layers and a hidden dimension of 300.

- Fine-tuning: Take the pretrained GIN encoder, append a 2-layer Multilayer Perceptron (MLP) prediction head. Train on your small, labeled dataset using a low learning rate (e.g., 1e-4) for 20-50 epochs with early stopping.
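The data side of the Masked Node Prediction pretext task can be sketched as follows. The sketch hides a fraction of atom types and records what the encoder must recover. The 15% mask rate and the "MASK" token are assumptions (15% is a common choice), and the GIN encoder and loss are not shown.

```python
import random

MASK = "MASK"  # hypothetical extra token appended to the atom-type vocabulary


def mask_atoms(atom_types, mask_rate=0.15, seed=None):
    """Return (masked_types, masked_positions, targets) for one molecule.

    The pretraining loss is a classification loss of the encoder's
    predictions at `masked_positions` against `targets`.
    """
    rng = random.Random(seed)
    n_mask = max(1, round(mask_rate * len(atom_types)))  # mask at least one atom
    positions = sorted(rng.sample(range(len(atom_types)), n_mask))
    masked = list(atom_types)
    for p in positions:
        masked[p] = MASK
    return masked, positions, [atom_types[p] for p in positions]


atoms = ["C"] * 18 + ["O", "N"]
masked, positions, targets = mask_atoms(atoms, seed=0)
```

A fresh mask is typically drawn each time a molecule is sampled, so the encoder sees different masked positions across epochs.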

Q4: What are the most promising techniques to explicitly enforce better OOD generalization during model training?

A: Beyond pretraining, consider these algorithmic interventions during training:

Table 2: Techniques for Improving OOD Generalization

| Technique | Core Principle | Implementation Suggestion |
|---|---|---|
| Invariant Risk Minimization (IRM) | Learns features whose predictive power is stable across multiple training environments (e.g., different assay batches). | Add the IRMv1 gradient penalty term to the task loss. Define environments by scaffold clusters or assay conditions. |
| Deep Correlation Alignment (Deep CORAL) | Aligns second-order statistics (covariances) of feature distributions from different domains. | Add CORAL loss between feature representations of molecules from different predefined clusters. |
| Mixup (Graph Mixup) | Performs linear interpolations between samples and their labels, encouraging simple linear behavior. | Implement on graph representations (graphon mixup) or fingerprint vectors. Use α=0.2 for the Beta distribution. |
| Chemical-Aware Regularization | Incorporates domain knowledge (e.g., via physics-based fingerprints) to guide the model. | Add an auxiliary loss term forcing the model's latent space to be predictive of known molecular descriptors (e.g., cLogP, TPSA). |
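Two of these techniques can be sketched in plain Python to show the arithmetic. Mixup is shown on fingerprint vectors with λ drawn from Beta(0.2, 0.2). The Deep CORAL loss is the squared Frobenius distance between the feature covariance matrices of two clusters, divided by 4d². In a real model both would operate on tensors inside an autograd framework. The list-based versions here are illustrative assumptions.

```python
import random


def mixup(x1, y1, x2, y2, alpha=0.2, rng=random):
    """Interpolate two (feature vector, label) pairs with lam ~ Beta(alpha, alpha)."""
    lam = rng.betavariate(alpha, alpha)
    x = [lam * a + (1 - lam) * b for a, b in zip(x1, x2)]
    return x, lam * y1 + (1 - lam) * y2, lam


def _covariance(rows):
    """Sample covariance matrix (n - 1 denominator) of a list of feature rows."""
    n, d = len(rows), len(rows[0])
    mu = [sum(r[j] for r in rows) / n for j in range(d)]
    return [[sum((r[i] - mu[i]) * (r[j] - mu[j]) for r in rows) / (n - 1)
             for j in range(d)] for i in range(d)]


def coral_loss(source, target):
    """Deep CORAL: ||C_source - C_target||_F^2 / (4 d^2)."""
    d = len(source[0])
    cs, ct = _covariance(source), _covariance(target)
    return sum((cs[i][j] - ct[i][j]) ** 2 for i in range(d) for j in range(d)) / (4 * d * d)


x, y, lam = mixup([1.0, 0.0], 1.0, [0.0, 1.0], 0.0, rng=random.Random(0))
```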

Protocol for Implementing Invariant Risk Minimization (IRM):

- Partition Training Data into Environments: Split your training data into at least two distinct groups (E1, E2). For molecules, split by:

- Molecular weight ranges.

- Presence/absence of a key functional group.

- Different synthetic routes or data sources.

- Model Definition: Use a feature extractor $\Phi(x)$ (a GNN) and a classifier $w(\Phi(x))$.

- Loss Calculation: Compute the IRMv1 penalty.

- Standard Risk: $R^e(w, \Phi) = \mathcal{L}\big(w(\Phi(X^e)), Y^e\big)$ for each environment $e$.

- Gradient Penalty: $\big\lVert \nabla_{w \mid w = 1.0} R^e(w \cdot \Phi) \big\rVert^2$ measures the invariance of the feature extractor $\Phi$.

- Total Loss: $\sum_e \Big[ R^e(w, \Phi) + \lambda \, \big\lVert \nabla_{w \mid w = 1.0} R^e(w \cdot \Phi) \big\rVert^2 \Big]$, where $\lambda$ is a hyperparameter (start with 1e-3).

Diagram 2: Invariant Risk Minimization (IRM) training logic.
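The IRMv1 objective above can be checked numerically in a simplified setting. The sketch assumes a scalar-output model and squared-error loss. With a dummy scalar classifier w fixed at 1.0, $R^e(w) = \operatorname{mean}((w\,\phi - y)^2)$. Its derivative at w = 1 is therefore $\operatorname{mean}(2(\phi - y)\,\phi)$, which can be written in closed form. The function name and input layout are hypothetical.

```python
def irm_v1_loss(envs, lam=1e-3):
    """IRMv1 objective for scalar outputs and squared-error loss.

    envs: list of (phi, y) per environment, where phi are the feature
    extractor's scalar outputs and y the targets.
    """
    total = 0.0
    for phi, y in envs:
        n = len(phi)
        risk = sum((p - t) ** 2 for p, t in zip(phi, y)) / n             # R^e at w = 1
        grad_w = sum(2.0 * (p - t) * p for p, t in zip(phi, y)) / n      # dR^e/dw at w = 1
        total += risk + lam * grad_w ** 2                                # risk + λ·penalty
    return total


# Two hypothetical environments, e.g. two scaffold clusters.
envs = [([1.0, 2.0], [0.0, 0.0]), ([0.5, 0.5], [0.5, 0.5])]
```

In a PyTorch model, the same gradient is usually obtained with autograd instead of a closed form. Compute the risk with a dummy tensor w = torch.tensor(1.0, requires_grad=True), then call torch.autograd.grad(risk, [w], create_graph=True) so the penalty itself can be backpropagated.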

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Tools for Molecular Representation Research

| Item / Solution | Provider / Example | Primary Function in Experiments |
|---|---|---|
| Curated Benchmark Suites | TDC (Therapeutics Data Commons), MoleculeNet, DrugOOD | Provides standardized datasets with meaningful train/test splits to evaluate OOD generalization fairly. |
| Deep Learning Frameworks | PyTorch, PyTorch Geometric, Deep Graph Library (DGL) | Enables building and training graph neural networks and implementing custom loss functions (e.g., IRM). |
| Molecular Featurization Libraries | RDKit, Mordred, DeepChem Featurizers | Generates traditional molecular descriptors (2D/3D) and fingerprints for baseline models or hybrid approaches. |
| Equivariant GNN Architectures | SE(3)-Transformers, EGNN, SphereNet | Models that respect rotational and translational symmetry, crucial for 3D molecular property prediction. |
| Explainability & Attribution Tools | Captum, ChemCPA, SHAP for Graphs | Interprets model predictions to diagnose failure modes and validate learned chemical logic. |
| Large-Scale Pretraining Corpora | ZINC-15, PubChemQC, GEOM-Drugs | Provides millions of unlabeled molecules for self-supervised pretraining to improve data efficiency. |
| OOD Algorithm Implementations | IRM (PyTorch), DomainBed, Deep CORAL | Code libraries for implementing state-of-the-art generalization algorithms. |
| Conformational Ensemble Generators | OMEGA (OpenEye), CREST (GFN-FF), RDKit ETKDG | Generates multiple 3D conformers to train or test model robustness to molecular flexibility. |

Technical Support & Troubleshooting Center

FAQ 1: My model performs well on the training split but fails to generalize to novel scaffold test sets. What steps should I take?

- Answer: This is a classic sign of representation-induced bias, where the molecular fingerprint or descriptor fails to capture generalizable chemical principles. Recommended troubleshooting steps:

- Audit Your Training Data: Calculate and compare the distribution of key molecular properties (e.g., molecular weight, logP, topological polar surface area) between your training and test sets using the RDKit library. A significant mismatch indicates a data split issue.

- Benchmark Multiple Representations: Train identical model architectures using different representations (e.g., ECFP4, RDKit fingerprints, Mordred descriptors, and a simple graph neural network) on the same data. Use the performance gap on the scaffold-split test set as a quantitative measure of representation robustness.

- Implement a Hybrid Representation: As a mitigation strategy, concatenate a learned representation (from a GNN) with a hand-crafted, physics-informed descriptor (like Mordred) to balance specificity and generalizability.
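The "Audit Your Training Data" step can be made quantitative with a two-sample Kolmogorov-Smirnov statistic per property. This is the largest gap between the empirical cumulative distributions of the training and test values. The sketch assumes property values were already computed, for example with RDKit's Descriptors.MolWt, Descriptors.MolLogP and Descriptors.TPSA. The function names are hypothetical.

```python
from bisect import bisect_right


def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov statistic: max gap between empirical CDFs."""
    a, b = sorted(a), sorted(b)
    return max(abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
               for x in a + b)


def audit_split(train_props, test_props):
    """Per-property KS statistic; inputs map property name -> list of values."""
    return {name: ks_statistic(train_props[name], test_props[name]) for name in train_props}
```

Values near 0 mean similar distributions and values near 1 mean almost no overlap. scipy.stats.ks_2samp returns the same statistic together with a p-value if a significance test is needed.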

FAQ 2: How can I quantify the "gap" or error introduced specifically by the choice of molecular representation?

- Answer: Employ a controlled benchmarking protocol. The core idea is to isolate representation as the sole variable.

- Experimental Protocol:

- Select a fixed dataset (e.g., ESOL for solubility) and a fixed, simple model architecture (e.g., a Ridge Regression or a shallow Multilayer Perceptron).

- Train and evaluate this model using N different molecular representations (e.g., ECFP4, MACCS, Morgan, GraphConv vector).

- Ensure all other factors (data split, hyperparameters, random seeds) are identical.

- The variance in model performance (e.g., RMSE, R²) across representations on the same test set is a direct quantitative measure of representation-induced variance. A large variance indicates high sensitivity to representation choice.
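A minimal sketch of this protocol, assuming closed-form ridge regression as the fixed model and one shared split. `feature_sets` stands in for precomputed representation matrices (ECFP4, MACCS, and so on); the synthetic data are for illustration only. The spread of test RMSE across representations is the quantity the protocol asks for.

```python
import numpy as np


def ridge_fit_predict(X_train, y_train, X_test, alpha=1.0):
    """Closed-form ridge regression with an unpenalized intercept."""
    x_mean, y_mean = X_train.mean(axis=0), y_train.mean()
    Xc = X_train - x_mean
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(Xc.shape[1]), Xc.T @ (y_train - y_mean))
    return (X_test - x_mean) @ w + y_mean


def representation_variance(feature_sets, y, train_idx, test_idx, alpha=1.0):
    """Same model, same split, one fit per representation: per-representation RMSE, mean and std."""
    rmse = {}
    for name, X in feature_sets.items():
        pred = ridge_fit_predict(X[train_idx], y[train_idx], X[test_idx], alpha)
        rmse[name] = float(np.sqrt(np.mean((pred - y[test_idx]) ** 2)))
    values = np.array(list(rmse.values()))
    return rmse, float(values.mean()), float(values.std())


rng = np.random.default_rng(42)
n = 300
signal = rng.normal(size=(n, 5))
y = signal @ np.array([1.0, -0.5, 0.3, 0.0, 0.8]) + 0.1 * rng.normal(size=n)
feature_sets = {  # synthetic stand-ins for, e.g., ECFP4 and MACCS matrices
    "informative": np.hstack([signal, rng.normal(size=(n, 20))]),
    "uninformative": rng.normal(size=(n, 25)),
}
perm = rng.permutation(n)  # one fixed split shared by every representation
train_idx, test_idx = perm[:240], perm[240:]
rmse, mean_rmse, std_rmse = representation_variance(feature_sets, y, train_idx, test_idx)
```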


FAQ 3: My activity prediction model shows high error for compounds containing specific functional groups (e.g., sulfonamides, boronates) not prevalent in the training data. Is this a representation problem?

- Answer: Often, yes. This is a "representation coverage gap": standard fingerprints may not encode these groups in a way the model can exploit, especially when they are rare in the training data. Solution:

- Identify the Gap: Use a model interpretation tool (e.g., SHAP) on your erroneous predictions to see which fingerprint bits or subgraphs are most influential. Correlate these with the problematic functional groups.

- Augment the Representation: Explicitly add substructure keys or count-based features for the under-represented functional groups to the input vector.

- Adversarial Validation: Train a classifier to distinguish your training set from a broad external set (e.g., ChEMBL). If it succeeds (ROC AUC well above 0.5), your training set does not cover the external chemical space as seen through this representation. Use the classifier's feature importance to identify the missing chemical features.
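The adversarial-validation step can be sketched with plain NumPy, assuming both sets are already featurized with the same fingerprint. The logistic-regression classifier, its training schedule and the 50/50 fit/score split are illustrative choices; scikit-learn's `LogisticRegression` and `roc_auc_score` would be the usual off-the-shelf replacements. In the toy data, bit 3 plays the role of a functional-group key that never occurs in the training set.

```python
import numpy as np


def auc_score(labels, scores):
    """ROC AUC via the Mann-Whitney statistic (ties count as one half)."""
    labels = np.asarray(labels, dtype=bool)
    pos, neg = scores[labels], scores[~labels]
    diff = pos[:, None] - neg[None, :]
    return float(((diff > 0).sum() + 0.5 * (diff == 0).sum()) / (pos.size * neg.size))


def adversarial_validation(X_train, X_external, lr=0.1, epochs=500, seed=0):
    """Fit a 'which set is this molecule from?' logistic regression on half the data, score the other half."""
    X = np.vstack([X_train, X_external]).astype(float)
    y = np.concatenate([np.zeros(len(X_train)), np.ones(len(X_external))])
    perm = np.random.default_rng(seed).permutation(len(X))
    fit, held = perm[: len(X) // 2], perm[len(X) // 2:]
    Z = (X - X[fit].mean(axis=0)) / (X[fit].std(axis=0) + 1e-8)
    w, b = np.zeros(X.shape[1]), 0.0
    for _ in range(epochs):  # plain batch gradient descent on the log-loss
        p = 1.0 / (1.0 + np.exp(-(Z[fit] @ w + b)))
        w -= lr * Z[fit].T @ (p - y[fit]) / len(fit)
        b -= lr * float(np.mean(p - y[fit]))
    return auc_score(y[held], Z[held] @ w + b), w  # |w| ranks the separating features


rng = np.random.default_rng(1)
X_tr = rng.integers(0, 2, size=(400, 8)).astype(float)
X_tr[:, 3] = 0.0  # e.g., a functional-group key that never occurs in the training set
X_ext = rng.integers(0, 2, size=(400, 8)).astype(float)
auc, weights = adversarial_validation(X_tr, X_ext)
top_feature = int(np.argmax(np.abs(weights)))
```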

FAQ 4: When using graph neural networks, how do I know if the message-passing is effectively capturing the relevant molecular topology?

- Answer: Performance saturation or degradation with increasing GNN depth can signal a failure to capture long-range interactions or over-smoothing.

- Diagnostic Protocol:

- Ablation Study: Systematically remove or mask different types of bond or atom features (e.g., aromaticity, hybridization) and observe the performance delta. A large drop indicates the model relies heavily on that feature.

- Probe Tasks: Create simple synthetic tasks that require understanding specific topologies (e.g., counting cycles, identifying functional group distance). If your trained GNN fails, its representation is topology-deficient.

- Visualize Node Embeddings: Use UMAP/t-SNE to project learned atom embeddings from the final GNN layer. Atoms in similar chemical environments (e.g., carbonyl oxygens) should cluster, regardless of the overall molecule.
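Over-smoothing can also be checked numerically rather than only visually, by tracking how similar the node embeddings become as depth grows. The sketch below uses a parameter-free mean aggregator on a toy six-membered ring as a stand-in for real GNN layers (an assumption made to keep it self-contained); with a trained model you would compute the same average pairwise cosine similarity on the embeddings after each layer. Values approaching 1 mean the atoms have become indistinguishable.

```python
import numpy as np


def mean_aggregate(adj, h):
    """One parameter-free message-passing round: each atom averages itself and its neighbors."""
    a_hat = adj + np.eye(adj.shape[0])
    return (a_hat / a_hat.sum(axis=1, keepdims=True)) @ h


def mean_cosine_similarity(h):
    """Average pairwise cosine similarity between node embeddings, excluding self-pairs."""
    unit = h / (np.linalg.norm(h, axis=1, keepdims=True) + 1e-12)
    sim = unit @ unit.T
    n = h.shape[0]
    return float((sim.sum() - np.trace(sim)) / (n * (n - 1)))


n_atoms = 6  # toy six-membered ring
adj = np.zeros((n_atoms, n_atoms))
for i in range(n_atoms):
    adj[i, (i + 1) % n_atoms] = adj[(i + 1) % n_atoms, i] = 1.0

h = np.random.default_rng(0).normal(size=(n_atoms, 16))  # random initial atom features
similarity_by_depth = []
for depth in range(11):
    similarity_by_depth.append(mean_cosine_similarity(h))
    h = mean_aggregate(adj, h)
```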


Table 1: Performance Variance Across Representations on ESOL Solubility Dataset (RMSE ± Std Dev)

| Model Architecture | ECFP4 (1024 bits) | RDKit Fingerprint | Mordred Descriptors (1D/2D) | Graph Isomorphism Network (GIN) |
|---|---|---|---|---|
| Ridge Regression | 1.05 ± 0.08 | 1.12 ± 0.10 | 0.98 ± 0.05 | N/A |
| Random Forest | 0.90 ± 0.12 | 0.95 ± 0.15 | 0.85 ± 0.07 | N/A |
| Multilayer Perceptron | 0.88 ± 0.15 | 0.93 ± 0.18 | 0.82 ± 0.09 | 0.79 ± 0.11 |

Table 2: Representation-Induced Generalization Gap on Scaffold-Split BACE Dataset

| Representation | Train Set AUC | Scaffold Test Set AUC | Generalization Gap (ΔAUC) |
|---|---|---|---|
| ECFP6 | 0.97 | 0.71 | 0.26 |
| Molecular Graph (AttentiveFP) | 0.95 | 0.76 | 0.19 |
| 3D Conformer (GeoGNN) | 0.91 | 0.80 | 0.11 |
| Hybrid (ECFP6 + Graph) | 0.96 | 0.78 | 0.18 |

Experimental Protocols

Protocol 1: Benchmarking Representation-Induced Variance

- Data Curation: Obtain a cleaned dataset (e.g., from MoleculeNet). Apply a consistent 70/15/15 random split. Store the split indices.

- Representation Generation: For each compound in the dataset, generate the following representations using RDKit and DeepChem:

- ECFP4 (1024 bits, radius 2)

- MACCS Keys (166 bits)

- Mordred descriptors (1D & 2D, ~1800 descriptors). Apply standard scaling.

- Pre-computed GraphConv features (if applicable).

- Model Training: Instantiate a simple MLP (2 layers, 256 units each, ReLU). Train one model per representation using the identical training split, optimizer (Adam), learning rate (1e-3), and batch size (32) for 100 epochs.

- Evaluation & Analysis: Calculate the target metric (e.g., RMSE, AUC) on the held-out test set for each model. Compute the mean and standard deviation of the metric across the different representations.
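For the data-curation step, storing the split indices is what guarantees that every representation is evaluated on exactly the same molecules. A minimal sketch, assuming a seeded NumPy permutation and JSON serialization (the dataset size of 1,000 is a placeholder):

```python
import json

import numpy as np


def make_split(n_molecules, fractions=(0.70, 0.15, 0.15), seed=0):
    """Seeded random train/val/test split returned as sorted index lists."""
    if not np.isclose(sum(fractions), 1.0):
        raise ValueError("fractions must sum to 1")
    perm = np.random.default_rng(seed).permutation(n_molecules)
    n_train = int(round(fractions[0] * n_molecules))
    n_val = int(round(fractions[1] * n_molecules))
    return {
        "train": sorted(perm[:n_train].tolist()),
        "val": sorted(perm[n_train:n_train + n_val].tolist()),
        "test": sorted(perm[n_train + n_val:].tolist()),
    }


split = make_split(1000, seed=0)
serialized = json.dumps(split)  # write this string to disk once; reload it for every representation
restored = json.loads(serialized)
```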

Protocol 2: Diagnosing Functional Group Coverage Gaps

- Error Analysis: Isolate test set compounds whose absolute model error is in the top 20% (i.e., at or above the 80th percentile).

- Substructure Identification: Use RDKit's `HasSubstructMatch` method to screen these high-error compounds against a predefined list of SMARTS patterns for under-represented functional groups.

- Quantitative Gap Metric: Calculate the Representation Coverage Deficiency (RCD):

$$\mathrm{RCD} = \frac{\mathrm{Error}_{\mathrm{FG}} - \mathrm{Error}_{\mathrm{NonFG}}}{\mathrm{Error}_{\mathrm{NonFG}}}$$

where $\mathrm{Error}_{\mathrm{FG}}$ is the mean error for compounds containing the flagged functional group and $\mathrm{Error}_{\mathrm{NonFG}}$ is the mean error for all other test compounds. An RCD > 0.3 indicates a significant coverage gap.
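The error-analysis step and the RCD formula translate directly into NumPy. This sketch assumes an array of absolute errors per test compound and a boolean flag per compound marking whether the functional group is present (in practice produced by the substructure screen); the numbers are invented for illustration.

```python
import numpy as np


def high_error_mask(abs_errors, top_fraction=0.20):
    """Flag compounds whose absolute error is in the top `top_fraction` of the test set."""
    threshold = np.quantile(abs_errors, 1.0 - top_fraction)
    return abs_errors >= threshold


def representation_coverage_deficiency(abs_errors, has_group):
    """RCD = (Error_FG - Error_NonFG) / Error_NonFG, using mean absolute errors."""
    abs_errors = np.asarray(abs_errors, dtype=float)
    has_group = np.asarray(has_group, dtype=bool)
    error_fg = abs_errors[has_group].mean()
    error_non_fg = abs_errors[~has_group].mean()
    return float((error_fg - error_non_fg) / error_non_fg)


abs_errors = np.array([0.20, 0.30, 0.25, 0.90, 1.10, 0.35, 0.20, 0.80])
has_sulfonamide = np.array([False, False, False, True, True, False, False, True])  # from the SMARTS screen
flagged = high_error_mask(abs_errors)
rcd = representation_coverage_deficiency(abs_errors, has_sulfonamide)
```

Here the flagged group roughly triples the mean error, giving an RCD of about 2.6, far above the 0.3 threshold.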

Diagrams

Diagram 1: Molecular Representation Benchmarking Workflow

Diagram 2: Representation Coverage Gap Analysis Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Rationale |
|---|---|
| RDKit | Open-source cheminformatics toolkit. Core functionality for generating 2D fingerprints (Morgan/ECFP, RDKit), molecular descriptors, substructure searching (SMARTS), and handling molecule I/O. |
| DeepChem | Deep learning library for chemistry. Provides high-level APIs for creating and benchmarking models on molecular datasets, with built-in support for graph representations and MoleculeNet datasets. |
| Mordred | Molecular descriptor calculator. Generates ~1800 1D, 2D, and 3D descriptors per molecule, useful for building physics-informed, non-learned representations. |
| SHAP (SHapley Additive exPlanations) | Game theory-based model interpretation library. Crucial for identifying which features (fingerprint bits, atom contributions) a model relies on, linking errors to specific chemical features. |
| UMAP | Dimensionality reduction technique. Used to visualize and assess the clustering quality of learned atom or molecule embeddings from complex models like GNNs. |
| scikit-learn | Foundational machine learning library. Used for implementing simple baseline models (Ridge, RF), standardized data splitting, and preprocessing (StandardScaler for descriptors). |

Breaking the Bottleneck: Advanced Techniques for Molecular AI Representation

Technical Support & Troubleshooting Center

Frequently Asked Questions (FAQs)

Q1: My GNN model for molecular property prediction fails to generalize from small molecules to larger proteins or complexes. What could be the cause? A1: This is a common limitation and a direct consequence of the molecular representation limitations discussed in this article. The issue often stems from inadequate handling of changes in system size and of long-range interactions in native 3D structures. Ensure your model uses geometric features (e.g., torsion angles, relative orientations) that are invariant to global translation and rotation, and consider a multi-scale architecture or higher-order message passing to capture interactions across varying spatial distances.

Q2: During training, the loss for my 3D GNN converges, but the predicted molecular forces or energies are physically implausible. How do I debug this? A2: This typically indicates a violation of physical constraints. First, verify that your model output is invariant to rotations and translations of the input point cloud (SE(3)-invariant). Second, ensure energy predictions are differentiable with respect to atomic coordinates to yield conservative forces. Implement gradient checks. Third, incorporate physical priors directly into the loss function, such as penalty terms for unrealistic bond lengths or angles.
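The gradient check in the second step can be done by comparing the model's forces with a central finite-difference derivative of its energy. In a PyTorch model the forces would typically come from `torch.autograd.grad` applied to the energy with respect to the positions; to keep the sketch self-contained, a toy harmonic pair energy with hand-derived forces stands in for the network.

```python
import numpy as np


def pair_energy(pos, r0=1.5, k=1.0):
    """Toy rotation/translation-invariant energy: harmonic springs on all pairwise distances."""
    dist = np.linalg.norm(pos[:, None, :] - pos[None, :, :], axis=-1)
    iu = np.triu_indices(len(pos), k=1)
    return 0.5 * k * np.sum((dist[iu] - r0) ** 2)


def pair_forces(pos, r0=1.5, k=1.0):
    """Analytic conservative forces F = -dE/dx for pair_energy."""
    diff = pos[:, None, :] - pos[None, :, :]        # diff[i, j] = x_i - x_j
    dist = np.linalg.norm(diff, axis=-1)
    np.fill_diagonal(dist, 1.0)                     # avoid 0/0; diagonal terms are zeroed next
    coef = k * (dist - r0) / dist
    np.fill_diagonal(coef, 0.0)
    return -np.sum(coef[:, :, None] * diff, axis=1)


def finite_difference_forces(energy_fn, pos, eps=1e-5):
    """Central-difference estimate of -dE/dx: the reference for the gradient check."""
    forces = np.zeros_like(pos)
    for idx in np.ndindex(pos.shape):
        plus, minus = pos.copy(), pos.copy()
        plus[idx] += eps
        minus[idx] -= eps
        forces[idx] = -(energy_fn(plus) - energy_fn(minus)) / (2 * eps)
    return forces


pos = np.random.default_rng(0).normal(scale=1.5, size=(5, 3))
analytic = pair_forces(pos)
max_err = float(np.max(np.abs(analytic - finite_difference_forces(pair_energy, pos))))
net_force = analytic.sum(axis=0)  # forces from a translation-invariant energy sum to zero
```

A large `max_err` means the forces are not the gradient of the energy; a nonzero `net_force` points to a translation-invariance bug.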

Q3: What is the most efficient way to represent sparse 3D molecular graphs for training without running into memory issues? A3: For native 3D structures, use a k-nearest neighbors or radial cutoff to create sparse adjacency lists. Employ vectorized operations for message aggregation. Utilize PyTorch Geometric or Deep Graph Library (DGL) with their built-in sparse graph operations. For very large structures, consider hierarchical sampling or subgraph batching strategies.
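A radial-cutoff neighbor list can be sketched as follows. The brute-force distance matrix used here is O(N²) in memory and only suitable for small molecules, an intentional simplification; for large structures a KD-tree (e.g., `scipy.spatial.cKDTree.query_pairs`) or the radius-graph utilities in PyTorch Geometric scale better. The output follows the (2, E) `edge_index` layout that PyG uses.

```python
import numpy as np


def radius_graph(pos, cutoff=5.0):
    """Directed edges (2, E) between distinct atoms closer than `cutoff`, plus their distances.

    Brute force: builds the full N x N distance matrix, so only for small systems.
    """
    dist = np.linalg.norm(pos[:, None, :] - pos[None, :, :], axis=-1)
    src, dst = np.nonzero((dist < cutoff) & ~np.eye(len(pos), dtype=bool))
    return np.stack([src, dst]), dist[src, dst]


pos = np.array([[0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [7.0, 0.0, 0.0]])  # third atom is beyond the cutoff
edge_index, edge_dist = radius_graph(pos, cutoff=5.0)
```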

Q4: How can I incorporate chiral information or other stereochemical properties into a GNN that processes 3D coordinates? A4: Native 3D coordinates inherently contain chiral information. However, your model must use features that can distinguish enantiomers, such as signed dihedral angles or local volume descriptors. Avoid using only interatomic distances, as they are achiral. Incorporate directed angle features or learnable geometric features that are sensitive to mirror symmetry.
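The sketch below computes a signed dihedral angle with NumPy and confirms the two properties stated above on random coordinates: mirroring the geometry flips the sign of the dihedral, while every interatomic distance stays the same. Which four atoms to use (e.g., around a stereocenter) is left to the featurization code and is not shown.

```python
import numpy as np


def signed_dihedral(p0, p1, p2, p3):
    """Signed torsion angle in degrees about the p1-p2 bond."""
    b0, b1, b2 = p0 - p1, p2 - p1, p3 - p2
    axis = b1 / np.linalg.norm(b1)
    v = b0 - np.dot(b0, axis) * axis  # components perpendicular to the bond axis
    w = b2 - np.dot(b2, axis) * axis
    x = np.dot(v, w)
    y = np.dot(np.cross(axis, v), w)
    return float(np.degrees(np.arctan2(y, x)))


rng = np.random.default_rng(0)
coords = rng.normal(size=(4, 3))
mirror = coords * np.array([-1.0, 1.0, 1.0])  # reflection through the yz-plane (the enantiomeric geometry)
phi, phi_mirror = signed_dihedral(*coords), signed_dihedral(*mirror)
dist = np.linalg.norm(coords[:, None] - coords[None], axis=-1)
dist_mirror = np.linalg.norm(mirror[:, None] - mirror[None], axis=-1)
```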

Troubleshooting Guides

Issue: Model Performance Degrades with Increased Graph Depth

- Symptoms: Vanishing gradients, over-smoothing of node features, and decreased accuracy after 4-5 message-passing layers.

- Diagnosis: This is the over-smoothing problem, exacerbated in 3D graphs where topological and geometric neighborhoods may not align.

- Resolution:

- Implement residual/skip connections between GNN layers.

- Use attention-based or gated message passing to weight the influence of neighbors.

- Consider hybrid models that combine shallow GNNs with a post-processing Feed-Forward Network (FFN).

- Apply normalization techniques designed for deep GNNs, such as PairNorm or MessageNorm.
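As an illustration of the PairNorm option, here is a NumPy sketch of its basic form (center node features across the graph, then rescale so the mean squared row norm equals `scale**2`), applied after each round of a toy mean-aggregation layer on an eight-membered ring. The toy layer is an assumption standing in for real message passing; PyG provides a ready-made `PairNorm` layer for production use. Without normalization the embeddings collapse toward each other; with it, the average squared pairwise distance stays fixed at `2 * scale**2`.

```python
import numpy as np


def pair_norm(h, scale=1.0, eps=1e-6):
    """PairNorm: center features over nodes, then rescale so mean_i ||h_i||^2 == scale**2."""
    centered = h - h.mean(axis=0, keepdims=True)
    rms = np.sqrt(np.mean(np.sum(centered ** 2, axis=1)) + eps)
    return scale * centered / rms


def mean_sq_pairwise_distance(h):
    """Average of ||h_i - h_j||^2 over all ordered node pairs (including i == j)."""
    diff = h[:, None, :] - h[None, :, :]
    return float(np.mean(np.sum(diff ** 2, axis=-1)))


n = 8
adj = np.zeros((n, n))
for i in range(n):
    adj[i, (i + 1) % n] = adj[(i + 1) % n, i] = 1.0
a_hat = adj + np.eye(n)
propagate = a_hat / a_hat.sum(axis=1, keepdims=True)  # toy mean aggregation over self + neighbors

h0 = np.random.default_rng(0).normal(size=(n, 4))
h_plain, h_norm = h0, h0
for _ in range(10):
    h_plain = propagate @ h_plain
    h_norm = pair_norm(propagate @ h_norm)
```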

Issue: Inconsistent Results on Rotated or Translated Molecular Conformations

- Symptoms: Model predictions change when the same 3D structure is input with different global orientations or positions.

- Diagnosis: The model lacks rotation and translation invariance, a critical requirement for learning from native 3D structures.

- Resolution:

- Preprocessing: Centroid-subtract and optionally align all input structures.

- Model-Level: Use only invariant geometric features (e.g., distances, angles) as edge or node attributes.

- Architecture: Employ an SE(3)-invariant or SE(3)-equivariant GNN architecture like SE(3)-Transformers, Tensor Field Networks, or EGNNs.
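The preprocessing option can be sketched with NumPy: centroid subtraction removes translation, and the Kabsch algorithm (SVD-based optimal superposition) aligns a structure onto a reference. Note the assumption that both structures list the same atoms in the same order, which makes alignment practical mainly for conformers of one molecule; for arbitrary molecules the model-level and architecture options are the more general fix.

```python
import numpy as np


def center(pos):
    """Subtract the centroid so coordinates no longer depend on global position."""
    return pos - pos.mean(axis=0, keepdims=True)


def kabsch_align(mobile, reference):
    """Optimally rotate centered `mobile` onto centered `reference` (same atom order, proper rotation only)."""
    p, q = center(mobile), center(reference)
    u, _, vt = np.linalg.svd(p.T @ q)
    d = np.sign(np.linalg.det(vt.T @ u.T))  # -1 would mean a reflection; corrected below
    rotation = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    return p @ rotation.T


def random_rotation(rng):
    """Random proper rotation from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))
    if np.linalg.det(q) < 0:
        q[:, 0] = -q[:, 0]
    return q


rng = np.random.default_rng(0)
reference = rng.normal(size=(10, 3))
mobile = reference @ random_rotation(rng).T + np.array([5.0, -3.0, 2.0])  # same conformer, moved
aligned = kabsch_align(mobile, reference)
```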

Experimental Protocols

Protocol 1: Benchmarking GNNs on Quantum Mechanical Datasets (e.g., QM9) Objective: Evaluate a GNN's ability to predict molecular properties (e.g., HOMO-LUMO gap, dipole moment) from 3D geometry.

- Data Preparation: Download the QM9 dataset. Split into training/validation/test sets (80/10/10) using a scaffold split to assess generalization.

- Graph Construction: For each molecule, define nodes as atoms. Create edges between all atom pairs within a cutoff radius (e.g., 5 Å) or using k-nearest neighbors.

- Node/Edge Features: Node features: atomic number, hybridization. Edge features: Euclidean distance, optionally encoded via a radial basis function (RBF).

- Model Training: Train an equivariant GNN (e.g., built with the `e3nn` library) using a Mean Squared Error (MSE) loss. Use the Adam optimizer with an initial learning rate of 1e-3 and a learning rate scheduler.

- Evaluation: Report the Mean Absolute Error (MAE) on the test set for the target property.
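The RBF edge encoding from the node/edge-feature step can be sketched as a Gaussian expansion: each distance becomes a vector of Gaussian responses centered on a grid from 0 to the cutoff, similar in spirit to SchNet's distance expansion. The number of basis functions and the width rule (tied to the grid spacing) are illustrative assumptions.

```python
import numpy as np


def gaussian_rbf(distances, cutoff=5.0, n_basis=16, gamma=None):
    """Expand distances into n_basis Gaussians whose centers are evenly spaced on [0, cutoff]."""
    centers = np.linspace(0.0, cutoff, n_basis)
    if gamma is None:
        gamma = 1.0 / (centers[1] - centers[0]) ** 2  # width tied to the grid spacing
    d = np.asarray(distances, dtype=float)[..., None]  # (..., 1) broadcasts against (n_basis,)
    return np.exp(-gamma * (d - centers) ** 2)


edge_features = gaussian_rbf(np.array([0.0, 2.0, 4.9]))  # one row of 16 values per edge
```

Each row peaks at the basis function nearest to that edge's distance, giving the network a smooth, fixed-width input instead of a single raw scalar.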

Protocol 2: Training a GNN for Protein-Ligand Binding Affinity Prediction Objective: Predict binding score (pKi/pIC50) from the 3D structure of a protein-ligand complex.

- Data Source: Use the PDBbind database (refined set).

- Graph Representation: Construct a heterogeneous graph. Nodes: protein residues (Cα atoms) and ligand atoms. Edges: Intra-protein (sequence distance < 5), intra-ligand (bonds), and inter-molecular (atoms < 6 Å apart).

- Feature Engineering: Atom-level features: element type, partial charge, etc. Residue-level features: amino acid type, secondary structure.

- Model Architecture: Implement a dual-stream GNN (one for protein, one for ligand) with a subsequent interaction network, or a single heterogeneous GNN.

- Training & Validation: Train with k-fold cross-validation grouped by protein family, so that no family appears in both the training and validation folds; this avoids data leakage between related targets. Use a Huber loss function.
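A minimal grouped cross-validation sketch, assuming each complex already carries a protein-family label (how families are assigned, e.g., by sequence clustering, is outside its scope). Whole families are assigned to folds greedily, largest first, so no family is ever split between training and validation; scikit-learn's `GroupKFold` offers a comparable off-the-shelf splitter.

```python
from collections import defaultdict


def group_kfold(groups, n_splits=5):
    """Return [(train_idx, val_idx), ...] where each group (e.g., protein family) sits in exactly one fold."""
    members = defaultdict(list)
    for idx, group in enumerate(groups):
        members[group].append(idx)
    fold_of_group, fold_sizes = {}, [0] * n_splits
    # Largest families first, each into the currently smallest fold, to balance fold sizes.
    for group in sorted(members, key=lambda g: (-len(members[g]), str(g))):
        target = fold_sizes.index(min(fold_sizes))
        fold_of_group[group] = target
        fold_sizes[target] += len(members[group])
    splits = []
    for k in range(n_splits):
        val = sorted(i for g, f in fold_of_group.items() if f == k for i in members[g])
        train = sorted(i for g, f in fold_of_group.items() if f != k for i in members[g])
        splits.append((train, val))
    return splits


families = ["kinase"] * 4 + ["protease"] * 3 + ["GPCR"] * 2 + ["nuclear_receptor"]  # hypothetical labels
splits = group_kfold(families, n_splits=3)
```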

Table 1: Performance Comparison of GNN Architectures on 3D Molecular Datasets

| Model Architecture | QM9 μ MAE (D) | OC20 IS2RE MAE (eV) | PDBbind RMSE (pK) | Key Invariance Property |
|---|---|---|---|---|
| SchNet | 0.033 | 0.580 | 1.40 | Translation, Rotation |
| DimeNet++ | 0.028 | 0.420 | 1.32 | Rotation |
| SphereNet | 0.026 | N/A | N/A | Rotation |
| SE(3)-Transformer | 0.031 | 0.350 | N/A | Full SE(3) |
| EGNN | 0.025 | 0.390 | 1.28 | Full E(3) |
| GemNet | 0.027 | 0.350 | N/A | Rotation |

Data aggregated from respective model papers (2021-2023). Lower values are better. N/A indicates results not widely reported on this benchmark.

Table 2: Common Failure Modes and Diagnostic Metrics

| Symptom | Likely Cause | Diagnostic Check | Corrective Action |
|---|---|---|---|
| High training loss | Ineffective optimization, poor feature scaling | Plot loss curve, check gradient norms | Adjust learning rate, normalize input features |
| Large train-test gap | Overfitting to training set | Compare train vs. validation MAE | Increase regularization (Dropout, Weight Decay), use early stopping |
| Poor performance on rotated inputs | Lack of rotational invariance | Test model on randomly rotated copies of validation data | Switch to an invariant/equivariant architecture |
| Memory overflow | Dense graph representation | Monitor GPU memory usage during batch loading | Implement sparse graphs, reduce batch size, use neighbor sampling |

Visualizations

Title: Workflow for 3D Molecular GNN Prediction

Title: GNN for 3D Structures: Troubleshooting Guide

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in GNN for 3D Structure |
|---|---|
| PyTorch Geometric (PyG) | A library for deep learning on irregular graphs. Provides fast, batched operations for message passing, crucial for handling 3D molecular graphs. |
| Deep Graph Library (DGL) | Another high-performance graph neural network library with strong support for heterogeneous graphs (e.g., protein-ligand complexes). |
| e3nn Library | A specialized library for building E(3)-equivariant neural networks, which are fundamental for correct processing of 3D geometric data. |
| RDKit | A cheminformatics toolkit used for parsing molecular file formats, generating 2D/3D coordinates, and calculating molecular descriptors for feature engineering. |
| MDTraj | A library for analyzing molecular dynamics trajectories. Useful for loading and preprocessing large sets of 3D conformations from simulations. |
| Radial Basis Function (RBF) Encoding | A method to encode continuous edge features (like interatomic distance) into a fixed-dimensional vector, improving model sensitivity. |
| Cutoff / Neighbor Search Algorithms (e.g., KD-Tree) | Essential for efficiently constructing the sparse graph from a 3D point cloud based on a distance cutoff, scaling to large systems. |
| SE(3)-Transformer / EGNN Implementation | Pre-built models that guarantee the necessary geometric invariances, providing a strong baseline and reducing implementation error. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My SE(3)-equivariant model fails to converge when trained on small molecular datasets (< 10k samples). What could be the issue? A: This is a common issue related to limited data for a high-parameter model. Implement the following protocol:

- Apply Stochastic Frame Averaging: For each training batch, randomly sample a set of reference frames (rotations/translations) and average the model's predictions across them. This acts as a data augmentation regularizer.

- Use Pre-trained Equivariant Features: Leverage a model pre-trained on a large corpus like QM9 or GEOM-Drugs. Freeze the initial layers and fine-tune only the final prediction head.

- Hyperparameter Adjustment: Increase weight decay (range 1e-4 to 1e-3) and reduce initial learning rate by a factor of 5-10.

Q2: During inference, my model's predictions are not invariant to input rotation, despite using an SE(3)-invariant architecture. A: This indicates a likely implementation error in the invariant output head.

- Diagnostic Test: Create a script that rotates a single test molecule through 8 random SO(3) rotations and passes each through the model. Record the variance of the scalar output (e.g., energy). A correct model will have near-zero variance.

- Solution: Ensure the final layer(s) producing the prediction are strictly invariant. This typically involves:

- Taking norms of irreducible representations (irreps).

- Performing a tensor product to a 0-order irrep (scalar).

- Using only `l=0` (scalar) features in the final multilayer perceptron (MLP).
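The diagnostic test above can be scripted as below. Both models are hypothetical stand-ins: `invariant_model` uses only interatomic distances (as a correct `l=0` head would), while `broken_model` leaks raw Cartesian coordinates, the kind of bug the test is meant to catch. A random translation is added to each rotation so the same script also checks translation invariance.

```python
import numpy as np


def random_rotation(rng):
    """Random proper rotation (det = +1) from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))
    if np.linalg.det(q) < 0:
        q[:, 0] = -q[:, 0]
    return q


def invariance_variance(model, pos, n_rotations=8, seed=0):
    """Variance of a scalar prediction over random rotations (plus translations) of one structure."""
    rng = np.random.default_rng(seed)
    outputs = [model(pos @ random_rotation(rng).T + rng.normal(size=3)) for _ in range(n_rotations)]
    return float(np.var(outputs))


def invariant_model(pos):
    """Hypothetical correct l=0 head: depends on interatomic distances only."""
    dist = np.linalg.norm(pos[:, None] - pos[None], axis=-1)
    return float(np.sum(np.exp(-dist)))


def broken_model(pos):
    """Hypothetical buggy head that leaks raw Cartesian coordinates into the scalar output."""
    return invariant_model(pos) + float(pos[:, 0].sum())


molecule = np.random.default_rng(1).normal(size=(12, 3))
var_ok = invariance_variance(invariant_model, molecule)
var_bad = invariance_variance(broken_model, molecule)
```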

Q3: Training is computationally expensive and runs out of memory on large protein-ligand complexes. A: Optimize using sparse implementations and hierarchical pooling.

- Use Sparse Tensor Operations: If using libraries like `e3nn` or `TorchMD-Net`, ensure you are leveraging sparse neighbor lists for constructing atomic graphs.

- Implement Hierarchical Pooling: Do not operate on all atoms at the same resolution. Use a multi-scale approach:

- Level 1: Atom-level SE(3)-equivariant features.

- Level 2: Cluster atoms into radial chunks (e.g., 5Å radius); pool features using an invariant reduction (mean/max).

- Level 3: Process pooled chunk features with a lighter-weight GNN.
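Levels 2 and 3 hinge on a rotation-independent way to form chunks and an invariant reduction. The sketch below uses greedy radius clustering (each unassigned atom, in index order, claims all unassigned atoms within the radius), one simple choice among many and an assumption here, followed by mean or max pooling of per-atom scalar features. Distances are computed one row at a time, so memory stays O(N) rather than O(N²).

```python
import numpy as np


def radial_chunks(pos, radius=5.0):
    """Greedy clustering: each still-unassigned atom seeds a chunk claiming unassigned atoms within `radius`."""
    chunk_of_atom = np.full(len(pos), -1)
    n_chunks = 0
    for seed in range(len(pos)):
        if chunk_of_atom[seed] >= 0:
            continue
        dist = np.linalg.norm(pos - pos[seed], axis=1)  # one row at a time: O(N) memory
        claim = (chunk_of_atom < 0) & (dist <= radius)
        chunk_of_atom[claim] = n_chunks
        n_chunks += 1
    return chunk_of_atom, n_chunks


def pool_chunks(feats, chunk_of_atom, n_chunks, reduce="mean"):
    """Invariant reduction of per-atom scalar (l=0) features within each chunk."""
    if reduce == "max":
        pooled = np.full((n_chunks, feats.shape[1]), -np.inf)
        np.maximum.at(pooled, chunk_of_atom, feats)
        return pooled
    pooled = np.zeros((n_chunks, feats.shape[1]))
    np.add.at(pooled, chunk_of_atom, feats)
    return pooled / np.bincount(chunk_of_atom, minlength=n_chunks)[:, None]


rng = np.random.default_rng(0)
pos = np.vstack([rng.normal(0.0, 0.5, size=(10, 3)), rng.normal(20.0, 0.5, size=(6, 3))])
feats = rng.normal(size=(16, 8))  # e.g., scalar outputs of the atom-level equivariant layers
chunk_of_atom, n_chunks = radial_chunks(pos, radius=5.0)
chunk_feats = pool_chunks(feats, chunk_of_atom, n_chunks)
```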

Q4: How do I incorporate atomic charge or spin (non-geometric features) into an equivariant model?

A: These are invariant scalar features (l=0 irreps). The standard method is to concatenate them with the learned invariant node features at each layer before the message-passing or state-update function. Treat them as additional inputs alongside the initial embedding of the atomic number.

Table 1: Benchmark Performance of SE(3)-Invariant Models on QM9

| Model Architecture | MAE (HOMO eV) ↓ | MAE (μ Debye) ↓ | Training Epochs | Params (M) |
|---|---|---|---|---|
| SchNet (Invariant) | 0.041 | 0.033 | 500 | 4.1 |
| DimeNet++ (Invariant) | 0.028 | 0.030 | 500 | 1.9 |
| SE(3)-Transformer | 0.023 | 0.027 | 500 | 3.8 |
| NequIP | 0.014 | 0.018 | 300 | 0.8 |

Table 2: Inference Speed & Memory Usage (Protein with 5k Atoms)

| Model | Inference Time (ms) | GPU Memory, Batch Size=1 (GB) | GPU Memory, Batch Size=8 (GB) |
|---|---|---|---|
| SchNet | 120 | 1.2 | 4.5 |
| TFN (Tensor Field Net) | 450 | 3.8 | OOM |
| SE(3)-Transformer | 380 | 3.1 | OOM |
| NequIP (Optimized) | 95 | 1.5 | 3.2 |

Experimental Protocol: Evaluating SE(3)-Invariance on a Binding Affinity Task

Objective: Quantify the robustness of an SE(3)-equivariant graph neural network (GNN) to rigid transformations in a docking pose regression task.

Materials:

- Dataset: PDBBind refined set (v2020).

- Software: PyTorch, PyTorch Geometric, `e3nn` library.

- Hardware: GPU with ≥8GB VRAM.

Methodology:

- Data Preparation:

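- Isolate protein-ligand complexes and label protein and ligand atoms (the model still receives each complex as a whole).

- For each complex, generate 50 random SE(3) transformations (rotations and translations).

- Apply each transformation to all atoms of the complex, protein and ligand together, to create a transformed input. Transforming the ligand alone would move it relative to the binding pocket, producing a different pose for which a different prediction is legitimate.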

- The target (binding affinity, pK) remains unchanged for all transformed versions of the same complex.

Model Training:

- Train a single SE(3)-invariant NequIP model on the original training set poses.

- Loss Function: Mean Squared Error (MSE) on pK.

- Key: The model never sees randomly rotated poses during training.

Invariance Testing:

- On the held-out test set, pass all 50 transformed versions of each complex through the trained model.

- For each complex, calculate the standard deviation (σ) of the 50 predicted pK values.

- The mean σ across all test complexes is the Invariance Error Metric. A perfect SE(3)-invariant model will have σ = 0.

Control Experiment:

- Repeat the above with a non-equivariant GNN (e.g., standard GIN or GAT) as a baseline. Expect high invariance error.
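The invariance-testing steps can be sketched as follows. The key detail is that one rigid transform is applied to protein and ligand together, which leaves every protein-ligand distance, and therefore the pose, unchanged; the last lines show that moving the ligand alone does change the contacts. The two scoring functions are hypothetical stand-ins for a trained invariant model and a non-equivariant baseline, and the random coordinates are placeholders for PDBbind complexes.

```python
import numpy as np


def random_rigid_transform(rng):
    """Random proper rotation (QR of a Gaussian matrix) plus a random translation."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))
    if np.linalg.det(q) < 0:
        q[:, 0] = -q[:, 0]
    return q, rng.normal(scale=10.0, size=3)


def transform_complex(protein, ligand, rot, shift):
    """Apply the same rigid transform to every atom of the complex, preserving the binding pose."""
    return protein @ rot.T + shift, ligand @ rot.T + shift


def min_contact_distance(protein, ligand):
    return float(np.min(np.linalg.norm(protein[:, None] - ligand[None], axis=-1)))


def invariance_error(model, complexes, n_transforms=50, seed=0):
    """Mean over complexes of the std of predictions across random joint rigid transforms."""
    rng = np.random.default_rng(seed)
    sigmas = []
    for protein, ligand in complexes:
        preds = [model(*transform_complex(protein, ligand, *random_rigid_transform(rng)))
                 for _ in range(n_transforms)]
        sigmas.append(np.std(preds))
    return float(np.mean(sigmas))


def invariant_score(protein, ligand):
    """Hypothetical distance-only scoring head (SE(3)-invariant by construction)."""
    return -min_contact_distance(protein, ligand)


def coordinate_score(protein, ligand):
    """Hypothetical non-equivariant baseline that reads raw ligand coordinates."""
    return float(ligand[:, 2].mean())


rng = np.random.default_rng(0)
complexes = [(4.0 * rng.normal(size=(30, 3)), rng.normal(size=(8, 3)) + 1.0) for _ in range(3)]
err_invariant = invariance_error(invariant_score, complexes)
err_baseline = invariance_error(coordinate_score, complexes)

protein, ligand = complexes[0]
rot, shift = random_rigid_transform(rng)
joint = min_contact_distance(*transform_complex(protein, ligand, rot, shift))
ligand_only = min_contact_distance(protein, ligand @ rot.T + shift)  # ligand moved relative to the protein
```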

Visualizations

Title: SE(3)-Invariant Model Workflow Under Input Transformation

Title: Core Equivariant Message-Passing Step

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Libraries for SE(3)-Equivariant Molecular Modeling

| Item | Function / Purpose | Key Feature |
|---|---|---|
| e3nn (v0.5.0+) | Core library for building E(3)-equivariant neural networks in PyTorch. | Implements irreducible representations (irreps), spherical harmonics, and tensor products. |
| PyTorch Geometric (PyG) | Library for graph neural networks. Handles molecular graph batching and data loading. | Commonly paired with e3nn for graph construction and batching. |
| NequIP (Neural Equivariant Interatomic Potential) | A highly performant, ready-to-use framework for developing interatomic potentials. | Demonstrates state-of-the-art accuracy and efficiency on molecular dynamics tasks. |
| TorchMD-Net | Framework for equivariant models for molecular simulations. | Offers multiple modern SE(3)-equivariant architectures (TorchMD-ET, etc.). |
| RDKit | Cheminformatics toolkit. | Used for initial molecule processing, SMILES parsing, and basic conformer generation. |
| Open Babel / PyMOL | Molecular visualization and format conversion. | Critical for inspecting and preparing 3D molecular structures pre- and post-analysis. |

Fragment-Based and Graph-Transformer Hybrid Architectures

Troubleshooting Guides & FAQs

Q1: During model training, I encounter the error: "RuntimeError: The size of tensor a must match the size of tensor b at non-singleton dimension." What does this mean in the context of merging fragment and graph representations? A1: This typically indicates a mismatch in the dimensionality of the latent vectors produced by your fragment encoder and your molecular graph encoder before they are concatenated or fused. Common root causes are:

- Inconsistent hidden dimensions defined for the fragment GNN and the main graph transformer.

- A pooling operation (global mean, sum) on the fragment subgraphs that yields an output size different from the node-level representation size of the primary graph.

- Incorrect indexing when aligning fragment embeddings to specific atoms/nodes in the global graph.

Troubleshooting Protocol:

- Isolate Encoders: Run a forward pass with a batch of dummy data through each encoder (fragment and graph) separately.

- Print Tensor Shapes: Log the output shape of each encoder just before the fusion step (e.g., `print(fragment_emb.shape, graph_emb.shape)`).

- Verify Alignment Logic: If using node-level alignment, ensure the lookup indices for placing fragment embeddings are within the bounds of the primary graph node list.

- Adjust Architecture: Explicitly define a projection layer (linear layer) after one or both encoders to ensure their output dimensions match exactly before fusion.
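The shape check, the index bounds check and the projection layer can be combined in one small fusion function. This NumPy sketch assumes additive node-level fusion (each atom receives the projected embedding of the fragment it belongs to), which is exactly the case where the two hidden sizes must match. `linear` is a hypothetical randomly initialized projection standing in for a trainable layer such as `torch.nn.Linear`, and the sizes 128 and 64 are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(0)


def linear(in_dim, out_dim):
    """Hypothetical projection layer (random weights) mapping in_dim -> out_dim features."""
    weight = rng.normal(scale=in_dim ** -0.5, size=(in_dim, out_dim))
    return lambda x: x @ weight


def fuse_node_level(graph_emb, frag_emb, atom_to_frag, proj):
    """Add each atom's projected fragment embedding to its graph-transformer node embedding."""
    if atom_to_frag.shape[0] != graph_emb.shape[0]:
        raise ValueError(f"need one fragment index per atom, got {atom_to_frag.shape[0]} for {graph_emb.shape[0]} atoms")
    if atom_to_frag.min() < 0 or atom_to_frag.max() >= frag_emb.shape[0]:
        raise IndexError("atom_to_frag contains an out-of-range fragment index")
    projected = proj(frag_emb)
    if projected.shape[1] != graph_emb.shape[1]:
        raise ValueError(f"hidden sizes differ after projection: {projected.shape[1]} vs {graph_emb.shape[1]}")
    return graph_emb + projected[atom_to_frag]


graph_emb = rng.normal(size=(9, 128))  # 9 atoms, graph-transformer hidden size 128
frag_emb = rng.normal(size=(3, 64))    # 3 fragments, fragment-GNN hidden size 64
atom_to_frag = np.array([0, 0, 0, 1, 1, 1, 1, 2, 2])
fused = fuse_node_level(graph_emb, frag_emb, atom_to_frag, linear(64, 128))
```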

Q2: My hybrid model fails to learn and shows no performance improvement over a vanilla graph transformer. What are potential architectural or data-related issues? A2: This suggests the model is not effectively utilizing the fragment information. The problem may lie in data representation, fusion mechanism, or training strategy.

Diagnostic Experimental Protocol:

- Ablation Study: Systematically remove components and benchmark performance.

- Baseline A: Standard Graph Transformer.

- Baseline B: Fragment Network only (on pooled fragment sets).

- Model C: Your full hybrid architecture. Compare results on a fixed validation set.

Fragment Relevance Check: Implement a simple sanity-check experiment. Train a small classifier only on the fragment embeddings (e.g., for a simple property like molecular weight or presence of a pharmacophore). If it cannot learn, your fragment decomposition or representation may be flawed.

Gradient Flow Analysis: Use tools like `torchviz` to create a computational graph for one batch. Check whether gradients are flowing back into the fragment encoder branch. If not, the fusion point may be a bottleneck.

Q3: How do I handle variable-sized sets of fragments for different molecules within a single batch? A3: This is a key challenge. Padding to the maximum number of fragments across the entire dataset is inefficient. Preferred solutions involve advanced batching or attention.

Recommended Methodologies:

- Per-Batch Padding: Pad fragment sets to the maximum number in the current batch, then use an attention mechanism with a padding mask.

- Graph-of-Graphs Representation: Represent the entire batch as a large graph where:

- Each molecule's primary graph is a connected component.

- Fragment subgraphs are connected via special "contains" edges to their constituent atoms in the primary graph. This allows message-passing across fragments within a molecule naturally via the transformer.

- Hierarchical Pooling: Independently process all fragments through the fragment encoder, then use a permutation-invariant pooling operator (e.g., DeepSet, Set Transformer) to create a fixed-size molecular-level fragment summary vector for fusion.
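The per-batch padding option can be sketched as below: fragment-embedding sets are padded only to the largest set in the current batch, and a boolean mask records which slots are real. The masked mean is the simplest permutation-invariant pooling. If the mask is passed to PyTorch's `nn.MultiheadAttention` as `key_padding_mask`, it has to be inverted, because that argument marks positions to ignore with `True`. Fragment counts and the embedding size are placeholders.

```python
import numpy as np


def pad_fragment_sets(fragment_sets):
    """Pad (n_frags_i, dim) arrays to the batch maximum; the mask is True for real fragments."""
    max_frags = max(len(s) for s in fragment_sets)
    dim = fragment_sets[0].shape[1]
    padded = np.zeros((len(fragment_sets), max_frags, dim))
    mask = np.zeros((len(fragment_sets), max_frags), dtype=bool)
    for b, frags in enumerate(fragment_sets):
        padded[b, :len(frags)] = frags
        mask[b, :len(frags)] = True
    return padded, mask


def masked_mean(padded, mask):
    """Mean over real fragments only; assumes every molecule has at least one fragment."""
    weights = mask[..., None].astype(float)
    return (padded * weights).sum(axis=1) / weights.sum(axis=1)


rng = np.random.default_rng(0)
batch = [rng.normal(size=(n, 16)) for n in (2, 5, 3)]  # variable number of fragments per molecule
padded, mask = pad_fragment_sets(batch)
summary = masked_mean(padded, mask)  # (3, 16): one fixed-size fragment summary per molecule
```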

Q4: What are the best practices for splitting data (train/validation/test) to avoid data leakage when fragments are shared across molecules? A4: Standard random splits are invalid. You must perform a scaffold split or fragment-based split to prevent leakage.

Mandatory Data Splitting Protocol:

- Generate the Bemis-Murcko scaffold or a key functional fragment (e.g., largest ring system) for each molecule in your dataset.

- Use these scaffolds/fragments as the grouping key for the split.

- Employ a group-aware split (e.g., `GroupShuffleSplit` in scikit-learn, with the scaffold as the group label) to ensure molecules sharing a core scaffold/fragment land in the same partition (train, val, or test).

- Critical Verification Step: After splitting, check whether any fragment in the validation or test set is missing from the training set's fragment vocabulary. Such fragments have no learned embedding; retrain the fragment embeddings if this occurs.
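A standard-library sketch of the split and both verification checks, assuming scaffold keys and per-molecule fragment labels have already been computed (for example with RDKit's `MurckoScaffold.MurckoScaffoldSmiles` and a BRICS decomposition). Filling train, then validation, then test greedily is one simple policy among several; the example strings are placeholders.

```python
import random


def scaffold_split(scaffolds, fractions=(0.8, 0.1, 0.1), seed=0):
    """Group-aware split: every molecule sharing a scaffold key lands in the same partition."""
    groups = {}
    for idx, scaffold in enumerate(scaffolds):
        groups.setdefault(scaffold, []).append(idx)
    keys = sorted(groups)
    random.Random(seed).shuffle(keys)
    n = len(scaffolds)
    split = {"train": [], "val": [], "test": []}
    for key in keys:
        size = len(groups[key])
        if len(split["train"]) + size <= fractions[0] * n:
            split["train"].extend(groups[key])
        elif len(split["val"]) + size <= fractions[1] * n:
            split["val"].extend(groups[key])
        else:
            split["test"].extend(groups[key])
    return split


def check_split(split, scaffolds, fragments):
    """Return (scaffolds found in more than one partition, val/test fragments missing from the training vocabulary)."""
    seen = {name: {scaffolds[i] for i in idx} for name, idx in split.items()}
    leaked = (seen["train"] & seen["val"]) | (seen["train"] & seen["test"]) | (seen["val"] & seen["test"])
    train_vocab = {frag for i in split["train"] for frag in fragments[i]}
    held_out = {frag for part in ("val", "test") for i in split[part] for frag in fragments[i]}
    return leaked, held_out - train_vocab


scaffolds = ["c1ccccc1"] * 4 + ["c1ccncc1"] * 3 + ["C1CCCCC1"] * 2 + ["c1ccc2ccccc2c1"]
fragments = [{"benzene", "amide"}] * 4 + [{"pyridine", "amide"}] * 3 + [{"cyclohexane"}] * 2 + [{"naphthalene", "sulfonamide"}]
split = scaffold_split(scaffolds)
leaked, unseen = check_split(split, scaffolds, fragments)
```

`leaked` should always be empty; `unseen` lists the fragments whose embeddings the verification step is concerned with.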

Table 1: Benchmark Performance of Hybrid Architectures vs. Baselines on MoleculeNet Datasets

| Model Architecture | HIV (AUC-ROC ↑) | FreeSolv (RMSE ↓) | Clintox (Avg. AUC-ROC ↑) | Params (M) | Training Speed (ms/step) |
|---|---|---|---|---|---|
| Graph Transformer (GT) | 0.783 ± 0.012 | 1.58 ± 0.11 | 0.855 ± 0.025 | 12.4 | 125 |
| Fragment GNN Only | 0.721 ± 0.018 | 2.21 ± 0.15 | 0.812 ± 0.031 | 8.7 | 95 |
| GT + Fragment Attention | 0.801 ± 0.010 | 1.42 ± 0.09 | 0.872 ± 0.022 | 16.2 | 185 |
| GT + Fragment Graph Fusion | 0.812 ± 0.009 | 1.39 ± 0.08 | 0.881 ± 0.020 | 18.5 | 210 |

Table 2: Impact of Fragment Definition on Model Performance (HIV Dataset)

| Fragment Decomposition Method | Avg. Frags/Mol | Hybrid Model AUC-ROC | Interpretability Score |
|---|---|---|---|
| BRICS (Default) | 8.2 | 0.812 | High |
| RECAP | 7.5 | 0.806 | Medium |
| Functional Group | 12.1 | 0.795 | Low |
| Rule-Based (Custom) | 6.8 | 0.809 | Very High |

Visual Workflows & Architectures

Title: Hybrid Model Architecture Workflow

Title: Cross-Attention Fusion Mechanism

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Hybrid Architecture Experiments

| Resource Name / Tool | Type | Primary Function | Key Consideration |
|---|---|---|---|
| RDKit | Software Library | Core cheminformatics: SMILES parsing, graph generation, BRICS fragmentation. | Standard for molecule handling; ensure canonicalization is consistent. |
| PyTorch Geometric (PyG) / DGL | Deep Learning Library | Efficient graph neural network operations and batching. | Critical for handling variable-sized graph and fragment sets. |
| BRICS or RECAP Algorithm | Fragmentation Method | Decomposes molecules into chemically meaningful, reassemble-able fragments. | Choice affects model interpretability and generalization. |
| Weisfeiler-Lehman (WL) Kernel | Algorithm | Provides a strong baseline for graph similarity; useful for analyzing fragment diversity. | Use to validate that your fragment sets capture meaningful chemical diversity. |
| Set Transformer or DeepSets | Neural Architecture | Models permutation-invariant functions on sets of fragments. | Replaces simple pooling for a richer fragment set representation. |
| Scaffold Splitting Script | Data Utility | Ensures data splits prevent leakage of fragment information. | Mandatory for rigorous evaluation; often custom-built on top of RDKit. |
| Attention Visualization Toolkit | Interpretability Tool | Visualizes cross-attention weights between graph nodes and fragments. | Key for validating that the model learns chemically plausible associations. |

Troubleshooting & FAQ Center

Q1: My model fails to tokenize valid SELFIES strings during fine-tuning. What could be wrong?

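A1: This is often a library version or preprocessing mismatch. Ensure you are using the same version of the `selfies` library (currently v2.1.0+) that was used to pre-train your model. Note that SELFIES is not canonical by itself: the SELFIES string depends on the SMILES it was encoded from, so apply the same SMILES canonicalization before encoding that the pre-training pipeline used, and verify that no pre-processing script passes your SELFIES inputs through a SMILES-only tool such as a SMILES canonicalizer.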
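One quick way to find the offending inputs is to split each SELFIES string into its bracketed tokens and compare them with the pre-trained tokenizer's vocabulary. This sketch uses a regular expression in place of the `selfies` library's own `split_selfies` helper so that it runs without dependencies; the vocabulary and strings are hypothetical.

```python
import re

SELFIES_TOKEN = re.compile(r"\[[^\[\]]*\]")


def split_selfies_tokens(selfies_string):
    """Split a SELFIES string into bracketed tokens; reject characters outside brackets."""
    tokens = SELFIES_TOKEN.findall(selfies_string)
    if "".join(tokens) != selfies_string:
        raise ValueError(f"not a well-formed SELFIES string: {selfies_string!r}")
    return tokens


def out_of_vocabulary(selfies_strings, vocab):
    """Count tokens that occur in the data but not in the pre-trained tokenizer's vocabulary."""
    missing = {}
    for s in selfies_strings:
        for token in split_selfies_tokens(s):
            if token not in vocab:
                missing[token] = missing.get(token, 0) + 1
    return missing


vocab = {"[C]", "[=C]", "[O]", "[=O]", "[N]", "[Ring1]", "[Branch1]", "[nop]"}  # hypothetical vocabulary
data = ["[C][C][O]", "[C][=C][C][=C][C][=C][Ring1][=Branch1]", "[C][N+1][C]"]
missing = out_of_vocabulary(data, vocab)
```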

Q2: When fine-tuning a model on my dataset, the loss plateaus immediately. How can I diagnose this? A2: This typically indicates a data representation mismatch. Follow this protocol:

- Isolate the Issue: Run a sanity check by trying to reconstruct your input sequences. Use the model to encode then decode 100 random molecules from your dataset.

- Calculate Reconstruction Accuracy: Measure the percentage of molecules that decode to an identical and valid string.

- Interpret Results: If accuracy is at or below roughly 98% (see Table 1), your data's representation likely differs from the model's pre-training corpus. For example, your SMILES may be canonical while the model was trained on non-canonical forms, or you may be using a different aromaticity model.

Table 1: Reconstruction Accuracy Diagnosis

| Reconstruction Accuracy | Likely Issue | Recommended Action |
|---|---|---|
| >98% | Learning problem (e.g., low LR, frozen weights) | Unfreeze encoder layers, increase learning rate. |
| 85-98% | Representation mismatch | Align tokenizer, check canonicalization/aromaticity. |
| <85% | Severe mismatch or corrupted data | Verify data format (SMILES vs. SELFIES), inspect samples. |
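The reconstruction check and the thresholds in Table 1 can be wired together as below. The encode/decode step itself is model-specific and not shown; `is_valid` is a hypothetical hook where a real pipeline would plug in a parser (for SMILES, e.g., `Chem.MolFromSmiles(s) is not None` in RDKit). The boundaries follow the table; treating exactly 98% as a mismatch is an arbitrary tie-break.

```python
def reconstruction_accuracy(originals, reconstructions, is_valid=lambda s: True):
    """Fraction of molecules decoded to an identical string that also passes the validity check."""
    if len(originals) != len(reconstructions):
        raise ValueError("originals and reconstructions must be paired")
    hits = sum(orig == rec and is_valid(rec) for orig, rec in zip(originals, reconstructions))
    return hits / len(originals)


def diagnose(accuracy):
    """Map a reconstruction accuracy (0-1) onto the rows of Table 1."""
    if accuracy > 0.98:
        return "learning problem: unfreeze encoder layers, increase learning rate"
    if accuracy >= 0.85:
        return "representation mismatch: align tokenizer, check canonicalization/aromaticity"
    return "severe mismatch or corrupted data: verify SMILES vs. SELFIES format, inspect samples"


originals = ["C" * (i + 1) + "O" for i in range(100)]     # placeholder inputs
reconstructions = originals[:90] + ["C1=CC=CC=C1"] * 10   # 10 molecules came back different
accuracy = reconstruction_accuracy(originals, reconstructions)
verdict = diagnose(accuracy)
```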

Q3: How do I choose between a SMILES-based model (e.g., ChemBERTa) and a SELFIES-based model (e.g., SELFIES-BERT) for property prediction? A3: The choice depends on data robustness and task specificity. Conduct a controlled benchmark:

- Dataset Preparation: Curate a dataset of 10k molecules with target properties. Create two versions: one with standard SMILES and one with SELFIES.

- Experimental Protocol: Use identical model architectures (e.g., 12-layer transformer, 768 hidden dim). Pre-train a model on 1M molecules from PubChem in each representation, or use available checkpoints. Fine-tune both models on your dataset using an 80/10/10 split. Repeat with 5 random seeds.

- Key Metric: Compare mean absolute error (MAE) on the held-out test set, prioritizing both average performance and outlier analysis (molecules where predictions diverge significantly).

Table 2: Benchmark Results: SMILES vs. SELFIES for QSAR

| Model | Representation | Avg. MAE (logP) | Std. Dev. | Invalid Output % |
|---|---|---|---|---|
| ChemBERTa-77M | SMILES (canonical) | 0.42 | ± 0.03 | 0.1% |
| SELFIES-BERT-77M | SELFIES (v2.1) | 0.45 | ± 0.02 | 0.0% |
| Model A | SMILES (non-canonical) | 0.40 | ± 0.05 | 2.3% |

Q4: The generated molecules from my fine-tuned model are chemically invalid. How can I improve validity? A4: High invalidity rates stem from the model learning incorrect grammar rules.

- For SMILES models: Implement a valency check during or post-generation. Use a parser like RDKit to filter out intermediates or final products with invalid valency states.

- For SELFIES models: Invalidity is rare by construction, because every SELFIES string decodes to a valid molecular graph. If it occurs, the likely cause is a tokenizer vocabulary mismatch: ensure the special `[nop]` token is correctly defined and managed in your tokenizer's vocabulary.

- General Protocol: Use a constrained beam search or masked sampling that only allows transitions to tokens that keep the partial structure valid according to a defined grammar.
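The masked-sampling option can be sketched as follows. This uses a toy grammar, not full SMILES: a hypothetical vocabulary with only branch, ring-closure, and double-bond rules. At each step, tokens that would break the partial structure get zero probability, and the softmax is renormalized over the remaining tokens. Valence is not tracked, so RDKit sanitization is still needed afterwards.

```python
import math
import random

# Toy vocabulary (hypothetical): a few atom tokens plus branch, ring, bond, end.
ATOMS = {"C", "N", "O", "F", "Cl", "c", "n", "o"}
END = "<eos>"
VOCAB = sorted(ATOMS) + ["(", ")", "1", "2", "=", END]


def allowed_tokens(prefix, vocab):
    """Return the tokens that keep `prefix` inside a toy SMILES grammar.

    Only branch and ring-closure bookkeeping is enforced; valence is NOT
    checked, so a final RDKit sanitization pass is still required.
    """
    depth, open_rings = 0, set()
    for tok in prefix:
        if tok == "(":
            depth += 1
        elif tok == ")":
            depth -= 1
        elif tok.isdigit():
            open_rings ^= {tok}  # first use opens a ring bond, second closes it
    last = prefix[-1] if prefix else None
    last_is_digit = last is not None and last.isdigit()
    after_atom = last in ATOMS
    after_atomlike = after_atom or last == ")" or last_is_digit
    allowed = []
    for tok in vocab:
        if tok in ATOMS:
            ok = True
        elif tok == "(":
            ok = after_atomlike  # a branch must hang off a preceding atom
        elif tok == ")":
            ok = depth > 0 and after_atomlike  # close only an open, non-empty branch
        elif tok.isdigit():
            # ring-bond digits follow an atom or another digit, never repeat one
            ok = (after_atom or last_is_digit) and tok != last
        elif tok == "=":
            ok = last is not None and last != "="
        elif tok == END:
            ok = depth == 0 and not open_rings and after_atomlike
        else:
            ok = False
        if ok:
            allowed.append(tok)
    return allowed


def masked_sample(logits, prefix, rng, temperature=1.0):
    """Sample the next token from a softmax restricted to allowed tokens.

    `logits` maps every vocabulary token to the model's raw score.
    """
    allowed = allowed_tokens(prefix, list(logits))
    scaled = [logits[t] / temperature for t in allowed]
    top = max(scaled)
    weights = [math.exp(s - top) for s in scaled]  # numerically stable softmax
    return rng.choices(allowed, weights=weights, k=1)[0]


def generate(score_fn, rng, max_len=60):
    """Autoregressive decoding; returns None if the length budget runs out."""
    prefix = []
    while len(prefix) < max_len:
        tok = masked_sample(score_fn(prefix), prefix, rng)
        if tok == END:
            return "".join(prefix)
        prefix.append(tok)
    return None


def uniform_scores(prefix):
    """Stand-in for a trained model: equal logits for every token."""
    return {t: 0.0 for t in VOCAB}


sample = generate(uniform_scores, random.Random(0))
```

In a real model, `score_fn` would wrap the transformer's next-token logits. The same `allowed_tokens` mask can also prune beams in a constrained beam search.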

Q5: How can I extract meaningful, fixed-size embeddings for large-scale virtual screening?

A5: The standard method is to use the [CLS] token embedding, or the average of all token embeddings, from the final layer. For a task-aware alternative:

- Protocol: Pass your molecular library through the model.

- Extraction: Extract the hidden state representations for all tokens.

- Pooling: Apply a self-attention pooling layer (learned during fine-tuning) to weight the importance of each token (e.g., an aromatic ring vs. a methyl group) before summing them into a single vector. This creates task-aware embeddings.
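Below is a minimal, dependency-free sketch of mean pooling and attention pooling. It assumes token embeddings arrive as equal-length lists with padding tokens already removed. In a real model, `w` and `b` would be parameters of a learned layer (e.g., a PyTorch module) trained during fine-tuning; here they are passed in by hand.

```python
import math


def mean_pool(token_embs):
    """Average a list of equal-length token vectors into one vector."""
    n = len(token_embs)
    return [sum(col) / n for col in zip(*token_embs)]


def attention_pool(token_embs, w, b=0.0):
    """Softmax-weighted sum of token embeddings.

    Each token i gets a score s_i = w . h_i + b; a softmax over tokens turns
    the scores into weights a_i, and the pooled vector is sum_i a_i * h_i.
    Returns the pooled vector and the per-token weights.
    """
    scores = [sum(wj * hj for wj, hj in zip(w, h)) + b for h in token_embs]
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]  # subtract max for stability
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(token_embs[0])
    pooled = [sum(a * h[j] for a, h in zip(weights, token_embs)) for j in range(dim)]
    return pooled, weights


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
pooled, attn = attention_pool(tokens, w=[0.0, 0.0])  # uniform weights: equals mean_pool(tokens)
```

With a zero scoring vector, attention pooling reduces to mean pooling. Training `w` lets the model emphasize the tokens that matter for the downstream property.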

Title: Workflow for Generating Fixed-Size Molecular Embeddings

The Scientist's Toolkit: Key Research Reagents

Table 3: Essential Software & Libraries for Molecular Language Modeling

| Item | Function | Version (at time of writing) |
|---|---|---|
| RDKit | Core cheminformatics toolkit for molecule manipulation, validation, and descriptor calculation. | 2023.09.5 |
| Transformers (Hugging Face) | Library to load, fine-tune, and share pre-trained models (e.g., ChemBERTa, MoLFormer). | 4.36.2 |
| SELFIES Python Library | Encodes/decodes molecular graphs into and from the SELFIES string representation. | 2.1.0 |
| Tokenizers (Hugging Face) | Creates and manages custom vocabularies for SMILES/SELFIES subword tokenization. | 0.15.2 |
| PyTorch / TensorFlow | Backend deep learning frameworks for model training and inference. | 2.1 / 2.15 |
| Molecular Transformer | Specialized model for reaction prediction, often used as a benchmark. | N/A |

Title: Thesis Context: From Representation to Application

Troubleshooting Guides & FAQs

FAQ: Model Performance & Training

Q1: Why does my model fail to generate chemically valid or synthetically accessible molecules during de novo generation?

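A: This is a core limitation tied to molecular representation. Models that generate string-based representations (like SMILES) can emit strings that violate syntactic rules (e.g., unmatched branches or ring closures) or semantic rules (e.g., impossible valences).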

- Primary Cause: The model's latent space contains points that do not map to valid molecular structures.

- Solution:

- Incorporate Validity Checks: Use a post-generation valency checker and sanitizer (e.g., RDKit's `SanitizeMol`).

- Switch Representation: Adopt a graph-based representation (e.g., directed message-passing neural networks) or a 3D geometric representation (e.g., SE(3)-equivariant models). These build molecules from explicit atoms and bonds, so connectivity and valence rules can be enforced during generation.

- Use Reinforcement Learning (RL): Fine-tune the generative model with an RL reward that penalizes invalid structures and rewards favorable synthetic accessibility (SA) scores.
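A reward of the kind described in the last bullet might look like the sketch below. `is_valid` and `sa_score` are hypothetical hooks supplied by the caller, for example wrappers around RDKit parsing and the SA score from RDKit's Contrib directory, which runs from 1 (easy to synthesize) to 10 (hard). The penalty value and the linear scaling are illustrative choices. The batch-mean baseline shown is the simplest REINFORCE variant.

```python
def rl_reward(smiles, is_valid, sa_score, invalid_penalty=-1.0):
    """Reward for one generated string.

    is_valid(smiles) -> bool and sa_score(smiles) -> float are caller-supplied
    hooks (hypothetical). Invalid strings get a fixed penalty; valid ones get
    (10 - SA) / 9, which maps SA = 1 to 1.0 and SA = 10 to 0.0.
    """
    if not is_valid(smiles):
        return invalid_penalty
    sa = min(max(sa_score(smiles), 1.0), 10.0)  # clamp to the nominal 1-10 range
    return (10.0 - sa) / 9.0


def advantages(rewards):
    """Subtract the batch-mean baseline, as in a simple REINFORCE update."""
    baseline = sum(rewards) / len(rewards)
    return [r - baseline for r in rewards]


def toy_is_valid(s):
    """Stand-in for an RDKit parse: only checks balanced parentheses."""
    return s.count("(") == s.count(")")


def toy_sa(s):
    """Stand-in for the SA score: longer strings look 'harder'."""
    return 1.0 + 0.5 * len(s)


batch = ["CCO", "CC(C", "c1ccccc1O"]
rewards = [rl_reward(s, toy_is_valid, toy_sa) for s in batch]
adv = advantages(rewards)  # multiply each sample's log-probability by its advantage
```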

Q2: My virtual screening model shows high performance on hold-out test sets but fails to identify active compounds in real-world experimental validation. What could be wrong?

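A: This indicates a generalization failure, often rooted in the "similarity principle": a randomly split test set contains close analogs of training compounds, so hold-out metrics overstate how well the model will handle new chemotypes.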

- Primary Cause: The model has learned to recognize structural motifs from the training actives rather than generalizable bioactivity patterns. It fails on structurally novel scaffolds.

- Solution:

- Employ Extensive Data Augmentation: Use randomized SMILES, coordinate roto-translations (for 3D models), or realistic atom/bond noise during training.

- Use Domain Adaptation Techniques: Apply transfer learning from a model trained on a large, diverse chemical corpus (e.g., ChEMBL, ZINC) before fine-tuning on your specific target.

- Implement Out-of-Distribution (OOD) Detection: Use techniques like confidence calibration or Mahalanobis distance in the latent space to flag predictions on compounds too dissimilar from the training domain.
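The Mahalanobis option can be sketched as follows. To stay dependency-free, the sketch uses a diagonal covariance (per-dimension variance), which ignores correlations between latent dimensions. With NumPy available, scikit-learn's `EmpiricalCovariance.mahalanobis` (which returns squared distances) gives the full-covariance version. The 95th-percentile threshold is an assumption.

```python
import math
import statistics


def fit_diag_gaussian(train_embs):
    """Per-dimension mean and variance of training-set latent vectors."""
    cols = list(zip(*train_embs))
    means = [statistics.fmean(c) for c in cols]
    # small epsilon avoids division by zero for constant dimensions
    variances = [statistics.pvariance(c, mu) + 1e-8 for c, mu in zip(cols, means)]
    return means, variances


def diag_mahalanobis(x, means, variances):
    """Mahalanobis distance under a diagonal-covariance approximation."""
    return math.sqrt(sum((xi - m) ** 2 / v for xi, m, v in zip(x, means, variances)))


def ood_threshold(train_embs, means, variances, quantile=0.95):
    """Distance below which roughly `quantile` of training compounds fall."""
    d = sorted(diag_mahalanobis(x, means, variances) for x in train_embs)
    k = min(len(d) - 1, int(quantile * len(d)))
    return d[k]


def flag_ood(query_embs, means, variances, threshold):
    """True for each query compound that lies outside the training domain."""
    return [diag_mahalanobis(x, means, variances) > threshold for x in query_embs]
```

Flagged compounds are not necessarily inactive. Their predictions are simply less trustworthy, so they are candidates for additional scrutiny rather than automatic rejection.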

Q3: How can I handle the lack of reliable negative (inactive) data when training a virtual screening classifier?

A: Treating unlabeled compounds as negatives is flawed: some of them are undiscovered actives, so the resulting label noise biases the classifier.

- Primary Cause: Using assumed negatives from random or untested compound libraries contaminates the training dataset.

- Solution – Robust Experimental Protocol:

- Apply Positive-Unlabeled (PU) Learning: Train your model using only confirmed active (Positive) and untested/unknown (Unlabeled) data.

- Protocol:

- Step 1: Compile your confirmed actives (P set).

- Step 2: From a large, diverse compound library (e.g., ZINC), randomly sample a portion to serve as your Unlabeled (U) set. Do not label them as inactive.

- Step 3: Train a PU-learning compatible algorithm (e.g., a two-step positive sample weighting method).

- First, identify reliable negatives (RN) from U by identifying samples most dissimilar to all P.

- Second, train a weighted binary classifier on P (weight=1) and RN (weight=1), with the remainder of U receiving dynamically adjusted weights.

- Alternative: Use one-class classification or anomaly detection methods focused solely on the active compounds.
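The two-step procedure can be sketched with fingerprints represented as sets of on-bit indices. The 0.3 similarity cutoff and the `1 - similarity` weighting of the remaining unlabeled compounds are hypothetical stand-ins for the dynamically adjusted weights. An iterative PU method would re-estimate those weights after each training round.

```python
def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 0.0
    inter = len(a & b)
    return inter / (len(a) + len(b) - inter)


def reliable_negatives(positives, unlabeled, max_sim=0.3):
    """Step 1: split U into reliable negatives (RN) and the remainder.

    A compound joins RN when its highest Tanimoto similarity to any positive
    is below `max_sim` (hypothetical cutoff, to be tuned per target).
    Returns (rn_indices, rest_indices, nearest) where nearest[i] is the max
    similarity of unlabeled[i] to the P set.
    """
    nearest = [max(tanimoto(u, p) for p in positives) for u in unlabeled]
    rn = [i for i, s in enumerate(nearest) if s < max_sim]
    rest = [i for i, s in enumerate(nearest) if s >= max_sim]
    return rn, rest, nearest


def training_weights(n_pos, nearest, rn, rest):
    """Step 2 inputs: labels and sample weights in the order P, RN, rest of U.

    P and RN get weight 1. The remaining U compounds are labeled 0 with an
    initial weight of (1 - nearest similarity), so look-alikes of known
    actives count least as negatives.
    """
    labels = [1] * n_pos + [0] * (len(rn) + len(rest))
    weights = [1.0] * n_pos + [1.0] * len(rn) + [1.0 - nearest[i] for i in rest]
    return labels, weights


P = [{1, 2, 3}, {2, 3, 4}]
U = [{1, 2, 3}, {7, 8, 9}, {2, 3, 9}]
rn, rest, nearest = reliable_negatives(P, U)
labels, weights = training_weights(len(P), nearest, rn, rest)
```

The labels and weights can then be passed to any classifier that accepts per-sample weights, with the feature rows assembled in the same P, RN, rest order.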

FAQ: Technical & Computational Issues

Q4: Training 3D-aware molecular models (e.g., for binding affinity prediction) is extremely slow and memory-intensive. How can I optimize this?

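A: 3D convolutions and full graph attention over atomic pairs are computationally expensive. Attention cost grows quadratically with the number of atoms, and voxel-grid cost grows cubically as the grid spacing shrinks.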

- Solutions:

- Use Efficient Operations: Implement models with optimized libraries such as `torch_geometric` (PyTorch Geometric) for graph networks or `e3nn` for SE(3)-equivariant operations.

- Adopt a Hierarchical Model: Use a coarse-grained representation first (e.g., at the residue or fragment level) before moving to atomic detail.

- Leverage Pre-computed Features: For static molecular structures, pre-compute and cache expensive 3D features (e.g., radial basis function expansions of distances, spherical harmonics of angles) to avoid on-the-fly recomputation.

- Hardware: Utilize GPUs with high VRAM (e.g., NVIDIA A100, 40GB+) and consider mixed-precision training (`torch.cuda.amp`, or the device-agnostic `torch.amp` in recent PyTorch releases).
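Pre-computing distance features for a static structure might look like the sketch below. The Gaussian expansion follows the general idea of SchNet-style distance featurization. The 16 centers, 5 Å cutoff, width `gamma`, and the in-memory `FeatureCache` class are assumptions. In practice you would persist the cache to disk so it survives across training runs.

```python
import math


def rbf_expand(distance, n_centers=16, cutoff=5.0, gamma=10.0):
    """Gaussian radial basis expansion of one interatomic distance (Angstrom).

    Centers are evenly spaced from 0 to `cutoff`; each feature is
    exp(-gamma * (d - center)^2).
    """
    step = cutoff / (n_centers - 1)
    return tuple(math.exp(-gamma * (distance - k * step) ** 2) for k in range(n_centers))


def pairwise_rbf(coords, cutoff=5.0, n_centers=16):
    """RBF features for every atom pair within `cutoff`: {(i, j): features}."""
    feats = {}
    for i in range(len(coords)):
        for j in range(i + 1, len(coords)):
            d = math.dist(coords[i], coords[j])
            if d <= cutoff:
                feats[(i, j)] = rbf_expand(d, n_centers, cutoff)
    return feats


class FeatureCache:
    """Compute pairwise RBF features once per molecule ID, then reuse them."""

    def __init__(self):
        self._store = {}

    def get(self, mol_id, coords):
        if mol_id not in self._store:
            self._store[mol_id] = pairwise_rbf(coords)
        return self._store[mol_id]


cache = FeatureCache()
coords = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (10.0, 0.0, 0.0)]
features = cache.get("mol-1", coords)  # computed on first request, cached afterwards
```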

Q5: How do I choose the optimal molecular representation and AI architecture for my specific drug discovery project?

A: The choice depends on the task and available data. See the decision table below.

Table 1: Selection Guide for Molecular Representation & Model Architecture

| Primary Task | Recommended Representation | Recommended Model Architecture | Key Rationale | Typical Data Requirement |
|---|---|---|---|---|
| High-Throughput 2D Virtual Screening | Molecular Graph / Extended-Connectivity Fingerprints (ECFP) | Graph Neural Network (GNN) / Random Forest | Balances topological accuracy with computational speed. Excellent for scaffold hopping. | 10^3 - 10^4 labeled compounds |
| De Novo Molecule Generation | Molecular Graph (with explicit nodes/edges) | Graph Generative Model (e.g., JT-VAE, GraphINVENT) | Inherently generates valid, connected molecular structures. | Large unlabeled corpus (e.g., 10^6+ compounds) for pre-training |
| Binding Affinity Prediction (Structure-Based) | 3D Atom Point Cloud / Voxelized Grid | 3D Convolutional Neural Network (3D-CNN) / SE(3)-Equivariant Network (e.g., NequIP) | Captures essential spatial and geometric interactions with the protein target. | 10^2 - 10^3 complexes with high-resolution structures & Kd/IC50 data |
| Binding Affinity Prediction (Ligand-Based) | 3D Conformer Ensemble | Geometry-Enhanced GNN (e.g., DimeNet, SphereNet) | Models intramolecular geometry and pharmacophoric features without a protein structure. | 10^3 - 10^4 labeled compounds with defined bioactive conformations |
| Reaction or Synthetic Pathway Prediction | Sequence of Graph Edit Operations / SMILES | Sequence-to-Sequence Model (Transformer) / Graph-to-Graph Model | Naturally models the transformation from reactants to products. | 10^4 - 10^5 reaction examples |

Experimental Protocol: Benchmarking Generative Model Performance

Objective: To systematically evaluate and compare the performance of different de novo generative models in the context of overcoming representation limitations.

Materials: See "The Scientist's Toolkit" below.

Protocol:

- Model Training: Train or load pre-trained instances of three model types: a) SMILES-based RNN, b) Molecular Graph-based GNN, c) Fragment-based generative model.

- Generation: Generate 10,000 molecules from each model using identical sampling parameters (e.g., temperature=0.7 for stochastic models).

- Validity & Uniqueness Calculation:

- Pass all generated strings/graphs through RDKit to parse them into molecules.

- Validity (%) = (Number of molecules RDKit parses successfully / 10,000) * 100.

- Uniqueness (%) = (Number of unique canonical SMILES / Number of valid molecules) * 100.

- Novelty Assessment:

- Compare canonical SMILES of unique, valid molecules against the training set (or a reference like ZINC).

- Novelty (%) = (Number of molecules not found in reference set / Total unique valid molecules) * 100.

- Diversity Calculation:

- Compute pairwise Tanimoto distances based on ECFP4 fingerprints for the first 1000 valid molecules.

- Internal Diversity = mean of all pairwise distances (range 0-1, higher is more diverse).

- Analyze Failed Cases: Manually inspect a sample of invalid outputs for each model to diagnose representation-specific failure modes (e.g., invalid valence in SMILES, disconnected graphs in GNNs).
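Once every sample has been canonicalized, the four metrics above reduce to a few set operations. The sketch below makes two assumptions. First, each generated sample has already been mapped to its canonical SMILES, or to `None` when RDKit fails to parse it (for example via `Chem.MolFromSmiles` followed by `Chem.MolToSmiles`). Second, `fingerprint` returns ECFP4 on-bits as a set. Both hooks are assumptions, not part of any benchmark library.

```python
from itertools import combinations


def tanimoto_distance(a, b):
    """1 - Tanimoto similarity for fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return 0.0 if union == 0 else 1.0 - len(a & b) / union


def generation_metrics(canonical, reference, fingerprint, n_diversity=1000):
    """Validity, uniqueness, novelty, and internal diversity as defined above.

    canonical: one entry per generated sample (canonical SMILES or None).
    reference: set of canonical SMILES (training set or a reference like ZINC).
    fingerprint: callable mapping a canonical SMILES to a set of ECFP4 on-bits.
    """
    valid = [s for s in canonical if s is not None]
    unique = set(valid)
    novel = unique - reference
    validity = 100.0 * len(valid) / len(canonical)
    uniqueness = 100.0 * len(unique) / len(valid) if valid else 0.0
    novelty = 100.0 * len(novel) / len(unique) if unique else 0.0
    # internal diversity over the first n_diversity valid molecules
    fps = [fingerprint(s) for s in valid[:n_diversity]]
    dists = [tanimoto_distance(a, b) for a, b in combinations(fps, 2)]
    diversity = sum(dists) / len(dists) if dists else 0.0
    return {"validity": validity, "uniqueness": uniqueness,
            "novelty": novelty, "internal_diversity": diversity}
```

The pairwise loop is quadratic, which is why the protocol caps the diversity calculation at 1,000 molecules (about half a million pairs).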

Table 2: Example Benchmark Results for Generative Models

| Evaluation Metric | SMILES-RNN Model | Graph-GNN Model | Fragment-Based Model | Interpretation |
|---|---|---|---|---|
| Validity (%) | 85.2 | 99.8 | 97.5 | Graph models inherently enforce chemical rules. |
| Uniqueness (%) | 95.1 | 89.3 | 99.1 | Fragment models excel at exploring combinatorial space. |
| Novelty w.r.t. Training Set (%) | 70.5 | 65.8 | 80.2 | Fragment models are more likely to produce novel scaffolds. |
| Internal Diversity (Mean Tanimoto Dist.) | 0.72 | 0.68 | 0.75 | Fragment and RNN models can cover broader chemical space. |
| Avg. Synthetic Accessibility Score (SA Score; 1 = easy, 10 = hard) | 4.8 | 3.5 | 2.9 | Fragment models build from synthetically plausible units. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Resources for AI-Driven Molecular Design

| Item / Resource | Function / Purpose | Example / Provider |
|---|---|---|
| Cheminformatics Toolkit | Core library for molecule parsing, standardization, descriptor calculation, and basic operations. | RDKit (Open-source) |
| Deep Learning Framework | Flexible platform for building, training, and deploying custom molecular AI models. | PyTorch, TensorFlow |
| Geometric Deep Learning Library | Specialized libraries for efficient graph and 3D molecular neural network implementations. | PyTorch Geometric, DGL-LifeSci, e3nn |
| Large-Scale Compound Database | Source of molecules for pre-training generative models or for prospective virtual screening. | ZINC, ChEMBL, PubChem |
| Synthetic Accessibility Predictor | Quantifies the ease of synthesizing a generated molecule, a critical real-world metric. | RAScore, SA Score (RDKit Contrib), AiZynthFinder |
| Molecular Docking Software | For structure-based validation of generated hits, providing an initial binding pose and score. | AutoDock Vina, Glide, FRED |
| High-Performance Computing (HPC) / Cloud | Necessary computational resources for training large 3D models and screening ultra-large libraries. | Local GPU Cluster, Google Cloud Platform, Amazon Web Services |
| Benchmarking Datasets | Standardized datasets for fair comparison of virtual screening and generative models. | MOSES, GuacaMol, PDBbind |

Workflow & Relationship Diagrams

Title: AI-Driven Molecular Discovery Workflow

Title: Molecular Representation Challenges & Solutions

Implementation Guide: Solving Key Challenges in Next-Gen Molecular AI

Balancing Model Complexity with Data Scarcity in Drug Discovery Projects

Technical Support Center

Frequently Asked Questions (FAQs)