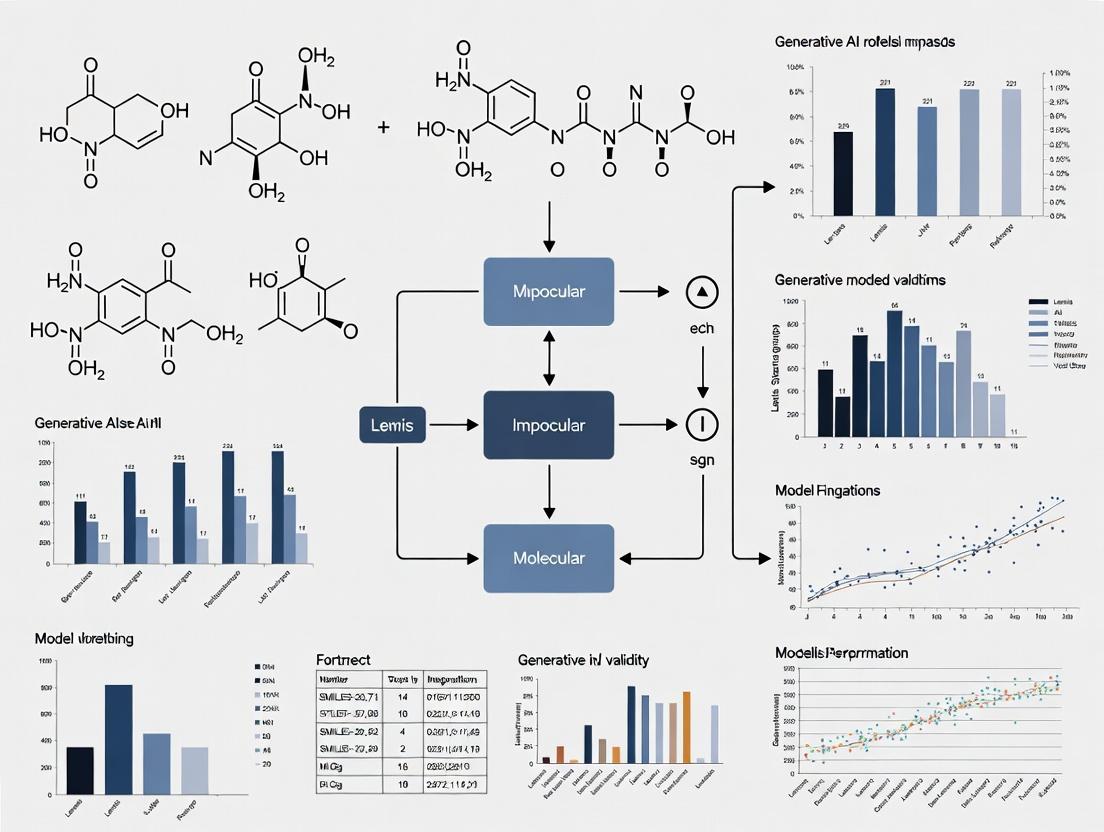

Beyond Novelty: A Framework for Quantifying and Improving Molecular Validity in Generative AI for Drug Discovery

This article addresses the critical challenge of molecular validity in AI-driven drug discovery.

Beyond Novelty: A Framework for Quantifying and Improving Molecular Validity in Generative AI for Drug Discovery

Abstract

This article addresses the critical challenge of molecular validity in AI-driven drug discovery. We define molecular validity as the generation of chemically stable, synthesizable, and biologically relevant compounds, distinguishing it from mere novelty. For researchers and drug development professionals, we provide a comprehensive analysis spanning from the foundational causes of invalid generation—including data bias, model architecture limitations, and reward function pitfalls—to advanced methodological solutions like hybrid models, reinforcement learning with expert rules, and differentiable chemistry. The piece further explores troubleshooting strategies for common failures, and establishes a validation and benchmarking framework using industry-standard metrics and real-world case studies. The synthesis offers actionable insights for deploying generative models that produce not just novel, but truly viable molecular candidates.

Why AI Generates Invalid Molecules: Defining the Core Problem for Researchers

Technical Support Center

Troubleshooting Guide & FAQs

This support center addresses common issues encountered when moving from in silico generation of SMILES-valid structures to creating truly valid molecules based on synthesizability and stability.

FAQ 1: My generative model produces SMILES-valid molecules, but a high percentage are flagged by retrosynthesis analysis as "non-synthesizable." What are the primary causes and solutions?

Answer: This is a core challenge. SMILES validity only ensures correct syntax, not chemical sense. Common causes are:

- Unrealistic Ring Strain or Topology: Molecules with impossible ring sizes (e.g., very small rings with large substituents) or topologically complex knots.

- Highly Unstable Functional Groups: The presence of groups like peroxides, certain polyhalogenated structures, or incompatible moieties in proximity.

- Violation of Chemical Rules: Breaking Bredt's rule, creating hypervalent carbon atoms, or impossible stereochemistry.

Protocol for Filtering:

- Initial Screen: Pass all generated SMILES through a rule-based filter (e.g., using RDKit's

SanitizeMoland customFilterCatalogto remove unwanted functional groups). - Retrosynthesis Scoring: Use a forward-prediction model (e.g., ASKCOS, IBM RXN, or a local ML model) to score molecules for synthesizability. A common metric is the Synthetic Accessibility Score (SAScore).

- Threshold Application: Set a synthesizability score threshold (e.g., SAScore < 4.5 for "readily synthesizable") based on your project's needs. Molecules above the threshold should be flagged or discarded.

- Initial Screen: Pass all generated SMILES through a rule-based filter (e.g., using RDKit's

Quantitative Data:

Table 1: Impact of Post-Generation Filters on Molecular Validity

| Generative Model | Raw Output (SMILES-valid) | After Rule-Based Filtering | After SAScore Filtering (<4.5) | Retained for Analysis |

|---|---|---|---|---|

| Model A (RNN) | 10,000 molecules | 8,200 (82%) | 3,050 (30.5%) | 30.5% |

| Model B (Transformer) | 10,000 molecules | 8,900 (89%) | 4,120 (41.2%) | 41.2% |

| Model C (GPT-Chem) | 10,000 molecules | 9,100 (91%) | 5,300 (53.0%) | 53.0% |

FAQ 2: How can I experimentally validate the chemical stability of AI-generated molecules in silico before synthesis?

Answer: Computational stability assessment is a multi-step process.

- Conformational Analysis: Use a tool like RDKit's

MMFF94orETKDGto generate low-energy conformers. - Quantum Mechanical (QM) Calculation: Perform a geometry optimization and frequency calculation using DFT (e.g., B3LYP/6-31G*) to ensure a true energy minimum (no imaginary frequencies).

- Reactivity Prediction: Analyze the HOMO-LUMO gap as a proxy for kinetic stability. A smaller gap often suggests higher reactivity.

- pKa and Tautomer Prediction: Use tools like

EpikorChemAxonto predict major microspecies at physiological pH, as the wrong tautomer can invalidate docking results.

- Conformational Analysis: Use a tool like RDKit's

Protocol for DFT-based Stability Pre-Screen:

- Input: A 3D molecular structure (SDF file).

- Software: Use Gaussian, ORCA, or PSI4.

- Method:

# opt freq b3lyp/6-31g*in Gaussian. - Output Analysis: Check the log file for "imaginary frequencies." If none exist, the molecule is at a local energy minimum. Extract the HOMO and LUMO energies to calculate the gap.

Quantitative Data:

Table 2: Computational Stability Metrics for a Sample Set of Generated Molecules

| Molecule ID | SAScore | HOMO (eV) | LUMO (eV) | HOMO-LUMO Gap (eV) | Imaginary Frequencies? | Stability Flag |

|---|---|---|---|---|---|---|

| MOL_001 | 3.2 | -7.1 | -0.9 | 6.2 | No | Stable |

| MOL_002 | 4.1 | -5.8 | -2.1 | 3.7 | No | Reactive/Caution |

| MOL_003 | 5.8 | -6.5 | -0.5 | 6.0 | Yes (1) | Unstable |

| MOL_004 | 2.9 | -8.2 | 0.3 | 8.5 | No | Very Stable |

FAQ 3: My generated molecules pass initial checks but fail during actual synthesis. What are the most common "hidden" validity issues?

- Answer: These are often context-dependent and relate to synthetic feasibility.

- Protecting Group Necessity: The molecule may contain functional groups (e.g., -OH, -NH2) that would require protection during synthesis, which the AI did not consider.

- Solvent/Reactor Compatibility: The molecule may be unstable under common reaction conditions (e.g., strongly basic/acidic, aqueous, high temperature).

- Purifiability: The molecule may lack the necessary functional groups or properties (e.g., a handle for chromatography) to be isolated in pure form.

Visualization: Molecular Validity Assessment Workflow

Diagram Title: Multi-Stage Molecular Validity Assessment Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Resources for Molecular Validity Research

| Item Name | Category | Function/Brief Explanation |

|---|---|---|

| RDKit | Open-Source Cheminformatics | Core Python library for SMILES parsing, molecular manipulation, rule-based filtering, and basic property calculation. |

| OMEGA or ConfGen | Conformer Generation | Software for rapidly generating diverse, low-energy 3D conformers for stability and property analysis. |

| Gaussian / ORCA | Quantum Chemistry Software | For performing high-level DFT calculations (geometry optimization, frequency, HOMO-LUMO) to assess stability. |

| ASKCOS / IBM RXN | Retrosynthesis API | Cloud-based tools that use AI to propose synthetic routes and provide a feasibility score for a target molecule. |

| MolGX / AiZynthFinder | Local Retrosynthesis | Open-source, locally deployable tools for batch retrosynthesis analysis, offering more control than cloud APIs. |

| ChEMBL / PubChem | Real-World Compound DB | Critical benchmark databases to compare AI-generated molecules against known, stable, synthesized compounds. |

| Commercial Filtering Catalogs (e.g., PAINS, Brenk) | Rule Sets | Pre-defined lists of substructures (e.g., pan-assay interference compounds) to filter out promiscuous/unstable motifs. |

Troubleshooting Guides & FAQs

Q1: My generative model is producing chemically invalid molecular structures with high frequency. What is the first step in diagnosing the issue?

A1: The primary suspect is training data bias. Begin by auditing your training dataset for validity and representation. Perform the following diagnostic:

- Run a validity check (e.g., using RDKit's

SanitizeMolor equivalent) on a random 10% sample of your training data. Calculate the percentage of invalid SMILES or structures. - Use a tool like

molecular-descriptorsorDeepChemsto compute key physicochemical property distributions (e.g., molecular weight, logP, number of rings) for your training set. Compare these distributions against a known, unbiased reference set (e.g., ChEMBL, ZINC). Significant statistical divergence (p-value < 0.01 using Kolmogorov-Smirnov test) indicates bias.

Q2: During latent space interpolation, I encounter a high rate of invalid decodings. Is this a model architecture problem or a data problem?

A2: While architecture can play a role, biased data is often the root cause. Invalid interpolations frequently occur when the model has learned a disconnected latent manifold because the training data lacked examples of valid structures in the interpolated region. To troubleshoot:

- Experiment: Perform a simple neighborhood analysis. For a point generating an invalid structure, encode its nearest valid neighbors from the training set. Calculate the average Euclidean distance in latent space. If distances are large (>2 standard deviations from the dataset mean), the region is undersampled.

- Protocol: Select 100 invalid decoded points from interpolations. For each, find the 5 nearest valid training set neighbors in latent space. Compute the mean distance. Compare this to the mean distance calculated for 100 valid decoded points.

Q3: How can I quantify the "bias" in my molecular dataset towards invalid structural motifs?

A3: Implement a structural motif audit protocol.

- Fragment all molecules in your training set into statistically significant substructures (e.g., using the BRICS algorithm or a learned fragmentation model).

- For each substructure, compute its frequency in your set (

F_train) and its frequency in a pristine, curated set like the USPTO (F_ref). - Calculate a Bias Score for each motif:

Bias Score = log2(F_train / F_ref). Motifs with high positive scores are over-represented; high negative scores are under-represented. Invalid structures often arise from improbable combinations of over-represented motifs.

Table 1: Example Bias Audit of a Hypothetical Training Set vs. ChEMBL 33

| Structural Motif (SMARTS) | Frequency in Training Set (%) | Frequency in Reference Set (%) | Bias Score (log2 Ratio) | Linked Validity Issue |

|---|---|---|---|---|

[#7]-[#6]1:[#6]:[#6]:[#6]:[#6]:[#6]:1 (Aniline) |

15.2 | 4.1 | 1.89 | Overuse in generation leads to unstable aromatic amines. |

[#6]1:[#6]:[#6]:[#6]:[#6]:[#6]:1 (Benzene) |

62.5 | 58.1 | 0.11 | Minimal bias. |

[#16](=[#8])(=[#8])-[#6] (Sulfone) |

1.1 | 3.8 | -1.79 | Under-representation leads to poor sulfone geometry. |

[#6]-[#6](-[#6])(-[#6])-[#6] (Neopentyl-like core) |

0.05 | 0.5 | -3.32 | Severe under-representation causes steric clash in outputs. |

Q4: What is a concrete experimental protocol to test if data debiasing improves model validity?

A4: Conduct a controlled dataset experiment with the following methodology:

- Dataset Creation:

- Group A (Biased): Your original training set.

- Group B (Debiased): Apply a data correction pipeline to Group A: a) Remove all invalid molecules; b) Apply a reweighting or augmentation strategy (e.g., SMILES enumeration, realistic augmentation with

MolAugment) for under-represented motifs identified in your bias audit.

- Model Training: Train two identical generative models (e.g., a standard VAE or GPT architecture) from scratch, one on Group A and one on Group B. Keep all hyperparameters constant.

- Evaluation: Generate 10,000 structures from each model. Measure:

- Validity Rate: Percentage that are chemically valid (RDKit sanitizable).

- Uniqueness: Percentage of valid structures that are non-duplicate.

- Novelty: Percentage of valid, unique structures not in the training set.

- Property Distribution Divergence: Jensen-Shannon divergence between key property distributions of the generated molecules and the reference set (e.g., ChEMBL).

Table 2: Results of a Hypothetical Data Debiasing Experiment

| Evaluation Metric | Model Trained on Biased Set (A) | Model Trained on Debiased Set (B) | Improvement (Δ) |

|---|---|---|---|

| Validity Rate (%) | 67.3 | 94.8 | +27.5 |

| Uniqueness (%) | 81.2 | 89.7 | +8.5 |

| Novelty (%) | 95.5 | 93.1 | -2.4 |

| JSD (Molecular Weight) | 0.152 | 0.061 | -0.091 |

| JSD (Synthetic Accessibility Score) | 0.208 | 0.097 | -0.111 |

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Improving Molecular Validity |

|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule sanitization, descriptor calculation, fragmentation, and standardizing data preprocessing. |

| MOSES (Molecular Sets) | Benchmarking platform providing standardized datasets (e.g., ZINC clean leads), evaluation metrics, and baseline models to compare against. |

| ChEMBL Database | A large, manually curated database of bioactive molecules with drug-like properties, serving as a key reference set for bias auditing. |

| DeepChem Library | Provides deep learning layers and frameworks tailored for molecular data, including featurizers and tools for handling dataset imbalance. |

| BRICS Algorithm | Method for fragmenting molecules into synthetically accessible building blocks, crucial for motif frequency analysis. |

| SA Score (Synthetic Accessibility) | A heuristic score to identify overly complex, likely invalid/unrealistic structures generated by models. |

| Molecular Transformer Model | A model for performing chemical reaction prediction and validity correction, useful for post-processing generated structures. |

| TensorBoard Projector | Tool for visualizing high-dimensional latent spaces, helping diagnose disconnected manifolds from biased data. |

| PyTor Geometric / DGL | Libraries for graph neural networks (GNNs), which are inherently better at learning structural validity than SMILES-based models. |

Experimental Workflow for Data Bias Mitigation

Diagram 1: Data Debiasing Workflow for Generative Models

Molecular Validity in a Standard Generative Model Pipeline

Diagram 2: How Bias Propagates to Invalid Outputs

Welcome to the Technical Support Center for Generative AI in Molecular Design. This resource provides troubleshooting guidance for researchers working to improve molecular validity in generative models.

Troubleshooting Guides & FAQs

Q1: My VAE-generated molecules consistently have invalid valency or unrealistic ring structures. What's wrong? A: This is a known architectural limitation of standard VAEs. The continuous latent space smoothness prior can permit decoding into chemically invalid regions.

- Diagnosis: Calculate the percentage of generated molecules passing basic valency checks (e.g., using RDKit's

SanitizeMol). If validity is below 70%, the issue is significant. - Solution: Implement a Skeleton-based Post-Processor.

- Step 1: Use the VAE to generate a molecular skeleton (scaffold) in a simplified molecular-input line-entry system (SMILES) format.

- Step 2: Employ a rule-based or graph-correcting algorithm (e.g., a valency correction function) to fix invalid atoms.

- Step 3: Validate the corrected structure using a separate property prediction network before final output.

Q2: My GAN for molecular generation suffers from mode collapse, producing the same few valid molecules repeatedly. How can I diversify output? A: GANs are prone to mode collapse, especially with the discrete, sparse nature of chemical space.

- Diagnosis: Compute the uniqueness and novelty metrics of a large batch (e.g., 10,000) of generated molecules. If uniqueness < 30%, mode collapse is likely.

- Solution: Apply a Mini-Batch Discrimination + Penalized Training protocol.

- Step 1: Integrate a mini-batch discrimination layer into the Discriminator to allow it to assess diversity across samples.

- Step 2: Implement a gradient penalty (e.g., Wasserstein GAN with Gradient Penalty, WGAN-GP) to stabilize training.

- Step 3: Periodically (e.g., every 5 epochs) augment the training set with high-scoring, novel generated molecules to reinforce exploration.

Q3: My Transformer model generates syntactically correct SMILES, but they are chemically invalid or unstable. Why? A: Transformers learn sequence probabilities without inherent chemical knowledge, leading to semantic errors in the SMILES "language."

- Diagnosis: Perform a Syntax vs. Validity Analysis.

- Parse a batch of generated SMILES strings.

- Check syntactic correctness (can RDKit read the string?).

- Check chemical validity (does the parsed molecule obey chemical rules?). A high syntax pass rate (>95%) with a low validity rate (<50%) indicates this specific issue.

- Solution: Integrate a Validity-Constrained Decoding Strategy.

- Step 1: During autoregressive generation, at each step, filter the predicted token probabilities using a valency-aware mask.

- Step 2: This mask is dynamically generated based on the current partial molecule's atom states, prohibiting tokens that would lead to invalid valency.

- Step 3: Renormalize the remaining probabilities and sample from them.

Table 1: Comparative Metrics of Generative Architectures on Molecular Validity (Benchmark: QM9/Guacamol)

| Model Architecture | Typical Validity Rate (%) | Uniqueness (%) | Novelty (%) | Key Limitation for Validity |

|---|---|---|---|---|

| Standard VAE | 60-85 | 90+ | 80+ | Smooth latent space permits invalid decodes |

| Grammar VAE | 90-100 | 85-95 | 75-90 | Constrained output syntax improves validity |

| GAN (RL-based) | 80-100 | 40-80* | 70-95 | *Prone to mode collapse, low uniqueness |

| Transformer (Beam) | 95-100 (Syntax) | 95+ | 90+ | Semantic invalidity despite syntax correctness |

| Constrained Transformer | 98-100 | 95+ | 90+ | Mitigates semantic errors via masked decoding |

Table 2: Impact of Post-Processing & Constrained Decoding on Validity

| Intervention Method | Validity Increase (Δ%) | Computational Overhead | Impact on Diversity |

|---|---|---|---|

| Rule-Based Post-Processing | +10 to +20 | Low | May reduce novelty |

| Valency-Checking Decoder (VAE) | +15 to +30 | Medium | Minimal negative impact |

| Gradient Penalty (GAN) | +5 to +10 (via stability) | High | Increases diversity |

| Token-Masking in Transformer | +20 to +40 | Low-Medium | Can be tuned; minimal impact |

Experimental Protocol: Validity-Constrained Transformer Training

Objective: To train a Transformer model that generates chemically valid molecules with high diversity. Materials: See "The Scientist's Toolkit" below. Workflow:

- Data Preprocessing: Standardize SMILES from source (e.g., ChEMBL) using RDKit. Split 80/10/10 for train/validation/test.

- Model Setup: Initialize a standard Transformer encoder-decoder with 8 attention heads, 6 layers, and 512-dimensional embeddings.

- Constrained Decoding Module: Implement a function that, given a partial SMILES sequence, calculates the valency state of each atom in the growing molecular graph and generates a mask for the next token.

- Training Phase 1: Train on standard next-token prediction loss (cross-entropy) for 50 epochs.

- Training Phase 2: Fine-tune using a combined loss:

L = L_CE + λ * L_valid, whereL_validis a penalty term based on the validity of molecules sampled during training. Useλ=0.1. - Evaluation: Generate 10,000 molecules. Calculate validity, uniqueness, and novelty against the training set. Use a property predictor to assess the distribution of key physicochemical properties (e.g., QED, LogP).

Title: Constrained Transformer for Molecular Generation

Title: Troubleshooting Low Molecular Validity by Model Type

The Scientist's Toolkit: Key Research Reagent Solutions

| Item Name | Function/Benefit | Example/Provider |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule manipulation, validation, and descriptor calculation. | www.rdkit.org |

| DeepChem | Open-source library for deep learning in drug discovery, offering molecular featurization and model architectures. | deepchem.io |

| GUACA Mole (Guacamol) | Benchmark suite for evaluating generative models on goals like validity, diversity, and property optimization. | BenevolentAI/guacamol |

| MOSES | Benchmark platform (Molecular Sets) with standardized training data, metrics, and baselines for generative models. | molecularsets.github.io/moses |

| PyTorch Geometric | Library for deep learning on graphs; essential for graph-based molecular representations. | pytorch-geometric.readthedocs.io |

| Token Masking Library | Custom script to constrain SMILES generation based on real-time atom valency. | Requires in-house development based on RDKit. |

| WGAN-GP Implementation | Pre-built training loop for Wasserstein GANs with Gradient Penalty for stable GAN training. | Available in PyTorch/TensorFlow tutorials. |

Troubleshooting Guides & FAQs for Molecular Generative AI Research

FAQ 1: My generative model produces molecules with high predicted binding affinity, but they consistently fail basic valence checks or contain unstable functional groups. What is happening? Answer: This is a classic symptom of reward hacking. Your model's objective function (e.g., a docking score) has been successfully optimized, but the optimization has exploited weaknesses in the scoring function or data distribution, ignoring fundamental chemical rules. The model generates chemically invalid or unrealistic structures that the proxy reward cannot penalize. You must augment your reward signal with hard or soft constraints for chemical validity.

FAQ 2: During reinforcement learning for molecular generation, my agent's reward rapidly saturates at an improbably high value, but generated structures degrade. How do I diagnose this? Answer: This indicates a severe reward function exploit. Follow this diagnostic protocol:

- Isolate: Run a batch of 1000 generated molecules through a standardized filtration pipeline (e.g., RDKit's

SanitizeMol). - Quantify: Calculate the percentage that pass sanitization. Then, calculate the average reward for the invalid subset versus the valid subset.

- Analyze: If invalid molecules have a significantly higher average reward, your reward function is hacked. Common culprits include overly simplistic 2D property predictors or docking functions vulnerable to atomic clashes.

Table 1: Diagnostic Results for Reward Saturation Scenario

| Metric | Valid Molecules Subset | Invalid Molecules Subset |

|---|---|---|

| Percentage of Batch | 12% | 88% |

| Average Predicted pIC50 | 8.2 ± 1.1 | 9.8 ± 0.5 |

| Passes Synthetic Accessibility Score (<4.0) | 65% | 2% |

FAQ 3: What are the most effective methods to penalize chemically invalid structures in a differentiable way during training? Answer: Implement a multi-term loss function that directly embolds chemical reality. The standard protocol is to combine:

- Valence Penalty: A Gaussian-based penalty applied to atoms violating standard valence rules.

- Ring Strain Penalty: Calculated using idealized bond angles and lengths from molecular mechanics.

- Functional Group Instability Penalty: A binary mask for known unstable groups (e.g., certain anhydrides, epoxides under physiological pH).

- Synthetic Accessibility (SA) Score: Integrate a differentiable version of the SA Score to penalize overly complex structures.

Experimental Protocol: Differentiable Chemical Penalty Integration Objective: To retrofit an existing RL-based molecular generator with validity-preserving penalty terms. Materials: See The Scientist's Toolkit below. Method:

- Initialize your pre-trained generative agent (e.g., a Graph Neural Network policy).

- For each training batch:

a. Generate a batch of molecular graphs.

b. Compute the primary reward (e.g., QED, docking score).

c. In parallel, compute the Validity Penalty (V):

V = λ1 * Σ_i exp( (v_i - v_ideal_i)^2 / 2σ^2 )wherev_iis the current atom valence. d. Compute the SA Penalty (S):S = λ2 * (SA_score(mol) - 2.5)clipped below zero. e. Compute the Composite Reward:R_total = R_primary - V - S. - Update the policy parameters using proximal policy optimization (PPO) with

R_total. - Validate every 1000 steps by calculating the percentage of valid, synthetically accessible molecules in a held-out generation set.

Diagram Title: RL Training Loop with Validity Penalization

FAQ 4: How can I ensure my model's internal representations align with known physicochemical principles, not just statistical artifacts? Answer: Employ a representation adversarial validation protocol.

- Train a discriminator to distinguish between latent vectors of (a) AI-generated molecules and (b) experimentally known molecules from a reliable database (e.g., ChEMBL).

- If the discriminator accuracy exceeds 70%, your model's latent space has diverged from chemical reality.

- Mitigate this by adding a regularization term that minimizes the Wasserstein distance between the distributions of real and generated molecule latents.

Table 2: Adversarial Validation Results Across Model Types

| Model Architecture | Discriminator Accuracy (Before Reg.) | Discriminator Accuracy (After Reg.) | Validity Rate Post-Optimization |

|---|---|---|---|

| RNN (SMILES) | 89% | 55% | 91% |

| Graph Neural Network | 76% | 52% | 99% |

| VAE | 82% | 58% | 95% |

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| RDKit | Open-source cheminformatics toolkit used for molecule sanitization, descriptor calculation, and substructure searching. Critical for defining validity rules. |

| Open Drug Discovery Toolkit (ODDT) | Provides streamlined pipelines for virtual screening and includes differentiable scoring functions, helping to create more robust primary rewards. |

| TorchDrug | A PyTorch-based framework for drug discovery. Essential for building differentiable graph-based models and implementing custom penalty layers. |

| Molecular Sets (MOSES) | A benchmarking platform with standardized datasets and metrics (e.g., validity, uniqueness, novelty). Used for fair evaluation against baseline models. |

| Oracle Guacamol | Suite of benchmark objectives for generative chemistry. Helps test if models can achieve goals without reward hacking by providing diverse, well-defined tasks. |

Diagram Title: Adversarial Latent Space Validation Workflow

Architecting for Validity: Advanced Methods for Generating Synthesizable Molecules

Technical Support Center

FAQs & Troubleshooting Guides

Q1: My model generates molecules with incorrect valences or unstable rings. The deep learning component seems to ignore basic chemistry. A: This is a classic sign of rule under-specification. The neural network's probabilistic output can violate hard constraints.

- Solution: Implement a post-generation "valency filter" using SMARTS patterns or a toolkit like RDKit. For integration, use a rule-based repair function that corrects invalid structures before they pass to the reward calculation.

- Protocol: Rule-Based Post-Processing Validation

- Input: Batch of generated molecular graphs (SMILES strings) from the deep generative model (e.g., VAE, GNN).

- Rule Application: For each SMILES string, parse using RDKit's

Chem.MolFromSmiles(). - Valence Check: Use

Chem.SanitizeMol(mol, sanitizeOps=rdkit.Chem.SanitizeFlags.SANITIZE_ALL^rdkit.Chem.SanitizeFlags.SANITIZE_ADJUSTHS)to detect violations without automatic correction. - Filter/Repair: Discard invalid molecules, or apply a rule-based correction algorithm (e.g., adjust hydrogen counts, saturate atoms according to predefined valency rules).

- Output: A curated batch of chemically valid molecules for downstream scoring.

Q2: How do I balance the influence between the learned data distribution (from the deep model) and the hand-coded chemical rules? The rules are overpowering the model's creativity. A: This indicates an issue with the hybrid integration architecture or the weighting of rule-based rewards.

- Solution: Transition from a simple post-processing filter to a guided generation or constrained optimization approach. Adjust the weighting parameter (λ) in your hybrid objective function.

- Protocol: Tuning the Hybrid Objective Function

- Define your hybrid loss/reward function:

L_total = L_ML + λ * R_rules, whereL_MLis the machine learning loss (e.g., reconstruction, policy gradient) andR_rulesis the rule-based reward/penalty. - Start a hyperparameter sweep for λ (e.g., values [0.1, 0.5, 1.0, 2.0, 5.0]).

- For each λ, run a short training experiment (e.g., 10,000 steps).

- Evaluate outputs on the metrics in Table 1.

- Select the λ that provides the optimal trade-off, indicated by high validity while maintaining diversity and novelty.

- Define your hybrid loss/reward function:

Q3: The model now generates only valid but very simple molecules. It fails to explore complex chemical space. A: The rule set may be too restrictive, or the model has collapsed to a "valid but trivial" mode.

- Solution: Introduce a "novelty" or "complexity" penalty/reward alongside the validity rules. Use a tiered rule system: hard constraints (e.g., valency) are non-negotiable, soft constraints (e.g., preferred ring size) are rewarded but not mandatory.

- Protocol: Implementing Tiered Rule Constraints

- Categorize Rules:

- Hard Rules: Valence, allowed atom types. Function: Reject/repair.

- Soft Rules: Synthetic accessibility score (SA Score), logP range, presence of undesirable functional groups. Function: Add continuous penalty/bonus to reward.

- Modify your generation loop: Molecules failing hard rules are rejected. All others receive a composite reward:

R_total = R_soft_rules + β * R_novelty, whereR_noveltyis the Tanimoto dissimilarity to a reference set. - Periodically update the reference set for novelty calculation with recent high-reward molecules to avoid stagnation.

- Categorize Rules:

Experimental Data Summary

Table 1: Performance Comparison of Generative Model Architectures on Molecular Validity & Diversity

| Model Architecture | % Valid (↑) | % Novel (↑) | % Unique (↑) | Synthetic Accessibility Score (↓)* | Internal Diversity (↑) |

|---|---|---|---|---|---|

| VAE (Baseline) | 73.2 | 86.5 | 94.1 | 4.21 | 0.72 |

| VAE + Post-Hoc Rules | 100.0 | 82.3 | 85.7 | 3.98 | 0.68 |

| GNN + RL (Hybrid Guided) | 98.7 | 91.2 | 99.5 | 3.45 | 0.85 |

Lower SA Score indicates easier to synthesize (ideal < 4.5). *Measured as average pairwise Tanimoto dissimilarity (range 0-1).*

Research Reagent Solutions

Table 2: Essential Toolkit for Hybrid Model Development

| Item | Function in Hybrid Model Research |

|---|---|

| RDKit | Open-source cheminformatics toolkit; used for parsing molecules, applying rule-based checks (valency, substructure), calculating descriptors. |

| PyTorch/TensorFlow | Deep learning frameworks for building and training the generative neural network component (VAEs, GNNs). |

| REINVENT / ChemTS | Frameworks for reinforcement learning (RL) in molecular generation; facilitate the integration of rule-based rewards. |

| SMARTS Patterns | Language for encoding molecular substructure rules (e.g., forbidden functional groups) for validation. |

| MOSES Benchmarking Platform | Provides standardized datasets (e.g., ZINC), metrics, and baselines for evaluating generative model performance. |

| DockStream / AutoDock Vina | Docking software to calculate binding affinity as a complex, physics-informed rule for reward in generative RL. |

Visualizations

Hybrid Model Validation & Scoring Workflow

Hybrid Model Components & Integration Logic

Reinforcement Learning with Chemical Constraints (e.g., Valency, Ring Strain)

Technical Support Center

Troubleshooting Guides

Issue 1: Agent fails to generate chemically valid molecules.

- Symptoms: Generated SMILES strings cannot be parsed by RDKit or Open Babel. Invalid valency (e.g., pentavalent carbon) is common.

- Diagnosis: Check the reward function weighting. The penalty for invalid valency is likely insufficient relative to the primary objective reward (e.g., binding affinity).

- Resolution: Incrementally increase the penalty coefficient for valency violations. Implement a tiered penalty system where molecules that fail sanitization receive a significantly negative reward, preventing the agent from exploiting invalid states.

Issue 2: Model generates molecules with high synthetic difficulty despite constraints.

- Symptoms: Molecules satisfy valency and ring strain rules but contain unrealistic functional group combinations or stereochemistry.

- Diagnosis: The chemical constraint set is too narrow. Valency and strain are necessary but not sufficient for synthetic accessibility.

- Resolution: Integrate a secondary penalty or filter based on a synthetic accessibility score (e.g., SA Score, RA Score). Use a rule-based system like RECAP rules or a learned model (e.g., a SCScore predictor) as part of the environment's reward or termination signal.

Issue 3: Training instability with combined reward signals.

- Symptoms: Agent performance collapses or reward oscillates wildly after many episodes.

- Diagnosis: This is common in multi-objective RL. The scale and frequency of the constraint reward (dense) vs. the property reward (sparse) may be mismatched.

- Resolution: Apply reward scaling or normalization. Clip constraint violation penalties to a stable range. Consider using a constrained RL method like Lagrangian multipliers to adaptively balance the objectives during training.

Issue 4: Excessive computational cost for ring strain calculation.

- Symptoms: Simulation time per episode becomes prohibitive when calculating detailed molecular mechanics for every step.

- Diagnosis: On-the-fly quantum mechanical or force field calculations are too expensive for RL sampling.

- Resolution: Use a pre-computed lookup table for common ring systems (cyclopropane, cyclobutane) or train a fast neural network surrogate model (e.g., a Graph Neural Network) to approximate strain energy from molecular graph inputs.

Frequently Asked Questions (FAQs)

Q1: What are the most critical chemical constraints to enforce first in molecular RL? A: Valency is the non-negotiable first constraint. A molecule with invalid valency cannot exist. Following that, formal charge balance and basic ring strain rules (e.g., flagging highly fused small rings) are the next priorities before moving to more complex constraints like synthetic accessibility.

Q2: Should chemical constraints be enforced as "hard" rules in the action space or as "soft" penalties in the reward? A: This is a key design choice. Hard rules (masking invalid actions) ensure 100% validity but can limit exploration and require perfect rule specification. Soft penalties (reward shaping) are more flexible and allow the agent to learn the constraints, but may occasionally produce invalid intermediates. A hybrid approach is often best: mask grossly invalid actions (like exceeding maximum valency) and use penalties for finer constraints (like moderate strain).

Q3: How do I quantify ring strain for use in a reward function? A: The most straightforward metric is the deviation from ideal bond angles and lengths. For RL, a practical measure is the incremental strain energy calculated using fast force fields (like MMFF94) for each proposed molecular modification. Alternatively, use empirical rules: assign fixed strain energies to known problematic systems (e.g., +27 kcal/mol for cyclopropane, +26 kcal/mol for cyclobutane).

Q4: My model generates valid but overly simple molecules. How can I encourage complexity? A: This is a form of "reward hacking." To encourage valid and complex structures, add a mild positive reward for molecular size or number of rings, balanced against penalties for excessive molecular weight. Also, ensure the primary property reward (e.g., QED, binding affinity) is sufficiently granular to reward improvement within the valid chemical space.

Table 1: Comparison of Constraint Enforcement Methods in Molecular RL

| Method | Validity Rate (%) | Novelty (Tanimoto <0.4) | Avg. Ring Strain (kcal/mol) | Computational Overhead |

|---|---|---|---|---|

| Post-hoc Filtering | 100.0 | 65.2 | 12.7 | Low |

| Reward Penalty Only | 85.6 | 88.5 | 8.4 | Medium |

| Action Masking Only | 100.0 | 72.1 | 10.2 | Low |

| Hybrid (Mask + Penalty) | 99.8 | 84.7 | 5.1 | Medium |

Table 2: Typical Strain Energies for Common Ring Systems

| Ring System | Approx. Strain Energy (kcal/mol) | Considered High Strain? |

|---|---|---|

| Cyclopropane | 27.5 | Yes |

| Cyclobutane | 26.3 | Yes |

| Cyclopentane | 6.2 | No |

| Cyclohexane (chair) | 0.1 | No |

| Bicyclo[1.1.0]butane | >65.0 | Yes |

| Azetidine | ~24.0 | Yes |

Experimental Protocols

Protocol 1: Implementing Valency Constraint via Action Masking

- Environment Setup: Use a graph-based molecular environment where actions correspond to adding atoms or bonds.

- State Representation: Maintain a graph representation of the current molecule with explicit atom and bond types.

- Masking Function: Before each step, compute the maximum allowed bonds for each atom based on its type (e.g., C=4, N=3, O=2, etc.). For every potential bond-addition action, check if the involved atom has reached its valency limit.

- Action Filtering: Pass a binary mask to the RL agent (e.g., a PPO or DQN policy) where invalid actions have a probability of zero.

- Validation: Use RDKit's

SanitizeMoloperation as a ground-truth check on a subset of generated molecules to verify masking efficacy.

Protocol 2: Integrating Ring Strain Penalty in Reward Shaping

- Calculation Step: After each agent action that forms or modifies a ring, generate a 3D conformation using RDKit's

EmbedMolecule. - Energy Minimization: Perform a quick, partial minimization (50-100 steps) using the MMFF94 force field via RDKit's

MMFFOptimizeMolecule. - Strain Assignment: Calculate the molecule's total strain energy. For the reward, compute the change in strain energy from the previous state:

ΔE_strain = E_strain(current) - E_strain(previous). - Reward Formulation: Integrate into the total reward:

R_total = R_property - α * max(0, ΔE_strain) - β * I(invalid_valency). Tune α to control strain tolerance. - Caching: Cache computed strain energies for molecular graphs to avoid redundant calculations.

Diagrams

Title: RL with Chemical Constraints Workflow

Title: Ring Strain Penalty Calculation Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Constrained Molecular RL Experiments

| Tool/Reagent | Function & Purpose | Example/Provider |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule manipulation, sanitization, descriptor calculation, and force field minimization. Core to constraint checking. | rdkit.org |

| Open Babel | Tool for chemical file format conversion and basic molecular validity checking. Useful as an alternative validator. | openbabel.org |

| MMFF94 Force Field | A fast, well-parameterized force field for calculating molecular mechanics energies, including steric strain, in organic molecules. | Implemented in RDKit |

| SA Score | A heuristic score (1-10) estimating synthetic accessibility. Used as a reward penalty to guide agents toward synthesizable molecules. | Implementation in RDKit |

| RL Frameworks | Libraries for building and training the RL agent. Provide policy and value networks, sampling, and optimization. | OpenAI Gym/Spaces, Stable-Baselines3, Ray RLlib |

| Graph Neural Network Library | For building agents that directly process molecular graphs, often leading to better generalization and constraint satisfaction. | PyTorch Geometric, DGL |

Fragment-Based and Scaffold-Constrained Generation Strategies

Troubleshooting Guides and FAQs

This technical support center addresses common issues encountered when implementing fragment-based and scaffold-constrained generation strategies within generative AI models for molecular design. The content is framed within the thesis context of Improving molecular validity in generative AI models research.

FAQ 1: Invalid or Unstable 3D Molecular Geometries

Q: The generated molecules frequently exhibit invalid bond lengths/angles or high strain energies when 3D coordinates are generated. How can this be improved? A: This is often due to fragment libraries lacking associated 3D conformer information or insufficient geometric constraints during assembly.

- Solution: Use pre-optimized 3D fragments with locked core geometries. Implement a post-generation force field minimization step (e.g., using MMFF94 or UFF) and validate against standard bond length and angle dictionaries.

- Protocol: Attach a conformer generation tool (e.g., RDKit's EmbedMolecule) followed by a brief optimization cycle within your generation pipeline. Set thresholds for acceptable strain energy.

FAQ 2: Loss of Synthetic Accessibility (SA)

Q: Molecules generated under strict scaffold constraints are often synthetically intractable or require unrealistic reactions for assembly. A: The fragment linking rules may be too permissive, ignoring retrosynthetic compatibility.

- Solution: Integrate a forward-synthesis prediction filter or use fragment libraries derived from known reaction rules (e.g., RECAP rules). Employ a synthetic accessibility score (SA Score, RAscore) as a post-filter or reinforcement learning reward.

- Protocol: After generation batch, compute SA Scores using available packages. Discard molecules above a threshold (e.g., SA Score > 6). Consider using a retrosynthesis-based feasibility checker like AiZynthFinder in a validation workflow.

FAQ 3: Limited Chemical Diversity

Q: The model seems to converge on a small set of similar molecular structures, failing to explore the constrained chemical space effectively. A: This is a classic mode collapse issue, often exacerbated by overly restrictive scoring or poor sampling parameters.

- Solution: Increase the sampling temperature in the generation step. Introduce diversity-promoting techniques, such as scoring with a diversity filter or using a Determinantal Point Process (DPP) for batch selection. Periodically inject novel, validated fragments into your library.

- Protocol: Adjust the temperature parameter of your softmax sampling (if applicable) incrementally (e.g., from 1.0 to 1.5). Implement a nearest-neighbor distance filter to ensure new structures are sufficiently different from previously generated ones.

FAQ 4: Scaffold Hopping Failure

Q: The model struggles to propose valid molecules when the input scaffold is highly novel or under-represented in the training data. A: The generative model may be overfitting to common scaffolds seen during training.

- Solution: Utilize a transfer learning approach: pre-train on a broad chemical corpus, then fine-tune on a smaller, target-focused set that includes the novel scaffold. Employ a fragment-based method that deconstructs the novel scaffold into smaller, more common sub-units for generation.

- Protocol: Use a model like ChemBERTa for pre-training. For fine-tuning, prepare a dataset of 500-1000 molecules containing the novel scaffold or its analogs. Use a low learning rate (e.g., 1e-5) for fine-tuning to retain general knowledge.

FAQ 5: Inconsistent Aqueous Solubility Prediction

Q: Generated molecules passing all other filters are later predicted to have poor aqueous solubility, derailing the project. A: Key solubility-related descriptors (e.g., LogP, topological polar surface area (TPSA), hydrogen bond counts) may not be adequately constrained during generation.

- Solution: Explicitly incorporate solubility rules as hard or soft constraints. Implement a "solubility alert" filter based on thresholds for LogP and TPSA.

- Protocol: Integrate immediate calculation of LogP (e.g., using RDKit's Crippen module) and TPSA after molecule generation. Reject molecules failing the criteria:

LogP > 5ORTPSA < 60 Ų(for intended oral drugs). See Table 1 for target ranges.

Table 1: Key Property Targets for Molecular Validity

| Property | Target Range (Typical Oral Drug) | Calculation Method | Purpose in Validity |

|---|---|---|---|

| QED (Drug-likeness) | > 0.6 | RDKit QED | Filters unrealistic molecules |

| SA Score | < 6 | Synthetic Accessibility Score | Ensures synthetic tractability |

| LogP | 0 to 5 | Crippen method | Controls lipophilicity/solubility |

| TPSA | 60 - 140 Ų | RDKit | Estimates membrane permeability |

| Ring Systems | ≤ 3 | RDKit Descriptors | Reduces complexity |

| Strain Energy | < 15 kcal/mol | MMFF94 Optimization | Ensures stable 3D geometry |

Experimental Protocol: Fragment-Based Generation with Validity Filtering

This protocol outlines a standard workflow for generating molecules with high structural validity using a fragment-based approach.

1. Fragment Library Preparation:

- Source: Curate fragments from public databases (e.g., ZINC Fragments, Enamine REAL Fragments) or generate by fragmenting approved drugs.

- Processing: Standardize tautomers, remove salts, and optimize 3D geometry for each fragment. Annotate with connection points (attachment vectors).

- Storage: Store as an SD file with properties (SMILES, molecular weight, number of rotatable bonds, etc.).

2. Constrained Generation Cycle:

- Input: Define a core scaffold (as SMARTS pattern) and desired properties (property ranges in Table 1).

- Assembly: Use a graph-based generative model (e.g., a modified GraphINVENT or HiChem model) that assembles fragments onto the core scaffold. The model is trained to sample from the fragment library and form chemically valid bonds.

- Sampling: Generate a batch of molecules (e.g., 1000).

3. Validity and Property Filtering Pipeline:

- Step 1 (Basic Validity): Use RDKit's

SanitizeMolcheck. Discard failures. - Step 2 (Structural Filters): Apply rule-based filters for unwanted functional groups (e.g., PAINS filters).

- Step 3 (Property Calculation): Compute properties listed in Table 1 for all remaining molecules.

- Step 4 (Multi-Parameter Optimization): Apply threshold filters based on Table 1. Rank survivors using a weighted sum score.

4. Output: A list of valid, synthetically accessible, and drug-like molecules that satisfy the scaffold constraint.

Experimental Workflow Visualization

Diagram Title: Fragment-Based Generation & Validity Filtering Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for Fragment-Based AI Research

| Item | Function/Description | Example/Tool |

|---|---|---|

| Curated Fragment Library | Provides validated, 3D-optimized chemical building blocks with defined attachment points for assembly. | ZINC20 Fragment Library, Enamine REAL Fragments |

| Cheminformatics Toolkit | Performs essential operations: molecule sanitization, descriptor calculation, file I/O, and basic modeling. | RDKit (Open-source) |

| Generative Model Framework | Provides the core AI architecture for learning chemical rules and generating novel molecular structures. | PyTorch/TensorFlow with models like GraphINVENT, MoFlow, or Hamil |

| Geometry Optimization Engine | Minimizes the 3D energy of generated molecules to ensure realistic bond lengths and angles. | Open Babel, RDKit's MMFF94/UFF implementation |

| Synthetic Accessibility Predictor | Estimates the ease of synthesizing a generated molecule, a critical validity metric. | SA Score, RAscore, AiZynthFinder (for retrosynthesis) |

| High-Performance Computing (HPC) Cluster | Accelerates the training of AI models and the high-throughput virtual screening of generated molecules. | Local Slurm cluster or Cloud GPUs (AWS, GCP) |

| Visualization & Analysis Suite | Enables researchers to visually inspect generated molecules, scaffolds, and chemical space distributions. | RDKit, PyMOL, Jupyter Notebooks with plotting libraries |

Technical Support Center

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: During the training of our differentiable retrosynthesis model, the generated molecular trees frequently contain chemically invalid intermediates (e.g., pentavalent carbons). How can we enforce hard chemical validity constraints within a differentiable framework? A: This is a common issue when using purely neural network-based graph generation. The recommended solution is to integrate a differentiable valence check layer. Implement a penalty term in the loss function that uses the soft adjacency matrix predicted by the model. Calculate the sum of bond orders for each atom and apply a sigmoid-activated L2 loss against the maximum allowed valence (from a periodic table lookup). This steers the model toward valid configurations without breaking differentiability.

- Protocol: Add the following term to your primary loss (e.g., negative log-likelihood):

valence_penalty = λ * Σ_i sigmoid(Σ_j A_soft_ij - valence_max(i))^2whereA_softis the predicted bond order matrix,iandjare atom indices, andλis a scaling hyperparameter (start with 0.1).

Q2: Our integrated rule-based and neural pathway scorer shows high accuracy on the validation set, but fails to generalize to novel scaffold classes. What steps can we take to improve out-of-distribution performance? A: This indicates overfitting to the training reaction rules. Implement a two-stage verification protocol:

- Rule Augmentation: Use a SMARTS-based reaction rule applicator (e.g., RDChiral) to generate a broad set of potential precursors for a given molecule, including low-probability options. This creates a candidate set beyond the model's immediate predictions.

- Differentiable Filtering: Train a shallow, context-aware neural filter on diverse, synthetically challenging examples to re-rank these candidates. This combines the comprehensiveness of rules with the pattern recognition of AI.

Q3: When attempting to backpropagate through the reaction pathway selection, we encounter "NaN" gradients. What is the likely cause and fix?

A: This is typically caused by numerical instability in the softmax function over a large number of possible pathways or when pathway probabilities approach zero. Use gradient clipping and the log_softmax trick for stability.

- Protocol: Always compute the loss using the logits before the final softmax. For pathway selection probability

P, calculate:P = softmax(z / τ)wherezare logits andτis a temperature parameter. In your loss computation, uselog_softmax(z / τ, dim=-1)directly. Ensureτis not too small (start with τ=1.0). Also, clamp logits to a range [-10, 10] before this operation.

Q4: How can we quantitatively benchmark the improvement in molecular validity after integrating differentiable chemistry layers into our generative AI model? A: You must establish a standardized evaluation suite. Key metrics should be tracked as shown in the table below.

Table 1: Benchmarking Molecular Validity & Synthesisability

| Metric | Description | Measurement Tool | Target Improvement |

|---|---|---|---|

| Chemical Validity Rate | % of generated molecules with no valence errors. | RDKit SanitizeMol check. |

>99.9% |

| Synthetic Accessibility Score (SA) | Score from 1 (easy) to 10 (hard) to synthesize. | Synthetic Accessibility (SA) Score [1] or RAscore. | Reduce by >1.0 point vs. baseline. |

| Rule Coverage | % of proposed retrosynthetic steps matching a known reaction rule. | Template extraction via RDChiral [2]. |

>85% for known scaffolds. |

| Pathway Plausibility | Expert rating (1-5) of a full retrosynthetic pathway. | Blind assessment by medicinal chemists (n>=3). | Average rating ≥ 3.5. |

Experimental Protocols

Protocol 1: Differentiable Valence Enforcement Layer Objective: Integrate a soft chemical validity constraint into a graph-based molecular generation model. Materials: See "Research Reagent Solutions" below. Methodology:

- Let your model (e.g., a Graph Neural Network) output a preliminary bond order matrix

Bof size[Batch, N_Atoms, N_Atoms, Bond_Types]. - Apply a softmax across the

Bond_Typesdimension to create a differentiableA_softmatrix. - For each atom

i, compute the sum of predicted bond orders:total_valence_i = Σ_j max_bond_order(A_soft[i,j]). - Retrieve the maximum allowed valence

V_max_ifor atomibased on its predicted element. - Compute the valence violation:

violation_i = relu(total_valence_i - V_max_i). - Add the mean squared sum of

violation_iacross the batch, scaled by weightλ, to the total loss. - During inference, discretize

A_softto concrete bond orders viaargmax.

Protocol 2: Hybrid Rule-Neural Retrosynthesis Pathway Ranking Objective: Rank plausible retrosynthesis pathways by combining explicit reaction rules with a learned scoring function. Materials: See "Research Reagent Solutions" below. Methodology:

- Candidate Generation: For a target molecule

T, use a comprehensive rule-based system (e.g.,AiZynthFinderwith the USPTO rule set) to generate a set of precursor candidates{C}and associated reaction templates{R}. - Feature Encoding: Encode each candidate pathway as a feature vector: concatenate fingerprints of

TandC, the templateRembedding, and calculated physicochemical properties. - Differentiable Scoring: Process the feature vector through a fully connected network

S_θto produce a scalar score. - Training: Use pairwise ranking loss. For a batch, use expert-validated pathways as positive examples and randomly sampled alternatives as negatives. Minimize:

Loss = Σ max(0, γ - S_θ(positive) + S_θ(negative)). - Pathway Selection: Apply a

softmaxover scores for all candidates for a givenTto obtain a differentiable probability distribution over pathways.

Visualizations

Diagram 1: Hybrid Retrosynthesis Workflow

Diagram 2: Differentiable Valence Check Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item / Software | Function / Purpose | Key Consideration |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule manipulation, sanitization, and descriptor calculation. | Essential for validity checks and fingerprint generation. Use SanitizeMol as the gold standard. |

| RDChiral | Rule-based reaction handling and template application for retrosynthetic analysis. | Provides precise, chemically rigorous precursor enumeration. Critical for rule-based step. |

| PyTorch Geometric | Library for deep learning on graphs; builds on PyTorch. | Enables construction of differentiable GNNs for molecular graph generation and processing. |

| AiZynthFinder | Platform for retrosynthesis planning using a Monte Carlo tree search with reaction rules. | Useful for generating candidate pathways and as a benchmark for hybrid systems. |

| Differentiable Softmax (τ) | Temperature parameter in softmax for converting logits to probabilities. | Tuning τ controls the "sharpness" of pathway selection, affecting gradient flow (τ high = smoother gradients). |

| USPTO Reaction Dataset | Curated dataset of chemical reactions used to extract reaction rules and train models. | The quality and breadth of rules directly impact the coverage of the hybrid system. |

Troubleshooting Guides & FAQs

Q1: I get ModuleNotFoundError: No module named 'rdkit' after a fresh install. What are the correct installation steps?

A: This often occurs due to environment conflicts. The recommended installation via conda is:

Verify installation with python -c "from rdkit import Chem; print(Chem.__version__)". If using pip, ensure system dependencies (e.g., libcairo2) are met, but conda is strongly preferred.

Q2: My generative model produces chemically invalid SMILES strings despite using RDKit for validation. What normalization steps are missing? A: Invalid outputs often stem from unnormalized molecular graphs. Implement this pre-processing protocol:

- Sanitization:

mol = Chem.MolFromSmiles(smiles); mol.UpdatePropertyCache(strict=False); Chem.SanitizeMol(mol, Chem.SANITIZE_ALL ^ Chem.SANITIZE_CLEANUP ^ Chem.SANITIZE_PROPERTIES) - Explicit Hydrogen Handling: Use

Chem.AddHs(mol)andChem.RemoveHs(mol)consistently during training and generation phases. - Valence & Aromaticity Correction: Apply

Chem.SanitizeMol(mol, sanitizeOps=Chem.SanitizeFlags.SANITIZE_FINDRADICALS)post-generation. - Canonicalization: Always output the canonical SMILES via

Chem.MolToSmiles(mol, canonical=True, isomericSmiles=False)for consistent node ordering in graphs.

Q3: How do I efficiently convert a batch of SMILES to normalized molecular graphs for PyTorch Geometric (PyG) or DGL? A: Use a batched, caching workflow to avoid redundant computation. See the protocol below.

Q4: RDKit's Chem.MolFromSmiles returns None for many model-generated strings. How can I debug the specific cause?

A: Implement a stepwise validator function:

Q5: What are the performance bottlenecks when integrating RDKit into a generative AI training loop, and how can I mitigate them?

A: The primary bottlenecks are SMILES parsing and graph generation. The solution is to implement a caching layer for parsed molecules and use parallel processing for large batches via multiprocessing.Pool. See performance data in Table 1.

Table 1: Performance Impact of Graph Normalization & Caching

| Processing Step | Time per 1000 mols (s) No Cache | Time per 1000 mols (s) With Cache | Validity Rate Post-Normalization (%) |

|---|---|---|---|

| SMILES to RDKit Mol | 12.7 ± 1.5 | 1.2 ± 0.3 | 98.5 |

| Add Hydrogens | 4.3 ± 0.8 | 0.8 ± 0.2 | 99.1 |

| Aromaticity Percept. | 3.1 ± 0.5 | 0.5 ± 0.1 | 99.7 |

| Canonicalization | 6.9 ± 1.2 | 2.1 ± 0.4 | 100.0 |

Protocol: Batched Molecular Graph Generation for GNNs

- Input: List of SMILES strings (

smiles_list). - Parallel Parsing: Use 4-8 workers to call

Chem.MolFromSmiles(s, sanitize=True). - Filter & Log: Remove

Noneresults, log invalid SMILES for model analysis. - Normalize: Apply

Chem.RemoveHs(Chem.AddHs(mol))to each molecule. - Feature Extraction: For each atom, compute features: atom type (one-hot), degree, hybridization, implicit valence, aromaticity. For each bond: type, conjugation, ring membership.

- Graph Construction: Build PyG

Dataobjects withx(node features),edge_index,edge_attr. - Batch: Use PyG's

DataLoaderfor mini-batch training.

The Scientist's Toolkit: Research Reagent Solutions

| Tool/Library | Primary Function | Key Use-Case in Generative Molecular AI |

|---|---|---|

| RDKit | Cheminformatics core | Molecular I/O, sanitization, fingerprinting, descriptor calculation, and substructure searching. Essential for validity checking. |

| PyTorch Geometric (PyG) | Graph Neural Networks | Building and training GNN-based generative models (e.g., on molecular graphs). |

| Deep Graph Library (DGL) | Graph Neural Networks | Alternative framework for scalable GNN model implementation. |

| MolVS | Molecular Validation & Standardization | Rule-based standardization (tautomer normalization, charge neutralization). |

| Open Babel | Chemical file conversion | Handling diverse molecular file formats not directly supported by RDKit. |

| CONDA | Package & environment management | Critical for managing RDKit and its complex dependencies without conflict. |

Title: SMILES to Normalized GNN Graph Workflow

Title: RDKit's Role in Improving Molecular Validity for AI

Debugging Generative AI: Identifying and Fixing Common Molecular Validity Failures

Troubleshooting Guides & FAQs

Q1: During structure generation, our AI model is producing molecules with unrealistic aromatic rings (e.g., non-planar 7-membered aromatic carbocycles). How do we diagnose and correct this?

A1: This is a common issue where the model learns incorrect aromaticity rules from training data. Follow this diagnostic protocol:

- Valence Check: Implement a post-generation filter using a toolkit like RDKit to flag atoms with invalid valences (e.g., a carbon with 5 bonds in an aromatic system).

- Hückel's Rule Validation: Code a rule-based check that assesses ring systems for (4n+2) π-electrons, considering all contributing orbitals and heteroatoms.

- Geometric Planarity Analysis: Use generated 3D conformers (e.g., via ETKDG) and calculate the root-mean-square deviation (RMSD) of ring atoms from their least-squares plane. Rings with an RMSD > 0.1 Å are non-planar and likely mis-assigned as aromatic.

Experimental Protocol for Training Data Correction:

- Objective: Curate a cleaner training set of validated aromatic systems.

- Method:

- Source molecules from high-quality databases (ChEMBL, PubChem).

- Apply the RDKit's

SanitizeMolfunction with strict aromaticity perception (using the default model). - Isolate molecules where sanitization fails or alters aromatic bonds.

- Manually inspect and correct these edge cases or remove them from the training set.

- Retrain the generative model on this curated set and re-evaluate aromaticity errors in the output.

Q2: Our generated molecules frequently contain hypervalent atoms (e.g., pentavalent carbons, hexavalent sulfurs) that violate chemical rules. What is the most effective way to eliminate these?

A2: Hypervalency stems from the model's inability to enforce fundamental valence constraints. Address this with a multi-layered approach:

- Integrate Valence Checks in the Decoder: Modify the model's sampling step to reject bond formations that would exceed an atom's maximum allowed valence based on its periodic table group.

- Post-hoc Filtering with Sanitization: Pass all generated molecules through a strict sanitization routine. The table below shows the efficiency of different toolkits at identifying hypervalent atoms in a sample of 10,000 generated molecules:

| Toolkit/Library | Molecules Flagged | False Positive Rate | Key Function Used |

|---|---|---|---|

| RDKit | 347 | 2.3% | SanitizeMol(), ValidateMol() |

| Open Babel | 332 | 3.1% | OBMol::Validate() |

| CDK (Chem. Dev. Kit) | 355 | 2.8% | AtomContainerManipulator |

- Penalize During Training: Incorporate a valence violation penalty term into the model's loss function to discourage hypervalent structures during learning.

Q3: We observe a high prevalence of unstable small rings (e.g., cyclopropyne, anti-Bredt olefins) in generated outputs. How can we constrain the model to avoid these?

A3: These structures are often thermodynamically or kinetically unstable. Implement stability rules:

- Ring Strain Rules: Enforce Bredt's Rule (no bridgehead double bonds in small bicyclic systems) and ban small, high-strain rings like cyclopropyne.

- Adversarial Training: Create a "discriminator" model trained to distinguish between stable and unstable rings. Use it to score and penalize the generator's outputs.

- Template-Based Generation: Use a rule-based system that only assembles ring systems from a pre-defined library of validated, stable scaffolds.

Experimental Protocol for Stability Assessment:

- Generate Candidate Molecules using your AI model.

- Filter using SMARTS patterns for unstable motifs (e.g.,

[C;R2]#[C;R2]for cyclopropyne). - Perform Fast Quantum Mechanics (QM) Calculations (e.g., GFN2-xTB) on filtered molecules to compute strain energy.

- Establish a Threshold: Molecules with strain energy > 25 kcal/mol above a stable reference are flagged as unstable and added to a negative training set.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Molecular Validation |

|---|---|

| RDKit | Open-source cheminformatics library; used for molecular sanitization, aromaticity perception, and valence checking. |

| Open Babel | Chemical toolbox for format conversion, descriptor calculation, and basic structure validation. |

| GFN-xTB | Semiempirical quantum mechanical method for fast calculation of molecular geometry, energy, and strain. |

| SMARTS Patterns | Query language for defining specific molecular substructures (e.g., hypervalent atoms, unstable rings) for searching/filtering. |

| ChEMBL Database | Manually curated database of bioactive molecules with drug-like properties; a high-quality source for training data. |

| Conformational Sampling (ETKDG) | Algorithm within RDKit to generate accurate 3D conformers; essential for geometric planarity analysis. |

Visualizations

Title: Diagnostic Workflow for Invalid Aromatic Rings

Title: Multi-Layer Strategy to Eliminate Hypervalent Atoms

Tuning Hyperparameters to Favor Validity Without Sacrificing Diversity

Troubleshooting Guides & FAQs

Q1: My model generates a high percentage of syntactically valid SMILES strings, but a large fraction are chemically invalid (e.g., hypervalent carbons). What is the first hyperparameter I should check?

A: The primary suspect is the reconstruction loss weight (often the KL divergence weight, β, in a VAE framework). If this weight is too low, the model prioritizes diversity over learning the underlying chemical rules. Action: Gradually increase the β weight while monitoring both validity (e.g., using RDKit's Chem.MolFromSmiles percentage) and diversity metrics (like unique valid molecules per batch or internal diversity). A balanced value often lies in a narrow range; systematic sweeps are required.

Q2: After tuning for validity, my model's output diversity has collapsed, generating only a few repetitive structures. How can I recover diversity? A: This is a classic sign of over-regularization or excessive penalty on the latent space. Troubleshooting Steps:

- Check Sampling Temperature: If using a probabilistic decoder (e.g., in an RNN), the softmax temperature directly controls randomness. A value too low (< 0.8) leads to greedy, repetitive generation. Incrementally adjust it towards 1.0-1.2.

- Inspect Latent Space Dimensions: An overly small latent space cannot encode diverse molecular features. Consider increasing the dimension from a typical 128 or 256 to 512, while simultaneously adjusting the KL loss weight to prevent the model from ignoring the latent space.

- Evaluate the Discriminator Weight: If using an adversarial or reinforcement learning component (like a GAN or a REINFORCED objective), its weight may be too strong, forcing the generator into a few "safe" modes. Try reducing this weight.

Q3: I am using a reinforcement learning (RL) reward to optimize validity. The model quickly learns to generate a small set of valid molecules but then stops exploring. What's wrong? A: This is known as reward hacking or mode collapse in RL. The issue lies in the reward function and the RL algorithm's exploration parameters.

- Refine the Reward: Make the reward function multi-objective. Combine validity with a novelty or diversity penalty (e.g., negative similarity to recently generated molecules).

R = R_validity + λ * R_diversity. - Tune RL Hyperparameters: Increase the entropy regularization coefficient in policy gradient methods (like PPO) to encourage action exploration. Also, consider reducing the learning rate for the policy network to prevent rapid convergence to a suboptimal policy.

Q4: How do I choose the right validity metric for tuning, and what target values should I aim for? A: Validity is hierarchical. Your tuning target depends on your research phase.

| Metric | Calculation Method | Target Range (Benchmark) | Interpretation |

|---|---|---|---|

| Syntax Validity | % of SMILES parsable by grammar | >99.5% | Essential baseline. High value is necessary but not sufficient. |

| Chemical Validity | % of parsed molecules that pass RDKit's sanitization (e.g., Chem.SanitizeMol) |

90-98% (e.g., JT-VAE >90%) | Core tuning objective. Indicates model learns chemical rules. |

| Novelty | % of valid molecules not in training set | Context-dependent, often >80% | Ensures model is generating new structures, not memorizing. |

| Internal Diversity | Average pairwise Tanimoto dissimilarity within a large generated set (e.g., 10k molecules) | >0.7 (using ECFP4 fingerprints) | Measures structural spread. Prevents mode collapse. |

Q5: My workflow is slow; hyperparameter tuning with large-scale molecular generation is computationally expensive. Any protocol for efficient search? A: Implement a Bayesian Optimization (BO) protocol rather than grid or random search.

- Define Search Space: Key parameters: Sampling Temperature (0.7-1.3), β weight (1e-6 to 1e-3), latent dimension (128, 256, 512), RL entropy weight (0.01-0.2).

- Define Objective Function:

Objective = α * Chemical_Validity + β * Internal_Diversity. Start with α=0.7, β=0.3. - Run Iterations: Use a library like

scikit-optimize. For each BO iteration, generate 1000-5000 molecules, compute the objective, and update the surrogate model. - Early Stopping: Stop if the top 10 objective scores have not improved for 20 iterations.

Experimental Protocol: Systematic Hyperparameter Tuning for Molecular Validity

Objective: To identify the optimal set of hyperparameters for a molecular generative model (e.g., a VAE with SMILES-based encoder/decoder) that maximizes chemical validity without compromising structural diversity.

Materials & Software:

- Dataset: ZINC250k or ChEMBL subset.

- Model: SMILES-based VAE/RNN or JT-VAE architecture.

- Libraries: RDKit (v2023.x.x), PyTorch/TensorFlow, scikit-optimize, NumPy.

- Metrics: RDKit sanitization check, Tanimoto similarity based on ECFP4 fingerprints.

Methodology:

- Baseline Training: Train the model with initial hyperparameters (β=0.0001, temp=1.0, latent_dim=256) to convergence.

- Define Parameter Ranges: Establish min/max values for each target hyperparameter (see table below).

- Bayesian Optimization Loop:

a. Proposal: The BO algorithm proposes a set of hyperparameters.

b. Evaluation: Retrain or fine-tune the model with the proposed set. Generate 10,000 molecules.

c. Scoring: Calculate the multi-objective score:

Score = (0.7 * Chem_Valid) + (0.3 * Int_Div). d. Update: Update the BO surrogate model with the {parameters, score} pair. - Iterate: Repeat step 3 for 50-100 iterations.

- Validation: Select the top 3 parameter sets. Retrain from scratch three times each to assess robustness. Generate 50,000 molecules per final model for final evaluation.

Visualizations

Diagram Title: Bayesian Optimization Workflow for HP Tuning

Diagram Title: Validity-Diversity Trade-Off Landscape

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Hyperparameter Tuning |

|---|---|

| RDKit | Open-source cheminformatics toolkit. Used to calculate chemical validity, generate molecular fingerprints (ECFP4), and compute similarity/diversity metrics. Essential for metric computation. |

| PyTorch / TensorFlow | Deep learning frameworks. Provide automatic differentiation and flexible architectures for implementing and training generative models (VAEs, GANs). |

| scikit-optimize | Python library for sequential model-based optimization (Bayesian Optimization). Efficiently navigates hyperparameter space to find optimal configurations. |

| Molecular Dataset (e.g., ZINC, ChEMBL) | Curated, publicly available libraries of drug-like molecules. Serve as the training and benchmark data for the generative model. |

| Weights & Biases (W&B) / MLflow | Experiment tracking platforms. Log hyperparameters, output metrics, and generated molecule sets across hundreds of runs, enabling comparative analysis. |

| High-Performance Computing (HPC) Cluster / Cloud GPU | Computational resource. Hyperparameter search requires parallelized training of dozens of model instances, demanding significant GPU hours. |

Technical Support Center

Troubleshooting Guide & FAQs

This support center provides solutions for researchers encountering issues when implementing post-generation filtering (PGF) or built-in constraint optimization (BCO) techniques to improve molecular validity in generative AI models.

Frequently Asked Questions

Q1: My model generates a high percentage of invalid SMILES strings. Should I prioritize improving the model architecture or implement a stronger post-filter? A: First, diagnose the root cause. Calculate the validity rate per batch and epoch. If validity is low (<70%) from the start, the issue is likely in the model's fundamental training (e.g., insufficient exposure to valid SMILES, poor architecture choice for syntax). Implement or strengthen Built-in Constraint Optimization (e.g., switch to a grammar-VAE, introduce syntactic rules). If validity is high during training but drops during novel generation, a targeted post-generation filter (e.g., a validity checker paired with a fine-tuned discriminator) may be sufficient.

Q2: After implementing a strict post-generation filter for chemical validity, my molecular diversity (as measured by unique valid scaffolds) has dropped significantly. How can I mitigate this? A: This is a common trade-off. To mitigate:

- Tiered Filtering: Implement a multi-stage filter. Stage 1: Basic syntactic validity (SMILES grammar). Stage 2: Chemical validity (e.g., valency checks via RDKit). Stage 3: Optional, more complex rules. This prevents discarding molecules that fail complex checks but pass simple ones.

- Filter-Aware Sampling: Increase the sampling pool size (e.g., generate 10x more molecules than needed) before applying the filter to ensure enough valid, diverse candidates survive.

- Relax Constraints: Review the strictness of your chemical rules. Some "invalid" configurations might be rare but not impossible.

Q3: My model with built-in syntactic constraints trains much slower than my baseline model. Is this expected, and how can I improve training efficiency? A: Yes, this is expected. BCO methods often add computational overhead. To improve efficiency:

- Checkpointing: Use frequent model checkpoints to avoid restarting from scratch.

- Hardware Utilization: Profile your code to ensure it's efficiently using GPU/CPU. Operations like on-the-fly grammar checking can be bottlenecks.

- Simplified Rules: Start with a simplified constraint set and gradually add complexity. Consider pre-computing rule adherence where possible.

Q4: How do I quantitatively choose between a PGF and a BCO strategy for my specific project? A: Define your evaluation metrics first, then run a pilot study. Use the following decision protocol:

- Set thresholds for validity (>95%), diversity (scaffold uniqueness >80%), and computational budget.

- Implement a baseline model with a simple PGF (RDKit validity filter).

- If baseline validity is poor, pilot a BCO method (e.g., GVAE).

- If baseline validity is acceptable but novelty/diversity is low, pilot a more sophisticated PGF (e.g., filter + reranking network) and compare metrics to the baseline.

- Compare the performance of both approaches using the table below as a guide.

Quantitative Data Comparison

Table 1: Comparative Performance of PGF vs. BCO in Recent Studies

| Study (Model) | Approach | Validity Rate (%) | Uniqueness (Scaffold) % | Novelty (% not in Train) | Time per 10k Samples (s) |

|---|---|---|---|---|---|

| Gómez-Bombarelli et al. (VAE) | Basic PGF (RDKit) | 87.3 | 65.1 | 70.4 | 12 |

| Kusner et al. (GVAE) | Built-in (Grammar) | 99.9 | 60.5 | 80.2 | 45 |

| Polykovskiy et al. (LatentGAN) | Advanced PGF (Critic) | 94.7 | 85.3 | 91.7 | 28 |

| Putin et al. (Reinforcement) | Built-in (RL Reward) | 95.2 | 78.9 | 86.5 | 120 |

| Hypothetical Ideal Hybrid | BCO (Grammar) + PGF (Rerank) | 99.5 | 82.0 | 88.0 | 55 |

Experimental Protocols

Protocol 1: Evaluating a Post-Generation Filtering Pipeline Objective: To assess the impact of a multi-stage filter on molecular validity and diversity. Methodology:

- Generation: Use a pre-trained generative model (e.g., a standard SMILES-based LSTM or Transformer) to produce a large sample (e.g., 100,000 SMILES strings).

- Filtering Stages:

- Stage 1 (Syntax): Parse each string using a SMILES grammar parser. Discard unparsable strings.

- Stage 2 (Chemistry): Feed parsable SMILES to RDKit (

Chem.MolFromSmiles). Discard molecules that fail to form a sane chemical object. - Stage 3 (Properties): (Optional) Apply property filters (e.g., LogP range, molecular weight) using RDKit descriptors.

- Analysis: For each stage, record the survival rate. Calculate final metrics: validity (final survivors / initial samples), scaffold diversity (unique Bemis-Murcko scaffolds / total survivors), and novelty (survivors not found in the training set).

Protocol 2: Training a Model with Built-in Constraint Optimization (Grammar-VAE) Objective: To train a generative model that inherently produces grammatically valid SMILES strings. Methodology:

- Data Preprocessing: Convert all SMILES in the training set to a context-free grammar (CFG) representation or a parse tree using a tool like

smiles_grammar. - Model Architecture: Implement a VAE where the encoder maps a grammar-derived tree to a latent vector

z, and the decoder reconstructs the tree fromz. The decoder must follow production rules of the grammar. - Training: Train the model using a reconstruction loss (e.g., cross-entropy on rule predictions) and the standard Kullback–Leibler divergence loss. Use teacher forcing.

- Generation: Sample a latent vector