Bayesian Optimization for Drug Discovery: Accelerating Chemical Space Exploration with AI

This article provides a comprehensive guide to Bayesian Optimization (BO) for chemical space exploration, tailored for researchers and drug development professionals.

Bayesian Optimization for Drug Discovery: Accelerating Chemical Space Exploration with AI

Abstract

This article provides a comprehensive guide to Bayesian Optimization (BO) for chemical space exploration, tailored for researchers and drug development professionals. It begins by establishing the foundational principles of BO as a solution to high-cost, black-box optimization in vast molecular landscapes. The methodological section details practical implementation, from acquisition function selection to active learning cycles in virtual screening and molecular design. We address common pitfalls in surrogate model training and hyperparameter tuning for robust performance. Finally, the article validates BO's effectiveness through comparative analysis against traditional methods and grid search, highlighting its transformative potential to reduce experimental cycles and accelerate the discovery of novel therapeutics.

What is Bayesian Optimization? The Foundational Framework for Navigating Chemical Space

The chemical space of potential drug-like molecules is astronomically large, estimated at between 10^60 to 10^100 possible compounds. Exhaustive synthesis and screening of this space is physically and temporally impossible. This necessitates the development of intelligent, guided search strategies, such as Bayesian optimization (BO), to efficiently navigate this vast combinatorial landscape for materials and drug discovery.

Quantitative Data on Chemical Space

Table 1: Estimated Scales of Relevant Chemical Spaces

| Space Description | Estimated Size | Practical Screening Limit (Compounds) | Coverage Fraction |

|---|---|---|---|

| Drug-like (Rule of 5) | ~10^60 | 10^7 (HTS) | 10^-53 |

| Synthetically Feasible (e.g., Enamine REAL) | ~10^11 | 10^6 | ~10^-5 |

| PubChem Database (Actual Compounds) | ~1.1 x 10^8 | - | - |

| Organic molecules ≤ 17 Daltons (C, N, O, S, halogens) | 1.66 x 10^11 | - | - |

| Peptide space (20 aa, length 10) | 10^13 | 10^10 (DNA-encoded) | 10^-3 |

Table 2: Computational Screening Throughput & Cost Estimates

| Method | Compounds/ Day (Est.) | Cost/ Compound (Est.) | Primary Limitation |

|---|---|---|---|

| Traditional HTS | 50,000 - 100,000 | $0.50 - $1.00 | Assay development, false positives |

| Virtual Screening (Docking) | 10^6 - 10^7 | <$0.001 | Force field accuracy, scoring |

| DNA-Encoded Libraries (DEL) | Up to 10^10 | <$0.0001 | Chemistry compatibility, decoding |

| Quantum Chemistry (DFT) | 10^2 - 10^3 | $1 - $10 | Computational expense, system size |

Bayesian Optimization Protocol for Iterative Chemical Space Exploration

Protocol 1: Iterative Library Design & Testing Using Bayesian Optimization

Objective: To identify a hit compound with IC50 < 10 µM for a target protein within 5 iterative cycles, synthesizing < 500 compounds total.

I. Initialization Phase

- Define Search Space: Represent molecules as continuous numerical vectors (descriptors: ECFP6 fingerprints, molecular weight, logP, # of rotatable bonds, etc.). Use a chemical reaction-based rule set (e.g., from RDKit) to define synthetically accessible transformations.

- Acquire Initial Data: Assay a diverse subset of 50-100 compounds from available corporate collection or purchaseable library (e.g., Enamine REAL). Record quantitative activity readout (e.g., % inhibition at 10 µM).

- Construct Surrogate Model: Train a Gaussian Process (GP) regression model using the initial data. The kernel function is typically a combination of a Tanimoto kernel for fingerprints and a Matérn kernel for continuous descriptors.

II. Iterative Cycle (Repeat for N cycles)

- Acquisition Function Optimization: Using the trained GP model, compute the Expected Improvement (EI) or Upper Confidence Bound (UCB) for all candidate molecules in the defined virtual library (millions to billions).

- Candidate Selection & Synthesis:

- Select the top 80-100 candidates proposed by the acquisition function.

- Apply synthetic feasibility filters (e.g., using the

rdchiralPython package for retrosynthesis analysis). - Send the final list of 20-30 top-ranked, synthetically accessible compounds for parallel synthesis.

- Experimental Testing: Purify compounds (>90% purity by LCMS) and test in the biological assay. Include appropriate controls (positive, negative, DMSO).

- Model Update: Augment the training dataset with the new experimental results. Retrain/update the GP surrogate model with the expanded data.

III. Termination & Analysis

- Criteria: Cycle concludes when a compound meeting the primary activity endpoint (IC50 < 10 µM) is identified, or after a maximum of 5 cycles.

- Validation: Confirm dose-response of top hits in triplicate. Assess selectivity against a related anti-target if applicable.

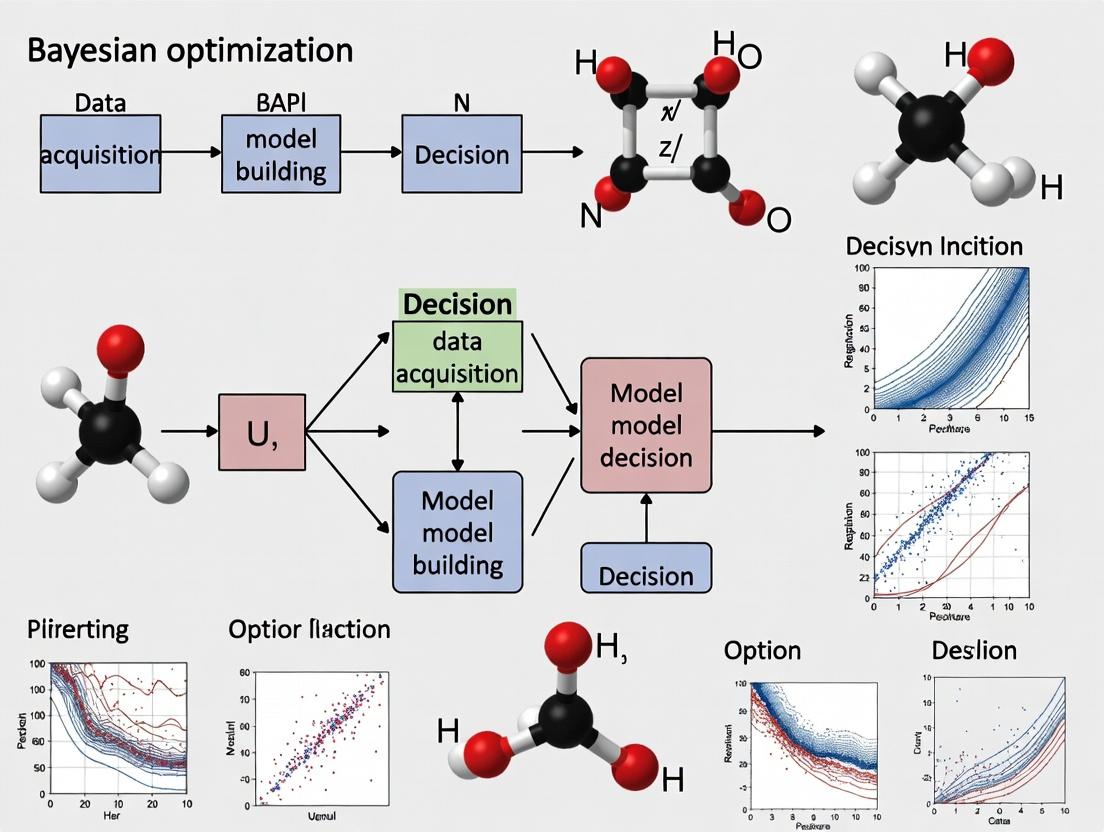

Bayesian Optimization Cycle for Chemical Exploration

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Bayesian-Optimized Chemical Exploration

| Item/Category | Example Vendor/Product | Function in Workflow |

|---|---|---|

| Commercial Screening Libraries | Enamine REAL Space, WuXi GalaXi, ChemDiv Core Libraries | Provide immediate source of diverse, synthesizable compounds for initial data acquisition. |

| Building Blocks for Synthesis | Enamine Building Blocks, Sigma-Aldrich Aldrich CPB, Combi-Blocks | Essential for the rapid parallel synthesis of proposed candidate molecules in each iteration. |

| Chemical Descriptor Software | RDKit (Open Source), MOE, Dragon | Generate numerical representations (fingerprints, descriptors) of molecules for the machine learning model. |

| Bayesian Optimization Platform | Gryffin, Olympus, BoTorch, Google Vizier | Software packages that implement GP regression and acquisition function optimization for scientific domains. |

| High-Throughput Assay Kits | Cisbio HTRF, Promega Glo, Invitrogen LanthaScreen | Enable rapid, quantitative biological testing of synthesized compounds to generate training data for the model. |

| Automated Synthesis Hardware | Chemspeed, Unchained Labs F3, Biolytic LabExpert | Automated platforms for parallel synthesis, purification, and sample handling to increase iteration speed. |

In the context of a thesis on accelerating molecular discovery for therapeutics, Bayesian Optimization (BO) serves as a strategic computational framework for navigating high-dimensional, expensive-to-evaluate chemical spaces. It enables the efficient identification of candidate molecules with desired properties (e.g., binding affinity, solubility, low toxicity) by iteratively guiding experiments, thereby reducing costly synthesis and assay cycles.

Core Principles: A Dual-Component Engine

The power of BO stems from its two interconnected components: a probabilistic surrogate model that approximates the unknown objective function, and an acquisition function that decides where to sample next by balancing exploration and exploitation.

Surrogate Models: Gaussian Processes as the Standard

The most common surrogate model in BO for chemical applications is the Gaussian Process (GP). It provides a full predictive distribution over functions.

Key Protocol: Constructing a GP Surrogate for a Molecular Property Prediction Task

- Input Representation: Encode molecular structures (e.g., SMILES) into numerical feature vectors using descriptors (Morgan fingerprints, RDKit descriptors) or learned representations (from a pre-trained graph neural network).

- Kernel Selection: Choose a kernel function ( k(\mathbf{x}, \mathbf{x}') ) to define covariance. For molecular fingerprints, a Matérn or scaled dot-product kernel is often effective.

- Model Initialization: Start with a small, diverse set of molecules ( \mathbf{X} = {\mathbf{x}1, ..., \mathbf{x}n} ) and their measured properties ( \mathbf{y} = {y1, ..., yn} ).

- Posterior Inference: Compute the posterior GP distribution. For a new molecule ( \mathbf{x}* ), the predictive mean ( \mu(\mathbf{x}) ) and variance ( \sigma^2(\mathbf{x}_) ) are: [ \mu(\mathbf{x}*) = \mathbf{k}^T (K + \sigma_n^2 I)^{-1} \mathbf{y} ] [ \sigma^2(\mathbf{x}_) = k(\mathbf{x}*, \mathbf{x}) - \mathbf{k}_^T (K + \sigman^2 I)^{-1} \mathbf{k}* ] where ( K ) is the kernel matrix for training points, ( \mathbf{k}* ) is the vector of covariances between ( \mathbf{x}* ) and training points, and ( \sigma_n^2 ) is noise variance.

- Hyperparameter Optimization: Optimize kernel hyperparameters (length scales, variance) by maximizing the marginal log-likelihood of the observed data.

Table 1: Common Kernel Functions for Chemical Data

| Kernel | Formula | Typical Use Case in Chemistry |

|---|---|---|

| Matérn 5/2 | ( k(\mathbf{x}, \mathbf{x}') = \sigma_f^2 (1 + \sqrt{5}r + \frac{5}{3}r^2) \exp(-\sqrt{5}r) ) | Default for continuous molecular descriptors; accommodates moderate smoothness. |

| Squared Exponential | ( k(\mathbf{x}, \mathbf{x}') = \sigma_f^2 \exp(-\frac{1}{2} r^2) ) | Assumes very smooth functions; less common for high-dimensional chemical data. |

| Dot Product | ( k(\mathbf{x}, \mathbf{x}') = \sigma_f^2 + \mathbf{x} \cdot \mathbf{x}' ) | Useful for sparse, high-dimensional representations like fingerprints. |

| ( r = \sqrt{(\mathbf{x} - \mathbf{x}')^T \Lambda^{-1} (\mathbf{x} - \mathbf{x}')} ), where ( \Lambda ) is a diagonal matrix of length scales. |

Acquisition Functions: The Decision Maker

The acquisition function ( \alpha(\mathbf{x}) ) uses the GP posterior to score the utility of evaluating a candidate point.

Key Protocol: Implementing and Optimizing an Acquisition Function

Function Selection: Choose an acquisition function based on the optimization goal.

- Expected Improvement (EI): Maximizes the expected improvement over the current best value ( y{\text{best}} ). [ \alpha{\text{EI}}(\mathbf{x}) = \mathbb{E}[\max(y - y{\text{best}}, 0)] = (\mu(\mathbf{x}) - y{\text{best}} - \xi)\Phi(Z) + \sigma(\mathbf{x})\phi(Z) ] where ( Z = \frac{\mu(\mathbf{x}) - y_{\text{best}} - \xi}{\sigma(\mathbf{x})} ), and ( \xi ) is a small exploration parameter.

- Upper Confidence Bound (UCB): Directly optimists an upper confidence bound. [ \alpha_{\text{UCB}}(\mathbf{x}) = \mu(\mathbf{x}) + \kappa \sigma(\mathbf{x}) ] where ( \kappa ) controls the exploration-exploitation trade-off.

- Probability of Improvement (PI): Focuses on the probability that a point improves upon ( y_{\text{best}} ).

Optimization: Maximize ( \alpha(\mathbf{x}) ) over the chemical space (e.g., a large virtual library) to propose the next experiment. This is typically done via quasi-random search, multi-start gradient descent, or genetic algorithms due to the non-convex, combinatorial nature of molecular space.

Table 2: Comparison of Acquisition Functions for Drug Property Optimization

| Function | Key Parameter | Exploration Bias | Advantage in Chemical Context |

|---|---|---|---|

| Expected Improvement (EI) | ( \xi ) (jitter) | Moderate, tunable | Balanced performance; industry standard for sample efficiency. |

| Upper Confidence Bound (UCB) | ( \kappa ) | High, tunable | Explicit control over exploration; good for initial space coverage. |

| Probability of Improvement (PI) | ( \xi ) (jitter) | Low | Focuses on incremental gains; can get stuck in local maxima. |

| Entropy Search (ES) | Heuristic | Strategic | Aims to reduce uncertainty about the optimum location; computationally heavy. |

Experimental Workflow Protocol for Molecular Optimization

Protocol Title: Iterative Bayesian Optimization for Lead Compound Series Expansion

Objective: To identify novel molecular structures with improved target binding affinity (pIC50 > 8.0) within a budget of 50 synthesis/assay cycles.

Materials & Computational Setup:

- Virtual Library: Enamine REAL Space subset (500k compounds).

- Initial Training Set: 20 compounds with known pIC50 from historical assays.

- Property Prediction: GP surrogate model with Matérn 5/2 kernel.

- Acquisition: Expected Improvement (( \xi = 0.01 )).

- Optimizer: Differential evolution for acquisition function maximization.

Procedure:

- Featurization: Encode all molecules in the virtual library and training set using 2048-bit Morgan fingerprints (radius 2).

- Surrogate Training: Train the GP on the initial 20 data points. Optimize hyperparameters via type-II maximum likelihood.

- Proposal Generation: a. Compute the posterior mean ( \mu(\mathbf{x}) ) and variance ( \sigma^2(\mathbf{x}) ) for all compounds in the virtual library. b. Evaluate the EI acquisition function for all compounds. c. Select the top 5 compounds with the highest EI scores that pass a simple chemical novelty filter (Tanimoto similarity < 0.7 to any previously tested compound).

- Experimental Evaluation: a. Synthesize the 5 proposed compounds (protocols detailed in Scientist's Toolkit). b. Perform the standardized binding affinity assay (e.g., FRET-based enzymatic assay). c. Record pIC50 values.

- Iteration: Add the new compound-property data to the training set. Retrain the GP model. Repeat steps 3-4 until the experimental budget is exhausted or a candidate meets the success criterion.

- Analysis: Compare the optimization trajectory (best-found-value vs. iteration) against a random search baseline.

Visualization of the Bayesian Optimization Cycle

Title: Bayesian Optimization Cycle for Chemical Experimentation

Title: Surrogate Model and Acquisition Function Interaction

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for a Bayesian-Optimization-Driven Chemistry Campaign

| Category | Item / Solution | Function in the Protocol |

|---|---|---|

| Chemical Space | Enamine REAL Database | Provides a large, synthesizable virtual library of molecules for proposal generation. |

| Featurization | RDKit (Open-Source) | Generates molecular descriptors (Morgan fingerprints, MQNs) and handles chemical validity checks. |

| Computational Core | GPyTorch / BoTorch | Specialized Python libraries for efficient Gaussian Process modeling and Bayesian Optimization. |

| Synthesis | High-Throughput Automated Synthesis Platform (e.g., Chemspeed Swing) | Enables rapid synthesis of proposed compounds in microtiter plates. |

| Purification | Mass-Directed Automated Purification System (e.g., Waters Prep 150) | Ensures compound purity (>95%) prior to biological testing. |

| Primary Assay | Cell-Free Target Assay Kit (e.g., LanthaScreen Eu Kinase Binding Assay) | Provides the expensive-to-evaluate objective function (e.g., binding affinity) for new compounds. |

| Validation Assay | Cellular Phenotypic Assay (e.g., NanoBRET Target Engagement) | Confirms activity in a more physiologically relevant context for top BO-proposed hits. |

Application Notes

Within the framework of Bayesian optimization (BO) for chemical space exploration, Gaussian Process Regression (GPR) serves as the canonical surrogate model. Its ability to quantify prediction uncertainty makes it uniquely suited for guiding iterative molecular design cycles where acquisition functions (e.g., Expected Improvement) balance exploration and exploitation.

Table 1: Comparison of GPR Kernels for Molecular Property Prediction

| Kernel Name | Mathematical Form (for molecules x, x') | Key Hyperparameters | Best Suited For | Typical RMSE Range (on QM9 benchmark) | ||||

|---|---|---|---|---|---|---|---|---|

| Matérn 5/2 | k(x,x') = σ²(1+√5r+5r²/3)exp(-√5r) |

Length scale (l), Variance (σ²) | Robust, less smooth functions | 0.05 - 0.15 eV (for atomization energy) | ||||

| Squared Exponential (RBF) | `k(x,x') = σ² exp(- | x-x' | ²/2l²)` | Length scale (l), Variance (σ²) | Very smooth, continuous functions | 0.04 - 0.12 eV (for atomization energy) | ||

| Dot Product | k(x,x') = σ² + x · x' |

Variance (σ²) | Linear trends in feature space | 0.15 - 0.30 eV (for atomization energy) | ||||

| Composite (RBF + White Noise) | `k(x,x') = σ_rbf² exp(- | x-x' | ²/2l²) + σn² δxx'` | l, σrbf², σn² | Noisy experimental data | Varies with noise level |

Table 2: Performance of GPR vs. Other Surrogates in BO Cycles

| Surrogate Model | Avg. BO Cycles to Find Optimum (Test on Redox Potential) | Uncertainty Calibration (Average Z-Score) | Computational Cost per Iteration (O(n³)) | Scalability to >10k Datapoints |

|---|---|---|---|---|

| Gaussian Process (GPR) | 12.4 ± 2.1 | ~0.99 | High | Requires approximations (e.g., SVGP) |

| Random Forest | 18.7 ± 3.5 | ~0.65 | Low | Good |

| Neural Network (MLP) | 15.8 ± 2.9 | ~0.45 (poor without ensembles) | Medium | Excellent |

| Bayesian Neural Network | 14.1 ± 2.7 | ~0.85 | Very High | Moderate |

Experimental Protocols

Protocol 2.1: Implementing a GPR Surrogate for Bayesian Optimization of Molecular Properties

Objective: To train a GPR model using molecular fingerprints for predicting a target property (e.g., solubility, binding affinity) and integrate it into a BO loop.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Data Curation: Assemble a dataset of SMILES strings and associated measured property values. Pre-process molecules: standardize tautomers, remove salts, and neutralize charges using RDKit.

- Feature Representation: Convert each SMILES string to a numerical fingerprint. For this protocol, use the 2048-bit Morgan fingerprint (radius=2).

- Dataset Splitting: Split data into an initial training set (n=100-500) and a hold-out validation set. The training set seeds the first BO iteration.

GPR Model Definition: Using GPyTorch or scikit-learn, define a kernel. A recommended starting point is a Matérn 5/2 kernel combined with a White Noise kernel to model experimental error.

Hyperparameter Optimization: Train the model by maximizing the marginal log likelihood (Type II MLE) using an Adam optimizer for 200 iterations. This learns the kernel length scales and noise level.

- Bayesian Optimization Loop:

a. Surrogate Prediction: Use the trained GPR to predict the mean (μ) and variance (σ²) for all molecules in a candidate pool (e.g., ZINC20 subset).

b. Acquisition Function Calculation: Compute the Expected Improvement (EI) for each candidate:

EI(x) = (μ(x) - f(x*)) Φ(Z) + σ(x) φ(Z), whereZ = (μ(x) - f(x*)) / σ(x),f(x*)is the best observed value, and Φ/φ are the CDF/PDF of the standard normal distribution. c. Candidate Selection: Choose the molecule with the maximum EI score. d. Virtual "Experiment": Obtain the target property for the selected molecule from the hold-out set (simulating a lab measurement). e. Data Augmentation & Retraining: Append the new {molecule, property} pair to the training set. Retrain the GPR model. f. Iteration: Repeat steps a-e for a fixed number of cycles (e.g., 50) or until a performance threshold is met. - Validation: Assess performance by tracking the best property value found versus BO iteration, plotted against a random search baseline.

Protocol 2.2: Active Learning for Expensive Computational Simulations

Objective: To use GPR as a surrogate to selectively choose molecules for density functional theory (DFT) calculation, minimizing computational cost.

Procedure:

- Initial Sampling: Select a diverse set of 50 molecules from a large virtual library using k-means clustering on fingerprint space.

- High-Fidelity Calculation: Run DFT simulations (e.g., using Gaussian, ORCA, or QE) to compute the target property (e.g., HOMO-LUMO gap) for the initial set.

- GPR Model Training: Train a GPR as per Protocol 2.1, steps 4-5, using the DFT results.

- Uncertainty Sampling: Predict μ and σ for all remaining molecules in the library. Select the next batch of 10 molecules with the highest predictive uncertainty (σ).

- Iterative Loop: Run DFT on the selected molecules, add results to training data, retrain GPR, and repeat uncertainty sampling. This rapidly improves model accuracy in underrepresented regions of chemical space.

Mandatory Visualizations

Title: Bayesian Optimization Loop with GPR Surrogate

Title: GPR Uncertainty Quantification Drives Query

The Scientist's Toolkit

Table 3: Essential Research Reagents & Software for GPR-Driven Molecular Optimization

| Item Name | Type (Software/Data/Library) | Function in Protocol | Key Notes |

|---|---|---|---|

| RDKit | Open-Source Cheminformatics Library | Molecule standardization, fingerprint generation (Morgan/ECFP), descriptor calculation. | Foundation for molecular representation. |

| GPyTorch / scikit-learn | Machine Learning Libraries | Building and training scalable GPR models with various kernels (Matern, RBF). | GPyTorch is preferred for GPU acceleration and flexibility. |

| BoTorch / Dragonfly | Bayesian Optimization Frameworks | Provides acquisition functions (EI, UCB), and handles the BO loop infrastructure. | Built on PyTorch, integrates seamlessly with GPyTorch. |

| ZINC20 / ChEMBL | Public Molecular Databases | Source of candidate molecules for virtual screening and initial training data. | ZINC20 for purchasable compounds, ChEMBL for bioactivity data. |

| ORCA / Gaussian | Quantum Chemistry Software | Provides high-fidelity property labels (e.g., energy, orbital levels) for training data in Protocol 2.2. | Computationally expensive but accurate. |

| Matplotlib / Seaborn | Visualization Libraries | Plotting convergence curves, uncertainty estimates, and molecular property distributions. | Critical for interpreting BO progress and model behavior. |

| PyMOL / CCDC Mercury | Molecular Visualization Software | Visualizing the top-ranked molecules discovered by the BO cycle. | For structural analysis and hypothesis generation. |

In Bayesian optimization (BO) for chemical space exploration, the "search space" is the defined universe of candidate molecules over which the algorithm iteratively proposes experiments. The representation of molecules within this space is the foundational step that determines the efficiency and success of the optimization campaign. This document provides application notes and protocols for defining this space using three core paradigms: classical molecular descriptors, structural fingerprints, and learned latent representations. The choice of representation directly impacts the behavior of the Gaussian Process (GP) surrogate model and the acquisition function in a BO loop.

Core Representation Types: Data and Comparison

The following table summarizes the key characteristics, advantages, and limitations of the three primary representation classes.

Table 1: Comparison of Molecular Representation Schemes for Bayesian Optimization

| Representation Type | Key Examples | Dimensionality | Interpretability | Primary Use in BO | Data Dependency |

|---|---|---|---|---|---|

| Molecular Descriptors | RDKit descriptors (200+), MOE descriptors, Dragon descriptors | Moderate to High (~50-5000) | High | Direct property prediction; space defined by physicochemical rules | Low (calculated ab initio) |

| Structural Fingerprints | ECFP4/Morgan, MACCS Keys, RDKit Fingerprint | Fixed (1024-4096 bits) | Moderate (substructure-based) | Similarity search, kernel-based GP models | Low (calculated ab initio) |

| Latent Representations | SMILES-based VAEs, Graph Neural Network (GNN) embeddings, JT-VAE | Low (~50-256) | Low | Navigating continuous, generative latent spaces; high-dimensional optimization | High (requires training data/model) |

Experimental Protocols

Protocol 3.1: Generating and Standardizing a Descriptor-Based Search Space

Objective: To create a standardized, ready-to-use numerical matrix for BO from a library of SMILES strings.

Materials:

- Input: A

.smior.csvfile containing SMILES strings and optional identifiers. - Software: RDKit (Python), Pandas, NumPy, Scikit-learn.

Procedure:

- Data Loading: Use

Pandasto read the input file. Employrdkit.Chem.PandasToolsto add a ROMol column. - Descriptor Calculation: Instantiate a

rdkit.ML.Descriptors.DescriptorCalculator. Use a predefined list (e.g.,rdkit.Chem.Descriptors.descListfor a comprehensive set). Calculate descriptors for all valid molecules. - Handling Missing/Invalid Values: Remove descriptors with

NaNorInfvalues for >5% of molecules, or impute using column median for minor missing data. - Standardization: Apply

sklearn.preprocessing.StandardScalerto all descriptor columns. Fit the scaler on the entire dataset (or a reference set) to transform data to zero mean and unit variance. - Output: Save the final

(n_molecules, n_descriptors)matrix as a NumPy array (.npy) for integration into the BO framework.

Application Note: High-dimensional descriptor spaces (>1000) may require dimensionality reduction (e.g., PCA) prior to BO to avoid the "curse of dimensionality" degrading GP performance.

Protocol 3.2: Building a Tanimoto Kernel for Fingerprint-Based BO

Objective: To implement a GP surrogate model using a molecular similarity kernel suitable for bit-vector fingerprints.

Materials:

- Input: A list of Morgan fingerprints (radius=2, nBits=2048) for the training set molecules.

- Software: RDKit, GPyTorch or GPflow, NumPy.

Procedure:

- Fingerprint Generation: For each SMILES, generate an ECFP4/Morgan fingerprint:

AllChem.GetMorganFingerprintAsBitVect(mol, radius=2, nBits=2048). - Define Tanimoto Kernel Function: The Tanimoto (Jaccard) similarity for bit vectors A and B is:

T(A,B) = (A·B) / (|A|² + |B|² - A·B). Implement a custom kernel function in your GP library that computes this pairwise similarity matrix. - GP Model Configuration: Construct a GP model using this custom Tanimoto kernel as the covariance function. The GP likelihood is typically Gaussian for continuous properties.

- Model Training: Optimize the GP hyperparameters (output scale, noise variance) by maximizing the marginal log likelihood on your training data (observed molecules and their target property).

- Integration with BO: Use the trained GP to predict the mean and variance at candidate points (new fingerprints) for the acquisition function (e.g., Expected Improvement).

Application Note: The Tanimoto kernel is a valid positive-definite kernel for binary vectors and is the natural choice for structural similarity, directly encoding the "similar property" principle.

Protocol 3.3: Constructing a Continuous Latent Space via Molecular Autoencoder

Objective: To train a variational autoencoder (VAE) to project discrete molecular structures into a continuous, smooth latent space suitable for BO.

Materials:

- Input: A large dataset of canonical SMILES (e.g., 500k+ from ZINC).

- Software: PyTorch or TensorFlow, RDKit, specialized libraries (ChemVAE, JT-VAE).

Procedure:

- Data Preprocessing: Tokenize SMILES strings (character-level or SMILES syntax-aware). Pad/truncate to a uniform length.

- Model Architecture:

- Encoder: A recurrent neural network (GRU/LSTM) or 1D CNN that processes the token sequence into a mean (

μ) and log-variance (logσ²) vector defining a multivariate Gaussian. - Latent Space: Sample a latent vector

zusing the reparameterization trick:z = μ + ε * exp(0.5*logσ²), whereε ~ N(0, I). - Decoder: A complementary RNN that conditions on

zand generates a token sequence (the reconstructed SMILES).

- Encoder: A recurrent neural network (GRU/LSTM) or 1D CNN that processes the token sequence into a mean (

- Training: Use a loss function combining reconstruction cross-entropy and the Kullback-Leibler (KL) divergence loss (weighted by a

βparameter) to enforce a regularized latent space. - Validation: Monitor reconstruction accuracy and validity of novel molecules sampled from the latent space.

- BO Integration: The search space for BO is the continuous

d-dimensional latent space. The objective function involves decoding a proposedzto a SMILES, calculating its properties (via oracle or simulation), and returning the value to the BO loop.

Application Note: The smoothness of the latent space is critical. A well-trained VAE ensures that small steps in latent space correspond to small structural changes, enabling efficient gradient-based acquisition function optimization.

Visualizations

Diagram 1: BO Workflow with Different Molecular Representations

Title: Bayesian Optimization Loop with Molecular Inputs

Diagram 2: Molecular Variational Autoencoder (VAE) Architecture

Title: Molecular Variational Autoencoder (VAE) Training

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Libraries for Molecular Representation

| Tool/Reagent | Type | Primary Function in Search Space Definition | Key Feature |

|---|---|---|---|

| RDKit | Open-Source Cheminformatics Library | Calculates molecular descriptors (e.g., rdkit.Chem.Descriptors), generates structural fingerprints (e.g., Morgan/ECFP), and handles SMILES I/O. |

Comprehensive, well-documented, and the de facto standard for Python-based cheminformatics. |

| Dragon | Commercial Descriptor Software | Generates an extremely large set (~5000) of molecular descriptors for QSAR and property prediction. | Unmatched breadth of descriptor types (0D-3D, topological, quantum-chemical). |

| mol2vec | Open-Source Python Library | Generates unsupervised molecular embeddings by applying Word2vec to SMILES substrings. | Provides a fixed-dimensional, continuous representation without a deep learning model. |

| ChemVAE / JT-VAE | Specialized Deep Learning Models | Trains variational autoencoders on molecular graphs (JT-VAE) or SMILES strings (ChemVAE) to create generative latent spaces. | Learns a continuous, interpolatable representation capturing chemical rules and semantics. |

| GPyTorch / GPflow | Gaussian Process Libraries | Enables building of custom GP surrogate models with tailored kernels (e.g., Tanimoto) for BO on molecular representations. | Scalable, flexible, and integrates seamlessly with modern deep learning frameworks. |

| Scikit-learn | Machine Learning Library | Provides essential utilities for data preprocessing (StandardScaler), dimensionality reduction (PCA), and baseline models. | Simplifies the pipeline from raw descriptors to a standardized input matrix for modeling. |

Within the broader thesis on Bayesian Optimization (BO) for chemical space exploration in drug discovery, the Closed-Loop Workflow represents the operational engine. This framework systematically encodes prior knowledge from computational models and historical data, designs optimal experiments to reduce uncertainty, and updates beliefs to iteratively guide the search for molecules with target properties (e.g., high potency, metabolic stability). It transforms a high-dimensional, sparse exploration problem into a data-efficient, adaptive learning process.

Foundational Data & Core Principles

Table 1: Key Quantitative Components of the Bayesian Optimization Loop

| Component | Symbol | Role in Chemical Space Exploration | Typical Value/Range |

|---|---|---|---|

| Prior Mean Function (μ₀(x)) | μ₀(x) | Encodes initial belief about molecular property (e.g., pIC₅₀ predicted by QSAR). | Domain-specific (e.g., 5.0 ± 2.0) |

| Kernel Function (k(x, x')) | k(x, x') | Quantifies molecular similarity; governs model smoothness. | Matérn 5/2 or Tanimoto kernel for fingerprints. |

| Acquisition Function (α(x)) | α(x) | Balances exploration/exploitation to select next compound(s). | Expected Improvement (EI), Upper Confidence Bound (UCB). |

| Batch Size | B | Number of compounds synthesized & tested per iteration. | 4-20 (dictated by lab throughput). |

| Convergence Threshold | Δ | Minimum improvement in best observed property to continue loop. | Δ pIC₅₀ < 0.1 over 3 iterations. |

Detailed Application Notes & Protocols

Application Note 1: Constructing the Informative Prior

- Objective: Initialize the BO surrogate model with a prior distribution that reflects existing knowledge, accelerating convergence.

- Protocol:

- Data Curation: Assemble historical assay data for related chemical series or public datasets (e.g., ChEMBL).

- Feature Representation: Encode molecules using learned representations (e.g., ECFP4 fingerprints, RDKit descriptors, or graph neural network embeddings).

- Prior Model Training: Train a fast, preliminary model (e.g., Random Forest or Gaussian Process) on the historical data to predict the target property.

- Prior Integration: Set the BO prior mean function μ₀(x) to the predictions of this preliminary model. The prior covariance is defined by the chosen kernel with initial hyperparameters.

Application Note 2: The Iterative Closed-Loop Cycle

- Objective: Execute one complete cycle of the BO loop, from candidate selection to model update.

- Protocol:

- Surrogate Model State: A Gaussian Process (GP) surrogate model represents the current belief about the property landscape across chemical space.

- Candidate Selection (Acquisition Optimization):

- Maximize the acquisition function α(x) (e.g., Expected Improvement) over the chemical space.

- Use a hybrid optimizer: genetic algorithm for global search followed by local gradient ascent.

- Select the top-B molecules (batch) that maximize α(x) while incorporating diversity penalties (e.g., via K-means clustering in the feature space) to avoid redundant tests.

- Experimental Erosion (Wet-Lab Testing):

- Synthesize the selected batch of compounds via automated or manual synthesis.

- Subject compounds to the target biochemical or cellular assay. Record quantitative dose-response data (e.g., IC₅₀).

- Posterior Update (Bayesian Inference):

- Append the new experimental data (molecule features Xnew, observed properties ynew) to the training set.

- Update the GP surrogate model via Bayesian inference, recalculating the posterior mean μₜ(x) and posterior variance σ²ₜ(x). This step analytically incorporates the new information, reducing uncertainty around tested regions and refining predictions globally.

Workflow Visualization

Diagram 1: The Bayesian Optimization Closed-Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Implementing the Closed-Loop Workflow

| Item/Reagent | Function in the Workflow | Example/Supplier Note |

|---|---|---|

| Chemical Building Blocks | Enables rapid synthesis of BO-selected compound structures. | COMBI-Blocks, Enamine REAL Space. Diverse, high-quality reactants for automated synthesis. |

| Automated Synthesis Platform | Executes parallel synthesis of batch candidates from BO. | Chemspeed Technologies SWING, Opentrons OT-2. Crucial for rapid iteration. |

| High-Throughput Screening (HTS) Assay Kit | Provides quantitative biological readout for tested compounds. | Target-specific biochemical assay (e.g., Kinase-Glo Max for kinases). Must be robust, miniaturizable. |

| Liquid Handling Robot | Automates assay setup and compound dispensing to ensure data quality and throughput. | Beckman Coulter Biomek, Hamilton Microlab STAR. |

| Molecular Featurization Software | Generates numerical descriptors/representations from chemical structures. | RDKit (open-source), MOE from Chemical Computing Group. |

Advanced Protocol: Handling Multi-Objective & Constrained Optimization

Protocol: Constrained Expected Improvement for Drug-like Compounds

- Objective: Optimize for primary activity (pIC₅₀) while enforcing constraints on drug-like properties (e.g., solubility > -5 logS, synthetic accessibility score < 4.5).

- Methodology:

- Modeling: Build independent GP surrogate models for the primary objective and each constraint property.

- Constrained Acquisition: Modify the Expected Improvement acquisition function to be zero where constraint GPs predict failure:

EI_C(x) = EI(x) * Πᵢ p(gᵢ(x) ≥ threshold). - Candidate Selection: Optimize

EI_C(x)to propose compounds that are likely to be active and drug-like. - Validation: Prioritize compounds passing in silico ADMET filters (e.g., using QikProp) before synthesis.

Diagram 2: Multi-Objective Bayesian Optimization Flow

Data Analysis & Posterior Interpretation

Table 3: Posterior Analysis for Iterative Decision-Making

| Posterior Output | Analytical Action | Guidance for Next Cycle |

|---|---|---|

| Posterior Mean Map (μₜ(x)) | Identify chemical subspaces with highest predicted property values. | Focus synthesis efforts around these "hot spots". |

| Posterior Uncertainty Map (σₜ(x)) | Identify large, unexplored regions of chemical space. | Design exploratory experiments or incorporate diverse library compounds. |

| Kernel Hyperparameters (length-scales) | Perform feature importance analysis; short length-scale indicates high sensitivity to that molecular feature. | Refine molecular representation or focus library design on key substructures. |

Implementing Bayesian Optimization: A Step-by-Step Guide for Molecular Design and Virtual Screening

Choosing the Right Acquisition Function (EI, UCB, PI) for Drug Discovery Objectives

Within the broader thesis on Bayesian optimization (BO) for chemical space exploration, the selection of an acquisition function is the critical strategic decision that guides the iterative search. This protocol details the application of three core functions—Expected Improvement (EI), Probability of Improvement (PI), and Upper Confidence Bound (UCB)—within drug discovery campaigns. The choice directly influences the balance between exploring novel chemical regions (exploration) and refining promising leads (exploitation), impacting the efficiency of identifying compounds with optimal properties like binding affinity, selectivity, and ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity).

The following table summarizes the mathematical formulation, core rationale, and key trade-offs for each function, based on a current synthesis of literature and practice.

Table 1: Quantitative and Qualitative Comparison of Key Acquisition Functions

| Function | Mathematical Formulation | Key Parameter | Primary Rationale | Exploration-Exploitation Balance | Best Suited For Drug Discovery Phase |

|---|---|---|---|---|---|

| Probability of Improvement (PI) | PI(x) = Φ((μ(x) - f(x⁺) - ξ) / σ(x)) |

ξ (jitter/trade-off) |

Maximizes the chance of exceeding the current best value (f(x⁺)). |

High exploitation bias; prone to getting stuck in local optima unless ξ is tuned. |

Late-stage lead optimization where fine-tuning a known scaffold is required. |

| Expected Improvement (EI) | EI(x) = (μ(x) - f(x⁺) - ξ)Φ(Z) + σ(x)φ(Z) where Z = (μ(x) - f(x⁺) - ξ)/σ(x) |

ξ (jitter/trade-off) |

Maximizes the expected magnitude of improvement over f(x⁺), considering both mean (μ) and uncertainty (σ). |

Balanced; automatically incorporates uncertainty. Considered the default robust choice. | General-purpose: virtual screening, hit-to-lead, and lead optimization. |

| Upper Confidence Bound (UCB) | UCB(x) = μ(x) + κ * σ(x) |

κ (exploration weight) |

Optimistic assessment of potential: mean plus weighted uncertainty. | Explicit, tunable via κ. High κ forces exploration. |

Early-stage exploration of vast, uncharted chemical space or targeting multi-objective Pareto fronts. |

Key: μ(x): Posterior mean prediction; σ(x): Posterior standard deviation (uncertainty); Φ: Cumulative distribution function (CDF); φ: Probability density function (PDF); f(x⁺): Current best observed value; ξ, κ: Tunable hyperparameters.

Table 2: Empirical Performance Summary from Benchmark Studies (Representative)

| Study Focus | Dataset/Test Case | Relative Performance Summary (Typical Finding) |

|---|---|---|

| Single-Objective BO | Synthetic Functions, Aqueous Solubility Prediction | EI consistently performs robustly. PI converges quickly but to inferior optima. UCB performance highly dependent on careful κ scheduling. |

| Multi-Objective BO | Drug-like Molecules w/ Affinity & Synthetic Accessibility Scores | UCB-variants (e.g., UCB-EI hybrids) often excel in exploring the Pareto front. EI (via expected hypervolume improvement) is also strong. PI is seldom used. |

| Batch / Parallel BO | Parallelized Molecular Docking | UCB-based methods (e.g., q-UCB) and hallucination-enabled EI (q-EI) are preferred for selecting diverse, informative batches of compounds for simultaneous evaluation. |

Experimental Protocol: Implementing BO with Acquisition Functions for a Binding Affinity Campaign

Objective: To identify a compound with sub-100 nM binding affinity (pIC₅₀ > 8) for a target protein within a budget of 200 molecular simulations (e.g., docking, free energy perturbation).

Materials & Computational Setup

- Hardware: High-performance computing cluster with GPU acceleration.

- Software: Python with BO libraries (BoTorch, GPyOpt), molecular simulation suite (Schrödinger, OpenMM), cheminformatics toolkit (RDKit).

- Chemical Space: Pre-enumerated virtual library of ~50,000 purchasable molecules (e.g., from ZINC20) with relevant descriptors/fingerprints.

Procedure

Step 1: Initialization (Iteration 0)

- Design of Experiment: Randomly select 20 diverse molecules from the virtual library using MaxMin diversity algorithm.

- Initial Evaluation: Run the defined binding affinity assay (e.g., molecular docking with MM/GBSA scoring) on the 20 initial molecules. Record pIC₅₀ values.

- Define Objective: Set the objective to maximize pIC₅₀.

Step 2: Iterative Bayesian Optimization Loop (Iterations 1 to N)

- Model Training: Train a Gaussian Process (GP) surrogate model using all accumulated

(molecule, pIC₅₀)data. Use a Matérn kernel. - Acquisition Function Selection & Optimization:

- Scenario A (General): Use Expected Improvement (EI) with

ξ=0.01. Maximize EI over the entire library using a multi-start optimization strategy. - Scenario B (Exploration-Focused): If the top compounds show high similarity, switch to UCB with

κ=2.5for the next 5 iterations to explore uncertain regions. - Scenario C (Exploitation-Focused): After identifying a promising region (pIC₅₀ > 7), use PI with a low

ξ=0.001to finely search the local chemical space.

- Scenario A (General): Use Expected Improvement (EI) with

- Candidate Selection: Select the molecule (

x*) that maximizes the chosen acquisition function. - Experimental Evaluation: Run the binding affinity assay on

x*. Record the result. - Data Augmentation: Add the new

(x*, pIC₅₀)pair to the training dataset. - Stopping Criterion: Check if pIC₅₀ > 8 (success) or iteration count = 200 (budget exhausted). If not met, return to Step 2.1.

Visual Workflows and Relationships

Title: Bayesian Optimization Workflow for Drug Discovery

Title: Decision Tree for Acquisition Function Selection

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational Tools & Materials for BO-Driven Discovery

| Tool/Reagent | Category | Function in Protocol | Example/Provider |

|---|---|---|---|

| GP Regression Library | Software | Core surrogate model for predicting compound properties and uncertainty. | GPyTorch, scikit-learn, GPflow |

| BO Framework | Software | Implements acquisition functions (EI, UCB, PI) and optimization loops. | BoTorch, GPyOpt, Dragonfly |

| Cheminformatics Toolkit | Software | Handles molecular representation (fingerprints, descriptors), filtering, and substructure search. | RDKit, OpenBabel |

| Molecular Simulation Suite | Software | Provides the "experimental" activity evaluation (e.g., docking, MD, FEP). | Schrödinger Suite, OpenMM, AutoDock Vina |

| Diverse Compound Library | Data | The search space of molecules, often pre-filtered for drug-likeness and purchaseability. | ZINC20, Enamine REAL, MCule |

| High-Throughput Assay | In-silico or Wet-lab | The function evaluator. Must be scalable to 100s-1000s of compounds. | Parallelized Cloud Docking, Automated Microplate Readers (for wet-lab) |

Integrating BO with Molecular Generative Models (VAEs, GANs, Diffusion Models)

The integration of Bayesian Optimization (BO) with deep molecular generative models represents a paradigm shift in the exploration and optimization of chemical space for drug discovery. This approach synergizes the sample efficiency of BO with the high-dimensional representation and generative power of models like Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Diffusion Models. Within a broader thesis on chemical space exploration, this integration provides a robust, iterative, and goal-directed framework for de novo molecular design, moving beyond pure generation to targeted optimization of properties such as binding affinity, solubility, and synthetic accessibility.

Core Paradigm: A learned latent space from a generative model serves as a compact, continuous representation of discrete molecular structures. BO operates within this latent space, using a probabilistic surrogate model (e.g., Gaussian Process) to model the relationship between latent vectors and a target property (objective function). It then proposes new latent points expected to improve the objective, which are decoded into novel molecular structures. This closes the loop between generative AI and experimental design.

Key Advantages:

- Efficiency: Dramatically reduces the number of expensive property evaluations (e.g., wet-lab assays, computational simulations) needed to find high-performing candidates.

- Goal-Directed: Actively steers generation towards regions of chemical space with desired properties, unlike unconditional generation.

- Handles Black-Box Objectives: Optimizes complex, non-differentiable, or noisy objective functions common in chemistry.

Quantitative Comparison of Generative Model-BO Frameworks

Table 1: Performance Comparison of BO-Guided Generative Models on Benchmark Tasks

| Generative Model | Benchmark Task (Dataset) | Success Rate (%) | Avg. Improvement in Objective* | No. of Iterations to Hit Target | Key Reference (Year) |

|---|---|---|---|---|---|

| VAE (JT-VAE) | Penalized LogP Optimization (ZINC) | 76.2 | +4.52 | ~20 | Gómez-Bombarelli et al. (2018) |

| GAN (MolGAN) | QED Optimization (ZINC) | 91.5 | +0.31 | < 10 | De Cao & Kipf (2018) |

| Diffusion Model (GeoDiff) | DRD2 Activity & SA (ZINC) | 99.0 | +0.85 (AUC) | ~15 | Xu et al. (2022) |

| VAE + GNN Predictor | Guacamol Benchmarks | 95.8 (avg.) | Varies by task | 50-100 | Winter et al. (2019) |

| Hierarchical GAN | Multi-Property Optimization (Solubility, LogP) | 88.3 | +1.7 (Composite Score) | ~30 | Putin et al. (2018) |

*Improvement over random sampling from the generative model's prior distribution.

Table 2: Characteristics of Generative Models for BO Integration

| Characteristic | VAEs | GANs | Diffusion Models |

|---|---|---|---|

| Latent Space | Continuous, regularized. Smooth interpolation. | Often discontinuous. Can have "holes". | Typically operates in input space or a learned latent; noise space is structured. |

| Training Stability | Stable. Prone to posterior collapse. | Unstable; requires careful tuning. | High stability, but computationally intensive. |

| Sample Diversity | Good, but can be less sharp. | High, sharp samples. | Very high, state-of-the-art quality. |

| Ease of BO Integration | High. Natural continuous space for GP. | Moderate. May require latent space regularization. | Moderate to High. Can optimize in noise or latent space. |

| Key Challenge for BO | Balancing reconstruction and property loss. | Navigating non-smooth latent manifolds. | High-dimensional optimization; longer generation time. |

Detailed Experimental Protocols

Protocol 3.1: Benchmarking BO-VAE for Penalized LogP Optimization

Objective: To optimize the penalized octanol-water partition coefficient (Penalized LogP) of generated molecules.

Materials: ZINC250k dataset, JT-VAE model, Gaussian Process (GP) with Matern kernel, acquisition function (Expected Improvement).

Procedure:

- Model Pre-training: Train a JT-VAE on the ZINC250k dataset to learn a continuous latent space

z(e.g., 56 dimensions) and a decoder for molecular graphs. - Latent Space Mapping: Encode the entire training set into latent vectors

Z_train. - Initial Data Collection: Randomly sample 100 points from

Z_train, decode them to SMILES, and compute their Penalized LogP scores (y_train) using the RDKit-based objective function. - BO Loop (for n = 100 iterations):

a. Surrogate Model Training: Train a GP on the current set of latent vectors and corresponding scores (

Z_obs,y_obs). b. Acquisition Optimization: Find the latent pointz_nextthat maximizes the Expected Improvement (EI) acquisition function:z_next = argmax EI(z | GP). c. Evaluation: Decodez_nextto a molecular graph and compute its Penalized LogP scorey_next. d. Data Augmentation: Append the new pair (z_next,y_next) to the observation set. - Validation: Assess the top 20 molecules identified by BO for validity, uniqueness, and structural novelty relative to the training set.

Protocol 3.2: BO-Driven Diffusion for Targeted Activity (DRD2)

Objective: To generate novel molecules with high predicted activity against the dopamine receptor DRD2 while maintaining favorable synthetic accessibility (SA).

Materials: GuacaMol/DRD2 subset, GraphMVP or GeoDiff model, Random Forest (RF) surrogate, Noisy Expected Improvement (NEI).

Procedure:

- Diffusion Model Training: Train a diffusion model on molecular graphs to learn the forward (noising) and reverse (denoising) processes.

- Define Objective:

F(m) = p(active | m) - λ * SA_score(m), wherep(active)is from a pre-trained DRD2 predictor. - Initialize: Generate 50 initial molecules via random sampling from the diffusion model and evaluate

F(m). - Latent/Noise Optimization: a. Map or associate generated molecules with their initial noise variables or a latent representation from the diffusion process. b. Train an RF surrogate model on the noise/latent vectors and their objective scores. c. Propose new noise/latent vectors by optimizing the NEI acquisition function over the surrogate. d. Use the diffusion model's reverse process to decode the proposed vectors into new molecules.

- Iterate: Repeat step 4 for 50-100 cycles, maintaining a batch size of 5-10 molecules per iteration.

- Analysis: Perform clustering on generated actives and visualize the chemical trajectory in a reduced dimensional space (e.g., t-SNE of molecular fingerprints).

Visualizations

Diagram 1: BO-Generative Model Integration Workflow

Diagram 2: Comparative Model-Specific BO Pathways

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Computational Tools for BO-Generative Model Research

| Tool / Library | Category | Primary Function | Key Notes |

|---|---|---|---|

| RDKit | Cheminformatics | Molecule manipulation, fingerprinting, descriptor calculation, and basic property calculation (e.g., LogP, SA). | Foundational open-source toolkit. Essential for objective function implementation. |

| PyTorch / TensorFlow | Deep Learning | Framework for building, training, and deploying generative models (VAEs, GANs, Diffusion). | PyTorch is prevalent in recent research. Autograd enables gradient-based acquisition optimization. |

| BoTorch / GPyTorch | Bayesian Optimization | Provides state-of-the-art GP models, acquisition functions, and optimization utilities. | Built on PyTorch. Supports batch, multi-fidelity, and constrained BO. |

| DeepChem | ML for Chemistry | High-level APIs for molecular datasets, featurization, and model architectures. | Simplifies pipeline construction. Includes graph neural networks and molecular metrics. |

| GuacaMol | Benchmarking | Suite of standardized tasks for assessing generative model performance. | Critical for fair comparison. Includes objectives like similarity, isomer generation, and medicinal chemistry tasks. |

| MOSES | Benchmarking | Another benchmarking platform with standardized datasets (ZINC), metrics, and baseline models. | Compliments GuacaMol. Focus on distribution-learning metrics. |

| Open Babel / ChemAxon | Cheminformatics | File format conversion, standardization, and advanced chemical property calculations. | Commercial options (ChemAxon) offer enterprise-grade stability and features. |

| Docker / Singularity | Containerization | Ensures computational environment and dependency reproducibility. | Crucial for replicating published work and deploying pipelines on clusters. |

Within the broader thesis on Bayesian optimization for chemical space exploration, this protocol details the application of active learning (AL) as a sequential decision-making strategy to maximize the discovery of hits in virtual screening campaigns. It frames the virtual screening pipeline as an adaptive Bayesian optimization loop, where an acquisition function balances exploration and exploitation to select the most informative compounds for subsequent assay.

Application Notes

Core Principles

Active learning iteratively selects compounds from a large, unlabeled library (10^6 - 10^9 molecules) for labeling (i.e., experimental assay or accurate simulation) based on a machine learning model's uncertainty or expected improvement. This contrasts with random screening or single-pass docking, dramatically improving hit rates and resource efficiency.

Key Quantitative Outcomes from Recent Studies

Table 1: Benchmark Performance of Active Learning vs. Conventional Virtual Screening

| Study (Year) | Library Size | Method | Hit Rate (Active) | Hit Rate (Random) | Fold Improvement |

|---|---|---|---|---|---|

| Yang et al. (2022) | 500,000 | AL w/ Graph Neural Net | 31.2% | 5.1% | 6.1x |

| Ghanakota et al. (2023) | 2.1 million | Bayesian Optimization | 15.7% | 2.3% | 6.8x |

| Janet et al. (2024) | 850,000 | Uncertainty Sampling (Docking) | 12.4% | 3.8% | 3.3x |

| Graff et al. (2023) | 5 million | Expected Improvement | 8.9% | 1.2% | 7.4x |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational & Experimental Materials

| Item | Function | Example Tools/Platforms |

|---|---|---|

| Molecular Library | Source of candidate compounds for screening. | ZINC20, Enamine REAL, Mcule, in-house collections. |

| Descriptor/Fingerprint Generator | Encodes molecular structures into numerical vectors for ML. | RDKit (Morgan fingerprints), Mordred descriptors, E3FP. |

| Docking Software | Provides initial, computationally cheap activity proxy. | AutoDock Vina, Glide, FRED, QuickVina 2. |

| Machine Learning Model | Predicts activity and quantifies uncertainty. | Gaussian Process, Random Forest, Deep Neural Networks, Graph Convolutional Networks. |

| Acquisition Function | Balances exploration/exploitation to select next compounds. | Expected Improvement, Upper Confidence Bound, Thompson Sampling. |

| Assay Platform | Provides experimental "labels" (activity data) for selected compounds. | Biochemical ELISA, SPR, Cell-based viability assay (e.g., CellTiter-Glo). |

| Automation & Orchestration | Manages iterative AL workflow and data flow. | Python (scikit-learn, PyTorch), Nextflow, Kubernetes, Kubeflow. |

Experimental Protocols

Protocol A: Initial Model Training & Acquisition Setup

Objective: Establish a baseline model from a seed set of known actives/inactives.

- Seed Data Curation: Compile a minimum of 50-100 known active and 200-500 known inactive compounds from public data (ChEMBL) or prior assays.

- Feature Calculation: For all seed molecules and the large unlabeled library, compute molecular features (e.g., 2048-bit Morgan fingerprints, radius=2).

- Model Training: Train a probabilistic classifier (e.g., Gaussian Process Classifier or Random Forest with calibrated probabilities) on the seed data.

- Acquisition Function Definition: Select a function (e.g., Expected Improvement, EI). EI for a molecule x is:

EI(x) = (μ(x) - f(x_best) - ξ) * Φ(Z) + σ(x) * φ(Z), where Z = (μ(x) - f(x_best) - ξ)/σ(x), μ is predicted mean, σ is uncertainty, Φ and φ are CDF and PDF of normal distribution, and ξ is an exploration parameter (typically 0.01).

Protocol B: Iterative Active Learning Cycle (Detailed)

Objective: Execute a single cycle of compound selection, experimental testing, and model update.

Materials: Trained model (Protocol A), unlabeled compound library, 96- or 384-well assay plates, reagents for target-specific assay.

Procedure:

- Prediction & Prioritization:

- Use the current model to predict mean activity (μ) and uncertainty (σ) for all compounds in the unlabeled library.

- Calculate the acquisition function score (e.g., EI) for each compound.

- Rank compounds by this score and select the top N (e.g., 96) for assay. Include 5-10% of randomly selected compounds for validation.

- Experimental Assay:

- Physically procure or synthesize the selected compounds.

- Prepare compound plates at 10 mM concentration in DMSO.

- Perform the target-specific activity assay (e.g., inhibition of enzyme activity at 10 μM). Include controls (positive, negative, DMSO-only).

- Process raw data (e.g., luminescence, absorbance) to determine percent inhibition or IC50.

- Apply a threshold (e.g., >50% inhibition) to label compounds as "active" or "inactive."

- Model Retraining:

- Append the newly assayed compounds and their labels to the training dataset.

- Retrain the machine learning model on the expanded dataset.

- Remove the newly assayed compounds from the unlabeled library pool.

- Iteration: Repeat steps 1-3 for a predefined number of cycles (e.g., 5-10) or until a target number of hits is identified.

Protocol C: Validation & Triaging of Final Hits

Objective: Confirm activity and prioritize top candidates for further development.

- Dose-Response Confirmation: Re-test all putative hits from the AL campaign in a dose-response format (e.g., 10-point, 1:3 serial dilution) to determine accurate IC50/EC50 values.

- Counter-Screening: Test confirmed hits against related but undesired targets to assess selectivity.

- Computational ADMET Profiling: Use QSAR models (e.g., in ADMETLab 3.0) to predict properties like solubility, metabolic stability, and CYP inhibition.

- Structural Clustering & Inspection: Cluster hits by fingerprint similarity and visually inspect representatives for sensible binding poses and chemical tractability.

Visualizations

Diagram Title: Active Learning Cycle for Virtual Screening

Diagram Title: Thesis Context of This Protocol

Multi-Objective Bayesian Optimization for Balancing Potency, ADMET, and Synthesizability

Within the broader thesis on Bayesian optimization (BO) in chemical space exploration, this document details its application to the central challenge of multi-objective drug discovery. The goal is to efficiently navigate the high-dimensional chemical space to identify compounds that simultaneously optimize multiple, often competing, properties: biological potency, favorable ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) profiles, and chemical synthesizability. Traditional sequential screening is inefficient and often fails to find optimal compromises. Multi-Objective Bayesian Optimization (MOBO) provides a principled framework to model these objectives and intelligently select compounds for synthesis and testing, thereby accelerating the identification of viable lead candidates.

Application Notes

1. Core MOBO Workflow for Compound Design The MOBO cycle iteratively refines a probabilistic surrogate model (typically Gaussian Processes) of each objective function based on accumulated experimental data. An acquisition function, such as Expected Hypervolume Improvement (EHVI) or ParEGO, guides the selection of the next batch of compounds to evaluate by balancing exploration of uncertain regions and exploitation of known high-performance areas in the multi-objective space. The outcome is a Pareto front of non-dominated solutions, representing optimal trade-offs between the objectives.

2. Key Objectives and Their Descriptors

- Potency (e.g., pIC50): Predicted using structure-based (docking scores, protein-ligand interaction fingerprints) or ligand-based (quantitative structure-activity relationship - QSAR) models.

- ADMET Properties: Modeled as a composite of individual predictions:

- Absorption: Caco-2 permeability, P-gp substrate liability.

- Metabolism: CYP450 inhibition (e.g., 2C9, 2D6, 3A4).

- Toxicity: hERG channel inhibition, Ames mutagenicity.

- Physicochemical: LogP, LogD, topological polar surface area (TPSA).

- Synthesizability: Scored using computational tools like Synthetic Accessibility (SA) score, retrosynthetic complexity (RAscore), or via integration with a forward synthesis predictor.

3. Quantitative Data Summary

Table 1: Representative Benchmark Results of MOBO vs. Random Search Data from simulated benchmarks using public datasets (e.g., ChEMBL).

| Optimization Method | Number of Iterations | Hypervolume (Normalized) | Pareto Front Size | Average Synthetic Accessibility Score |

|---|---|---|---|---|

| Random Search | 100 | 0.32 | 8 | 4.2 |

| MOBO (EHVI) | 100 | 0.78 | 15 | 3.5 |

| MOBO (ParEGO) | 100 | 0.71 | 12 | 3.7 |

Table 2: Target Ranges for Key ADMET and Physicochemical Parameters

| Property | Optimal Range | High-Risk Range | Prediction Model Used |

|---|---|---|---|

| LogP | 1 - 3 | >5 | AlogP |

| Topological PSA (Ų) | < 140 | >180 | RDKit |

| hERG pIC50 | < 5.0 | ≥ 5.0 | Proprietary QSAR |

| CYP3A4 Inhibition (IC50) | > 10 µM | ≤ 10 µM | Random Forest Classifier |

| Caco-2 Permeability | > 20 10⁻⁶ cm/s | < 5 10⁻⁶ cm/s | PAMPA-based Model |

Experimental Protocols

Protocol 1: Initialization of the MOBO Cycle Objective: To establish the initial dataset and surrogate models for a new chemical series.

- Compound Library Curation: Select a diverse set of 20-50 compounds from the chemical series of interest, ensuring availability for synthesis and testing.

- Baseline Profiling: Synthesize and experimentally profile all initial compounds for:

- Potency: Determine IC50/EC50 in primary biochemical or cellular assay (see Protocol 2).

- Key ADMET: Measure LogD (pH 7.4), microsomal stability, and hERG inhibition (see Protocol 3).

- Descriptor Calculation: For all compounds (initial and in virtual library), compute molecular descriptors/fingerprints (e.g., ECFP4, RDKit descriptors) and predicted properties using the QSAR models from Table 2.

- Model Training: Train independent Gaussian Process (GP) models for each objective (Potency, ADMET Score, SA Score) using the initial experimental data. Standardize all output values.

Protocol 2: Primary Potency Assay (Cell-Based Example) Objective: Determine the half-maximal inhibitory concentration (IC50) of a compound. Reagents: Target-expressing cell line, assay medium, reference agonist/antagonist, test compounds (10 mM DMSO stocks), detection kit (e.g., cAMP, calcium flux). Procedure:

- Seed cells in 384-well plates at optimal density. Incubate (37°C, 5% CO₂) for 24h.

- Prepare 10-point, 1:3 serial dilutions of test compounds in assay buffer. Include DMSO vehicle and reference control wells.

- Aspirate medium and add compound dilutions. Pre-incubate for 30 minutes.

- Add EC80 concentration of agonist to stimulate pathway response. Incubate per assay kinetics.

- Add detection reagent, incubate, and read signal on a plate reader (e.g., luminescence).

- Data Analysis: Normalize signals to vehicle (100%) and reference control (0%). Fit dose-response curve using a four-parameter logistic model to calculate IC50. Convert to pIC50 (-log10(IC50)).

Protocol 3: High-Throughput ADMET Screening Triad Objective: Obtain key ADMET parameters for a batch of MOBO-selected compounds (10-20).

- LogD Measurement (Shake Flask Method):

- Add compound to a vial containing equal volumes (0.5 mL) of 1-octanol and phosphate buffer (pH 7.4).

- Vortex vigorously for 30 min, then centrifuge to separate phases.

- Analyze concentration in each phase by UPLC/UV. LogD = log10([Compound]ₒcₜₐₙₒₗ / [Compound]բᵤբբᵣ).

- Microsomal Stability Assay:

- Incubate 1 µM compound with human liver microsomes (0.5 mg/mL) in NADPH-regenerating system at 37°C.

- At t = 0, 5, 15, 30, 45 min, remove aliquot and quench with cold acetonitrile.

- Analyze by LC-MS/MS to determine remaining parent compound. Calculate half-life (t₁/₂).

- hERG Inhibition (Patch Clamp Surrogate: FluxOR Assay):

- Use HEK293 cells stably expressing the hERG channel. Load cells with FluxOR dye.

- Add test compound and incubate for 10 min.

- Add stimulus solution containing high K⁺ to depolarize cells and open hERG channels. Measure fluorescence.

Visualizations

Title: MOBO Cycle for Drug Property Optimization

Title: Multi-Objective Trade-off & Pareto Front

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in MOBO-driven Discovery | Example/Note |

|---|---|---|

| Chemical Starting Materials | Building blocks for synthesizing MOBO-proposed compounds. | Diverse, readily available commercial libraries (e.g., Enamine REAL). |

| Molecular Descriptor Software | Generates numerical features representing chemical structures for GP models. | RDKit (open-source), MOE, Dragon. |

| Gaussian Process Modeling Library | Core engine for building surrogate models of each objective. | GPyTorch, scikit-learn, or proprietary implementations. |

| Acquisition Function Optimizer | Solves the high-dimensional problem of selecting the next best compounds. | BoTorch (for EHVI), custom evolutionary algorithms. |

| High-Throughput ADMET Assay Kits | Provide standardized, rapid in vitro profiling of key properties. | CYP450 Inhibition (Promega), Caco-2 Permeability (Corning), hERG FluxOR (Invitrogen). |

| Automated Synthesis Platform | Enables rapid compound synthesis based on MOBO selections. | Chemspeed, Unchained Labs, or flow chemistry setups. |

| Laboratory Information System (LIMS) | Tracks compound identity, experimental data, and links to calculated descriptors. | Critical for maintaining the central MOBO database. |

This application note contributes to the broader thesis on Bayesian Optimization (BO) in chemical space exploration by providing a pragmatic, experimentally validated case study. It demonstrates how BO can iteratively guide the simultaneous optimization of molecular properties (e.g., potency, solubility) and facilitate scaffold hopping—discovering novel core structures with retained or improved activity—thereby de-risking intellectual property and physicochemical profiles in drug discovery campaigns.

Core Bayesian Optimization Protocol

Objective: To identify compounds within a defined virtual library (>50,000 molecules) that maximize a multi-parameter objective function, F, within 50 sequential synthesis-test cycles.

A. Pre-Experimental Setup Protocol

- Define Chemical Space: Enumerate a virtual library based on available building blocks and robust reaction schemes (e.g., amide coupling, Suzuki-Miyaura).

- Featurization: Compute numerical descriptors (e.g., ECFP6 fingerprints, molecular weight, cLogP, topological polar surface area) for all virtual compounds.

- Define Objective Function: Construct a composite desiderability function. F(compound) = w₁ * Normalized(pIC₅₀) + w₂ * Normalized(Solubility) + w₃ * Penalty(Lipinski Violations) (Example weights: w₁=0.6, w₂=0.3, w₃=0.1).

- Initialize: Select 8-12 diverse seed compounds from the virtual library using MaxMin algorithm and synthesize/test to create initial training data.

B. Iterative BO Cycle Protocol

- Model Training: Train a Gaussian Process (GP) regression model using the historical data (compound features → experimental F score).

- Acquisition Function Optimization: Calculate the Expected Improvement (EI) for all compounds in the virtual library using the trained GP.

- Compound Selection: Select the top 4-6 compounds with the highest EI scores for synthesis.

- Experimental Testing:

- Potency Assay: Perform dose-response in target enzyme assay (e.g., 10-point, 1:3 serial dilution, n=2). Fit curve to determine pIC₅₀.

- Solubility Assay: Use kinetic turbidimetric solubility assay (pH 7.4 phosphate buffer).

- Data Integration: Append new experimental results to the training dataset.

- Iterate: Repeat steps 1-5 for 8-12 cycles or until a candidate meets all target product profile (TPP) criteria.

Key Experimental Data & Results

Table 1: Optimization Progression for Lead Series A

| BO Cycle | Compounds Tested | Best pIC₅₀ | Best Solubility (µg/mL) | Best Objective Function (F) |

|---|---|---|---|---|

| Initial Seeds | 10 | 6.2 | 15 | 0.41 |

| 3 | 24 | 7.1 | 8 | 0.58 |

| 6 | 42 | 7.8 | 22 | 0.76 |

| 9 | 60 | 8.5 | 52 | 0.92 |

Table 2: Scaffold Hop Discovery via BO (Cycle 7)

| Parameter | Original Lead (Scaffold A) | BO-Identified Hop (Scaffold B) |

|---|---|---|

| Core Structure | Benzimidazole | Indole |

| pIC₅₀ | 7.8 | 8.1 |

| Solubility (µg/mL) | 22 | 105 |

| clogP | 4.1 | 2.8 |

| Synthetic Steps | 5 | 4 |

| Patent Novelty | Known | Novel |

Visualizations

Bayesian Optimization Iterative Cycle for Drug Discovery

BO Balances Exploitation and Exploration in Chemical Space

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Featured Experiments

| Item / Reagent | Function in Protocol | Key Consideration |

|---|---|---|

| Building Block Libraries (e.g., carboxylic acids, boronic esters, amines) | Provide the chemical diversity for virtual library enumeration and rapid synthesis. | Ensure chemical stability, orthogonality of protecting groups, and availability in milligram to gram quantities. |

| High-Throughput Chemistry Kit (e.g., peptide synthesizer, flow reactor) | Enables rapid synthesis of 4-12 compounds per BO cycle as directed by the algorithm. | Compatibility with anhydrous solvents and air-sensitive reagents is often required. |

| Target Protein / Enzyme Assay Kit | Provides the essential biological components for reliable, quantitative potency (pIC₅₀) measurement. | Assay signal-to-noise (Z'-factor >0.5) and reproducibility are critical for high-quality BO training data. |

| Pre-Solubilized DMSO Stock Plates | Used to prepare serial dilutions for biochemical and solubility assays from synthesized powders. | Use low-evaporation, sealed plates. Final DMSO concentration must be consistent and non-perturbing (e.g., ≤1%). |

| Kinetic Turbidity Solubility Assay Plate | Enables rapid, medium-throughput measurement of aqueous solubility (µg/mL) in physiologically relevant buffer. | Includes positive/negative controls and a reference standard curve for quantitation. |

| Gaussian Process Software (e.g., GPyTorch, scikit-learn, custom scripts) | The core machine learning model that predicts compound performance and uncertainty from features. | Must be configured for the chosen molecular descriptors and allow custom composite objective functions. |

Overcoming Pitfalls: Troubleshooting and Advanced Optimization Strategies for Robust BO Performance

Managing Noisy and Sparse Data from Biological Assays

Introduction & Thesis Context Within the broader thesis on Bayesian optimization (BO) for chemical space exploration, managing noisy and sparse biological assay data is a foundational challenge. BO's efficiency in guiding iterative molecular design cycles is critically dependent on the quality of the initial training data and the handling of uncertainty in subsequent measurements. Noisy data (high experimental variance) and sparse data (few data points across a vast chemical space) can lead to poor surrogate model performance, misguided acquisition function decisions, and ultimately, failed optimization campaigns. This document outlines protocols and analytical strategies to mitigate these issues, ensuring robust BO performance in early-stage drug discovery.

Core Challenges in Quantitative Analysis

Table 1: Common Sources of Noise and Sparsity in Biological Assays

| Source Type | Specific Example | Impact on Data | Typical Z'-factor Range |

|---|---|---|---|

| Biological Noise | Cell passage number variability, differential receptor expression. | High well-to-well variance, outliers. | 0.3 - 0.5 (Moderate) |

| Technical Noise | Pipetting inaccuracy, edge effects in microplates, reagent instability. | Systematic error, increased CVs (>20%). | 0.0 - 0.3 (Poor) |

| Assay Sparsity | Limited HTS data on target, few confirmed actives in a chemical series. | Inadequate coverage of chemical space for model training. | N/A |

| Compound Sparsity | Poor solubility, compound aggregation, fluorescence interference. | False negatives/inactives, erroneous dose-response. | Can drive Z' negative |

Protocol 1: Pre-BO Data Curation and Quality Control

Objective: To establish a robust, standardized dataset for initializing the Bayesian optimization surrogate model.

Materials & Workflow:

- Data Aggregation: Collect all historical assay data for the target. Include primary readouts (e.g., % inhibition, IC₅₀) and associated metadata (compound structure, batch ID, plate layout, control values).

- Noise Filtering & Normalization:

- Calculate plate-wise Z'-factor and signal-to-noise ratio (SNR). Exclude entire plates with Z' < 0.5 from the training set.

- Apply robust intra-plate normalization (e.g., using median positive and negative controls) to minimize plate-to-plate systematic bias.

- Identify and flag statistical outliers using methods like Median Absolute Deviation (MAD), but do not automatically exclude—review for biological plausibility.

- Uncertainty Quantification: For each measurement, assign an estimate of variance (σ²). This can be:

- Empirical: Replicate-derived standard error.

- Assay-derived: A function of the mean and historical coefficient of variation (CV) for the assay (e.g., σ = mean * CV).

- Default variance for single-point data can be set based on the assay's historical performance.

- Sparsity Mitigation: Enrich the initial training set with relevant public domain data (e.g., ChEMBL) and computationally predicted activity scores from QSAR models, clearly labeling the source and associated higher uncertainty.

Visualization 1: Data Curation Workflow for BO Initialization

Title: Data Curation Workflow for BO Initialization

Protocol 2: Experimental Design for Iterative BO Cycles

Objective: To guide the selection of compounds for synthesis and testing in each BO batch, balancing exploration (sparse regions) and exploitation (potent regions) while accounting for noise.

Detailed Protocol:

- Surrogate Model Configuration: Use a Gaussian Process (GP) model with a Matérn kernel. Input molecular fingerprints (e.g., ECFP4) and assay uncertainty estimates (σ²) as heteroscedastic noise.

- Acquisition Function Selection: Employ the Noisy Expected Improvement (NEI) or Predictive Entropy Search, which explicitly model measurement noise.

- Batch Design: For a batch size of n (e.g., 24 compounds):

- Optimize the acquisition function to select the top n x 3 candidates.

- Apply a Diversity Filter: Cluster candidates by structural fingerprints (e.g., Tanimoto similarity). Select the top-ranked compound from each major cluster to ensure chemical diversity and mitigate over-sampling a local, potentially noisy region.

- Include Replication Compounds: Randomly select 2-3 compounds from previous batches for re-testing within the same experimental batch to provide a live estimate of inter-batch noise.

- Experimental Execution: Test the final batch in a single, randomized plate layout to minimize technical confounding. Include standard control compounds in replicates.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Robust Assay Development

| Reagent/Material | Function & Rationale |

|---|---|

| Cell Line with Inducible Target Expression | Controls for target-specific effects vs. cytotoxicity; reduces biological noise from constitutive expression. |

| NanoBRET or HTRF Assay Kits | Homogeneous, ratiometric assays minimize washing steps and plate handling errors, reducing technical noise. |

| QC Reference Compound Set | A panel of tool compounds (high/low potency, aggregators) run in every assay batch to monitor performance drift. |

| Automated Liquid Handler with Acoustic Dispensing | Enables non-contact, precise nanoliter dispensing of DMSO stocks, reducing solvent effects and pipetting error. |

| 384-well Low Binding, Solid-Bottom Microplates | Minimizes compound adsorption and provides optimal optical characteristics for read consistency. |

Visualization 2: Bayesian Optimization Cycle with Noise Handling

Title: BO Cycle with Noise-Aware Protocols

Data Integration & Reporting

Table 3: Example Output from a Single BO Batch

| Compound ID | Predicted pIC₅₀ (μ) | Predicted Uncertainty (σ) | Experimental pIC₅₀ | Replicate Result | Notes |

|---|---|---|---|---|---|

| BO-B1-01 | 6.7 | 0.4 | 6.5 | 6.6 | New chemotype, confirmed. |