AI-Powered Molecular Design: Revolutionizing Drug Discovery from Virtual Screening to Clinical Candidates

Abstract

This article provides a comprehensive analysis of the transformative role of Artificial Intelligence (AI) in molecular design for researchers, scientists, and drug development professionals. We first explore the foundational shift from traditional methods to AI-driven approaches, defining key concepts like generative chemistry and predictive modeling. We then detail the core methodologies, including Generative Adversarial Networks (GANs), Reinforcement Learning (RL), and Transformer models, with specific applications in de novo design and property prediction. The discussion addresses critical challenges such as data scarcity, model interpretability (the 'black box' problem), and synthetic feasibility, offering strategies for optimization. Finally, we evaluate validation frameworks, benchmark AI performance against traditional computational chemistry, and assess the real-world impact through case studies of AI-derived molecules entering clinical trials. This resource synthesizes current capabilities, practical hurdles, and the future trajectory of AI in accelerating biomedical innovation.

From Serendipity to Simulation: How AI is Redefining the Foundations of Molecular Design

Within the broader thesis on the role of artificial intelligence in molecular design research, the transition from Traditional High-Throughput Screening (HTS) to AI-Driven Virtual Screening (VS) represents a fundamental paradigm shift. This shift is characterized by a move from brute-force empirical testing to predictive, knowledge-driven computational intelligence, accelerating the discovery of novel bioactive compounds.

Core Methodologies

Traditional High-Throughput Screening (HTS) Protocol

Traditional HTS is an empirical, experimental process for identifying hits from large chemical libraries.

- Assay Development: A biochemical or cellular assay is designed to measure a specific target activity (e.g., enzyme inhibition, receptor antagonism). The signal-to-noise ratio and Z'-factor (>0.5) are optimized.

- Library Preparation: Compound libraries (100,000 to 2+ million compounds) are solubilized in DMSO and arrayed in high-density microtiter plates (384- or 1536-well).

- Automated Screening: Robotic liquid handlers transfer assay reagents and compounds. Plates are incubated and read by detectors (e.g., spectrophotometers, fluorimeters).

- Primary Screen & Hit Identification: Activity is measured for all compounds. Hits are identified using a statistical threshold (e.g., >3σ from mean activity or >50% inhibition/activation).

- Confirmation & Counterscreening: Primary hits are re-tested in dose-response and against related targets to confirm activity and assess selectivity.

- Hit-to-Lead: Confirmed hits undergo initial medicinal chemistry optimization for potency and physicochemical properties.
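The Z'-factor criterion and the 3σ hit threshold from the steps above can be illustrated with a minimal sketch. The control readings and plate values are hypothetical, and the helper names `z_prime` and `call_hits` are introduced here for illustration only; real campaigns usually add plate normalization and robust statistics.

```python
from statistics import mean, stdev

def z_prime(pos_controls, neg_controls):
    """Z'-factor: 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    window = abs(mean(pos_controls) - mean(neg_controls))
    return 1.0 - 3.0 * (stdev(pos_controls) + stdev(neg_controls)) / window

def call_hits(values, n_sigma=3.0):
    """Indices of wells more than n_sigma standard deviations above the plate mean."""
    cutoff = mean(values) + n_sigma * stdev(values)
    return [i for i, v in enumerate(values) if v > cutoff]

# Hypothetical control wells (raw signal) used for assay quality control.
pos = [98.0, 102.0, 100.0, 99.0, 101.0]
neg = [9.0, 11.0, 10.0, 10.5, 9.5]
zp = z_prime(pos, neg)

# Hypothetical percent-inhibition readings for 20 compound wells.
plate = [1.0, -2.0, 0.5, 2.0, -1.0, 0.0, 1.5, -0.5, 85.0, 0.2,
         -1.2, 0.8, 0.3, -0.7, 1.1, -1.6, 0.9, -0.4, 0.6, -0.1]
hits = call_hits(plate)

print(f"Z' = {zp:.2f}, assay acceptable: {zp > 0.5}, hit wells: {hits}")
```

Because a strong hit inflates the plate mean and standard deviation, some groups compute the cutoff from negative-control wells or use median-based statistics instead; that choice is outside what the protocol above specifies.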

AI-Driven Virtual Screening (VS) Protocol

AI-Driven VS uses machine learning models to computationally prioritize compounds for experimental testing.

- Data Curation & Featurization: High-quality bioactivity data (e.g., Ki, IC50) is assembled from public (ChEMBL, PubChem) and proprietary sources. Compounds are encoded as numerical features (e.g., ECFP fingerprints, molecular descriptors, 3D pharmacophores).

- Model Training & Validation: A machine learning model (e.g., Random Forest, Graph Neural Network, Transformer) is trained to predict activity from features. The dataset is split into training, validation, and hold-out test sets using temporal or structure-based (scaffold or cluster) splits, so that close analogs of training compounds do not inflate test performance.

- Virtual Library Generation/Enumeration: A virtual chemical space is defined, often using large make-on-demand libraries (e.g., Enamine REAL, ZINC) containing billions of synthesizable molecules.

- In Silico Screening: The trained AI model scores and ranks every compound in the virtual library by predicted activity or binding affinity.

- Post-Filtering & Inspection: Top-ranked compounds are filtered by medicinal chemistry rules (e.g., Lipinski's Rule of Five, PAINS filters) and inspected via molecular docking or expert review.

- Experimental Validation: A small subset (50-500) of top-ranked, diverse compounds is selected for synthesis or acquisition and tested in the biological assay.
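The post-filtering and selection steps can be sketched as follows. This is an illustration under stated assumptions: the compound names, scores, and property values are invented, the descriptors are assumed to be precomputed by a cheminformatics toolkit, and the common "at most one violation" reading of Lipinski's Rule of Five is used.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    name: str
    score: float  # model-predicted activity, higher is better
    mw: float     # molecular weight (Da)
    logp: float   # calculated logP
    hbd: int      # hydrogen-bond donors
    hba: int      # hydrogen-bond acceptors

def rule_of_five_violations(c):
    """Count how many of the four Lipinski criteria a compound breaks."""
    return sum([c.mw > 500, c.logp > 5, c.hbd > 5, c.hba > 10])

def shortlist(candidates, n, max_violations=1):
    """Drop compounds with too many violations, then keep the n best-scoring ones."""
    kept = [c for c in candidates if rule_of_five_violations(c) <= max_violations]
    return sorted(kept, key=lambda c: c.score, reverse=True)[:n]

# Hypothetical scored library entries.
library = [
    Candidate("cmpd_A", 0.91, 342.4, 2.1, 2, 5),
    Candidate("cmpd_B", 0.97, 712.9, 6.3, 4, 9),  # two violations: MW and logP
    Candidate("cmpd_C", 0.55, 298.3, 1.4, 1, 4),
    Candidate("cmpd_D", 0.88, 512.6, 4.2, 3, 8),  # one violation (MW), still kept
]
top = shortlist(library, n=2)
print([c.name for c in top])
```

A production pipeline would also apply PAINS substructure filters and a diversity selection, which require a cheminformatics library and are omitted here.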

Quantitative Comparison

Table 1: Comparative Metrics of HTS vs. AI-Driven VS

| Metric | Traditional HTS | AI-Driven Virtual Screening |

|---|---|---|

| Typical Library Size | 10^5 - 10^6 physical compounds | 10^8 - 10^11 virtual compounds |

| Primary Screen Cost | $0.10 - $0.50 per compound | < $0.00001 per compound (compute cost) |

| Time for Primary Screen | Weeks to months | Hours to days |

| Hit Rate | 0.01% - 0.1% (often lower) | 5% - 30% (model-dependent) |

| Required Starting Data | Assay only | Large, consistent bioactivity dataset |

| Key Output | Experimental activity of whole library | Predicted activity & prioritized shortlist |

| Resource Intensity | High (reagents, robotics, compounds) | High (compute, data science expertise) |

Table 2: Retrospective Validation Study Results (2020-2024)

| Study (Target) | HTS Hit Rate | AI-VS Enrichment (EF1%)* | AI Model Type | Citation |

|---|---|---|---|---|

| SARS-CoV-2 Mpro | Not reported | 30.2 (vs. 1.2 for random) | Graph Neural Network | Science, 2021 |

| Dopamine Receptor D2 | 0.8% | 14.5 | Deep Learning / SVM | Nat. Commun., 2023 |

| Tankyrase | 0.01% | 22.0 | Bayesian Optimization | J. Med. Chem., 2022 |

*Enrichment Factor at 1% of screened library (EF1%): (Hit rate in top 1%) / (Random hit rate).
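As a worked example of the EF1% definition above, the following sketch ranks a synthetic library by score and compares the hit rate in the top 1% with the overall hit rate. The library, scores, and positions of the actives are made up for illustration.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """EF = (hit rate in the top-scoring fraction) / (hit rate in the whole library)."""
    n = len(scores)
    n_top = max(1, round(n * fraction))
    ranked = sorted(range(n), key=lambda i: scores[i], reverse=True)
    hits_top = sum(labels[i] for i in ranked[:n_top])
    total_hits = sum(labels)
    if total_hits == 0:
        raise ValueError("no actives in the library; EF is undefined")
    return (hits_top / n_top) / (total_hits / n)

# Synthetic library: 1000 compounds, 10 actives, 5 of them ranked in the top 10.
n = 1000
scores = [float(n - i) for i in range(n)]           # compound 0 has the best score
active = set(range(5)) | set(range(500, 505))
labels = [1 if i in active else 0 for i in range(n)]

ef1 = enrichment_factor(scores, labels)
print(f"EF1% = {ef1:.1f}")
```

Here the top 1% contains 5 actives out of 10 compounds (50% hit rate) against a 1% baseline, so the enrichment factor is 50.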

Visualized Workflows

[Figure: Traditional HTS Experimental Workflow]

[Figure: AI-Driven Virtual Screening Workflow]

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Reagents & Materials for Featured Methods

| Item | Function in HTS | Function in AI-VS |

|---|---|---|

| Target Protein / Cell Line | Biological source for assay development. Purified protein or engineered cell line. | Not used directly in screening. Used for final experimental validation of AI-prioritized compounds. |

| Fluorescent/Luminescent Probe | Generates quantifiable signal proportional to target activity in microtiter plates. | Not applicable. |

| DMSO & Compound Libraries | Solvent for compound storage. Physical collections from vendors (e.g., MLSMR). | Source of training data structures. Virtual libraries in digital format (SDF, SMILES). |

| Microtiter Plates (384/1536-well) | Reaction vessels for miniaturized, parallel assays. | Not applicable. |

| Robotic Liquid Handlers | Automate reagent and compound dispensing for ultra-high-throughput. | Not applicable. |

| Bioactivity Databases (ChEMBL, PubChem) | Reference for assay design and hit comparison. | Primary source of labeled data for supervised machine learning model training. |

| Molecular Featurization Software (RDKit, MOE) | Basic compound analysis. | Critical for converting chemical structures into numerical feature vectors (descriptors, fingerprints). |

| AI/ML Platform (TensorFlow, PyTorch) | Not typically used. | Core engine for building, training, and deploying predictive models. |

| High-Performance Computing (HPC) Cluster | For data analysis. | Essential for processing billion-scale libraries and training complex deep learning models. |

Within the broader thesis on the role of artificial intelligence in molecular design research, understanding the distinction between machine learning (ML) and deep learning (DL) is fundamental. This guide provides a technical framework for researchers, scientists, and drug development professionals to select and apply appropriate AI methodologies for chemical discovery.

Foundational Concepts: ML vs. DL

Machine Learning is a subset of AI where algorithms learn patterns from data to make predictions or decisions without being explicitly programmed for the task. Deep Learning is a specialized subset of ML based on artificial neural networks with multiple layers (deep architectures) that automatically learn hierarchical feature representations.

Table 1: Core Comparative Analysis of ML and DL in Chemical Research

| Aspect | Traditional Machine Learning (ML) | Deep Learning (DL) |

|---|---|---|

| Data Dependency | Effective with small to medium datasets (10^2-10^4 samples). | Requires large datasets (10^4-10^7 samples) for robust performance. |

| Feature Engineering | Critical. Requires domain expertise to design molecular descriptors (e.g., LogP, MW, topological indices). | Automatic. Learns relevant features directly from raw or minimally processed data (e.g., SMILES, graphs). |

| Model Interpretability | Generally higher (e.g., feature importances from Random Forest, coefficients of a linear SVM). | Often a "black box"; requires specialized techniques (e.g., attention mechanisms, saliency maps). |

| Computational Cost | Lower; can run on standard CPUs. | High; typically requires GPUs/TPUs for training. |

| Typical Chemical Applications | QSAR modeling, virtual screening with fixed fingerprints, reaction yield prediction. | De novo molecular generation, protein structure prediction (e.g., AlphaFold), protein-ligand binding affinity prediction, spectral analysis. |

Key Methodologies and Experimental Protocols

Protocol for a Traditional ML QSAR/QSPR Workflow

This protocol outlines the standard pipeline for building a Quantitative Structure-Activity/Property Relationship model using classical ML algorithms.

A. Data Curation & Splitting:

- Source a labeled dataset of molecules with associated target property/activity.

- Apply chemical standardization (e.g., using RDKit): neutralize charges, remove duplicates, handle tautomers.

- Split data into training (≈70%), validation (≈15%), and test (≈15%) sets. Use a scaffold-based or time-based split to limit data leakage between sets, and stratify by label when classes are imbalanced.

B. Feature Engineering (Descriptor Calculation):

- Calculate a comprehensive set of molecular descriptors (e.g., using RDKit or Mordred).

- 1D/2D Descriptors: Molecular weight, LogP, topological polar surface area (TPSA), atom counts, bond counts, fingerprint vectors (ECFP, MACCS keys).

- 3D Descriptors (if structures available): Pharmacophore features, molecular moment of inertia. Requires geometry optimization.

- Perform feature preprocessing: imputation of missing values, variance filtering, and normalization (e.g., StandardScaler).

C. Model Training & Validation:

- Train multiple ML algorithms (e.g., Random Forest, Gradient Boosting, Support Vector Regression/Classification) on the training set.

- Optimize hyperparameters (e.g., via grid/random search) using the validation set. Key metrics: RMSE, MAE for regression; ROC-AUC, precision-recall for classification.

- Apply rigorous cross-validation (e.g., 5-fold GroupKFold if molecules share scaffolds) to detect overfitting and obtain a realistic performance estimate.

D. Model Evaluation & Interpretation:

- Evaluate the final optimized model on the held-out test set.

- Interpret the model using feature importance rankings (e.g., Gini importance from Random Forest) or SHAP (SHapley Additive exPlanations) values to identify critical molecular features.
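The grouped cross-validation idea in step C can be sketched without any external library. This is not scikit-learn's `GroupKFold` implementation, only a greedy stand-in that shows the key property: molecules sharing a scaffold never appear on both sides of a split. The scaffold labels are hypothetical and assumed to come from a prior scaffold-assignment step.

```python
from collections import defaultdict

def group_k_fold(groups, k=5):
    """Yield (train_idx, test_idx) pairs in which no group spans both sides."""
    members = defaultdict(list)
    for idx, g in enumerate(groups):
        members[g].append(idx)
    # Greedy balancing: place the largest groups first, each into the smallest fold.
    folds = [[] for _ in range(k)]
    for g in sorted(members, key=lambda name: len(members[name]), reverse=True):
        min(folds, key=len).extend(members[g])
    for i in range(k):
        test = sorted(folds[i])
        train = sorted(j for f in folds[:i] + folds[i + 1:] for j in f)
        yield train, test

# Hypothetical scaffold label for each of eight molecules.
scaffolds = ["benzene", "benzene", "pyridine", "indole",
             "indole", "indole", "furan", "pyridine"]
splits = list(group_k_fold(scaffolds, k=3))

for train, test in splits:
    print("test scaffolds:", sorted({scaffolds[i] for i in test}))
```

Each fold's test scaffolds are unseen during training for that fold, which gives a more conservative estimate than a random split.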

Protocol for a Deep Learning-Based Molecular Property Prediction

This protocol details an approach using a Graph Neural Network (GNN), which directly operates on the molecular graph structure.

A. Data Representation & Preparation:

- Represent each molecule as a graph: atoms as nodes, bonds as edges.

- Node Features: Encode atom type, degree, hybridization, formal charge, aromaticity, etc., as a feature vector.

- Edge Features: Encode bond type (single, double, triple), conjugation, and stereo.

- Use a graph data loader to batch graphs of varying sizes for efficient GPU processing (e.g., PyTorch Geometric, DGL).

B. Model Architecture (Graph Neural Network):

- Input Layer: Takes the batched graph (node + edge features).

- Graph Convolution Layers (Message Passing): 3-5 layers where nodes aggregate feature information from their neighbors (e.g., using GCN, GAT, or MPNN convolutions). This builds up progressively more complex representations of molecular substructures.

- Readout/Pooling Layer: Aggregates the updated node features from the final layer into a single, fixed-length graph-level representation (e.g., global mean/sum pooling).

- Prediction Head: Fully connected neural network layers map the graph-level vector to the final property prediction (a scalar for regression, a probability vector for classification).

C. Training & Evaluation:

- Loss Function: Use Mean Squared Error (MSE) for regression or Cross-Entropy for classification.

- Optimization: Use Adam optimizer with a learning rate scheduler (e.g., ReduceLROnPlateau).

- Monitor performance on the validation set after each epoch to prevent overfitting. Apply early stopping if validation loss plateaus.

- Final evaluation is performed on the completely independent test set.
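The early-stopping rule in step C can be sketched independently of any deep learning framework. The `EarlyStopping` class and the validation-loss sequence below are illustrative assumptions; a real training loop would compute the loss from the validation set each epoch and restore the best checkpoint.

```python
class EarlyStopping:
    """Stop when validation loss has not improved for `patience` consecutive epochs."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, val_loss, epoch):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.best_epoch = epoch
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

# Hypothetical validation losses, one per epoch.
val_losses = [0.90, 0.70, 0.62, 0.60, 0.61, 0.63, 0.60, 0.64, 0.66]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(loss, epoch):
        stopped_at = epoch
        break

print(f"stopped at epoch {stopped_at}, best epoch {stopper.best_epoch}")
```

With a patience of 3, training halts after three epochs without a strict improvement over the best loss seen at epoch 3.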

Visualization of Workflows

[Figure: Traditional ML QSAR Workflow]

[Figure: Deep Learning GNN Workflow]

Table 2: Quantitative Performance Comparison (Representative Examples)

| Task | Best ML Model (Descriptor-Based) | Performance | Best DL Model | Performance | Key Insight |

|---|---|---|---|---|---|

| ESOL (Solubility) | Random Forest on Mordred Descriptors | RMSE ≈ 0.70 log mol/L | AttentiveFP (GNN) | RMSE ≈ 0.59 log mol/L | DL outperforms with automated feature learning. |

| FreeSolv (Hydration Free Energy) | XGBoost on ECFP4 + RDKit Descriptors | RMSE ≈ 1.10 kcal/mol | Chemprop (MPNN) | RMSE ≈ 0.95 kcal/mol | DL shows advantage even on smaller datasets (~600 molecules). |

| Tox21 (Classification) | SVM on Combined Fingerprints | Avg. ROC-AUC ≈ 0.84 | DeepTox (Multitask DNN) | Avg. ROC-AUC ≈ 0.86 | DL excels at joint learning across multiple related tasks. |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Software & Libraries for AI-Driven Molecular Design

| Item (Tool/Library) | Category | Primary Function | Typical Use Case |

|---|---|---|---|

| RDKit | Cheminformatics | Open-source toolkit for molecule I/O, descriptor calculation, and substructure operations. | Generating SMILES, calculating molecular fingerprints, and basic 2D/3D molecular manipulations. |

| PyTorch / TensorFlow | Deep Learning | Core frameworks for building and training neural networks with GPU acceleration. | Implementing custom DL architectures like GNNs for molecular graphs. |

| PyTorch Geometric / DGL-LifeSci | Specialized DL | Libraries built on top of PyTorch specifically for graph-based deep learning. | Easily constructing GNN models for molecules with built-in convolutions and dataloaders. |

| Scikit-learn | Machine Learning | Comprehensive library for classical ML algorithms, data preprocessing, and model evaluation. | Training Random Forest/SVM models, performing cross-validation, and pipeline construction for QSAR. |

| Mordred | Descriptor Calculation | Calculates a vast array (>1800) of 2D/3D molecular descriptors efficiently. | Providing a comprehensive feature vector for traditional ML models beyond simple fingerprints. |

| SHAP | Model Interpretation | Explains the output of any ML/DL model by computing feature importance values. | Interpreting predictions of complex models to identify "chemical drivers" of activity. |

| Omig | Chemical Data | A commercial solution offering curated, ready-to-model chemical datasets. | Sourcing high-quality, pre-processed bioactivity data to train predictive models. |

| DeepChem | Ecosystem | An open-source toolkit integrating multiple DL and ML frameworks for chemistry. | Rapid prototyping of AI models on chemical data with standardized pipelines. |

The integration of AI into molecular design is not a choice of ML or DL, but a strategic selection based on problem constraints. Traditional ML offers interpretability and efficiency for well-defined tasks with limited data, making it a robust choice for many QSAR campaigns. Deep Learning, particularly graph-based approaches, provides a powerful, end-to-end framework for discovering complex, non-intuitive relationships in large-scale chemical data, enabling breakthroughs in de novo design and complex property prediction. The future of molecular design research lies in the adept application of both paradigms within the AI toolkit.

The integration of artificial intelligence into molecular design research is revolutionizing the discovery of novel materials and therapeutics. This paradigm shift is underpinned by three interconnected pillars: Generative Chemistry, which creates new molecular structures; Predictive QSAR/QSPR, which forecasts molecular properties; and sophisticated Molecular Representations that enable machines to interpret chemical space. Together, these components form a closed-loop AI-driven pipeline, accelerating the transition from hypothesis to candidate compound in drug and materials development.

Generative Chemistry

Generative chemistry employs deep learning models to propose novel molecular structures de novo, steered toward desired properties.

Core Architectures & Current Data (2023-2024):

| Model Type | Example Architectures | Reported Novelty Rate* | Typical Library Size Generated | Primary Application in Literature |

|---|---|---|---|---|

| VAE | JT-VAE, ChemVAE | 40-60% | 10^4 - 10^5 | Scaffold hopping, lead optimization |

| GAN | ORGAN, MolGAN | 30-50% | 10^4 - 10^6 | Generating drug-like molecules |

| Transformer | GPT-based (ChemGPT) | 70-90% | 10^5 - 10^7 | Large-scale exploration of chemical space |

| Diffusion | GeoDiff, DiffLinker | 60-85% | 10^4 - 10^5 | 3D molecule generation, binding pose |

*Novelty rate: Percentage of generated molecules not found in the training set.
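The novelty rate defined above can be computed with a few set operations. This sketch assumes the SMILES strings have already been canonicalized with the same toolkit, and it counts each distinct generated structure once, which is one common convention rather than something the table specifies.

```python
def novelty_rate(generated, training):
    """Percentage of unique generated molecules that are absent from the training set."""
    unique = set(generated)
    if not unique:
        raise ValueError("no generated molecules")
    novel = unique - set(training)
    return 100.0 * len(novel) / len(unique)

# Toy example with simple SMILES strings (assumed canonical).
training = ["CCO", "CCN", "c1ccccc1"]
generated = ["CCO", "CCC", "CCC", "CCCl", "c1ccccc1", "CC(=O)O"]

rate = novelty_rate(generated, training)
print(f"novelty = {rate:.0f}%")
```

Of the five distinct generated structures, three are not in the training set, giving a novelty rate of 60%.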

Detailed Experimental Protocol for a Standard Molecular Generation & Validation Workflow:

- Data Curation: Assemble a dataset of molecules (e.g., from ChEMBL, ZINC) in SMILES format. Filter for drug-likeness (e.g., Lipinski's Rule of Five).

- Model Training: Train a generative model (e.g., a VAE).

- Encoder: A bidirectional GRU or Transformer encodes a SMILES string into a latent vector z.

- Latent Space: Regularize with a Kullback–Leibler divergence loss to ensure continuity.

- Decoder: A second GRU decodes a sampled latent vector z back into a SMILES string.

- Loss: Combined reconstruction loss (cross-entropy for SMILES tokens) and KL loss.

- Sampling: Sample random vectors from the latent space or interpolate between known molecules and decode them.

- Post-processing & Filtering: Use RDKit to validate chemical correctness of generated SMILES. Apply chemical filters (e.g., PAINS, synthetic accessibility score).

- Initial Evaluation: Calculate key physicochemical properties (cLogP, MW, TPSA) and compare distribution to the training set. Assess internal diversity using Tanimoto similarity metrics.

- Downstream Prediction: Input generated, valid molecules into a pre-trained QSAR model to predict activity against a target of interest.

- Prioritization & Experimental Testing: Select top candidates for synthesis and in vitro assay.
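The internal-diversity assessment in the evaluation step can be sketched with fingerprints represented as sets of on-bit indices. The fingerprints below are hypothetical; in practice they would come from a toolkit such as RDKit. Defining diversity as one minus the mean pairwise Tanimoto similarity is a common convention assumed here.

```python
from itertools import combinations

def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def internal_diversity(fingerprints):
    """1 - mean pairwise Tanimoto similarity over all distinct pairs."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        raise ValueError("need at least two fingerprints")
    return 1.0 - sum(tanimoto(a, b) for a, b in pairs) / len(pairs)

# Hypothetical on-bit sets for three generated molecules.
fps = [{1, 2, 3}, {2, 3, 4}, {7, 8, 9}]
diversity = internal_diversity(fps)
print(f"internal diversity = {diversity:.3f}")
```

Values near 1 indicate a structurally varied set, while values near 0 suggest the generator is producing close analogs of one another.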

[Figure: AI-Driven Molecular Generation & Validation Workflow]

Predictive QSAR/QSPR

Quantitative Structure-Activity/Property Relationship models use mathematical relationships to predict biological activity or physicochemical properties from molecular descriptors.

Performance Benchmarks of Modern AI-based QSAR Models (2024):

| Model Class | Typical Algorithm(s) | Avg. RMSE (Regression)* | Avg. AUC-ROC (Classification)* | Key Advantage |

|---|---|---|---|---|

| Traditional ML | Random Forest, SVM | 0.8 - 1.2 (LogP) | 0.85 - 0.90 | Interpretability, small data |

| Graph Neural Networks | MPNN, GCN, GAT | 0.6 - 0.9 (LogP) | 0.90 - 0.95 | Learns features directly |

| Transformer-based | ChemBERTa, SMILES-BERT | 0.7 - 1.0 (LogP) | 0.88 - 0.93 | Pre-training on large corpora |

*Example benchmarks on common datasets like ESOL (Solubility), HIV, BACE. RMSE for LogP prediction; AUC for binary activity classification.

Detailed Protocol for Constructing a GNN-based QSPR Model:

- Dataset Splitting: Use a stringent scaffold split (e.g., Bemis-Murcko) to separate training, validation, and test sets, ensuring generalizability to novel chemotypes.

- Molecular Graph Representation: For each molecule, create a graph where atoms are nodes and bonds are edges.

- Node Features: Include atom type, degree, hybridization, formal charge, aromaticity.

- Edge Features: Include bond type (single, double, triple), conjugation, stereo.

- Model Architecture (Message Passing Neural Network - MPNN):

- Message Passing Phase (3-5 steps): Each node aggregates feature vectors from its neighbors: $m_v = \sum_{u \in N(v)} M(h_v, h_u, e_{uv})$, where $M$ is a learned function.

- Update Phase: The node updates its own feature vector: $h_v' = U(h_v, m_v)$, where $U$ is a GRU or MLP.

- Readout Phase: After $T$ steps, a global pooling (sum, mean, or attention) aggregates all node features into a single graph-level representation: $h_G = R(\{h_v^T \mid v \in G\})$.

- Prediction Head: Pass $h_G$ through a multi-layer perceptron (MLP) to produce the final prediction (e.g., pIC50).

- Training: Use Mean Squared Error (regression) or Cross-Entropy loss (classification). Optimize with Adam. Employ early stopping on the validation set.

- Validation & Interpretation: Assess on the held-out test set. Use gradient-based attribution (e.g., Guided Grad-CAM) to highlight sub-structures important for the prediction.
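The message, update, and readout phases of the protocol can be traced by hand on a three-atom graph. In this sketch the learned functions M and U are replaced by fixed toy functions (an edge-weighted copy of the neighbor features and a tanh of the sum), and R is a plain sum; these substitutions are assumptions made so the example runs without a deep learning framework.

```python
import math

def message_passing_step(h, edges):
    """One round: m_v = sum over neighbors u of e_uv * h_u, then h_v' = tanh(h_v + m_v)."""
    dim = len(next(iter(h.values())))
    m = {v: [0.0] * dim for v in h}
    for (u, v), e_uv in edges.items():      # undirected bond: send both ways
        for k in range(dim):
            m[v][k] += e_uv * h[u][k]
            m[u][k] += e_uv * h[v][k]
    return {v: [math.tanh(h[v][k] + m[v][k]) for k in range(dim)] for v in h}

def readout(h):
    """Sum pooling of node features into one graph-level vector h_G."""
    dim = len(next(iter(h.values())))
    return [sum(vec[k] for vec in h.values()) for k in range(dim)]

# Heavy atoms of ethanol (C-C-O); node features are one-hot [is_carbon, is_oxygen].
h0 = {0: [1.0, 0.0], 1: [1.0, 0.0], 2: [0.0, 1.0]}
bonds = {(0, 1): 1.0, (1, 2): 1.0}          # edge weight 1.0 for a single bond

h1 = message_passing_step(h0, bonds)        # after one step
h = h0
for _ in range(3):                          # T = 3 message-passing steps
    h = message_passing_step(h, bonds)
h_G = readout(h)
print("graph-level vector:", [round(x, 3) for x in h_G])
```

After one step the terminal carbon has only seen its carbon neighbor, while after three steps every atom's vector carries information from the whole molecule, which is why 3-5 steps are typical for small molecules.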

[Figure: Graph Neural Network QSAR Model Architecture]

Molecular Representations

The representation of a molecule is a critical first step that determines what patterns an AI model can learn.

Comparison of Molecular String Representations:

| Representation | Format Example (Aspirin) | Key Characteristics | Validity Guarantee? | Primary Use Case |

|---|---|---|---|---|

| SMILES | CC(=O)Oc1ccccc1C(=O)O | Compact, human-readable. Canonical forms are unique. | No | Standard input for many ML models, database storage. |

| SELFIES | [C][C][=Branch1][C][=O][O][C][=C][C][=C][C][=C][Ring1][=Branch1][C][=Branch1][C][=O][O] | Grammar-based. Every string corresponds to a valid molecule. | Yes | Robust generation in AI models, avoids invalid structures. |

| InChI | InChI=1S/C9H8O4/c1-6(10)13-8-5-3-2-4-7(8)9(11)12/h2-5H,1H3,(H,11,12) | Unique, standardized, non-proprietary. | Yes (by design) | International identifier, database linking. |

The Scientist's Toolkit: Research Reagent Solutions for AI Molecular Design

| Item/Category | Function in AI Molecular Design Research | Example Tools/Libraries |

|---|---|---|

| Chemical Databases | Source of training data for generative and predictive models. Provide experimentally validated structures and properties. | ChEMBL, PubChem, ZINC, BindingDB |

| Cheminformatics Suites | Process, validate, and featurize molecules. Calculate descriptors, apply filters, and handle file formats. | RDKit, Open Babel, ChemAxon |

| Deep Learning Frameworks | Build, train, and deploy generative (VAE, GAN) and predictive (GNN) models. | PyTorch, TensorFlow, JAX |

| Specialized ML Libraries | Provide pre-built implementations of state-of-the-art molecular ML models and utilities. | DeepChem, DGL-LifeSci, PyTorch Geometric |

| Molecular Generation Platforms & Benchmarks | Integrated environments for de novo design, often with property optimization, and benchmark suites for evaluating generative models. | REINVENT (platform); MOSES, GuacaMol (benchmarks) |

| High-Performance Computing (HPC) | Accelerate model training and large-scale virtual screening. | GPU clusters (NVIDIA), Cloud computing (AWS, GCP) |

| Automated Synthesis Planning | Assess synthetic accessibility and propose routes for AI-generated molecules. | ASKCOS, Retro*, IBM RXN |

| Laboratory Automation | Physically execute the synthesis and testing of AI-prioritized candidates. | Liquid handlers, automated reactors, HTS platforms |

The central thesis of modern molecular design research posits that artificial intelligence (AI) is not merely an adjunct tool but a foundational paradigm shift, enabling the predictive in silico navigation of chemical space with unprecedented speed and accuracy. This evolution from traditional computational chemistry to the AI-accelerated era represents a continuum of increasing abstraction, automation, and predictive power. This whitepaper delineates this historical progression, anchoring each phase within the context of its contribution to the overarching goal of rational molecular design.

The Pre-AI Era: Foundational Computational Methods

The bedrock of computational chemistry was established on first-principles quantum mechanics and molecular mechanics.

2.1 Quantum Chemistry Methods

These methods solve the Schrödinger equation approximately to compute electronic structure.

- Hartree-Fock (HF): The mean-field starting point, neglecting explicit electron correlation.

- Post-Hartree-Fock Methods: Introduce electron correlation at high computational cost (e.g., MP2, CCSD(T), the "gold standard" for small molecules).

- Density Functional Theory (DFT): Uses electron density rather than wavefunctions, offering a favorable cost/accuracy trade-off and dominating materials and catalysis research.

2.2 Molecular Mechanics and Dynamics

- Molecular Mechanics (MM): Uses classical force fields (e.g., AMBER, CHARMM, OPLS) to calculate potential energy based on bonded and non-bonded terms.

- Molecular Dynamics (MD): Solves Newton's equations of motion for atoms under an MM force field, simulating temporal evolution. Enhanced sampling methods (e.g., metadynamics) tackle the timescale problem.
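To make "solves Newton's equations of motion" concrete, the sketch below integrates a one-dimensional harmonic oscillator with the velocity Verlet scheme, a standard MD integrator. The single particle, unit mass, and spring force are toy assumptions standing in for a full force field.

```python
import math

def velocity_verlet(x, v, force, mass, dt, n_steps):
    """Integrate Newton's equations; returns the list of (position, velocity) states."""
    f = force(x)
    trajectory = [(x, v)]
    for _ in range(n_steps):
        v_half = v + 0.5 * dt * f / mass    # half-step velocity update
        x = x + dt * v_half                 # full-step position update
        f = force(x)                        # force at the new position
        v = v_half + 0.5 * dt * f / mass    # complete the velocity update
        trajectory.append((x, v))
    return trajectory

k_spring, mass = 1.0, 1.0                   # toy units
trajectory = velocity_verlet(1.0, 0.0, lambda x: -k_spring * x, mass,
                             dt=0.01, n_steps=1000)
energies = [0.5 * mass * v * v + 0.5 * k_spring * x * x for x, v in trajectory]
drift = max(abs(e - energies[0]) for e in energies)
x_final = trajectory[-1][0]

print(f"x(t=10) = {x_final:.4f}, exact = {math.cos(10.0):.4f}, energy drift = {drift:.2e}")
```

The small, bounded energy drift is the property that makes this family of integrators suitable for long simulations.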

Table 1: Comparison of Core Pre-AI Computational Methods

| Method | Theoretical Basis | Typical System Size | Key Limitation | Role in Molecular Design |

|---|---|---|---|---|

| Hartree-Fock (HF) | Quantum Mechanics (Wavefunction) | 10s of atoms | Poor treatment of electron correlation | Historical foundation, rarely used directly |

| CCSD(T) | Quantum Mechanics (Wavefunction) | <50 atoms | O(N⁷) scaling, computationally prohibitive | Benchmark accuracy for small molecules |

| Density Functional Theory (DFT) | Quantum Mechanics (Electron Density) | 100s of atoms | Accuracy depends on functional choice | Workhorse for geometry, reactivity, spectra |

| Molecular Dynamics (MD) | Classical Newtonian Mechanics | 100,000s of atoms | Force field accuracy; microsecond timescales | Conformational sampling, binding pathways |

2.3 Key Experimental Protocol: Protein-Ligand Binding Free Energy Calculation (FEP/MBAR)

A pivotal application of classical methods is the calculation of binding free energy (ΔG_bind) for lead optimization.

- Protocol: Alchemical Free Energy Perturbation (FEP) with Multistate Bennett Acceptance Ratio (MBAR) analysis.

- System Preparation: Ligand and protein are parameterized with a force field (e.g., GAFF2/AMBER). The system is solvated in an explicit water box and neutralized with ions.

- Equilibration: Energy minimization, followed by NVT and NPT ensemble MD simulations to relax the system.

- Alchemical Transformation: A series of non-physical intermediate states (λ windows) is defined to morph the ligand of interest (Ligand A) into a reference ligand (Ligand B), once in the protein-bound state and once free in solvent.

- Sampling: Independent MD simulations are run at each λ window for both the bound (protein-ligand complex) and unbound (solvated ligand) legs.

- Analysis: The MBAR algorithm combines energy differences across all λ windows in each leg; the difference between the two legs gives the relative ΔΔG_bind between Ligand A and B, typically with an accuracy of about 1 kcal/mol for well-behaved systems.
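The analysis step can be illustrated with a deliberately simplified estimator. The sketch below uses the one-sided Zwanzig exponential average per λ window instead of MBAR (a named substitution, since MBAR requires a dedicated solver) and then closes the thermodynamic cycle. The energy-difference samples are invented numbers, not simulation output.

```python
import math

KT_KCAL = 0.593  # k_B * T in kcal/mol at roughly 298 K

def fep_delta_g(delta_u_samples, kT=KT_KCAL):
    """Zwanzig estimate: dG = -kT * ln < exp(-dU / kT) > over samples from one window."""
    a = [-du / kT for du in delta_u_samples]
    a_max = max(a)                                   # log-sum-exp for numerical stability
    log_mean = a_max + math.log(sum(math.exp(x - a_max) for x in a) / len(a))
    return -kT * log_mean

def relative_binding_free_energy(bound_windows, unbound_windows):
    """ddG_bind(A -> B) = dG_bound(A -> B) - dG_unbound(A -> B)."""
    dg_bound = sum(fep_delta_g(w) for w in bound_windows)
    dg_unbound = sum(fep_delta_g(w) for w in unbound_windows)
    return dg_bound - dg_unbound

# Hypothetical per-window energy differences (kcal/mol) for each leg of the cycle.
bound = [[0.18, 0.22, 0.20], [0.31, 0.28, 0.30]]
unbound = [[0.52, 0.49, 0.50], [0.61, 0.58, 0.60]]
ddg = relative_binding_free_energy(bound, unbound)
print(f"ddG_bind(A -> B) = {ddg:.2f} kcal/mol")
```

A negative value indicates that Ligand B is predicted to bind more tightly than Ligand A. MBAR improves on this estimator by reusing samples from all windows simultaneously.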

[Figure: Free Energy Perturbation (FEP) Workflow]

The AI-Accelerated Era: Paradigm Shifts in Prediction and Generation

AI, particularly deep learning, has reshaped computational chemistry by learning structure-property relationships directly from data rather than solving the underlying physical equations for every molecule.

3.1 Key AI Methodologies

- Supervised Learning for Property Prediction: Graph Neural Networks (GNNs) and message-passing networks (e.g., MPNNs) directly map molecular graphs or 3D structures to quantum mechanical properties, solubility, or toxicity. They replace expensive DFT calculations for high-throughput screening.

- Generative AI for De Novo Design: Models like Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Transformer-based architectures learn the distribution of chemical space and generate novel, synthetically accessible structures with optimized properties.

- Reinforcement Learning (RL) for Optimization: RL agents are trained to iteratively modify molecular structures to maximize a multi-parametric reward function (e.g., binding affinity, synthetic accessibility, ADMET).
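A multi-parametric reward of the kind mentioned for RL can be sketched as a weighted geometric mean of per-property desirability scores. The property names, midpoints, and weights below are hypothetical choices for illustration; published RL frameworks each define their own scoring components.

```python
import math

def desirability(value, midpoint, steepness):
    """Logistic map of a raw property onto (0, 1); a negative steepness rewards low values."""
    return 1.0 / (1.0 + math.exp(-steepness * (value - midpoint)))

def reward(desirabilities, weights):
    """Weighted geometric mean, so a single unacceptable property drives the reward to zero."""
    if any(d <= 0.0 for d in desirabilities.values()):
        return 0.0
    total_weight = sum(weights[name] for name in desirabilities)
    log_sum = sum(weights[name] * math.log(d) for name, d in desirabilities.items())
    return math.exp(log_sum / total_weight)

# Hypothetical predicted properties for one generated molecule.
candidate = {
    "affinity": desirability(7.5, midpoint=6.5, steepness=2.0),   # predicted pIC50
    "synth": desirability(3.0, midpoint=4.5, steepness=-1.5),     # accessibility score, lower is easier
    "admet": 0.8,                                                 # already on a 0-1 scale
}
weights = {"affinity": 2.0, "synth": 1.0, "admet": 1.0}
r = reward(candidate, weights)
print(f"reward = {r:.3f}")
```

The geometric mean is chosen over a weighted sum so that strong predicted affinity cannot compensate for a molecule that fails outright on another criterion.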

Table 2: Comparison of AI-Driven vs. Traditional Methods for Key Tasks

| Task | Traditional Method (Typical Time) | AI-Driven Method (Typical Time) | Accuracy/Speed Gain |

|---|---|---|---|

| Potential Energy Surface | DFT Calculation (Hours-Days) | GNN Potential (Milliseconds) | ~10³-10⁵ speedup, near-DFT accuracy |

| Protein-Ligand Affinity | FEP/MD (Days-Weeks) | Trained GNN/CNN Scorer (Seconds) | ~10⁴ speedup, lower absolute precision |

| De Novo Molecule Generation | Fragment-Based Design (Manual) | Generative Model (Seconds for 1000s) | Explores vast chemical space autonomously |

| Retrosynthesis Planning | Expert Knowledge / Rule-Based | Transformer Model (Seconds) | Predicts routes with expert-level accuracy |

3.2 Key Experimental Protocol: Training a Graph Neural Network for HOMO-LUMO Gap Prediction

This protocol exemplifies the supervised learning paradigm.

- Dataset Curation: Acquire a large, curated quantum chemistry dataset (e.g., QM9, ~134k molecules). Features include atom types, bonds, and coordinates. Targets are DFT-calculated HOMO-LUMO gaps.

- Model Architecture: Implement a Message Passing Neural Network (MPNN). Each node (atom) and edge (bond) is embedded as a feature vector. Messages are passed between connected nodes for T steps, aggregating neighborhood information.

- Training Loop: Split data (80/10/10 train/validation/test). Use Mean Absolute Error (MAE) loss. Optimize with Adam. Employ early stopping based on validation loss.

- Validation & Deployment: The trained model predicts the gap for new molecules in milliseconds, enabling virtual screening of organic semiconductors.
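The 80/10/10 split and the MAE metric from the training-loop step can be sketched with the standard library alone. The dataset size and the gap values are placeholders, and a seeded random split is assumed because the protocol does not specify a splitting strategy.

```python
import random

def split_indices(n, fractions=(0.8, 0.1, 0.1), seed=0):
    """Shuffle indices reproducibly and cut them into train/validation/test lists."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_train = round(fractions[0] * n)
    n_val = round(fractions[1] * n)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

def mean_absolute_error(y_true, y_pred):
    """MAE: average absolute difference between targets and predictions."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

train_idx, val_idx, test_idx = split_indices(1000)

# Hypothetical HOMO-LUMO gaps (eV): reference values versus model predictions.
gap_reference = [6.8, 7.1, 5.9]
gap_predicted = [6.6, 7.2, 6.2]
mae = mean_absolute_error(gap_reference, gap_predicted)
print(f"{len(train_idx)}/{len(val_idx)}/{len(test_idx)} split, MAE = {mae:.2f} eV")
```

Fixing the seed keeps the test set identical across experiments, so that differences in MAE reflect model changes rather than a different split.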

[Figure: GNN Training for Electronic Property Prediction]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Platforms for AI-Accelerated Computational Chemistry

| Item / Solution | Function & Explanation |

|---|---|

| Schrödinger Suite | Industry-standard platform integrating classical (FEP, Glide) and ML (e.g., AutoQSAR) tools for drug discovery. |

| OpenMM | High-performance, open-source toolkit for molecular dynamics simulations on GPUs. |

| PyTorch Geometric / DGL | Python libraries for developing and training Graph Neural Networks. PyTorch Geometric is built on PyTorch; DGL has supported PyTorch, TensorFlow, and MXNet backends. |

| RDKit | Open-source cheminformatics toolkit for molecule manipulation, descriptor generation, and model interpretation. |

| AlphaFold2 (ColabFold) | Provides highly accurate protein structure predictions, essential for structure-based design when no crystal structure exists. |

| DiffDock | An AI model that performs diffusion-based docking of small molecules to protein pockets; its authors report higher blind-docking success rates than traditional search-based docking tools. |

| MOE (Molecular Operating Environment) | Integrated software with classical computational methods and growing AI/ML components for molecular modeling. |

| ANI-2x / MACE | Pre-trained, transferable neural network potentials that approach DFT accuracy at a small fraction of the cost, for organic molecules (ANI-2x) and for molecules and materials (MACE). |

The evolution has culminated in a synergistic workflow where AI handles high-throughput screening, generative design, and fast scoring, while rigorously validated physics-based methods (FEP, DFT) provide ultimate validation on prioritized candidates. This hybrid, AI-accelerated pipeline is radically compressing the design-make-test-analyze cycle, directly fulfilling the thesis that AI is the transformative engine for next-generation molecular design research.

Why Now? The Convergence of Big Data, Algorithmic Advances, and Computational Power

The acceleration of artificial intelligence (AI) in molecular design research is not a gradual trend but a recent explosion. This whitepaper examines the critical convergence of three technological pillars—Big Data, Algorithmic Advances, and Computational Power—that has uniquely positioned this moment in history for transformative progress in drug discovery.

The Three Converging Pillars

Big Data: The Foundational Fuel

The digitization of chemical and biological research has generated unprecedented datasets. These are not merely large in volume but rich in annotation, enabling supervised learning at scale.

Key Quantitative Data on Molecular Datasets:

| Dataset Name | Approximate Size (Compounds) | Data Type | Primary Use in AI |

|---|---|---|---|

| PubChem | 114+ Million | Chemical Structures, Bioactivities | Pre-training, QSAR, Virtual Screening |

| ChEMBL | 2.4+ Million | Curated Bioactivity Data | Target-based Model Training |

| ZINC20 | 750+ Million (purchasable) | 3D Conformers | Generative Chemistry & Docking |

| Protein Data Bank (PDB) | 200,000+ Structures | 3D Protein Structures | Structure-Based Drug Design |

| UniProt | 200+ Million Sequences | Protein Sequences | Protein Language Model Training |

Table 1: Representative public data sources fueling AI in molecular design. Sizes are approximate as of 2024.

Experimental Protocol: High-Throughput Screening (HTS) Data Generation

- Objective: To generate dose-response bioactivity data for thousands of compounds against a specific protein target.

- Methodology:

- Target Preparation: Purify the recombinant protein of interest (e.g., a kinase).

- Assay Development: Configure a fluorescence- or luminescence-based biochemical assay to measure target activity.

- Compound Library Dispensing: Use acoustic or pintool dispensers to transfer nanoliter volumes of compounds from library plates into assay plates.

- Automated Liquid Handling: Add the target protein and substrate to all wells robotically.

- Incubation & Readout: Incubate plates under controlled conditions and measure signal using a plate reader.

- Data Processing: Normalize signals, fit dose-response curves, and calculate IC50/EC50 values for each compound.

- Curation: Annotate data with chemical descriptors (SMILES, fingerprints) and store in a structured database (e.g., ChEMBL format).
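The data-processing step above (normalize, fit, report IC50) can be sketched in a few lines. This is a minimal illustration, not a production fitting routine: it assumes a two-parameter Hill model with the bottom fixed at 0% and the top at 100%, and it uses a coarse-to-fine grid search in place of the nonlinear least-squares fit a real pipeline would use. The function names and control values are hypothetical.

```python
import math

def percent_inhibition(signal, neg_ctrl, pos_ctrl):
    """Normalize a raw well signal: 0% at the uninhibited control,
    100% at the fully inhibited control."""
    return 100.0 * (neg_ctrl - signal) / (neg_ctrl - pos_ctrl)

def hill(conc, ic50, slope=1.0):
    """Percent inhibition from a Hill model with bottom=0 and top=100 (assumed)."""
    return 100.0 / (1.0 + (ic50 / conc) ** slope)

def fit_ic50(concs, inhibitions, slope=1.0, rounds=4, grid=200):
    """Estimate IC50 by a coarse-to-fine grid search over log10(IC50),
    minimizing the sum of squared errors against the Hill model."""
    def sse(log_ic50):
        ic50 = 10.0 ** log_ic50
        return sum((hill(c, ic50, slope) - y) ** 2
                   for c, y in zip(concs, inhibitions))

    lo = math.log10(min(concs)) - 2.0
    hi = math.log10(max(concs)) + 2.0
    best = lo
    for _ in range(rounds):
        step = (hi - lo) / grid
        best = min((lo + i * step for i in range(grid + 1)), key=sse)
        lo, hi = best - step, best + step  # zoom in around the current best
    return 10.0 ** best
```

For noisy plate data, a four-parameter logistic fit with free top, bottom, and slope is the more usual choice; the grid search here only keeps the example dependency-free.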

Algorithmic Advances: The Intelligent Engine

The shift from traditional machine learning (e.g., Random Forest) to deep learning architectures has provided the tools to learn complex patterns from high-dimensional data.

Key Advancements:

- Graph Neural Networks (GNNs): Natively model molecules as graphs (atoms as nodes, bonds as edges), learning representations that capture topology and features.

- Transformers & Attention Mechanisms: Excel at processing sequential (SMILES, protein sequences) and structured data, enabling models like Molecular Transformers for reaction prediction.

- Generative Models: Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and diffusion models can design novel molecular structures with optimized properties de novo.

- Reinforcement Learning (RL): Guides generative models by using scoring functions (e.g., predicted binding affinity, synthetic accessibility) as rewards.

Computational Power: The Enabling Infrastructure

Specialized hardware and scalable cloud computing provide the necessary cycles for training massive models on enormous datasets.

Key Quantitative Data on Computational Demand:

| Model Type | Example | Estimated Training Compute (FLOPs) | Typical Hardware |

|---|---|---|---|

| Large Protein Language Model | ESM-2 (15B params) | ~10^21 | Cluster of 512+ NVIDIA A100 GPUs |

| Generative Chemistry Model | GFlowNet / DiffDock | ~10^19 | 8-64 NVIDIA V100/A100 GPUs |

| Traditional QSAR Model | Random Forest | ~10^14 | Single Multi-core CPU |

Table 2: Comparative computational requirements for different AI models in molecular design.

Experimental Protocol: Training a Graph Neural Network for Property Prediction

- Objective: Train a GNN to predict a molecular property (e.g., solubility) from its structure.

- Methodology:

- Data Preparation: From a source like PubChem, extract SMILES strings and corresponding measured solubility (LogS). Split data into training (80%), validation (10%), and test (10%) sets.

- Molecular Featurization: Use a library (e.g., RDKit) to convert each SMILES into a graph representation: node features (atom type, hybridization), edge features (bond type), and a global label (LogS).

- Model Architecture: Implement a GNN (e.g., Message Passing Neural Network) using PyTorch Geometric. The network comprises:

- Multiple message-passing layers to aggregate neighbor information.

- A global pooling layer (e.g., global mean) to generate a molecule-level embedding.

- Fully connected layers for regression output.

- Training Loop:

- Hardware: Configure a server with at least one NVIDIA GPU (e.g., A100, V100).

- Use the Adam optimizer and Mean Squared Error (MSE) loss.

- Perform mini-batch training. For each epoch, evaluate the model on the validation set.

- Implement early stopping based on validation loss.

- Evaluation: Apply the final model to the held-out test set and report metrics: RMSE, R², and Mean Absolute Error (MAE).
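The bookkeeping around the GNN itself (the 80/10/10 split, early stopping, and the reported test metrics) is independent of the model and can be sketched without a deep learning framework. The helper names below are hypothetical, and the model training call is deliberately left out.

```python
import math
import random

def split_80_10_10(items, seed=0):
    """Shuffle and split into train/validation/test (80/10/10)."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = len(items) * 8 // 10
    n_val = len(items) // 10
    return (items[:n_train],
            items[n_train:n_train + n_val],
            items[n_train + n_val:])

class EarlyStopping:
    """Signal a stop once validation loss has not improved for `patience` epochs."""
    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

def regression_metrics(y_true, y_pred):
    """RMSE, MAE and R^2 for a held-out test set."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)
    mean_t = sum(y_true) / n
    ss_tot = sum((t - mean_t) ** 2 for t in y_true)
    return {
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(e) for e in errors) / n,
        "r2": 1.0 - ss_res / ss_tot,
    }
```

In a training loop, `EarlyStopping.step` would be called once per epoch with the validation loss, and `regression_metrics` only once, on the test set, after the final model is chosen.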

The Convergence in Action: A Case Study on AI-Driven Hit Discovery

The synergy of the three pillars is best illustrated through a contemporary workflow for identifying novel hit compounds.

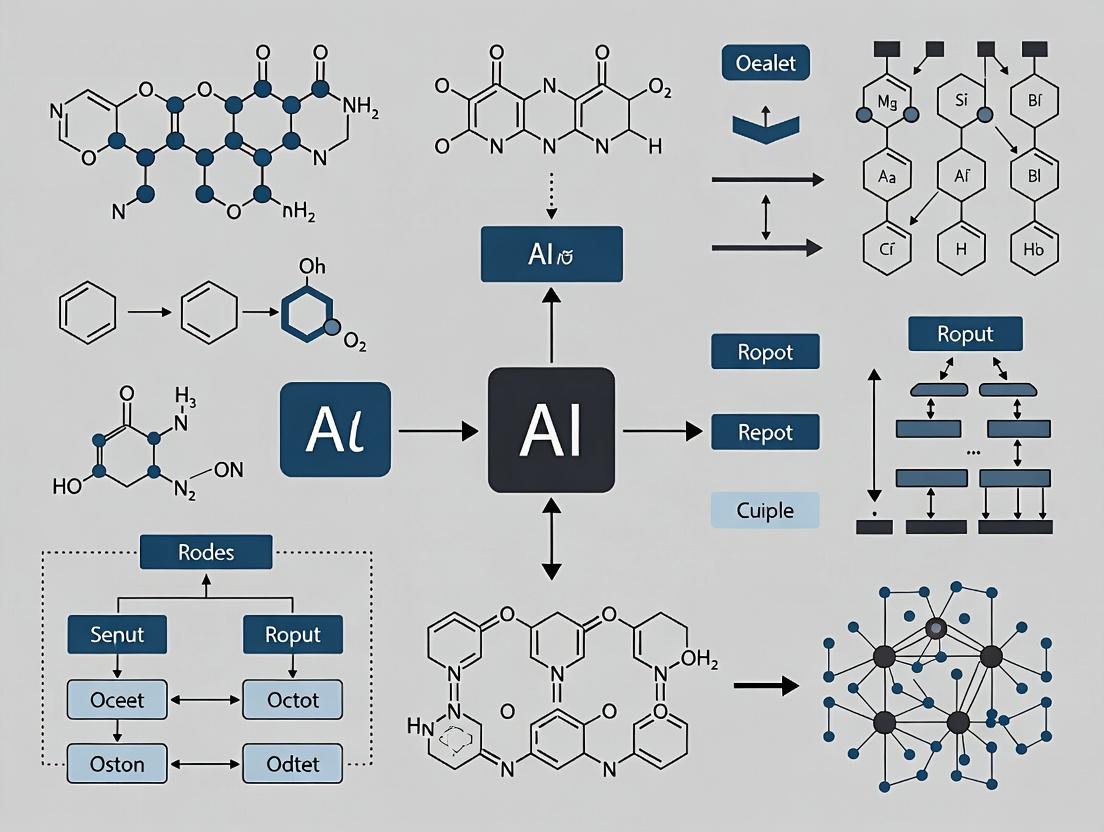

Visualization 1: AI-Driven Molecular Design Workflow

AI-Driven Hit Discovery Pipeline

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in AI/Experimentation |

|---|---|

| Recombinant Protein (Target) | Purified, biologically active protein for in vitro assay development and structural studies (e.g., X-ray crystallography for docking). |

| Validated Biochemical Assay Kit | Standardized, reliable assay (e.g., luminescence-based kinase assay) for generating high-quality training data and validating AI predictions. |

| Diverse Compound Library | A collection of 10,000+ small molecules with known structures for primary screening and model validation. |

| AI/ML Software Suite (e.g., RDKit, PyTorch, DeepChem) | Open-source libraries for molecular featurization, deep learning model building, and cheminformatics analysis. |

| GPU-Accelerated Cloud Compute Credits | Access to scalable computational resources (e.g., AWS, GCP, Azure) for training large AI models without local hardware investment. |

| Structural Biology Services | Cryo-EM or X-ray crystallography services to determine novel protein-ligand complex structures, providing critical feedback for model refinement. |

Signaling Pathways in Modern AI-Driven Research

The operational "pathway" of an AI-driven project is a feedback loop between computation and experiment.

Visualization 2: AI-Experiment Feedback Cycle

AI-Experiment Iterative Cycle

Conclusion

The question "Why Now?" is answered by the simultaneous maturity of vast, accessible biological data; sophisticated algorithms capable of modeling its complexity; and the democratized computational power to execute these tasks. This triad has moved AI in molecular design from a promising concept to an indispensable, production-level tool, fundamentally accelerating the path from target identification to viable drug candidates.

Inside the AI Chemist's Toolbox: Key Algorithms and Their Real-World Applications in Drug Discovery

Within the broader thesis on the Role of Artificial Intelligence in Molecular Design Research, generative models represent a paradigm shift: they are no longer just predictive tools but creative engines for de novo molecular design. Their aim is to accelerate the discovery of novel chemical entities with desired properties, directly addressing the high costs and long timelines of traditional drug discovery. This technical guide provides an in-depth analysis of three foundational architectures: Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), and Autoregressive (AR) models, with a focus on their adaptation for molecular structures.

Core Architectures & Technical Foundations

Generative Adversarial Networks (GANs)

GANs operate on a game-theoretic framework involving two neural networks: a Generator (G) and a Discriminator (D). G learns to map random noise z to realistic molecular representations, while D distinguishes between real data samples and synthetic ones from G. The adversarial loss is formulated as:

\[ \min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}[\log D(x)] + \mathbb{E}_{z \sim p_z(z)}[\log(1 - D(G(z)))] \]

In molecular design, the output is typically a string (SMILES) or graph representation.
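To make the value function V(D, G) concrete, the sketch below estimates it from a batch of discriminator outputs. It assumes the discriminator returns probabilities strictly between 0 and 1; the function name is hypothetical and no networks are trained here.

```python
import math

def gan_value(d_real, d_fake):
    """Monte Carlo estimate of V(D, G) = E[log D(x)] + E[log(1 - D(G(z)))].

    d_real: discriminator outputs D(x) on real molecules
    d_fake: discriminator outputs D(G(z)) on generated molecules
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

# A discriminator that cannot tell real from generated (D = 0.5 everywhere)
# gives V = -log 4, while a confident, correct discriminator pushes V toward 0.
undecided = gan_value([0.5, 0.5], [0.5, 0.5])
confident = gan_value([0.9, 0.9], [0.1, 0.1])
```

The discriminator is trained to increase this quantity and the generator to decrease it, which is the min-max game in the formula.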

Variational Autoencoders (VAEs)

VAEs are probabilistic generative models consisting of an encoder and a decoder. The encoder maps an input molecule x to a distribution over latent variables z, while the decoder reconstructs the molecule from z. The model is trained by maximizing the Evidence Lower Bound (ELBO):

\[ \mathcal{L}(\theta, \phi; x) = \mathbb{E}_{q_\phi(z|x)}[\log p_\theta(x|z)] - D_{KL}(q_\phi(z|x) \parallel p(z)) \]

where the first term is the expected reconstruction log-likelihood (the negative of the reconstruction loss) and the second regularizes the latent space, ensuring smooth interpolation and generation.
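As a worked instance of the ELBO, the sketch below assumes the common choice of a diagonal Gaussian encoder and a standard normal prior, for which the KL term has a closed form. The reconstruction log-likelihood is passed in as a number because it depends on the decoder; all names are hypothetical.

```python
import math

def kl_diag_gaussian_to_standard_normal(mu, log_var):
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ) in closed form."""
    return 0.5 * sum(math.exp(lv) + m * m - 1.0 - lv
                     for m, lv in zip(mu, log_var))

def elbo(recon_log_likelihood, mu, log_var, kl_weight=1.0):
    """ELBO = E_q[log p(x|z)] - kl_weight * KL(q(z|x) || p(z)).

    kl_weight=1.0 gives the standard ELBO; other values correspond to
    KL-weighted (annealed) variants of the objective.
    """
    kl = kl_diag_gaussian_to_standard_normal(mu, log_var)
    return recon_log_likelihood - kl_weight * kl

# An encoder output equal to the prior incurs no KL penalty;
# shifting one latent mean by 1 costs 0.5 nats.
no_penalty = kl_diag_gaussian_to_standard_normal([0.0, 0.0], [0.0, 0.0])
shifted = kl_diag_gaussian_to_standard_normal([1.0, 0.0], [0.0, 0.0])
```

Training minimizes the negative of `elbo`, which is the familiar "reconstruction loss plus KL" form.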

Autoregressive Models (e.g., GPT for Molecules)

Autoregressive models generate sequences step-by-step, factoring the joint probability of a molecular sequence (e.g., SMILES string, SELFIES) as the product of conditional probabilities:

\[ p(x) = \prod_{t=1}^{T} p(x_t \mid x_{<t}) \]
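The factorization into conditional probabilities can be illustrated with a toy token model. This is a sketch under strong assumptions: the conditional table below is invented for illustration, and it conditions only on the previous token, whereas a Transformer or RNN conditions on the whole prefix.

```python
import math
import random

START, END = "<s>", "</s>"

# Hypothetical conditional distributions p(next token | previous token).
COND = {
    START: {"C": 0.7, "N": 0.2, "O": 0.1},
    "C": {"C": 0.5, "O": 0.2, "N": 0.1, END: 0.2},
    "N": {"C": 0.6, END: 0.4},
    "O": {"C": 0.5, END: 0.5},
}

def sequence_log_prob(tokens):
    """log p(x) as the sum of log conditionals, including the end token."""
    prev, total = START, 0.0
    for tok in list(tokens) + [END]:
        total += math.log(COND[prev][tok])
        prev = tok
    return total

def sample(rng, max_len=20):
    """Generate a string token by token, starting from the start token."""
    prev, out = START, []
    while len(out) < max_len:
        options = COND[prev]
        tok = rng.choices(list(options), weights=list(options.values()))[0]
        if tok == END:
            break
        out.append(tok)
        prev = tok
    return "".join(out)

# p("CCO") = 0.7 * 0.5 * 0.2 * 0.5 = 0.035
log_p = sequence_log_prob("CCO")
generated = sample(random.Random(0))
```

Scoring and sampling use the same conditionals, which is why autoregressive models give exact likelihoods for the strings they generate.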

Quantitative Performance Comparison

Recent benchmark studies (2023-2024) compare the performance of these models across key metrics for de novo molecular design. The following table summarizes aggregated findings.

Table 1: Comparative Performance of Generative Models in Molecular Design

| Model Type | Exemplary Architecture | Validity (%) | Uniqueness (%) | Novelty (%) | Diversity (Tanimoto) | Optimization Success Rate |

|---|---|---|---|---|---|---|

| GAN-based | ORGAN, MolGAN | 70 - 98.5 | 85 - 100 | 80 - 99 | 0.70 - 0.85 | 60 - 80 |

| VAE-based | JT-VAE, GraphVAE | 60 - 100 | 90 - 100 | 90 - 100 | 0.75 - 0.90 | 50 - 75 |

| Autoregressive | MolGPT, TransMol | 95 - 100 | 95 - 100 | 95 - 100 | 0.80 - 0.95 | 70 - 90 |

| Hybrid (e.g., GAN+VAE) | GVAE, AAE | 85 - 99 | 90 - 100 | 85 - 99 | 0.75 - 0.88 | 65 - 85 |

Note: Ranges reflect performance across different datasets (e.g., ZINC250k, ChEMBL) and target properties (e.g., QED, logP, binding affinity). Optimization success rate refers to the fraction of generated molecules meeting a specified property threshold.

Experimental Protocols

Protocol for Benchmarking Generative Models

This protocol is standard for evaluating and comparing GANs, VAEs, and AR models.

Data Curation:

- Source: Download a canonical dataset (e.g., ZINC250k, MOSES).

- Preprocessing: Standardize molecules (remove salts, neutralize charges), filter by molecular weight (e.g., 250-500 Da) and logP. Convert to a unified representation (SMILES, SELFIES, or graph).

- Split: Perform a random 80/10/10 train/validation/test split.

Model Training:

- GAN: Train the generator and discriminator alternately. Use gradient penalty (WGAN-GP) for stability. Monitor the discriminator's accuracy to avoid collapse.

- VAE: Train to minimize the combined reconstruction and KL divergence loss. Anneal the KL weight to improve initial learning.

- Autoregressive: Train using teacher forcing with cross-entropy loss. Use a causal attention mask to prevent information leakage.
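Two of the training details above are small enough to show directly: the KL weight schedule for the VAE and the causal mask for the autoregressive model. The linear schedule is one common choice among several, and both function names are hypothetical.

```python
def kl_weight(step, warmup_steps, max_weight=1.0):
    """Linear KL annealing: weight 0 at step 0, max_weight from warmup_steps on."""
    if warmup_steps <= 0:
        return max_weight
    return max_weight * min(1.0, step / warmup_steps)

def causal_mask(seq_len):
    """mask[i][j] is True where position i may attend to position j (j <= i),
    so no token can see tokens that come after it."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]
```

With a small KL weight early in training the VAE first learns to reconstruct, and the regularization is phased in afterwards; the mask is what keeps teacher forcing from leaking the next token into the prediction.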

Sampling & Generation:

- Generate 10,000-50,000 molecules from each trained model by sampling from the prior noise distribution (GAN, VAE) or initiating the autoregressive process with a start token.

Evaluation Metrics:

- Validity: Percentage of generated strings that correspond to chemically valid molecules (checked via RDKit).

- Uniqueness: Percentage of valid molecules that are non-duplicate.

- Novelty: Percentage of unique, valid molecules not present in the training set.

- Diversity: Average pairwise Tanimoto dissimilarity (1 - similarity) based on Morgan fingerprints among generated molecules.

- Property Optimization: Use a Bayesian Optimization loop or a reinforcement learning framework (like REINVENT) to fine-tune the model towards a desired property profile (e.g., high QED, low cLogP). Report the success rate after N optimization steps.
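The validity, uniqueness, novelty, and diversity metrics above can be sketched as follows. To stay dependency-free, the validity check and the fingerprint are passed in as functions; in practice these would be an RDKit parse and Morgan fingerprints. The toy stand-ins below (balanced parentheses, character bigrams) are assumptions for illustration only, and the code assumes the strings are already canonicalized so that duplicates compare equal.

```python
from itertools import combinations

def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0

def benchmark(generated, training_set, is_valid, fingerprint):
    """Validity, uniqueness, novelty (in %) and mean pairwise Tanimoto
    dissimilarity over the unique valid molecules."""
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training_set)
    fps = [fingerprint(s) for s in sorted(unique)]
    pairs = list(combinations(fps, 2))
    diversity = (sum(1.0 - tanimoto(a, b) for a, b in pairs) / len(pairs)
                 if pairs else 0.0)
    return {
        "validity": 100.0 * len(valid) / len(generated),
        "uniqueness": 100.0 * len(unique) / len(valid) if valid else 0.0,
        "novelty": 100.0 * len(novel) / len(unique) if unique else 0.0,
        "diversity": diversity,
    }

def balanced_parentheses(s):
    """Toy validity check (stand-in for a real cheminformatics parser)."""
    depth = 0
    for ch in s:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    return depth == 0

def bigram_fingerprint(s):
    """Toy fingerprint: the set of character bigrams in the string."""
    return {s[i:i + 2] for i in range(len(s) - 1)}

report = benchmark(["CCO", "CCO", "CCN", "C(C", "CCCC"], {"CCO"},
                   balanced_parentheses, bigram_fingerprint)
```

Note the nesting of the denominators: uniqueness is measured over valid molecules and novelty over unique valid ones, matching the definitions in the list.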

Protocol for a Conditional Generation Experiment (Targeting a Specific Protein)

This protocol outlines generating molecules predicted to bind to a target (e.g., KRAS G12C).

Affinity Predictor Training:

- Assemble a dataset of known binders and non-binders for the target.

- Train a separate supervised model (e.g., a Graph Neural Network) to predict binding affinity/activity (pIC50).

Conditional Model Setup:

- Implement a conditional variant of the chosen generative architecture (cGAN, CVAE, or conditional AR).

- The condition is a learned embedding of the target protein (e.g., from its amino acid sequence or structure).

Latent Space Optimization:

- For VAEs, perform gradient-based walk in the continuous latent space, guided by the affinity predictor, to find z that decodes to high-affinity molecules.

- For GANs/AR, use the predictor as a reward function in a reinforcement learning or Bayesian optimization loop to iteratively refine the generator.
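The gradient-based walk in latent space can be sketched with a toy predictor. Everything here is illustrative: `toy_predictor` is a hypothetical stand-in for a trained affinity model composed with the decoder, and the gradient is taken by finite differences, where a real implementation would use automatic differentiation.

```python
def numerical_gradient(f, z, eps=1e-5):
    """Central finite-difference gradient of f at latent point z."""
    grad = []
    for i in range(len(z)):
        up = z[:i] + [z[i] + eps] + z[i + 1:]
        down = z[:i] + [z[i] - eps] + z[i + 1:]
        grad.append((f(up) - f(down)) / (2.0 * eps))
    return grad

def latent_ascent(predictor, z0, lr=0.1, steps=100):
    """Gradient ascent on the predicted score, starting from latent point z0."""
    z = list(z0)
    for _ in range(steps):
        grad = numerical_gradient(predictor, z)
        z = [zi + lr * gi for zi, gi in zip(z, grad)]
    return z

def toy_predictor(z):
    """Hypothetical score surface with its optimum at z = (1, -2)."""
    target = [1.0, -2.0]
    return 7.0 - sum((zi - ti) ** 2 for zi, ti in zip(z, target))

z_opt = latent_ascent(toy_predictor, [0.0, 0.0])
```

In a real run, `z_opt` would be passed through the decoder, and the decoded molecules re-scored, since points far from the training distribution may decode to invalid or unrealistic structures.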

Validation:

- Synthesize and test top in silico candidates via in vitro assays (e.g., SPR, enzymatic assay) to confirm generated molecule activity.

Visualizations

Core Generative Model Workflows for Molecules

Title: Generative Model Core Workflows Compared

Integrated AI-Driven Molecular Design Pipeline

Title: AI-Driven De Novo Molecular Design Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Resources for AI Molecular Design Research

| Category | Item / Software | Function / Purpose |

|---|---|---|

| Chemical Datasets | ZINC20, ChEMBL, PubChem QC | Large-scale, commercially available and bioactive molecular structures for model training and benchmarking. |

| Representation | RDKit, DeepChem | Open-source cheminformatics toolkits for converting SMILES to/from molecular graphs, calculating fingerprints and descriptors. |

| Deep Learning Framework | PyTorch, TensorFlow, JAX | Flexible frameworks for building and training custom GAN, VAE, and Transformer architectures. |

| Specialized Libraries | MOSES, GuacaMol, TDC (Therapeutics Data Commons) | Standardized benchmarking platforms with datasets, metrics, and baseline models for fair comparison. |

| Property Prediction | Schrödinger Suite, OpenEye Toolkits, AutoDock Vina | Commercial & open-source software for molecular docking, physics-based scoring, and ADMET property prediction. |

| Cloud/Compute | AWS EC2 (P3/G4 instances), Google Cloud TPUs, NVIDIA DGX Systems | High-performance computing resources for training large-scale generative models, which are computationally intensive. |

| Validation | Enamine REAL Space, Mcule, Sigma-Aldrich | Commercial compound catalogues for checking synthetic accessibility and procuring physical samples for wet-lab testing. |

The integration of artificial intelligence into molecular design research represents a paradigm shift, moving from high-throughput screening to in silico generation and optimization. Within this framework, Reinforcement Learning (RL) has emerged as a powerful optimization engine. Unlike supervised learning, which relies on static datasets, RL agents learn to make sequential decisions—atom-by-atom or fragment-by-fragment—to construct molecules that maximize a multi-objective reward function. This guide provides a technical deep dive into RL methodologies for goal-directed molecular generation, framed within the broader thesis of AI-driven de novo design.

Core RL Paradigms in Molecular Generation

The field primarily utilizes two RL architectures: Actor-Critic models for continuous optimization of molecular properties via a learned policy, and Deep Q-Networks (DQN) for discrete action selection in molecular graph construction. A third, Model-Based RL, is gaining traction for incorporating learned predictive models of chemistry (e.g., of ADMET properties) into the reward landscape.

Table 1: Comparison of Key RL Paradigms for Molecular Generation

| RL Paradigm | Action Space | Typical Agent Architecture | Key Advantage | Primary Challenge |

|---|---|---|---|---|

| Actor-Critic (e.g., REINFORCE w/ baseline) | Continuous (e.g., latent vector manipulation) | Policy Network (Actor) + Value Network (Critic) | Stable learning, handles continuous optimization. | High variance in policy gradients, requires careful tuning. |

| Deep Q-Network (DQN) | Discrete (e.g., add atom/bond type X) | Q-Network estimating action-value function | Suitable for sequential graph-building steps. | Can be sample-inefficient; large action space complexity. |

| Model-Based RL | Continuous or Discrete | Agent + Learned Predictive Model (Dynamics) | Can plan using internal model, potentially more sample-efficient. | Compounded error from inaccurate model predictions. |

| Proximal Policy Optimization (PPO) | Continuous | Clipped Objective Policy Network | Robust performance, mitigates large policy updates. | More complex implementation than basic REINFORCE. |

The Reward Function: Encoding Chemical Goals

The reward function is the cornerstone of goal-directed generation. It quantitatively encodes the objectives for the desired molecule, often as a weighted sum of multiple property scores.

Standard Multi-Objective Reward Formulation:

R(m) = w₁ * QED(m) + w₂ * SA(m) + w₃ * [Target_Score(m)] + w₄ * [Synth_Score(m)]

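Where m is the generated molecule, QED is the Quantitative Estimate of Drug-likeness, SA is the Synthetic Accessibility score, Target_Score is a predicted binding affinity or activity from a proxy model, and Synth_Score is a retrosynthesis feasibility metric. Because the raw SA score runs from 1 (easy) to 10 (hard), it must be inverted or rescaled (or given a negative weight w₂) so that easier synthesis raises the reward; the other components are likewise usually normalized to a common scale before weighting. Penalties for invalid SMILES or undesired substructures are also applied.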
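A minimal sketch of the weighted-sum reward follows. It makes several assumptions that are not in the formula itself: the SA score (1 = easy, 10 = hard) is rescaled so that higher is better, the target and synthesis scores are taken to be already scaled to [0, 1], and invalid molecules or flagged substructures receive a flat penalty. All names and the penalty value are hypothetical.

```python
def sa_to_unit(sa_score):
    """Map an SA score (1 = easy .. 10 = hard) onto [0, 1], where 1 is easiest."""
    return (10.0 - sa_score) / 9.0

def reward(props, weights, valid=True, has_alert=False, penalty=-1.0):
    """Weighted multi-objective reward R(m).

    props:   dict with 'qed', 'sa' (raw 1-10), 'target' and 'synth' (both in [0, 1])
    weights: dict with the same keys (w1..w4 in the formula)
    """
    if not valid or has_alert:
        return penalty  # invalid SMILES or undesired substructure
    return (weights["qed"] * props["qed"]
            + weights["sa"] * sa_to_unit(props["sa"])
            + weights["target"] * props["target"]
            + weights["synth"] * props["synth"])

example_props = {"qed": 0.8, "sa": 2.8, "target": 0.5, "synth": 1.0}
equal_weights = {"qed": 0.25, "sa": 0.25, "target": 0.25, "synth": 0.25}
r = reward(example_props, equal_weights)
```

The weights set the trade-off between objectives, so in practice they are tuned per project rather than fixed at equal values.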

Table 2: Common Reward Components and Their Quantitative Ranges

| Reward Component | Description | Typical Range | Target for Optimization |

|---|---|---|---|

| QED | Quantitative Estimate of Drug-likeness | 0.0 to 1.0 | Maximize (e.g., >0.6) |

| Synthetic Accessibility (SA) Score | Ease of synthesis (from fragment contributions) | 1 (easy) to 10 (hard) | Minimize (e.g., <4.5) |

| LogP | Octanol-water partition coefficient (lipophilicity) | Varies by target | Optimize to desired range (e.g., 0 to 5) |

| Molecular Weight | - | Da | Constrain (e.g., <500 Da) |

| Target Activity (pIC50/pKi) | Negative log10 of the predicted IC50 or Ki (in molar units) from a predictive model | >6 is typically potent | Maximize |

| Ligand Efficiency (LE) | Binding energy per heavy atom | >0.3 kcal/mol/HA is favorable | Maximize |

| Pan-Assay Interference (PAINS) Alert | Presence of problematic substructures | Binary (0 or 1) | Penalize if present (target: 0) |
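Two rows of the table lend themselves to a short worked example: ligand efficiency and the example thresholds. The sketch assumes the common approximation that binding free energy can be estimated from pIC50 as ΔG ≈ -RT ln(10) · pIC50 (treating IC50 as a proxy for the dissociation constant) at about 298 K. The function names are hypothetical.

```python
import math

R_KCAL = 0.0019872  # gas constant in kcal/(mol*K)

def ligand_efficiency(pic50, n_heavy_atoms, temperature=298.15):
    """Approximate binding free energy per heavy atom, in kcal/mol/HA."""
    delta_g = -R_KCAL * temperature * math.log(10.0) * pic50
    return -delta_g / n_heavy_atoms

def meets_example_thresholds(qed, sa, mol_weight, pains_alert):
    """Check a molecule against the example targets in the table:
    QED > 0.6, SA < 4.5, MW < 500 Da, and no PAINS alert."""
    return qed > 0.6 and sa < 4.5 and mol_weight < 500.0 and not pains_alert

# A 10 nM compound (pIC50 = 8) with 30 heavy atoms clears the 0.3 guideline.
le = ligand_efficiency(8.0, 30)
```

At this temperature the prefactor works out to roughly 1.37 kcal/mol per pIC50 unit, which is where the widely used shortcut LE ≈ 1.37 × pIC50 / HA comes from.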

Experimental Protocols & Methodologies

Protocol 4.1: Standard RL Training Cycle for De Novo Design

Objective: Train an RL agent to generate molecules optimizing a multi-property reward.

Materials: See "The Scientist's Toolkit" below. Software: Python, RDKit, PyTorch/TensorFlow, RL library (e.g., Stable-Baselines3, custom).

Procedure:

- Environment Setup: Implement a MolEnv class. State (s_t): current molecular graph or SMILES. Action (a_t): defined by the action space (e.g., "add carbon," "form double bond," "terminate"). The environment must validate chemical validity after each step.

- Agent Initialization: Initialize policy network (e.g., a Graph Neural Network or RNN) with random weights.

- Episode Execution:

a. Reset environment to an initial state (e.g., a single atom or empty graph).

b. For each step t until termination (max steps or "terminate" action):

i. Agent observes state s_t.

ii. Agent selects action a_t based on its policy π(a|s).

iii. Environment executes action, transitions to new state s_{t+1}.

iv. Environment calculates intermediate reward r_t (e.g., validity check).

c. At episode end, generate final molecule m. Calculate final reward R(m) using the full multi-objective function.

d. Assign final reward to all steps in the episode (dense reward) or use discounting.

- Policy Update: Using collected trajectories, compute policy gradient (e.g., REINFORCE) or update Q-values (DQN). For Actor-Critic, update the value network to reduce baseline variance.

- Validation Loop: Every N episodes, freeze policy and generate a batch of molecules. Evaluate against all reward metrics and record top performers.

- Termination: Stop after a fixed number of episodes or when performance plateaus.
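The procedure can be sketched end to end with a deliberately tiny setup. The assumptions are substantial and worth stating: the environment builds a token string rather than a chemically validated graph, `toy_reward` is a hypothetical stand-in for R(m) that simply rewards oxygen tokens, and the policy is a single state-independent softmax instead of a GNN or RNN. The update is REINFORCE with a moving-average baseline, with the final reward assigned to every step of the episode.

```python
import math
import random

ACTIONS = ["C", "N", "O", "terminate"]
MAX_STEPS = 5

class MolEnv:
    """Toy environment: the state is the token string built so far."""
    def reset(self):
        self.tokens = []
        return "".join(self.tokens)

    def step(self, action):
        if action != "terminate":
            self.tokens.append(action)
        done = action == "terminate" or len(self.tokens) >= MAX_STEPS
        return "".join(self.tokens), done

def toy_reward(molecule):
    """Hypothetical stand-in for R(m): fraction of oxygen tokens."""
    return molecule.count("O") / MAX_STEPS

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def train(episodes=3000, lr=0.2, seed=0):
    rng = random.Random(seed)
    logits = [0.0] * len(ACTIONS)  # state-independent policy parameters
    baseline = 0.0                 # moving average of past rewards
    env = MolEnv()
    for _ in range(episodes):
        state, done, trajectory = env.reset(), False, []
        while not done:
            probs = softmax(logits)
            a = rng.choices(range(len(ACTIONS)), weights=probs)[0]
            trajectory.append((a, probs))
            state, done = env.step(ACTIONS[a])
        final_reward = toy_reward(state)
        advantage = final_reward - baseline
        # REINFORCE: grad of log pi(a) w.r.t. logit k is 1[k == a] - pi(k)
        for a, probs in trajectory:
            for k in range(len(ACTIONS)):
                indicator = 1.0 if k == a else 0.0
                logits[k] += lr * advantage * (indicator - probs[k])
        baseline = 0.9 * baseline + 0.1 * final_reward
    return dict(zip(ACTIONS, softmax(logits)))

policy = train()
```

Swapping `toy_reward` for the multi-objective R(m) and the logits vector for a network conditioned on s_t recovers the structure of the protocol; the baseline plays the role the critic plays in Actor-Critic methods.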

Protocol 4.2: Training a Proxy (Predictive) Model for Reward

Objective: Create a surrogate model to predict a costly property (e.g., binding affinity) as a reward component.

Procedure:

- Data Curation: Assemble a dataset of molecules with experimentally measured target property (e.g., 10,000 compounds with pIC50 values).

- Featurization: Convert molecules to numerical features (e.g., ECFP4 fingerprints, molecular descriptors, or graph representations).

- Model Training: Split data (80/10/10 train/validation/test). Train a model (e.g., Random Forest, Gradient Boosting, or GNN) to predict the property from features.

- Validation: Assess model on hold-out test set. Require Pearson R > 0.7 for meaningful guidance.

- Integration: Deploy the trained model within the reward function R(m) to provide instant, computationally cheap property estimates during RL training.
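The validation gate in this protocol (Pearson R > 0.7 on the hold-out test set) is simple to compute directly. The sketch below uses only the standard library; the function names and the example values are hypothetical.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def proxy_is_usable(measured, predicted, threshold=0.7):
    """Apply the protocol's gate: only use the proxy if R exceeds the threshold."""
    return pearson_r(measured, predicted) > threshold

# Illustrative (made-up) hold-out pIC50 values and proxy predictions.
measured = [5.0, 6.0, 7.0, 8.0]
predicted = [5.5, 5.8, 7.4, 7.6]
usable = proxy_is_usable(measured, predicted)
```

A high correlation on a random hold-out set does not guarantee the proxy stays accurate on the novel structures an RL agent proposes, so the gate is a necessary check rather than a sufficient one.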

Visualizing the RL-Molecular Design Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Libraries for RL-Driven Molecular Generation

| Item / Reagent | Function / Purpose | Example / Source |

|---|---|---|

| Chemical Representation Library | Handles molecule I/O, validity checks, basic descriptors. | RDKit (Open-source) |

| Deep Learning Framework | Provides automatic differentiation and neural network modules for building agents. | PyTorch, TensorFlow |

| RL Algorithm Library | Offers pre-implemented, benchmarked RL algorithms (PPO, DQN, SAC). | Stable-Baselines3, RLlib |

| Molecular Featurizer | Converts molecules into machine-learnable features or descriptors. | Mordred (for 1800+ descriptors), DeepChem (for graph feats) |

| Property Prediction Models | Pretrained or custom models for QED, SA, toxicity, target activity. | ChEMBL web resource, proprietary models |

| High-Performance Computing (HPC) | GPU clusters for accelerated neural network training across millions of steps. | In-house cluster, Cloud (AWS/GCP) |

| Chemical Database | Source of initial training data for predictive models or benchmark sets. | PubChem, ChEMBL, ZINC |

| Visualization & Analysis Suite | For analyzing generated chemical space and properties. | Matplotlib, Seaborn, CheTo (Chemical space plotting) |

Advanced Considerations & Future Directions

Current research focuses on improving sample efficiency through offline RL (learning from fixed datasets), hierarchical RL (planning at fragment level), and multi-objective Pareto optimization. Integrating generative pre-trained models (like GPT for molecules) as initialization for the policy is another frontier. The ultimate validation remains in vitro and in vivo testing, closing the loop between in silico generation and empirical discovery, solidifying AI's central role in molecular design research.

Within the overarching thesis on the transformative role of artificial intelligence in molecular design research, the development of deep learning models for ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) and physicochemical property prediction represents a critical evolution. The shift from primarily structure-based activity prediction (QSAR) to these complex, systems-level biological and chemical endpoints is pivotal. It moves AI from a tool for initial hit discovery to a central engine for de novo design and lead optimization, enabling the in silico triage of molecules with poor pharmacokinetic or safety profiles before costly synthesis and experimental assays.

Core Deep Learning Architectures & Methodologies

Graph Neural Networks (GNNs), particularly Message Passing Neural Networks (MPNNs), are the dominant architecture. They operate directly on molecular graphs, where atoms are nodes and bonds are edges.

- Experimental Protocol for GNN-based Property Prediction:

- Data Curation: Assemble a dataset (e.g., from ChEMBL, PubChem) with molecular structures (SMILES) and associated experimental endpoint values (e.g., LogP, clearance, hERG inhibition IC50). Apply stringent data cleaning and standardization.

- Molecular Featurization: Encode atoms (node features: atomic number, hybridization, degree) and bonds (edge features: bond type, conjugation).

- Model Architecture: Implement an MPNN framework:

- Message Passing Phase (T steps): For each node, aggregate feature vectors from neighboring nodes and edges. Update the node's hidden state using a learned function (e.g., GRU).

- Readout/Global Pooling Phase: Aggregate the final hidden states of all nodes into a single, fixed-length graph-level representation using sum, mean, or attention-based pooling.

- Prediction Head: Pass the graph representation through fully connected neural network layers to produce the final prediction (regression or classification).

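- Training & Validation: Use a scaffold-based or otherwise stratified split so that the validation and test sets probe chemical space not seen during training. Employ mean squared error (MSE) loss for regression or binary cross-entropy loss for classification. Validate using robust metrics (see Table 1) and external test sets.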
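The three phases of the MPNN (message passing, readout, prediction head) can be sketched as a forward pass in plain Python. This is an untrained illustration under simplifying assumptions: the learned update function (a GRU in the protocol) is replaced by a fixed tanh update with scalar weights, edge features are ignored, and the node features and head weights are made up.

```python
import math

def message_passing(node_feats, edges, steps=2, w_self=0.5, w_nbr=0.3):
    """Run `steps` rounds of sum-aggregation message passing.

    node_feats: list of per-atom feature vectors
    edges:      list of undirected bonds as (i, j) index pairs
    """
    neighbors = {i: [] for i in range(len(node_feats))}
    for u, v in edges:
        neighbors[u].append(v)
        neighbors[v].append(u)
    h = [list(f) for f in node_feats]
    dim = len(h[0])
    for _ in range(steps):
        new_h = []
        for i in range(len(h)):
            # Message: sum of the neighbors' hidden states
            msg = [sum(h[j][d] for j in neighbors[i]) for d in range(dim)]
            # Update: simplified stand-in for a learned GRU update
            new_h.append([math.tanh(w_self * h[i][d] + w_nbr * msg[d])
                          for d in range(dim)])
        h = new_h
    return h

def readout_mean(h):
    """Pool node states into one fixed-length graph-level vector."""
    return [sum(row[d] for row in h) / len(h) for d in range(len(h[0]))]

def predict(node_feats, edges, head_weights, bias=0.0):
    """Linear prediction head on top of the pooled graph representation."""
    graph_vec = readout_mean(message_passing(node_feats, edges))
    return sum(w * x for w, x in zip(head_weights, graph_vec)) + bias

# Ethanol-like toy graph C-C-O with made-up two-dimensional atom features.
feats = [[0.6, 0.25], [0.6, 0.5], [0.8, 0.25]]
bonds = [(0, 1), (1, 2)]
y = predict(feats, bonds, head_weights=[1.0, -0.5])
```

Because aggregation and readout are both order-independent, renumbering the atoms leaves the prediction unchanged, which is the property that makes graph models a natural fit for molecules.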

Transformer-based Models (e.g., SMILES transformers, MoLFormer) treat the SMILES string as a sequential language, capturing long-range dependencies within the molecular representation.

Multitask Learning (MTL) models simultaneously predict multiple ADMET/physchem endpoints, leveraging shared feature representations and improving data efficiency for tasks with limited data.

Quantitative Performance Benchmarks

Table 1: Performance of Representative Deep Learning Models on Key ADMET/PhysChem Benchmarks (e.g., MoleculeNet datasets).

| Property (Dataset) | Model Type | Key Metric | Reported Performance | Traditional Method Baseline (e.g., Random Forest) |

|---|---|---|---|---|

| Lipophilicity (LogP) | MPNN (Attentive FP) | RMSE | ~0.40 - 0.50 | ~0.60 - 0.70 |

| Solubility (ESOL) | GNN (D-MPNN) | RMSE | ~0.58 - 0.68 | ~0.90 - 1.00 |

| hERG Toxicity | MTL-GNN | ROC-AUC | ~0.86 - 0.90 | ~0.80 - 0.83 |

| Hepatic Clearance | Graph Transformer | MAE | ~0.35 (log mL/min/g) | ~0.45 (log mL/min/g) |

| Caco-2 Permeability | Directed MPNN | Accuracy | ~0.85 - 0.90 | ~0.78 - 0.82 |

| Bioavailability | Ensemble of GNNs | ROC-AUC | ~0.81 - 0.85 | ~0.75 - 0.78 |

Detailed Experimental Protocol: Building a GNN for LogP Prediction

Aim: To train a GNN model to predict the octanol-water partition coefficient (LogP) of small molecules.

Materials & Workflow:

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Libraries for Deep Learning in Molecular Property Prediction

| Item | Function/Description | Example (Open Source) |

|---|---|---|

| Molecular Representation Library | Converts SMILES to graph/feature representations. | RDKit, DeepChem (featurizers) |

| Deep Learning Framework | Provides core tensors, autograd, and neural network modules. | PyTorch, TensorFlow, JAX |

| Graph Neural Network Library | Offers pre-built, optimized GNN layers and models. | PyTorch Geometric (PyG), DGL |

| Chemistry-Aware ML Toolkit | High-level APIs for molecule-specific datasets, models, and tasks. | DeepChem |

| Hyperparameter Optimization | Automates the search for optimal model configurations. | Optuna, Ray Tune |

| Experiment Tracking | Logs parameters, metrics, and model artifacts for reproducibility. | Weights & Biases (W&B), MLflow |

Visualization of a Multitask Learning (MTL) Architecture

Challenges and Future Directions

Challenges: Data quality, size, and standardization; model interpretability ("black-box" problem); generalization to novel chemical scaffolds; and integration of physiological context (e.g., protein structures, cell-type specific data).

Future Trends: The integration of physics-informed neural networks to respect known physicochemical constraints, geometric deep learning for 3D conformational ensembles, and foundation models pre-trained on vast, unlabeled molecular corpora that can be fine-tuned for specific ADMET tasks with limited data. This progression is central to the thesis that AI will ultimately enable holistic, in silico-first molecular design cycles.

This whitepaper explores the transformative role of Artificial Intelligence (AI) in the structure-based design pipeline, specifically focusing on protein-ligand docking and binding affinity prediction. This topic sits within the broader thesis on the role of AI in molecular design research, which posits that AI is not merely an incremental tool but a paradigm-shifting force that is redefining the discovery and optimization of bioactive molecules. By integrating deep learning with physical and geometric principles, AI methods are dramatically accelerating the pace and improving the accuracy of predicting how small molecules interact with protein targets, a cornerstone of rational drug design.

Core AI Methodologies in Docking and Affinity Prediction

The integration of AI has revolutionized traditional computational approaches. Key methodologies include:

- Geometric Deep Learning for Pose Prediction: Unlike traditional scoring functions, models like EquiBind and DiffDock use graph neural networks (GNNs) and diffusion models that are inherently equivariant to rotations and translations. They learn to predict ligand binding poses directly from 3D structures of proteins and ligands without relying on exhaustive search, achieving superior speed and accuracy for novel binding sites.

- End-to-End Affinity Prediction with 3D Convolutions: Frameworks such as Pafnucy and 3D-CNN based models take the 3D structural complex (or the protein and ligand separately) as input and use convolutional layers to extract spatial features correlating with binding strength (ΔG, Ki, IC50).

- Hybrid Physics-Informed Neural Networks (PINNs): These models (e.g., the physics-informed GNN PIGNet2 listed in Table 2) combine learned representations with physical constraints (e.g., van der Waals forces, electrostatic potentials) within the neural network architecture, so that predictions are both data-informed and physically plausible.

- Pre-Trained Protein Language Models (pLMs): Models such as ESM-2 and ProtBERT provide rich, contextual embeddings of protein sequences. These embeddings can be used as features to augment structure-based models, especially when high-resolution structures are unavailable, improving generalization across protein families.

Quantitative Performance Comparison

Table 1: Benchmark Performance of AI-Driven Docking Tools vs. Classical Methods. Data aggregated from PDBbind, CASF-2016, and comparative studies (2022-2024).

| Method / Tool | Type | Avg. RMSD (Å) | Top-1 Success Rate (RMSD < 2.0 Å, %) | Mean Inference Time (s) | Key Innovation |

|---|---|---|---|---|---|

| DiffDock | AI (Diffusion) | 1.67 | 52.0 | 3.2 | Diffusion on SE(3) manifold |

| EquiBind | AI (GNN) | 2.15 | 37.5 | 0.1 | E(3)-Equivariant GNN |

| TANKBind | AI (GNN) | 1.89 | 45.1 | 1.5 | Global attention for pockets |

| GNINA | Hybrid CNN/Classical | 2.01 | 40.2 | 8.5 | CNN scoring of AutoDock Vina poses |

| AutoDock Vina | Classical (SF) | 2.47 | 26.3 | 21.0 | Empirical scoring function + search |

| GLIDE (SP) | Classical (SF) | 2.23 | 34.1 | 45.0 | Force-field-based scoring |

Table 2: Performance of AI Models on Binding Affinity Prediction. Benchmarked on the PDBbind v2020 core set (285 complexes). Performance metrics: RMSE (Root Mean Square Error) and Pearson's R; lower RMSE and higher R are better.

| Model / Approach | RMSE (kcal/mol) | Pearson's R | Input Features | Publication Year |
|---|---|---|---|---|
| Δ-Δ Learning (ens.) | 0.89 | 0.86 | 3D complex, Δ-comparison | 2024 |
| AlphaFold3 (reported) | ~1.00 | ~0.83 | Sequences + structures | 2024 |
| GraphDelta | 1.10 | 0.82 | Molecular graphs + 3D cues | 2023 |
| PIGNet2 | 1.13 | 0.80 | Physics-informed GNN | 2022 |
| OnionNet-2 | 1.31 | 0.78 | Rotation-free features | 2021 |
| Standard MM/GBSA | 1.80 - 2.50 | 0.40 - 0.65 | Molecular dynamics, solvation | N/A |
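The two metrics reported in Table 2 can be computed with a few lines of code. The following is a minimal sketch using only the Python standard library; the function names and the five affinity values are hypothetical illustrations, not data from any of the benchmarked models.

```python
import math

def rmse(predicted, experimental):
    """Root mean square error between two equal-length sequences."""
    if len(predicted) != len(experimental) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    squared = [(p - e) ** 2 for p, e in zip(predicted, experimental)]
    return math.sqrt(sum(squared) / len(squared))

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("inputs must have equal length of at least 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical binding free energies in kcal/mol (illustration only).
experimental_dg = [-9.1, -7.4, -10.2, -6.8, -8.5]
predicted_dg = [-8.6, -7.9, -9.5, -7.2, -8.8]

print(f"RMSE = {rmse(predicted_dg, experimental_dg):.2f} kcal/mol")
print(f"Pearson's R = {pearson_r(predicted_dg, experimental_dg):.2f}")
```

RMSE measures absolute error in the units of the label, while Pearson's R only measures linear agreement, so a model can score well on one and poorly on the other. This is why the table reports both.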

Experimental Protocols for AI Model Validation

Protocol 1: Benchmarking an AI Docking Model Using CASF-2016

Objective: To evaluate the pose prediction accuracy of a new AI docking model against a standard benchmark.

- Data Preparation: Download the CASF-2016 (Comparative Assessment of Scoring Functions) benchmark set. This includes protein-ligand complex structures (PDB format) with known, experimentally validated binding poses.

- Input Processing: For each complex, separate the ligand (sdf/mol2 format) and the protein. Remove all water molecules and co-factors. Prepare the protein by adding hydrogen atoms and computing partial charges using a standard toolkit (e.g., RDKit, PDBFixer).

- Pose Generation: Run the AI docking model (e.g., DiffDock) on the prepared protein and ligand files, generating a ranked list of predicted ligand poses.

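- Alignment & RMSD Calculation: Bring the predicted complex into the coordinate frame of the crystal structure by aligning the proteins (not the ligands), since fitting ligand onto ligand would mask translational and rotational errors. Then calculate the heavy-atom Root-Mean-Square Deviation (RMSD) between the predicted and crystallographic ligand poses in Angstroms (Å), accounting for ligand symmetry.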

- Success Rate Calculation: A prediction is considered successful if the RMSD of the top-ranked pose is ≤ 2.0 Å. Calculate the percentage of successful predictions across the entire benchmark set.

- Comparative Analysis: Compare the success rate and average RMSD against baseline methods (e.g., AutoDock Vina, GNINA) reported in the literature or run locally.
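The RMSD and success-rate steps of this protocol can be sketched as follows. This is a simplified illustration under stated assumptions: both poses are already in the same receptor frame, atoms are listed in the same order, and ligand symmetry is ignored (a real evaluation would use symmetry-aware atom matching from a cheminformatics toolkit). The function names and coordinates are hypothetical.

```python
import math

def pose_rmsd(predicted, reference):
    """Heavy-atom RMSD in Å between two poses, with no refitting.

    Each pose is a list of (x, y, z) tuples in identical atom order.
    """
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("poses must be non-empty and have the same atom count")
    squared = sum(
        (px - rx) ** 2 + (py - ry) ** 2 + (pz - rz) ** 2
        for (px, py, pz), (rx, ry, rz) in zip(predicted, reference)
    )
    return math.sqrt(squared / len(predicted))

def top1_success_rate(top1_rmsds, threshold=2.0):
    """Percentage of complexes whose top-ranked pose has RMSD <= threshold."""
    if not top1_rmsds:
        raise ValueError("no RMSD values given")
    hits = sum(1 for value in top1_rmsds if value <= threshold)
    return 100.0 * hits / len(top1_rmsds)

# Toy three-atom ligand: the predicted pose is shifted by 1 Å along z.
crystal_pose = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
predicted_pose = [(0.0, 0.0, 1.0), (1.5, 0.0, 1.0), (1.5, 1.5, 1.0)]
print(f"RMSD = {pose_rmsd(predicted_pose, crystal_pose):.2f} Å")

# Hypothetical top-1 RMSD values for four benchmark complexes.
print(f"Success rate = {top1_success_rate([0.8, 1.9, 2.4, 5.1]):.1f} %")
```

Because the ligand is not refitted, a pose with the correct conformation in the wrong place in the pocket is penalized, which is what a docking benchmark is meant to capture.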

Protocol 2: Training a GNN for Relative Binding Affinity Prediction (ΔΔG)

Objective: To train a model to predict the change in binding affinity (ΔΔG) across a series of ligands against a common target.

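- Dataset Curation: Assemble a dataset from public sources (e.g., PDBbind, BindingDB) containing protein-ligand complexes with measured Ki/Kd/IC50 values. Convert Ki and Kd values to ΔG (kcal/mol) via ΔG = RT ln K. IC50 values depend on assay conditions, so either convert them to Ki first (e.g., with the Cheng-Prusoff relation) or keep them as a separate label type. Focus on congeneric series for a specific target (e.g., kinase inhibitors).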

- Structure Preparation & Featurization: Generate consistent 3D structures for all protein-ligand pairs. For each complex, create a graph representation: nodes are atoms, and edges represent bonds or spatial proximity. Node features include atom type, hybridization, partial charge. Edge features include bond type and distance.

- Model Architecture: Implement a Message Passing Neural Network (MPNN). The network should include:

  - Multiple message-passing layers to aggregate atomic environment information.

  - A global pooling layer (e.g., sum or attention) to generate a fixed-size graph embedding.

  - Fully connected layers to regress the final ΔG value. The ΔΔG between two ligands is then the difference of their predicted ΔG values.

- Loss Function & Training: Use a Mean Squared Error (MSE) loss between predicted and experimental ΔG. Employ a train/validation/test split that keeps close analogs from leaking across sets (e.g., stratified by scaffold). Use an optimizer like Adam with a learning rate scheduler.

- Evaluation: Report standard metrics: RMSE, MAE, and Pearson's R on the held-out test set. Critically, analyze the model's ability to correctly rank ligands by potency (Spearman's ρ).
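The label conversion in the dataset curation step, and the relation between ΔG and ΔΔG, can be made concrete with a short sketch. It assumes dissociation constants in mol/L relative to a 1 M standard state and a temperature of 298.15 K; the ligand names and Kd values are hypothetical.

```python
import math

R_KCAL_PER_MOL_K = 1.987204e-3  # gas constant in kcal/(mol*K)

def kd_to_delta_g(kd_molar, temperature_k=298.15):
    """Convert a dissociation constant (mol/L) to ΔG in kcal/mol.

    Uses ΔG = RT ln(Kd), so tighter binders give more negative values.
    """
    if kd_molar <= 0:
        raise ValueError("Kd must be positive")
    return R_KCAL_PER_MOL_K * temperature_k * math.log(kd_molar)

def relative_affinities(delta_g_by_ligand, reference):
    """Return ΔΔG of every ligand relative to the chosen reference ligand."""
    ref_value = delta_g_by_ligand[reference]
    return {name: dg - ref_value for name, dg in delta_g_by_ligand.items()}

# Hypothetical congeneric series with Kd values in mol/L.
series_kd = {"lig_A": 1e-9, "lig_B": 1e-8, "lig_C": 5e-7}
series_dg = {name: kd_to_delta_g(kd) for name, kd in series_kd.items()}

for name, ddg in relative_affinities(series_dg, reference="lig_A").items():
    print(f"{name}: ΔG = {series_dg[name]:.2f}, ΔΔG vs lig_A = {ddg:+.2f} kcal/mol")
```

At this temperature a tenfold change in Kd corresponds to roughly 1.36 kcal/mol, which gives a sense of scale for the RMSE values a ΔΔG model needs to reach before it can rank close analogs reliably.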

Visualizations

[Figure: AI-Driven Docking & Affinity Prediction Workflow]

[Figure: Hybrid AI Model Architecture for Binding Prediction]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for AI-Enhanced Structure-Based Design

| Item / Resource | Type | Function / Application |
|---|---|---|
| PDBbind Database | Database | Curated collection of protein-ligand complexes with binding affinity data for training and benchmarking AI models. |
| CASF Benchmark Sets | Benchmark Suite | Standardized sets (e.g., CASF-2016) for fair comparison of docking power, scoring power, and ranking power of algorithms. |
| AlphaFold Protein Structure Database | Database | Provides highly accurate predicted protein structures for targets without experimental crystallographic data, expanding the scope of AI docking. |
| RDKit | Software Library | Open-source cheminformatics toolkit for ligand preparation, featurization (SMILES, molecular graphs), and basic molecular operations. |
| OpenMM / AMBER | Molecular Dynamics Engine | Used for generating conformational ensembles or refining AI-predicted poses through physics-based simulation, adding robustness. |
| PyTorch Geometric / DGL | Deep Learning Library | Specialized libraries for building and training Graph Neural Networks (GNNs) on molecular graph data. |
| DiffDock or EquiBind Implementation | AI Model Code | Pre-trained, state-of-the-art models for pose prediction, usable via GitHub repositories for inference or fine-tuning. |
| GNINA | Software | Open-source docking program that uses convolutional neural networks to score poses, serving as a strong hybrid baseline. |
| High-Performance Computing (HPC) Cluster or Cloud GPU (e.g., NVIDIA A100) | Hardware | Essential for training large AI models and performing high-throughput virtual screening with AI-based docking tools. |

The broader thesis on the role of artificial intelligence in molecular design research posits that AI is transitioning from a supportive tool to a core driver of de novo molecular generation. This case study exemplifies that shift, focusing on two of the most active areas in oncology and targeted protein degradation: kinase inhibitors and Proteolysis-Targeting Chimeras (PROTACs). Generative AI models can now navigate the multi-parameter optimization landscape these modalities require, which includes binding affinity, selectivity, and pharmacokinetics and, for PROTACs, productive ternary complex formation and avoidance of the "hook effect".

Generative AI Architectures for Molecular Design

Current approaches leverage several deep learning architectures, each with distinct advantages.

- Chemical Language Models (CLMs): Treat Simplified Molecular-Input Line-Entry System (SMILES) or SELFIES strings as sequences. GPT-style autoregressive models learn the statistical likelihood of molecular "tokens" and sample from it to generate novel structures. Synthetic accessibility is not guaranteed by the model itself and is usually checked downstream.

- Variational Autoencoders (VAEs): Encode molecules into a continuous latent space where interpolation and sampling generate novel structures with desired properties predicted by a coupled predictor network.

- Generative Adversarial Networks (GANs): Employ a generator to create molecules and a discriminator to distinguish them from real molecules in a training set, often conditioned on specific properties.

- Graph-Based Models: Operate directly on the molecular graph structure, performing iterative message-passing to add/remove atoms and bonds, offering fine-grained control over structural generation.
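To make the idea of molecular "tokens" in chemical language models concrete, the sketch below splits SMILES strings into tokens and maps them to integer IDs, which is the form a sequence model would consume. The regular expression is a simplified, assumed tokenization scheme (bracket atoms, two-letter halogens, ring-closure labels, single characters) and not the tokenizer of any particular published model.

```python
import re

# Order matters: bracket atoms and two-letter elements must be tried
# before single characters.
SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]|Br|Cl|%\d{2}|[A-Za-z]|\d|[=#\-+\\/().:~*$@]"
)

def tokenize(smiles):
    """Split a SMILES string into tokens; fail if any character is skipped."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in SMILES: {smiles!r}")
    return tokens

def build_vocabulary(smiles_list):
    """Map every token seen in the corpus to an integer ID."""
    seen = sorted({tok for smi in smiles_list for tok in tokenize(smi)})
    return {tok: idx for idx, tok in enumerate(seen)}

corpus = ["CC(=O)Nc1ccc(Cl)cc1", "C[C@H](N)C(=O)O"]
vocab = build_vocabulary(corpus)

for smi in corpus:
    tokens = tokenize(smi)
    print(tokens, [vocab[tok] for tok in tokens])
```

Treating `Cl` and `[C@H]` as single tokens keeps chemically meaningful units intact, so the model never has to learn that `C` followed by `l` is chlorine.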

Table 1: Comparison of Generative AI Models for Molecular Design

| Model Type | Molecular Representation | Key Strength | Key Challenge |
|---|---|---|---|
| Chemical Language Model | SMILES, SELFIES | High novelty, scalable | May generate invalid strings (with SMILES) |
| Variational Autoencoder | Latent Vector | Smooth latent space, good for optimization | Can produce "fuzzy" outputs |
| Generative Adversarial Network | Graph/SMILES | High-quality, sharp outputs | Training instability, mode collapse |
| Graph-Based Generator | Molecular Graph | Structurally precise, explainable | Computationally intensive |

Core Experimental Protocols in AI-Driven Workflow

The standard iterative workflow integrates generative models with computational and experimental validation.

Protocol 1: Model Training & Conditional Generation

- Data Curation: Assemble a dataset of known kinase inhibitors or PROTACs (from ChEMBL, PDB) with associated properties (IC50, DC50, LogP, etc.). For PROTACs, include warhead, E3 ligase ligand, and linker structures.

- Model Training: Train a conditional generative model (e.g., a Conditional VAE or a GFlowNet) where the conditioning vector includes desired properties (e.g., high potency against BTK, low activity against EGFR).

- Sampling: Generate a library of 10,000-100,000 novel molecules by sampling from the model under the desired conditional constraints.

Protocol 2: In Silico Screening & Prioritization

- Property Prediction: Pass the generated library through high-throughput in silico filters using pre-trained models or rapid simulations:

  - Docking: Use AutoDock Vina or Glide to score predicted binding poses against the target kinase or the PROTAC ternary complex structure.

  - ADMET Prediction: Use models like Random Forest or Graph Neural Networks to predict permeability, solubility, metabolic stability, and toxicity risks.

  - Selectivity Screening: Perform rapid molecular docking against a panel of off-target kinases (e.g., 50+ kinome members).

- Multi-parameter Optimization: Apply Pareto ranking or a weighted scoring function to identify top candidates balancing potency, selectivity, and developability. Typically, the top 50-200 molecules are shortlisted, and a smaller subset of these is chosen for synthesis.
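The multi-parameter optimization step can be illustrated with a small Pareto filter and a weighted score. This is a sketch under assumptions: every objective is expressed so that higher is better, and the candidate names, objective values, and weights are hypothetical.

```python
def dominates(a, b):
    """True if a is at least as good as b everywhere and strictly better once."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(candidates):
    """Return names of candidates that no other candidate dominates.

    `candidates` maps a name to a tuple of objectives (higher is better).
    """
    front = []
    for name, objectives in candidates.items():
        dominated = any(
            dominates(other, objectives)
            for other_name, other in candidates.items()
            if other_name != name
        )
        if not dominated:
            front.append(name)
    return front

def weighted_score(objectives, weights):
    """Collapse several objectives into one number with fixed weights."""
    return sum(w * o for w, o in zip(weights, objectives))

# Hypothetical (predicted pIC50, selectivity score, solubility score).
library = {
    "A": (8.5, 0.6, 0.4),
    "B": (7.9, 0.9, 0.7),
    "C": (7.5, 0.5, 0.3),
    "D": (8.5, 0.6, 0.5),
}

front = pareto_front(library)
print("Pareto front:", front)

weights = (0.1, 0.5, 0.4)  # assumed weights, chosen only for illustration
ranked = sorted(front, key=lambda n: weighted_score(library[n], weights), reverse=True)
print("Ranked by weighted score:", ranked)
```

Pareto ranking avoids committing to weights up front and keeps every non-dominated trade-off, while the weighted score is a convenient way to order the survivors once a project team has agreed on priorities.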

Protocol 3: Experimental Validation Cascade

- Synthesis: Synthesize the top AI-generated candidates (typically 20-50 compounds) using parallel and medicinal chemistry approaches.

- In Vitro Biochemical Assay: Test purified compounds in a target kinase activity assay (e.g., ADP-Glo kinase assay) to determine IC50 values.

- Cellular Potency Assay: Evaluate compounds in a cell-based viability or pathway inhibition assay (e.g., p-STAT5 inhibition for JAK2 inhibitors) to determine cellular IC50.

- Selectivity Profiling: For lead compounds, perform a broad kinome screen using a platform like KINOMEscan to generate selectivity heatmaps.

- PROTAC-Specific Assays: For PROTAC candidates, measure:

  - DC50: Concentration causing 50% target degradation in cells via Western blot.

  - Ternary Complex Formation: Use techniques like SPR or AlphaLISA to confirm and characterize the target-PROTAC-E3 ligase interaction.

  - Hook Effect: Perform dose-response degradation assays at high concentrations to identify loss of efficacy due to binary complex formation.
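The DC50 and hook-effect readouts from the PROTAC assays above can be extracted from a dose-response series with simple arithmetic. The sketch below assumes concentrations in ascending order, target levels expressed as percent remaining relative to vehicle, and more than 50% remaining at the lowest dose. The 20-percentage-point rebound threshold and the data points are hypothetical choices for illustration; in practice a fitted curve would usually replace the interpolation.

```python
import math

def dc50_log_interpolated(concs_nm, remaining_pct):
    """Estimate DC50 by log-linear interpolation at the first 50% crossing.

    Returns None if target levels never drop to 50% or below.
    """
    points = list(zip(concs_nm, remaining_pct))
    for (c_lo, r_lo), (c_hi, r_hi) in zip(points, points[1:]):
        if r_lo > 50.0 >= r_hi:
            fraction = (r_lo - 50.0) / (r_lo - r_hi)
            log_c = math.log10(c_lo) + fraction * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_c
    return None

def hook_effect(concs_nm, remaining_pct, rebound_pct=20.0):
    """Flag a hook effect if target levels rebound after maximal degradation.

    Returns (has_hook, concentration_at_maximal_degradation).
    """
    i_min = min(range(len(remaining_pct)), key=remaining_pct.__getitem__)
    rebound = max(remaining_pct[i_min:]) - remaining_pct[i_min]
    return rebound >= rebound_pct, concs_nm[i_min]

# Hypothetical Western blot quantification (percent target remaining).
concentrations_nm = [1, 10, 100, 1000, 10000]
target_remaining = [95.0, 70.0, 30.0, 15.0, 60.0]

print(f"DC50 ~ {dc50_log_interpolated(concentrations_nm, target_remaining):.1f} nM")
print("Hook effect (flag, conc. of max degradation):",
      hook_effect(concentrations_nm, target_remaining))
```

A rebound at the highest doses is the experimental signature of binary complexes outcompeting the productive ternary complex, which is why the dose range has to extend well beyond the apparent DC50.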

Visualizing Key Concepts and Workflows

Diagram 1: AI-Driven Molecular Design Workflow

Diagram 2: PROTAC Mechanism & Design Parameters

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Validating AI-Designed Kinase Inhibitors & PROTACs

| Reagent / Material | Function in Validation | Example Vendor/Platform |
|---|---|---|
| Recombinant Kinase Protein | Target for biochemical activity assays (IC50 determination). | Carna Biosciences, SignalChem |
| ADP-Glo Kinase Assay Kit | Luminescent, homogeneous assay to measure kinase activity and inhibition. | Promega |
| KINOMEscan Profiling Service | High-throughput competitive binding assay to assess kinome-wide selectivity. | DiscoverX |
| Cell Line with Target Dependency | Cellular model for testing compound potency (e.g., Ba/F3 cells with oncogenic kinase). | ATCC, DSMZ |
| Phospho-Specific Antibodies | Detect pathway inhibition via Western blot (e.g., p-STAT5, p-AKT). | Cell Signaling Technology |
| VHL or CRBN E3 Ligase Complex | Recombinant protein for SPR or ITC to measure ternary complex formation for PROTACs. | BPS Bioscience |
| Proteasome Inhibitor (MG-132) | Control to confirm PROTAC-induced degradation is proteasome-dependent. | Selleck Chemicals |
| CETSA (Cellular Thermal Shift Assay) Kit | Confirm target engagement of inhibitors/PROTACs in cells. | Cayman Chemical |

Navigating the Pitfalls: Solving Data, Bias, and Practical Challenges in AI-Driven Molecular Design