AI-Driven Molecular Design: A Practical Guide to Markov Decision Process (MDP) for Drug Discovery

This guide provides a comprehensive exploration of Markov Decision Processes (MDPs) as a powerful framework for automated molecule modification and de novo design in drug discovery.

Abstract

This guide provides a comprehensive exploration of Markov Decision Processes (MDPs) as a powerful framework for automated molecule modification and de novo design in drug discovery. Aimed at researchers and computational chemists, it covers foundational principles, implementation methodologies for building and training generative models, strategies for optimizing agent performance and reward functions, and current approaches for validating and benchmarking MDP-based models against established methods. The article synthesizes the potential of reinforcement learning to accelerate the search for novel therapeutic candidates with desired properties.

What is an MDP? Demystifying the Core Framework for Molecular Reinforcement Learning

This whitepaper provides a technical guide for framing molecular optimization within a Markov Decision Process (MDP) paradigm. It details the formal definition of the chemical "state" (the molecule) and the "action space" (chemical modifications) to enable machine learning-driven drug discovery. This work serves as a core chapter in a broader thesis on the application of MDPs to molecule modification research.

In an MDP, an agent interacts with an environment. For molecule modification:

- State (S): A complete and unambiguous representation of a molecule.

- Action (A): A set of valid chemical transformations that can be applied to the current molecular state.

- Transition (T): The deterministic or stochastic result of applying an action (reaction) to a state, leading to a new state (new molecule).

- Reward (R): A scalar signal (e.g., predicted binding affinity, synthetic accessibility score, improved solubility) evaluating the new state.

Defining a precise, computationally tractable state and a chemically feasible action space is the foundational challenge.

The Molecular State: Representations and Embeddings

The molecular state must be encoded for machine learning. Common representations are compared below.

Table 1: Quantitative Comparison of Molecular State Representations

| Representation | Format | Dimensionality (Typical) | Information Captured | Common Use Case |

|---|---|---|---|---|

| SMILES | String | Variable length | 2D Molecular Graph | Sequence-based models (RNN, Transformer) |

| Molecular Graph | Adjacency + Node Feature Matrices | Nodes: ~10-100 atoms; edges: ~10-200 bonds | Explicit Atom/Bond Structure | Graph Neural Networks (GNNs) |

| Extended-Connectivity Fingerprints (ECFPs) | Bit Vector (Binary) | 1024, 2048, 4096 bits | Substructural Features | Similarity search, QSAR models |

| 3D Conformer Ensemble | Atomic Coordinates (x,y,z) per conformer | (Natoms x 3) x Nconformers | 3D Geometry, Pharmacophores | Docking, 3D-CNNs, Physics-based scoring |

| Learned Embedding (e.g., from GNN) | Continuous Vector (Latent Space) | 128, 256, 512 floats | Task-relevant features | Policy/Value networks in MDP |

Experimental Protocol: Generating a 3D Conformer State

For reward functions dependent on 3D structure (e.g., docking), the state must include 3D coordinates.

- Input: SMILES string of the molecule.

- Generation: Use RDKit's `EmbedMultipleConfs` function with the ETKDGv3 method to generate a diverse set of initial 3D conformers (e.g., 50).

- Optimization: Perform molecular mechanics geometry optimization for each conformer using the MMFF94s force field via RDKit's `MMFFOptimizeMolecule`.

- Selection: Cluster conformers by RMSD and select the lowest-energy representative from the largest cluster as the canonical 3D state for evaluation.

- Storage: The state is stored as a PyTorch Geometric `Data` object containing atom features (atomic number, hybridization) and the Nx3 coordinate matrix.

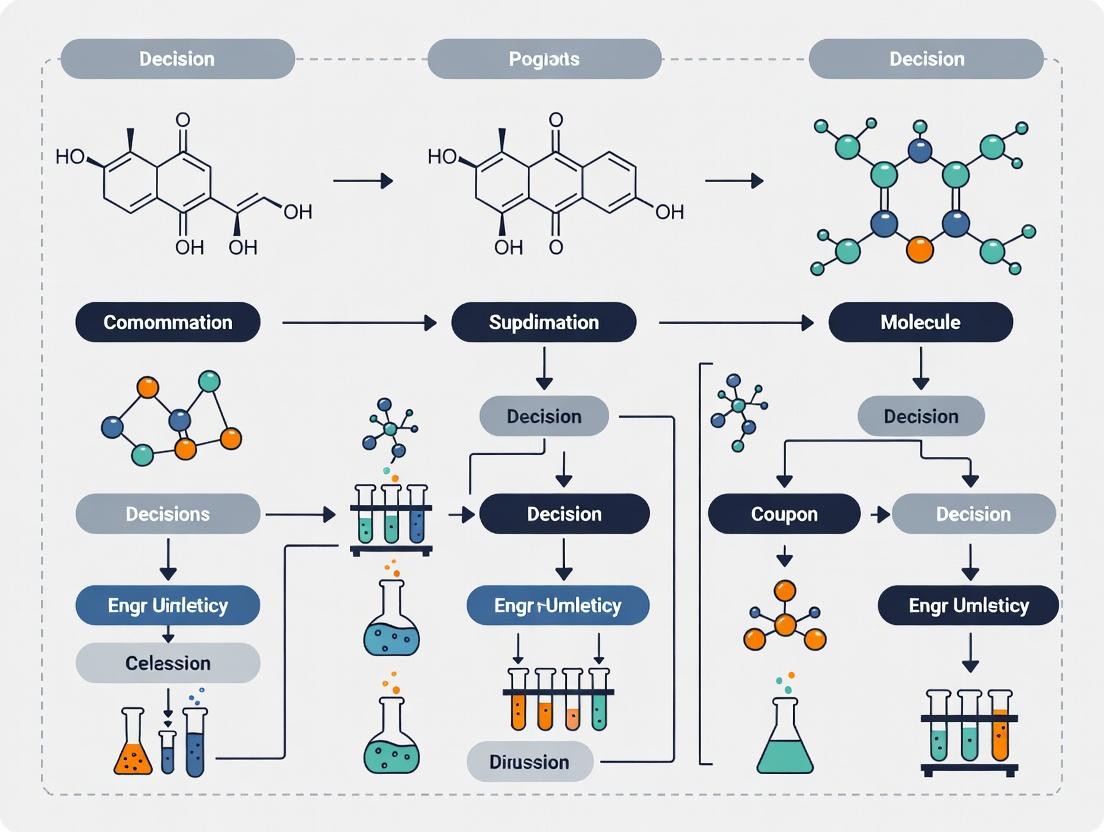

Diagram 1: 3D Molecular State Generation Workflow

The Chemical Action Space: Feasible Transformations

The action space defines all possible modifications from a given state. It must balance comprehensiveness with synthetic realism.

Table 2: Categories of Chemical Actions in MDPs

| Action Category | Description | Granularity | Example | Library Size (Typical) |

|---|---|---|---|---|

| Atom/Bond-Editing | Add, remove, or alter atoms/bonds directly. | Fine-grained | Add a carbonyl (C=O), change single to double bond. | 10^1 - 10^2 possible actions per step. |

| Substructure Replacement | Replace a defined molecular fragment with another. | Medium-grained | Replace a carboxylic acid (-COOH) with a sulfonamide (-SO2NH2). | 10^2 - 10^3 predefined fragment pairs. |

| Reaction-Based | Apply a validated chemical reaction template. | Coarse-grained | Perform a Suzuki-Miyaura cross-coupling. | 10^1 - 10^2 templates from reaction databases. |

| Scaffold Hopping | Replace the core scaffold while preserving peripheral groups. | Macro-grained | Change a phenyl ring to a pyridine ring. | Highly variable, often model-guided. |

Experimental Protocol: Implementing a Reaction-Based Action Space

This protocol uses the USPTO chemical reaction dataset to build a valid action set.

- Template Extraction: Use RDChiral (based on RDKit) to extract reaction templates from USPTO data, filtering for high-yield, robust reactions.

- Template Encoding: Encode each template as a SMARTS pattern for the reaction core and a set of rules for atom mapping.

- State-Template Matching: For a given molecular state (as a SMILES string), iterate through the template library. Use RDChiral to check if the molecule's substructure matches the reactant pattern of any template.

- Action Enumeration: For all matching templates, apply the transformation to generate all possible product molecules (new states). Each valid application is a unique action.

- Action Indexing: Assign a unique integer index to each reaction template. The agent's action at each step is the selection of an index corresponding to a currently applicable template.
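The indexing and matching steps above can be sketched as an action mask over a fixed template list. This is a minimal illustration only: the three templates and the `TEMPLATES`, `action_mask`, and `valid_actions` names are hypothetical, and plain substring tests on SMILES stand in for real SMARTS matching (which RDKit or RDChiral would perform and which substring tests do not reproduce).

```python
from typing import Callable, List, Tuple

# Hypothetical template library. The list position is the action index.
# Each entry pairs a template name with an applicability predicate.
# NOTE: substring tests are a stand-in for SMARTS reactant matching and
# are not chemically rigorous.
TEMPLATES: List[Tuple[str, Callable[[str], bool]]] = [
    ("amide_coupling", lambda smi: "C(=O)O" in smi),
    ("suzuki_coupling", lambda smi: "Br" in smi),
    ("ester_hydrolysis", lambda smi: "C(=O)OC" in smi),
]


def action_mask(smiles: str) -> List[bool]:
    """Return one boolean per template: True if the template applies."""
    return [applies(smiles) for _, applies in TEMPLATES]


def valid_actions(smiles: str) -> List[int]:
    """Return the integer indices the agent may choose in this state."""
    return [i for i, ok in enumerate(action_mask(smiles)) if ok]


if __name__ == "__main__":
    for smi in ("CC(=O)O", "Brc1ccccc1", "c1ccccc1"):
        print(smi, valid_actions(smi))
```

A policy network would typically use such a mask to zero out the probability of inapplicable templates before sampling.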

Diagram 2: Reaction-Based Action Enumeration Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Software for MDP-Driven Molecular Design Experiments

| Item / Solution | Function in Experiment | Key Provider/Example |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule I/O, fingerprinting, substructure search, and reaction processing. | RDKit.org |

| PyTorch Geometric (PyG) | Library for deep learning on graphs; essential for GNN-based state and policy networks. | PyG Team |

| RDChiral | Specialized library for applying reaction templates with strict stereochemical awareness. | Github: rdchiral |

| OpenEye Toolkit | Commercial suite for high-performance molecular modeling, force fields, and docking. | OpenEye Scientific |

| Schrödinger Suite | Integrated platform for computational chemistry, including Glide for high-throughput docking. | Schrödinger |

| MOSES Benchmarking | Provides standardized datasets (ZINC-based), metrics, and baselines for generative molecule models. | Github: moses |

| GuacaMol Benchmark | Framework for benchmarking generative models across a wide array of chemical property objectives. | Github: GuacaMol |

| USPTO Dataset | Curated dataset of chemical reactions used to extract realistic reaction templates for the action space. | Harvard Dataverse |

| ChEMBL Database | Manually curated database of bioactive molecules with property data; used for reward function design. | EMBL-EBI |

| Oracle Function (e.g., Docking) | Computational or experimental assay (e.g., AutoDock Vina, FEP+) that provides the reward signal. | Custom / Commercial |

Integrating State and Action: The MDP Cycle in Practice

The complete cycle involves iteratively applying a policy network (which selects an action from the valid set) to a state representation, then evaluating the new state to obtain a reward.
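The cycle can be sketched with a toy environment. Everything here is an illustrative assumption rather than a real chemistry environment: the state is a string that grows by appending one-letter "fragments", every action is treated as valid, and the reward simply counts N and O characters. The names `ToyMoleculeEnv`, `run_episode`, and `random_policy` are hypothetical.

```python
import random
from dataclasses import dataclass


@dataclass
class ToyMoleculeEnv:
    """Toy stand-in for a molecular environment (not chemically meaningful)."""
    start: str = "C"
    max_steps: int = 5
    fragments: tuple = ("C", "N", "O")

    def reset(self):
        self.state, self.t = self.start, 0
        return self.state

    def valid_actions(self, state):
        # A real environment would apply validity checks here.
        return list(range(len(self.fragments)))

    def reward(self, state):
        # Placeholder reward: number of heteroatom characters.
        return sum(state.count(x) for x in "NO")

    def step(self, action):
        self.state += self.fragments[action]
        self.t += 1
        done = self.t >= self.max_steps
        return self.state, self.reward(self.state), done


def random_policy(state, actions, rng):
    return rng.choice(actions)


def run_episode(env, policy, seed=0):
    """Roll out one episode and return (s, a, r, s') transitions."""
    rng = random.Random(seed)
    state, done, trajectory = env.reset(), False, []
    while not done:
        action = policy(state, env.valid_actions(state), rng)
        next_state, r, done = env.step(action)
        trajectory.append((state, action, r, next_state))
        state = next_state
    return trajectory


if __name__ == "__main__":
    for transition in run_episode(ToyMoleculeEnv(), random_policy):
        print(transition)
```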

Table 4: Performance Metrics for MDP Molecule Optimization Agents

| Metric | Formula/Description | Target Value (Benchmark) |

|---|---|---|

| Valid Action Success Rate | (Number of chemically valid new states generated) / (Total actions attempted) | >99% |

| Novelty | Proportion of generated molecules not present in the training set. | >80% |

| Scaffold Diversity | Diversity of Bemis-Murcko scaffolds in a generated set (measured by entropy). | >0.8 (normalized) |

| Average Reward Improvement | ΔReward = (Final State Reward) - (Initial State Reward) over an episode. | Task-dependent (e.g., ΔpIC50 > 1.0) |

| Synthetic Accessibility (SA) Score | Score from 1 (easy) to 10 (hard) estimating ease of synthesis. | <4.5 (for drug-like molecules) |
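Three of the metrics in Table 4 can be computed with a few lines of plain Python once molecules and scaffolds are available as canonical strings. The sketch below assumes that canonicalization has already been done elsewhere (e.g., with RDKit), and it assumes one particular normalization for scaffold entropy (dividing by the log of the number of molecules, so 1.0 means every molecule has a distinct scaffold); the table does not specify which normalization is intended.

```python
import math
from collections import Counter
from typing import Sequence


def valid_action_rate(n_valid: int, n_attempted: int) -> float:
    """Fraction of attempted actions that produced a valid new state."""
    return n_valid / n_attempted if n_attempted else 0.0


def novelty(generated: Sequence[str], training: Sequence[str]) -> float:
    """Fraction of unique generated molecules absent from the training set."""
    gen = set(generated)
    return len(gen - set(training)) / len(gen) if gen else 0.0


def normalized_scaffold_entropy(scaffolds: Sequence[str]) -> float:
    """Shannon entropy of scaffold frequencies divided by log(n_molecules).

    Assumed normalization: 0.0 if all molecules share one scaffold,
    1.0 if every molecule has its own scaffold.
    """
    n = len(scaffolds)
    if n <= 1:
        return 0.0
    counts = Counter(scaffolds)
    entropy = -sum((c / n) * math.log(c / n) for c in counts.values())
    return entropy / math.log(n)


if __name__ == "__main__":
    print(valid_action_rate(995, 1000))
    print(novelty(["A", "B", "C", "C"], ["A"]))
    print(normalized_scaffold_entropy(["s1", "s1", "s2", "s3"]))
```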

Diagram 3: MDP Cycle for Molecule Optimization

In the context of a Markov Decision Process (MDP) for de novo molecular design and optimization, the definition of the action space is a foundational component. An MDP is defined by the tuple (S, A, P, R), where S represents the state space (molecular structures), A the action space (valid modifications), P the transition probabilities, and R the reward function (e.g., predicted bioactivity, synthesizability). This whitepaper provides an in-depth technical guide to defining the set of valid molecular actions (A), which dictates the pathways an agent can explore in chemical space. The granularity and validity of these actions directly impact the efficiency, realism, and ultimate success of generative models in drug discovery.

Taxonomy of Molecular Actions

Molecular modifications in an MDP can be categorized by their granularity and chemical consequence. The choice of action space is a critical hyperparameter that balances exploration, synthetic feasibility, and learning complexity.

Table 1: Hierarchy of Molecular Action Types

| Action Granularity | Description | Typical Validity Constraints | Example |

|---|---|---|---|

| Atom Addition | Adding a single atom (e.g., C, N, O) with associated bonds to an existing molecular graph. | Valence rules, allowable atom types, avoidance of forbidden substructures. | Adding a nitrogen atom with a single bond to an existing carbonyl carbon, creating an amide. |

| Bond Alteration | Changing the bond order (single, double, triple) between two existing atoms or adding/removing a bond. | Preservation of atomic valences, prevention of strained rings (e.g., triple bond in small ring), aromaticity rules. | Converting a C-C single bond to a double bond, forming an alkene. |

| Fragment Addition | Attaching a pre-defined molecular fragment (e.g., methyl, hydroxyl, phenyl) to a specific attachment point. | Fragment library design, compatibility of attachment points, resulting steric clashes. | Adding a methyl group (-CH3) to an aromatic carbon. |

| Fragment Replacement | Removing an existing fragment/substructure and replacing it with a different fragment from a library. | Size of the replacement library, geometric and electronic compatibility at the connection points. | Replacing a chlorine atom with a methoxy group (-OCH3). |

| Scaffold Hopping | Replacing a core ring system with a different bioisostere while preserving key interacting groups. | Defined by pharmacophore matching and 3D shape similarity, often a higher-level action. | Replacing a phenyl ring with a pyridine ring. |

Defining Validity: Rules and Constraints

A "valid" action must transform one chemically plausible molecule (state St) into another (state St+1). The following rules form the core validity checker in an MDP environment.

Table 2: Core Validity Constraints for Molecular Actions

| Constraint Category | Specific Rules | Implementation Check |

|---|---|---|

| Valence & Bond Order | Atoms must obey standard chemical valences (e.g., C=4, N=3, O=2). Hypervalency is allowed for specific atoms (e.g., S, P) under defined rules. | Sum of bond orders for an atom ≤ maximum valence. |

| Aromaticity | Actions must not disrupt established aromatic systems unless the action explicitly breaks aromaticity via a defined pathway (e.g., reduction). | Post-modification aromaticity detection (e.g., Hückel's rule). |

| Steric Clash | New atoms/fragments must not introduce severe non-bonded atom overlaps (Van der Waals radii violation). | Inter-atomic distance check against a threshold (e.g., 80% of sum of VdW radii). |

| Unstable Intermediates | Avoid creating highly strained rings (e.g., bridgehead alkenes in small bicyclics), anti-aromatic systems, or toxicophores. | SMARTS pattern matching against a forbidden substructure list. |

| Synthetic Accessibility | The resulting molecule should, in principle, be synthesizable. This is a soft constraint but can be approximated. | SA score or retrosynthetic complexity score threshold. |
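The valence rule in the first row of the table ("sum of bond orders ≤ maximum valence") is simple enough to sketch without a cheminformatics toolkit. The example below works on a heavy-atom graph with implicit hydrogens; the `MAX_VALENCE` table, the `(i, j, order)` bond encoding, and the function names are illustrative assumptions. In practice RDKit's sanitization would perform this check along with aromaticity perception.

```python
from typing import List, Optional, Tuple

# Assumed maximum valences for a few neutral elements (no hypervalency).
MAX_VALENCE = {"C": 4, "N": 3, "O": 2, "F": 1, "Cl": 1}

Bond = Tuple[int, int, int]  # (atom index i, atom index j, bond order)


def valence_ok(atoms: List[str], bonds: List[Bond]) -> bool:
    """True if every atom's summed bond order is within its maximum valence."""
    used = [0] * len(atoms)
    for i, j, order in bonds:
        used[i] += order
        used[j] += order
    return all(used[k] <= MAX_VALENCE[atoms[k]] for k in range(len(atoms)))


def try_add_atom(atoms: List[str], bonds: List[Bond], element: str,
                 attach_to: int, order: int = 1) -> Optional[tuple]:
    """Apply an atom-addition action; return the new state or None if invalid."""
    new_atoms = atoms + [element]
    new_bonds = bonds + [(attach_to, len(atoms), order)]
    if valence_ok(new_atoms, new_bonds):
        return new_atoms, new_bonds
    return None


if __name__ == "__main__":
    # Formaldehyde heavy-atom graph: C=O
    atoms, bonds = ["C", "O"], [(0, 1, 2)]
    print(try_add_atom(atoms, bonds, "N", attach_to=0))  # accepted: formamide
    # Carbon dioxide: O=C=O has no free valence left on carbon
    print(try_add_atom(["C", "O", "O"], [(0, 1, 2), (0, 2, 2)], "N", 0))
```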

Experimental Protocol for Validity Rule Benchmarking

- Objective: Quantify the impact of different validity constraint strictness on MDP exploration efficiency.

- Method:

- Set up a standard MDP environment (e.g., using the `Chem` module from RDKit) with a defined reward function (e.g., QED + SA).

- Implement three validity checkers: Basic (valence only), Intermediate (valence + aromaticity + unstable intermediates), Strict (all constraints including sterics).

- Run a standard policy (e.g., Monte Carlo Tree Search or a pre-trained policy network) for a fixed number of steps (N=10,000) from a common starting molecule (e.g., benzene).

- Measure: a) Percentage of proposed actions rejected, b) Diversity of final molecules (average pairwise Tanimoto dissimilarity), c) Average reward of top 10 molecules found.

- Analysis: The "Intermediate" checker typically offers the best trade-off, rejecting ~40-60% of random actions while allowing sufficient exploration to find high-scoring, plausible molecules.
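The diversity measure named in the protocol, average pairwise Tanimoto dissimilarity, can be sketched on fingerprints represented as sets of on-bit indices. The set representation and the function names are assumptions for illustration; with RDKit fingerprints one would normally use its built-in similarity functions instead.

```python
from itertools import combinations
from typing import Iterable, Set


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto similarity |a ∩ b| / |a ∪ b| of two on-bit sets."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def mean_pairwise_dissimilarity(fingerprints: Iterable[Set[int]]) -> float:
    """Average of (1 - Tanimoto) over all unordered pairs."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return sum(1.0 - tanimoto(a, b) for a, b in pairs) / len(pairs)


if __name__ == "__main__":
    fps = [{1, 2, 3}, {2, 3, 4}, {7, 8}]
    print(mean_pairwise_dissimilarity(fps))
```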

Implementation: Action Spaces in Practice

Table 3: Comparison of Action Space Implementations in Recent Literature

| Model / Framework | Action Space Definition | Granularity | Validity Enforcement | Key Reference |

|---|---|---|---|---|

| REINVENT | Fragment-based, SMILES string modification. | Fragment Addition/Replacement | Rule-based filters (e.g., PAINS, structural alerts). | Blaschke et al., Drug Discovery Today, 2022. |

| MolDQN | Atom/Bond level: Add/Remove/Change bond, Change Atom. | Atom/Bond | Valence checks via RDKit after each step; invalid states are terminal. | Zhou et al., Scientific Reports, 2019. |

| GFlowNet-EM | Single-atom or small fragment addition guided by a pharmacophore. | Atom/Fragment | Hard-coded in the state transition mask; only pharmacophore-compliant actions allowed. | Jain et al., NeurIPS, 2022. |

| Fragment-based MCTS | Replacement of a variable-sized fragment from a large library. | Fragment Replacement | Syntactic (correct bonding) and semantic (SA, clogP change) filters. | Recent preprint, ChemRxiv, 2024. |

Experimental Protocol for Fragment Library Curation

- Objective: Construct a diverse, synthetically accessible fragment library for use in fragment replacement actions.

- Method (BRICS-like Decomposition):

- Source Dataset: Obtain a large collection of drug-like molecules (e.g., ChEMBL, ZINC).

- Fragmentation: Apply BRICS (Breaking of Retrosynthetically Interesting Chemical Substructures) rules to break molecules at cleavable bonds defined by chemical context (e.g., amide, ester linkages).

- Fragment Processing: Collect all unique fragments. Filter by size (e.g., 3-10 heavy atoms). Standardize valence and mark each breakpoint with a dummy atom (e.g., `[*]`).

- Diversity & SA Filtering: Cluster fragments using fingerprint (ECFP4) and MCS similarity. Select cluster centroids. Remove fragments whose synthetic accessibility (SA) score is high, i.e., those predicted to be hard to synthesize.

- Library Assembly: The final library is a set of SMILES strings with dummy atoms, each associated with metadata (frequency of origin, SA score, common attachment atoms).

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools & Libraries for MDP Action Definition

| Item (Software/Library) | Function in Action Space Research | Key Feature |

|---|---|---|

| RDKit | The core cheminformatics toolkit for molecule manipulation, substructure checking, and property calculation. | Chem.RWMol for editable molecules, SanitizeMol() for valence/aromaticity checks, SMARTS matching. |

| OpenEye Toolkit | Commercial suite offering robust molecular mechanics and advanced chemical perception. | Reliable tautomer handling, force-field based steric clash evaluation, Omega for conformer generation. |

| DeepChem | Provides high-level APIs for molecular machine learning and environments. | Reinforcement learning utilities in the `dc.rl` module (e.g., `Environment`, `GymEnvironment`), integration with RL libraries (OpenAI Gym/RLlib). |

| PyTorch Geometric / DGL | Graph neural network libraries essential for representing the molecular state (graph) and predicting actions. | Efficient graph convolution operations, batch processing of molecular graphs. |

| SQLite/Redis | Lightweight databases for caching valid actions for frequent states or storing large fragment libraries. | Enables fast lookup of pre-computed valid action masks, critical for runtime performance. |

Visualizing the Decision Process & Validity Checks

Title: MDP Validity Check Workflow for a Molecular Action

Title: Spectrum of Molecular Action Granularity

In the context of a Markov Decision Process (MDP) for de novo molecular design or lead optimization, an agent sequentially modifies a molecular structure (state, s_t) by choosing actions (a_t), such as adding or removing a functional group. The core challenge is to define a reward function R(s_t, a_t, s_{t+1}) that accurately quantifies the desirability of the transition to the new molecule. This whitepaper provides a technical guide to constructing a composite reward function that translates multifaceted chemical and biological objectives—bioactivity, ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity), and synthesizability—into a single, scalar numerical goal that drives the MDP agent toward viable drug candidates.

Component-Specific Reward Formulations

Bioactivity Reward (R_bio)

The primary goal is to maximize binding affinity or functional activity against a target.

Common Quantitative Metrics:

| Metric | Description | Typical Ideal Range | Reward Shape |

|---|---|---|---|

| pIC50 / pKi | -log10(IC50/Ki); IC50/Ki in molar. | >7 (IC50 or Ki < 100 nM) | Linear or sigmoidal increase above threshold. |

| ΔG (kcal/mol) | Binding free energy from computational methods. | < -9 kcal/mol | Negative linear or exponential. |

| Docking Score | Virtual screening score (e.g., Vina, Glide). | Case-dependent | Negative score favored; reward = -score. |

Experimental Protocol for Benchmarking (Example: pIC50 Determination):

- Compound Serial Dilution: Prepare test compound in DMSO, then dilute in assay buffer for a 10-point, 3-fold serial dilution.

- Target Incubation: Incubate target (e.g., enzyme, receptor) with dilution series in 384-well plate for 1 hour at RT.

- Detection: Add fluorescent/chemiluminescent substrate or ligand. Incubate and read signal.

- Data Analysis: Fit normalized response vs. log10(concentration) data to a 4-parameter logistic model to determine IC50. Convert to pIC50.
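The quantities in this protocol can be sketched in a few lines: the concentrations of a 10-point, 3-fold dilution series, the 4-parameter logistic (4PL) response curve, and the IC50-to-pIC50 conversion. The parameterization of the 4PL below (inhibition curve, response falling from `top` to `bottom`) is one common convention and is an assumption here; the actual curve fitting would be done with a nonlinear least-squares routine, which is not shown.

```python
import math
from typing import List


def serial_dilution(top_conc: float, factor: float = 3.0,
                    points: int = 10) -> List[float]:
    """Concentrations of a serial dilution, highest first."""
    return [top_conc / factor ** k for k in range(points)]


def four_pl(log_conc: float, bottom: float, top: float,
            log_ic50: float, hill: float) -> float:
    """4PL inhibition curve: response as a function of log10(concentration)."""
    return bottom + (top - bottom) / (1.0 + 10 ** ((log_conc - log_ic50) * hill))


def pic50_from_ic50_nM(ic50_nM: float) -> float:
    """pIC50 = -log10(IC50 in mol/L); input given in nM."""
    return -math.log10(ic50_nM * 1e-9)


if __name__ == "__main__":
    print(serial_dilution(10.0)[:3])          # in the units of top_conc
    print(four_pl(-7.0, 0.0, 100.0, -7.0, 1.0))  # midpoint response at the IC50
    print(pic50_from_ic50_nM(100.0))
```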

ADMET Reward (R_admet)

A composite of multiple pharmacokinetic and toxicity predictions.

Key Predictors & Thresholds:

| Property | Predictive Model/Descriptor | Desirable Range | Penalty Function |

|---|---|---|---|

| Aqueous Solubility (logS) | ESOL Prediction | > -4 log mol/L | Gaussian around -3. |

| Caco-2 Permeability (log Papp) | ML model on molecular descriptors | > -5.15 (log cm/s) | Step function above threshold. |

| hERG Inhibition (pIC50) | QSAR or deep learning model | < 5 (low risk) | Severe penalty for pIC50 > 5. |

| CYP450 Inhibition (2C9, 3A4) | Binary classifier probability | Probability < 0.5 | Linear penalty for prob > 0.5. |

| Human Liver Microsomal Stability (t1/2) | Regression model | > 30 min | Linear reward for longer t1/2. |

| Ames Toxicity | FCA (Fragment Carcinogenicity Assessment) | Binary: Non-mutagen | Large negative reward for positive prediction. |

Experimental Protocol for Caco-2 Permeability Assay:

- Cell Culture: Grow Caco-2 cells on semi-permeable transwell inserts for 21-25 days to form confluent, differentiated monolayer.

- Validation: Measure Transepithelial Electrical Resistance (TEER) > 300 Ω·cm². Perform Lucifer Yellow permeability test to confirm monolayer integrity.

- Transport Study: Add test compound (10 µM) to donor chamber (apical for A→B, basal for B→A). Sample from receiver chamber at 30, 60, 90, 120 min.

- LC-MS/MS Analysis: Quantify compound concentration in samples. Calculate apparent permeability: Papp = (dQ/dt) / (A * C0), where dQ/dt is transport rate, A is membrane area, C0 is initial donor concentration.
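A worked sketch of the Papp formula follows. It assumes the transported amount is recorded in nmol at each sampling time, estimates dQ/dt as the least-squares slope, and relies on the unit identity 1 µM = 1 nmol/cm³ so that Papp comes out in cm/s. The sample values, the 1.12 cm² insert area, and the function names are illustrative assumptions, not data from the source.

```python
import math
from typing import Sequence


def transport_rate(times_s: Sequence[float],
                   amounts_nmol: Sequence[float]) -> float:
    """Least-squares slope dQ/dt (nmol/s) of cumulative amount vs. time."""
    n = len(times_s)
    t_mean = sum(times_s) / n
    q_mean = sum(amounts_nmol) / n
    num = sum((t - t_mean) * (q - q_mean)
              for t, q in zip(times_s, amounts_nmol))
    den = sum((t - t_mean) ** 2 for t in times_s)
    return num / den


def papp_cm_per_s(dq_dt_nmol_per_s: float, area_cm2: float,
                  c0_uM: float) -> float:
    """Papp = (dQ/dt) / (A * C0); 1 uM equals 1 nmol/cm^3."""
    return dq_dt_nmol_per_s / (area_cm2 * c0_uM)


if __name__ == "__main__":
    times = [1800, 3600, 5400, 7200]            # 30, 60, 90, 120 min
    amounts = [0.018, 0.036, 0.054, 0.072]      # hypothetical nmol in receiver
    rate = transport_rate(times, amounts)
    papp = papp_cm_per_s(rate, area_cm2=1.12, c0_uM=10.0)
    print(papp, math.log10(papp))
```

The resulting log Papp can then be compared against the permeability threshold listed in the ADMET table.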

Synthesizability Reward (R_synth)

Quantifies the feasibility and cost of synthesizing the molecule.

Key Components:

| Component | Metric | Reward Formulation |

|---|---|---|

| Retrosynthetic Complexity | RAscore or SYBA score | Linear mapping of score to reward. |

| Reaction Feasibility | Forward reaction prediction probability (e.g., from Molecular Transformer) | Reward = probability. |

| Structural Alerts | SMARTS-based match for problematic functional groups (e.g., peroxides, polyhalogenated methyl) | Binary large penalty for match. |

| Cost of Starting Materials | Estimated from vendor catalog prices (e.g., via molly/askcos) | Exponential decay with increasing cost. |

Integrated Reward Function Architecture

The total reward for a transition in the MDP is a weighted sum of components, often with non-linear transformations and conditional penalties:

R_total = w1 * f(R_bio) + w2 * g(R_admet) + w3 * h(R_synth) + R_penalties

Typical Weighting (from recent literature): w1 (Bioactivity): 0.5, w2 (ADMET): 0.3, w3 (Synthesizability): 0.2. Penalties for rule violations (e.g., Lipinski's Rule of 5, PAINS filters) are applied as large negative constants.
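A minimal sketch of the weighted sum follows, using the example weights above. The choices of f (a sigmoid centered at pIC50 = 7), of g and h (clamping pre-computed component scores to [0, 1]), and of a penalty of -1.0 per rule violation are assumptions made for illustration; the source does not fix these transformations.

```python
import math


def sigmoid(x: float, midpoint: float, steepness: float = 1.0) -> float:
    return 1.0 / (1.0 + math.exp(-steepness * (x - midpoint)))


def clamp01(x: float) -> float:
    return min(max(x, 0.0), 1.0)


def total_reward(pic50: float, admet_score: float, synth_score: float,
                 n_violations: int = 0,
                 weights=(0.5, 0.3, 0.2),
                 penalty_per_violation: float = -1.0) -> float:
    """R_total = w1*f(R_bio) + w2*g(R_admet) + w3*h(R_synth) + R_penalties."""
    w1, w2, w3 = weights
    f = sigmoid(pic50, midpoint=7.0)   # assumed bioactivity transform
    g = clamp01(admet_score)           # assumed: score already in [0, 1]
    h = clamp01(synth_score)           # assumed: score already in [0, 1]
    return w1 * f + w2 * g + w3 * h + penalty_per_violation * n_violations


if __name__ == "__main__":
    print(total_reward(7.0, 1.0, 1.0))
    print(total_reward(7.0, 1.0, 1.0, n_violations=1))
```

Keeping every component on a common [0, 1] scale before weighting makes the weights directly comparable, which is why the transforms are applied first.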

Diagram Title: MDP Reward Calculation Flow for Molecule Design

The Scientist's Toolkit: Research Reagent Solutions

| Item/Vendor | Function in Reward Component Development |

|---|---|

| Microsomes (e.g., Corning Gentest) | Pooled human liver microsomes for in vitro metabolic stability (HLM) assays to inform R_admet. |

| Caco-2 Cell Line (e.g., ATCC HTB-37) | Cell line for intestinal permeability studies, a key input for absorption prediction in R_admet. |

| hERG-Expressing Cell Line (e.g., ChanTest) | Cells for patch-clamp assays to measure hERG channel inhibition, providing direct data for a major toxicity penalty. |

| Recombinant CYP Enzymes (e.g., Sigma-Aldrich) | For cytochrome P450 inhibition assays, critical for assessing drug-drug interaction risks in R_admet. |

| Ames Test Bacterial Strains (e.g., Moltox) | Salmonella typhimurium strains TA98, TA100, etc., for mutagenicity assessment, a key binary penalty. |

| Assay-Ready Target Proteins (e.g., BPS Bioscience) | Purified, active kinases, GPCRs, etc., for high-throughput activity screening to train/fine-tune R_bio predictors. |

| Building Block Libraries (e.g., Enamine REAL Space) | Large, purchasable chemical libraries for validating synthesizability (R_synth) via in-silico retrosynthesis. |

Implementation Workflow for Reward Function Validation

Diagram Title: Reward Function Development and Validation Cycle

A well-crafted reward function is the linchpin of a successful MDP framework for molecular design. It must be a precise, computable proxy for the complex, multi-stage reality of drug discovery; it does not need to be differentiable, because standard RL algorithms only require scalar reward evaluations. By grounding each component (bioactivity, ADMET, and synthesizability) in contemporary predictive models and validated experimental protocols, researchers can create RL agents capable of navigating chemical space toward truly promising and developable therapeutic candidates. Continuous iterative validation, as outlined in the workflow, is essential to bridge the gap between in-silico rewards and real-world molecular performance.

This whitepaper operationalizes the Markov Decision Process (MDP) framework for molecular design. An AI agent navigates the vast, combinatorial "chemical space" by treating molecular modification as a sequential decision-making problem. The core MDP tuple (S, A, P, R, γ) is defined as:

- State (S): A numerical representation (descriptor or fingerprint) of the current molecule.

- Action (A): A permissible chemical transformation (e.g., add a methyl group, substitute a ring).

- Transition Probability (P): The deterministic or stochastic outcome of applying an action to a state.

- Reward (R): A scalar signal evaluating the new molecule's properties (e.g., drug-likeness, binding affinity, synthetic accessibility).

- Discount Factor (γ): Determines the agent's preference for immediate vs. long-term rewards.

The agent's "policy" (π) is a function mapping states to actions that maximizes the expected cumulative reward, thereby guiding the search toward molecules with optimal target properties.
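The "expected cumulative reward" that the policy maximizes is the discounted return, G = r_0 + γ r_1 + γ² r_2 + ... A short sketch shows how γ shifts the preference between an early and a late reward; the reward sequences are made up for illustration.

```python
from typing import Sequence


def discounted_return(rewards: Sequence[float], gamma: float) -> float:
    """G = sum_t gamma**t * rewards[t], accumulated from the last step back."""
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
    return g


if __name__ == "__main__":
    early = [1.0, 0.0, 0.0]   # reward right away
    late = [0.0, 0.0, 2.0]    # larger reward after two more edits
    for gamma in (0.5, 0.9):
        print(gamma, discounted_return(early, gamma),
              discounted_return(late, gamma))
```

With γ = 0.5 the early reward wins (1.0 vs. 0.5), while with γ = 0.9 the delayed, larger reward is preferred (1.0 vs. 1.62).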

Core Quantitative Data on Chemical Space & AI Performance

Table 1: Scale of Navigable Chemical Space

| Space Description | Estimated Size | Common Representation Method |

|---|---|---|

| Drug-like (e.g., GDB-17) | ~166 billion molecules | SMILES, SELFIES, InChI |

| Synthetically Accessible (e.g., ZINC) | >1 billion molecules | Molecular fingerprints (ECFP, MACCS) |

| Virtual Combinatorial Libraries | 10^6 – 10^12 molecules | Graph representations |

Table 2: Benchmark Performance of RL/MDP-Based Molecular Optimization

| Model / Algorithm | Benchmark Task (Objective) | Success Rate / Improvement | Key Metric |

|---|---|---|---|

| REINVENT (policy gradient) | DRD2 activity, QED optimization | ~100% success in 20-40 steps | Goal-directed generation efficiency |

| MolDQN (Q-Learning) | Penalized LogP optimization | +5.30 average improvement | Single-objective optimization |

| GraphINVENT (PPO) | MMP-based generation | >95% validity, high novelty | Multi-parameter optimization (MPO) |

| GCPN (RL + Policy Grad.) | Property score optimization | Exceeds baseline by >40% | Constrained benchmark performance |

Experimental Protocol: Implementing an MDP for Molecular Optimization

This protocol outlines a standard workflow for training an AI agent using an MDP framework.

A. State Representation

- Input: A molecule in SMILES string format.

- Processing: Convert the SMILES into a fixed-length numerical vector.

- Method 1 (Fingerprints): Use RDKit to generate a 2048-bit ECFP4 fingerprint. Fold to 1024 dimensions if necessary.

- Method 2 (Graph): Represent atoms as nodes (features: atom type, charge) and bonds as edges (features: bond type). Use a Graph Neural Network (GNN) as an encoder.
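The folding step mentioned in Method 1 can be illustrated on a plain bit list: bit i of the long fingerprint is OR-ed into position i mod new_size of the short one. The list representation and the `fold_fingerprint` name are assumptions for this sketch; generating the ECFP4 bits themselves is left to RDKit and not shown.

```python
from typing import List


def fold_fingerprint(bits: List[int], new_size: int) -> List[int]:
    """Fold a bit vector to new_size by OR-ing bit i into i % new_size."""
    if len(bits) % new_size != 0:
        raise ValueError("original length must be a multiple of new_size")
    folded = [0] * new_size
    for i, b in enumerate(bits):
        if b:
            folded[i % new_size] = 1
    return folded


if __name__ == "__main__":
    fp = [0] * 2048
    for on_bit in (3, 1027, 2000):
        fp[on_bit] = 1
    folded = fold_fingerprint(fp, 1024)
    print([i for i, b in enumerate(folded) if b])
```

Folding trades resolution for size: bits 3 and 1027 collide in the example, so the folded vector has two on-bits instead of three.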

B. Action Space Definition

- Define a set of chemically valid molecular transformations.

- Common Approach (Fragment-Based):

- Use the BRICS decomposition algorithm to identify breakable bonds.

- Define actions as the addition or replacement of BRICS-compatible fragments at specific attachment points.

- Alternatively, use a SMILES grammar-based action set (character-by-character generation).

C. Reward Function Engineering

- Design: The reward function is the primary guidance mechanism.

- Multi-Objective Example:

R(m) = w1 * pChEMBL_Score(m) + w2 * SA_Score(m) + w3 * Linker_Length_Penalty(m)

- pChEMBL_Score: Predictive activity score from a pre-trained model.

- SA_Score: Synthetic accessibility score (1 = easy, 10 = hard). Because lower values are better, w2 should be negative, or the score should be inverted before weighting.

- Linker_Length_Penalty: Penalizes molecules with linker chains exceeding a defined threshold.

- w1, w2, w3: Tuning weights to balance objectives.

D. Agent Training (Using Proximal Policy Optimization - PPO)

- Initialize: The policy network (π) and value network (V).

- For N epochs:

  a. Sampling: The agent interacts with the environment (chemical space) for T timesteps, collecting trajectories (s_t, a_t, r_t, s_{t+1}).

  b. Advantage Estimation: Compute the Generalized Advantage Estimate (GAE) using rewards and V(s).

  c. Update: Maximize the PPO clipped objective function to update π. Minimize the mean-squared error between V(s) and actual returns to update V.

  d. Validation: Periodically sample molecules from the current policy and evaluate against held-out criteria.
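The advantage-estimation and update steps can be sketched without a deep learning framework. The function below computes GAE for a single trajectory, and `ppo_clip_term` evaluates the clipped surrogate for one sample; the default hyperparameters (γ = 0.99, λ = 0.95, ε = 0.2) are commonly used values assumed here, not values given in the source. A real implementation (for example in Stable-Baselines3) operates on batched tensors and handles episode boundaries.

```python
from typing import List, Sequence, Tuple


def gae(rewards: Sequence[float], values: Sequence[float],
        gamma: float = 0.99, lam: float = 0.95) -> Tuple[List[float], List[float]]:
    """Generalized Advantage Estimation for one trajectory.

    `values` must have len(rewards) + 1 entries; the last entry is the
    bootstrap value of the final state (0.0 if the episode terminated).
    Returns (advantages, value targets).
    """
    advantages = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        delta = rewards[t] + gamma * values[t + 1] - values[t]  # TD error
        running = delta + gamma * lam * running
        advantages[t] = running
    returns = [a + v for a, v in zip(advantages, values[:-1])]
    return advantages, returns


def ppo_clip_term(ratio: float, advantage: float, eps: float = 0.2) -> float:
    """Per-sample PPO objective: min(r * A, clip(r, 1-eps, 1+eps) * A)."""
    clipped = max(min(ratio, 1.0 + eps), 1.0 - eps)
    return min(ratio * advantage, clipped * advantage)


if __name__ == "__main__":
    adv, ret = gae([1.0, 1.0], [0.0, 0.0, 0.0], gamma=1.0, lam=1.0)
    print(adv, ret)
    print(ppo_clip_term(1.5, 1.0), ppo_clip_term(0.5, -1.0))
```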

Visualizing the MDP Framework and Workflow

Diagram 1: MDP Cycle for Molecular Design

Diagram 2: AI Agent Training & Deployment Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Libraries for MDP-Based Molecular Design

| Item | Function | Source / Package |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule manipulation, fingerprint generation, and descriptor calculation. | conda install -c conda-forge rdkit |

| PyTorch / TensorFlow | Deep learning frameworks for building and training policy and value networks. | pip install torch / pip install tensorflow |

| OpenAI Gym / ChemGym | Provides a standardized environment interface for implementing the MDP. Custom chemistry "environments" can be built. | pip install gym |

| Stable-Baselines3 | Reliable implementation of reinforcement learning algorithms (PPO, DQN, SAC) for training agents. | pip install stable-baselines3 |

| MOSES / GuacaMol | Benchmarking platforms providing standardized datasets, metrics, and baselines for generative molecular models. | GitHub repositories (molecularsets/moses, BenevolentAI/guacamol) |

| Reinvent Community | A mature, community-driven toolkit specifically for RL-based de novo molecular design. | GitHub repository (MolecularAI/ReinventCommunity) |

| BRICS | Algorithm for fragmenting molecules and defining chemically meaningful, reversible transformations (action space basis). | Implemented within RDKit. |

This whitepaper, framed within a broader thesis on the application of Markov Decision Processes (MDPs) to molecule modification research, provides a technical deconstruction of the five core MDP components. It details their instantiation within cheminformatics and drug discovery pipelines, supported by contemporary research data, experimental protocols, and actionable toolkits for researchers and drug development professionals.

In molecule modification research, the goal is to iteratively alter molecular structures to optimize a desired property (e.g., binding affinity, solubility, synthetic accessibility). An MDP provides a rigorous mathematical framework for this sequential decision-making process, modeling it as an agent interacting with a molecular environment.

Core Components: Technical Definitions & Molecular Context

State (s ∈ S)

Definition: A representation of the current situation. In MDPs, it must satisfy the Markov property: the future state depends only on the current state and action, not the history.

Molecular Context: The state is a computable representation of a molecule. This can be a SMILES string, a molecular graph, a fingerprint, or a latent space vector from a generative model.

Action (a ∈ A)

Definition: A choice made by the agent that causes a transition from the current state to a new state.

Molecular Context: A defined molecular transformation. The action space is constrained by chemistry. Common actions include:

- Atom/Bond Edits: Add/remove a bond, change atom type.

- Fragment Addition/Removal: Attach or detach a predefined molecular fragment.

- Scaffold Hopping: Replace a core substructure.

Reward (R(s, a, s'))

Definition: A scalar feedback signal received after taking action a in state s and transitioning to state s'. It defines the optimization objective.

Molecular Context: A composite function quantifying the desirability of the new molecule s'. Rewards are typically multi-objective.

Table 1: Typical Reward Components in Molecule Optimization

| Reward Component | Typical Metric(s) | Target Range | Weight in Composite Reward (Example) |

|---|---|---|---|

| Binding Affinity (pIC50, ΔG) | Docking Score, Predictive Model Output | Higher pIC50; more negative ΔG or docking score | 0.6 |

| Drug-Likeness | QED (Quantitative Estimate of Drug-likeness) | 0.7 - 1.0 | 0.15 |

| Synthetic Accessibility | SA Score (Synthetic Accessibility Score) | Lower is better (scale: 1 = easy, 10 = hard) | 0.15 |

| Novelty | Tanimoto Similarity to known actives | Avoid >0.8 similarity | 0.1 |

| Pharmacokinetics | Predicted LogP, TPSA | Rule-of-5 compliant | Included in QED |
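The novelty row of Table 1 can be illustrated with a small sketch. It assumes fingerprints are already available as Python sets of on-bit indices (in practice they would come from a cheminformatics toolkit); the function names, the toy fingerprints, and the hard cutoff at 0.8 are illustrative assumptions rather than a prescribed implementation.

```python
from __future__ import annotations


def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def novelty_score(candidate: set[int], known_actives: list[set[int]],
                  cutoff: float = 0.8) -> float:
    """Return 1.0 if the candidate stays at or below the cutoff for every known active, else 0.0."""
    max_sim = max((tanimoto(candidate, ref) for ref in known_actives), default=0.0)
    return 0.0 if max_sim > cutoff else 1.0


# Hypothetical toy fingerprints (not derived from real molecules)
known = [{1, 2, 3, 4, 5}, {10, 11, 12}]
near_copy = {1, 2, 3, 4, 5, 6}    # shares 5 of 6 distinct bits with the first active
distinct = {1, 2, 20, 21, 22}     # shares 2 of 8 distinct bits with the first active

print(novelty_score(near_copy, known), novelty_score(distinct, known))
```

A smooth penalty (for example, one that decays linearly above the cutoff) may give the agent a more informative gradient than this binary term.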

Policy (π(a|s))

Definition: The agent's strategy, mapping states to actions (deterministic) or a probability distribution over actions (stochastic). Molecular Context: A learned function (e.g., a neural network) that recommends the next chemical transformation given a molecule. The policy is the core "designer" that is optimized.

Value Function (Vπ(s) or Qπ(s, a))

Definition: Estimates the expected cumulative future reward from a state (Vπ) or from taking a specific action in a state (Qπ), following policy π. Molecular Context: Qπ(s, a) predicts the long-term quality of performing a specific molecular edit a on molecule s, guiding the policy towards sequences of edits that yield ultimately superior compounds.
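For reference, the standard textbook definitions of the two value functions can be written as follows. The discount factor \( \gamma \in [0, 1) \) and the per-step reward \( r_{t+1} \) are notational assumptions that are not introduced in the definition above.

```latex
\[
V^{\pi}(s) = \mathbb{E}_{\pi}\left[ \sum_{k=0}^{\infty} \gamma^{k} r_{t+k+1} \,\middle|\, s_t = s \right],
\qquad
Q^{\pi}(s, a) = \mathbb{E}_{\pi}\left[ \sum_{k=0}^{\infty} \gamma^{k} r_{t+k+1} \,\middle|\, s_t = s,\ a_t = a \right]
\]
\[
V^{\pi}(s) = \sum_{a \in A} \pi(a \mid s)\, Q^{\pi}(s, a)
\]
```

The second line states that the value of a molecule under policy π is the policy-weighted average over the values of the edits available from it.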

Experimental Protocol: Implementing an MDP for Lead Optimization

A standardized workflow for building an MDP-based molecular optimizer.

1. Problem Formulation & Environment Setup:

- Objective: Define the primary and secondary objectives (e.g., maximize pIC50 for target X, maintain QED > 0.6).

- State Representation: Choose a featurization method (e.g., ECFP6 fingerprints, Graph Neural Network embeddings).

- Action Space Definition: Curate a set of chemically plausible transformations, validated by a reaction library (e.g., RDKit reaction templates).

- Reward Function Engineering: Assemble a weighted sum of normalized property predictors (see Table 1).

2. Policy & Value Network Architecture:

- Implement an Actor-Critic framework.

- Actor (Policy Network π): Inputs state (molecular representation), outputs probability over possible actions (transformations).

- Critic (Value Network Q): Inputs state and action, outputs a scalar Q-value.

3. Training Loop (Reinforcement Learning):

- Step 1 (Rollout): Initialize with a starting molecule (state s0). The agent (policy π) selects edits (actions) sequentially for T steps, generating a trajectory of (state, action, reward, next_state) tuples.

- Step 2 (Evaluation): The final molecule in the trajectory is evaluated via the reward function (using predictive models or physics-based simulations).

- Step 3 (Learning): The reward signal is propagated back through the trajectory. The policy and value networks are updated via gradient ascent/descent on a loss function (e.g., Proximal Policy Optimization loss) to maximize cumulative reward.

- Step 4 (Iteration): Repeat Steps 1-3 for many episodes until policy performance converges.

4. Validation & Deployment:

- Generate a set of candidate molecules from the optimized policy.

- Validate top candidates using more rigorous computational methods (e.g., molecular dynamics simulations) before proceeding to in vitro synthesis and testing.
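Steps 1 and 2 of the training loop (rollout and terminal evaluation) can be sketched in plain Python. Everything here is a toy stand-in: the state is a string of atom symbols, an action appends one symbol, and `toy_reward` is a hypothetical placeholder for a real property predictor. The sketch only shows how (state, action, reward, next_state) tuples are collected.

```python
import random
from typing import Callable

Transition = tuple[str, str, float, str]   # (state, action, reward, next_state)

ACTIONS = ["C", "N", "O"]   # hypothetical edit vocabulary: append one heavy atom


def toy_reward(state: str) -> float:
    """Stand-in for a property predictor: fraction of heteroatoms (illustrative only)."""
    return sum(ch in "NO" for ch in state) / len(state)


def rollout(start: str, policy: Callable[[str], str], horizon: int) -> list[Transition]:
    """Run one episode of `horizon` edits; only the final molecule receives a reward."""
    trajectory = []
    state = start
    for t in range(horizon):
        action = policy(state)
        next_state = state + action                     # deterministic transition
        is_last = t == horizon - 1
        reward = toy_reward(next_state) if is_last else 0.0
        trajectory.append((state, action, reward, next_state))
        state = next_state
    return trajectory


def random_policy(state: str) -> str:
    """Untrained policy: picks an edit uniformly at random."""
    return random.choice(ACTIONS)


random.seed(0)
episode = rollout("C", random_policy, horizon=4)
for transition in episode:
    print(transition)
```

In a real pipeline the random policy would be replaced by the actor network, and the trajectory would be passed to the learning step.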

Visualization of the Molecular MDP Framework

[Figure: MDP Cycle for Molecular Optimization]

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for MDP-Based Molecule Research

| Tool / Reagent | Function in MDP Pipeline | Example / Provider |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for state representation (SMILES, fingerprints), action execution (molecular edits), and property calculation (QED, SA). | rdkit.org |

| DeepChem | Library providing graph featurizers for states, molecular property prediction models for reward calculation, and RL environment wrappers. | deepchem.io |

| PyTorch / TensorFlow | Deep learning frameworks for constructing and training policy (π) and value (Q) networks. | PyTorch, TensorFlow |

| OpenAI Gym / Gymnasium | API for defining custom RL environments; used to structure the molecule modification MDP. | gymnasium.farama.org |

| Stable-Baselines3 | Library of reliable RL algorithm implementations (e.g., PPO) for training the policy. | github.com/DLR-RM/stable-baselines3 |

| Molecular Docking Software (AutoDock Vina, Glide) | Provides a physics-based reward component (binding score) for target-specific optimization. | Scripps Research, Schrödinger |

| High-Throughput Virtual Screening (HTVS) Libraries (ZINC, Enamine REAL) | Source of diverse starting molecules (initial states s0) for the MDP agent. | zinc.docking.org, enamine.net |

| Reaction Template Libraries (e.g., AiZynthFinder templates, USPTO-derived templates) | Provides chemically validated rules to define the action space (A) for the MDP. | github.com/MolecularAI/aizynthfinder |

Why MDPs? Advantages Over Traditional Virtual Screening and Generative Models.

Optimizing molecular structures towards desired properties remains a central challenge in computational drug discovery. This whitepaper, part of a broader guide to Markov Decision Processes (MDPs) for molecule modification, argues that the MDP framework offers a principled, sequential decision-making paradigm that addresses fundamental limitations of both traditional Virtual Screening (VS) and contemporary generative models.

Core Limitations of Established Approaches

Traditional Virtual Screening

Virtual Screening involves computationally filtering large libraries of static molecules against a target. Its primary limitations are:

- Exploration Constraint: Limited to the chemical space defined by the pre-enumerated library. Novel, scaffold-hopping leads are missed.

- Lack of Iterativity: It is a one-shot process without a built-in mechanism for iterative optimization based on feedback.

- Property Trade-off Neglect: Typically optimizes for a single property (e.g., binding affinity) without dynamically balancing multiple, often competing, objectives (e.g., potency vs. solubility).

Generative Models (e.g., VAEs, GANs, Language Models)

Deep generative models create novel molecular structures de novo.

- Uncontrolled Generation: While proficient at creating valid structures, precise steering towards multi-property optima is challenging.

- Post-hoc Correction: Generated molecules often require additional "reward-based" fine-tuning or filtering, decoupling generation from optimization.

- Sequential Logic Gap: They lack an explicit model of the stepwise, actionable process of chemical modification, making the path to an optimal molecule opaque.

The MDP Framework for Molecular Optimization

An MDP formalizes molecule modification as a sequence of atomic actions within a chemical space. It is defined by the tuple (S, A, P, R, γ):

- S: State space (the current molecule representation).

- A: Action space (defined chemical modifications: add/remove/alter a functional group, link fragments).

- P: Transition dynamics (the deterministic or probabilistic result of an action).

- R: Reward function (a quantitative score combining all desired properties: binding energy, QED, SA, etc.).

- γ: Discount factor (weights importance of immediate vs. long-term rewards).

Reinforcement Learning (RL) algorithms (e.g., PPO, DQN) are then used to learn a policy (π) that maps states to actions to maximize cumulative reward.
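To make the tuple (S, A, P, R, γ) concrete, the sketch below runs tabular Q-learning (the table-based precursor of DQN, not DQN itself) on a deliberately tiny, hypothetical MDP: the state is the number of polar groups on a molecule (0 to 4), the actions add or remove one group, and the reward is 1 whenever the next state hits an assumed target count of 3. None of these choices come from the text; they only illustrate how a policy emerges from the five components.

```python
import random

STATES = range(5)                # S: hypothetical count of polar groups (0..4)
ACTIONS = ("add", "remove")      # A: two toy edits
TARGET = 3                       # assumed optimum used by the reward
GAMMA = 0.9                      # discount factor


def step(state: int, action: str) -> tuple[int, float]:
    """Deterministic transition P and reward R of the toy MDP."""
    next_state = min(state + 1, 4) if action == "add" else max(state - 1, 0)
    reward = 1.0 if next_state == TARGET else 0.0
    return next_state, reward


def q_learning(n_steps: int = 5000, alpha: float = 0.5, seed: int = 0) -> dict:
    """Off-policy Q-learning with a uniformly random behaviour policy."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in STATES for a in ACTIONS}
    state = 0
    for _ in range(n_steps):
        action = rng.choice(ACTIONS)
        next_state, reward = step(state, action)
        best_next = max(q[(next_state, a)] for a in ACTIONS)
        q[(state, action)] += alpha * (reward + GAMMA * best_next - q[(state, action)])
        state = next_state
    return q


def greedy_action(q: dict, state: int) -> str:
    """Policy extracted from the learned table: pick the highest-valued action."""
    return max(ACTIONS, key=lambda a: q[(state, a)])


q_table = q_learning()
print({s: greedy_action(q_table, s) for s in STATES})
```

Real molecular state spaces are far too large for a table, which is why the algorithms named above replace it with neural function approximators.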

Comparative Advantages of the MDP Paradigm

The table below summarizes the quantitative and qualitative advantages of MDPs over traditional methods, based on recent benchmark studies.

Table 1: Comparative Analysis of Molecular Optimization Paradigms

| Feature | Traditional Virtual Screening | Generative Models (e.g., VAEs) | MDP/RL-Based Optimization |

|---|---|---|---|

| Chemical Space | Pre-defined, limited library | Broad, de novo generation | Extensible, path-defined exploration |

| Optimization Nature | Single-step ranking | Single-step generation with possible fine-tuning | Multi-step, sequential decision-making |

| Multi-Objective Handling | Requires weighted sum or sequential filters | Challenging; often embedded in latent space | Explicitly encoded in the reward function |

| Interpretability | Low (input-output only) | Low (black-box generation) | High (actionable trajectory provided) |

| Sample Efficiency | High for library coverage | Moderate to Low | Variable; can be high with good simulation |

| Novelty (Scaffold Hopping) | Low | High | High |

| Key Metric (Benchmark: DRD2) | ~5% success rate* | ~60-80% success rate* | >95% success rate* |

| Typical Output | A list of static hits | A set of generated molecules | A series of molecules tracing an optimization path |

*Success rate defined as the percentage of optimized molecules achieving a DRD2 pIC50 > 7.5 (active) while maintaining synthetic accessibility. Representative values from literature (Zhou et al., 2019; Gottipati et al., 2020).

Detailed Experimental Protocol: A Standard MDP-RL Workflow

The following protocol outlines a standard methodology for implementing an MDP for molecular optimization, as cited in key literature.

Objective: Optimize a starting molecule for high predicted activity against a target (e.g., DRD2) and favorable drug-likeness (QED).

1. State Representation:

- Method: Encode the molecule as a Morgan fingerprint (radius 3, 2048 bits) or a graph representation using a Graph Neural Network (GNN).

2. Action Space Definition:

- Method: Use a validated chemical reaction library (e.g., from RDKit). Define actions as applying a reaction SMARTS pattern to available atom sites in the current molecule. Typical sets include 10-50 reactions like amide coupling, Suzuki coupling, alkylation, redox.

3. Reward Function Design:

- Method: Implement a composite reward R(s) = w₁ * Activity(s) + w₂ * QED(s) + w₃ * SA(s). Where:

- Activity(s) is the output of a pre-trained predictor (e.g., a Random Forest or NN model on binding data).

- QED(s) is the Quantitative Estimate of Drug-likeness.

- SA(s) is the Synthetic Accessibility score (inverted so higher is better).

- Weights (w₁, w₂, w₃) are tuned for desired balance.

4. Training the Agent:

- Method: Employ a policy gradient method (e.g., Proximal Policy Optimization - PPO).

- Initialize policy network (π) and value network (V).

- For N epochs:

- Generate trajectories by having π act on molecules in a batch, applying actions sampled from its probability distribution.

- Compute discounted cumulative rewards for each step in each trajectory.

- Update π to increase the probability of actions leading to higher rewards (using gradient ascent on the PPO loss).

- Update V to better estimate the state value (using mean-squared error loss).

5. Evaluation:

- Method: Run the trained, deterministic policy on a set of test starting molecules. Track the property improvement across steps and the final success rate against the defined objective thresholds.
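Steps 3 and 4 of the protocol above can be sketched numerically without a deep learning framework. The weights, the linear SA inversion, and the critic values below are illustrative assumptions; the clipped surrogate follows the standard PPO form min(r·A, clip(r, 1−ε, 1+ε)·A).

```python
from __future__ import annotations


def normalize_sa(sa_score: float) -> float:
    """Map an SA score (1 = easy, 10 = hard) to [0, 1], with higher meaning easier to make."""
    clipped = max(1.0, min(sa_score, 10.0))
    return (10.0 - clipped) / 9.0


def composite_reward(activity: float, qed: float, sa_score: float,
                     weights: tuple[float, float, float] = (0.6, 0.2, 0.2)) -> float:
    """R(s) = w1 * Activity(s) + w2 * QED(s) + w3 * SA(s); activity and QED assumed in [0, 1]."""
    w1, w2, w3 = weights
    return w1 * activity + w2 * qed + w3 * normalize_sa(sa_score)


def discounted_returns(rewards: list[float], gamma: float) -> list[float]:
    """G_t = r_t + gamma * G_{t+1}, computed backwards over one trajectory."""
    returns = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        returns[t] = running
    return returns


def ppo_clipped_objective(ratio: float, advantage: float, epsilon: float = 0.2) -> float:
    """Per-sample PPO surrogate, where ratio = pi_new(a|s) / pi_old(a|s)."""
    clipped_ratio = max(1.0 - epsilon, min(ratio, 1.0 + epsilon))
    return min(ratio * advantage, clipped_ratio * advantage)


final_reward = composite_reward(activity=0.8, qed=0.7, sa_score=2.8)
returns = discounted_returns([0.0, 0.0, 1.0], gamma=0.9)
values = [0.5, 0.6, 0.7]                            # hypothetical critic outputs V(s_t)
advantages = [g - v for g, v in zip(returns, values)]
print(final_reward, returns, advantages)
```

The clipping removes the incentive to move the probability ratio outside [1−ε, 1+ε] in a single update, which is the property that makes PPO comparatively stable.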

Visualizing the MDP Workflow and Policy

[Figure: Molecule Optimization MDP Cycle]

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Research Reagent Solutions for MDP-Based Molecule Optimization

| Item / Software | Function in MDP Research | Example/Provider |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule manipulation, fingerprint generation, and reaction handling. Defines the core action space. | www.rdkit.org |

| OpenAI Gym / ChemGym | Provides a standardized RL environment interface. Custom chemistry "gyms" simulate the state transition (P) upon taking an action. | OpenAI Gym |

| PyTorch / TensorFlow | Deep learning frameworks for building and training the policy (π) and value (V) networks. | Meta, Google |

| PPO Implementation | A stable, policy-gradient RL algorithm. The workhorse for learning the optimization policy. | Stable-Baselines3, OpenAI Spinning Up |

| Property Prediction Models | Pre-trained or bespoke models (e.g., Random Forest, GNN) that provide fast, approximate rewards (e.g., pIC50, solubility). | ChEMBL-based models, proprietary data |

| Chemical Reaction Library | A curated set of SMARTS patterns representing feasible, synthesizable transformations. Forms the foundational action set. | E.g., Pistachio, RHODES databases |

| Molecular Dynamics (MD) Suite | For high-fidelity post-hoc validation of top-ranked molecules from the MDP trajectory (computes explicit binding free energy). | GROMACS, AMBER, Desmond |

Building Your Molecular MDP: A Step-by-Step Implementation Guide

Within the framework of a Markov Decision Process (MDP) for molecule modification research, the initial and most critical step is the choice of molecular representation. This decision defines the state space (S) of the MDP, directly impacting the model's ability to learn optimal policies for generating molecules with desired properties. This guide provides an in-depth technical comparison of the three dominant representations: SMILES strings, molecular graphs, and 3D conformers.

Core Molecular Representations for MDP-Based Design

SMILES (Simplified Molecular-Input Line-Entry System)

A line notation encoding molecular structure as an ASCII string. In an MDP, each action can correspond to appending a valid character to a growing SMILES string.
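A minimal sketch of the string-building view follows: the transition simply appends a token, and a cheap syntactic test decides whether the string could be a finished SMILES. The check is an assumption-laden simplification (it only balances parentheses and single-digit ring closures, and ignores bracket atoms, `%nn` ring labels, valence, and aromaticity), so a real environment would defer to a toolkit parser.

```python
def is_complete_smiles_syntax(smiles: str) -> bool:
    """Cheap syntactic check only: parentheses balance and ring-closure digits pair up."""
    depth = 0
    open_rings: set[str] = set()
    for ch in smiles:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
        elif ch.isdigit():
            # A digit opens a ring bond the first time it appears and closes it the second time
            open_rings.symmetric_difference_update({ch})
    return len(smiles) > 0 and depth == 0 and not open_rings


def append_token(state: str, token: str) -> str:
    """MDP transition for a string-building agent: the action is the appended token."""
    return state + token


state = ""
history = []
for token in ["C", "C", "(", "=", "O", ")", "O"]:    # builds acetic acid, CC(=O)O
    state = append_token(state, token)
    history.append((state, is_complete_smiles_syntax(state)))

for partial, complete in history:
    print(partial, complete)
```

Intermediate strings such as `CC(=O` are not complete SMILES, which is one reason string-based agents typically receive their reward only at the end of an episode.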

Molecular Graph

Represents atoms as nodes and bonds as edges. The MDP state is the current graph, and actions are graph modifications (e.g., adding/removing nodes/edges, modifying node attributes).
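The graph view can be sketched with a small data structure whose edit actions refuse to violate valence. The `MolGraph` class and the simplified valence table (neutral C, N, O only, no charges or aromaticity) are hypothetical and serve only to show how chemical constraints shape the action space.

```python
from dataclasses import dataclass, field

MAX_VALENCE = {"C": 4, "N": 3, "O": 2}   # simplified neutral-atom valences (assumption)


@dataclass
class MolGraph:
    atoms: list[str] = field(default_factory=list)                    # node labels
    bonds: dict[tuple[int, int], int] = field(default_factory=dict)   # (i, j) -> bond order

    def used_valence(self, idx: int) -> int:
        """Sum of bond orders on atom `idx`."""
        return sum(order for (i, j), order in self.bonds.items() if idx in (i, j))

    def can_add_bond(self, i: int, j: int, order: int = 1) -> bool:
        """True if a new bond between existing atoms i and j respects both valences."""
        if i == j or (min(i, j), max(i, j)) in self.bonds:
            return False
        return all(self.used_valence(k) + order <= MAX_VALENCE[self.atoms[k]] for k in (i, j))

    def add_atom(self, symbol: str, attach_to: int, order: int = 1) -> bool:
        """Action: add an atom bonded to `attach_to`; returns False if valence forbids it."""
        parent_limit = MAX_VALENCE[self.atoms[attach_to]]
        if order > MAX_VALENCE[symbol] or self.used_valence(attach_to) + order > parent_limit:
            return False
        self.atoms.append(symbol)
        self.bonds[(attach_to, len(self.atoms) - 1)] = order
        return True


g = MolGraph(atoms=["C"])
results = [
    g.add_atom("O", 0, order=2),   # C=O
    g.add_atom("O", 0),            # adds a hydroxyl oxygen
    g.add_atom("C", 0),            # adds a methyl carbon; central carbon is now saturated
    g.add_atom("N", 0),            # rejected: would exceed carbon's valence of 4
]
print(results, g.atoms)
```

Rejecting the edit up front is equivalent to masking it out of the action set for that state.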

3D Molecular Structure

Encodes the spatial coordinates of atoms, capturing conformational and stereochemical information. The state is a point cloud or voxel grid, and actions can involve spatial manipulations.

Quantitative Comparison of Representations

Table 1: Representation Characteristics for MDP State Space

| Feature | SMILES | Molecular Graph | 3D Structure |

|---|---|---|---|

| State Dimensionality | 1D (Sequence) | 2D (Topology) | 3D (Spatial) |

| Typical State Space Size | Very Large (V^L) | Large | Extremely Large (Conformers) |

| Explicit Spatial Info | No | No | Yes |

| Handles Stereochemistry | Via chirality tokens (@, @@, /, \) | Via node/edge labels | Explicitly |

| Informativeness | Low | High | Highest |

| Action Space Complexity | Low (Character edit) | Medium (Graph edit) | High (Spatial edit) |

| Computational Cost | Low | Medium | High |

| Common MDP Algorithms | RNN/Transformer Policy | GNN Policy | 3D-CNN/PointNet Policy |

| Validity Guarantee Challenge | High (Syntax) | Medium (Valency) | Low (Steric clash) |

Table 2: Performance Metrics in Recent MDP Benchmarks (GuacaMol, ZINC)

| Representation | Valid Molecule % | Novelty | Diversity | Runtime per 1000 steps (s) |

|---|---|---|---|---|

| SMILES-based | 85.2% - 99.8% | 0.91 - 0.98 | 0.86 - 0.92 | 12.5 |

| Graph-based | 98.5% - 100% | 0.89 - 0.95 | 0.88 - 0.95 | 45.3 |

| 3D-based | 99.9% - 100% | 0.75 - 0.88 | 0.82 - 0.90 | 210.7 |

Experimental Protocols for Representation Evaluation

Protocol 1: Benchmarking Representation in an MDP Loop

- Environment Setup: Implement an MDP where the state (S_t) is the current molecular representation.

- Action Definition: Define action space (A) specific to representation (e.g., token addition for SMILES, bond addition for graphs, coordinate adjustment for 3D).

- Reward Shaping: Design reward function (R) based on target property (e.g., QED, SA, binding affinity proxy).

- Agent Training: Train a policy network (π) (e.g., Transformer, GNN, SE(3)-Equivariant Net) using Proximal Policy Optimization (PPO) or REINFORCE.

- Evaluation: Generate molecules, calculate metrics in Table 2, and assess sample efficiency (steps to reach reward threshold).

Protocol 2: Property Prediction Fidelity

- Dataset: Use curated datasets (e.g., QM9, PDBbind) with associated properties.

- Model Training: Train separate property predictors (e.g., MLP, GNN, SchNet) on embeddings from each representation.

- Analysis: Compare Mean Absolute Error (MAE) of predictions to establish representation's inherent informativeness for downstream reward calculation.

Protocol 3: Conformational Robustness (for 3D Representations)

- Sampling: Generate multiple conformers for each molecule using RDKit ETKDG or OMEGA.

- Embedding: Encode each conformer into a latent vector using the 3D encoder.

- Clustering: Perform clustering (e.g., DBSCAN) on latent vectors.

- Metric: Calculate the average intra-cluster distance relative to inter-cluster distance. Lower scores indicate the representation is robust to conformational noise, a desirable trait for MDP state stability.
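The metric in the last step can be computed directly from latent vectors and cluster labels. The sketch below assumes Euclidean distance and averages over all pairs; the function name and the toy vectors are illustrative, and the cluster labels would normally come from the clustering step rather than being typed in by hand.

```python
import math
from itertools import combinations


def intra_inter_ratio(vectors: list[list[float]], labels: list[int]) -> float:
    """Mean pairwise distance within clusters divided by mean pairwise distance across clusters."""
    intra, inter = [], []
    for (vec_a, lab_a), (vec_b, lab_b) in combinations(zip(vectors, labels), 2):
        (intra if lab_a == lab_b else inter).append(math.dist(vec_a, vec_b))
    if not intra or not inter:
        raise ValueError("need at least one same-cluster pair and one cross-cluster pair")
    return (sum(intra) / len(intra)) / (sum(inter) / len(inter))


# Hypothetical 2-D latent vectors: two conformers each for two molecules
latents = [[0.0, 0.0], [0.0, 1.0], [10.0, 0.0], [10.0, 1.0]]
cluster_labels = [0, 0, 1, 1]
ratio = intra_inter_ratio(latents, cluster_labels)
print(round(ratio, 3))
```

A ratio well below 1 means conformers of the same molecule sit much closer together than different molecules do.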

MDP Workflow with Representation Choice

[Figure: MDP-Based Molecule Design Workflow]

[Figure: MDP Step with Molecular Representation as State]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Libraries for Implementation

| Item | Function in MDP Setup | Example/Provider |

|---|---|---|

| RDKit | Core cheminformatics: SMILES I/O, graph generation, 2D/3D operations, basic property calculation for reward. | Open-Source (rdkit.org) |

| OpenEye Toolkit | High-performance, commercial-grade molecular representation and conformer generation for 3D states. | OpenEye Scientific |

| PyTorch / TensorFlow | Deep learning frameworks for constructing policy and value networks. | Meta / Google |

| PyTorch Geometric (PyG) / DGL | Specialized libraries for building Graph Neural Network (GNN) policy agents. | PyG Team / Amazon |

| Equivariant NN Libs | For 3D representations: SE(3)-equivariant networks (e.g., e3nn, SE3-Transformer) to respect physical symmetries. | Open-Source |

| OpenMM / Schrödinger | High-fidelity molecular simulation for accurate reward calculation (e.g., binding energy). | Stanford / Schrödinger |

| RL Frameworks | Implementing the MDP loop (e.g., OpenAI Gym interface, RLlib, Stable-Baselines3). | Various |

| GuacaMol / MOSES | Benchmarking suites to evaluate the performance of the generative MDP pipeline. | BenevolentAI / Insilico Medicine |

Within the framework of a Markov Decision Process (MDP) for molecule modification, the action set represents the core operator space through which an agent navigates chemical space. Defining a chemically plausible and efficient set of actions is a critical bottleneck that determines the feasibility, realism, and ultimate success of generative molecular design. An ill-defined action space leads to the generation of invalid, unstable, or synthetically inaccessible structures, rendering the MDP model a theoretical exercise rather than a practical discovery tool. This guide details the methodologies and considerations for constructing robust action sets for molecular MDPs, grounded in current chemical and computational practice.

Foundational Principles for Action Design

An optimal action set must balance three competing demands:

- Chemical Plausibility: Every action must correspond to a real, achievable chemical transformation or edit, respecting valency, stereochemistry, and stability.

- Computational Efficiency: The action space must be of manageable size to enable efficient policy learning and sampling.

- Exploratory Power: The set must be sufficiently expressive to traverse a wide and relevant region of chemical space, enabling the discovery of novel scaffolds.

Taxonomy of Molecular Actions

Based on current literature, molecular modification actions can be categorized as follows. The choice of granularity is a primary strategic decision.

Table 1: Taxonomy of Action Granularity in Molecular MDPs

| Granularity Level | Description | Example Actions | Advantages | Disadvantages |

|---|---|---|---|---|

| Atomic / Bond-Level | Direct manipulation of atoms and bonds in a molecular graph. | Add/remove atom (C, N, O, etc.), form/break bond (single, double, triple), change atom type. | Maximum flexibility; can generate entirely novel scaffolds. | Large action space; high risk of generating invalid or unstable intermediates. |

| Functional Group-Level | Attachment, removal, or modification of predefined chemical moieties. | Add methyl (-CH3), carboxyl (-COOH), or amine (-NH2) group; cyclize; halogenate. | More chemically intuitive; smaller action space; improved synthetic accessibility. | Limited to known functional groups; may miss novel bioisosteres. |

| Reaction-Based | Application of validated chemical reaction rules (e.g., from named reactions). | Perform Suzuki coupling, amide bond formation, reductive amination. | High synthetic accessibility; leverages known, high-yield chemistry. | Requires large, curated reaction database; potentially restrictive exploration. |

| Fragment-Based | Linking, growing, or merging larger molecular fragments or scaffolds. | Attach fragment from library, merge two fragments, replace core scaffold. | Exploits known pharmacophores; efficient exploration of "drug-like" space. | Dependent on quality and diversity of the fragment library. |

| Property-Optimization | Direct optimization of a calculated molecular property (e.g., logP, QED). | Adjust logP by ±0.5, increase polar surface area. | Directly targets objective; very small action space. | Chemically ambiguous; requires a separate "inverse" model to decode into structures. |

Experimental Protocol for Validating Action Sets

A proposed action set must be rigorously validated before deployment in a production MDP pipeline.

Protocol 4.1: Chemical Validity and Sanity Check

Objective: To ensure >99.9% of actions produce chemically valid, sanitizable molecules. Methodology:

- Sample 10,000 valid starting molecules from a diverse set (e.g., ZINC, ChEMBL).

- For each molecule, apply every action in the proposed set that is technically applicable (e.g., you cannot brominate a molecule with no available attachment points).

- Process the resulting molecule with a standard chemical toolkit (e.g., RDKit) using strict sanitization rules (check valency, aromaticity, kekulization).

- Record the percentage of actions that fail sanitization.

Success Criterion: < 0.1% failure rate. Actions causing repeated failures must be revised or removed.
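The bookkeeping for this protocol can be sketched as a small harness. It is toolkit-agnostic by design: `actions` maps a name to a callable that returns the product (or `None` when the action is not applicable), and `is_valid` stands in for toolkit sanitization. The toy string actions and the parenthesis-balancing validator are purely hypothetical placeholders.

```python
from collections import Counter
from typing import Callable, Iterable, Optional


def action_failure_rates(
    molecules: Iterable[str],
    actions: dict[str, Callable[[str], Optional[str]]],
    is_valid: Callable[[str], bool],
) -> dict[str, float]:
    """Per action: fraction of applicable applications whose product fails validation."""
    attempts, failures = Counter(), Counter()
    for mol in molecules:
        for name, apply in actions.items():
            product = apply(mol)
            if product is None:          # not applicable: excluded from the denominator
                continue
            attempts[name] += 1
            if not is_valid(product):
                failures[name] += 1
    return {name: failures[name] / attempts[name] for name in attempts}


# Toy stand-ins (assumptions): strings as molecules, balanced parentheses as "sanitization"
toy_actions = {
    "add_methyl": lambda s: s + "C",
    "open_branch": lambda s: s + "(",                       # always leaves a dangling branch
    "close_ring": lambda s: s + "1" if "1" in s else None,  # only applicable to ring-opened strings
}
toy_validator = lambda s: s.count("(") == s.count(")")

rates = action_failure_rates(["CCO", "CC(C)N", "C1CCC"], toy_actions, toy_validator)
flagged = [name for name, rate in rates.items() if rate >= 0.001]
print(rates, flagged)
```

Actions that end up in `flagged` are the ones the protocol says to revise or remove.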

Protocol 4.2: Synthetic Accessibility (SA) Assessment

Objective: To quantify the synthetic feasibility of molecules generated via the action set. Methodology:

- Use the MDP policy (or a random policy) to generate 1,000 novel molecules from a set of starting points.

- Calculate a synthetic accessibility score for each generated molecule using a validated metric (e.g., SAscore [1], a learned model from retrosynthetic analysis, or RAscore [2]).

- Compare the distribution of scores to a reference set of known, synthesized drugs (e.g., from ChEMBL).

Success Criterion: The SAscores of generated molecules should not be significantly higher (worse) than those of the reference drug set, i.e., a one-sided Mann-Whitney U test should fail to reject equality at α = 0.01.
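The statistical comparison can be sketched with a hand-written one-sided Mann-Whitney U test. This version uses the normal approximation with a continuity correction and no tie correction, so it is only a rough guide for small or heavily tied samples; a statistics library would normally be preferred. The SAscore values are made up for illustration.

```python
import math


def mann_whitney_greater(sample_x: list[float], sample_y: list[float]) -> tuple[float, float]:
    """One-sided Mann-Whitney U test (H1: values in x tend to be larger than values in y).

    Returns (U statistic for x, approximate p-value)."""
    pooled = sorted((v, src) for src, sample in enumerate((sample_x, sample_y)) for v in sample)
    rank_sum_x = 0.0
    i = 0
    while i < len(pooled):
        j = i
        while j < len(pooled) and pooled[j][0] == pooled[i][0]:
            j += 1
        avg_rank = (i + 1 + j) / 2.0     # mean of the 1-based ranks i+1 .. j shared by a tie group
        rank_sum_x += avg_rank * sum(1 for k in range(i, j) if pooled[k][1] == 0)
        i = j
    n1, n2 = len(sample_x), len(sample_y)
    u_x = rank_sum_x - n1 * (n1 + 1) / 2.0
    mean_u = n1 * n2 / 2.0
    sd_u = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12.0)
    z = (u_x - mean_u - 0.5) / sd_u
    p_value = 0.5 * math.erfc(z / math.sqrt(2.0))
    return u_x, p_value


# Hypothetical SAscores (lower = easier to synthesize)
generated = [3.1, 2.8, 3.5, 4.0, 2.9]
reference = [2.7, 3.0, 3.2, 2.5, 3.3]
u_stat, p = mann_whitney_greater(generated, reference)
meets_criterion = p >= 0.01     # generated set is not significantly worse than the reference
print(u_stat, round(p, 3), meets_criterion)
```

With realistic sample sizes (1,000 generated molecules), even small median shifts can become significant, so the effect size is worth reporting alongside the p-value.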

Protocol 4.3: Exploratory Coverage Metric

Objective: To measure the diversity of chemical space reachable from a starting set using the action set. Methodology:

- Select 100 seed molecules.

- Perform a breadth-first search (BFS) or random walks of length k (e.g., k=5 steps) using the action set to generate a population of molecules.

- Encode all molecules (seeds + generated) using a robust fingerprint (ECFP4).

- Perform Principal Component Analysis (PCA) on the fingerprint matrix and visualize the coverage.

- Calculate the radius of coverage (ROC) as the radius of the smallest circle in PCA space encompassing 95% of generated molecules, normalized by the radius for the seeds alone.

Success Criterion: A higher ROC indicates greater exploratory power. The target is application-dependent.
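The final step can be approximated as follows, assuming the 2-D PCA coordinates have already been computed elsewhere. Note the simplification: instead of the true smallest enclosing circle, this sketch uses a circle centred on the centroid, which is easier to compute but can overestimate the radius for skewed point clouds.

```python
import math


def coverage_radius(points: list[tuple[float, float]], quantile: float = 0.95) -> float:
    """Radius around the centroid that contains `quantile` of the points."""
    cx = sum(x for x, _ in points) / len(points)
    cy = sum(y for _, y in points) / len(points)
    distances = sorted(math.hypot(x - cx, y - cy) for x, y in points)
    index = max(0, math.ceil(quantile * len(distances)) - 1)
    return distances[index]


def normalized_roc(generated: list[tuple[float, float]],
                   seeds: list[tuple[float, float]],
                   quantile: float = 0.95) -> float:
    """Coverage radius of the generated set divided by that of the seed set."""
    return coverage_radius(generated, quantile) / coverage_radius(seeds, quantile)


# Hypothetical PCA coordinates: generated molecules spread three times wider than the seeds
seed_points = [(1.0, 0.0), (-1.0, 0.0), (0.0, 1.0), (0.0, -1.0)]
generated_points = [(3.0 * x, 3.0 * y) for x, y in seed_points]
roc = normalized_roc(generated_points, seed_points)
print(roc)
```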

Table 2: Representative Quantitative Benchmarks from Current Literature (2023-2024)

| Study Reference | Action Type | Action Set Size | Validity Rate (%) | Median SAscore (Generated) | Key Finding |

|---|---|---|---|---|---|

| Gottipati et al. (2023) | Bond & Atom | ~40 (per state) | 99.7 | 3.8 | Dynamic action masking is critical for achieving high validity. |

| Zhou et al. (2024) | Reaction-Based (USPTO) | 64 (most frequent) | 99.9 | 2.9 | Reaction-based actions dramatically improve SA vs. atom-level. |

| Meta (2023) - Galactica | SMILES/String Edit | Char-level (<<100) | 95.1* | N/A | High novelty but lower validity; requires post-hoc filtering. |

| Benchmark Average (Drug-like Focus) | Varies | 10 - 100 | >99.5 | <4.0 | Hybrid approaches (e.g., fragment + reaction) are gaining traction. |

*Note: SMILES-based validity is often lower due to syntactic as well as chemical constraints.

Implementation Diagram: MDP with a Validated Action Set

[Figure: MDP Cycle with a Chemically-Plausible Action Set]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Building and Testing Molecular MDP Action Sets

| Tool / Reagent | Category | Function in Action Formulation |

|---|---|---|

| RDKit | Cheminformatics Library | The cornerstone for molecule representation (graph, SMILES), manipulation (apply action as substructure edit), and validation (sanitization, stereochemistry). |

| SMARTS Patterns | Chemical Query Language | Defines reaction rules or functional group patterns for action application (e.g., [C:1][OH]>>[C:1][O][S](=O)(=O)C for mesylation). |

| USPTO Reaction Dataset | Reaction Database | A gold-standard source (~2M reactions) for extracting frequent, reliable reaction templates to define reaction-based actions. |

| ChEMBL / ZINC | Molecule Databases | Source of diverse, drug-like starting molecules for validation protocols (Protocol 4.1, 4.3). |

| SAscore Algorithm | Predictive Model | Quantifies synthetic accessibility (1-easy, 10-hard) to benchmark the output of the action set (Protocol 4.2). |

| Retrosynthesis Platform (e.g., ASKCOS, AiZynthFinder) | Validation Tool | Provides a stringent, route-based assessment of synthetic feasibility for key generated molecules, beyond simple SAscore. |

| Reaction Enumeration Library (e.g., rxn-chemutils) | Software | Efficiently applies a large set of reaction templates to a molecule, crucial for implementing reaction-based action spaces. |

| Custom Action Masking Logic | Algorithm | Dynamically prunes the action space in state s_t to only chemically applicable actions, essential for maintaining >99% validity. |
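The action masking listed in the last row of Table 3 is commonly implemented as a masked softmax over the policy logits. The sketch below is framework-free and assumes the applicability mask has already been computed for the current state; the logits and mask values are arbitrary examples.

```python
import math
import random


def masked_softmax(logits: list[float], mask: list[bool]) -> list[float]:
    """Softmax over allowed actions only; masked actions get probability exactly 0."""
    if not any(mask):
        raise ValueError("no applicable action in this state")
    peak = max(logit for logit, ok in zip(logits, mask) if ok)   # for numerical stability
    weights = [math.exp(logit - peak) if ok else 0.0 for logit, ok in zip(logits, mask)]
    total = sum(weights)
    return [w / total for w in weights]


def sample_action(logits: list[float], mask: list[bool], rng: random.Random) -> int:
    """Draw an action index from the masked distribution."""
    probs = masked_softmax(logits, mask)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


policy_logits = [2.0, 1.0, 5.0]
applicable = [True, True, False]      # third action is chemically inapplicable here
probs = masked_softmax(policy_logits, applicable)
rng = random.Random(0)
draws = [sample_action(policy_logits, applicable, rng) for _ in range(200)]
print(probs, sorted(set(draws)))
```

Even though the masked action has the largest logit, it is never sampled, so invalid edits cannot enter a trajectory.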

Advanced Strategies: Hybrid and Dynamic Action Sets

The frontier of action formulation lies in adaptive strategies. A Hybrid Action Set might combine a small set of robust reaction-based actions for scaffold-hopping with a larger set of functional group additions for fine-tuning properties. Dynamic Action Formulation, where the action set itself is conditioned on the current molecular state or predicted synthetic context, is an area of active research, aiming to mimic the strategic thinking of a medicinal chemist.

Formulating the action set is the step where chemical domain expertise is most decisively encoded into the molecular MDP. A successful approach moves beyond simple graph edits, integrating reaction knowledge, dynamic feasibility constraints, and stringent validation protocols. The resulting action set becomes the "chemical grammar" that governs all exploration, directly determining the relevance and utility of the molecules generated by the autonomous agent. As the field progresses, the integration of predictive retrosynthetic models into the action formulation loop promises to further close the gap between in-silico design and tangible synthesis.

In a Markov Decision Process (MDP) for molecule modification, the agent iteratively selects chemical modifications (actions) to transition between molecular states. The policy is optimized to maximize the cumulative expected reward. Therefore, the reward function is the critical translation layer that encodes the complex objectives of drug discovery into a single, optimizable signal. This guide details the technical integration of multi-objective goals—Potency, Selectivity, and Pharmacokinetics (PK)—into a unified reward structure.

Deconstructing Objectives into Quantifiable Components

Each primary objective must be decomposed into measurable or predictable properties.

Table 1: Quantitative Metrics for Multi-Objective Reward Components

| Primary Goal | Key Measurable Properties | Common Assay/Model | Typical Target Range/Value |

|---|---|---|---|

| Potency | Half-maximal inhibitory concentration (IC₅₀), Half-maximal effective concentration (EC₅₀), Dissociation constant (Kd, Ki) | Biochemical inhibition, Cell-based reporter, Binding (SPR) | IC₅₀/EC₅₀ < 100 nM (ideal: <10 nM) |

| Selectivity | Selectivity index (SI), % Inhibition against off-target panels (e.g., kinases, GPCRs, CYPs), Therapeutic Index (TI) | Counter-screening panels, Proteome-wide profiling (e.g., CETSA) | SI > 30-fold; Off-target inhibition < 50% at 10 µM |

| Pharmacokinetics (PK) | Clearance (CL), Volume of Distribution (Vd), Half-life (t1/2), Bioavailability (F%), Caco-2/MDCK Permeability (Papp), Plasma Protein Binding (PPB) | In vitro metabolic stability (microsomes/hepatocytes), In vivo PK studies, PAMPA/Caco-2 | Low CL, Adequate Vd, t1/2 > 3h (human), F% > 20%, Papp > 5 x 10⁻⁶ cm/s |

Reward Function Formulations

The composite reward \( R_{total} \) for a molecule \( m \) is constructed from weighted sub-rewards. A common approach uses a multiplicative or additive combination with thresholds.

Thresholded Multiplicative Formulation

This method ensures all criteria meet a minimum bar. \[ R_{total}(m) = \mathbb{1}\{\text{Potency} \geq T_{pot}\} \cdot \mathbb{1}\{\text{Selectivity} \geq T_{sel}\} \cdot \mathbb{1}\{\text{PK} \geq T_{pk}\} \cdot \left( w_{pot} \cdot R_{pot}(m) + w_{sel} \cdot R_{sel}(m) + w_{pk} \cdot R_{pk}(m) \right) \] Where \( \mathbb{1}\{\text{condition}\} \) is an indicator function (1 if the condition is met, else 0), \( T_x \) are thresholds, \( w_x \) are weights, and \( R_x(m) \) are normalized sub-rewards.

Continuous Additive Formulation with Shaping

Encourages incremental improvement across all dimensions. \[ R_{total}(m) = w_{pot} \cdot S(R_{pot}(m)) + w_{sel} \cdot S(R_{sel}(m)) + w_{pk} \cdot S(R_{pk}(m)) \] Where \( S(\cdot) \) is a shaping function (e.g., sigmoid, log-transform) to normalize and smooth rewards.
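The two formulations can be compared side by side in a short sketch. Two simplifications are assumed: the thresholds are applied to the normalized sub-rewards rather than to raw assay values, and the shaping function is a sigmoid with an arbitrary midpoint and steepness. The weights and sub-reward values are illustrative.

```python
from __future__ import annotations

import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def thresholded_reward(sub: dict[str, float], thresholds: dict[str, float],
                       weights: dict[str, float]) -> float:
    """Weighted sum of sub-rewards, gated to 0 unless every sub-reward meets its threshold."""
    if any(sub[k] < thresholds[k] for k in sub):
        return 0.0
    return sum(weights[k] * sub[k] for k in sub)


def shaped_additive_reward(sub: dict[str, float], weights: dict[str, float],
                           midpoint: float = 0.5, steepness: float = 10.0) -> float:
    """Weighted sum of sigmoid-shaped sub-rewards; never hard-zeroes a molecule."""
    return sum(weights[k] * sigmoid(steepness * (sub[k] - midpoint)) for k in sub)


weights = {"pot": 0.5, "sel": 0.3, "pk": 0.2}
thresholds = {"pot": 0.5, "sel": 0.5, "pk": 0.5}
passing = {"pot": 0.9, "sel": 0.6, "pk": 0.7}
failing = {"pot": 0.9, "sel": 0.4, "pk": 0.7}    # selectivity below its threshold

print(thresholded_reward(passing, thresholds, weights),
      thresholded_reward(failing, thresholds, weights),
      shaped_additive_reward(failing, weights))
```

The thresholded form gives no learning signal to a molecule that misses a single criterion, whereas the shaped form still rewards partial progress; which behaviour is preferable depends on how sparse rewards are early in training.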

Sub-Reward Calculation Protocols

Protocol A: Potency Reward \( R_{pot} \)

- Input: pIC₅₀ = -log10(IC₅₀ in Molar).

- Reference: Set a target pIC₅₀ (e.g., 8.0, corresponding to 10 nM).

- Calculation: \( R_{pot} = \text{sigmoid}(\text{pIC}_{50} - \text{target}) \) or a linear clip: \( R_{pot} = \min\left(\frac{\text{pIC}_{50}}{\text{target}}, 1.0\right) \).
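Protocol A translates almost line for line into code. The helper names are illustrative, and the IC₅₀ input is assumed to be given in nanomolar.

```python
import math


def pic50_from_nanomolar(ic50_nm: float) -> float:
    """pIC50 = -log10(IC50 in molar); with IC50 in nM this equals 9 - log10(IC50_nM)."""
    return 9.0 - math.log10(ic50_nm)


def potency_reward_sigmoid(pic50: float, target: float = 8.0) -> float:
    """Smooth reward: 0.5 at the target, approaching 1 above it and 0 below it."""
    return 1.0 / (1.0 + math.exp(-(pic50 - target)))


def potency_reward_clipped(pic50: float, target: float = 8.0) -> float:
    """Linear reward that saturates at 1.0 once the target is reached."""
    return min(pic50 / target, 1.0)


for ic50_nm in (1000.0, 10.0, 1.0):
    p = pic50_from_nanomolar(ic50_nm)
    print(ic50_nm, p, round(potency_reward_sigmoid(p), 3), potency_reward_clipped(p))
```

The clipped variant gives a weak molecule (pIC₅₀ of 6) a reward of 0.75, so it discriminates less sharply near the target than the sigmoid does.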

Protocol B: Selectivity Reward \( R_{sel} \)

- Input: Selectivity Index (SI) against primary antitarget, or a list of % inhibition for off-targets.

- Calculation for SI: \( R_{sel} = 1 - \exp(-\lambda \cdot \log_{10}(SI)) \), where \( \lambda \) controls steepness.

- Calculation for Panel Data: \( R_{sel} = \frac{1}{N} \sum_{i=1}^{N} \mathbb{1}\{\%Inh_i < \text{threshold}\} \), averaging over N off-targets.
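Both selectivity calculations are sketched below. One assumption goes beyond the protocol: for SI ≤ 1 the exponential formula would become zero or negative, so the sketch clamps the reward at 0. The default λ and the 50% inhibition threshold are illustrative.

```python
import math


def selectivity_from_si(si: float, lam: float = 1.0) -> float:
    """R_sel = 1 - exp(-lambda * log10(SI)), clamped to 0 for SI <= 1 (assumption)."""
    if si <= 1.0:
        return 0.0
    return 1.0 - math.exp(-lam * math.log10(si))


def selectivity_from_panel(percent_inhibition: list[float], threshold: float = 50.0) -> float:
    """Fraction of off-targets whose % inhibition stays below the threshold."""
    clean = sum(1 for value in percent_inhibition if value < threshold)
    return clean / len(percent_inhibition)


print(round(selectivity_from_si(100.0), 4))            # 100-fold selective compound
print(selectivity_from_panel([12.0, 48.0, 75.0, 5.0]))  # one of four off-targets is hit
```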

Protocol C: PK Reward \( R_{pk} \) as a Composite

- Predict: Use in silico models (e.g., from ADMET predictors) or in vitro data for key PK parameters: Predicted Human Clearance (CLpred), Predicted Human Vd, and Predicted Caco-2 Permeability.

- Normalize: Each parameter is scored between 0 and 1 based on acceptable ranges.

- Combine: \( R_{pk} = \left( R_{CL} \cdot R_{Vd} \cdot R_{Perm} \right)^{1/3} \) (geometric mean emphasizes balance).
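The normalize-and-combine steps can be sketched as follows. The linear scoring scheme and the numeric bounds (clearance in mL/min/kg, permeability in 10⁻⁶ cm/s) are hypothetical choices for illustration; the volume-of-distribution score is passed in directly because "adequate" is a range rather than a direction.

```python
def linear_score(value: float, worst: float, best: float) -> float:
    """Scale a parameter to [0, 1]; also handles 'lower is better' when best < worst."""
    return max(0.0, min((value - worst) / (best - worst), 1.0))


def pk_reward(r_cl: float, r_vd: float, r_perm: float) -> float:
    """Geometric mean of three sub-scores in [0, 1]; any zero score zeroes the reward."""
    return (r_cl * r_vd * r_perm) ** (1.0 / 3.0)


r_cl = linear_score(10.0, worst=30.0, best=5.0)     # predicted clearance, lower is better
r_perm = linear_score(12.0, worst=1.0, best=20.0)   # predicted Caco-2 Papp, higher is better
r_vd = 1.0                                          # assumed to fall inside the acceptable range
print(round(r_cl, 3), round(r_perm, 3), round(pk_reward(r_cl, r_vd, r_perm), 3))
```

Because the geometric mean collapses to zero when any component is zero, a compound cannot compensate for unacceptable clearance with excellent permeability, which is the balancing behaviour the protocol asks for.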

Diagram: Multi-Objective Reward Integration in an MDP

[Figure: MDP Reward Function Integrating Potency, Selectivity, and PK Goals]

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials and Tools for Reward Component Validation

| Item/Tool | Provider Examples | Primary Function in Reward Validation |

|---|---|---|

| Recombinant Target Protein | Sino Biological, R&D Systems | Essential for biochemical potency (IC₅₀) assays. Provides the primary activity signal. |

| Cell Line with Target Reporter | ATCC, Thermo Fisher | Enables cell-based potency (EC₅₀) assays, capturing cellular context. |

| Off-Target Screening Panels | Eurofins, DiscoverX | Profiling against kinases, GPCRs, ion channels to quantify selectivity. |

| Human Liver Microsomes (HLM) | Corning, XenoTech | In vitro assessment of metabolic stability (Clearance prediction). |

| Caco-2 Cell Monolayers | ATCC, Sigma-Aldrich | Standard in vitro model for predicting intestinal permeability (Papp). |

| Plasma Protein Binding Assay Kit | Thermo Fisher, HTDialysis | Measures fraction unbound (fu) critical for PK modeling. |

| Quantitative Structure-Activity Relationship (QSAR) Software | Schrödinger, OpenADMET, pkCSM | In silico prediction of ADMET/PK properties for early-stage reward shaping. |

| Automated Liquid Handling System | Beckman Coulter, Hamilton | Enables high-throughput screening for potency/selectivity data generation. |

Within the broader framework of a Markov Decision Process (MDP) for molecule modification, the selection of an appropriate Reinforcement Learning (RL) algorithm is critical. This guide provides an in-depth technical comparison of three prominent algorithms: Deep Q-Networks (DQN), Policy Gradient (PG), and Proximal Policy Optimization (PPO), specifically contextualized for molecular design and optimization tasks. The choice of algorithm directly impacts sample efficiency, stability, and the ability to explore vast chemical spaces to discover molecules with desired properties.

Algorithm Comparison & Quantitative Data

The following table summarizes the core characteristics, advantages, and performance metrics of DQN, PG, and PPO in molecular design contexts, based on recent literature.

Table 1: Comparative Analysis of RL Algorithms for Molecular Design

| Feature | Deep Q-Networks (DQN) | Policy Gradient (PG) | Proximal Policy Optimization (PPO) |

|---|---|---|---|

| Core Approach | Value-based. Learns action-value function Q(s,a). | Policy-based. Directly optimizes policy π(a⎮s). | Actor-Critic. Optimizes policy with a clipped objective to avoid large updates. |

| Action Space | Discrete. Suitable for fragment-based addition. | Discrete or Continuous. Flexible for continuous property optimization. | Discrete or Continuous. |

| Sample Efficiency | Moderate. Requires many samples for stable Q-learning. | Low. High variance leads to inefficient learning. | High. Lower variance and more stable updates. |

| Training Stability | Can be unstable due to moving target. Uses experience replay & target networks. | Unstable. Sensitive to step size; can converge to poor local optima. | Generally stable. The clipped surrogate objective discourages destructively large policy updates, although it does not formally guarantee monotonic improvement. |

| Exploration Mechanism | ϵ-greedy or Boltzmann sampling. | Inherent stochasticity of the policy. | Entropy bonus encourages exploration within trust region. |

| Key Challenge in Molecule Design | Requires discrete, defined action set (e.g., specific bond types/fragments). | May generate invalid molecular structures without careful reward shaping. | Tuning clipping parameter (ϵ) and advantage estimation is crucial. |

| Reported Performance (QED/DRD2 Optimization) | Can achieve ~0.9 QED but may plateau. | Can reach high scores but with high run-to-run variance. | Consistently achieves >0.92 QED with lower variance across runs. |

Table 2: Typical Experimental Outcomes from Benchmark Studies (ZINC250k dataset)

| Metric | DQN | REINFORCE (Vanilla PG) | PPO |

|---|---|---|---|

| Average Final QED | 0.89 | 0.87 | 0.93 |

| Success Rate (DRD2 > 0.5) | 65% | 60% | 82% |

| Training Steps to Convergence | ~5000 | ~8000 | ~3000 |

| Rate of Invalid Molecule Generation | < 1% (action masking) | 5-15% | < 2% |

Experimental Protocols & Detailed Methodologies

General MDP Formulation for Molecular Generation

All algorithms operate within a common MDP framework:

- State (sₜ): The current molecular graph or SMILES string at step t.

- Action (aₜ): An elementary modification (e.g., add a bond/atom, change functional group). Defined by a predefined set of chemical rules to ensure validity.

- Transition (sₜ₊₁): The deterministic application of aₜ to sₜ yields the new molecule sₜ₊₁. Invalid actions transition to a terminal state.

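- Reward (rₜ): A composite reward function, e.g., R(s) = λ₁ * QED(s) + λ₂ * SAScore(s) + λ₃ * r_step. Because a lower SA score indicates easier synthesis, λ₂ should be negative (or the SA score inverted before weighting). A final reward is given upon episode termination.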

- Episode: Starts from a valid initial molecule and proceeds for a maximum number of steps or until an action leads to an invalid state.
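The formulation above can be illustrated with a deliberately simplified environment. This is a toy sketch: the "molecule" is a tuple of tokens rather than a real graph or SMILES string, and `ToyMoleculeEnv`, `is_valid`, and `reward_fn` are hypothetical names standing in for a chemistry toolkit and a property predictor.

```python
from typing import Callable, Sequence, Tuple

State = Tuple[str, ...]  # toy stand-in for a molecular graph or SMILES string


class ToyMoleculeEnv:
    """Minimal episodic MDP: deterministic transitions, invalid actions terminate."""

    def __init__(
        self,
        start: State,
        actions: Sequence[str],
        is_valid: Callable[[State], bool],
        reward_fn: Callable[[State], float],
        max_steps: int = 5,
    ) -> None:
        self.start = start
        self.actions = list(actions)
        self.is_valid = is_valid      # hypothetical validity check
        self.reward_fn = reward_fn    # hypothetical property-based reward
        self.max_steps = max_steps
        self.state = start
        self.t = 0

    def reset(self) -> State:
        """Begin a new episode from the initial molecule."""
        self.state = self.start
        self.t = 0
        return self.state

    def step(self, action_index: int) -> Tuple[State, float, bool]:
        """Apply one modification and return (next_state, reward, done)."""
        candidate = self.state + (self.actions[action_index],)
        self.t += 1
        if not self.is_valid(candidate):
            # Invalid action: keep the last valid state and end the episode.
            return self.state, 0.0, True
        self.state = candidate
        done = self.t >= self.max_steps
        return self.state, float(self.reward_fn(candidate)), done
```

In a real setting, `is_valid` would typically wrap a sanitization check from a cheminformatics toolkit and `reward_fn` would call the composite reward; both are left abstract here so the control flow stays visible.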

Protocol A: DQN Implementation for Fragment-Based Growth

- Action Space Definition: Enumerate a set of allowable molecular fragments and attachment rules (e.g., from BRICS). Each action is a (fragment, attachment point) pair.

- Network Architecture: A Q-network takes a state (molecular fingerprint, e.g., ECFP6) as input and outputs Q-values for each discrete action.

- Experience Replay: Store transitions (sₜ, aₜ, rₜ, sₜ₊₁, done) in a buffer. Sample mini-batches to break temporal correlations.

- Target Network: Maintain a separate, periodically updated target network Q̂ to calculate the temporal difference (TD) target: y = r + γ * maxₐ Q̂(sₜ₊₁, a) for non-terminal transitions, and y = r when the episode has ended (done).

- Loss & Optimization: Minimize Mean Squared Bellman Error: L(θ) = 𝔼[(y - Q(sₜ, aₜ; θ))²] using gradient descent.
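The replay, target, and loss steps of Protocol A can be sketched without a deep learning framework. Everything below is illustrative: Q-values are plain lists of floats rather than network outputs, and the class and function names are hypothetical.

```python
import random
from collections import deque
from typing import Deque, List, Sequence, Tuple

Transition = Tuple[object, int, float, object, bool]  # (s, a, r, s_next, done)


class ReplayBuffer:
    """Fixed-capacity buffer; the oldest transitions are discarded first."""

    def __init__(self, capacity: int) -> None:
        self.buffer: Deque[Transition] = deque(maxlen=capacity)

    def push(self, s, a: int, r: float, s_next, done: bool) -> None:
        self.buffer.append((s, a, r, s_next, done))

    def sample(self, batch_size: int) -> List[Transition]:
        """Uniform random mini-batch, which breaks temporal correlations."""
        return random.sample(list(self.buffer), batch_size)

    def __len__(self) -> int:
        return len(self.buffer)


def td_target(
    reward: float, next_q_values: Sequence[float], done: bool, gamma: float = 0.99
) -> float:
    """y = r + gamma * max_a Q_target(s', a); no bootstrap on terminal steps."""
    if done:
        return reward
    return reward + gamma * max(next_q_values)


def mean_squared_bellman_error(
    q_pred: Sequence[float], targets: Sequence[float]
) -> float:
    """Mean of (y - Q(s, a))^2 over a mini-batch."""
    return sum((y - q) ** 2 for q, y in zip(q_pred, targets)) / len(targets)
```

In practice `next_q_values` would come from the target network Q̂ and `q_pred` from the online network, with the loss minimized by the framework's optimizer.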

Protocol B: Policy Gradient (REINFORCE) for Sequence-Based Generation

- State/Action as Sequence: State is the current partial SMILES string. Action is the next character (token) in the SMILES vocabulary.

- Policy Network: A Recurrent Neural Network (RNN) or Transformer that outputs a probability distribution π(a⎮s; θ) over the next token.

- Episode Trajectory Collection: Run the current policy for a full episode (complete SMILES generation) to collect trajectory τ = (s₀, a₀, r₀, ..., s_T).

- Return Calculation: Compute discounted returns Rₜ = Σ_{k=t}^{T} γ^(k-t) r_k for each step.

- Gradient Estimation: Estimate the policy gradient: ∇θ J(θ) ≈ Σ_{t=0}^{T} Rₜ ∇θ log π(aₜ⎮sₜ; θ).

- Optimization: Perform gradient ascent on θ to maximize expected return.
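The return calculation and the scalar objective used in REINFORCE can be written as the following sketch. It uses plain floats; in an actual implementation the log-probabilities would be framework tensors so that gradients can flow through them. The function names are hypothetical.

```python
from typing import List, Sequence


def discounted_returns(rewards: Sequence[float], gamma: float = 0.99) -> List[float]:
    """R_t = sum_{k=t}^{T} gamma^(k-t) * r_k, computed in one backward pass."""
    returns: List[float] = []
    running = 0.0
    for reward in reversed(rewards):
        running = reward + gamma * running
        returns.append(running)
    returns.reverse()
    return returns


def reinforce_loss(log_probs: Sequence[float], returns: Sequence[float]) -> float:
    """Negative of sum_t R_t * log pi(a_t | s_t).

    Minimizing this quantity is equivalent to gradient ascent on expected return.
    """
    return -sum(lp * g for lp, g in zip(log_probs, returns))
```

For SMILES generation the reward is often zero until the final token, so for a three-step episode with rewards `[0, 0, 1]` and γ = 0.5 the returns are `[0.25, 0.5, 1.0]`: earlier tokens still receive credit, but discounted.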

Protocol C: PPO for Continuous Molecular Optimization

- Actor-Critic Architecture:

  - Actor Network: Parameterizes the policy πθ(a⎮s) and proposes actions.

  - Critic Network: Estimates the state-value function Vϕ(s), which serves as the baseline for judging action quality.

- Trajectory Collection: Collect a set of trajectories by interacting with the environment under the current policy.

- Advantage Estimation: Compute generalized advantage estimate (GAE) Âₜ using rewards and critic values.

- PPO-Clip Objective: Maximize the surrogate objective: L(θ) = 𝔼[min( rₜ(θ) * Âₜ, clip(rₜ(θ), 1-ϵ, 1+ϵ) * Âₜ )] where rₜ(θ) = πθ(aₜ⎮sₜ) / πθ_old(aₜ⎮sₜ).

- Dual Optimization: Alternately update the actor (policy) by maximizing L(θ) and the critic (value function) by minimizing the MSE on value estimates.
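A framework-free sketch of the advantage estimation and clipped objective in Protocol C follows. The defaults (γ = 0.99, λ = 0.95, ϵ = 0.2) are commonly used values assumed here for illustration, and the function names are hypothetical.

```python
from typing import List, Sequence


def gae(
    rewards: Sequence[float],
    values: Sequence[float],
    gamma: float = 0.99,
    lam: float = 0.95,
) -> List[float]:
    """Generalized advantage estimates.

    `values` must hold len(rewards) + 1 entries; the last one is the bootstrap
    value of the final state (0.0 if the episode terminated there).
    """
    advantages = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        delta = rewards[t] + gamma * values[t + 1] - values[t]  # TD residual
        running = delta + gamma * lam * running
        advantages[t] = running
    return advantages


def ppo_clip_objective(
    ratios: Sequence[float], advantages: Sequence[float], eps: float = 0.2
) -> float:
    """Mean of min(r_t * A_t, clip(r_t, 1 - eps, 1 + eps) * A_t)."""
    terms = []
    for ratio, adv in zip(ratios, advantages):
        clipped = max(1.0 - eps, min(ratio, 1.0 + eps))
        terms.append(min(ratio * adv, clipped * adv))
    return sum(terms) / len(terms)
```

The `min` makes the objective pessimistic: with a positive advantage, raising the probability ratio beyond 1 + ϵ earns no extra credit, while with a negative advantage a ratio above 1 + ϵ is still penalized in full.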

Visualizations

Diagram 1: DQN for Molecular Design Workflow

Diagram 2: Algorithm Selection Decision Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for Implementing RL in Molecular Design

| Item | Function in Experiment | Example/Note |

|---|---|---|

| Chemical Action Space | Defines the allowed modifications to the molecule, ensuring chemical validity. | BRICS fragments, predefined functional group transformations, or SMILES grammar rules. |

| Molecular Representation | Encodes the state (molecule) into a numerical format for the neural network. | Extended-Connectivity Fingerprints (ECFP), Graph Neural Network (GNN) embeddings, or SMILES string tokenization. |

| Reward Function Components | Provides the learning signal based on desired molecular properties. | Quantitative Estimate of Drug-likeness (QED), Synthetic Accessibility Score (SA_Score), docking scores, or predicted bioactivity (pIC₅₀). |

| RL Environment | A Python class that implements the MDP: step(), reset(), and get_state(). | Custom-built, or assembled from RDKit (the Chem module) for molecule handling and the OpenAI Gym interface (maintained today as Gymnasium). |

| Deep Learning Framework | Provides the infrastructure for building and training neural network models. | PyTorch or TensorFlow. PyTorch is commonly used in recent research for dynamic computation graphs. |

| RL Algorithm Library | Offers tested implementations of core algorithms to build upon. | Stable-Baselines3, Ray RLlib, or custom implementations from published code. |

| Chemical Database | Source of initial molecules for training and benchmarking. | ZINC250k, ChEMBL, or proprietary corporate databases. |

| Validation Suite | Tools to assess the quality, diversity, and novelty of generated molecules. | RDKit for chemical descriptor calculation, structural clustering (Butina), and similarity searching (Tanimoto). |

In a Markov Decision Process (MDP) for molecule modification, an agent iteratively selects chemical modifications (actions) to transform a lead molecule (state) towards an optimized candidate (goal). Step 5 represents the critical "environment" where the agent's proposed actions are evaluated. Integration with chemical libraries provides the state-action space, while predictive models (QSAR, Docking) serve as the computationally efficient "reward function," predicting key molecular properties and biological activities without costly wet-lab experiments at every iteration.

Chemical libraries are the source of synthesizable building blocks and validated molecular scaffolds that constrain the MDP's action space to chemically feasible regions. Quantitative data on widely used libraries is summarized below.

| Library Name | Type | Approx. Size | Key Feature | Relevance to MDP |

|---|---|---|---|---|

| ZINC20 | Commercially Available | 230+ million | Purchasable compounds, 3D conformers | Defines realistic "purchase" actions for hit expansion. |

| ChEMBL | Bioactivity Database | 2+ million compounds, 15+ million bioassays | Annotated with targets, ADMET data | Provides historical reward data for model training. |

| Enamine REAL | Make-on-Demand | 36+ billion | Synthetically accessible (REaction-Accessible Library) | Defines a vast but synthetically plausible molecular space for virtual exploration. |

| PubChem | General Repository | 111+ million substances | Broad chemical and bioactivity data | Source for validation and benchmark compounds. |

Predictive Model Integration: QSAR & Docking

Predictive models act as surrogate reward functions R(s,a) in the MDP loop. They estimate the desirability of the new state s' resulting from a modification action a.

3.1 Quantitative Structure-Activity Relationship (QSAR) Models

QSAR models predict biological activity or physicochemical properties from molecular descriptors.

- Experimental Protocol for QSAR Model Integration:

- Descriptor Calculation: For a molecule generated by the MDP agent, compute a set of numerical descriptors (e.g., Morgan fingerprints, logP, topological polar surface area, number of rotatable bonds).

- Model Inference: Feed the descriptor vector into a pre-trained model. Common architectures include Random Forest, Gradient Boosting, or Deep Neural Networks.

- Reward Assignment: The predicted pIC50, solubility, or other property is scaled and combined into the MDP's reward signal (e.g., reward = predicted pIC50 - 0.5 * predicted toxicity score).
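The three steps above reduce to a small amount of glue code. In this sketch the predictors are stand-in linear functions rather than trained QSAR models, and `linear_model` and `qsar_reward` are hypothetical names; only the combination rule (predicted pIC50 minus 0.5 times predicted toxicity) comes from the example in the protocol.

```python
from typing import Callable, Sequence

Predictor = Callable[[Sequence[float]], float]


def linear_model(weights: Sequence[float], bias: float) -> Predictor:
    """Stand-in for a pre-trained model: a fixed linear map over descriptors."""
    def predict(descriptors: Sequence[float]) -> float:
        return bias + sum(w * x for w, x in zip(weights, descriptors))
    return predict


def qsar_reward(
    descriptors: Sequence[float],
    predict_pic50: Predictor,
    predict_toxicity: Predictor,
    toxicity_weight: float = 0.5,
) -> float:
    """reward = predicted pIC50 - toxicity_weight * predicted toxicity score."""
    return predict_pic50(descriptors) - toxicity_weight * predict_toxicity(descriptors)
```

Passing the predictors in as callables keeps the reward independent of the model type, so a Random Forest, gradient-boosted model, or neural network can be swapped in as long as it maps a descriptor vector to a single number.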

3.2 Molecular Docking

Docking predicts the binding pose and affinity of a molecule within a protein target's binding site, providing a structural basis for activity.

- Experimental Protocol for Docking Integration:

- Structure Preparation: Prepare the protein target (remove water, add hydrogens, assign charges) and the ligand molecule from the MDP state (generate 3D conformers, minimize energy).

- Docking Execution: Use software (e.g., AutoDock Vina, Glide) to sample ligand poses within the defined binding site and score them.

- Reward Formulation: The docking score (e.g., Vina score in kcal/mol) is negated to form the reward, so that a more negative score (stronger predicted binding) yields a higher reward. For example, reward_docking = -1.0 * docking_score.
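The reward formulation can be sketched in two variants: the direct negation from the protocol, and a normalized form that is easier to combine with other sub-rewards. The normalization bounds (-12.0 and 0.0 kcal/mol) are assumptions for illustration, not values from this guide, and the function names are hypothetical.

```python
def docking_reward(docking_score: float) -> float:
    """reward_docking = -1.0 * docking_score (more negative score, higher reward)."""
    return -1.0 * docking_score


def docking_reward_normalized(
    docking_score: float,
    best: float = -12.0,  # hypothetical score treated as "ideal" binding
    worst: float = 0.0,   # hypothetical score treated as "no binding"
) -> float:
    """Map a docking score to [0, 1], clipping values outside [best, worst]."""
    fraction = (docking_score - worst) / (best - worst)
    return max(0.0, min(1.0, fraction))
```

The normalized variant also caps the reward for extremely negative scores, which limits the agent's incentive to exploit scoring-function artifacts.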

Integrated MDP-Predictive Modeling Workflow

The following diagram illustrates the closed-loop integration of the MDP agent with chemical libraries and predictive models.

Title: MDP Agent Loop with Chemical Libraries and Predictive Models

The Scientist's Toolkit: Essential Research Reagent Solutions

This table details key computational tools and resources required to implement the integrated workflow.

| Item | Function in the Integrated Workflow |

|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule manipulation, descriptor calculation, and fingerprint generation. Essential for processing MDP states. |

| AutoDock Vina | Widely-used open-source docking program for rapid binding pose and affinity prediction. Serves as a key reward estimator. |

| Schrödinger Suite / MOE | Commercial software platforms offering integrated, high-accuracy tools for docking, QSAR model development, and molecular modeling. |