Accelerating Drug Discovery: An AI-Powered Protocol for Scaffold Hopping to Overcome Patent Barriers

Abstract

This article provides a comprehensive guide for drug discovery researchers on implementing scaffold hopping using AI-based molecular representations. We begin by establishing the foundational concepts of scaffold hopping, its role in drug design, and how AI representations differ from traditional methods. We then detail a practical, step-by-step protocol covering data preparation, model selection (including GNNs, Transformers, and language models), and generation strategies. The guide addresses common pitfalls, data scarcity, and optimization techniques for real-world application. Finally, we present frameworks for validating AI-generated scaffolds and compare leading tools and models. This protocol aims to equip scientists with the knowledge to efficiently generate novel, patentable chemical matter with preserved biological activity.

What is AI-Driven Scaffold Hopping? Core Concepts and Strategic Advantages in Drug Design

Scaffold hopping is a central strategy in medicinal chemistry and drug discovery aimed at identifying novel chemical scaffolds that retain or improve the desired biological activity of a known lead compound while altering its core molecular framework. Moving away from the original scaffold can overcome limitations such as poor pharmacokinetics, toxicity, or intellectual property constraints.

Core Definitions:

- Classical Scaffold Hopping: Relies on expert chemical knowledge, bioisosteric replacement, and structure-activity relationship (SAR) analysis to manually propose new cores. Common techniques include shape-based screening, pharmacophore matching, and fragment replacement.

- AI-Powered Scaffold Hopping: Utilizes machine learning (ML) and deep learning (DL) models trained on vast chemical and biological datasets to predict novel, often non-intuitive, bioactive scaffolds. It leverages molecular representations such as SMILES, molecular fingerprints, and graph-based embeddings.

Quantitative Data: Classical vs. AI-Powered Approaches

Table 1: Comparison of Scaffold Hopping Methodologies

| Feature | Classical Medicinal Chemistry | AI-Powered Exploration |
|---|---|---|
| Primary Driver | Chemist's intuition & known bioisosteres | Data patterns learned by models |
| Search Space | Limited to known chemical space & libraries | Can explore vast virtual chemical space (e.g., >10^8 compounds) |
| Speed | Low to medium (months for design-synthesize-test cycles) | High (virtual screening of billions in days) |
| Novelty | Incremental, often within similar chemical classes | High potential for structurally novel "leaps" |
| Key Tools | Molecular modeling, pharmacophore models, SAR tables | Generative Models (VAEs, GANs), Graph Neural Networks (GNNs), Transformers |
| Success Rate | Low (<1% for high novelty hops) | Improved hit rates reported (2-5% in prospective studies) |
| Dependency | High on prior series knowledge | High on quality and size of training data |

Table 2: Reported Performance Metrics of AI Models in Scaffold Hopping (2020-2024)

| Model Type | Dataset (Target) | Key Metric | Result | Reference (Type) |
|---|---|---|---|---|
| Deep Generative Model (REINVENT) | DDR1 kinase inhibitors | Novel scaffolds with IC50 < 10 µM | 12 out of 66 designed compounds | Prospective Study |
| Graph Neural Network (GNN) | SARS-CoV-2 Mpro inhibitors | Novel actives identified from >1 billion virtual compounds | 0.34% hit rate (vs. 0.01% random) | Virtual Screening Benchmark |
| 3D Pharmacophore GNN | GPCRs (Dopamine D2) | Success rate in identifying novel chemotypes | ~5% (vs. <1% for ligand-based 2D) | Methodological Paper |
| SMILES-based Transformer | Broad bioactivity datasets (ChEMBL) | Ability to generate valid, unique, and novel molecules | >95% validity, >99% novelty | Generative Model Benchmark |

Application Notes & Protocols

This section provides practical protocols for scaffold hopping using AI-based molecular representations.

Application Note 1: Protocol for Target-Agnostic Scaffold Hopping Using a Molecular Generative Model

Objective: To generate novel scaffold proposals for a target using a conditional generative model trained on general bioactivity data.

Research Reagent Solutions & Essential Materials:

| Item | Function |
|---|---|
| ChEMBL Database | Large-scale bioactivity data source for training conditional generative models. |
| RDKit (Python) | Open-source cheminformatics toolkit for molecule manipulation, fingerprint generation, and descriptor calculation. |
| PyTorch / TensorFlow | Deep learning frameworks for building and training generative models. |
| MOSES Platform | Benchmarking platform for molecular generative models, providing standardized datasets and metrics. |
| Conditional Variational Autoencoder (cVAE) | AI model architecture that learns a continuous latent space of molecules conditioned on biological activity profiles. |
| SA Score Calculator | Computes synthetic accessibility score to filter unrealistic proposals. |
| Molecular Docking Suite (e.g., Glide, AutoDock Vina) | For virtual screening and pose prediction of generated scaffolds against a target structure. |

Step-by-Step Protocol:

- Data Curation: Extract all compounds annotated with a specific activity (e.g., "IC50 < 1 µM") for your target family (e.g., "Kinase") from ChEMBL. Standardize molecules (neutralize, remove salts) and cluster by Murcko scaffolds to assess scaffold diversity in the training set.

- Model Training: Implement a cVAE. Encode input SMILES strings into a latent vector z, conditioned on a target fingerprint (e.g., a one-hot encoded vector for the target of interest). Train the model to reconstruct the input SMILES. The loss function combines reconstruction loss and the Kullback-Leibler divergence loss for the latent space.

- Latent Space Sampling: For the conditioned target vector, sample random points from the Gaussian prior of the latent space, or perform interpolation between latent points of known active molecules.

- Scaffold Generation & Decoding: Decode the sampled latent vectors back into SMILES strings using the model's decoder.

- Post-Processing Filtering: Filter generated molecules for:

- Validity: RDKit can parse the SMILES.

- Novelty: Not present in the training set and structurally distinct from it (maximum Tanimoto similarity to any training molecule < 0.4 using ECFP4 fingerprints).

- Drug-likeness: Passes Lipinski's Rule of Five.

- Synthetic Accessibility: SA Score < 4.5.

- Virtual Validation: Subject the top 1000 filtered, novel scaffolds to molecular docking against a known protein structure of the target. Rank by docking score and visual inspection of binding mode conservation.

- Output: A focused list of 50-100 novel, synthetically tractable scaffold proposals with predicted binding poses for synthesis and testing.
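The post-processing filters in this protocol can be sketched in plain Python. This is a minimal illustration, not a reference implementation: the `Candidate` record and its fields are hypothetical, and it assumes that fingerprint on-bits, Lipinski descriptors, and SA scores were already computed elsewhere (for example with RDKit). The validity check is left out because it needs a SMILES parser.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A generated molecule with precomputed descriptors (hypothetical record)."""
    name: str
    fp_bits: frozenset   # on-bits of an ECFP4-style fingerprint
    mol_weight: float
    logp: float
    h_donors: int
    h_acceptors: int
    sa_score: float


def tanimoto(a, b):
    """Tanimoto coefficient between two sets of fingerprint on-bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def is_novel(cand, training_fps, max_sim=0.4):
    """Novel if every training molecule is below the similarity cutoff."""
    return all(tanimoto(cand.fp_bits, fp) < max_sim for fp in training_fps)


def passes_lipinski(cand):
    """Rule of Five applied strictly (assumption: no violations tolerated)."""
    return (cand.mol_weight <= 500 and cand.logp <= 5
            and cand.h_donors <= 5 and cand.h_acceptors <= 10)


def filter_candidates(cands, training_fps, max_sa=4.5):
    """Keep candidates that pass novelty, drug-likeness, and SA filters."""
    return [c for c in cands
            if is_novel(c, training_fps)
            and passes_lipinski(c)
            and c.sa_score < max_sa]


# Toy data: bit sets and descriptor values are made up for illustration.
training_fps = [frozenset({1, 2, 3, 4}), frozenset({5, 6, 7})]
cands = [
    Candidate("cand_a", frozenset({1, 2, 3, 9}), 320.0, 2.1, 1, 4, 3.0),  # too similar
    Candidate("cand_b", frozenset({10, 11, 12}), 410.0, 3.5, 2, 6, 3.8),  # passes all
    Candidate("cand_c", frozenset({13, 14}), 620.0, 5.6, 1, 5, 3.2),      # fails Ro5
    Candidate("cand_d", frozenset({15, 16}), 300.0, 2.0, 1, 3, 5.1),      # SA too high
]
kept = filter_candidates(cands, training_fps)
```

Applying the novelty filter first is a design choice for this sketch only; in practice the cheapest filter is usually run first.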

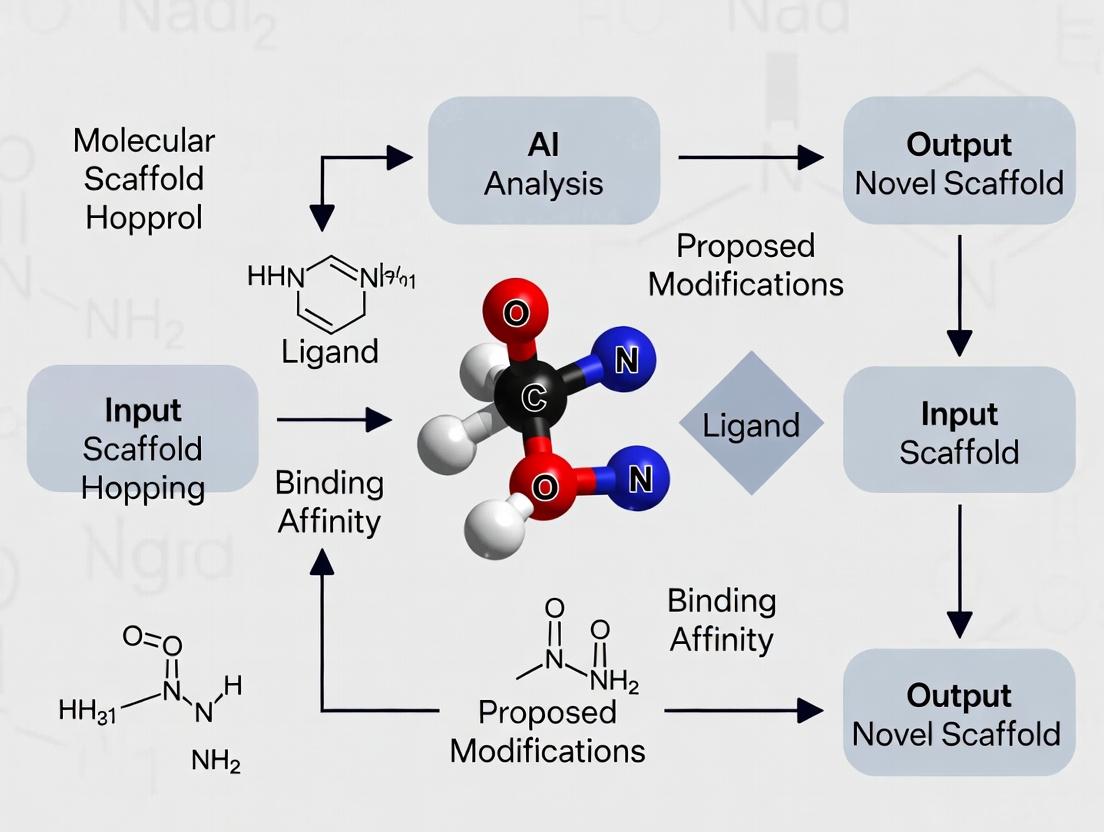

AI-Driven Scaffold Hopping Workflow

Application Note 2: Protocol for Structure-Based Scaffold Hop Using a 3D Equivariant GNN

Objective: To identify novel scaffolds by directly learning from 3D protein-ligand complex data.

Research Reagent Solutions & Essential Materials:

| Item | Function |
|---|---|
| PDBbind Database | Curated database of protein-ligand complexes with binding affinity data. |
| Equivariant Graph Neural Network (eGNN) | AI model that respects rotational and translational symmetries in 3D space, essential for learning from structural data. |
| PyTorch Geometric | Library for building graph neural network models, with support for 3D graphs. |
| Protein-Ligand Graph Builder | Script to represent a complex as a graph: nodes (atoms) with features, edges (bonds/distances) with 3D coordinates. |
| Binding Affinity Data (Kd, Ki, IC50) | For training the model to predict binding strength from structure. |
| Diffusion Model | Generative AI component to create new atomic densities/coordinates within the binding pocket. |

Step-by-Step Protocol:

- Dataset Preparation: From PDBbind, select all high-resolution (<2.5 Å) structures for your target protein. Divide into training/validation/test sets, ensuring no scaffold overlap between sets.

- Graph Representation: For each complex, create a graph where ligand and protein pocket atoms are nodes. Node features include atom type, charge, hybridization. Edges connect atoms within a cutoff distance (e.g., 5 Å). Include 3D coordinates as node positional vectors.

- Model Architecture: Implement an eGNN. The model takes the protein-ligand graph and updates atomic embeddings via message-passing layers that are equivariant to 3D rotations/translations. The final layer outputs a scalar binding affinity prediction and/or a probability distribution over atom types and positions for generation.

- Training Phase: Train the model in two stages:

- Stage 1 (Predictive): Train to accurately predict binding affinity (regression loss) from the input graph.

- Stage 2 (Generative): Fine-tune or attach a diffusion model that learns to generate novel ligand atom features and coordinates conditioned on the fixed protein pocket graph.

- In-Silico Scaffold Generation: For a given apo-protein structure or a pocket of interest, initialize a seed graph with only protein atoms. Use the trained generative model to iteratively sample new ligand atom types and positions.

- Reconstruction & Ranking: Assemble the generated atoms into a full molecule using connectivity rules. Score each generated molecule using the model's own affinity prediction head. Filter for novelty and synthetic accessibility.

- Output: A set of 3D-designed novel scaffolds with predicted poses and binding affinities, ready for computational validation (e.g., MD simulations) and synthesis.
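The requirement in the Dataset Preparation step that no scaffold appears in more than one set is easy to get wrong with a plain random split. The sketch below shows one way to enforce it, assuming each complex already carries a scaffold key (for instance a Bemis-Murcko scaffold SMILES computed beforehand). The function name, the 80/10/10 fractions, and the greedy assignment rule are illustrative assumptions.

```python
import random
from collections import defaultdict


def scaffold_split(records, fractions=(0.8, 0.1, 0.1), seed=0):
    """Split (complex_id, scaffold_key) records so no scaffold spans two sets.

    Whole scaffold groups are assigned, largest first, to whichever split is
    currently furthest below its target size.
    """
    groups = defaultdict(list)
    for complex_id, scaffold in records:
        groups[scaffold].append(complex_id)

    rng = random.Random(seed)
    keys = sorted(groups)      # deterministic starting order
    rng.shuffle(keys)          # randomize order among equal-sized groups
    keys.sort(key=lambda k: len(groups[k]), reverse=True)  # stable sort

    targets = [f * len(records) for f in fractions]
    splits = [[] for _ in fractions]
    for key in keys:
        # Choose the split with the largest remaining deficit.
        idx = max(range(len(splits)), key=lambda i: targets[i] - len(splits[i]))
        splits[idx].extend(groups[key])
    return splits


# Toy data: 40 complexes spread over 7 made-up scaffold keys.
records = [(f"cpx{i}", f"scaf{i % 7}") for i in range(40)]
train, val, test = scaffold_split(records)
```

Because whole groups are moved, the realized split sizes only approximate the requested fractions, and the gap grows when a few scaffolds dominate the dataset.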

Structure-Based AI Scaffold Design

Application Notes

Molecular representation is the foundational step in computational drug discovery, converting chemical structures into a format interpretable by machine learning (ML) and artificial intelligence (AI) models. The choice of representation directly impacts the success of downstream tasks, particularly in scaffold hopping—the identification of novel molecular cores with similar biological activity.

SMILES (Simplified Molecular Input Line Entry System): A line notation encoding molecular structure as a string of ASCII characters. It is compact and human-readable but not unique: different SMILES strings can represent the same molecule, so canonicalization is required before strings are compared. Recent advancements use deep learning (e.g., Transformer models) to learn continuous representations from SMILES for generative scaffold hopping.

Molecular Fingerprints: Bit-vector representations where each bit indicates the presence or absence of a specific substructure or path. Extended Connectivity Fingerprints (ECFPs) are the standard for similarity searching and quantitative structure-activity relationship (QSAR) modeling. They are computationally efficient but lossy: hashing local atom environments into a fixed-length vector discards the full connectivity of the molecule, and no spatial (3D) information is encoded.

Molecular Graphs: A natural representation where atoms are nodes and bonds are edges. Graph Neural Networks (GNNs) operate directly on this topology, learning features through message-passing mechanisms. This representation explicitly preserves connectivity and is highly effective for predicting molecular properties and generating novel structures with valid chemical constraints.

3D Coordinates: Represent the spatial conformation of a molecule, including atomic coordinates, bond lengths, angles, and torsions. This is critical for representing pharmacophoric shape and electrostatics, essential for structure-based scaffold hopping. Equivariant neural networks that respect rotational and translational symmetry are emerging as powerful tools for learning from 3D data.

Quantitative Comparison of Molecular Representations:

Table 1: Performance Comparison of Representations in Scaffold Hopping Benchmarks (e.g., CASF-2016, DEKOIS 2.0).

| Representation Type | Model Architecture | Success Rate (Top-1) | Novelty (Tanimoto <0.3) | Computational Cost | Key Advantage |
|---|---|---|---|---|---|
| ECFP4 (1024 bit) | Random Forest / SVM | 22% | Low | Low | High-speed similarity search. |
| SMILES (Seq2Seq) | Transformer | 18% | High | Medium | Direct string generation. |
| Molecular Graph | Graph Isomorphism Network | 31% | Medium-High | High | Learns topological features. |
| 3D Coordinates | SE(3)-Equivariant Net | 35% | Medium | Very High | Captures precise shape & interactions. |
| Hybrid (Graph + 3D) | Multi-modal GNN | 41% | High | Very High | Combines topology & geometry. |

Data synthesized from recent literature (2023-2024). Success rate measures the retrieval/generation of an active scaffold for a given target. Novelty measures the structural dissimilarity from known actives.

Experimental Protocols

Protocol 2.1: Generating a Scaffold-Hopping Library Using a Graph-Based VAE

Objective: To generate novel, synthetically accessible molecular scaffolds with predicted activity against a target protein using a Graph Variational Autoencoder (Graph VAE).

Materials & Software:

- Dataset: ChEMBL bioactivity data for a target (e.g., Kinase).

- Software: Python 3.9+, PyTorch, PyTorch Geometric, RDKit, MOSES benchmarking platform.

- Hardware: GPU (NVIDIA V100 or equivalent with ≥16 GB VRAM).

Procedure:

- Data Curation: From ChEMBL, extract all molecules with IC50 < 10 µM for the target. Apply standard RDKit cleaning (neutralize charges, remove metals, canonicalize SMILES). Cluster scaffolds (using Bemis-Murcko method) and ensure diversity.

- Graph Representation: Convert each SMILES to a graph object G = (V, E). Node features V: atom type, hybridization, degree, formal charge. Edge features E: bond type, conjugation, stereo.

- Model Training: a. Implement a Graph VAE with an encoder (GNN layers pooling to a mean and log-variance vector) and a decoder (sequential atom/bond generation). b. Train for 200 epochs using the Adam optimizer (lr=0.001) and an ELBO loss (KL weight annealed from 0 to 0.1). c. Validate using the MOSES metrics (Valid, Unique, Novel).

- Latent Space Sampling & Generation: a. Encode the known active molecules into the latent space. b. Perform interpolation between latent points of two distinct actives or add directed noise to explore the local space. c. Decode the new latent vectors into molecular graphs.

- Post-Processing & Filtering: Use RDKit to ensure chemical validity. Filter generated molecules for synthetic accessibility (SA Score < 4.5), drug-likeness (QED > 0.4), and dissimilarity (Tanimoto < 0.3 to training set).
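The training objective in the Model Training step can be made concrete with a small sketch of the annealed loss. The linear schedule is an assumption, since the protocol only states that the KL weight goes from 0 to 0.1, and the function names are illustrative. The KL term is the standard closed form for a diagonal Gaussian posterior against a standard normal prior.

```python
import math


def kl_weight(epoch, n_epochs=200, max_weight=0.1):
    """Linearly anneal the KL weight from 0 to max_weight (assumed schedule)."""
    if n_epochs <= 1:
        return max_weight
    return max_weight * min(1.0, epoch / (n_epochs - 1))


def gaussian_kl(mu, logvar):
    """KL( N(mu, diag(exp(logvar))) || N(0, I) ), summed over latent dims."""
    return 0.5 * sum(math.exp(lv) + m * m - 1.0 - lv
                     for m, lv in zip(mu, logvar))


def negative_elbo(recon_loss, mu, logvar, epoch):
    """Reconstruction loss plus the annealed KL penalty for one molecule."""
    return recon_loss + kl_weight(epoch) * gaussian_kl(mu, logvar)


# Toy values: a 2-dimensional latent code halfway through training.
loss = negative_elbo(recon_loss=2.0, mu=[0.5, -0.5], logvar=[0.0, 0.0], epoch=100)
```

Starting with a weight of 0 lets the decoder learn to reconstruct before the latent space is regularized, which is the usual motivation for annealing.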

Protocol 2.2: 3D Structure-Based Screening with Equivariant Networks

Objective: To identify novel scaffolds by screening a virtual library against a target's 3D binding pocket using a pre-trained SE(3)-equivariant model.

Materials & Software:

- Target: PDB structure of target protein (e.g., 6T3B). Prepare with molecular docking software (AutoDock Vina, GNINA).

- Library: Enamine REAL Space subset (5M compounds).

- Software: RDKit, Open Babel, GNINA, EquiBind or DiffDock framework.

Procedure:

- Target Preparation: Remove water, add hydrogens, assign charges (using PDB2PQR). Define the binding site box coordinates.

- Ligand Library Preparation: For each SMILES in the library, generate a low-energy 3D conformation using RDKit's ETKDG method. Convert to PDBQT format.

- Pre-Screening Docking: Use ultra-fast docking (e.g., SMINA) to score and rank the entire library. Select the top 100,000 poses.

- Refined Scoring with AI Model: a. Load a pre-trained SE(3)-equivariant network (e.g., one trained on PDBbind data). b. For each top docking pose, compute the protein-ligand complex graph. Node features include amino acid type and atomic properties. c. The model outputs a refined binding affinity score (pKd) and a confidence metric.

- Cluster & Select for Novelty: Cluster the top 1,000 scored molecules by their 3D pharmacophore fingerprint. Within each cluster, select the molecule with the lowest ECFP4 similarity to any known active.
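The final selection step can be sketched as follows. This reads "lowest ECFP4 similarity to any known active" as "lowest nearest-neighbour similarity", which is an interpretation and not something the protocol spells out. Cluster labels and fingerprint bit sets are assumed to be precomputed, and all names are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto coefficient between two sets of fingerprint on-bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def pick_novel_representatives(hits, known_active_fps):
    """Pick one molecule per cluster: the one least similar to known actives.

    hits: iterable of (mol_id, cluster_id, fp_bits) tuples.
    Returns a dict mapping cluster_id to the chosen mol_id.
    """
    best = {}
    for mol_id, cluster_id, fp in hits:
        # Similarity to the closest known active (0.0 if none are given).
        nearest = max((tanimoto(fp, k) for k in known_active_fps), default=0.0)
        if cluster_id not in best or nearest < best[cluster_id][0]:
            best[cluster_id] = (nearest, mol_id)
    return {cid: mol_id for cid, (_, mol_id) in best.items()}


# Toy data: bit sets and cluster labels are made up for illustration.
known = [frozenset({1, 2, 3})]
hits = [
    ("m1", 0, frozenset({1, 2, 3, 4})),  # similarity 0.75 to the known active
    ("m2", 0, frozenset({7, 8})),        # similarity 0.0
    ("m3", 1, frozenset({1, 9})),        # similarity 0.25
]
chosen = pick_novel_representatives(hits, known)
```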

Visualization

Figure: AI-Driven Scaffold Hopping Multi-Representation Workflow

Figure: Graph Neural Network Message-Passing Mechanism

The Scientist's Toolkit

Table 2: Essential Research Reagents & Software for AI-Based Scaffold Hopping

| Item Name | Category | Primary Function & Rationale |
|---|---|---|
| RDKit | Open-Source Cheminformatics | Core library for manipulating molecules (SMILES I/O, fingerprint generation, graph conversion, descriptor calculation). Essential for data preprocessing. |
| PyTorch Geometric | Deep Learning Library | Extends PyTorch for graph-based neural networks. Provides efficient data loaders and GNN layers (GCN, GIN, GAT) crucial for molecular graph models. |
| GNINA / SMINA | Molecular Docking | Provides fast, robust docking for generating putative 3D poses of ligands in a protein pocket, serving as input for 3D-aware AI models. |
| E(3)-Equivariant NN Libs (e.g., e3nn) | Specialized AI Libraries | Implement rotation/translation equivariant layers for learning from 3D molecular data without arbitrary coordinate frame bias. |
| ChEMBL Database | Bioactivity Data | Curated source of bioactive molecules with assay data. The primary resource for building target-specific training sets for supervised AI models. |
| Enamine REAL / ZINC20 | Virtual Compound Libraries | Large, commercially accessible chemical spaces (billions of molecules) for virtual screening and generative model training/validation. |
| MOSES Benchmarking Platform | Evaluation Toolkit | Standardized metrics (FCD, SA, Novelty) to evaluate and compare the quality of molecules generated by different AI models. |
| AutoDock Vina | Docking Software | Widely used for structure-based virtual screening. Useful for initial pose generation and as a baseline scoring function. |

Application Notes and Protocols within the Context of AI-Driven Scaffold Hopping

Learned molecular embeddings map discrete chemical structures to continuous, high-dimensional numerical vectors in a latent space. This representation enables quantitative comparison, property prediction, and generative exploration, which are the core capabilities needed for scaffold hopping: discovering novel molecular cores with preserved biological activity.

Core Methodologies for Generating Molecular Embeddings

Protocol: Generating Embeddings via Graph Neural Networks (GNNs)

Objective: To convert a molecular graph into a fixed-length vector embedding.

Materials:

- Input: Molecular structures in SMILES or SDF format.

- Software: Deep learning frameworks (PyTorch, TensorFlow) with chemistry libraries (RDKit, DGL-LifeSci).

- Model: Pre-trained or trainable GNN (e.g., MPNN, GAT, GIN).

Procedure:

- Molecular Graph Construction:

- Parse SMILES string using RDKit.

- Represent each atom as a node with initial features (atomic number, hybridization, formal charge, etc.).

- Represent each bond as an edge with features (bond type, conjugation, etc.).

- Graph Encoding (Message Passing):

- For k message-passing steps, iteratively update node representations by aggregating features from neighboring nodes and edges.

- Apply a differentiable aggregation function (sum, mean, max) and a learned update function (neural network layer).

- Global Readout (Graph Embedding):

- After k steps, pool all updated node feature vectors into a single graph-level representation.

- Use a set pooling operation (e.g., global mean pooling) followed by a linear projection layer.

- Output: A numerical vector (e.g., 256-1024 dimensions) representing the molecule in the latent space.
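The message-passing and readout steps can be illustrated without a deep learning framework. The sketch below makes two simplifying assumptions: edge features are ignored, and the learned update function is replaced by a fixed "self plus neighbour sum" rule. It shows the data flow only. A trainable model would use learned layers from a library such as PyTorch Geometric.

```python
def message_passing(node_feats, edges, k=2):
    """Run k rounds of sum-aggregation message passing on an undirected graph.

    node_feats: list of feature vectors (lists of floats), one per atom.
    edges: list of (i, j) atom-index pairs, one per bond.
    The update rule is fixed (self + sum of neighbours), standing in for a
    learned neural-network layer.
    """
    neighbours = {i: [] for i in range(len(node_feats))}
    for i, j in edges:
        neighbours[i].append(j)
        neighbours[j].append(i)

    h = [list(v) for v in node_feats]
    for _ in range(k):
        new_h = []
        for i, h_i in enumerate(h):
            # Aggregate: element-wise sum over the neighbours' current vectors.
            agg = [sum(h[j][d] for j in neighbours[i]) for d in range(len(h_i))]
            # Update: combine the node's own vector with the aggregate.
            new_h.append([own + msg for own, msg in zip(h_i, agg)])
        h = new_h
    return h


def mean_readout(h):
    """Global mean pooling: average node vectors into one graph-level vector."""
    n = len(h)
    return [sum(v[d] for v in h) / n for d in range(len(h[0]))]


# Heavy-atom graph of ethanol (C-C-O) with one-hot [is_carbon, is_oxygen] features.
atoms = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
bonds = [(0, 1), (1, 2)]
node_states = message_passing(atoms, bonds, k=1)
embedding = mean_readout(node_states)
```

After one step, the central carbon's vector already reflects both neighbours, which is how larger k values let each atom "see" a wider neighbourhood.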

Protocol: Scaffold Hopping via Latent Space Interpolation

Objective: To generate novel candidate scaffolds by navigating between known active molecules in the learned latent space.

Materials:

- Latent Space: Pre-computed embeddings for a set of known active molecules (seed scaffolds).

- Generative Model: Variational Autoencoder (VAE) for molecules, trained to encode and decode structures.

Procedure:

- Seed Selection and Encoding:

- Select two or more seed molecules (Scaffolds A and B) with desired target activity.

- Encode them into their latent vectors (z_A, z_B) using the trained encoder.

- Latent Space Traversal:

- Define a linear path in latent space: z(t) = z_A + t * (z_B - z_A), where t varies from 0 to 1.

- Sample multiple points along this path (e.g., t = 0.2, 0.4, 0.6, 0.8).

- Decoding and Validation:

- Decode each sampled latent vector z(t) back to a molecular structure using the model's decoder.

- Filter generated structures for chemical validity (RDKit), synthetic accessibility (SA score), and novelty.

- Perform in silico docking or similarity searches to prioritize candidates.
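The latent space traversal above reduces to a few lines of arithmetic. The sketch below computes the interpolated points only. Encoding the seeds and decoding the results depend on the trained VAE and are not shown, and the function name is illustrative.

```python
def interpolate(z_a, z_b, ts=(0.2, 0.4, 0.6, 0.8)):
    """Return the points z(t) = z_a + t * (z_b - z_a) for each t in ts."""
    if len(z_a) != len(z_b):
        raise ValueError("latent vectors must have the same dimension")
    return [[a + t * (b - a) for a, b in zip(z_a, z_b)] for t in ts]


# Toy 2-dimensional latent vectors standing in for two encoded seed molecules.
z_a = [0.0, 2.0]
z_b = [1.0, 0.0]
path = interpolate(z_a, z_b, ts=(0.0, 0.5, 1.0))
```

With t = 0 and t = 1 the path returns the two seeds themselves, which makes a convenient sanity check before decoding intermediate points.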

Quantitative Performance of Embedding Methods in Benchmarking Studies

Table 1: Benchmark performance of molecular embedding methods on scaffold hopping-relevant tasks (Property Prediction and Reconstruction).

| Model Architecture | Dataset (Task) | Key Metric | Reported Performance | Reference/Year |
|---|---|---|---|---|
| Message Passing Neural Net (MPNN) | QM9 (Regression) | Mean Absolute Error (MAE) on atomization energy | ~30 meV | Gilmer et al., 2017 |
| Graph Attention Net (GAT) | ZINC250k (Reconstruction) | Valid Reconstruction Rate | >90% | Mazuz et al., 2023 |
| Variational Autoencoder (JT-VAE) | ZINC250k (Reconstruction, Validity) | Reconstruction accuracy; % valid molecules (prior sampling) | 76.7% (Reconstruction), 100% (Valid) | Jin et al., 2018 |
| Contextual Graph Model (CGM) | CASF-2016 (Docking Power) | RMSD of top pose (<2Å) | 85.2% success rate | Zhang et al., 2023 |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential materials and software for AI-based molecular representation research.

| Item / Reagent | Function / Purpose | Example / Provider |
|---|---|---|
| Chemical Datasets | Curated sets of molecules with properties for training and benchmarking models. | ZINC, ChEMBL, QM9, PubChemQC |
| Chemistry Toolkits | Fundamental libraries for parsing, manipulating, and computing descriptors from molecules. | RDKit, Open Babel |
| Deep Learning Frameworks | Core platforms for building, training, and deploying neural network models. | PyTorch, TensorFlow, JAX |
| Graph Neural Network Libraries | Specialized libraries for implementing GNN architectures on molecular graphs. | DGL-LifeSci, PyTorch Geometric |
| Molecular Generation Platforms | Integrated toolkits for generative modeling and latent space exploration. | GuacaMol, MolPal, REINVENT |
| High-Performance Computing (HPC) | GPU clusters for accelerating model training on large chemical libraries. | NVIDIA DGX systems, Cloud GPUs (AWS, GCP) |

Visualization of Workflows and Logical Relationships

AI-Driven Scaffold Hopping via Latent Space

Molecular to Embedding Pipeline

This document provides detailed Application Notes and Protocols for scaffold hopping using AI-based molecular representations. The central thesis is that quantitative structure-activity relationship (QSAR) models, powered by advanced molecular representations (e.g., graph neural networks, molecular fingerprints, SMILES-based embeddings), can systematically guide the discovery of novel molecular scaffolds with preserved bioactivity. This approach directly addresses three critical pharmaceutical challenges: circumventing existing patents, optimizing drug-like properties, and efficiently exploring novel chemical space.

Application Notes

Overcoming Patent Cliffs

Objective: To generate novel chemotypes that are not covered by existing compound patents but retain target activity, thereby enabling lifecycle management and generic competition.

AI-Driven Approach: An AI model is trained on known active compounds against a specific target. The model learns the latent pharmacophoric and structural features essential for activity. Using generative models (e.g., VAEs, GANs) or similarity search in a continuous molecular descriptor space, the algorithm proposes structurally distinct scaffolds that fulfill the same feature map.

Key Consideration: Legal chemical space analysis must be integrated to filter generated structures against patented Markush structures.

Improving Drug Properties

Objective: To modify a lead compound's scaffold to improve ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties while maintaining potency.

AI-Driven Approach: Multi-parameter optimization (MPO) models use molecular representations to predict properties like solubility, metabolic stability, and hERG inhibition. Scaffold hopping is guided by a joint objective: maximize predicted activity while optimizing predicted ADMET profiles. This often involves navigating chemical space toward regions with more favorable property predictions.

Key Consideration: Trade-offs between activity and properties must be carefully balanced; Pareto optimization fronts are useful.
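To make the Pareto idea concrete, the sketch below extracts the non-dominated candidates from a list scored on two objectives. It assumes both scores are oriented so that higher is better (an ADMET liability such as hERG inhibition would need its sign flipped first). The tuple layout and names are illustrative.

```python
def pareto_front(candidates):
    """Return names of candidates not dominated on (activity, admet).

    candidates: list of (name, activity, admet) tuples, higher is better.
    A candidate is dominated if another is at least as good on both
    objectives and strictly better on at least one.
    """
    front = []
    for name, act, adm in candidates:
        dominated = any(
            (a2 >= act and d2 >= adm) and (a2 > act or d2 > adm)
            for _, a2, d2 in candidates
        )
        if not dominated:
            front.append(name)
    return front


# Toy scores in [0, 1]; the values are made up for illustration.
scored = [
    ("A", 0.9, 0.2),  # most active, poor ADMET
    ("B", 0.7, 0.7),  # balanced
    ("C", 0.6, 0.6),  # worse than B on both objectives
    ("D", 0.3, 0.9),  # best ADMET, weak activity
]
front = pareto_front(scored)
```

The pairwise comparison is quadratic in the number of candidates, which is adequate for a shortlist but not for screening-scale libraries.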

Exploring Novel Chemotypes

Objective: To discover entirely new chemical series for a target, especially when existing leads have inherent limitations or to identify backup compounds.

AI-Driven Approach: Unsupervised or reinforcement learning explores vast, uncharted regions of chemical space. Models can be designed to maximize "novelty" (distance from known actives in descriptor space) while maintaining a minimum threshold of predicted activity. This de-risks exploration by providing an activity estimate for entirely novel structures.

Key Consideration: Synthetic accessibility (SA) scoring must be incorporated to ensure proposed chemotypes are realistically obtainable.

Experimental Protocols

Protocol: AI-Guided Scaffold Hopping for a Kinase Target

Objective: Identify novel, synthesizable scaffolds for EGFR inhibition with improved metabolic stability.

Materials & Reagents:

- Dataset: Publicly available EGFR inhibitor bioactivity data (e.g., from ChEMBL).

- Software: Python with RDKit, DeepChem, PyTorch/TensorFlow, Jupyter Notebooks.

- Computing: GPU-enabled workstation or cloud instance (e.g., AWS p3.2xlarge).

Procedure:

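- Data Curation: Assemble and curate a dataset of EGFR bioactivity data. Label compounds with IC50 < 100 nM as active and retain measured weaker compounds as inactives, since the classifier trained in the Model Training step needs both classes. Standardize structures and remove duplicates.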

- Molecular Representation: Convert molecules into multiple representations:

- RDKit 2D Fingerprints (Morgan FP).

- Graph Representation: Atoms as nodes, bonds as edges, with atom/bond features.

- Model Training:

- Train a Graph Neural Network (GNN) classification model to distinguish active from inactive compounds.

- Train a Random Forest Regressor using Morgan FPs to predict microsomal stability (% remaining).

- Latent Space Exploration:

- Train a Variational Autoencoder (VAE) on SMILES strings of active compounds.

- The encoder maps molecules to a continuous latent vector (z). Interpolate and sample novel points in this latent space.

- Generation & Filtering:

- The VAE decoder generates SMILES from sampled latent vectors.

- Filter generated molecules for:

- Drug-likeness: Lipinski's Rule of 5.

- Synthetic Accessibility: SA Score ≤ 4.5.

- Novelty: Tanimoto similarity < 0.4 to all training set molecules.

- Virtual Screening & Prioritization:

- Pass filtered molecules through the pre-trained GNN (activity prediction) and Random Forest (stability prediction).

- Rank candidates by a composite score: Score = 0.6 * (Predicted Activity Probability) + 0.4 * (Predicted Stability), with stability expressed as the fraction remaining (0-1) so that both terms share the same scale.

- Validation: Synthesize top 10-20 candidates and test in vitro for EGFR inhibition and microsomal stability.
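The composite ranking in the prioritization step can be sketched as below. The one assumption added here is that predicted stability, reported as percent remaining, is divided by 100 before weighting so that it sits on the same 0-1 scale as the activity probability. Function names and the toy predictions are illustrative.

```python
def composite_score(p_active, pct_stability, w_activity=0.6, w_stability=0.4):
    """Weighted sum of activity probability and stability (percent remaining)."""
    if not 0.0 <= p_active <= 1.0:
        raise ValueError("p_active must be in [0, 1]")
    if not 0.0 <= pct_stability <= 100.0:
        raise ValueError("pct_stability must be in [0, 100]")
    # Rescale stability from percent to a fraction before weighting.
    return w_activity * p_active + w_stability * (pct_stability / 100.0)


def rank_candidates(cands):
    """Sort (name, p_active, pct_stability) tuples by score, best first."""
    return sorted(cands, key=lambda c: composite_score(c[1], c[2]), reverse=True)


# Toy predictions; the values are made up for illustration.
predictions = [
    ("X", 0.9, 20.0),  # score 0.62
    ("Y", 0.7, 80.0),  # score 0.74
    ("Z", 0.5, 50.0),  # score 0.50
]
ranked = rank_candidates(predictions)
```

Without the rescaling, a stability value such as 80 would swamp any probability, and the ranking would effectively ignore predicted activity.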

Protocol: Patent-Cliff Circumvention for a Small Molecule API

Objective: Generate non-infringing analogues of a blockbuster drug nearing patent expiry.

Procedure:

- Patent Analysis: Use NLP tools to extract Markush structures and specific claims from relevant patents. Convert these into a searchable molecular database.

- Define Core Pharmacophore: Using the original drug, identify its critical pharmacophoric features (e.g., hydrogen bond donors/acceptors, hydrophobic regions, aromatic rings) via computational methods.

- Generative Design:

- Employ a Reinforcement Learning (RL) framework. The agent proposes molecular changes, and the reward function is based on:

- Pharmacophore match score.

- Penalty for high similarity to patented structures.

- QED (Quantitative Estimate of Drug-likeness).

- The agent explores structural changes that alter the core scaffold while preserving the pharmacophore.

- Freedom-to-Operate Check: Screen all generated molecules against the patent database (from step 1) using substructure and similarity searches. Discard any matches.

- Activity Prediction: Use a pre-trained QSAR model on the target to predict activity of the remaining candidates.

- Output: A prioritized list of novel, patentable scaffolds with high predicted activity.
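One possible shape for the reward function in the Generative Design step is sketched below. The protocol names the three ingredients but not how they are combined, so the weights, the similarity threshold, and the linear penalty are all assumptions made for illustration. Inputs are taken to be precomputed scores in the range 0-1.

```python
def reward(pharmacophore_match, max_patent_similarity, qed,
           w_match=0.7, w_qed=0.3, sim_threshold=0.4, penalty_weight=1.0):
    """Scalar reward for one proposed molecule (hypothetical formulation).

    pharmacophore_match: 0-1 score for overlap with the reference pharmacophore.
    max_patent_similarity: highest similarity to any patented structure (0-1).
    qed: Quantitative Estimate of Drug-likeness (0-1).
    """
    # Penalize only the similarity in excess of the allowed threshold.
    penalty = penalty_weight * max(0.0, max_patent_similarity - sim_threshold)
    return w_match * pharmacophore_match + w_qed * qed - penalty


# Same pharmacophore match and QED, different distance from patented space.
r_distant = reward(0.8, 0.2, 0.6)   # below threshold: no penalty
r_close = reward(0.8, 0.9, 0.6)     # above threshold: penalized
```

A thresholded penalty leaves the agent free to move anywhere below the similarity cutoff, whereas a penalty on raw similarity would also push it away from the pharmacophore it is meant to preserve.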

Data Presentation

Table 1: Comparison of AI Models for Scaffold Hopping Tasks

| Model Type | Example Algorithm | Strengths | Weaknesses | Best Suited For |
|---|---|---|---|---|
| Descriptor-Based | Random Forest (ECFP) | Interpretable, fast training. | Limited extrapolation, depends on fingerprint design. | Initial screening, property prediction. |
| Graph-Based | Graph Neural Network (GNN) | Captures topology natively, strong generalization. | Computationally intensive, larger datasets needed. | Accurate activity prediction, learning complex SAR. |
| Generative (SMILES) | Variational Autoencoder (VAE) | Can generate novel SMILES strings. | May produce invalid structures; SMILES syntax limitations. | Exploring continuous chemical space. |
| Generative (Graph) | JT-VAE, GraphINVENT | Generates valid molecular graphs directly. | High complexity, slow generation. | De novo design of novel scaffolds. |
| Reinforcement Learning | REINVENT, MolDQN | Goal-directed, can optimize multi-parameter rewards. | Reward design is critical; can be unstable. | Optimizing for specific, complex objectives. |

Table 2: Typical Performance Metrics for a Scaffold Hopping Pipeline

| Metric | Value (Example Range) | Description |
|---|---|---|
| Generation Rate | 1000-5000 molecules/sec | Speed of candidate generation (hardware dependent). |
| Validity Rate | >85% (SMILES VAE) to ~100% (Graph-Based) | Percentage of generated structures that are chemically valid. |
| Novelty | 60-95% | Percentage of valid, unique molecules not in training set. |
| Hit Rate (Experimental) | 5-20% | Percentage of synthesized molecules predicted to be active that show confirmed activity in vitro. |
| Property Improvement Success | ~40-60% | Percentage of designed molecules showing ≥2x improvement in target property (e.g., solubility). |
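The validity and novelty rows of the table, together with the uniqueness measure they rely on, can be computed as in the sketch below. It assumes the generated and training molecules are given as canonical strings so that identical molecules compare equal. The validity check is passed in as a callable because it would normally come from a cheminformatics toolkit, and the toy check used here is only a stand-in.

```python
def generation_metrics(generated, training_set, is_valid):
    """Compute validity, uniqueness, and novelty for a batch of molecules.

    validity   = valid / generated
    uniqueness = distinct valid / valid
    novelty    = distinct valid not in training set / distinct valid
    """
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }


def toy_is_valid(s):
    """Stand-in validity check: balanced parentheses only (not real chemistry)."""
    return s.count("(") == s.count(")")


generated = ["CCO", "CCO", "c1ccccc1", "C(("]
training = {"CCO"}
metrics = generation_metrics(generated, training, toy_is_valid)
```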

Visualization: Workflows and Pathways

AI Scaffold Hopping Protocol Workflow

Logical Framework of AI-Driven Scaffold Exploration

The Scientist's Toolkit

Table 3: Key Research Reagent & Software Solutions

| Item/Category | Specific Example(s) | Function/Explanation |
|---|---|---|
| Cheminformatics Toolkit | RDKit, OpenBabel | Open-source libraries for molecule manipulation, fingerprint generation, and descriptor calculation. Essential for preprocessing and basic modeling. |
| Deep Learning Framework | PyTorch, TensorFlow | Flexible platforms for building and training custom neural network models, including GNNs and VAEs. |
| Specialized ML for Chemistry | DeepChem, DGL-LifeSci | Libraries built on top of PyTorch/TF that provide pre-built layers and models for molecular machine learning, accelerating development. |
| Generative Chemistry Platform | REINVENT, MolDQN, JT-VAE | Pre-configured frameworks for de novo molecular generation using RL, VAEs, or other generative approaches. |
| Property Prediction Service | SwissADME, pkCSM | Web servers or standalone tools for rapid, predictive assessment of key ADMET properties. Useful for filtering. |
| Synthetic Accessibility | SA Score, RAscore, AiZynthFinder | Algorithms and tools to estimate how easily a molecule can be synthesized, crucial for prioritizing realistic candidates. |
| Patent Database | SureChEMBL, CAS SciFinder | Searchable databases of chemical patents to perform freedom-to-operate checks and avoid patented space. |
| High-Performance Computing | NVIDIA GPUs (V100, A100), Cloud (AWS, GCP) | Necessary computational power for training large models and screening ultra-large virtual libraries in reasonable time. |

Application Notes

Scaffold hopping aims to discover novel chemical cores with conserved biological activity, a cornerstone of modern medicinal chemistry for overcoming poor ADMET properties or intellectual property constraints. Traditional methods are resource-intensive and rely heavily on empirical knowledge. The integration of Artificial Intelligence (AI), specifically through advanced molecular representation learning, provides a transformative protocol by enabling systematic, data-driven exploration of the vast and complex chemical space.

AI-based molecular representations (e.g., from Graph Neural Networks, GNNs, or transformer-based language models) encode molecules not as simple fingerprints but as rich, continuous vectors in a latent space. Within this learned space, molecules with similar biological activity cluster together, regardless of their apparent 2D structural similarity. This allows for the identification of "activity cliffs" and the prediction of bioisosteric replacements that would be non-intuitive to a human chemist. The core strategic advantages are:

- Efficiency: AI models can screen billions of virtual compounds in silico, prioritizing synthetically accessible candidates with high predicted activity, drastically reducing the cycle time from hypothesis to lead.

- Creativity: By navigating the continuous molecular latent space, AI can propose truly novel scaffolds that lie between discrete known active structures, generating innovative chemotypes beyond the bias of existing chemical databases.

- Multi-Objective Optimization: Models can be trained to simultaneously predict activity, selectivity, and key ADMET parameters, enabling a holistic approach to scaffold design from the outset.

The following protocol and supporting data detail the implementation of an AI-driven scaffold hopping workflow.

Table 1: Performance Comparison of AI Models vs. Traditional Methods in Scaffold Hopping Benchmarks (e.g., DUD-E, DEKOIS).

| Method / Model | Target (e.g., Kinase) | Enrichment Factor (EF₁%) | Scaffold Recovery Rate (%) | Novelty Score (Tanimoto <0.3) |

|---|---|---|---|---|

| Traditional 2D Fingerprint (ECFP4) | EGFR | 12.4 | 35.2 | 15.7 |

| Traditional Pharmacophore | EGFR | 18.7 | 41.5 | 22.3 |

| AI: GNN (Directed Message Passing) | EGFR | 32.9 | 68.8 | 45.6 |

| AI: SMILES Transformer | EGFR | 28.5 | 62.1 | 51.3 |

| AI: 3D-Convolutional Network | GPCR (A₂A) | 27.3 | 58.4 | 40.2 |
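The enrichment factor reported in the table above can be computed from a ranked screening list. The following is a minimal sketch, assuming the common definition EF = (fraction of actives in the top x%) / (fraction of actives in the whole library); the function name and the rounding of the cutoff are illustrative choices, not part of any specific benchmark's tooling.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """Enrichment factor at a given fraction of a ranked library.

    scores: predicted scores, higher means more likely active.
    labels: 1 for a known active, 0 for an inactive or decoy.
    """
    if len(scores) != len(labels) or not scores:
        raise ValueError("scores and labels must be non-empty and of equal length")
    n_total = len(scores)
    n_actives = sum(labels)
    if n_actives == 0:
        raise ValueError("at least one active is required")
    # Size of the top-ranked slice (assumption: round to nearest, minimum 1).
    n_top = max(1, int(round(n_total * fraction)))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits_top = sum(label for _, label in ranked[:n_top])
    return (hits_top / n_top) / (n_actives / n_total)
```

An EF₁% of 10 on a library with 10% actives means every compound in the top 1% is active; values should always be read against the maximum achievable for the dataset's active ratio.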

Table 2: Experimental Validation of AI-Predicted Scaffold Hops.

| Original Scaffold | AI-Proposed Novel Scaffold | Predicted pIC₅₀ | Experimental pIC₅₀ | Synthetic Accessibility Score (SAscore) |

|---|---|---|---|---|

| Imidazopyridine (Known EGFR inhibitor) | Pyrrolotriazine | 8.2 | 7.9 | 2.8 |

| Benzamide | Thiazolylcarbamate | 7.8 | 7.5 | 3.1 |

| Indole | Azaindole-5-carboxamide | 6.9 | 6.5 | 2.5 |

Experimental Protocols

Protocol 1: Constructing an AI-Based Molecular Representation Model for Scaffold Hopping.

Objective: To train a Graph Neural Network (GNN) to generate activity-informed molecular representations.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Data Curation: Assemble a dataset of known actives and confirmed inactives/decoys for a specific target (e.g., from ChEMBL, PubChem). Apply standard curation: remove duplicates, normalize structures, and check for accurate activity annotations.

- Graph Representation: Convert each molecule into a graph object where atoms are nodes (featurized with atomic number, degree, hybridization, etc.) and bonds are edges (featurized with bond type, conjugation).

- Model Architecture: Implement a Message Passing Neural Network (MPNN). The model consists of:

- Message Passing Layers (3-5): Each layer aggregates information from a node's neighbors.

- Global Readout Layer: Aggregates node features into a fixed-size molecular graph representation (a continuous vector of 256-512 dimensions).

- Prediction Head: A fully connected neural network that maps the graph representation to a predicted activity value (pIC₅₀/Ki).

- Training: Split data into training (70%), validation (15%), and test (15%) sets. Train the model to minimize the mean squared error (MSE) between predicted and experimental activity values using the Adam optimizer.

- Representation Extraction: Use the trained model's global readout layer output as the AI-generated molecular descriptor for each compound.
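To make the message passing and readout steps concrete, here is a deliberately simplified sketch in plain Python. It uses sum aggregation with no learned weights or nonlinearities, which a real MPNN would include, and the toy two-feature atom encoding is an assumption for illustration only.

```python
def message_passing_step(node_feats, neighbors):
    """One round of sum aggregation: each atom adds its neighbours' features to its own."""
    updated = []
    for v, h_v in enumerate(node_feats):
        agg = list(h_v)
        for u in neighbors[v]:
            agg = [a + b for a, b in zip(agg, node_feats[u])]
        updated.append(agg)
    return updated


def readout(node_feats):
    """Sum-pool all atom vectors into one fixed-size molecule vector."""
    dim = len(node_feats[0])
    return [sum(h[i] for h in node_feats) for i in range(dim)]


def embed(node_feats, neighbors, n_steps=3):
    """Run n_steps of message passing, then pool into a graph-level vector."""
    h = node_feats
    for _ in range(n_steps):
        h = message_passing_step(h, neighbors)
    return readout(h)


# Toy heavy-atom graph C-C-O with hypothetical one-hot features [is_C, is_O].
feats = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
bonds = {0: [1], 1: [0, 2], 2: [1]}
vector = embed(feats, bonds, n_steps=1)
```

Because both aggregation and readout are sums, the resulting vector does not depend on the order in which atoms are listed, which is the property that makes graph readouts usable as molecular descriptors.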

Protocol 2: Latent Space Navigation for Novel Scaffold Generation.

Objective: To utilize the trained model's latent space to generate novel active scaffolds.

Methodology:

- Mapping the Space: Encode all known actives and a large virtual library (e.g., Enamine REAL Space subset) into the AI-generated descriptor space. Use dimensionality reduction (t-SNE, UMAP) for visualization.

- Identifying Hop Regions: Define "activity islands" as dense clusters of known actives. Identify regions in latent space near but not overlapping with these islands.

- Generative Hop: Employ a latent space generative model (e.g., Variational Autoencoder, VAE):

- Encode known actives into the VAE's latent space.

- Perform interpolation or apply a small stochastic perturbation (noise) to the latent vector of a known active.

- Decode the new latent vector back into a molecular structure (e.g., via a SMILES decoder).

- Evaluation & Prioritization: Filter generated structures for drug-likeness (Lipinski's Rule of 5), synthetic accessibility (SAscore), and novelty. Re-score the top candidates using the original predictive model and molecular docking.
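The interpolation and perturbation operations in the generative hop step can be sketched independently of any particular VAE. The snippet below operates on plain lists of floats; the encoder and decoder are assumed to exist elsewhere, and the default noise scale is an arbitrary illustrative value.

```python
import random


def interpolate(z_a, z_b, n_points=5):
    """Return n_points latent vectors evenly spaced from z_a to z_b (inclusive)."""
    if n_points < 2:
        raise ValueError("n_points must be at least 2")
    path = []
    for i in range(n_points):
        t = i / (n_points - 1)
        path.append([(1 - t) * a + t * b for a, b in zip(z_a, z_b)])
    return path


def perturb(z, sigma=0.1, rng=None):
    """Add independent Gaussian noise with standard deviation sigma to each dimension."""
    rng = rng or random.Random()
    return [x + rng.gauss(0.0, sigma) for x in z]


# Usage: midpoint between two hypothetical latent vectors of known actives.
midpoint = interpolate([0.0, 0.0], [1.0, 2.0], n_points=3)[1]
```

Each vector produced this way would then be passed to the decoder; passing a seeded `random.Random` instance makes a perturbation run reproducible.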

Visualizations

AI-Driven Scaffold Hop Workflow

AI Model Architecture for Molecular Representation

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in AI-Driven Scaffold Hopping |

|---|---|

| RDKit (Open-Source) | Core cheminformatics toolkit for molecular standardization, fingerprint generation, descriptor calculation, and SMILES handling. |

| PyTorch / TensorFlow | Deep learning frameworks for building and training Graph Neural Network (GNN) and other AI models. |

| PyTorch Geometric (PyG) / DGL | Specialized libraries for implementing graph neural networks on molecular structures. |

| ChEMBL / PubChem | Primary sources of public-domain bioactivity data for training and benchmarking predictive models. |

| Enamine REAL / ZINC | Commercial and public virtual compound libraries used for in silico screening and generative model training. |

| SAscore (Synthetic Accessibility) | Algorithm to score the ease of synthesis for AI-generated molecules, critical for triage. |

| AutoDock Vina / Schrödinger Suite | Molecular docking software for secondary validation of AI-prioritized scaffolds. |

| UMAP/t-SNE | Dimensionality reduction algorithms for visualizing the AI-generated molecular latent space. |

| Jupyter / Colab Notebooks | Interactive environments for prototyping, data analysis, and model visualization. |

Step-by-Step Protocol: Building and Executing Your AI Scaffold Hopping Pipeline

Within AI-driven scaffold hopping for drug discovery, the initial phase of data curation and preparation is critical. This stage establishes the quality and consistency of molecular representations that machine learning models will learn from. This protocol details the methodologies for standardizing chemical inputs and defining the query scaffold's representation, forming the foundation for subsequent AI-based molecular similarity and replacement predictions.

Data Acquisition and Source Standardization

The first step involves aggregating chemical data from disparate public and proprietary sources. Consistency in structure representation is paramount.

| Source | Type | Key Data Points | License/Use Case |

|---|---|---|---|

| ChEMBL (v33) | Public Database | ~2.3M compounds, bioactivity data (IC50, Ki, etc.), targets | Open (CC BY-SA 3.0) |

| PubChem | Public Database | ~111M substance descriptions, bioassays | Public Domain |

| PDB (Protein Data Bank) | Public Database | ~200K structures, ligand-protein co-crystals | Public Domain |

| Corporate ELN | Proprietary | Internal synthesis records, assay results | Proprietary |

Protocol 1.1: Molecular Standardization Workflow

Objective: Convert raw structural data (SMILES, SDF) into a canonical, standardized format.

- Input: Raw SMILES strings or SDF files from aggregated sources.

- Desalting: Remove counterions and salts using the RDKit `MolStandardize` module (`rdMolStandardize.Cleanup` to normalize the structure, followed by `rdMolStandardize.FragmentParent` to keep the parent fragment).

- Tautomer Enumeration & Canonicalization: Generate a canonical tautomer for each molecule using a defined rule set (e.g., the RDKit default tautomer enumerator) to ensure consistent representation of tautomeric forms.

- Stereochemistry: Explicitly define stereocenters; discard molecules with undefined stereochemistry critical for activity, or flag them.

- Neutralization: Optionally neutralize charges on carboxylic acids, amines, etc., using `rdMolStandardize.ChargeParent`.

- Output: A standardized SMILES string and an RDKit molecule object for each entry. Store in a unified database (e.g., PostgreSQL with RDKit cartridge).

Defining the Query Scaffold Representation

The query scaffold is the core structural motif to be "hopped." Its precise definition guides the entire search.

Key Scaffold Definition Metrics

| Definition Method | Description | Use Case | AI-Ready Output |

|---|---|---|---|

| Bemis-Murcko Framework | Extracts ring systems and linker atoms. | Broad scaffold identification. | Canonical SMILES of framework. |

| Structure-Activity Relationship (SAR) Table | Identifies core from conserved, high-activity regions. | When activity data is available. | Markush-style representation. |

| Pharmacophore Query | Defines spatial arrangement of chemical features. | Target-centric hopping. | Feature point definitions (e.g., HBA, HBD, hydrophobic). |

| 3D Shape/Electrostatic Query | Derived from bound co-crystal ligand conformation. | When 3D target structure is known. | Molecular shape volume and field maps. |

Protocol 2.1: Generating a Query Scaffold from a Lead Compound

Objective: Derive a formalized query from a known active compound for scaffold hopping.

- Input: A high-affinity lead compound's standardized SMILES.

- Framework Extraction: Apply the Bemis-Murcko algorithm (`rdkit.Chem.Scaffolds.MurckoScaffold.GetScaffoldForMol`) to generate the core ring-linker framework.

- SAR Analysis (if data exists): Cluster analogs by activity. Use a maximum common substructure (MCS) algorithm (`rdkit.Chem.rdFMCS.FindMCS`) on top-tier active compounds to refine the putative bioactive core.

- Feature Annotation: Annotate the derived scaffold with chemical features (hydrogen bond acceptors/donors, aromatic rings, hydrophobic centers) using RDKit's functional group filters.

- Output: A multi-representation query object containing: a) Core scaffold SMILES, b) Attachment point vectors (R-groups), c) Annotated pharmacophore features.

Data Curation for AI Model Training

Preparing the paired data for supervised or self-supervised learning of scaffold relationships.

| Dataset | # Scaffold Pairs | Split (Train/Val/Test) | Purpose | Key Reference |

|---|---|---|---|---|

| CHEMBL*SARfari Bioactive Pairs | ~45,000 | 80/10/10 | Train bioactivity-preserving hops | López-López et al., 2022 |

| CASF "Core Hop" Benchmark | 1,573 | Dedicated benchmark | Evaluate docking/scoring power | Su et al., 2019 |

| PDBbind General v2020 | 19,443 complexes | Custom | Train structure-aware models | Liu et al., 2015 |

Protocol 3.1: Creating a Scaffold Pair Dataset for Contrastive Learning

Objective: Generate positive (same activity, different scaffold) and negative pairs for training.

- Source Data: Extract compounds from ChEMBL with high-confidence activity (e.g., Ki < 100 nM) against a diverse set of targets.

- Scaffold Clustering: For each target, cluster active compounds by their Bemis-Murcko scaffolds using Butina clustering (ECFP4, Tanimoto cutoff 0.6).

- Positive Pair Generation: For each compound, pair it with another active compound from the same target but belonging to a different scaffold cluster.

- Negative Pair Generation: For each compound, pair it with an inactive or weakly active compound (Ki > 10,000 nM) from a different target, ensuring scaffold dissimilarity.

- Validation: Manually inspect a sample of pairs to confirm biological context.

- Output: A table of paired molecular representations (SMILES, ECFP, graphs) with a binary label (1=positive/0=negative).
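The pairing logic of Protocol 3.1 can be sketched as follows. This assumes each compound has already been annotated with a target, a scaffold-cluster label (unique across targets), and an active/inactive flag; the record layout and field names are hypothetical, and the clustering itself is not reproduced here.

```python
from itertools import combinations


def make_pairs(records):
    """Build (id_a, id_b, label) tuples: 1 = scaffold hop, 0 = negative pair."""
    actives = [r for r in records if r["active"]]
    inactives = [r for r in records if not r["active"]]
    pairs = []
    # Positive pairs: both active on the same target, different scaffold clusters.
    for a, b in combinations(actives, 2):
        if a["target"] == b["target"] and a["cluster"] != b["cluster"]:
            pairs.append((a["id"], b["id"], 1))
    # Negative pairs: active vs. inactive from a different target and cluster.
    for a in actives:
        for n in inactives:
            if a["target"] != n["target"] and a["cluster"] != n["cluster"]:
                pairs.append((a["id"], n["id"], 0))
    return pairs


# Hypothetical records for illustration only.
records = [
    {"id": "m1", "target": "EGFR", "cluster": "c1", "active": True},
    {"id": "m2", "target": "EGFR", "cluster": "c2", "active": True},
    {"id": "m3", "target": "EGFR", "cluster": "c1", "active": True},
    {"id": "m4", "target": "A2A", "cluster": "c9", "active": False},
]
pairs = make_pairs(records)
```

Exhaustive pairing grows quadratically with the number of actives per target, so in practice one would likely subsample pairs per compound rather than enumerate all of them.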

The Scientist's Toolkit: Research Reagent Solutions

| Item/Reagent | Function in Scaffold Hopping Pipeline | Example/Supplier |

|---|---|---|

| RDKit (Open-Source) | Core cheminformatics toolkit for standardization, scaffold fragmentation, fingerprint generation. | https://www.rdkit.org |

| DeepChem Library | Provides high-level APIs for building deep learning models on molecular data. | https://deepchem.io |

| OMEGA | Conformer generation and 3D shape alignment for 3D query definition. | OpenEye Scientific Software |

| ROCS | Aligns molecules by 3D shape and shared chemical features for pharmacophore generation. | OpenEye Scientific Software |

| Knime Analytics Platform | Visual workflow builder for data curation, integrating RDKit nodes and Python scripts. | https://www.knime.com |

| PostgreSQL + RDKit Cartridge | Scalable chemical-aware database for storing and querying standardized compounds. | https://github.com/rdkit/rdkit |

Visualizations

Title: Overall Data Curation and Query Definition Workflow

Diagram 2: Molecular Standardization Protocol

Title: Stepwise Molecular Standardization Process

Diagram 3: Query Scaffold Definition Pathways

Title: Multiple Methods to Define a Query Scaffold

This document outlines the application notes and experimental protocols for Phase 2 of the broader thesis: "Protocol for Scaffold Hopping using AI-based Molecular Representation." The objective of this phase is to rigorously evaluate and select the optimal model architecture for generating continuous, information-rich molecular representations that effectively encode scaffold-level features, thereby enabling high-fidelity scaffold hopping in virtual screening campaigns.

Three primary AI-based representation learning paradigms are compared: Graph Neural Networks (GNNs), Chemical Language Models (CLMs), and Variational Autoencoders (VAEs). The evaluation focuses on their ability to generate a smooth, structured latent space where molecules with similar bioactivity but distinct core scaffolds (scaffold hops) are proximally embedded.

Key Evaluation Metrics

Performance is quantified using the following metrics, summarized in Table 1:

Table 1: Quantitative Evaluation Metrics for Model Selection

| Metric Category | Specific Metric | Description | Target for Scaffold Hopping |

|---|---|---|---|

| Reconstruction | Reconstruction Accuracy (RA) | Ability to accurately reconstruct input SMILES or graph from latent vector. | High accuracy ensures the latent space retains critical structural information. |

| Latent Space Quality | Kullback-Leibler Divergence (KLD) | Measures how closely the latent distribution matches a prior (e.g., normal distribution). | Balanced value; too high indicates under-regularization, too low indicates posterior collapse. |

| Latent Space Quality | Latent Space Smoothness (LSS) | Measured by interpolating between points and checking the chemical validity of decoded intermediates. | High smoothness enables exploration and generation of novel, valid intermediates. |

| Scaffold-Hopping Performance | Scaffold Recovery@k (SR@k) | Primary metric. For a query molecule, % of its k nearest neighbors in latent space that share its biological activity but not its Bemis-Murcko scaffold. | Higher is better. Directly measures scaffold-hop detection capability. |

| Scaffold-Hopping Performance | Property Prediction RMSE | Root Mean Square Error on predicting key molecular properties (e.g., LogP, QED) from the latent vector. | Lower is better. Ensures latent space encodes relevant physicochemical properties. |

| Computational Efficiency | Training Time (hrs/epoch) | Time required to process the training dataset once. | Lower is better for iterative development. |

| Computational Efficiency | Inference Latency (ms/molecule) | Time to encode a single molecule into its latent representation. | Lower is better for high-throughput virtual screening. |

Detailed Experimental Protocols

Data Curation and Preparation Protocol

Objective: Prepare a standardized, activity-labeled dataset for consistent model training and evaluation.

Materials:

- Source: ChEMBL (latest version, ≥ ChEMBL33).

- Filtering Criteria: Molecules with documented IC50/EC50/Ki ≤ 1 µM against a diverse set of high-quality protein targets (pChEMBL value ≥ 6).

- Scaffold Definition: Apply the Bemis-Murcko algorithm (RDKit) to extract core scaffolds.

- Dataset Splits: Split by scaffold (not randomly) to ensure training and test sets contain distinct core structures. This rigorously tests generalization for scaffold hopping.

- Training Set: 70% of unique scaffolds and their associated molecules.

- Validation Set: 15% of unique scaffolds.

- Test Set: 15% of unique scaffolds.

- Final Format: CSV file containing: `ChEMBL_ID,SMILES,Canonical_SMILES,Scaffold_SMILES,Target_ID,pChEMBL_Value`.

Procedure:

- Query ChEMBL via its web API or downloadable dump for bioactivity data.

- Apply standard RDKit sanitization and desalting to `SMILES`.

- Generate canonical SMILES and Bemis-Murcko scaffolds using RDKit.

- Apply the scaffold-based splitting algorithm (e.g., `GroupShuffleSplit` in scikit-learn, passing the `Scaffold_SMILES` column as `groups`).

- For the test set, create a list of query molecules and their true "scaffold-hop" neighbors (active molecules with different scaffolds).
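The idea behind a scaffold-based split is that all molecules sharing a scaffold land in the same partition. The sketch below shows this with the standard library only, as a stand-in for a group-aware splitter; the row layout (dicts keyed by `Scaffold_SMILES`) and the rounding of partition sizes are assumptions for illustration.

```python
import random
from collections import defaultdict


def scaffold_split(rows, frac_train=0.7, frac_val=0.15, seed=0):
    """Split rows so that no scaffold appears in more than one partition."""
    groups = defaultdict(list)
    for row in rows:
        groups[row["Scaffold_SMILES"]].append(row)
    # Shuffle unique scaffolds (sorted first so the result depends only on seed).
    scaffolds = sorted(groups)
    random.Random(seed).shuffle(scaffolds)
    n_train = int(round(frac_train * len(scaffolds)))
    n_val = int(round(frac_val * len(scaffolds)))
    parts = {
        "train": scaffolds[:n_train],
        "val": scaffolds[n_train:n_train + n_val],
        "test": scaffolds[n_train + n_val:],
    }
    return {name: [r for s in scafs for r in groups[s]] for name, scafs in parts.items()}
```

Note that the fractions apply to unique scaffolds, as in the protocol above, so the number of molecules per partition can deviate from 70/15/15 when scaffold populations are uneven.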

Model Training & Optimization Protocol

Protocol 2.2.1: Graph Neural Network (GNN) Training

- Model Architecture: Directed Message Passing Neural Network (D-MPNN) with a graph-level readout function.

- Node/Edge Features: Atom type, degree, hybridization, formal charge, aromaticity. Bond type, conjugation, stereo.

- Training Objective: Supervised learning for a dual objective: (a) Property Prediction (regression on pChEMBL, QED; classification on SA), and (b) Contrastive Loss (maximizing similarity between latent vectors of molecules active on the same target).

- Hyperparameters (to be optimized via Bayesian Optimization):

- Hidden Size: [128, 256, 512]

- Depth (Number of message passing steps): [3, 4, 5, 6]

- Learning Rate: [1e-4, 5e-4, 1e-3]

- Contrastive Loss Margin: [0.5, 1.0, 2.0]

- Output: A fixed-size latent vector (e.g., 256 dimensions) for each input molecular graph.

Protocol 2.2.2: Chemical Language Model (CLM) Training

- Model Architecture: Transformer-based encoder (e.g., a lightweight BERT) or a decoder-only model (GPT).

- Tokenization: SMILES-based byte-pair encoding (BPE) or atom-level tokenization.

- Training Objective: Masked Language Modeling (MLM) for encoder models. For decoder models, next-token prediction. An optional property prediction head can be added for multi-task learning.

- Hyperparameters:

- Embedding Dimension: [256, 512]

- Number of Layers: [4, 6, 8]

- Attention Heads: [8, 12]

- Masking Probability (MLM): [0.15, 0.20]

- Latent Representation: Use the pooled output from the [CLS] token (encoder) or the final hidden state of a special token as the molecular representation.

Protocol 2.2.3: Variational Autoencoder (VAE) Training

- Model Architecture: Standard VAE with an encoder and decoder.

- Encoder: GNN (as in 2.2.1) or RNN processing SMILES.

- Decoder: RNN (for SMILES generation) or Graph Generation Network.

- Training Objective: Maximize the Evidence Lower Bound (ELBO), which is equivalent to minimizing Reconstruction Loss (Cross-Entropy) + β * KL Divergence Loss, where β follows a cyclical annealing schedule to avoid posterior collapse.

- Hyperparameters:

- Latent Dimension: [128, 256]

- β (KL weight) Schedule: [Cyclical (0 to 0.5), Constant (0.01, 0.1)]

- Encoder/Decoder Architecture: Choice between GNN and RNN.

- Output: The mean vector (μ) of the latent distribution serves as the molecular representation.
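A cyclical β schedule of the kind listed in the hyperparameters can be written in a few lines. This is a sketch under stated assumptions: a linear ramp from 0 to `beta_max` over the first half of each cycle followed by a plateau, with the cycle length and ramp fraction chosen by the user; other ramp shapes are equally plausible.

```python
def cyclical_beta(step, cycle_length, beta_max=0.5, ramp_fraction=0.5):
    """KL weight at a training step: ramp linearly to beta_max, hold, then restart."""
    if cycle_length <= 0 or not 0 < ramp_fraction <= 1:
        raise ValueError("cycle_length must be positive and ramp_fraction in (0, 1]")
    position = (step % cycle_length) / cycle_length  # progress within the cycle, in [0, 1)
    return beta_max * min(1.0, position / ramp_fraction)


def vae_loss(reconstruction_loss, kl_divergence, beta):
    """Quantity to minimize: reconstruction term plus beta-weighted KL term."""
    return reconstruction_loss + beta * kl_divergence


# Usage: KL weights over two cycles of 100 steps each.
schedule = [cyclical_beta(s, cycle_length=100) for s in range(200)]
```

Resetting β to zero at the start of each cycle periodically lets the decoder rely on the latent code again, which is the usual motivation for this schedule.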

Evaluation Protocol for Scaffold Recovery

Objective: Quantify SR@k for each model on the held-out test set.

Procedure:

- Encode: Use the trained model to encode all molecules in the test set into latent vectors. Z-score normalize the latent space.

- Query: For each query molecule Q in the test set:

- Identify its k nearest neighbors (NN) in latent space using cosine similarity.

- Determine the bioactive target of Q.

- Score: For each Q, calculate:

- SR@k = (Number of NN that are active on the same target as Q AND have a different Bemis-Murcko scaffold from Q) / k.

- Report: Compute the mean SR@k (e.g., for k=10, 50, 100) across all query molecules in the test set.
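The SR@k calculation above can be sketched with a brute-force nearest-neighbour search. The example below uses plain Python lists, omits the z-score normalization step for brevity, and assumes each test molecule carries a single target label; for large test sets an approximate search library would replace the inner loop.

```python
import math


def cosine(u, v):
    """Cosine similarity between two vectors (0.0 if either has zero norm)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def scaffold_recovery_at_k(query_idx, vectors, targets, scaffolds, k):
    """Fraction of the k nearest neighbours that share the target but not the scaffold."""
    sims = [(cosine(vectors[query_idx], vec), i)
            for i, vec in enumerate(vectors) if i != query_idx]
    sims.sort(key=lambda pair: pair[0], reverse=True)
    neighbours = [i for _, i in sims[:k]]
    hops = sum(1 for i in neighbours
               if targets[i] == targets[query_idx]
               and scaffolds[i] != scaffolds[query_idx])
    return hops / k


def mean_sr_at_k(vectors, targets, scaffolds, k):
    """Average SR@k over every molecule used as a query."""
    scores = [scaffold_recovery_at_k(q, vectors, targets, scaffolds, k)
              for q in range(len(vectors))]
    return sum(scores) / len(scores)
```

Since the denominator is fixed at k, queries with fewer than k true scaffold-hop neighbours in the test set can never reach 1.0, which is worth keeping in mind when comparing values across k.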

Visualized Workflows and Relationships

Title: Phase 2 Model Selection Workflow

Title: Ideal Scaffold Hop Geometry in Latent Space

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Computational Tools and Libraries for Model Selection

| Tool/Reagent | Category | Function in Protocol | Key Parameters/Notes |

|---|---|---|---|

| RDKit | Cheminformatics | Data preparation (sanitization, canonicalization, scaffold extraction), molecular feature generation, and visualization. | Use GetSymmSSSR for rings, MurckoScaffold module. |

| PyTorch / PyTorch Geometric | Deep Learning Framework | Core library for building, training, and evaluating GNN, CLM, and VAE models. Provides GPU acceleration. | Use DataLoader for batching, MessagePassing base class for GNNs. |

| Transformers Library (Hugging Face) | NLP/CLM Framework | Provides pre-trained transformer architectures and tokenizers for efficient CLM implementation and training. | AutoModelForMaskedLM, BertTokenizer with custom vocab. |

| scikit-learn | Machine Learning Utilities | Used for data splitting (GroupShuffleSplit), standardization, and basic model evaluation metrics. | Critical for scaffold-based split implementation. |

| Optuna / Ray Tune | Hyperparameter Optimization | Automated search for optimal model hyperparameters using Bayesian or population-based algorithms. | Define search space for learning rate, hidden dims, etc. |

| FAISS (Facebook AI Similarity Search) | Similarity Search | Efficiently computes k-nearest neighbors in high-dimensional latent spaces for the SR@k evaluation. | Enables fast search on GPU for large test sets. |

| Jupyter Lab / Notebook | Development Environment | Interactive environment for prototyping, data analysis, and result visualization. | Use ipywidgets for interactive model probing. |

| TensorBoard / Weights & Biases | Experiment Tracking | Logs training metrics, hyperparameters, and latent space visualizations (e.g., via PCA/UMAP projections). | Essential for comparing runs and monitoring for overfitting. |

Application Notes

In the context of AI-driven scaffold hopping for molecular discovery, the integration of generative models with structured search algorithms forms the core "Hopping Engine." This engine aims to generate novel, synthetically accessible molecular structures with high predicted affinity for a target protein while exploring distinct chemical scaffolds from a known active compound. This phase moves beyond quantitative structure-activity relationship (QSAR) prediction into de novo design.

Core Architecture: The engine operates on a cycle of generation and evaluation. A generative model (e.g., a GPT-style model trained on SMILES strings or a Graph Neural Network-based RNN) proposes candidate molecules. These candidates are filtered by a search algorithm (e.g., Monte Carlo Tree Search, genetic algorithm) guided by a multi-objective reward function. The function typically includes:

- Predicted Bioactivity: From a separately trained QSAR model (Phase 2).

- Synthetic Accessibility (SA): A score estimating ease of synthesis.

- Scaffold Diversity: A measure of topological dissimilarity from the input reference scaffold(s), often quantified using the Bemis-Murcko framework or molecular fingerprint distance (e.g., Tanimoto on ECFP4).

- Drug-likeness: Adherence to rules like Lipinski's Rule of Five.

Recent benchmarks (2023-2024) indicate that hybrid models combining exploration-focused search with exploitation-focused generative AI yield a higher rate of valid, unique, and potent proposals compared to purely generative approaches.

Quantitative Performance Benchmarks:

Table 1: Comparative Performance of Generative-Search Hybrids in Scaffold Hopping (Virtual Benchmark on DUD-E Dataset)

| Model Architecture | Success Rate* (%) | Novelty† | Synthetic Accessibility Score (SAscore) | Unique Valid Molecules / 1000 steps |

|---|---|---|---|---|

| GPT-2 (SMILES) + MCTS | 24.5 | 0.91 | 2.8 | 712 |

| MolGPT + Genetic Algorithm | 28.1 | 0.89 | 3.1 | 845 |

| GraphRNN + Beam Search | 22.3 | 0.95 | 3.4 | 598 |

| REINVENT 3.0 (RL-based) | 31.7 | 0.82 | 2.5 | 932 |

*Success Rate: % of generated molecules with predicted pIC50 > 7.0 and scaffold similarity (Tanimoto) < 0.3 to any known active.

†Novelty: Proportion of generated scaffolds not found in the training data.

Experimental Protocols

Protocol 3.1: Training a SMILES-Based Generative Model (e.g., MolGPT)

Objective: To train a transformer-decoder model capable of generating valid SMILES strings from a learned distribution of drug-like molecules.

Materials:

- Dataset: Pre-processed SMILES strings from ChEMBL (e.g., ~1.9M drug-like molecules, standardized, canonicalized).

- Software: Python 3.9+, PyTorch 2.0, Hugging Face Transformers library, RDKit.

- Hardware: NVIDIA GPU (e.g., A100 40GB) recommended for training.

Procedure:

- Tokenization: Convert each character in the SMILES string into a token. Add special tokens `[START]`, `[END]`, and `[PAD]`.

- Data Splitting: Split the dataset into training (80%), validation (10%), and test (10%) sets.

- Model Initialization: Initialize a GPT-2 style model with 8 layers, 8 attention heads, and an embedding dimension of 256 (the embedding dimension must be divisible by the number of attention heads).

- Training Loop:

- Use a batch size of 128.

- Use the AdamW optimizer with a learning rate of 5e-4 and weight decay of 0.01.

- Train for 50 epochs using cross-entropy loss on the next-token prediction task.

- Validate after each epoch; stop if validation loss plateaus for 10 epochs.

- Validation: Evaluate the model's ability to generate valid, unique SMILES strings from a random seed. Target >95% validity among the sampled molecules.
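The tokenization step of this protocol can be illustrated with a small character-level tokenizer. This is a minimal sketch: the special-token ordering and padding length are assumptions, and a strictly per-character scheme splits two-letter atoms such as Cl and Br into two tokens, which some implementations avoid with a regular-expression tokenizer.

```python
SPECIAL_TOKENS = ["[PAD]", "[START]", "[END]"]


def build_vocab(smiles_list):
    """Map special tokens and every character seen in the corpus to integer ids."""
    chars = sorted({ch for smi in smiles_list for ch in smi})
    return {tok: idx for idx, tok in enumerate(SPECIAL_TOKENS + chars)}


def encode(smiles, vocab, max_len):
    """Wrap a SMILES string in [START]/[END], pad to max_len, and convert to ids."""
    tokens = ["[START]"] + list(smiles) + ["[END]"]
    if len(tokens) > max_len:
        raise ValueError("SMILES is longer than max_len")
    tokens += ["[PAD]"] * (max_len - len(tokens))
    return [vocab[t] for t in tokens]


def decode(ids, vocab):
    """Convert ids back to a SMILES string, stopping at [END]."""
    inverse = {idx: tok for tok, idx in vocab.items()}
    out = []
    for i in ids:
        tok = inverse[i]
        if tok == "[END]":
            break
        if tok not in SPECIAL_TOKENS:
            out.append(tok)
    return "".join(out)


vocab = build_vocab(["CCO", "c1ccccc1"])
ids = encode("CCO", vocab, max_len=8)
```

Placing `[PAD]` at index 0 is a convention that makes it easy to mask padded positions in the loss.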

Protocol 3.2: Scaffold-Hopping Generation Cycle with Monte Carlo Tree Search (MCTS)

Objective: To generate novel, active scaffolds by guiding a generative model with a reward-driven search.

Materials:

- Pre-trained Generative Model: From Protocol 3.1.

- Pre-trained Predictor Models: QSAR model (pIC50 predictor) and SAscore predictor from Phase 2.

- Reference Molecule: Known active compound (e.g., Imatinib for BCR-ABL).

- Software: Custom Python MCTS framework, RDKit.

Procedure:

- Initialization: Define the state `s` as the current partial or complete SMILES string. The root state is `[START]`.

- Selection: From the root, traverse the tree by selecting child nodes with the highest Upper Confidence Bound (UCB) score until a leaf node (incomplete SMILES) is reached.

- Expansion & Simulation:

- Expansion: At the leaf node, use the generative model to predict the probability distribution for the next token. Expand the tree by adding the top-k most probable tokens as new child nodes.

- Simulation (Rollout): For each new child node, complete the SMILES rapidly by sampling from the generative model's probabilities until `[END]` is reached.

- Reward Calculation: For the completed SMILES from the rollout:

- Convert to molecule object; if invalid, reward = 0.

- Calculate rewards: `R_bio = sigmoid(pIC50_pred - 6.5)`, `R_sa = (10 - SAscore)/10`, `R_div = 1 - Tanimoto(ECFP4(ref), ECFP4(cand))`.

- Total Reward: `R_total = 0.6*R_bio + 0.2*R_sa + 0.2*R_div`.

- Backpropagation: Propagate `R_total` back up the traversed path, updating the visit count and cumulative reward for each node.

- Iteration: Repeat steps 2-5 for 10,000 iterations.

- Output: Select the top 100 molecules with the highest estimated reward (average reward from visits) from the tree for downstream analysis.
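The reward and selection formulas in this protocol can be expressed compactly. The sketch below implements the stated reward weights directly; the selection score uses the standard UCB1 form with an exploration constant of about √2, which is an assumption since the protocol does not specify the variant, and fingerprints are represented as sets of on-bit indices rather than real ECFP4 output.

```python
import math


def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


def total_reward(pic50_pred, sa_score, ref_bits, cand_bits, valid=True):
    """R_total = 0.6*R_bio + 0.2*R_sa + 0.2*R_div; invalid molecules score 0."""
    if not valid:
        return 0.0
    r_bio = 1.0 / (1.0 + math.exp(-(pic50_pred - 6.5)))  # sigmoid centred at pIC50 6.5
    r_sa = (10.0 - sa_score) / 10.0
    r_div = 1.0 - tanimoto(ref_bits, cand_bits)
    return 0.6 * r_bio + 0.2 * r_sa + 0.2 * r_div


def ucb_score(total_value, visits, parent_visits, c=1.414):
    """UCB1 (assumed variant): mean reward plus an exploration bonus."""
    if visits == 0:
        return math.inf  # unvisited children are always tried first
    return total_value / visits + c * math.sqrt(math.log(parent_visits) / visits)


# Usage with hypothetical predictor outputs and toy fingerprints.
reward = total_reward(6.5, 2.0, {1, 2, 3}, {3, 4, 5})
```

Because `R_div` rewards dissimilarity while `R_bio` usually favours analogues of known actives, the 0.6/0.2/0.2 weighting effectively sets how far from the reference the search is willing to drift.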

Protocol 3.3: In Silico Validation of Generated Hits

Objective: To prioritize and validate top-generated scaffolds computationally.

Procedure:

- Docking: Perform molecular docking of the top 100 generated structures into the target protein's active site (e.g., using Glide SP or AutoDock Vina). Retain poses with docking scores better than the reference compound.

- ADMET Prediction: Run the docked hits through a panel of ADMET predictors (e.g., using QikProp or ADMETlab 3.0). Filter for acceptable ranges of permeability, metabolic stability, and low toxicity risk.

- Synthetic Route Proposal: For the final 10-20 candidates, use retrosynthesis planning software (e.g., AiZynthFinder) to propose 3-5 step synthetic routes. Prioritize molecules with high-confidence routes.

Diagrams

Hopping Engine: Generative-Search Cycle

MCTS Steps for Scaffold Hopping

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions & Computational Tools

| Item/Tool Name | Category | Function in Scaffold Hopping |

|---|---|---|

| RDKit | Cheminformatics Library | Open-source toolkit for molecule manipulation, fingerprint generation, scaffold decomposition, and descriptor calculation. Essential for preprocessing and analysis. |

| PyTorch / TensorFlow | Deep Learning Framework | Provides the foundation for building, training, and deploying generative models (GPT, RNN) and predictor networks. |

| ChEMBL Database | Chemical Database | A curated repository of bioactive molecules with assay data. Primary source for training generative and predictive models. |

| ZINC Database | Chemical Database | A library of commercially available, synthetically accessible compounds. Used for training and as a reference for synthetic feasibility. |

| Glide (Schrödinger) / AutoDock Vina | Molecular Docking Software | Evaluates the binding pose and affinity of generated molecules against the 3D structure of the target protein for virtual validation. |

| AiZynthFinder | Retrosynthesis Software | Uses a trained neural network to propose feasible synthetic routes for generated molecules, assessing practical accessibility. |

| SAscore Predictor | Predictive Model | A model (often based on RDKit or an MLP) that estimates the synthetic accessibility of a molecule on a scale from 1 (easy) to 10 (hard). |

| ADMETlab 3.0 / QikProp | ADMET Prediction Tool | Provides in silico predictions of absorption, distribution, metabolism, excretion, and toxicity properties for early-stage prioritization. |

This phase represents the critical refinement step within the broader AI-driven scaffold hopping protocol. Following the generation of novel molecular scaffolds by deep generative models (e.g., VAEs, GANs), the output is a set of in silico candidates that require rigorous vetting. This document details the application notes and protocols for filtering these AI-generated structures based on fundamental physicochemical rules and computational estimates of synthetic accessibility (SA). This step ensures that proposed scaffolds are not only theoretically novel but also adhere to drug-like property space and possess realistic pathways for chemical synthesis, thereby bridging AI innovation with practical medicinal chemistry.

Core Filtering Rules & Quantitative Guidelines

The following tables summarize the standard and advanced filters applied. Thresholds are derived from consensus in modern medicinal chemistry literature and are adjustable based on specific project goals (e.g., CNS vs. peripheral targets).

Table 1: Fundamental Physicochemical Property Filters

| Property | Rule/Descriptor | Typical Threshold Range | Rationale & Tool (Calculation) |

|---|---|---|---|

| Molecular Weight (MW) | Rule of Five (Ro5) | ≤ 500 Da | Reduces risk of poor absorption/permeation. Directly computed from structure. |

| Hydrogen Bond Donors (HBD) | Ro5 | ≤ 5 | Counts OH and NH groups. Impacts permeability and solubility. |

| Hydrogen Bond Acceptors (HBA) | Ro5 | ≤ 10 | Counts N and O atoms. Affects desolvation energy and permeability. |

| Log P (Octanol-Water) | Ro5, Extended Range | -2.0 to 5.0 (Consensus: 0-3) | Measures lipophilicity; critical for ADME. Calculated via XLogP3 or Crippen’s method. |

| Rotatable Bonds (RB) | Veber Rule & Beyond Ro5 | ≤ 10 (Standard); ≤ 15 (Extended) | Indicator of molecular flexibility; influences oral bioavailability. |

| Polar Surface Area (tPSA) | Veber Rule | ≤ 140 Ų (Oral Bioavailability) | Predicts cell permeability. CNS projects typically apply a tighter cutoff (around ≤ 90 Ų) for blood-brain barrier penetration. |

| Stereocenters | Complexity/Synthesis | Typically ≤ 4 (Alert) | High counts complicate synthesis and purification. |

| Ring Systems | Complexity | Typically ≤ 6 (Alert) | Excessive fused/separate rings may reduce solubility. |

Table 2: Advanced & Functional Group Filters

| Filter Category | Specific Rule/Action | Protocol & Justification |

|---|---|---|

| Structural Alerts/PAINS | Remove compounds matching Pan-Assay Interference Compounds (PAINS) substructures. | Use validated SMARTS patterns (e.g., from RDKit or ChEMBL). Eliminates frequent hitters that interfere with assay readouts. |

| Unstable/Reactive Groups | Flag or remove moieties prone to hydrolysis, reactivity, or toxicity (e.g., acyl halides, Michael acceptors for non-covalent targets). | Apply custom SMARTS lists based on in-house and published medicinal chemistry rules. |

| Charge & pH Considerations | Filter for predominant neutral state at physiological pH (7.4) or desired charge profile. | Calculate major microspecies distribution using pKa prediction tools (e.g., ChemAxon, Epik). |

| Synthetic Accessibility (SA) Score | Accept compounds with SA Score ≤ 6.5 (scale: 1=easy, 10=hard). | Utilize RDKit’s SA Score (based on fragment contributions and complexity) or SYBA (classifier-based). |
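The charge-state filter in Table 2 can be approximated for a single ionizable group with the Henderson-Hasselbalch relation. The sketch below is illustrative only: it assumes a monoprotic group whose pKa has already been predicted by an external tool, and the function name and the example pKa values are hypothetical. Multiprotic molecules need a full microspecies calculation from a dedicated pKa package.

```python
def neutral_fraction(pka: float, ph: float = 7.4, kind: str = "acid") -> float:
    """Fraction of a monoprotic group present in its neutral form at the given pH."""
    if kind == "acid":
        # HA <-> A- + H+ ; the neutral species is HA
        return 1.0 / (1.0 + 10 ** (ph - pka))
    if kind == "base":
        # BH+ <-> B + H+ ; the neutral species is B
        return 1.0 / (1.0 + 10 ** (pka - ph))
    raise ValueError("kind must be 'acid' or 'base'")


# Hypothetical predicted pKa values, for illustration only.
acid_neutral = neutral_fraction(4.5, kind="acid")   # carboxylic-acid-like group
base_neutral = neutral_fraction(9.4, kind="base")   # aliphatic-amine-like group
print(f"acid neutral fraction at pH 7.4: {acid_neutral:.4f}")
print(f"base neutral fraction at pH 7.4: {base_neutral:.4f}")
```

A project could then keep compounds whose neutral fraction exceeds a chosen cutoff, or invert the test when a charged species is the desired profile.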

Experimental Protocols for Key Evaluations

Protocol 3.1: Batch Calculation of Physicochemical Properties

Objective: To computationally calculate the key descriptors in Table 1 for a library of AI-generated molecules (SMILES format).

Materials: See Scientist's Toolkit.

Procedure:

- Input Preparation: Load the generated molecular library as a `.smi` or `.csv` file containing one SMILES string per compound.

- Descriptor Calculation with RDKit:

a. Initialize a Python environment with RDKit installed.

b. For each SMILES string, create a molecule object (`Chem.MolFromSmiles`).

c. Calculate descriptors using the `Descriptors` module (e.g., `MolWt`, `NumHDonors`, `NumHAcceptors`, `NumRotatableBonds`).

d. Calculate LogP using `Crippen.MolLogP`.

e. Calculate Topological Polar Surface Area using `rdMolDescriptors.CalcTPSA`.

- Data Compilation: Store all calculated properties in a Pandas DataFrame.

- Application of Thresholds: Programmatically filter the DataFrame based on the thresholds defined in Table 1. Compounds passing all criteria proceed to SA analysis.
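The steps of Protocol 3.1 can be sketched as follows. This is a minimal illustration, not a definitive pipeline: it covers only the Table 1 properties that map directly onto single RDKit calls (the stereocenter and ring-system alerts are omitted), the helper names and the three-molecule library are hypothetical, and rows are held as plain dictionaries rather than a DataFrame.

```python
from rdkit import Chem
from rdkit.Chem import Crippen, Descriptors, rdMolDescriptors

# Upper limits taken from Table 1; adjust per project.
THRESHOLDS = {"MW": 500.0, "HBD": 5, "HBA": 10, "RotB": 10, "TPSA": 140.0}
LOGP_RANGE = (-2.0, 5.0)


def compute_descriptors(smiles):
    """Return the Table 1 descriptors as a dict, or None if the SMILES is invalid."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        return None
    return {
        "SMILES": smiles,
        "MW": Descriptors.MolWt(mol),
        "HBD": Descriptors.NumHDonors(mol),
        "HBA": Descriptors.NumHAcceptors(mol),
        "RotB": Descriptors.NumRotatableBonds(mol),
        "LogP": Crippen.MolLogP(mol),
        "TPSA": rdMolDescriptors.CalcTPSA(mol),
    }


def passes_filters(row):
    """True if every capped property is within its limit and LogP is in range."""
    within_caps = all(row[key] <= limit for key, limit in THRESHOLDS.items())
    return within_caps and LOGP_RANGE[0] <= row["LogP"] <= LOGP_RANGE[1]


# Hypothetical mini-library for illustration.
library = [
    "COc1cc2ncnc(Nc3ccc(F)c(Cl)c3)c2cc1OCCCN1CCOCC1",  # gefitinib
    "CCCCCCCCCCCCCCCCCCCCCCCCCCCCCC",                  # C30 alkane, far too lipophilic
    "not_a_smiles",                                    # unparsable entry is skipped
]
rows = [d for d in map(compute_descriptors, library) if d is not None]
passed = [r for r in rows if passes_filters(r)]
print(f"{len(passed)} of {len(rows)} parsed molecules pass the Table 1 filters")
```

Passing `rows` to `pandas.DataFrame(rows)` gives the tabular form described in the Data Compilation step.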

Protocol 3.2: Application of Synthetic Accessibility (SA) Scoring

Objective: To rank and filter compounds based on their ease of synthesis.

Materials: See Scientist's Toolkit.

Procedure:

- SA Score Calculation (RDKit):

a. For each molecule object from Protocol 3.1, compute the Synthetic Accessibility score with `sascorer.calculateScore(mol)`. The `sascorer` module is distributed in RDKit's Contrib directory (`SA_Score`).

b. This function returns a score between 1 (easy to synthesize) and 10 (very difficult).

- Alternative SA Estimation (Optional - SYBA):

a. For a classifier-based assessment, use the SYBA (SYnthetic Bayesian Accessibility) classifier.

b. Load the pre-trained SYBA model and predict the SA score or binary class (easy/hard) for each molecule.

- Integration & Filtering:

a. Merge SA scores with the physicochemical property table.

b. Apply a project-defined SA threshold (e.g., SA Score ≤ 6.5). Flag compounds above this threshold for manual review or discard.
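A minimal sketch of the RDKit route in Protocol 3.2 is shown below. It assumes the installed RDKit build ships the `SA_Score` Contrib folder (where the scorer lives as the `sascorer` module) and that `RDConfig.RDContribDir` points to it; the `sa_report` helper and the two example molecules are hypothetical.

```python
import os
import sys

from rdkit import Chem
from rdkit.Chem import RDConfig

# sascorer lives in RDKit's Contrib directory rather than in the main package,
# so its folder has to be added to the import path first (assumed to be present).
sys.path.append(os.path.join(RDConfig.RDContribDir, "SA_Score"))
import sascorer  # noqa: E402

SA_THRESHOLD = 6.5  # project-defined cutoff from Table 2


def sa_report(smiles_list, threshold=SA_THRESHOLD):
    """Score each parsable SMILES and flag those above the threshold for review."""
    report = []
    for smi in smiles_list:
        mol = Chem.MolFromSmiles(smi)
        if mol is None:
            continue
        score = sascorer.calculateScore(mol)
        report.append({"SMILES": smi, "SA": score, "flag_for_review": score > threshold})
    # Easiest-to-make compounds first.
    return sorted(report, key=lambda r: r["SA"])


report = sa_report([
    "CC(=O)Oc1ccccc1C(=O)O",                           # aspirin
    "COc1cc2ncnc(Nc3ccc(F)c(Cl)c3)c2cc1OCCCN1CCOCC1",  # gefitinib
])
for entry in report:
    print(f"{entry['SA']:.2f}  flagged={entry['flag_for_review']}  {entry['SMILES']}")
```

The returned dictionaries share the `SMILES` key with the property table, so the merge in the Integration & Filtering step is a straightforward join on that column.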

Protocol 3.3: Substructure Filtering for Structural Alerts

Objective: To remove compounds containing undesirable or problematic molecular motifs.

Materials: PAINS SMARTS patterns, in-house alert lists.

Procedure:

- Alert Pattern Compilation: Load SMARTS patterns from trusted sources (e.g., RDKit's PAINS filter, Brenk's list, in-house rules) into a list.

- Pattern Matching:

a. For each molecule object, iterate through the list of SMARTS patterns.

b. Use `Mol.HasSubstructMatch(Chem.MolFromSmarts(pattern))` to check for a match.

- Action: If a match is found for any PAINS or severe reactivity alert, flag and remove the compound from the candidate list. Log the specific alert for analysis.
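Protocol 3.3 can be sketched by combining RDKit's built-in PAINS catalog with a small custom SMARTS list. The two custom patterns below are illustrative assumptions, not a validated alert set, and the helper name and example molecules are hypothetical. Patterns are compiled once up front rather than inside the loop.

```python
from rdkit import Chem
from rdkit.Chem.FilterCatalog import FilterCatalog, FilterCatalogParams

# Built-in PAINS definitions shipped with RDKit.
params = FilterCatalogParams()
params.AddCatalog(FilterCatalogParams.FilterCatalogs.PAINS)
pains_catalog = FilterCatalog(params)

# Illustrative custom reactivity alerts (assumed patterns, compiled once).
CUSTOM_ALERTS = {
    "acyl_halide": Chem.MolFromSmarts("[CX3](=O)[Cl,Br,I]"),
    "michael_acceptor_enone": Chem.MolFromSmarts("[CX3]=[CX3][CX3]=[OX1]"),
}


def find_alerts(mol):
    """Return the names of all PAINS and custom alerts matched by the molecule."""
    hits = [entry.GetDescription() for entry in pains_catalog.GetMatches(mol)]
    hits += [name for name, patt in CUSTOM_ALERTS.items() if mol.HasSubstructMatch(patt)]
    return hits


candidates = ["NC(=O)c1ccccc1", "CC(=O)Cl", "C=CC(C)=O"]
kept, removed = [], {}
for smi in candidates:
    alerts = find_alerts(Chem.MolFromSmiles(smi))
    if alerts:
        removed[smi] = alerts  # log which alert triggered the removal
    else:
        kept.append(smi)
print("kept:", kept)
print("removed:", removed)
```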

Visualization of Workflow

Diagram: Post-Processing & Filtering Workflow for AI-Generated Scaffolds

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Computational Tools for Post-Processing

| Tool/Resource | Function in Protocol | Key Features & Application Notes |

|---|---|---|

| RDKit (Open-Source) | Core cheminformatics platform for property calculation, SA scoring, and substructure filtering. | Provides Descriptors, Crippen, rdMolDescriptors modules. Essential for Protocols 3.1, 3.2, 3.3. |

| SA Score Implementation (RDKit-integrated) | Calculates the Synthetic Accessibility score. | Based on fragment contributions and molecular complexity. Used in Protocol 3.2. |

| SYBA (SYnthetic Bayesian Accessibility) | Alternative, fragment-based SA classifier. | Trained on molecules labeled 'easy' or 'hard' to synthesize. Useful for comparison. |

| pKa Prediction Tool (e.g., ChemAxon, ACD/Labs) | Predicts acid/base dissociation constants. | Used for assessing charge state at physiological pH (Advanced Filters, Table 2). |

| Pandas (Python Library) | Data manipulation and analysis framework. | Used to compile, filter, and manage property data from thousands of molecules. |

| Jupyter Notebook/Lab | Interactive development environment. | Ideal for prototyping the filtering pipeline and visualizing intermediate results. |

| Validated SMARTS Pattern Sets (e.g., PAINS, Brenk's alerts) | Definitive lists of undesirable substructures. | Load as text files for substructure screening in Protocol 3.3. |

This section details a practical application of the AI-driven scaffold hopping protocol. The case study focuses on a known kinase inhibitor scaffold, 4-anilinoquinazoline, a privileged structure targeting the Epidermal Growth Factor Receptor (EGFR). The objective is to generate novel, patentable chemotypes with conserved or improved inhibitory activity while demonstrating the protocol's efficacy for lead optimization.

Background: The 4-Anilinoquinazoline Scaffold & EGFR

EGFR is a transmembrane receptor tyrosine kinase. Upon ligand binding (e.g., EGF), it dimerizes and autophosphorylates, activating downstream signaling cascades like MAPK/ERK and PI3K/AKT, which drive cell proliferation and survival. The 4-anilinoquinazoline core (e.g., Gefitinib) acts as an ATP-competitive inhibitor, binding to the kinase's active site.

Diagram: EGFR Signaling Pathway and Inhibitor Mechanism

AI Protocol Workflow for Scaffold Hopping

The protocol employs a hybrid AI model combining a 3D-aware graph neural network (GNN) for molecular representation and a conditional variational autoencoder (CVAE) for generation.

Diagram: AI Scaffold Hopping Protocol Workflow

Experimental Protocol: Validation of Novel Scaffolds

4.1. In Silico Screening & Filtration Protocol

- Step 1 (Generation): Using the trained CVAE, sample 10,000 molecules from the latent space conditioned on the predicted pharmacophore features of Gefitinib (hydrogen bond donor/acceptor pattern, hydrophobic region map).

- Step 2 (Diversity & Drug-likeness Filter): Apply a Tanimoto similarity cutoff (< 0.4 relative to the query) and Ro5 filters. This step retains ~4,500 molecules.

- Step 3 (Molecular Docking): Dock retained molecules into a high-resolution EGFR crystal structure (PDB: 1M17) using Glide SP. Select top 200 poses by docking score (< -8.0 kcal/mol).

- Step 4 (ADMET Prediction): Predict key ADMET properties using QikProp. Apply filters: QPlogPo/w < 5, QPlogS > -6, human oral absorption > 80%. Final candidates: 37 molecules.
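The similarity cutoff in Step 2 can be sketched as below. The source does not state which fingerprint was used, so Morgan fingerprints (radius 2, 2048 bits) are an assumption here, as are the helper names and the single unrelated test molecule.

```python
from rdkit import Chem, DataStructs
from rdkit.Chem import rdFingerprintGenerator

GEFITINIB = "COc1cc2ncnc(Nc3ccc(F)c(Cl)c3)c2cc1OCCCN1CCOCC1"

# Assumed fingerprint settings; the protocol text does not specify them.
_morgan = rdFingerprintGenerator.GetMorganGenerator(radius=2, fpSize=2048)


def fingerprint(smiles):
    """Return a Morgan bit vector, or None if the SMILES cannot be parsed."""
    mol = Chem.MolFromSmiles(smiles)
    return None if mol is None else _morgan.GetFingerprint(mol)


def novel_relative_to(query_smiles, candidates, cutoff=0.4):
    """Keep candidates whose Tanimoto similarity to the query is below the cutoff."""
    query_fp = fingerprint(query_smiles)
    kept = []
    for smi in candidates:
        fp = fingerprint(smi)
        if fp is None:
            continue
        similarity = DataStructs.TanimotoSimilarity(query_fp, fp)
        if similarity < cutoff:
            kept.append((smi, round(similarity, 3)))
    return kept


candidates = [
    GEFITINIB,            # identical to the query, similarity 1.0, rejected
    "c1ccc2[nH]ccc2c1",   # indole, an unrelated bicyclic heteroaromatic
]
kept = novel_relative_to(GEFITINIB, candidates)
print(kept)
```

Different fingerprint types shift similarity values noticeably, so the 0.4 cutoff is only meaningful when reported together with the fingerprint used.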

4.2. Key Quantitative Data Summary

Table 1: In Silico Profile of Lead Novel Candidate vs. Reference

| Property | Gefitinib (Reference) | AI-Generated Candidate A1 | Filtering Threshold |

|---|---|---|---|

| Molecular Weight | 446.9 g/mol | 412.5 g/mol | <500 g/mol |

| cLogP | 4.2 | 3.8 | <5 |

| Docking Score (Glide) | -10.2 kcal/mol | -9.8 kcal/mol | < -8.0 kcal/mol |

| Predicted IC₅₀ | 33 nM | 41 nM | <100 nM |

| Similarity to Query | 1.0 | 0.29 | <0.4 |

| Synthetic Accessibility | 3.1 | 3.5 | <4.0 |

Table 2: In Vitro Biochemical Assay Results (EGFR Inhibition)

| Compound | Scaffold Class | IC₅₀ (nM) ± SD | % Inhibition at 1 µM |

|---|---|---|---|

| Gefitinib | 4-Anilinoquinazoline | 32.7 ± 2.1 | 98.5 |

| Candidate A1 | Novel Pyrrolopyridinone | 47.3 ± 3.8 | 95.2 |

| Candidate D7 | Novel Imidazoquinoxaline | 125.6 ± 10.4 | 82.7 |

| DMSO Control | N/A | N/A | 2.1 |

4.3. Biochemical Kinase Inhibition Assay Protocol

- Objective: Determine IC₅₀ values of synthesized novel candidates against purified EGFR kinase domain.

- Reagents: Recombinant EGFR kinase (SignalChem), ATP (Sigma), ADP-Glo Kinase Assay Kit (Promega), test compounds in DMSO.

- Procedure:

- In a white 384-well plate, serially dilute compounds in kinase buffer (8-point, 3-fold dilution).

- Add EGFR kinase and substrate peptide to each well.

- Initiate reaction by adding ATP (final [ATP] = 10 µM, approximately the apparent Km).

- Incubate at 25°C for 60 minutes.

- Stop reaction and detect ADP production using ADP-Glo reagent per manufacturer's protocol.

- Measure luminescence on a plate reader.

- Fit dose-response data using a four-parameter logistic model in GraphPad Prism to calculate IC₅₀.
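The arithmetic behind the assay procedure can be sketched in plain Python. The 10,000 nM top concentration, the no-enzyme background control, and all function names are assumptions for illustration. The four-parameter logistic function shown is the model form only; the actual fit in the protocol is performed in GraphPad Prism.

```python
def dilution_series(top_conc_nm, n_points=8, fold=3.0):
    """Concentrations for an n-point serial dilution, highest first."""
    return [top_conc_nm / fold ** i for i in range(n_points)]


def percent_inhibition(signal, vehicle_signal, background_signal):
    """Normalize luminescence to the DMSO (0 %) and no-enzyme (100 %) controls."""
    activity = (signal - background_signal) / (vehicle_signal - background_signal)
    return 100.0 * (1.0 - activity)


def four_pl(conc, bottom, top, ic50, hill):
    """Four-parameter logistic: inhibition rises from bottom to top with concentration."""
    return bottom + (top - bottom) / (1.0 + (ic50 / conc) ** hill)


# Assumed top concentration of 10,000 nM (10 µM); not specified in the protocol.
series = dilution_series(10_000.0)
print([round(c, 2) for c in series])

# By construction, the model returns the midpoint when conc equals the IC50.
midpoint = four_pl(47.3, bottom=0.0, top=100.0, ic50=47.3, hill=1.0)
print(midpoint)

# Hypothetical raw luminescence values for one well and its controls.
print(percent_inhibition(signal=550.0, vehicle_signal=1000.0, background_signal=100.0))
```

An 8-point, 3-fold series spans a little over three log units, which is usually enough to define both plateaus for compounds with IC₅₀ values in the range reported in Table 2.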

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Protocol Application

| Item | Function in Protocol | Example Vendor/Product |

|---|---|---|

| 3D Molecular Graph Model | Encodes molecular structure & electronic features for AI. | PyTorch Geometric (RDKit backend) |

| Conditional VAE Framework | Generates novel molecular structures conditioned on constraints. | Custom Python (TensorFlow) |

| Kinase Expression System | Source of purified target protein for biochemical validation. | Baculovirus/Sf9 system (SignalChem) |

| Homogeneous Kinase Assay Kit | Enables high-throughput, sensitive measurement of kinase inhibition. | Promega ADP-Glo Kinase Assay |

| Chemical Synthesis Suite | For synthesis of AI-generated virtual hits (parallel synthesis). | CEM Liberty Blue peptide synthesizer (adapted for small molecules) |

| Crystallography Reagents | For co-crystallization to confirm binding mode of novel scaffolds. | Hampton Research Crystal Screen |

| High-Performance Computing Cluster | Runs molecular docking and AI inference at scale. | Local Slurm cluster with NVIDIA A100 GPUs |

Overcoming Real-World Challenges: Pitfalls, Data Scarcity, and Optimizing AI Model Performance